Submitted: 19 April 2026

Posted: 21 April 2026


Abstract

Keywords:

1. Introduction

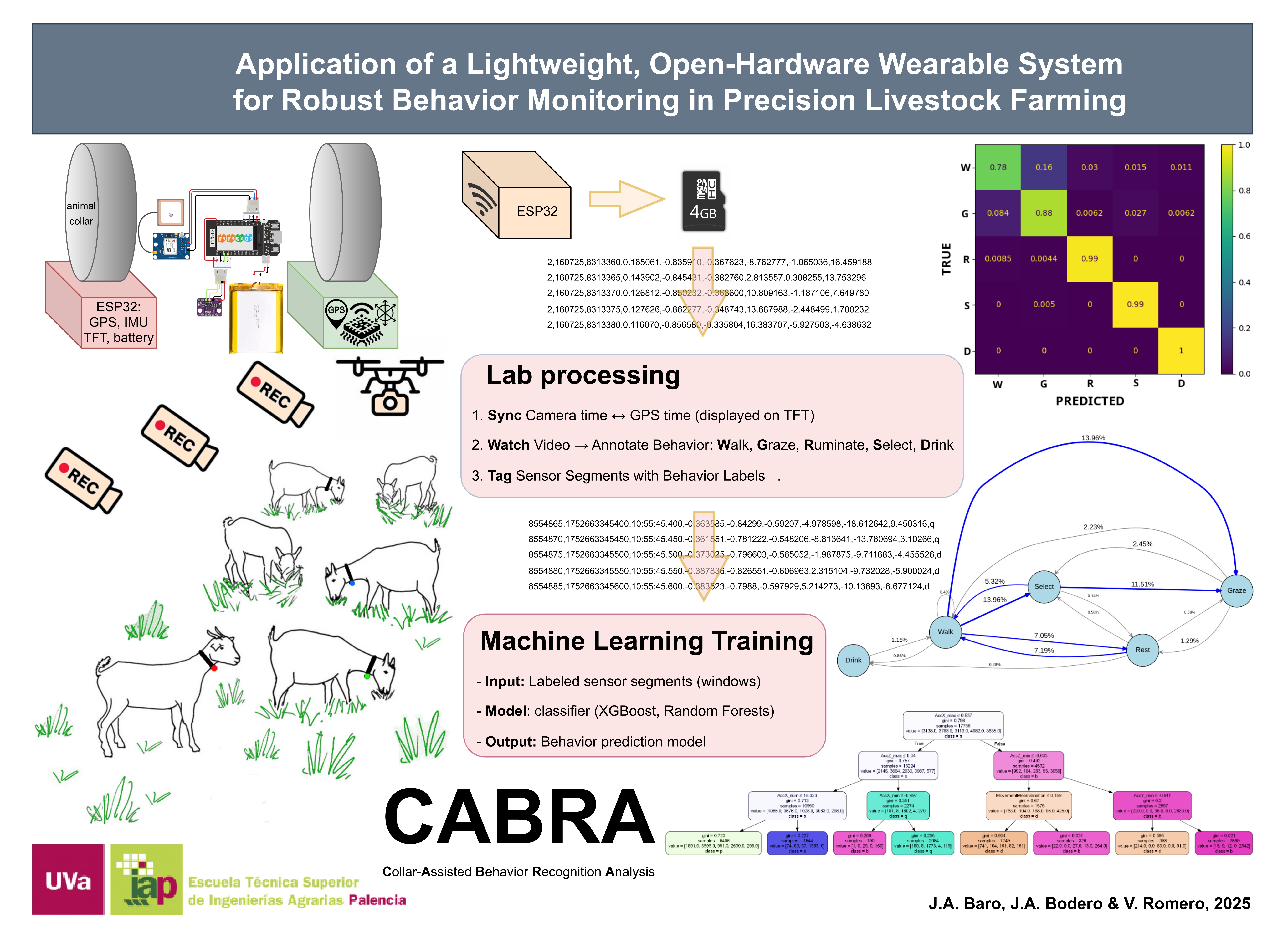

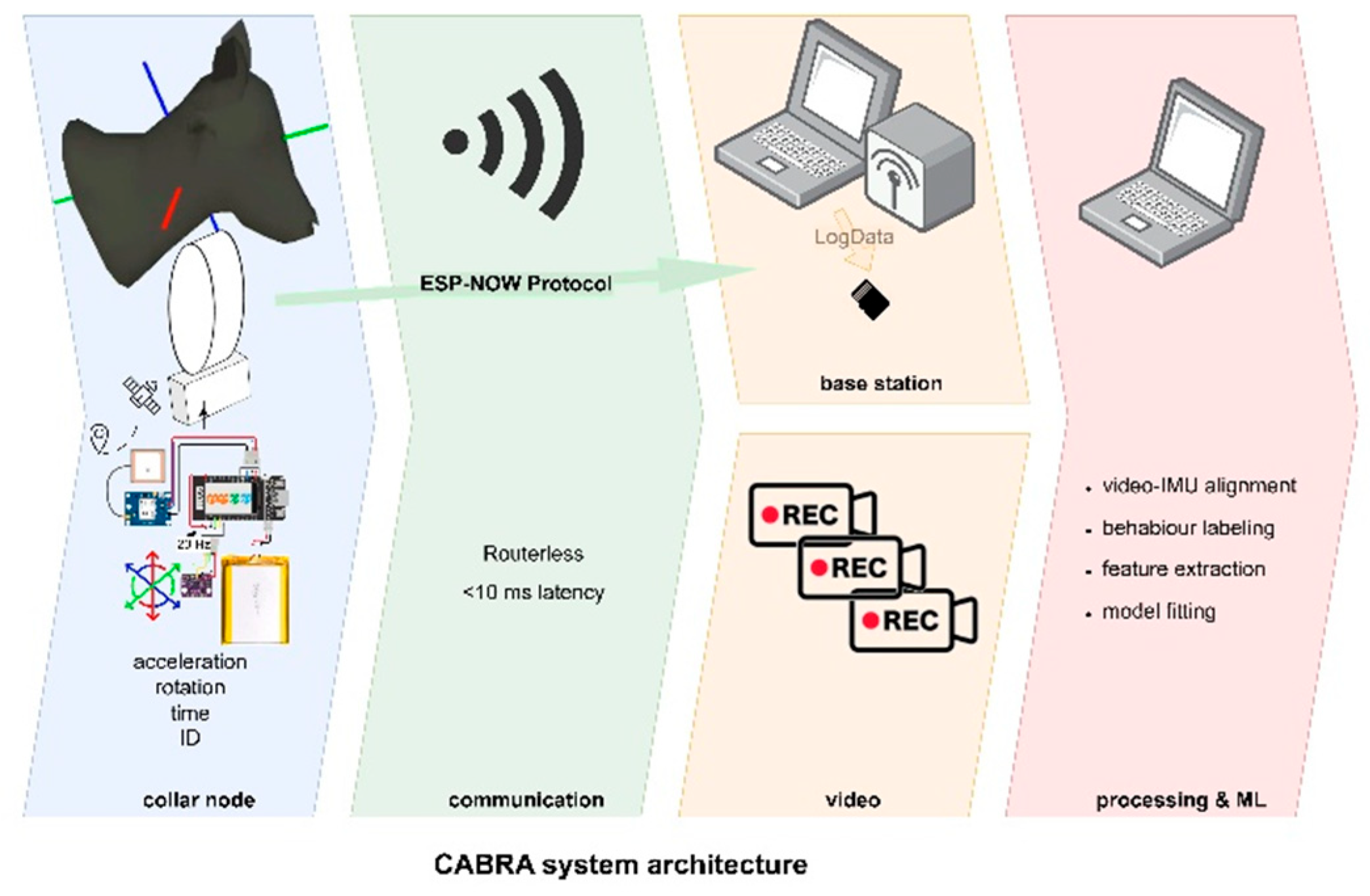

2. Materials and Methods

- Collar-Mounted Sensor Nodes: Lightweight units worn by the animals that capture inertial data at 20 Hz (plus optional GPS data) and can optionally display real-time summaries.

- Base Station Unit: A standalone ESP32-based device that passively listens for data packets via ESP-NOW and logs them.

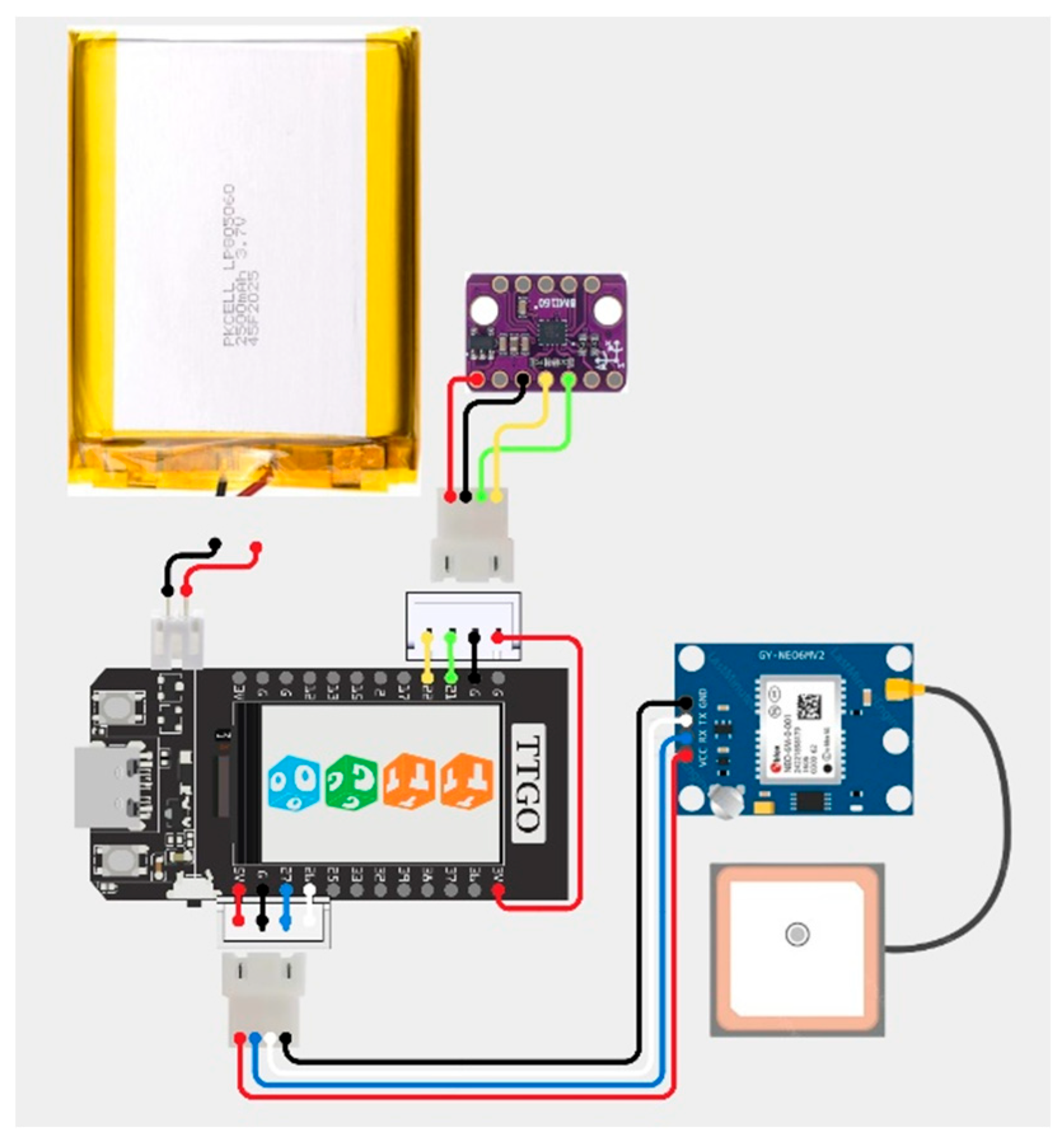

2.1. Sensor Node Hardware

- Microcontroller: ESP32 TTGO (LILYGO), featuring a dual-core Xtensa LX6 processor (240 MHz, 520 KB SRAM), Wi-Fi/Bluetooth, and native ESP-NOW support. Selected for its low-power modes, robust wireless stack, integrated 1.14-inch colour SPI LCD screen, and GPIO flexibility.

- Inertial Measurement Unit (IMU): Bosch BMI160, a 6-axis (3-axis accelerometer + 3-axis gyroscope) MEMS sensor with 16-bit resolution. Configured for ±2 g (accelerometer) and ±500 °/s (gyroscope) ranges, which suit the fine-grained jaw and head movements associated with foraging and rumination. Sampled at 20 Hz via the I²C bus (GPIO 21 = SDA, GPIO 22 = SCL).

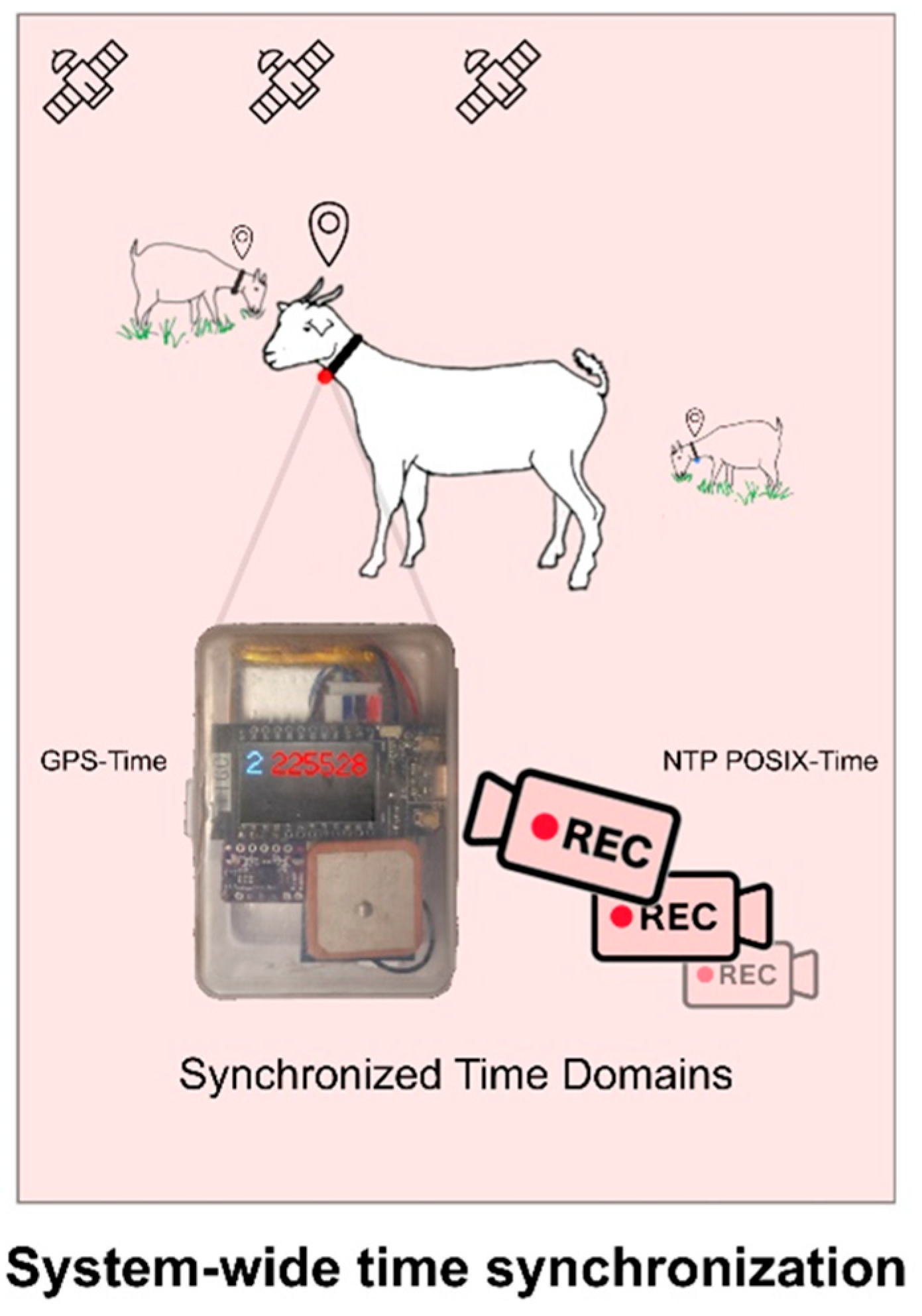
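To make the configured ranges concrete, the sketch below converts raw 16-bit BMI160 readings into physical units. It is a minimal illustration, assuming the nominal scale factor of full-scale range divided by 2^15 and little-endian (LSB-first) register byte order; the helper names are hypothetical and are not taken from the CABRA firmware. Under these assumptions one count corresponds to about 0.061 mg at ±2 g and about 0.015 °/s at ±500 °/s, and the 20 Hz rate gives a 50 ms sampling period.

```python
# Hypothetical helpers for the BMI160 configuration described above.
# Assumption: scale = full-scale range / 2**15 (nominal); datasheet
# sensitivities may differ slightly from this idealised value.

ACC_RANGE_G = 2.0          # ±2 g accelerometer range
GYRO_RANGE_DPS = 500.0     # ±500 °/s gyroscope range
FULL_SCALE_COUNTS = 32768  # 2**15 for a signed 16-bit reading
SAMPLE_RATE_HZ = 20
SAMPLE_PERIOD_MS = 1000 / SAMPLE_RATE_HZ  # 50 ms between samples


def to_signed16(lsb: int, msb: int) -> int:
    """Combine two register bytes (assumed LSB first) into a signed 16-bit value."""
    value = (msb << 8) | lsb
    return value - 0x10000 if value & 0x8000 else value


def acc_counts_to_g(counts: int) -> float:
    """Convert a raw accelerometer count to g at the ±2 g range."""
    return counts * ACC_RANGE_G / FULL_SCALE_COUNTS


def gyro_counts_to_dps(counts: int) -> float:
    """Convert a raw gyroscope count to °/s at the ±500 °/s range."""
    return counts * GYRO_RANGE_DPS / FULL_SCALE_COUNTS


if __name__ == "__main__":
    raw = to_signed16(0x00, 0x40)  # 0x4000 = 16384 counts
    print(acc_counts_to_g(raw))    # 1.0 g under the nominal scale factor
```

In practice the firmware could equally send raw counts and leave the conversion to the analysis stage; converting on the node matches the float fields listed in Section 2.3.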

- GPS Module: u-blox NEO-6M, providing NMEA-formatted Position, Velocity, and Time (PVT) sentences (Kaplan and Hegarty, 2017) via UART (TTL serial, 3.3 V logic; UART2 pins RXD2 = GPIO 26 and TXD2 = GPIO 27). Used for system time synchronization (described in Section 2.5) and spatial context (accuracy: 2.5 m CEP; update rate: 5 Hz).
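The GPS-derived Date and Time packet fields (Section 2.3) could be obtained from the receiver's RMC sentence. The following is a minimal sketch, assuming that Date holds the Unix timestamp of UTC midnight on the fix date and Time holds milliseconds elapsed since that midnight; the function names are hypothetical. It validates the standard NMEA XOR checksum before parsing and rejects fixes whose status flag is not "A" (valid).

```python
from datetime import datetime, timezone
from functools import reduce
from typing import Tuple


def nmea_checksum_ok(sentence: str) -> bool:
    """Verify the XOR checksum of the characters between '$' and '*'."""
    if not sentence.startswith("$") or "*" not in sentence:
        return False
    body, _, given = sentence[1:].partition("*")
    computed = reduce(lambda acc, ch: acc ^ ord(ch), body, 0)
    try:
        return computed == int(given[:2], 16)
    except ValueError:
        return False


def rmc_to_packet_time(sentence: str) -> Tuple[int, int]:
    """Return (Unix seconds at UTC midnight of the fix date, ms offset within the day).

    Hypothetical mapping onto the packet's Date and Time fields.
    """
    if not nmea_checksum_ok(sentence):
        raise ValueError("bad NMEA checksum")
    fields = sentence[1:].split("*")[0].split(",")
    # RMC layout: 0 talker+type, 1 hhmmss.ss, 2 status, ..., 9 ddmmyy
    if len(fields) < 10 or not fields[0].endswith("RMC") or fields[2] != "A":
        raise ValueError("not a valid RMC fix")
    hhmmss, ddmmyy = fields[1], fields[9]
    hours, minutes = int(hhmmss[0:2]), int(hhmmss[2:4])
    seconds = float(hhmmss[4:])
    # Assumption: two-digit years refer to 2000-2099.
    day, month, year = int(ddmmyy[0:2]), int(ddmmyy[2:4]), 2000 + int(ddmmyy[4:6])
    midnight = datetime(year, month, day, tzinfo=timezone.utc)
    date_unix = int(midnight.timestamp())
    ms_of_day = round((hours * 3600 + minutes * 60 + seconds) * 1000)
    return date_unix, ms_of_day
```

Splitting the timestamp this way keeps both values within 32 bits: a Unix timestamp fits until 2106 as an unsigned integer, and a day holds at most 86,400,000 ms.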

2.2. Power and Enclosure

- Battery: 1500 mAh LiPo (3.7 V), providing ~72 hours of continuous operation.

- Power Management: The ESP32 enters light-sleep mode between sensor reads, reducing the average current draw to < 30 mA.

- Enclosure: 3D-printed ABS housing (35 g), designed for IP67 dust and water resistance, with ventilation slots and strain relief for wiring. The collar attaches via an adjustable, quick-release nylon strap (total collar weight < 80 g, i.e., under 0.5% of body weight for adult goats and cattle).
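The power and weight figures above can be cross-checked with simple arithmetic. The sketch below is illustrative only: it uses ideal capacity and ignores LiPo derating, regulator losses, and temperature effects, so real runtimes would be somewhat shorter. Under these simplifications, 72 h from 1500 mAh implies an average draw of about 20.8 mA (consistent with the < 30 mA bound, which by itself guarantees at least 50 h), and an 80 g collar stays under 0.5% of body weight for any animal heavier than 16 kg.

```python
def runtime_hours(capacity_mah: float, avg_current_ma: float,
                  usable_fraction: float = 1.0) -> float:
    """Ideal runtime; usable_fraction < 1 models a hypothetical derating."""
    return capacity_mah * usable_fraction / avg_current_ma


def implied_avg_current_ma(capacity_mah: float, runtime_h: float) -> float:
    """Average current that would exhaust the capacity in the given runtime."""
    return capacity_mah / runtime_h


def min_body_mass_kg(collar_g: float, max_percent: float) -> float:
    """Smallest body mass for which the collar stays below max_percent of body weight."""
    return collar_g / (max_percent / 100.0) / 1000.0


if __name__ == "__main__":
    print(f"{implied_avg_current_ma(1500, 72):.1f} mA")  # about 20.8 mA
    print(f"{runtime_hours(1500, 30):.0f} h")            # lower bound at 30 mA
    print(f"{min_body_mass_kg(80, 0.5):.0f} kg")          # about 16 kg
```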

2.3. Data Acquisition and Packet Structure

- Node ID (8-bit unsigned integer): identifies the source animal.

- Date (32-bit Unix timestamp, UTC): derived from GPS.

- Time (32-bit millisecond offset within the day): provides sub-second precision.

- AccX, AccY, AccZ (float, g): linear accelerations.

- GyroX, GyroY, GyroZ (float, °/s): angular velocities.

- CRC16 (16-bit): cyclic redundancy check appended so the receiver can detect corrupted packets.
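One possible byte-level encoding of these fields is sketched below with Python's `struct` module. The text does not specify byte order, padding, or the CRC-16 variant, so this sketch assumes a packed little-endian layout (the ESP32 is little-endian), 32-bit floats, and CRC-16/CCITT-FALSE (polynomial 0x1021, initial value 0xFFFF); the function names are hypothetical. Under these assumptions a packet is 35 bytes, which fits comfortably within ESP-NOW's 250-byte maximum payload. Note that a CRC only detects errors: a corrupted packet is discarded, not repaired.

```python
import struct
from typing import Optional, Sequence, Tuple

# Assumed layout: '<' = little-endian, no padding;
# uint8 node ID, uint32 date, uint32 ms-of-day, 6 x float32 (acc + gyro).
PAYLOAD_FMT = "<BII6f"
CRC_FMT = "<H"
PACKET_SIZE = struct.calcsize(PAYLOAD_FMT) + struct.calcsize(CRC_FMT)  # 35 bytes


def crc16_ccitt(data: bytes, crc: int = 0xFFFF) -> int:
    """CRC-16/CCITT-FALSE: polynomial 0x1021, init 0xFFFF, no reflection, no final XOR."""
    for byte in data:
        crc ^= byte << 8
        for _ in range(8):
            if crc & 0x8000:
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc


def pack_packet(node_id: int, date_unix: int, ms_of_day: int,
                acc: Sequence[float], gyro: Sequence[float]) -> bytes:
    """Serialise one sample and append its CRC."""
    payload = struct.pack(PAYLOAD_FMT, node_id, date_unix, ms_of_day, *acc, *gyro)
    return payload + struct.pack(CRC_FMT, crc16_ccitt(payload))


def unpack_packet(packet: bytes) -> Optional[Tuple]:
    """Return (node_id, date, ms, acc, gyro), or None if length or CRC check fails."""
    if len(packet) != PACKET_SIZE:
        return None
    payload = packet[:-2]
    (crc,) = struct.unpack(CRC_FMT, packet[-2:])
    if crc16_ccitt(payload) != crc:
        return None
    node_id, date_unix, ms_of_day, *motion = struct.unpack(PAYLOAD_FMT, payload)
    return node_id, date_unix, ms_of_day, tuple(motion[:3]), tuple(motion[3:])
```

On the base station, packets for which `unpack_packet` returns `None` would simply be skipped before logging.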

2.4. Wireless Communication

- Range: Effective communication up to 100 m line-of-sight, confirmed in pasture environments.

- Latency: Consistently below 10 ms, supporting near real-time monitoring.

- Robustness: The combination of encryption, peer-to-peer topology, and low-duty-cycle transmission supports reliable operation despite animal movement and environmental interference.
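Because each node samples at a nominal 20 Hz, gaps in the Time field of one node's received stream offer a rough way to quantify link reliability. The sketch below is a hypothetical analysis helper, not part of the described system. It assumes the offsets come from a single UTC day (no midnight wrap-around) and treats any gap of roughly k sampling periods as k - 1 lost packets; duplicates and out-of-order arrivals are simply ignored.

```python
from typing import Sequence

SAMPLE_PERIOD_MS = 50  # nominal 20 Hz sampling interval


def estimate_missing(ms_offsets: Sequence[int], period_ms: int = SAMPLE_PERIOD_MS) -> int:
    """Estimate how many samples were lost, from gaps between consecutive Time values."""
    missing = 0
    for prev, curr in zip(ms_offsets, ms_offsets[1:]):
        gap = curr - prev
        if gap <= 0:
            continue  # duplicate or out-of-order packet; ignored in this sketch
        # Rounding tolerates small timing jitter around the nominal period.
        missing += max(round(gap / period_ms) - 1, 0)
    return missing


def delivery_ratio(ms_offsets: Sequence[int], period_ms: int = SAMPLE_PERIOD_MS) -> float:
    """Fraction of expected packets that actually arrived (0.0 for an empty stream)."""
    received = len(ms_offsets)
    expected = received + estimate_missing(ms_offsets, period_ms)
    return received / expected if expected else 0.0


if __name__ == "__main__":
    stream = [0, 50, 100, 250, 300]  # two samples missing between 100 and 250 ms
    print(estimate_missing(stream), round(delivery_ratio(stream), 3))
```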

2.5. Video-IMU Synchronization

2.6. Behavior Classification Pipeline

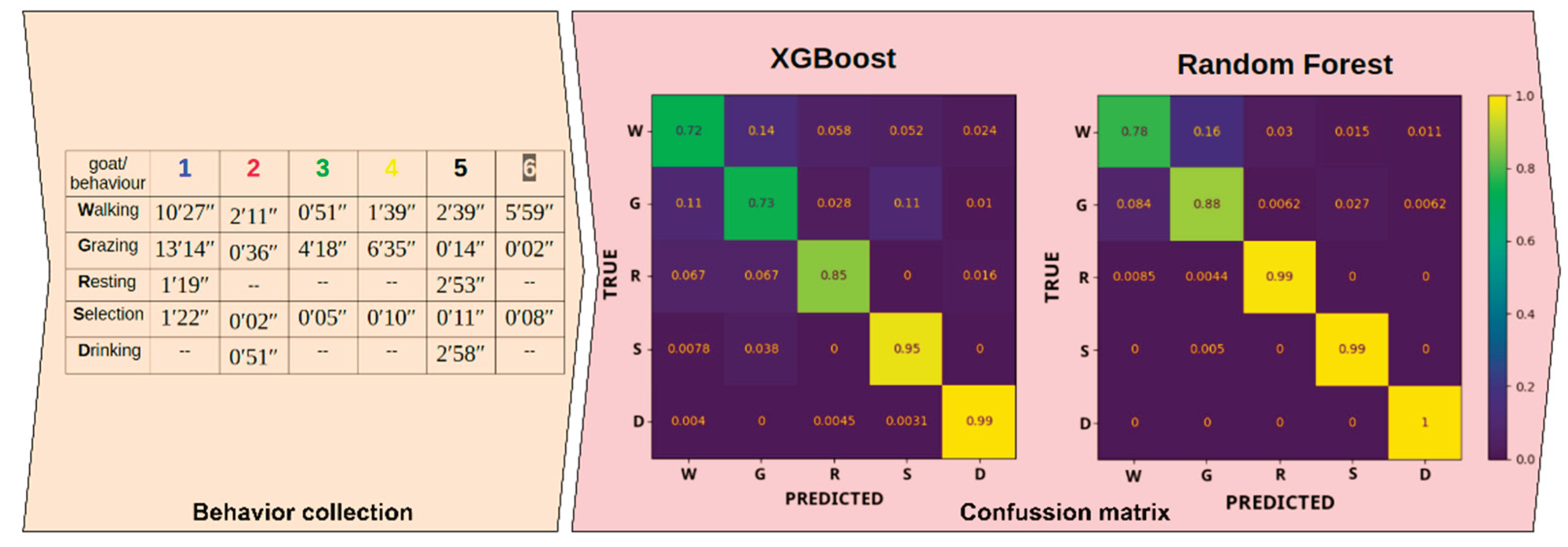

3. Results

4. Discussion

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| CRC16 | 16-bit cyclic redundancy check |
| FFT | Fast Fourier Transform |
| GPIO | General-purpose input/output |
| IMU | Inertial Measurement Unit |
| MLP | Multilayer Perceptron |
| NMEA | National Marine Electronics Association |
| PLF | Precision livestock farming |
| PVT | Position, Velocity, and Time |
| SVM | Support Vector Machine |
| UART | Universal asynchronous receiver-transmitter |
| XGBoost | eXtreme Gradient Boosting |

Appendix A

Video–IMU Synchronization Protocol

References

- Neethirajan, S. Recent Advances in Wearable Sensors for Animal Health Management. Sens. Bio-Sens. Res. 2017, 12, 15-29. doi:10.1016/j.sbsr.2016.11.004.

- Wathes, C.M.; Kristensen, H.H.; Aerts, J.M.; Berckmans, D. Is precision livestock farming an engineer’s daydream or nightmare, an animal’s friend or foe, and a farmer’s panacea or pitfall? Comput. Electron. Agric. 2008, 64, 2-10. [CrossRef]

- Alsaaod, M.; Fadul, M.; Steiner, A. Automatic lameness detection in cattle. Vet. J. 2019, 246, 35-44. [CrossRef]

- Li, G.; Chai, L. AnimalAccML: An open-source graphical user interface for automated behavior analytics of individual animals using triaxial accelerometers and machine learning. Comput. Electron. Agric. 2023, 209, 107835.

- Ruuska, S.; Kajava, S.; Mughal, M.; Zehner, N.; Mononen, J. Validation of a pressure sensor-based system for measuring eating, rumination and drinking behaviour of dairy cattle. Appl. Anim. Behav. Sci. 2016, 174, 19-23. [CrossRef]

- Fajardo, B.; Méndez, D.A.; Villagrá, A.; Calvet, S. Development and validation of a triaxial accelerometer for behavior monitoring in Murciano-Granadina goats. In Proceedings of the 76th Annual Meeting of the European Federation of Animal Science, Innsbruck, Austria, 28 August 2025.

- Baro, J.A. CABRA Hardware and Firmware Repository [Code]. Available online: https://github.com/knklB/IMUcabra (accessed on 30 December 2025). [CrossRef]

- Neethirajan, S. Transforming the Adaptation Physiology of Farm Animals through Sensors. Animals 2020, 10, 1512. [CrossRef]

- Harvey, P. ExifTool (Version 12.70) [Computer Software]. Available online: https://exiftool.org/ (accessed on 30 December 2025).

- Kaplan, E.D.; Hegarty, C.J. Understanding GPS/GNSS: Principles and Applications, 2nd ed.; Artech House: Norwood, USA, 2017; pp. 578-579.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).