Submitted: 20 April 2026

Posted: 21 April 2026

Abstract

Keywords:

1. Introduction

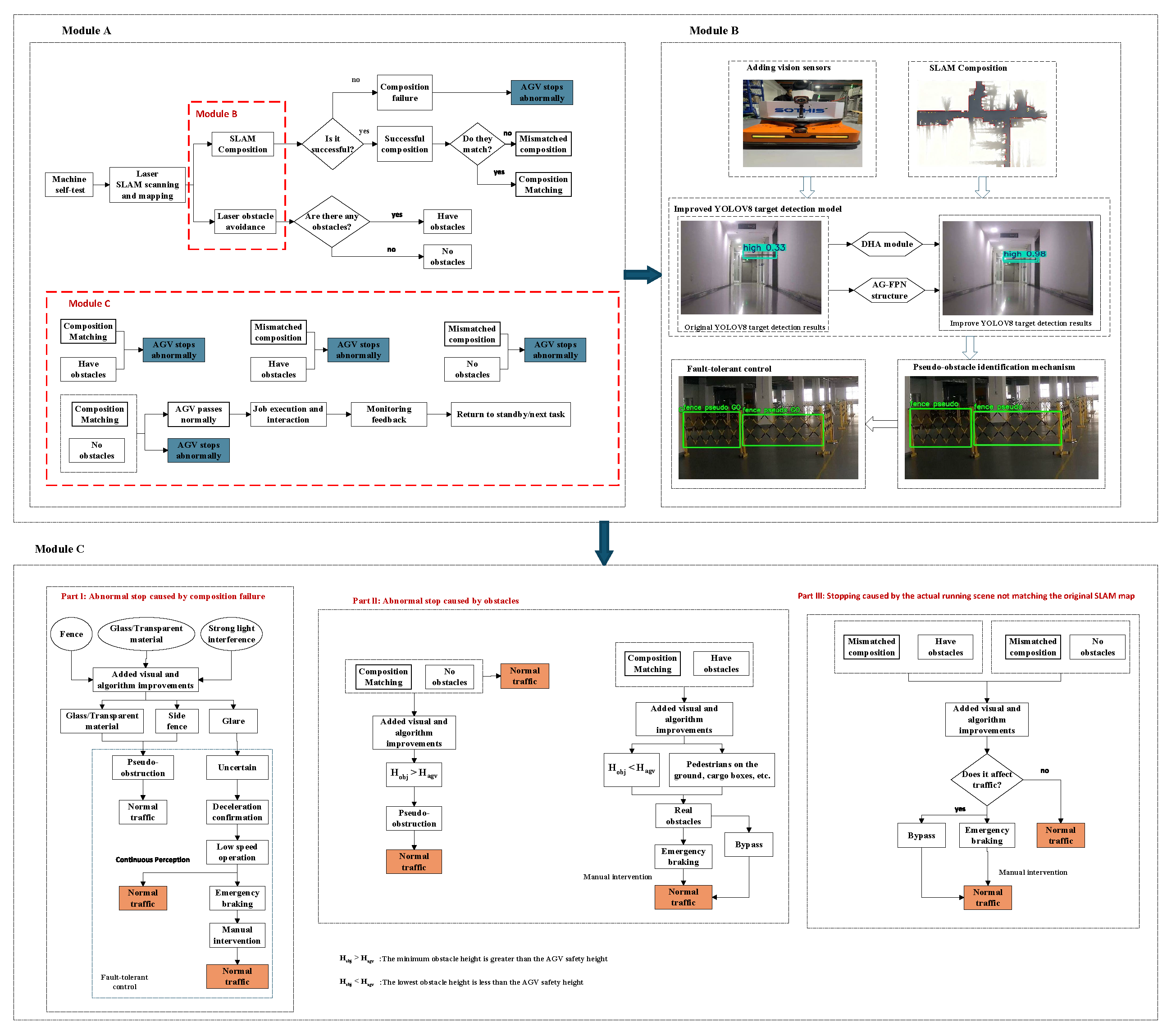

2. Overall System Framework

2.1. Framework Overview and Operating Logic

2.2. Improved Detection Network

1. Backbone layer: Extracts basic features of the AGV operating environment, including edge, texture, and geometric information, through multi-layer convolution and residual structures [14]. These features provide a multi-level semantic representation for subsequent feature fusion and detection.

2. Neck layer: Adopts an improved AG-FPN structure that improves the transmission and fusion of cross-scale information while reducing computational complexity through efficient feature reuse [15].

3. Head front end: Embeds the DHA module at the front end of the detection head. By fusing saliency, channel, and spatial attention in parallel, the module improves the model's ability to discriminate and capture features of abnormal regions and difficult-to-detect targets. Learnable weights [16] adaptively enhance the semantic representation and spatial structure information of multi-scale features.

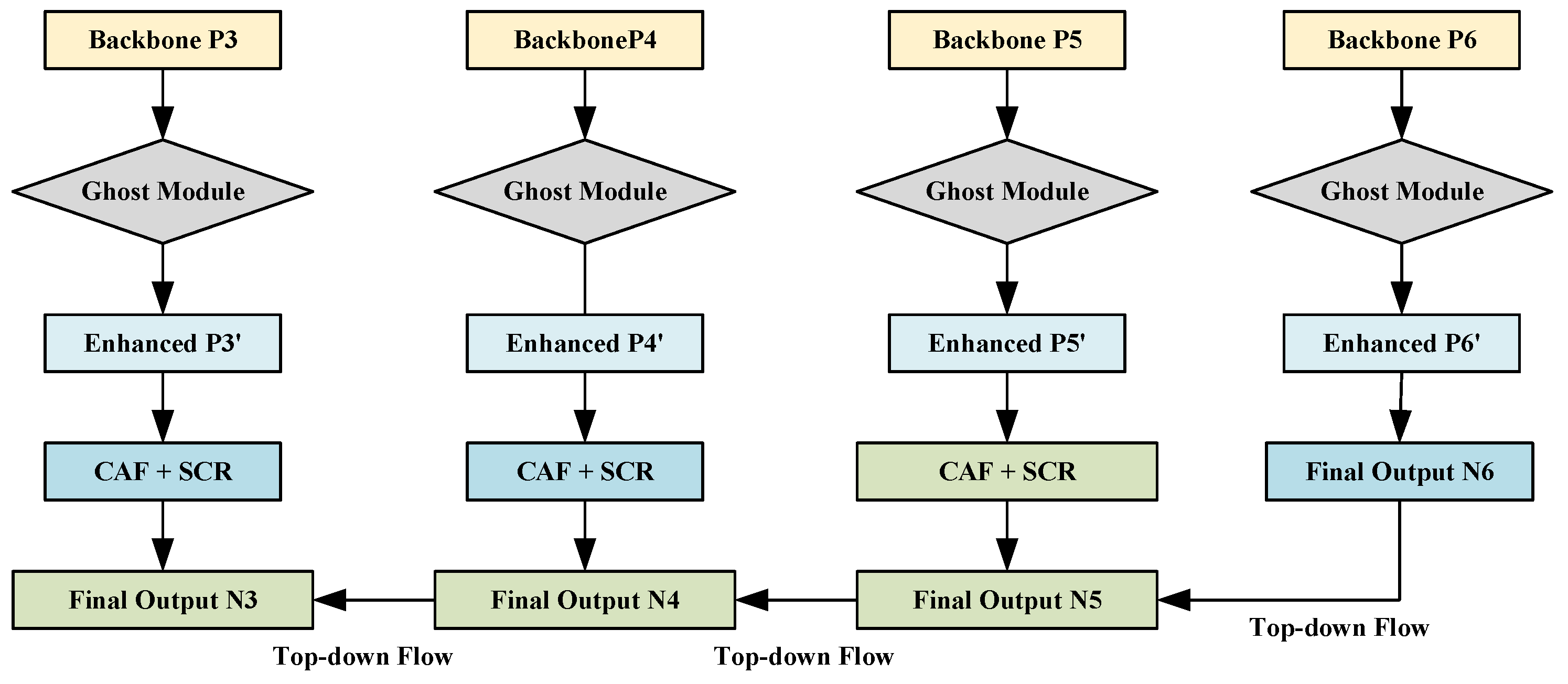

2.2.1. AG-FPN Module

1. MAGP: Efficient Feature Generation Based on Redundancy Suppression

2. CAF: Cross-layer Adaptive Fusion Based on Weight Games

3. SCR: Semantic Consistency Reconstruction Based on Residual Mapping

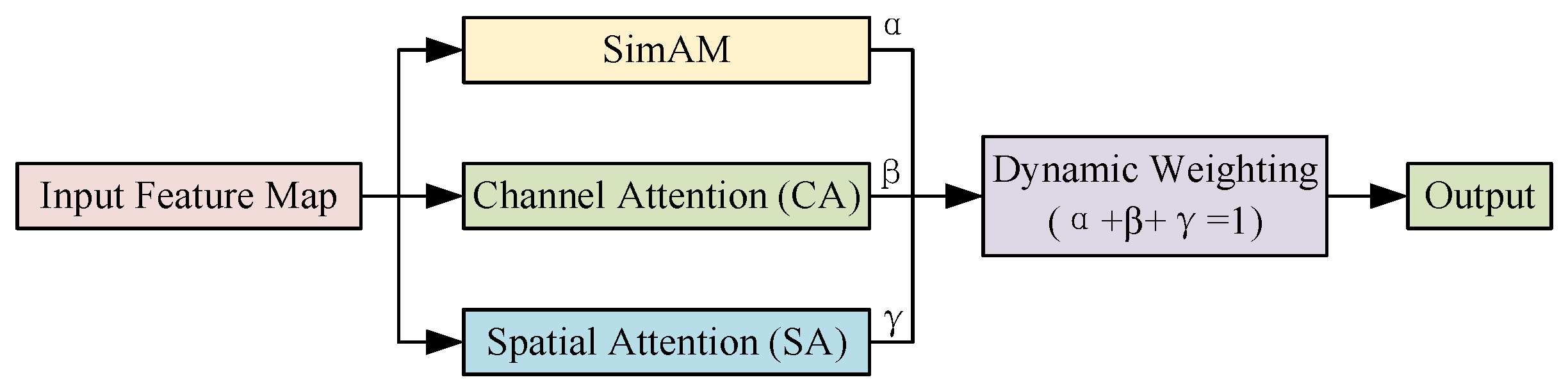

2.2.2. DHA Module

1. SimAM Saliency Attention Branch

2. Channel Attention Branch ()

3. Spatial Attention Branch ()

4. Dynamic Weight Fusion Logic: Adaptive Scheduling of Perceptual Strategies
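The branch structure listed above can be illustrated with a minimal, dependency-free sketch. The `simam` function follows the published parameter-free SimAM formulation [18] for a single channel. The fusion step is only an assumption: the section states that learnable weights are used, so the sketch normalizes three hypothetical weight logits with a softmax and forms a weighted sum of the branch outputs. The function names, the softmax choice, and the use of plain nested lists are illustrative and are not taken from the paper.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def simam(channel, e_lambda=1e-4):
    """SimAM attention for one channel given as a 2D list (needs >= 2 values)."""
    values = [v for row in channel for v in row]
    n = len(values) - 1
    mu = sum(values) / len(values)
    sq = [[(v - mu) ** 2 for v in row] for row in channel]
    var = sum(s for row in sq for s in row) / n
    # Inverse energy: values far from the channel mean receive a larger gate.
    return [
        [v * sigmoid(s / (4.0 * (var + e_lambda)) + 0.5) for v, s in zip(row, srow)]
        for row, srow in zip(channel, sq)
    ]


def softmax(logits):
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    return [e / total for e in exps]


def fuse_branches(branches, logits):
    """Weighted sum of equally shaped branch outputs.

    Assumption: the learnable weights are softmax-normalized logits.
    """
    weights = softmax(logits)
    rows, cols = len(branches[0]), len(branches[0][0])
    return [
        [sum(w * b[r][c] for w, b in zip(weights, branches)) for c in range(cols)]
        for r in range(rows)
    ]


# Hypothetical 2x2 feature map; the channel and spatial branch outputs are
# stand-ins (the input itself) because their definitions are not given here.
feature = [[0.1, 0.2], [0.3, 2.0]]
saliency_out = simam(feature)
fused = fuse_branches([saliency_out, feature, feature], [0.0, 0.0, 0.0])
print(fused)
```

With equal logits the three branches contribute equally; during training the logits would be updated so that the branch most useful for the current scene receives the largest weight.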

2.3. Multidimensional Pseudo-Obstacle Discrimination Model

2.4. Fault-Tolerant Control Strategy Based on Risk Perception

3. Experimental Verification and Result Analysis

3.1. Experimental Platform and Deployment Environment

3.2. Dataset Construction and Preprocessing

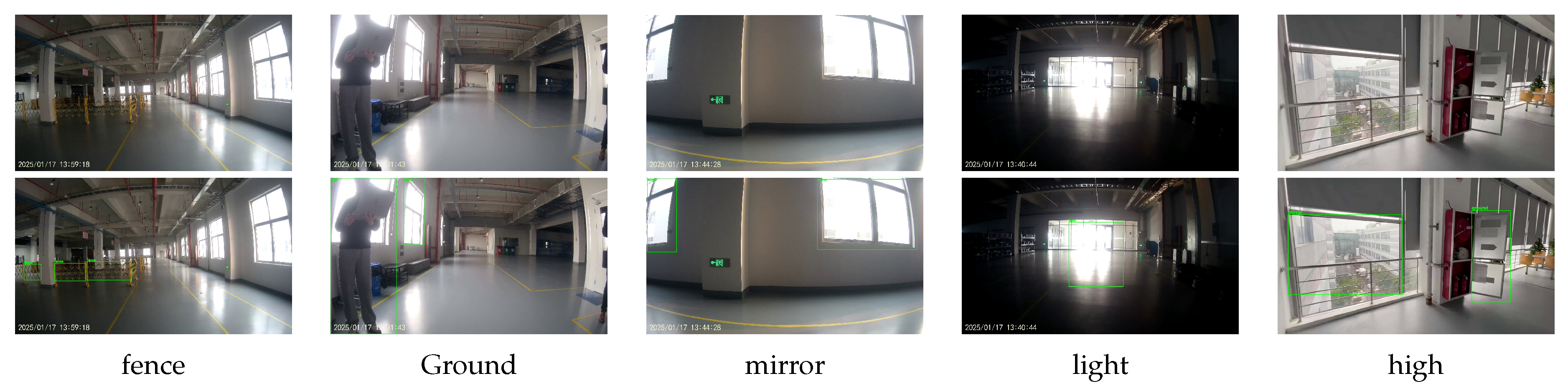

3.2.1. Definition of Anomaly Categories in Industrial Scenarios

- (i) High-dynamic obstacles: Labeled “high,” these are objects located above the AGV’s path and within the blind zone of the 2D LiDAR scanning plane (such as open fire doors, height-limiting barriers, and protruding pipes). These obstacles are highly likely to cause collisions in the cargo area.

- (ii) Ground dynamic interference: Labeled “Ground,” this includes randomly intruding workers, temporarily stacked pallets, and scattered objects. These targets are highly random, which places stringent demands on the real-time response of the sensing system.

- (iii) Geometric ambiguity: Labeled “fence,” this refers to structures such as fences or perforated metal mesh. Laser beams pass through these structures easily, producing a “sparse point cloud” that prevents the formation of an effective closed physical contour.

- (iv) Mirror image interference: Labeled “mirror,” this covers virtual images generated by highly reflective mirrors. Such conditions are highly likely to induce SLAM localization drift and false obstacle recognition in the perception layer.

- (v) Severe light and shadow interference: Labeled “light,” this includes backlight, strong direct light, and sudden changes in ambient lighting. This type of environmental noise can cause oversaturation or feature loss in the image sensor.
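The five categories above can be captured in a small label map for annotation or training scripts. The sketch below is illustrative only: the label strings are copied from the list, while the class name, the helper function, and the class-index order (simply the listing order) are assumptions and may differ from the indices used in the actual dataset.

```python
from enum import Enum


class AnomalyCategory(Enum):
    """Anomaly labels as written in the category list (spelling preserved)."""
    HIGH = "high"        # objects above the path, in the 2D LiDAR blind zone
    GROUND = "Ground"    # workers, pallets, scattered objects
    FENCE = "fence"      # fences, perforated mesh (sparse point cloud)
    MIRROR = "mirror"    # virtual images from reflective surfaces
    LIGHT = "light"      # backlight, strong light, sudden lighting changes


# Assumed class order: the order in which the categories are listed.
LABELS = [category.value for category in AnomalyCategory]


def label_to_index(label):
    """Return the assumed class index for a label string."""
    return LABELS.index(label)


print(LABELS)
print(label_to_index("mirror"))
```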

3.2.2. Data Collection, Annotation and Augmentation

3.2.3. Transfer Learning Strategy and Weight Initialization

3.3. Evaluation Indicators

3.4. Detection Model Training and Results

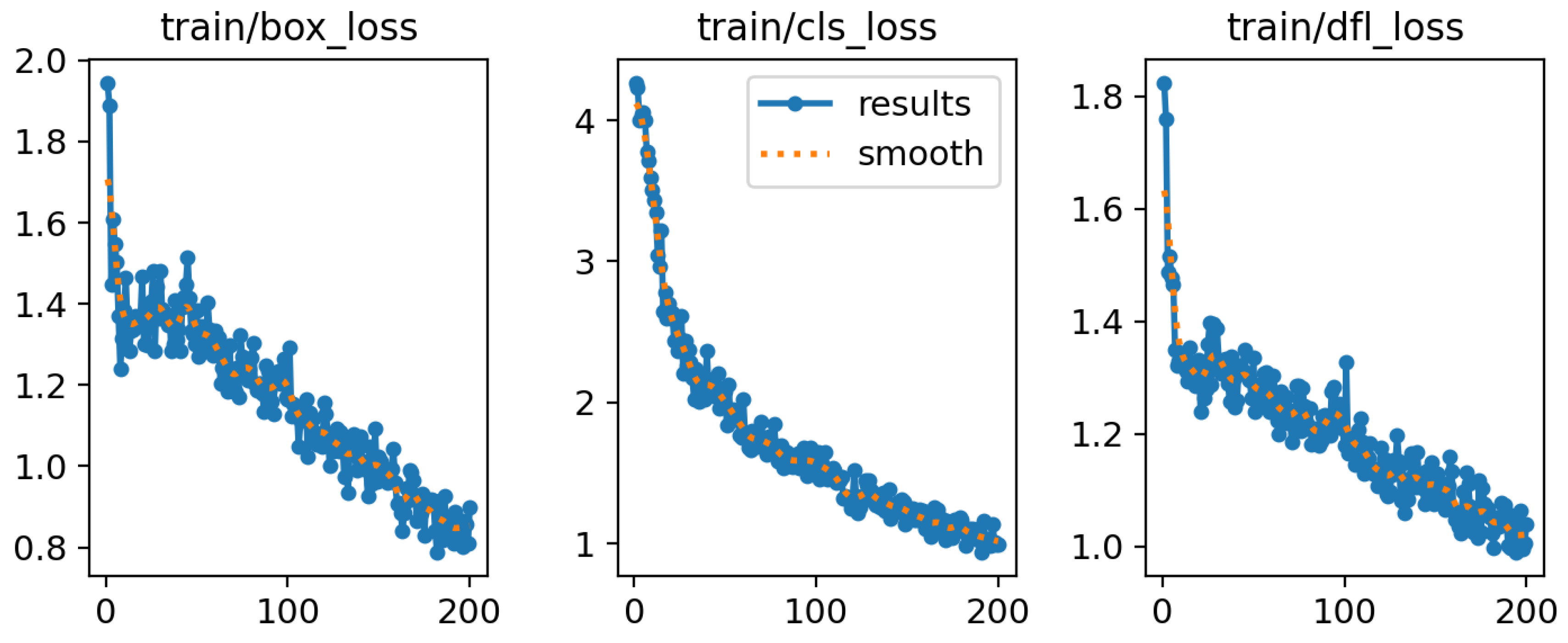

3.4.1. Training Process and Convergence Analysis

3.4.2. Ablation Study Analysis

3.4.3. Comparative Experimental Analysis

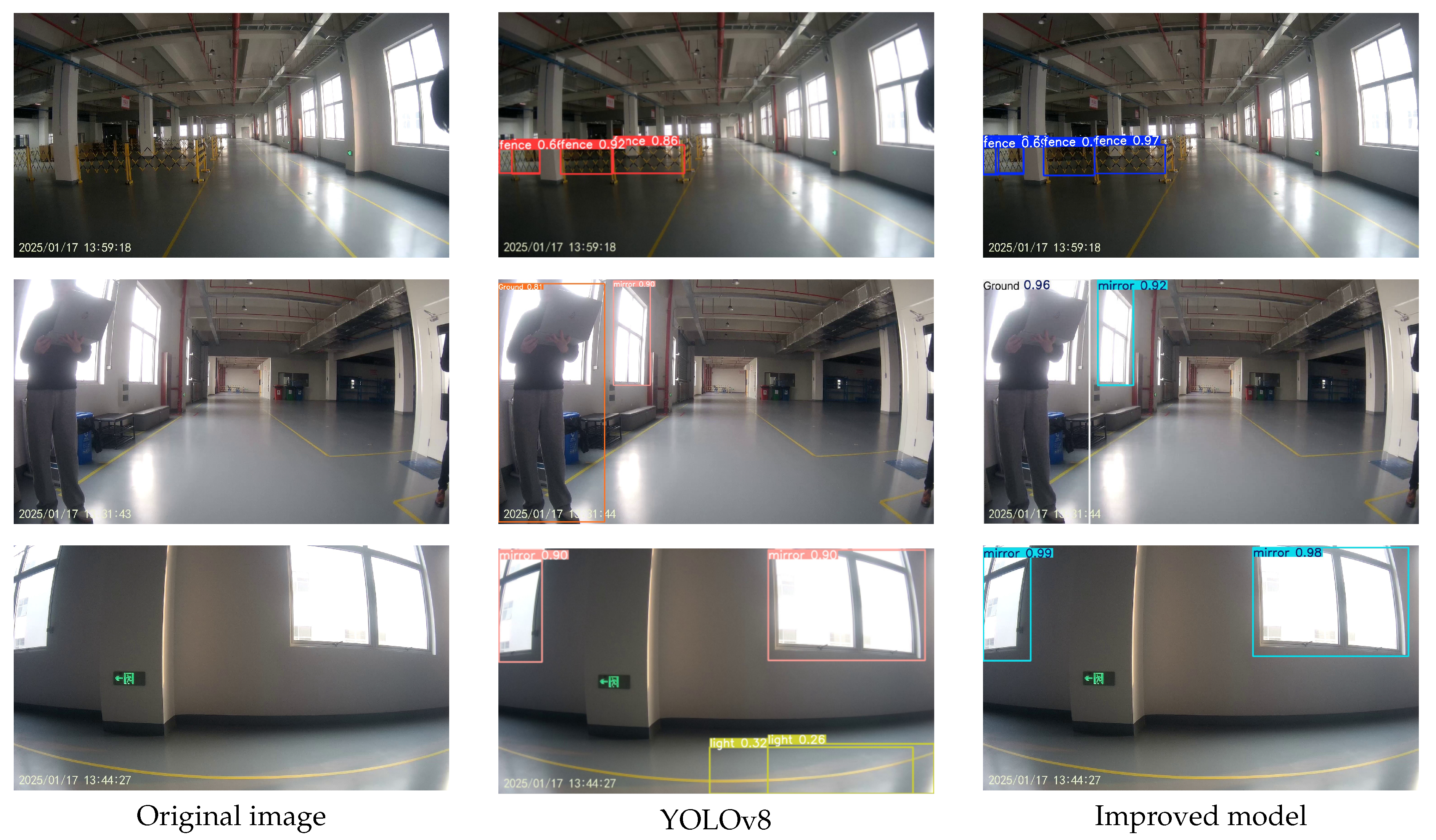

3.4.4. Visualization of Detection Results

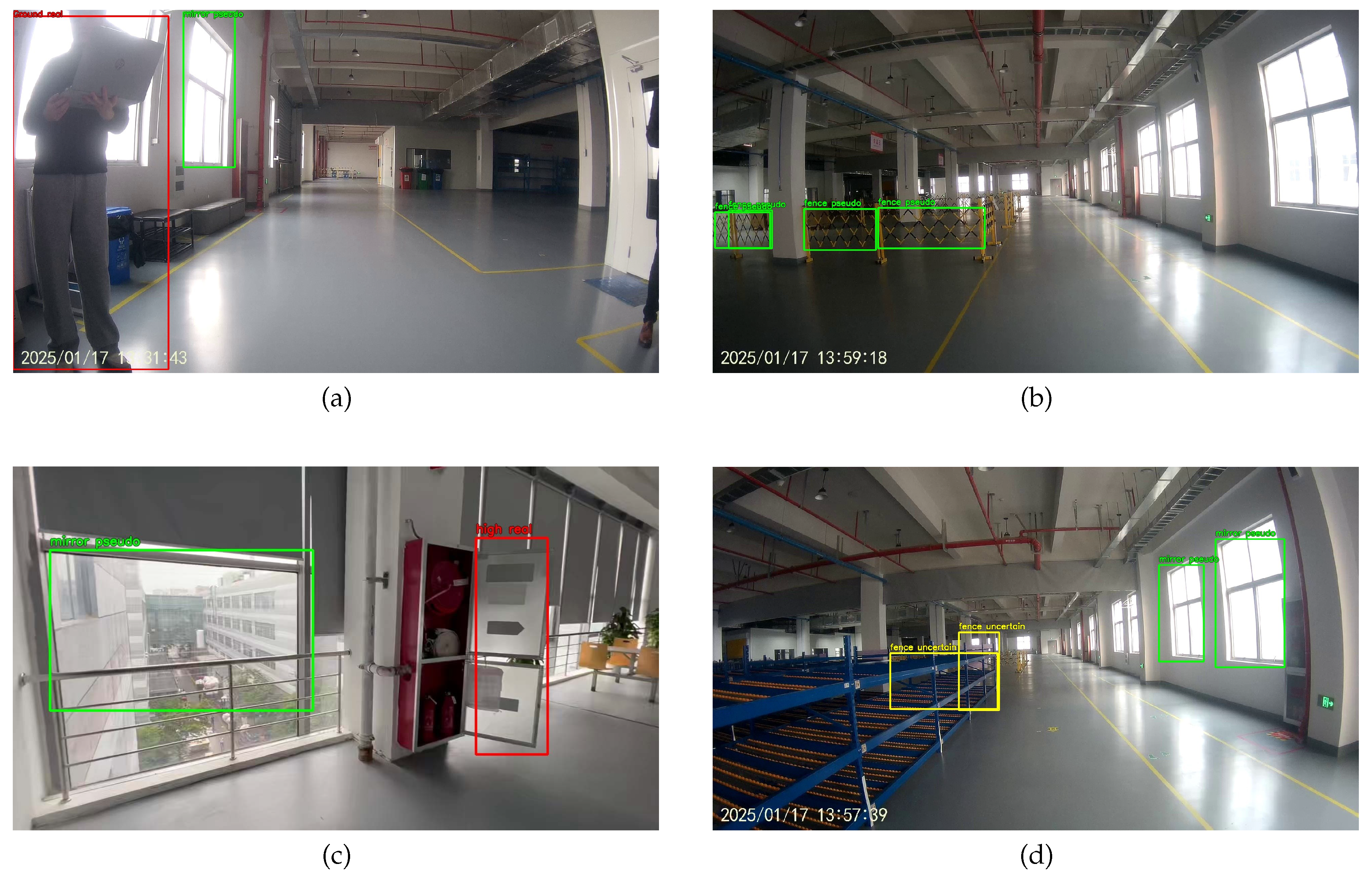

3.5. Pseudo-Obstacle Recognition Experiment

3.5.1. Discriminant Criteria and Logical Models

- represents the real-time vertical height from the bottom of the target to the ground.

- denotes the safe passage height threshold for the AGV.

- S represents the temporal stability factor of the target.

- is the stability threshold, which is set to 0.8 in this study. This design is motivated by the use of time-window filtering [28] to forcibly filter out non-persistent false alarms caused by ambient light flicker or instantaneous sensor noise.

- and represent the classification confidence of the detection network and its corresponding threshold, respectively.

- Real indicates that the target possesses both physical spatial occupancy and temporal stability, triggering the highest priority emergency braking.

- Pseudo covers fences outside the path (no path occupancy) and optical-induced phantom images (no physical entity); the system executes "imperceptible passage."

- Uncertain refers to transient targets whose confidence reaches the standard but whose temporal performance fluctuates. These are marked as uncertain states, triggering the fault-tolerant deceleration mechanism described in Section 2.4.
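The three outcomes described above can be sketched as a simple decision function. This is a hedged illustration rather than the paper's exact rule: the field names, the `physically_present` flag (assumed to come from an upstream cross-check such as LiDAR returns), the order of the checks, and the confidence threshold of 0.5 are all assumptions. Only the stability threshold of 0.8 and the meaning of the three states come from the text. Low-confidence targets are treated conservatively as Uncertain.

```python
from dataclasses import dataclass


@dataclass
class Target:
    bottom_height_m: float    # height from the bottom of the target to the ground
    stability: float          # temporal stability factor S
    confidence: float         # classification confidence of the detector
    on_path: bool             # does the target occupy the planned path?
    physically_present: bool  # hypothetical flag: False for optical phantoms


def classify(target, safe_height_m, stability_threshold=0.8,
             confidence_threshold=0.5):
    """Return "Real", "Pseudo", or "Uncertain" for one tracked target.

    safe_height_m is the safe passage height threshold of the AGV.
    confidence_threshold=0.5 is a placeholder, not a value from the paper.
    """
    # No physical entity, no path occupancy, or enough clearance to pass under.
    if (not target.physically_present
            or not target.on_path
            or target.bottom_height_m >= safe_height_m):
        return "Pseudo"
    # Physical occupancy plus stable, confident detection.
    if (target.confidence >= confidence_threshold
            and target.stability >= stability_threshold):
        return "Real"
    # Occupying target whose confidence or temporal behavior fluctuates.
    return "Uncertain"


# Example with a hypothetical safe passage height of 2.0 m.
pallet = Target(0.0, 0.95, 0.90, on_path=True, physically_present=True)
pipe = Target(2.5, 0.95, 0.90, on_path=True, physically_present=True)
flicker = Target(0.0, 0.40, 0.85, on_path=True, physically_present=True)
for t in (pallet, pipe, flicker):
    print(classify(t, safe_height_m=2.0))
```

Checking the Pseudo conditions first means that a stable, confident detection of an overhead pipe or an off-path fence never triggers braking, which matches the intent of filtering such targets before the control layer reacts.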

3.5.2. Visual Analysis of Experimental Results

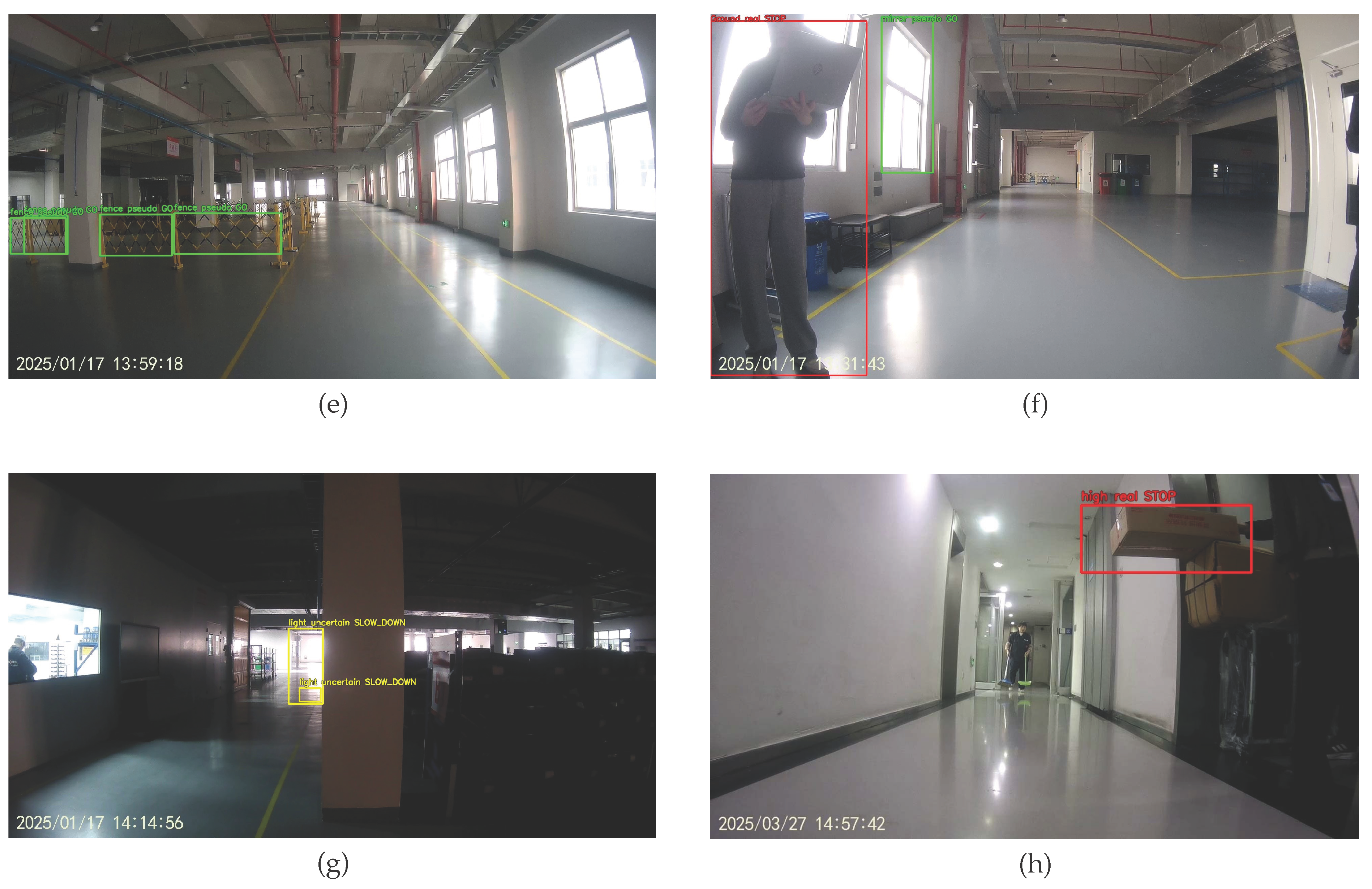

3.6. Fault-Tolerant Control Strategy Verification Experiment

3.6.1. Tiered Response Strategies and Action Mapping

- Emergency Braking (STOP, ): When a target is verified as a real obstacle with physical occupancy risk (e.g., ground cargo, dynamic pedestrians), the system outputs a velocity , triggering the highest priority braking to ensure absolute safety.

- Deceleration Confirmation (SLOW_DOWN, ): For targets with fluctuating confidence or temporal instability (e.g., instantaneous strong light, complex textures), the system executes a degraded operation strategy. Through the velocity attenuation factor , the AGV enters a low-speed perception mode, aiming to obtain more stable temporal features by increasing observation duration and avoiding false stops caused by blind decision-making.

- Normal Passage (GO, ): For pseudo-obstacles determined to have no spatial occupancy risk (e.g., high-level pipelines, mirror phantoms, fences outside the path), the system maintains the preset cruise speed , achieving “imperceptible filtering” of interference items and ensuring the continuity of the logistics rhythm.
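The tiered mapping above can be written as a small lookup from discrimination state to velocity command. The sketch makes several assumptions that are not stated in the text: the STOP command is modeled as zero velocity, the attenuation factor defaults to a placeholder value of 0.5, and the function and parameter names are hypothetical.

```python
def velocity_command(state, cruise_speed, attenuation=0.5):
    """Map a discrimination state to an (action, velocity) pair.

    state: "Real", "Uncertain", or "Pseudo".
    cruise_speed: preset cruise speed in m/s.
    attenuation: velocity attenuation factor in (0, 1); 0.5 is a placeholder.
    """
    if not 0.0 < attenuation < 1.0:
        raise ValueError("attenuation must lie strictly between 0 and 1")
    if state == "Real":
        return ("STOP", 0.0)  # assumed: emergency braking commands zero velocity
    if state == "Uncertain":
        return ("SLOW_DOWN", attenuation * cruise_speed)
    if state == "Pseudo":
        return ("GO", cruise_speed)
    raise ValueError(f"unknown state: {state!r}")


# Example with a hypothetical cruise speed of 1.5 m/s.
for s in ("Real", "Uncertain", "Pseudo"):
    print(s, velocity_command(s, cruise_speed=1.5))
```

Keeping the mapping in one function makes the priority explicit: only a verified real obstacle stops the vehicle, while uncertain targets buy observation time at reduced speed.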

3.6.2. Experimental Visualization Analysis

3.7. System-Level Performance Evaluation and Verification

3.7.1. Experimental Environment and Scheme Design

- Group A (Baseline Group): Uses the baseline YOLOv8n detector combined with conventional obstacle avoidance logic. In this configuration, the system triggers emergency braking as soon as any target is detected within the safety zone.

- Group B (Improved Group): Uses the improved detection algorithm presented in this paper, integrated with the pseudo-obstacle discrimination logic and the graded fault-tolerant response strategy.

3.7.2. Comprehensive Performance Index Analysis

4. Summary and Outlook

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

1. Fragapane, G.; De Koster, R.; Sgarbossa, F.; Strandhagen, J.O. Planning and control of autonomous mobile robots for intralogistics: Literature review and research agenda. Eur. J. Oper. Res. 2021, 294, 405–426.

2. Vlachos, I.; Pascazzi, R.M.; Ntotis, M.; Mykoniatis, K. Smart and flexible manufacturing systems using Autonomous Guided Vehicles (AGVs) and the Internet of Things (IoT). Int. J. Prod. Res. 2024, 62, 5574–5595.

3. Damodaran, D.; Mozaffari, S.; Alirezaee, S.; Paiva, A.L.S. Experimental analysis of the behavior of mirror-like objects in LiDAR-based robot navigation. Appl. Sci. 2023, 13, 2908.

4. Grewal, R.; Tonella, P.; Stocco, A. Predicting safety misbehaviours in autonomous driving systems using uncertainty quantification. In Proceedings of the 2024 IEEE Conference on Software Testing, Verification and Validation (ICST), Toronto, ON, Canada, 27–31 May 2024; pp. 70–81.

5. Yuan, C.; Liu, J.; Wang, Y. Research on indoor positioning and navigation method of AGV based on multi-sensor fusion. Highlights Sci. Eng. Technol. 2022, 7, 206–213.

6. Song, D.; Tian, G.M.; Liu, J. Real-time localization measure and perception detection using multi-sensor fusion for Automated Guided Vehicles. In Proceedings of the 2021 40th Chinese Control Conference (CCC), Shanghai, China, 26–28 July 2021; pp. 3219–3224.

7. Zhou, S.; Cheng, G.; Meng, Q.; Chen, G. Development of multi-sensor information fusion and AGV navigation system. In Proceedings of the 2020 IEEE 4th Information Technology, Networking, Electronic and Automation Control Conference (ITNEC), Chongqing, China, 12–14 June 2020; Volume 1, pp. 2043–2046.

8. Geiger, A.; Lenz, P.; Stiller, C.; Urtasun, R. Vision meets robotics: The KITTI dataset. Int. J. Robot. Res. 2013, 32, 1231–1237.

9. Yang, D.; Su, C.; Wu, H.; Zheng, Y. Shelter identification for shelter-transporting AGV based on improved target detection model YOLOv5. IEEE Access 2022, 10, 119132–119139.

10. Qian, L.; Zheng, Y.; Cao, J.; Li, Z. Lightweight ship target detection algorithm based on improved YOLOv5s. J. Real-Time Image Process. 2024, 21, 3.

11. Vassilev, A.; Hasan, M.; Griffor, E.; Hany, J. On the Assessment of Sensitivity of Autonomous Vehicle Perception. arXiv 2026, arXiv:2602.00314.

12. Li, W.; Qiu, J. An improved autonomous emergency braking algorithm for AGVs: Enhancing operational smoothness through multi-stage deceleration. Sensors 2025, 25, 2041.

13. Han, J.; Zhang, J.; Lv, C.; Wang, H. Robust fault tolerant path tracking control for intelligent vehicle under steering system faults. IEEE Trans. Intell. Veh. 2024.

14. He, K.; Zhang, X.; Ren, S.; Sun, J. Deep residual learning for image recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Las Vegas, NV, USA, 27–30 June 2016; pp. 770–778.

15. Han, K.; Wang, Y.; Tian, Q.; Guo, J.; Xu, C.; Xu, C. GhostNet: More features from cheap operations. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 13–19 June 2020; pp. 1580–1589.

16. Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 7132–7141.

17. Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2117–2125.

18. Yang, L.; Zhang, R.Y.; Li, L.; Xie, X. SimAM: A simple, parameter-free attention module for convolutional neural networks. In Proceedings of the International Conference on Machine Learning (ICML), Online, 18–24 July 2021; pp. 11863–11874.

19. Woo, S.; Park, J.; Lee, J.Y.; Kweon, I.S. CBAM: Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), Munich, Germany, 8–14 September 2018; pp. 3–19.

20. Cui, Y.; Chen, R.; Chu, W.; Chen, L.; Tian, D.; Li, Y.; Cao, D. Deep learning for image and point cloud fusion in autonomous driving: A review. IEEE Trans. Intell. Transp. Syst. 2021, 23, 722–739.

21. Wojke, N.; Bewley, A.; Paulus, D. Simple online and realtime tracking with a deep association metric. In Proceedings of the 2017 IEEE International Conference on Image Processing (ICIP), Beijing, China, 17–20 September 2017; pp. 3645–3649.

22. Kendall, A.; Gal, Y. What uncertainties do we need in Bayesian deep learning for computer vision? Adv. Neural Inf. Process. Syst. 2017, 30, 5574–5584.

23. Van Horn, G.; Perona, P. The devil is in the tails: Fine-grained classification in the wild. arXiv 2017, arXiv:1709.01450.

24. Bochkovskiy, A.; Wang, C.Y.; Liao, H.Y.M. YOLOv4: Optimal speed and accuracy of object detection. arXiv 2020, arXiv:2004.10934.

25. Tzutalin. LabelImg. Available online: https://github.com/tzutalin/labelImg (accessed on 20 May 2024).

26. Pan, S.J.; Yang, Q. A survey on transfer learning. IEEE Trans. Knowl. Data Eng. 2009, 22, 1345–1359.

27. Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common objects in context. In Proceedings of the European Conference on Computer Vision (ECCV), Zurich, Switzerland, 6–12 September 2014; pp. 740–755.

28. Feng, D.; Haase-Schütz, C.; Rosenbaum, L.; Hertlein, H.; Glaeser, C.; Timm, F.; Dietmayer, K. Deep multi-modal object detection and semantic segmentation for autonomous driving: Datasets, methods, and challenges. IEEE Trans. Intell. Transp. Syst. 2020, 22, 1341–1360.

29. Yan, R.; Dunnett, S.J.; Jackson, L.M. Model-based research for aiding decision-making during the design and operation of multi-load automated guided vehicle systems. Reliab. Eng. Syst. Saf. 2022, 219, 108264.

| Category | Component | Core Parameters/Specifications |
|---|---|---|
| Hardware Platform | AGV Chassis | Industrial latent AGV (dual-wheel differential, 500 kg, 1.5 m/s) |
| | LiDAR | Hokuyo UST-10LX ( scanning, ±30 mm accuracy) |
| | Depth Camera | Intel RealSense D435i |
| | Edge Computing | NVIDIA Jetson AGX Orin (2048 CUDA cores, 32 GB RAM) |
| | Dispatch Server | Intel Xeon Gold (multi-machine dispatching and data storage) |
| Software Environment | Operating System | Ubuntu 20.04 LTS |
| | Middleware | ROS Noetic/TensorRT |
| | Language | Python 3.8/PyTorch 2.0/CUDA 12.1 |
| Algorithm Training | Training Hardware | NVIDIA RTX A6000 (48 GB VRAM) workstation |
| | Input Size | pixels |
| | Optimizer | SGD (batch size = 4, epochs = 200) |
| | Hyperparameters | Momentum, weight decay, etc., follow the official YOLOv8 configuration |

| Exp | YOLOv8 | AG-FPN | DHA | P | R | mAP@0.5 | mAP@0.5:0.95 | Params (M) | FLOPs (G) | FPS |
|---|---|---|---|---|---|---|---|---|---|---|
| 1 | ✓ | | | 0.920 | 0.802 | 0.912 | 0.690 | 3.01 | 8.1 | 330 |
| 2 | ✓ | ✓ | | 0.926 | 0.815 | 0.921 | 0.702 | 3.16 | 8.4 | 315 |
| 3 | ✓ | | ✓ | 0.931 | 0.820 | 0.928 | 0.715 | 3.46 | 9.0 | 295 |
| 4 | ✓ | ✓ | ✓ | 0.945 | 0.835 | 0.939 | 0.732 | 3.62 | 9.5 | 280 |

| Model | P | R | mAP@0.5 | mAP@0.5:0.95 | Params (M) | FLOPs (G) | FPS |
|---|---|---|---|---|---|---|---|
| YOLOv5n | 0.882 | 0.744 | 0.854 | 0.582 | 1.90 | 4.5 | 450 |
| YOLOv8n | 0.920 | 0.802 | 0.912 | 0.690 | 3.01 | 8.1 | 330 |
| YOLOv9n | 0.925 | 0.810 | 0.915 | 0.695 | 2.15 | 7.8 | 395 |
| YOLOv10n | 0.932 | 0.812 | 0.918 | 0.698 | 2.30 | 6.4 | 385 |
| YOLOv11n | 0.935 | 0.818 | 0.925 | 0.702 | 2.60 | 6.5 | 360 |
| Ours | 0.945 | 0.835 | 0.939 | 0.732 | 3.62 | 9.5 | 280 |
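The accuracy and speed trade-off in the comparison above can be made explicit with a short calculation. The sketch below uses only the mAP@0.5 and FPS values reported for YOLOv8n and the proposed model. The per-frame latency is derived as 1000/FPS, which assumes that the reported FPS reflects single-stream inference; the function names are illustrative.

```python
# (mAP@0.5, FPS) pairs copied from the comparison table.
RESULTS = {
    "YOLOv8n": (0.912, 330),
    "Ours": (0.939, 280),
}


def latency_ms(fps):
    """Per-frame latency implied by a throughput figure (assumes one stream)."""
    return 1000.0 / fps


def trade_off(baseline, candidate):
    """Return (mAP gain, relative FPS drop in %, added latency in ms)."""
    base_map, base_fps = RESULTS[baseline]
    cand_map, cand_fps = RESULTS[candidate]
    map_gain = cand_map - base_map
    fps_drop_pct = (base_fps - cand_fps) / base_fps * 100.0
    added_latency = latency_ms(cand_fps) - latency_ms(base_fps)
    return map_gain, fps_drop_pct, added_latency


gain, drop, extra = trade_off("YOLOv8n", "Ours")
print(f"mAP@0.5 gain: {gain:.3f}")
print(f"FPS drop: {drop:.1f}%")
print(f"added latency: {extra:.2f} ms")
```

On these figures the proposed model gains about 0.027 mAP@0.5 over YOLOv8n at the cost of roughly 15% lower throughput, or about half a millisecond of additional latency per frame.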

| Performance Dimension | Evaluation Metrics | Group A | Group B | Improvement |
|---|---|---|---|---|
| Perception Accuracy | Fence Recognition Accuracy | 92.4% | 96.7% | ↑ 4.3 percentage points |
| | Mirror Recognition Accuracy | 76.5% | 89.6% | ↑ 13.1 percentage points |
| Operational Continuity | FSR | 23.7% | 2.1% | ↓ 91.1% |
| | CR | 45.2 m | 406.8 m | ↑ 8.99 times |
| Operational Efficiency | APT | 148.5 s | 112.4 s | ↓ 24.3% |
| | Daily Cumulative Delay | 13.6 h | 8.6 h | ↓ 5.0 h |
| | Overall System Pass Efficiency | / | / | ↑ 36.8% |
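The improvement column of the system-level results can be cross-checked from the Group A and Group B values. The following sketch recomputes each figure; the helper name is illustrative, and the interpretation of the 36.8% figure as the relative reduction in daily cumulative delay is an inference from the numbers, not a statement from the paper.

```python
def relative_change_pct(before, after):
    """Signed relative change from before to after, in percent."""
    return (after - before) / before * 100.0


fence_gain_pp = 96.7 - 92.4                         # percentage points
mirror_gain_pp = 89.6 - 76.5                        # percentage points
fsr_change = relative_change_pct(23.7, 2.1)         # FSR, percent
cr_ratio = 406.8 / 45.2                             # CR, Group B over Group A
apt_change = relative_change_pct(148.5, 112.4)      # APT, percent
delay_saved_h = 13.6 - 8.6                          # hours per day
delay_change = relative_change_pct(13.6, 8.6)       # percent

print(f"fence: +{fence_gain_pp:.1f} pp, mirror: +{mirror_gain_pp:.1f} pp")
print(f"FSR: {fsr_change:.1f}%, CR ratio: {cr_ratio:.2f}x")
print(f"APT: {apt_change:.1f}%, delay: -{delay_saved_h:.1f} h ({delay_change:.1f}%)")
```

The recomputed values agree with the table after rounding: FSR falls by about 91.1%, APT by about 24.3%, and the daily delay by 5.0 h. The CR ratio from the rounded table entries is about 9.0, close to the reported 8.99, and the delay reduction of about 36.8% matches the overall pass efficiency figure.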

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.