Submitted: 16 April 2026

Posted: 21 April 2026


Abstract

Keywords:

1. Introduction

Structure of This Position Paper:

Limitations:

2. Edge Weight Adjustment

(1) Fixed/rule-based adjustment.

(2) Learned adjustment.

(3) Using given edge weights.

Unified view.

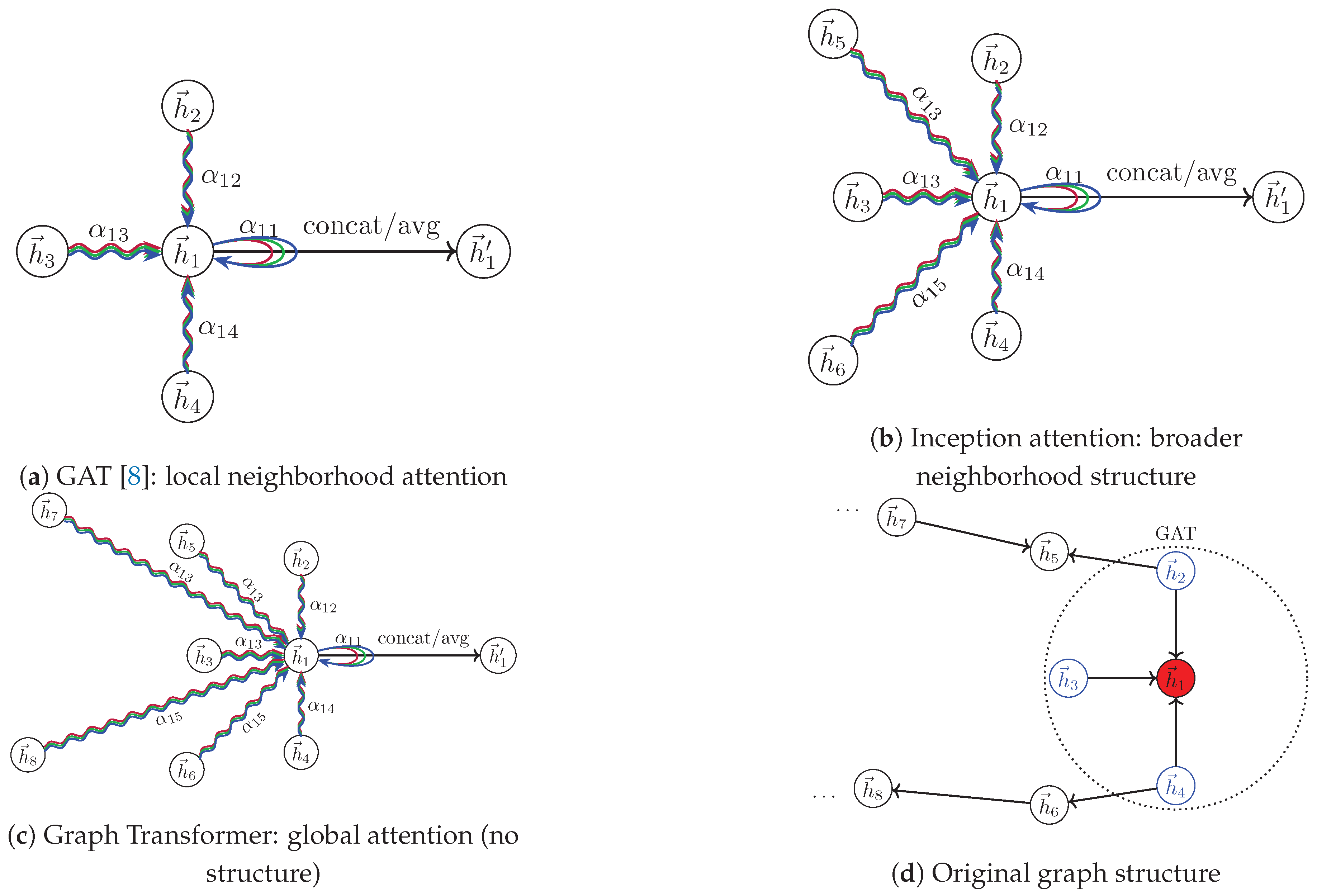
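One way to read the unified view is that items (1) to (3) differ only in where the weight $w_{ij}$ of an edge $j \to i$ comes from in a weighted-sum aggregation $h_i' = \sum_{j \in \mathcal{N}(i)} w_{ij} h_j$. The sketch below illustrates that reading under stated assumptions: a plain weighted sum with no feature transform or nonlinearity, a GCN-style $1/\sqrt{d_i d_j}$ rule as the example of a fixed rule, and a toy scaled dot product with one trainable scalar standing in for a learned scorer. All function names are hypothetical and are not taken from the paper's code.

```python
import math
from typing import Callable, Dict, Iterable, List, Tuple

Edge = Tuple[int, int]  # (j, i): a message flows from source j to target i
Vector = List[float]
WeightFn = Callable[[int, int], float]


def aggregate(h: Dict[int, Vector], edges: List[Edge], weight: WeightFn) -> Dict[int, Vector]:
    """Weighted-sum message passing: h_i' = sum over edges (j, i) of w_ij * h_j."""
    dim = len(next(iter(h.values())))
    out = {v: [0.0] * dim for v in h}
    for j, i in edges:
        w = weight(j, i)
        for k in range(dim):
            out[i][k] += w * h[j][k]
    return out


def degrees(edges: List[Edge], nodes: Iterable[int]) -> Dict[int, int]:
    """Incoming-edge count per node (the degree, for an undirected graph
    stored with both edge directions)."""
    deg = {v: 0 for v in nodes}
    for _, i in edges:
        deg[i] += 1
    return deg


# (1) Fixed/rule-based: the weight depends on structure only, here the
#     GCN-style rule w_ij = 1 / sqrt(d_i * d_j). Assumes every node has at
#     least one incoming edge (true for an undirected graph without isolates).
def rule_based_weights(edges: List[Edge], nodes: Iterable[int]) -> WeightFn:
    deg = degrees(edges, nodes)
    return lambda j, i: 1.0 / math.sqrt(deg[i] * deg[j])


# (2) Learned: the weight is a parametrised score of the endpoint features.
#     Hypothetical toy scorer: theta * <h_i, h_j> with one trainable scalar.
def learned_weights(h: Dict[int, Vector], theta: float) -> WeightFn:
    return lambda j, i: theta * sum(a * b for a, b in zip(h[i], h[j]))


# (3) Given: weights supplied with the dataset and used as-is.
def given_weights(edge_attr: Dict[Edge, float]) -> WeightFn:
    return lambda j, i: edge_attr[(j, i)]


if __name__ == "__main__":
    h = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
    edges = [(0, 1), (1, 0), (1, 2), (2, 1)]  # undirected path 0 - 1 - 2
    print(aggregate(h, edges, rule_based_weights(edges, h.keys())))
    print(aggregate(h, edges, given_weights({e: 1.0 for e in edges})))
```

Only the `weight` argument changes between the three cases; `aggregate` itself is shared, which is the sense in which the three categories collapse into one view here.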

3. Attention of GAT Model

3.1. GAT as Learned Edge Weights

3.2. Attention in GAT is Dispensable

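- Step 1: Computation of the learnable weights $e_{ij} = \mathbf{a}^\top [\mathbf{W}\mathbf{h}_i \,\|\, \mathbf{W}\mathbf{h}_j]$;

- Step 2: Application of the LeakyReLU activation, $\mathrm{LeakyReLU}(e_{ij})$;

- Step 3: Softmax normalization to obtain $\alpha_{ij} = \operatorname{softmax}_{j \in \mathcal{N}(i)}\big(\mathrm{LeakyReLU}(e_{ij})\big)$.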
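The three steps can be sketched as follows, assuming the standard GAT formulation of Veličković et al. [8]: a shared linear map W, an attention vector a applied to the concatenation of the two projected features, LeakyReLU with negative slope 0.2, and self-loops included in each neighbourhood. This is a minimal single-head illustration with hypothetical names; it is not the PyTorch Geometric GATConv implementation [39].

```python
import math
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def matvec(W: Matrix, x: Vector) -> Vector:
    """Dense matrix-vector product W x."""
    return [sum(w * v for w, v in zip(row, x)) for row in W]


def leaky_relu(x: float, slope: float = 0.2) -> float:
    return x if x > 0 else slope * x


def gat_attention(h: Dict[int, Vector], neighbors: Dict[int, List[int]],
                  W: Matrix, a: Vector) -> Dict[int, Dict[int, float]]:
    """Return alpha[i][j], the GAT coefficient of neighbour j for target node i."""
    z = {v: matvec(W, h[v]) for v in h}  # shared projection z_v = W h_v
    alpha: Dict[int, Dict[int, float]] = {}
    for i, nbrs in neighbors.items():
        # Step 1: learnable score a^T [z_i || z_j] (list + list concatenates).
        raw = {j: sum(ak * xk for ak, xk in zip(a, z[i] + z[j])) for j in nbrs}
        # Step 2: LeakyReLU non-linearity.
        e = {j: leaky_relu(s) for j, s in raw.items()}
        # Step 3: softmax over N(i), shifted by the max for numerical stability.
        m = max(e.values())
        ex = {j: math.exp(s - m) for j, s in e.items()}
        total = sum(ex.values())
        alpha[i] = {j: v / total for j, v in ex.items()}
    return alpha


if __name__ == "__main__":
    h = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
    nbrs = {0: [0, 1], 1: [0, 1, 2], 2: [1, 2]}  # self-loops included
    identity = [[1.0, 0.0], [0.0, 1.0]]
    print(gat_attention(h, nbrs, identity, [0.0, 0.0, 1.0, 0.0]))
```

With a zero attention vector every score is equal and the softmax returns $1/|\mathcal{N}(i)|$ for each neighbour, i.e. plain mean aggregation; any departure from uniform weights comes entirely from the learned W and a.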

3.3. Attention is Dispensable for Extended Neighborhoods

3.4. Why Attention Can Fail in GAT and Inception Models

4. Graph Transformers

4.1. Graph Transformers as Learned Edge Weights
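To make the section title concrete, the sketch below assumes single-head scaled dot-product attention [4] over all nodes, with no positional or structural encodings. It shows that the attention matrix of a global Graph Transformer is a dense, learned, row-normalized edge-weight matrix on the complete graph, which is then used for one round of message passing. Function names are hypothetical.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(A: Matrix, B: Matrix) -> Matrix:
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def softmax_rows(S: Matrix) -> Matrix:
    out = []
    for row in S:
        m = max(row)
        ex = [math.exp(s - m) for s in row]
        total = sum(ex)
        out.append([e / total for e in ex])
    return out


def global_attention_weights(X: Matrix, Wq: Matrix, Wk: Matrix) -> Matrix:
    """A[i][j] = softmax_j(q_i . k_j / sqrt(d)) over ALL nodes j.

    The result is a dense, row-stochastic, learned edge-weight matrix on the
    complete graph: every pair (i, j) gets a weight, whether or not (i, j)
    is an edge of the input graph.
    """
    Q, K = matmul(X, Wq), matmul(X, Wk)
    d = len(Q[0])
    scores = [[sum(q * k for q, k in zip(qi, kj)) / math.sqrt(d) for kj in K] for qi in Q]
    return softmax_rows(scores)


def propagate(A: Matrix, X: Matrix, Wv: Matrix) -> Matrix:
    """Message passing with the learned dense weights: H = A (X Wv)."""
    return matmul(A, matmul(X, Wv))


if __name__ == "__main__":
    X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    I = [[1.0, 0.0], [0.0, 1.0]]
    A = global_attention_weights(X, I, I)
    print(A)
    print(propagate(A, X, I))
```

Restricting each row of the softmax to the input graph's neighbours would turn this back into local, GAT-style attention; the only structural difference is which pairs are allowed to carry a learned weight.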

4.1.1. Optimism in Graph Transformers

4.1.2. Pessimism in Graph Transformers

4.2. Why GT Fails

From Local to Global: Amplifying a Flawed Premise

4.2.1. Limitation of Gradient Descent

4.2.2. Language vs. Graph: Fundamental Mismatch

Structure Enhancement vs. Destruction:

Semantic Entanglement vs. Local Relevance:

5. Alternative Views

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A. Normalizations

No Normalization

Row Normalization

Symmetric Normalization

Directed Normalization

Softmax Normalization
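The five schemes named in the result tables (None, Row, Sym, Dir, Softmax) can be sketched on a dense weighted adjacency matrix as below. This is a minimal sketch under stated assumptions, not the authors' code: A[i][j] > 0 marks an edge and is its (positive) weight, "sym" uses the row-sum degree on both sides ($D_r^{-1/2} A D_r^{-1/2}$), "dir" uses row sums on the left and column sums on the right ($D_r^{-1/2} A D_c^{-1/2}$, similar in spirit to the directed normalization of Dir-GNN [19]), and "softmax" is taken row-wise over existing edges only.

```python
import math
from typing import List

Matrix = List[List[float]]


def _inv_sqrt(x: float) -> float:
    return 1.0 / math.sqrt(x) if x > 0 else 0.0


def normalize(A: Matrix, mode: str) -> Matrix:
    """Normalize a dense weighted adjacency A (A[i][j] > 0 marks an edge).

    mode: "none" | "row" | "sym" | "dir" | "softmax"
    """
    n = len(A)
    r = [sum(row) for row in A]          # row sums
    c = [sum(col) for col in zip(*A)]    # column sums
    if mode == "none":                   # raw weights, no rescaling
        return [row[:] for row in A]
    if mode == "row":                    # D_r^{-1} A
        return [[a / r[i] if r[i] > 0 else 0.0 for a in A[i]] for i in range(n)]
    if mode == "sym":                    # D_r^{-1/2} A D_r^{-1/2}
        return [[A[i][j] * _inv_sqrt(r[i]) * _inv_sqrt(r[j]) for j in range(n)]
                for i in range(n)]
    if mode == "dir":                    # D_r^{-1/2} A D_c^{-1/2}
        return [[A[i][j] * _inv_sqrt(r[i]) * _inv_sqrt(c[j]) for j in range(n)]
                for i in range(n)]
    if mode == "softmax":                # row-wise softmax over existing edges only
        out = []
        for row in A:
            present = [a for a in row if a > 0]
            if not present:
                out.append([0.0] * n)
                continue
            m = max(present)
            z = sum(math.exp(a - m) for a in present)
            out.append([math.exp(a - m) / z if a > 0 else 0.0 for a in row])
        return out
    raise ValueError(f"unknown normalization: {mode}")


if __name__ == "__main__":
    A = [[0.0, 1.0, 1.0], [1.0, 0.0, 0.0], [1.0, 1.0, 0.0]]  # small directed graph
    for mode in ("none", "row", "sym", "dir", "softmax"):
        print(mode, normalize(A, mode))
```

Two consequences follow directly from these definitions: with uniform (all-ones) weights, "softmax" and "row" coincide, and on a symmetric matrix "sym" and "dir" coincide. The schemes therefore only separate once the weights are non-uniform or the edges are directed.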

| Dataset | #Nodes (#Train) | #Edges | #Features | #Classes |
|---|---|---|---|---|
| CoraML | 2995 (140) | 8416 | 2879 | 7 |
| CiteSeer | 3312 (120) | 4715 | 3703 | 6 |
| Telegram | 245 (145) | 8912 | 1 | 4 |
| WikiCS | 11701 (580) | 297110 | 300 | 10 |
| Coauthor-CS | 18333 (8793) | 182121 | 6805 | 15 |
| Coauthor-Physics | 34493 (16555) | 495924 | 8415 | 5 |
| PubMed | 19717 (60) | 88648 | 500 | 3 |
| Amazon-Photo | 7650 (3669) | 238162 | 745 | 8 |
| Amazon-Computer | 13752 (6595) | 491722 | 767 | 10 |

Appendix B. Implementation Details

Appendix B.1. Datasets

- Citation networks. CiteSeer, CoraML and PubMed are citation graphs; WikiCS, a hyperlink graph of Wikipedia computer-science articles, is grouped with them here. For CiteSeer and CoraML, we use the splits specified in the DiGCN(ib) paper [24]. For the WikiCS dataset, the splits are described in […]. PubMed is obtained from the Deep Graph Library (DGL) and treated as an undirected citation network. In the citation datasets, nodes represent papers and edges denote citation relationships. Node features are bag-of-words representations, and labels correspond to paper topics. For PubMed, we generate 10 random splits following the same protocol as CiteSeer and CoraML: 20 labeled nodes per class for training, 30 per class for validation, and the remaining nodes for testing.

- Social network. Telegram is a directed social network dataset from MagNet [38].

- Coauthor networks. Coauthor-CS and Coauthor-Physics (denoted as CS and Physics in the main paper) are co-authorship graphs derived from the Microsoft Academic Graph released for the KDD Cup 2016 challenge. Nodes represent authors, edges indicate co-authorship relations, node features aggregate keywords from each author’s papers, and labels correspond to the author’s primary field of study.

- Co-purchasing networks. Amazon-Computer and Amazon-Photo (denoted as Computer and Photo in the main paper) are co-purchase graphs introduced in Shchur et al. [10]. Nodes represent products, edges connect frequently co-purchased items, node features are bag-of-words representations of product reviews, and labels indicate product categories.
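The PubMed split protocol described for the citation networks can be sketched as follows. Using Python's `random` module with seeds 0 to 9 is an assumption for illustration; the paper does not state how its ten splits were seeded. With PubMed's three classes the protocol yields 60 training nodes, matching the training count listed for PubMed in the dataset table.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple

Split = Tuple[List[int], List[int], List[int]]


def per_class_split(labels: Sequence[int], n_train: int = 20, n_val: int = 30,
                    seed: int = 0) -> Split:
    """n_train and n_val random nodes per class; all remaining nodes are test.

    Assumes every class has at least n_train + n_val nodes.
    """
    rng = random.Random(seed)
    by_class: Dict[int, List[int]] = defaultdict(list)
    for node, y in enumerate(labels):
        by_class[y].append(node)
    train: List[int] = []
    val: List[int] = []
    test: List[int] = []
    for y in sorted(by_class):          # fixed class order keeps seeds reproducible
        nodes = by_class[y][:]
        rng.shuffle(nodes)
        train += nodes[:n_train]
        val += nodes[n_train:n_train + n_val]
        test += nodes[n_train + n_val:]
    return sorted(train), sorted(val), sorted(test)


def make_splits(labels: Sequence[int], num_splits: int = 10) -> List[Split]:
    """Hypothetical helper: one split per seed 0 .. num_splits - 1."""
    return [per_class_split(labels, seed=s) for s in range(num_splits)]


if __name__ == "__main__":
    toy_labels = [i % 3 for i in range(300)]  # 3 classes, 100 nodes each
    tr, va, te = per_class_split(toy_labels)
    print(len(tr), len(va), len(te))
```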

Appendix B.2. Code and Hyperparameters

GATConv Variants Implementation.
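The variant tables in Appendices C to E cross three sources of edge weights (Attention, Uniform, Random) with the normalization schemes of Appendix A. A minimal sketch of the weight-source side is given below. The helpers `edge_scores` and `softmax_per_target` are hypothetical and are not part of the PyTorch Geometric GATConv API [39]; random scores are assumed to be drawn once from U(0, 1) and kept fixed, which the paper does not specify.

```python
import math
import random
from collections import defaultdict
from typing import Callable, Dict, List, Optional, Tuple

Edge = Tuple[int, int]  # (j, i): message from source j to target i


def edge_scores(edges: List[Edge], source: str,
                attn: Optional[Callable[[int, int], float]] = None,
                seed: int = 0) -> Dict[Edge, float]:
    """Unnormalized per-edge scores for the three weight sources."""
    if source == "attention":  # learned score, e.g. LeakyReLU(a^T [W h_i || W h_j])
        if attn is None:
            raise ValueError("the attention source needs a scoring function")
        return {e: attn(*e) for e in edges}
    if source == "uniform":    # every edge gets the same score
        return {e: 1.0 for e in edges}
    if source == "random":     # assumption: U(0, 1) scores drawn once, never trained
        rng = random.Random(seed)
        return {e: rng.random() for e in edges}
    raise ValueError(f"unknown weight source: {source}")


def softmax_per_target(scores: Dict[Edge, float]) -> Dict[Edge, float]:
    """GAT's default normalization: softmax over the incoming edges of each target."""
    groups: Dict[int, List[Edge]] = defaultdict(list)
    for e in scores:
        groups[e[1]].append(e)
    out: Dict[Edge, float] = {}
    for es in groups.values():
        m = max(scores[e] for e in es)
        z = sum(math.exp(scores[e] - m) for e in es)
        for e in es:
            out[e] = math.exp(scores[e] - m) / z
    return out


if __name__ == "__main__":
    edges = [(0, 1), (2, 1), (1, 0), (1, 2), (0, 2)]
    for src in ("uniform", "random"):
        print(src, softmax_per_target(edge_scores(edges, src)))
```

Any of the other normalizations can take the place of `softmax_per_target`. Uniform scores followed by a per-target softmax reduce to mean aggregation over incoming edges, so the Uniform rows act as an attention-free baseline.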

GAT Variants

| Dataset | Layer | Learning Rate | Heads |
|---|---|---|---|
| Coauthor-CS | 2 | 0.005 | 1, 2, 8 |
| Coauthor-Physics | 2 | 0.005 | 1, 2, 8 |
| PubMed | 2 | 0.005 | 1, 2, 8 |
| Amazon-Photo | 2, 3 | 0.005 | 1, 2, 4 |
| Amazon-Computer | 2, 3 | 0.005 | 1, 2, 8 |
| WikiCS | 2 | 0.005 | 1, 2, 4 |
| Telegram | 1, 2, 3, 4, 5 | 0.01, 0.005 | 1, 16 |
| ArXiv-Year [40] | 3 | 0.005 | 1, 8 |
| Roman-Empire [40] | 2 | 0.005 | 1, 8 |
| Chameleon [41] | 2 | 0.005 | 1, 8 |
| Squirrel [41] | 2 | 0.005 | 1, 8 |

DiGib Variants

| Dataset | Layer | Learning Rate | Heads |
|---|---|---|---|
| CoraML | 3 | 0.01 | 1, 8, 16 |
| CiteSeer | 3 | 0.01 | 1, 8, 16 |
| Telegram | 1-5 | 0.005, 0.01 | 1, 8, 16 |
| WikiCS | 2, 3 | 0.005 | 1, 2 |
| Amazon-Photo | 2, 3 | 0.005 | 1 |
| PubMed | 2 | 0.005 | 1, 8 |
| Coauthor-CS | 2 | 0.005 | 1 |
| Coauthor-Physics | 2 | 0.005 | 1 |
| Roman-Empire [40] | 2 | 0.005 | 1, 8 |
| Chameleon [41] | 2 | 0.005 | 1, 8 |
| Squirrel [41] | 2 | 0.005 | 1, 8 |

Appendix C. Experiments on Sensitivity

| Datasets | Layer | Heads | Softmax | Dir | Sym | Row | None |
|---|---|---|---|---|---|---|---|
| Coauthor-CS | 2 | 1 | 93.1±0.1 | 92.8±0.2 | 92.5±0.2 | 92.2±0.3 | 92.0±0.4 |
| | 2 | 2 | 93.3±0.2 | 92.9±0.2 | 92.6±0.2 | 92.4±0.2 | 92.5±0.5 |
| | 2 | 8 | 93.1±0.2 | 93.3±0.3 | 93.3±0.2 | 93.0±0.1 | 92.5±0.5 |
| Coauthor-Physics | 2 | 1 | 96.0±0.1 | 96.0±0.1 | 96.0±0.1 | 95.9±0.1 | 95.4±0.4 |
| | 2 | 2 | 96.0±0.1 | 96.1±0.1 | 96.1±0.1 | 95.9±0.1 | 95.6±0.2 |
| | 2 | 4 | 96.0±0.1 | 96.1±0.1 | 96.1±0.1 | 96.0±0.1 | 95.6±0.3 |
| | 2 | 8 | 96.1±0.1 | 96.2±0.1 | 96.2±0.2 | 96.0±0.1 | 95.8±0.2 |
| PubMed | 2 | 1 | 74.8±1.0 | 74.4±1.1 | 73.8±1.0 | 74.1±0.8 | 67.3±2.1 |
| | 2 | 8 | 74.9±1.4 | 74.7±0.7 | 74.8±0.7 | 74.5±1.3 | 68.7±2.5 |
| Amazon-Photo | 2 | 1 | 93.9±0.4 | 93.7±0.5 | 93.9±0.3 | 93.8±0.2 | 93.1±0.3 |
| | 2 | 2 | 94.0±0.3 | 93.8±0.2 | 94.0±0.4 | 94.0±0.3 | 93.1±0.4 |
| | 2 | 4 | 93.9±0.4 | 93.7±0.3 | 93.7±0.4 | 94.0±0.3 | 93.2±0.4 |
| Amazon-Computer | 2 | 1 | 90.7±0.2 | 90.5±0.2 | 90.7±0.3 | 90.6±0.3 | 89.0±1.1 |
| | 2 | 2 | 90.7±0.2 | 90.4±0.3 | 90.8±0.1 | 90.5±0.3 | 89.4±0.5 |
| | 3 | 1 | 90.6±0.3 | 90.7±0.2 | 90.8±0.3 | 90.9±0.3 | 82.2±16.9 |
| | 3 | 2 | 90.7±0.3 | 90.5±0.3 | 91.0±0.3 | 90.8±0.3 | 88.7±0.9 |
| WikiCS (Undirected) | 2 | 1 | 78.5±0.9 | 78.3±0.9 | 78.4±1.1 | 78.5±0.9 | 75.3±1.2 |
| | 2 | 2 | 78.7±1.0 | 78.2±0.9 | 78.5±0.8 | 78.4±0.8 | 75.0±0.9 |
| | 2 | 4 | 78.9±0.9 | 78.5±0.9 | 78.4±0.8 | 78.3±1.0 | 75.3±0.7 |
| Telegram | 3 | 1 | 78.2±9.4 | 83.6±5.3 | 86.4±5.2 | 67.6±6.2 | 90.8±3.6 |
| | 3 | 16 | 77.6±6.3 | 86.0±6.7 | 87.8±5.8 | 77.6±10.9 | 90.6±4.5 |

Appendix D. Experiments on Heterophilic Graphs

| Datasets | Layer | Heads | Dir | Sym | Row | Softmax | None |
|---|---|---|---|---|---|---|---|
| CoraML | 3 | 1 | 81.7±1.5 | 81.8±1.5 | 81.5±1.5 | 81.0±1.7 | 27.7±3.3 |
| | 3 | 8 | 82.0±1.4 | 82.2±1.5 | 81.8±1.4 | 81.0±1.6 | 43.8±3.5 |
| | 3 | 16 | 81.8±1.5 | 81.6±1.8 | 81.7±1.4 | 80.9±1.7 | 46.0±4.6 |
| CiteSeer | 3 | 1 | 66.3±1.8 | 66.2±1.8 | 66.4±1.7 | 66.4±1.9 | 34.1±3.3 |
| | 3 | 8 | 66.5±1.9 | 66.3±1.9 | 66.0±1.6 | 66.2±1.7 | 37.6±2.6 |
| | 3 | 16 | 66.8±2.4 | 66.5±2.4 | 66.8±1.6 | 66.6±1.6 | 39.6±3.6 |
| WikiCS | 2, 3 | 1 | 79.7±0.5 | 79.7±0.5 | 80.6±0.6 | 80.7±0.5 | 37.8±4.4 |
| | 2, 3 | 8 | 79.7±0.5 | 79.1±0.6 | 80.6±0.6 | 80.6±0.5 | 26.7±2.5 |
| Telegram | 1-5 | 1 | 87.2±3.8 | 88.2±5.6 | 79.8±6.1 | 76.8±2.9 | 87.2±3.0 |
| | 1-5 | 8 | 85.4±5.7 | 87.6±5.2 | 79.0±8.1 | 81.4±3.9 | 89.8±4.6 |
| | 1-5 | 16 | 84.6±4.6 | 87.8±5.8 | 74.2±9.4 | 79.8±5.5 | 90.4±3.5 |
| Coauthor-CS | 2 | 1 | 94.3±0.2 | 93.9±0.2 | 94.0±0.2 | 94.3±0.2 | 93.9±0.2 |
| | 2 | 8 | 94.9±0.1 | 94.6±0.2 | 94.7±0.1 | 94.4±0.2 | 94.2±0.2 |
| Coauthor-Physics | 2 | 1 | 96.3±0.1 | 96.2±0.1 | 96.1±0.1 | 96.5±0.1 | 96.4±0.1 |
| | 2 | 2 | 96.4±0.1 | 96.3±0.1 | 96.3±0.1 | 96.5±0.1 | 96.3±0.2 |
| | 2 | 8 | OOM | OOM | OOM | OOM | OOM |
| PubMed | 2 | 1 | 77.0±0.8 | 76.7±1.1 | 77.0±1.1 | 76.3±2.9 | 74.6±1.1 |
| | 2 | 8 | 75.4±0.7 | 75.7±1.0 | 77.8±0.4 | 76.3±1.3 | 73.9±1.0 |
| Amazon-Photo | 2, 3 | 1 | 94.9±0.3 | 93.9±0.7 | 94.6±0.3 | 95.1±0.2 | 71.0±26.3 |
| | 2, 3 | 2 | 94.8±0.2 | 93.5±0.6 | 94.5±0.5 | 94.8±0.3 | 81.7±12.6 |
| | 2 | 8 | OOM | OOM | OOM | OOM | OOM |

Appendix E. Additional Details on Graph Transformers

(1) Encoding strategies for recovering structural information.

(2) Improving scalability.
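For item (1), one widely used encoding that reinjects structure into a Graph Transformer is the random-walk structural encoding (RWSE) discussed in GraphGPS [27]: each node receives the probabilities that a random walk started at it is back at it after 1, ..., K steps. The sketch below assumes a dense undirected adjacency matrix; `random_walk_se` is a hypothetical helper, and the sketch is not taken from any model evaluated in this paper.

```python
from typing import List

Matrix = List[List[float]]


def matmul(A: Matrix, B: Matrix) -> Matrix:
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def random_walk_se(A: Matrix, k_max: int) -> List[List[float]]:
    """enc[i][k - 1] = probability that a random walk from i is at i after k steps."""
    n = len(A)
    deg = [sum(row) for row in A]
    # Transition matrix P = D^{-1} A (isolated nodes get an all-zero row).
    P = [[A[i][j] / deg[i] if deg[i] > 0 else 0.0 for j in range(n)] for i in range(n)]
    enc: List[List[float]] = [[] for _ in range(n)]
    M = P  # current power P^k, starting at k = 1
    for _ in range(k_max):
        for i in range(n):
            enc[i].append(M[i][i])
        M = matmul(M, P)
    return enc


if __name__ == "__main__":
    triangle = [[0.0, 1.0, 1.0], [1.0, 0.0, 1.0], [1.0, 1.0, 0.0]]
    path = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
    print(random_walk_se(triangle, 3))
    print(random_walk_se(path, 3))
```

The encoding separates nodes that attention alone cannot tell apart: on the bipartite path every odd-step return probability is zero, while on the triangle the three-step return probability is positive.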

| Datasets | Weight | Heads | Softmax | Dir | Sym | Row | None |
|---|---|---|---|---|---|---|---|
| ArXiv-Year (Directed, Heterophilic) | Attention | 1 | 44.7±0.2 | 52.7±0.7 | 30.1±4.1 | 40.1±1.1 | 30.1±1.7 |
| | Attention | 8 | 43.3±0.9 | 59.2±0.5 | 36.1±0.7 | 45.8±0.6 | 36.8±2.5 |
| | Uniform | 1 | 30.0±1.7 | 50.1±0.2 | 47.4±0.2 | 29.7±1.1 | 42.9±0.1 |
| | Random | 1 | 35.3±0.2 | 48.2±0.3 | 42.5±0.3 | 29.1±0.8 | 41.5±0.2 |
| Roman-Empire (Directed, Heterophilic) | Attention | 1 | 46.4±3.7 | 55.4±2.4 | 50.5±3.1 | 43.8±3.0 | 55.0±2.4 |
| | Attention | 8 | 71.5±2.0 | 82.6±0.9 | 70.3±4.7 | 70.3±3.0 | 79.9±0.6 |
| | Uniform | 1 | 32.9±0.3 | 36.3±0.4 | 33.4±0.5 | 32.8±0.4 | 36.1±0.6 |
| | Random | 1 | 20.9±0.6 | 32.3±0.6 | 29.8±1.0 | 29.9±0.5 | 30.0±0.4 |
| Chameleon (Undirected, Heterophilic) | Attention | 1 | 67.1±1.5 | 65.4±1.7 | 66.4±2.2 | 66.0±3.5 | 59.2±5.0 |
| | Attention | 8 | 68.8±2.1 | 66.8±3.2 | 68.8±3.1 | 67.5±1.6 | 59.4±2.8 |
| | Uniform | 1 | 67.9±2.6 | 67.5±2.5 | 67.6±2.4 | 68.0±2.2 | 66.4±2.2 |
| | Random | 1 | 40.3±2.7 | 65.2±2.5 | 66.8±2.0 | 66.9±1.9 | 64.1±2.3 |
| | Random | 8 | 56.5±2.6 | 67.2±2.6 | 67.7±2.3 | 68.0±2.2 | 65.3±2.2 |
| Squirrel (Undirected, Heterophilic) | Attention | 1 | 56.1±2.1 | 55.8±0.9 | 57.2±2.1 | 55.1±2.3 | 39.9±4.5 |
| | Attention | 8 | 60.5±1.8 | 55.1±1.9 | 58.0±1.2 | 57.0±1.8 | 42.5±1.8 |
| | Uniform | 1 | 53.1±1.2 | 56.4±1.3 | 56.6±1.7 | 53.4±0.9 | 45.9±1.6 |
| | Random | 1 | 29.0±1.7 | 54.5±1.2 | 54.4±1.2 | 50.3±1.5 | 45.6±1.8 |
| | Random | 8 | 38.7±1.2 | 56.3±1.6 | 55.9±1.3 | 53.6±1.2 | 43.3±2.3 |

Summary

| Datasets | Weight | Heads | Dir | Sym | Row | Softmax | None |
|---|---|---|---|---|---|---|---|
| Roman-Empire (Directed, Heterophilic) | Attention | 1 | 80.7±0.4 | 80.3±0.6 | 78.7±0.3 | 80.3±0.4 | 79.4±0.5 |
| | Attention | 8 | 82.1±0.3 | 83.0±0.7 | 80.4±0.6 | 82.2±0.4 | 83.4±0.4 |
| | Uniform | 1 | 80.1±0.5 | 80.1±0.5 | 78.9±0.5 | 78.9±0.5 | 77.6±0.4 |
| | Random | 1 | 76.9±0.5 | 76.5±0.4 | 76.1±0.3 | 68.3±0.5 | 14.2±0.3 |
| | Random | 8 | 76.8±0.4 | 75.7±0.8 | 76.0±0.4 | 69.7±0.5 | 14.3±0.6 |
| Chameleon (Directed, Heterophilic) | Attention | 1 | 58.3±1.2 | 59.3±1.7 | 62.0±2.1 | 63.7±1.3 | 27.6±2.6 |
| | Attention | 8 | 55.5±2.2 | 58.3±1.8 | 59.7±1.3 | 58.7±1.6 | 37.8±3.4 |
| | Uniform | 1 | 60.0±1.6 | 60.0±1.6 | 63.9±1.9 | 63.9±1.9 | 26.8±2.6 |
| | Random | 1 | 59.8±2.0 | 59.3±1.3 | 61.3±2.1 | 43.3±2.4 | 26.6±1.8 |
| | Random | 8 | 58.6±2.2 | 59.6±3.0 | 62.1±1.6 | 47.1±2.6 | 34.0±2.4 |
| Squirrel (Directed, Heterophilic) | Attention | 1 | 37.5±1.8 | 38.5±0.9 | 39.5±1.5 | 40.6±1.7 | 28.0±3.2 |
| | Attention | 8 | 38.0±1.9 | 39.3±1.6 | 41.0±1.8 | 39.2±1.7 | 25.8±1.7 |
| | Uniform | 1 | 40.3±1.3 | 40.3±1.3 | 43.3±1.5 | 43.6±1.4 | 27.9±2.6 |
| | Random | 1 | 40.4±1.4 | 39.9±1.5 | 42.7±1.1 | 34.5±1.2 | 27.4±1.7 |
| | Random | 8 | 40.4±1.4 | 39.9±1.5 | 42.7±1.1 | 34.3±1.6 | 27.4±1.7 |

| Datasets | Weight | Dir | Sym | Row | None |
|---|---|---|---|---|---|
| Roman-Empire | Attention | 93.47±0.24 | 93.01±0.54 | 92.60±0.60 | 93.60±0.32 |
| | Uniform | 93.35±0.55 | 93.25±0.34 | 92.27±0.29 | 93.59±0.29 |
| ArXiv-Year | Attention | 65.56±0.12 | 64.43±0.31 | 59.74±0.70 | 65.18±0.26 |
| | Uniform | 66.02±0.28 | 63.48±0.51 | 41.52±3.00 | 56.46±2.44 |
| Chameleon | Attention | 74.74±0.94 | 77.11±1.71 | 78.07±1.00 | 66.01±2.67 |
| | Uniform | 79.21±1.01 | 79.63±0.92 | 79.50±1.16 | 58.68±5.05 |
| Squirrel | Attention | 69.33±2.09 | 69.76±1.62 | 72.88±2.01 | 63.53±2.65 |
| | Uniform | 74.63±1.60 | 75.05±1.98 | 73.38±1.74 | 74.53±2.10 |

References

1. Rogers, E.M. Diffusion of Innovations, 5th ed.; Simon and Schuster, 2003.

2. Dearing, J.W. Applying Diffusion of Innovation Theory to Intervention Development. Research on Social Work Practice 2009, 19, 503–518.

3. Krizhevsky, A.; Sutskever, I.; Hinton, G.E. ImageNet Classification with Deep Convolutional Neural Networks. In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc., 2012; Vol. 25.

4. Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, L.; Polosukhin, I. Attention Is All You Need. In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc., 2017; Vol. 30.

5. Wang, X.; Girshick, R.; Gupta, A.; He, K. Non-Local Neural Networks. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 2018; pp. 7794–7803.

6. Gong, Y.; Chung, Y.A.; Glass, J. AST: Audio Spectrogram Transformer; 2021.

7. Ramesh, A.; Pavlov, M.; Goh, G.; Gray, S.; Voss, C.; Radford, A.; Chen, M.; Sutskever, I. Zero-Shot Text-to-Image Generation. In Proceedings of the 38th International Conference on Machine Learning; PMLR, 2021; pp. 8821–8831.

8. Veličković, P.; Cucurull, G.; Casanova, A.; Romero, A.; Liò, P.; Bengio, Y. Graph Attention Networks. In Proceedings of the International Conference on Learning Representations, 2018.

9. Li, Y.; Liang, X.; Hu, Z.; Chen, Y.; Xing, E.P. Graph Transformer. 2019.

10. Shchur, O.; Mumme, M.; Bojchevski, A.; Günnemann, S. Pitfalls of Graph Neural Network Evaluation, 2019. arXiv [cs, stat], arXiv:1811.05868.

11. Luo, Y.; Shi, L.; Wu, X.M. Classic GNNs are Strong Baselines: Reassessing GNNs for Node Classification, 2024. arXiv [cs], arXiv:2406.08993.

12. Tönshoff, J.; Ritzert, M.; Rosenbluth, E.; Grohe, M. Where Did the Gap Go? Reassessing the Long-Range Graph Benchmark. Transactions on Machine Learning Research, 2024.

13. Xing, Y.; Wang, X.; Li, Y.; Huang, H.; Shi, C. Less is more: on the over-globalizing problem in graph transformers. In Proceedings of the 41st International Conference on Machine Learning (ICML’24); JMLR.org, 2024.

14. Sancak, K.; Hua, Z.; Fang, J.; Xie, Y.; Malevich, A.; Long, B.; Balin, M.F.; Çatalyürek, U.V. A scalable and effective alternative to graph transformers. In Proceedings of the Thirty-Ninth AAAI Conference on Artificial Intelligence and Thirty-Seventh Conference on Innovative Applications of Artificial Intelligence and Fifteenth Symposium on Educational Advances in Artificial Intelligence (AAAI’25/IAAI’25/EAAI’25); AAAI Press, 2025.

15. Buterez, D.; Janet, J.P.; Oglic, D.; Liò, P. An End-to-End Attention-Based Approach for Learning on Graphs. Nature Communications 2025, 16, 5244.

16. Hamilton, W.; Ying, Z.; Leskovec, J. Inductive representation learning on large graphs. Advances in Neural Information Processing Systems 2017, 30.

17. Corso, G.; Cavalleri, L.; Beaini, D.; Liò, P.; Veličković, P. Principal Neighbourhood Aggregation for Graph Nets. In Proceedings of the Advances in Neural Information Processing Systems, 2020; Vol. 33, pp. 13260–13271.

18. Kipf, T.N.; Welling, M. Semi-supervised classification with graph convolutional networks. arXiv 2016, arXiv:1609.02907.

19. Rossi, E.; Charpentier, B.; Di Giovanni, F.; Frasca, F.; Günnemann, S.; Bronstein, M.M. Edge directionality improves learning on heterophilic graphs. In Proceedings of the Learning on Graphs Conference; PMLR, 2024; pp. 25–1.

20. Abbahaddou, Y.; Malliaros, F.D.; Lutzeyer, J.F.; Vazirgiannis, M. Centrality Graph Shift Operators for Graph Neural Networks. arXiv 2024, arXiv:2411.04655.

21. Jiang, Q.; Wang, C.; Lones, M.; Pang, W. Demystifying MPNNs: Message Passing as Merely Efficient Matrix Multiplication. arXiv 2025, arXiv:2502.00140.

22. Wu, Z.; Pan, S.; Chen, F.; Long, G.; Zhang, C.; Yu, P.S. A Comprehensive Survey on Graph Neural Networks. IEEE Transactions on Neural Networks and Learning Systems 2021, 32, 4–24.

23. Jiang, Q.; Wang, C.; Lones, M.; Chen, D.; Pang, W. Scale-aware Message Passing for Graph Node Classification, 2026.

24. Tong, Z.; Liang, Y.; Sun, C.; Li, X.; Rosenblum, D.; Lim, A. Digraph inception convolutional networks. Advances in Neural Information Processing Systems 2020, 33, 17907–17918.

25. Tong, Z.; Liang, Y.; Sun, C.; Rosenblum, D.S.; Lim, A. Directed graph convolutional network. arXiv 2020, arXiv:2004.13970.

26. Bechler-Speicher, M.; Amos, I.; Gilad-Bachrach, R.; Globerson, A. Graph Neural Networks Use Graphs When They Shouldn’t. In Proceedings of the Forty-First International Conference on Machine Learning, 2024.

27. Rampášek, L.; Galkin, M.; Dwivedi, V.P.; Luu, A.T.; Wolf, G.; Beaini, D. Recipe for a General, Powerful, Scalable Graph Transformer. In Proceedings of the 36th International Conference on Neural Information Processing Systems (NIPS ’22), Red Hook, NY, USA, 2022; pp. 14501–14515.

28. Shirzad, H.; Velingker, A.; Venkatachalam, B.; Sutherland, D.J.; Sinop, A.K. Exphormer: Sparse Transformers for Graphs. In Proceedings of the 40th International Conference on Machine Learning; PMLR, 2023; pp. 31613–31632, ISSN 2640-3498.

29. Deng, C.; Yue, Z.; Zhang, Z. Polynormer: Polynomial-Expressive Graph Transformer in Linear Time. In Proceedings of the Twelfth International Conference on Learning Representations, 2024.

30. Shehzad, A.; Xia, F.; Abid, S.; Peng, C.; Yu, S.; Zhang, D.; Verspoor, K. Graph Transformers: A Survey. IEEE Transactions on Neural Networks and Learning Systems 2026, 1–20.

31. Ying, C.; Cai, T.; Luo, S.; Zheng, S.; Ke, G.; He, D.; Shen, Y.; Liu, T.Y. Do Transformers Really Perform Badly for Graph Representation? In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc., 2021; Vol. 34, pp. 28877–28888.

32. Luo, Y.; Shi, L.; Wu, X.M. Can Classic GNNs Be Strong Baselines for Graph-level Tasks? Simple Architectures Meet Excellence. In Proceedings of the Forty-second International Conference on Machine Learning, 2025.

33. Leskovec, J. What Every Data Scientist Should Know About Graph Transformers and Their Impact on Structured Data. 2025.

34. Fey, M.; Kocijan, V.; Lopez, F.; Lenssen, J.E.; Leskovec, J. KumoRFM: A Foundation Model for In-Context Learning on Relational Data; Technical whitepaper: Kumo AI, 2025.

35. Gunasekar, S.; Lee, J.D.; Soudry, D.; Srebro, N. Implicit Bias of Gradient Descent on Linear Convolutional Networks. In Proceedings of the Advances in Neural Information Processing Systems; Curran Associates, Inc., 2018; Vol. 31.

36. Clark, K.; Khandelwal, U.; Levy, O.; Manning, C.D. What Does BERT Look at? An Analysis of BERT’s Attention. In Proceedings of the 2019 ACL Workshop BlackboxNLP: Analyzing and Interpreting Neural Networks for NLP; Linzen, T., Chrupała, G., Belinkov, Y., Hupkes, D., Eds.; Florence, Italy, 2019; pp. 276–286.

37. Li, Y.; Tarlow, D.; Brockschmidt, M.; Zemel, R. Gated Graph Sequence Neural Networks; 2017.

38. Zhang, X.; He, Y.; Brugnone, N.; Perlmutter, M.; Hirn, M. MagNet: A neural network for directed graphs. Advances in Neural Information Processing Systems 2021, 34, 27003–27015.

39. PyTorch Geometric Team. GATConv, PyTorch Geometric Documentation. 2024. Available online: https://pytorch-geometric.readthedocs.io/en/latest/generated/torch_geometric.nn.conv.GATConv.html (accessed on 2026-01-28).

40. Lim, D.; Hohne, F.M.; Li, X.; Huang, S.L.; Gupta, V.; Bhalerao, O.P.; Lim, S.N. Large Scale Learning on Non-Homophilous Graphs: New Benchmarks and Strong Simple Methods. In Proceedings of the Advances in Neural Information Processing Systems, 2021.

41. Pei, H.; Wei, B.; Chang, K.C.C.; Lei, Y.; Yang, B. Geom-GCN: Geometric graph convolutional networks. arXiv 2020, arXiv:2002.05287.

42. Dwivedi, V.P.; Bresson, X. A Generalization of Transformer Networks to Graphs. arXiv 2021, arXiv:2012.09699.

43. Wu, Q.; Zhao, W.; Li, Z.; Wipf, D.; Yan, J. NodeFormer: A Scalable Graph Structure Learning Transformer for Node Classification. arXiv 2023, arXiv:2306.08385.

44. Wu, Q.; Zhao, W.; Yang, C.; Zhang, H.; Nie, F.; Jiang, H.; Bian, Y.; Yan, J. SGFormer: Simplifying and Empowering Transformers for Large-Graph Representations. In Proceedings of the 37th International Conference on Neural Information Processing Systems (NIPS ’23), 2023; pp. 64753–64773.

45. Behrouz, A.; Hashemi, F. Graph Mamba: Towards learning on graphs with state space models. In Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining, 2024; pp. 119–130.

46. Zhang, Y.; Li, X.; Xu, Y.; Xu, X.; Wang, Z. A Graph Transformer with Optimized Attention Scores for Node Classification. Scientific Reports 2025, 15, 30015.

47. Luo, Y.; Thost, V.; Shi, L. Transformers over Directed Acyclic Graphs. Advances in Neural Information Processing Systems 2024, 36.

48. Cao, B.; Ding, C.; Chen, K.; Zhu, Y. DGT: Differential Graph Transformer for Graph Learning with Few-Shot Learning. Expert Systems with Applications 2026, 303, 130638.

49. Yuan, C.; Song, Z.; Kuruoglu, E.; Zhao, K.; Liu, Y.; Zhao, D.; Cheng, H.; Rong, Y. ParaFormer: A Generalized PageRank Graph Transformer for Graph Representation Learning. In Proceedings of the 19th ACM International Conference on Web Search and Data Mining, New York, NY, USA, 2026.

50. Aminian-Dehkordi, J.; Parsa, M.; Dickson, A.; Mofrad, M.R.K. SIMBA-GNN: Mechanistic Graph Learning for Microbiome Prediction. npj Systems Biology and Applications 2025.

| Datasets | Weight | Softmax | Dir | Sym | Row | None |
|---|---|---|---|---|---|---|
| Coauthor-CS | Attention | 93.1±0.1 | 92.8±0.2 | 92.5±0.2 | 92.6±0.2 | 92.0±0.4 |
| | Uniform | 93.0±0.2 | 93.6±0.1 | 93.5±0.1 | 93.0±0.1 | 92.0±0.1 |
| | Random | 76.9±0.6 | 92.9±0.2 | 92.8±0.1 | 92.5±0.2 | 90.2±0.3 |
| Coauthor-Physics | Attention | 96.0±0.1 | 96.0±0.1 | 96.0±0.1 | 95.9±0.1 | 95.4±0.4 |
| | Uniform | 95.9±0.1 | 96.1±0.1 | 96.1±0.1 | 95.9±0.1 | 95.0±0.1 |
| | Random | 88.3±0.3 | 95.8±0.2 | 95.8±0.2 | 95.6±0.1 | 94.2±0.2 |
| PubMed | Attention | 74.8±1.0 | 74.4±1.1 | 73.8±1.0 | 74.1±0.8 | 67.3±2.1 |
| | Uniform | 74.9±1.1 | 75.2±1.1 | 75.1±0.6 | 75.1±0.9 | 69.4±2.1 |
| | Random | 66.0±0.3 | 73.6±0.8 | 73.2±0.8 | 72.8±0.8 | 72.2±1.4 |
| Amazon-Photo | Attention | 93.9±0.4 | 93.7±0.5 | 93.9±0.3 | 93.8±0.2 | 93.1±0.3 |
| | Uniform | 93.4±0.5 | 93.4±0.5 | 93.4±0.5 | 93.1±0.3 | 91.3±0.3 |
| | Random | 80.9±0.6 | 92.8±0.8 | 92.9±0.5 | 92.9±0.4 | 91.0±0.3 |
| Amazon-Computer | Attention | 90.7±0.3 | 90.5±0.2 | 90.7±0.3 | 90.6±0.3 | 89.0±1.1 |
| | Uniform | 90.4±0.2 | 90.1±0.4 | 90.3±0.3 | 90.2±0.2 | 85.2±1.1 |
| | Random | 73.6±0.6 | 89.9±0.2 | 89.6±0.2 | 88.7±0.3 | 85.5±0.7 |
| WikiCS (Undirected) | Attention | 78.5±0.8 | 78.3±0.9 | 78.4±1.1 | 78.5±0.9 | 75.3±1.2 |
| | Uniform | 77.9±0.8 | 78.3±1.1 | 78.3±1.1 | 77.9±1.1 | 71.5±1.01 |
| | Random | 57.7±0.7 | 77.8±1.1 | 77.7±1.0 | 77.3±0.9 | 70.5±1.0 |
| Telegram (Undirected) | Attention | 78.2±9.4 | 86.0±6.7 | 87.8±5.8 | 77.6±10.9 | 90.8±3.6 |
| | Uniform | 71.0±4.7 | 78.0±7.5 | 79.6±6.6 | 71.4±3.1 | 92.4±3.2 |
| | Random | 48.8±6.8 | 89.0±3.9 | 87.8±4.2 | 81.8±4.8 | 93.8±3.3 |

| Datasets | Weight | Dir | Sym | Row | Softmax | None |
|---|---|---|---|---|---|---|
| CoraML (Directed) | Attention | 81.7±1.5 | 81.8±1.5 | 81.5±1.5 | 81.0±1.7 | 27.7±3.3 |
| | Uniform | 82.0±1.3 | 82.0±1.3 | 81.8±1.5 | 81.8±1.5 | 77.7±2.4 |
| | Random | 82.0±1.6 | 81.6±1.3 | 81.7±1.3 | 74.1±1.5 | 76.1±1.7 |
| CiteSeer (Directed) | Attention | 66.3±1.8 | 66.2±1.8 | 66.4±1.7 | 66.4±1.9 | 34.1±3.3 |
| | Uniform | 66.5±1.6 | 66.5±1.6 | 66.2±1.2 | 66.2±1.2 | 62.1±2.5 |
| | Random | 66.1±2.0 | 65.8±1.8 | 65.5±1.8 | 63.7±1.6 | 60.9±2.7 |
| Telegram (Directed) | Attention | 87.2±3.8 | 88.2±5.6 | 79.8±6.1 | 76.8±2.9 | 87.2±3.0 |
| | Uniform | 89.2±5.8 | 89.2±5.8 | 73.4±7.8 | 73.4±7.8 | 91.2±4.4 |
| | Random | 87.8±4.4 | 88.0±4.5 | 61.4±8.7 | 34.6±5.5 | 83.2±3.7 |
| WikiCS (Directed) | Attention | 79.7±0.5 | 79.7±0.5 | 80.6±0.6 | 80.7±0.5 | 37.8±4.4 |
| | Uniform | 79.8±0.6 | 79.8±0.6 | 80.7±0.6 | 80.7±0.6 | 40.3±5.2 |
| | Random | 79.6±0.5 | 79.7±0.5 | 80.4±0.5 | 74.4±0.7 | 38.1±5.0 |
| Coauthor-CS | Attention | 94.4±0.2 | 93.8±0.3 | 93.9±0.2 | 94.3±0.1 | 94.0±0.2 |
| | Uniform | 94.9±0.1 | 94.9±0.1 | 94.7±0.1 | 94.7±0.1 | 55.3±5.2 |
| | Random | 94.7±0.1 | 94.8±0.1 | 94.5±0.1 | 92.4±0.1 | 51.6±8.8 |
| Coauthor-Physics | Attention | 96.4±0.1 | 96.2±0.1 | 96.1±0.1 | 96.5±0.1 | 96.4±0.1 |
| | Uniform | 96.7±0.1 | 96.7±0.1 | 96.5±0.1 | 96.5±0.1 | 86.9±3.0 |
| | Random | 96.6±0.1 | 96.6±0.1 | 96.5±0.1 | 95.5±0.1 | 88.0±2.1 |
| PubMed | Attention | 77.0±0.8 | 76.7±1.1 | 77.0±1.1 | 76.3±2.9 | 74.6±1.1 |
| | Uniform | 77.7±0.3 | 77.5±0.7 | 76.9±1.5 | 76.9±1.5 | 66.7±2.1 |
| | Random | 77.2±0.8 | 77.4±0.2 | 76.6±1.9 | 74.6±0.3 | 67.8±2.5 |
| Amazon-Photo | Attention | 94.9±0.3 | 93.9±0.7 | 94.6±0.3 | 95.1±0.2 | 71.0±26.3 |
| | Uniform | 95.1±0.3 | 95.1±0.3 | 95.0±0.3 | 95.0±0.2 | 30.7±6.0 |
| | Random | 95.2±0.3 | 95.2±0.1 | 94.8±0.4 | 91.9±0.2 | 30.2±3.9 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).