Submitted: 17 April 2026

Posted: 21 April 2026

Abstract

Keywords:

1. Introduction

- (1)

- A novel RoBERTa-based model for emotion classification achieved state-of-the-art classification performance on a public benchmark dataset with the following performance metrics: (i) 0.924% accuracy, (ii) 0.925% weighted F1-score, and (iii) 0.997% ROC-AUC across six emotion categories.

- (2)

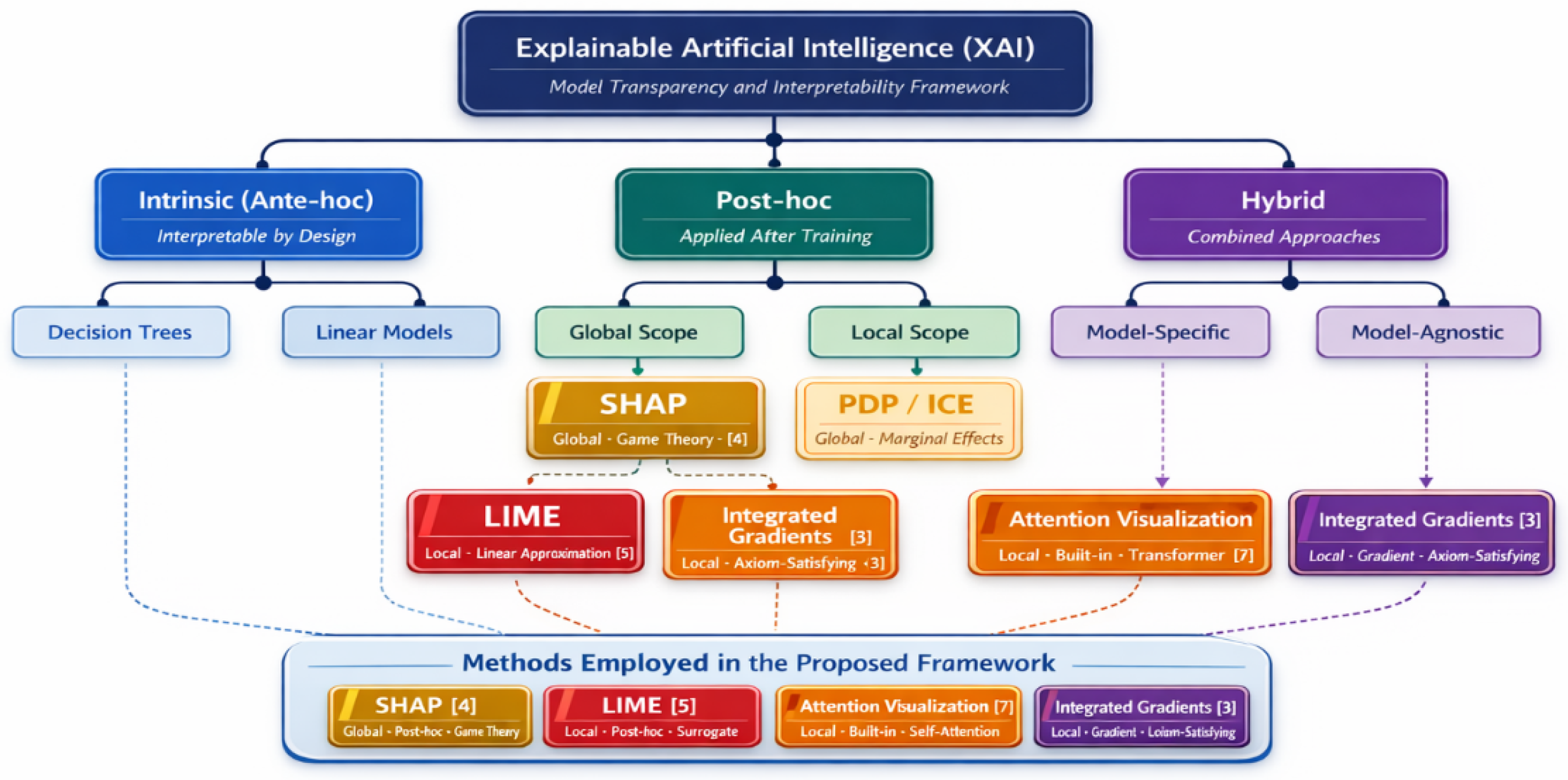

- The first systematic multi-XAI comparative analysis that combines SHAP, LIME, Attention Visualization, and Integrated Gradients in a unified transformer emotion classification framework.

- (3)

- A before-and-after methodology was implemented using a rigorous analysis of each method’s scope of responsibility, theoretical basis, and additional contributions to the overall model interpretability.

- (4)

- All experiments, figures, and model weights will be publicly available for reproduction in a single Google Colab environment to facilitate transparency and reproducibility.

2. Related Works

2.1. Text-Based Emotion Recognition

2.2. Explainable Artificial Intelligence in NLP

3. Materials and Methods

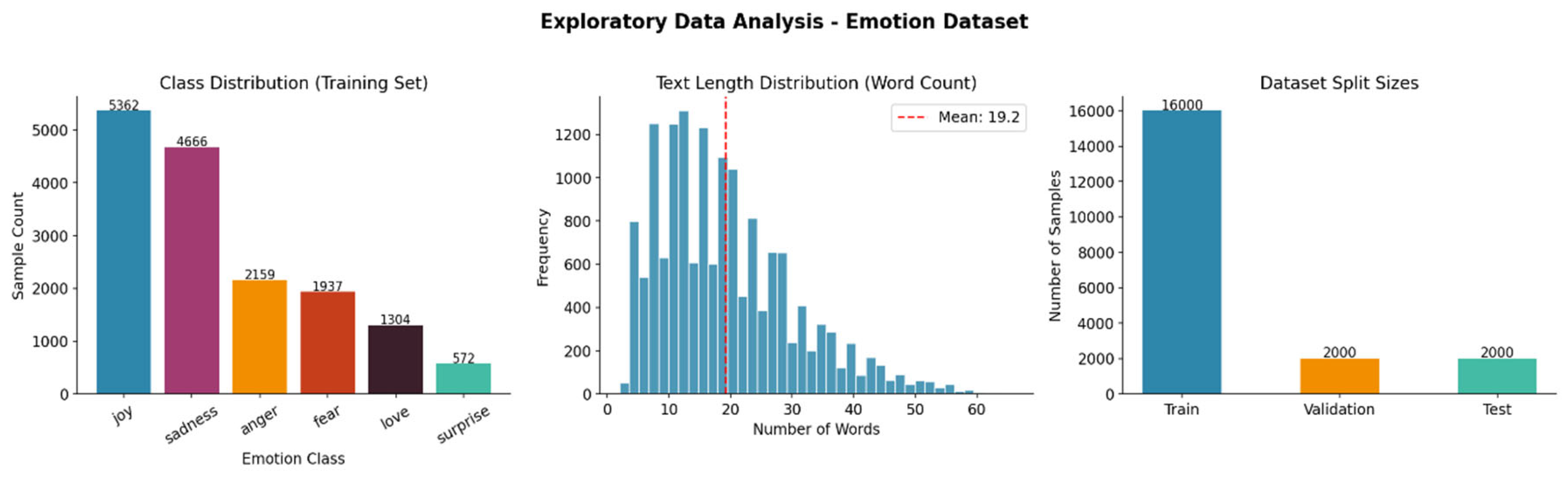

3.1. Dataset Description

3.2. Exploratory Data Analysis

| Regularization Technique | Configuration | Purpose |
| --- | --- | --- |
| Dropout | p = 0.1 on classification head | Prevents co-adaptation of neurons |
| Weight Decay | λ = 0.01 (AdamW) | Penalizes large parameter weights |
| Gradient Clipping | max_norm = 1.0 | Prevents exploding gradients |
| Early Stopping | Patience = 3 epochs on val F1 | Halts training at optimal checkpoint |
| Linear LR Warmup | 10% of total training steps | Stabilizes early training dynamics |
| Best Checkpoint Saving | Based on highest validation F1 | Ensures optimal model is evaluated |
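The early-stopping and best-checkpoint rows in the table above can be illustrated with a short sketch. This is not the authors' training code: the `EarlyStopper` class and the validation F1 trace are hypothetical, and only the patience of 3 epochs on validation F1 is taken from the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EarlyStopper:
    """Tracks the best validation F1 and signals when training should stop."""

    patience: int = 3                 # epochs without improvement that are tolerated
    best_f1: float = float("-inf")    # highest validation F1 seen so far
    best_epoch: Optional[int] = None  # epoch at which best_f1 was reached
    bad_epochs: int = 0               # consecutive epochs without improvement

    def update(self, epoch: int, val_f1: float) -> bool:
        """Record one epoch and return True when training should halt."""
        if val_f1 > self.best_f1:
            # New best score: this is the point where a checkpoint would be saved.
            self.best_f1 = val_f1
            self.best_epoch = epoch
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


# Hypothetical validation F1 values, for illustration only.
val_f1_per_epoch = [0.88, 0.91, 0.92, 0.919, 0.918, 0.917, 0.93]

stopper = EarlyStopper(patience=3)
stopped_at = None
for epoch, f1 in enumerate(val_f1_per_epoch, start=1):
    if stopper.update(epoch, f1):
        stopped_at = epoch
        break

print(f"stopped at epoch {stopped_at}, best epoch {stopper.best_epoch}")
```

In this trace the best score occurs at epoch 3, so training halts after three further epochs without improvement and the epoch-3 checkpoint would be the one evaluated.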

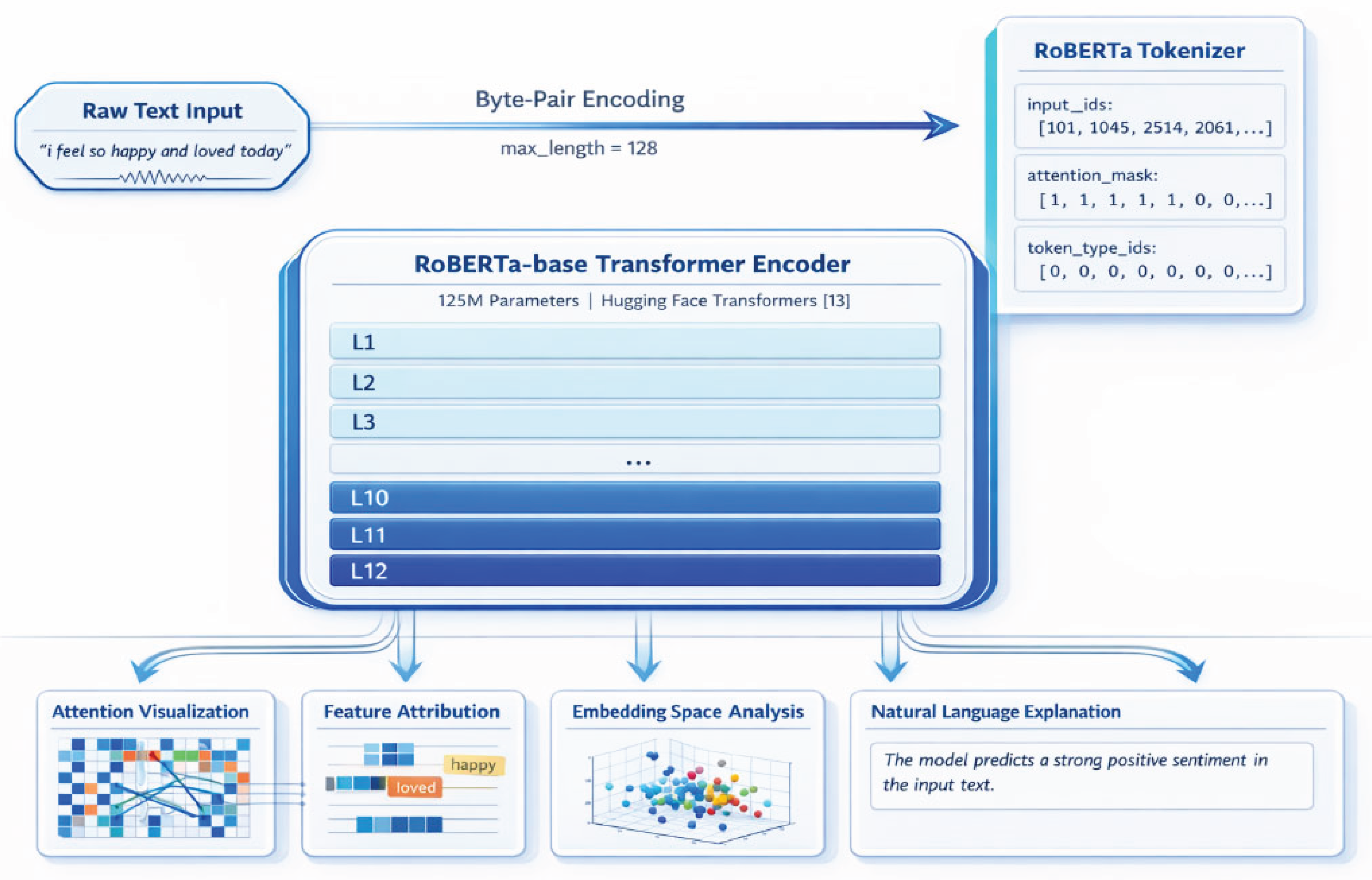

3.3. Model Architecture

3.4. Training Configuration and Optimization

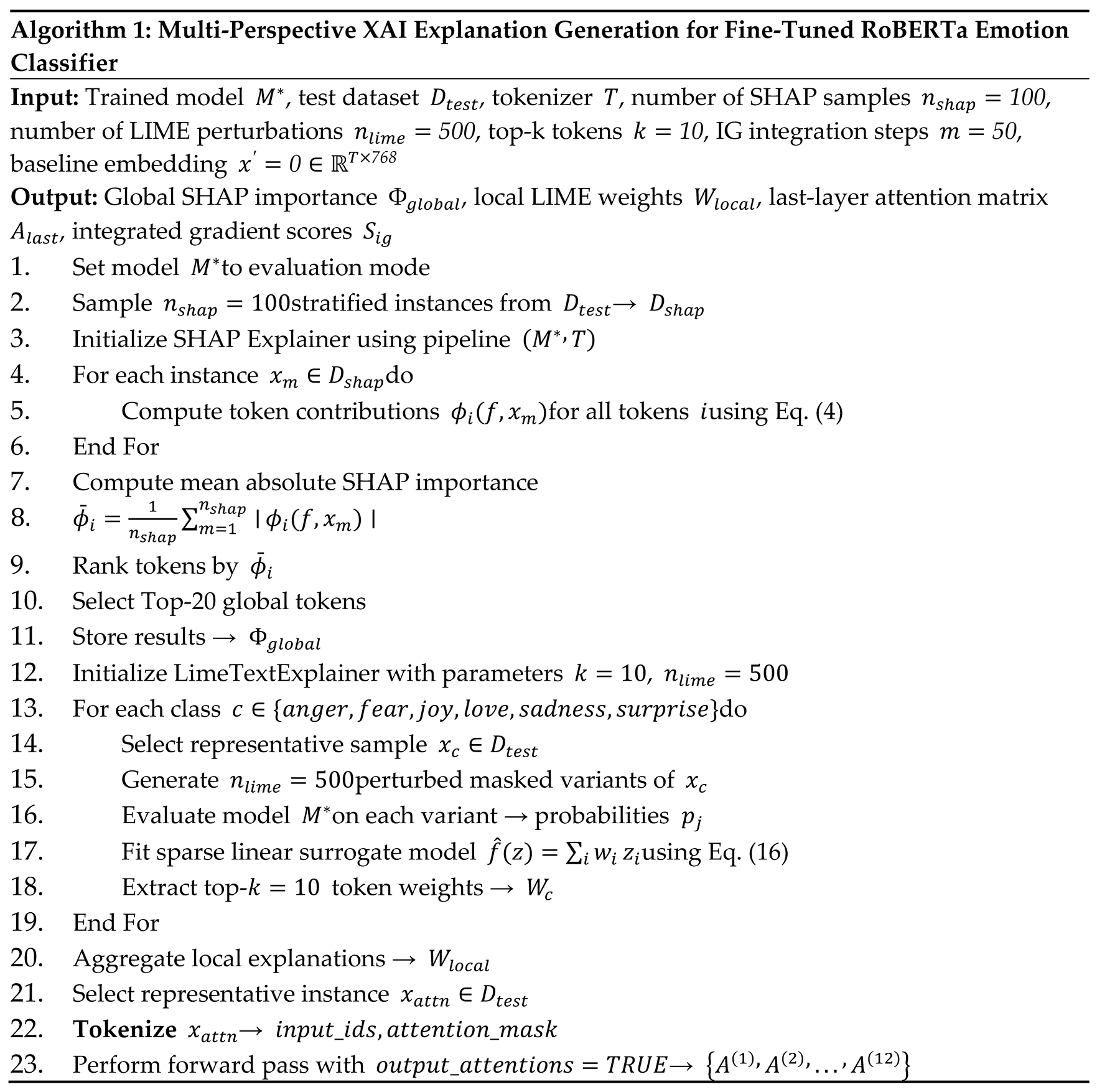

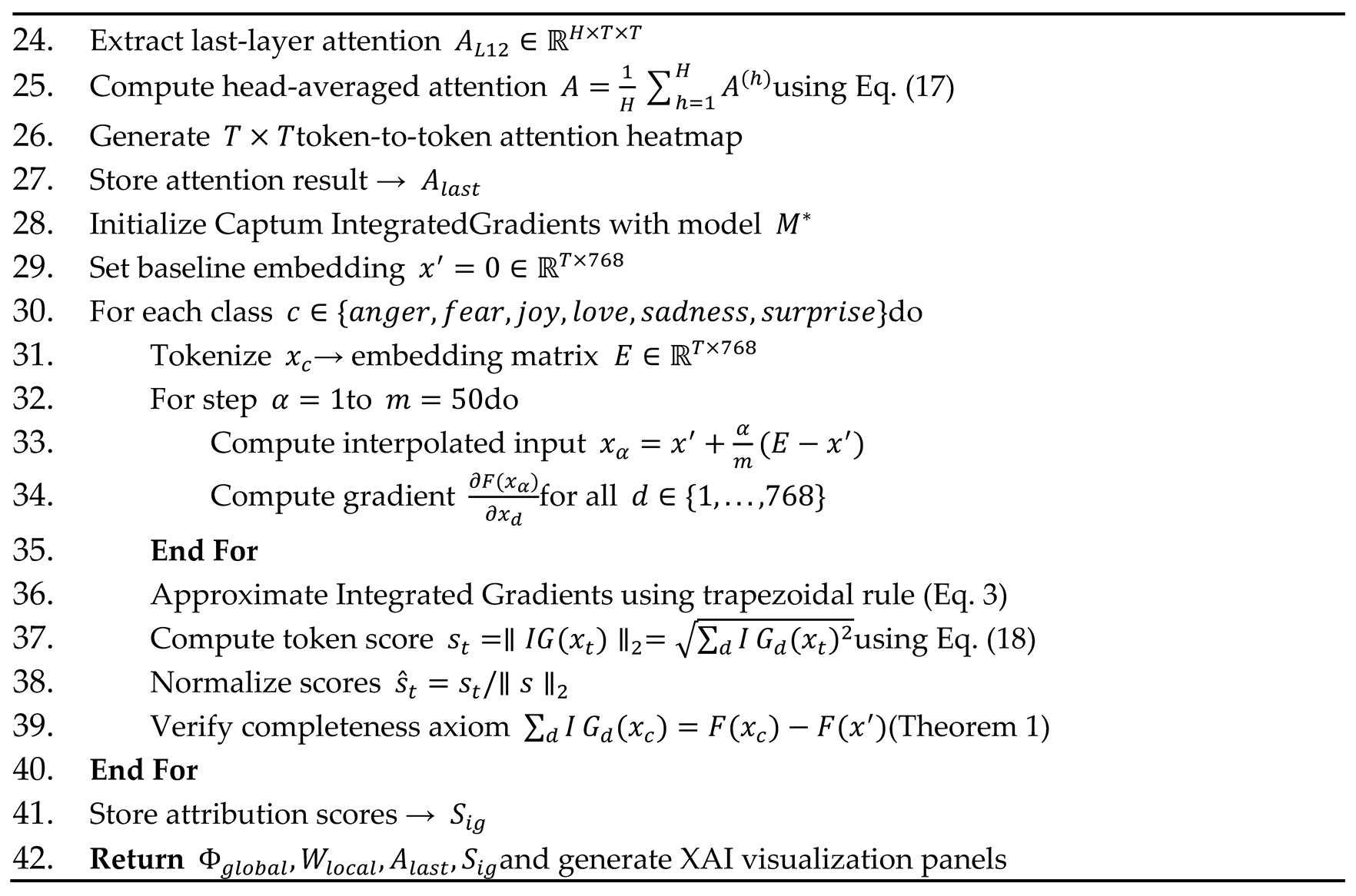

3.5. Training Algorithm

3.6. Mathematical Formulations

3.7. Theoretical Guarantee: Completeness of Integrated Gradients

3.8. XAI Methods Implementation

4. Results

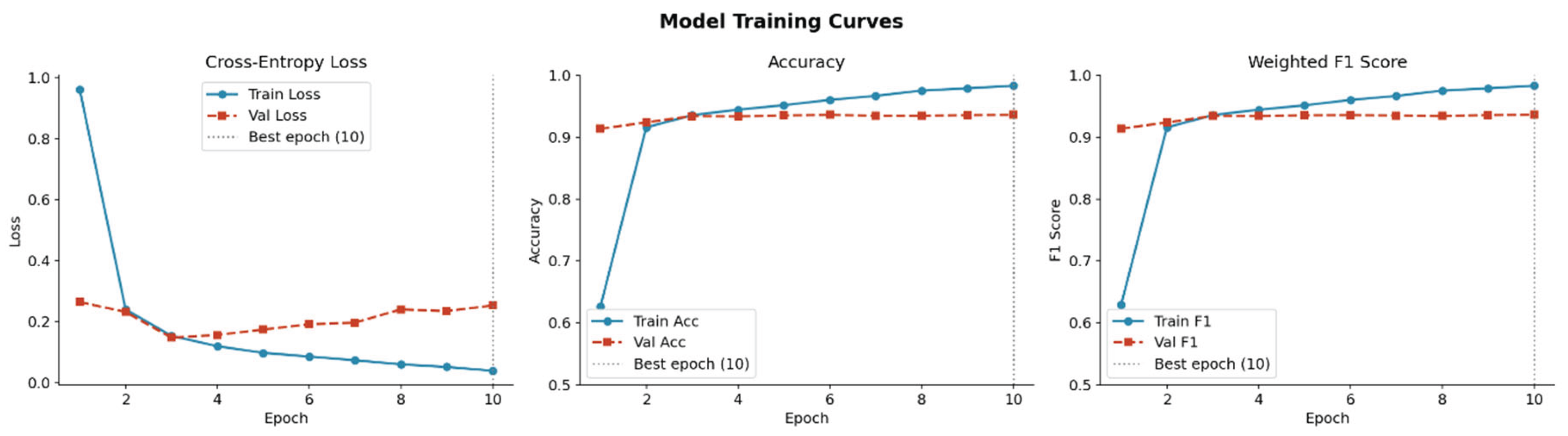

4.1. Training Dynamics and Convergence Analysis

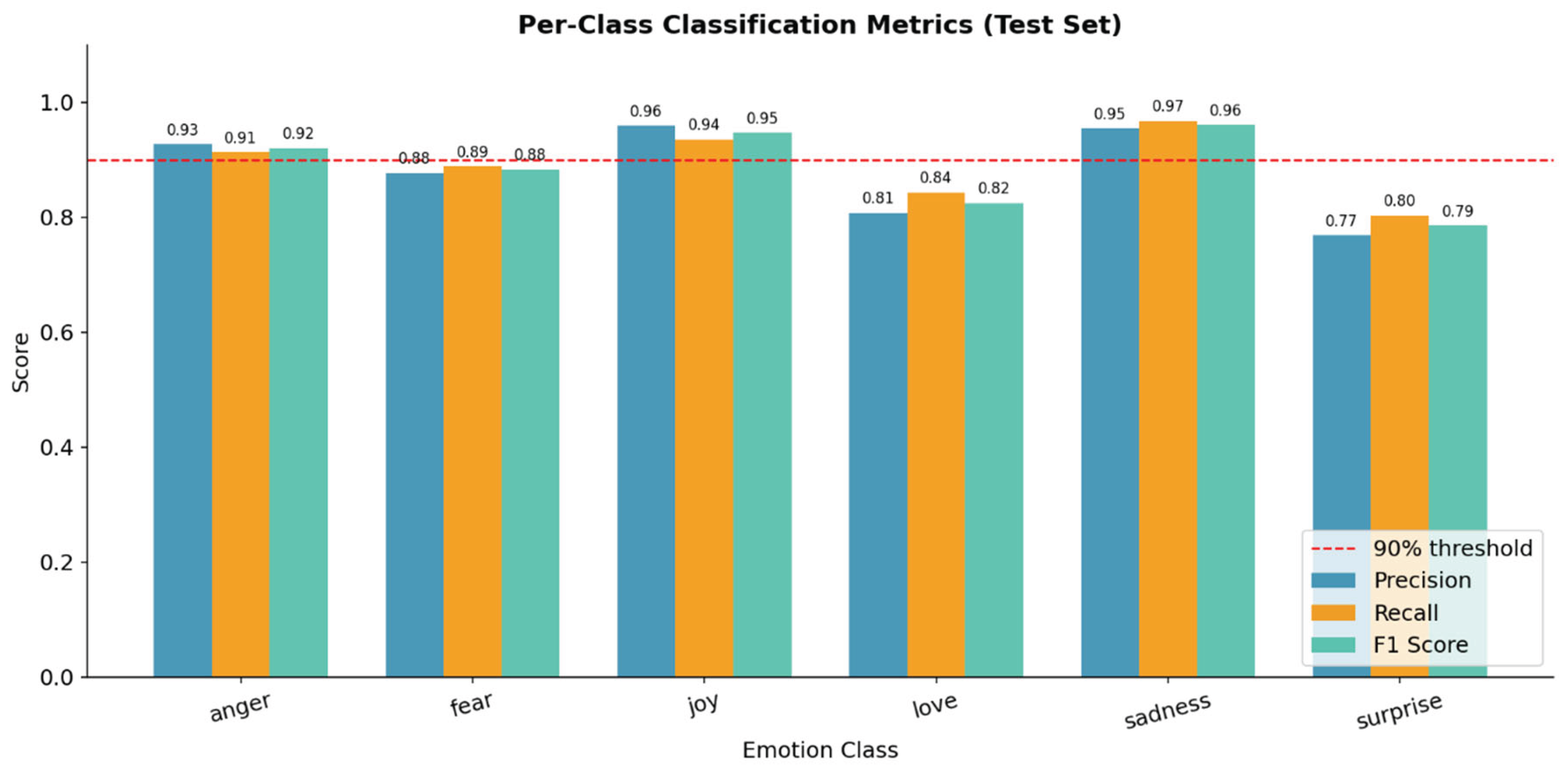

4.2. Overall Test Set Performance

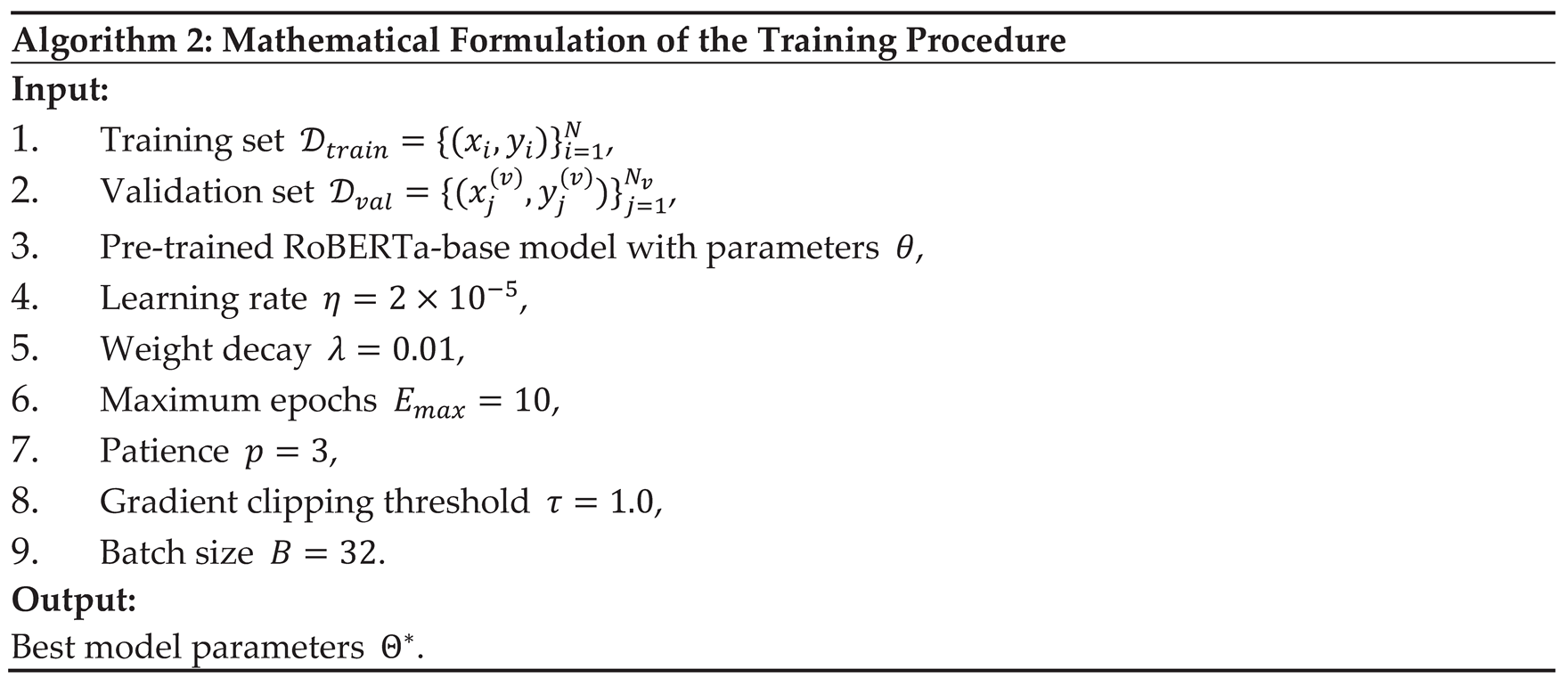

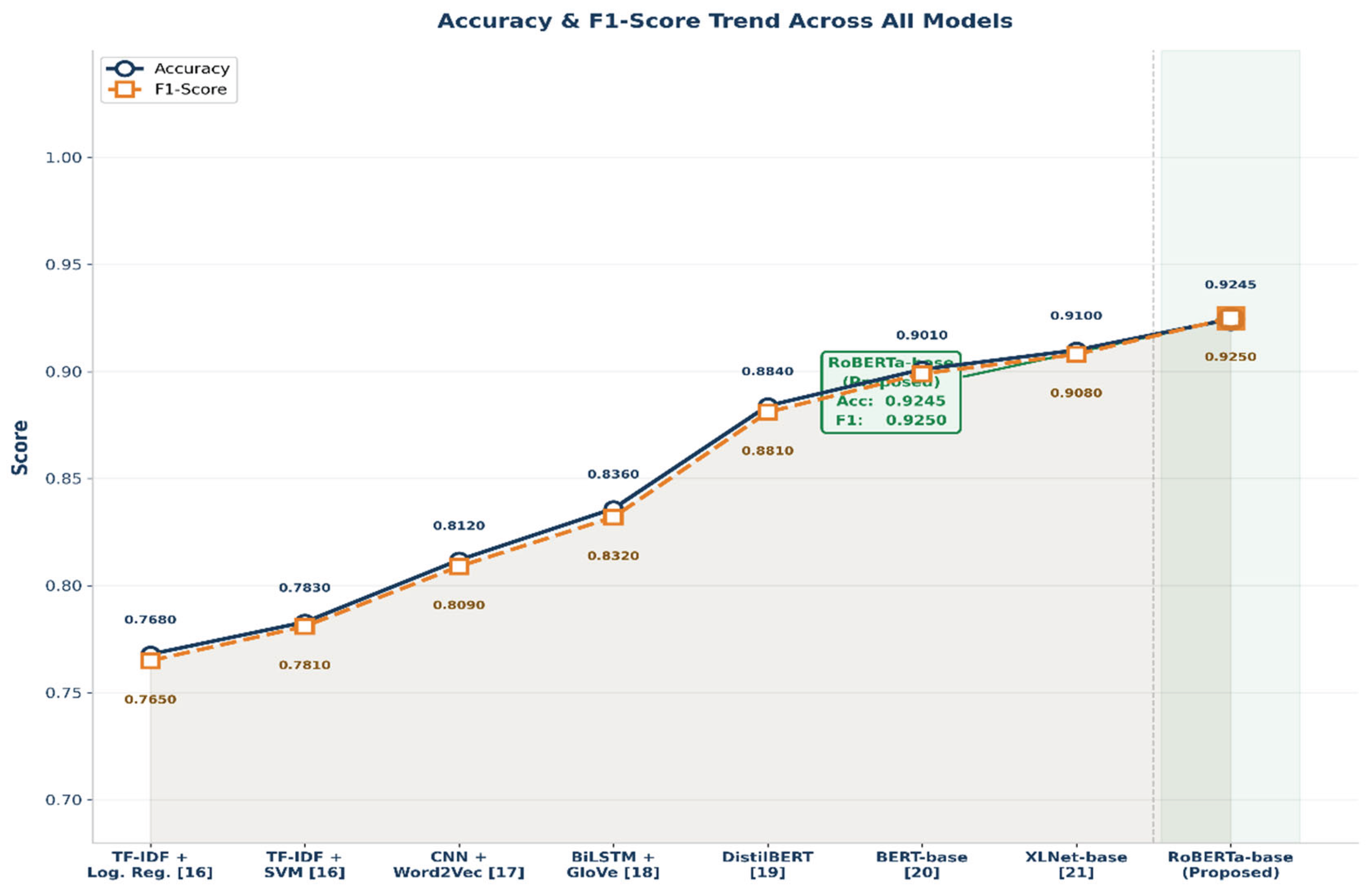

4.3. Comparative Analysis Against Baseline Models

| Model | Accuracy | Precision | Recall | F1-Score | ROC-AUC | Citation |
| --- | --- | --- | --- | --- | --- | --- |
| TF-IDF + Logistic Regression | 0.7680 | 0.767 | 0.768 | 0.765 | 0.908 | [16] |
| TF-IDF + SVM (Linear) | 0.7830 | 0.782 | 0.783 | 0.781 | 0.921 | [16] |
| CNN + Word2Vec Embeddings | 0.8120 | 0.811 | 0.810 | 0.809 | 0.941 | [17] |
| BiLSTM + GloVe Embeddings | 0.8360 | 0.834 | 0.833 | 0.832 | 0.953 | [18] |
| DistilBERT (fine-tuned) | 0.8840 | 0.883 | 0.884 | 0.881 | 0.991 | [19] |
| BERT-base (fine-tuned) | 0.9010 | 0.900 | 0.901 | 0.899 | 0.994 | [20] |
| XLNet-base (fine-tuned) | 0.9100 | 0.909 | 0.910 | 0.908 | 0.995 | [21] |
| RoBERTa-base + Multi-XAI (Proposed) | 0.9245 | 0.925 | 0.924 | 0.925 | 0.997 | This work |

| XAI Method | Computation Type | Time per Sample (s) | Scalability | Model Access |
| --- | --- | --- | --- | --- |
| SHAP | Coalition sampling | ~45–120 | Low, O(2ⁿ) | Black-box |
| LIME | Perturbation sampling | ~8–15 | Medium, O(n·k) | Black-box |
| Attention Visualization | Single forward pass | < 0.1 | High, O(T²) | White-box |
| Integrated Gradients | m gradient computations | ~2–5 | Medium, O(m·d) | White-box |
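The Integrated Gradients row above, with its m gradient computations over d input dimensions, can be made concrete with a toy example. The sketch below is an illustration under stated assumptions rather than the implementation used in this work: the logistic model, its weights `W` and bias `B`, the input, and the all-zeros baseline are all hypothetical, and the path integral is approximated with a midpoint Riemann sum. It also checks the completeness property numerically, namely that the attributions sum to the difference between the model output at the input and at the baseline.

```python
import math
from typing import List, Sequence

# Hypothetical toy model: logistic regression over three features.
W = [1.5, -2.0, 0.5]
B = 0.1


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def model(x: Sequence[float]) -> float:
    """Scalar model output f(x) = sigmoid(w . x + b)."""
    return sigmoid(sum(w * xi for w, xi in zip(W, x)) + B)


def gradient(x: Sequence[float]) -> List[float]:
    """Analytic gradient of f with respect to each input feature."""
    p = model(x)
    return [p * (1.0 - p) * w for w in W]


def integrated_gradients(x: Sequence[float],
                         baseline: Sequence[float],
                         m: int = 50) -> List[float]:
    """Approximate IG with m gradient evaluations along the straight path."""
    grad_sum = [0.0] * len(x)
    for k in range(m):
        alpha = (k + 0.5) / m  # midpoint of the k-th sub-interval of [0, 1]
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(gradient(point)):
            grad_sum[i] += g
    # Scale the average gradient by the input-baseline difference.
    return [(xi - b) * s / m for xi, b, s in zip(x, baseline, grad_sum)]


x = [1.0, -0.5, 2.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(x, baseline, m=50)

# Completeness: attributions should sum to f(x) - f(baseline).
completeness_gap = abs(sum(attributions) - (model(x) - model(baseline)))
print(attributions, completeness_gap)
```

Each of the m steps evaluates one gradient of size d, which is where the O(m·d) cost in the table comes from. Increasing m shrinks the completeness gap at a proportional increase in run time.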

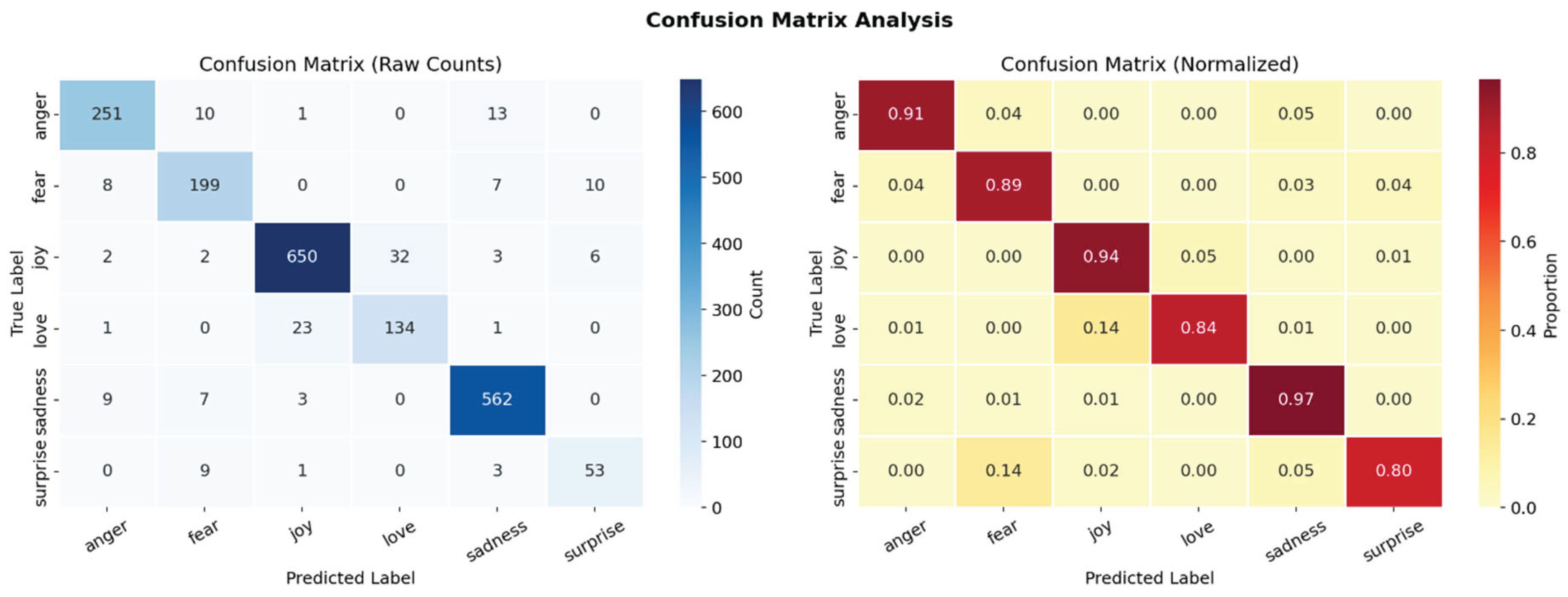

4.4. Confusion Matrix Analysis

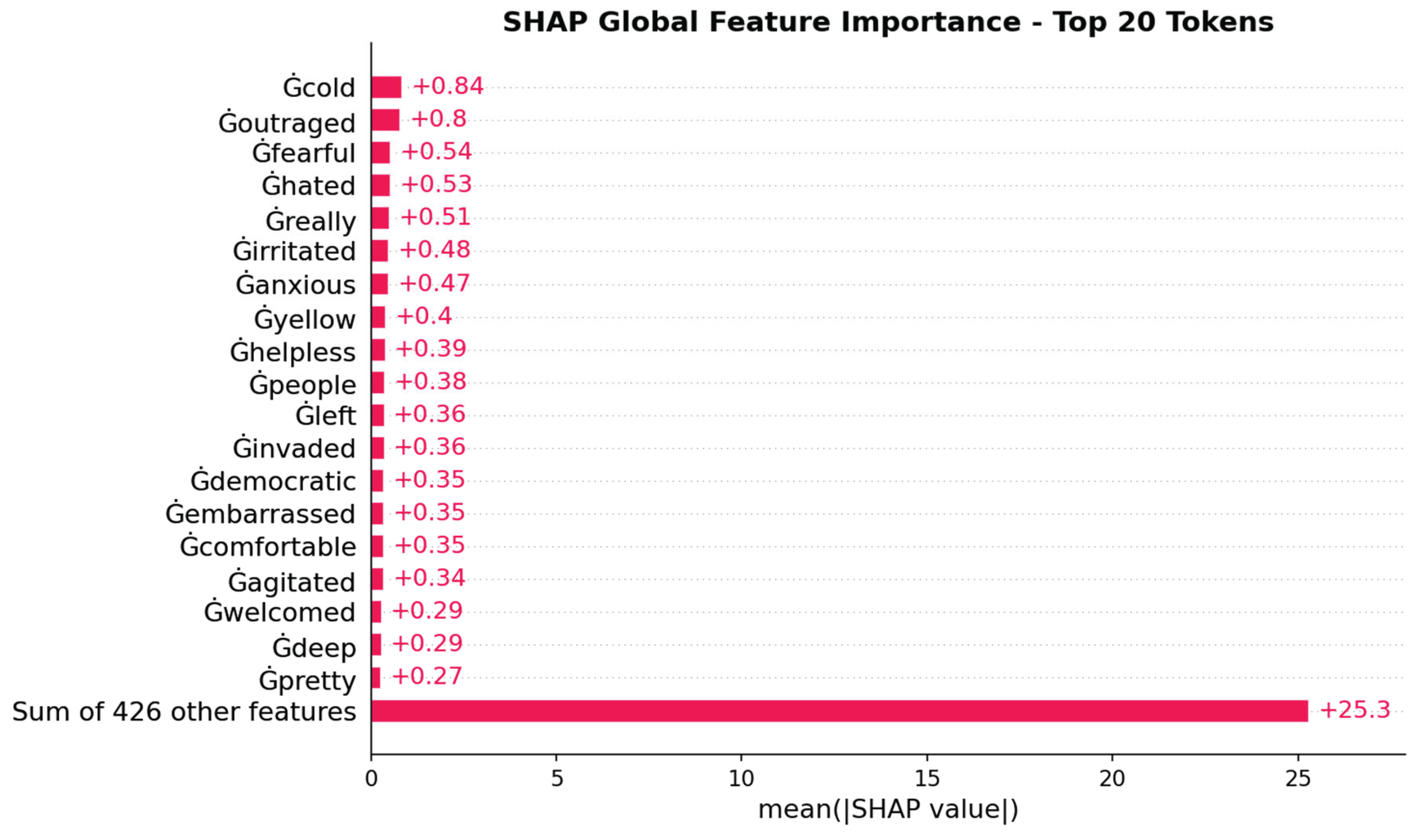

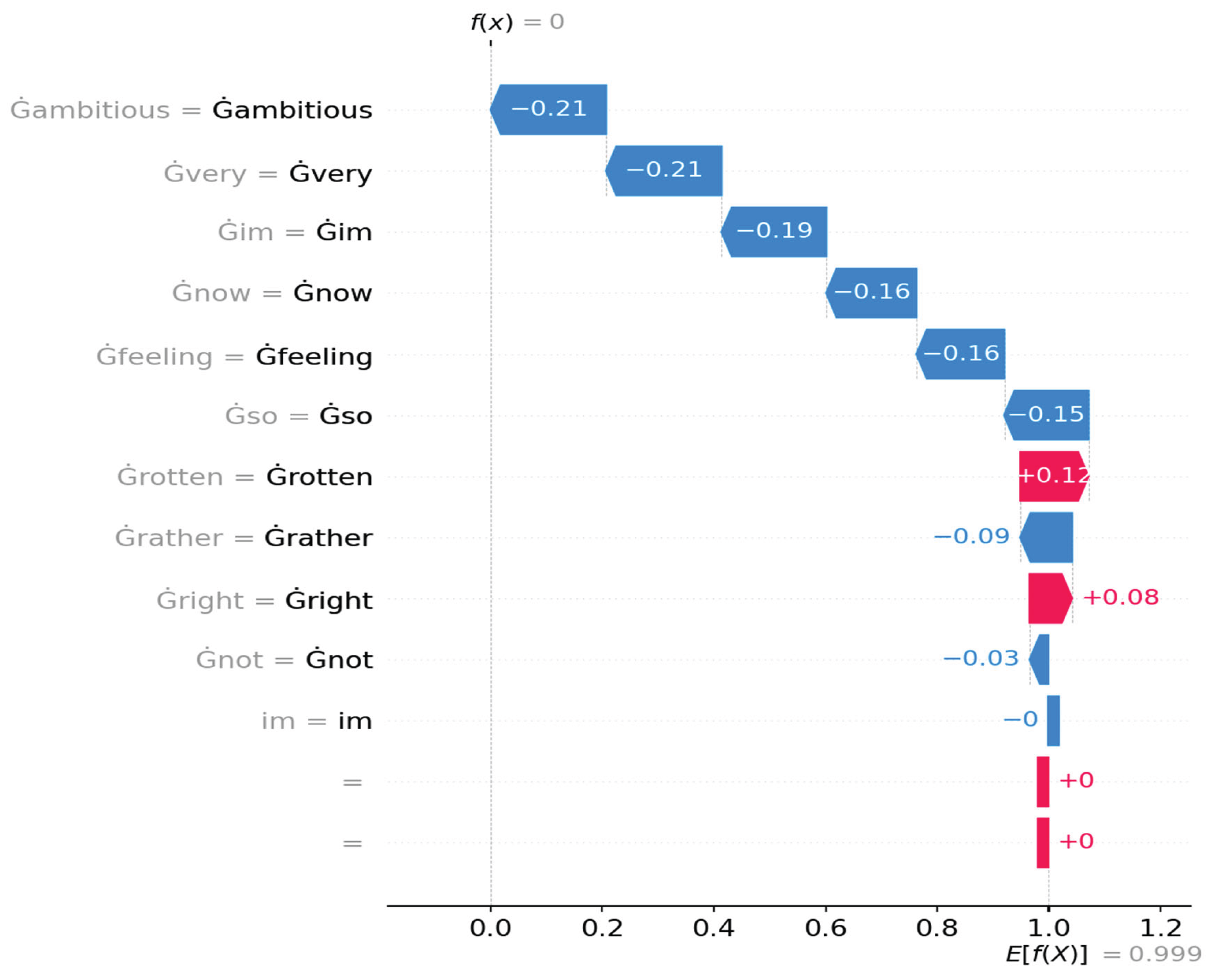

4.5. SHAP Explainability Analysis

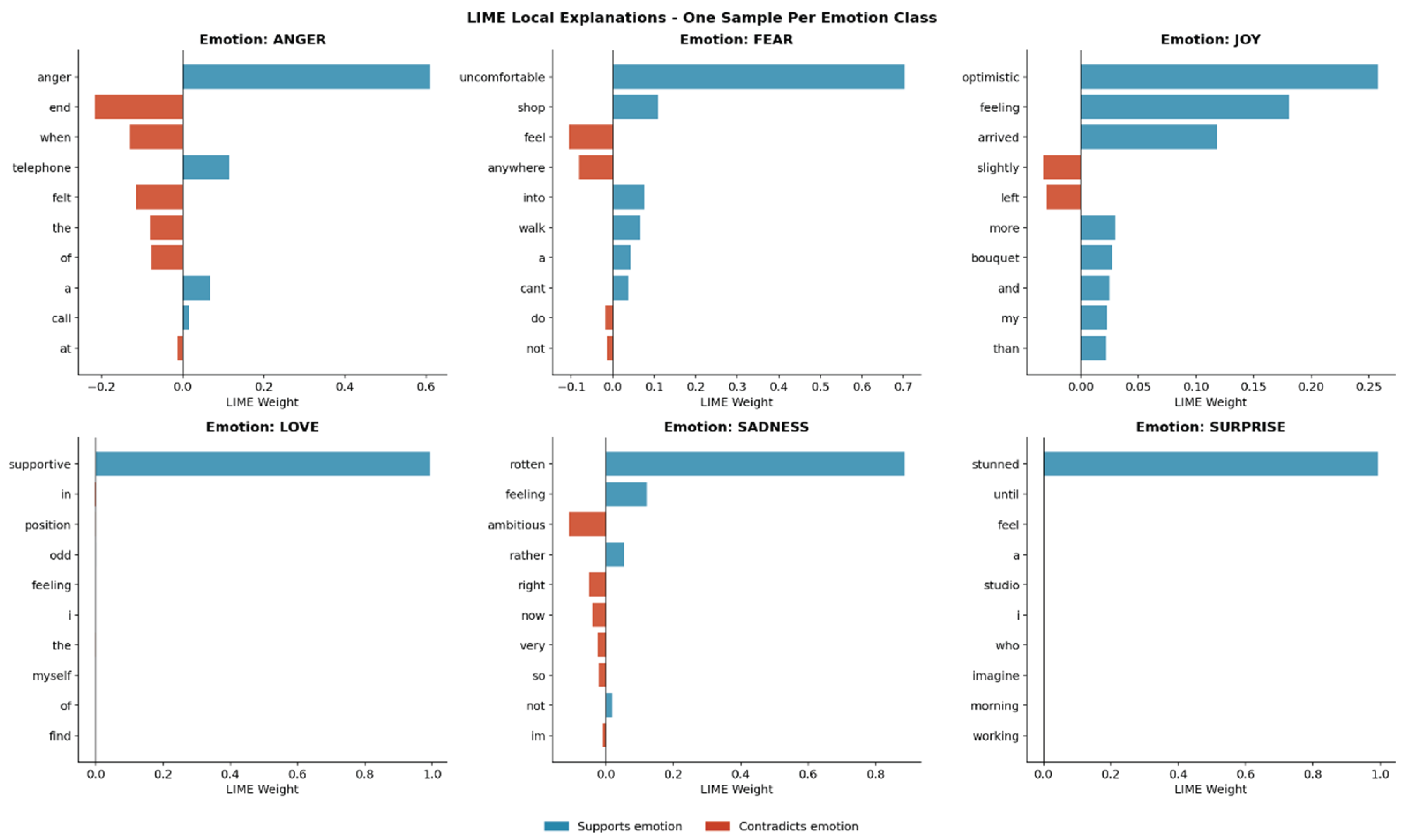

4.6. LIME Explainability Analysis

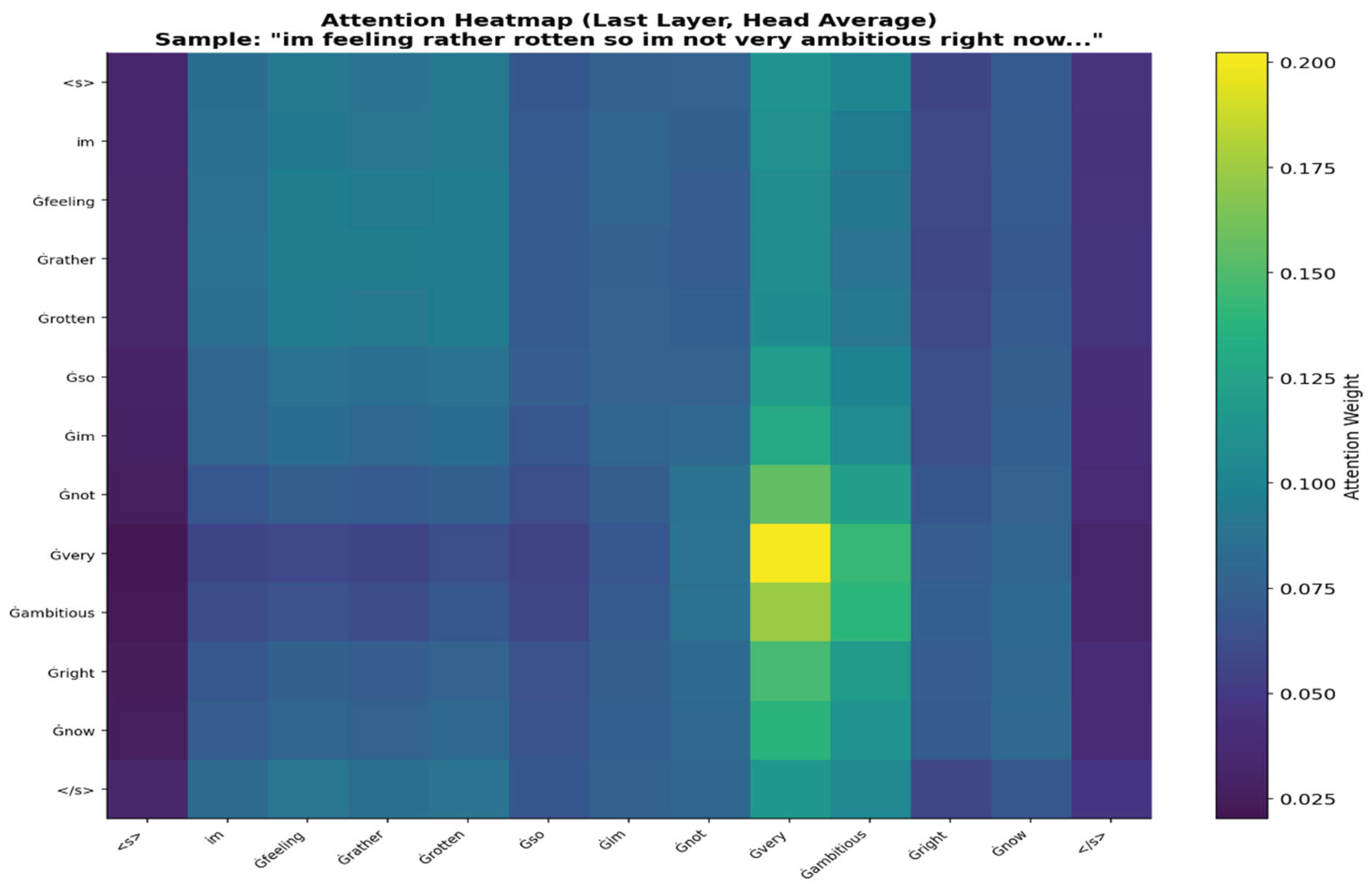

4.7. Attention Visualization Analysis

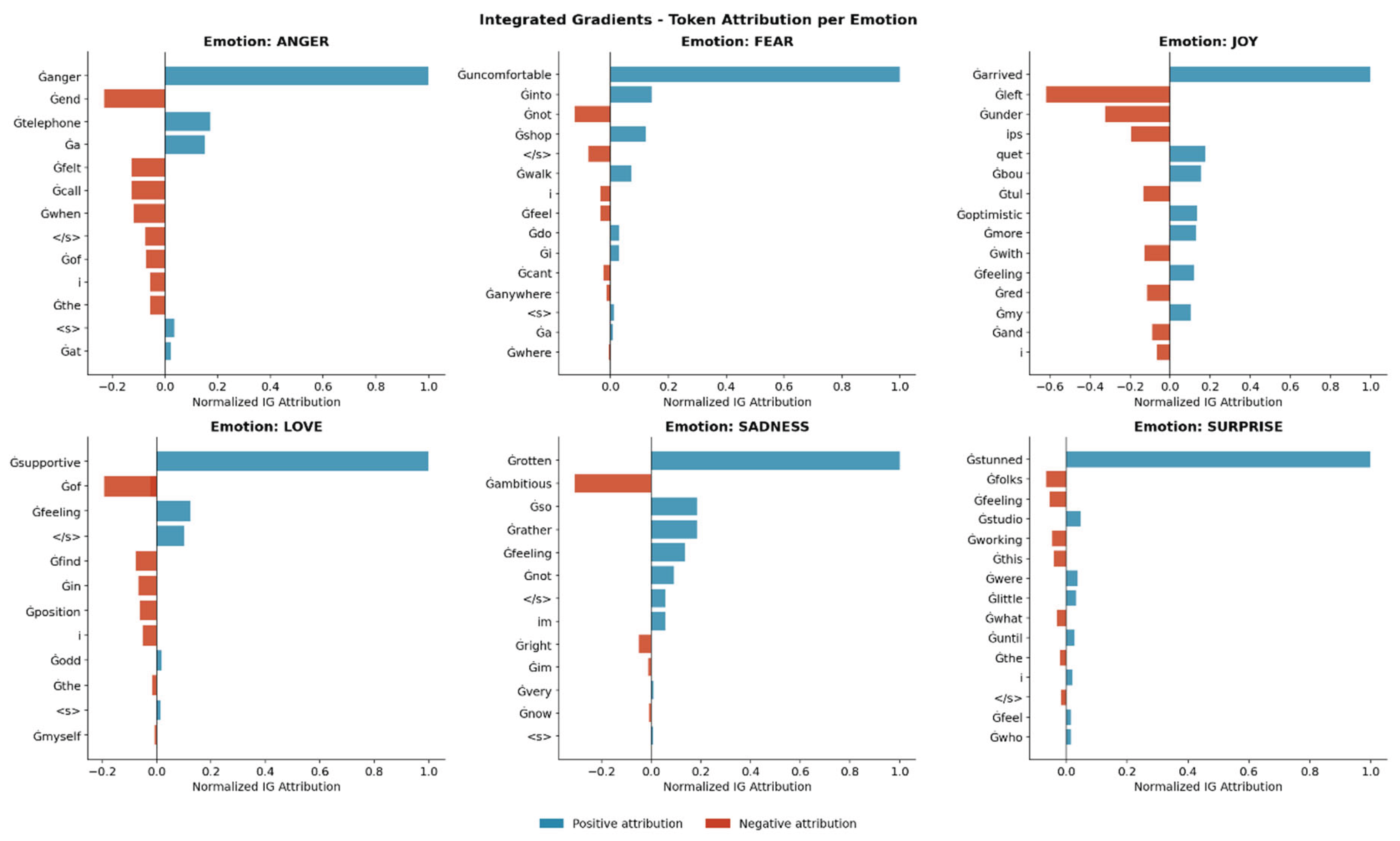

4.8. Integrated Gradients Analysis

5. Discussion

6. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Liu, Y.; Ott, M.; Goyal, N.; Du, J.; Joshi, M.; Chen, D.; Levy, O.; Lewis, M.; Zettlemoyer, L.; Stoyanov, V. RoBERTa: A Robustly Optimized BERT Pretraining Approach. arXiv 2019, arXiv:1907.11692.

- Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding. In Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies (NAACL-HLT), Minneapolis, MN, USA, 2–7 June 2019; pp. 4171–4186.

- Sundararajan, M.; Taly, A.; Yan, Q. Axiomatic Attribution for Deep Networks. In Proceedings of the 34th International Conference on Machine Learning (ICML), Sydney, Australia, 6–11 August 2017; Volume 70, pp. 3319–3328.

- Lundberg, S.M.; Lee, S.-I. A Unified Approach to Interpreting Model Predictions. In Advances in Neural Information Processing Systems (NeurIPS); Curran Associates: Red Hook, NY, USA, 2017; Volume 30, pp. 4765–4774.

- Ribeiro, M.T.; Singh, S.; Guestrin, C. “Why Should I Trust You?”: Explaining the Predictions of Any Classifier. In Proceedings of the 22nd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (KDD), San Francisco, CA, USA, 13–17 August 2016; pp. 1135–1144.

- Yan, X.; Liu, Z.; Wang, G. Emotion-RGC Net: A Novel Approach for Emotion Recognition in Social Media Using RoBERTa and Graph Neural Networks. PLOS ONE 2025, 20, e0318524.

- Chefer, H.; Gur, S.; Wolf, L. Transformer Interpretability Beyond Attention Visualization. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 20–25 June 2021; pp. 782–791.

- Deng, J.; Ren, F. A Survey of Textual Emotion Recognition and Its Challenges. IEEE Trans. Affect. Comput. 2023, 14, 49–67.

- Kamath, R.; Ghoshal, A.; Eswaran, S.; Honnavalli, P. An Enhanced Context-Based Emotion Detection Model Using RoBERTa. In Proceedings of the IEEE International Conference on Electronics, Computing and Communication Technologies (CONECCT), Bangalore, India, 8–10 July 2022.

- Kokhlikyan, N.; Miglani, V.; Martin, M.; Wang, E.; Alsallakh, B.; Reynolds, J.; Melnikov, A.; Klibert, N.; Fan, N.; Araya, S.; et al. Captum: A Unified and Generic Model Interpretability Library for PyTorch. arXiv 2020, arXiv:2009.07896.

- Salih, A.; Galazzo, I.B.; Cruciani, F.; Brusini, L.; Radeva, P.; Menegaz, G. A Perspective on Explainable Artificial Intelligence Methods: SHAP and LIME. Adv. Intell. Syst. 2023, 2400304.

- Vaswani, A.; Shazeer, N.; Parmar, N.; Uszkoreit, J.; Jones, L.; Gomez, A.N.; Kaiser, Ł.; Polosukhin, I. Attention Is All You Need. In Advances in Neural Information Processing Systems (NeurIPS); Curran Associates: Red Hook, NY, USA, 2017; Volume 30.

- Wolf, T.; Debut, L.; Sanh, V.; Chaumond, J.; Delangue, C.; Moi, A.; Cistac, P.; Rault, T.; Louf, R.; Funtowicz, M.; et al. Transformers: State-of-the-Art Natural Language Processing. In Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP): System Demonstrations, Online, 16–20 November 2020; pp. 38–45.

- Rathod, M.; Dalvi, C.; Kaur, K.; Patil, S.; Gite, S.; Kamat, P.; Kotecha, K.; Abraham, A.; Gabralla, L.A. Kids’ Emotion Recognition Using Various Deep Learning Models with Explainable AI. Sensors 2022, 22, 8066.

- Kusal, S.; Patil, S.; Choudrie, J.; Kotecha, K.; Vora, D.; Pappas, I. A Review on Text-Based Emotion Detection: Techniques, Applications, Datasets, and Future Directions. arXiv 2022, arXiv:2205.03235.

- Cahyani, D.E.; Patasik, I. Performance Comparison of TF-IDF and Word2Vec Models for Emotion Text Classification. Bull. Electr. Eng. Inform. 2021, 10, 2780–2788.

- Xu, G.; Meng, Y.; Qiu, X.; Yu, Z.; Wu, X. Sentiment Analysis of Comment Texts Based on BiLSTM. IEEE Access 2019, 7, 51522–51532.

- Xiaoyan, C.; Qihua, L.; Jianguo, Y. GloVe-CNN-BiLSTM Model for Sentiment Analysis on Text Reviews. J. Sensors 2022, 7212366.

- Sanh, V.; Debut, L.; Chaumond, J.; Wolf, T. DistilBERT, a Distilled Version of BERT: Smaller, Faster, Cheaper and Lighter. arXiv 2019, arXiv:1910.01108.

- Areshey, A.; Mathkour, H. Transfer Learning for Sentiment Classification Using Bidirectional Encoder Representations from Transformers (BERT) Model. Sensors 2023, 23, 5232.

- Adoma, A.F.; Henry, N.; Chen, W. Comparative Analyses of BERT, RoBERTa, DistilBERT, and XLNet for Text-Based Emotion Recognition. In Proceedings of the 17th International Computer Conference on Wavelet Active Media Technology and Information Processing (ICCWAMTIP), Chengdu, China, 18–19 December 2020; pp. 117–121.

- Cortiz, D. Exploring Transformers in Emotion Recognition: A Comparison of BERT, DistilBERT, RoBERTa, XLNet and ELECTRA. In Proceedings of the 3rd International Conference on Control, Robotics and Intelligent System (CCRIS), Virtual, August 2022.

- Raza, M.A.; Fränti, P. A Hierarchical Gamma Mixture Model-Based Method for Classification of High-Dimensional Data. Entropy 2019, 21, 906.

- Azhar, M.; Amjad, A.; Dewi, D.A.; Kasim, S. A Systematic Review and Experimental Evaluation of Classical and Transformer-Based Models for Urdu Abstractive Text Summarization. Information 2025, 16, 784.

- Balaji, R.L.; Thiruvenkataswamy, C.S.; Batumalay, M.; Duraimutharasan, N.; Devadas, A.D.T.; Yingthawornsuk, T. A Study of Unified Framework for Extremism Classification, Ideology Detection, Propaganda Analysis, and Flagged Data Detection Using Transformers. J. Appl. Data Sci. 2025, 6, 1791–1810.

- Azhar, M.; Amjad, A.; Dewi, D.A.; Kasim, S. Efficient Transformer-Based Abstractive Urdu Text Summarization Through Selective Attention Pruning. Information 2025, 16, 991.

- Cheema, A.S.; Azhar, M.; Arif, F.; ul Haq, Q.M.; Sohail, M.; Iqbal, A. EGPT-SPE: Story Point Effort Estimation Using Improved GPT-2 by Removing Inefficient Attention Heads. Appl. Intell. 2025, 55, 994.

| Emotion Class | Train | Validation | Test | Total | % |
| --- | --- | --- | --- | --- | --- |
| Anger | 2,062 | 274 | 275 | 2,611 | 14.5 |
| Fear | 1,555 | 207 | 224 | 1,986 | 11.0 |
| Joy | 4,155 | 551 | 695 | 5,401 | 30.0 |
| Love | 1,027 | 136 | 159 | 1,322 | 7.3 |
| Sadness | 2,104 | 279 | 581 | 2,964 | 16.5 |
| Surprise | 172 | 23 | 16 | 211 | 1.2 |
| Total | 16,000 | 2,000 | 2,000 | 20,000 | 100 |

| Hyperparameter / Setting | Value |
| --- | --- |
| Base Model | RoBERTa-base (125M parameters) |
| Tokenizer | Byte-Pair Encoding (BPE), max length 128 tokens |
| Optimizer | AdamW |
| Learning Rate | 2 × 10⁻⁵ |
| Weight Decay | 0.01 |
| Batch Size | 32 (train), 64 (eval) |
| Max Epochs | 10 |
| Early Stopping Patience | 3 epochs (based on val F1) |
| Dropout | 0.1 |
| Gradient Clipping | max_norm = 1.0 |
| LR Scheduler | Linear warmup with decay |
| Warmup Steps | 10% of total training steps |
| Random Seed | 42 |
| Hardware | NVIDIA Tesla T4 GPU (Google Colab) |
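The scheduler rows in the table above (peak learning rate 2 × 10⁻⁵, warmup over 10% of the training steps, then decay) can be sketched as a plain function of the step index. This is a minimal illustration under assumptions: the table does not say how the decay is shaped, so a linear decay to zero is assumed here, similar in shape to `get_linear_schedule_with_warmup` in Hugging Face Transformers. The function name and the total of 5,000 steps are hypothetical.

```python
def linear_warmup_decay_lr(step: int,
                           total_steps: int,
                           peak_lr: float = 2e-5,
                           warmup_frac: float = 0.10) -> float:
    """Learning rate at a given optimizer step.

    Rises linearly from 0 to peak_lr over the warmup phase, then falls
    linearly back to 0 at total_steps (the decay shape is an assumption).
    """
    warmup_steps = int(warmup_frac * total_steps)
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    remaining = max(0, total_steps - step)
    return peak_lr * remaining / max(1, total_steps - warmup_steps)


# Hypothetical run length, for illustration only.
TOTAL_STEPS = 5000

for s in (0, 250, 500, 2750, 5000):
    print(s, linear_warmup_decay_lr(s, TOTAL_STEPS))
```

With these settings the rate peaks at step 500, the end of warmup, and is back to half of the peak at step 2,750, midway through the decay phase.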

| Emotion Class | Precision | Recall | F1-Score | Support |
| --- | --- | --- | --- | --- |
| Anger | 0.93 | 0.91 | 0.92 | 275 |
| Fear | 0.88 | 0.89 | 0.88 | 224 |
| Joy | 0.96 | 0.94 | 0.95 | 695 |
| Love | 0.81 | 0.84 | 0.82 | 159 |
| Sadness | 0.95 | 0.97 | 0.96 | 581 |
| Surprise | 0.77 | 0.80 | 0.79 | 16 |
| Macro Avg | 0.88 | 0.89 | 0.89 | 1,950 |
| Weighted Avg | 0.925 | 0.924 | 0.925 | 1,950 |
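The difference between the macro and weighted averages in the table above comes down to how each class is weighted. The sketch below recomputes both from the per-class rows. The helper names are illustrative, and because the per-class values are already rounded to two decimals, the recomputed weighted averages can only be expected to approximate the reported ones.

```python
# Per-class (precision, recall, F1, support) copied from the table above.
report = {
    "Anger":    (0.93, 0.91, 0.92, 275),
    "Fear":     (0.88, 0.89, 0.88, 224),
    "Joy":      (0.96, 0.94, 0.95, 695),
    "Love":     (0.81, 0.84, 0.82, 159),
    "Sadness":  (0.95, 0.97, 0.96, 581),
    "Surprise": (0.77, 0.80, 0.79, 16),
}

PRECISION, RECALL, F1, SUPPORT = range(4)
total_support = sum(row[SUPPORT] for row in report.values())


def macro_avg(col: int) -> float:
    """Unweighted mean over classes: every class counts equally."""
    return sum(row[col] for row in report.values()) / len(report)


def weighted_avg(col: int) -> float:
    """Support-weighted mean: frequent classes dominate the result."""
    return sum(row[col] * row[SUPPORT] for row in report.values()) / total_support


for name, col in (("precision", PRECISION), ("recall", RECALL), ("f1", F1)):
    print(f"{name}: macro={macro_avg(col):.3f} weighted={weighted_avg(col):.3f}")
```

The macro average is pulled down by the small Love and Surprise classes, while the weighted average is dominated by Joy and Sadness, which together account for most of the test support.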

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.