Appendix A. More Related Work

While early financial LLM benchmarks made progress on credit risk evaluation, several other related works also merit attention. BizFinBench offers 6,781 Chinese business-oriented queries covering numerical computation, reasoning, information extraction, and prediction [4]. Golden Touchstone builds on this with a bilingual suite addressing eight core financial NLP tasks [20]. FinMME tackles multimodal chart–text reasoning with over 11,000 samples across 18 sub-domains [21], while FinDABench focuses on numerical analysis, anomaly detection, and report generation [12]. More comprehensive efforts like FinTral/FinSet bring together nine task types across 23 datasets to assess financial QA, extraction, and misunderstanding detection [11]. Other works emphasize the value of incorporating narrative and textual data into credit risk models [22,23]. Studies such as Drinkall et al. [24], Golec and AlabdulJalil [25], and Bagalkotkar et al. [26] demonstrate that integrating borrower narratives with traditional financial indicators yields significant improvements in prediction accuracy by capturing deeper insights into borrower behavior and contextual factors.

Table A1.

Comparison of Recent Financial LLM Benchmarks and Reasoning Frameworks.


| Benchmark/Model | Key Focus | Language/Modality | Notable Contribution |
|---|---|---|---|
| BizFinBench | Real-world business queries | Chinese / Text + Tables | Introduces IteraJudge for automated bias-reduced evaluation via iterative refinement. |
| CCLUPE | Credit transaction logs | Chinese / Text + Timeseries | Focuses on multi-stage reasoning and causal inference for credit risk appraisal. |
| Dianjin-R1 | Complex financial reasoning | Bilingual / Text | Enhances reasoning via dual-reward reinforcement learning and structured supervision. |
| FinRAGBench-V | Multimodal RAG | Bilingual / Text + Tables | Evaluates RAG specific to multimodal financial contexts. |

Appendix A.1. Datasets and Methodological Tools

Beyond task-specific benchmarks, several foundational datasets and tools have been developed to support LLM-based credit risk assessment. These resources provide essential infrastructure for evaluating text understanding, structured data reasoning, and behavioral inference in financial applications. Existing work encompasses three key directions: (1) loan document understanding, which focuses on contract analysis and financial document comprehension [27]; (2) time-series and transaction data modeling, which addresses the temporal dynamics of financial behavior [28]; and (3) financial-domain LLM frameworks with RAG integration, which enhance model reliability through external knowledge retrieval [29,30].

Appendix B. Expert Consultation Process Record and Annotation

The design and validation of our research framework benefited significantly from consultations with senior professionals at one of the largest financial institutions, which serves over 2 billion users globally. Through structured interviews with industry specialists, we gathered critical insights into consumer credit evaluation. These expert perspectives directly shaped the development of CCLUPE. Our consultation panel comprised seasoned specialists across credit underwriting, risk control, and lending operations, each with 8 to over 15 years of hands-on experience, as Table A2 shows.

Table A2.

Professional Profiles Summary.


| Summarized Professional Profiles |
|---|
| Profile A: Senior Credit Underwriting Specialist (10+ yrs). Expert in loan approval processes and comprehensive bank statement analysis. |
| Profile B: Risk Control Expert (8+ yrs). Specializing in strategic policy design and risk management workflow optimization. |
| Profile C: Risk System Architect (10+ yrs). Proven track record in developing and scaling automated decisioning systems. |
| Profile D: Credit Review Analyst (6+ yrs). Focused on detailed credit reporting and complex borrower risk assessment. |
| Profile E: Micro-enterprise Credit Specialist (7+ yrs). Skilled in small business underwriting and financial statement audit. |
| Profile F: Risk Operations Manager (5+ yrs). Expert in portfolio monitoring, early warning indicators, and collection strategies. |

Key findings from our expert consultations highlighted several critical aspects:

Structured Credit Assessment Workflow: Experts emphasized the sequential nature of credit evaluation, typically beginning with identity verification and credit report review before proceeding to income validation and repayment capacity analysis. This insight directly influenced our hierarchical task design in CCLUPE, ensuring alignment with real-world underwriting workflows.

Document and Statement Interpretation: Credit professionals rely heavily on bank statements, financial documents, and tabular data for income verification and cash flow analysis. Experts A and E particularly noted that accurate interpretation of transaction records and financial statements is fundamental to credit decisions.

Multi-dimensional Cross-validation: Expert D highlighted the critical practice of cross-referencing multiple data sources, including credit reports, bank statements, and employment verification, to detect inconsistencies and potential fraud. This insight reinforced our inclusion of multi-document reasoning tasks and the integration of consistency-checking mechanisms in our evaluation framework.

Risk Policy and Regulatory Compliance: Experts B and F emphasized the essential role of understanding risk control policies, regulatory requirements, and institution-specific credit guidelines. This validated our approach of incorporating policy-aware evaluation criteria and domain-specific knowledge assessment across various lending scenarios.

Data Quality and Anomaly Detection: Multiple experts noted the prevalence of data quality issues in real-world credit applications, including incomplete documentation, inconsistent information, and potential fabrication. Expert C particularly stressed the importance of identifying anomalies in automated decisioning systems. This observation supported our decision to include robustness testing and misunderstanding detection metrics in our benchmark design.

Borrower Segmentation Complexity: Experts A and E highlighted significant differences in assessment approaches between personal loans and small business credit, noting that SME lending requires additional evaluation of business viability and industry-specific risks. This insight informed our comprehensive coverage of diverse borrower profiles and lending scenarios.

These expert insights were instrumental in developing CCLUPE’s comprehensive structure, ensuring its relevance to real-world consumer credit operations while maintaining high standards of practical applicability and evaluation rigor. The consultation process validated our approach to creating a benchmark that effectively assesses AI systems’ capabilities in handling complex credit assessment tasks across the full lending lifecycle.

We recruited professional annotators through the platform and the university recruitment system and provided compensation aligned with standard university research assistant rates, ensuring the payment was fair and adequate for the local cost of living and the participants’ demographic.

Appendix C. Dataset Construction Prompt Sample

Appendix C.1. Personal Client Profile: Not-Overdue Clients

Table A3 documents the localized and translated prompt configuration used to generate synthetic transaction logs for the CCLUPE benchmark.

Table A3.

LLM Prompt for Generating Non-Overdue Personal Credit Logs.


| Augmentation Strategy: Pattern-Consistent Flow Generation |

|---|

| System Overview: |

| You are a financial credit analysis expert. Based on the provided raw bank transaction logs of high-credit-rating clients, you must synthesize highly realistic new client data that maintains the original statistical distribution but simulates unique behavioral features. |

| Translation of Input Prompt: |

| "The following content contains the complete raw transaction data for a non-overdue personal client. Please meticulously analyze its integrity, field semantics, data distribution, and transaction characteristics (e.g., consistent repayment records, absence of overdue status flags): |

| [Raw Data Context Provided Hierarchically] |

| Please generate a new set of personal transaction data for Person ID: {ID}, strictly adhering to: |

| 1. Structural Integrity: Headers, field counts, and data types must be identical to the original; do not add or remove columns. |

| 2. Volume: Generate between 50 and 300 independent transaction records. |

| 3. Temporal Distribution: Transactions should be evenly distributed throughout the 2023 calendar year (Jan–Dec). |

| 4. Data Variance: Textual fields (merchant names, remarks) must be distinct from the original samples to avoid data leakage. |

| 5. Logic Consistency: No null values. Amounts must fluctuate realistically, transaction types must be diverse, and logical relationships between associated fields (e.g., Balance = Prev_Balance + Amount) must remain self-consistent. |

| Return the result directly in tab-separated format (Header + Data) without additional conversational text." |

Appendix C.2. Synthesis and Evaluation Review

Pattern Analysis: The generation process for non-overdue clients focuses on Repayment Regularity and Cash Flow Stability. Unlike overdue samples, these records prioritize the cyclical nature of income (e.g., monthly payroll) and the punctuality of high-priority expenses like credit card repayments and utilities.

Merchant Diversification: To ensure the benchmark’s robustness, the LLM is instructed to generate a wide variety of merchant names (Textual fields). This forces models to generalize spending habits rather than relying on keyword matching of known "safe" merchants.

Logical Validation: Post-generation scripts (as seen in the Python implementation) enforce a strict check on the number of rows. This ensures that the generated credit history is long enough to exhibit long-term financial patterns, such as "Nighttime Spending" or "Investment Redemption," which are key sub-domains of the CCLUPE benchmark.

Temporal Coherence: By enforcing a distribution across the full year of 2023, the dataset captures potential seasonal behaviors (e.g., increased utility bills in winter or travel expenses in summer) that are critical for evaluating an LLM’s understanding of time-series transactional data.
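The post-generation checks described in this subsection can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' script: the column names `date`, `amount`, and `balance`, the ISO date format, and the function name `validate_generated_log` are hypothetical, the 50 to 300 row bounds are taken from the prompt in Table A3, and full-year coverage is approximated by requiring at least one transaction in every month.

```python
import csv
import io
from datetime import date
from decimal import Decimal, InvalidOperation

# Hypothetical column names; the real CCLUPE schema is not given in the text.
DATE_COL, AMOUNT_COL, BALANCE_COL = "date", "amount", "balance"


def validate_generated_log(tsv_text, expected_header, min_rows=50, max_rows=300, year=2023):
    """Return a list of problems found; an empty list means every check passed."""
    rows = list(csv.reader(io.StringIO(tsv_text), delimiter="\t"))
    if not rows or rows[0] != expected_header:
        return ["header does not match the source schema"]

    records = rows[1:]
    problems = []
    if not min_rows <= len(records) <= max_rows:
        problems.append(f"row count {len(records)} outside [{min_rows}, {max_rows}]")

    i_date = expected_header.index(DATE_COL)
    i_amount = expected_header.index(AMOUNT_COL)
    i_balance = expected_header.index(BALANCE_COL)
    prev_balance = None
    months_seen = set()

    for n, rec in enumerate(records, start=1):
        # Same field count as the header and no empty cells.
        if len(rec) != len(expected_header) or any(not cell.strip() for cell in rec):
            problems.append(f"row {n}: wrong field count or empty value")
            prev_balance = None
            continue
        try:
            day = date.fromisoformat(rec[i_date])
            amount = Decimal(rec[i_amount])
            balance = Decimal(rec[i_balance])
        except (ValueError, InvalidOperation):
            problems.append(f"row {n}: unparseable date or number")
            prev_balance = None
            continue
        # Every transaction must fall inside the target calendar year.
        if day.year != year:
            problems.append(f"row {n}: date {day} outside {year}")
        months_seen.add(day.month)
        # Balance = Prev_Balance + Amount, checked in row order.
        if prev_balance is not None and balance != prev_balance + amount:
            problems.append(f"row {n}: balance is not previous balance plus amount")
        prev_balance = balance

    if len(months_seen) < 12:
        problems.append(f"only {len(months_seen)} of 12 months are covered")
    return problems
```

`Decimal` is used instead of `float` so that the balance identity can be tested for exact equality. A stricter test of "even" distribution (for example, bounding the per-month share of rows) would be a natural extension.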

Appendix C.3. Personal Client Profile: Overdue Clients

Table A4 details the prompt engineering strategy used to generate synthetic transaction logs for clients with historical default patterns. The focus is on simulating behavioral indicators of credit risk without explicitly labeling transactions as "overdue."

Table A4.

LLM Prompt for Generating Overdue-Prone Personal Credit Logs.


| Augmentation Strategy: Feature-Induced Risk Simulation |

|---|

| System Overview: |

| You are a senior risk management consultant. Your task is to analyze raw bank statements of clients who eventually defaulted and synthesize realistic new datasets for ID: {ID}. The generated data must exhibit subtle precursors to financial distress while remaining structurally valid. |

| Translation of Input Prompt: |

| "The following content provides the complete raw transaction data for a personal client with a history of overdue payments. Please analyze its structure, field semantics, data distribution, and specific transaction characteristics: |

| [Full Transactional Context Provided] |

| Generate a new set of original transactional records for Person ID: {person_id}, strictly adhering to: |

| 1. Structural Adherence: Column headers, field counts, and data types must perfectly match the original; no deviations permitted. |

| 2. Data Volume: Synthesize between 50 and 300 independent transaction records. |

| 3. Temporal Spread: Ensure transactions are evenly distributed across the 2023 calendar year (Jan–Dec). |

| 4. Field Uniqueness: All text-based fields (e.g., merchant names, remarks) must be entirely different from the source samples to ensure data diversity. |

| 5. Arithmetic Logic: Ensure zero null values. Balance trajectories must be mathematically sound (e.g., refund amounts must be negative and reflect in the balance logic). |

| 6. Latent Feature Construction: The content should subtly reflect characteristics associated with potential delinquency (e.g., irregular income, high-frequency small loans, or spikes in nighttime spending), without explicitly using words like ’Overdue’ or ’Default’. |

| Return ONLY tab-separated content (Header + Data) with no conversational preamble." |

Appendix C.4. Risk Pattern Synthesis and Evaluation

Behavioral Indicators: The synthesis of "Overdue" profiles shifts from regular consumption to Spike Recognition and Financial Stretching. The model is tasked with generating logs that include "Microloan" signals and "Spikes" in spending that exceed typical balance buffers, simulating the "liquidity pressure" mentioned in the CCLUPE core domains.

Implicit Feature Learning: A key requirement of the prompt is the exclusion of explicit "Overdue" keywords. This is designed to test the LLM’s capability in Causal Inference and Multi-stage Reasoning. Benchmarking models must infer risk from the sequence and nature of transactions (e.g., a sudden increase in revolving credit repayments) rather than simple text classification.

Contextual Integrity: By utilizing full-year records (Jan–Dec 2023), the generated samples provide sufficient historical depth to evaluate "Seasonality" and "Repayment Behavior Patterns." The Python implementation enforces a strict tab-separated format to ensure that multi-modal models can accurately parse the tabular time-series data without alignment errors.

Data Leakage Prevention: The strict requirement for "completely different merchant names" ensures that the benchmark remains a test of latent financial behavior recognition rather than a retrieval task. This forces the evaluator model to analyze the intent behind the transaction (e.g., a high-interest lender) regardless of the merchant’s pseudonym.
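The two textual constraints discussed above (no reuse of source text and no explicit risk keywords) lend themselves to a simple automated check. The sketch below is illustrative only: the field names `merchant` and `remark` and the function name `leakage_report` are assumptions, and the English terms from the translated prompt stand in for whatever terms the original-language prompt forbids.

```python
# English stand-ins for the forbidden words named in the translated prompt.
FORBIDDEN_TERMS = ("overdue", "default")


def leakage_report(generated_rows, source_rows, text_fields=("merchant", "remark")):
    """Flag text reused from the source sample and rows containing forbidden terms.

    Both arguments are lists of dicts keyed by column name (hypothetical schema).
    """
    source_values = {
        row[field].strip().lower() for row in source_rows for field in text_fields
    }
    reused = set()
    forbidden_hits = []
    for index, row in enumerate(generated_rows):
        for field in text_fields:
            value = row[field].strip().lower()
            if value in source_values:
                reused.add(row[field])
            if any(term in value for term in FORBIDDEN_TERMS):
                forbidden_hits.append((index, field, row[field]))
    return {"reused_text": sorted(reused), "forbidden_hits": forbidden_hits}
```

A generated log would pass this check when both lists in the returned dictionary are empty. Exact string matching is the simplest possible criterion; near-duplicate merchant names would need a fuzzier comparison.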

Appendix C.5. Micro-Enterprise Profile: Not-Overdue SME Clients

Table A5 documents the prompt engineering framework for generating synthetic transaction logs for Small and Micro Enterprises (SMEs) with healthy credit profiles. The logic centers on business-related identifiers and operational health signals.

Table A5.

LLM Prompt for Generating Healthy Micro-Enterprise Credit Logs.


| Augmentation Strategy: Operational Attribute Simulation |

|---|

| System Overview: |

| You are an expert in industrial finance and SME credit underwriting. Your task is to analyze the bank statements of healthy micro-enterprises (including individual businesses and small factories) and synthesize a new dataset for SME ID: {micro_id}. The logs must reflect robust commercial activity and financial discipline. |

| Translation of Input Prompt: |

| "The following content contains the complete raw transaction data for a non-overdue micro-enterprise/individual business owner. Please carefully analyze its structural integrity, field definitions, and typical indicators of healthy business operations: |

| [Full SME Transactional Context Provided] |

| Generate a new set of original transaction data for SME Subject ID: {micro_id}, strictly adhering to: |

| 1. Structural Preservation: Column headers, field counts, and data types must be identical to the provided original; no modifications allowed. |

| 2. Business Volume: Generate 50 to 300 independent records that reflect the typical transaction frequency of a healthy SME. |

| 3. Temporal Consistency: Transactions must span the full year of 2023 (Jan–Dec). |

| 4. Merchant Diversity & SME Identity: |

| – Counterparty Names: Must incorporate SME-specific identifiers such as ’Co., Ltd.’, ’Trading Store’, ’Individual Proprietorship’, ’Factory’, etc. |

| – Remarks: Must indicate business-specific scenarios (e.g., procurement, inventory, payroll, operational rent). |

| 5. Financial Logic: No empty values; transaction amounts must be consistent with micro-enterprise scales. Balance logic must remain self-consistent throughout the sequence. |

| Return the response using a tab-separated format (Header + Data) with no additional explanation." |

Appendix C.6. Business Context and Domain Analysis

Operational Health Recognition: For SMEs, the prompt focuses on Stability and Regularity of Cash Flow. Unlike personal accounts, SME logs prioritize "Essentials" (operational rent, utilities) and "Repayments" (business loans). This is essential for the CCLUPE benchmark to evaluate if a model can distinguish between personal consumption and commercial procurement.

Identity-Specific Labeling: As specified in the Python implementation’s prompt, the LLM must generate counterparties with suffixes such as "Co., Ltd." or "Factory." This ensures that the generated data captures the "Alignment" domain of CCLUPE—verifying if the transactional behavior (e.g., bulk procurement of raw materials) is actually consistent with the stated business type of the micro-subject.

Scalability and Diversity: The use of `ThreadPoolExecutor` to generate data for 225 subjects (IDs 276–500) ensures that the benchmark provides a statistically significant population of SMEs. This volume allows for rigorous testing of an LLM’s Analysis & Synthesis capabilities by presenting various SME industry types, from services to small-scale manufacturing.

Benchmark Strategic Value: In the Chinese credit market context, where formal credit scores for small business owners are often incomplete, these transaction logs serve as the primary evidence of creditworthiness. By ensuring "completely differentiated textual fields," the prompt forces the evaluator model to recognize "Specialised Cash Flow Conduct"—such as seasonal restocking patterns around the National Day or Spring Festival—rather than relying on fixed merchant names.
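The concurrent generation mentioned above can be pictured with the following sketch. Only the use of `ThreadPoolExecutor` and the ID range 276–500 come from the text; the function names, the worker count, and the placeholder `generate_sme_log` (which stands in for the actual LLM call) are assumptions made for illustration.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed

SME_IDS = range(276, 501)  # IDs 276-500 inclusive, i.e. 225 subjects


def generate_sme_log(micro_id):
    """Placeholder for the LLM request; a real worker would send the prompt
    from Table A5 with {micro_id} filled in and return the tab-separated reply."""
    return f"date\tamount\tbalance\n# synthetic log for SME {micro_id}"


def generate_all(ids, worker=generate_sme_log, max_workers=8):
    """Run `worker` for every ID in a thread pool; collect results and failures."""
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(worker, micro_id): micro_id for micro_id in ids}
        for future in as_completed(futures):
            micro_id = futures[future]
            try:
                results[micro_id] = future.result()
            except Exception as exc:  # keep going; failed IDs can be retried later
                failures[micro_id] = exc
    return results, failures
```

Threads suit this workload because each task spends most of its time waiting on a network response. Recording failures per ID, rather than aborting the batch, makes it straightforward to re-run only the subjects whose generation failed.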

Appendix C.7. Micro-Enterprise Profile: Overdue SME Clients

Table A6 documents the prompt engineering strategies used to simulate transactional distress in Small and Micro Enterprises (SMEs). This prompt requires the LLM to blend "operational scenarios" with "latent delinquency signatures" while maintaining business-specific terminology.

Table A6.

LLM Prompt for Generating Overdue-Prone Micro-Enterprise Credit Logs.


| Augmentation Strategy: Business Cycle & Distress Simulation |

|---|

| System Overview: |

| You are a specialist in commercial credit risk evaluation. Your objective is to analyze transaction logs of SMEs (small companies, trading stores, or factories) with delinquency histories and synthesize a new dataset for SME ID: {micro_id}. The logs must reflect genuine business operations while embedding precursors to credit failure. |

| Translation of Input Prompt: |

| "The following content provides the complete raw transaction data for an overdue micro-enterprise/individual business owner. Please meticulously analyze its structural integrity, field semantics, specific business transaction characteristics, and patterns associated with credit delinquency: |

| [Full SME Transactional Context and Default History Provided] |

| Generate a new set of original transaction data for SME Subject ID: {micro_id}, strictly adhering to: |

| 1. Structural Fidelity: Column headers, field counts, and data types must precisely match the original; do not alter the schema. |

| 2. Operational Volume: Generate 50 to 300 independent records that reflect the business activities of an SME under financial stress. |

| 3. Temporal & Cyclical Distribution: Spread transactions across Jan–Dec 2023, ensuring business-specific cycles (e.g., seasonal procurement) are visible. |

| 4. Differentiated SME Identity: |

| – Counterparty Names: Must include commercial identifiers such as ’Company’, ’Trading Firm’, ’Proprietorship’, or ’Factory’. |

| – Remarks: Must describe plausible commercial scenarios (e.g., supply chain payments, rent, or logistics fees). |

| 5. Logical Consistency: No empty values allowed. Transaction amounts must be appropriate for small-scale operations. Associated fields (e.g., balance and loan repayment logic) must be calculated correctly. |

| 6. Latent Overdue Features: Infuse the logs with subtle indicators of financial distress, such as high interest-debt service, irregular revenue patterns, or excessive inventory costs relative to sales, without explicitly using words like ’overdue’ or ’default’. |

| Return the response as tab-separated values (Header + Data) without any conversational text." |

Appendix C.8. Synthesis Logic and Macro-Domain Review

Analysis of Financial Distress: For SME subjects, the prompt focuses on Cash Flow Structure and Concentration. The transition to "overdue" is often represented by a shift in counterparty dependencies or a decline in "stability and regularity" of revenue. These latent features allow the benchmark to evaluate if LLMs can perform Multi-stage Reasoning to detect business failure precursors.

Contextual Business Scenarios: In accordance with Rule 4 of the Python script, the LLM is forced to define "remarks" that ground the data in reality. For overdue SMEs, this might include "Urgent Inventory Repayment" or "Property Rent Adjustments," which test the LLM’s Comprehension & Calculation capabilities regarding business operating margins and liquidity pressure.

Benchmark Consistency: The generation of data for 225 SME subjects (IDs 26–250) mirrors the scale of the non-overdue population. This balanced distribution is vital for calculating the Log-Score mentioned in the CCLUPE core framework, as it ensures the model is evaluated on its ability to minimize "misunderstanding penalties" (false positives) in a mixed dataset.

Domain-Specific Penalties: Because real-world SME data carry their own complexities, the synthetic generation enforces Arithmetic Logic consistency (e.g., negative refund values). This provides a clean but challenging "trimodal" environment (text, tabular, and time-series) to test the robustness of financial LLMs against professional underwriting standards.

Appendix C.9. Question Generation: Personal Underwriting Audit

Table A7 documents the prompt used to transform raw personal transaction logs into a structured 15-question credit audit. The prompt enforces hierarchical categorization and cognitive complexity mapping.

Table A7.

LLM Prompt for Generating Personal Credit Underwriting Questions.


| Instruction Set: Hierarchical Underwriting Question Synthesis |

|---|

| Task Overview: |

| Based on the provided bank statement data, generate 15 specialized multiple-choice questions (at least one multi-select). Questions must be grounded in the data but satisfy the "Zero Data Leakage" rule for the question stem. |

| Translation of Input Prompt (Core Requirements): |

| "You are provided with a personal transaction table. Generate 15 questions based on the following classification logic: |

| 1. Primary Category (8 Underwriting Perspectives): |

| Select from: Consumption Stability, Essential Expenditure Regularity, Repayment Patterns, High-Risk Merchant Transactions, Discretionary Concentration, Transaction Failures & Liquidity Pressure, Fund Retention Habits, and ’Others’ (specifically labeled in parentheses). |

| 2. Secondary Category (24 Classification Logics): |

| Annotate each question with its specific logic mapping (e.g., ’Consumption Stability-Payroll regularity’). |

| 3. Strict Prohibitions: |

| – No Specific Numbers in Stems: Do not use amounts or specific dates in the question text. Use relative references like ’the repayment recorded in the table’ or ’a certain date.’ |

| – No Intermediate Disclosure: Do not reveal the number of transactions required for calculation in the stem. |

| 4. Complexity & Cognition Mapping: |

| – Difficulty Levels (5:5:5 ratio): Low (≥2 computation steps), Medium (≥3 steps), High (≥5 steps). |

| – Cognitive Dimensions: Memory & Recognition, Comprehension & Calculation, Analysis & Synthesis, Evaluation & Inference. |

| Standardized Format: |

| 8 Categories: [Type] | 24 Logics: [Class-Subclass] | Difficulty: [L/M/H] | Cognition: [Dimension] |

| [Question Body] |

| a. [Opt] b. [Opt] c. [Opt] d. [Opt] |

| Correct Answer: X" |

Appendix C.10. Cognitive Complexity and Logical Parsing

Cognitive Dimension Distribution: The generation logic specifically targets the "Integrative Nature" of credit evaluation. As seen in the Python implementation’s `parse questions` function, questions are categorized into four cognitive levels following a modified Bloom’s taxonomy. This ensures the benchmark evaluates not just data retrieval, but the model’s ability to synthesize evidence (e.g., "Analysis & Synthesis") to form a credit opinion.

Anti-Leakage Design: A distinct feature of the CCLUPE benchmark is the prohibition of specific numeric values in the question stems (absolute prohibition clause). By forcing the LLM to use "fuzzy positioning" (e.g., "the last transaction at merchant X"), the benchmark ensures that the evaluator model must truly parse the table to find the values, rather than relying on values mentioned in the question to guess the answer.

Difficulty Stratification: The prompt enforces a 5:5:5 ratio for difficulty. This stratification allows for a granular performance analysis. For example, "High Difficulty" questions require ≥5 calculation steps (e.g., cross-referencing multiple months of balance and aggregate repayments), which is often where standard LLMs fail on the CCLUPE leaderboard.

Domain Perspective Mapping: By mapping questions to "8 Underwriting Perspectives," the benchmark provides an industry-standard review. The `eight category` logic in the script ensures that "Other" categories (like "Buy Now Pay Later" or "Nighttime Spending") are explicitly captured, providing professional insights into latent borrower risk that traditional scorecards might overlook.
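To make the parsing and checking steps discussed in this subsection concrete, the sketch below parses one question in the standardized format of Table A7 and then verifies the 5:5:5 difficulty ratio and the no-digits rule for stems. It is a hedged illustration rather than the authors' parsing function: the names `parse_question_block` and `check_question_set`, the regular expressions, and the assumption that each question occupies a header line, one or more stem lines, one options line, and an answer line are all hypothetical.

```python
import re
from collections import Counter

HEADER_RE = re.compile(
    r"8 Categories:\s*(?P<category>.+?)\s*\|\s*"
    r"24 Logics:\s*(?P<logic>.+?)\s*\|\s*"
    r"Difficulty:\s*(?P<difficulty>[LMH])\s*\|\s*"
    r"Cognition:\s*(?P<cognition>.+)"
)
ANSWER_RE = re.compile(r"Correct Answer:\s*(?P<answer>[a-dA-D](?:\s*,?\s*[a-dA-D])*)")


def parse_question_block(block):
    """Parse one question: header line, stem line(s), options line, answer line."""
    lines = [ln.strip() for ln in block.strip().splitlines() if ln.strip()]
    if len(lines) < 4:
        raise ValueError("block does not follow the standardized format")
    header = HEADER_RE.match(lines[0])
    answer = ANSWER_RE.fullmatch(lines[-1])
    if header is None or answer is None:
        raise ValueError("block does not follow the standardized format")
    record = header.groupdict()
    record["stem"] = " ".join(lines[1:-2])
    record["options"] = lines[-2]
    # Multi-select answers such as "a, c" become ["a", "c"].
    record["answer"] = [c.lower() for c in re.findall(r"[a-dA-D]", answer["answer"])]
    return record


def check_question_set(records):
    """Check the 5:5:5 difficulty ratio and list stems that contain digits."""
    counts = Counter(r["difficulty"] for r in records)
    return {
        "difficulty_ok": counts == Counter({"L": 5, "M": 5, "H": 5}),
        "stems_with_digits": [
            i for i, r in enumerate(records) if re.search(r"\d", r["stem"])
        ],
    }
```

Treating any digit in a stem as a violation is deliberately conservative; it approximates the rule against specific amounts and dates and may over-flag stems that mention harmless numerals.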

Appendix C.11. Question Generation: Micro-Enterprise Credit Audit

Table A8 displays the prompt configuration for generating 15 specialized credit audit questions from raw SME operational logs. The prompt focuses on distinctive SME domains such as supply chain cycles and liquidity turnover.

Table A8.

LLM Prompt for Generating Micro-Enterprise (SME) Credit Audit Questions.


| Instruction Set: Complex Commercial-SME Underwriting Synthesis |

|---|

| Task Overview: |

| Analyze the provided micro-enterprise operational logs. Generate 15 multiple-choice questions (at least two multi-select). Questions must revolve around commercial scenarios: revenue collection frequency, procurement vs. sales alignment, upstream/downstream payment cycles, and tax/utility regularity. |

| Translation of Input Prompt (Core Requirements): |

| "You are provided with an SME operational transaction table. Generate 15 questions based on the following classification structure: |

| 1. Primary Category (7 SME Audit Perspectives): |

| Must cover all 20 sub-categories across 7 domains: |

| – Revenue/Expense Stability: Monthly frequency and industry-specific cost-to-income ratios. |

| – Cash Flow Health: Non-operational funds vs. operational capital and turnover efficiency. |

| – Business Activity: Daily transaction volume, QR code payment features, and nighttime trading records. |

| – Counterparty Risk: Relying on single-source suppliers and related-party transaction ratios. |

| – Payment Management: Account receivables/payables aging and tax/social security punctuality. |

| – Specialized Behaviors: Cash-out patterns, seasonal fluctuations, and transaction disputes. |

| – Loan Compliance: Operational loan repayment history and utility regularity. |

| 2. Functional Constraints (Anti-Guessing): |

| – Zero-Data Stem: No specific amounts, dates, or merchant names in the stems. Use relative locators like ’a certain partner recorded in the table.’ |

| – Step-Based Difficulty (5:5:5 Ratio): Low: ≥2-step judgment; Medium: ≥3-step analysis; High: ≥5-step calculation/inference. |

| Cognitive Dimensions: Memory & Recognition, Comprehension & Calculation, Analysis & Synthesis, Evaluation & Inference." |

Appendix C.12. Commercial Reasoning and SME Domain Analysis

Operational Scenario Alignment: As illustrated in the Python implementation, the prompt forces the LLM to avoid personal consumption narratives. Instead, it prioritizes Industry-Specific Ratios (e.g., procurement as a percentage of revenue). This is a vital metric in the CCLUPE benchmark for testing whether a financial LLM can distinguish between a healthy business cycle and a fraudulent or declining one.

Multi-Step Calculation Logic: The "High Difficulty" questions in the SME category are significantly more complex than personal ones. According to the script’s instruction for "≥5 steps," a single question may require the evaluator to: 1) identify all QR code receipts, 2) aggregate them by month, 3) calculate the mean, 4) compare that to the supplier payment cycle, and 5) evaluate the liquidity buffer. This directly tests the LLM’s Multi-modal/Multi-stage Reasoning capabilities.

Anti-Leakage & Qualitative Options: Consistent with the "Absolute Prohibition" clause in the prompt, options are often qualitative (e.g., "Matched with monthly revenue" vs. "Significantly exceeding revenue") rather than just numeric. This ensures that the model is evaluated on its Financial Knowledge and its ability to perform Causal Inference across the transaction timeline.

Diverse Commercial Perspectives: By covering 20 sub-categories (e.g., "Upstream/Downstream Aging Management"), the CCLUPE benchmark ensures that data-synthesis does not overlook the "latency" and "receivables" inherent to SME credit risk. The `parse questions` function in the provided script ensures that these labels are captured for diagnostic error analysis, allowing researchers to pinpoint which specific business logic causes LLM "misunderstandings."
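The five-step chain described under Multi-Step Calculation Logic can be worked through in code to show how much intermediate state a "High Difficulty" question demands. The sketch below is one possible reading, not the benchmark's reference solution: the `channel` labels, the dict-based schema, the comparison against mean monthly supplier payments, and the coverage threshold `min_cover` are all assumptions.

```python
from collections import defaultdict
from statistics import mean


def monthly_totals(transactions, channel):
    """Steps 1-2: keep one channel and sum absolute amounts per YYYY-MM."""
    totals = defaultdict(float)
    for tx in transactions:
        if tx["channel"] == channel:
            totals[tx["date"][:7]] += abs(tx["amount"])
    return dict(totals)


def qr_liquidity_check(transactions, min_cover=1.0):
    """Steps 3-5: average the monthly totals, compare, and judge the buffer."""
    qr = monthly_totals(transactions, "qr_receipt")
    supplier = monthly_totals(transactions, "supplier_payment")
    mean_qr = mean(qr.values()) if qr else 0.0                    # step 3
    mean_supplier = mean(supplier.values()) if supplier else 0.0  # step 4
    # Step 5: how many times average QR income covers average supplier outflow.
    cover = mean_qr / mean_supplier if mean_supplier else float("inf")
    return {
        "mean_monthly_qr": mean_qr,
        "mean_monthly_supplier": mean_supplier,
        "cover_ratio": cover,
        "buffer_adequate": cover >= min_cover,
    }
```

Because no step's output appears in the question stem, a model has to carry each intermediate value forward itself, which is the property the anti-leakage rules are meant to preserve.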

Appendix D. Dataset Details

The CCLUPE benchmark adopts a hierarchical taxonomy to map diverse transactional behaviors into actionable credit-risk signals. As detailed in the expert consultation process, indicators are partitioned into core knowledge domains and specialized behavioral sub-categories.

Main Behavioral Domains: The core evaluation focuses on seven primary domains that represent the most significant predictors of creditworthiness in the Chinese market:

Consumption Stability: Evaluates the variance and consistency of spending over time to infer income reliability.

Essential Expenditure Regularity: Monitors fixed costs (utilities, rent, tax) that indicate fundamental financial discipline.

Repayment Behavior Patterns: Specifically tracks loan-related logs to identify punctuality and debt-servicing intent.

High-Risk Merchant Activities: Identifies interactions with gambling, high-interest lending, or speculative investment platforms.

Discretionary Spending Concentration: Analyzes the proportion of non-essential vs. essential spending to assess luxury-led risk.

Liquidity Pressure and Transaction Failures: Records insufficient fund errors and abrupt balance drops.

Funds-Flow Timing and Consumption Lag: Analyzes the delta between income receipt and major expenditures.
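Two of the domains above lend themselves to simple numeric proxies. The sketch below is illustrative only: it assumes Consumption Stability can be approximated by the coefficient of variation of monthly spending, and Funds-Flow Timing by the number of days between an income receipt and the first large expenditure. The function names, the `threshold` parameter, and the sample values are hypothetical and do not describe how CCLUPE computes its indicators.

```python
from datetime import date
from statistics import mean, pstdev


def spending_cv(monthly_totals):
    """Coefficient of variation of monthly spending (lower means more stable)."""
    avg = mean(monthly_totals)
    if avg == 0:
        return 0.0
    return pstdev(monthly_totals) / avg


def income_to_spend_lag_days(income_date, expenditures, threshold):
    """Days from an income receipt to the first expenditure >= threshold.

    `expenditures` is a list of (date, amount) pairs. Returns None if no
    qualifying expenditure occurs on or after `income_date`.
    """
    later = sorted(
        d for d, amount in expenditures
        if amount >= threshold and d >= income_date
    )
    return (later[0] - income_date).days if later else None


# Made-up example values.
cv_flat = spending_cv([3000.0, 3000.0, 3000.0])
lag = income_to_spend_lag_days(
    date(2023, 3, 10),
    [(date(2023, 3, 8), 900.0), (date(2023, 3, 12), 50.0), (date(2023, 3, 15), 1200.0)],
    threshold=500.0,
)
```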

Indicator Mapping Table A9 provides a comprehensive overview of the indicators categorized by their relevance to Personal and Micro-enterprise profiles.

Cognitive Level Mapping In accordance with Bloom’s Taxonomy for financial reasoning, each question based on the above indicators is mapped to a cognitive depth:

1. Memory & Recognition: Identifying specific transaction types or merchant names within the log.

2. Comprehension & Calculation: Summarizing total spending or calculating debt-to-income ratios from raw entries.

3. Analysis & Synthesis: Detecting latent patterns (e.g., "seasonal restocking") across multiple months of data.

4. Evaluation & Inference: Making high-level risk judgments or predicting potential default based on observed behavioral anomalies.

Table A9.

Detailed taxonomy of CCLUPE behavioral–consumption indicators across main and secondary categories.

| Category | Behavioral Signals and Specialized Questions |
|---|---|
| Main Domains | |
| Consumption Stability | Frequency of daily spending, month-over-month variance, and lifestyle consistency. |
| Expenditure Regularity | Timeliness of utility payments (electricity, water, heating), and insurance continuity. |
| Repayment Patterns | Credit card repayment cycles, bill-period alignment, and partial vs. full settlement. |
| High-Risk Activities | Transactions involving speculative assets, high-leverage platforms, or risky merchants. |
| Spending Concentration | Counterparty dependency, merchant relationship depth, and category-specific spikes. |
| Liquidity Pressure | Transaction failures (insufficient funds), frequent small-value borrowing, and balance volatility. |
| Funds-Flow Timing | Income-to-expenditure lag, salary deposit recency, and cash flow alignment. |
| Secondary/Other | |
| Digital Footprint | Subscription service continuity, app engagement frequency, and digital wallet usage trends. |
| Temporal Patterns | Nighttime/early-morning consumption, seasonal spending spikes, and holiday surges. |
| Locational Logic | Off-location (travel) consumption analysis and cross-border spending patterns. |
| Social/Employment | Intergenerational transfer support, part-time employment markers, and payroll regularity. |
| Operational Health | (SME only) Inventory restock cycles, supplier relationship stability, and business-type alignment. |
| Transaction Quality | Refund rates, transaction reversals, and penalty fee occurrences. |

Our dataset organizes behavioral–consumption indicators into seven main categories plus one "Others" category, which collects indicators considered to be of minor relevance. The subcategories allow precise characterization and analysis of consumer financial behavior. The main categories are Consumption Stability, Essential Expenditure Regularity, Repayment Behavior Patterns, High-Risk Merchant Activities, Discretionary Spending Concentration, Liquidity Pressure and Transaction Failures, and Funds-Flow Timing and Consumption Lag. The "Others" category includes Small Loan Transactions, Subscription Service Continuity, Late Payment Penalty, etc. The specific categories are shown in Figure 7. Each main category is further divided into specialized questions that capture distinct behavioral signals relevant for credit-risk inference.

Table A10.

Behavioral–consumption indicators, comprising seven main categories and one "Others" category.

| Dataset Overview |
|---|
| Main Categories |
| Consumption Stability |
| Essential Expenditure Regularity |
| Repayment Behavior Patterns |
| High-Risk Merchant Activities |
| Discretionary Spending Concentration |
| Liquidity Pressure and Transaction Failures |
| Funds-Flow Timing and Consumption Lag |
| Others |
| Small Loan Transactions |
| Subscription Service Continuity |
| Late Payment Penalty |
| Seasonal Spending Behavior |
| Installment Payment |
| Part-time Employment Indicators |
| Sudden Large-Amount Spending |
| Refunds and Transaction Reversals |
| Spending Diversification and Merchant Relationships |
| Cross-Border Consumption Characteristics |
| Nighttime and Early-Morning Consumption |
| Consumption Activity and Frequency |
| Consumption Cycles and Trigger Patterns |
| Off-Location Consumption Analysis |
| Intergenerational Consumption Support |
| Income-Consumption Cycle Alignment |

Appendix E. Statistical Characteristics

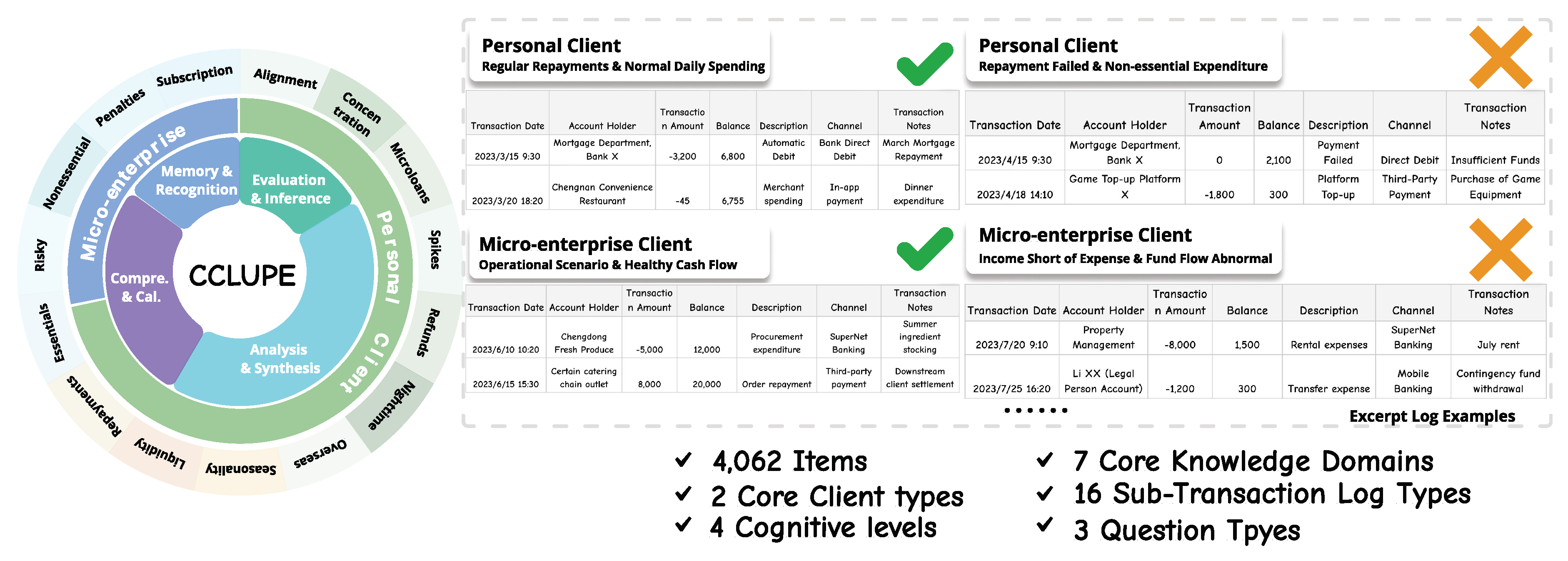

The CCLUPE benchmark consists of 4,062 high-quality samples designed to bridge the gap between general financial NLP and specialized credit risk reasoning. Unlike synthetic benchmarks, CCLUPE is grounded in authentic transaction logs. Personal clients (N = 2,931) reflect individual consumption and repayment hygiene, while SME clients (N = 1,131) focus on operational cash flows, business continuity, and industry-specific financial health. The dataset features a hierarchical annotation framework spanning 7 core knowledge domains and 16 fine-grained sub-domains. As shown in Table A11, the Stability and Regularity of Cash Flow domain represents the largest segment (34.6%), mirroring real-world credit underwriting priorities where consistent revenue is the primary indicator of repayment capacity.

Table A11.

Comprehensive breakdown of the CCLUPE Dataset across domains and client types.

| Core Domain | Sub-Domain Examples | Personal | SME | Total |
|---|---|---|---|---|
| Stability & Regularity | Payroll, Revenue Stability | 980 | 428 | 1,408 |
| Cash Flow Conduct | Specific Industry Patterns | 310 | 210 | 520 |
| Structure & Concentration | Merchant Diversity, Counterparty Risk | 645 | 212 | 857 |
| Time Characteristics | Nighttime Trading, Seasonality | 82 | 36 | 118 |
| Specialised Conduct | Cross-border, Tax Punctuality | 130 | 80 | 210 |
| Liquidity & Pressure | Fund Reserves, Overdue Precursors | 680 | 136 | 816 |
| Others | Miscellaneous Risk Factors | 104 | 29 | 133 |
| Total | | 2,931 | 1,131 | 4,062 |
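As a quick consistency check on Table A11, the sketch below recomputes the column totals and each domain's share of the dataset from the per-domain counts. The counts are copied from the table. The variable names are illustrative.

```python
# (personal, sme) counts per core domain, copied from Table A11.
table_a11 = {
    "Stability & Regularity": (980, 428),
    "Cash Flow Conduct": (310, 210),
    "Structure & Concentration": (645, 212),
    "Time Characteristics": (82, 36),
    "Specialised Conduct": (130, 80),
    "Liquidity & Pressure": (680, 136),
    "Others": (104, 29),
}

personal_total = sum(p for p, _ in table_a11.values())
sme_total = sum(s for _, s in table_a11.values())
grand_total = personal_total + sme_total

# Share of all samples contributed by each domain.
shares = {name: (p + s) / grand_total for name, (p, s) in table_a11.items()}
largest = max(shares, key=shares.get)

for name, share in shares.items():
    print(f"{name:28s}{share:6.1%}")
```

The totals reproduce the 2,931 / 1,131 / 4,062 split. Stability & Regularity is the largest domain at 1,408 / 4,062 ≈ 0.3466, which agrees with the share quoted in the text up to rounding.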

To evaluate deep reasoning rather than simple retrieval, we map questions to four cognitive levels. Analysis & Synthesis (43.8%) is the most frequent level, requiring models to integrate multi-source data (textual descriptions and time-series logs) to form a coherent credit opinion.

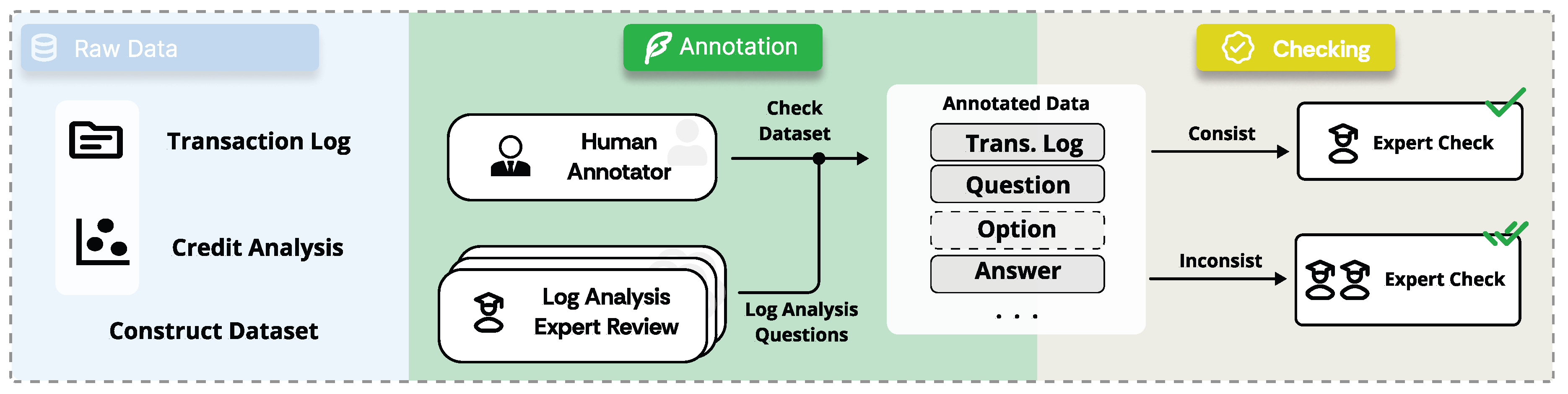

Appendix G. Dataset Comparison

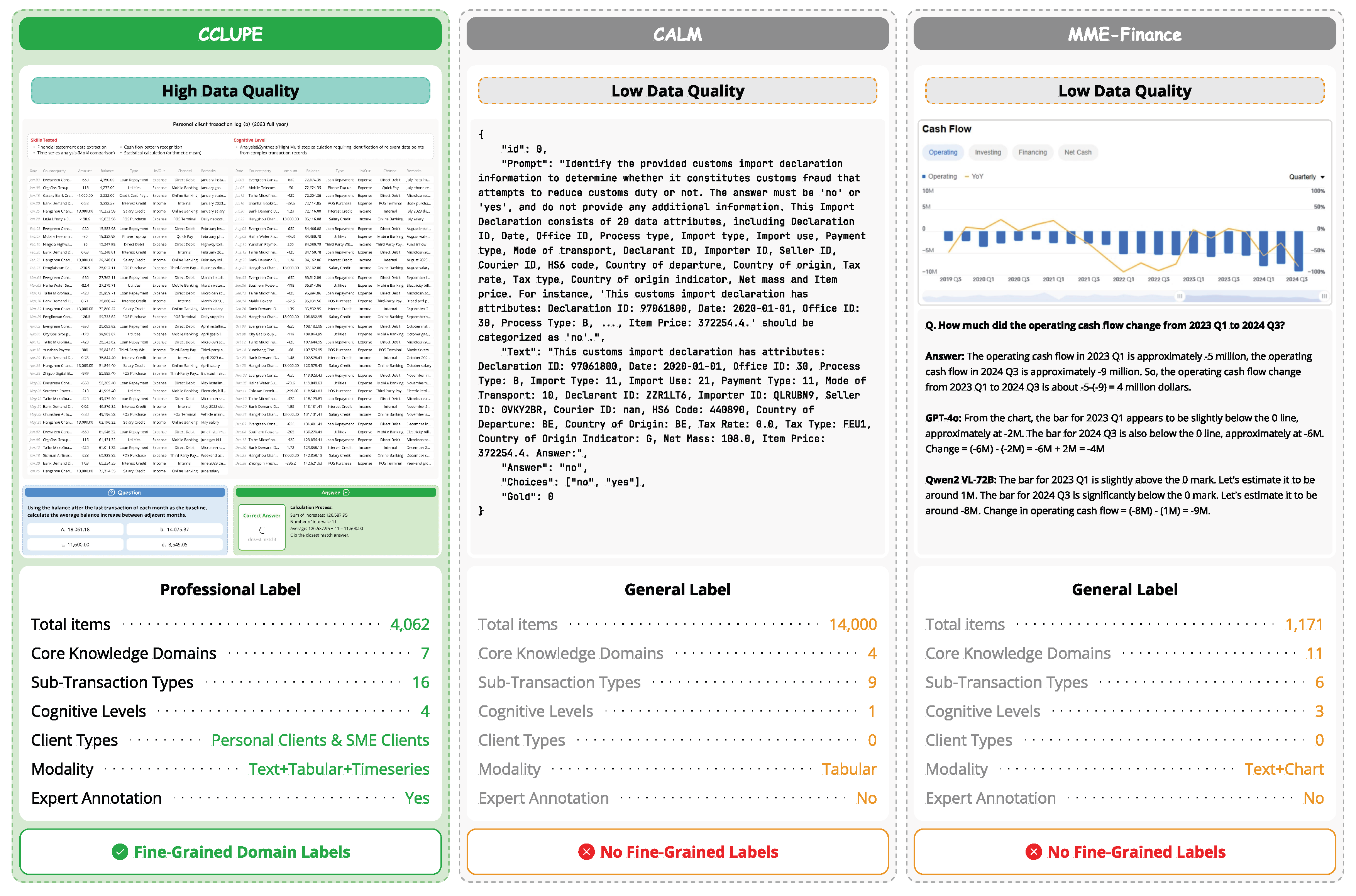

Figure A1.

Data Comparison with Related Works

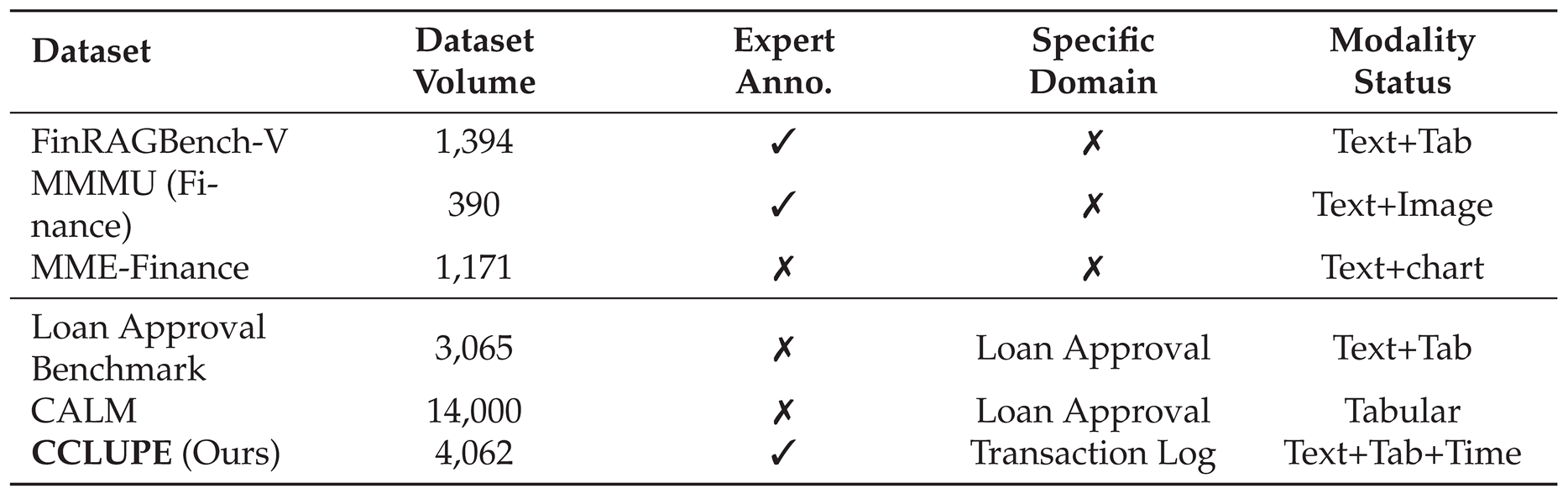

As illustrated in Table 1, we present a comprehensive comparison of CCLUPE with existing benchmarks relevant to financial and credit assessment domains. The comparison encompasses dataset scale, annotation quality, domain specificity, and modality coverage, revealing distinct characteristics and application scenarios for each benchmark.

CCLUPE (Ours) represents a significant advancement in credit assessment benchmarking, comprising 4,062 samples with expert-validated annotations. A defining characteristic of our dataset is its specialized focus on transaction log analysis, which constitutes the core data source in real-world credit decisioning workflows. CCLUPE uniquely supports trimodal inputs—text, tabular, and time-series data—enabling holistic evaluation of consumer financial behavior. The dataset’s design is grounded in extensive consultations with industry practitioners, ensuring alignment with authentic credit underwriting processes and risk control methodologies.

CALM offers the largest scale among existing credit-related benchmarks with 14,000 samples and expert annotations. However, its scope is confined to loan approval decisions with exclusively tabular modality, limiting its capacity to assess models’ reasoning capabilities across heterogeneous data formats. While CALM provides valuable resources for binary classification tasks, it lacks the behavioral consumption indicators and temporal transaction patterns essential for comprehensive credit risk inference.

Loan Approval Benchmark contains 3,065 samples targeting loan approval scenarios with text and tabular modalities. Notably, this benchmark lacks expert annotations, potentially compromising the reliability and domain authenticity of its ground-truth labels. Its coverage remains restricted to conventional approval decisions without incorporating nuanced behavioral signals derived from transaction histories.

FinRAGBench-V provides 1,394 expert-annotated samples combining text and tabular data. While it demonstrates rigorous annotation quality, the dataset is designed for general financial RAG tasks without domain-specific focus on credit assessment, limiting its applicability for evaluating credit-oriented AI systems.

MMMU (Finance) and MME-Finance represent general-purpose financial multimodal benchmarks with 390 and 1,171 samples respectively. Both datasets lack expert annotations and are primarily oriented toward visual chart interpretation and general financial knowledge assessment rather than credit-specific workflows. Their modality coverage (text+image and text+chart) does not encompass the tabular and time-series formats predominant in credit risk analysis.

This comparative analysis underscores CCLUPE’s distinctive positioning within the landscape of financial AI benchmarks. While existing datasets either emphasize general financial knowledge or provide limited credit-specific coverage, CCLUPE bridges this gap by delivering: (1) substantial scale with expert-validated annotations, (2) specialized focus on transaction-level behavioral analysis, (3) comprehensive trimodal coverage aligned with real-world credit assessment pipelines, and (4) hierarchical behavioral indicators derived from industry best practices. These characteristics establish CCLUPE as a rigorous and practically relevant benchmark for advancing AI capabilities in consumer credit evaluation.

Appendix H. Model Configurations

We conducted experiments across a diverse array of 15+ LLMs, encompassing proprietary frontier models and open-source financial adaptations. All models were evaluated between January and February 2025.

Table A13.

Model architectures and parameter scales evaluated in the benchmark.

| Model Name | Developer | Scale/Type | Access Method |
|---|---|---|---|
| Proprietary | | | |
| Gemini 3 | Google | Unknown | Vertex AI API |
| Claude 4.5 Sonnet | Anthropic | Unknown | Anthropic API |
| GPT-5 | OpenAI | Unknown | OpenAI API |
| Open-Source | | | |
| DeepSeek V3 | DeepSeek | 671B (MoE) | Local (vLLM) |
| Llama 3.3 | Meta | 70B | Local (vLLM) |
| Qwen 3 | Alibaba | 4B, 30B, 235B | Local (vLLM) |
| Fin R1 | Financial AI Lab | 32B (Distilled) | Local (vLLM) |

Local models were deployed on a high-performance cluster. To eliminate stochastic variance and keep the focus on reasoning capability, the temperature was set to 0, which corresponds to the greedy decoding listed in Table A14. We used the vLLM engine to handle the long-context requirements of full-year 2023 transaction logs, which can exceed 3,000 tokens per prompt.

Table A14.

Inference parameters and environment details.

| Parameter | Configuration Value |
|---|---|
| GPU Hardware | 8 × NVIDIA H800 (80GB) |
| Operating System | Ubuntu 22.04 LTS |
| Inference Engine | vLLM (v0.6.3) / CUDA 12.4 |
| Decoding Strategy | Greedy Search (temperature = 0) |
| Max Context Length | 16,384 Tokens |
| Precision | Bfloat16 |
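The settings in Table A14 map onto vLLM's offline inference interface roughly as follows. This is a sketch, not the authors' launch script. It assumes the model is sharded across all eight GPUs via tensor parallelism and that greedy search is expressed as a temperature of 0. The model path and the `max_new_tokens` value are placeholders, since the paper does not state an output-length limit. The vLLM import is deferred so the configuration can be inspected on a machine without GPUs.

```python
def build_vllm_config(model_path, max_new_tokens=512):
    """Return (engine_kwargs, sampling_kwargs) mirroring Table A14.

    `model_path` and `max_new_tokens` are placeholders, not values from the paper.
    """
    engine_kwargs = {
        "model": model_path,
        "tensor_parallel_size": 8,  # assumption: shard across all 8 H800 GPUs
        "dtype": "bfloat16",        # precision from Table A14
        "max_model_len": 16384,     # max context length from Table A14
    }
    sampling_kwargs = {
        "temperature": 0.0,         # greedy decoding in vLLM
        "max_tokens": max_new_tokens,
    }
    return engine_kwargs, sampling_kwargs


def run_prompts(model_path, prompts):
    """Generate one completion per prompt. Requires vLLM and suitable GPUs."""
    from vllm import LLM, SamplingParams  # imported lazily on purpose

    engine_kwargs, sampling_kwargs = build_vllm_config(model_path)
    llm = LLM(**engine_kwargs)
    outputs = llm.generate(prompts, SamplingParams(**sampling_kwargs))
    return [out.outputs[0].text for out in outputs]


engine_kwargs, sampling_kwargs = build_vllm_config("path/to/local-model")
```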

A critical challenge in credit risk assessment is the cost of "misunderstanding" risks. To address this, CCLUPE introduces the Log-Score (L), which discounts a model's performance based on its Misunderstanding Rate. The Misunderstanding Rate is computed over the evaluated logs from the fabricated risk indicators, that is, indicators identified by the model that are absent in the ground truth. The final Log-Score is then derived using a severity exponent. In our experiments, we set the severity exponent to 2 to impose a quadratic penalty, ensuring that analytical reliability is prioritized over raw recall.
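The description above does not pin down a unique formula, so the sketch below adopts one form that is consistent with it and should be read as an assumption rather than the benchmark's definition. It takes the Misunderstanding Rate to be $M = \sum_i |P_i \setminus G_i| / \sum_i |P_i|$, where $P_i$ and $G_i$ are the predicted and ground-truth indicator sets for log $i$, and the discounted score to be $L = S \cdot (1 - M)^{\gamma}$ for a base score $S$ and severity exponent $\gamma = 2$. The symbols $M$, $S$, $P_i$, $G_i$, and $\gamma$, and both function names, are introduced here for illustration.

```python
def misunderstanding_rate(predicted, ground_truth):
    """Share of predicted risk indicators that are absent from the ground truth.

    `predicted` and `ground_truth` are equal-length lists of sets, one per log.
    Assumed definition: fabricated indicators / all predicted indicators.
    """
    if len(predicted) != len(ground_truth):
        raise ValueError("predicted and ground_truth must cover the same logs")
    fabricated = sum(len(p - g) for p, g in zip(predicted, ground_truth))
    total = sum(len(p) for p in predicted)
    return fabricated / total if total else 0.0


def log_score(base_score, m_rate, gamma=2.0):
    """Assumed discount: base_score * (1 - m_rate) ** gamma."""
    return base_score * (1.0 - m_rate) ** gamma


# Made-up example: one of four predicted indicators is fabricated.
predicted = [{"gambling", "late_fee"}, {"overdraft", "payday_loan"}]
ground_truth = [{"gambling", "late_fee"}, {"overdraft"}]
m = misunderstanding_rate(predicted, ground_truth)
score = log_score(0.80, m)
```

Under this assumed form, a model with no fabricated indicators keeps its base score, and the quadratic exponent makes the discount grow faster than linearly as fabrications accumulate.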

Appendix I. Sample Question

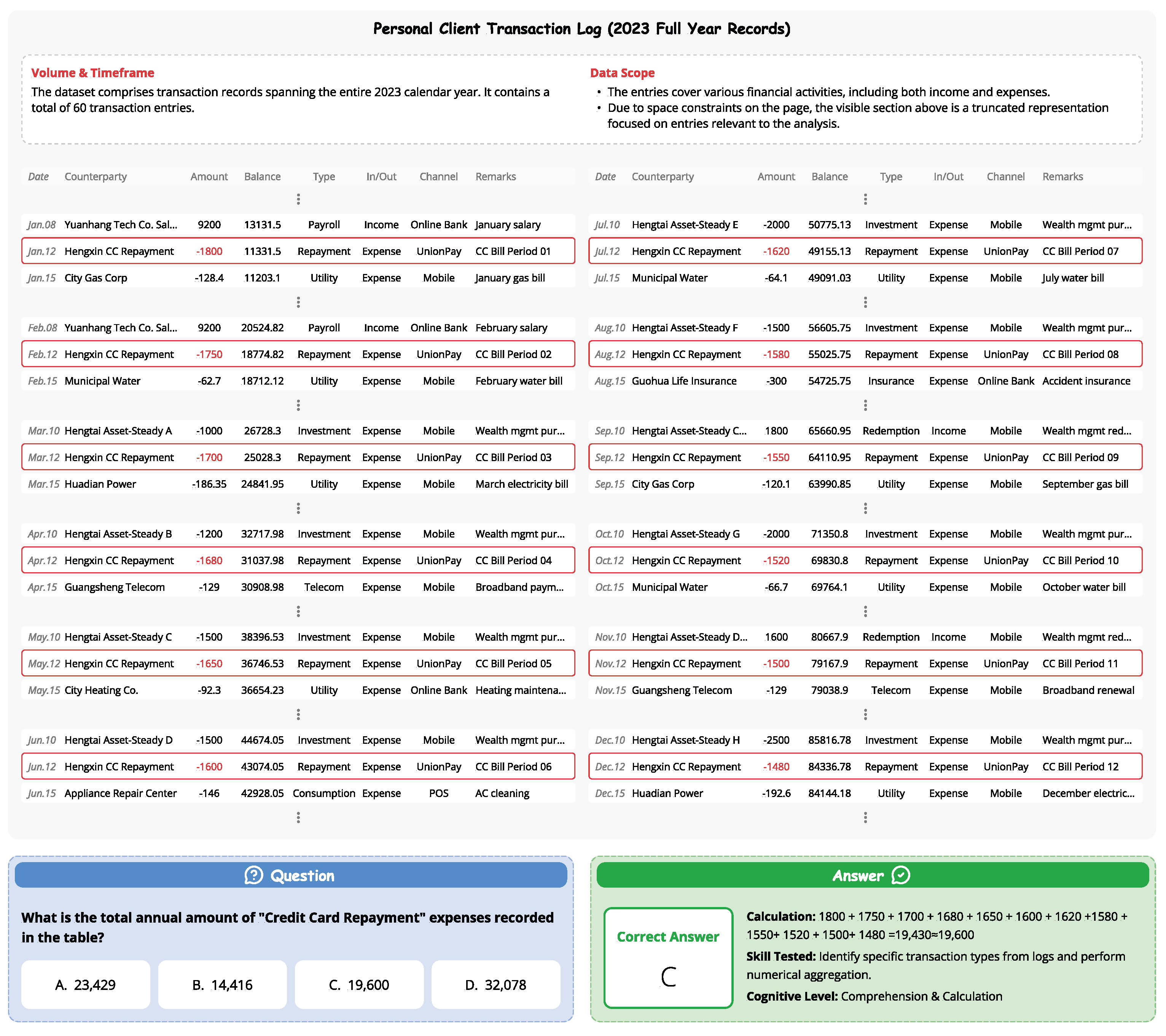

Figure A2.

Sample Single Choice Question about Comprehension & Calculation of Personal Clients Log Information.

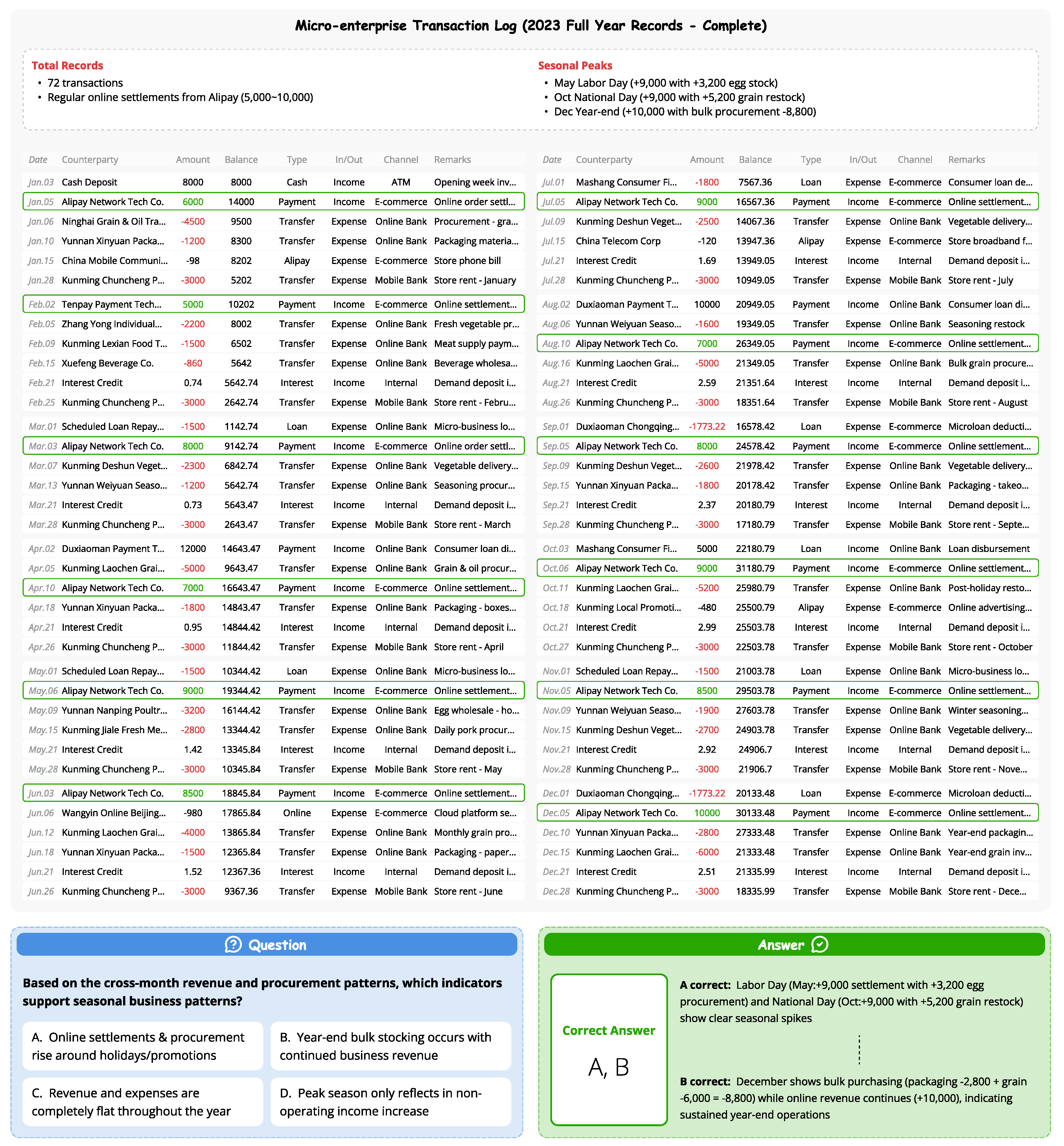

Figure A3.

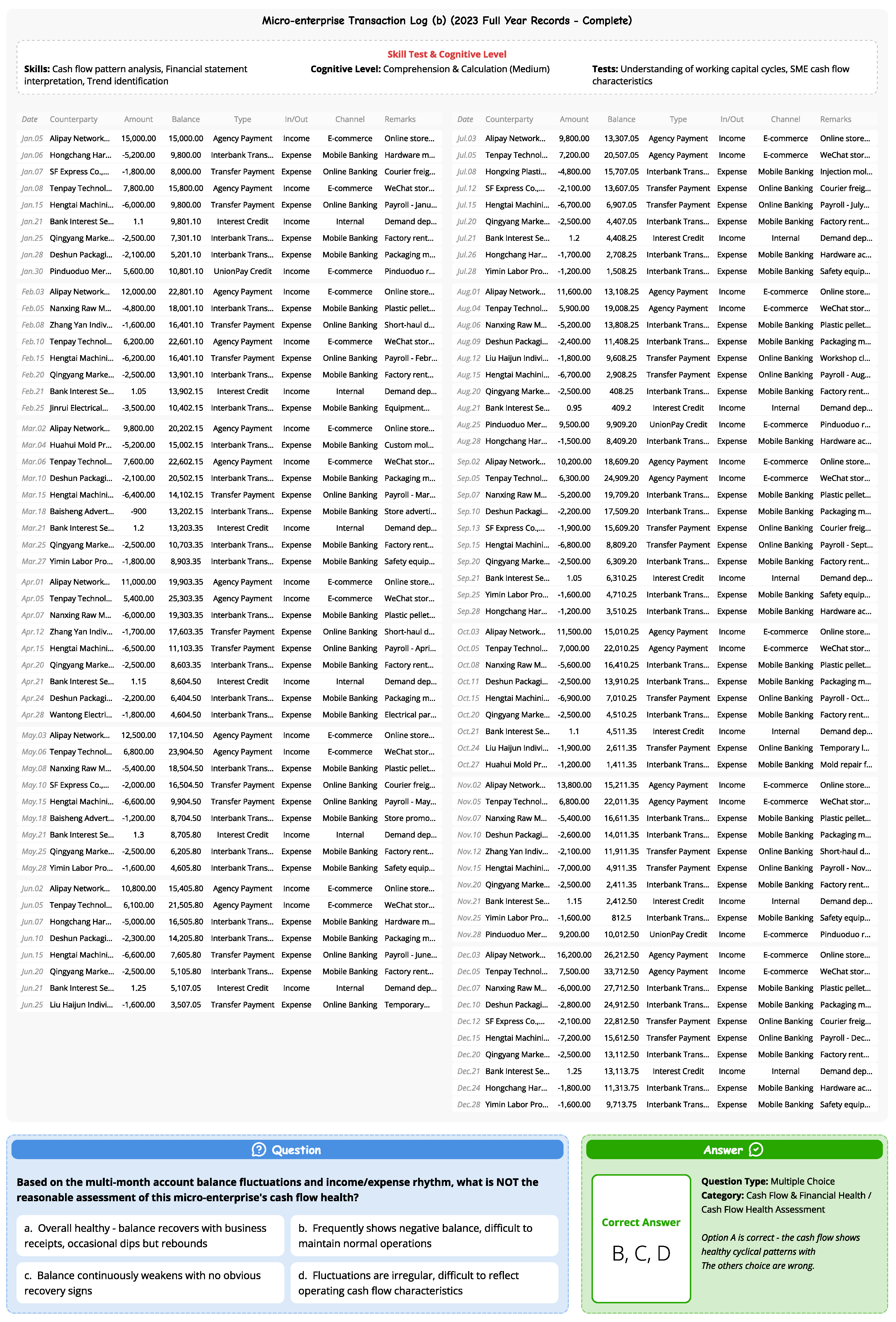

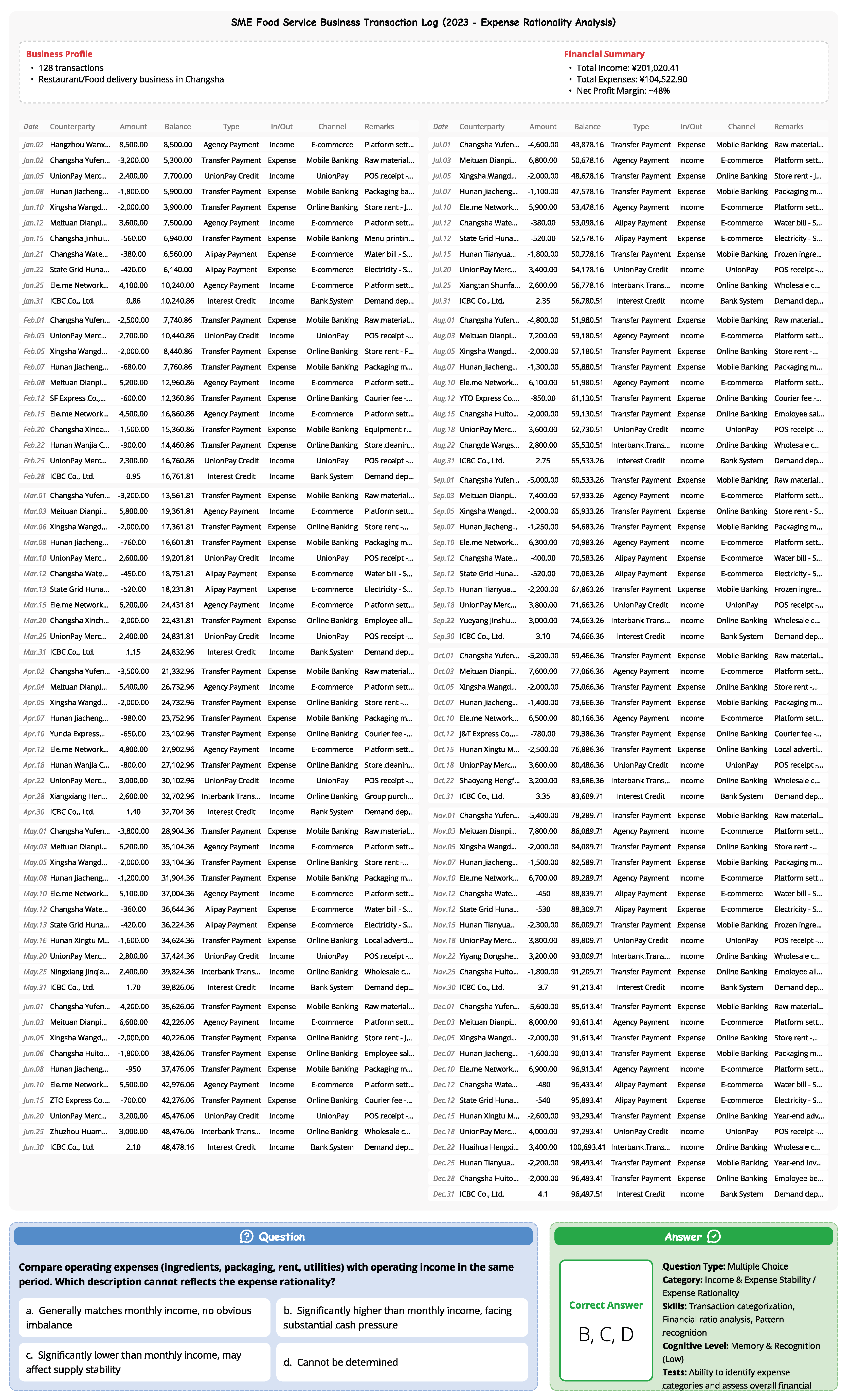

Sample Multiple Choice Question about Analysis & Synthesis of Micro-enterprise Clients Log Information.

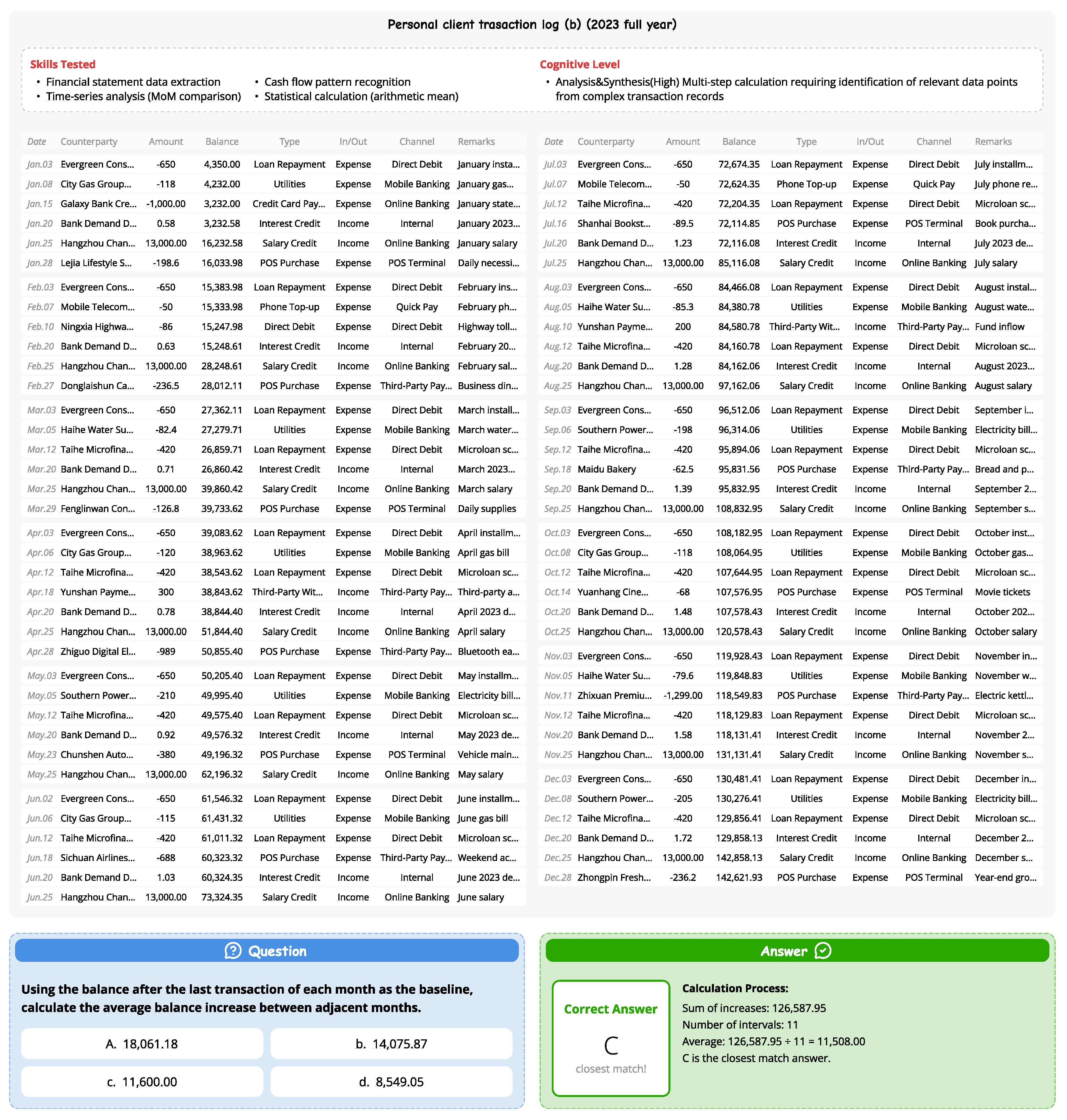

Figure A4.

Sample Single Choice Question about Analysis & Synthesis of Personal Clients Log Information.

Figure A5.

Sample Multiple Choice Question about Comprehension & Calculation of Micro-enterprise Clients Log Information.

Figure A6.

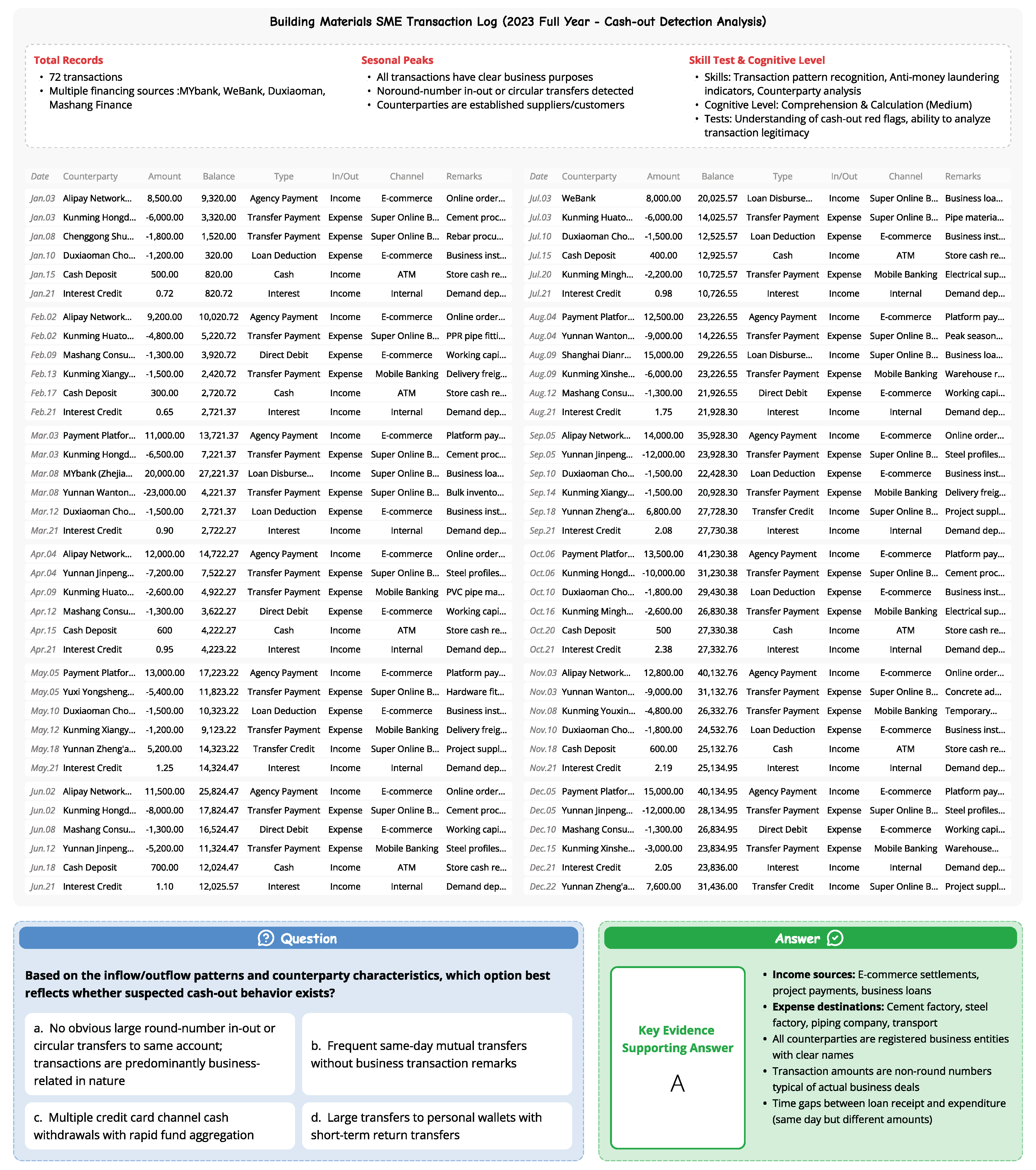

Sample Single Choice Question about Comprehension & Calculation of Micro-enterprise Clients Log Information.

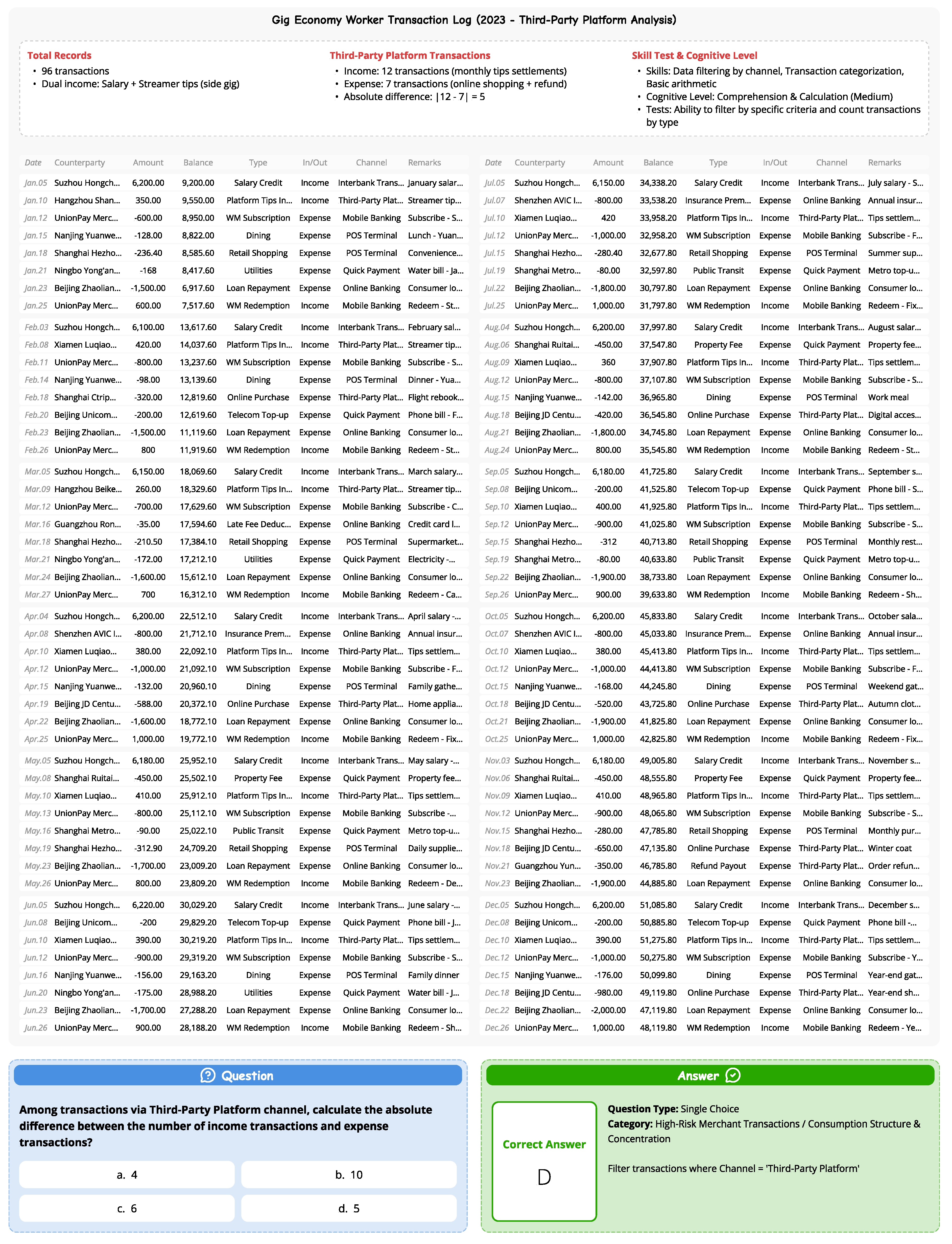

Figure A7.

Sample Single Choice Question about Comprehension & Calculation of Personal Clients Log Information.

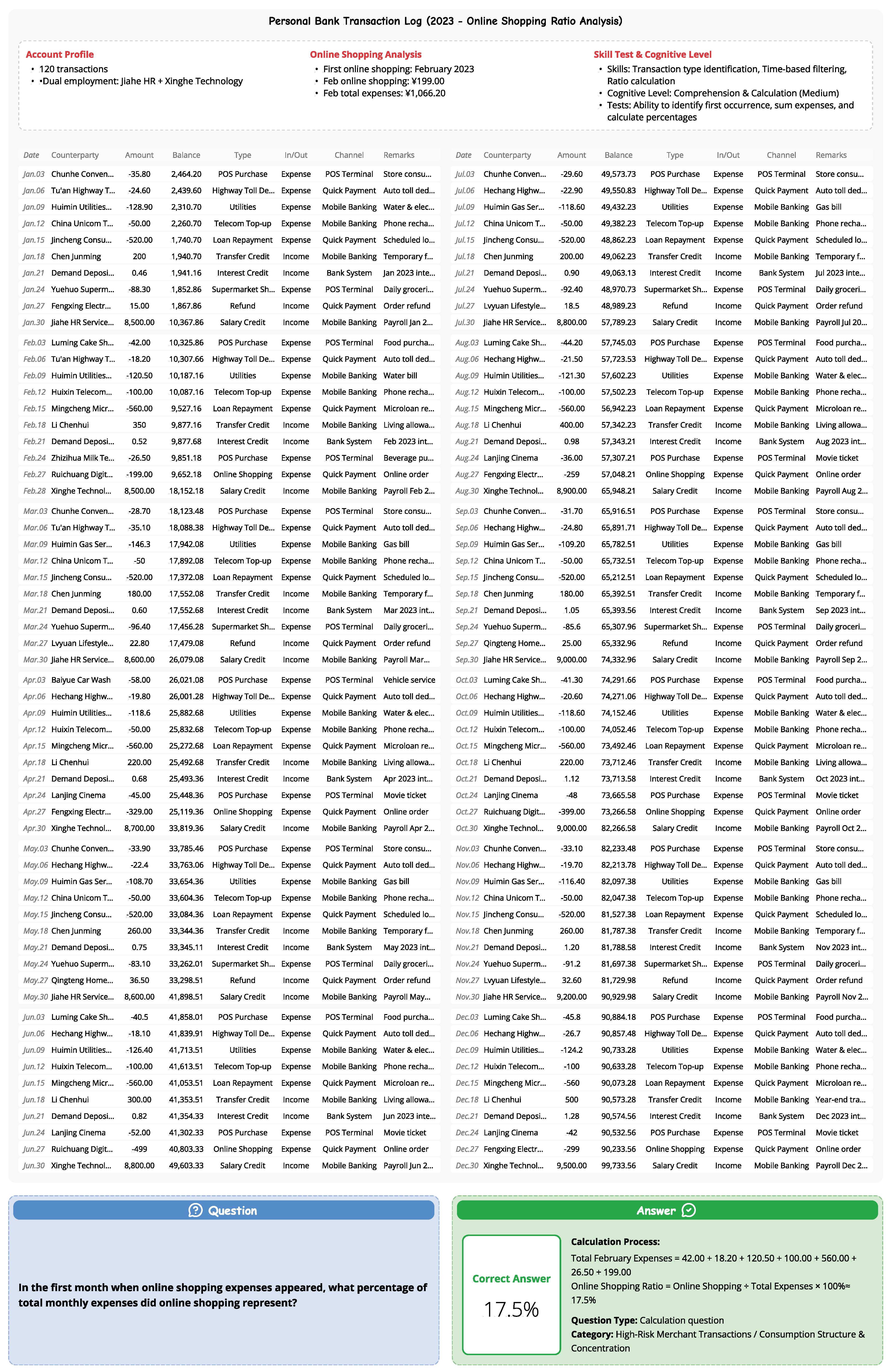

Figure A8.

Sample Calculation Question about Comprehension & Calculation of Personal Clients Log Information.

Figure A9.

Sample Multiple Choice Question about Memory & Recognition of Micro-enterprise Clients Log Information.
