Submitted: 20 April 2026

Posted: 21 April 2026


Abstract

Keywords:

1. Introduction

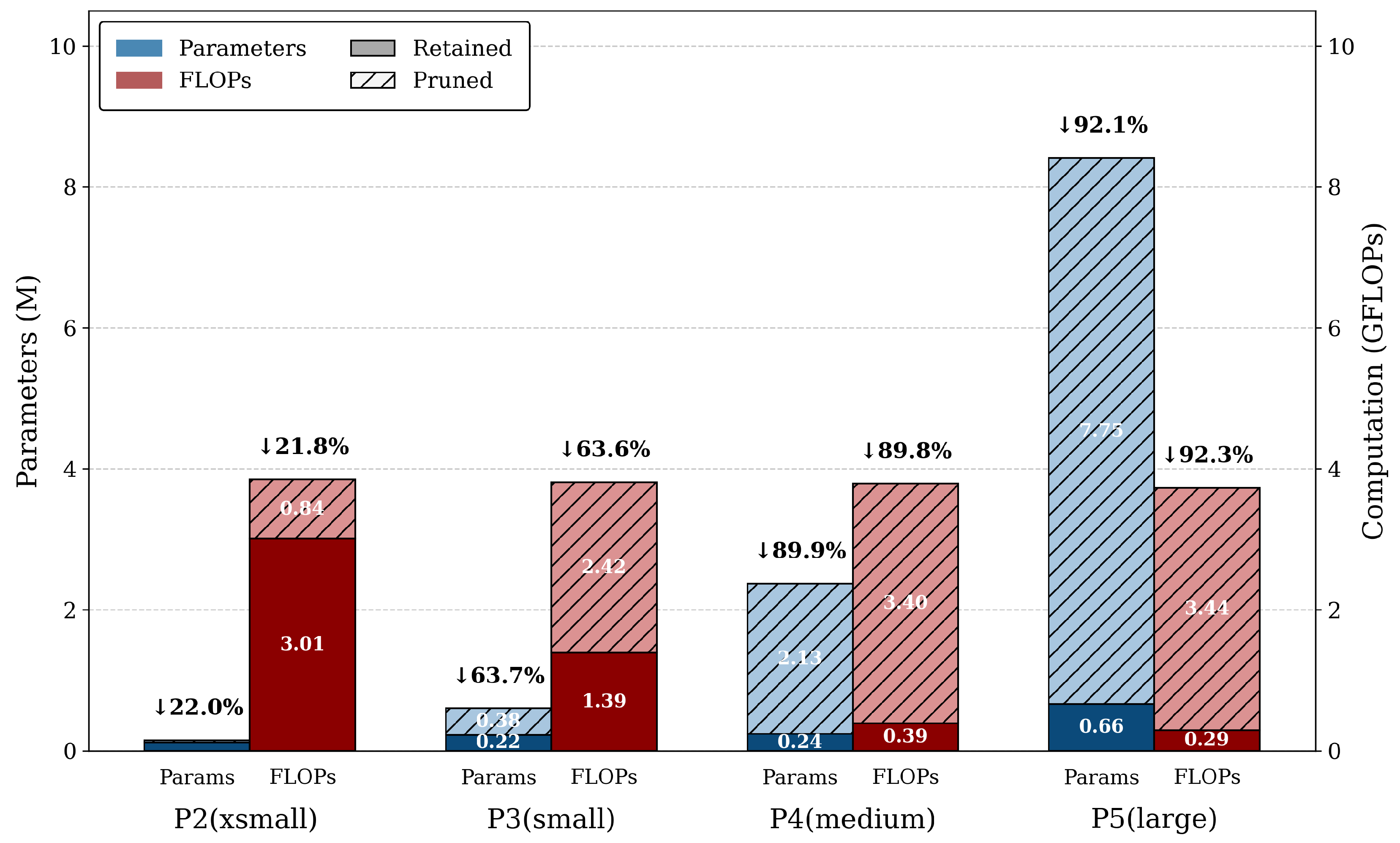

- A multi-dimensional structured pruning strategy for lightweight UAV detection: We design an asymmetric channel pruning scheme for deep convolutional layers and feature-fusion modules to remove redundant channels while preserving the multi-scale feature extraction capability required for aerial small-object detection. In addition, we compress the Swin Transformer prediction heads and reduce the number of bottleneck stacks, substantially decreasing model parameters and computational complexity with limited accuracy loss.

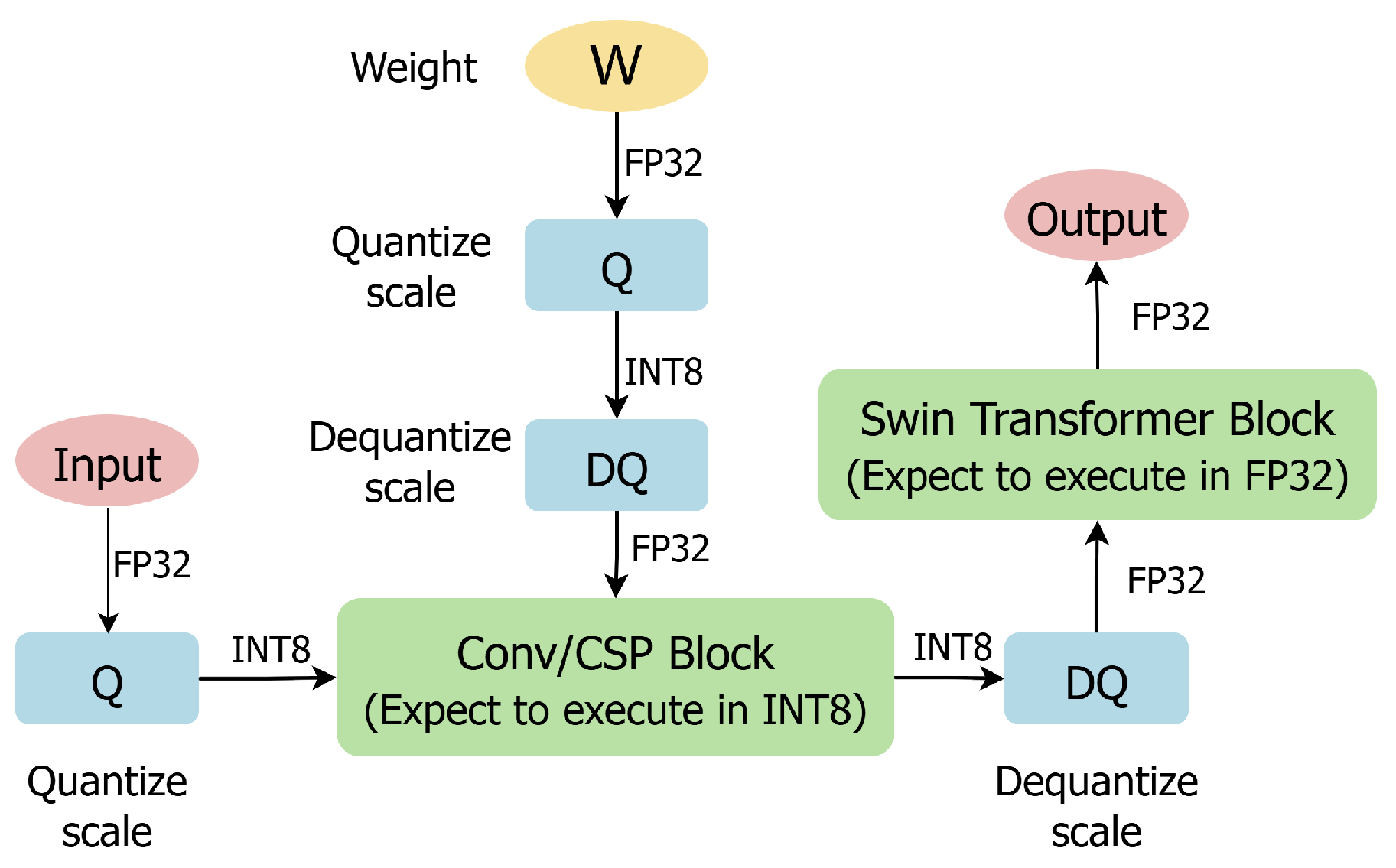

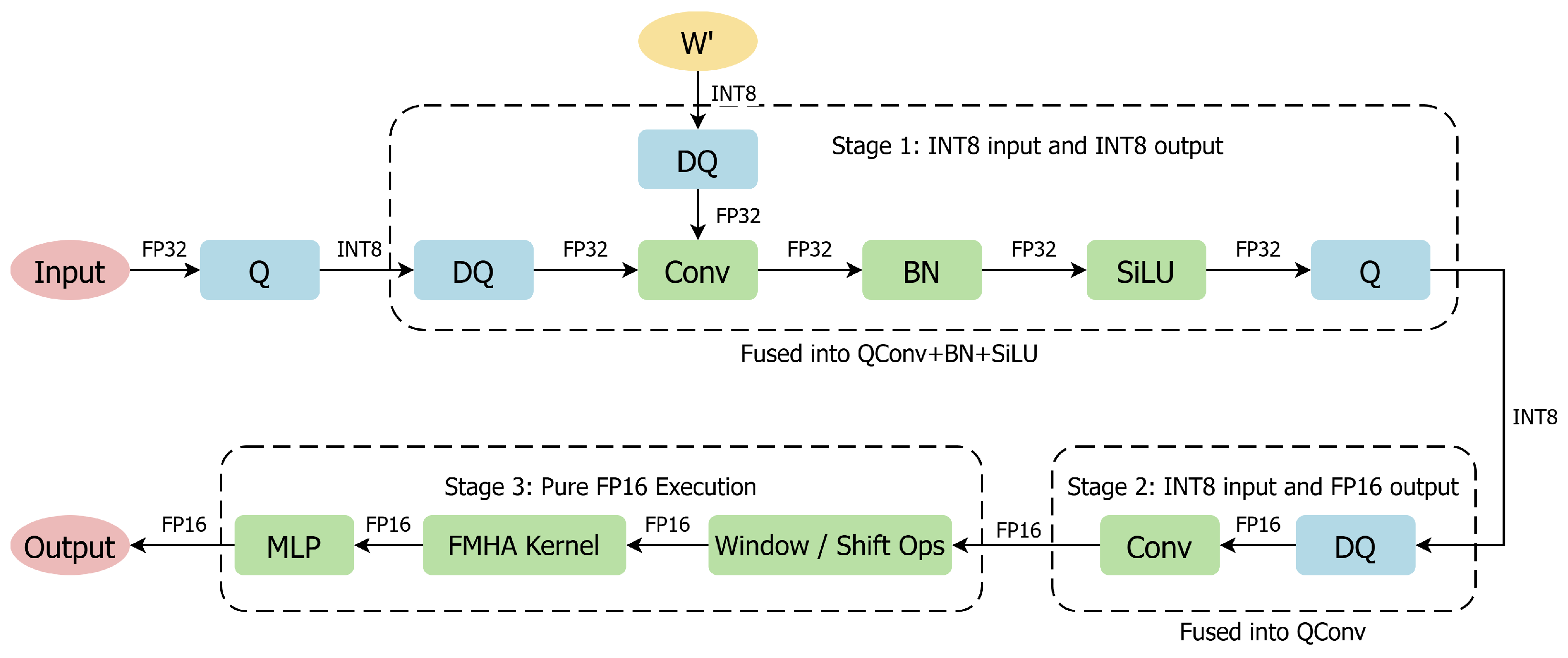

- A hardware-aware mixed-precision QAT framework for hybrid CNN–Transformer detectors: We introduce quantization-aware fine-tuning by dynamically inserting fake-quantization nodes during training, enabling the model to adapt to quantization noise before deployment. Furthermore, we map computation-intensive backbone layers to INT8 while retaining Transformer-related modules in FP16, thereby improving inference efficiency on edge hardware while maintaining operator fusion compatibility and detection accuracy.

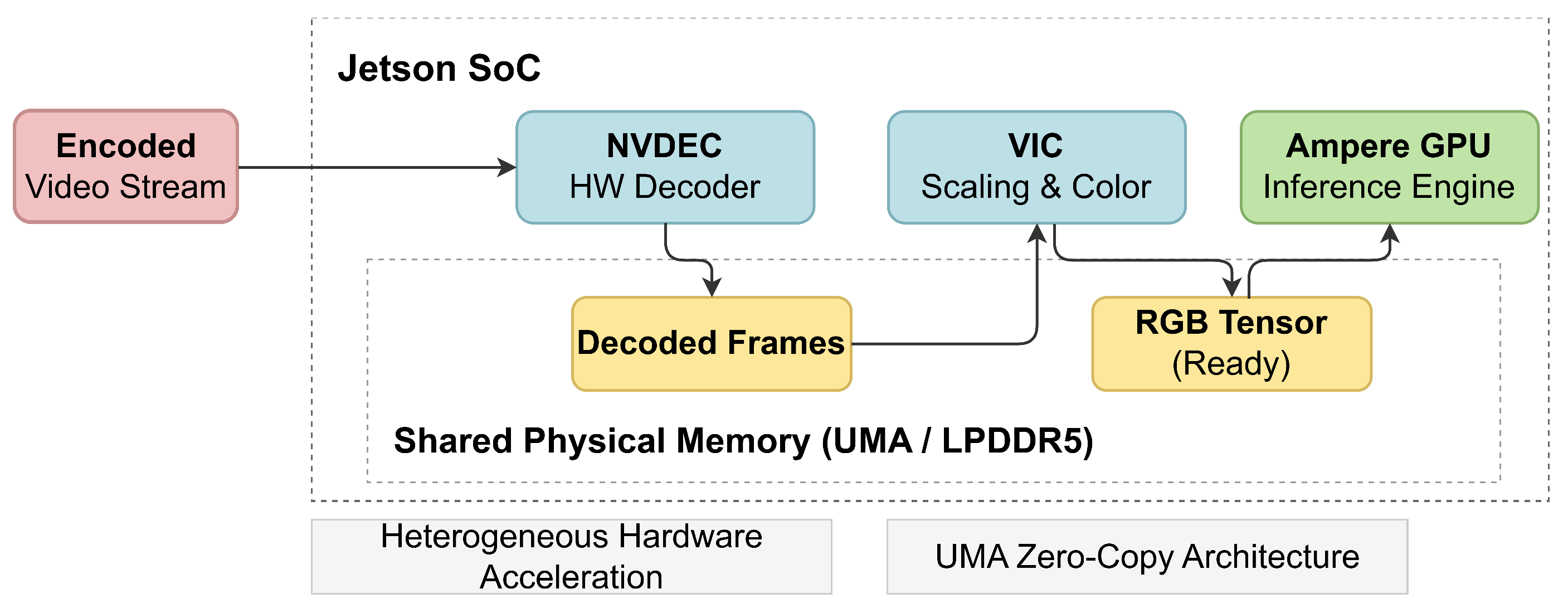

- An efficient DeepStream-integrated end-to-end deployment system on Jetson Orin NX: We perform TensorRT explicit quantization compilation to precompute weights, fuse operators across layers, and exploit high-throughput INT8 kernels for convolutional computation. The resulting engine is integrated into an asynchronous DeepStream video pipeline that leverages hardware units such as NVDEC and VIC to reduce decoding, preprocessing, and memory-transfer overheads. In the ablation study, adding this pipeline raises end-to-end throughput from 14.1 to 27.5 FPS at unchanged detection accuracy, and it also improves power efficiency.
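The fake-quantization step named in the second contribution can be illustrated with a minimal forward-pass sketch. It assumes symmetric per-tensor INT8 scaling, which the text does not specify, and the function name `fake_quantize` and the sample weights are hypothetical. In actual QAT the rounding is typically paired with a straight-through gradient estimator, which this plain-Python sketch omits.

```python
from typing import List


def fake_quantize(values: List[float], num_bits: int = 8) -> List[float]:
    """Quantize to a signed integer grid, then dequantize back to float.

    Symmetric per-tensor scaling is an assumption made for this sketch.
    """
    qmax = 2 ** (num_bits - 1) - 1      # 127 for INT8
    qmin = -qmax - 1                    # -128 for INT8
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        return list(values)             # all zeros: nothing to quantize
    scale = max_abs / qmax              # float step between integer codes
    simulated = []
    for v in values:
        code = max(qmin, min(qmax, round(v / scale)))  # clamp to INT8 range
        simulated.append(code * scale)                 # back to float
    return simulated


# Hypothetical weights, used only to show the rounding noise.
weights = [0.42, -1.30, 0.07, 0.91]
simulated = fake_quantize(weights)
errors = [abs(w - s) for w, s in zip(weights, simulated)]
```

Each simulated value differs from its original by at most half a quantization step, which is the noise the network is expected to adapt to during fine-tuning.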

2. Related Work

2.1. Lightweight Optimization and Model Pruning

2.2. Quantization Strategies and Transformer Sensitivity

2.3. Edge Deployment and Acceleration

3. System Overview

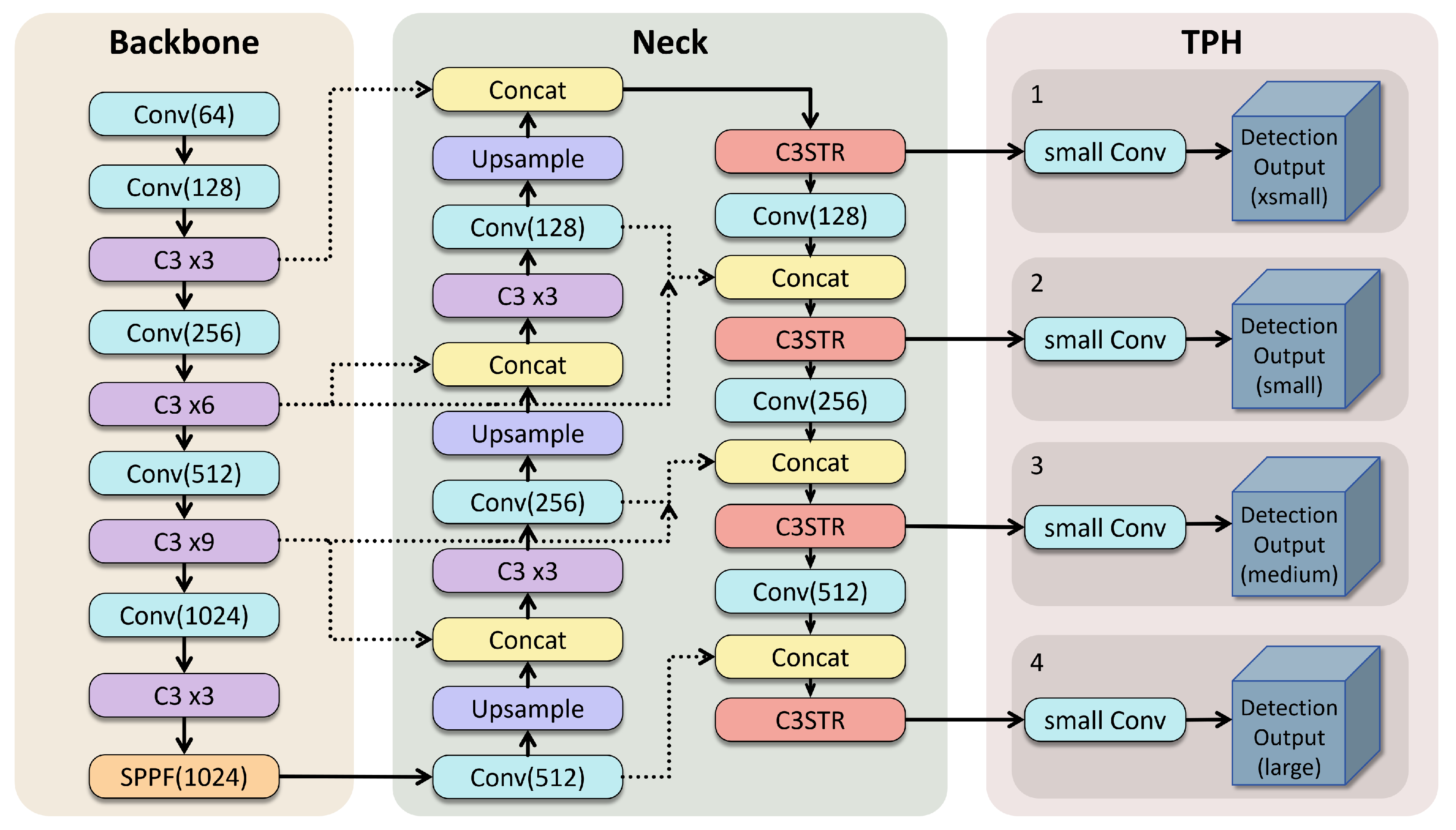

3.1. Network Architecture of DUST-YOLO

3.2. System Module Design

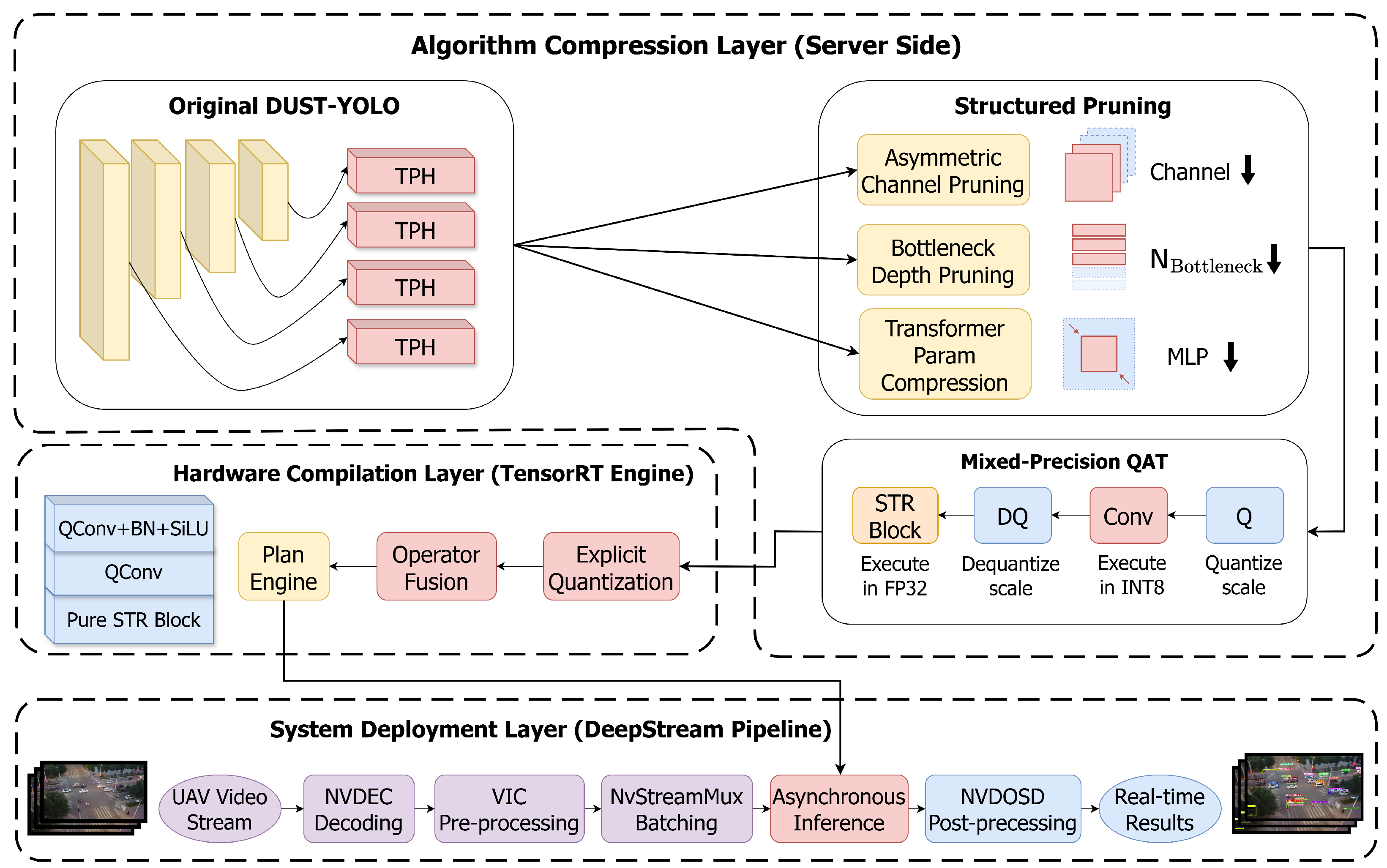

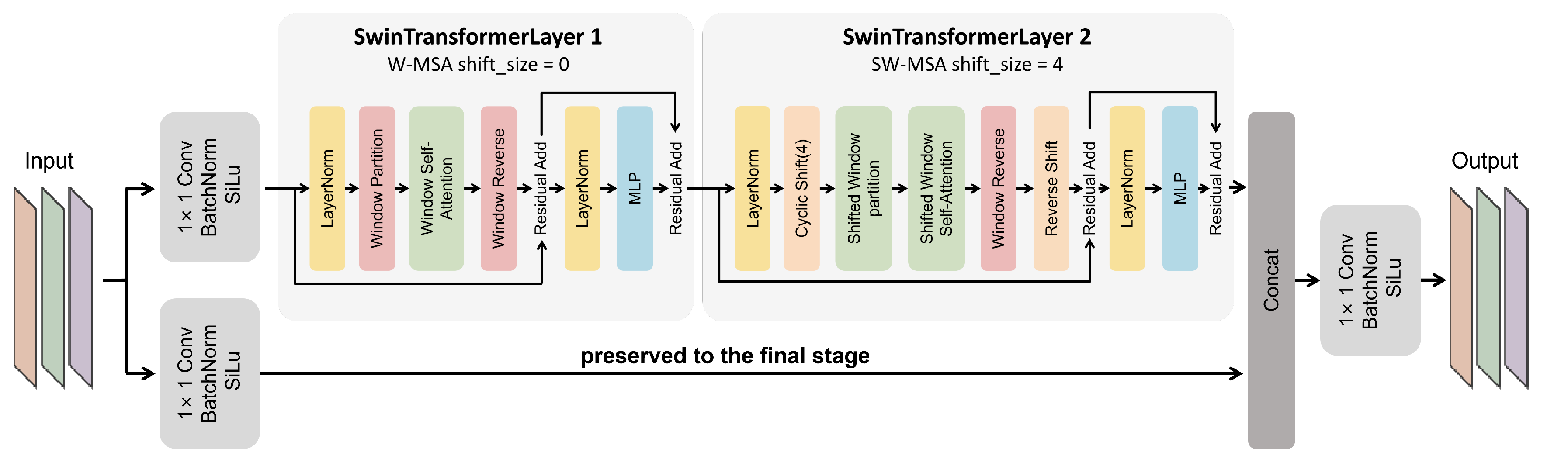

- The first module: Algorithmic Compression Layer. This layer lightens the model on the server side through multi-dimensional structured pruning and QAT. Pruning is tailored to the network's heterogeneous modules: it strips redundant channels from the convolutional layers and the feature pyramid, reduces the number of bottleneck stacks in deep C3 blocks, and shrinks the dimensions of the fully connected weight matrices in C3STR blocks. After fine-tuning recovers accuracy, the model undergoes QAT, in which selectively inserted fake-quantization nodes let the network adapt to the precision loss and quantization noise of INT8, yielding a lightweight model ready for edge-side compilation.

- The second module: Hardware Compilation Layer. This layer uses TensorRT on the edge device to compile the optimized model from the ONNX intermediate format into a hardware-specific inference engine. During compilation, mixed INT8+FP16 quantization is applied and most fragmented small operators are fused into larger kernels. This reduces memory-access overhead and speeds up execution of the detector on the edge computing platform.

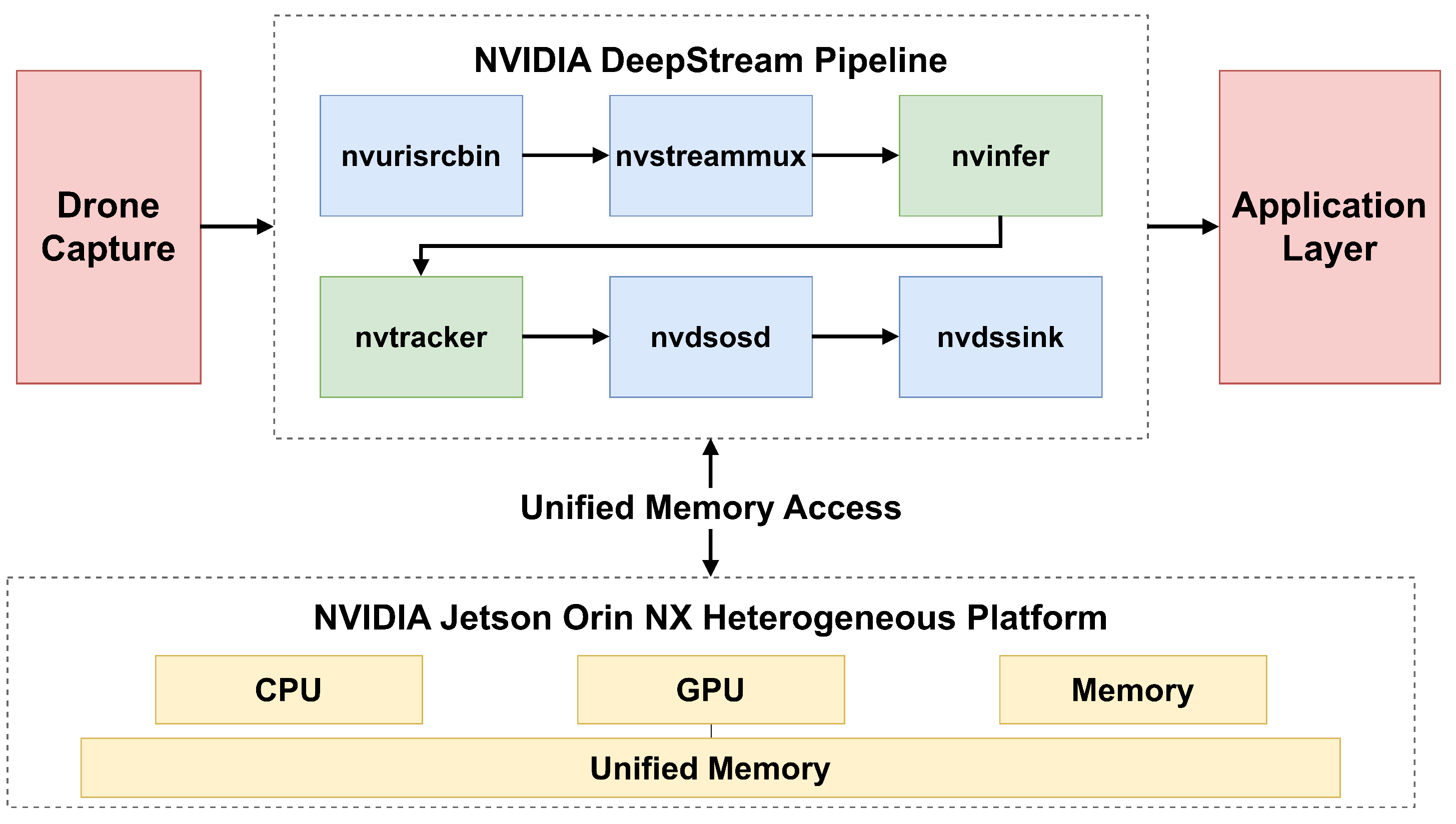

- The third module: System Deployment Layer. This layer deploys the model within an end-to-end video object detection pipeline built on the DeepStream framework. It uses DeepStream's hardware-accelerated front end, such as the NVDEC decoder, to decode and preprocess the input video, then employs an inference plugin to run the engine compiled and exported by the second module, and finally uses a post-processing plugin to output the processed video stream, thereby meeting the real-time requirements of object detection.
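The channel-stripping step of the Algorithmic Compression Layer can be sketched as a ranking-and-selection routine. The text does not state the importance criterion or the alignment granularity, so this sketch assumes that channels are ranked by a per-filter score (for example an L1 norm) and that the kept count is rounded up to a multiple of 8. The function `select_channels` and the synthetic scores are hypothetical.

```python
from typing import List


def select_channels(scores: List[float], keep_ratio: float, align: int = 8) -> List[int]:
    """Return the indices of the output channels to keep, in ascending order.

    scores[i] is an importance score for channel i (assumed: L1 norm of its filter).
    The kept count is rounded up to a multiple of `align` and capped at the total.
    """
    total = len(scores)
    target = max(1, round(total * keep_ratio))
    aligned = min(total, -(-target // align) * align)   # ceiling to a multiple of align
    ranked = sorted(range(total), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:aligned])


# Synthetic scores: a permutation of 0..31, used only for illustration.
scores = [float((i * 7) % 32) for i in range(32)]
kept = select_channels(scores, keep_ratio=0.4)
```

With 32 channels and a keep ratio of 0.4, the raw target of 13 channels is rounded up to 16, so the layer width stays a multiple of 8. Using a different `keep_ratio` per module type is one way to read the "asymmetric" scheme, though the paper's actual ratios are not given here.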

4. Methodology

4.1. Structured Pruning and Model Compression Strategy

4.1.1. Asymmetric Channel Pruning and Hardware Alignment for Convolutional Layers

4.1.2. Pruning and Parameter Compression of Transformer Modules

4.1.3. Depth Simplification of Feature Cascades

4.2. Mixed-Precision QAT and Edge-Side Mixed-Precision Quantized Deployment

4.2.1. QAT and Attention Fusion Protection

4.2.2. TensorRT Explicit Quantization Compilation and Continuous-Domain Optimization

4.3. DeepStream-Based Multi-Object Detection System Deployment

4.3.1. System Architecture Design

4.3.2. Hardware Acceleration and Parallel Mechanisms

Heterogeneous Computing Decoupling

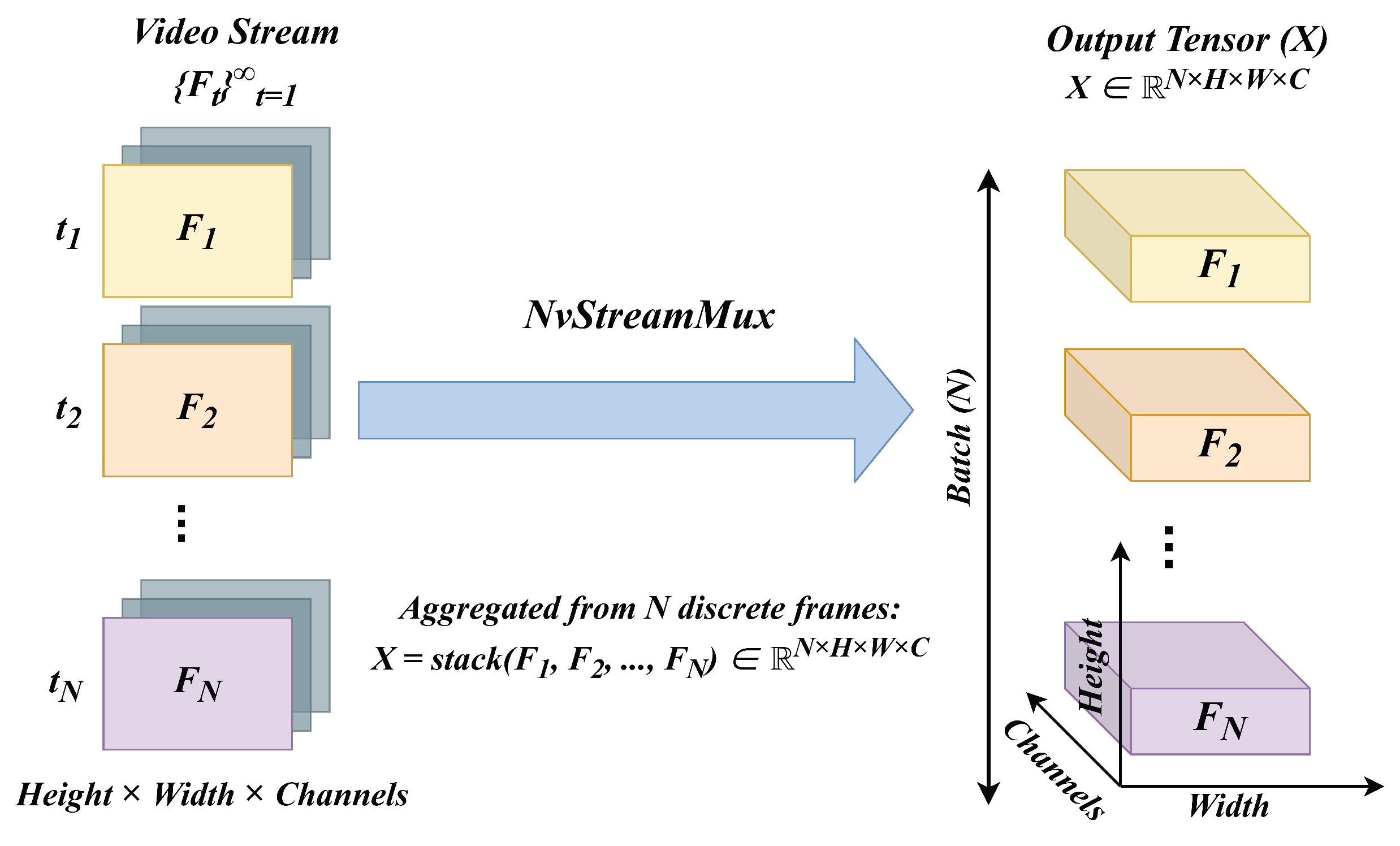

Tensor Batching

Highly Concurrent Pipeline

5. Experimental Results

5.1. Experimental Settings

5.2. Comparative Experiments

5.3. Ablation Study

6. Conclusion

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

References

1. Echchidmi, M.; Bouayad, A. TinyML for sustainable edge intelligence: Practical optimization under extreme resource constraints. Technologies 2026, 14, 215. [CrossRef]

2. Shih, W.C.; Wang, Z.Y.; Kristiani, E.; Chen, M.Y.; Shih, W.C. The construction of a stream service application with DeepStream and simple realtime server using containerization for edge computing. Sensors 2025, 25, 259. [CrossRef]

3. Gong, J.; Yuan, Z.; Li, W.; Li, W.; Guo, Y.; Guo, B. A Lightweight Upsampling and Cross-Modal Feature Fusion-Based Algorithm for Small-Object Detection in UAV Imagery. Electronics 2026, 15, 298. [CrossRef]

4. Jiang, Z.; Li, C.; Qu, T.; He, C.; Wang, D. MSQuant: Efficient post-training quantization for object detection via migration scale search. Electronics 2025, 14, 504. [CrossRef]

5. Tian, L.; Wang, P. An effective mixed-precision quantization method for joint image deblurring and edge detection. Electronics 2025, 14, 1767. [CrossRef]

6. Sun, J.; Gao, H.; Yan, Z.; Qi, X.; Yu, J.; Ju, Z. Lightweight UAV Object-Detection Method Based on Efficient Multidimensional Global Feature Adaptive Fusion and Knowledge Distillation. Electronics 2024, 13, 1558.

7. Yang, R.; Li, W.; Shang, X.; Zhu, D.; Man, X. KPE-YOLOv5: An improved small target detection algorithm based on YOLOv5. Electronics 2023, 12, 817. [CrossRef]

8. Wang, K.; Zhou, H.; Wu, H.; Yuan, G. RN-YOLO: A Small Target Detection Model for Aerial Remote-Sensing Images. Electronics 2024, 13, 2383. [CrossRef]

9. Li, Z.; Xiao, J.; Yang, L.; Gu, Q. RepQ-ViT: Scale Reparameterization for Post-Training Quantization of Vision Transformers. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2–6 October 2023; pp. 17181–17190. [CrossRef]

10. Zhu, W.; Chen, K. Real-time object detection for unmanned aerial vehicles based on vision transformer and edge computing. Sci. Rep. 2026, 16, 6814. [CrossRef]

11. Jocher, G.; Stoken, A.; Borovec, J.; Chaurasia, A.; Qiu, J.; et al. ultralytics/yolov5: v7.0 - YOLOv5 SOTA Realtime Instance Segmentation. Zenodo 2022. [CrossRef]

12. Zhu, X.; Lyu, S.; Wang, X.; Zhao, Q. TPH-YOLOv5: Improved YOLOv5 based on transformer prediction head for object detection on drone-captured scenarios. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops (ICCVW), Montreal, QC, Canada, 11–17 October 2021; pp. 2778–2788. [CrossRef]

13. Roy, A.M.; Bhaduri, J. A computer vision enabled damage detection model with improved YOLOV5 based on transformer prediction head. Ecol. Inform. 2023, 77, 102124. [CrossRef]

14. Shi, H.; Cheng, X.; Mao, W.; Wang, Z. P2-ViT: Power-of-two post-training quantization and acceleration for fully quantized vision transformer. IEEE Trans. Very Large Scale Integr. (VLSI) Syst. 2024, 32, 1704–1717. [CrossRef]

15. Alhrgan, H.M.; Alharbi, N.A.; Alshaya, A.M.; Alhrgan, S.O.; Alanezi, R.A.; Alanezi, M.S.; Alshaya, S.S.; Sharif, N.U.; Alkanhal, R. Benchmarking YOLOv8 variants for object detection efficiency on Jetson Orin NX for edge computing applications. Computers 2025, 15, 74. [CrossRef]

16. Xue, C.; Xia, Y.; Wu, M.; Yun, L. EL-YOLO: An efficient and lightweight low-altitude aerial objects detector for onboard applications. Expert Syst. Appl. 2024, 238, 122204. [CrossRef]

17. Wu, J.; Meng, H.; Yuan, M.; Liu, C.; Lu, Z. Enhanced feature representation for real time UAV image object detection using contextual information and adaptive fusion. Sci. Rep. 2025, 15, 33711. [CrossRef]

18. Lin, T.Y.; Dollár, P.; Girshick, R.; He, K.; Hariharan, B.; Belongie, S. Feature pyramid networks for object detection. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Honolulu, HI, USA, 21–26 July 2017; pp. 2117–2125. [CrossRef]

19. Zhao, Z.; Liu, X.; He, P. PSO-YOLO: A contextual feature enhancement method for small object detection in UAV aerial images. Earth Sci. Inform. 2025, 18, 258. [CrossRef]

20. Cai, S.; Wu, Z.; Liu, K.; Zhang, T.; Weng, W.; Zheng, X. LSOD-YOLO: A visual object detection method for AGV perception systems based on a lightweight backbone and detection head. Technologies 2026, 14, 173. [CrossRef]

21. Dosovitskiy, A.; Beyer, L.; Kolesnikov, A.; Weissenborn, D.; Zhai, X.; Unterthiner, T.; Dehghani, M.; Minderer, M.; Heigold, G.; Gelly, S.; et al. An image is worth 16x16 words: Transformers for image recognition at scale. In Proceedings of the International Conference on Learning Representations (ICLR), Virtual Event, 3–7 May 2021. [CrossRef]

22. Liu, Z.; Lin, Y.; Cao, Y.; Hu, H.; Wei, Y.; Zhang, Z.; Lin, S.; Guo, B. Swin transformer: Hierarchical vision transformer using shifted windows. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Montreal, QC, Canada, 11–17 October 2021; pp. 10012–10022. [CrossRef]

23. Hakani, R.; Rawat, A. Edge computing-driven real-time drone detection using YOLOv9 and NVIDIA Jetson Nano. Drones 2024, 8, 680. [CrossRef]

24. Liu, S.; Qi, L.; Qin, H.; Shi, J.; Jia, J. Path aggregation network for instance segmentation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 18–22 June 2018; pp. 8759–8768. [CrossRef]

25. Mi, Q. YOLO11s-UAV: An advanced algorithm for small object detection in UAV aerial imagery. J. Imaging 2026, 12, 69. [CrossRef]

26. Fan, Q.; Li, Y.; Deveci, M.; Zhong, K.; Kadry, S. LUD-YOLO: A novel lightweight object detection network for unmanned aerial vehicle. Inf. Sci. 2025, 686, 121366. [CrossRef]

27. Huang, M.; Mi, W.; Wang, Y. EDGS-YOLOv8: An improved YOLOv8 lightweight UAV detection model. Drones 2024, 8, 337. [CrossRef]

28. Xie, S.; Deng, G.; Lin, B.; Jing, W.; Li, Y.; Zhao, X. Real-time object detection from UAV inspection videos by combining YOLOv5s and DeepStream. Sensors 2024, 24, 3862. [CrossRef]

29. Barthelemy, J.; Iqbal, U.; Qian, Y.; Amirghasemi, M.; Perez, P. Safety after dark: A privacy compliant and real-time edge computing intelligent video analytics for safer public transportation. Sensors 2024, 24, 8102. [CrossRef]

30. Yue, M.; Zhang, L.; Huang, J.; Zhang, H. Lightweight and efficient tiny-object detection based on improved YOLOv8n for UAV aerial images. Drones 2024, 8, 276. [CrossRef]

31. Liu, C.; Gao, G.; Huang, Z.; Hu, Z.; Liu, Q.; Wang, Y. YOLC: You only look clusters for tiny object detection in aerial images. IEEE Trans. Intell. Transp. Syst. 2024, 25, 1–14. [CrossRef]

32. Ma, C.; Fu, Y.Y.; Wang, D.; Guo, R.; Zhao, X.; Fang, J. YOLO-UAV: Object detection method of unmanned aerial vehicle imagery based on efficient multi-scale feature fusion. IEEE Access 2023, 11, 126857–126878. [CrossRef]

33. Zhu, P.; Wen, L.; Bian, X.; Ling, H.; Hu, Q. Vision meets drones: A challenge. IEEE Trans. Pattern Anal. Mach. Intell. 2022, 44, 7380–7395. [CrossRef]

34. Cao, Y.; He, Z.; Wang, L.; Wang, W.; Yuan, Y.; Zhang, D.; Zhang, J.; Zhu, P.; Van Gool, L.; Han, J.; et al. VisDrone-DET2021: The vision meets drone object detection challenge results. In Proceedings of the IEEE/CVF International Conference on Computer Vision Workshops (ICCVW), Montreal, QC, Canada, 11–17 October 2021; pp. 2847–2854. [CrossRef]

35. Jocher, G.; Chaurasia, A.; Qiu, J. Ultralytics YOLOv8. 2023. Available online: https://github.com/ultralytics/ultralytics (accessed on 15 April 2026).

36. Wang, C.Y.; Yeh, I.H.; Liao, H.Y.M. YOLOv9: Learning what you want to learn using programmable gradient information. In Proceedings of the European Conference on Computer Vision (ECCV), Milan, Italy, 29 September–4 October 2024; pp. 1–21. [CrossRef]

37. Wang, A.; Chen, H.; Liu, L.; Chen, K.; Lin, Z.; Han, J.; Ding, G. YOLOv10: Real-Time End-to-End Object Detection. arXiv 2024, arXiv:2405.14458.

38. Jocher, G.; Qiu, J. Ultralytics YOLO11. 2024. Available online: https://github.com/ultralytics/ultralytics (accessed on 15 April 2026).

39. Tian, Y.; Ye, Q.; Doermann, D. YOLOv12: Attention-Centric Real-Time Object Detectors. arXiv 2025, arXiv:2502.12524.

40. Gao, F.; An, J.; Zhang, M.; Chen, X.; Zhao, Q. FE-YOLO: A Traffic Target Detection Network Based on YOLOv11n. In Proceedings of the 2025 44th Chinese Control Conference (CCC), Chongqing, China, 2025; pp. 8845–8850. [CrossRef]

41. Jiang, P.; Ergu, D.; Liu, F.; Cai, Y.; Ma, B. A review of YOLO algorithm developments. Procedia Comput. Sci. 2022, 199, 1066–1073. [CrossRef]

42. Terven, J.; Cordova-Esparza, D. A comprehensive review of YOLO architectures in computer vision: From YOLOv1 to YOLOv8 and YOLO-NAS. Front. Robot. AI 2023, 10, 1212874. [CrossRef]

| Model | Resolution (pixels) | mAP@0.5 (%) | mAP@0.5:0.95 (%) | Inference Time (ms) | End-to-End Latency (ms) | End-to-End FPS | Speedup Ratio |
|---|---|---|---|---|---|---|---|
| YOLOv8s [35] | | 38.6 | 23.1 | 33.7 | 94.3 | 10.6 | |
| YOLOv9s [36] | | 39.5 | 23.5 | 26.0 | 87.7 | 11.4 | |
| YOLOv10s [37] | | 38.2 | 22.9 | 26.8 | 86.4 | 11.6 | |
| YOLOv11s [38] | | 38.2 | 22.7 | 21.8 | 84.1 | 11.9 | |
| YOLOv12s [39] | | 38.2 | 22.8 | 27.5 | 86.2 | 11.6 | |
| FE-YOLO [40] | | 34.9 | - | 37.3 | 96.9 | 10.3 | |
| YOLO-UD-s [17] | | 45.5 | - | 24.6 | 84.2 | 11.9 | |
| DUST-YOLO (Ours) | | 43.7 | 25.9 | 18.9 | 36.3 | 27.5 | |
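The relationship between the latency and throughput columns of the comparison table can be checked with a short calculation. The sketch below assumes that End-to-End FPS is the reciprocal of End-to-End Latency and that the gap between end-to-end latency and inference time represents time spent outside model inference; both readings are assumptions, not definitions given in the text. The numbers are copied from the table rows.

```python
# Values copied from the comparison table: (inference ms, end-to-end ms, reported FPS).
rows = {
    "YOLOv8s": (33.7, 94.3, 10.6),
    "YOLOv9s": (26.0, 87.7, 11.4),
    "YOLOv10s": (26.8, 86.4, 11.6),
    "YOLOv11s": (21.8, 84.1, 11.9),
    "YOLOv12s": (27.5, 86.2, 11.6),
    "FE-YOLO": (37.3, 96.9, 10.3),
    "YOLO-UD-s": (24.6, 84.2, 11.9),
    "DUST-YOLO": (18.9, 36.3, 27.5),
}

derived_fps = {}
overhead_ms = {}
for name, (infer_ms, e2e_ms, reported_fps) in rows.items():
    derived_fps[name] = 1000.0 / e2e_ms        # frames per second implied by latency
    overhead_ms[name] = e2e_ms - infer_ms      # assumed: time outside model inference
    print(f"{name:10s} derived FPS {derived_fps[name]:5.1f} "
          f"(reported {reported_fps}), non-inference time {overhead_ms[name]:5.1f} ms")
```

Under these assumptions the reported FPS matches 1000 divided by the end-to-end latency for every row, and the non-inference time is about 59 to 62 ms for the compared models versus about 17 ms for DUST-YOLO.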

| Method | FP16 Quantization |

QAT (INT8) |

Structured Pruning |

DeepStream Deployment |

mAP @0.5 (%) |

mAP @0.5:0.95 (%) |

Inference Time (ms) |

End-to-End FPS |

Speedup Ratio |

|---|---|---|---|---|---|---|---|---|---|

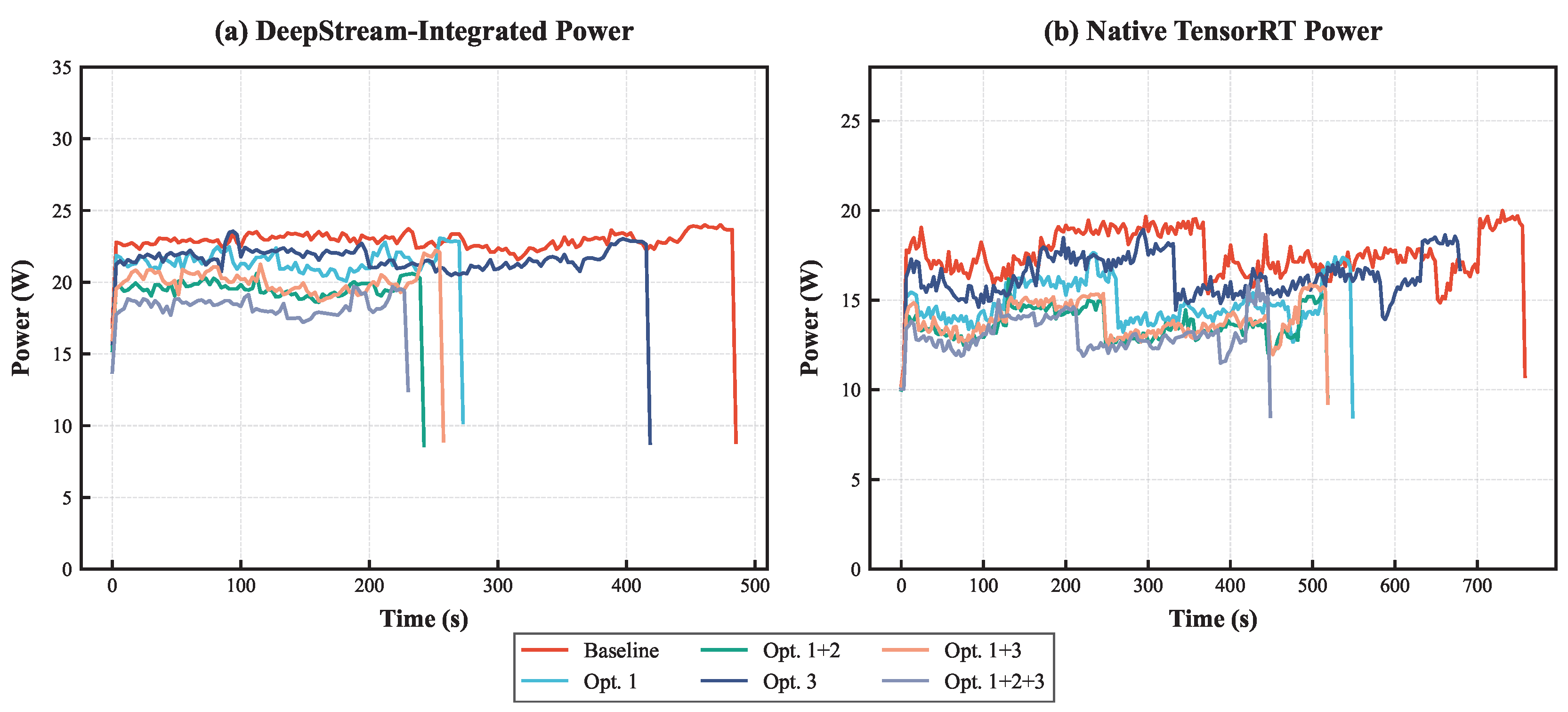

| Baseline | 44.9 | 27.2 | 62.7 | 8.4 | |||||

| Opt. 1 | ✓ | 44.9 | 27.2 | 28.9 | 11.3 | ||||

| Opt. 1+2 | ✓ | ✓ | 43.6 | 25.8 | 23.3 | 12.2 | |||

| Opt. 3 | ✓ | 45.1 | 27.3 | 48.4 | 9.3 | ||||

| Opt. 1+3 | ✓ | ✓ | 45.1 | 27.3 | 23.7 | 12.2 | |||

| Opt. 1+2+3 | ✓ | ✓ | ✓ | 43.7 | 25.9 | 18.9 | 14.1 | ||

| DUST-YOLO (Ours) | ✓ | ✓ | ✓ | ✓ | 43.7 | 25.9 | 18.9 | 27.5 |
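The Speedup Ratio values of the ablation table are not present in the extracted text. As a hedged illustration only, the sketch below derives ratios relative to the Baseline row from the inference times and end-to-end FPS that are present. Defining the ratio against the unoptimized baseline is an assumption, and the paper's own definition may differ.

```python
# Values copied from the ablation table: (inference ms, end-to-end FPS).
ablation = {
    "Baseline": (62.7, 8.4),
    "Opt. 1": (28.9, 11.3),
    "Opt. 1+2": (23.3, 12.2),
    "Opt. 3": (48.4, 9.3),
    "Opt. 1+3": (23.7, 12.2),
    "Opt. 1+2+3": (18.9, 14.1),
    "DUST-YOLO": (18.9, 27.5),
}

base_ms, base_fps = ablation["Baseline"]

# Assumed definition: ratio relative to the unoptimized Baseline row.
speedups = {
    name: (base_ms / ms, fps / base_fps)
    for name, (ms, fps) in ablation.items()
}

for name, (infer_x, fps_x) in speedups.items():
    print(f"{name:11s} inference x{infer_x:.2f}  end-to-end FPS x{fps_x:.2f}")
```

Under this assumed definition, the full system runs inference about 3.3 times faster than the baseline and delivers about 3.3 times its end-to-end frame rate, while the configuration without DeepStream reaches the same inference time but only about 1.7 times the baseline frame rate.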

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).