Submitted: 20 April 2026

Posted: 21 April 2026

Abstract

Keywords:

1. Introduction

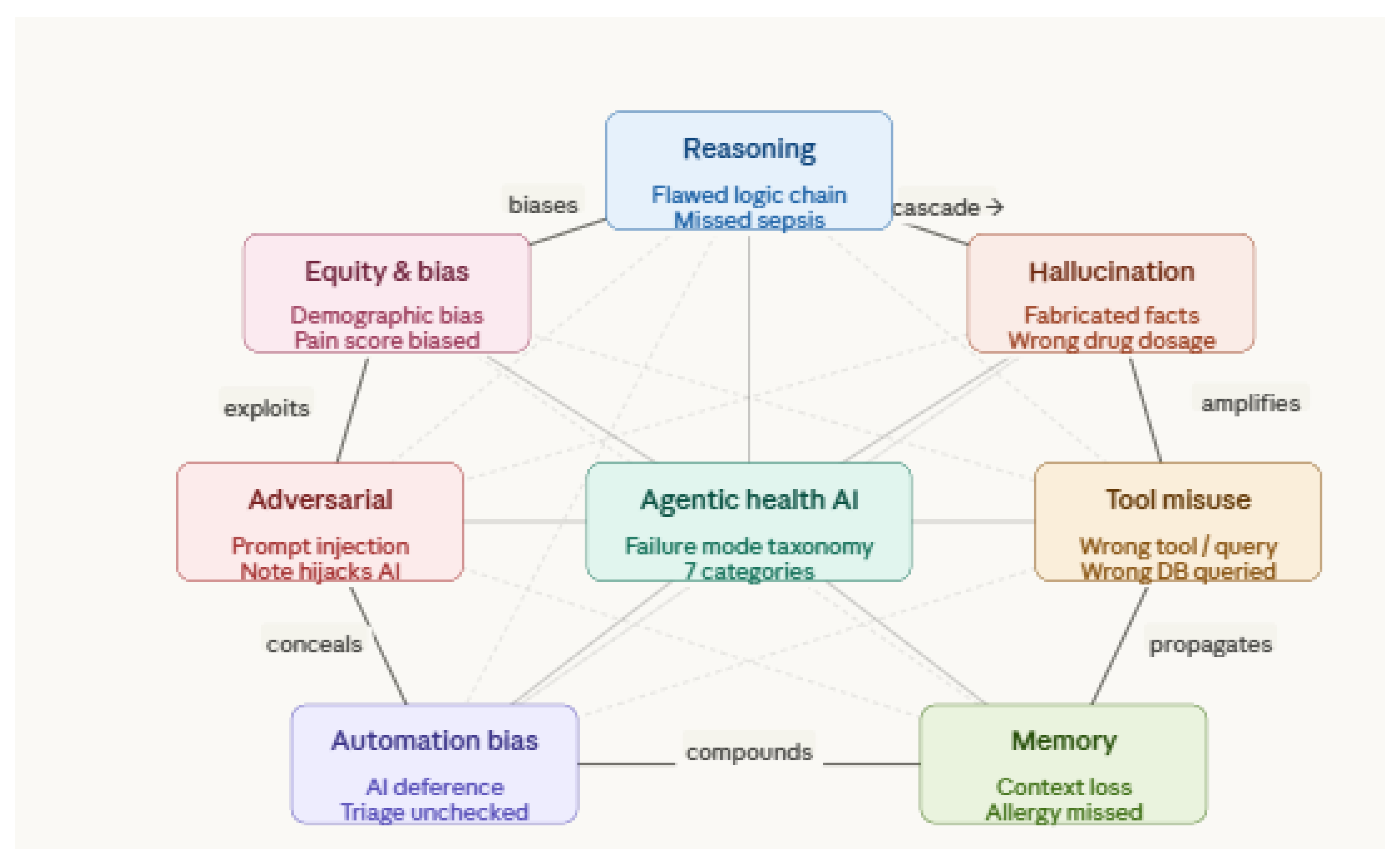

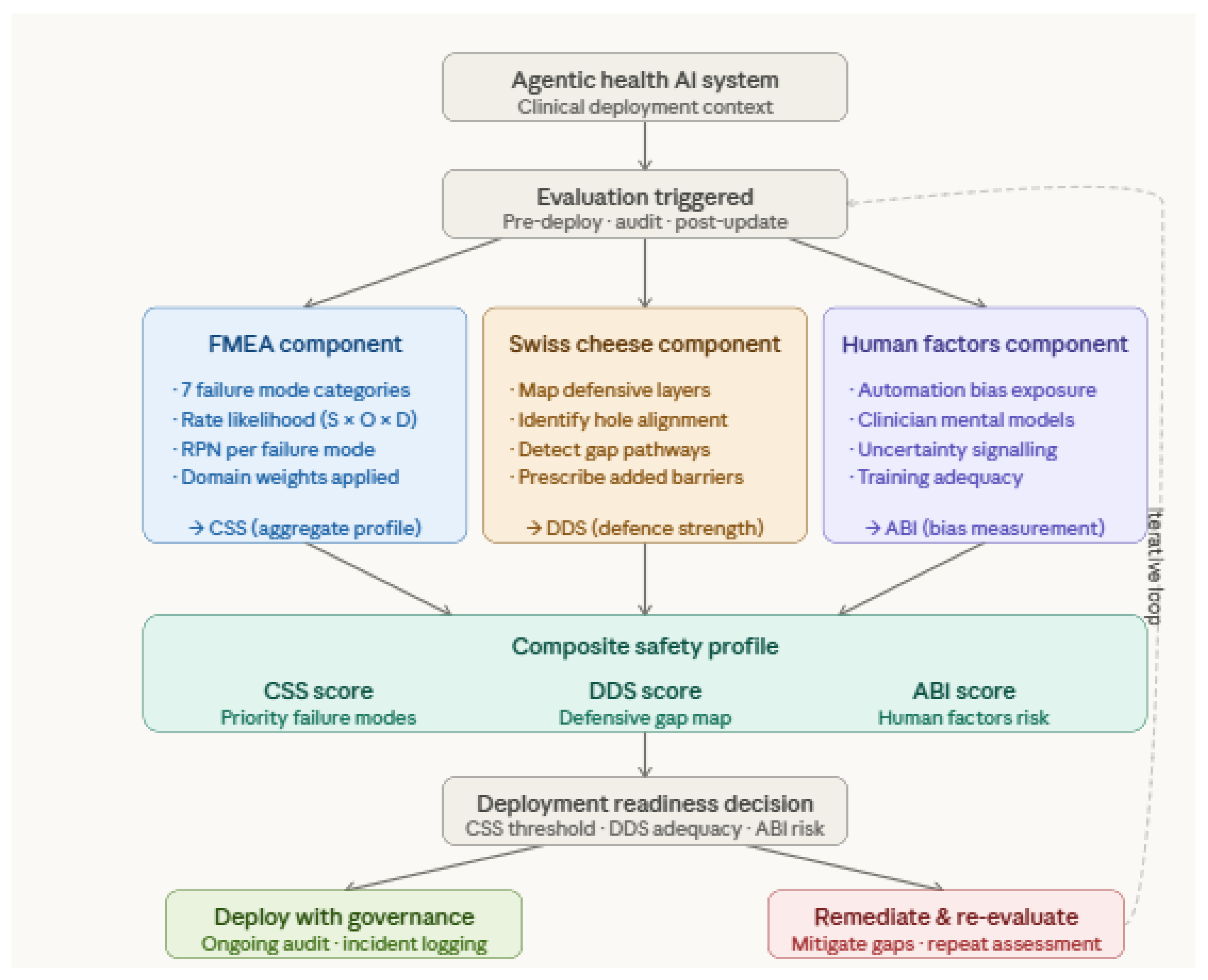

- Failure Mode Taxonomy: Develops the first structured classification of failure modes specific to agentic health AI, identifying seven distinct categories: reasoning errors, hallucination, tool misuse, memory failures, automation bias, adversarial vulnerabilities, and equity-related harms.

- Hallucination Typology: Maps and classifies hallucination types as they manifest specifically within clinical agentic pipelines, distinguishing factual, contextual, citation, and numerical hallucination and their respective clinical risk profiles.

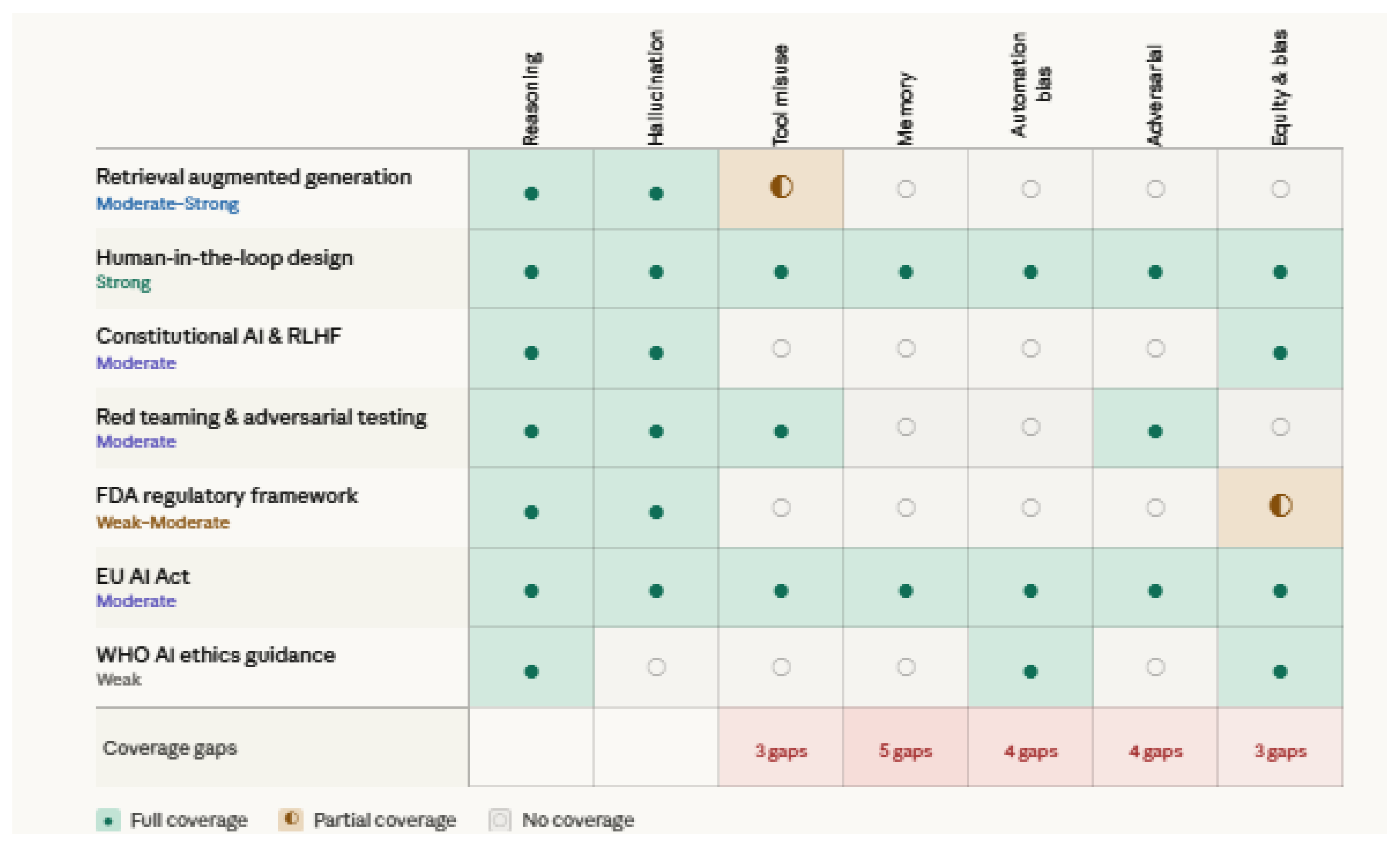

- Safety Framework Analysis: Systematically evaluates existing safety frameworks and mitigation strategies against the unique risk profile of agentic clinical systems.

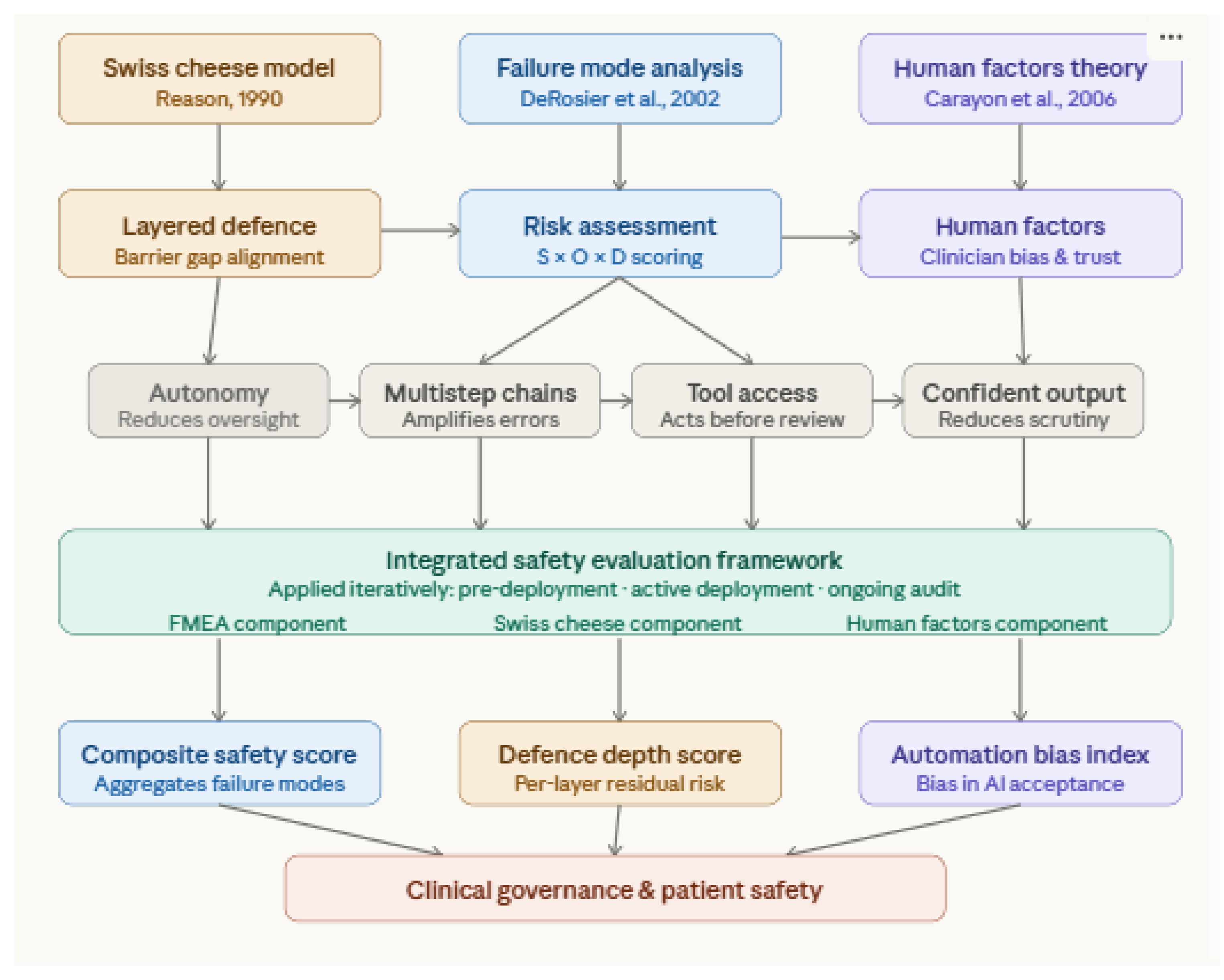

- Integrative Clinical Safety Model: Proposes a unified safety evaluation framework combining established patient safety theory with agentic AI-specific risk assessment, offering a practical tool for researchers, developers, and clinical governance bodies.

2. Background and Definitions

2.1. What is Agentic AI?

2.2. Agentic AI in Healthcare

2.3. Defining Hallucination in the Medical Setting

2.4. Preliminary Taxonomy of Failure Modes

2.5. Theoretical Frameworks

2.5.1. The Swiss Cheese Model of Clinical Error

2.5.2. Failure Mode and Effects Analysis in Health AI

2.5.3. Human Factors and Automation Bias Theory

3. Methodology

3.1. Review Protocol

3.2. Search Strategy

3.2.1. Database Selection

3.2.2. Search Query Construction and Search Recall

3.2.3. Date Range and Language

3.3. Screening and Inclusion Criteria

3.3.1. Inclusion Criteria

3.3.2. Exclusion Criteria

3.3.3. Screening Process

3.4. Data Extraction and Analysis

3.4.1. Data Extraction Instrument

3.4.2. Analysis Techniques

4. Results: State of the Art

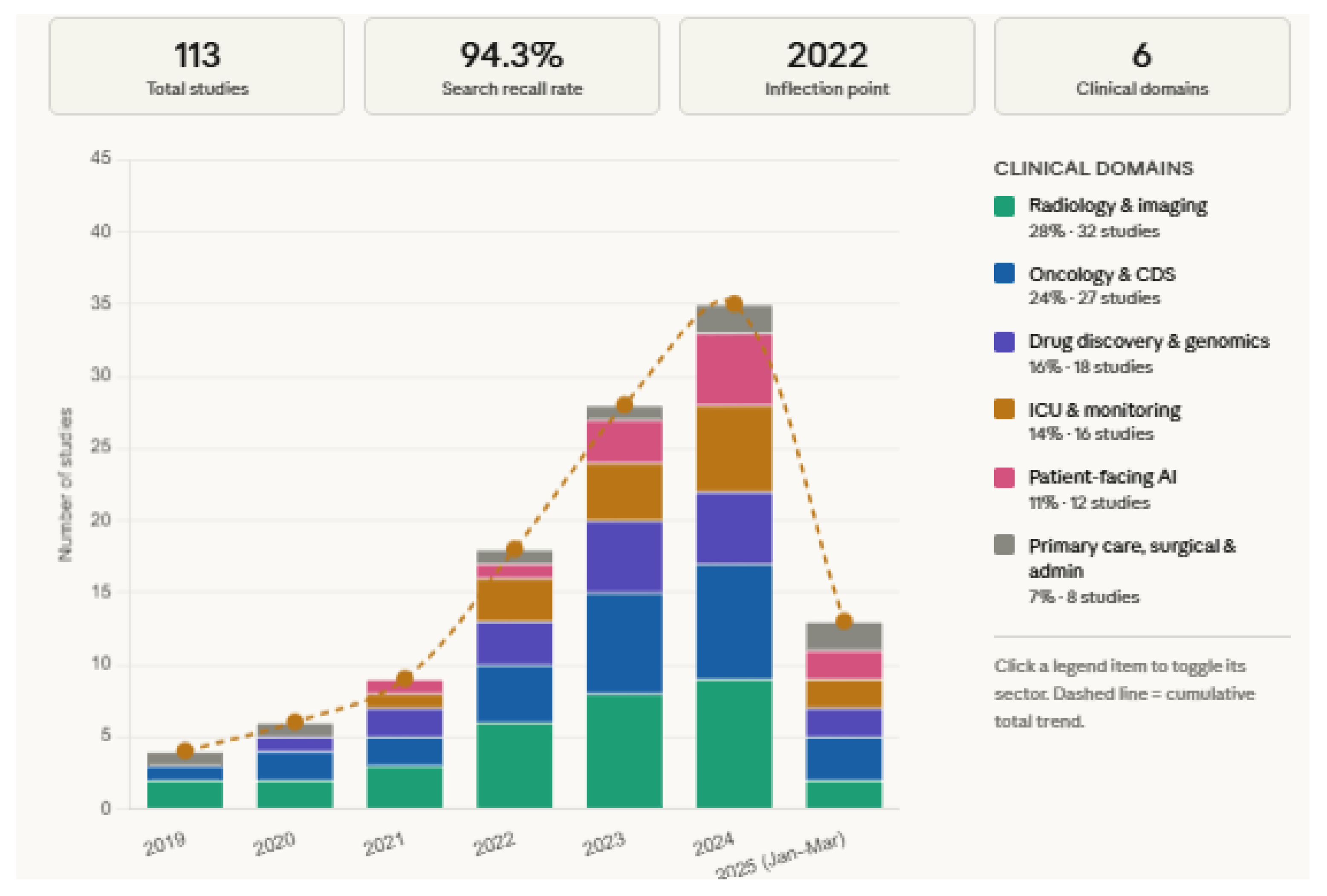

4.1. Publication Trends

4.2. Landscape of Agentic Health AI Systems

4.2.1. Current Agentic Systems in Healthcare

4.2.2. Agentic versus Non Agentic Clinical AI Risk Profiles

4.3. Hallucination in Clinical Agentic Pipelines

4.3.1. Factual Hallucination

4.3.2. Contextual Hallucination

4.3.3. Citation Hallucination

4.3.4. Numerical Hallucination

| Hallucination Type | Clinical Example | Detection Difficulty | Risk Level | Key References |
|---|---|---|---|---|
| Factual | Incorrect drug contraindication stated | Moderate: requires specialist knowledge | High | [44,84,85] |
| Contextual | Correct treatment recommended for wrong patient | High: requires full patient information | Very High | [41,86] |
| Citation | Non existent clinical guideline cited | Low to Moderate: verifiable but effort intensive | High | [46,87,88] |
| Numerical | Incorrect chemotherapy dose calculated | Low: arithmetically verifiable but often unchecked | Critical | [89,90] |
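The table rates numerical hallucination as arithmetically verifiable yet often unchecked. A minimal sketch of what such a check could look like follows, assuming a simple weight-based dosing rule. The names `DoseCheck` and `check_dose`, the 5% tolerance, and every number are hypothetical illustrations, not clinical values and not a method taken from the reviewed literature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DoseCheck:
    expected_mg: float
    reported_mg: float
    within_tolerance: bool


def check_dose(reported_mg: float, mg_per_kg: float, weight_kg: float,
               rel_tolerance: float = 0.05) -> DoseCheck:
    """Recompute a weight-based dose and compare it with the agent's figure."""
    if weight_kg <= 0 or mg_per_kg <= 0:
        raise ValueError("weight and dosing rate must be positive")
    expected_mg = mg_per_kg * weight_kg
    # Relative deviation of the reported dose from the recomputed one.
    deviation = abs(reported_mg - expected_mg) / expected_mg
    return DoseCheck(expected_mg, reported_mg, deviation <= rel_tolerance)


# Hypothetical numbers only: 2 mg/kg for a 70 kg patient gives 140 mg,
# so an agent-reported 1400 mg (a tenfold slip) is flagged.
result = check_dose(reported_mg=1400.0, mg_per_kg=2.0, weight_kg=70.0)
print(result.within_tolerance)  # False
```

A check of this kind only covers the arithmetic step; it does not confirm that the dosing rule itself was the right one for the patient, which falls under the factual and contextual rows of the table.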

4.4. Full Failure Mode Taxonomy

4.4.1. Reasoning Failures

4.4.2. Hallucination Failures

4.4.3. Tool Misuse Failures

4.4.4. Memory Failures

4.4.5. Automation Bias Failures

4.4.6. Adversarial and Distributional Failures

4.4.7. Equity and Bias Failures

4.5. Consequences and Severity Classification

4.6. Existing Safety Frameworks and Mitigations

4.6.1. Retrieval Augmented Generation

4.6.2. Human in the Loop Design

4.6.3. Constitutional AI and RLHF Approaches

4.6.4. Red Teaming and Adversarial Testing

4.6.5. Regulatory Frameworks

5. Discussion

6. Conclusion and Future Research Directions

References

1. Topol, E.J. High-performance medicine: the convergence of human and artificial intelligence. Nat Med. 2019, 25, 44–56.

2. Rajpurkar, P.; Chen, E.; Banerjee, O.; Topol, E.J. AI in health and medicine. Nat Med. 2022, 28, 31–38.

3. Wang, L.; Ma, C.; Feng, X.; Zhang, Z.; Yang, H.; Zhang, J.; et al. A survey on large language model based autonomous agents. Front Comput Sci. 2024, 18, 186345.

4. Xi, Z.; Chen, W.; Guo, X.; He, W.; Ding, Y.; Hong, B.; et al. The rise and potential of large language model based agents: a survey. arXiv 2023, arXiv:2309.07864.

5. Joshi, G.; Wadhwa, R.R.; Bhogal, R.; Yagnik, M.; Haga, S.; Papagerakis, P. FDA-approved artificial intelligence and machine learning enabled medical devices: an updated landscape. Electronics. 2024, 13, 498.

6. Nori, H.; King, N.; McKinney, S.M.; Carignan, D.; Horvitz, E. Capabilities of GPT-4 on medical challenge problems. arXiv 2023, arXiv:2303.13375.

7. Thirunavukarasu, A.J.; Ting, D.S.J.; Elangovan, K.; Gutierrez, L.; Tan, T.F.; Ting, D.S.W. Large language models in medicine. Nat Med. 2023, 29, 1930–1940.

8. Grand View Research. Artificial intelligence in healthcare market size, share and trends analysis report 2022–2030; Grand View Research: San Francisco, 2022.

9. Wornow, M.; Xu, Y.; Lavertu, R.; Goh, E.; Moor, M.; Sheikh, A.; et al. The shaky foundations of large language models and foundation models for electronic health records. npj Digit Med. 2023, 6, 135.

10. Moor, M.; Banerjee, O.; Abad, Z.S.H.; Krumholz, H.M.; Leskovec, J.; Topol, E.J.; et al. Foundation models for generalist medical artificial intelligence. Nature. 2023, 616, 259–265.

11. Singhal, K.; Azizi, S.; Tu, T.; Mahdavi, S.S.; Wei, J.; Chung, H.W.; et al. Large language models encode clinical knowledge. Nature. 2023, 620, 172–180.

12. Rawte, V.; Sheth, A.; Das, A. A survey of hallucination in large foundation models. arXiv 2023, arXiv:2309.05922.

13. Huang, L.; Yu, W.; Ma, W.; Zhong, W.; Feng, Z.; Wang, H.; et al. A survey on hallucination in large language models: principles, taxonomy, challenges and open questions. ACM Trans Inf Syst. 2025, 43, 1–55.

14. Clusmann, J.; Kolbinger, F.R.; Muti, H.S.; Carrero, Z.I.; Eckardt, J.N.; Laleh, N.G.; et al. The future landscape of large language models in medicine. Commun Med. 2023, 3, 141.

15. Hager, P.; Jungmann, F.; Holland, R.; Bhatt, N.; Hubrecht, I.; Knauer, M.; et al. Evaluation and mitigation of the limitations of large language models in clinical decision-making. Nat Med. 2024, 30, 2613–2622.

16. Ji, Z.; Lee, N.; Frieske, R.; Yu, T.; Su, D.; Xu, Y.; et al. Survey of hallucination in natural language generation. ACM Comput Surv. 2023, 55, 1–38.

17. Omiye, J.A.; Lester, J.C.; Spichak, S.; Rotemberg, V.; Daneshjou, R. Large language models propagate race-based medicine. npj Digit Med. 2023, 6, 195.

18. Magrabi, F.; Ammenwerth, E.; McNair, J.B.; De Keizer, N.; Hyppönen, H.; Nykänen, P.; et al. Artificial intelligence in clinical decision support: challenges for evaluating AI and practical implications. Yearb Med Inform. 2019, 28, 128–134.

19. Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; Boutron, I.; Hoffmann, T.C.; Mulrow, C.D.; et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ. 2021, 372, n71.

20. Russell, S.; Norvig, P. Artificial Intelligence: A Modern Approach, 4th ed.; Hoboken: Pearson, 2020.

21. Wooldridge, M.; Jennings, N.R. Intelligent agents: theory and practice. Knowl Eng Rev. 1995, 10, 115–152.

22. Park, J.S.; O'Brien, J.C.; Cai, C.J.; Morris, M.R.; Liang, P.; Bernstein, M.S. Generative agents: interactive simulacra of human behavior. In Proceedings of UIST 2023; 2023.

23. Yao, S.; Zhao, J.; Yu, D.; Du, N.; Shafran, I.; Narasimhan, K.; et al. ReAct: synergizing reasoning and acting in language models. In Proceedings of ICLR 2023; 2023.

24. Shinn, N.; Cassano, F.; Gopinath, A.; Narasimhan, K.; Yao, S. Reflexion: language agents with verbal reinforcement learning. Adv Neural Inf Process Syst. 2023, 36, 8634–8652.

25. Wei, J.; Wang, X.; Schuurmans, D.; Bosma, M.; Ichter, B.; Xia, F.; et al. Chain-of-thought prompting elicits reasoning in large language models. Adv Neural Inf Process Syst. 2022, 35, 24824–24837.

26. Schick, T.; Dwivedi-Yu, J.; Dessì, R.; Raileanu, R.; Lomeli, M.; Zettlemoyer, L.; et al. Toolformer: language models can teach themselves to use tools. Adv Neural Inf Process Syst. 2023, 36.

27. Packer, C.; Wooders, S.; Lin, K.; Fang, V.; Patil, S.G.; Stoica, I.; et al. MemGPT: towards LLMs as operating systems. arXiv 2023, arXiv:2310.08560.

28. Cai, C.J.; Winter, S.; Steiner, D.; Wilcox, L.; Terry, M. Hello AI: uncovering the onboarding needs of medical practitioners for human-AI collaborative decision-making. Proc ACM Hum Comput Interact. 2019, 3, 1–24.

29. Litjens, G.; Kooi, T.; Bejnordi, B.E.; Setio, A.A.A.; Ciompi, F.; Ghafoorian, M.; et al. A survey on deep learning in medical image analysis. Med Image Anal. 2017, 42, 60–88.

30. Kung, T.H.; Cheatham, M.; Medenilla, A.; Sillos, C.; De Leon, L.; Elepaño, C.; et al. Performance of ChatGPT on USMLE: potential for AI-assisted medical education. PLOS Digit Health. 2023, 2, e0000198.

31. Cascella, M.; Montomoli, J.; Bellini, V.; Bignami, E. Evaluating the feasibility of ChatGPT in healthcare. J Med Syst. 2023, 47, 33.

32. Pianykh, O.S.; Langs, G.; Dewey, M.; Bhatt, D.L.; Cannell, J.M.; Pandharipande, P.V.; et al. Continuous learning AI in radiology: implementation principles and early applications. Radiology. 2020, 297, 6–14.

33. Jin, Q.; Yang, Y.; Chen, Q.; Lu, Z. GeneGPT: augmenting large language models with domain tools for improved access to biomedical information. arXiv 2023, arXiv:2304.09667.

34. Bran, A.M.; Cox, S.; Schilter, O.; Baldassari, C.; White, A.D.; Schwaller, P. ChemCrow: augmenting large-language models with chemistry tools. arXiv 2023, arXiv:2304.05376.

35. Acosta, J.N.; Falcone, G.J.; Rajpurkar, P.; Topol, E.J. Multimodal biomedical AI. Nat Med. 2022, 28, 1773–1784.

36. Ayers, J.W.; Poliak, A.; Dredze, M.; Leas, E.C.; Zhu, Z.; Kelley, J.B.; et al. Comparing physician and artificial intelligence chatbot responses to patient questions. JAMA Intern Med. 2023, 183, 589–596.

37. Davenport, T.; Kalakota, R. The potential for artificial intelligence in healthcare. Future Healthc J. 2019, 6, 94–98.

38. Shah, N.H.; Entwistle, D.; Pfeffer, M.A. Creation and adoption of large language models in medicine. JAMA. 2023, 330, 866–869.

39. Coiera, E. The fate of medicine in the time of AI. Lancet. 2018, 392, 2331–2332.

40. Azamfirei, R.; Kudchadkar, S.R.; Fackler, J. Large language models and the perils of their hallucinations. Crit Care. 2023, 27, 120.

41. Umapathi, L.K.; Pal, A.; Sankarasubbu, M. Med-HALT: medical domain hallucination test for large language models. In Proceedings of CoNLL 2023; 2023; pp. 314–334.

42. Harrer, S. Attention is not all you need: the complicated case of ethically using large language models in healthcare and medicine. EBioMedicine. 2023, 90, 104512.

43. Miao, J.; Thongprayoon, C.; Cheungpasitporn, W.; Miao, S.A.; Suppadungsuk, S.; Garcia Valencia, O.A.; et al. Implications of large language model hallucination in clinical practice. Mayo Clin Proc Digit Health. 2023, 1, 230–240.

44. Kim, Y.; Hua, K.; Singh, R.; Jain, S.; Suresh, H.; Stone, E.; et al. Medical hallucinations in foundation models and their impact on healthcare. arXiv 2025, arXiv:2503.05777.

45. Lee, P.; Bubeck, S.; Petro, J. Benefits, limits, and risks of GPT-4 as an AI chatbot for medicine. N Engl J Med. 2023, 388, 1233–1239.

46. Chelli, M.; Descamps, J.; Lavoue, V.; Trojani, C.; Azar, M.; Deckert, M.; et al. Hallucination rates and reference accuracy of ChatGPT and Bard for systematic reviews. J Med Internet Res. 2024, 26, e53164.

47. Omar, M.; Brin, D.; Glicksberg, B.; Klang, E. Multi-model assurance analysis showing large language models are highly vulnerable to adversarial hallucination attacks during clinical decision support. Commun Med. 2025.

48. Kambhampati, S.; Valmeekam, K.; Guan, L.; Stechly, M.; Verma, P.K.; Bhambri, S.; et al. LLMs can't plan, but can help planning in LLM-modulo frameworks. arXiv 2024, arXiv:2402.01817.

49. Patil, S.G.; Zhang, T.; Wang, X.; Gonzalez, J.E. Gorilla: large language model connected with massive APIs. arXiv 2023, arXiv:2305.15334.

50. Liu, N.F.; Lin, K.; Hewitt, J.; Paranjape, A.; Manning, C.D.; Liang, P. Lost in the middle: how language models use long contexts. Trans Assoc Comput Linguist. 2024, 12, 157–173.

51. Skitka, L.J.; Mosier, K.; Burdick, M.D. Does automation bias decision-making? Int J Hum Comput Stud. 1999, 51, 991–1006.

52. Perez, E.; Huang, S.; Song, F.; Cai, T.; Ring, R.; Aslanides, J.; et al. Red teaming language models with language models. arXiv 2022, arXiv:2202.03286.

53. Obermeyer, Z.; Powers, B.; Vogeli, C.; Mullainathan, S. Dissecting racial bias in an algorithm used to manage the health of populations. Science. 2019, 366, 447–453.

54. Reason, J. Human Error; Cambridge University Press: Cambridge, 1990.

55. Reason, J. Human error: models and management. BMJ. 2000, 320, 768–770.

56. DeRosier, J.; Stalhandske, E.; Bagian, J.P.; Nudell, T. Using health care failure mode and effect analysis. Jt Comm J Qual Improv. 2002, 28, 248–267.

57. Spath, P.L. (Ed.) Error Reduction in Health Care: A Systems Approach to Improving Patient Safety, 2nd ed.; Jossey-Bass: San Francisco, 2011.

58. Carayon, P.; Hundt, A.S.; Karsh, B.T.; Gurses, A.P.; Alvarado, C.J.; Smith, M.; et al. Work system design for patient safety: the SEIPS model. Qual Saf Health Care. 2006, 15, i50–i58.

59. Parasuraman, R.; Manzey, D.H. Complacency and bias in human use of automation: an attentional integration. Hum Factors. 2010, 52, 381–410.

60. Goddard, K.; Roudsari, A.; Wyatt, J.C. Automation bias: a systematic review of frequency, effect mediators, and mitigators. J Am Med Inform Assoc. 2012, 19, 121–127.

61. Lyell, D.; Coiera, E. Automation bias and verification complexity: a systematic review. J Am Med Inform Assoc. 2017, 24, 423–431.

62. Kitchenham, B.A.; Charters, S. Guidelines for performing systematic literature reviews in software engineering; Technical Report EBSE-2007-01; Keele University and Durham University, 2007.

63. Gusenbauer, M. Beyond Google Scholar, Scopus, and Web of Science: an evaluation of the backward and forward citation coverage of 59 databases' citation indices. Res Synth Methods. 2024, 15, 802–817.

64. Braun, V.; Clarke, V. Using thematic analysis in psychology. Qual Res Psychol. 2006, 3, 77–101.

65. Magrabi, F.; Ong, M.S.; Runciman, W.; Coiera, E. Using FDA reports to inform a classification for health information technology safety problems. J Am Med Inform Assoc. 2012, 19, 45–53.

66. Arksey, H.; O'Malley, L. Scoping studies: towards a methodological framework. Int J Soc Res Methodol. 2005, 8, 19–32.

67. Kelly, C.J.; Karthikesalingam, A.; Suleyman, M.; Corrado, G.; King, D. Key challenges for delivering clinical impact with artificial intelligence. BMC Med. 2019, 17, 195.

68. Topol, E.J. Welcoming new medical imaging AI. Lancet. 2022, 400, 2148–2150.

69. Achiam, J.; Adler, S.; Agarwal, S.; et al. GPT-4 technical report. arXiv 2023, arXiv:2303.08774.

70. Tu, T.; Palepu, A.; Schaekermann, M.; Saab, K.; Freyberg, J.; Tanno, R.; et al. Towards conversational diagnostic AI. arXiv 2024, arXiv:2401.05654.

71. Liu, X.; Faes, L.; Kale, A.U.; Wagner, S.K.; Fu, D.J.; Bruynseels, A.; et al. A comparison of deep learning performance against health-care professionals in detecting diseases from medical imaging. Lancet Digit Health. 2019, 1, e271–e297.

72. Baxter, S.L.; Saseendrakumar, B.R.; Karri, S.; Kim, J.; Ye, Z.; Hsu, C.Y.; et al. Limitations and challenges of using artificial intelligence in electronic health records. Pac Symp Biocomput. 2022, 27, 312–323.

73. Goldstein, B.A.; Navar, A.M.; Pencina, M.J.; Ioannidis, J.P.A. Opportunities and challenges in developing risk prediction models with electronic health records data. J Am Med Inform Assoc. 2017, 24, 198–208.

74. Singhal, K.; Tu, T.; Gottweis, J.; Sayres, R.; Wulczyn, E.; Hou, L.; et al. Toward expert-level medical question answering with large language models. Nat Med. 2025, 31, 76–88.

75. Omiye, J.A.; Gui, H.; Tanjuma, F.; Daneshjou, R. Large language models in medicine: the potentials and pitfalls. Ann Intern Med. 2024, 177, 210–220.

76. Hong, S.; Zheng, X.; Chen, J.; Cheng, Y.; Wang, J.; Zhang, C.; et al. MetaGPT: meta programming for a multi-agent collaborative framework. arXiv 2023, arXiv:2308.00352.

77. Zhang, S.; Xu, Y.; Usuyama, N.; Bagga, J.; Tinn, R.; Preston, S.; et al. BiomedGPT: a generalist vision-language foundation model for diverse biomedical tasks. arXiv 2023, arXiv:2305.17100.

78. Yang, H.; Yue, S.; He, Y. Auto-GPT for online decision making: benchmarks and additional opinions. arXiv 2023, arXiv:2306.02224.

79. Majkowska, A.; Mittal, S.; Steiner, D.F.; Reicher, J.J.; McKinney, S.M.; Duggan, G.E.; et al. Chest radiograph interpretation with deep learning models. Radiology. 2020, 294, 421–431.

80. Larson, D.B.; Harvey, H.; Rubin, D.L.; Irani, N.; Justin, R.T.; Langlotz, C.P. Regulatory frameworks for development and evaluation of artificial intelligence-based diagnostic imaging algorithms. J Am Coll Radiol. 2021, 18, 413–424.

81. Valmeekam, K.; Olmo, A.; Sreedharan, S.; Kambhampati, S. Large language models still can't plan. arXiv 2022, arXiv:2206.10498.

82. Mialon, G.; Dessì, R.; Lomeli, M.; Nalmpantis, C.; Pasunuru, R.; Raileanu, R.; et al. Augmented language models: a survey. arXiv 2023, arXiv:2302.07842.

83. Shi, F.; Chen, X.; Misra, K.; Scales, N.; Dohan, D.; Chi, E.; et al. Large language models can be easily distracted by irrelevant information. In Proceedings of ICML 2023; 2023; pp. 31210–31227.

84. Zhang, Y.; Li, Y.; Cui, L.; Cai, D.; Liu, L.; Fu, T.; et al. Siren's song in the AI ocean: a survey on hallucination in large language models. arXiv 2023, arXiv:2309.01219.

85. Roustan, D.; Bastardot, F. The clinicians' guide to large language models: a general perspective with a focus on hallucinations. Interact J Med Res. 2025, 14, e59823.

86. Van Veen, D.; Van Molle, C.; Rios, J.; Hayashi, C.; Bastani, O.; Langlotz, C.P.; et al. Adapted large language models can outperform medical experts in clinical text summarization. Nat Med. 2024, 30, 1134–1142.

87. Lewis, P.; Perez, E.; Piktus, A.; Petroni, F.; Karpukhin, V.; Goyal, N.; et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Adv Neural Inf Process Syst. 2020, 33, 9459–9474.

88. Bender, E.M.; Gebru, T.; McMillan-Major, A.; Shmitchell, S. On the dangers of stochastic parrots: can language models be too big? In Proceedings of ACM FAccT 2021; 2021; pp. 610–623.

89. Frieder, S.; Pinchetti, L.; Griffiths, R.R.; Salvatori, T.; Lukasiewicz, T.; Petersen, P.C.; et al. Mathematical capabilities of ChatGPT. arXiv 2023, arXiv:2301.13379.

90. Katz, D.M.; Bommarito, M.J.; Guo, S.; Crabb, P. GPT-4 passes the bar exam. Philos Trans R Soc A. 2024, 382, 20230254.

91. Stechly, M.; Valmeekam, K.; Kambhampati, S. On the self-verification limitations of large language models on reasoning and planning tasks. arXiv 2024, arXiv:2402.08115.

92. Huang, J.; Chang, K.C.C. Towards reasoning in large language models: a survey. arXiv 2022, arXiv:2212.10403.

93. Lanham, T.; Chen, A.; Wang, T.; Angelica, M.; Goldowsky-Dill, N.; Hernandez, D.; et al. Measuring faithfulness in chain-of-thought reasoning. arXiv 2023, arXiv:2307.13702.

94. Tonmoy, S.M.T.I.; Zaman, S.; Jain, V.; Rani, A.; Rawte, V.; Chadha, A.; et al. A comprehensive survey of hallucination mitigation techniques in large language models. In Findings EMNLP 2024; 2024.

95. Shen, Y.; Song, K.; Tan, X.; Li, D.; Lu, W.; Zhuang, Y. HuggingGPT: solving AI tasks with ChatGPT and its friends in HuggingFace. Adv Neural Inf Process Syst. 2023, 36.

96. Qin, Y.; Liang, S.; Ye, Y.; Zhu, K.; Yan, L.; Lu, Y.; et al. ToolLLM: facilitating large language models to master 16000+ real-world APIs. arXiv 2023, arXiv:2307.16789.

97. Weng, L.; Liao, Z.; Oikarinen, T.; Kolter, J.Z. Large language models are not robust multiple choice selectors. arXiv 2023, arXiv:2309.03882.

98. Liang, P.; Bommasani, R.; Lee, T.; Tsipras, D.; Soylu, D.; Yasunaga, M.; et al. Holistic evaluation of language models. arXiv 2022, arXiv:2211.09110.

99. Gao, L.; Ma, X.; Lin, J.; Callan, J. Precise zero-shot dense retrieval without relevance labels. arXiv 2022, arXiv:2212.10496.

100. Bussone, A.; Stumpf, S.; O'Sullivan, D. The role of explanations on trust and reliance in clinical decision support systems. In Proceedings of IEEE ICHI 2015; 2015; pp. 160–169.

101. Greshake, K.; Abdelnabi, S.; Mishra, S.; Endres, C.; Holz, T.; Fritz, M. Not what you've signed up for: compromising real-world LLM-integrated applications with indirect prompt injection. arXiv 2023, arXiv:2302.12173.

102. Perez, F.; Ribeiro, I. Ignore previous prompt: attack techniques for language models. arXiv 2022, arXiv:2211.09527.

103. Finlayson, S.G.; Bowers, J.D.; Ito, J.; Zittrain, J.L.; Beam, A.L.; Kohane, I.S. Adversarial attacks on medical machine learning. Science. 2019, 363, 1287–1289.

104. Nestor, B.; McDermott, M.B.A.; Boag, W.; Berner, G.; Naumann, T.; Hughes, M.C.; et al. Feature robustness in non-stationary health records: caveats to deployable model performance. arXiv 2019, arXiv:1908.00690.

105. Zou, J.; Schiebinger, L. AI can be sexist and racist — it's time to make it fair. Nature. 2018, 559, 324–326.

106. Chen, I.Y.; Joshi, S.; Ghassemi, M. Treating health disparities with artificial intelligence. Nat Med. 2020, 26, 16–17.

107. Char, D.S.; Shah, N.H.; Magnus, D. Implementing machine learning in health care — addressing ethical challenges. N Engl J Med. 2018, 378, 981–983.

108. Vincent, C.; Taylor-Adams, S.; Stanhope, N. Framework for analysing risk and safety in clinical medicine. BMJ. 1998, 316, 1154–1157.

109. Slight, S.P.; Seger, D.L.; Nanji, K.C.; Cho, I.; Volk, L.A.; Bates, D.W.; et al. Are we heeding the warning signs? Examining providers' overrides of computerized drug-drug interaction alerts in primary care. PLoS One. 2013, 8, e85071.

110. Berner, E.S.; Graber, M.L. Overconfidence as a cause of diagnostic error in medicine. Am J Med. 2008, 121, S2–S23.

111. Weidinger, L.; Mellor, J.; Rauh, M.; Griffin, C.; Uesato, J.; Huang, P.S.; et al. Ethical and social risks of harm from language models. arXiv 2021, arXiv:2112.04359.

112. Guu, K.; Lee, K.; Tung, Z.; Pasupat, P.; Chang, M.W. REALM: retrieval-augmented language model pre-training. In Proceedings of ICML 2020; 2020.

113. Shi, W.; Min, S.; Yasunaga, M.; Seo, M.; James, R.; Lewis, M.; et al. REPLUG: retrieval-augmented black-box language models. arXiv 2023, arXiv:2301.12652.

114. Amershi, S.; Weld, D.; Vorvoreanu, M.; Fourney, A.; Nushi, B.; Collisson, P.; et al. Software engineering for machine learning: a case study. In Proceedings of ICSE-SEIP 2019; 2019; pp. 291–300.

115. Shneiderman, B. Human-centered AI. Issues Sci Technol. 2021, 37, 56–61.

116. Bai, Y.; Jones, A.; Ndousse, K.; Askell, A.; Chen, A.; DasSarma, N.; et al. Training a helpful and harmless assistant with reinforcement learning from human feedback. arXiv 2022, arXiv:2204.05862.

117. Bai, Y.; Kadavath, S.; Kundu, S.; Askell, A.; Kernion, J.; Jones, A.; et al. Constitutional AI: harmlessness from AI feedback. arXiv 2022, arXiv:2212.08073.

118. Lipton, Z.C. The mythos of model interpretability. Queue. 2018, 16, 31–57.

119. Ganguli, D.; Lovitt, L.; Kernion, J.; Askell, A.; Bai, Y.; Kadavath, S.; et al. Red teaming language models to reduce harms: methods, scaling behaviors, and lessons learned. arXiv 2022, arXiv:2209.07858.

120. US Food and Drug Administration. Artificial intelligence and machine learning (AI/ML)-based software as a medical device (SaMD) action plan. Silver Spring: FDA. 2021. Available online: https://www.fda.gov/media/145022/download.

121. European Parliament and Council. Regulation (EU) 2024/1689 laying down harmonised rules on artificial intelligence (Artificial Intelligence Act). Off J Eur Union. 2024.

122. World Health Organization. Ethics and governance of artificial intelligence for health: WHO guidance; WHO: Geneva, 2021; Available online: https://www.who.int/publications/i/item/9789240029200. ISBN 978-92-4-002324-6.

| Failure Mode | Level 1 | Level 2 | Level 3 | Level 4 | Clinical Example | Key References |
|---|---|---|---|---|---|---|
| Reasoning | Yes | Yes | Yes | Yes | Agent correctly identifies elevated inflammatory markers but incorrectly rules out sepsis | [91,92,93] |
| Hallucination | Yes | Yes | Yes | Yes | Agent fabricates drug dosage that clinician acts on without verification | [44,84,94] |
| Tool Misuse | Yes | Yes | Yes | No | Agent queries wrong drug interaction database and retrieves inapplicable result | [95,96,97] |
| Memory | Yes | Yes | Yes | No | Agent loses earlier allergy documentation across long interaction horizon | [50,98,99] |
| Automation Bias | No | Yes | Yes | Yes | Experienced clinician accepts incorrect AI triage recommendation without independent verification | [59,60,100] |
| Adversarial | No | Yes | Yes | Yes | Prompt injection attack embedded in clinical note redirects agent to bypass safety guardrail | [101,102,103] |
| Equity and Bias | No | Yes | Yes | Yes | Agent trained on majority population data systematically underestimates pain scores for minority patients | [53,105,106,107] |
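The Yes/No matrix above can be queried more easily once it is transcribed into a small lookup structure. The sketch below does only that; the names `FAILURE_MODE_LEVELS` and `modes_at_level` are hypothetical, and the code treats Levels 1 to 4 as opaque labels because this excerpt does not define what each level means.

```python
# Transcription of the failure-mode table: the set of levels (1-4)
# marked "Yes" for each failure mode.
FAILURE_MODE_LEVELS = {
    "Reasoning": {1, 2, 3, 4},
    "Hallucination": {1, 2, 3, 4},
    "Tool Misuse": {1, 2, 3},
    "Memory": {1, 2, 3},
    "Automation Bias": {2, 3, 4},
    "Adversarial": {2, 3, 4},
    "Equity and Bias": {2, 3, 4},
}


def modes_at_level(level: int) -> list[str]:
    """Return the failure modes the table marks as applicable at a level."""
    if level not in (1, 2, 3, 4):
        raise ValueError("the table only defines Levels 1 to 4")
    return [mode for mode, levels in FAILURE_MODE_LEVELS.items()
            if level in levels]


for level in range(1, 5):
    print(level, modes_at_level(level))
```

Read this way, the table marks all seven modes as applicable at Levels 2 and 3, four at Level 1, and five at Level 4.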

| Mitigation Strategy | Failure Modes Addressed | Evidence Strength | Key Limitations | Key References |
|---|---|---|---|---|
| Retrieval Augmented Generation | Hallucination, Reasoning, Tool Misuse (partial) | Moderate to Strong | Does not eliminate contextual or numerical hallucination; introduces retrieval failure pathways | [87,112,113] |
| Human in the Loop Design | All seven failure modes | Strong | Negates efficiency benefits if overused; automation bias reduces effectiveness of human checkpoints | [28,114,115] |
| Constitutional AI and RLHF | Hallucination, Reasoning, Equity and Bias | Moderate | Training time intervention only; does not address runtime distributional failures | [116,117] |
| Red Teaming and Adversarial Testing | Adversarial, Reasoning, Hallucination, Tool Misuse | Moderate | No standardised clinical protocol exists; point in time evaluation only | [119] |
| FDA Regulatory Framework | Reasoning, Hallucination, Equity and Bias (partial) | Weak to Moderate | Does not adequately address continuous learning or autonomous action | [120] |
| EU AI Act | All high risk AI failure modes | Moderate | Implementation timeline ongoing; technical compliance requirements still being defined | [121] |
| WHO AI Ethics Guidance | Equity and Bias, Reasoning, Automation Bias | Weak | Non binding; insufficient operational detail for clinical agentic system compliance | [122] |
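One way to read the mitigation table is to invert it and ask which mitigations address each failure mode. The sketch below does this under two simplifying assumptions that are not in the source: entries marked "(partial)" are counted as coverage, and the EU AI Act row is left out because "all high risk AI failure modes" does not name specific modes. The identifiers `MITIGATION_COVERAGE`, `mitigations_by_mode`, and `thinly_covered` are hypothetical.

```python
FAILURE_MODES = [
    "Reasoning", "Hallucination", "Tool Misuse", "Memory",
    "Automation Bias", "Adversarial", "Equity and Bias",
]

# Simplified transcription of the "Failure Modes Addressed" column.
# Assumptions: partial coverage counts as coverage; EU AI Act row omitted.
MITIGATION_COVERAGE = {
    "Retrieval Augmented Generation": {"Hallucination", "Reasoning", "Tool Misuse"},
    "Human in the Loop Design": set(FAILURE_MODES),
    "Constitutional AI and RLHF": {"Hallucination", "Reasoning", "Equity and Bias"},
    "Red Teaming and Adversarial Testing": {
        "Adversarial", "Reasoning", "Hallucination", "Tool Misuse",
    },
    "FDA Regulatory Framework": {"Reasoning", "Hallucination", "Equity and Bias"},
    "WHO AI Ethics Guidance": {"Equity and Bias", "Reasoning", "Automation Bias"},
}


def mitigations_by_mode() -> dict[str, list[str]]:
    """Invert the table: list the mitigations that address each failure mode."""
    return {
        mode: sorted(name for name, modes in MITIGATION_COVERAGE.items()
                     if mode in modes)
        for mode in FAILURE_MODES
    }


def thinly_covered(max_mitigations: int = 1) -> list[str]:
    """Failure modes addressed by at most `max_mitigations` strategies."""
    return [mode for mode, names in mitigations_by_mode().items()
            if len(names) <= max_mitigations]


print(thinly_covered())  # ['Memory']
```

Under this simplified reading, memory failures are addressed only by human in the loop design, the same strategy whose effectiveness the table notes is reduced by automation bias.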

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).