Submitted: 18 April 2026

Posted: 20 April 2026

Abstract

Keywords:

Introduction

Methodology Foundations and Motivation

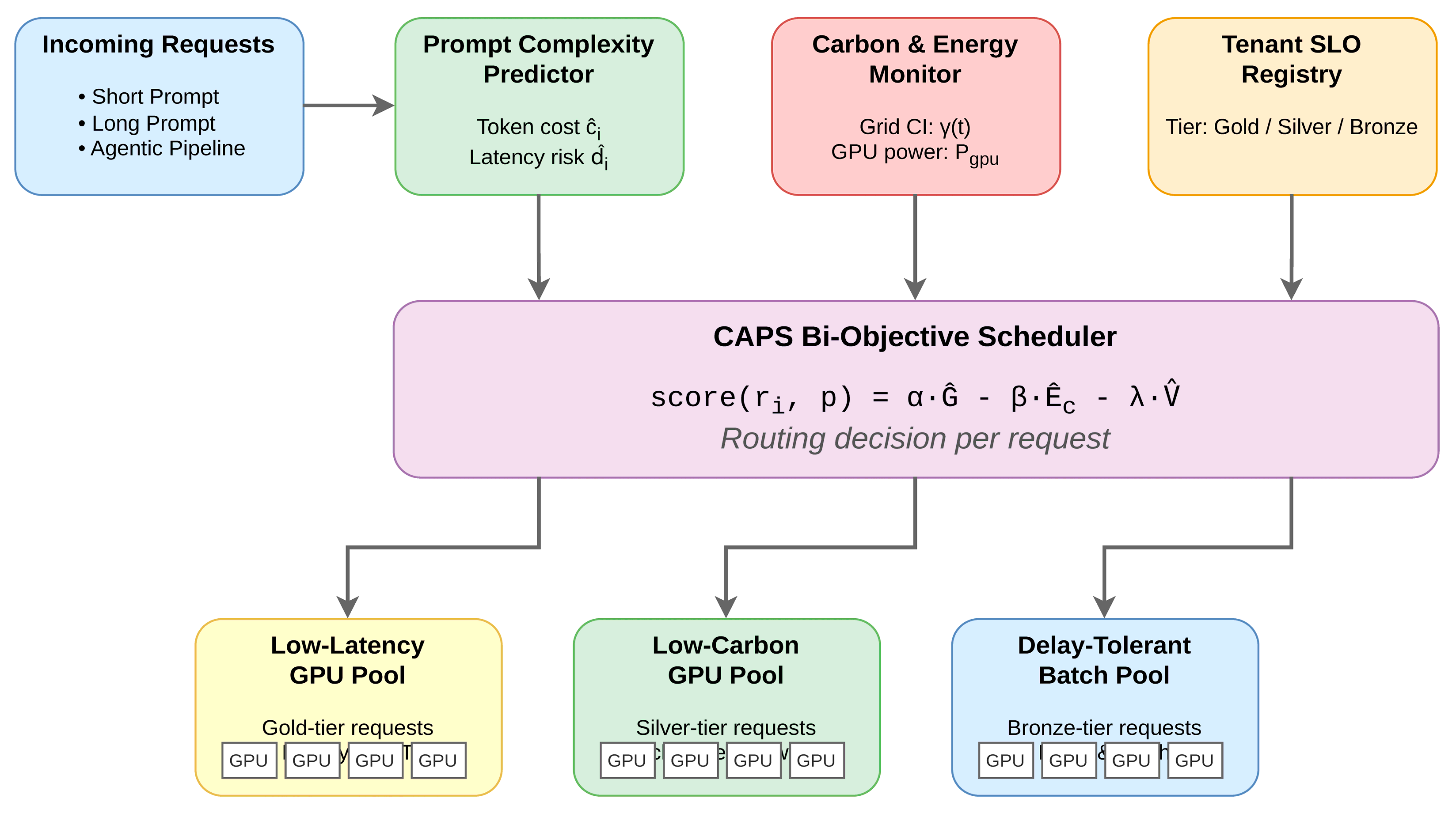

System Design

Prompt Complexity Predictor

Carbon and Energy Monitor

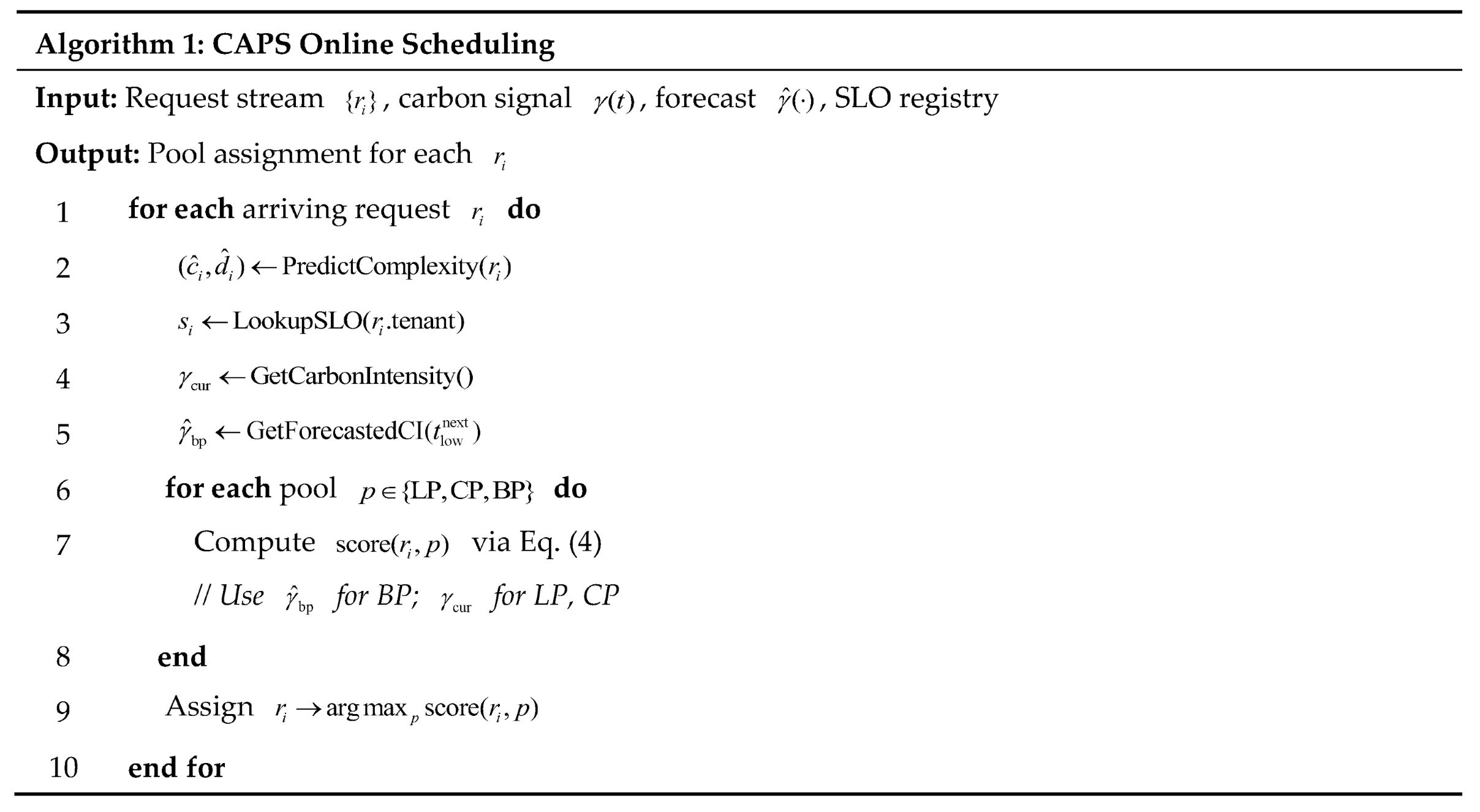

Bi-Objective Scheduler and Pool Routing

Experimental Evaluation

Setup

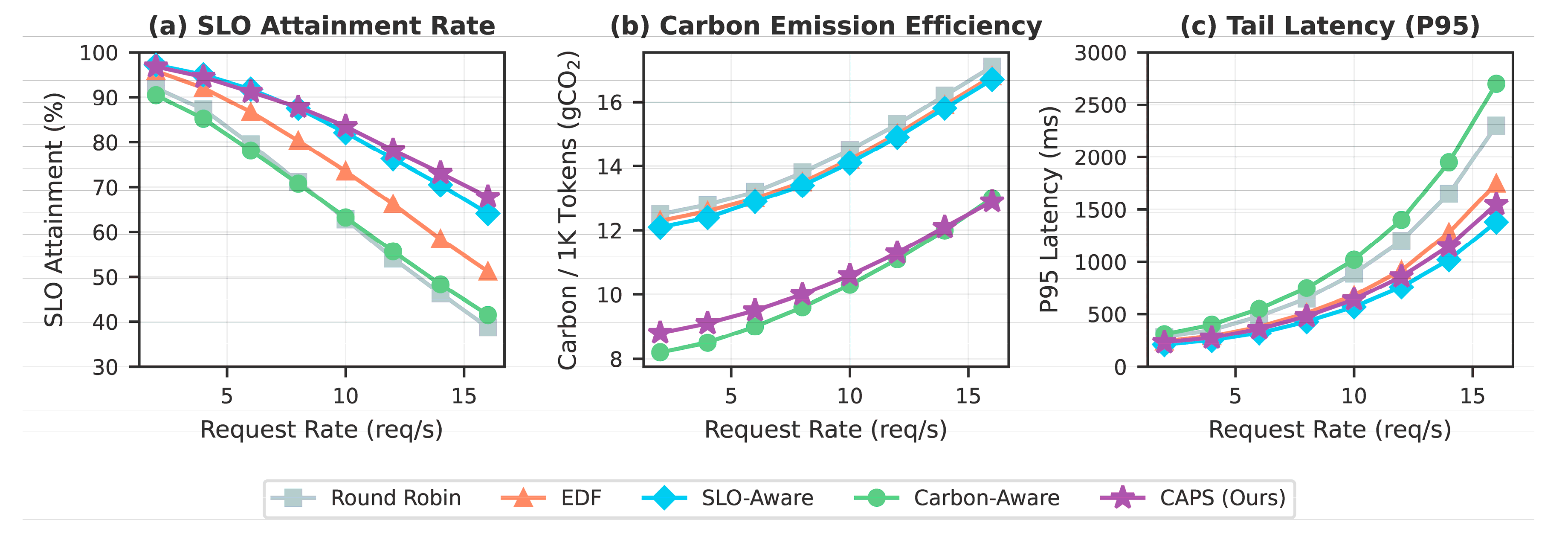

Main Results

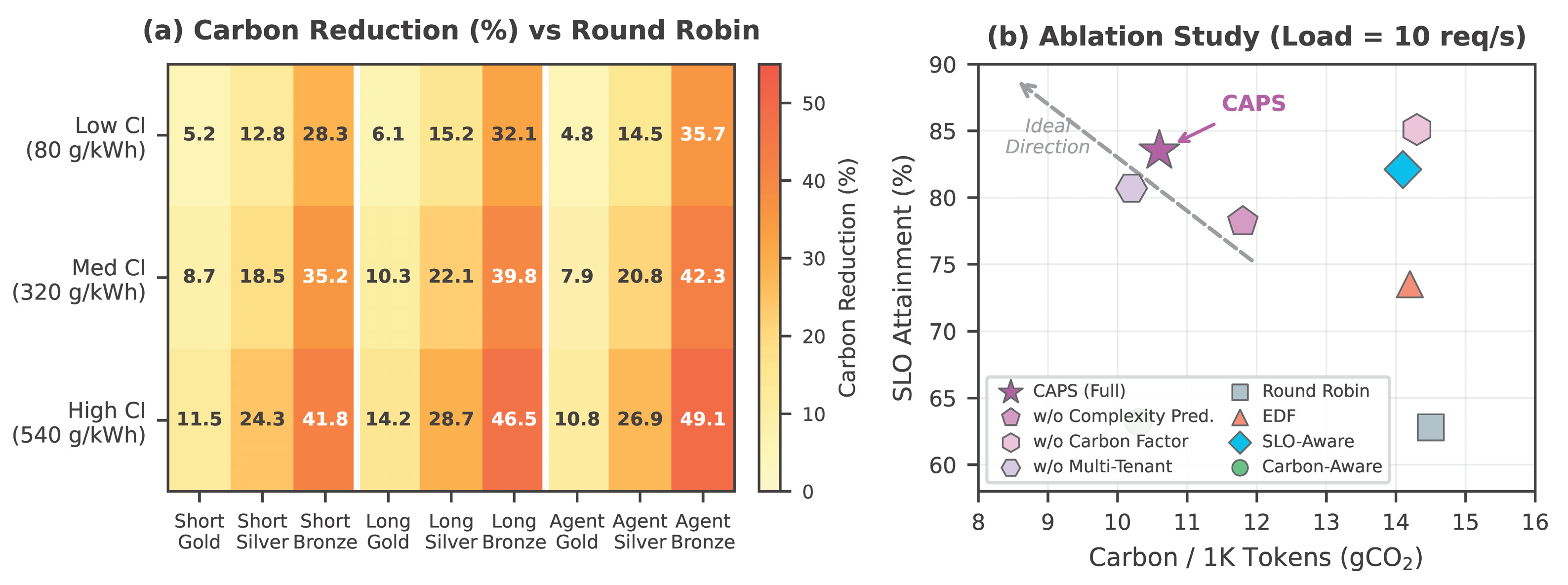

Impact of Carbon Intensity and Request Type

Ablation Study

Discussion and Limitations

Conclusions


| Method | SLO Attainment (%) ↑ | Carbon/1K ↓ | P95 Latency (ms) ↓ | Carbon/Goodput ↓ |
|---|---|---|---|---|
| Round Robin | 62.8 | 14.5 | 890 | 23.1 |
| EDF | 73.5 | 14.2 | 680 | 19.3 |
| SLO-Aware | 82.1 | 14.1 | 570 | 17.2 |
| Carbon-Aware | 63.2 | 10.3 | 1020 | 16.3 |
| CAPS | 83.5 | 10.6 | 640 | 12.7 |
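In every row, the Carbon/Goodput column matches Carbon/1K divided by the SLO attainment fraction to within rounding. For CAPS, 10.6 / 0.835 ≈ 12.69, which is reported as 12.7. This relationship is inferred from the table values and is not a definition stated in this excerpt, so treat it as an assumption. The short Python sketch below checks the relationship for every row and computes the percentage by which CAPS is lower than each baseline. The names `RESULTS`, `carbon_per_goodput`, `relative_reduction`, and `caps_vs_baselines` are hypothetical helpers, not part of the described system.

```python
# Sketch: sanity-check the results table and derive CAPS's relative reductions.
# ASSUMPTION (inferred from the table values, not stated in the text):
#   Carbon/Goodput = Carbon/1K / (SLO attainment / 100)

# (SLO attainment %, Carbon/1K, Carbon/Goodput) transcribed from the table.
RESULTS = {
    "Round Robin": (62.8, 14.5, 23.1),
    "EDF": (73.5, 14.2, 19.3),
    "SLO-Aware": (82.1, 14.1, 17.2),
    "Carbon-Aware": (63.2, 10.3, 16.3),
    "CAPS": (83.5, 10.6, 12.7),
}


def carbon_per_goodput(slo_pct: float, carbon_per_1k: float) -> float:
    """Hypothetical helper: spread carbon over only the requests that met their SLO."""
    return carbon_per_1k / (slo_pct / 100.0)


def relative_reduction(baseline: float, value: float) -> float:
    """Percent by which `value` is lower than `baseline` (negative means higher)."""
    return 100.0 * (baseline - value) / baseline


def caps_vs_baselines() -> dict:
    """CAPS reduction (%) in Carbon/1K and Carbon/Goodput versus each baseline."""
    _, caps_carbon, caps_cpg = RESULTS["CAPS"]
    return {
        name: (relative_reduction(carbon, caps_carbon), relative_reduction(cpg, caps_cpg))
        for name, (_, carbon, cpg) in RESULTS.items()
        if name != "CAPS"
    }


if __name__ == "__main__":
    for name, (slo, carbon, reported) in RESULTS.items():
        derived = carbon_per_goodput(slo, carbon)
        # Table values are rounded to one decimal, so compare with a small tolerance.
        status = "consistent" if abs(derived - reported) < 0.05 else "MISMATCH"
        print(f"{name:12s} derived={derived:6.2f} reported={reported:5.1f} {status}")
    for name, (d_carbon, d_cpg) in caps_vs_baselines().items():
        print(f"CAPS vs {name:12s} Carbon/1K {d_carbon:+5.1f}%  Carbon/Goodput {d_cpg:+5.1f}%")
```

Under this assumed definition, the table shows a trade-off. CAPS has about 2.9% higher Carbon/1K than Carbon-Aware, but its SLO attainment is much higher (83.5% vs 63.2%), which is why it has the lowest Carbon/Goodput. Against SLO-Aware, CAPS has roughly 24.8% lower Carbon/1K and 26.2% lower Carbon/Goodput, at the cost of a higher P95 latency (640 ms vs 570 ms).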

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.