Submitted: 19 April 2026

Posted: 21 April 2026


Abstract

Keywords:

1. Introduction

2. Self-Models

“I can run faster than you.”

- Domain: The functional area covered (e.g., kinematics).

- Conceptual Breadth (B): The range of features tracked (e.g., running and jumping performance).

- Conceptual Depth (D): The granularity of information (e.g., statistics on peak velocity or endurance).

- Reasoning Power (P): The performance of the inference engine operating on the model to predict the future (e.g., extrapolating performance to a future race).

3. Scoring Self-Models

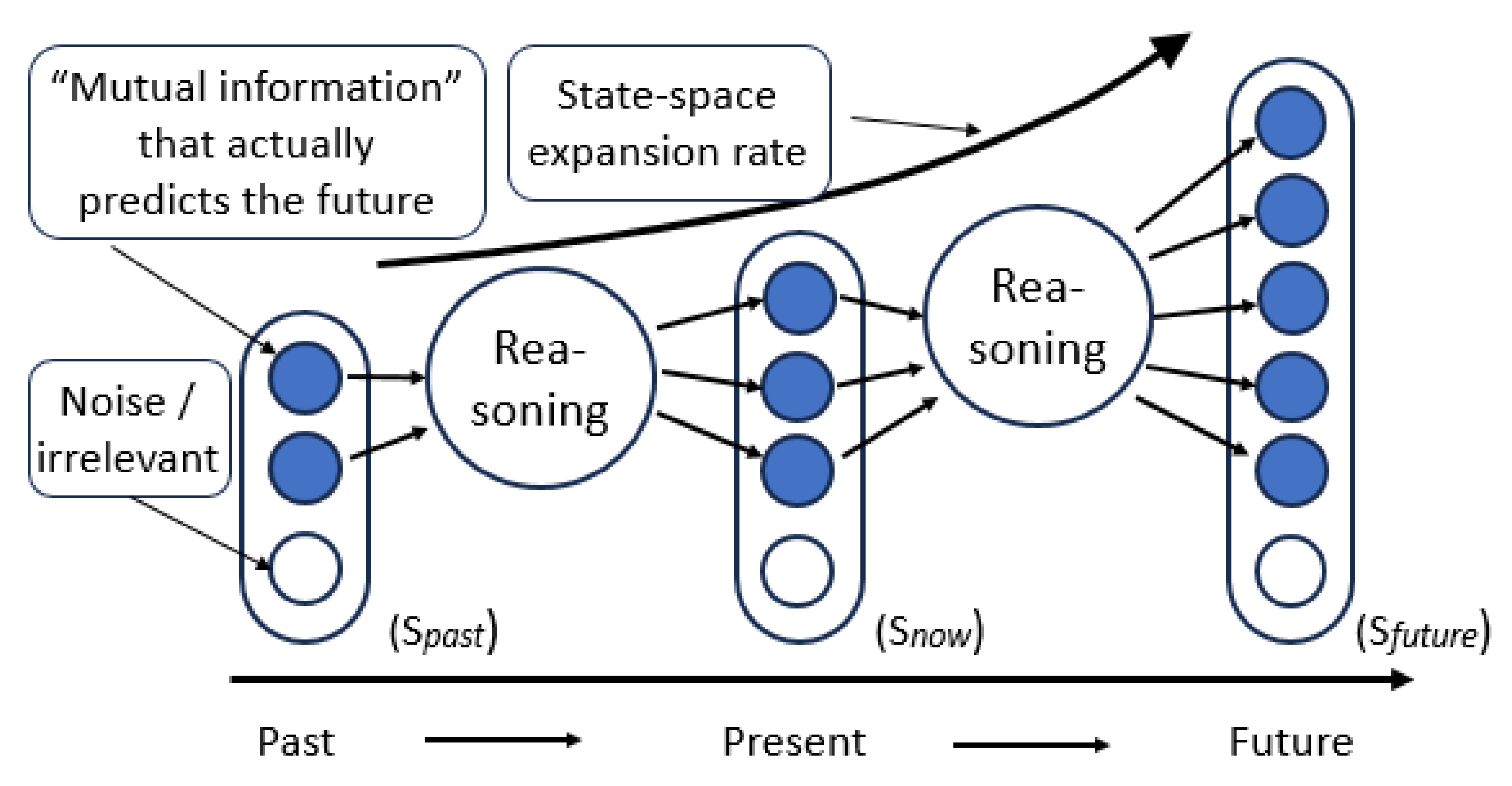

- $R$ (Representational Capacity) is the sum of mutual information between each independent self-model variable and the corresponding system state variable.

- $P$ (Reasoning Power) is measured as the state-space expansion rate per reasoning cycle, i.e., the rate at which distinguishable, reachable states grow through inference over time.

- $B$ (Breadth) is the effective dimensionality: the number of variables tracked above a noise threshold.

- $D$ (Depth) is the average mutual information per tracked variable: $D = R / B$.
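The three representational quantities above can be sketched in a few lines of Python. This is a minimal illustration, not the authors' implementation: the function name `score_representation`, the parameter `noise_threshold_bits`, and the example values are hypothetical, and the sketch assumes that $R$ is summed only over variables that clear the noise threshold, so that $D = R / B$ holds exactly.

```python
from dataclasses import dataclass


@dataclass
class RepresentationScore:
    breadth: int          # B: number of variables above the noise threshold
    capacity_bits: float  # R: summed mutual information of the tracked variables
    depth_bits: float     # D: average mutual information per tracked variable


def score_representation(mi_bits, noise_threshold_bits):
    """Derive B, R and D from per-variable mutual information estimates (in bits).

    Assumption: variables at or below the threshold are treated as untracked
    and contribute to neither B nor R.
    """
    tracked = [mi for mi in mi_bits if mi > noise_threshold_bits]
    breadth = len(tracked)
    capacity = sum(tracked)
    depth = capacity / breadth if breadth else 0.0
    return RepresentationScore(breadth, capacity, depth)


# Hypothetical example: three informative variables and one below the noise floor.
example = score_representation([14.0, 14.0, 0.01, 8.0], noise_threshold_bits=0.5)
```

In this example the near-zero variable is discarded, leaving three tracked variables that carry 36 bits in total, or 12 bits each on average.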

4. Combining Multiple Self-Models

5. Scoring the Waymo L4 Spatial/Kinematic Self-Model

- Kinematic Self-Model: 12 variables (position, velocity, acceleration, and angular rates).

- Actuator Self-Model: 8 variables (Steering torque, braking pressure, wheel rotational speeds).

- Task/Trajectory Self-Model: ~20 variables (Active path spline parameters, distance-to-lane-center, time-to-arrival, collision buffers).

- Initial State: 560 bits (~40 variables at ~14 bits each).

- Horizon: The system simulates thousands of branched futures based on candidate actions and actor interactions.

- Expansion Factor: If the inference engine generates a probability distribution over distinguishable and reachable future states, the expansion rate is the bit-growth of this set.
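One possible reading of "bit-growth" is the increase in Shannon entropy of the distribution over reachable future states, averaged per reasoning cycle. The sketch below follows that reading. It is an assumption made for illustration, not the scoring procedure used for the Waymo estimate, and the function names and the toy distributions are hypothetical.

```python
import math


def entropy_bits(probabilities):
    """Shannon entropy in bits of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


def expansion_bits_per_cycle(distributions):
    """Average entropy growth per reasoning cycle.

    `distributions` holds one probability list per cycle, starting with the
    initial state (assumed interpretation of the expansion rate).
    """
    if len(distributions) < 2:
        raise ValueError("need the initial distribution and at least one cycle")
    growth = entropy_bits(distributions[-1]) - entropy_bits(distributions[0])
    return growth / (len(distributions) - 1)


# Toy example: one known state, then 4 and 16 equally likely futures.
toy_rollout = [[1.0], [0.25] * 4, [1 / 16] * 16]
toy_rate = expansion_bits_per_cycle(toy_rollout)
```

In the toy rollout the entropy grows from 0 to 4 bits over two cycles, giving 2 bits per cycle.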

6. Self-Model Evaluation Comparison Table

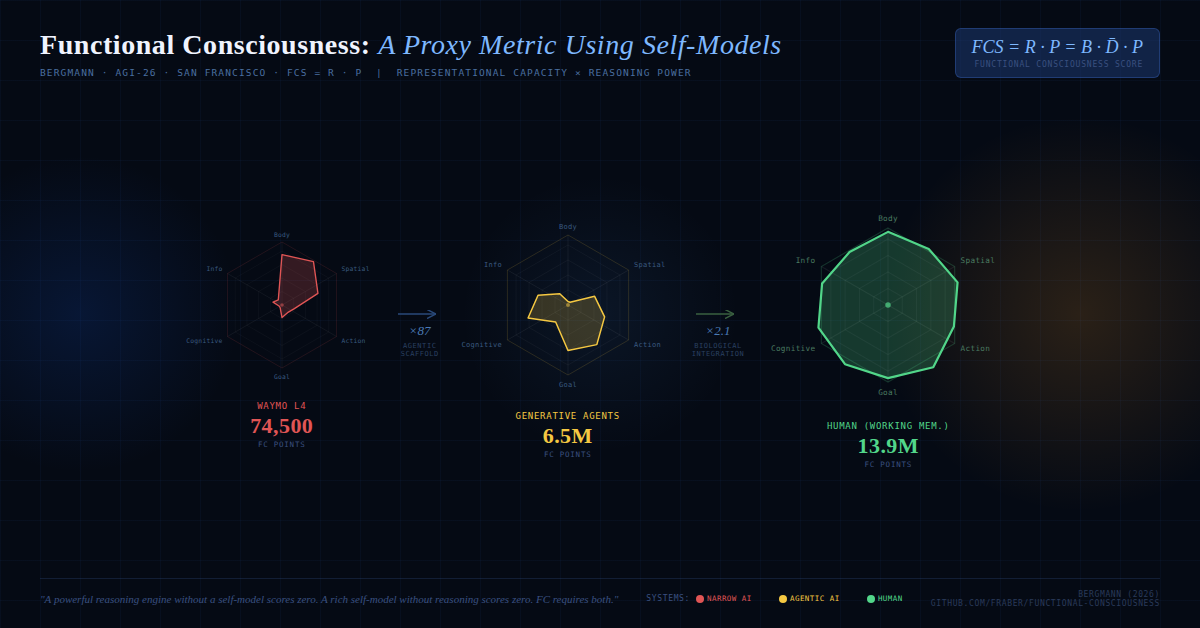

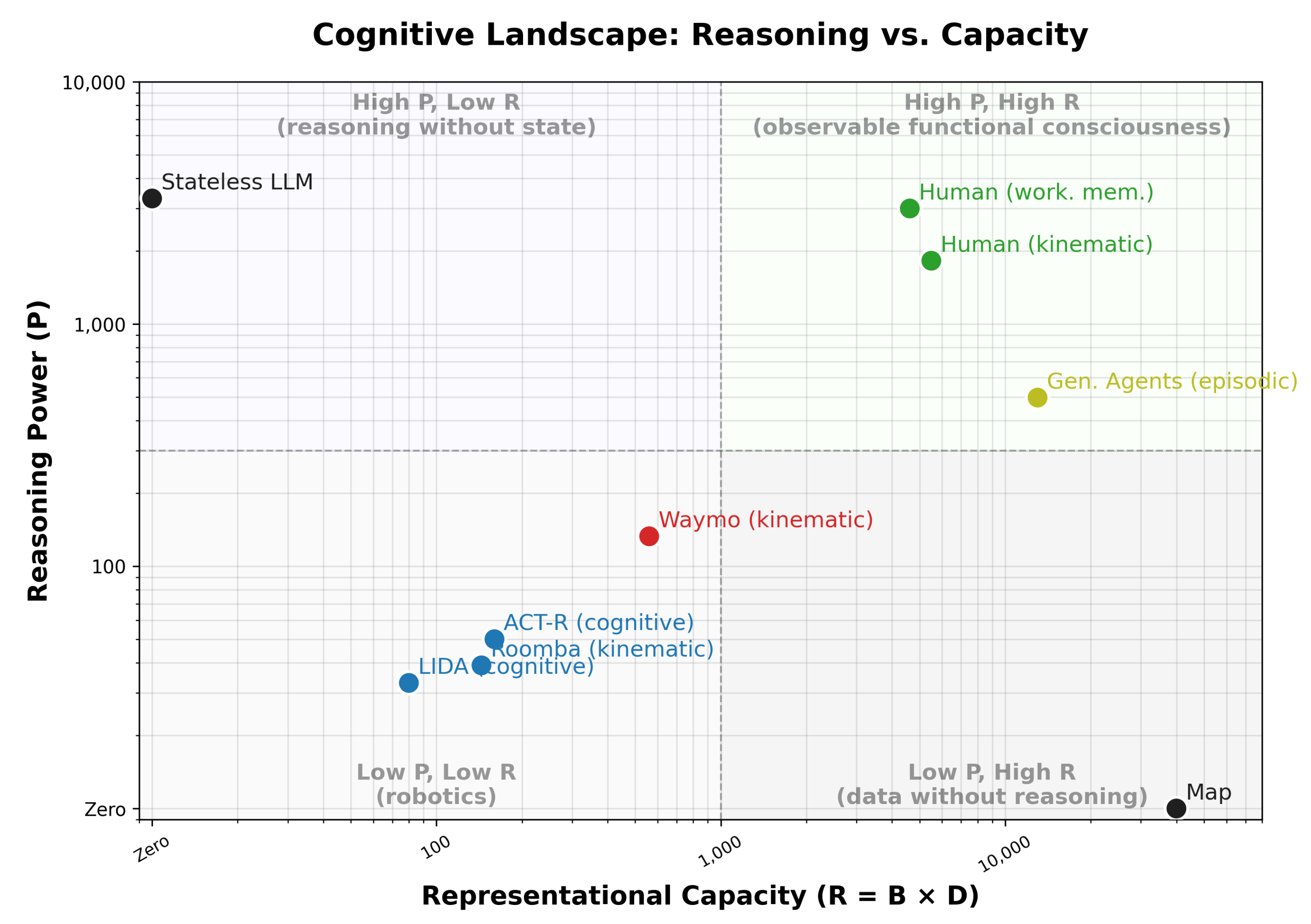

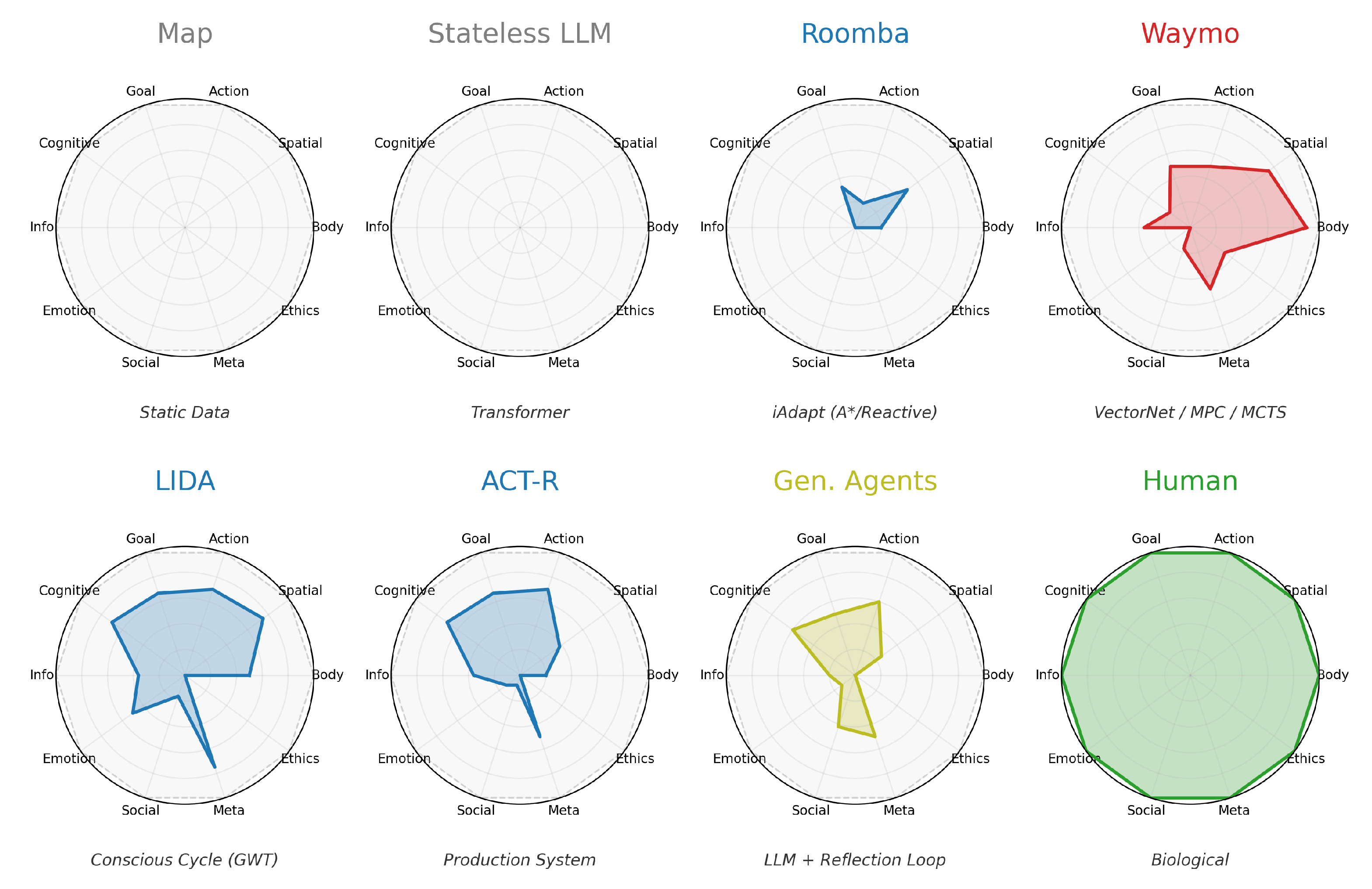

- Map: A map holds very rich spatial data (high $R$) but has no reasoning power at all ($P = 0$). For the evaluation we chose an average municipal city map with ~1k geometric shapes of ~40 bits each.

- Stateless LLM: Large language models (LLMs) act as powerful reasoning engines with performance similar to humans. We estimate $P$ by applying Bialek scaling to the ~1,000 effectively attended tokens across ~100 Transformer layers. However, without persistent state, LLMs score zero in $R$ and therefore zero overall. This result is deliberate: FC measures self-modeling capacity, not raw intelligence. Each LLM forward pass is a fresh function evaluation with no access to prior inferences or internal state history. The dramatic leap to ~6.5M for Generative Agents (below) confirms that FC correctly identifies the agentic scaffold (memory, reflection, and persistent state), not the base model, as the locus of functional consciousness.

- LIDA Cognitive Architecture [12]: LIDA models the conscious cycle of Global Workspace Theory [2]. While it possesses numerous self-models, its representations are shallow ($D \approx 4$ bits per variable) and its symbolic reasoning is limited to simple activation arithmetic ($P \approx 33$). Due to this combination, LIDA scores below the Roomba on its best single self-model. This aligns with its design as a theoretical showcase rather than a high-performance inference engine.

- Roomba with SLAM: These robots possess a basic spatial self-model but have limited reasoning power (P) [11].

- ACT-R: The ACT-R cognitive architecture models reasoning through a tightly constrained central bottleneck of buffers and production rules. We evaluate its declarative memory and active buffer system (the cognitive domain). Due to its utility-learning production selection, its reasoning power ($P \approx 50$) out-scales LIDA’s activation arithmetic, though its tight working memory bottleneck limits its overall capacity ($B \approx 20$ variables at $D \approx 8$ bits each).

- Waymo L4: The Waymo system possesses sophisticated spatial self-models with integrated uncertainty, health monitoring, and trajectory simulation. It exhibits cross-domain reasoning (e.g., correlating sensor reliability with trajectory planning).

- Generative Agents: Stanford’s “Smallville” agents [19] use an LLM with memory streams, reflection, and social interaction. They possess rich episodic and social self-models but lack embodiment. We score the episodic self-model, which includes the memory stream, reflection nodes, and retrieval system. Note that its P score is lower than that of the stateless LLM because the agent architecture acts as a bottleneck.

- Human (kinematic): We score the narrowly defined kinematic self-model, which the literature estimates to have ~550 state variables (joint angles, actuator feedback, vestibular data). The reasoning power ($P \approx 1{,}826$) reflects the cerebellum’s role as a predictive forward model for motor planning.

- Human (working mem.): Quantifying the human episodic/cognitive self-model requires strict boundary assumptions to remain tractable. Rather than scoring the entire lifetime store of autobiographical memory, we analyze the active working set engaged during a single episode of reflective reasoning. Drawing on cognitive science estimates of working memory and narrative reconstruction [10], we estimate ~330 actively maintained variables (perceptual features, social actors, causal links), with ~14 bits each reflecting rich multi-modal content. The resulting reasoning power ($P \approx 3{,}000$) reflects the massive parallelism of biological associative expansion and is comparable to that of a transformer LLM ($P \approx 3{,}300$). This aligns with the empirical observation that LLMs approach human-level reasoning depth.

| System | B (v) | D (bits/v) | P | FCS |
|---|---:|---:|---:|---:|
| Map | ~1k | ~40 | 0 | 0 |
| Stateless LLM | 0 | 0 | ~3,300 | 0 |
| LIDA (cognitive) | ~20 | ~4 | ~33 | ~2.6k |
| Roomba (kinematic) | ~18 | ~8 | ~39 | ~5.6k |
| ACT-R (cognitive) | ~20 | ~8 | ~50 | ~8.0k |
| Waymo (kinematic) | ~40 | ~14 | ~133 | ~74.5k |
| Gen. Agents (episodic) | ~130 | ~100 | ~497 | ~6.5M |
| Human (kinematic) | ~550 | ~10 | ~1,826 | ~10M |
| Human (working mem.) | ~330 | ~14 | ~3,000 | ~13.9M |
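The FCS column can be recomputed from the other three columns. The sketch below assumes that the score is the plain product $B \cdot D \cdot P$, which reproduces every row of the table to the stated precision. The names `TABLE`, `fcs`, and `SCORES` are illustrative and not taken from the paper's repository.

```python
# (B, D, P) per system, copied from the comparison table (all values approximate).
TABLE = {
    "Map": (1_000, 40, 0),
    "Stateless LLM": (0, 0, 3_300),
    "LIDA (cognitive)": (20, 4, 33),
    "Roomba (kinematic)": (18, 8, 39),
    "ACT-R (cognitive)": (20, 8, 50),
    "Waymo (kinematic)": (40, 14, 133),
    "Gen. Agents (episodic)": (130, 100, 497),
    "Human (kinematic)": (550, 10, 1_826),
    "Human (working mem.)": (330, 14, 3_000),
}


def fcs(breadth, depth_bits, reasoning_power):
    """Assumed score: representational capacity (B * D) times reasoning power P."""
    return breadth * depth_bits * reasoning_power


SCORES = {name: fcs(*row) for name, row in TABLE.items()}

if __name__ == "__main__":
    for name, score in SCORES.items():
        print(f"{name:24s} {score:>12,d}")
```

Because the factors multiply, a zero in either capacity or reasoning power forces the whole score to zero, which is why the map and the stateless LLM both end up at 0.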

7. Functional Self-Model Analysis (FSMA)

“If an agent’s output about an internal state changes because that state changes, the agent must possess a functional model of that state.”

7.1. The VAT Intuition

- The origin of the item.

- The destination of the item.

- The category of the item (e.g., food vs. services).

1. The system possesses data representing the origin, destination, and category.

2. The system possesses a functional mapping over these inputs.
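The VAT intuition can be illustrated with a small black-box probe: vary one input at a time and record which changes alter the output. Everything in this sketch is hypothetical, including the `Item` type, the `vat_rate` rule, its made-up rates, and the region labels "A" and "B". It only demonstrates the inference pattern, not an actual tax rule or the paper's FSMA procedure.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Item:
    origin: str
    destination: str
    category: str


def vat_rate(item):
    """Hypothetical black box; the rule and the rates are invented for illustration."""
    if item.origin != item.destination:
        return 0.0  # made-up rule: cross-region sales are zero-rated
    return 0.05 if item.category == "food" else 0.20


def sensitive_fields(system, base, alternatives):
    """Return the input fields whose variation changes the system's output."""
    baseline = system(base)
    found = set()
    for field_name, values in alternatives.items():
        if any(system(replace(base, **{field_name: v})) != baseline for v in values):
            found.add(field_name)
    return found


base_item = Item(origin="A", destination="A", category="food")
probes = {"origin": ["B"], "destination": ["B"], "category": ["services"]}
modeled = sensitive_fields(vat_rate, base_item, probes)
```

Here all three fields are detected, so an observer would conclude that the system represents origin, destination, and category. Probing from a single baseline can miss dependencies: starting from a cross-region item, the category would appear irrelevant, so a fuller analysis would vary the baseline as well.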

- Data Domains: The specific areas represented (e.g., spatial, temporal).

- Structural Form: The architecture of the representation (e.g., 3D scene graph, directed acyclic graph, attention map).

- Operations: The functional capabilities enabled (e.g., simulation, prediction, counterfactual reflection).

“All the time I’m dressing up the figure of myself in my own mind, lovingly, stealthily…”

8. Empirical Evaluation of FSMA

8.1. The Self-Model Catalog

1. Bottom-Up Analysis: An inductive process extracting self-model candidates directly from the text, grouped by shared data requirements.

2. Top-Down Analysis: A deductive validation of existing theoretical frameworks (e.g., a specific cognitive architecture) against the models identified in the text.

8.2. The SBR-Catalog of Self-Models

- Body (5): body-3d, body-kinematic, body-sensor, body-actuator, body-energy.

- Spatial (4): spat-relative, spat-trajectory, spat-collision, spat-tool.

- Action/Planning (5): action-tree, action-perform, action-progress, action-plan, action-improv.

- Goal/Motivation (3): goal-tree, goal-reward, goal-conflict.

- Cognitive (5): episody, episody-narrative, episody-time, mem-avail, learn-rate.

- Informational/Knowledge (7): inf-know, inf-fresh, inf-creative, inf-consistency, inf-reasoning, inf-hypo, inf-confidence.

- Emotional/Affective (4): mood, mood-needs, mood-stress, mood-load.

- Social/Interaction (6): social-tom, social-role, social-comm-state, social-trust, social-influence, social-empathy.

- Meta/Reflexive (4): meta-attention, meta-self-awareness, meta-explain, meta-accuracy.

- Ethics/Safety (3): ethics, ethics-safety, ethics-drift.
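For benchmarking scripts, the catalog above can be held as a plain mapping from domain to model identifiers. The sketch below copies the identifiers verbatim from the list. The variable names `SBR_CATALOG` and `TOTAL_MODELS` are illustrative, and the structure is an assumption about how one might encode the catalog, not the format used in the authors' repository.

```python
# Domain -> self-model identifiers, copied verbatim from the catalog list.
SBR_CATALOG = {
    "Body": ["body-3d", "body-kinematic", "body-sensor", "body-actuator", "body-energy"],
    "Spatial": ["spat-relative", "spat-trajectory", "spat-collision", "spat-tool"],
    "Action/Planning": ["action-tree", "action-perform", "action-progress",
                        "action-plan", "action-improv"],
    "Goal/Motivation": ["goal-tree", "goal-reward", "goal-conflict"],
    "Cognitive": ["episody", "episody-narrative", "episody-time", "mem-avail", "learn-rate"],
    "Informational/Knowledge": ["inf-know", "inf-fresh", "inf-creative", "inf-consistency",
                                "inf-reasoning", "inf-hypo", "inf-confidence"],
    "Emotional/Affective": ["mood", "mood-needs", "mood-stress", "mood-load"],
    "Social/Interaction": ["social-tom", "social-role", "social-comm-state",
                           "social-trust", "social-influence", "social-empathy"],
    "Meta/Reflexive": ["meta-attention", "meta-self-awareness", "meta-explain",
                       "meta-accuracy"],
    "Ethics/Safety": ["ethics", "ethics-safety", "ethics-drift"],
}

# Sanity checks: ten domains, 46 models, and no identifier listed twice.
TOTAL_MODELS = sum(len(models) for models in SBR_CATALOG.values())
ALL_IDS = [model for models in SBR_CATALOG.values() for model in models]
assert len(SBR_CATALOG) == 10
assert TOTAL_MODELS == 46
assert len(set(ALL_IDS)) == len(ALL_IDS)
```

The per-domain counts in the list sum to 46 models across ten domains, and the checks at the end of the block guard against accidental omissions or duplicates when the catalog is edited.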

9. Benchmarking Agents with the SBR Self-Model Catalog

9.1. Methodological Boundary

9.2. Cognitive Shape

9.3. The Role of Global Reasoning (P)

10. Relation with Other Theories of Consciousness

10.1. Integrated Information Theory (IIT)

- denotes the reasoning power of the integrated system.

- denotes the j-th subsystem operating with only local, non-shared self-models.

10.2. Higher-Order Theories

10.3. Workspace and Predictive Theories

10.4. Summary Position

11. Conclusions

1. Self-models as units of analysis. Decomposing self-reflection into multiple, independently scorable self-models yields a practical taxonomy (46 models across ten domains) for comparing heterogeneous agents.

2. Metric clarity. The multiplicative structure enforces a discriminating boundary: a map with rich data but no reasoning ($P = 0$) and a stateless LLM with powerful reasoning but no persistent self-model ($R = 0$) both score zero, for opposite reasons. Functional consciousness requires both representation and inference.

3. Integration as exponential multiplier. When self-models are cross-linked, reasoning power grows exponentially. This exponential growth explains the qualitative gap between narrow AI and biological consciousness as a difference in integration density, not in kind.

4. Theoretical convergence. FC captures measurable aspects of IIT (integration via shared self-models), higher-order theories (self-models as meta-representations), Global Workspace Theory (availability through attention), and Predictive Processing (state-space expansion as prediction). It identifies a functional substrate that these theories treat as necessary for conscious cognition, and makes it quantifiable.

5. Black-box benchmarking. FSMA provides a systematic, abductive method for inferring self-models from behavioral output, extending FC scoring to systems whose internals are unknown.

11.1. Outlook for AGI

References

1. Aaronson, S. Why I Am Not An Integrated Information Theorist (or, The Unconscious Expander). Blog post. 2014. Available online: scottaaronson.com.

2. Baars, B. J. A Cognitive Theory of Consciousness; Cambridge University Press, 1988.

3. Bergmann, F.; et al. Workshop on Self-Reference and Self-Models for Cognitive Architectures; AGI-2015, 2015; Available online: http://www.fraber.de/university/self_models/.

4. Bergmann, F. GitHub repository. 2026. Available online: https://github.com/fraber/functional-consciousness.

5. Bergmann, F.; Fenton, B. Scene Based Reasoning. In Artificial General Intelligence; Springer, 2015; pp. 23–33.

6. Bialek, W.; Nemenman, I.; Tishby, N. Predictive Information, Memory, and Complexity. Physical Review E 2001, 63(5).

7. Block, N. On a confusion about a function of consciousness. Behavioral and Brain Sciences 1995, 18(2), 227–247.

8. Chalmers, D. J. Facing up to the problem of consciousness. Journal of Consciousness Studies 1995, 2(3), 200–219.

9. Codd, E. F. A Relational Model of Data for Large Shared Data Banks. Communications of the ACM 1970, 13(6).

10. Cowan, N. The magical number 4 in short-term memory: A reconsideration of mental storage capacity. Behavioral and Brain Sciences 2001, 24(1), 87–114.

11. Durrant-Whyte, H.; Bailey, T. Simultaneous Localisation and Mapping. In IEEE Robotics & Automation Magazine; 2006.

12. Franklin, S.; et al. A LIDA cognitive model tutorial. In Biologically Inspired Cognitive Architectures; 2016; p. 16.

13. Graziano, M. S. A.; Webb, T. W. The attention schema theory. In Frontiers in Psychology; 2015.

14. Güzeldere, M. The many faces of consciousness. In The nature of consciousness; Block, N. J., et al., Eds.; 1997; pp. 1–67.

15. Hurlburt, R. T. Investigating Pristine Inner Experience; Cambridge University Press, 2011.

16. LeCun, Y. “A Path Towards Autonomous Machine Intelligence”, Version 0.9.2. openreview.net 2022.

17. Metzinger, T. Being No One: The Self-Model Theory of Subjectivity; MIT Press, 2003.

18. Metzinger, T. The Elephant and the Blind; MIT Press, 2024.

19. Park, J. S.; O’Brien, J. C.; Cai, C. J.; et al. Generative Agents: Interactive Simulacra of Human Behavior. In UIST 2023; 2023; Available online: https://arxiv.org/abs/2304.03442.

20. Premack, D.; Woodruff, G. Does the chimpanzee have a theory of mind? Behavioral and Brain Sciences 1978, 1(4), 515–526.

21. Putnam, H. The nature of mental states. In Mind, language and reality; Cambridge University Press, 1975; Vol. 2.

22. Rosenthal, D. M. Consciousness and Mind; Clarendon Press, 2005.

23. Schleiermacher, F. Hermeneutics and Criticism: And Other Writings; Cambridge University Press, 1998.

24. Tononi, G.; et al. Integrated information theory. In Nature Reviews Neuroscience; 2016; p. 17.

25. Waymo. Waymo’s Foundation Model. Waymo Technical Blog 2024.

26. Woolf, V. The Mark on the Wall. Monday or Tuesday: Eight Stories; Dover Publications, 1917.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).