1. Introduction

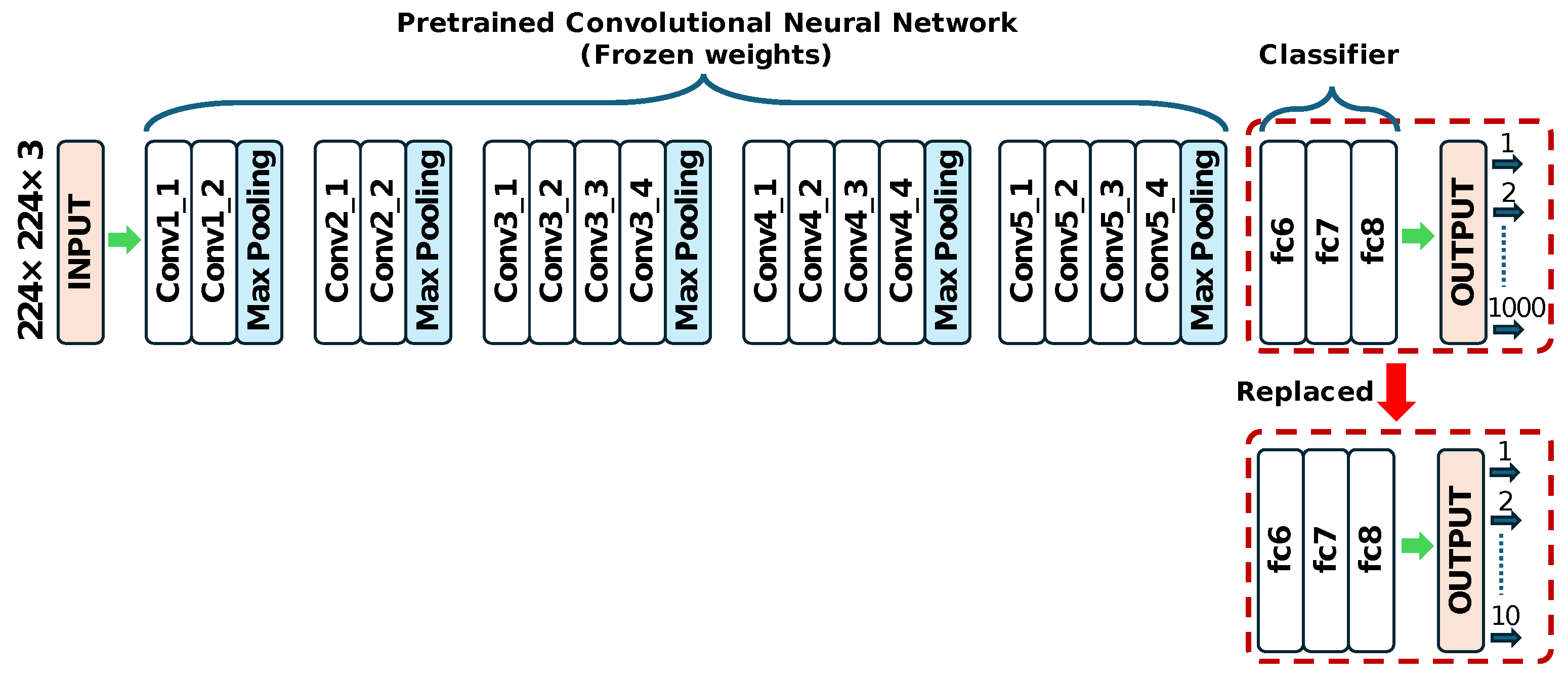

In production facilities for industrial products and materials, inspection processes are established to verify that finished products are free of defects. Although parts of these processes have been automated, they still rely heavily on visual inspection by skilled inspectors. In recent years, numerous attempts have been reported to apply deep learning technologies to product defect detection, such as convolutional neural networks (CNNs) specialized for image recognition and models that pair CNN feature extractors with support vector machines (SVMs). A highly active research field has emerged, with new techniques published continually and performance steadily improving [1, 2, 3].

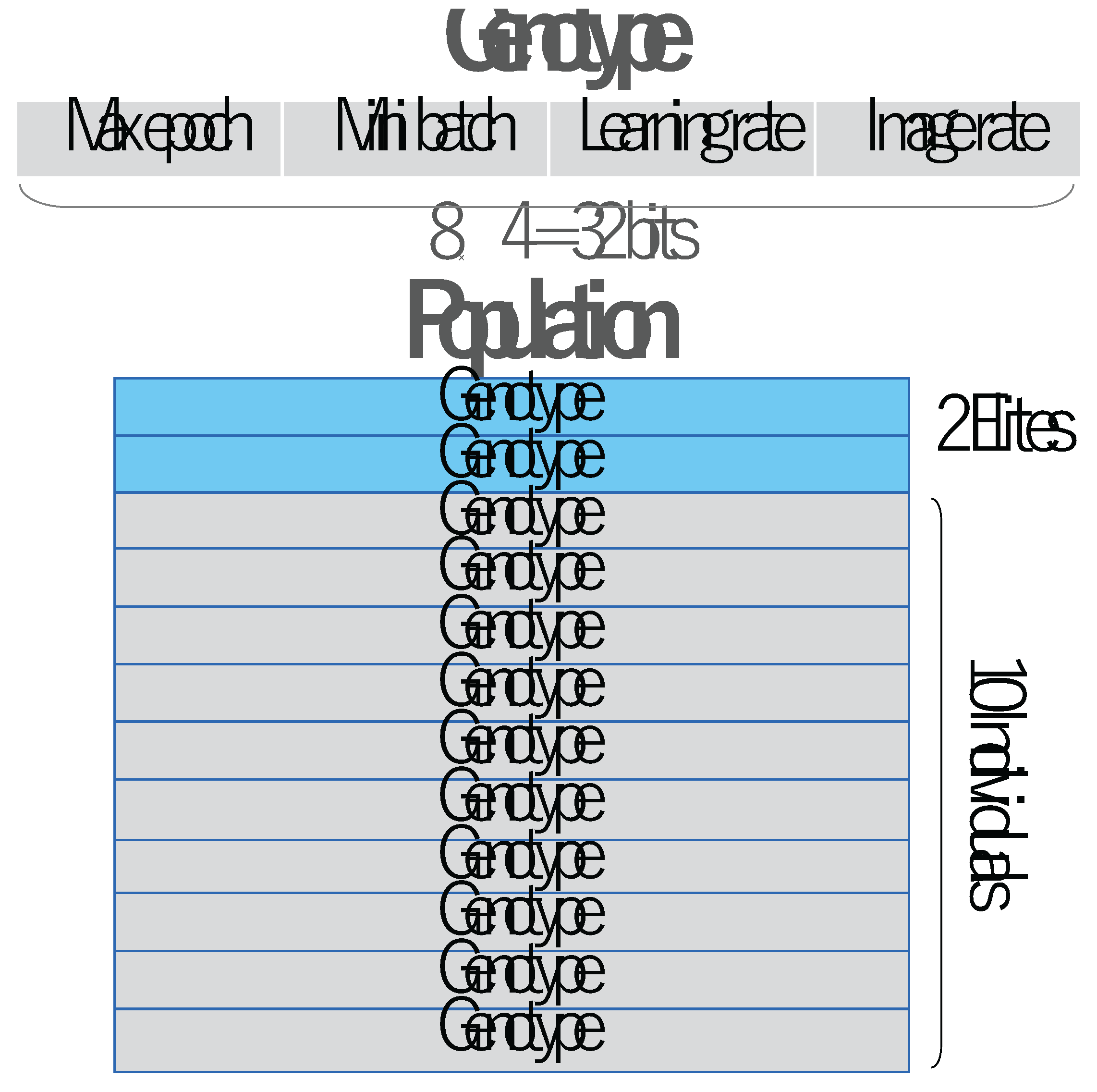

The first requirement when training a CNN model for defect detection is the collection of training images. The parameters that must be set for training include, for example, the maximum number of epochs, the mini-batch size, the learning rate, and the number of training images needed to achieve generalization. The MATLAB application currently under development in our laboratory [4, 5] allows these four items, among other parameters, to be adjusted when training various types of defect detection models. In current practice, however, operators choose the values by intuition and trial and error, which makes the task laborious.

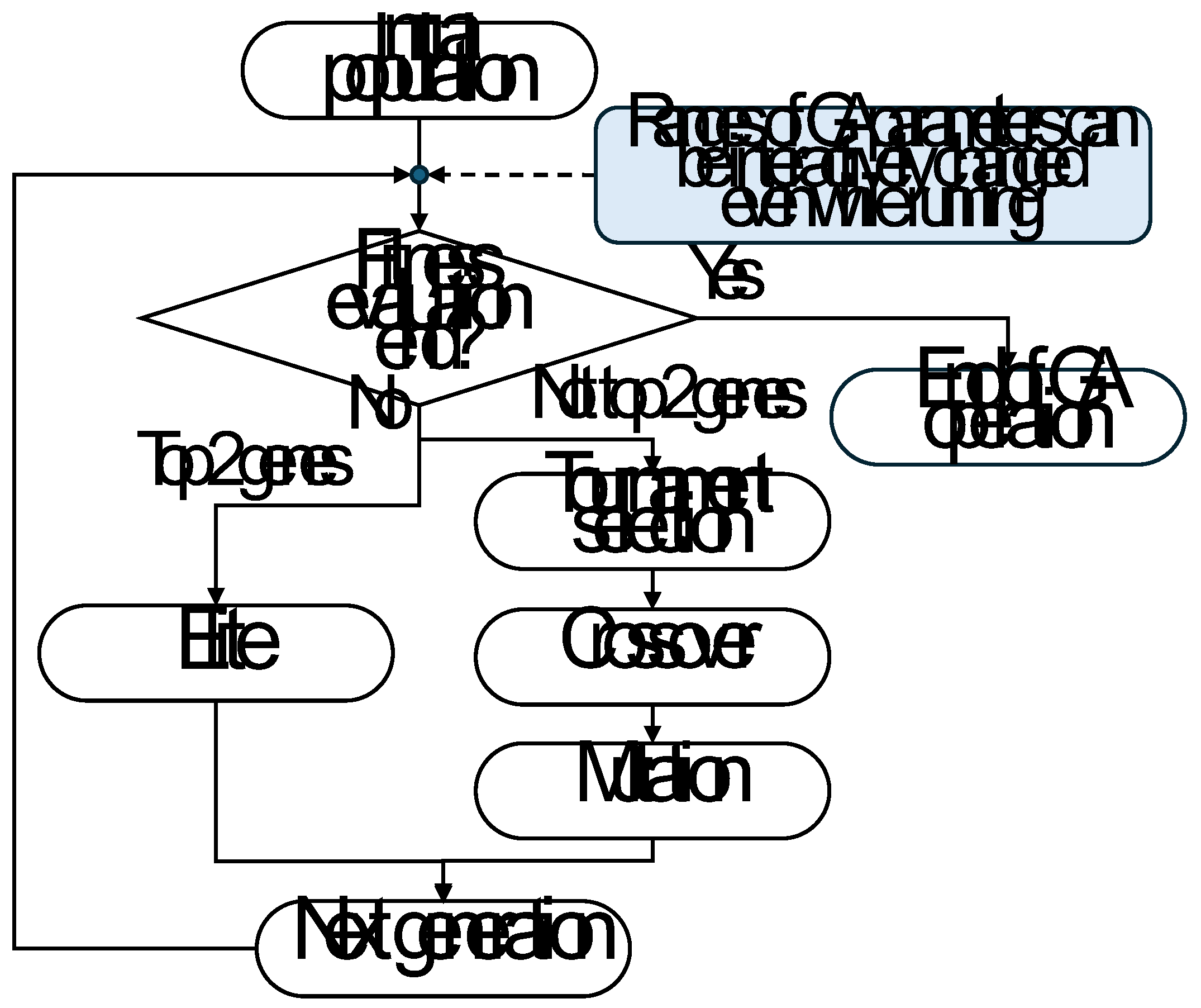

Genetic algorithms (GAs), introduced by Holland, simulate the process of natural evolution to find optimal or near-optimal solutions to complex problems [6]. By iteratively applying selection, crossover, and mutation operations, GAs evolve a population of candidate solutions toward better performance. GAs are widely used in optimization, scheduling, and machine learning tasks to which traditional gradient-based methods are difficult to apply.
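The selection, crossover, and mutation loop described above can be sketched as follows. This is a minimal illustration, not the system developed in this paper: the bit-string encoding, the binary tournament, single-point crossover, elitism, and all names and default rates (`run_ga`, `tournament`, `crossover_rate`, `mutation_rate`) are assumptions chosen for brevity.

```python
import random


def tournament(pop, scores, rng, k=2):
    """Selection: return the fittest of k randomly drawn individuals."""
    contenders = rng.sample(range(len(pop)), k)
    return pop[max(contenders, key=scores.__getitem__)]


def run_ga(fitness, n_genes, pop_size=20, generations=30,
           crossover_rate=0.9, mutation_rate=0.05, seed=0):
    """Toy generational GA over fixed-length bit strings (n_genes >= 2)."""
    rng = random.Random(seed)
    pop = [[rng.randint(0, 1) for _ in range(n_genes)]
           for _ in range(pop_size)]
    history = []  # best fitness observed in each generation
    for _ in range(generations):
        scores = [fitness(ind) for ind in pop]
        best = max(range(pop_size), key=scores.__getitem__)
        history.append(scores[best])
        # Elitism (an assumption here): keep the best individual unchanged.
        next_pop = [pop[best][:]]
        while len(next_pop) < pop_size:
            p1 = tournament(pop, scores, rng)
            p2 = tournament(pop, scores, rng)
            # Crossover: splice the parents at a single random cut point.
            if rng.random() < crossover_rate:
                cut = rng.randint(1, n_genes - 1)
                child = p1[:cut] + p2[cut:]
            else:
                child = p1[:]
            # Mutation: flip each bit with a small probability.
            child = [1 - g if rng.random() < mutation_rate else g
                     for g in child]
            next_pop.append(child)
        pop = next_pop
    scores = [fitness(ind) for ind in pop]
    best = max(range(pop_size), key=scores.__getitem__)
    history.append(scores[best])
    return pop[best], history


if __name__ == "__main__":
    # Toy objective: maximize the number of ones in the bit string.
    best, history = run_ga(sum, n_genes=16)
    print(best, history[-1])
```

Because the best individual is copied into the next generation unchanged, the best fitness recorded in `history` never decreases. In a hyper parameter setting, `fitness` would instead train and validate a model, which is far more expensive than this toy objective.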

As for the design of machine learning models as CNN using GAs, for example, Xie and Yuille proposed an idea of encoding method to represent each network structure in a fixed-length binary code, in which GAs were adopted to efficiently explore the large search space as network structure resulting in demonstrating its performance to find high-quality structures [

7]. Kim et al. proposed how to to use feature selection via evolutionary algorithms to remove the irrelevant deep features. It is reported that their proposed method could minimize the computational complexity and the amount of overfitting while maintaining a good quality of representation. It is demonstrated that the improvement of the filter representation by performing experiments on three data sets of CIFAR10, metal surface defects, and variation of MNIST and by analyzing the classification performance as well as the variance of the filter [

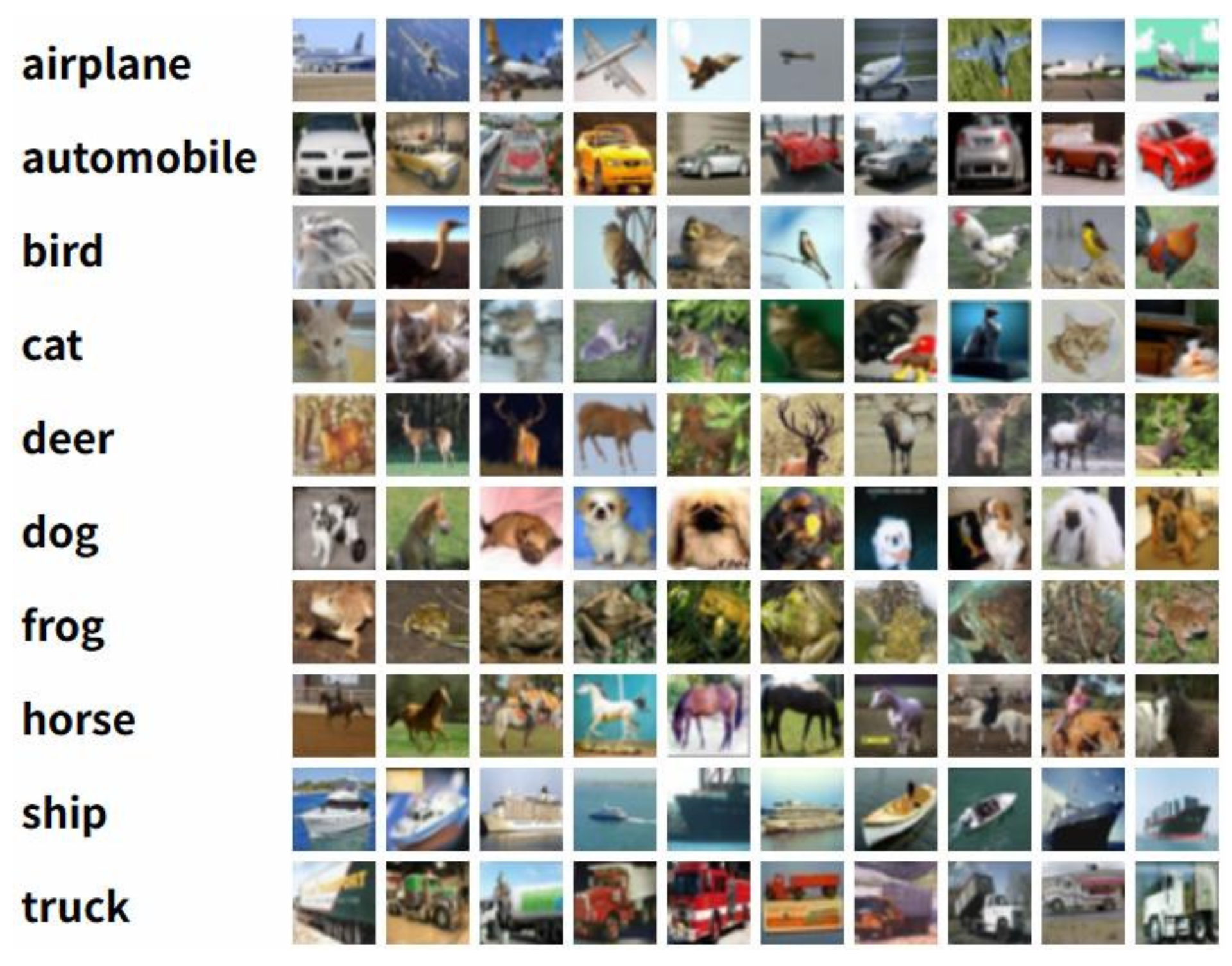

8].

Bakhshi et al. proposed a GA that efficiently explores a defined space of potentially suitable CNN architectures and simultaneously optimizes their hyper parameters for a given image classification task. The fast automatic optimization model, named fast-CNN, was employed to find competitive CNN architectures for image classification on the CIFAR10 dataset [9]. Sun et al. proposed an automatic CNN architecture design method based on genetic algorithms to deal efficiently with image classification tasks. A merit of the proposed algorithm is that users need no domain knowledge of CNNs, yet can still obtain a promising CNN architecture for the given images [10]. Lima Mendes et al. proposed gaCNN, a hybrid classification architecture consisting of a CNN and a GA [11]. gaCNN uses heterogeneous activation functions to classify images and optimizes its hyper parameters and activation functions automatically, regardless of the dataset analyzed. The results showed that gaCNN was able to identify a good architecture. Around the same time, Lee et al. showed the possibility of optimizing network architectures using a GA whose search space included both the network structure configuration and the hyper parameters. They verified the performance of their algorithm on an amyloid brain image dataset used for Alzheimer's disease diagnosis. Their algorithm is reported to have outperformed the Genetic CNN [7] by 11.73% on a given classification task [12]. Josiah et al. noted that GAs and machine learning have a long history of development and use in chemistry. Their review focuses on how GAs and machine learning have been used in conjunction with chemical simulation techniques to advance the understanding of surface chemistry, examining the history, recent work, and overall success of these applications [13]. Domashova et al. developed software that generates a neural network with the best parameters for solving classification problems. Their paper describes the process of population formation and the choice of the fitness function and parent selection method, and introduces modifications of the crossover and mutation operators so that the algorithm can operate on variable-sized individuals [14].

Ali et al. systematically evaluated the performance of a GA optimizer in tuning machine learning hyper parameters, comparing it with other common techniques such as grid search, random search, and Bayesian optimization. The GA is reported to have slightly outperformed the other methods in terms of optimality, owing to its ability to pick any continuous value within the search range [15]. Santoso et al. contributed to the automatic tuning of hyper parameters for training CNN models using genetic algorithms [16]. Their method was evaluated on the MNIST dataset. The experimental results showed that, with a genetic algorithm tuning the hyper parameters automatically, the validation accuracy was 97.02% and the training accuracy was 99.77%. Abdelaziz et al. employed CNNs, and CNNs combined with a GA, as intelligent models for energy consumption prediction. The GA was used to fine-tune some of the CNN parameter settings. The CNN with the GA is reported to have outperformed the plain CNN model in terms of accuracy and standard error metrics [17]. Rom et al. reviewed six prominent hyper parameter tuning methods: manual tuning, grid search, random search, Bayesian optimization (BO), particle swarm optimization (PSO), and GA [18]. Their comparative analysis highlighted the strengths, weaknesses, and appropriate application contexts of each method. However, there appears to have been almost no discussion of how to extract the minimum amount of training data necessary to achieve generalization performance.

Caparrini and Arroyo pointed out that traditional approaches such as manual tuning, grid search, and random search become inefficient when dealing with complex, high-dimensional search spaces. To cope with this limitation, they introduced an open-source package that implements GA-based hyper parameter optimization for machine learning models. The package is designed to be easy to use, highly customizable, and reproducible [19]. Yilmaz and Kuş employed a GA to optimize the activation function, padding, number of filters, kernel size, dropout rate, pooling size, and batch size. The optimized CNN was trained on brain magnetic resonance imaging (MRI) images of multiple sclerosis and validated on Alzheimer's MRI and COVID-19 chest X-ray datasets. The results are reported to show substantial improvements across all datasets [20].

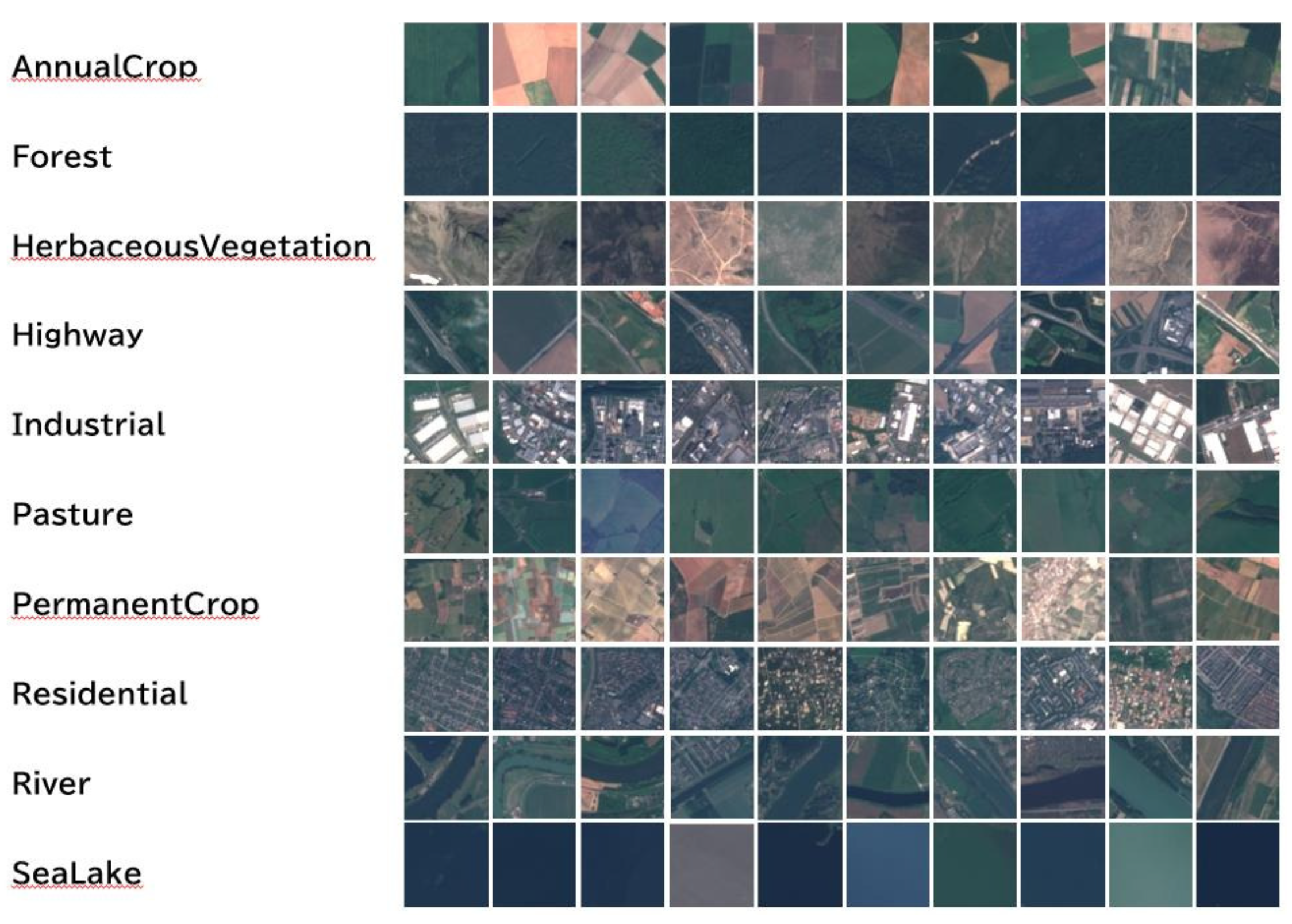

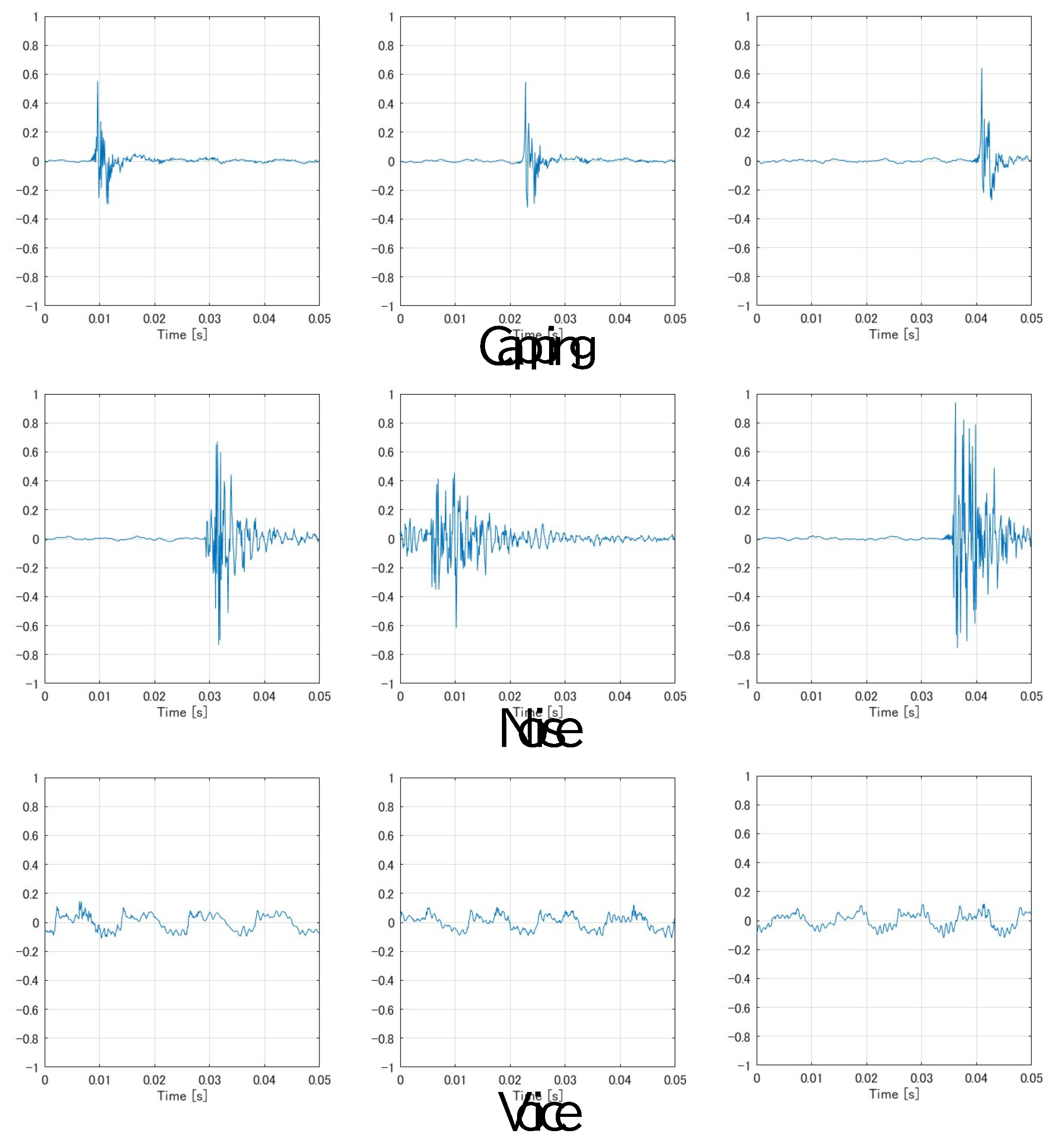

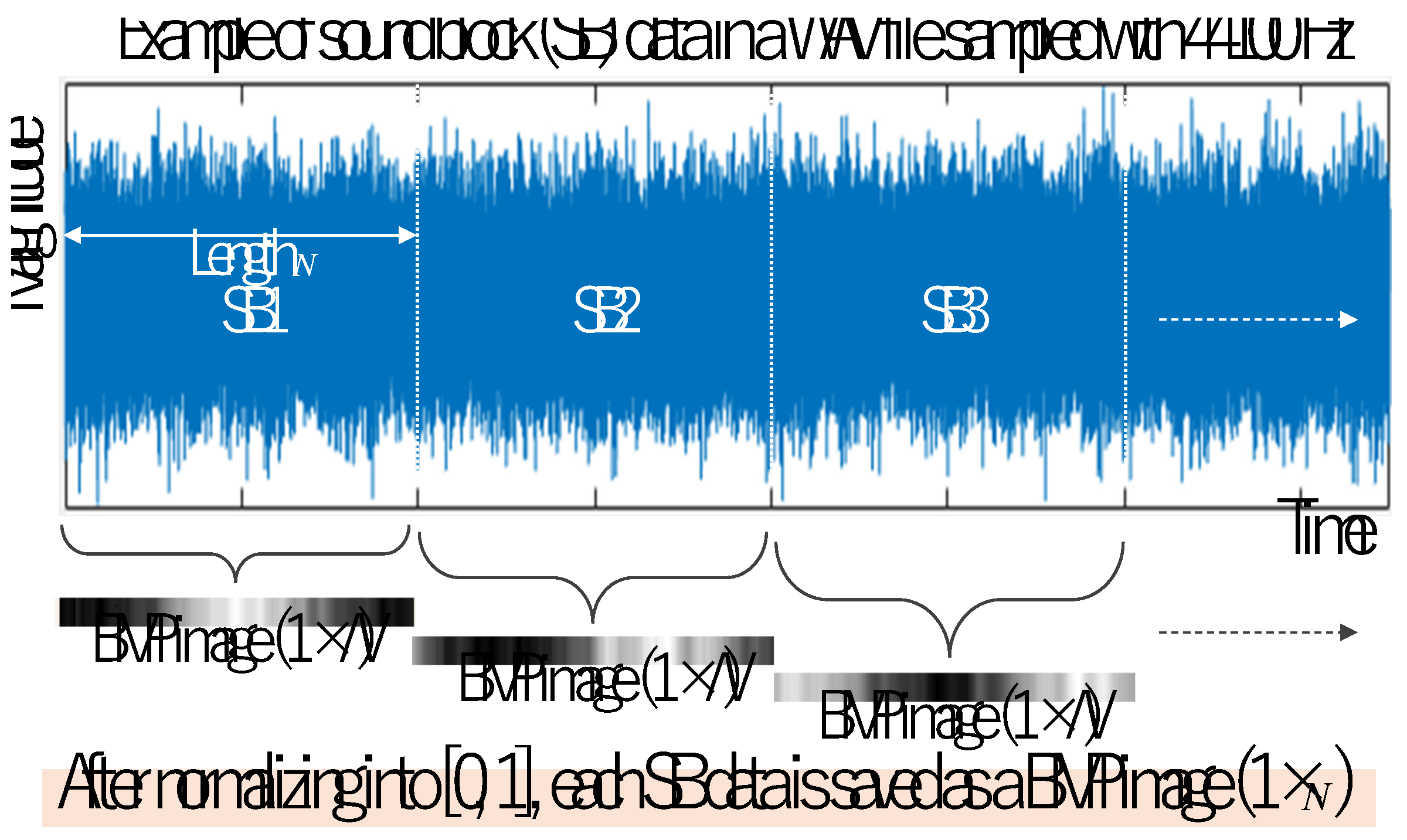

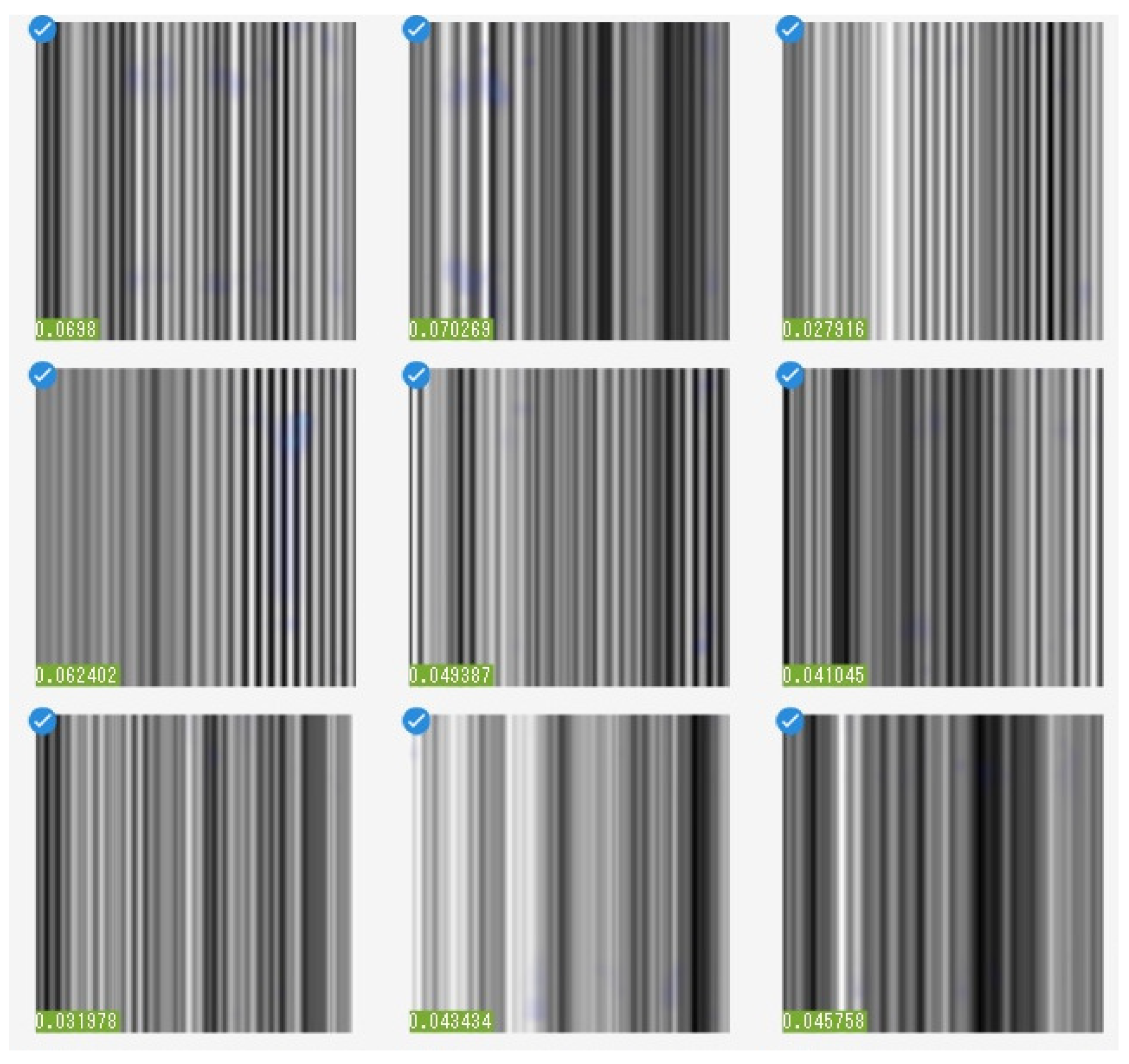

This paper aims to develop a system that employs interactive genetic algorithms [21, 22, 23] to adjust training parameters automatically, and thereby to propose guiding conditions that assist engineers and researchers in constructing desirable deep learning models such as CNNs. By interacting with the user through our MATLAB application, which provides interactive GA functionality, the system makes it possible to systematically build the user's desired CNN models, e.g., for defect detection of an industrial material or product, trained only on feature-rich images extracted from a large original dataset. The effectiveness and promise of the proposed system are shown through CNN training on four different kinds of datasets.

This work differs from existing GA-based CNN optimization approaches by explicitly incorporating image usage rate as a controllable parameter within the evolutionary search, enabling the identification of reduced training subsets that retain relevant features for maintaining generalization performance. Unlike conventional hyper parameter tuning, the proposed method considers both training parameters and data usage within a unified framework, providing a practical approach for reducing dataset size while preserving classification accuracy.
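To make the idea of treating image usage rate as a searchable parameter concrete, the following minimal sketch decodes a four-gene individual into the four training parameters named earlier and draws a reduced training subset. It is an illustration under stated assumptions, not the encoding used in our MATLAB application: the search ranges, the names `TrainingParams`, `decode`, and `sample_subset`, and the use of uniform random per-class sampling are all hypothetical.

```python
import math
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingParams:
    max_epochs: int
    mini_batch_size: int
    learning_rate: float
    image_usage_rate: float  # fraction of available training images


# Hypothetical search ranges, chosen only for illustration.
EPOCH_RANGE = (5, 50)
BATCH_CHOICES = (8, 16, 32, 64)
LOG10_LR_RANGE = (-5.0, -2.0)
USAGE_RANGE = (0.1, 1.0)


def decode(genes):
    """Map four genes, each in [0, 1], onto concrete training parameters."""
    g_epochs, g_batch, g_lr, g_usage = genes
    lo, hi = EPOCH_RANGE
    max_epochs = lo + round(g_epochs * (hi - lo))
    idx = min(int(g_batch * len(BATCH_CHOICES)), len(BATCH_CHOICES) - 1)
    lo, hi = LOG10_LR_RANGE
    learning_rate = 10.0 ** (lo + g_lr * (hi - lo))  # log-uniform scale
    lo, hi = USAGE_RANGE
    usage = lo + g_usage * (hi - lo)
    return TrainingParams(max_epochs, BATCH_CHOICES[idx], learning_rate, usage)


def sample_subset(paths_by_class, usage_rate, seed=0):
    """Draw the same fraction of images from every class (at least one).

    Uniform random sampling is an assumption made for this sketch.
    """
    rng = random.Random(seed)
    subset = {}
    for label, paths in paths_by_class.items():
        k = max(1, math.ceil(usage_rate * len(paths)))
        subset[label] = rng.sample(paths, k)
    return subset


if __name__ == "__main__":
    params = decode([0.5, 0.5, 0.5, 0.5])
    images = {"ok": [f"ok_{i}.png" for i in range(10)],
              "ng": [f"ng_{i}.png" for i in range(4)]}
    print(params, sample_subset(images, params.image_usage_rate))
```

Placing the usage rate in the same individual as the other training parameters lets a single fitness evaluation reflect both the parameter choice and the size of the training subset.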