Submitted:

21 April 2026

Posted:

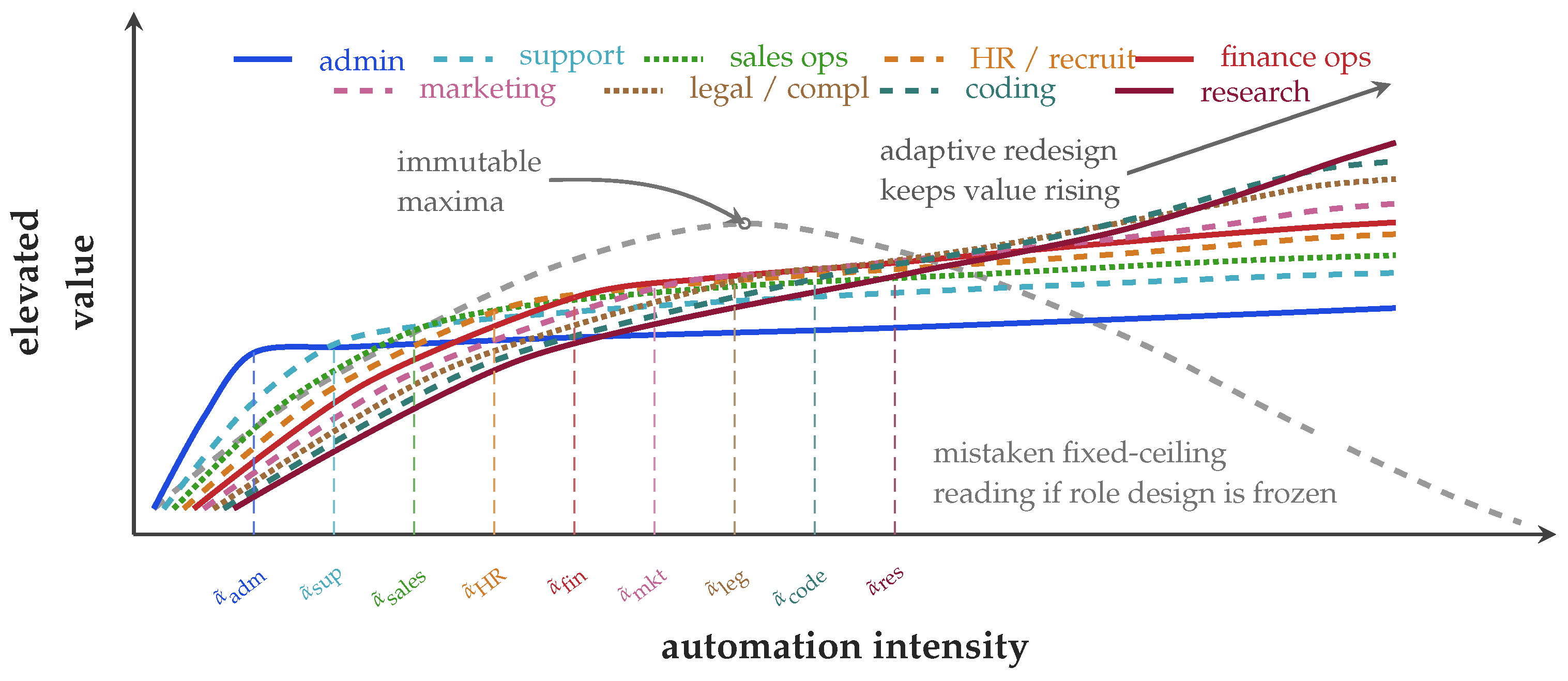

22 April 2026

Abstract

Keywords:

1. Introduction

2. The New Financing Logic: AI Is Increasingly Being Paid for Through Labor Reallocation

3. From Exposure to Elevation Space

3.1. Exposure indices identify vulnerable task bundles, not whole-job destiny

3.2. The overlooked layer is the elevated human layer

3.3. The frontier itself is endogenous

4. Local Ceilings, Moving Frontiers, and Design Capacity

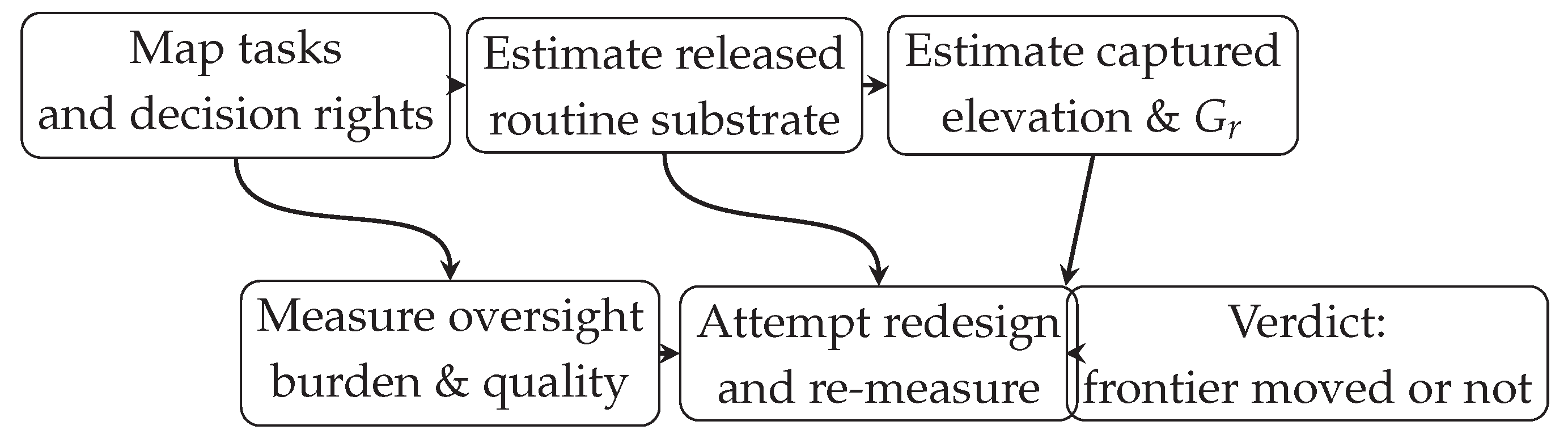

4.1. A workflow-level estimation protocol

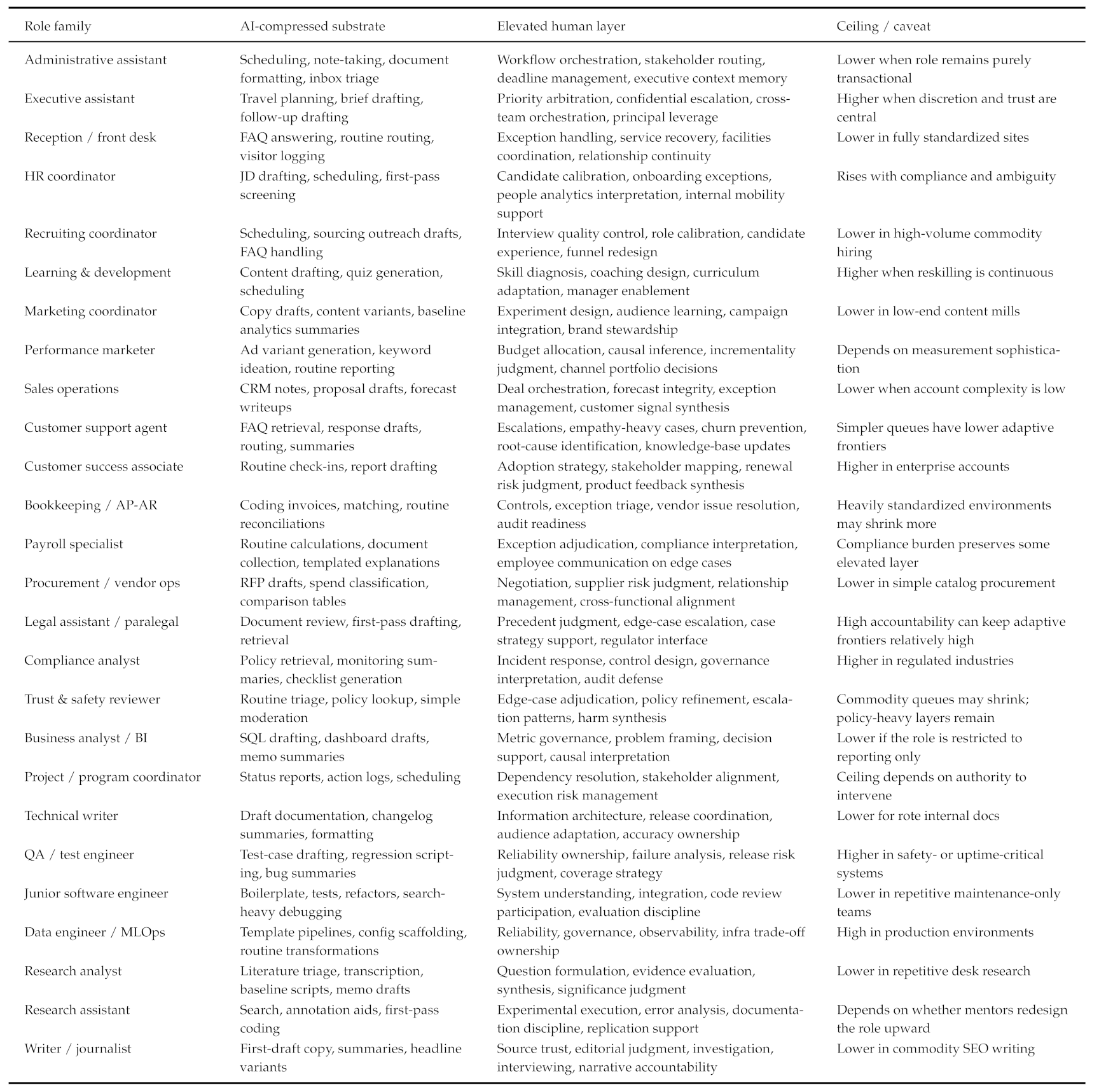

5. High-Risk Role Families and Worked Elevation Paths

6. The Elevate-First Rule

7. Alternative Views

View 1: What if adaptive frontiers are genuinely low?

View 2: Does role redesign concede first-mover advantage?

View 3: Doesn’t this simply delay the hollowing out of middle management?

View 4: Does this mandate the retention of low-value jobs?

View 5: Should AI-enabled flexible work be the default mode instead?

View 6: What if exposed workers cannot or do not want to elevate?

8. Broader Implications for AI Evaluation and Deployment

9. Operationalizing Workforce Elevation - A Research Agenda

10. Conclusion

Acknowledgments

Appendix A. Extended Role Catalog

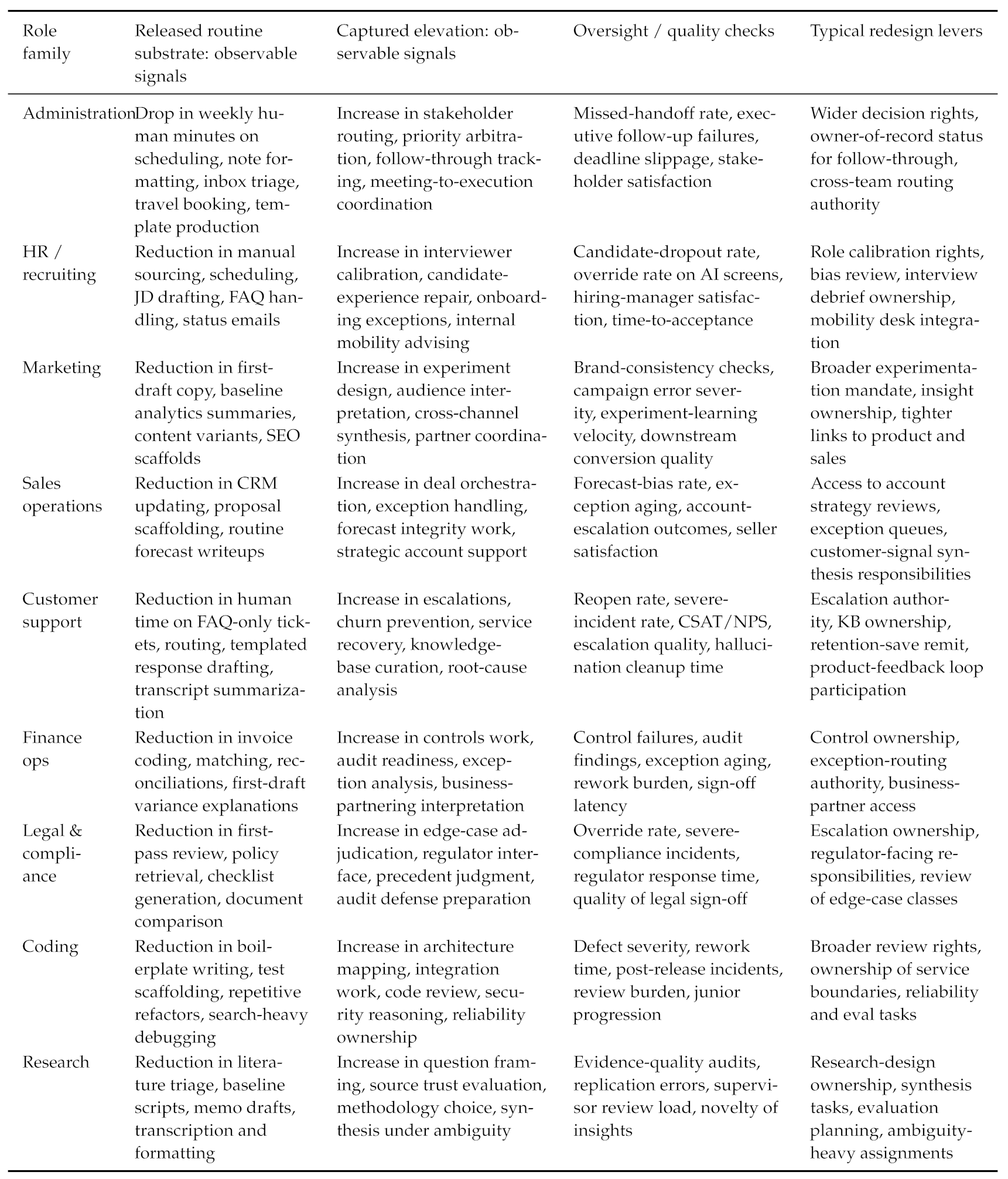

Appendix B. Workflow-Level Estimation Template

Appendix C. Illustrative Workflow Audit: Customer Support

| Construct | Observable definition in customer support | Typical evidence source |
|---|---|---|
| Released routine | Reduction in weekly human minutes on FAQ-only tickets, simple routing, templated replies, and transcript summarization after rollout | Ticket timestamps, handle-time logs, queue tags, time-motion audit |
| Captured judgment | Increase in human time on retention saves, exception resolution, ambiguous policy decisions, and root-cause diagnosis | CRM outcomes, escalation tags, save-rate workflows, manager review notes |
| Captured oversight | Increase in AI-output review, policy overrides, escalation validation, and severe-case ownership | QA review logs, override records, incident trackers |
| Captured coordination | Increase in cross-team handoffs, knowledge-base updates, callbacks to product or operations, and follow-through on recurring defects | Knowledge-base edit history, issue tracker, cross-functional tickets |
| New human-owned tasks | Tasks created by deployment itself, such as prompt/eval maintenance, gap analysis, escalation taxonomy upkeep, or service-recovery playbook maintenance | Evaluation logs, documentation repos, quality-program records |
| Oversight burden | Review time, hallucination cleanup, false escalations, duplicated contacts, and rework introduced by the system | QA queue, reopen rate, duplicate-contact logs, postmortem records |
| Quality guardrails | Changes in reopen rate, severe-incident rate, CSAT/NPS, first-contact resolution, and complaint severity | Support analytics, trust-and-safety logs, customer-survey systems |
| Entry-ladder effects | Whether novice agents progress to higher-complexity queues, how supervisor load changes, and whether promotion pathways remain intact | Training logs, queue-allocation rules, mentor ratios, promotion records |
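The time-based constructs in the table above are defined as before/after differences in weekly human minutes, so they can be sketched as simple bookkeeping. The following is a minimal illustration only, not the authors' estimation protocol: the category labels, the `WindowLog` type, the `audit_deltas` function, the `unabsorbed_minutes` residual, and all numbers are hypothetical and were chosen solely to mirror the table's rows.

```python
from dataclasses import dataclass

# Hypothetical category labels that mirror the table's time-based constructs.
ROUTINE = "routine"            # FAQ-only tickets, routing, templated replies
JUDGMENT = "judgment"          # retention saves, exceptions, root-cause work
OVERSIGHT = "oversight"        # AI-output review, overrides, severe cases
COORDINATION = "coordination"  # handoffs, knowledge-base updates, callbacks
NEW_TASKS = "new_tasks"        # tasks created by the deployment itself
BURDEN = "burden"              # cleanup and rework introduced by the system


@dataclass(frozen=True)
class WindowLog:
    """Average weekly human minutes per category in one observation window."""
    minutes: dict[str, float]

    def get(self, category: str) -> float:
        # Categories absent from the log are treated as zero minutes.
        return self.minutes.get(category, 0.0)


def audit_deltas(baseline: WindowLog, post: WindowLog) -> dict[str, float]:
    """Return before/after differences for each time-based construct."""
    def increase(category: str) -> float:
        return post.get(category) - baseline.get(category)

    deltas = {
        # Released routine is a reduction, so the sign is flipped.
        "released_routine": baseline.get(ROUTINE) - post.get(ROUTINE),
        "captured_judgment": increase(JUDGMENT),
        "captured_oversight": increase(OVERSIGHT),
        "captured_coordination": increase(COORDINATION),
        "new_human_owned": increase(NEW_TASKS),
        "oversight_burden": increase(BURDEN),
    }
    # Illustrative residual (an assumption, not a construct from the table):
    # released minutes that do not reappear in any tracked category.
    absorbed = sum(v for k, v in deltas.items() if k != "released_routine")
    deltas["unabsorbed_minutes"] = deltas["released_routine"] - absorbed
    return deltas


# Made-up numbers for one support team, in minutes per week.
baseline = WindowLog({ROUTINE: 1200.0, JUDGMENT: 300.0,
                      OVERSIGHT: 100.0, COORDINATION: 150.0})
post = WindowLog({ROUTINE: 700.0, JUDGMENT: 450.0, OVERSIGHT: 180.0,
                  COORDINATION: 200.0, NEW_TASKS: 60.0, BURDEN: 90.0})

for name, value in audit_deltas(baseline, post).items():
    print(f"{name}: {value:.0f}")
```

Quality guardrails and entry-ladder effects are left out of the sketch because the table defines them through outcome and career-progression records rather than minutes of work; they would need their own evidence sources.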

Appendix D. Additional Worked Examples

Executive assistance.

Research analysis.

Appendix E. Supplementary Discussion: How Firms Can Finance AI Without Defaulting to Layoffs

Appendix F. Supplementary Discussion: What Would Count as a Genuine Rebuttal?

Appendix G. Supplementary Discussion: Why Entry-Ladder Preservation Is a Technical Issue, Not Only a Social One

Appendix H. Related Work

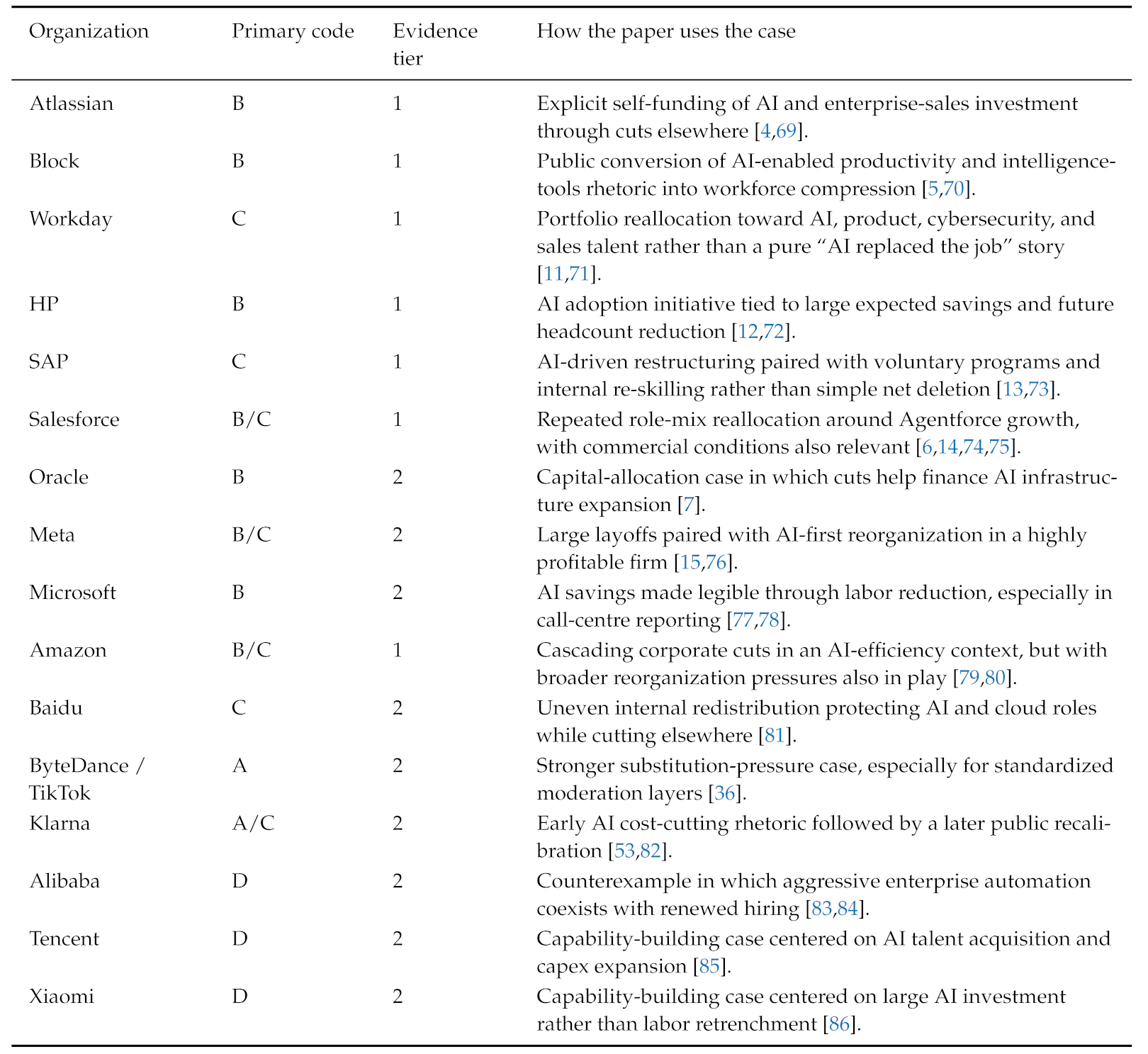

Appendix I. Case-Coding Taxonomy for Company Evidence

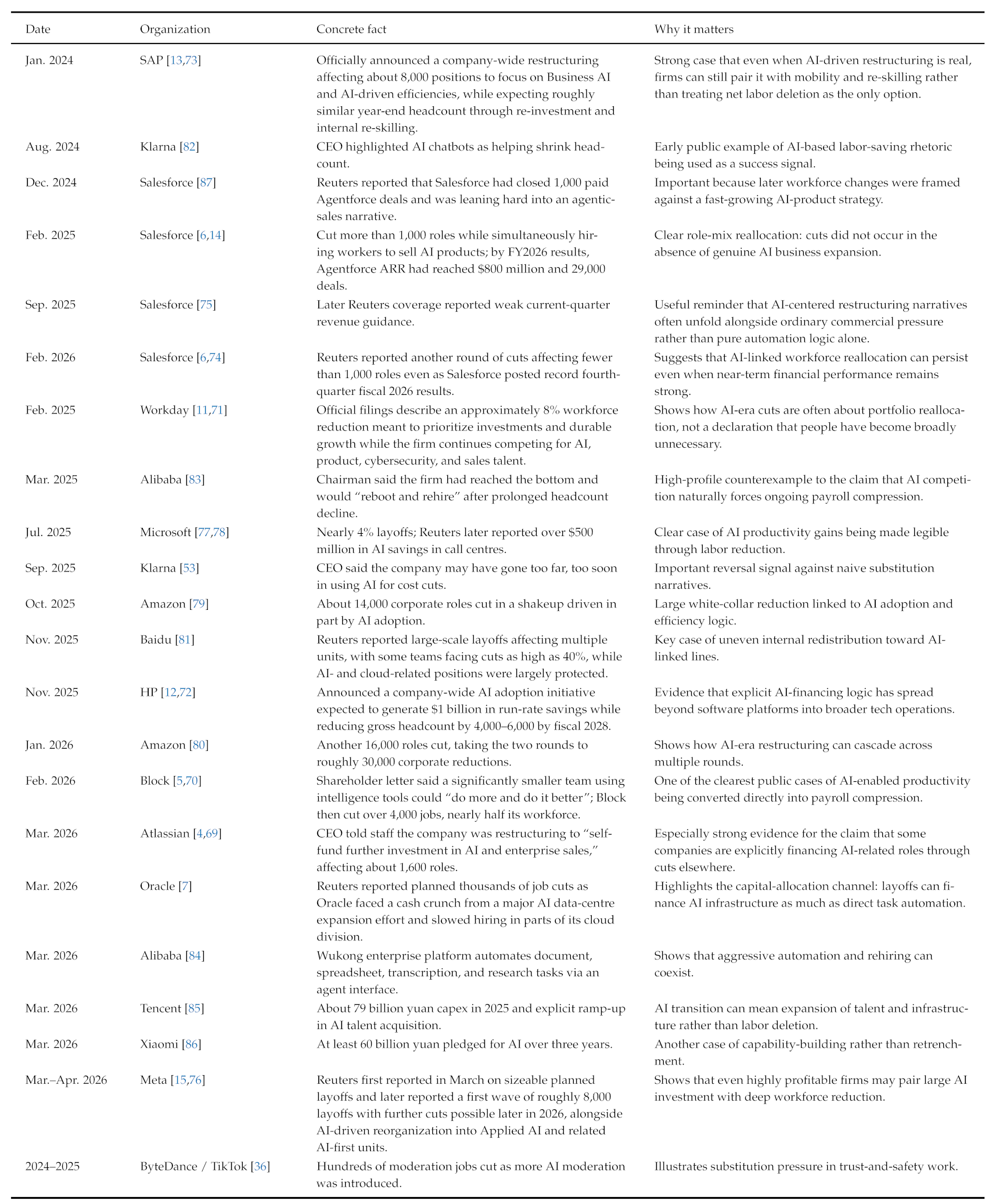

Appendix J. Selected 2024–2026 Company Evidence on Explicit AI-Financed Cuts, Reallocation, Rehiring, and Capability-Building

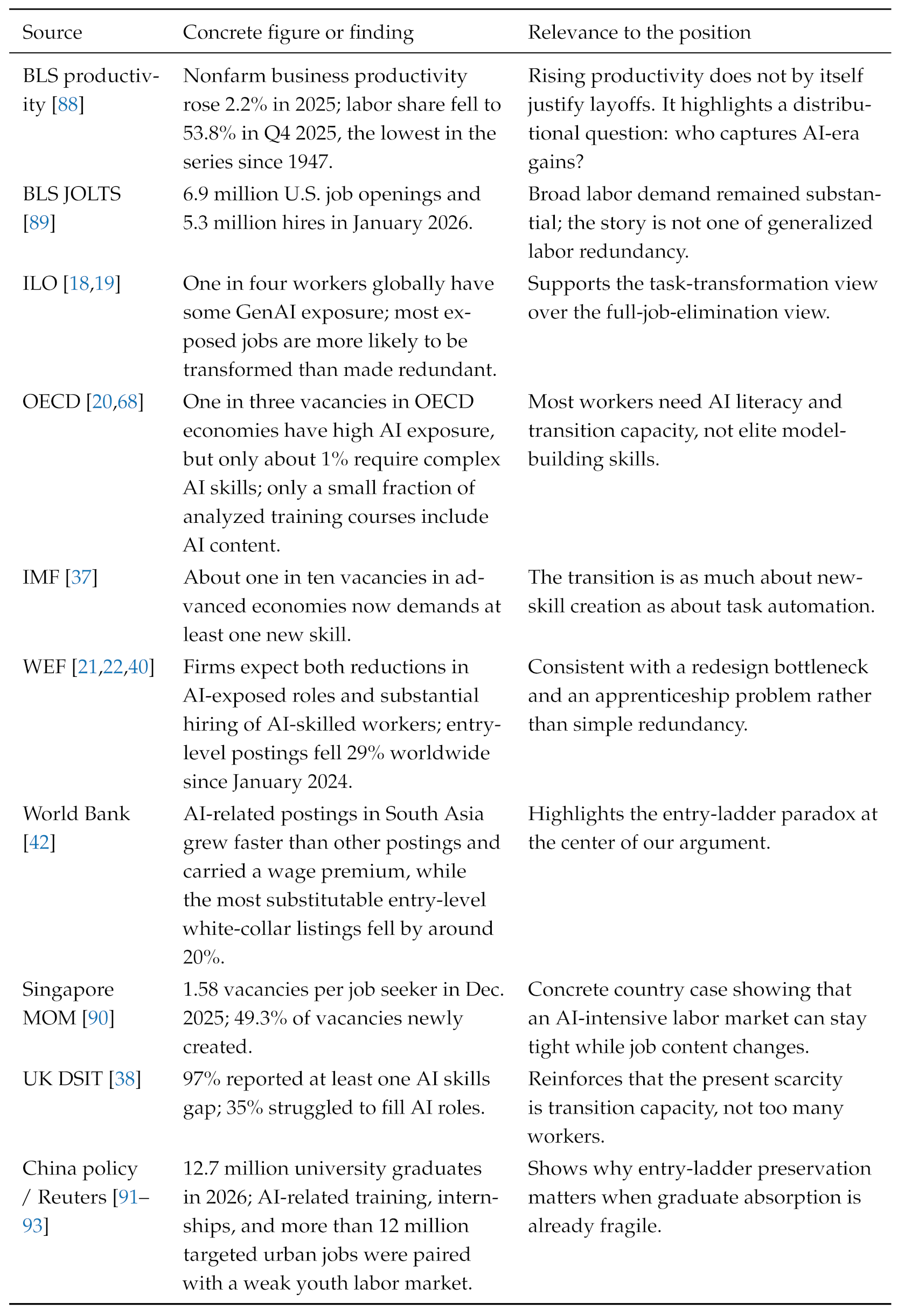

Appendix K. Labor-Market, Productivity, and Skills Evidence

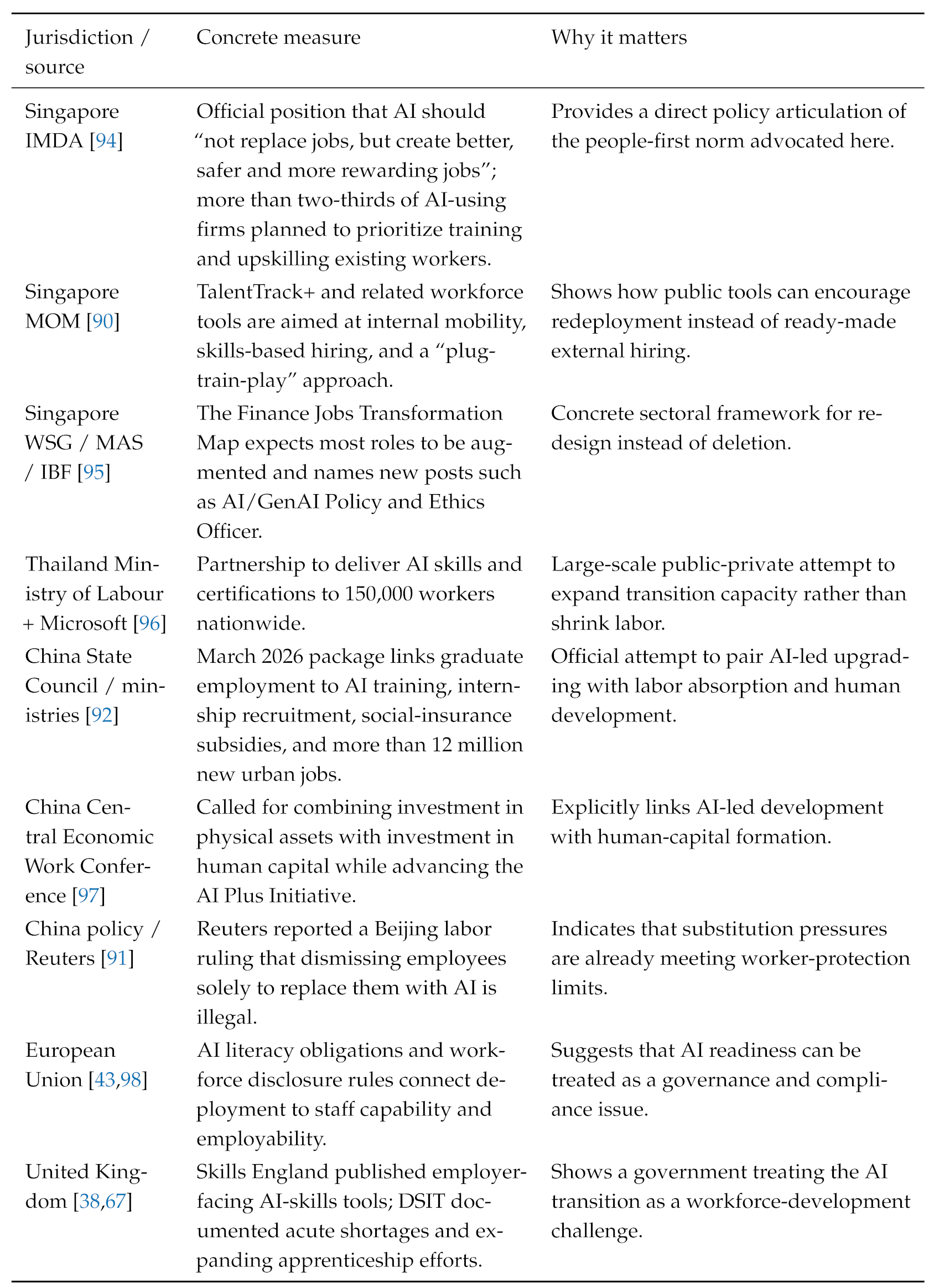

Appendix L. Government and Public-Institution Cases Showing an Alternative Path

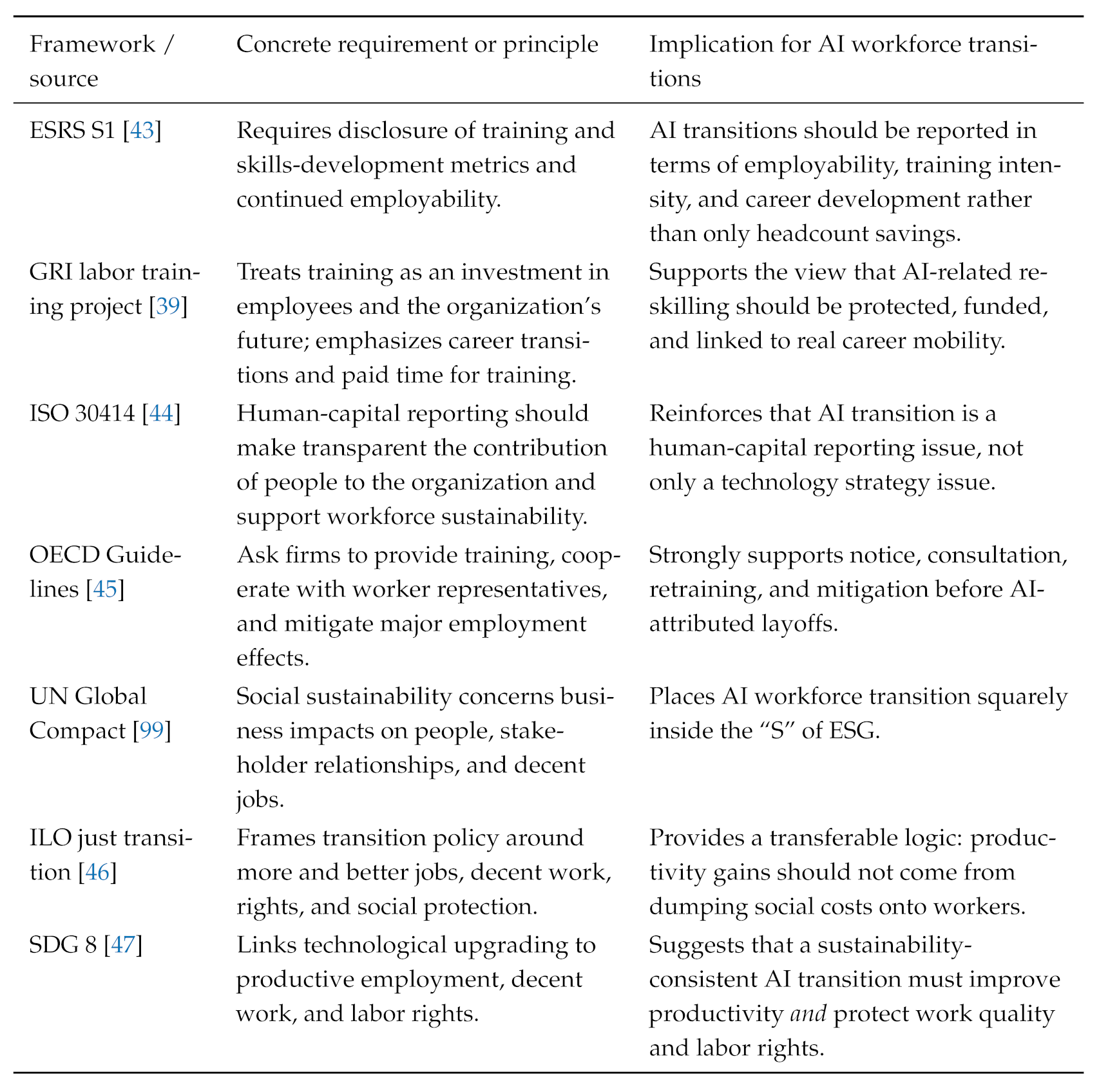

Appendix M. Elevate-First as a Social Sustainability and Human-Capital Reporting Standard

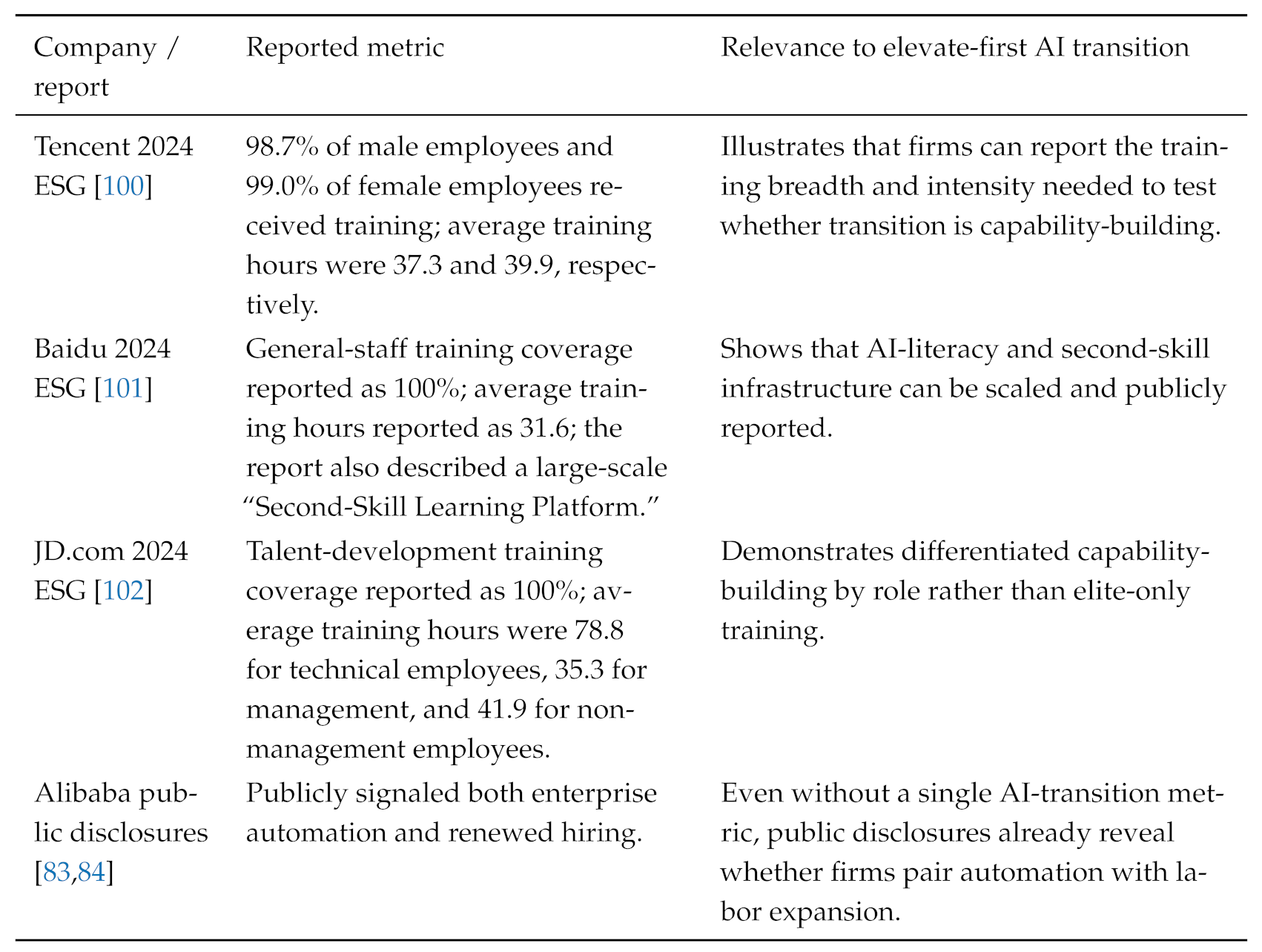

Appendix N. Illustrative Chinese Company Human-Capital and Upskilling Disclosures

Appendix O. Why the Position Is Falsifiable

References

- Reuters. Companies Cutting Jobs as Investments Shift Toward AI. 2026. Updated April 15, 2026. Available online: https://www.reuters.com/business/world-at-work/companies-cutting-jobs-investments-shift-toward-ai-2026-04-15/ (accessed on 18 April 2026).

- Challenger, Gray & Christmas. Challenger Report: January Job Cuts Surge; Lowest January Hiring on Record. 6 February 2026. Available online: https://www.challengergray.com/blog/challenger-report-january-job-cuts-surge-lowest-january-hiring-on-record/ (accessed on 22 March 2026).

- Challenger, Gray & Christmas. Challenger Report: February Cuts Plunge, YTD Hiring Falls 56%. 5 March 2026. Available online: https://www.challengergray.com/blog/challenger-report-february-cuts-plunge-hiring-falls-56-percent/ (accessed on 18 April 2026).

- Atlassian. An Important Update on Our Team. 11 March 2026. Available online: https://www.atlassian.com/blog/announcements/atlassian-team-update-march-2026 (accessed on 18 April 2026).

- Block, Inc. Q4 2025 Shareholder Letter. Available online: https://investors.block.xyz/financials/quarterly-earnings-reports/default.aspx (accessed on 18 April 2026).

- Salesforce. Salesforce Delivers Record Fourth Quarter Fiscal 2026 Results. 25 February 2026. Available online: https://investor.salesforce.com/news/news-details/2026/Salesforce-Delivers-Record-Fourth-Quarter-Fiscal-2026-Results/default.aspx (accessed on 18 April 2026).

- Reuters. Oracle Plans Thousands of Job Cuts as Data Center Costs Rise, Bloomberg News Reports. 5 March 2026. Available online: https://www.reuters.com/business/oracle-plans-thousands-job-cuts-data-center-costs-rise-bloomberg-news-reports-2026-03-05/ (accessed on 18 April 2026).

- Agrawal, A.K.; McHale, J.; Oettl, A. Enhancing Worker Productivity Without Automating Tasks: A Different Approach to AI and the Task-Based Model. In Working Paper 34781; National Bureau of Economic Research, 2026. Issued January 2026; Available online: https://www.nber.org/papers/w34781. [CrossRef]

- Demirer, M.; Horton, J.J.; Immorlica, N.; Lucier, B.; Shahidi, P. Chaining Tasks, Redefining Work: A Theory of AI Automation. In Working Paper 34859; National Bureau of Economic Research, 2026. Issued February 2026; Available online: https://www.nber.org/papers/w34859. [CrossRef]

- Farach, A. AI as Coordination-Compressing Capital: Task Reallocation, Organizational Redesign, and the Regime Fork. arXiv. 2026. Available online: https://arxiv.org/abs/2602.16078.

- Workday, Inc. Form 10-K for the Fiscal Year Ended January 31, 2025. 2025. Available online: https://investor.workday.com/financials/sec-filings/default.aspx (accessed on 18 April 2026).

- HP, Inc. HP Inc. Reports Fiscal 2025 Full Year and Fourth Quarter Results. 2025. Available online: https://investor.hp.com/news-events/news/news-details/2025/HP-Inc–Reports-Fiscal-2025-Full-Year-and-Fourth-Quarter-Results/default.aspx (accessed on 18 April 2026).

- SAP. SAP Updates Its Ambition 2025 and Announces Transformation Program for 2024. 2024. Available online: https://news.sap.com/2024/01/sap-updates-its-ambition-2025-and-announces-transformation-program-for-2024/ (accessed on 18 April 2026).

- Reuters. Salesforce to Cut 1,000 Roles, Bloomberg News Reports. 2025. Available online: https://www.reuters.com/technology/salesforce-cut-1000-roles-bloomberg-news-reports-2025-02-04/ (accessed on 21 March 2026).

- Reuters. Exclusive: Meta Targets May 20 for First Wave of Layoffs; Additional Cuts Later in 2026. 17 April 2026. Available online: https://www.reuters.com/world/meta-targets-may-20-first-wave-layoffs-additional-cuts-later-2026-2026-04-17/ (accessed on 18 April 2026).

- Falk, B.H.; Tsoukalas, G. The AI Layoff Trap. arXiv. 2026. Available online: https://arxiv.org/abs/2603.20617.

- Eloundou, T.; Manning, S.; Mishkin, P.; Rock, D. GPTs are GPTs: Labor Market Impact Potential of LLMs. Science 2024, 384, 1306–1308. [Google Scholar] [CrossRef] [PubMed]

- International Labour Organization. Generative AI and Jobs: A Refined Global Index of Occupational Exposure. In ILO Working Paper 140, International Labour Organization; 2025. [Google Scholar] [CrossRef]

- International Labour Organization. Generative AI and Jobs: A 2025 Update. Research Brief, 2025.

- Organisation for Economic Co-operation and Development. Bridging the AI Skills Gap: Is Training Keeping Up? 2025. Available online: https://www.oecd.org/en/publications/bridging-the-ai-skills-gap_66d0702e-en.html (accessed on 18 April 2026). [CrossRef]

- World Economic Forum. The Future of Jobs Report 2025. 2025. Available online: https://www.weforum.org/publications/the-future-of-jobs-report-2025/ (accessed on 21 March 2026).

- World Economic Forum. The Top Jobs and Labour Market Stories of 2025. 15 January 2026. Available online: https://www.weforum.org/stories/2026/01/top-jobs-and-labour-market-stories-2025/ (accessed on 21 March 2026).

- Autor, D.H.; Levy, F.; Murnane, R.J. The skill content of recent technological change: An empirical exploration. The Quarterly Journal of Economics 2003, 118, 1279–1333. [Google Scholar] [CrossRef]

- Autor, D.H. Why are there still so many jobs? The history and future of workplace automation. Journal of Economic Perspectives 2015, 29, 3–30. [Google Scholar] [CrossRef]

- Acemoglu, D.; Restrepo, P. Automation and new tasks: How technology displaces and reinstates labor. Journal of Economic Perspectives 2019, 33, 3–30. [Google Scholar] [CrossRef]

- Brynjolfsson, E.; Li, D.; Raymond, L. Generative AI at Work. The Quarterly Journal of Economics 2025, 140, 889–942. [Google Scholar] [CrossRef]

- Noy, S.; Zhang, W. Experimental evidence on the productivity effects of generative artificial intelligence. Science 2023, 381, 187–192. [Google Scholar] [CrossRef] [PubMed]

- Peng, S.; Kalliamvakou, E.; Cihon, P.; Demirer, M. The Impact of AI on Developer Productivity: Evidence from GitHub Copilot. arXiv. 2023. Available online: https://www.microsoft.com/en-us/research/publication/the-impact-of-ai-on-developer-productivity-evidence-from-github-copilot/.

- Cruces, G.; Fernández Meijide, D.; Galiani, S.; Gálvez, R.H.; Lombardi, M. Does Generative AI Narrow Education-Based Productivity Gaps? Evidence from a Randomized Experiment; National Bureau of Economic Research, 2026. Issued February 2026; Available online: https://www.nber.org/papers/w34851. [CrossRef]

- Dell’Acqua, F.; McFowland, E., III; Mollick, E.R.; Lifshitz, H.; Kellogg, K.C.; Rajendran, S.; Krayer, L.; Candelon, F.; Lakhani, K.R. Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality. Organization Science 2026, 37, 403–423. [Google Scholar] [CrossRef]

- Handa, K.; Tamkin, A.; McCain, M.; Huang, S.; Durmus, E.; Heck, S.; Mueller, J.; Hong, J.; Ritchie, S.; Belonax, T.; et al. Which Economic Tasks Are Performed with AI? Evidence from Millions of Claude Conversations. arXiv. 2025. Available online: https://arxiv.org/abs/2503.04761.

- World Economic Forum. AI at Work: From Productivity Hacks to Organizational Transformation. World Economic Forum story summarizing the community paper. 2026. Available online: https://www.weforum.org/stories/2026/01/ai-at-work-insights/ (accessed on 18 April 2026).

- World Economic Forum. Organizational Transformation in the Age of AI: How Organizations Maximize AI’s Potential. 2026. Available online: https://www.weforum.org/publications/organizational-transformation-in-the-age-of-ai-how-organizations-maximize-ais-potential/ (accessed on 18 April 2026).

- Loaiza, I.; Rigobon, R. The EPOCH of AI: Human-Machine Complementarities at Work. 21 November 2024. Available online: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=5028371 (accessed on 18 April 2026). [CrossRef]

- Jaumotte, F.; Kim, J.; Koll, D.; Li, E.Z.; Li, L.; Melina, G.; Song, A.; Tavares, M.M. Bridging Skill Gaps for the Future: New Jobs Creation in the AI Age. January 2026. Available online: https://www.imf.org/en/publications/staff-discussion-notes/issues/2026/01/09/bridging-skill-gaps-for-the-future-new-jobs-creation-in-the-ai-age-572136 (accessed on 18 April 2026).

- Reuters. ByteDance’s TikTok Cuts Hundreds of Jobs in Shift Towards AI Content Moderation. 11 October 2024. Available online: https://www.reuters.com/technology/bytedance-cuts-over-700-jobs-malaysia-shift-towards-ai-moderation-sources-say-2024-10-11/ (accessed on 22 March 2026).

- International Monetary Fund. New Skills and AI Are Reshaping the Future of Work. IMF Blog. 14 January 2026. Available online: https://www.imf.org/en/blogs/articles/2026/01/14/new-skills-and-ai-are-reshaping-the-future-of-work (accessed on 21 March 2026).

- Department for Science, Innovation and Technology (UK). AI Labour Market Survey 2025 Report: Executive Summary. 28 January 2026. Available online: https://www.gov.uk/government/publications/ai-labour-market-survey-2025-report/ai-labour-market-survey-2025-report-executive-summary (accessed on 22 March 2026).

- Global Reporting Initiative. GRI Topic Standard Project for Labor: Training and Education. Available online: https://www.globalreporting.org/standards/standards-development/topic-standards-project-for-labor/ (accessed on 18 April 2026).

- World Economic Forum. How AI Is Reshaping the Career Ladder, and Other Trends in Jobs and Skills on Labour Day. 2025. Available online: https://www.weforum.org/stories/2025/04/ai-jobs-international-workers-day/ (accessed on 21 March 2026).

- World Economic Forum. How AI Is Changing Early Careers: A View from Entry. World Economic Forum initiative page referencing the briefing, 2026. Available online: https://initiatives.weforum.org/reskilling-revolution/learning-to-earning (accessed on 18 April 2026).

- World Bank. South Asia Development Update – Chapter 2 Highlights: Artificial Intelligence, Real Impact: Labor Market Implications of AI Adoption in South Asia. 2025. Available online: https://openknowledge.worldbank.org/entities/publication/94725cf6-f681-42c9-acc7-63a076e0fc2b (accessed on 18 April 2026).

- EFRAG. ESRS S1: Own Workforce. ESRS Set 1 landing page. 2023. Available online: https://www.efrag.org/en/sustainability-reporting/esrs-workstreams/sector-agnostic-standards-set-1-esrs (accessed on 18 April 2026).

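- International Organization for Standardization. ISO 30414:2018, Human Resource Management: Guidelines for Internal and External Human Capital Reporting. 2018. Available online: https://www.iso.org/standard/69338.html (accessed on 22 March 2026).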

- Organisation for Economic Co-operation and Development. OECD Guidelines for Multinational Enterprises on Responsible Business Conduct. 2023. Available online: https://www.oecd.org/en/publications/oecd-guidelines-for-multinational-enterprises-on-responsible-business-conduct_81f92357-en.html (accessed on 18 April 2026).

- International Labour Organization. Just Transition Towards Environmentally Sustainable Economies and Societies. 2025. Available online: https://www.ilo.org/topics-and-sectors/just-transition-towards-environmentally-sustainable-economies-and-societies (accessed on 22 March 2026).

- United Nations Department of Economic and Social Affairs. Goal 8: Promote sustained, inclusive and sustainable economic growth, full and productive employment and decent work for all. 2025. Available online: https://sdgs.un.org/goals/goal8 (accessed on 22 March 2026).

- PwC. The Fearless Future: PwC’s 2025 Global AI Jobs Barometer. 2025. Available online: https://www.pwc.com/gx/en/services/ai/ai-jobs-barometer.html (accessed on 18 April 2026).

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Remote-Capable Knowledge Work Should Default to AI-Enabled Flexibility, 2026; Preprint.

- Mitchell, M.; Wu, S.; Zaldivar, A.; Barnes, P.; Vasserman, L.; Hutchinson, B.; Spitzer, E.; Raji, I.D.; Gebru, T. Model Cards for Model Reporting. In Proceedings of the Conference on Fairness, Accountability, and Transparency, 2019; Association for Computing Machinery; pp. 220–229. [Google Scholar] [CrossRef]

- Gebru, T.; Morgenstern, J.; Vecchione, B.; Vaughan, J.W.; Wallach, H.; Daumé, H., III; Crawford, K. Datasheets for datasets. Communications of the ACM 2021, 64, 86–92. [Google Scholar] [CrossRef]

- Raji, I.D.; Smart, A.; White, R.N.; Mitchell, M.; Gebru, T.; Hutchinson, B.; Smith-Loud, J.; Theron, D.; Barnes, P. Closing the AI accountability gap: Defining an end-to-end framework for internal algorithmic auditing. In Proceedings of the 2020 Conference on Fairness, Accountability, and Transparency, 2020; pp. 33–44. [Google Scholar]

- Reuters. Sweden’s Klarna Shifts AI Focus from Cost Cuts to Growth. 2025. Available online: https://www.reuters.com/business/swedens-klarna-shifts-ai-focus-cost-cuts-growth-2025-09-10/ (accessed on 21 March 2026).

- Autor, D. Applying AI to Rebuild Middle Class Jobs; Technical report; National Bureau of Economic Research, 2024. [Google Scholar]

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Human-AI Productivity Claims Should Be Reported as Time-to-Acceptance under Explicit Acceptance Tests, 2026; TechRxiv preprint.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Harness Engineering for Language Agents: The Harness Layer as Control, Agency, and Runtime, 2026; Preprint.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. The AutoResearch Moment: From Experimenter to Research Director, 2026; Preprint.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. OpenClaw as Language Infrastructure: A Case-Centered Survey of a Public Agent Ecosystem in the Wild, 2026; Preprint.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Let Papers Flow: AI Conferences Should Embrace Submission Explosion via Autonomous Review Pipelines, 2026; Preprint.

- He, C.; Zhou, X.; Wu, Y.; Yu, X.; Zhang, Y.; Zhang, L.; Wang, D.; Lyu, S.; Xu, H.; Wang, X.; et al. ESGenius: Benchmarking LLMs on Environmental, Social, and Governance (ESG) and Sustainability Knowledge. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 14623–14664. [Google Scholar]

- Zhang, L.; Zhou, X.; He, C.; Wang, D.; Wu, Y.; Xu, H.; Liu, W.; Miao, C. MMESGBench: Pioneering Multimodal Understanding and Complex Reasoning Benchmark for ESG Tasks. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 12829–12836. [Google Scholar]

- He, C.; Zhou, X.; Yu, X.; Zhang, L.; Zhang, Y.; Wu, Y.; Xiao, L.; Li, L.; Wang, D.; Xu, H.; et al. SSKG Hub: An Expert-Guided Platform for LLM-Empowered Sustainability Standards Knowledge Graphs. arXiv 2026, arXiv:2603.00669. [Google Scholar]

- He, C.; Zhou, X.; Wang, D.; Yu, X.; Xiao, L.; Li, L.; Xu, H.; Liu, W.; Miao, C. KG4ESG: The ESG Knowledge Graph Atlas, 2026; Preprint.

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. ESGlass: Glass-Box ESG and Sustainability Reports, 2026; Preprint. [Google Scholar]

- Bresnahan, T.F.; Brynjolfsson, E.; Hitt, L.M. Information technology, workplace organization, and the demand for skilled labor: Firm-level evidence. The Quarterly Journal of Economics 2002, 117, 339–376. [Google Scholar] [CrossRef]

- Deming, D.J. The growing importance of social skills in the labor market. The Quarterly Journal of Economics 2017, 132, 1593–1640. [Google Scholar] [CrossRef]

- Skills England. AI Skills for the UK Workforce. 29 October 2025. Available online: https://www.gov.uk/government/publications/ai-skills-for-the-uk-workforce (accessed on 22 March 2026).

- Organisation for Economic Co-operation and Development. AI and Work. Topic page. 2026. Available online: https://www.oecd.org/en/topics/sub-issues/ai-and-work.html (accessed on 21 March 2026).

- Reuters. Atlassian to Cut Roughly 10% Jobs in Pivot to AI. 2026. Available online: https://www.reuters.com/technology/atlassian-lay-off-about-1600-people-pivot-ai-2026-03-11/ (accessed on 18 April 2026).

- Reuters. Block Shares Soar as Dorsey Leans on AI to Trim Workforce. 27 February 2026. Available online: https://www.reuters.com/sustainability/sustainable-finance-reporting/block-shares-soar-dorsey-leans-ai-trim-workforce-2026-02-27/ (accessed on 18 April 2026).

- Reuters. Workday to Cut 1,750 Jobs in AI Push. 2025. Available online: https://www.reuters.com/business/workday-cut-85-its-workforce-2025-02-05/ (accessed on 21 March 2026).

- Reuters. HP to Cut About 6,000 Jobs by 2028, Ramps Up AI Efforts. 2025. Available online: https://www.reuters.com/business/hp-cut-about-6000-jobs-by-2028-ramps-up-ai-efforts-2025-11-25/ (accessed on 18 April 2026).

- Reuters. SAP to Restructure 8,000 Jobs in Push Towards AI, Shares Hit Record. 24 January 2024. Available online: https://www.reuters.com/technology/sap-announces-company-wide-restructuring-updates-2025-outlook-2024-01-23/ (accessed on 18 April 2026).

- Reuters. Salesforce Cuts Fewer Than 1,000 Jobs, Business Insider Reports. 10 February 2026. Available online: https://www.reuters.com/business/world-at-work/salesforce-cuts-less-than-1000-jobs-business-insider-reports-2026-02-10/ (accessed on 21 March 2026).

- Reuters. Salesforce Forecasts Weak Current-Quarter Revenue, Shares Fall. 2025. Available online: https://www.reuters.com/sustainability/sustainable-finance-reporting/salesforce-forecasts-weak-current-quarter-revenue-shares-fall-2025-09-03/ (accessed on 22 March 2026).

- Reuters. Meta Shares Jump After Reuters Report on Plans for Layoffs of 20% or More. 16 March 2026. Available online: https://www.reuters.com/business/meta-shares-jump-after-reuters-report-plans-layoffs-20-or-more-2026-03-16/ (accessed on 21 March 2026).

- Reuters. Microsoft to Cut About 4% of Jobs Amid Hefty AI Bets. 2025. Available online: https://www.reuters.com/business/world-at-work/microsoft-lay-off-many-9000-employees-seattle-times-reports-2025-07-02/ (accessed on 21 March 2026).

- Reuters. Microsoft Racks Up over $500 Million in AI Savings While Slashing Jobs, Bloomberg News Reports. 9 July 2025. Available online: https://www.reuters.com/business/microsoft-racks-up-over-500-million-ai-savings-while-slashing-jobs-bloomberg-2025-07-09/ (accessed on 22 March 2026).

- Reuters. Amazon to Cut About 14,000 Corporate Jobs in AI Push. 2025. Available online: https://www.reuters.com/sustainability/amazon-lay-off-about-14000-roles-2025-10-28/ (accessed on 21 March 2026).

- Amazon. Update on Our Organization. 28 January 2026. Available online: https://www.aboutamazon.com/news/company-news/amazon-layoffs-corporate-jan-2026 (accessed on 22 March 2026).

- Reuters. China’s Baidu Starts Layoffs After Reporting Third-Quarter Loss - Sources. 2025. Available online: https://www.reuters.com/business/world-at-work/chinas-baidu-starts-layoffs-after-reporting-third-quarter-loss-sources-2025-11-28/ (accessed on 22 March 2026).

- Reuters. Sweden’s Klarna Says AI Chatbots Help Shrink Headcount. 27 August 2024. Available online: https://www.reuters.com/technology/artificial-intelligence/swedens-klarna-says-ai-chatbots-help-shrink-headcount-2024-08-27/ (accessed on 21 March 2026).

- Reuters. Alibaba Says to Restart Hiring, Sees Signs of Start of AI Bubble in the US. 2025. Available online: https://www.reuters.com/technology/alibaba-chairman-says-china-business-more-confident-since-xis-tech-summit-2025-03-25/ (accessed on 22 March 2026).

- Reuters. Alibaba Launches AI Platform for Enterprises as Agent Craze Sweeps China. 2026. Available online: https://www.reuters.com/world/asia-pacific/alibaba-launches-new-ai-agent-platform-enterprises-2026-03-17/ (accessed on 22 March 2026).

- Reuters. Tencent Pledges Higher AI Investment in 2026 After Chip Curbs Hit Capex Plans. 18 March 2026. Available online: https://www.reuters.com/world/asia-pacific/tencent-books-13-rise-quarterly-revenue-gaming-ai-demand-2026-03-18/ (accessed on 22 March 2026).

- Reuters. Xiaomi to Invest at Least $8.7 Billion in AI Over Next Three Years, CEO Says. 19 March 2026. Available online: https://www.reuters.com/world/asia-pacific/xiaomi-invest-least-87-billion-ai-over-next-three-years-ceo-says-2026-03-19/ (accessed on 22 March 2026).

- Reuters. Salesforce Closes 1,000 Paid ‘Agentforce’ Deals, Looks to Robot Future. 17 December 2024. Available online: https://www.reuters.com/technology/artificial-intelligence/salesforce-closes-1000-paid-agentforce-deals-looks-robot-future-2024-12-17/ (accessed on 22 March 2026).

- U.S. Bureau of Labor Statistics. Productivity and Costs, Fourth Quarter and Annual Averages 2025. Available online: https://www.bls.gov/bls/news-release/prod.htm (accessed on 18 April 2026).

- U.S. Bureau of Labor Statistics. Job Openings and Labor Turnover – January 2026. Available online: https://www.bls.gov/bls/news-release/jolts.htm (accessed on 18 April 2026).

- Ministry of Manpower, Singapore. Job Vacancies 2025: Labour Demand Gradually Shifting to Growth Areas as Firms Adjust Hiring Plans. 20 March 2026. Available online: https://www.mom.gov.sg/newsroom/press-releases/2026/0320-job-vacancies-report-2025 (accessed on 22 March 2026).

- Reuters. China Pins Hopes on Society-Wide AI Push to Add Jobs, Rejuvenate Economy. 10 March 2026. Available online: https://www.reuters.com/business/world-at-work/china-pins-hopes-society-wide-ai-push-add-jobs-rejuvenate-economy-2026-03-10/ (accessed on 22 March 2026).

- State Council of the People’s Republic of China. Chinese Authorities Roll Out Measures to Boost Employment for Graduate Job Seekers. 20 March 2026. Available online: https://english.www.gov.cn/news/202603/20/content_WS69bd46c3c6d00ca5f9a0a070.html (accessed on 22 March 2026).

- Reuters. China’s February Youth Jobless Rate Dips to 16.1%. 19 March 2026. Available online: https://www.reuters.com/world/asia-pacific/chinas-february-youth-jobless-rate-dips-161-2026-03-19/ (accessed on 22 March 2026).

- Infocomm Media Development Authority. Singapore to Build AI-Fluent Workforce to Accelerate National AI Ambition. 29 August 2025. Available online: https://www.imda.gov.sg/resources/press-releases-factsheets-and-speeches/press-releases/2025/sg-to-build-ai-fluent-workforce-to-accelerate-national-ai-ambition (accessed on 21 March 2026).

- Workforce Singapore. Jobs Transformation Map – Generative AI in Finance. 2026. Page updated January 2, 2026. Available online: https://www.wsg.gov.sg/home/employers-industry-partners/jobs-transformation-maps/jobs-transformation-map-generative-ai (accessed on 22 March 2026).

- Microsoft Source Asia. Ministry of Labour and Microsoft Join Forces to Accelerate AI Skill Development for 150,000 Thai Workers, Driving Thailand Towards Becoming “Creator” Nation in Digital Economy. 2025. Available online: https://news.microsoft.com/source/asia/2025/11/25/ministry-of-labour-and-microsoft-join-forces-to-accelerate-ai-skill-development-for-150000-thai-workers-driving-thailand-towards-becoming-creator-nation-in-digital-economy/ (accessed on 21 March 2026).

- State Council of the People’s Republic of China. China Holds Central Economic Work Conference to Plan for 2026. 2025. Published December 11, 2025. Available online: https://english.www.gov.cn/news/202512/11/content_WS693a9c0dc6d00ca5f9a08098.html (accessed on 22 March 2026).

- European Commission. AI Talent, Skills and Literacy. 2025. Page updated January 16, 2026. Available online: https://digital-strategy.ec.europa.eu/en/policies/ai-talent-skills-and-literacy (accessed on 22 March 2026).

- United Nations Global Compact. Social Sustainability. 2025. Available online: https://unglobalcompact.org/what-is-gc/our-work/social (accessed on 22 March 2026).

- Tencent Holdings. Environmental, Social and Governance Report. 2024. Available online: https://www.tencent.com/en-us/esg/esg-reports.html (accessed on 18 April 2026).

- Baidu. 2024 Environmental, Social and Governance Report. 2025. Available online: https://esg.baidu.com/en_reports.html (accessed on 18 April 2026).

- JD.com. 2024 Environmental, Social and Governance Report. Available online: https://ir.jd.com/esgcsr (accessed on 18 April 2026).

| Role family | AI-compressed substrate | Elevated human layer | Local redesign checkpoint |
|---|---|---|---|
| Administration | Scheduling, note-taking, travel plans, template documents, inbox triage | Workflow orchestration, stakeholder routing, priority arbitration, meeting-to-execution follow-through | Stalls early only if the role is kept narrow and low-discretion |
| HR / recruiting | JD drafting, sourcing, screening, scheduling, FAQ handling | Interview calibration, talent advising, internal mobility, onboarding exceptions, employee relations | Moves outward with ambiguity, regulation, and people judgment |
| Marketing | First-draft copy, campaign variants, segmentation ideas, SEO baselines | Brand stewardship, experiment design, cross-channel learning, partner alignment, customer interpretation | Stalls in commodity content factories; rises in strategy-rich functions |
| Sales operations | CRM updates, proposal drafts, routine pipeline reporting | Deal orchestration, forecast integrity, strategic account planning, exception management | Stalls earlier when account complexity is low and the work is highly transactional |
| Customer support | FAQ retrieval, routing, response drafts, transcript summaries | Escalations, emotion-rich cases, churn prevention, knowledge-base curation, root-cause analysis | Simple queues stall earlier; complex service layers retain more frontier movement |
| Finance ops | Invoice coding, reconciliations, reporting drafts, variance explanations | Controls, exception analysis, audit readiness, business partnering, scenario interpretation | Moves outward with compliance burden and exception intensity |
| Legal & compliance | Document review, first-pass drafting, policy retrieval, checklist generation | Precedent judgment, edge-case escalation, regulator interface, audit defense | Moves outward in regulated industries where accountability cannot be delegated |
| Coding | Boilerplate, unit tests, refactors, API glue, search-heavy debugging | Architecture, integration, security, eval design, code review, reliability ownership | Moves outward in complex systems; stalls sooner in repetitive maintenance |
| Research | Literature triage, summaries, baseline scripts, transcription, memo drafts | Question selection, evaluation design, source trust, synthesis, causal identification, significance judgment | High in frontier or ambiguous work; stalls sooner in repetitive desk research |

| Dimension | Minimum disclosure | Illustrative metrics or evidence |
|---|---|---|
| Task map | What tasks were automated, accelerated, or reallocated; what human-accountable work remains; and what baseline / post-stabilization windows were used. | Residual exception rates, escalation ownership, quality-control steps, customer handoff rules, workflow scope, and rollout milestones. |
| Training | What protected, paid training affected workers received. | Hours per worker, completion and application rates, role-specific AI literacy versus supervisory skill [20,39]. |
| Mobility | What elevated roles were opened before any layoffs. | Share of affected workers receiving internal offers, wage protection, transition duration, placement outcomes. |
| Apprenticeship | Whether junior, internship, or graduate-intake pathways were preserved or redesigned. | Intake numbers, junior-to-mid promotion rates, apprentice conversion, mentor ratios. |
| Consultation | Whether workers and managers were consulted and what design changes followed. | Documented consultations, appeal channels, incident reporting, redesign decisions. |
| Distribution of gains | How productivity improvements benefited workers and service quality. | Promotion pathways, reduced drudgery, better staffing for higher-order work, stability of quality metrics. |
| Financing logic | What AI investment or AI-role expansion the workforce change is funding, and what alternatives were considered first. | AI capex or product spend, AI-role openings, internal-fill rate, share of savings ring-fenced for training/wage protection, and non-layoff financing options evaluated. |
| Public reporting | Whether the transition was disclosed through human-capital and social-sustainability metrics. | ESRS S1, GRI labor training, ISO 30414, OECD responsible-business conduct alignment [39,43,44,45]. |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).