Submitted: 13 April 2026

Posted: 20 April 2026

Abstract

Background: The production of evidence syntheses has expanded substantially, yet confusion persists regarding the distinct roles, structures, and scientific validity of protocols, narrative reviews, systematic reviews, and umbrella reviews. Mislabeling or conflating these forms undermines research reproducibility and evidence-based decision-making.

Objective: To provide a comprehensive, side-by-side methodological comparison of protocols, narrative reviews, systematic reviews, and umbrella reviews, including definitions, purposes, key methodological steps, strengths, limitations, and appropriate use cases.

Methods: A structured comparative methodological analysis was conducted between February and March 2026. Authoritative guidance documents were identified through a targeted search of PubMed and Google Scholar using the keywords “systematic review methodology,” “narrative review,” “umbrella review,” and “protocol registration.” Included sources were the Cochrane Handbook for Systematic Reviews of Interventions (Higgins et al., 2023), PRISMA 2020 (Page et al., 2021), PRISMA-P (Shamseer et al., 2015), the PRIOR statement for overviews of reviews (Gates et al., 2022), the SWiM guideline for narrative synthesis (Campbell et al., 2020), PRISMA-ScR for scoping reviews (Tricco et al., 2018), the JBI methodology for umbrella reviews (Aromataris et al., 2015), PROSPERO registry standards, ROBIS (Whiting et al., 2016), AMSTAR 2 (Shea et al., 2017), RoB 2 (Sterne et al., 2019), and GRADE (Schünemann et al., 2011). Key methodological domains (research question formulation, search strategy, risk of bias assessment, synthesis methods, transparency, reproducibility) were extracted and synthesized for side-by-side comparison.

Results: A protocol is a pre-registered plan, not a review. A systematic review is a reproducible, bias-minimizing synthesis of eligible primary studies on a focused question. A narrative review is a subjective, flexible summary of a broader topic. An umbrella review is a higher-order synthesis that systematically compiles, appraises, and synthesizes existing systematic reviews, extending the evidence hierarchy to review-level evidence. Across all domains, systematic reviews and umbrella reviews demonstrated the highest methodological rigor, characterized by predefined protocols, comprehensive search strategies, and formal risk of bias assessment. Protocols functioned exclusively as methodological safeguards, while narrative reviews showed substantial variability and a lack of reproducibility.

Conclusion: Choosing among these four forms depends on the review question, available evidence base, resources, and intended use. Protocols should precede systematic reviews and umbrella reviews; narrative reviews serve complementary roles in education and hypothesis generation. Accurate differentiation is a prerequisite for maintaining the integrity of evidence-based healthcare.

Keywords:

1. Introduction

- A protocol is a plan for a future review.

- A systematic review is a rigorous, reproducible study of primary studies.

- A narrative review is an expert-led, interpretive overview.

- An umbrella review is a systematic synthesis of existing systematic reviews.

2. Methods

- Cochrane Handbook for Systematic Reviews of Interventions (Higgins et al., 2023)

- PRISMA 2020 Statement (Page et al., 2021) and its explanation and elaboration (Page et al., 2021b)

- PRISMA-P 2015 Statement (Shamseer et al., 2015)

- PRIOR statement for overviews of reviews (Gates et al., 2022)

- SWiM guideline for narrative synthesis (Campbell et al., 2020)

- PRISMA-ScR for scoping reviews (Tricco et al., 2018)

- JBI Methodology for Umbrella Reviews (Aromataris et al., 2015)

- PROSPERO registry standards (University of York)

- ROBIS tool for risk of bias in systematic reviews (Whiting et al., 2016)

- AMSTAR 2 (Shea et al., 2017)

- RoB 2 for randomized trials (Sterne et al., 2019)

- GRADE Working Group (Schünemann et al., 2011)

- Methodological literature on living reviews (Elliott et al., 2014; Ioannidis, 2017)

- Guidance on overlap management in umbrella reviews (Pieper et al., 2019; Lunny et al., 2020)

- Additional methodological resources (Gough et al., 2012; Booth et al., 2021; Munn et al., 2018; Muka et al., 2020)

3. Results

3.1. Definitions and Core Characteristics

3.1.1. Protocol

3.1.2. Systematic Review

3.1.3. Narrative Review

3.1.4. Umbrella Review

3.2. Comparative Methodological Characteristics

3.3. Synthesis of Findings

4. Step-by-Step Process for Each

4.1. Protocol Development (Steps)

- Identify a gap or need for a systematic review or umbrella review.

- Formulate a research question (focused for systematic review; broader for umbrella review).

- Draft eligibility criteria (inclusion/exclusion).

- Develop a comprehensive search strategy (with librarian) (Lefebvre et al., 2023).

- Plan study selection process (screening).

- Design data extraction form.

- Select risk of bias assessment tools (RoB 2 for primary studies; AMSTAR 2 or ROBIS for systematic reviews).

- Plan synthesis (meta-analysis, narrative with SWiM guidance, or overview).

- Register protocol (PROSPERO, OSF, Cochrane, or journal).

- Publish protocol (optional but encouraged).
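The protocol steps above can be tracked as a simple completeness check before registration. The following is a minimal sketch only: the `ReviewProtocol` record, its field names, and the `missing_sections` helper are hypothetical names introduced for illustration and are not part of PRISMA-P, PROSPERO, or any other cited guidance.

```python
from dataclasses import dataclass, fields
from typing import List, Optional


@dataclass
class ReviewProtocol:
    """Hypothetical record mirroring the protocol steps listed above."""

    research_question: Optional[str] = None
    eligibility_criteria: Optional[str] = None
    search_strategy: Optional[str] = None
    selection_process: Optional[str] = None
    data_extraction_form: Optional[str] = None
    risk_of_bias_tool: Optional[str] = None
    synthesis_plan: Optional[str] = None
    registry: Optional[str] = None  # e.g. "PROSPERO" or "OSF"


def missing_sections(protocol: ReviewProtocol) -> List[str]:
    """Return the names of sections that are still empty or blank."""
    return [
        f.name
        for f in fields(protocol)
        if not (getattr(protocol, f.name) or "").strip()
    ]


# Illustrative draft with placeholder content (not a real protocol).
draft = ReviewProtocol(
    research_question="Hypothetical focused question in PICO form",
    eligibility_criteria="Randomized trials in adults",
    search_strategy="PubMed, Embase, Web of Science; drafted with a librarian",
    risk_of_bias_tool="RoB 2",
)
print(missing_sections(draft))
```

A draft would be considered ready for registration only once the returned list is empty; the check says nothing about the quality of each section, which still requires peer or methodologist review.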

4.2. Conducting a Systematic Review (Steps)

- (Prerequisite: Have or write a protocol.)

- Execute search across multiple databases (e.g., PubMed, Embase, Web of Science).

- Remove duplicates.

- Screen titles/abstracts against inclusion criteria (two reviewers).

- Retrieve full texts.

- Screen full texts (two reviewers).

- Extract data from included primary studies (standardized forms).

- Assess risk of bias for each included primary study (Sterne et al., 2019).

- Synthesize results (meta-analysis or narrative synthesis following SWiM guidance, Campbell et al., 2020).

- Grade certainty of evidence (e.g., GRADE) (Schünemann et al., 2011).

- Write report following PRISMA 2020 checklist (Page et al., 2021).

- Interpret results, discuss limitations, conclude.
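To make the synthesis step concrete, the sketch below pools effect estimates with inverse-variance weighting. It assumes a fixed-effect model, which is a simplification chosen for illustration; the text above does not prescribe a model, and a random-effects model is often more appropriate when studies are heterogeneous. The function name `pool_fixed_effect` and the input values are hypothetical.

```python
import math
from typing import List, Tuple


def pool_fixed_effect(
    effects: List[float], std_errors: List[float]
) -> Tuple[float, float, Tuple[float, float]]:
    """Inverse-variance fixed-effect pooling.

    Returns (pooled estimate, pooled standard error, 95% confidence interval).
    """
    if not effects or len(effects) != len(std_errors):
        raise ValueError("effects and std_errors must be non-empty and equal in length")
    if any(se <= 0 for se in std_errors):
        raise ValueError("standard errors must be positive")

    # Each study is weighted by the inverse of its variance.
    weights = [1.0 / se ** 2 for se in std_errors]
    total_weight = sum(weights)
    pooled = sum(w * y for w, y in zip(weights, effects)) / total_weight
    pooled_se = math.sqrt(1.0 / total_weight)
    half_width = 1.96 * pooled_se  # normal approximation for a 95% interval
    return pooled, pooled_se, (pooled - half_width, pooled + half_width)


# Hypothetical log odds ratios and standard errors from three primary studies.
log_or = [-0.35, -0.10, -0.22]
se = [0.20, 0.15, 0.25]
estimate, estimate_se, (low, high) = pool_fixed_effect(log_or, se)
print(f"pooled log OR = {estimate:.3f} (95% CI {low:.3f} to {high:.3f})")
```

More precise studies (smaller standard errors) receive larger weights, which is why a meta-analysis can yield a narrower interval than any single included study.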

4.3. Writing a Narrative Review (Typical Steps)

- Choose a broad topic (e.g., “orthodontic management of impacted canines”).

- Conduct informal literature search (PubMed, Google Scholar, personal files).

- Select papers based on author’s judgment (seminal, recent, convenient).

- Read and summarize findings thematically or chronologically.

- Provide expert commentary, opinion, and future directions.

- Write without formal methods section (often no search details).

- Submit to journal (often invited by editor).

4.4. Conducting an Umbrella Review (Steps)

- (Prerequisite: Have or write a protocol.)

- Execute search for existing systematic reviews across multiple databases (e.g., PubMed, Embase, Epistemonikos, Cochrane Database of Systematic Reviews).

- Remove duplicates.

- Screen titles/abstracts against inclusion criteria (two reviewers).

- Retrieve full texts of candidate systematic reviews.

- Screen full texts (two reviewers).

- Extract data from included systematic reviews (standardized forms).

- Assess quality of included systematic reviews (e.g., AMSTAR 2, Shea et al., 2017; or ROBIS, Whiting et al., 2016).

- Manage overlap: map primary studies across included reviews to avoid double-counting (Pieper et al., 2019).

- Synthesize results narratively with evidence tables.

- Grade overall certainty of evidence (often using GRADE with consideration of review quality).

- Write report following PRIOR statement (Gates et al., 2022).

- Interpret results, discuss limitations, conclude.
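The overlap-management step can be illustrated with a small citation matrix. The sketch below computes the corrected covered area (CCA), a commonly used overlap index defined as (N − r) / (r·c − r), where N is the total number of study inclusions across reviews, r the number of unique primary studies, and c the number of reviews. The text above does not name a specific overlap metric, so using CCA here is an assumption, and the review and study identifiers are hypothetical.

```python
from typing import Dict, List, Set


def corrected_covered_area(included: Dict[str, Set[str]]) -> float:
    """CCA for a mapping of review ID -> set of primary study IDs."""
    n_reviews = len(included)
    if n_reviews < 2:
        raise ValueError("overlap needs at least two reviews")
    unique_studies = set().union(*included.values())
    n_unique = len(unique_studies)
    if n_unique == 0:
        raise ValueError("no primary studies supplied")
    # Total inclusions counts a study once per review that includes it.
    n_inclusions = sum(len(studies) for studies in included.values())
    return (n_inclusions - n_unique) / (n_unique * n_reviews - n_unique)


def shared_studies(included: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Primary studies that appear in more than one review."""
    membership: Dict[str, List[str]] = {}
    for review in sorted(included):
        for study in included[review]:
            membership.setdefault(study, []).append(review)
    return {s: r for s, r in sorted(membership.items()) if len(r) > 1}


# Hypothetical citation matrix: three reviews, five unique primary studies.
matrix = {
    "Review A": {"s1", "s2", "s3"},
    "Review B": {"s2", "s3", "s4"},
    "Review C": {"s3", "s5"},
}
print(f"CCA = {corrected_covered_area(matrix):.2f}")
print(shared_studies(matrix))
```

Listing the shared studies alongside the index shows which primary studies would be double-counted if findings from overlapping reviews were simply added together.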

5. Strengths and Limitations

5.1. Protocol

| Strengths | Limitations |
| --- | --- |
| Prevents selective outcome reporting | No findings on its own |
| Increases transparency and credibility | Requires time before seeing results |
| Allows replication and updating | Not suitable for narrative reviews |
| Facilitates peer review of methods | Registration may be mandatory for some journals |

5.2. Systematic Review

| Strengths | Limitations |
| --- | --- |
| Minimal bias; highest internal validity for primary studies | Very time- and resource-intensive |
| Reproducible and updateable (living reviews) | May be outdated quickly |
| Essential for clinical guidelines | Limited to narrow questions |
| Allows meta-analysis for precise effect estimates | Can be uninformative if primary studies are poor quality |

5.3. Narrative Review

| Strengths | Limitations |
| --- | --- |
| Relatively resource-efficient to produce | High risk of bias and selective citation |
| Considerable flexibility; can integrate disparate ideas | Not reproducible |
| Useful for teaching, textbooks, introductions | Cannot reliably inform policy or clinical practice |
| Allows expert opinion and interpretation | Often confused with systematic reviews |

5.4. Umbrella Review

| Strengths | Limitations |
| --- | --- |
| Provides comprehensive overview of a broad topic | Contingent upon the methodological quality of existing systematic reviews |
| More time-efficient than conducting multiple de novo systematic reviews | Requires careful management of overlapping primary studies |
| Enables comparison of findings across multiple systematic reviews | May propagate errors if included reviews are flawed |
| Ideal for topics with abundant existing reviews | Can be complex to interpret when reviews reach conflicting conclusions |
| High credibility when methodologically rigorous | Not appropriate when few or no systematic reviews exist |

6. Appropriate Use Cases

A protocol is appropriate when:

- You plan to conduct a systematic review, umbrella review, scoping review, or network meta-analysis.

- You seek funding or ethics approval for a synthesis project.

- You want to avoid duplication (registry check).

- You intend to publish the review in a high-impact journal (many require protocol registration).

A systematic review is appropriate when:

- You need to inform a clinical practice guideline.

- A policy decision requires the best available evidence from primary studies.

- You want to quantify an intervention’s effect (meta-analysis).

- You are evaluating the consistency or inconsistency of primary evidence.

- Your question is narrow and answerable (e.g., “Does X cause Y?”).

- Few or no systematic reviews exist on the topic.

An umbrella review is appropriate when:

- Multiple systematic reviews already exist on the same or related topics.

- You need a comprehensive overview of a broad clinical area (e.g., “orthodontic interventions for malocclusion”).

- You want to compare findings across different systematic reviews.

- Guideline developers need a single source summarizing existing review-level evidence.

- You wish to map the quality and consistency of evidence across a field.

A narrative review is appropriate when:

- You are writing a textbook chapter or background section of a primary research paper.

- You are introducing a field to students or new researchers.

- You want to offer a personal perspective or historical overview.

- A systematic review or umbrella review is impractical (e.g., extremely broad topic, no eligible primary studies or systematic reviews).

- You are generating hypotheses, not testing them.

7. Common Misconceptions and Pitfalls

| Misconception | Correction |
| --- | --- |
| “A protocol is a type of review.” | Incorrect. A protocol constitutes a methodological plan rather than an evidence synthesis. |
| “A narrative review with a long reference list is systematic.” | Incorrect. Systematic refers to methods, not length. A long reference list does not equal systematic search or quality appraisal. |
| “We did a systematic review without a protocol.” | That is a systematic review, but it is non-registered and potentially at higher risk of post-hoc bias. |
| “We did a systematic review using narrative synthesis, so it’s a narrative review.” | Incorrect. Synthesis method (narrative vs. statistical) does not define the review type. A systematic review with narrative synthesis remains a systematic review. |
| “Umbrella reviews are just large systematic reviews.” | Incorrect. Umbrella reviews synthesize existing systematic reviews, not primary studies. The unit of analysis differs fundamentally. |
| “Any review that covers multiple topics is an umbrella review.” | Incorrect. Umbrella reviews require systematic methods, quality appraisal of included systematic reviews, and management of overlapping primary studies. |
| “Narrative reviews are worthless.” | Incorrect. Narrative reviews have important roles in education, hypothesis generation, and historical context, but they cannot replace systematic reviews or umbrella reviews for guiding practice. |

8. Reporting Standards and Registries

| Entity | Key Reporting Guideline | Key Registry |
| --- | --- | --- |
| Protocol | PRISMA-P 2015 (Shamseer et al., 2015) | PROSPERO (for health), OSF, Cochrane, Figshare |
| Systematic Review | PRISMA 2020 (Page et al., 2021) | Not applicable (but protocol should be registered) |
| Umbrella Review | PRIOR statement (Gates et al., 2022) | PROSPERO (increasingly required) |
| Narrative Review | No widely accepted guideline (some journals suggest non-systematic review checklist) | Not applicable (should not be registered as a systematic or umbrella review) |

9. Illustrative Examples

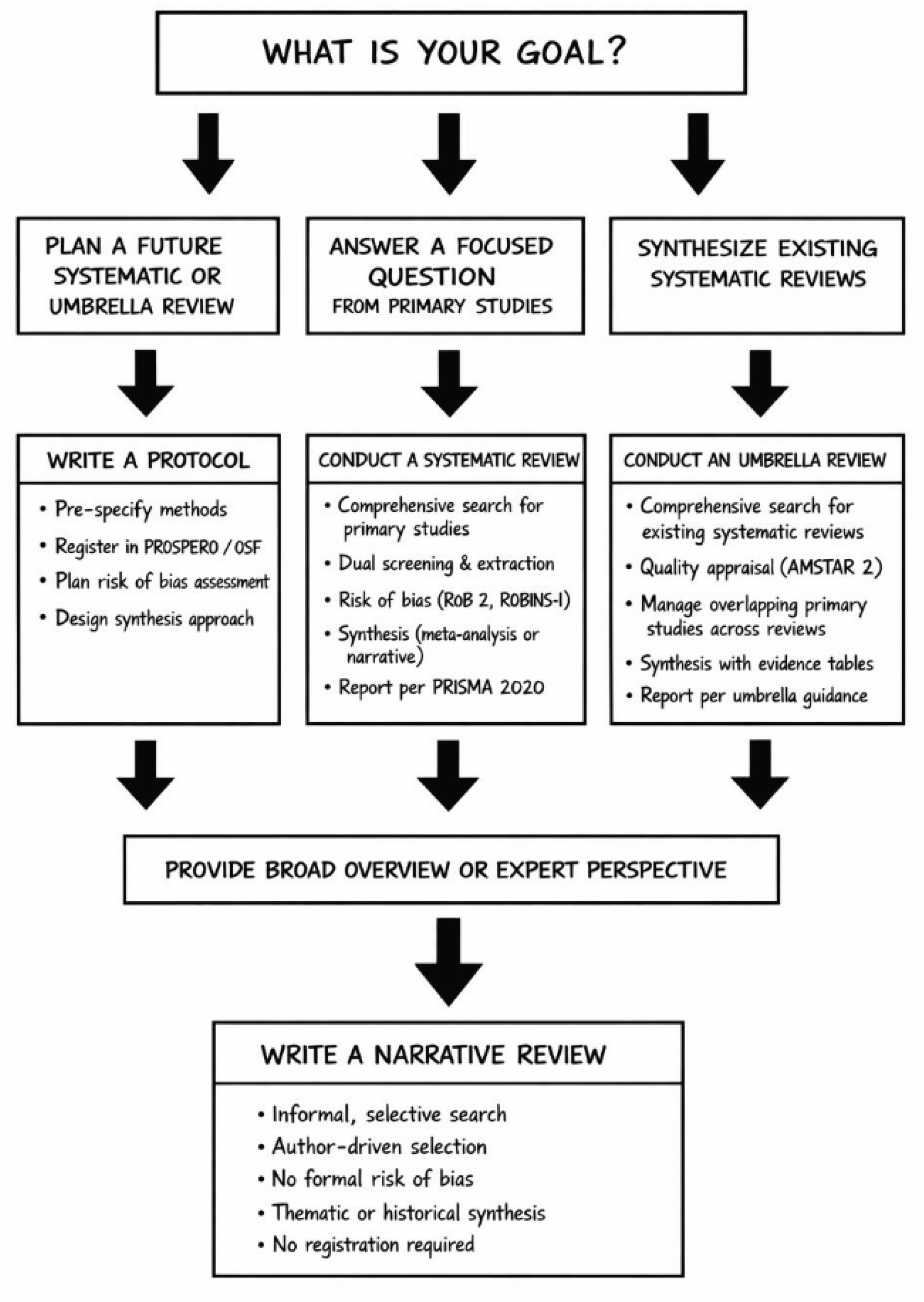

10. Decision Algorithm

- Branch 1 (Plan a future systematic or umbrella review) → Write a PROTOCOL → Register in PROSPERO/OSF

- Branch 2 (Answer a focused question from primary studies) → Conduct a SYSTEMATIC REVIEW → Follow PRISMA

- Branch 3 (Synthesize existing systematic reviews) → Conduct an UMBRELLA REVIEW → Follow PRIOR

- Branch 4 (Provide broad overview or expert perspective) → Write a NARRATIVE REVIEW → No registration required

11. Discussion

11.1. Interpretation of Differences

11.2. Why Mislabeling Occurs

11.3. Consequences in Clinical Research

11.4. The Evolving Landscape

11.5. Limitations

12. Conclusion

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest

References

- Higgins, J.P.T.; Thomas, J.; Chandler, J.; Cumpston, M.; Li, T.; Page, M.J.; Welch, V.A. (Eds.) Cochrane Handbook for Systematic Reviews of Interventions. Version 6.4; Cochrane, 2023.

- Page, M.J.; McKenzie, J.E.; Bossuyt, P.M.; et al. The PRISMA 2020 statement: an updated guideline for reporting systematic reviews. BMJ 2021, 372, n71.

- Page, M.J.; Moher, D.; Bossuyt, P.M.; et al. PRISMA 2020 explanation and elaboration: updated guidance and exemplars for reporting systematic reviews. BMJ 2021, 372, n160.

- Shamseer, L.; Moher, D.; Clarke, M.; et al. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015: elaboration and explanation. BMJ 2015, 350, g7647.

- Gates, M.; Gates, A.; Pieper, D.; et al. Reporting guideline for overviews of reviews of healthcare interventions: development of the PRIOR statement. BMJ 2022, 378, e070849.

- Campbell, M.; McKenzie, J.E.; Sowden, A.; et al. Synthesis without meta-analysis (SWiM) in systematic reviews: reporting guideline. BMJ 2020, 368, l6890.

- Tricco, A.C.; Lillie, E.; Zarin, W.; et al. PRISMA Extension for Scoping Reviews (PRISMA-ScR): Checklist and Explanation. Ann Intern Med. 2018, 169(7), 467–473.

- Aromataris, E.; Fernandez, R.; Godfrey, C.M.; Holly, C.; Khalil, H.; Tungpunkom, P. Summarizing systematic reviews: methodological development, conduct and reporting of an umbrella review approach. Int J Evid Based Healthc. 2015, 13(3), 132–140.

- Shea, B.J.; Reeves, B.C.; Wells, G.; et al. AMSTAR 2: a critical appraisal tool for systematic reviews that include randomised or non-randomised studies of healthcare interventions, or both. BMJ 2017, 358, j4008.

- Whiting, P.; Savović, J.; Higgins, J.P.T.; et al. ROBIS: A new tool to assess risk of bias in systematic reviews. J Clin Epidemiol. 2016, 69, 225–234.

- Sterne, J.A.C.; Savović, J.; Page, M.J.; et al. RoB 2: a revised tool for assessing risk of bias in randomised trials. BMJ 2019, 366, l4898.

- Schünemann, H.J.; Brennan, S.; Akl, E.A.; et al. The development of the GRADE handbook. J Clin Epidemiol. 2011, 64(11), 1281–1285.

- Lefebvre, C.; Glanville, J.; Briscoe, S.; et al. Searching for and selecting studies. In Cochrane Handbook for Systematic Reviews of Interventions. Version 6.4; Higgins, J.P.T., Thomas, J., Chandler, J., et al., Eds.; Cochrane, 2023.

- Elliott, J.H.; Turner, T.; Clavisi, O.; et al. Living systematic reviews: an emerging opportunity to narrow the evidence-practice gap. PLoS Med. 2014, 11(2), e1001603.

- Ioannidis, J.P.A. Next-generation systematic reviews: prospective meta-analysis, individual patient data, and living reviews. J Clin Epidemiol. 2017, 83, 1–3.

- Pieper, D.; Antoine, S.L.; Mathes, T.; Neugebauer, E.A.M.; Eikermann, M. Systematic review finds overlapping reviews were not mentioned in every other overview. J Clin Epidemiol. 2019, 116, 1–9.

- Lunny, C.; Brennan, S.E.; McDonald, S.; et al. Overviews of reviews often have limited rigorous reporting and methodological quality. J Clin Epidemiol. 2020, 124, 64–75.

- Gough, D.; Thomas, J.; Oliver, S. Clarifying differences between review designs and methods. Syst Rev. 2012, 1, 28.

- Booth, A.; Sutton, A.; Papaioannou, D. Systematic Approaches to a Successful Literature Review, 3rd ed.; Sage, 2021.

- Munn, Z.; Peters, M.D.J.; Stern, C.; Tufanaru, C.; McArthur, A.; Aromataris, E. Systematic review or scoping review? Guidance for authors when choosing between a systematic or scoping review approach. BMC Med Res Methodol. 2018, 18(1), 143.

- Muka, T.; Glisic, M.; Milic, J.; et al. A 24-step guide on how to design, conduct, and successfully publish a systematic review. Eur J Epidemiol. 2020, 35(1), 49–60.

- Pae, C.U. Why systematic review rather than narrative review? Psychiatry Investig. 2015, 12(3), 417–419.

- PROSPERO International prospective register of systematic reviews. Available online: https://www.crd.york.ac.uk/prospero/ (accessed on 13 April 2026).

- Moher, D.; Shamseer, L.; Clarke, M.; et al. Preferred reporting items for systematic review and meta-analysis protocols (PRISMA-P) 2015 statement. Syst Rev. 2015, 4, 1.

- Pollock, M.; Fernandes, R.M.; Becker, L.A.; Pieper, D.; Hartling, L. Chapter V: Overviews of Reviews. In Cochrane Handbook for Systematic Reviews of Interventions. Version 6.4; Higgins, J.P.T., Thomas, J., Chandler, J., et al., Eds.; Cochrane, 2023.

- Rethlefsen, M.L.; Kirtley, S.; Waffenschmidt, S.; et al. PRISMA-S: an extension to the PRISMA statement for searching. J Med Libr Assoc. 2021, 109(2), 174–180.

- McGuinness, L.A.; Higgins, J.P.T. Risk-of-bias VISualization (robvis): An R package and Shiny web app for visualizing risk-of-bias assessments. Res Synth Methods. 2021, 12(1), 55–61.

- McKenzie, J.E.; Brennan, S.E. Synthesizing and presenting findings using other methods. In Cochrane Handbook for Systematic Reviews of Interventions. Version 6.4; Higgins, J.P.T., Thomas, J., Chandler, J., et al., Eds.; Cochrane, 2023.

- Peters, M.D.J.; Godfrey, C.M.; Khalil, H.; McInerney, P.; Parker, D.; Soares, C.B. Best practice guidance and reporting items for the development of scoping review protocols. JBI Evid Implement. 2022, 20(4), 261–272.

- Pollock, D.; Peters, M.D.J.; Khalil, H.; et al. Recommendations for the extraction, analysis, and presentation of results in scoping reviews. JBI Evid Synth. 2023, 21(3), 520–532.

- Page, M.J.; Moher, D. Evaluations of the uptake and impact of the Preferred Reporting Items for Systematic reviews and Meta-Analyses (PRISMA) Statement. Syst Rev. 2018, 7(1), 132.

- Khamis, A.M.; et al. A scoping review of scoping reviews: advancing the methodology. J Clin Epidemiol. 2022, 145, 15–27.

| Dimension | Protocol | Systematic Review | Narrative Review | Umbrella Review |
| --- | --- | --- | --- | --- |
| Primary purpose | To pre-specify methods and prevent post-hoc bias | To answer a focused question by synthesizing primary studies with minimal bias | To provide broad overview, perspective, or historical context | To synthesize existing systematic reviews on a broad topic |
| Unit of analysis | Not applicable (plan) | Primary studies (RCTs, cohort, case-control, etc.) | Any literature (primary studies, reviews, opinion) | Systematic reviews and meta-analyses |
| Presence of results | No results (plan only) | Yes (synthesized findings from primary studies) | Yes (summarized findings) | Yes (synthesized findings from multiple reviews) |
| Research question | Stated but not answered | Narrow, precise (often PICO/PEO format) | Broad, often evolving or ill-defined | Broad to moderately broad (encompassing multiple related PICO questions) |
| Search strategy | Specified in detail (databases, terms, limits) | Comprehensive, reproducible, documented (Lefebvre et al., 2023) | Not specified; selective, based on author knowledge | Comprehensive for systematic reviews (multiple databases, search for existing reviews) |
| Inclusion criteria | Explicitly defined (PICO, study designs, language) | Strictly applied to all retrieved primary studies | Vague or absent | Explicitly defined (types of systematic reviews, overlapping PICO questions) |
| Risk of bias assessment | Planned (e.g., RoB 2, AMSTAR 2) | Performed on included primary studies (Sterne et al., 2019) | Not performed | Performed on included systematic reviews (e.g., AMSTAR 2, ROBIS) |
| Data extraction | Forms designed in advance | Standardized, duplicate extraction from primary studies | Informal note-taking | Standardized extraction from systematic reviews |
| Synthesis method | Not applicable (planned) | Meta-analysis (if possible) or narrative synthesis (SWiM guidance, Campbell et al., 2020) | Thematic, chronological, or conceptual summary | Narrative synthesis, tabulation of findings, and where appropriate, comparison of effect estimates |
| Overlap management | Not applicable | Not applicable | Not applicable | Critical (must avoid double-counting overlapping primary studies; see Pieper et al., 2019) |
| Transparency | Full (public registry) | Full (methods reported in detail) | Low (methods rarely reported) | Full (protocol recommended; PRIOR reporting, Gates et al., 2022) |
| Reproducibility | High (others could follow same plan) | High (study could be repeated) | Very low (different authors would produce different reviews) | High (study could be repeated) |
| Time to complete | Weeks | Months to years | Days to weeks | Months (may be shorter than de novo systematic reviews depending on scope) |
| Number of authors | Usually 2+ (often the same as the review team) | 2+ (ideally including a librarian or methodologist) | 1–2 (frequently single-authored) | 2+ (often including methodologist with expertise in overview methods) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).