Submitted: 16 April 2026

Posted: 20 April 2026

Abstract

Keywords:

1. Introduction

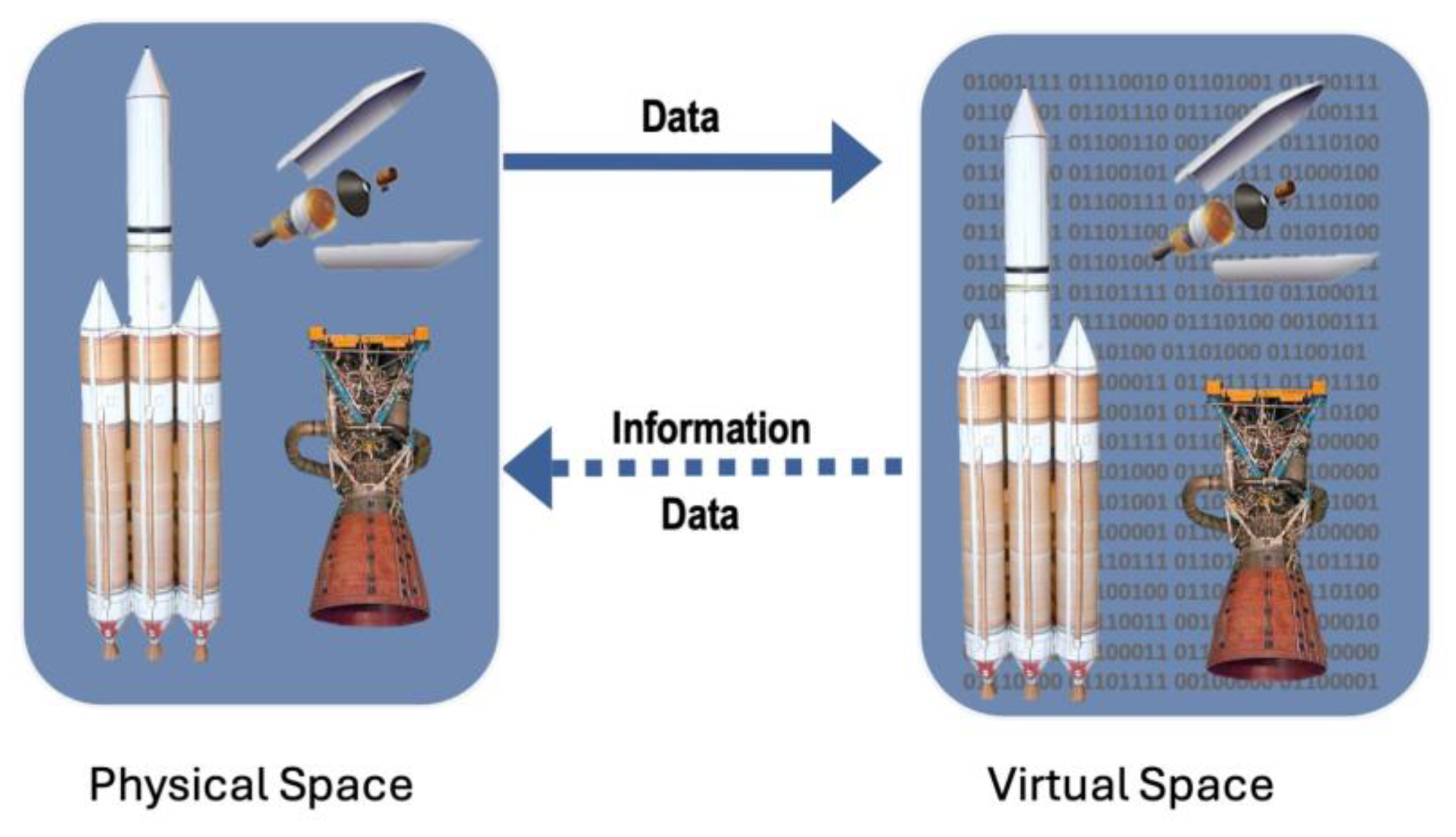

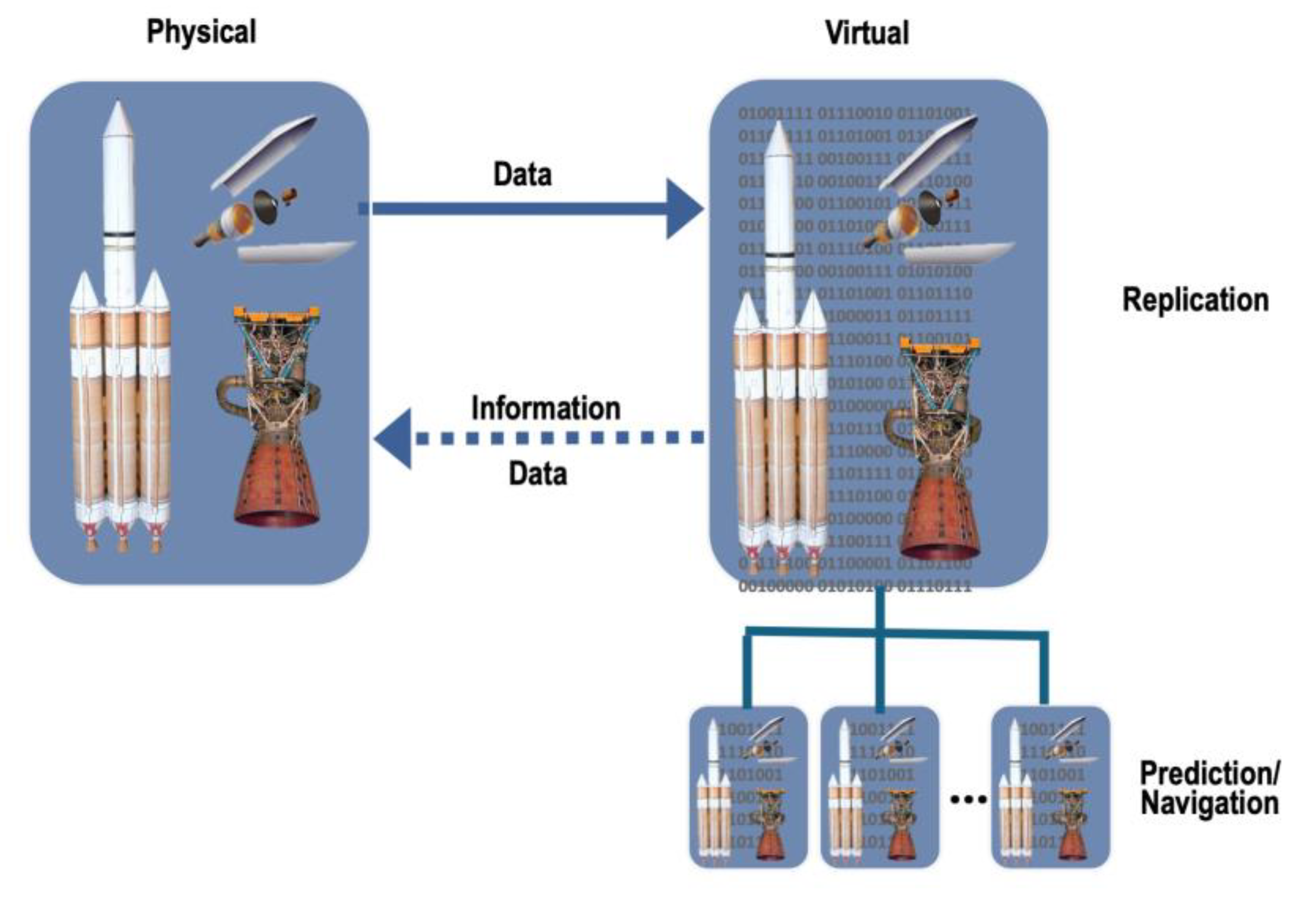

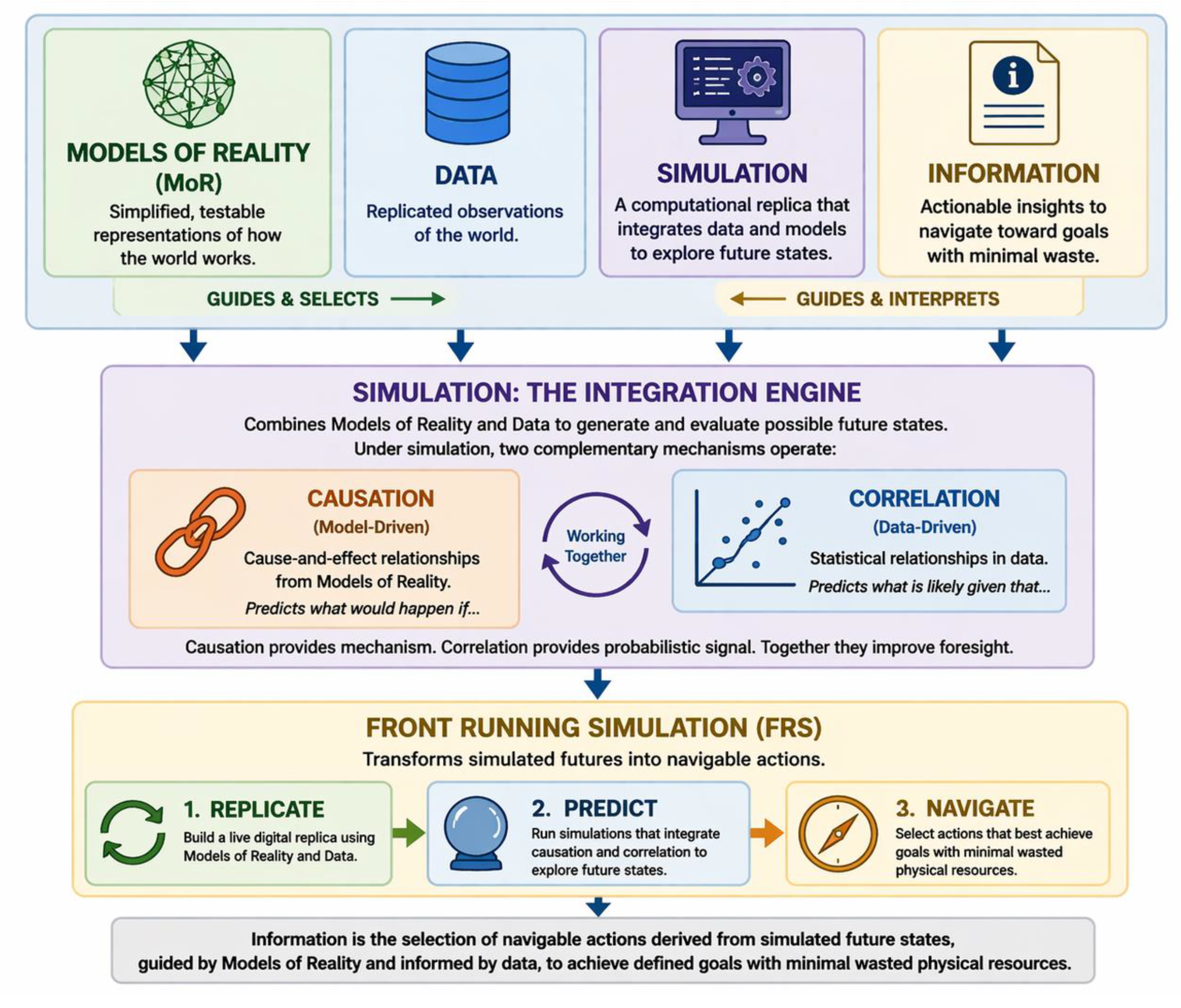

2. The Digital Twin Model

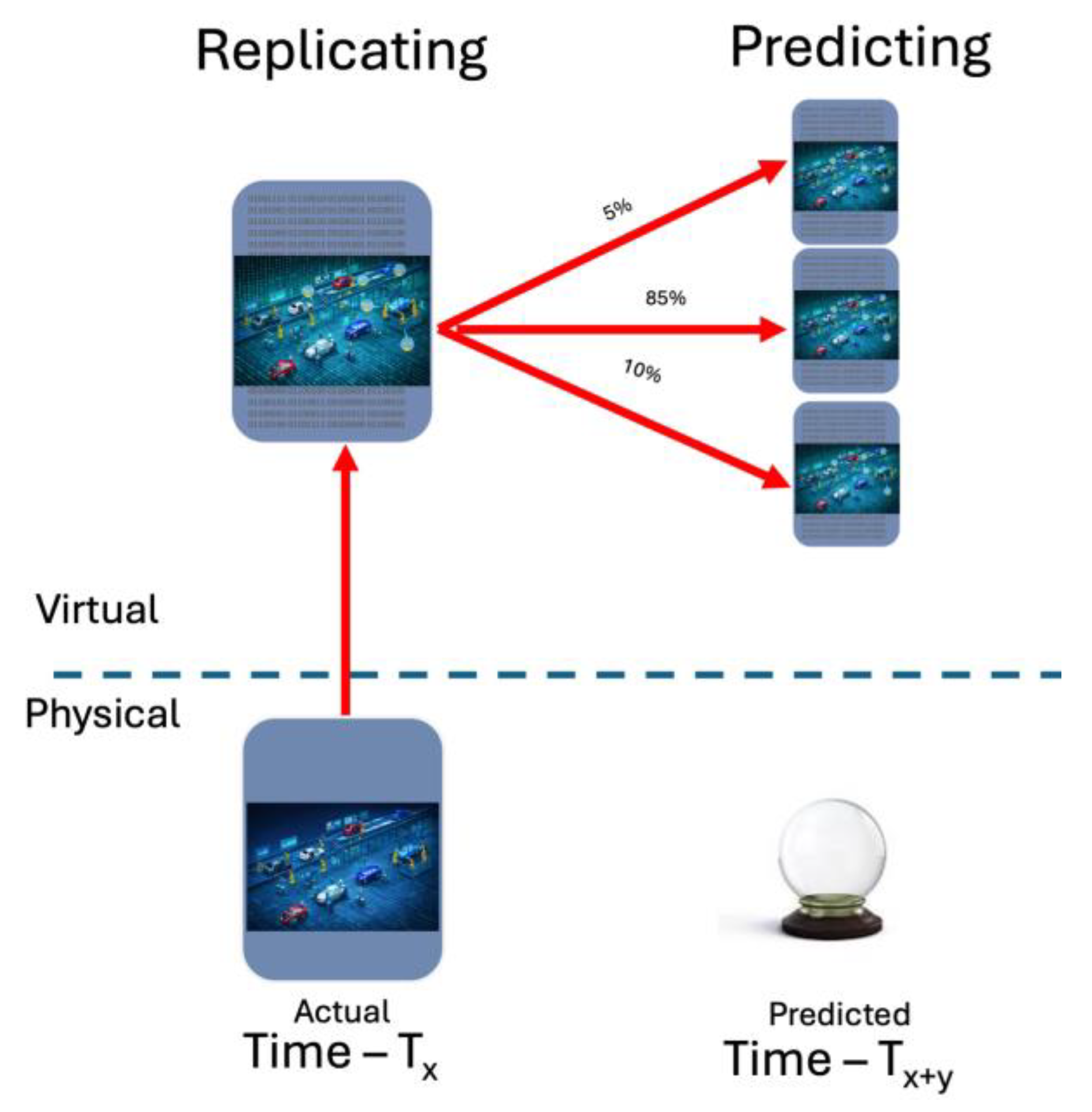

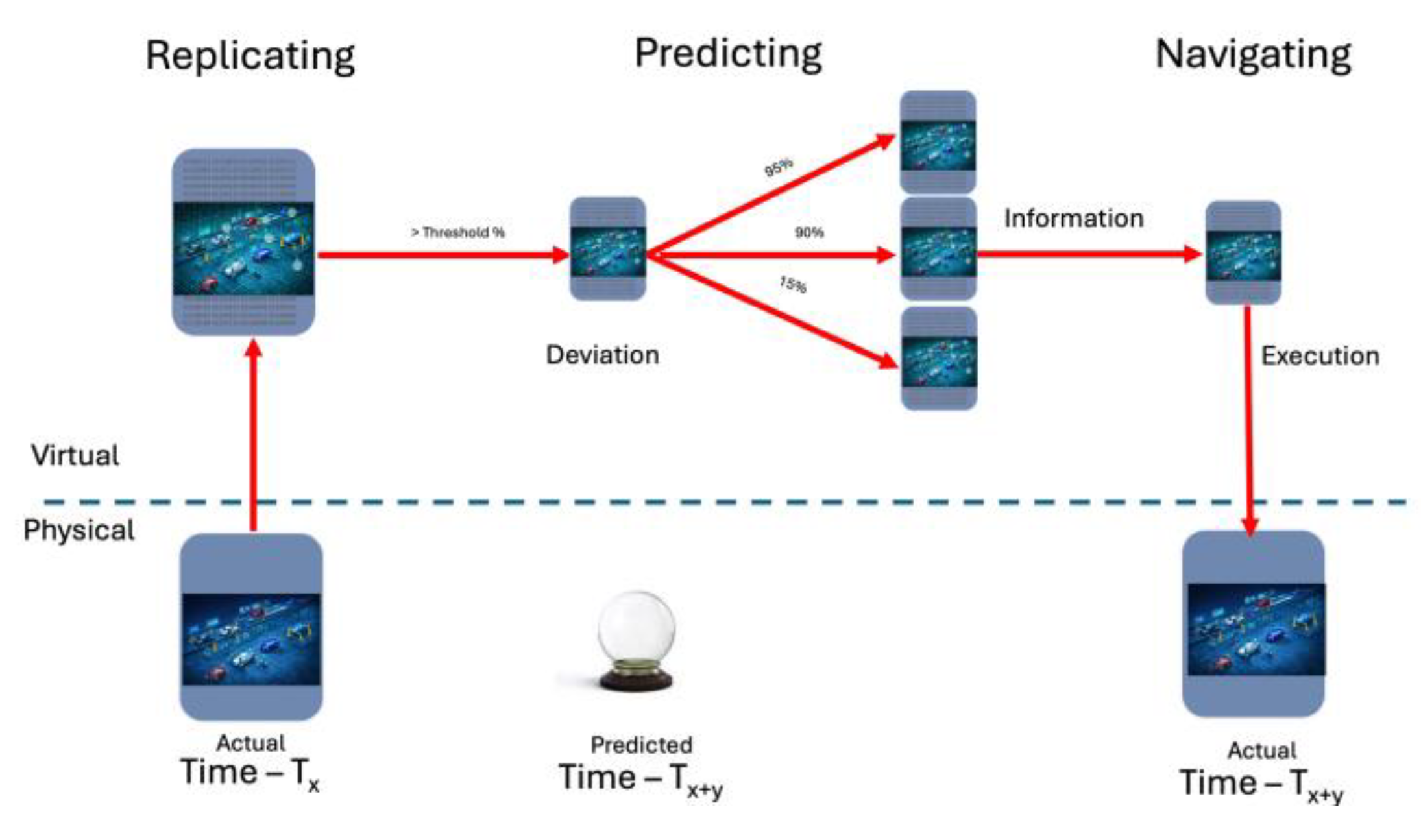

3. Introduction of Front Running Simulation

- Goal-seeking replication, prediction, and navigation

- Continuous Overwatch of factory operations, matching planned operations against predicted operations

- Continuous replication of the current physical state as the simulation starting condition

- Continual simulations of predicted future states including adverse events and deviations

- Continual simulation of the predicted future operational outcomes of actions to navigate to goals

- Temporal horizon and update cadence determined by operational dynamics and use cases
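
The characteristics listed above can be illustrated with a minimal sketch: the current physical state is replicated as the simulation starting condition, projected forward to a horizon, and compared against the plan. This is an illustration under stated assumptions, not the author's implementation. The `State` fields, the constant-rate model, and the function names `simulate_forward` and `overwatch` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class State:
    """Hypothetical snapshot of the physical system at one instant."""
    time_min: float      # minutes since the start of the planning window
    units_done: int      # units completed so far
    rate_per_min: float  # currently observed production rate


def simulate_forward(start: State, horizon_min: float) -> int:
    """Project completed units at the horizon from the replicated state.

    Assumes a constant production rate; a real model would be far richer.
    """
    remaining = max(0.0, horizon_min - start.time_min)
    return start.units_done + int(start.rate_per_min * remaining)


def overwatch(start: State, horizon_min: float, planned_units: int) -> int:
    """Return predicted minus planned units (negative = predicted shortfall)."""
    return simulate_forward(start, horizon_min) - planned_units


# Illustrative numbers only: 500 units done at minute 240 of a 480-minute window.
snapshot = State(time_min=240.0, units_done=500, rate_per_min=2.0)
print(overwatch(snapshot, horizon_min=480.0, planned_units=1000))  # -20
```

In a running system, `snapshot` would be refreshed from the physical system at the chosen update cadence and the projection rerun each time, so a predicted shortfall surfaces before it occurs.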

Front Running Simulation in Overwatch Mode

Front Running Simulation and Goal Deviations

4. FRS Operational Substrate

Models of Reality

Data

- Selected for purpose

- Contextually relevant

- Objective

- Complete

- Accurate

- Interoperable

Simulation

AI-Based Evolution of Front Running Simulation

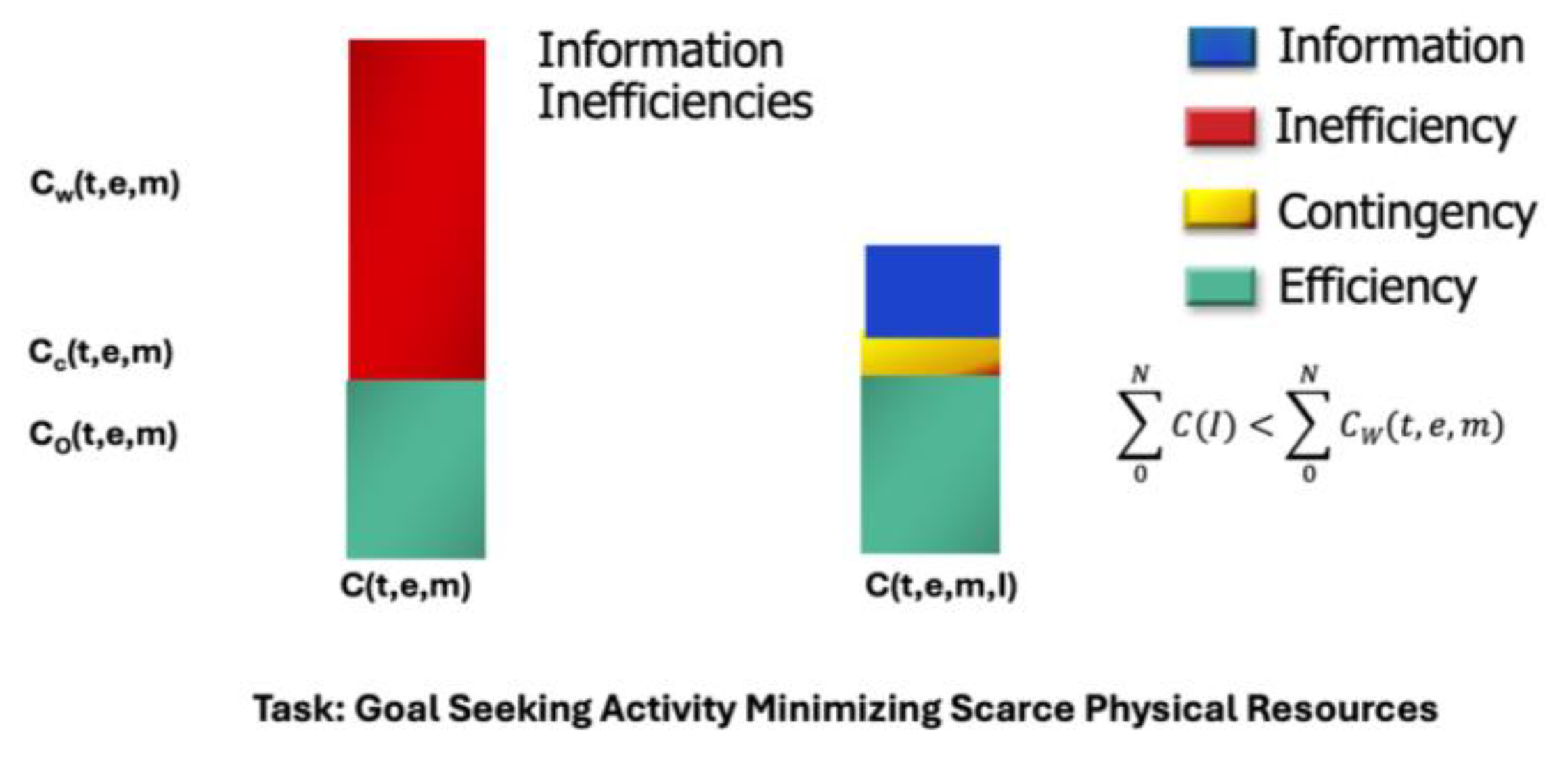

Information

5. HITL and HOOTL

6. Front Running Simulation Examples

Automotive Discrete Manufacturing Welding Line

- Achieve 1,040 units by end of shift

- Maintain acceptable weld quality thresholds

- Minimize overtime, energy spikes, and downstream rework

- Avoid unnecessary line stoppages that waste scarce physical resources
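
As a rough illustration of how candidate actions might be compared against the first goal above, the sketch below projects end-of-shift output for each action and picks the one with the highest predicted count. Only the 1,040-unit target comes from the example. The cycle times, the stoppage duration, the action names, and the constant-cycle-time model are hypothetical, and the quality, overtime, energy, and rework goals are deliberately left out.

```python
TARGET_UNITS = 1040  # end-of-shift goal from the example above


def predicted_units(units_done, minutes_left, cycle_time_s, stoppage_min=0.0):
    """Units expected by end of shift under a constant cycle time."""
    productive_s = max(0.0, minutes_left - stoppage_min) * 60.0
    return units_done + int(productive_s // cycle_time_s)


def choose_action(units_done, minutes_left, candidates):
    """Pick the candidate with the highest predicted end-of-shift output.

    candidates maps an action name to (cycle_time_s, stoppage_min).
    Returns (action_name, predicted_units, meets_target).
    """
    best_name, best_units = None, -1
    for name, (cycle_time_s, stoppage_min) in candidates.items():
        units = predicted_units(units_done, minutes_left, cycle_time_s, stoppage_min)
        if units > best_units:
            best_name, best_units = name, units
    return best_name, best_units, best_units >= TARGET_UNITS


# Hypothetical situation: 600 units done, 200 minutes left in the shift.
candidates = {
    "continue_degraded": (30.0, 0.0),        # slower cycle, no stoppage
    "short_maintenance_stop": (25.0, 10.0),  # 10-minute stop restores cycle time
}
print(choose_action(600, 200.0, candidates))
# ('short_maintenance_stop', 1056, True)
```

With these illustrative numbers, continuing at the degraded cycle time is predicted to reach 1,000 units, while the short stop is predicted to reach 1,056. A fuller model would also weigh each stoppage against the goal of avoiding unnecessary ones.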

Continuous Production Oil Refinery Facility

- Maintain throughput at 92% of maximum capacity

- Maximize yield of high-value middle distillates

- Stay within safety and emissions constraints

- Minimize energy consumption and flaring
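
One way to picture overwatch of the throughput goal above is a check that scans a simulated throughput trajectory and reports the first future step at which it leaves a band around 92% of maximum capacity. The sketch below is only that. The capacity value, the tolerance band, the sample trajectory, and the function name `first_deviation` are assumptions, and the yield, safety, emissions, and energy goals are not modeled.

```python
TARGET_FRACTION = 0.92  # throughput goal from the example above


def first_deviation(predicted_throughput, max_capacity, tolerance=0.01):
    """Return the index of the first predicted step outside the target band.

    predicted_throughput: simulated throughput values, one per future step
    max_capacity: maximum throughput in the same units (hypothetical value)
    tolerance: allowed deviation as a fraction of max capacity (an assumption)
    Returns None when every predicted step stays inside the band.
    """
    target = TARGET_FRACTION * max_capacity
    band = tolerance * max_capacity
    for step, value in enumerate(predicted_throughput):
        if abs(value - target) > band:
            return step
    return None


# Hypothetical trajectory drifting downward, with a capacity of 100,000 units/day.
trajectory = [92000.0, 91800.0, 91500.0, 90500.0, 90000.0]
print(first_deviation(trajectory, max_capacity=100000.0))  # 3
```

The returned step index indicates how far ahead the deviation is predicted, which is the lead time available for corrective action.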

7. Conclusion

- From reactive to proactive

- From isolated simulation to continuous synchronization with reality

- From resource-intensive trial-and-error to information-driven efficiency

Conflicts of Interest

References

- Grieves, M., Forward to Human-Centered Metaverse, in Human-Centered Metaverse: Concepts, Methods, Applications, C. Nam, D. Song, and H. Jeong, Editors. 2024, Elsevier. p. 400.

- Tao, F. Digital twin in industry: state-of-the-art. IEEE Transactions on Industrial Informatics 2018, 15(4), 2405–2415.

- Wooley, A.; Dimson, G.; Bitencourt, J. Digital Twins Across Domains: A Cross-Industry Umbrella Review of Systematic Literature Reviews. Systems Engineering 2026, p. e70049.

- Grieves, M. and J. Vickers, Digital Twin: Mitigating Unpredictable, Undesirable Emergent Behavior in Complex Systems, in Trans-Disciplinary Perspectives on System Complexity, F.-J. Kahlen, S. Flumerfelt, and A. Alves, Editors. 2017, Springer: Switzerland. p. 85–114.

- Grieves, M. Virtually Intelligent Product Systems: Digital and Physical Twins, in Complex Systems Engineering: Theory and Practice, S. Flumerfelt, et al., Editors. 2019, American Institute of Aeronautics and Astronautics. p. 175–200.

- Grieves, M. Completing the Cycle: Using PLM Information in the Sales and Service Functions [Slides]. in SME Management Forum. 2002. Troy, MI.

- Grieves, M. Driving Digital Continuity in Manufacturing. 2017; Available from: https://research.fit.edu/media/site-specific/researchfitedu/camid/documents/MWG-Digital-Continuity-Whitepaper-copy-(002).pdf.

- Grieves, M. Data Driven Digital Twins, in Research Handbook on Digital Data: Interdisciplinary Perspectives, A. Aaltonen, K. Lyytinen, and M. Stelmaszak, Editors. 2026, Edward Elgar Publishing: Northampton, MA. p. 89–107.

- Popper, K. Conjectures and refutations: The growth of scientific knowledge. 2014: Routledge.

- Spelke, E.S. Origins of knowledge. Psychological review 1992, 99, 605.

- Baillargeon, R. Physical reasoning in infancy. In The cognitive neurosciences; 1995; pp. 181–204.

- Baillargeon, R. Object permanence in 3½-and 4½-month-old infants. Developmental psychology 1987, 23(5), 655.

- Grieves, M. DIKW As a General and Digital Twin Action Framework: Data, Information, Knowledge, and Wisdom. Knowledge 2024, 4(2), 120–140.

- Reinsel, D.; Gantz, J.; Rydning, J. The digitization of the world from edge to core. Framingham: International Data Corporation, 2018. 16: p. 1–28.

- Grieves, M.; Hua, E. Defining, Exploring, and Simulating the Digital Twin Metaverses, in Digital Twins, Simulation, and Metaverse: Driving Efficiency and Effectiveness in the Physical World through Simulation in the Virtual Worlds, M. Grieves and E. Hua, Editors. 2024, Springer.

- Hartmann, S. The world as a process: Simulations in the natural and social sciences, in Modelling and simulation in the social sciences from the philosophy of science point of view. 1996, Springer. p. 77–100.

- Schriber, T.J. Simulation using GPSS. 1974, New York: Wiley. xv, 533 p.

- Guo, Y. Digital twins for electro-physical, chemical, and photonic processes. CIRP Annals 2023, 72(2), 593–619.

- Pearl, J. Statistics and causal inference: A review. Test 2003, 12, 281–345.

- Chen, D. Digital twin for federated analytics using a Bayesian approach. IEEE Internet of Things Journal 2021, 8(22), 16301–16312.

- Khatun, Z. Hybrid Digital Twin and Monte Carlo Simulation For Reliability Of Electrified Manufacturing Lines With High Power Electronics. International Journal of Scientific Interdisciplinary Research 2025, 6(2), 143–194.

- Korb, K.B.; Nicholson, A.E. Bayesian artificial intelligence. 2010: CRC Press.

- Zins, C. What is the meaning of "data", "information", and "knowledge". Dr. Chaim Zins, 2009.

- Grieves, M. Product Lifecycle Management: Driving the Next Generation of Lean Thinking. 2006, New York: McGraw-Hill. 319.

- Wills, I. The Edisonian Method: Trial and Error, in Thomas Edison: Success and Innovation through Failure, I. Wills, Editor. 2019, Springer International Publishing: Cham. p. 203–222.

- Cortada, J.W. Boundaries between explicit and tacit knowledge: data’s world. Research Handbook on Digital Data: Interdisciplinary Perspectives 2026, 20.

- Simon, H.A. A behavioral model of rational choice. The quarterly journal of economics 1955, 99–118.

- Benkler, Y. The wealth of networks: how social production transforms markets and freedom. 2006, New Haven, Conn.: Yale University Press. xii, 515 pages.

- Kahneman, D. Thinking, fast and slow. 1st ed. 2011, New York: Farrar, Straus and Giroux. 499 p.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).