Submitted: 15 April 2026

Posted: 16 April 2026

Abstract

Keywords:

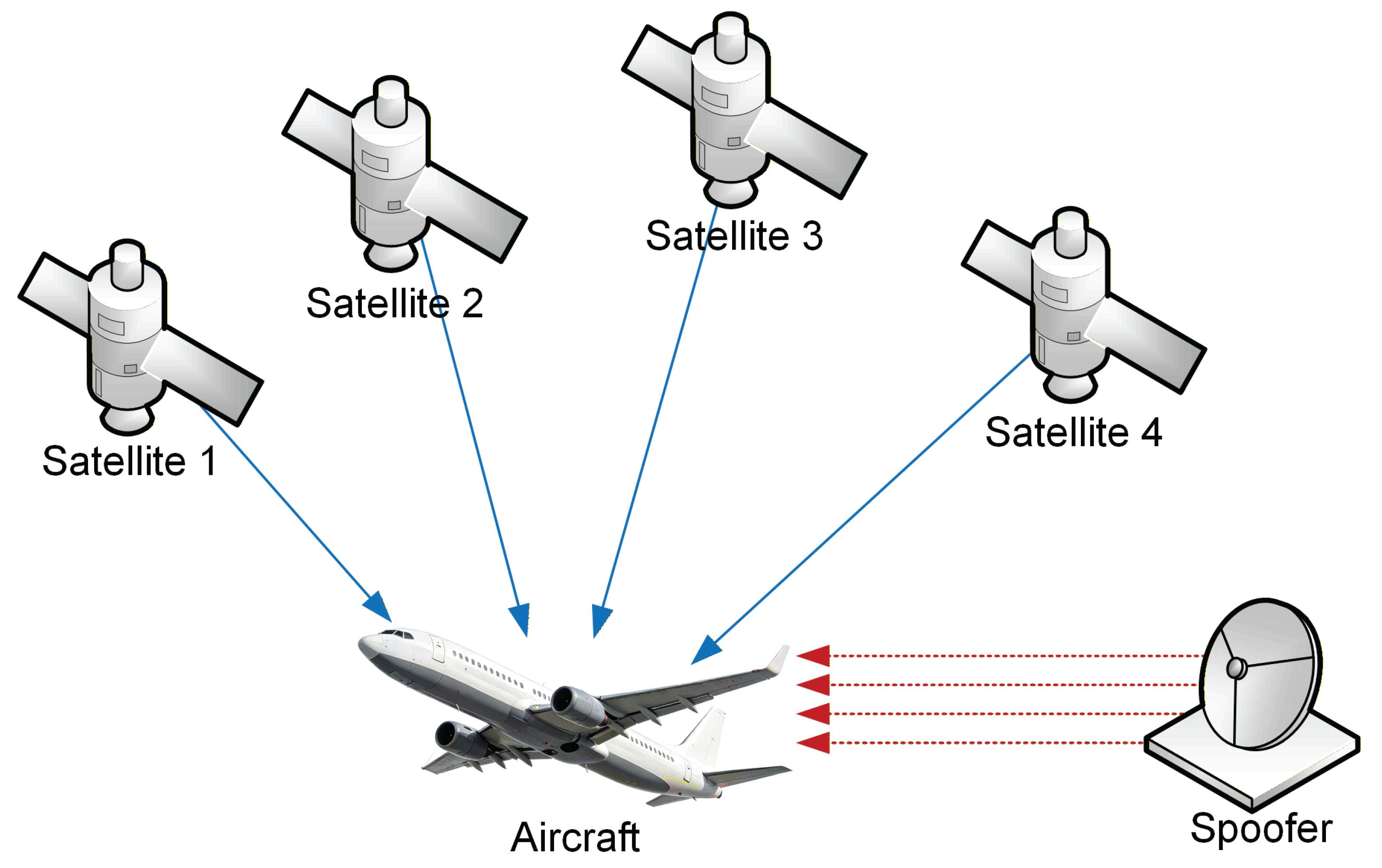

1. Introduction

1.1. Related Work

1.1.1. Signal Processing-Based Techniques

1.1.2. Machine Learning-Based Classifiers

1.1.3. Hybrid Detection Frameworks

1.2. The Gap in the Literature and the Contribution of This Study

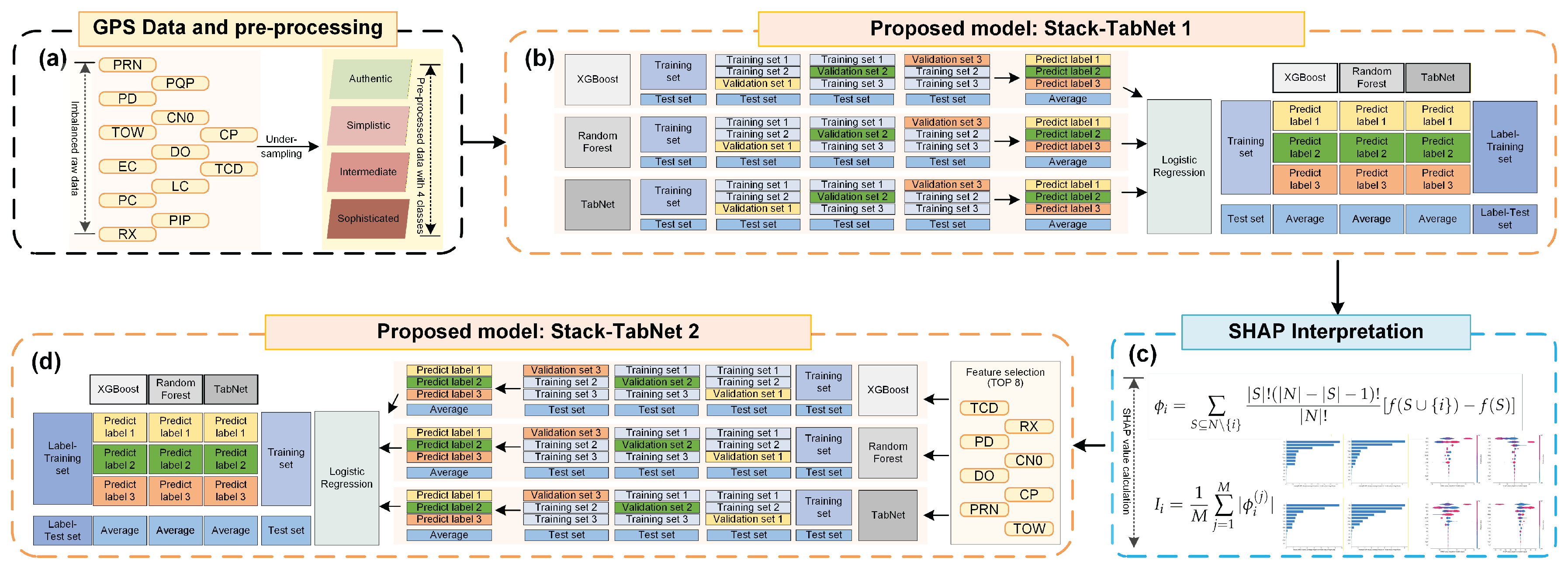

- (1)

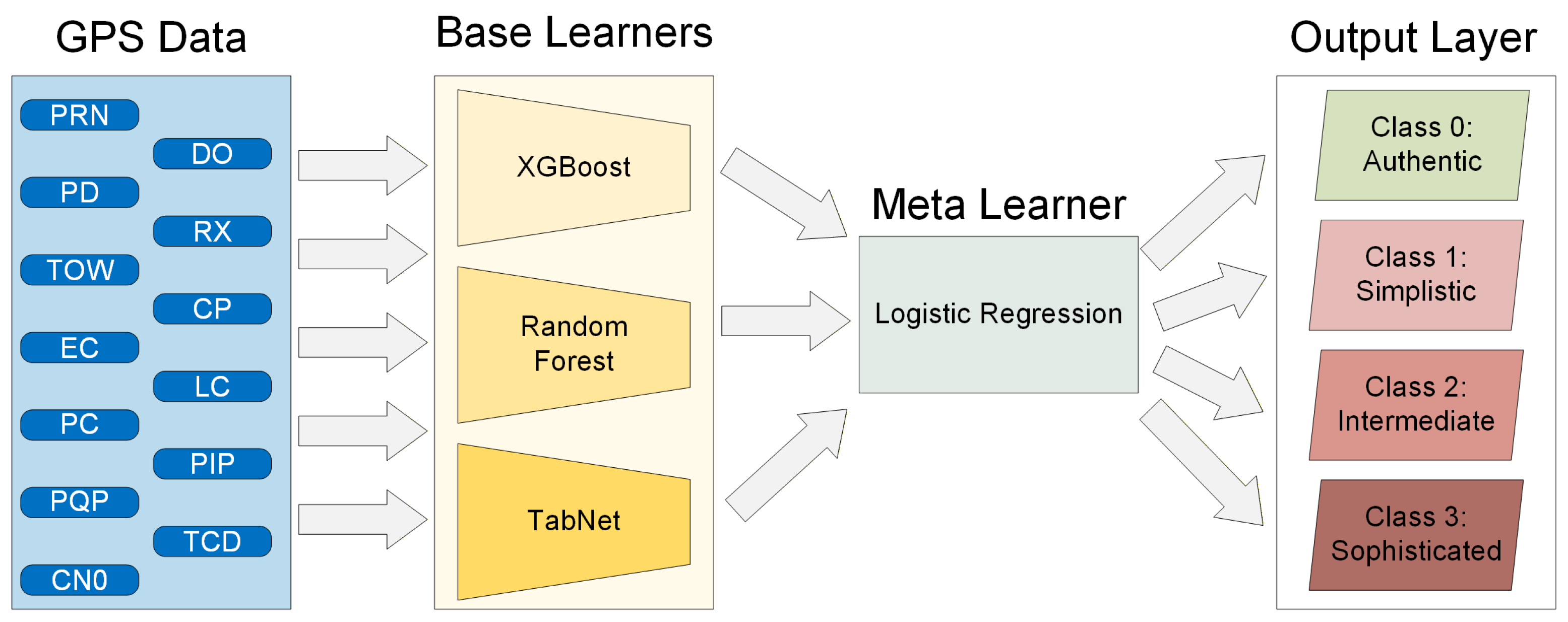

- A novel stacked ensemble learning framework tailored for GPS spoofing detection is proposed. Considering the complexity of signal features in spoofing scenarios, we integrate the attention-based TabNet network with traditional tree-based models within a stacking architecture. The newly proposed Stack-TabNet model dramatically improves the detection robustness and saves computing resources compared to standalone deep learning models. Therefore, our proposed ensemble method has significant performance improvement compared with traditional machine learning methods.

- (2)

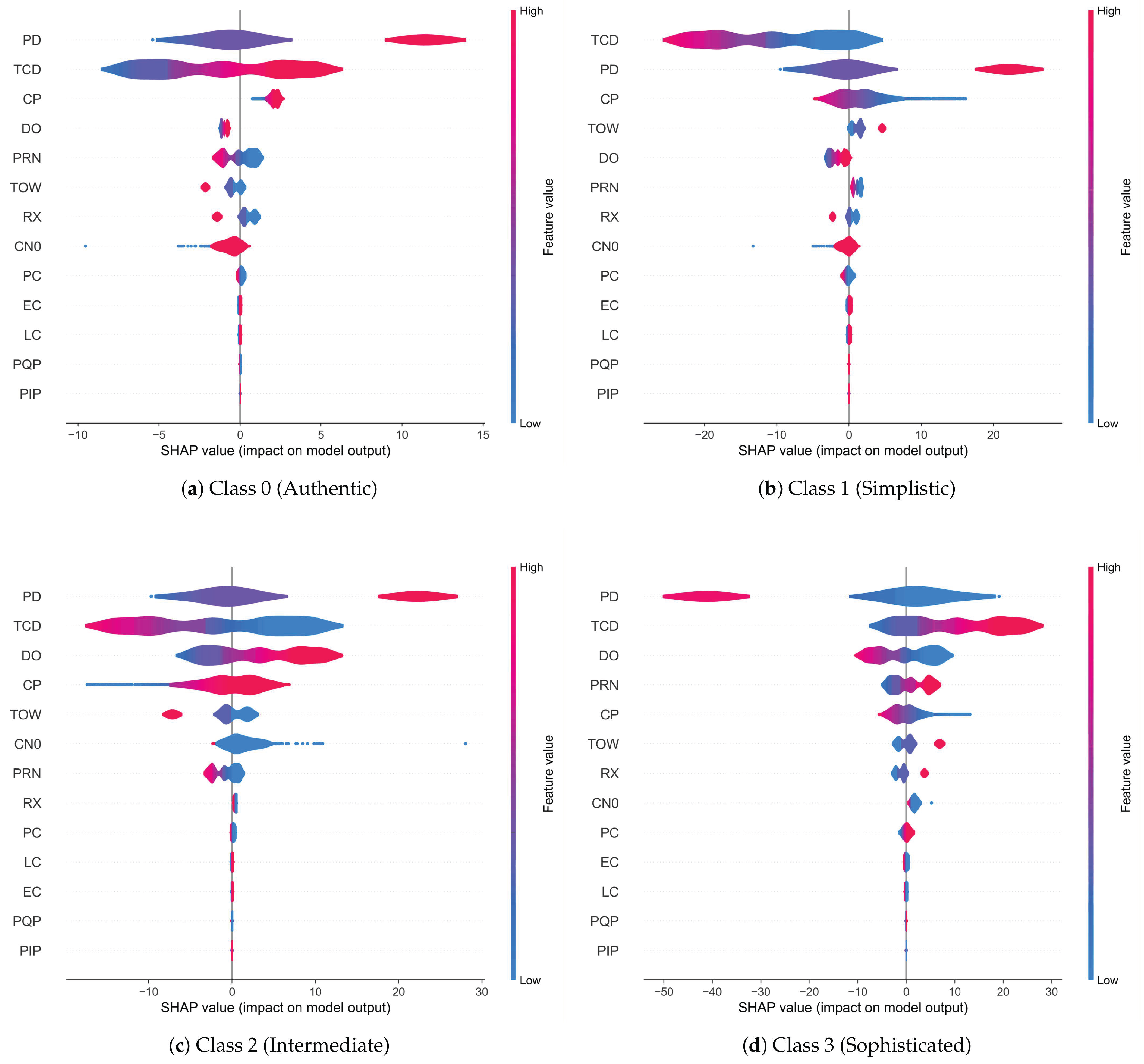

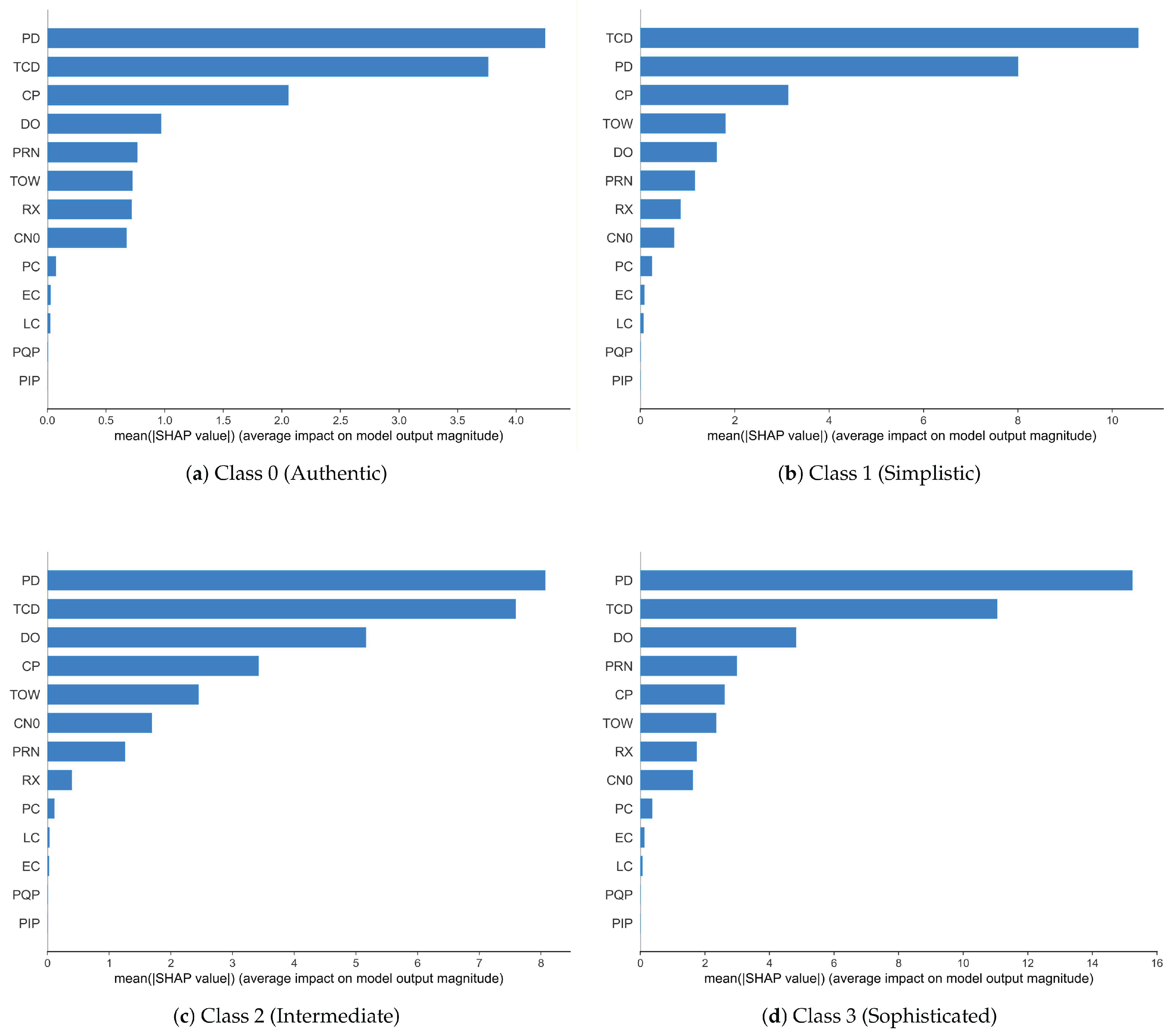

- We introduce a comprehensive interpretability analysis for GPS spoofing detection models. For the first time in this context, we employ SHapley Additive exPlanations (SHAP) to decode the decision-making process of the TabNet-based ensemble. A total of 13 signal features are analyzed, which can be used to study the physical characteristics distinguishing spoofed signals from authentic ones. It is worth mentioning that the interpretability analysis is conducted in real-world navigation scenarios, which is a challenging process due to the high dimensionality of signal data. In addition, visualizing feature contributions is a critical task due to the safety-critical nature of aviation navigation systems.

- (3) Through SHAP-guided feature selection, a refined detection variant is developed to reduce model complexity and improve computational efficiency. By retaining only the most informative features, training becomes less dependent on high-dimensional inputs, which is particularly advantageous for real-time GPS monitoring applications. Experimental results show that the optimized model, Stack-TabNet2, uses only the top 8 of the 13 features yet exceeds the accuracy of the full-feature model (95.91% versus 93.37%) and of the traditional supervised learning baselines, while reducing computational overhead.
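The SHAP-guided selection described in contribution (3) can be sketched as ranking features by mean absolute SHAP value and keeping the top k. The snippet below is a minimal illustration under stated assumptions: the ranking criterion (mean |SHAP|) is a common convention and is not confirmed here as the authors' exact policy; the function name `top_k_features` and the toy attribution matrix are hypothetical; and the numbers are invented, so the resulting order says nothing about the paper's actual ranking.

```python
from typing import List, Sequence


def top_k_features(shap_values: Sequence[Sequence[float]],
                   feature_names: Sequence[str],
                   k: int) -> List[str]:
    """Rank features by mean absolute attribution and return the top-k names.

    shap_values is a samples x features matrix of attributions
    (hypothetical input; a real run would take it from a SHAP explainer).
    """
    n_samples = len(shap_values)
    mean_abs = [
        sum(abs(row[j]) for row in shap_values) / n_samples
        for j in range(len(feature_names))
    ]
    # Sort feature indices from most to least influential.
    order = sorted(range(len(feature_names)),
                   key=lambda j: mean_abs[j], reverse=True)
    return [feature_names[j] for j in order[:k]]


# Toy example: four of the dataset's feature names, invented attributions.
names = ["DO", "PD", "CP", "CN0"]
toy_shap = [
    [0.10, -0.40, 0.05, 0.30],
    [-0.20, 0.50, 0.00, -0.10],
    [0.15, -0.30, 0.10, 0.20],
]
selected = top_k_features(toy_shap, names, k=2)
print(selected)
```

In the paper's setting, k would be 8 and the matrix would cover all 13 features. For a multi-class model the per-class SHAP values would first need to be aggregated, which this sketch omits.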

2. Materials and Methods

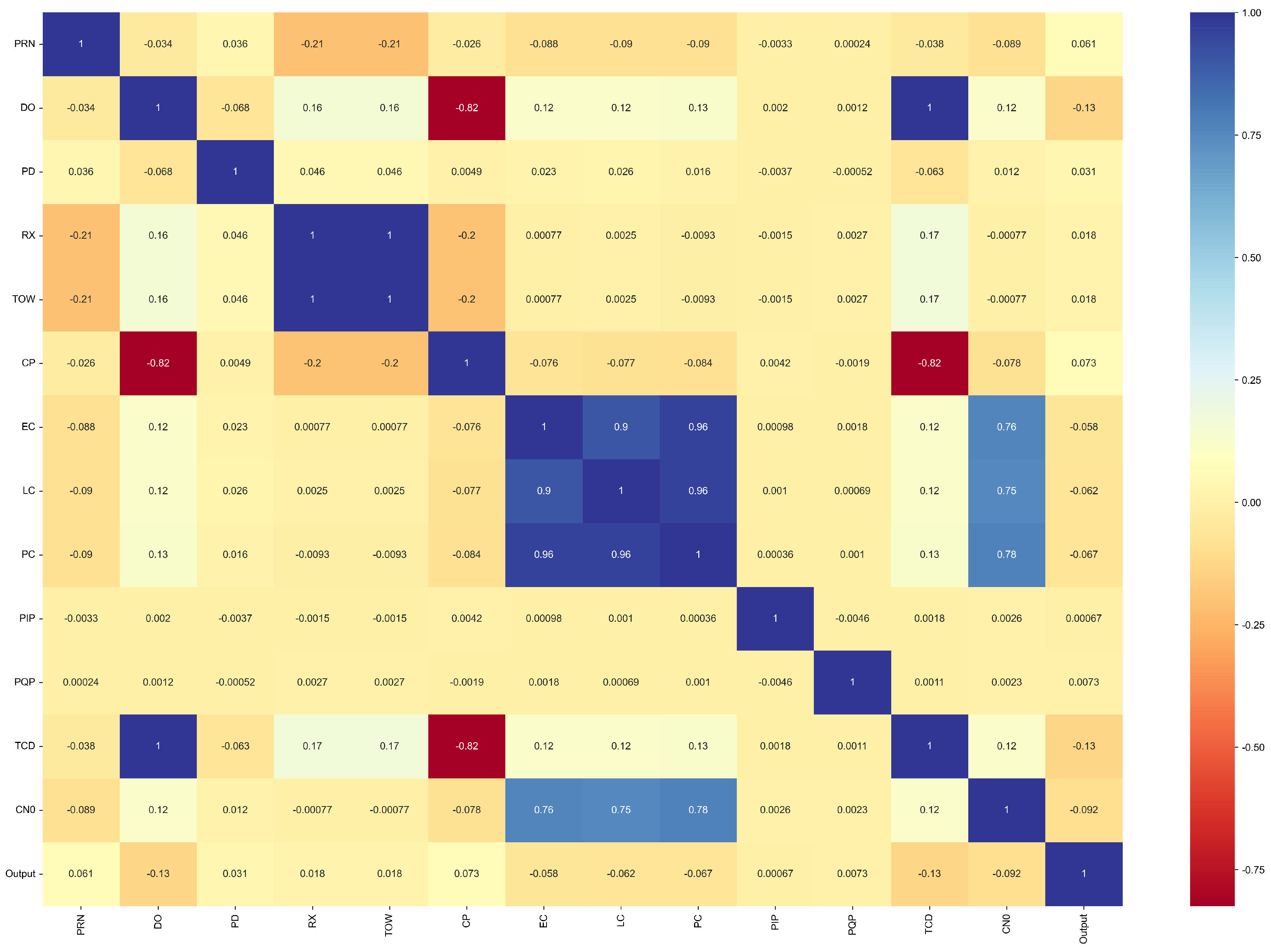

2.1. Dataset Description and Preliminary Analysis

2.2. Stack-TabNet Ensemble Model

2.2.1. Base Learners Configuration

2.2.2. Stacking Mechanism and Meta-Learning

2.2.3. Training Strategy

2.3. Feature Interpretability and Selection Policy

3. Experiments and Results

3.1. Experimental Setup

3.2. Comparative Experiments

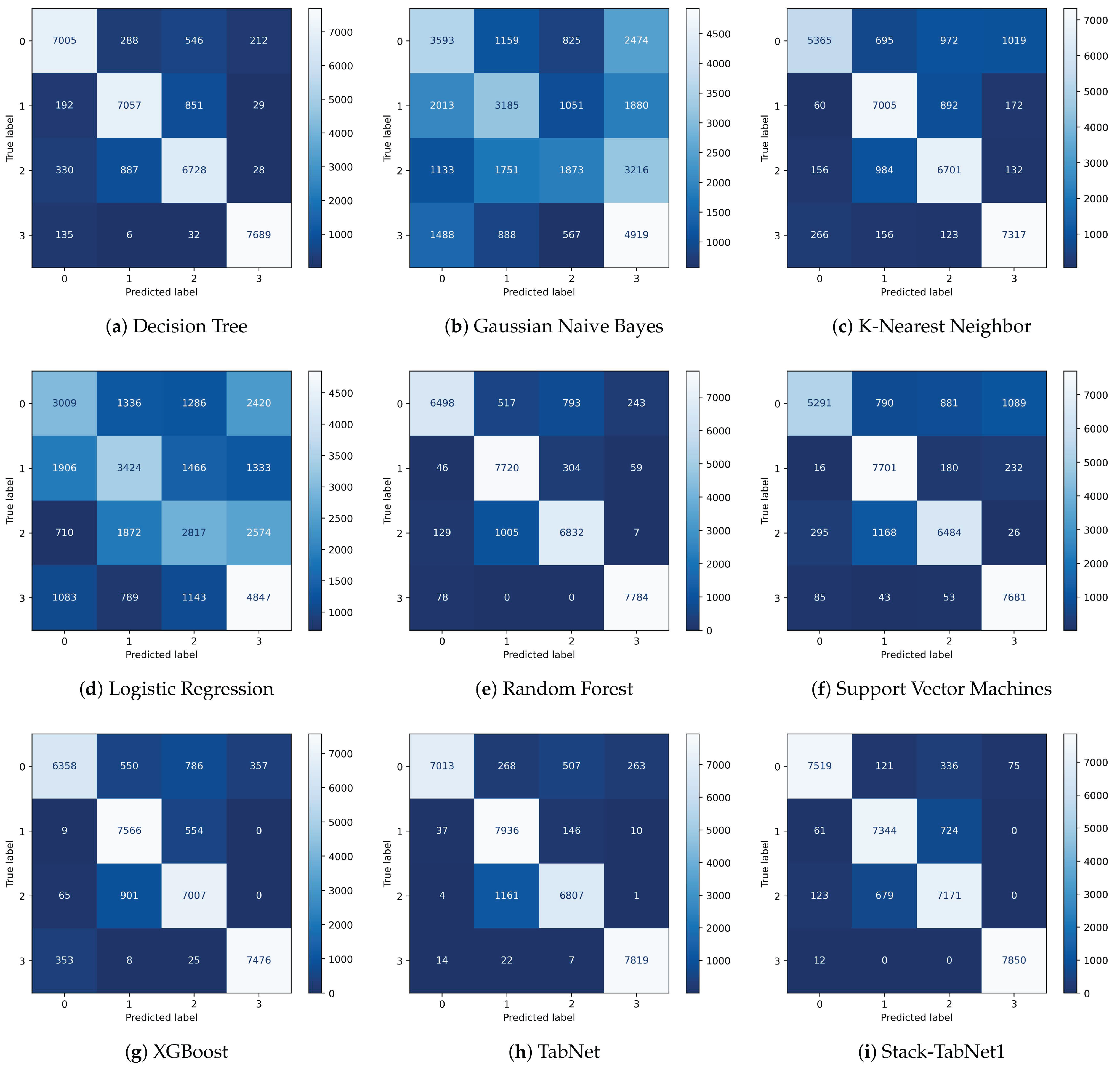

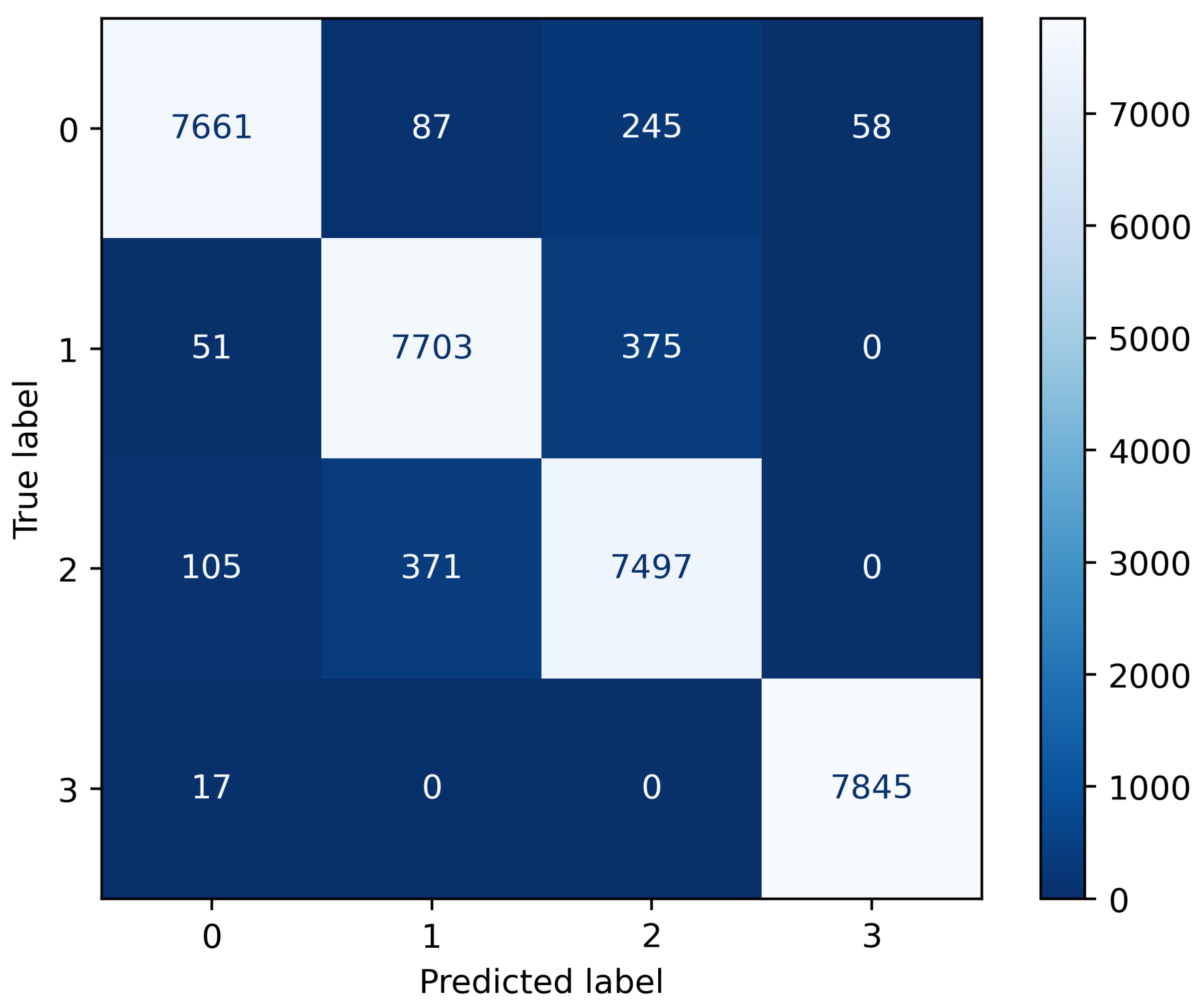

3.2.1. Confusion Matrix Analysis

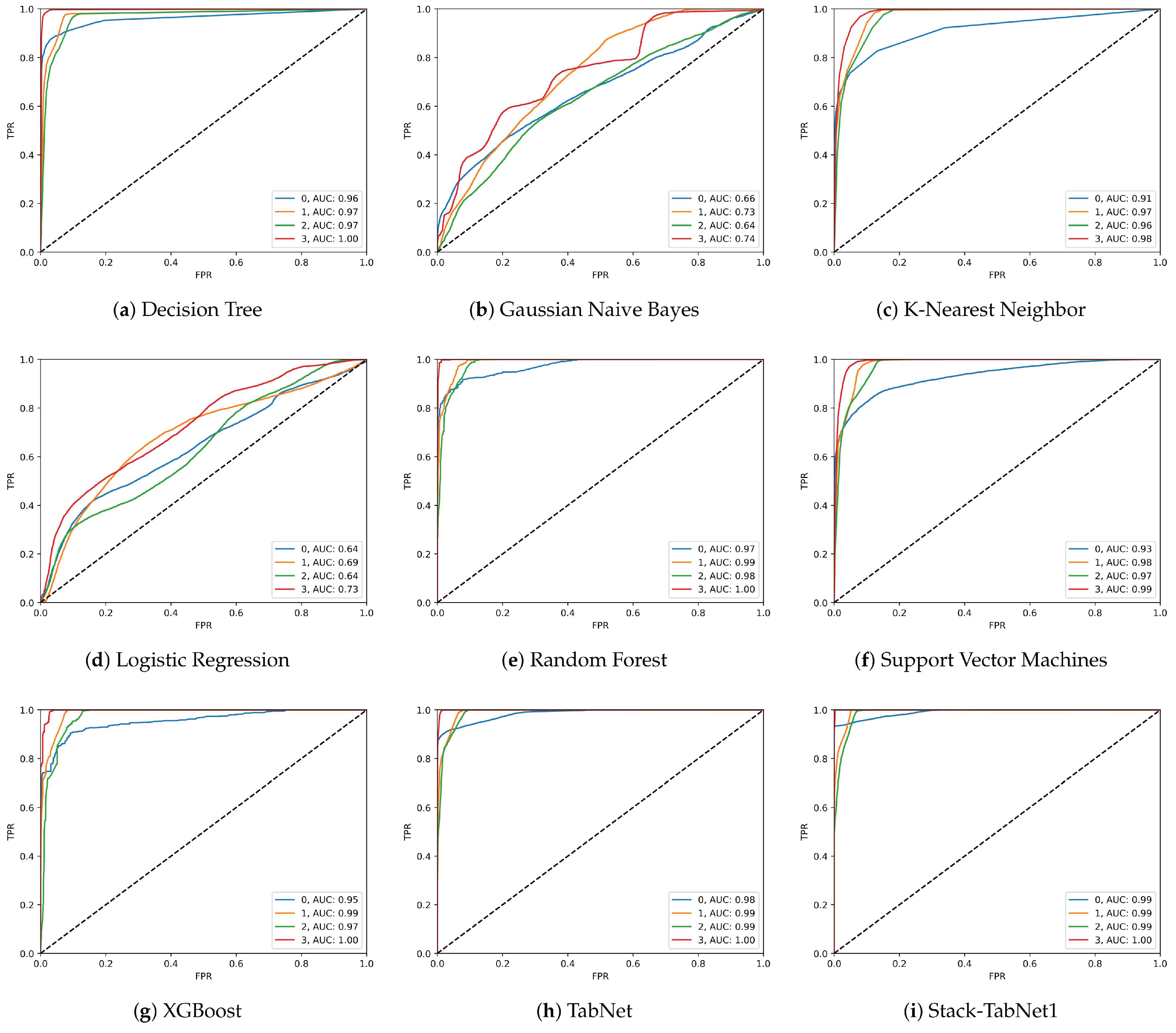

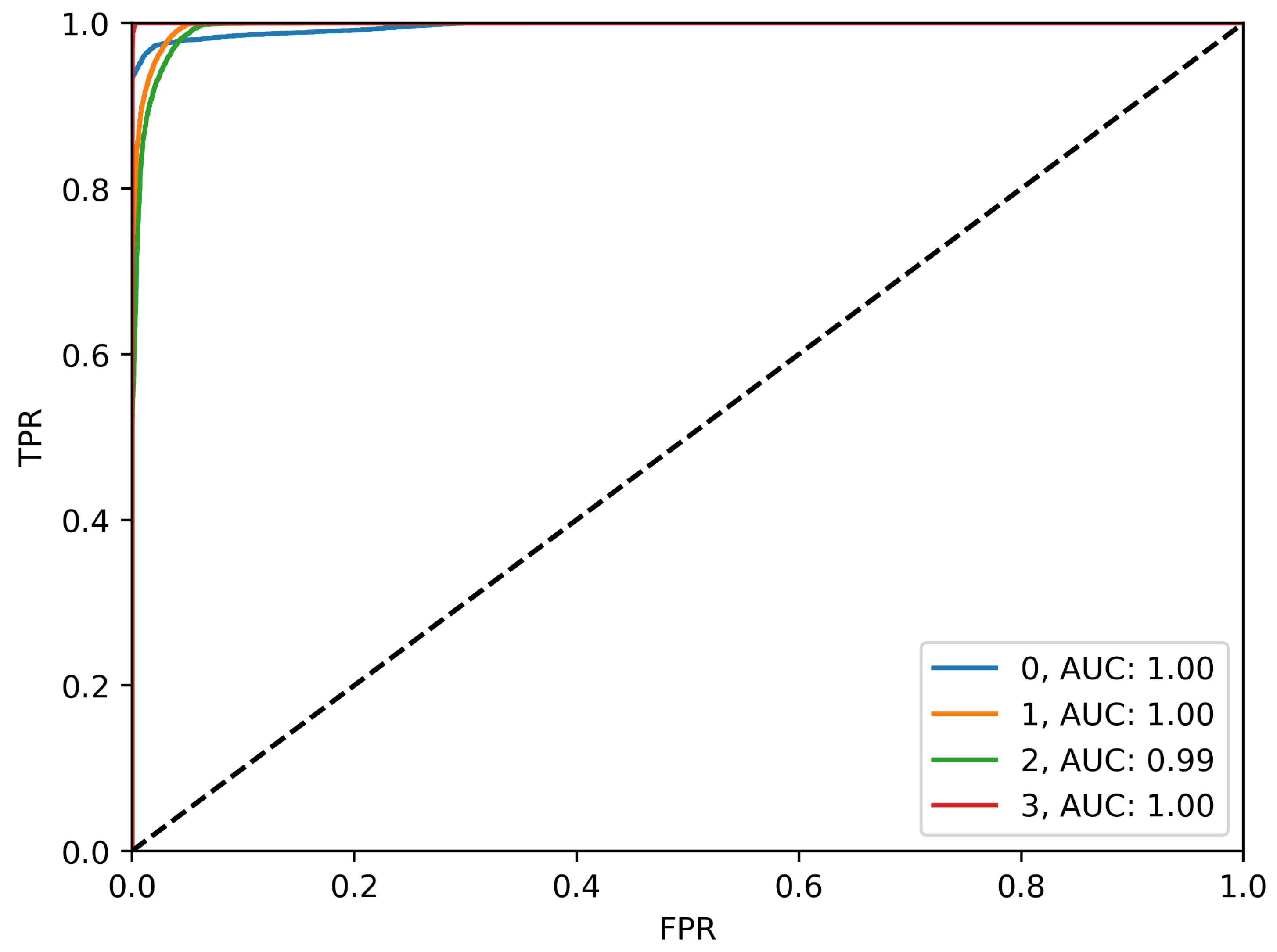

3.2.2. ROC-AUC Curve Analysis

3.2.3. Per-Class Performance Metrics

3.2.4. Overall Accuracy Comparison

3.3. SHAP Interpretability Analysis

3.3.1. SHAP Violin Plot Analysis

3.3.2. Feature Importance Ranking

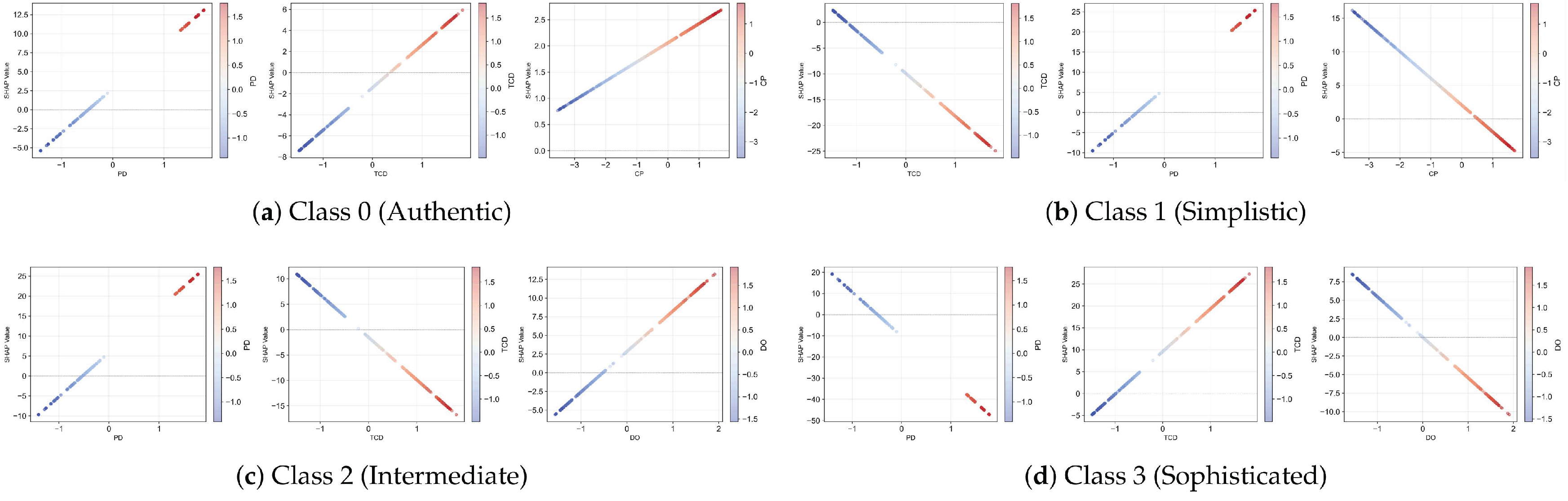

3.3.3. SHAP Dependence Plot Analysis

3.4. SHAP-Driven Feature Selection and Stack-TabNet2 Performance

3.4.1. Performance Evaluation of Stack-TabNet2

3.4.2. Comparative Analysis: Stack-TabNet1 vs. Stack-TabNet2

4. Discussion

5. Conclusions

Author Contributions

Data Availability Statement

Conflicts of Interest


| Feature | Description |
|---|---|
| PRN | Pseudo-Random Noise identifying the specific satellite source. |
| DO | Doppler Offset measuring frequency shift due to relative motion (Hz). |
| PD | Pseudorange estimating satellite-receiver distance based on travel time (m). |
| RX | Receiver Time recorded by the local hardware clock. |
| TOW | Time of Week indicating seconds elapsed since the GPS week start. |
| CP | Carrier Phase capturing accumulated phase difference (cycles). |
| EC | Early Correlation value used for code tracking loops. |
| LC | Late Correlation value utilized for code tracking loops. |
| PC | Prompt Correlation value employed for data demodulation. |
| PIP | Prompt I-Prompt representing the in-phase correlation component. |
| PQP | Prompt Q-Prompt representing the quadrature-phase correlation component. |
| TCD | Time of Code Delay between received and local code (s). |
| CN0 | Carrier-to-Noise Density Ratio indicating signal strength (dB-Hz). |

| Category | Number of Samples |
|---|---|
| Total number of samples | 128,060 |
| Training samples (75.00%) | 96,045 |
| Testing samples (25.00%) | 32,015 |
| Class Distribution in Training Set | |
| Class 0 (Authentic) | 23,964 |
| Class 1 (Simplistic) | 23,886 |
| Class 2 (Intermediate) | 24,042 |
| Class 3 (Sophisticated) | 24,153 |
| Class Distribution in Testing Set | |
| Class 0 (Authentic) | 8,051 |
| Class 1 (Simplistic) | 8,129 |
| Class 2 (Intermediate) | 7,973 |
| Class 3 (Sophisticated) | 7,862 |
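The split counts above can be cross-checked with a few lines of arithmetic. The sketch below only re-adds the numbers from the table; the variable names are illustrative. It also shows that each class totals 32,015 samples across the two sets, which suggests a class-balanced dataset that was split at random rather than per class (an inference from the counts, not something the table states).

```python
# Counts copied from the dataset table; variable names are illustrative.
train = {0: 23964, 1: 23886, 2: 24042, 3: 24153}
test = {0: 8051, 1: 8129, 2: 7973, 3: 7862}

n_train = sum(train.values())
n_test = sum(test.values())
n_total = n_train + n_test

# Fraction of all samples that ended up in the training set.
train_share = n_train / n_total

# Per-class totals across both sets reveal whether the dataset is balanced.
per_class_total = {c: train[c] + test[c] for c in train}

print(n_train, n_test, n_total)
print(f"train share: {train_share:.2%}")
print(per_class_total)
```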

| Model (precision) | Class 0 | Class 1 | Class 2 | Class 3 | Macro Avg |
|---|---|---|---|---|---|
| Decision Tree | 0.9152 | 0.8563 | 0.8251 | 0.9675 | 0.8910 |
| Gaussian Naive Bayes | 0.4369 | 0.4589 | 0.4375 | 0.3943 | 0.4319 |
| K-Nearest Neighbor | 0.9085 | 0.7896 | 0.7677 | 0.8439 | 0.8274 |
| Logistic Regression | 0.4487 | 0.4629 | 0.4195 | 0.4338 | 0.4412 |
| Random Forest | 0.9675 | 0.8328 | 0.8659 | 0.9634 | 0.9074 |
| Support Vector Machines | 0.9265 | 0.7863 | 0.6914 | 0.8492 | 0.8134 |
| XGBoost | 0.9368 | 0.8372 | 0.8354 | 0.9500 | 0.8899 |
| TabNet | 0.9847 | 0.8462 | 0.9150 | 0.9612 | 0.9268 |
| Stack-TabNet1 | 0.9765 | 0.9038 | 0.9349 | 0.9905 | 0.9514 |

| Model (recall) | Class 0 | Class 1 | Class 2 | Class 3 | Macro Avg |
|---|---|---|---|---|---|
| Decision Tree | 0.8693 | 0.8636 | 0.8438 | 0.9778 | 0.8886 |
| Gaussian Naive Bayes | 0.4476 | 0.3898 | 0.4025 | 0.6246 | 0.4661 |
| K-Nearest Neighbor | 0.6684 | 0.8574 | 0.8395 | 0.9326 | 0.8245 |
| Logistic Regression | 0.3749 | 0.4190 | 0.3531 | 0.6154 | 0.4406 |
| Random Forest | 0.8064 | 0.9448 | 0.8560 | 0.9883 | 0.8989 |
| Support Vector Machines | 0.6592 | 0.9408 | 0.8123 | 0.9752 | 0.8469 |
| XGBoost | 0.7921 | 0.9261 | 0.8779 | 0.9492 | 0.8863 |
| TabNet | 0.8737 | 0.9754 | 0.8530 | 0.9928 | 0.9237 |
| Stack-TabNet1 | 0.9369 | 0.9008 | 0.8985 | 0.9987 | 0.9337 |

| Model (F1-score) | Class 0 | Class 1 | Class 2 | Class 3 | Macro Avg |
|---|---|---|---|---|---|
| Decision Tree | 0.8916 | 0.8600 | 0.8344 | 0.9726 | 0.8897 |
| Gaussian Naive Bayes | 0.4422 | 0.4215 | 0.4196 | 0.4833 | 0.4416 |
| K-Nearest Neighbor | 0.7707 | 0.8220 | 0.8020 | 0.8861 | 0.8202 |
| Logistic Regression | 0.4085 | 0.4400 | 0.3849 | 0.5090 | 0.4356 |
| Random Forest | 0.8796 | 0.8852 | 0.8609 | 0.9757 | 0.9004 |
| Support Vector Machines | 0.7704 | 0.8567 | 0.7468 | 0.9079 | 0.8205 |
| XGBoost | 0.8584 | 0.8794 | 0.8561 | 0.9496 | 0.8859 |
| TabNet | 0.9259 | 0.9060 | 0.8830 | 0.9767 | 0.9229 |
| Stack-TabNet1 | 0.9563 | 0.9023 | 0.9163 | 0.9946 | 0.9424 |
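The three per-class tables above are linked by the standard definition F1 = 2PR / (P + R), with the macro average being the unweighted mean over the four classes. The sketch below recomputes the Stack-TabNet1 row as a consistency check. Reading the first table as precision and the second as recall is an inference from the values (the tables themselves carry no metric label in the source), and the variable names are illustrative.

```python
# Stack-TabNet1 rows copied from the first two per-class tables,
# interpreted here as precision and recall (an inference, see lead-in).
precision = [0.9765, 0.9038, 0.9349, 0.9905]
recall = [0.9369, 0.9008, 0.8985, 0.9987]


def f1_score(p: float, r: float) -> float:
    """Harmonic mean of precision and recall."""
    return 2 * p * r / (p + r)


per_class_f1 = [f1_score(p, r) for p, r in zip(precision, recall)]

# Macro average: plain mean over classes, ignoring class sizes.
macro_f1 = sum(per_class_f1) / len(per_class_f1)

print([round(f, 4) for f in per_class_f1])
print(round(macro_f1, 4))
```

The recomputed values agree with the Stack-TabNet1 row of the third table to four decimal places, which supports reading the three tables as precision, recall, and F1-score in that order.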

| Model | Weighted Precision | Weighted Recall | Weighted F1-Score | Accuracy (%) |
|---|---|---|---|---|
| Gaussian Naive Bayes | 0.4320 | 0.4661 | 0.4417 | 46.61 |
| Logistic Regression | 0.4413 | 0.4406 | 0.4357 | 44.06 |
| K-Nearest Neighbor | 0.8275 | 0.8245 | 0.8203 | 82.45 |
| Support Vector Machines | 0.8135 | 0.8469 | 0.8206 | 84.69 |
| XGBoost | 0.8899 | 0.8863 | 0.8860 | 88.63 |
| Decision Tree | 0.8896 | 0.8896 | 0.8896 | 88.96 |
| Random Forest | 0.9075 | 0.8989 | 0.9005 | 89.89 |
| TabNet | 0.9268 | 0.9237 | 0.9230 | 92.37 |
| Stack-TabNet1 | 0.9515 | 0.9337 | 0.9425 | 93.37 |

| Metric (Stack-TabNet2) | Class 0 | Class 1 | Class 2 | Class 3 | Macro Avg |
|---|---|---|---|---|---|
| Precision | 0.9786 | 0.9539 | 0.9584 | 0.9926 | 0.9709 |
| Recall | 0.9545 | 0.9448 | 0.9393 | 0.9978 | 0.9591 |
| F1-Score | 0.9664 | 0.9493 | 0.9488 | 0.9952 | 0.9649 |

| Model | Input Features | Weighted F1-Score | Accuracy (%) |
|---|---|---|---|
| Stack-TabNet1 | 13 | 0.9425 | 93.37 |
| Stack-TabNet2 | 8 | 0.9649 | 95.91 |
| Improvement | -5 features (-38.5%) | +2.37% (relative) | +2.54 percentage points |
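The improvement row mixes two kinds of change, which the following sketch makes explicit by recomputing each from the two model rows. The variable names are illustrative. The accuracy gain is an absolute difference in percentage points, whereas the F1 figure matches a relative change; recomputing the latter from the rounded table values gives roughly 2.4%, in line with the reported 2.37%, which presumably derives from unrounded scores.

```python
# Values copied from the comparison table; names are illustrative.
full = {"features": 13, "f1": 0.9425, "acc": 93.37}
reduced = {"features": 8, "f1": 0.9649, "acc": 95.91}

# Feature reduction, absolute and as a share of the original 13.
feature_cut = full["features"] - reduced["features"]
feature_cut_pct = 100 * feature_cut / full["features"]

# Accuracy: absolute gain (percentage points) and relative gain (percent).
acc_gain_pp = reduced["acc"] - full["acc"]
acc_gain_rel = 100 * acc_gain_pp / full["acc"]

# F1: absolute gain and relative gain (percent).
f1_gain_abs = reduced["f1"] - full["f1"]
f1_gain_rel = 100 * f1_gain_abs / full["f1"]

print(f"features: -{feature_cut} ({feature_cut_pct:.1f}%)")
print(f"accuracy: +{acc_gain_pp:.2f} pp, +{acc_gain_rel:.2f}% relative")
print(f"F1: +{f1_gain_abs:.4f} absolute, +{f1_gain_rel:.2f}% relative")
```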

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).