Submitted: 14 April 2026

Posted: 15 April 2026


Abstract

Keywords:

1. Introduction

2. Literature Review

2. Materials and Methods

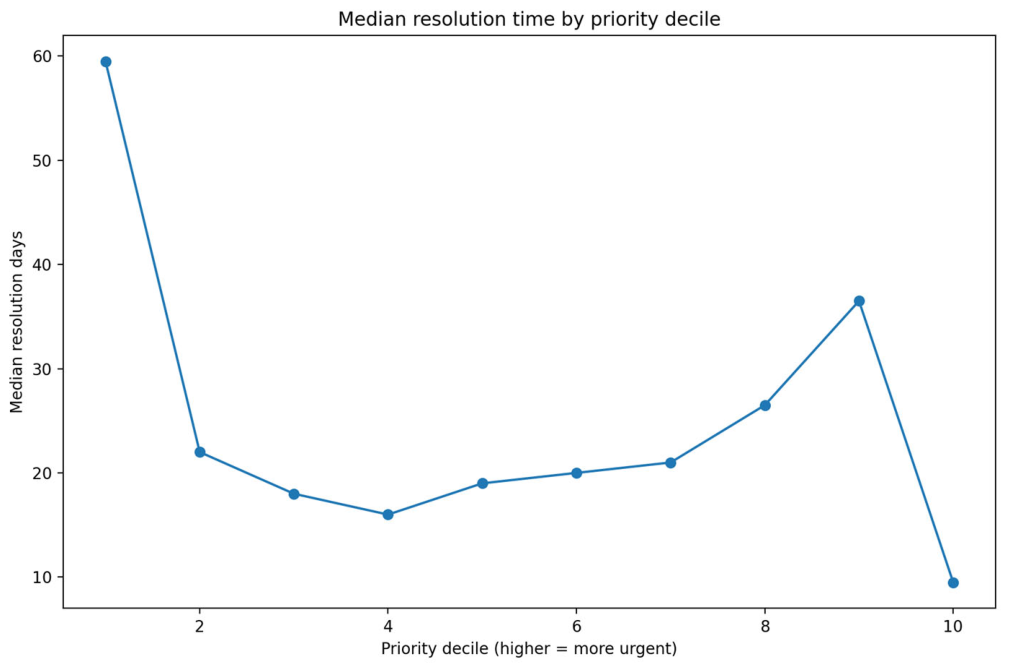

3. Results

4. Discussion

5. Conclusions

Funding

Data Availability Statement

Conflicts of Interest

References

- Goodchild, M. F. Citizens as sensors: The world of volunteered geography. GeoJournal 2007, 69(4), 211–221.

- Haklay, M. How good is volunteered geographical information? A comparative study of OpenStreetMap and Ordnance Survey datasets. Environment and Planning B: Planning and Design 2010, 37(4), 682–703.

- Devlin, J.; Chang, M.-W.; Lee, K.; Toutanova, K. BERT: Pre-training of deep bidirectional transformers for language understanding. In Proceedings of NAACL-HLT; 2019.

- Conneau, A.; Khandelwal, K.; Goyal, N.; Chaudhary, V.; Wenzek, G.; Guzmán, F.; Stoyanov, V. Unsupervised cross-lingual representation learning at scale. In Proceedings of ACL; 2020.

- Blei, D. M.; Ng, A. Y.; Jordan, M. I. Latent Dirichlet allocation. Journal of Machine Learning Research 2003, 3, 993–1022.

- Grootendorst, M. BERTopic: Neural topic modeling with a class-based TF–IDF procedure. arXiv 2022, arXiv:2203.05794.

- Anselin, L. Local indicators of spatial association—LISA. Geographical Analysis 1995, 27(2), 93–115.

- Kulldorff, M. A spatial scan statistic. Communications in Statistics—Theory and Methods 1997, 26(6), 1481–1496.

- Truong, C.; Oudre, L.; Vayatis, N. A review of change point detection methods. arXiv 2018, arXiv:1801.00718.

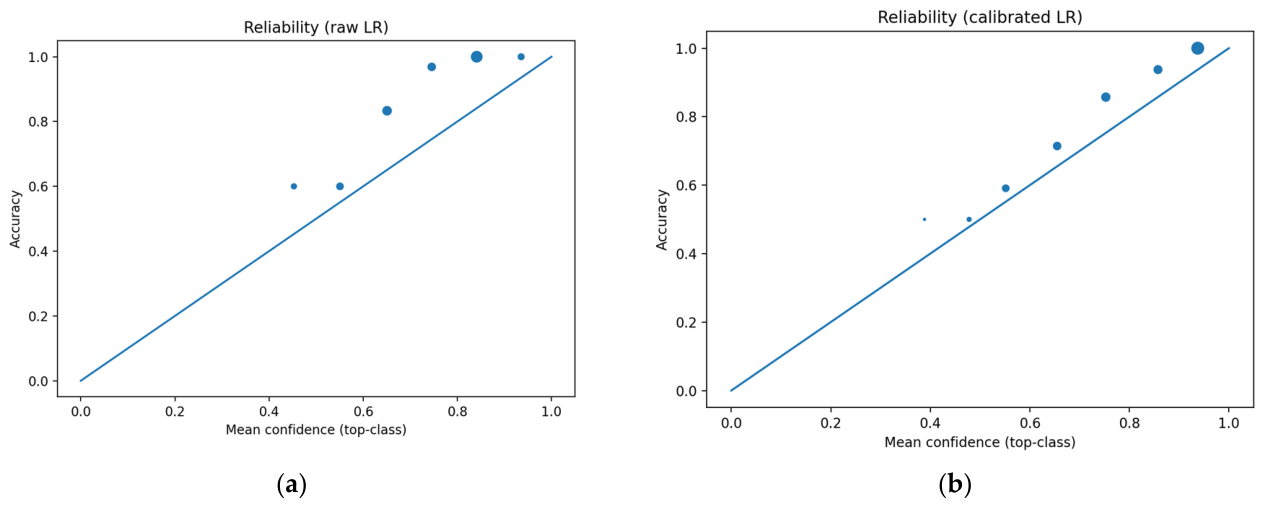

- Guo, C.; Pleiss, G.; Sun, Y.; Weinberger, K. Q. On calibration of modern neural networks. In Proceedings of ICML; 2017.

- Niculescu-Mizil, A.; Caruana, R. Predicting good probabilities with supervised learning. In Proceedings of ICML; 2005.

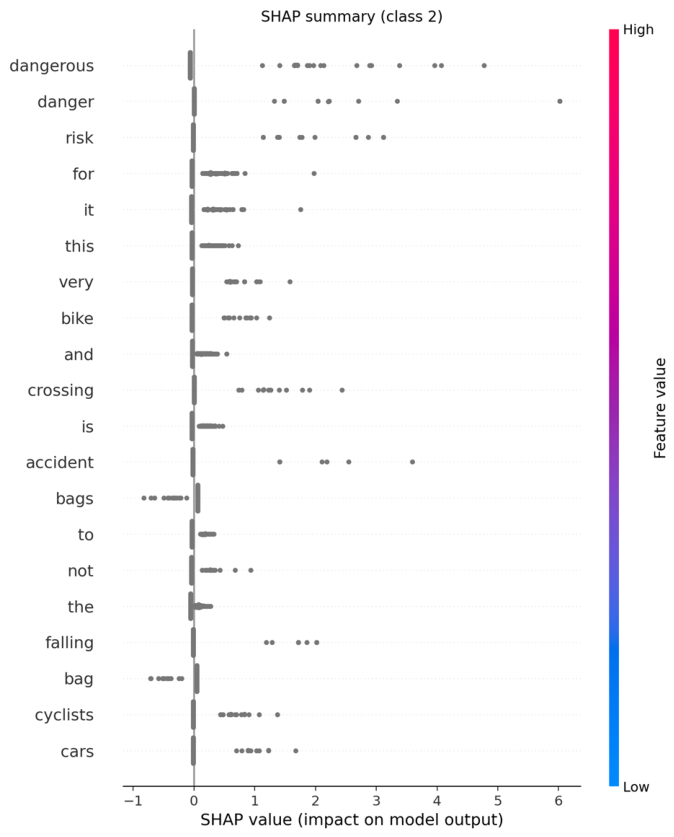

- Lundberg, S. M.; Lee, S.-I. A unified approach to interpreting model predictions. In Advances in Neural Information Processing Systems; 2017.

- mySociety. FixMyStreet: platform documentation and project resources; n.d.

- ACM dg.o (Digital Government Research) and EGOV-CeDEM-ePart conference series: research community references for dissemination venues; n.d.

- City of Brussels. Report an issue in public space (Fix My Street Brussels); n.d.

- Conneau, A.; Khandelwal, K.; Goyal, N.; Chaudhary, V.; Wenzek, G.; Guzmán, F.; Stoyanov, V. Unsupervised cross-lingual representation learning at scale. arXiv 2019, arXiv:1911.02116.

| Field(s) | Type | Description |
|---|---|---|
| FID, gid, fims_id | Identifier | Unique record identifiers for traceability. |
| createddate, updateddate, closeddate | Temporal | Lifecycle timestamps used to calculate resolution time and age. |
| category, head_category | Taxonomy | Hierarchical issue classification (e.g., Public Cleanliness). |
| responsible_org, responsible_dep | Administrative | Organization and department responsible for the intervention. |
| status | Operational | Current state (e.g., Open, Closed, Transferred). |
| road_fr, road_nl, pccp | Location | Address descriptors and postal codes. |
| comment, comment_reporter | Narrative | Free-text citizen report and source indicator. |
| comment_translated | Derived text | English-normalized text used for modeling. |
| geom | Spatial | Point geometry (EPSG:4326 or EPSG:31370). |
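
The temporal fields above are the inputs for resolution time and report age. The following is a minimal Python sketch, assuming `createddate` and `closeddate` are ISO 8601 strings that are either all naive or all timezone-aware, and that age is measured against a chosen reference date; the function names and the `as_of` parameter are hypothetical, not the authors' code.

```python
from datetime import datetime
from typing import Optional

SECONDS_PER_DAY = 86400.0


def resolution_days(createddate: str, closeddate: Optional[str]) -> Optional[float]:
    """Days from creation to closure; None for reports that are still open.

    Assumption: ISO 8601 timestamps, consistently naive or timezone-aware.
    """
    if not closeddate:
        return None  # open reports have no resolution time
    created = datetime.fromisoformat(createddate)
    closed = datetime.fromisoformat(closeddate)
    return (closed - created).total_seconds() / SECONDS_PER_DAY


def age_days(createddate: str, as_of: str) -> float:
    """Days elapsed between creation and a hypothetical reference date `as_of`."""
    created = datetime.fromisoformat(createddate)
    reference = datetime.fromisoformat(as_of)
    return (reference - created).total_seconds() / SECONDS_PER_DAY


print(resolution_days("2024-01-01T00:00:00", "2024-01-03T12:00:00"))  # 2.5
```

Under this definition only closed reports yield a resolution time; open reports return `None` and can be aged with `age_days` instead.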

| Metric | Value | Notes |
|---|---|---|
| Total raw reports | 522,132 | Initial ingestion |
| Exact duplicates | 760 | Collapsed on matching timestamp, geometry, and text |
| Closed reports | 426,490 | Used for resolution-time calculation |
| Empty/null comments | 14.25% | Excluded from text modeling |
| Short text (<10 chars) | 15.57% | Flagged for robustness checks |
| Gold standard size | 1,000 | Manually annotated subset |
| Gold: high urgency (class 2) | 50 | Safety risks or otherwise urgent items |
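
The cleaning steps summarized above (collapsing exact duplicates on timestamp, geometry, and text; excluding empty comments; flagging comments shorter than 10 characters) can be sketched as follows. This assumes each report is a dict keyed by the field names in the field table, that `createddate` is the timestamp used for matching, and that `geom` is stored as a hashable value such as a WKT string. The table does not say whether the 15.57% short-text share includes empty comments, so this sketch counts the two separately; the function names are illustrative.

```python
from typing import Dict, List, Optional, Tuple

SHORT_TEXT_CHARS = 10  # threshold from the "<10 chars" row


def collapse_exact_duplicates(records: List[Dict]) -> List[Dict]:
    """Keep the first record for each (createddate, geom, comment) triple."""
    seen = set()
    kept = []
    for rec in records:
        # Assumption: createddate is the matching timestamp; geom is hashable (e.g. WKT).
        key = (rec.get("createddate"), rec.get("geom"), rec.get("comment"))
        if key in seen:
            continue  # exact duplicate of an earlier record
        seen.add(key)
        kept.append(rec)
    return kept


def text_flags(comment: Optional[str]) -> Tuple[bool, bool]:
    """Return (is_empty, is_short); empty comments are not also counted as short."""
    text = (comment or "").strip()
    is_empty = text == ""
    is_short = not is_empty and len(text) < SHORT_TEXT_CHARS
    return is_empty, is_short
```

Empty comments would then be dropped before text modeling, while short ones stay in the data but carry a flag for the robustness checks.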

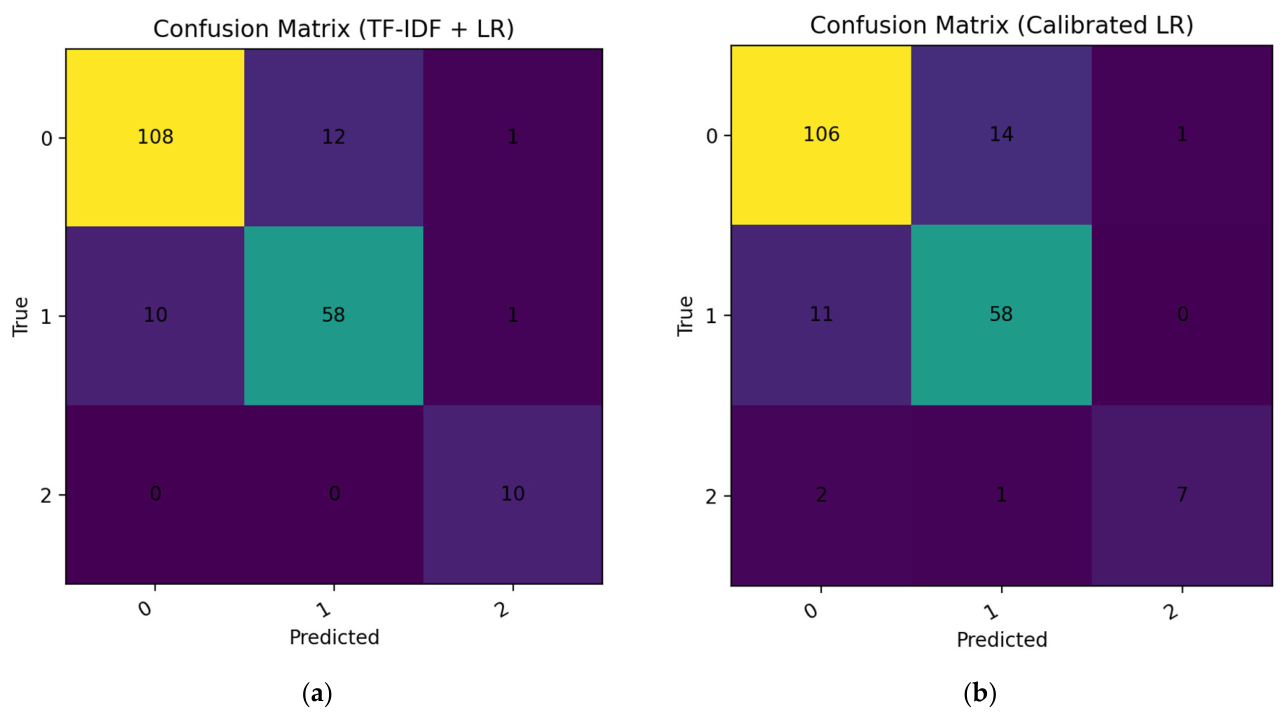

| Model | Accuracy | Macro F1 | High-urgency (class 2) recall |
|---|---|---|---|
| TF–IDF + LR (raw) | 0.880 | 0.882 | 1.000 |
| TF–IDF + LR (calibrated) | 0.885 | 0.826 | 0.700 |
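
Because only 50 of the 1,000 gold-standard reports are high urgency, accuracy alone can look strong while the rare class is missed. Macro F1 (the unweighted mean of per-class F1) and class-2 recall expose this, consistent with the calibrated model gaining accuracy while losing macro F1 and class-2 recall. A minimal stdlib sketch of these metrics follows; it assumes integer labels with classes 1 and 2 as in the tables, and a remaining class labelled 0 is an assumption.

```python
from typing import Dict, Hashable, Sequence


def per_class_scores(y_true: Sequence[Hashable], y_pred: Sequence[Hashable],
                     labels: Sequence[Hashable]) -> Dict[Hashable, Dict[str, float]]:
    """Precision, recall and F1 per label (0.0 when a ratio is undefined)."""
    pairs = list(zip(y_true, y_pred))
    scores = {}
    for c in labels:
        tp = sum(1 for t, p in pairs if t == c and p == c)
        fp = sum(1 for t, p in pairs if t != c and p == c)
        fn = sum(1 for t, p in pairs if t == c and p != c)
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
        scores[c] = {"precision": precision, "recall": recall, "f1": f1}
    return scores


def macro_f1(y_true, y_pred, labels) -> float:
    """Unweighted mean of per-class F1, so the rare class counts as much as the others."""
    scores = per_class_scores(y_true, y_pred, labels)
    return sum(s["f1"] for s in scores.values()) / len(labels)


def accuracy(y_true, y_pred) -> float:
    """Share of exact matches between true and predicted labels."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


# Toy example, not the paper's data.
y_true = [0, 0, 1, 2]
y_pred = [0, 1, 1, 2]
print(accuracy(y_true, y_pred))                                   # 0.75
print(per_class_scores(y_true, y_pred, [0, 1, 2])[2]["recall"])   # 1.0
```

In scikit-learn, `f1_score(..., average="macro")` and `recall_score(..., average=None)` compute the same quantities.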

| Topic | Top words (abridged) | Total volume | Avg urgency prob. | Avg hotspot score |
|---|---|---|---|---|
| 14 | sidewalk, dangerous, hole, damaged, broken, bike, … | 244,568 | 0.0838 | 0.8402 |
| 11 | thank, hello, regrettably, … (template artifacts) | 144,160 | 0.0860 | 0.7538 |
| 15 | tree, height, boards, cardboard, … | 37,047 | 0.0396 | 1.0183 |
| 17 | furniture, chair, board, wooden, … | 25,911 | 0.0302 | 0.9318 |
| 0 | operator, forwarded, request, … | 14,258 | 0.0376 | 0.7280 |
| 5 | bag, white, blue, uncollected, … | 11,538 | 0.0373 | 0.7329 |
| 8 | street, corner, dirty, lighting, … | 11,176 | 0.0803 | 0.5498 |
| 4 | waste, construction, bin, … | 5,788 | 0.0467 | 0.6191 |
| 19 | non compliant, regulatory, parking, … | 2,945 | 0.0223 | 0.8391 |
| 3 | deposit, clandestine, illegal, … | 3,231 | 0.0342 | 0.6109 |
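
The volume and urgency-probability columns can be read as a per-topic aggregation over report-level model outputs. A minimal sketch, assuming each report carries one topic ID (for example from BERTopic) and one predicted class-2 probability; the function name is hypothetical, and the hotspot score column is left out here.

```python
from collections import defaultdict
from typing import Dict, Hashable, List, Sequence, Tuple


def topic_summary(topic_ids: Sequence[Hashable],
                  urgency_probs: Sequence[float]) -> List[Tuple[Hashable, int, float]]:
    """Return (topic, volume, mean class-2 probability), largest topics first."""
    counts: Dict[Hashable, int] = defaultdict(int)
    totals: Dict[Hashable, float] = defaultdict(float)
    for topic, prob in zip(topic_ids, urgency_probs):
        counts[topic] += 1
        totals[topic] += prob
    rows = [(topic, counts[topic], totals[topic] / counts[topic]) for topic in counts]
    rows.sort(key=lambda row: row[1], reverse=True)  # sort by volume, descending
    return rows


print(topic_summary([14, 14, 11], [0.25, 0.75, 0.5]))  # [(14, 2, 0.5), (11, 1, 0.5)]
```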

| Class | Term | Weight |
|---|---|---|
| High urgency (class 2) | Dangerous | 7.883 |
| High urgency (class 2) | Danger | 6.022 |
| High urgency (class 2) | Risk | 5.896 |
| High urgency (class 2) | Accident | 5.399 |
| High urgency (class 2) | Crossing | 4.306 |
| High urgency (class 2) | Cars | 3.734 |
| High urgency (class 2) | Falling | 3.500 |
| High urgency (class 2) | Cyclists | 2.707 |
| High urgency (class 2) | Bike | 2.450 |
| High urgency (class 2) | Pedestrians | 2.100 |
| High urgency (class 2) | Damage | 1.634 |
| High urgency (class 2) | Holes | 1.534 |
| High urgency (class 2) | Pedestrian | 1.506 |
| High urgency (class 2) | Marking | 1.430 |
| High urgency (class 2) | Weeks | 1.479 |
| Complaint (class 1) | Abandoned | 5.167 |
| Complaint (class 1) | Bags | 4.322 |
| Complaint (class 1) | Bag | 4.216 |
| Complaint (class 1) | Not | 3.684 |
| Complaint (class 1) | Clandestine | 3.283 |
| Complaint (class 1) | Dirty | 3.115 |
| Complaint (class 1) | Again | 3.091 |
| Complaint (class 1) | Deposit | 2.931 |
| Complaint (class 1) | Deposits | 2.925 |
| Complaint (class 1) | Depot | 2.691 |
| Complaint (class 1) | Always | 2.440 |
| Complaint (class 1) | Illegal | 2.250 |
| Complaint (class 1) | Garbage | 2.222 |
| Complaint (class 1) | Depots | 2.146 |
| Complaint (class 1) | Non | 2.146 |
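
Term lists like the one above are commonly read off a linear classifier's per-class coefficients over the TF–IDF vocabulary. The sketch below assumes that the Weight column is the logistic-regression coefficient for each term and class; it ranks terms with plain lists so it runs without scikit-learn, and `top_terms` is a hypothetical helper.

```python
from typing import List, Sequence, Tuple


def top_terms(vocabulary: Sequence[str], class_coefs: Sequence[float],
              k: int = 15) -> List[Tuple[str, float]]:
    """Return the k terms with the highest coefficients for one class."""
    if len(vocabulary) != len(class_coefs):
        raise ValueError("vocabulary and coefficients must have the same length")
    ranked = sorted(zip(vocabulary, class_coefs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


vocab = ["dangerous", "bag", "risk", "thank"]
coefs_class2 = [7.9, -0.4, 5.9, -1.2]  # toy numbers, not the fitted model
print(top_terms(vocab, coefs_class2, k=2))  # [('dangerous', 7.9), ('risk', 5.9)]
```

With a fitted scikit-learn model, `vectorizer.get_feature_names_out()` supplies the vocabulary and, for a multiclass `LogisticRegression`, `clf.coef_[i]` holds the coefficients for the class `clf.classes_[i]`.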

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).