Submitted:

14 April 2026

Posted:

15 April 2026

Abstract

Keywords:

1. Introduction

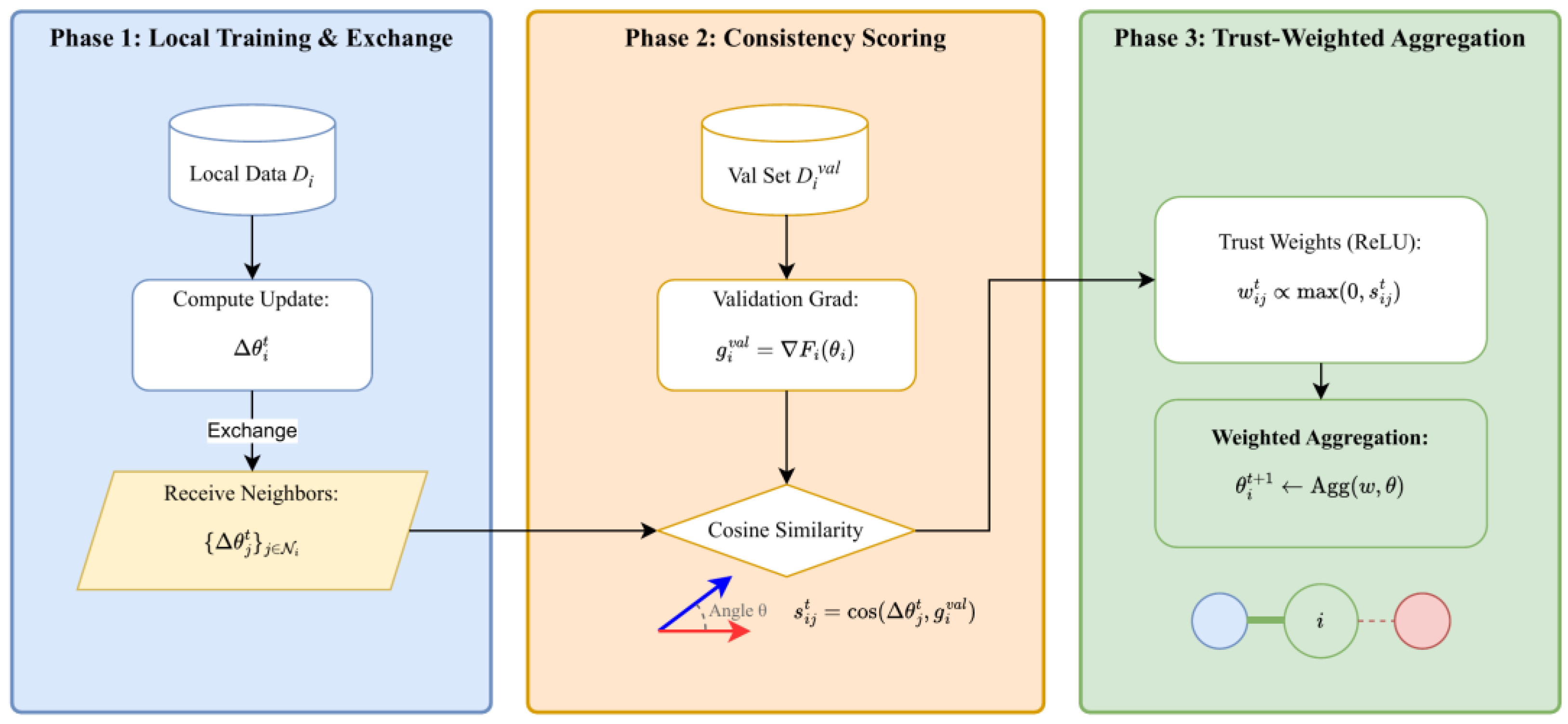

- We introduce a consistency-based trust scoring mechanism that computes the directional alignment between received neighbor updates and locally computed validation gradients.

- We propose a dynamic trust graph where edge weights reflect consistency scores, enabling topology-aware robust aggregation that naturally down-weights suspicious contributions.

- We evaluate TrustGraph-DFL under three attack types across three network topologies (ring, random, and small-world), demonstrating consistent improvements over FedAvg, Krum, Trimmed Mean, and BALANCE.
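The first two contributions can be illustrated with a minimal sketch. It is not the paper's exact formulation: it assumes that "directional alignment" is measured by cosine similarity, that negative alignment is clipped to zero, that trust weights are normalized over a node's neighbors, and that a node mixes its own update with its neighbors' updates using a fixed `self_weight`. The function names and the `local_direction` argument (for example, the negative of the locally computed validation gradient) are hypothetical.

```python
import math


def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0.0 or norm_v == 0.0:
        return 0.0
    return dot / (norm_u * norm_v)


def trust_weights(neighbor_updates, local_direction):
    """Map each neighbor id to a non-negative trust weight that sums to 1.

    Assumption: the consistency score is the cosine similarity between the
    neighbor's update and the local reference direction, clipped at zero.
    """
    scores = {
        j: max(0.0, cosine_similarity(update, local_direction))
        for j, update in neighbor_updates.items()
    }
    total = sum(scores.values())
    if total == 0.0:
        # No neighbor is aligned with the local direction: trust nobody.
        return {j: 0.0 for j in scores}
    return {j: s / total for j, s in scores.items()}


def trust_weighted_aggregate(own_update, neighbor_updates, local_direction,
                             self_weight=0.5):
    """Combine a node's own update with trust-weighted neighbor updates.

    Assumption: a fixed convex mix between the node's own update and the
    weighted neighbor average; self_weight is a hypothetical parameter.
    """
    weights = trust_weights(neighbor_updates, local_direction)
    if sum(weights.values()) == 0.0:
        # Fall back to the local update when every neighbor is distrusted.
        return list(own_update)
    aggregate = [self_weight * x for x in own_update]
    for j, update in neighbor_updates.items():
        for k, value in enumerate(update):
            aggregate[k] += (1.0 - self_weight) * weights[j] * value
    return aggregate


# Usage: one aligned neighbor and one sign-flipped neighbor.
local_direction = [1.0, 0.0]
neighbors = {"honest": [2.0, 0.0], "flipped": [-2.0, 0.0]}
weights = trust_weights(neighbors, local_direction)
aggregate = trust_weighted_aggregate([1.0, 0.0], neighbors, local_direction)
```

In this toy case the sign-flipped neighbor receives zero weight, so only the aligned neighbor contributes to the aggregate. Clipping at zero is one simple way to down-weight suspicious contributions; a softer mapping would behave differently.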

2. Methodology Foundations of the Proposed Approach

3. Problem Formulation

A. System Model

B. Threat Model

3. Proposed Method: TrustGraph-DFL

A. Overview

B. Consistency Score Computation

C. Trust Edge Weight Computation

D. Trust-Weighted Aggregation

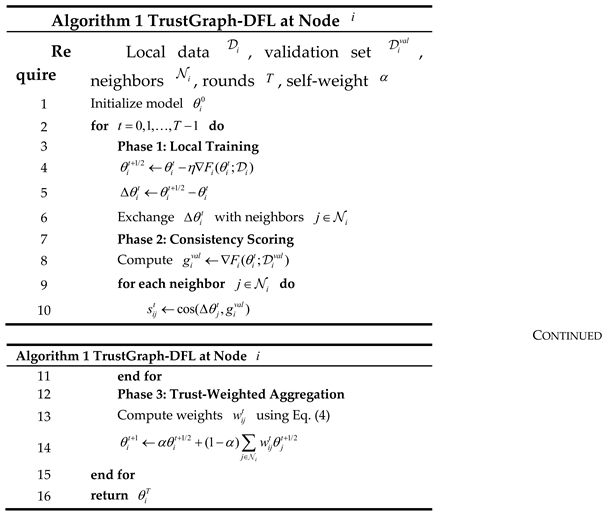

E. Algorithm Summary

4. Experiments

A. Experimental Setup

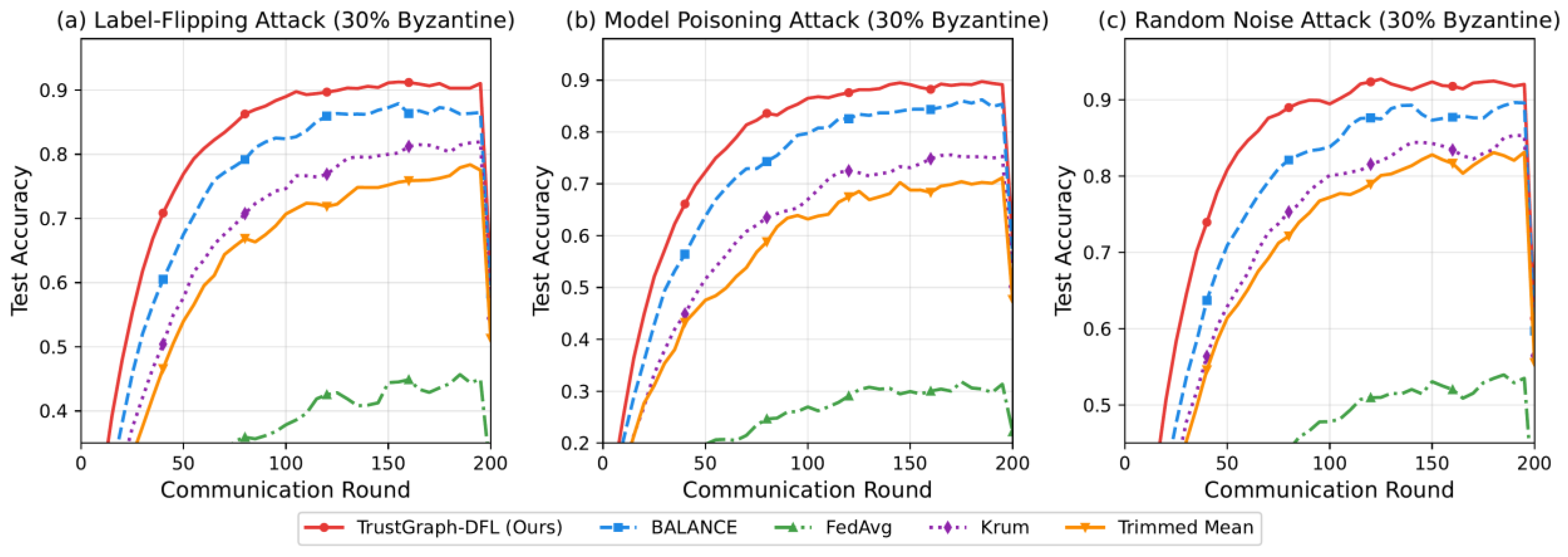

B. Main Results

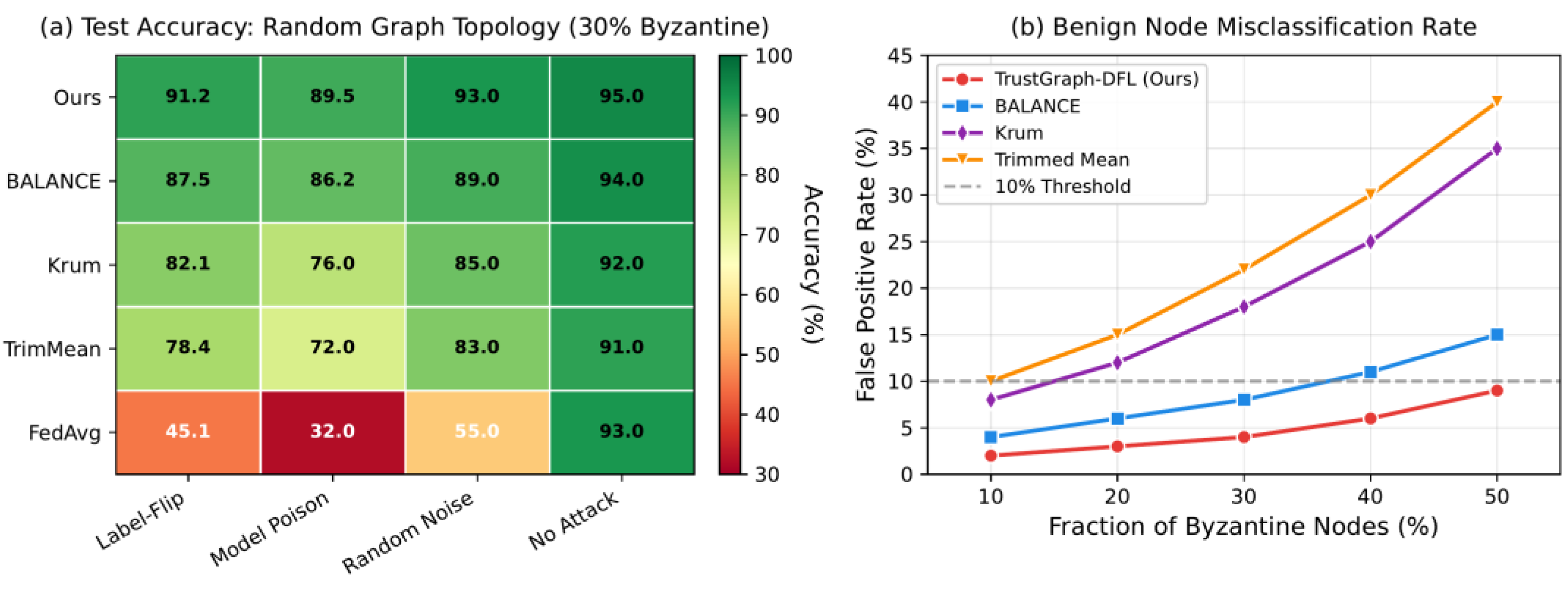

C. Performance Across Topologies and Attacks

D. False Positive Rate Analysis

E. Ablation Study

5. Discussion and Limitations

6. Conclusion

| Method | Ring | Random | Small-World |
|---|---|---|---|
| FedAvg | 42.3 | 45.1 | 43.8 |
| Krum | 78.5 | 82.1 | 80.3 |
| Trimmed Mean | 74.2 | 78.4 | 76.1 |
| BALANCE | 85.3 | 87.5 | 86.2 |
| TrustGraph-DFL | 89.1 | 91.2 | 90.4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).