Submitted: 14 April 2026

Posted: 15 April 2026


Abstract

Keywords:

1. Introduction

2. Literature Review

3. Methodology

3.1. Problem Definition

Problem Assumptions

1. Machines are continuously available throughout the scheduling horizon, with no downtime due to breakdowns or maintenance.

2. Operations are processed without interruption once started; that is, processing is non-preemptive.

3. Processing-time-dependent carbon emissions remain constant for each machine during active operation.

4. All jobs are available for processing at time zero, and job due dates are predetermined and fixed.

5. Tardiness penalties are deterministic and time-invariant over the scheduling horizon.

6. The study considers a deterministic and steady-state production environment, without uncertain processing times, machine failures, sequence-dependent setup times, dynamic job arrivals, or worker-related constraints.

7. Carbon-emission estimation is based on steady-state machine processing conditions and does not incorporate transient operating states, time-varying carbon intensity, or machine warm-up and cool-down effects.

Notations

- : Set of jobs

- : Set of machines

- : Operations of job

- : Set of all operations

- : Eligible machines for operation

- : Processing time (mins) of operation on machine

- : Power consumption of machine m during processing (kW)

- : Carbon intensity of machine m (kg CO₂/kWh)

- : Due date for job j

- : Tardiness penalty rate

- : Baseline carbon value for instance category c

- : Baseline tardiness penalty value for instance category c

- : Scalarization weights, where

- B: A sufficiently large positive constant

- : Carbon emissions (kg CO₂)

- : 1 if operation is assigned to machine m

- : Start time of operation

- : Completion time of job j

- : Tardiness of job j

- : Makespan

- : Sequencing variable for two operations sharing machine m
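The units in the notation list suggest how the per-operation quantities combine. The following is a minimal sketch, assuming the usual conversion from minutes to hours and a linear tardiness penalty; the function and parameter names are hypothetical, and the paper's own formulation may differ in detail.

```python
def operation_carbon_kg(proc_time_min: float, power_kw: float,
                        intensity_kg_per_kwh: float) -> float:
    """Carbon emitted while one operation runs on one machine (assumed form)."""
    energy_kwh = power_kw * proc_time_min / 60.0  # minutes -> hours
    return energy_kwh * intensity_kg_per_kwh


def tardiness(completion_time: float, due_date: float) -> float:
    """Tardiness of a job: lateness truncated at zero."""
    return max(0.0, completion_time - due_date)


def tardiness_penalty(completion_time: float, due_date: float,
                      penalty_rate: float) -> float:
    """Assumption: the penalty grows linearly with tardiness."""
    return penalty_rate * tardiness(completion_time, due_date)


# Hypothetical numbers: 30 minutes on a 12 kW machine at 0.5 kg/kWh.
example_carbon = operation_carbon_kg(30.0, 12.0, 0.5)
```

With these illustrative inputs the operation consumes 6 kWh, so the emission is half of that in kilograms.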

Mathematical Formulation

3.1.1. Objective Scaling and Weighting

3.2. Policy-Based Rough Optimization with Large Neighborhood Search (Pro-LNS)

3.2.1. Phase I: MDP-Based Reinforcement Learning

State space:

- Ready-flag vector: Indicates which operations are currently eligible for dispatch:

- Machine ready-time vector: Records the earliest time at which each machine becomes available:

- Normalized earliest-completion-time matrix: Estimates the completion time of each eligible operation on each eligible machine, where denotes the k-th eligible machine for operation .

- Critical-path metrics: Encodes downstream workload and due-date slack. Let denote the precomputed lower bound on the remaining processing time of job j from operation o onward, obtained as the sum of the minimum eligible processing times of the remaining operations. Let denote the earliest machine-available time at decision step t. Then
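The remaining-work lower bound described in the critical-path item can be sketched as follows. The data layout (one dictionary of machine-to-processing-time per operation) and the slack expression are assumptions made for illustration; only the "sum of minimum eligible processing times" rule is taken from the text.

```python
from typing import Dict, List


def remaining_work_lower_bound(job_ops: List[Dict[str, float]],
                               next_op: int) -> float:
    """Sum of the fastest eligible processing time of each remaining operation."""
    return sum(min(op.values()) for op in job_ops[next_op:])


def due_date_slack(due_date: float, now: float, remaining_lb: float) -> float:
    """Assumed slack: time left until the due date minus the remaining work bound."""
    return due_date - now - remaining_lb


# Hypothetical job with three operations and their eligible machines.
job_ops = [{"M1": 5.0, "M2": 7.0}, {"M1": 4.0}, {"M2": 6.0, "M3": 3.0}]
lb_from_second_op = remaining_work_lower_bound(job_ops, 1)
slack = due_date_slack(due_date=30.0, now=10.0, remaining_lb=lb_from_second_op)
```

Because the bound depends only on the instance data, it can be precomputed once per job and operation index before any dispatching decision is made.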

Action space:

Transition function:

Reward function:

Learning objective:

3.2.2. Phase II: Adaptive Large Neighborhood Search (LNS)

Adaptive Removal:

1. Marginal-impact scoring: For each scheduled operation , estimate its contribution to the scalarized objective by evaluating the changes in carbon emissions and tardiness penalty associated with removing and reinserting that operation. The combined score is computed as

2. Removal: Remove the operations with the highest , producing a partial schedule in which the most disruptive operations are unscheduled.

3. Adaptive tuning: Define the destroy-size bounds dynamically as where are predefined ratios. If reinserting the removed operations yields an improved schedule, set otherwise set
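The removal step can be illustrated with a short sketch. It assumes the marginal-impact scores are already available as a dictionary and that the destroy-size bounds are obtained by multiplying the number of scheduled operations by the two ratios; the names `destroy_bounds` and `select_removals` and the ratio values are hypothetical. The sketch stops at selection and leaves the size-update rule out, since that rule depends on the update expressions of the tuning step.

```python
import math
from typing import Dict, List, Tuple


def destroy_bounds(n_ops: int, min_ratio: float, max_ratio: float) -> Tuple[int, int]:
    """Assumed form of the bounds: ratios applied to the number of operations."""
    lower = min(n_ops, max(1, math.floor(min_ratio * n_ops)))
    upper = min(n_ops, max(lower, math.ceil(max_ratio * n_ops)))
    return lower, upper


def select_removals(scores: Dict[str, float], k: int) -> List[str]:
    """Return the k operations with the highest score (ties broken by name)."""
    ranked = sorted(scores, key=lambda op: (-scores[op], op))
    return ranked[:k]


# Hypothetical scores for three scheduled operations.
scores = {"O11": 1.0, "O12": 3.0, "O21": 2.0}
removed = select_removals(scores, 2)
```

Sorting on the negated score with the operation name as a secondary key keeps the selection deterministic when two operations have the same impact.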

Greedy Reinsertion:

1. Precedence constraint: Operation is considered for reinsertion only after its predecessor , if any, has already been reinserted.

2. Feasible start times: For each eligible machine , compute

3. Objective-based choice: For each eligible machine, evaluate , the increase in the scalarized objective if is inserted on machine m. The operation is then assigned to the machine
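A compact sketch of the reinsertion logic is given below. It assumes that an operation is appended after the work already on a machine, so that its earliest start is the later of the machine-ready time and the predecessor's completion time, and that the objective increase is supplied by a caller-provided function. All names are hypothetical, and gap insertion between already scheduled operations is not modeled.

```python
from typing import Callable, Dict, Tuple


def earliest_start(machine_ready: float, pred_completion: float) -> float:
    """Assumed feasible start: wait for both the machine and the predecessor."""
    return max(machine_ready, pred_completion)


def choose_machine(proc_times: Dict[str, float],
                   machine_ready: Dict[str, float],
                   pred_completion: float,
                   delta_objective: Callable[[str, float, float], float]
                   ) -> Tuple[str, float]:
    """Pick the eligible machine with the smallest objective increase."""
    best = None
    for machine in sorted(proc_times):  # sorted for deterministic tie-breaking
        start = earliest_start(machine_ready[machine], pred_completion)
        delta = delta_objective(machine, start, start + proc_times[machine])
        if best is None or delta < best[0]:
            best = (delta, machine, start)
    return best[1], best[2]


# Toy objective increase: the completion time of the inserted operation.
machine, start = choose_machine(
    proc_times={"M1": 5.0, "M2": 3.0},
    machine_ready={"M1": 0.0, "M2": 10.0},
    pred_completion=4.0,
    delta_objective=lambda m, s, c: c,
)
```

In this toy case the slower machine is chosen because it is free earlier; with the scalarized objective in place of the toy function, the same loop would trade off emissions against tardiness.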

Acceptance and Adaptation:

Termination:

3.2.3. Policy-Based Rough Optimization with Large Neighborhood Search

Algorithm 1: Policy-based Rough Optimization Neighborhood Search (Pro-LNS)

3.2.4. RL Architecture and Training Protocol

RL Architecture.

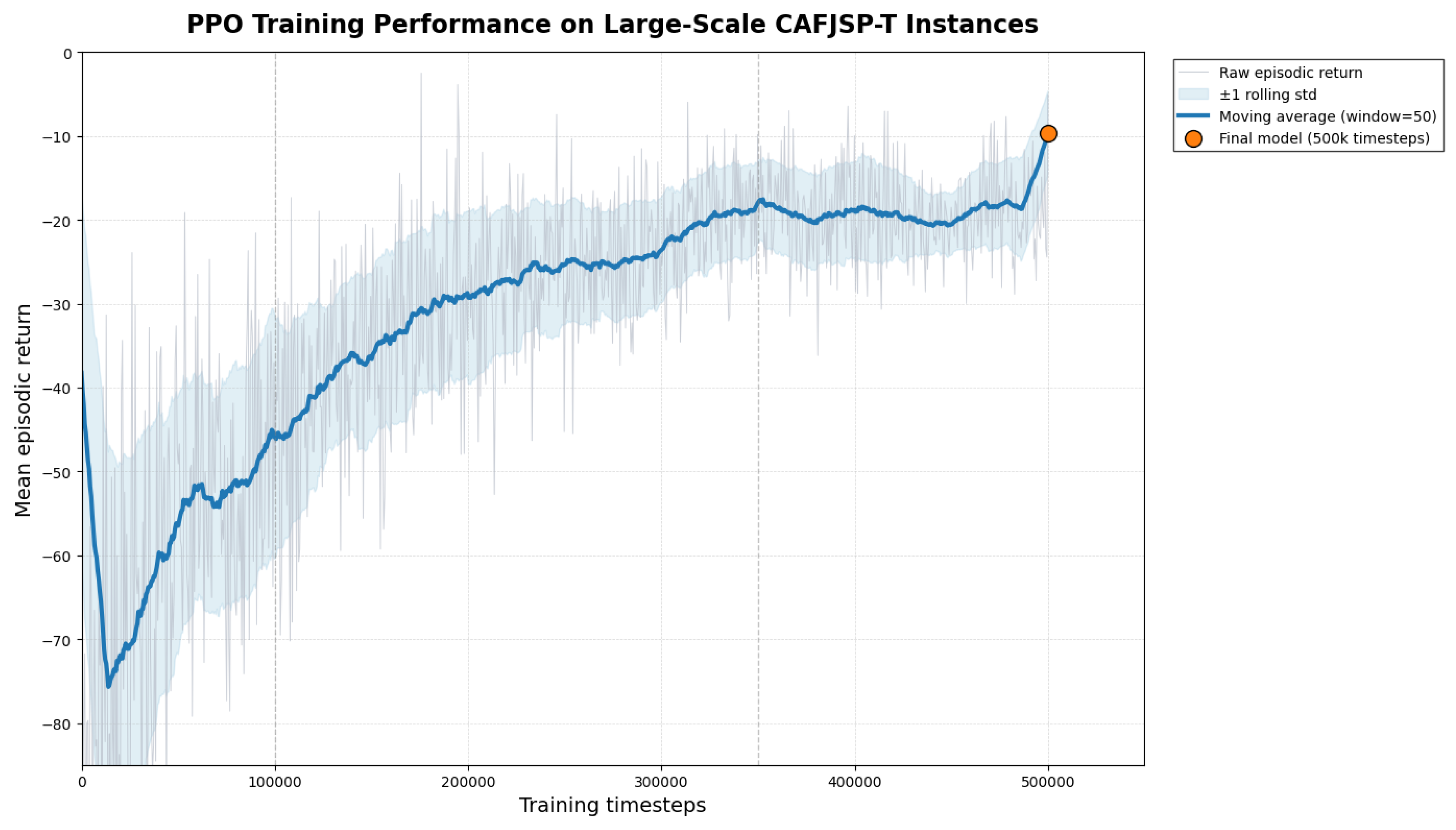

Training Protocol.

PPO Technical Details.

- Policy type: Multi-Layer Perceptron (MLP)

- Hidden layers: [256, 256]

- Activation: ReLU

- Learning rate:

- Entropy coefficient:

- Mini-batch size: 64

- Total training timesteps:

- Number of parallel environments: 8

- Discount factor (γ): 0.99

- GAE parameter (λ): 0.95

- Clipping range: 0.2

- Optimization epochs per update: 10

- Value-function coefficient: 0.5

- Maximum gradient norm: 0.5

- Loss function: Clipped Surrogate Objective (PPO) with Mean Squared Error (MSE) Value Loss and Entropy Regularization

- Advantage normalization: True (per-batch)
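To make the listed loss structure concrete, the following dependency-free sketch evaluates a clipped surrogate objective with a mean-squared-error value loss, an entropy bonus, and per-batch advantage normalization. It is an illustration of the standard PPO loss, not the training code used in the study; the clipping range and value-function coefficient default to the values listed above, while the entropy coefficient is left as a required argument.

```python
import math
from typing import List, Sequence


def clipped_surrogate(ratio: float, advantage: float, clip_range: float = 0.2) -> float:
    """Pessimistic minimum of the unclipped and clipped policy terms."""
    clipped_ratio = min(max(ratio, 1.0 - clip_range), 1.0 + clip_range)
    return min(ratio * advantage, clipped_ratio * advantage)


def normalize(advantages: Sequence[float], eps: float = 1e-8) -> List[float]:
    """Per-batch advantage normalization to zero mean and unit scale."""
    n = len(advantages)
    mean = sum(advantages) / n
    std = math.sqrt(sum((a - mean) ** 2 for a in advantages) / n)
    return [(a - mean) / (std + eps) for a in advantages]


def ppo_loss(new_logp: Sequence[float], old_logp: Sequence[float],
             advantages: Sequence[float], values: Sequence[float],
             returns: Sequence[float], entropies: Sequence[float],
             *, ent_coef: float, clip_range: float = 0.2,
             vf_coef: float = 0.5) -> float:
    """Total loss: -surrogate + vf_coef * MSE value loss - ent_coef * entropy."""
    adv = normalize(advantages)
    n = len(adv)
    surrogate = sum(
        clipped_surrogate(math.exp(ln - lo), a, clip_range)
        for ln, lo, a in zip(new_logp, old_logp, adv)
    ) / n
    value_loss = sum((v - r) ** 2 for v, r in zip(values, returns)) / n
    entropy = sum(entropies) / n
    return -surrogate + vf_coef * value_loss - ent_coef * entropy
```

When the new and old policies agree, the probability ratio is one and the normalized advantages average to zero, so only the value and entropy terms contribute to the loss.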

3.3. Benchmark Instances and Experimental Setup

- Benchmark-based warm-start evaluation: The proposed Pro-LNS framework was applied to the full set of benchmark instances. For each instance, the final Pro-LNS solution was used to warm-start the MILP formulation of the same CAFJSP-T instance by providing it to the solver as an initial incumbent. The MILP solver was then run on the same instance to obtain a best bound and the corresponding optimality gap. This procedure was used to evaluate the quality of the Pro-LNS solution relative to the exact formulation and to quantify how close the final Pro-LNS schedule was to proven optimality within the allotted MILP solve time.

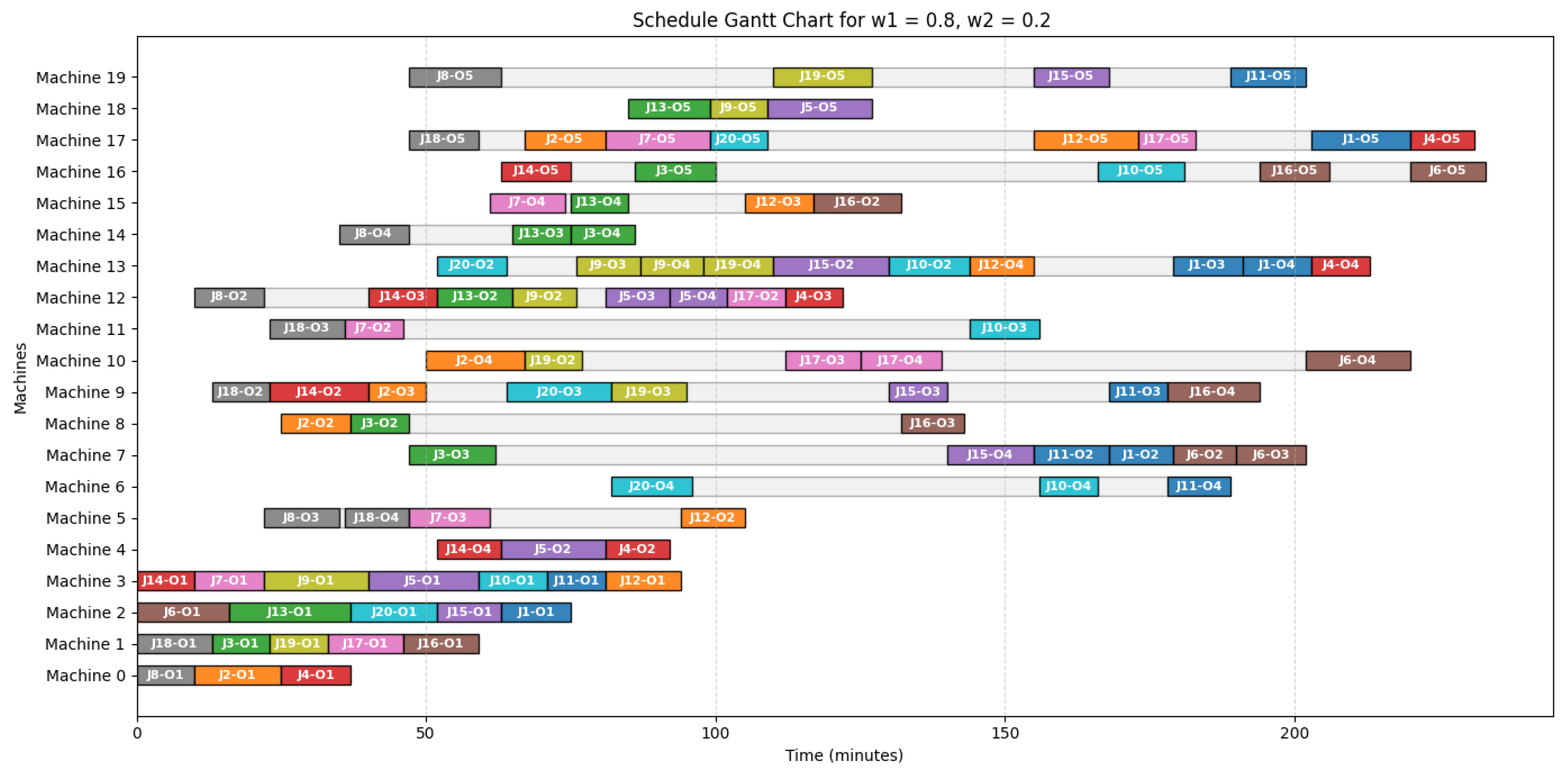

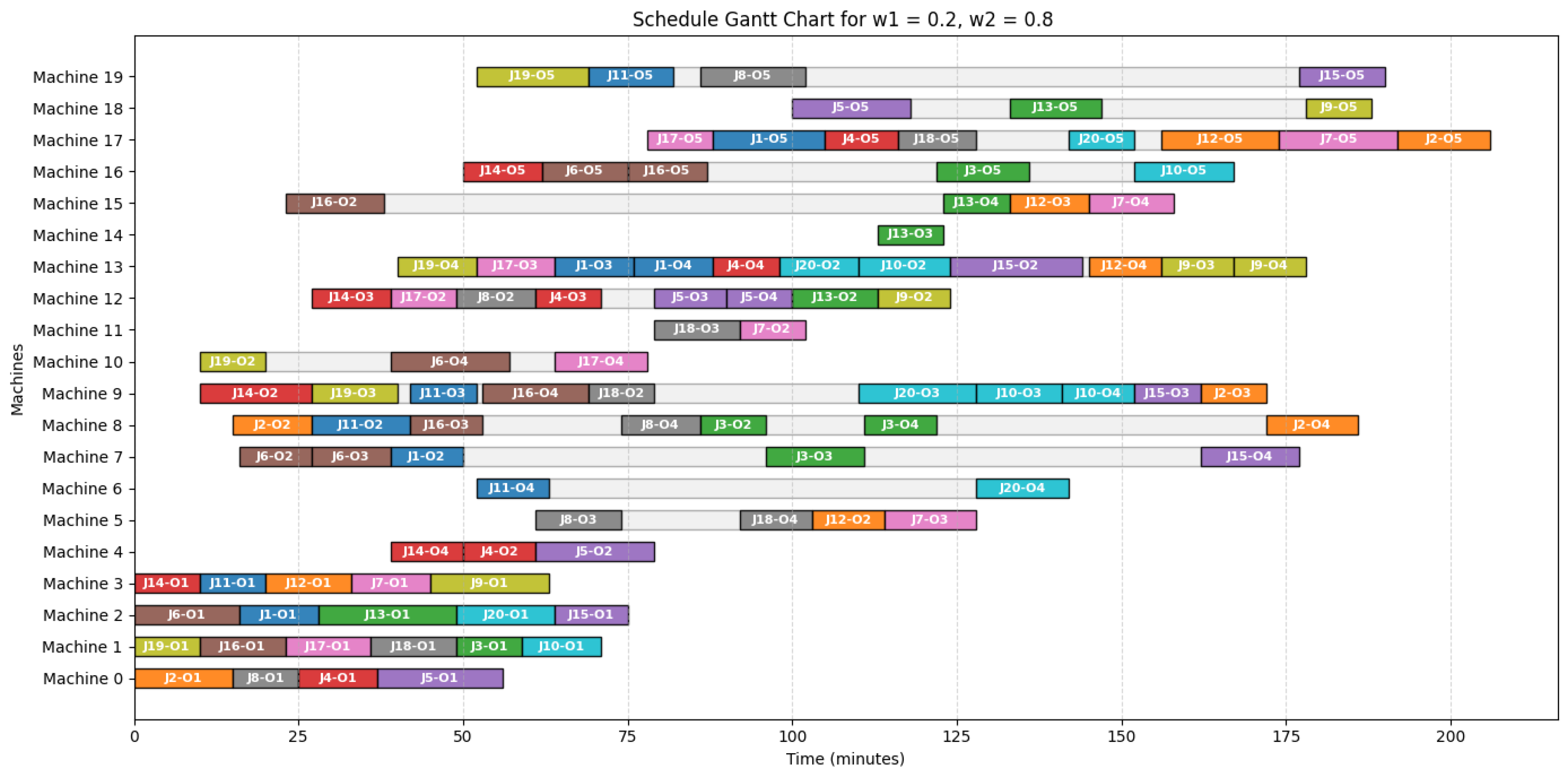

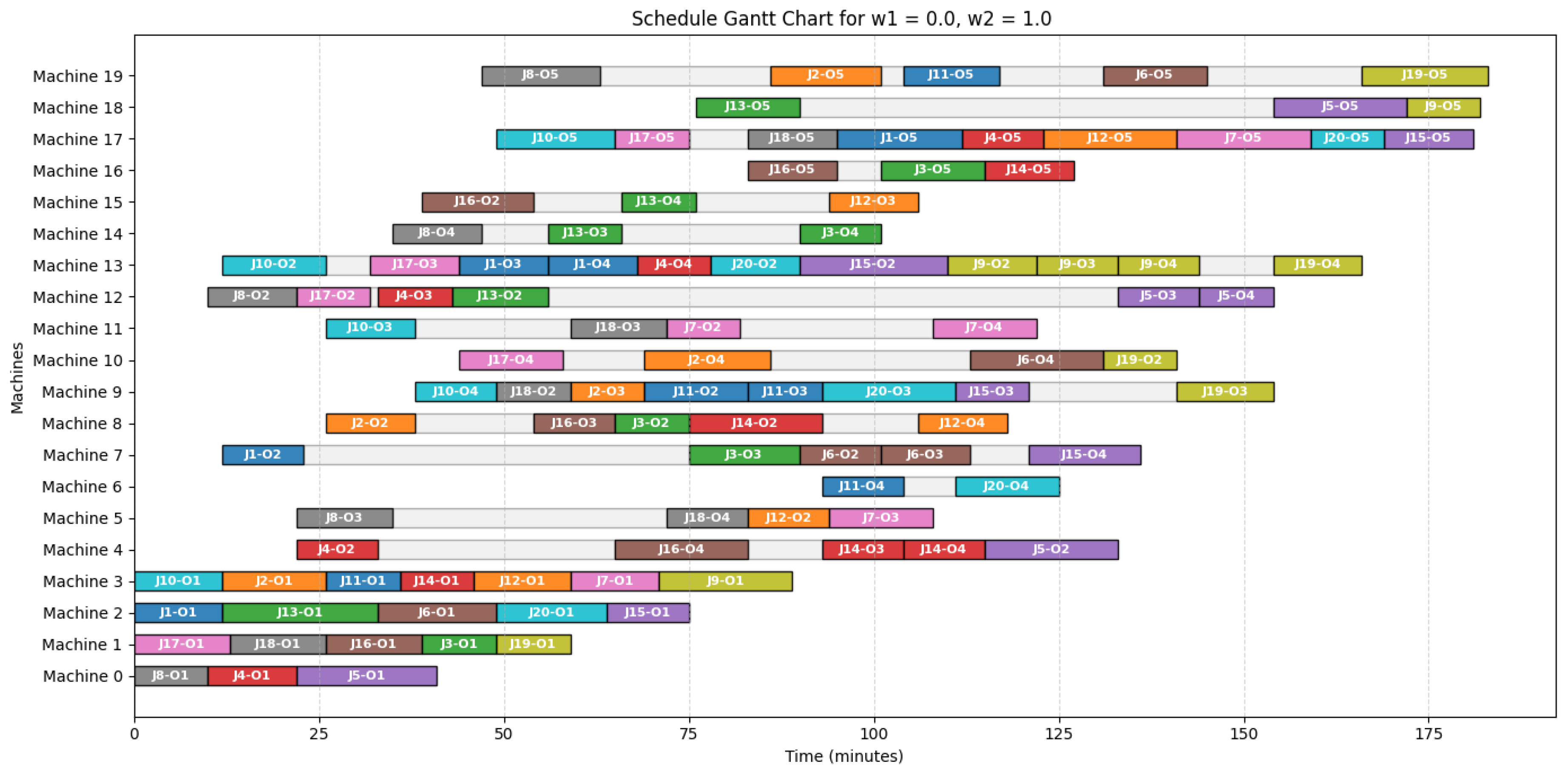

- Weight-sensitivity analysis: The scalarization weights assigned to carbon emissions and tardiness penalty were varied to examine how the resulting schedules respond to different relative priorities between the two objective components.
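The effect of the weights can be illustrated with a small sketch. It assumes that the two weights sum to one and that each objective component is divided by its category baseline before weighting; both are assumptions about the scalarization, not a reproduction of it. The baselines are the small-category values reported in the baseline table, and the two candidate schedules are hypothetical.

```python
from typing import Dict, Tuple


def scalarized(carbon: float, tardiness_penalty: float, w_carbon: float,
               carbon_base: float, tardiness_base: float) -> float:
    """Assumed objective: baseline-normalized weighted sum with weights summing to 1."""
    w_tardiness = 1.0 - w_carbon
    return (w_carbon * carbon / carbon_base
            + w_tardiness * tardiness_penalty / tardiness_base)


def best_under_weight(candidates: Dict[str, Tuple[float, float]], w_carbon: float,
                      carbon_base: float, tardiness_base: float) -> str:
    """Name of the candidate schedule with the lowest scalarized objective."""
    return min(
        sorted(candidates),
        key=lambda name: scalarized(*candidates[name], w_carbon,
                                    carbon_base, tardiness_base),
    )


CARBON_BASE, TARDINESS_BASE = 1869.3974, 4425.480  # small-category baselines
# Hypothetical (carbon, tardiness penalty) pairs for two candidate schedules.
candidates = {"low_carbon": (280.0, 45.0), "low_tardiness": (290.0, 8.0)}

carbon_heavy_choice = best_under_weight(candidates, 0.9, CARBON_BASE, TARDINESS_BASE)
tardiness_heavy_choice = best_under_weight(candidates, 0.1, CARBON_BASE, TARDINESS_BASE)
```

Under these assumptions the preferred schedule switches from the lower-emission candidate to the lower-tardiness candidate as weight moves away from carbon.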

4. Results

- Pro-LNS delivers strong due-date performance on a substantial portion of the benchmark set. Zero tardiness is achieved on sm01_1, sm01_3, med01_2, and lar01_1, and tardiness remains very small (at most 5.10) on med02_1, med02_5, and lar02_3. Thus, in 7 of the 15 reported instances, Pro-LNS produces schedules with either zero tardiness or only negligible delay, while still controlling carbon emissions under the same equal-weight objective.

- The optimality-gap results indicate that the final Pro-LNS solutions are highly competitive with respect to the exact MILP formulation. Across the benchmark instances reported in Table 2, the median optimality gap is 6.12% and the maximum gap is 13.67%. Moreover, 11 of the 15 instances remain within a 10% optimality gap, and all reported instances remain within 14%. Because these gaps are computed on the same constrained Carbon-Aware Flexible Job Shop Scheduling Problem with Tardiness Penalty (CAFJSP-T) formulation after warm-starting the MILP solver with the final Pro-LNS solution, they provide strong evidence that Pro-LNS produces high-quality incumbent solutions.

- The method remains computationally efficient across all benchmark categories. The average CPU time is 4.08 seconds, and the maximum reported CPU time is 10.51 seconds. Pro-LNS therefore returns competitive schedules with bounded optimality gaps within roughly ten seconds, which is especially valuable for complex flexible job shop environments where exact methods alone can become computationally burdensome.

- Pro-LNS preserves balanced performance under equal objective weighting. Even in instances where tardiness becomes more pronounced, the method continues to return feasible schedules with controlled carbon emissions, reasonable makespans, and moderate optimality gaps. This indicates that Pro-LNS does not sacrifice one objective uncontrollably in order to improve the other, but instead maintains a balanced trade-off structure under the equal-weight formulation.

- From a managerial perspective, the results suggest that Pro-LNS is well suited for practical production planning in settings where sustainability and delivery reliability must be addressed together. The combination of low runtimes, controlled emissions, and relatively tight optimality gaps means that decision-makers can obtain strong schedules quickly, while still retaining confidence that the solutions are close to the benchmark provided by the exact formulation. This is particularly useful in operational environments where schedules may need to be generated or updated repeatedly within limited planning time.
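The summary statistics quoted in the points above can be recomputed directly from the per-instance values in the benchmark results table. The short script below does so with the Python standard library; the lists are copied from that table in row order, and the 5.10 cutoff for "negligible" tardiness is the largest value among the three near-zero instances named above.

```python
from statistics import mean, median

# Per-instance values in table order (sm, med, lar instances).
gaps = [2.87, 2.92, 4.23, 7.56, 11.34, 2.18, 4.67, 5.89, 8.92, 12.78,
        2.43, 6.12, 9.45, 13.21, 13.67]
cpu_seconds = [0.81, 0.80, 1.41, 3.62, 8.55, 0.52, 1.59, 1.67, 4.43, 10.51,
               1.00, 1.33, 5.57, 9.39, 9.95]
tardiness = [0.00, 0.00, 17.26, 478.14, 3093.06, 0.00, 2.07, 5.10, 246.11,
             1685.21, 0.00, 0.07, 98.43, 1170.23, 1180.54]

median_gap = median(gaps)                            # middle of 15 sorted gaps
max_gap = max(gaps)
within_10_percent = sum(g <= 10.0 for g in gaps)     # instances with gap <= 10%
mean_cpu = round(mean(cpu_seconds), 2)
max_cpu = max(cpu_seconds)
zero_tardiness = sum(t == 0.0 for t in tardiness)
negligible_or_zero = sum(t <= 5.10 for t in tardiness)
```

Recomputing the figures this way confirms that the reported median gap, the count of instances within a 10% gap, the average CPU time, and the 7-of-15 tardiness count are consistent with the tabulated data.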

5. Conclusion

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest


Short Biography of Authors

Saurabh Sanjay Singh is a Ph.D. candidate in Industrial, Systems, and Manufacturing Engineering at Wichita State University. His research lies at the operations research–data science interface, focusing on production scheduling in job shops. At Wichita State University, he serves as an Instructor in the College of Engineering and previously supported graduate programs at the W. Frank Barton School of Business. He has taught programming and data science in India and brings industry experience in data science and machine learning from Tech Mahindra. Saurabh holds an M.S. in Data Science and a B.C.A. from CHRIST (Deemed to be University), India, and a Graduate Certificate in Business Analytics. Proficient in Python, R, SQL, and optimization and simulation toolchains, he builds deployable decision-support tools for operations management.

Deepak Gupta is Professor, Department Chair, and Graduate Program Coordinator in Industrial, Systems, and Manufacturing Engineering at Wichita State University. He earned a Ph.D. and M.S. in Industrial Engineering from West Virginia University and a bachelor’s degree from IIT Roorkee. His expertise spans manufacturing system optimization, energy management, supply chain optimization, and data analytics. He has secured funding from USDA, U.S. DOE, U.S. DOL, NSF EPSCoR, regional utilities, and industry partners. Dr. Gupta has collaborated with more than 200 manufacturing companies across over ten U.S. states, integrating hands-on experience for students and bridging academic research with practical industrial applications.

| Category | Representative instance | Carbon baseline (kg CO₂) | Tardiness penalty baseline |
|---|---|---|---|
| Sm | sm04_5 | 1869.3974 | 4425.480 |
| Med | med04_5 | 2005.0144 | 2878.902 |
| Lar | lar04_5 | 1820.9377 | 2651.852 |

| Instance | Carbon (kg CO₂) | Tardiness | Energy (kWh) | Makespan (minutes) | CPU (s) | Optimality Gap (%) |
|---|---|---|---|---|---|---|
| sm01_1 | 140.70 | 0.00 | 140.98 | 157.00 | 0.81 | 2.87 |
| sm01_3 | 142.32 | 0.00 | 142.61 | 159.00 | 0.80 | 2.92 |
| sm02_2 | 297.30 | 17.26 | 297.90 | 217.00 | 1.41 | 4.23 |
| sm03_1 | 739.73 | 478.14 | 741.21 | 428.00 | 3.62 | 7.56 |
| sm04_5 | 1499.46 | 3093.06 | 1502.46 | 864.00 | 8.55 | 11.34 |
| med01_2 | 145.73 | 0.00 | 146.03 | 148.00 | 0.52 | 2.18 |
| med02_1 | 297.84 | 2.07 | 298.44 | 160.00 | 1.59 | 4.67 |
| med02_5 | 302.71 | 5.10 | 303.32 | 173.00 | 1.67 | 5.89 |
| med03_3 | 773.86 | 246.11 | 775.41 | 286.00 | 4.43 | 8.92 |
| med04_5 | 1580.71 | 1685.21 | 1583.88 | 589.00 | 10.51 | 12.78 |
| lar01_1 | 142.18 | 0.00 | 142.47 | 122.00 | 1.00 | 2.43 |
| lar02_3 | 272.26 | 0.07 | 272.81 | 177.00 | 1.33 | 6.12 |
| lar03_2 | 691.81 | 98.43 | 693.19 | 283.00 | 5.57 | 9.45 |
| lar04_1 | 1387.07 | 1170.23 | 1389.85 | 506.00 | 9.39 | 13.21 |
| lar04_5 | 1438.07 | 1180.54 | 1440.95 | 503.00 | 9.95 | 13.67 |

| Weight | Carbon Emissions (kg CO₂) | Tardiness | Energy Consumption (kWh) | Makespan (minutes) | CPU (s) | Optimality Gap (%) |
|---|---|---|---|---|---|---|
| | 278.3329 | 44.82 | 278.8907 | 262 | 1.07 | 4.87 |
| | 281.3272 | 33.76 | 281.8910 | 233 | 1.07 | 4.52 |
| | 280.4681 | 45.38 | 281.0302 | 256 | 1.10 | 4.91 |
| | 287.2771 | 9.04 | 287.8528 | 196 | 1.77 | 4.23 |
| | 289.3713 | 10.24 | 289.9512 | 208 | 1.17 | 4.35 |
| | 280.1544 | 13.04 | 280.7158 | 206 | 1.03 | 4.41 |
| | 286.8900 | 7.88 | 287.4600 | 183 | 1.04 | 4.19 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).