Submitted: 14 April 2026

Posted: 15 April 2026

Abstract

Keywords:

1. Introduction

- We propose ADDFNet, a novel depth completion network for transparent objects. It addresses blurred edge perception and insufficient feature modeling capability by deeply integrating multi-directional differential enhancement with hybrid attention mechanisms.

- We design MDAM, which uses differential convolution to explicitly enhance gradient feature extraction, improving the restoration accuracy of transparent object contours against complex backgrounds.

- We construct DEDM and DSCA within MDAM and design CMFR, achieving adaptive feature perception and efficient integration of multi-source information, which improves the accuracy and stability of depth prediction.

- We evaluate ADDFNet on two public datasets, ClearPose [13] and TransCG [17]; the experimental results verify the effectiveness of the proposed method for transparent object depth completion.
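To make the idea of differential convolution in the second contribution concrete, the following is a minimal sketch of a central difference convolution in plain Python. It is an illustration of the general technique only, not the MDAM implementation: the function name `central_difference_conv`, the valid-mode border handling, and the list-of-lists image representation are all assumptions made for this example.

```python
from typing import List

Matrix = List[List[float]]


def central_difference_conv(image: Matrix, kernel: Matrix) -> Matrix:
    """Valid-mode central difference convolution (illustrative only).

    Each output value is sum_i w_i * (x_i - x_centre): the kernel weights
    are applied to differences against the patch centre instead of to raw
    intensities, so flat regions map to zero and edges respond strongly.
    """
    k = len(kernel)          # assumed square kernel with odd side length
    r = k // 2
    height, width = len(image), len(image[0])
    out: Matrix = []
    for y in range(r, height - r):
        row = []
        for x in range(r, width - r):
            centre = image[y][x]
            acc = 0.0
            for dy in range(-r, r + 1):
                for dx in range(-r, r + 1):
                    diff = image[y + dy][x + dx] - centre
                    acc += kernel[dy + r][dx + r] * diff
            row.append(acc)
        out.append(row)
    return out


ones = [[1.0] * 3 for _ in range(3)]
flat = [[5.0] * 3 for _ in range(3)]
step = [[0.0, 0.0, 1.0, 1.0] for _ in range(3)]

flat_response = central_difference_conv(flat, ones)   # no gradient
step_response = central_difference_conv(step, ones)   # vertical edge
```

With an all-ones kernel, the flat patch produces a zero response, while the step edge produces responses of opposite sign on either side of the boundary. This is the sense in which difference-based kernels emphasize gradient information.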

2. Related Work

2.1. General Depth Completion Methods

2.2. Transparent Object Depth Completion

2.3. Robotic Grasp Detection

3. Method

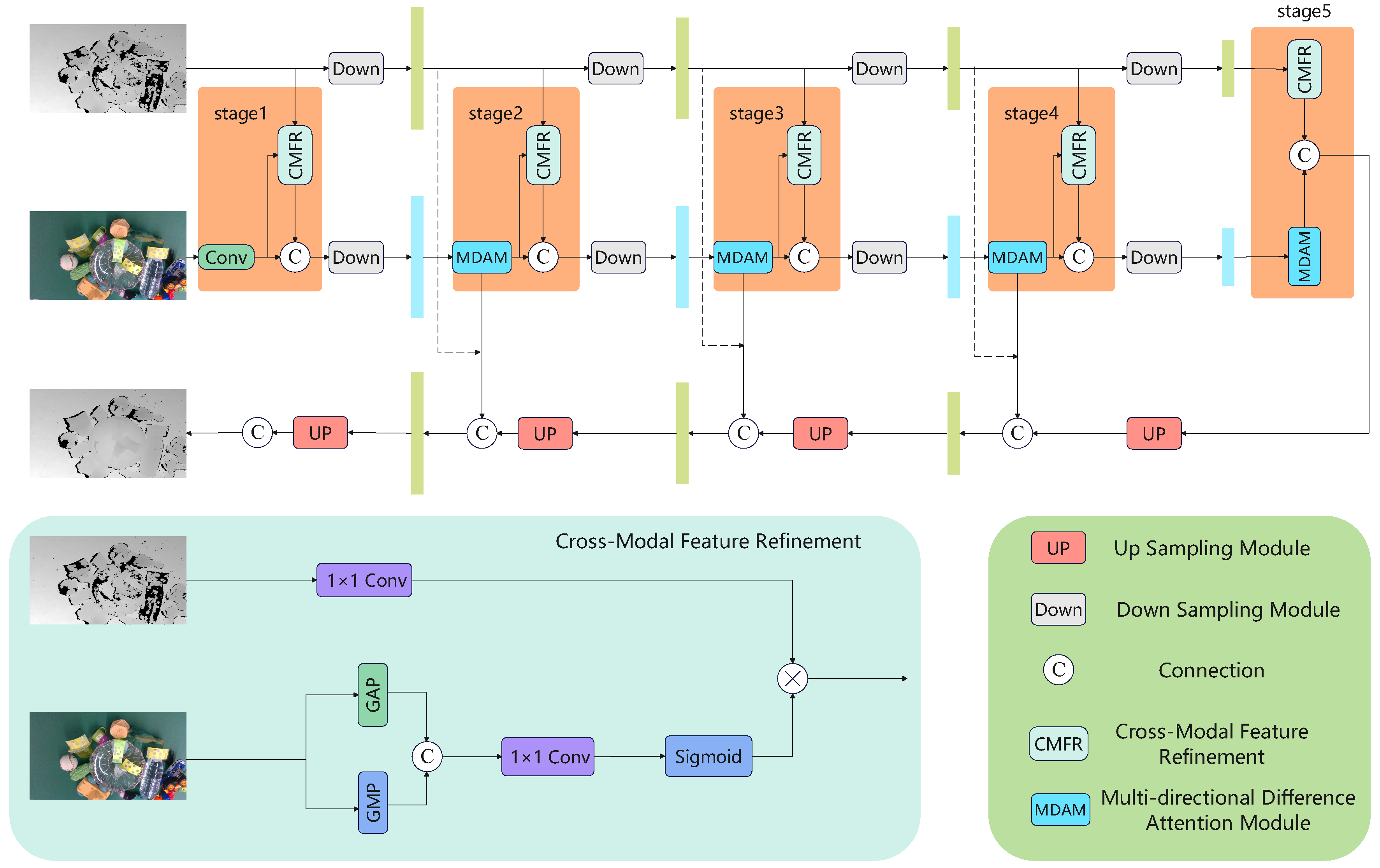

3.1. Overview

3.2. CMFR

3.3. MDAM

3.3.1. Overall Design

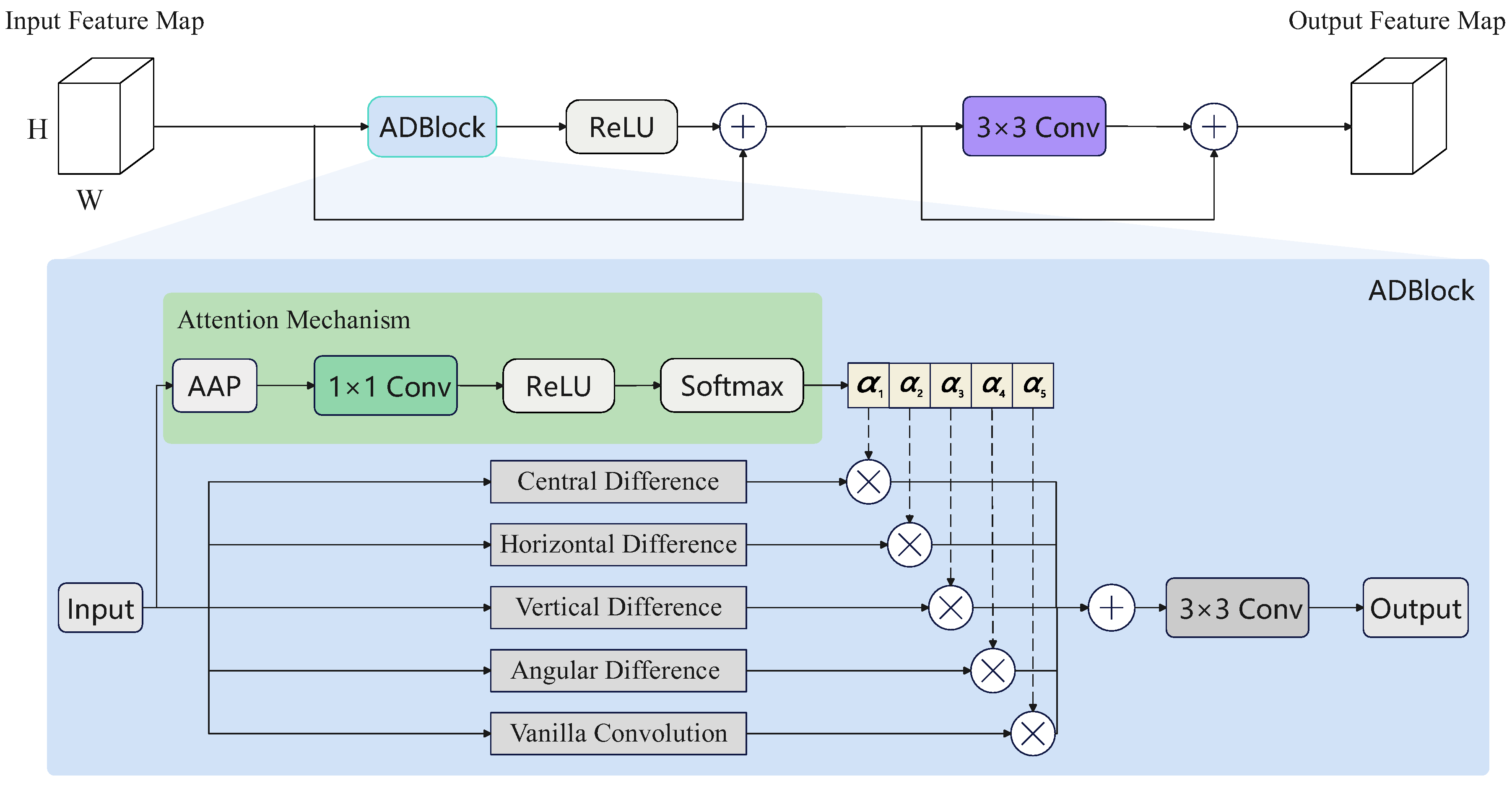

3.3.2. DEDM

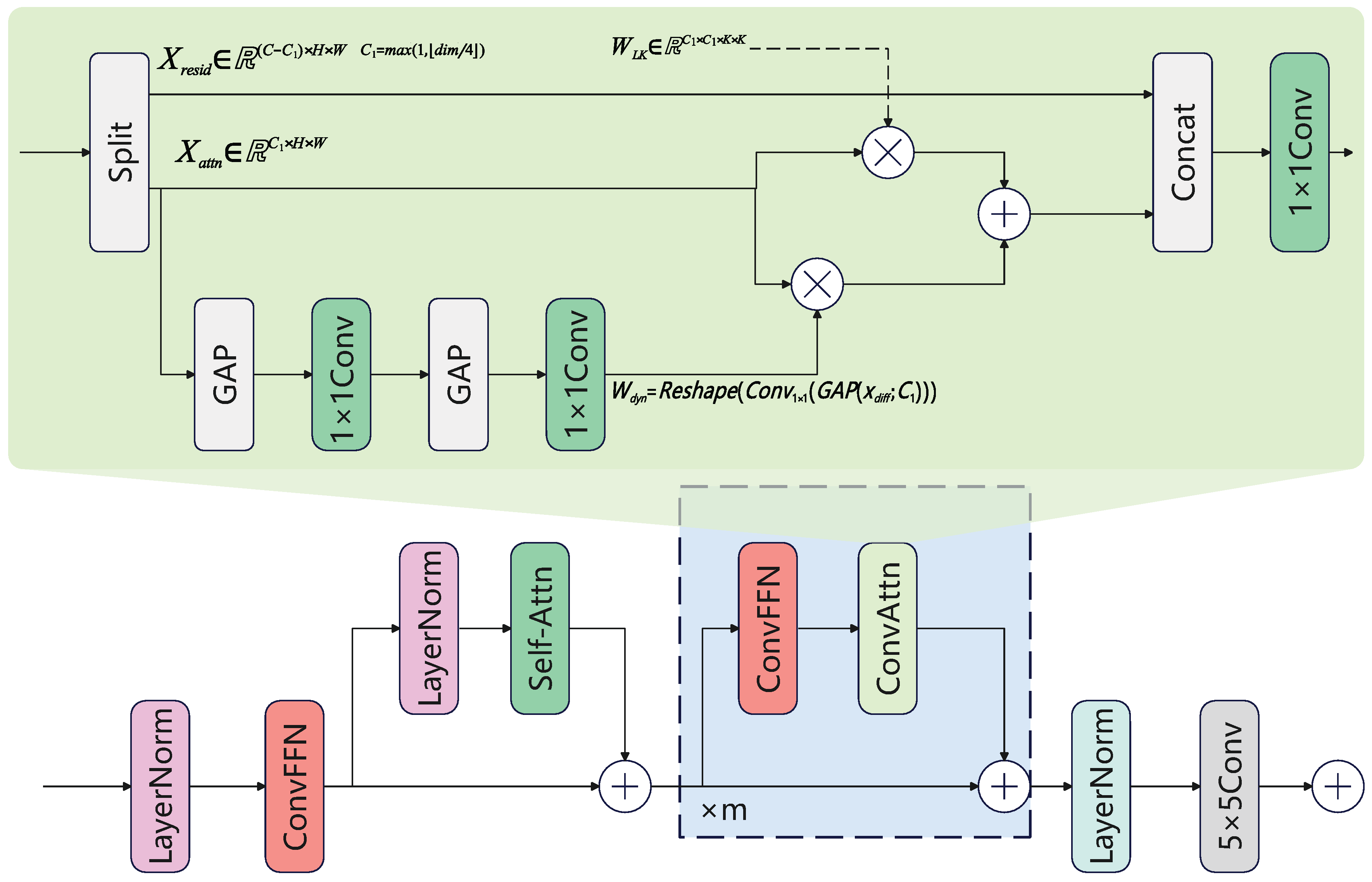

3.3.3. DSCA

3.4. Loss Function

4. Experiments

4.1. Datasets

4.2. Experimental Details

4.3. Evaluation Metrics

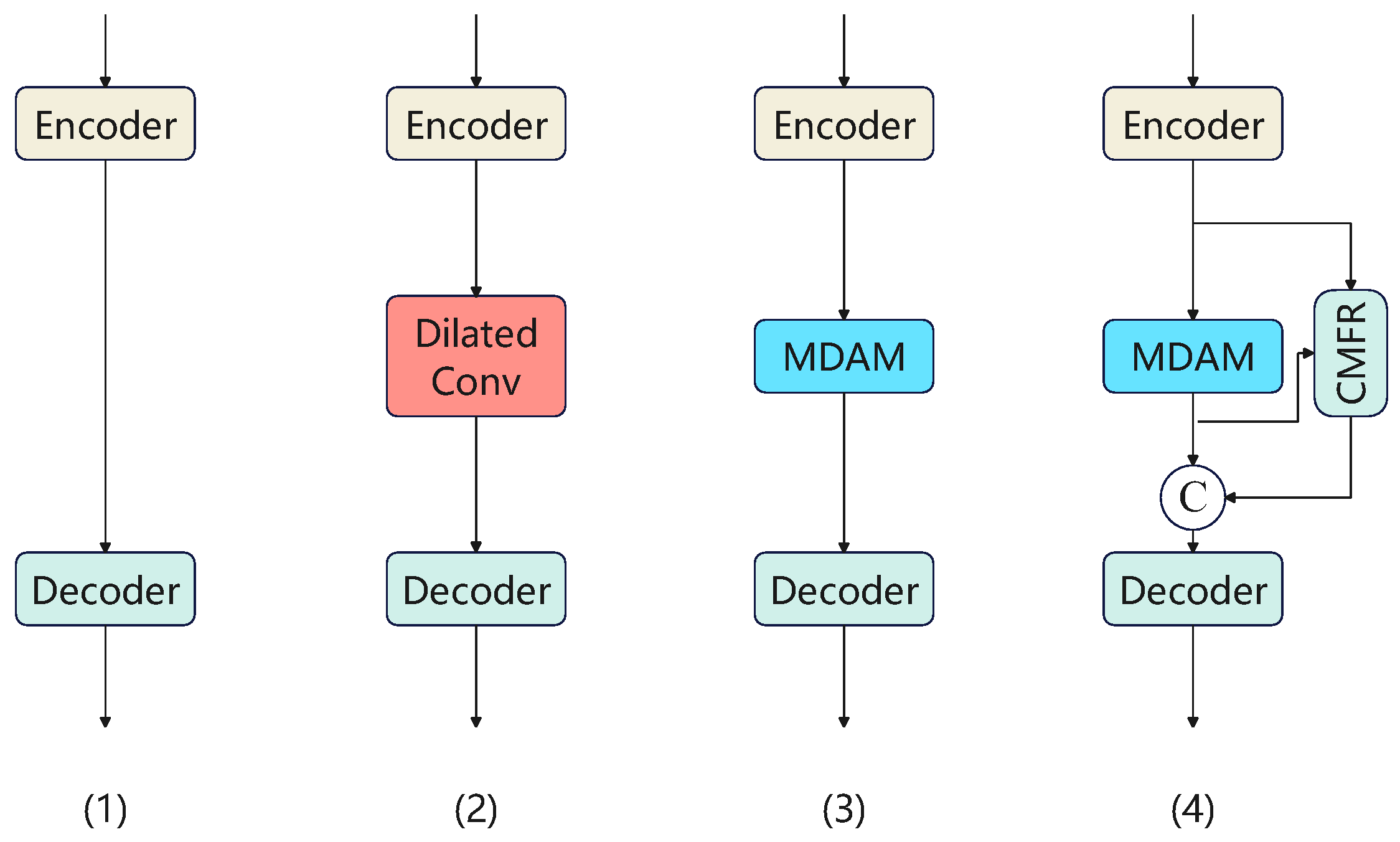
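For reference, the three error metrics that head the result tables (RMSE, REL, MAE) have standard definitions in the depth completion literature. The sketch below computes them in plain Python under two assumptions that are not stated in this text: depth maps are flattened to sequences of floats, and a ground-truth value of 0 marks an invalid pixel that is skipped. The function name `depth_error_metrics` is hypothetical.

```python
import math
from typing import Dict, Sequence


def depth_error_metrics(pred: Sequence[float], gt: Sequence[float]) -> Dict[str, float]:
    """Compute RMSE, REL and MAE over valid pixels (illustrative only).

    Assumption: gt == 0 means "no ground truth" and the pixel is ignored.
    """
    pairs = [(p, g) for p, g in zip(pred, gt) if g > 0]
    if not pairs:
        raise ValueError("no valid ground-truth pixels")
    n = len(pairs)
    # Root mean squared error: penalizes large depth errors more heavily.
    rmse = math.sqrt(sum((p - g) ** 2 for p, g in pairs) / n)
    # Mean absolute relative error: absolute error normalized by true depth.
    rel = sum(abs(p - g) / g for p, g in pairs) / n
    # Mean absolute error in the units of the depth map.
    mae = sum(abs(p - g) for p, g in pairs) / n
    return {"rmse": rmse, "rel": rel, "mae": mae}


# The last pixel has gt == 0 and is excluded from all three metrics.
metrics = depth_error_metrics([1.0, 2.0, 4.0, 9.9], [1.0, 2.5, 5.0, 0.0])
```

All three metrics are lower-is-better, which matches the ↓ markers in the table headers.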

4.4. Ablation Studies

4.4.1. Overall Experiments

4.4.2. Effectiveness of DEDM Sub-Component in MDAM

4.4.3. Effectiveness of DSCA Sub-Component in MDAM

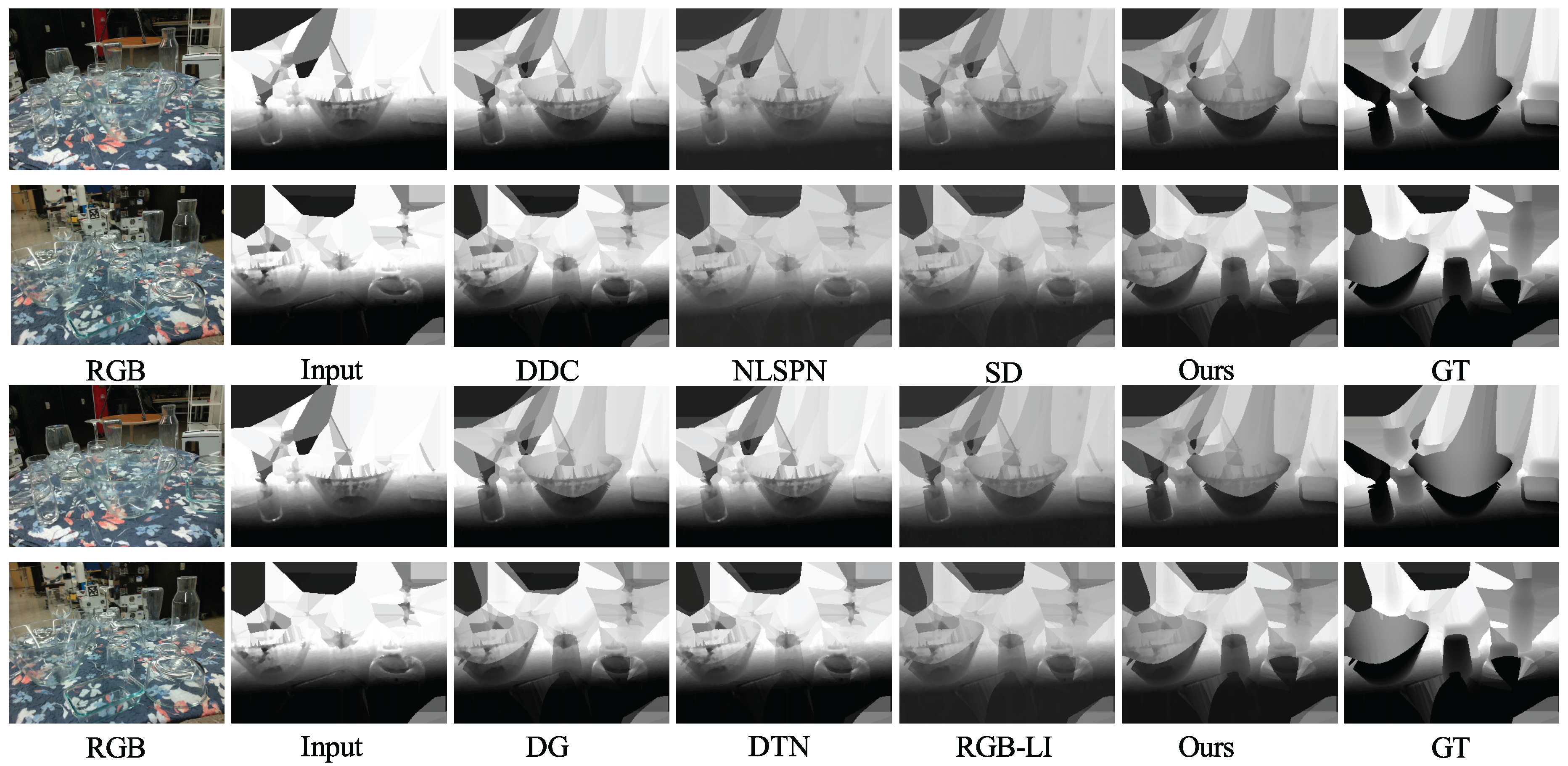

4.5. Comparison with SOTA Methods

5. Conclusion

6. Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Bicchi, A.; Kumar, V. Robotic grasping and contact: A review. In Proceedings of the 2000 ICRA Millennium Conference, IEEE International Conference on Robotics and Automation, Symposia Proceedings (Cat. No. 00CH37065); IEEE, 2000; Vol. 1, pp. 348–353.

- Redmon, J.; Angelova, A. Real-time grasp detection using convolutional neural networks. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2015; pp. 1316–1322.

- Qian, Y.; Gong, M.; Yang, Y.H. Transparent object reconstruction based on spatio-temporal light transport analysis. IEEE Transactions on Pattern Analysis and Machine Intelligence 2020, 42, 3060–3073.

- Xie, E.; Wang, W.; Wang, W.; Ding, M.; Shen, C.; Luo, P. Segmenting Transparent Objects in the Wild. In Proceedings of the European Conference on Computer Vision (ECCV), 2020; pp. 696–711.

- Schwarz, M.; Milan, A.; Selvam Periyasamy, A.; Behnke, S. RGB-D object detection and semantic segmentation for autonomous manipulation in clutter. The International Journal of Robotics Research 2017, 37, 437–451.

- Qi, C.R.; Yi, L.; Su, H.; Guibas, L.J. PointNet++: Deep hierarchical feature learning on point sets in a metric space. Advances in Neural Information Processing Systems 2017, 30, 5099–5108.

- Sajjan, S.; Moore, M.; Pan, M.; Nagaraja, G.; Lee, J.C.; Zeng, A.; Song, S. ClearGrasp: 3D Shape Estimation of Transparent Objects for Manipulation. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2020; pp. 3634–3642.

- Sajjan, S.; Moore, M.; Pan, M.; Nagaraja, G.; Lee, J.; Zeng, A.; Song, S. Learning to see transparent objects. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2020; pp. 1–8.

- He, K.; Sun, J.; Tang, X. Single image haze removal using dark channel prior. IEEE Transactions on Pattern Analysis and Machine Intelligence 2011, 33, 2341–2353.

- Zhu, Q.; Mai, J.; Shao, L. A fast single image haze removal algorithm using color attenuation prior. IEEE Transactions on Image Processing 2015, 24, 3522–3533.

- Nayar, S.K.; Ikeuchi, K.; Kanade, T. Shape from interreflections. International Journal of Computer Vision 1991, 6, 173–195.

- Kutulakos, K.N.; Steger, E. A theory of refractive and specular 3D shape by light-path triangulation. International Journal of Computer Vision 2008, 76, 13–29.

- Chen, X.; Zhang, H.; Yu, Z.; Opipari, A.; Jenkins, O.C. ClearPose: Large-scale Transparent Object Dataset and Benchmark. In Proceedings of the European Conference on Computer Vision, 2022.

- Xu, H.; Wang, Y.R.; Eppel, S.; Aspuru-Guzik, A.; Shkurti, F.; Garg, A. Seeing Glass: Joint Point Cloud and Depth Completion for Transparent Objects. Proceedings of Machine Learning Research 2021, 164, 827–838.

- Huang, Y.; Chen, J.; Michiels, N.; Asim, M.; Claesen, L.; Liu, W. DistillGrasp: Integrating Features Correlation With Knowledge Distillation for Depth Completion of Transparent Objects. IEEE Robotics and Automation Letters 2024, 9, 8945–8952.

- Hua, Z.; Yu, S.; Wang, W.; Guan, Y.; Xia, Y. TCRNet: Transparent Object Depth Completion With Cascade Refinements. IEEE Transactions on Automation Science and Engineering, 2024.

- Fang, H.S.; Wang, J.; Gou, Y.; Lu, H. TransCG: A Large-Scale Real-World Dataset for Transparent Object Depth Completion and A Grasping Baseline. IEEE Robotics and Automation Letters 2022, 7, 7383–7390.

- Ma, F.; Karaman, S. Sparse-to-Dense: Depth Prediction from Sparse Depth Samples and a Single Image. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops (CVPRW), 2018; pp. 479–487.

- Zhang, Y.; Funkhouser, T. Deep Depth Completion of a Single RGB-D Image. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2018; pp. 175–185.

- Cheng, X.; Wang, P.; Yang, R. Depth Estimation via Affinity Learned with Convolutional Spatial Propagation Network. In Proceedings of the European Conference on Computer Vision (ECCV), 2018; pp. 103–119.

- Tang, J.; Tian, F.P.; Feng, W.; Li, J.; Tan, P. GuideNet: Guided Anisotropic Diffusion for Depth Completion. In Proceedings of the European Conference on Computer Vision (ECCV), 2020.

- Hu, M.; Wang, S.; Li, B.; Ning, S.; Fan, L.; Gong, X. FuseNet: An RGB-D Fusion Architecture for Depth Completion. IEEE Robotics and Automation Letters 2019, 4, 4424–4431.

- Park, J.; Joo, K.; Hu, Z.; Liu, C.K.; So Kweon, I. Non-local Spatial Propagation Network for Depth Completion. In Proceedings of the European Conference on Computer Vision (ECCV), 2020; pp. 120–136.

- Li, X.; Liu, Y.; Chen, X.; Wang, Y. ACMNet: Adaptive Context-Aware Multi-Scale Network for Depth Completion. IEEE Transactions on Image Processing, 2021.

- Hu, M.; Wang, S.; Li, B.; Ning, S.; Fan, L.; Gong, X. PENet: Towards Precise and Efficient Image Guided Depth Completion. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2021.

- Zhu, L.; Mousavian, A.; Xiang, Y.; Mazhar, H.; van Eenbergen, J.; Debnath, S.; Fox, D. RGB-D Local Implicit Function for Depth Completion of Transparent Objects. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2021; pp. 4647–4656.

- Ku, J.; Harakeh, A.; Waslander, S.L. DeepLiDAR: Deep Surface Normal Guided Depth Prediction for Outdoor Scene From Sparse LiDAR Data and Single Color Image. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), 2019; pp. 3313–3322.

- Yan, Z.; Zhang, R.; Wang, X. PENet: Penetration-Aware Depth Completion for Transparent Objects. arXiv 2019, arXiv:1907.00000.

- Liu, B.; Li, H.; Wang, Z.; Xue, T. Transparent Depth Completion Using Segmentation Features. ACM Transactions on Multimedia Computing, Communications, and Applications 2024, 20, 373:1–373:19.

- Chen, Z.; Liu, Y.; Wang, P. SRNet-Trans: A Signal-Image Guided Depth Completion Regression Network for Transparent Object. Applied Sciences 2023, 15, 10566.

- Li, J.; Zhang, R.; Wang, X. Consistent Depth Prediction for Transparent Object Reconstruction from RGB-D Camera. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), 2023; pp. 4567–4576.

- Hu, J.; Shen, L.; Sun, G. Squeeze-and-excitation networks. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2018; pp. 7132–7141.

- Woo, S.; Park, J.; Lee, J.Y.; So Kweon, I. Convolutional block attention module. In Proceedings of the European Conference on Computer Vision (ECCV), 2018; pp. 3–19.

- Su, Z.; Liu, W.; Yu, Z.; Hu, D.; Liao, Q.; Tian, L.; Pietikainen, M.; Liu, L. Pixel difference networks for efficient edge detection. In Proceedings of the International Conference on Computer Vision (ICCV), 2021; pp. 5117–5127.

- Su, Z.; Liu, W.; Yu, Z.; Hu, D.; Liao, Q.; Tian, L.; Gao, X.; Pietikainen, M. Pixel difference convolutional neural networks. IEEE Transactions on Pattern Analysis and Machine Intelligence 2023, 45, 4296–4310.

- Duan, K.; Bai, S.; Xie, L.; Qi, H.; Huang, Q.; Tian, Q. CenterNet: Keypoint Triplets for Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), 2019; pp. 6569–6578.

- Chen, Y.; Dai, X.; Liu, M.; Chen, D.; Yuan, L.; Liu, Z. Dynamic convolution: Attention over convolution kernels. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2020; pp. 11030–11039.

- Sobel, I.; Feldman, G. A 3x3 isotropic gradient operator for image processing. A Talk at the Stanford Artificial Intelligence Project; 1968.

- Ding, X.; Zhang, X.; Han, J.; Ding, G. Scaling up your kernels to 31x31: Revisiting large kernel design in CNNs. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 11963–11975.

- Ranftl, R.; Bochkovskiy, A.; Koltun, V. Vision transformers for dense prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021; pp. 12179–12188.

- Ding, X.; Zhang, X.; Han, J.; Ding, G. Scaling up your kernels to 31x31: Revisiting large kernel design in CNNs. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 11963–11975.

- Liu, Z.; Mao, H.; Wu, C.Y.; Feichtenhofer, C.; Darrell, T.; Xie, S. ConvNeXt: A pure ConvNet for the 2020s. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2022; pp. 11976–11986.

- Yang, B.; Bender, G.; Le, Q.V.; Ngiam, J. CondConv: Conditionally parameterized convolutions for efficient inference. Advances in Neural Information Processing Systems 2019, 32.

- Chen, Z.; He, Y.; Shiri, I.; Li, H.; Li, Y.; Zhang, J.; Guo, X.; Li, H.; Wang, Q. DEA-Net: Single image dehazing based on detail-enhanced convolution and content-guided attention. IEEE Transactions on Image Processing 2024, 33, 1002–1015.

- Ding, X.; Zhang, X.; Ma, N.; Han, J.; Ding, G.; Sun, J. RepVGG: Making VGG-style ConvNets Great Again. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2021; pp. 13733–13742.

- Cohen, T.; Welling, M. Group equivariant convolutional networks. In Proceedings of the International Conference on Machine Learning, 2016; pp. 2990–2999.

- Johnson, J.; Alahi, A.; Fei-Fei, L. Perceptual losses for real-time style transfer and super-resolution. In Proceedings of the European Conference on Computer Vision (ECCV), 2016; pp. 694–711.

- Simonyan, K.; Zisserman, A. Very deep convolutional networks for large-scale image recognition. In Proceedings of the International Conference on Learning Representations (ICLR), 2015.

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. ImageNet: A large-scale hierarchical image database. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 2009; pp. 248–255.

- Eigen, D.; Puhrsch, C.; Fergus, R. Depth map prediction from a single image using a multi-scale deep network. Advances in Neural Information Processing Systems 2014, 27, 2366–2374.

- Gao, H.; Liu, X.; Qu, M.; Huang, S. PDANet: Self-supervised monocular depth estimation using perceptual and data augmentation consistency. Applied Sciences 2021, 11, 5383.

- Li, T.; Chen, Z.; Liu, H.; Wang, C. FDCT: Fast Depth Completion for Transparent Objects. IEEE Robotics and Automation Letters 2023, 8, 5823–5830.

- Wang, S.; Zhu, X.; Zhang, Y.; Li, S.; Liu, Y.; Wang, J. TODE-Trans: Transparent Object Depth Estimation with Transformer. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), 2023; pp. 1–8.

- Sun, X.; Ponce, J.; Wang, Y.X. Revisiting deformable convolution for depth completion. In Proceedings of the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), 2023; pp. 1234–1245.

- Guo, M.H.; Lu, C.Z.; Liu, Z.N.; Hu, M.M.; Zhang, T.J.; Hu, S.M. Visual Attention Network. Computational Visual Media 2023, 9, 733–752.

- Zhang, S.; Xu, S.; Li, X.; Yang, J. Edge-aware spatial propagation network for multi-view depth estimation. Neural Processing Letters 2023, 55, 1–20.

| Configuration | RMSE↓ | REL↓ | MAE↓ | | | |
|---|---|---|---|---|---|---|
| Baseline | 0.055 | 0.080 | 0.040 | 55.42 | 71.14 | 85.37 |
| Baseline + Dilated Conv | 0.043 | 0.061 | 0.028 | 68.90 | 86.20 | 91.24 |
| Baseline + MDAM | 0.040 | 0.056 | 0.025 | 73.90 | 89.50 | 98.30 |
| Baseline + MDAM + CMFR | 0.037 | 0.052 | 0.022 | 77.20 | 91.80 | 98.60 |

| Module Configuration | RMSE↓ | REL↓ | MAE↓ | | | |
|---|---|---|---|---|---|---|
| DEDM | 0.037 | 0.052 | 0.022 | 77.20 | 91.80 | 98.60 |
| DEDM → FDCT [52] | 0.046 | 0.065 | 0.030 | 68.50 | 85.20 | 97.50 |
| DEDM → TODE-Trans [53] | 0.045 | 0.064 | 0.029 | 69.60 | 86.00 | 97.70 |
| DEDM → DCDC [54] | 0.049 | 0.068 | 0.032 | 66.20 | 83.90 | 97.20 |
| DEDM → VAN [55] | 0.053 | 0.073 | 0.035 | 63.10 | 81.50 | 96.80 |

| Module Configuration | RMSE↓ | REL↓ | MAE↓ | | | |
|---|---|---|---|---|---|---|
| DSCA | 0.037 | 0.052 | 0.022 | 77.20 | 91.80 | 98.60 |
| DSCA → DC [37] | 0.054 | 0.074 | 0.035 | 62.80 | 80.90 | 96.50 |
| DSCA → LKA [41] | 0.051 | 0.071 | 0.033 | 64.60 | 82.50 | 96.90 |
| DSCA → VAN [55] | 0.046 | 0.065 | 0.030 | 68.90 | 85.80 | 97.60 |
| DSCA → EASP [56] | 0.048 | 0.067 | 0.031 | 67.00 | 84.30 | 97.30 |

| Method | RMSE↓ | REL↓ | MAE↓ | | | |
|---|---|---|---|---|---|---|
| DeepDepthCompletion [19] | 0.045 | 0.062 | 0.035 | 62.50 | 83.80 | 97.20 |
| NLSPN [23] | 0.041 | 0.058 | 0.031 | 66.25 | 85.90 | 96.60 |
| Sparse-to-Dense [18] | 0.047 | 0.064 | 0.037 | 61.80 | 84.50 | 97.10 |
| DistillGrasp [15] | 0.038 | 0.056 | 0.027 | 70.40 | 89.25 | 98.40 |
| DualTransNet [29] | 0.037 | 0.055 | 0.026 | 72.15 | 90.10 | 97.55 |
| RGB-D Local Implicit [26] | 0.036 | 0.054 | 0.024 | 73.25 | 90.65 | 98.70 |
| Ours | 0.029 | 0.045 | 0.016 | 78.83 | 94.74 | 99.15 |

| Method | RMSE↓ | REL↓ | MAE↓ | | | |
|---|---|---|---|---|---|---|
| DeepDepthCompletion [19] | 0.175 | 0.090 | 0.068 | 35.40 | 70.25 | 94.85 |
| NLSPN [23] | 0.158 | 0.075 | 0.055 | 47.20 | 76.80 | 96.25 |
| Sparse-to-Dense [18] | 0.180 | 0.092 | 0.070 | 34.15 | 69.10 | 94.60 |
| DistillGrasp [15] | 0.142 | 0.062 | 0.046 | 55.80 | 82.40 | 97.15 |
| DualTransNet [29] | 0.138 | 0.060 | 0.044 | 58.90 | 83.95 | 97.50 |
| RGB-D Local Implicit [26] | 0.146 | 0.064 | 0.048 | 53.75 | 81.20 | 96.95 |
| Ours | 0.110 | 0.033 | 0.028 | 76.33 | 93.84 | 98.88 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).