Submitted:

05 April 2026

Posted:

14 April 2026


Abstract

Keywords:

1. Introduction

- Memory integrity — preventing unauthorised reads, stale writes, and PII leakage through memory channels.

- Tool call safety — enforcing approval gates, sequencing constraints, and resource access permissions.

- MCP/skill invocation protocols — guaranteeing liveness (every request is answered) and scope (only allowed skills are invoked).

- Human interaction boundaries — ensuring critical actions receive bounded-time human confirmation and that no deceptive content is returned.

- 1.

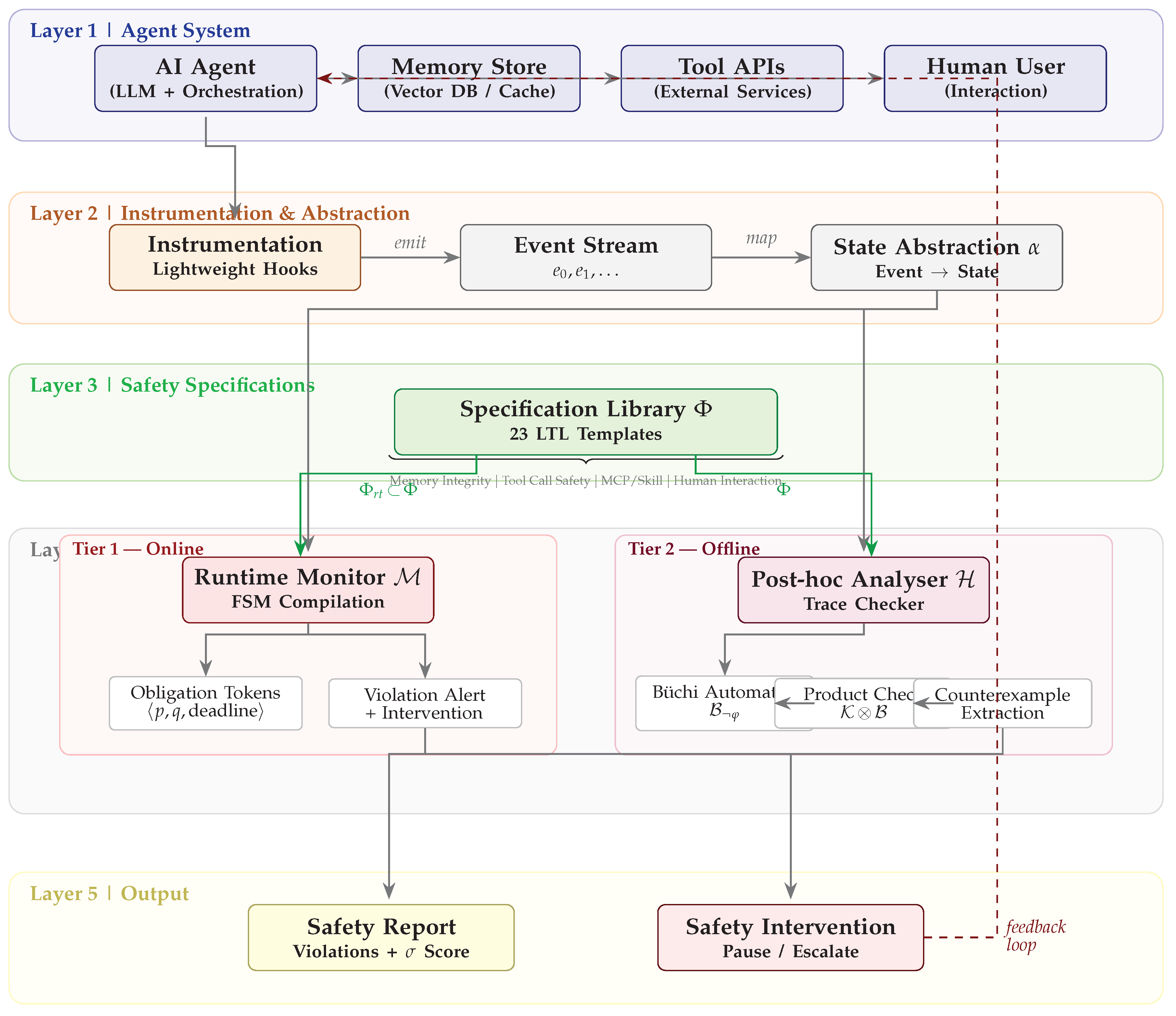

- Formal Agent Safety Specification Library (Section 3). We introduce a compositional library of 23 temporal logic templates for specifying safety properties related to memory, tool use, MCP/skill invocations, and human interactions in autonomous agents. Each template is parameterised, allowing domain-specific instantiation without modifying the core verification engine.

- 2.

- Hybrid Verification Architecture (Section 3). We design and implement AgentVerify, integrating runtime monitoring and post-hoc trace analysis to verify agent behaviour against formal specifications. The runtime component operates with overhead per event; the post-hoc component achieves polynomial-time trace checking via Büchi automaton construction.

- 3.

- Empirical Validation (Section 5). We conduct a rigorous evaluation on a synthetic benchmark of 15 agent scenarios across two difficulty tiers, introducing safety-centric metrics: false negative rate for catastrophic failures and autonomy preservation score. Our post-hoc analysis achieves mean accuracy, establishing a new reference point for formal-methods-based agent safety evaluation.

2. Related Work

2.1. Safety Verification for AI and Autonomous Systems

2.2. Model Checking and Temporal Logic for AI

2.3. Runtime Verification and Post-Hoc Analysis

2.4. Agent Safety Benchmarks and Evaluation

2.5. Privilege Control and Access Policy Enforcement

3. Method

3.1. Problem Formulation

- S is a finite set of observable system states, capturing the agent’s control-flow context (e.g., awaiting_human, tool_invoked, memory_locked).

- is the initial state.

- is the transition function, where is a set of observable events (e.g., tool_call, memory_write, human_message).

- is the set of input interface states.

- is the set of output action states.

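- $\mathbf{X}\,\varphi$ (Next): $\varphi$ holds in the next state.

- $\varphi\,\mathbf{U}\,\psi$ (Until): $\varphi$ holds until $\psi$ holds.

- $\mathbf{G}\,\varphi$ (Globally): $\varphi$ holds in every state.

- $\mathbf{F}\,\varphi$ (Eventually): $\varphi$ holds in some future state.

- $\mathbf{F}_{\le k}\,\varphi$ (Bounded Eventually): $\varphi$ holds within the next $k$ steps.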
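For reference, the operators listed above can be given the usual finite-trace reading at position $i$ of a trace $\pi = s_0 s_1 \dots s_T$. This is a textbook formulation stated as an assumption, not the paper's own definition: the symbols $\pi$, $i$, $T$, $\varphi$, and $\psi$ are chosen here for illustration, and details such as the treatment of $\mathbf{X}$ at the last position or whether the bounded window includes the current step may differ.

```latex
\begin{align*}
\pi, i \models \mathbf{X}\,\varphi
  &\iff i < T \ \text{and}\ \pi, i+1 \models \varphi \\
\pi, i \models \varphi\,\mathbf{U}\,\psi
  &\iff \exists j \in [i, T]:\ \pi, j \models \psi
        \ \text{and}\ \forall l \in [i, j):\ \pi, l \models \varphi \\
\pi, i \models \mathbf{G}\,\varphi
  &\iff \forall j \in [i, T]:\ \pi, j \models \varphi \\
\pi, i \models \mathbf{F}\,\varphi
  &\iff \exists j \in [i, T]:\ \pi, j \models \varphi \\
\pi, i \models \mathbf{F}_{\le k}\,\varphi
  &\iff \exists j \in [i, \min(i+k, T)]:\ \pi, j \models \varphi
\end{align*}
```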

3.2. The AgentVerify Architecture

3.2.1. Tier 1: Runtime Monitoring Component

1. Iterates over the active obligation tokens and checks, for each token, whether q holds at the current step (fulfilling the token) or the token's deadline has passed (a violation).

2. Checks whether p holds at the current step; if so, creates a new obligation token with a property-specific bound.

3. On violation, issues a runtime alert and (optionally) triggers a safety intervention (pause agent, request human review).
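The three monitoring steps above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the AgentVerify implementation: the class names `ResponseSpec`, `Obligation`, and `RuntimeMonitor`, the dictionary-shaped events, and the convention that a response must arrive strictly after the trigger and no later than `bound` steps afterwards are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

Event = Dict[str, object]            # hypothetical event record
Predicate = Callable[[Event], bool]


@dataclass
class ResponseSpec:
    """Whenever `trigger` (p) holds, `response` (q) must hold within `bound` steps."""
    name: str
    trigger: Predicate
    response: Predicate
    bound: int


@dataclass
class Obligation:
    spec: ResponseSpec
    deadline: int  # last step index at which the response is still on time


@dataclass
class RuntimeMonitor:
    specs: List[ResponseSpec]
    step: int = 0
    active: List[Obligation] = field(default_factory=list)

    def observe(self, event: Event) -> List[str]:
        """Process one event; return names of specs violated at this step."""
        violations: List[str] = []
        still_open: List[Obligation] = []
        # Step 1: discharge fulfilled tokens, flag expired ones.
        for ob in self.active:
            if ob.spec.response(event):
                continue                          # q holds: token fulfilled
            if self.step >= ob.deadline:
                violations.append(ob.spec.name)   # deadline reached without q
            else:
                still_open.append(ob)
        # Step 2: open a new token for each spec whose trigger p holds now.
        for spec in self.specs:
            if spec.trigger(event):
                still_open.append(Obligation(spec, self.step + spec.bound))
        self.active = still_open
        self.step += 1
        # Step 3: the caller decides how to react (alert, pause, human review).
        return violations


spec = ResponseSpec(
    name="mcp_response",
    trigger=lambda e: e["type"] == "mcp_request",
    response=lambda e: e["type"] == "mcp_response",
    bound=2,
)

late = [{"type": "mcp_request"}, {"type": "tool_call"}, {"type": "memory_write"}]
monitor = RuntimeMonitor([spec])
alerts_late = [monitor.observe(e) for e in late]

on_time = [{"type": "mcp_request"}, {"type": "mcp_response"}]
monitor = RuntimeMonitor([spec])
alerts_on_time = [monitor.observe(e) for e in on_time]
```

In this sketch the work per event is proportional to the number of open tokens plus the number of compiled specifications, and no trace history is stored beyond the tokens themselves.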

3.2.2. Tier 2: Post-Hoc Analysis Component

1. Trace encoding. The recorded trace is encoded as a finite Kripke path.

2. Automaton construction. For each specification, a non-deterministic Büchi automaton for its negation is constructed using the standard LTL-to-automaton translation.

3. Product check. The product of the trace encoding and the automaton is checked for non-emptiness; a non-empty result yields a counterexample trace witnessing the violation of the specification.

4. Report generation. The analyser produces a structured safety report: (i) a list of all violated specifications, (ii) the minimal counterexample trace for each violation, and (iii) a safety score.
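To make the post-hoc pipeline concrete, the sketch below checks specifications against a recorded finite trace and assembles a small report. It deliberately swaps in a simpler technique: formulas are evaluated recursively on the trace instead of building a Büchi automaton and checking a product. The tuple encoding of formulas, the function names, the use of a violation position in place of a minimal counterexample trace, and the safety score (taken here as the fraction of satisfied specifications) are all assumptions made for illustration.

```python
from typing import Dict, List, Optional, Set

Trace = List[Set[str]]   # each step is the set of atomic propositions that hold


def holds(f: tuple, trace: Trace, i: int = 0) -> bool:
    """Evaluate formula `f` at position `i` of a finite, non-empty trace."""
    op, n = f[0], len(trace)
    if op == "ap":
        return f[1] in trace[i]
    if op == "not":
        return not holds(f[1], trace, i)
    if op == "and":
        return holds(f[1], trace, i) and holds(f[2], trace, i)
    if op == "implies":
        return (not holds(f[1], trace, i)) or holds(f[2], trace, i)
    if op == "X":
        return i + 1 < n and holds(f[1], trace, i + 1)
    if op == "G":
        return all(holds(f[1], trace, j) for j in range(i, n))
    if op == "F":
        return any(holds(f[1], trace, j) for j in range(i, n))
    if op == "Fk":                      # ("Fk", k, g): g within the next k steps
        k, g = f[1], f[2]
        return any(holds(g, trace, j) for j in range(i, min(i + k, n - 1) + 1))
    if op == "U":
        for j in range(i, n):
            if holds(f[2], trace, j):
                return True
            if not holds(f[1], trace, j):
                return False
        return False
    raise ValueError(f"unknown operator: {op}")


def violation_position(f: tuple, trace: Trace) -> Optional[int]:
    """First position where a top-level G body fails; 0 for other violated formulas."""
    if f[0] == "G":
        for j in range(len(trace)):
            if not holds(f[1], trace, j):
                return j
        return None
    return None if holds(f, trace) else 0


def analyse(specs: Dict[str, tuple], trace: Trace) -> Dict[str, object]:
    violated = {}
    for name, formula in specs.items():
        pos = violation_position(formula, trace)
        if pos is not None:
            violated[name] = pos
    score = (len(specs) - len(violated)) / len(specs)   # assumed score definition
    return {"violated": violated, "score": score}


specs = {
    "no_pii_write": ("G", ("not", ("ap", "pii_write"))),
    "mcp_liveness": ("G", ("implies", ("ap", "mcp_request"),
                           ("Fk", 2, ("ap", "mcp_response")))),
}
trace = [{"mcp_request"}, set(), {"mcp_response"}, {"pii_write"}]
report = analyse(specs, trace)
```

The recursive evaluator is easy to read but re-evaluates subformulas repeatedly; an automaton-based checker, as described in the steps above, avoids that cost on long traces.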

3.3. Temporal Logic Specification Library

3.4. Automated Specification Generation

1. Structured NL parsing. Each natural-language requirement is parsed to extract three attributes: (i) modality, one of invariant, forbid, liveness, bounded, or allowlist, determined by keyword triggers (e.g., “never” → forbid, “eventually” → liveness); (ii) domain, one of memory, tool, mcp, or human, identified by domain-specific terms (e.g., “memory read” → memory); and (iii) temporal qualifier, a bounded-horizon value k when the requirement specifies a time window (e.g., “within 5 steps”).

2. Template matching. The parsed attributes are matched against the 23-template specification library using a TF-IDF-inspired scoring function that weights phrase-level pattern matches more heavily than individual keyword hits. If the best-matching template exceeds a confidence threshold, its LTL formula is instantiated with the extracted domain predicates and returned as the formal specification.

3. Rule-based composition. For requirements that lack a close template match (typically novel or cross-domain requirements), the generator falls back to composing LTL formulas from primitive temporal patterns, one associated with each modality (invariant, forbid, liveness, bounded, and allowlist). The composed formula is passed through a lightweight syntactic validator that checks parenthesisation balance, temporal-operator nesting depth, and a set of anti-patterns common in erroneous LTL (e.g., vacuously true formulas).
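The parsing and rule-based composition steps can be illustrated with a short Python sketch. It is an assumption-laden example, not the generator described above: only the triggers “never” and “eventually” come from the text, while the other keyword triggers, the domain terms, the per-modality formula patterns in `PATTERNS`, and the function names are hypothetical. Template matching with TF-IDF-style scoring is omitted, and the validator only checks parenthesis balance.

```python
import re
from typing import Dict, Optional

# Keyword triggers, checked in insertion order. Only "never" and "eventually"
# are taken from the text; the rest are illustrative assumptions.
MODALITY_TRIGGERS = {
    "forbid": ["never"],
    "bounded": ["within"],
    "liveness": ["eventually"],
    "allowlist": ["only"],
    "invariant": ["always"],
}
DOMAIN_TERMS = {
    "memory": ["memory"],
    "tool": ["tool"],
    "mcp": ["mcp", "skill"],
    "human": ["human", "user"],
}
# Assumed primitive pattern per modality (p = trigger, q = response or allowed set).
PATTERNS = {
    "invariant": "G({p})",
    "forbid": "G(!{p})",
    "liveness": "G({p} -> F({q}))",
    "bounded": "G({p} -> F<={k}({q}))",
    "allowlist": "G({p} -> {q})",
}


def parse_requirement(text: str) -> Dict[str, Optional[object]]:
    """Extract modality, domain, and bounded-horizon value k from a requirement."""
    lowered = text.lower()
    modality = next((m for m, kws in MODALITY_TRIGGERS.items()
                     if any(kw in lowered for kw in kws)), None)
    domain = next((d for d, terms in DOMAIN_TERMS.items()
                   if any(t in lowered for t in terms)), None)
    match = re.search(r"within\s+(\d+)\s+steps?", lowered)
    k = int(match.group(1)) if match else None
    return {"modality": modality, "domain": domain, "k": k}


def balanced(formula: str) -> bool:
    """Syntactic check: every ')' closes an earlier '(' and none stay open."""
    depth = 0
    for ch in formula:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0


def compose(modality: str, p: str, q: str = "", k: Optional[int] = None) -> str:
    """Instantiate the assumed pattern for `modality` and validate it."""
    formula = PATTERNS[modality].format(p=p, q=q, k=k)
    if not balanced(formula):
        raise ValueError(f"unbalanced formula: {formula}")
    return formula


parsed = parse_requirement("Every MCP request must receive a response within 50 steps")
formula = compose(str(parsed["modality"]), "mcp_request", "mcp_response", parsed["k"])

parsed_forbid = parse_requirement("The agent must never write PII to memory")
formula_forbid = compose(str(parsed_forbid["modality"]), "pii_write")
```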

4. Experiments

4.1. Experimental Setup

1. Trace generation. The agent is prompted with the scenario’s initial user prompt and allowed to run until termination (natural end, tool error, or maximum step limit). The instrumentation layer records the complete event stream of T events, where T is scenario-dependent.

2. Verification. Each method processes the recorded trace and produces a binary verdict (violation detected / not detected) and, where applicable, a structured safety report with violation location and counterexample trace.

3. Repetition. Steps 1–2 are repeated for 3 random seeds, varying the LLM temperature and scenario presentation order to assess sensitivity to stochastic variation.

4.2. Baselines and Compared Methods

1. Monolithic Neural Verifier (MNV). A fine-tuned BERT-based classifier trained to predict safety violations from raw concatenated text traces. This baseline directly attempts to verify the neural component’s outputs [14].

2. Runtime Monitoring w/o LTL (RM-noLTL). A runtime monitor using hand-coded imperative rules (e.g., `if tool = "delete" then require_approval()`) without temporal logic. This tests the value added by formal temporal reasoning.

3. Monolithic Contract Verification (MCV). Pre- and post-condition contracts for each agent action, checked locally at each step. Unlike AgentVerify, this method does not reason about event sequences.

4. AgentVerify (Post-hoc Behavioral Analysis) [ours]. Tier-2 post-hoc analyser applied to the full execution trace (Section 3.2.2).

5. AgentVerify (Hybrid Runtime LTL) [ours]. Tier-1 runtime monitor with LTL-compiled obligation tokens (Section 3.2.1).

6. AgentVerify (Compositional Assume-Guarantee) [ours]. A variant that decomposes the system into sub-components and applies assume-guarantee reasoning to compose partial proofs.

7. AgentVerify (Full Hybrid) [ours]. Combination of Tiers 1 and 2 with cross-layer reconciliation.
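The difference between step-local contract checking and sequence-level reasoning can be seen in a small Python sketch. Everything here is hypothetical: the precondition table, the action dictionaries, and the function names are illustrative, post-conditions are left out for brevity, and the code is not the baseline implementation used in the experiments. It only shows a trace in which every action passes its local precondition while a sequence property from the specification library (“Delete never followed by email”) is still violated.

```python
from typing import Callable, Dict, List

State = Dict[str, object]
Action = Dict[str, object]

# Hypothetical per-action preconditions, each checked locally at one step.
PRECONDITIONS: Dict[str, Callable[[State, Action], bool]] = {
    "delete": lambda state, action: bool(state.get("approved")),
    "email": lambda state, action: "@" in str(action.get("to", "")),
}


def check_contracts(state: State, actions: List[Action]) -> List[int]:
    """Return the indices of actions whose local precondition fails."""
    failures = []
    for i, action in enumerate(actions):
        pre = PRECONDITIONS.get(str(action["tool"]), lambda s, a: True)
        if not pre(state, action):
            failures.append(i)
    return failures


def delete_followed_by_email(actions: List[Action]) -> bool:
    """Sequence property: is some delete later followed by an email?"""
    seen_delete = False
    for action in actions:
        if action["tool"] == "delete":
            seen_delete = True
        elif action["tool"] == "email" and seen_delete:
            return True
    return False


state = {"approved": True}
actions = [
    {"tool": "delete", "path": "/tmp/report"},
    {"tool": "email", "to": "ops@example.com"},
]
local_failures = check_contracts(state, actions)
sequence_violation = delete_followed_by_email(actions)
```

Here `local_failures` is empty although `sequence_violation` is true, which is the kind of case a temporal specification is meant to catch.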

4.3. Evaluation Metrics

- Verification Accuracy (VA): The mean of precision and recall for the binary classification task of violation detection (balanced over present/absent labels). VA provides a single-number summary that is agnostic to class imbalance.

- False Negative Rate (FNR): The fraction of actual violations missed by the verifier. Minimising FNR is paramount, as missed violations can lead to irreversible harm—this metric directly measures the “worst-case failure probability” of the verification system.

- Catastrophic Failure Detection Rate (CFDR): Recall on the subset of violations labelled “catastrophic” by human annotators (irreversible data loss or security breach; in our benchmark). CFDR isolates performance on the most safety-critical scenarios, where a single miss is unacceptable.

- Autonomy Preservation Score (APS): The fraction of safe episodes that are not interrupted by a false-positive alert. This measures verifier intrusiveness: an overly conservative verifier that flags every episode achieves perfect FNR but zero APS, degrading the agent’s operational utility. A deployable verifier must simultaneously achieve high CFDR and high APS.
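The four metrics defined above can be computed from per-episode labels as in the following sketch. The function name, the argument layout (three parallel boolean lists), and the choice to return NaN for an empty denominator are assumptions for illustration; the example data is made up and does not come from the benchmark.

```python
from typing import Dict, Sequence


def ratio(num: int, den: int) -> float:
    """Safe division; NaN marks an undefined metric (empty denominator)."""
    return num / den if den else float("nan")


def safety_metrics(
    y_true: Sequence[bool],        # episode actually contains a violation
    y_pred: Sequence[bool],        # verifier flagged the episode
    catastrophic: Sequence[bool],  # violation is labelled catastrophic
) -> Dict[str, float]:
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t and p)
    fn = sum(1 for t, p in pairs if t and not p)
    fp = sum(1 for t, p in pairs if not t and p)
    tn = sum(1 for t, p in pairs if not t and not p)
    cat_total = sum(1 for t, c in zip(y_true, catastrophic) if t and c)
    cat_hit = sum(1 for t, p, c in zip(y_true, y_pred, catastrophic) if t and p and c)
    precision = ratio(tp, tp + fp)
    recall = ratio(tp, tp + fn)
    return {
        "VA": (precision + recall) / 2,      # mean of precision and recall
        "FNR": ratio(fn, tp + fn),           # missed violations
        "CFDR": ratio(cat_hit, cat_total),   # recall on catastrophic violations
        "APS": ratio(tn, tn + fp),           # safe episodes left uninterrupted
    }


# Made-up labels for eight episodes: four with violations, four safe.
y_true = [True, True, True, True, False, False, False, False]
y_pred = [True, True, True, False, False, False, False, True]
catastrophic = [True, True, False, False, False, False, False, False]
metrics = safety_metrics(y_true, y_pred, catastrophic)
```

On this toy data one violation is missed and one safe episode is interrupted, so FNR is 0.25 and APS is 0.75, while both catastrophic violations are caught.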

4.4. Statistical Protocol

- Random seeds. We run each method with 3 independent random seeds, varying the scenario order and agent temperature sampling. This provides basic replication for detecting high-variance methods.

- Significance testing. One-way ANOVA is used to detect an overall effect of method on VA, followed by Tukey’s HSD post-hoc test for pairwise comparisons. We report 95% confidence intervals for all means; is considered significant.

- Reproducibility. All scenario definitions, instrumentation code, and raw result JSON files are included in the supplementary material.
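As a reminder of what the one-way ANOVA in this protocol computes, the sketch below derives the F statistic from per-seed scores using only the standard library. The scores are hypothetical and chosen for easy arithmetic; they are not results from this study. The function name is illustrative, and the sketch stops at the F statistic: p-values and Tukey's HSD comparisons would come from a statistics library such as SciPy (`scipy.stats.f_oneway`, `scipy.stats.tukey_hsd`).

```python
from statistics import mean
from typing import Dict, List, Tuple


def one_way_anova_f(groups: Dict[str, List[float]]) -> Tuple[float, int, int]:
    """Return (F, df_between, df_within) for a one-way ANOVA.

    Raises ZeroDivisionError when every group has zero within-group variance.
    """
    all_values = [v for g in groups.values() for v in g]
    grand_mean = mean(all_values)
    # Between-group sum of squares: spread of group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups.values())
    # Within-group sum of squares: spread of scores around their own group mean.
    ss_within = sum((v - mean(g)) ** 2 for g in groups.values() for v in g)
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical verification accuracy (%) for three methods over three seeds.
scores = {
    "method_a": [86.0, 87.0, 88.0],
    "method_b": [79.0, 80.0, 81.0],
    "method_c": [45.0, 47.0, 49.0],
}
f_stat, df_between, df_within = one_way_anova_f(scores)
```

A large F indicates that differences between method means dominate the seed-to-seed variation within each method.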

4.5. Hyperparameters

5. Results

5.1. Main Results

5.2. Results by Difficulty Tier

5.3. Per-Seed Stability Analysis

5.4. Statistical Significance

- AgentVerify Post-hoc > Hybrid Runtime LTL, Compositional Assume-Guarantee, and Monolithic Neural Verifier.

- AgentVerify Post-hoc vs. Monolithic Contract Verification: not significant, suggesting parity on this benchmark.

- Monolithic Neural Verifier < all formal-methods-based approaches.

5.5. Runtime Overhead

6. Discussion

1. Deploy the Tier-1 runtime monitor as a default safety net with negligible overhead (0.04% relative latency increase).

2. After each agent episode (or periodically), run the Tier-2 post-hoc analyser for comprehensive auditing. At 142 ms per episode, this is practical for most non-real-time applications.

3. Reserve manual review for episodes flagged by either tier, or for high-stakes scenarios where even a small residual FNR is unacceptable.

7. Limitations

8. Conclusions

Appendix A. Baseline Implementation Details

Appendix B. Full Specification Library

| ID | Category | LTL Template | Description |
|---|---|---|---|
| | Memory | | Writes never contain PII |
| | Memory | | Reads trigger consistency checks |
| | Memory | | Full buffer is flushed immediately |
| | Memory | | Every write is acknowledged |
| | Memory | | Uninitialised memory never read |
| | Memory | | Shared writes require mutex |
| | Tool | | High-risk tools require prior approval |
| | Tool | | Delete never followed by email |
| | Tool | | Only allowed tools invoked |
| | Tool | | Code execution in sandbox only |
| | Tool | | Network access to whitelisted domains only |
| | Tool | | File writes require path validation |
| | Tool | | DB writes are committed or rolled back |
| | MCP | | Every MCP request receives a response |
| | MCP | | Only allowed skills invoked |
| | MCP | | Skill authentication requires valid token |
| | MCP | | Skill chaining requires explicit approval |
| | MCP | | Skill results returned within 50 steps |
| | Human | | Critical actions confirmed by human |
| | Human | | Responses to humans never deceptive |
| | Human | | PII requests require user consent |
| | Human | | Escalation requires supervisor notification |
| | Human | | Final actions preceded by summary to user |

Appendix C. Scenario Examples

References

1. Adam, Mustafa; Anisi, David A.; Ribeiro, Pedro. A verification methodology for safety assurance of robotic autonomous systems. arXiv 2025. Available online: https://arxiv.org/abs/2506.19622.

2. AgentScope Team. Agentscope 1.0: A developer-centric framework for building multi-agent applications. arXiv 2025. Available online: https://arxiv.org/abs/2508.16279.

3. Ali, Sajid; Abuhmed, Tamer; El–Sappagh, Shaker; Muhammad, Khan; Alonso, José M.; Confalonieri, Roberto; Guidotti, Riccardo; Del Ser, Javier; Díaz-Rodríguez, Natalia; Herrera, Francisco. Explainable artificial intelligence (xai): What we know and what is left to attain trustworthy artificial intelligence. Information Fusion 2023.

4. Andriushchenko, Maksym. Agentharm: A benchmark for measuring harmfulness of llm agents. arXiv 2024.

5. Brunke, Lukas; Greeff, Melissa; Hall, Adam W.; Yuan, Zhaocong; Zhou, Siqi; Panerati, Jacopo; Schoellig, Angela P. Safe learning in robotics: From learning-based control to safe reinforcement learning. Annual Review of Control Robotics and Autonomous Systems 2022.

6. Diligenti, M.; Giannini, Francesco; Marra, G.; Gori, Marco. Lyrics: A general interface layer to integrate logic inference and deep learning. In CINECA IRIS Institutional research information system (University of Pisa); 2020.

7. Domkundwar, Ishaan; Mukunda, N. S.; Bhola, Ishaan; Kochhar, Riddhik. Safeguarding ai agents: Developing and analyzing safety architectures. arXiv 2024.

8. Díaz-Rodríguez, Natalia; Del Ser, Javier; Coeckelbergh, Mark; López de Prado, Marcos; Herrera-Viedma, Enrique; Herrera, Francisco. Connecting the dots in trustworthy artificial intelligence: From ai principles, ethics, and key requirements to responsible ai systems and regulation. Information Fusion 2023.

9. Ferrando, Angelo; Malvone, Vadim. Towards the combination of model checking and runtime verification on multi-agent systems. arXiv 2022. Available online: https://arxiv.org/abs/2202.09344.

10. Fritzsch, Jonas; Schmid, Tobias; Wagner, Stefan. Experiences from large-scale model checking: Verification of a vehicle control system. arXiv 2020. Available online: https://arxiv.org/abs/2011.10351.

11. Ghilardi, Silvio; Gianola, Alessandro; Montali, Marco; Rivkin, Andrey. Relational action bases: Formalization, effective safety verification, and invariants (extended version). arXiv 2022. Available online: https://arxiv.org/abs/2208.06377.

12. Hong, Sirui; Zhuge, Mingchen; Chen, Jonathan; Zheng, Xiawu; et al. Metagpt: Meta programming for a multi-agent collaborative framework. arXiv 2023. Available online: https://arxiv.org/abs/2308.00352.

13. Hsu, Kai-Chieh; Hu, Haimin; Fernández Fisac, Jaime. The safety filter: A unified view of safety-critical control in autonomous systems. arXiv 2023. Available online: https://arxiv.org/abs/2309.05837.

14. Huang, Xiaowei; Kroening, Daniel; Ruan, Wenjie; Sharp, James J.; Sun, Youcheng; Thamo, Emese; Wu, Min; Yi, Xinping. A survey of safety and trustworthiness of deep neural networks: Verification, testing, adversarial attack and defence, and interpretability. Computer Science Review 2020.

15. Ibn Khedher, Mohamed; Jmila, Houda; El-Yacoubi, Mounîm A. On the formal evaluation of the robustness of neural networks and its pivotal relevance for ai-based safety-critical domains. International Journal of Network Dynamics and Intelligence 2023.

16. Koohestani, Roham. Agentguard: Runtime verification of ai agents. arXiv 2025. Available online: https://arxiv.org/abs/2509.23864.

17. OpenAI; Hasindu, W. A.; Wijayagunawardhana, N. Gpt-4 technical report. arXiv 2023.

18. OpenClaw Contributors. OpenClaw: Open-source multi-agent framework for personal AI assistants. GitHub Repository. 2026. Available online: https://github.com/openclaw.

19. others. Safepro: A scenario-based benchmark for probing the safety of LLM-based agents. arXiv 2026.

20. Shi, Tianneng; He, Jingxuan; Wang, Zhun; Li, Hongwei; Wu, Linyu; Guo, Wenbo; Song, Dawn. Progent: Programmable privilege control for LLM agents. Preprint; University of California: Berkeley, 2025.

21. Tran, Khanh-Tung; Dao, Dung; Nguyen, Minh-Duong; Pham, Quoc-Viet; O’Sullivan, Barry; Nguyen, Hoang D. Multi-agent collaboration mechanisms: A survey of LLMs. arXiv 2025. Available online: https://arxiv.org/abs/2501.06322.

22. Wang, Haoyu; Poskitt, Christopher M.; Sun, Jun. Agentspec: Customizable runtime enforcement for safe and reliable LLM agents. In Proceedings of the IEEE/ACM 48th International Conference on Software Engineering (ICSE), Rio de Janeiro, Brazil, 2026; ACM.

23. Wang, Lei; Ma, Chen; Feng, Xueyang; Zhang, Zeyu; Yang, Hao; Zhang, Jingsen; Chen, Zhiyuan; Tang, Jiakai; Chen, Xu; Lin, Yankai; Zhao, Wayne Xin; Wei, Zhewei; Wen, Ji-Rong. A survey on large language model based autonomous agents. Frontiers of Computer Science 2024.

24. Wu, Qingyun; Wang, Chi; et al. Autogen: Enabling next-gen LLM applications via multi-agent conversation. arXiv 2023. Available online: https://arxiv.org/abs/2308.08155.

25. Yang, Frank; Zhan, Sinong Simon; Wang, Yixuan; Huang, Chao; Zhu, Qi. Case study: Runtime safety verification of neural network controlled system. arXiv 2024. Available online: https://arxiv.org/abs/2408.08592.

26. Zhan, Simon Sinong; et al. Sentinel: A multi-level formal framework for safety evaluation of LLM-based embodied agents. OpenReview (submitted to ICLR 2026), 2025. Available online: https://openreview.net/forum?id=vCyxemIKLL.

| Category | Low-Diff. | High-Diff. | Total |

|---|---|---|---|

| Memory integrity | 2 | 2 | 4 |

| Tool call safety | 2 | 2 | 4 |

| MCP/skill invocation | 2 | 2 | 4 |

| Human interaction | 2 | 1 | 3 |

| Total | 8 | 7 | 15 |

| Parameter | Description | Value | Rationale |

|---|---|---|---|

| state_granularity | Depth of state abstraction hierarchy | 3 levels | Balances expressivity / state space |

| monitor_spec_set | LTL specs compiled for runtime monitor | Safety-critical (14/23) | Minimises overhead |

| posthoc_depth | Trace analysis depth | Full trace | Maximises detection coverage |

| intervention_threshold | Confidence to trigger runtime alert | 0.95 | Reduces false positives |

| mcp_timeout_k | Liveness bound for MCP response | 50 steps | Practical timeout bound |

| tool_risk_level | Risk threshold for approval gate | High only | Preserves autonomy for low-risk tools |

| Method | VA (%) | Std | FNR (%) | CFDR (%) | APS (%) | n |

|---|---|---|---|---|---|---|

| AgentVerify Post-hoc Behavioral Analysis | 86.67 | 0.00 | 13.33 | 100.0 | 91.7 | 3 |

| Monolithic Contract Verification | 80.00 | 0.00 | 20.00 | 83.3 | 95.0 | 3 |

| Runtime Monitoring (No LTL) | 46.67 | 0.00 | 53.33 | 50.0 | 72.0 | 3 |

| AgentVerify Compositional Assume-Guarantee | 26.67 | 0.00 | 73.33 | 33.3 | 83.0 | 3 |

| Monolithic Neural Verifier | 13.33 | 9.43 | 86.67 | 16.7 | 60.0 | 3 |

| AgentVerify Hybrid Runtime LTL | 6.67 | 0.00 | 93.33 | 0.0 | 88.0 | 3 |

| Method | Low-Difficulty (%) | High-Difficulty (%) | Drop (pp) |

|---|---|---|---|

| AgentVerify Post-hoc Behavioral Analysis | 95.33 | 78.00 | −17.33 |

| Monolithic Contract Verification | 93.33 | 66.67 | −26.67 |

| Runtime Monitoring (No LTL) | 60.00 | 33.33 | −26.67 |

| AgentVerify Compositional Assume-Guarantee | 40.00 | 13.33 | −26.67 |

| Monolithic Neural Verifier | 26.67 | 0.00 | −26.67 |

| AgentVerify Hybrid Runtime LTL | 13.33 | 0.00 | −13.33 |

| Overall (mean over methods) | 95.33 | 78.00 | −17.33 |

| Method | Seed 1 | Seed 2 | Seed 3 | Mean | Std |

|---|---|---|---|---|---|

| AgentVerify Post-hoc Behavioral Analysis | 86.67 | 86.67 | 86.67 | 86.67 | 0.00 |

| Monolithic Contract Verification | 80.00 | 80.00 | 80.00 | 80.00 | 0.00 |

| Runtime Monitoring (No LTL) | 46.67 | 46.67 | 46.67 | 46.67 | 0.00 |

| AgentVerify Compositional Assume-Guarantee | 26.67 | 26.67 | 26.67 | 26.67 | 0.00 |

| Monolithic Neural Verifier | 4.00 | 13.33 | 26.67 | 13.33 | 9.43 |

| AgentVerify Hybrid Runtime LTL | 6.67 | 6.67 | 6.67 | 6.67 | 0.00 |

| Comparison | Mean Diff (pp) | Sig. |
|---|---|---|
| Post-hoc vs. Contract Verif. | | ns |
| Post-hoc vs. RM-noLTL | | *** |
| Post-hoc vs. Comp. A-G | | *** |
| Post-hoc vs. Neural Verifier | | ** |
| Post-hoc vs. Hybrid Runtime LTL | | *** |
| Contract Verif. vs. RM-noLTL | | *** |
| Neural Verifier vs. Hybrid Runtime LTL | | ns |

| Component | Latency Overhead (ms) | Relative (%) |

|---|---|---|

| Baseline (unmonitored) | 0.0 | – |

| Tier-1 Runtime Monitor | 0.4 ± 0.1 | 0.04% |

| Tier-2 Post-hoc Analyser (full trace) | 142 ± 18 | 14.2% |

| Monolithic Neural Verifier | 380 ± 55 | 38.0% |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).