Submitted: 14 April 2026

Posted: 14 April 2026

Abstract

Keywords:

1. Introduction

2. Methodology

2.1. Analysis of the YOLOv8n Baseline Model

2.2. Improved Network Architecture

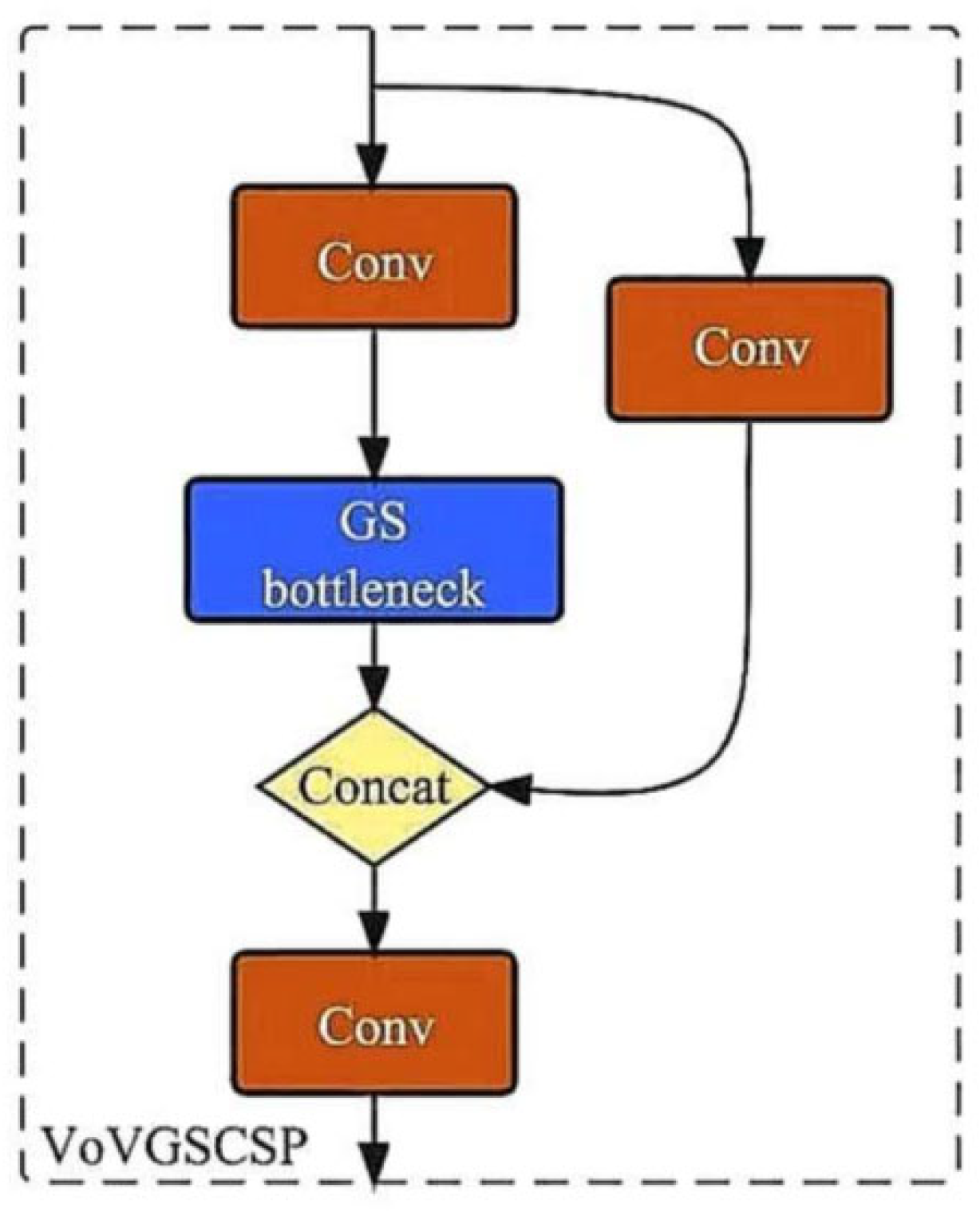

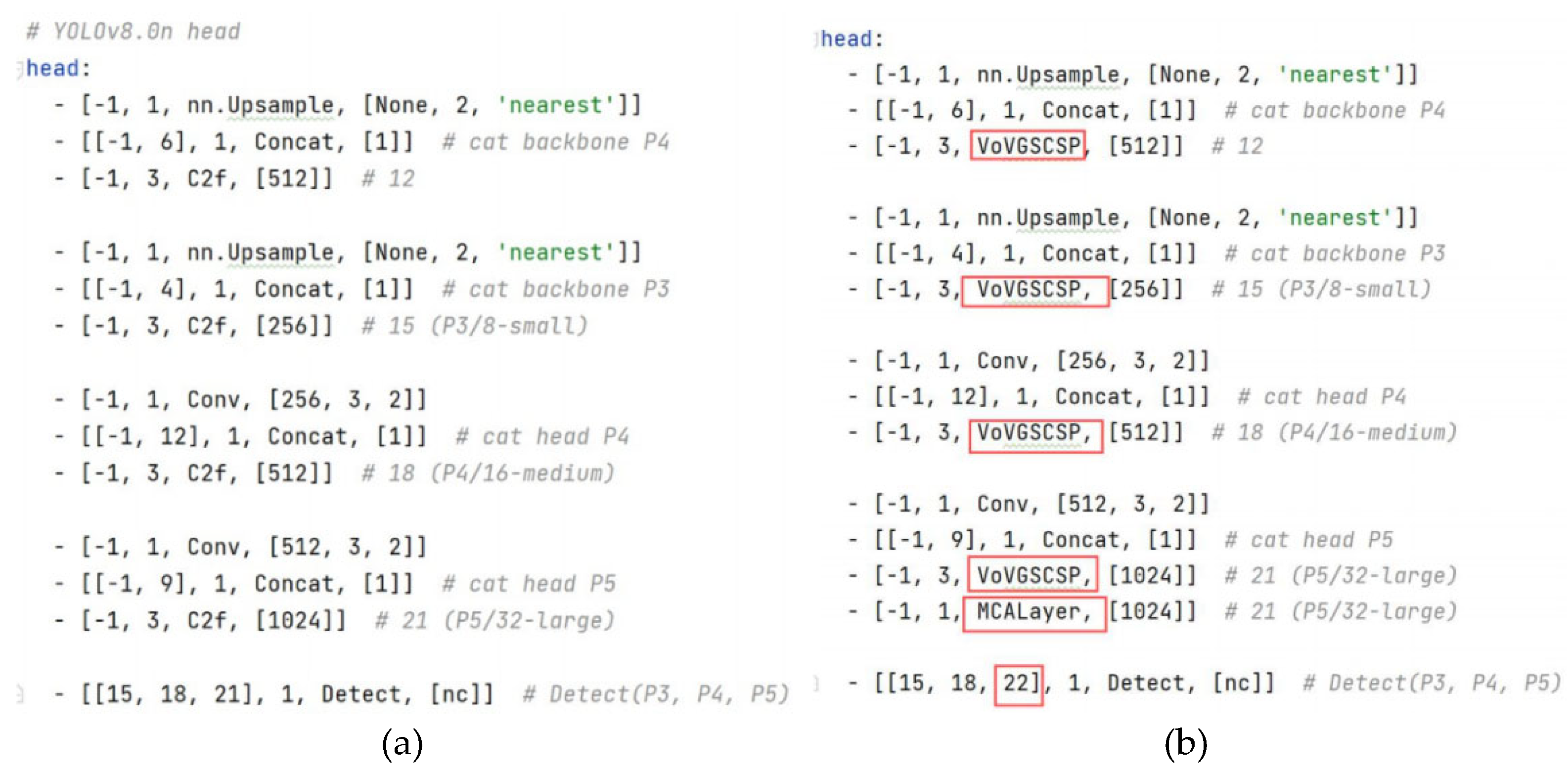

2.2.1. VoVGSCSP Module

2.2.2. SlimNeck and Multi-scale Contextual Attention (MCA)

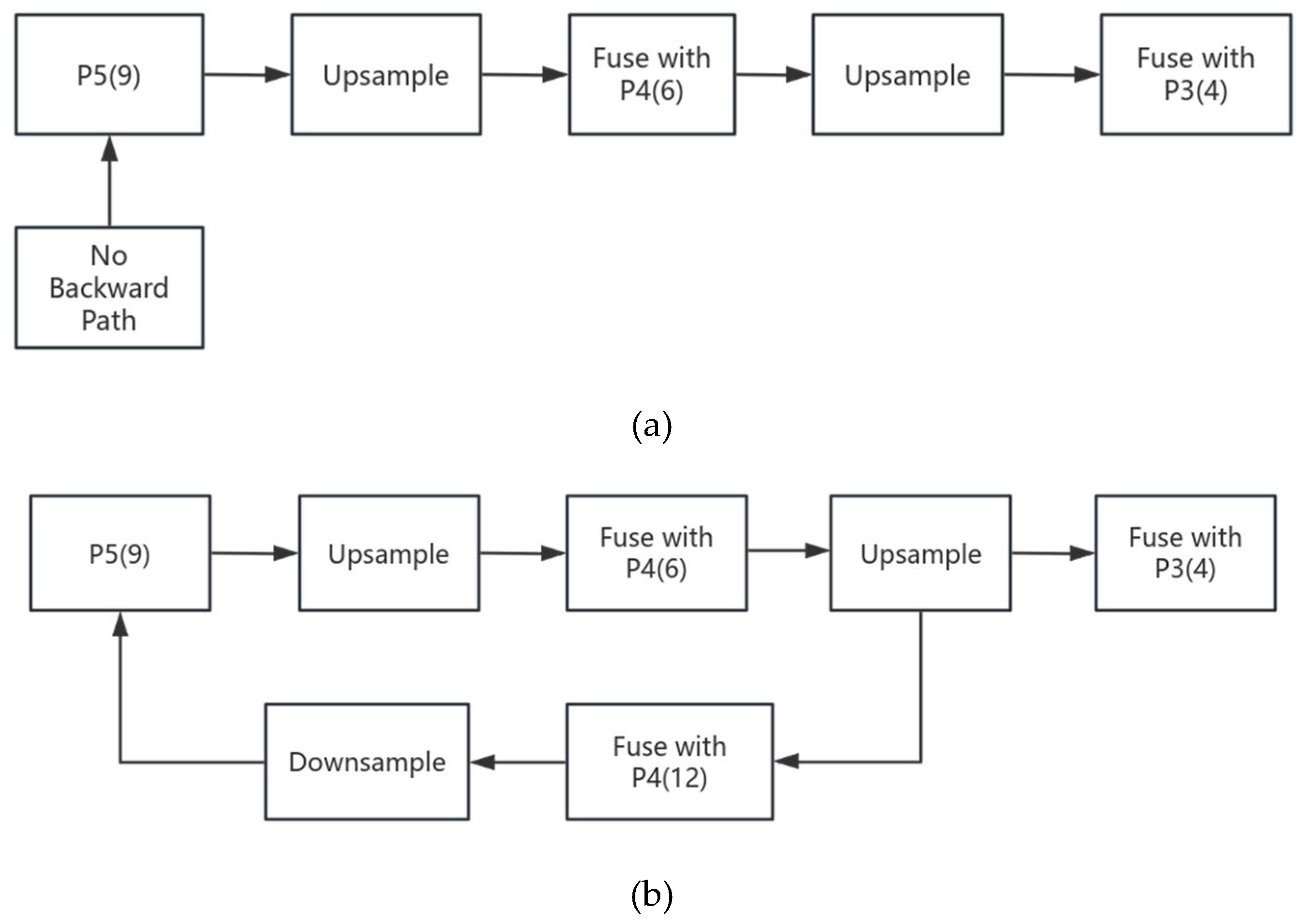

2.2.3. Feature Fusion Path Optimization

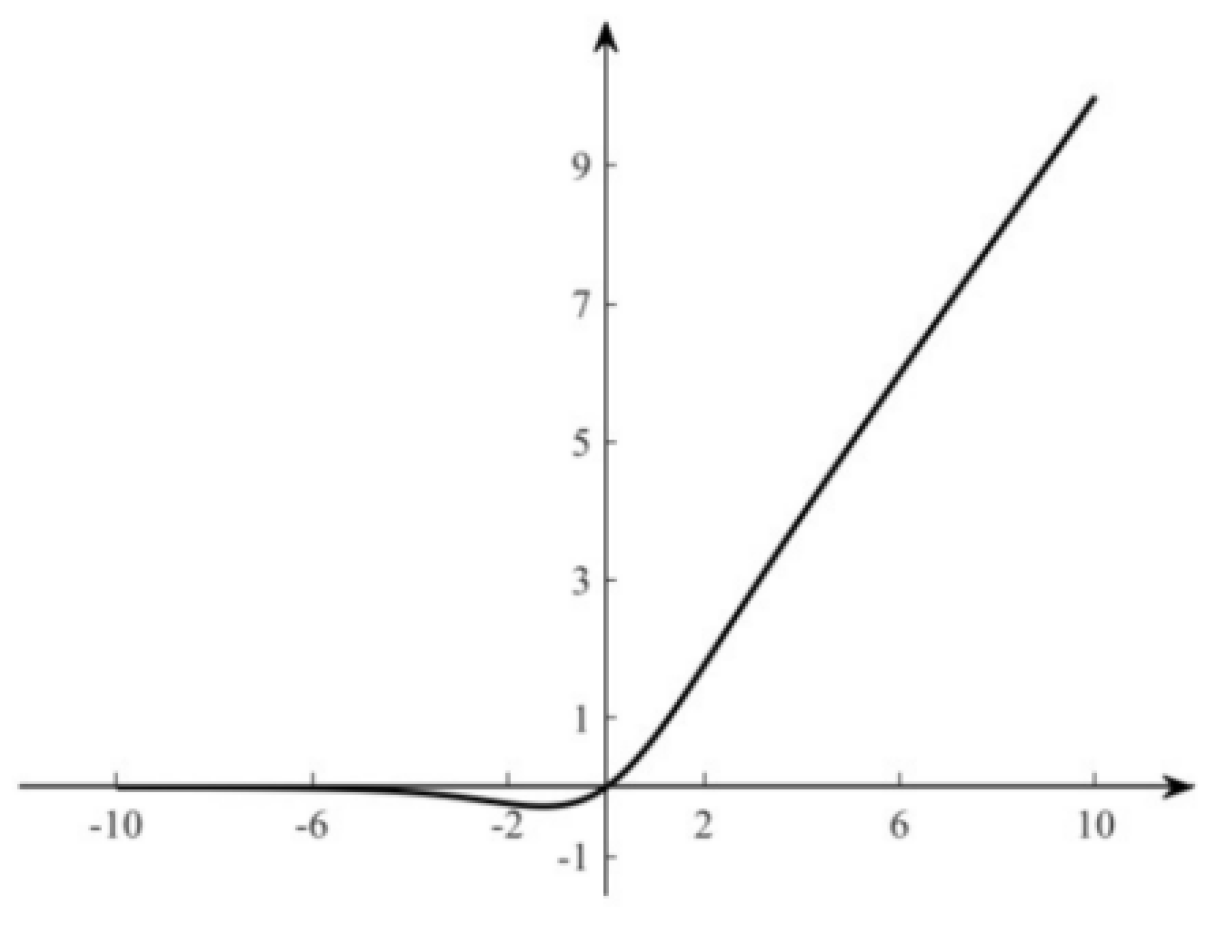

2.3. Model Lightweighting Strategy

3. Experimental Design

3.1. Dataset

3.2. Experimental Setup

3.3. Evaluation Metrics

4. Results and Analysis

4.1. Quantitative Results Analysis

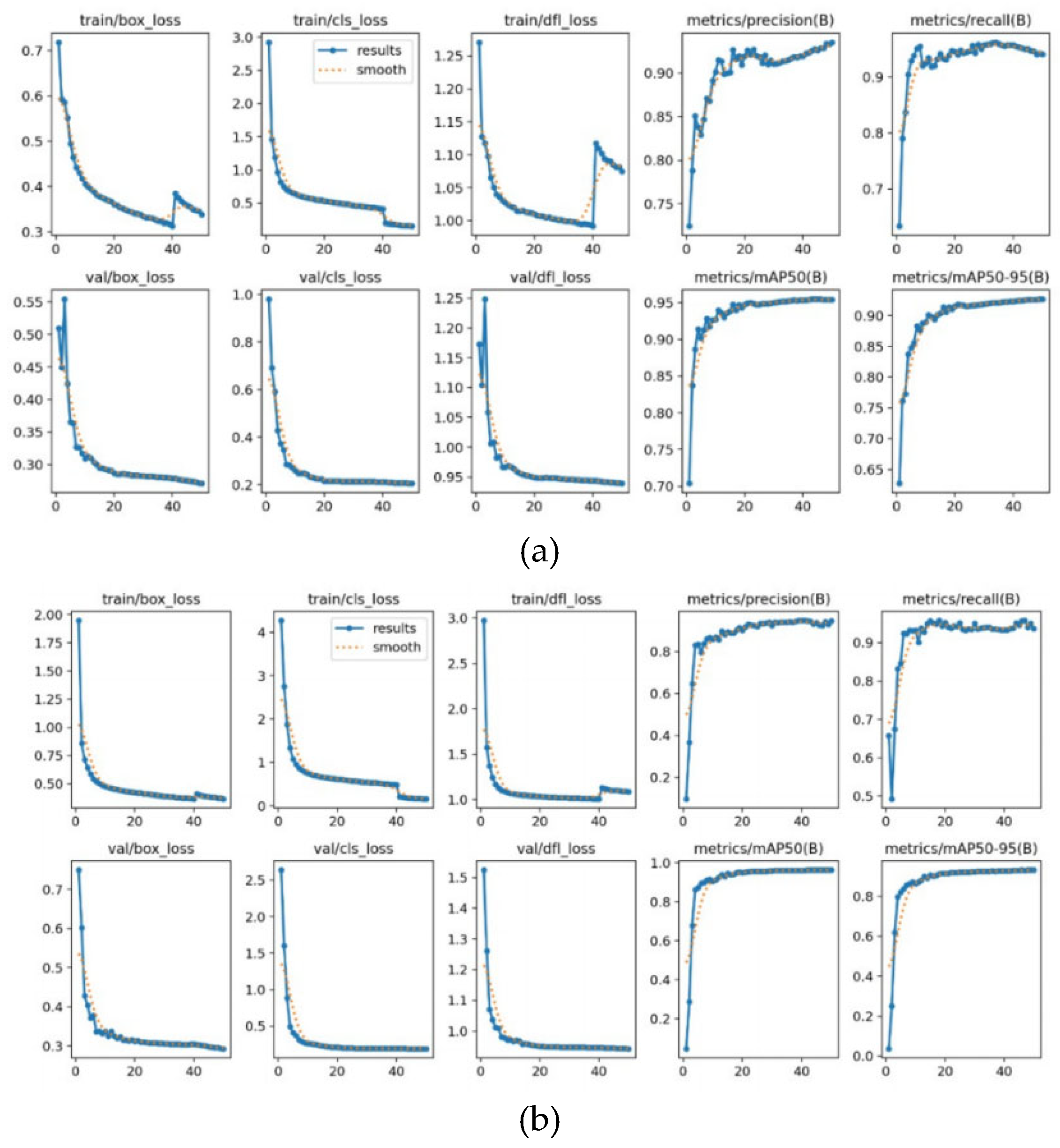

4.2. Loss Curves and Performance Metrics Analysis

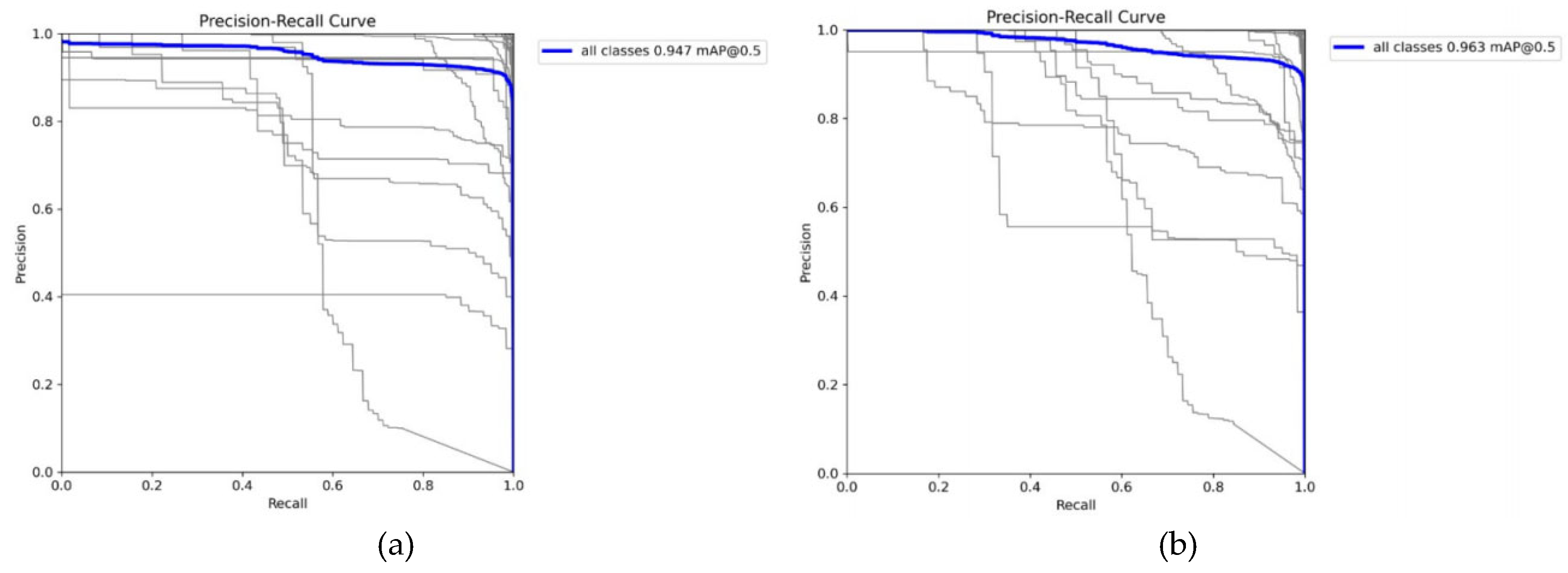

4.3. Precision-Recall Curve Analysis

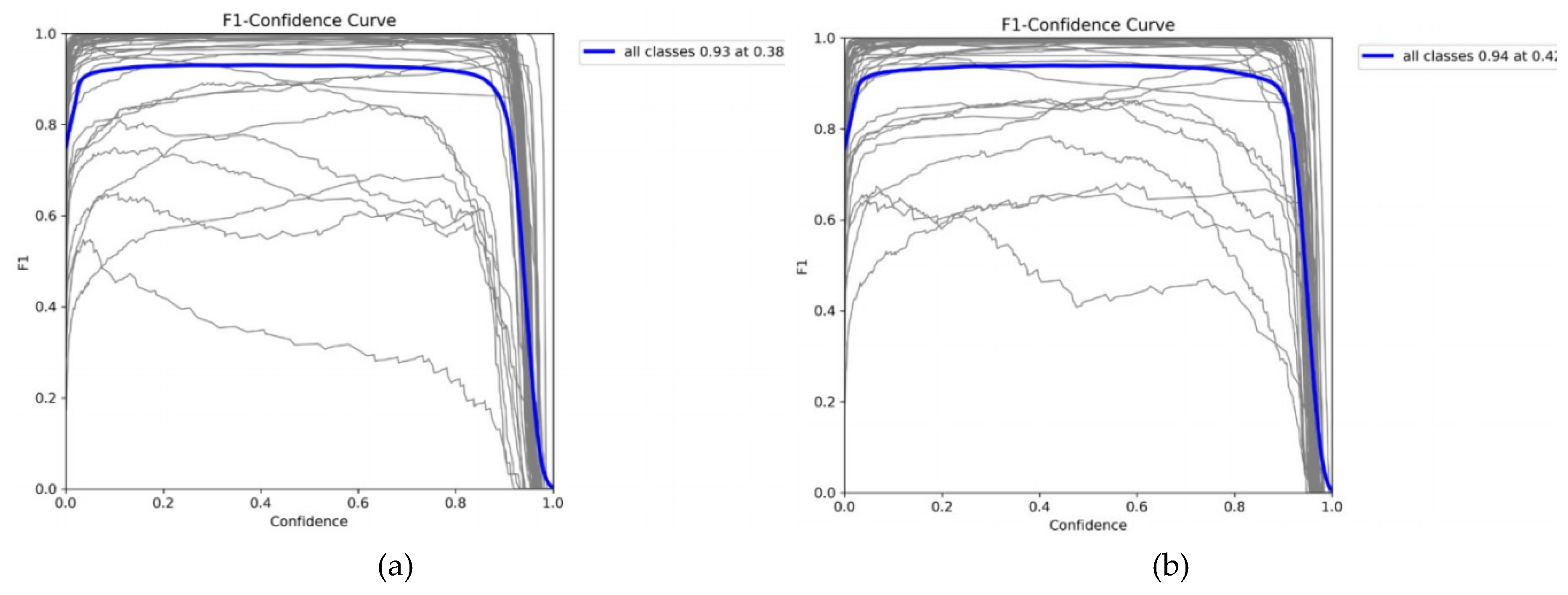

4.4. F1-Confidence Curve Analysis

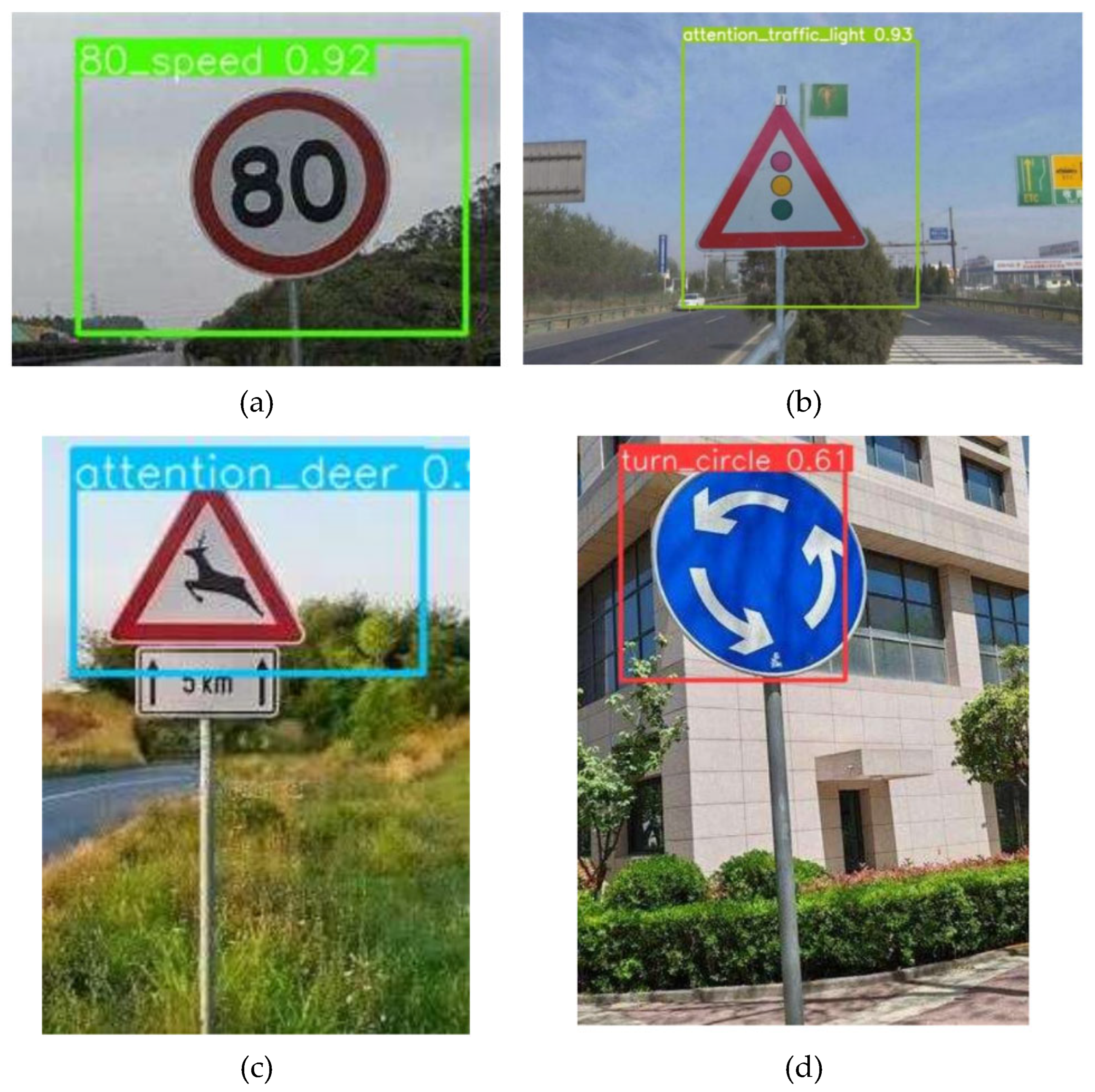

4.5. Qualitative Results Analysis

5. Conclusion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Model | Parameters (M) | GFLOPs | mAP@0.5 (%) | FPS |
|---|---|---|---|---|
| Baseline | 3.01 | 8.25 | 94.7 | 82.3 |
| Improved | 2.66 | 7.49 | 96.3 | 90.6 |
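To make the comparison in the table easier to read, the relative change of each metric can be worked out directly from the two rows. The following is a minimal sketch, not part of the authors' code: the dictionary keys and the helper `relative_change` are hypothetical names introduced here, and the numbers are copied from the table as reported.

```python
# Values copied from the results table; key names are illustrative assumptions.
baseline = {"params_m": 3.01, "gflops": 8.25, "map50": 94.7, "fps": 82.3}
improved = {"params_m": 2.66, "gflops": 7.49, "map50": 96.3, "fps": 90.6}


def relative_change(old: float, new: float) -> float:
    """Return the percentage change from old to new (hypothetical helper)."""
    return (new - old) / old * 100.0


# Relative change of every metric, in percent of the baseline value.
changes = {key: relative_change(baseline[key], improved[key]) for key in baseline}

# mAP is already a percentage, so the absolute gain is in percentage points.
map_gain_points = improved["map50"] - baseline["map50"]

for key, pct in changes.items():
    print(f"{key}: {pct:+.1f}%")
print(f"mAP@0.5 gain: {map_gain_points:+.1f} percentage points")
```

Under these assumptions, the improved model has roughly 11.6% fewer parameters and 9.2% fewer GFLOPs than the baseline, with about 10.1% higher FPS and a 1.6 percentage point gain in mAP@0.5.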

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).