Submitted: 12 April 2026

Posted: 15 April 2026


Abstract

Keywords:

I. Introduction

- Historical Simulation: Validating new features or business logic against snapshots of historical events.

- Data Backfill: Re-populating missing data in the data warehouse (DW) after a schema change or data loss event [1].

- Batch Processing: Periodic, compute-intensive tasks that process large volumes of offline data through complex service applications [2].

II. Architectural Options for Offline Processing

A. Option I: Spark Native Application (Compute-to-Data Approach)

Mechanisms & Performance Metrics

- High-Velocity Evaluation: In our benchmark, evaluating an atomic web service Application Programming Interface (API) against 22 million historical records completed in seconds.

- Data Locality: By executing the service API libraries directly on the Spark runtime, we eliminated the network I/O overhead typically associated with moving data to a service.

- Native Resilience: The architecture leverages the native failover and retry mechanisms of the Spark/MapReduce framework, ensuring robust handling of executor failures.

The "Dual Codebase" Constraint

- Dependency Stripping: Complex service applications often rely on heavy I/O operations (database (DB) lookups, API integrations), which are anti-patterns inside a Spark-native application. Engineers must spend significant effort stripping out these dependencies or mocking them extensively, effectively rewriting the service application as a second, "dual" codebase.

- High Maintenance: Maintaining separate offline and real-time codebases results in high maintenance effort and long-term code sustainability issues.

- Logic Parity: Over time, as new features are added to the real-time application, the offline codebase inevitably falls behind. Guaranteeing 100% logical parity becomes an operational impossibility without a continuous, expensive synchronization effort between the two codebases.
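The dependency-stripping problem can be illustrated with a small hypothetical sketch. Everything in it is an assumption for illustration: the `approve` rule, the `RiskTierLookup` interface, and both lookup classes are invented names, not the authors' code. The real-time service calls a database-backed lookup that cannot run inside a Spark executor, so the offline port needs a snapshot-backed stand-in that must reproduce the production behavior by hand.

```python
from typing import Any, Dict, Protocol


class RiskTierLookup(Protocol):
    """Hypothetical I/O dependency (e.g., a DB lookup) used by the service logic."""

    def risk_tier(self, customer_id: str) -> str: ...


def approve(txn: Dict[str, Any], lookup: RiskTierLookup) -> bool:
    """Hypothetical business rule; in production it is wired to the real lookup."""
    tier = lookup.risk_tier(str(txn["customer_id"]))
    limit = 1000.0 if tier == "low" else 100.0
    return float(txn["amount"]) <= limit


class DatabaseLookup:
    """Real-time implementation: a network call, unavailable inside a Spark executor."""

    def risk_tier(self, customer_id: str) -> str:
        raise NotImplementedError("would query the production database")


class SnapshotLookup:
    """Offline stand-in backed by a pre-joined snapshot: a second copy of the behavior."""

    def __init__(self, snapshot: Dict[str, str]) -> None:
        self._snapshot = snapshot

    def risk_tier(self, customer_id: str) -> str:
        # The default for unknown customers must mirror production exactly, or results drift.
        return self._snapshot.get(customer_id, "high")


offline = SnapshotLookup({"c1": "low"})
print(approve({"customer_id": "c1", "amount": 500.0}, offline))  # True
print(approve({"customer_id": "c2", "amount": 500.0}, offline))  # False
```

Every new dependency added to the real-time path needs a matching offline stand-in, which is the synchronization cost described under Logic Parity.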

B. Option II: Micro-Batch & Spark JDBC

- Mechanism: Spark executors iterate through Hadoop partitions, hand the data to a batch application for further processing, and trigger a synchronous request to the service APIs for each record.

- Pros: Guarantees high logical parity, as it uses the same service endpoints as the real-time production path.

- Cons (Throughput Bottlenecks & Reliability): We encountered throughput bottlenecks in both the Spark JDBC sessions and the synchronous API calls, which can overwhelm the service applications and lead to timeouts. Moreover, the micro-batch application becomes a Single Point of Failure (SPOF) because data fetching and dispatch run in a single component.
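A back-of-the-envelope bound shows why synchronous per-record calls cap throughput. By Little's law, a caller with at most L requests in flight, each taking W seconds, sustains at most L/W requests per second. The concurrency and latency values below are hypothetical, chosen only to illustrate the arithmetic; the 22-million-record volume is borrowed from the Option I benchmark.

```python
def max_sync_throughput(concurrent_calls: int, latency_ms: float) -> float:
    """Upper bound on records/sec when each record waits on one synchronous API call.

    Little's law (L = lambda * W): with at most `concurrent_calls` requests in flight,
    each taking `latency_ms`, the arrival rate lambda cannot exceed L / W.
    """
    return concurrent_calls * 1000.0 / latency_ms


def min_job_duration_s(records: int, concurrent_calls: int, latency_ms: float) -> float:
    """Lower bound on wall-clock seconds to push `records` through synchronous calls."""
    return records / max_sync_throughput(concurrent_calls, latency_ms)


# Hypothetical numbers: 200 concurrent JDBC/API sessions, 50 ms per call.
print(max_sync_throughput(200, 50))             # 4000.0 records/sec
print(min_job_duration_s(22_000_000, 200, 50))  # 5500.0 s, i.e. over 90 minutes
```

Raising the concurrency to escape this bound is precisely what pushes load onto the service and triggers the timeouts noted above.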

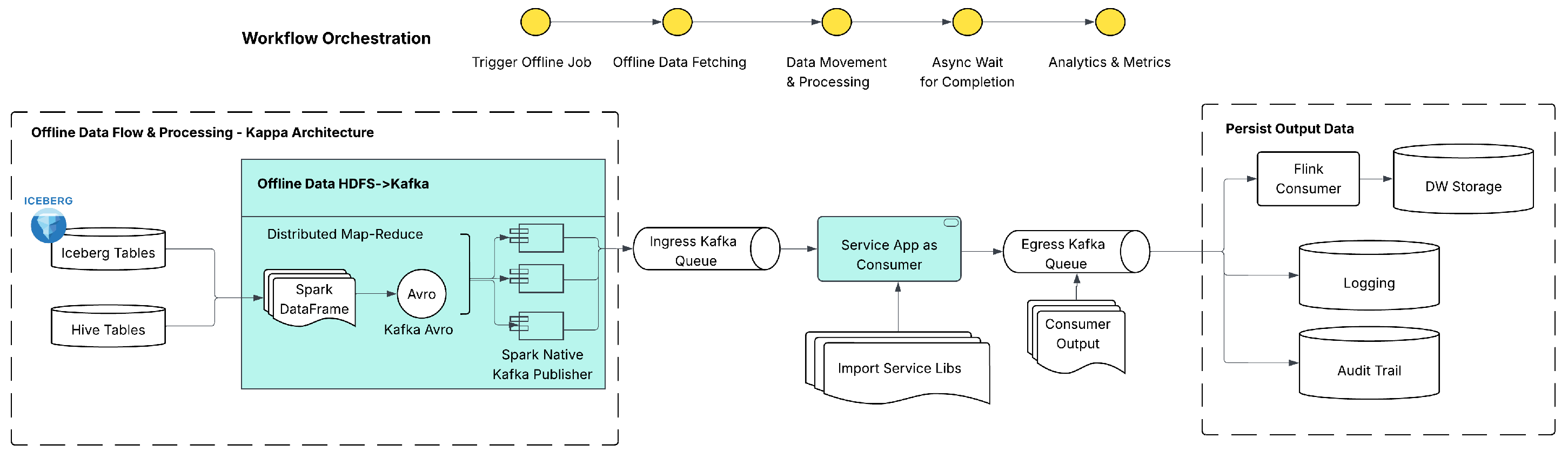

C. Option III: Kappa Architecture

Key components of the Kappa design:

- Ingress Kafka Queue: A Spark-native application reads the offline DW data and streams it into an Ingress Kafka Queue from within the Spark MapReduce runtime.

- Processing by Async Consumer: The heavy-weight service application is packaged as a scalable consumer application capable of processing events asynchronously.

- Egress Kafka Queue: Results are published to an Egress Kafka Queue and persisted back to DW storage using lightweight processors such as Apache Flink.
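The data flow of these three components can be sketched in memory, with Python `deque` objects standing in for the Ingress and Egress Kafka queues and a list standing in for the DW table. This is a toy model under those assumptions, not a Kafka client; `service_logic` is a hypothetical placeholder for the production service library. The point it illustrates is that the consumer calls the same function the real-time path would call.

```python
from collections import deque
from typing import Any, Deque, Dict, List

Event = Dict[str, Any]


def service_logic(event: Event) -> Event:
    """Hypothetical stand-in for the production service library, shared by both paths."""
    return {"id": event["id"], "approved": float(event["amount"]) <= 1000.0}


def ingress(rows: List[Event], ingress_q: Deque[Event]) -> None:
    """Data movement: offline DW rows are produced as events onto the ingress queue."""
    for row in rows:
        ingress_q.append(row)


def consume(ingress_q: Deque[Event], egress_q: Deque[Event], max_batch: int) -> int:
    """One consumer poll: take up to `max_batch` events, apply the logic, publish results."""
    handled = 0
    while ingress_q and handled < max_batch:
        egress_q.append(service_logic(ingress_q.popleft()))
        handled += 1
    return handled


def persist(egress_q: Deque[Event], table: List[Event]) -> None:
    """Persistence: drain the egress queue into the offline table."""
    while egress_q:
        table.append(egress_q.popleft())


ingress_q: Deque[Event] = deque()
egress_q: Deque[Event] = deque()
table: List[Event] = []

ingress([{"id": i, "amount": i * 400.0} for i in range(5)], ingress_q)
while consume(ingress_q, egress_q, max_batch=2):
    persist(egress_q, table)

print([r["approved"] for r in table])  # [True, True, True, False, False]
```

In the real-time path the same `service_logic` would be invoked on a single request, for example `service_logic({"id": "rt-1", "amount": 250.0})`, so parity comes from sharing code rather than from keeping two copies in sync.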

Pros

- Total Logical Parity: Because the consumer is a thin wrapper around the exact service executable libraries, there is zero risk of "logic drift": the same code runs in both the real-time and offline environments.

- Elastic Scalability: The execution tier is decoupled from the data storage. We can auto-scale the consumer fleet (e.g., via Kubernetes) from zero to hundreds of pods to match the simulation workload, optimizing compute costs.

- Dependency Isolation: Complex site dependencies and local caching mechanisms remain intact within the application container, removing the need to mock external services.

Cons

- Operational Complexity: Asynchronous architectures are inherently more difficult to debug than synchronous batch jobs. Triaging issues such as "data loss" requires sophisticated offset tracking across Ingress and Egress queues.

- Serialization Overhead: Transforming offline data (e.g., a Spark DataFrame) into stream events (Avro/Protobuf) at the Ingress layer incurs a computational cost. Strict schema management is required to ensure that the DataFrame matches the schema expected by the event consumer.
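Strict schema management at the Ingress layer amounts to coercing each loosely typed row into the consumer's schema before serialization. The sketch below is a hypothetical, library-free illustration: the field names in `SCHEMA`, the `coerce` helper, and the assumption that naive timestamps are UTC are all invented here. It maps a timestamp to epoch milliseconds (the long representation behind Avro's `timestamp-millis` logical type) and rejects nulls in non-nullable fields; a production pipeline would then serialize the record with an Avro library against a registered schema.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, Tuple

# Hypothetical target schema: field -> (Avro type, nullable). Not the paper's schema.
SCHEMA: Dict[str, Tuple[str, bool]] = {
    "txn_id": ("string", False),
    "amount": ("double", False),
    "event_time": ("timestamp-millis", False),  # Avro long with a logical type
    "note": ("string", True),
}
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


class SchemaViolation(ValueError):
    """Raised before publishing, so malformed rows never reach the consumer."""


def to_epoch_millis(value: Any) -> int:
    """Coerce a Hive-style timestamp string or datetime into epoch milliseconds."""
    if isinstance(value, str):
        value = datetime.fromisoformat(value)  # accepts "YYYY-MM-DD HH:MM:SS"
    if value.tzinfo is None:
        value = value.replace(tzinfo=timezone.utc)  # assumption: naive values are UTC
    return (value - EPOCH) // timedelta(milliseconds=1)


def coerce(row: Dict[str, Any]) -> Dict[str, Any]:
    """Map one loosely typed DataFrame row onto SCHEMA, with null-safety checks."""
    record: Dict[str, Any] = {}
    for field, (avro_type, nullable) in SCHEMA.items():
        value = row.get(field)
        if value is None:
            if not nullable:
                raise SchemaViolation(f"non-nullable field {field!r} is null")
            record[field] = None
        elif avro_type == "string":
            record[field] = str(value)
        elif avro_type == "double":
            record[field] = float(value)
        elif avro_type == "timestamp-millis":
            record[field] = to_epoch_millis(value)
        else:
            raise SchemaViolation(f"unsupported Avro type {avro_type!r}")
    return record


print(coerce({"txn_id": 42, "amount": "12.5", "event_time": "1970-01-01 00:00:01"}))
# {'txn_id': '42', 'amount': 12.5, 'event_time': 1000, 'note': None}
```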

D. Comparison Summary

III. System Architecture

- Workflow Orchestration: A high-level workflow orchestrator (e.g., Apache Airflow) manages the end-to-end (E2E) lifecycle. It triggers the data pipelines and tracks workflow run status.

- Data Movement Pipeline: A distributed Spark process responsible for fetching Point-in-Time (PiT) snapshots from the Offline DW and producing them as events into the Ingress Kafka Queue.

- Service as Consumer: The production service application packaged as a Kafka consumer. It processes each transaction using production-grade logic and publishes results to the Egress Kafka Queue.

- Data Persistence: A lightweight stream processor (e.g., Flink consumer [7]) that consumes egress events and sinks them into the Hadoop Distributed File System (HDFS) for long-term storage.

- Analytics Layer: A SQL-based interface (Hive/Spark) allows users to query the landed HDFS tables to calculate metrics or perform other offline analyses.

- Backpressure Handling: The Spark-based ingress pipeline can produce events significantly faster than the service application can process them. Without intervention, this leads to accumulating consumer lag and potential resource exhaustion. To mitigate this, we tuned the Kafka consumer configuration to strictly limit pre-fetching. Specifically, we reduced max.poll.records to align with the service’s p99 latency, ensuring that the consumer never fetches more records than it can process before the poll interval limit (max.poll.interval.ms) expires. Furthermore, we implemented an application-level rate limiter that dynamically pauses consumption when local thread pools become saturated [8].

- Schema Transformation: Our ingress pipeline performs mandatory schema validation. Before publishing, the Spark DataFrame, which is often loosely typed, is mapped to a rigorous Avro schema governed by a central Schema Registry. This step handles type coercion (e.g., casting Hive timestamps to Avro longs) and null-safety checks. By enforcing strict Avro serialization at ingress, we ensure that the complex service consumer is shielded from malformed data that could cause exceptions during execution [9].
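The two backpressure controls can be sketched as follows. Both pieces are hypothetical: `size_max_poll_records` encodes one possible sizing rule (every record assumed to cost the p99 latency, with a 50% margin under Kafka's default `max.poll.interval.ms` of 300,000 ms), and `SaturationGate` is an invented helper that applies high and low watermarks so consumption does not flap between paused and running.

```python
import math
import threading


def size_max_poll_records(p99_latency_ms: float, worker_threads: int,
                          max_poll_interval_ms: int = 300_000,
                          safety_factor: float = 0.5) -> int:
    """Largest poll batch that should finish well inside max.poll.interval.ms.

    Hypothetical sizing rule: assume every record takes the p99 latency and the
    batch is spread evenly across `worker_threads`; keep a safety margin.
    """
    budget_ms = max_poll_interval_ms * safety_factor
    per_thread = math.floor(budget_ms / p99_latency_ms)
    return max(1, per_thread * worker_threads)


class SaturationGate:
    """Hysteresis gate: pause fetching at a high watermark of in-flight records,
    resume only after draining to a low watermark (avoids pause/resume flapping)."""

    def __init__(self, high: int, low: int) -> None:
        if low >= high:
            raise ValueError("low watermark must be below high watermark")
        self.high, self.low = high, low
        self.in_flight = 0
        self.paused = False
        self._lock = threading.Lock()

    def submitted(self, n: int = 1) -> None:
        with self._lock:
            self.in_flight += n

    def completed(self, n: int = 1) -> None:
        with self._lock:
            self.in_flight -= n

    def should_pause(self) -> bool:
        """Called once per poll-loop iteration; returns the desired paused state."""
        with self._lock:
            if not self.paused and self.in_flight >= self.high:
                self.paused = True
            elif self.paused and self.in_flight <= self.low:
                self.paused = False
            return self.paused


print(size_max_poll_records(p99_latency_ms=2000, worker_threads=4))  # 300

gate = SaturationGate(high=64, low=16)
gate.submitted(64)
print(gate.should_pause())  # True: thread pool saturated
gate.completed(40)
print(gate.should_pause())  # True: 24 in flight, still above the low watermark
gate.completed(8)
print(gate.should_pause())  # False: drained to 16, resume fetching
```

In a poll loop built on the Java client, a `True` result would translate into `consumer.pause(consumer.assignment())` and a later `False` into `consumer.resume(...)`. The loop keeps calling `poll()` while paused; poll returns no records for paused partitions but keeps the consumer in its group.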

IV. Performance Benchmarking

A. Experimental Setup

Infrastructure Configuration

Compute Resources

- Baseline Capacity: The fleet was initialized with a minimum of 24 pods per data center.

- Elastic Scaling: Horizontal Pod Autoscaler (HPA) was configured to scale the fleet up to a maximum of 48 pods per region based on CPU utilization and consumer lag metrics.

- Pod Specs: Each consumer pod was provisioned with 2 vCPUs and 8 GB of memory. This resource profile was chosen specifically to accommodate the local caching and high compute intensity of the heavy-weight service application.
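The scaling behavior between the 24-pod floor and the 48-pod ceiling can be approximated with the documented Kubernetes HPA rule, desiredReplicas = ceil(currentReplicas × currentMetricValue / targetMetricValue), evaluated per metric with the largest proposal winning. The sketch below assumes hypothetical metric names and target values, and it ignores the stabilization windows and pod-readiness handling that the real controller applies.

```python
import math
from typing import Dict, Tuple


def desired_replicas(current: int,
                     metrics: Dict[str, Tuple[float, float]],
                     min_replicas: int = 24,
                     max_replicas: int = 48,
                     tolerance: float = 0.1) -> int:
    """Approximate the Kubernetes HPA scaling rule for several metrics.

    For each metric: desired = ceil(current * currentValue / targetValue), except
    that a ratio within `tolerance` of 1.0 keeps the current count. The largest
    proposal across metrics wins, then it is clamped to [min_replicas, max_replicas].
    """
    proposals = []
    for current_value, target_value in metrics.values():
        ratio = current_value / target_value
        if abs(ratio - 1.0) <= tolerance:
            proposals.append(current)
        else:
            proposals.append(math.ceil(current * ratio))
    return max(min_replicas, min(max_replicas, max(proposals)))


# Hypothetical readings: (observed value, target value) per metric.
burst = {"cpu_utilization_pct": (90.0, 60.0), "consumer_lag_per_pod": (30_000.0, 10_000.0)}
idle = {"cpu_utilization_pct": (30.0, 60.0), "consumer_lag_per_pod": (5_000.0, 10_000.0)}
print(desired_replicas(24, burst))  # 48: lag asks for 72, capped at the maximum
print(desired_replicas(24, idle))   # 24: both ask for 12, floored at the minimum
```

Scaling on consumer lag in addition to CPU matters here because a backlog in the ingress queue can grow while per-pod CPU still looks moderate.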

B. Benchmarking Metrics

- Scalability: Capable of processing millions of offline data records in a single job run.

- E2E Latency: Achieved a processing Service Level Agreement (SLA) on the order of minutes for the core execution phase.

Analysis of Results

C. Overall System Availability

- Job Availability: success rate.

- Data Integrity: End-to-end data loss was kept below , with the residual loss caused primarily by transient connection timeouts when publishing events from the ingress pipeline to the Kafka queues under high traffic volume. Once events were durably written to Kafka, the streaming pipeline operated with at-least-once delivery guarantees and introduced no additional loss downstream.
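Measuring a data loss bound like the one above requires reconciling what each job should have sent against what landed in HDFS. The sketch below is a hypothetical helper that assumes every record carries a unique key (short strings here). Missing keys count as loss, while repeated keys are the expected by-product of at-least-once delivery and can be removed by key-based de-duplication.

```python
from collections import Counter
from typing import Dict, Iterable, Union


def reconcile(source_ids: Iterable[str],
              landed_ids: Iterable[str]) -> Dict[str, Union[int, float]]:
    """Compare the records a job should have sent with the records that landed.

    Missing keys indicate loss (e.g., events that never reached the ingress queue);
    repeated keys indicate at-least-once redelivery rather than loss.
    """
    expected = set(source_ids)
    landed = Counter(landed_ids)
    missing = expected - set(landed)
    duplicates = sum(count - 1 for count in landed.values() if count > 1)
    return {
        "expected": len(expected),
        "missing": len(missing),
        "duplicates": duplicates,
        "loss_rate": len(missing) / len(expected) if expected else 0.0,
    }


source = [f"t{i}" for i in range(10)]
landed = [t for t in source if t != "t3"] + ["t5"]  # t3 lost, t5 redelivered
print(reconcile(source, landed))
# {'expected': 10, 'missing': 1, 'duplicates': 1, 'loss_rate': 0.1}
```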

D. Resource Efficiency

E. Cost vs. Convenience Trade-off

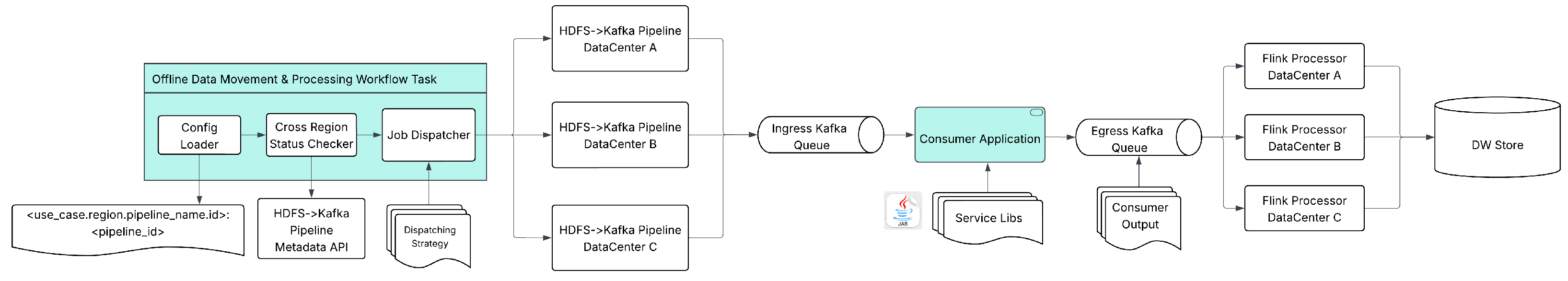

V. Enhanced Feature: Cross-Region Extensibility

A. Platform Availability & Resilience

B. Distributed Resource Utilization

C. Improving Job E2E Latency via Partitioning

- Partitioning: The master job is logically split into N child jobs (e.g., 3 jobs of 10 days each).

- Parallel Execution: These child jobs are dispatched simultaneously to different data centers (e.g., Job A → Region 1, Job B → Region 2).

- Throughput Multiplication: By dispatching traffic to ingress/egress Kafka queues allocated in multiple regions, the E2E SLA shrinks roughly by a factor equal to the number of regions.
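The partitioning step can be sketched for date-ranged jobs such as the 30-day example above. The `split_job` helper, the region names, and the 18-minute single-region duration are hypothetical; `ideal_e2e_minutes` gives only the best case, assuming equal regional capacity and no skew between child jobs.

```python
from datetime import date, timedelta
from typing import List, Tuple

ChildJob = Tuple[str, date, date]  # (region, start inclusive, end exclusive)


def split_job(start: date, end: date, n_children: int, regions: List[str]) -> List[ChildJob]:
    """Split [start, end) into n contiguous date ranges and assign regions round-robin."""
    total_days = (end - start).days
    base, extra = divmod(total_days, n_children)
    plan: List[ChildJob] = []
    cursor = start
    for i in range(n_children):
        length = base + (1 if i < extra else 0)  # spread leftover days over the first jobs
        child_end = cursor + timedelta(days=length)
        plan.append((regions[i % len(regions)], cursor, child_end))
        cursor = child_end
    return plan


def ideal_e2e_minutes(single_region_minutes: float, n_regions: int) -> float:
    """Best case when child jobs run fully in parallel on regions of equal capacity."""
    return single_region_minutes / n_regions


regions = ["region-1", "region-2", "region-3"]
for region, s, e in split_job(date(2026, 3, 1), date(2026, 3, 31), 3, regions):
    print(region, s, e)
# region-1 2026-03-01 2026-03-11
# region-2 2026-03-11 2026-03-21
# region-3 2026-03-21 2026-03-31
print(ideal_e2e_minutes(18.0, 3))  # 6.0
```

In practice the slowest child job bounds the E2E time, so uneven data density across date ranges keeps the speed-up below the ideal factor.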

VI. Conclusion and Future Work

References

1. Kleppmann, M. Designing Data-Intensive Applications: The Big Ideas Behind Reliable, Scalable, and Maintainable Systems; O’Reilly Media, Inc., 2017.

2. Dean, J.; Ghemawat, S. MapReduce: Simplified Data Processing on Large Clusters. In Proceedings of OSDI 2004, Vol. 51, pp. 137–150.

3. Kiran, M.; Murphy, P.; Monga, I.; Jonnalagadda, C.; Sriprasad, B. Lambda Architecture for Cost-Effective Batch and Speed Layer Operations. In Proceedings of the 2015 IEEE International Conference on Big Data (Big Data); IEEE, 2015; pp. 2785–2792.

4. Armbrust, M.; et al. Spark SQL: Relational Data Processing in Spark. In Proceedings of the 2015 ACM SIGMOD International Conference on Management of Data, 2015; pp. 1383–1394.

5. Kreps, J. Questioning the Lambda Architecture. O’Reilly Radar, 2014.

6. Kreps, J.; Narkhede, N.; Rao, J. Kafka: A Distributed Messaging System for Log Processing. In Proceedings of NetDB 2011, Vol. 11, pp. 1–7.

7. Carbone, P.; Katsifodimos, A.; Ewen, S.; Markl, V.; Haridi, S.; Tzoumas, K. Apache Flink: Stream and Batch Processing in a Single Engine. Bulletin of the IEEE Computer Society Technical Committee on Data Engineering 2015, 36.

8. Welsh, M.; Culler, D.; Brewer, E. SEDA: An Architecture for Well-Conditioned, Scalable Internet Services. In Proceedings of the Eighteenth ACM Symposium on Operating Systems Principles, 2001; pp. 230–243.

9. Wang, L.; et al. Streams: High Performance and Reliable Geo-Distributed Stream Processing. In Proceedings of the USENIX Annual Technical Conference, 2021.

| Feature | Spark Native | Micro-Batch | Kappa |
|---|---|---|---|
| Logic Parity | Low | High | Total |
| Throughput | Very High | Low | High |
| Maintenance | High | Moderate | Minimal |
| Scaling | MapReduce | API/JDBC bottleneck | Elastic |

| Data Volume (records) | Job Duration | Throughput |
|---|---|---|
| 1,000,000 (1M) | 7 min 03 sec | 2,400 msgs/sec |
| 3,000,000 (3M) | 12 min 45 sec | 9,000 msgs/sec |
| 5,000,000 (5M) | 15 min 05 sec | 10,900 msgs/sec |
| 10,000,000 (10M) | 18 min 05 sec | 11,900 msgs/sec |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).