Submitted: 13 April 2026

Posted: 14 April 2026


Abstract

Keywords:

1. Introduction

1.1. Related Work

1.2. Aims and Research Questions

2. Materials and Methods

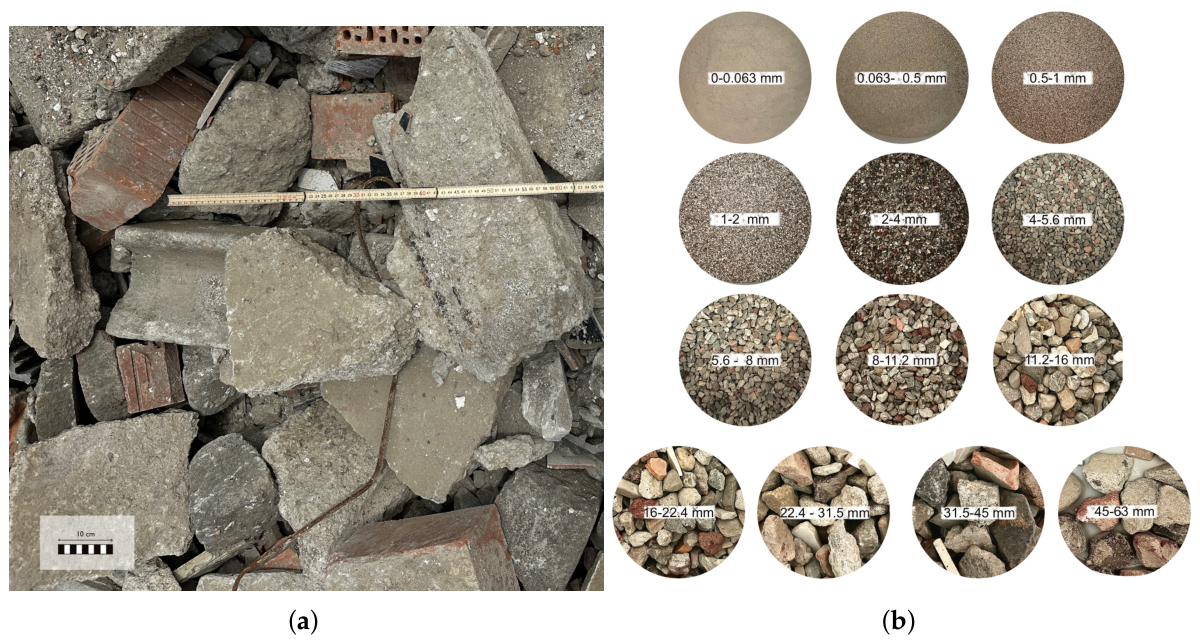

2.1. Materials and Sample Preparation

2.1.1. Sampling Campaign

2.1.2. Classification Procedure

2.2. Methods

2.2.1. Laboratory Analysis and Data Acquisition

2.2.2. Data Pre-Processing

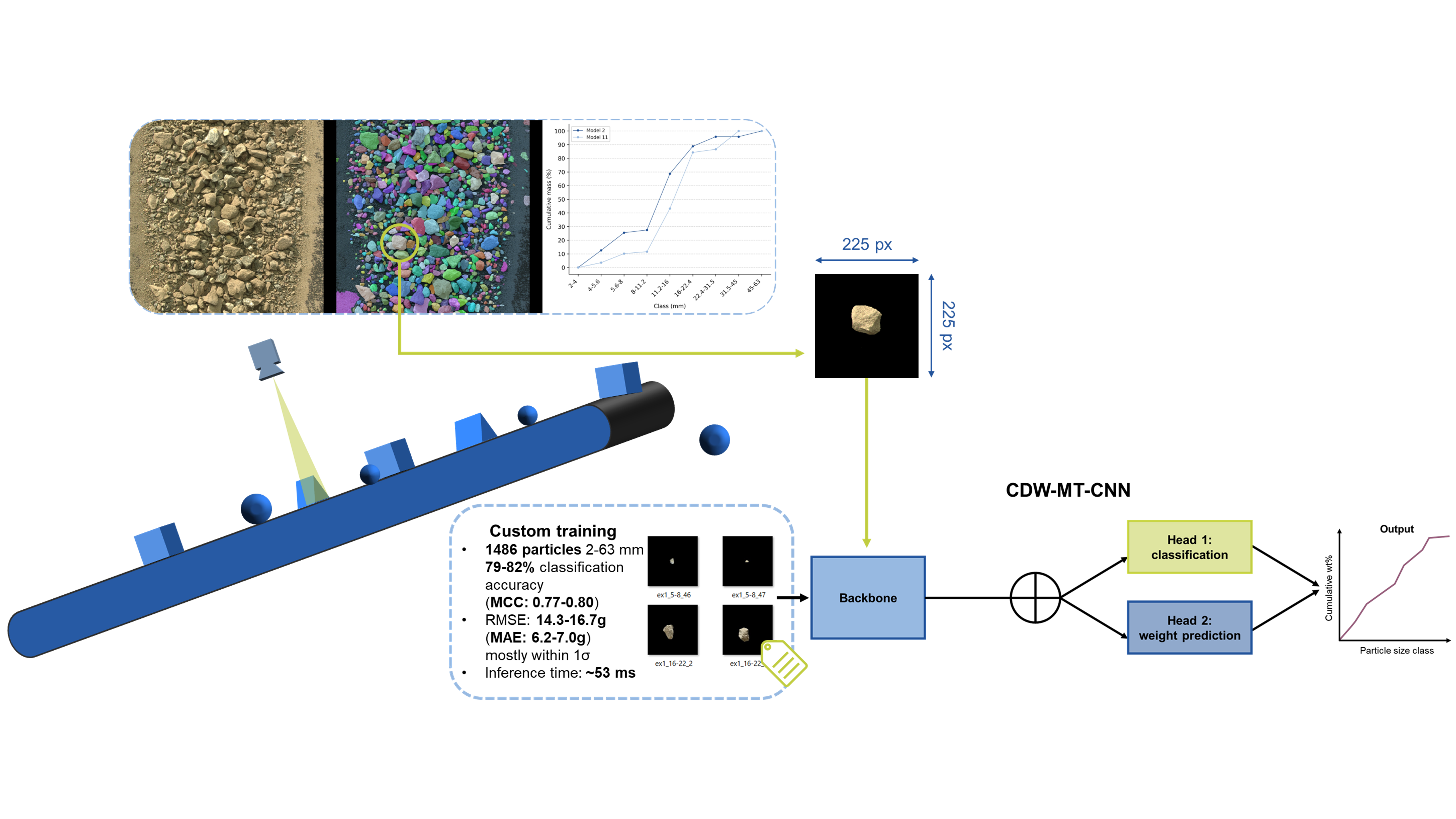

2.2.3. Model Architecture

2.2.4. Model Training and Evaluation

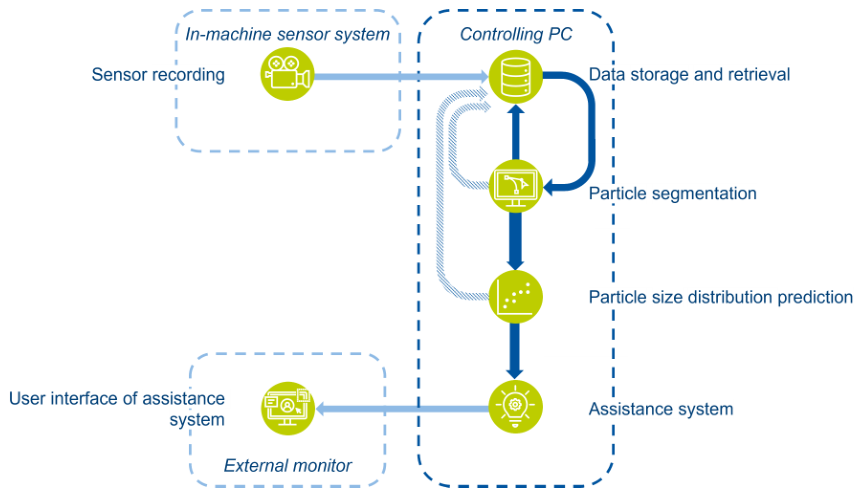

2.2.5. Quality Monitoring System

2.2.6. Full-Scale Plant Experiments

3. Results

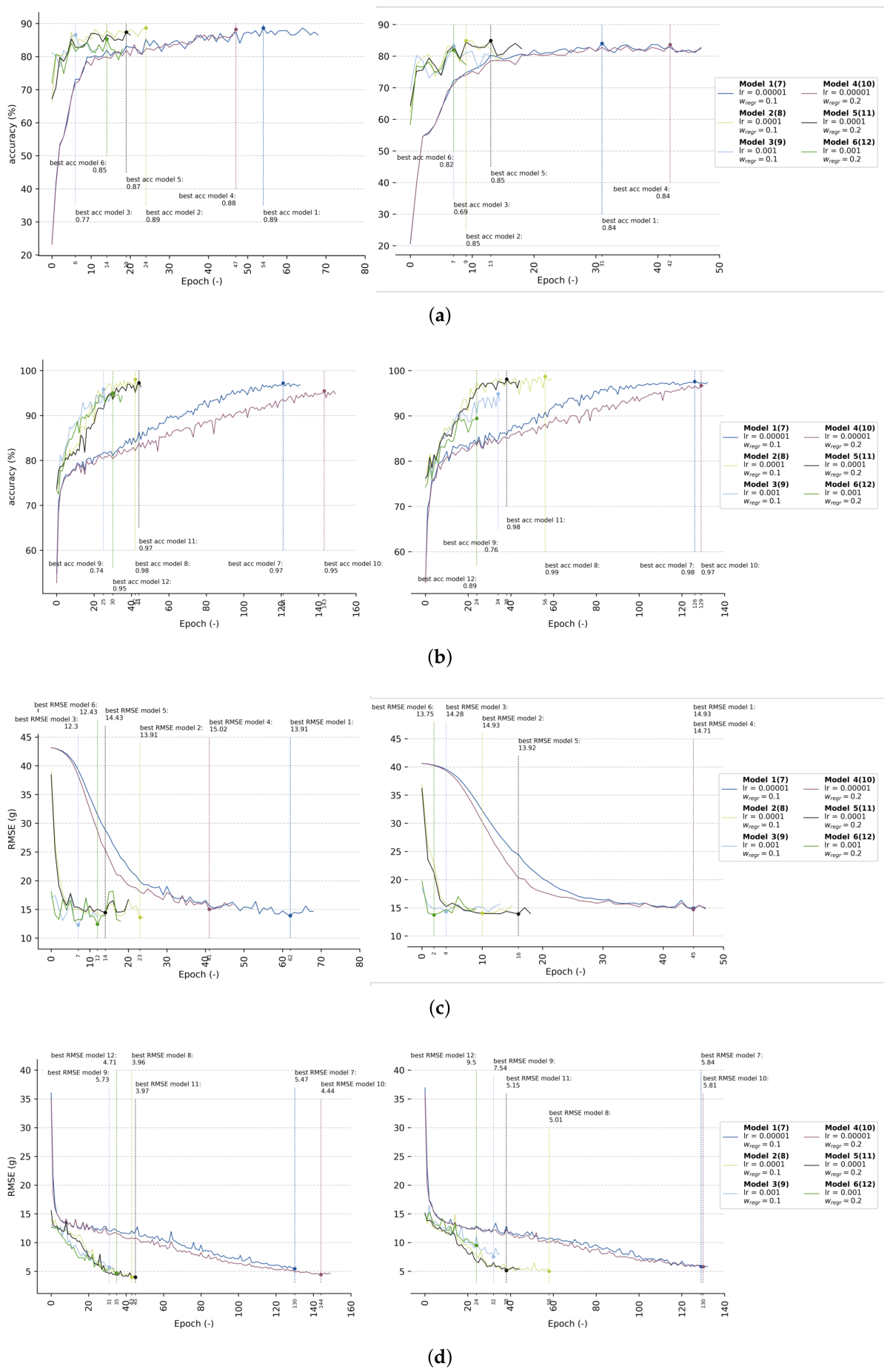

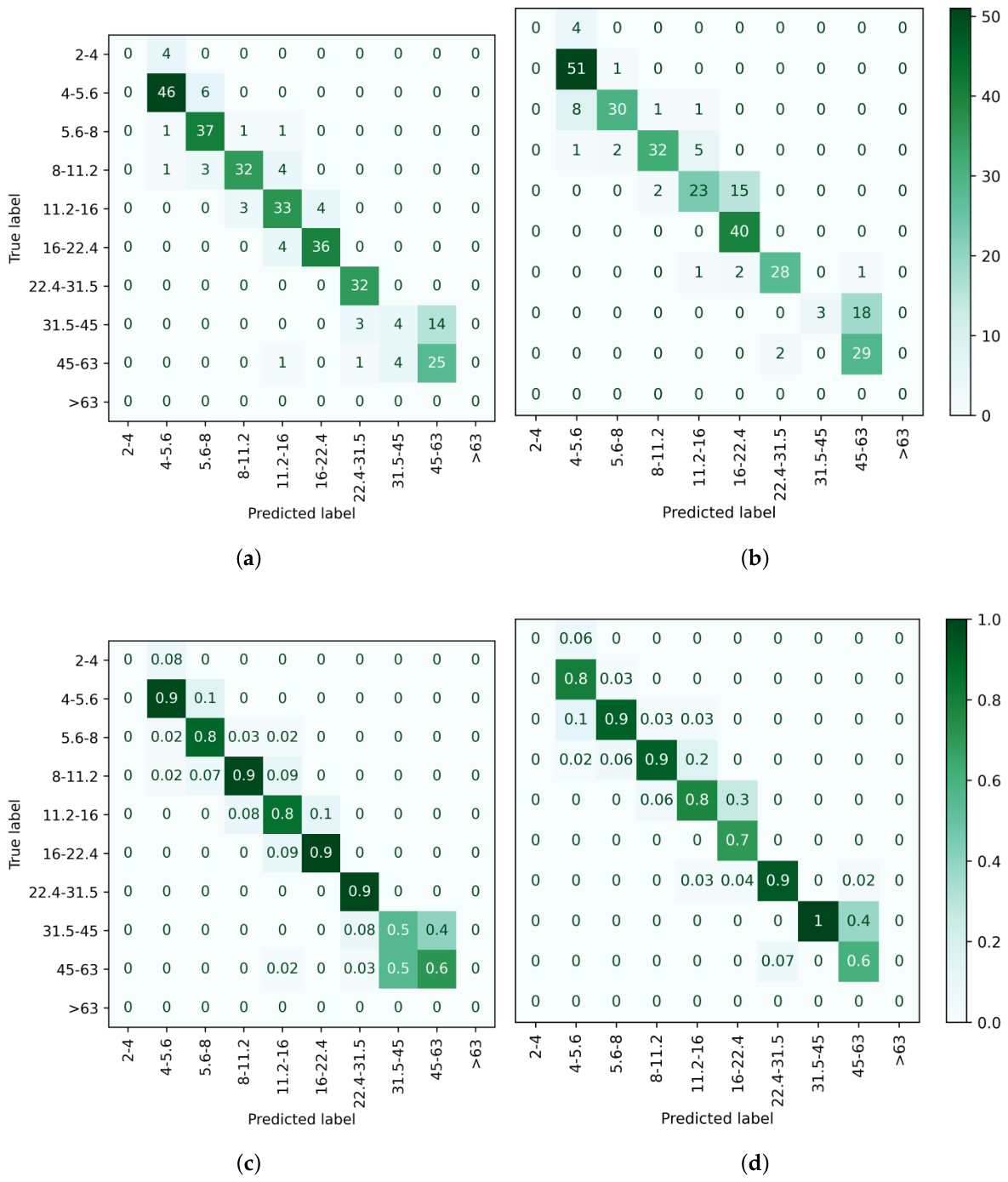

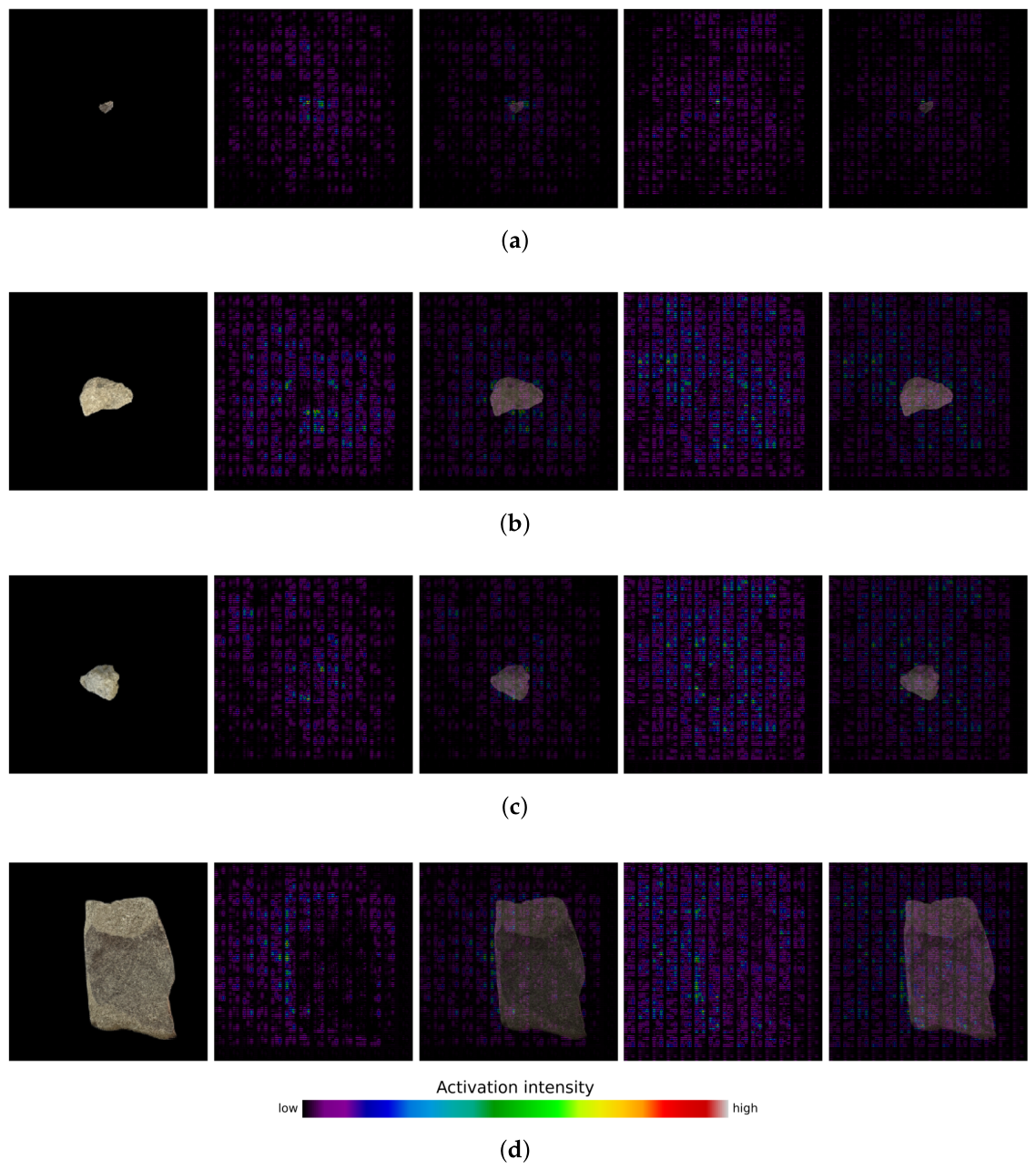

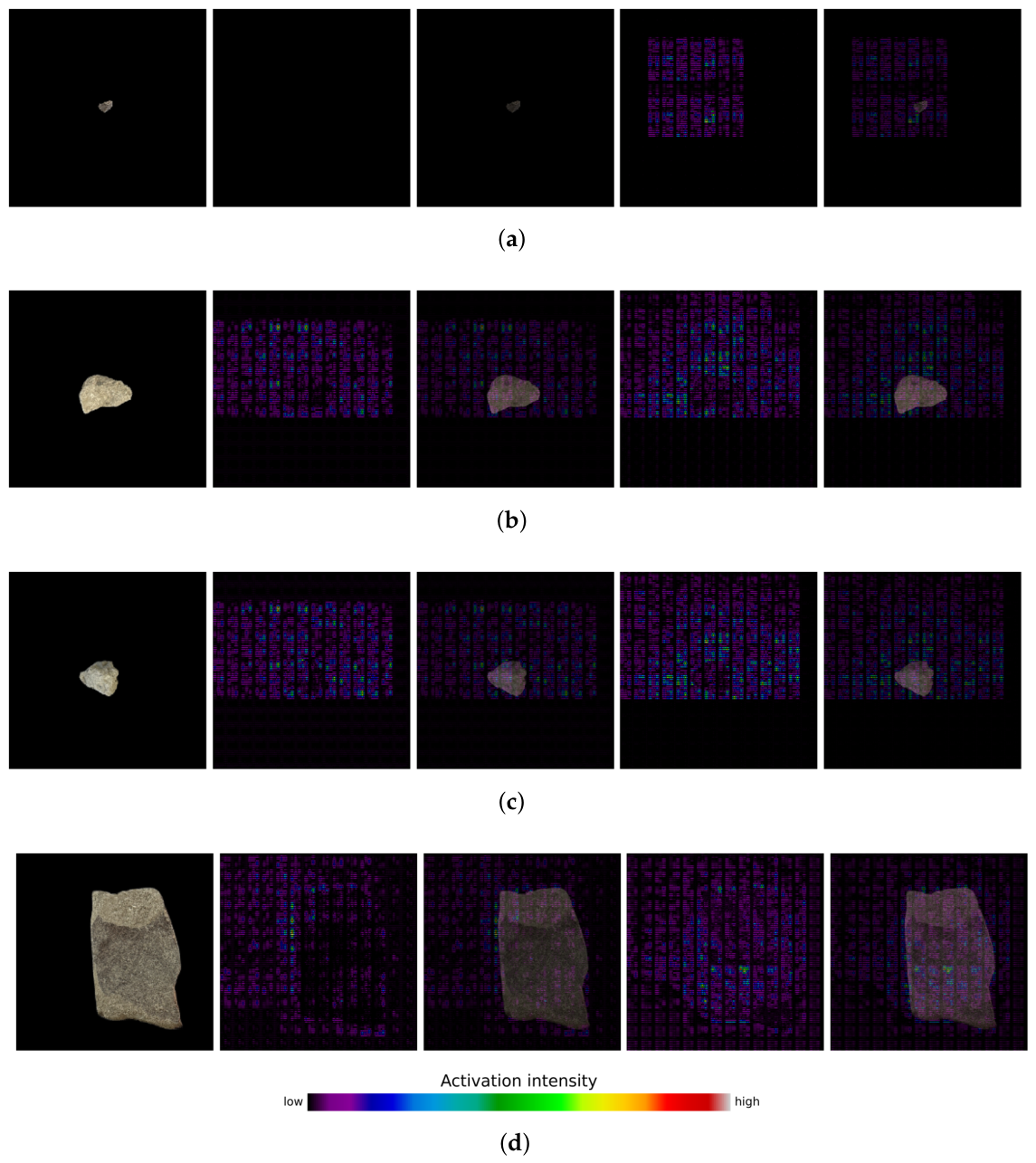

3.1. Model Training

3.2. Performance on Unseen Data

3.2.1. Classification

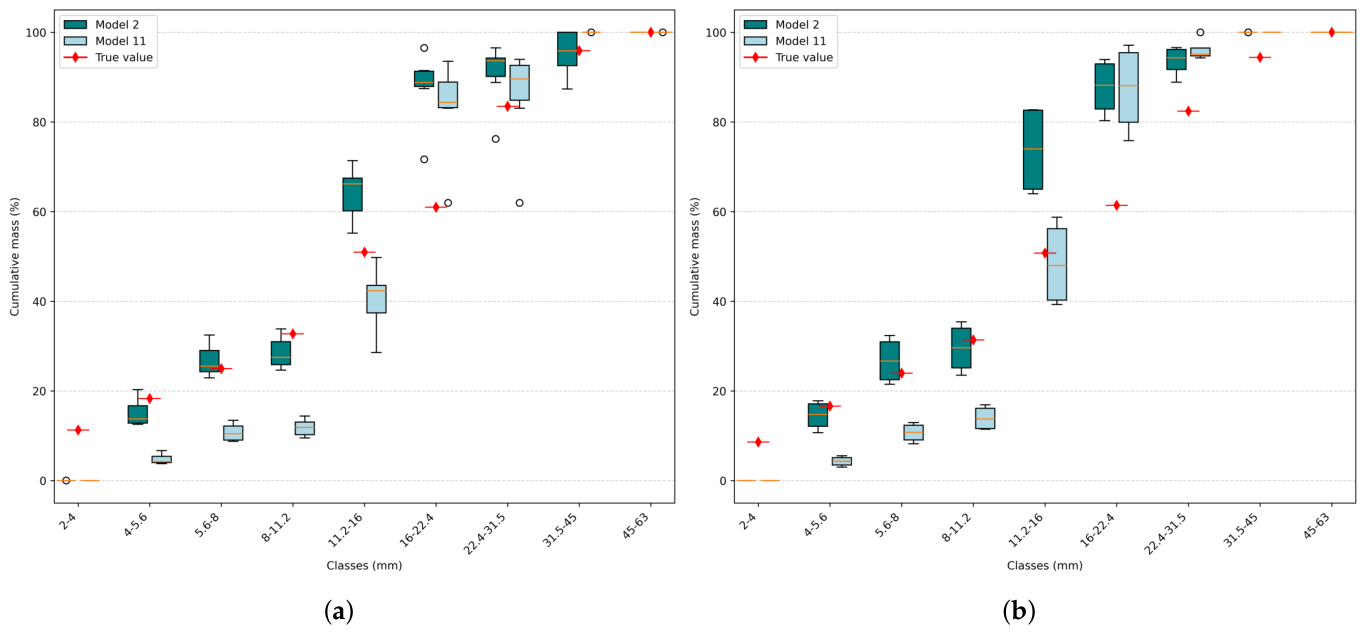

3.2.2. Regression

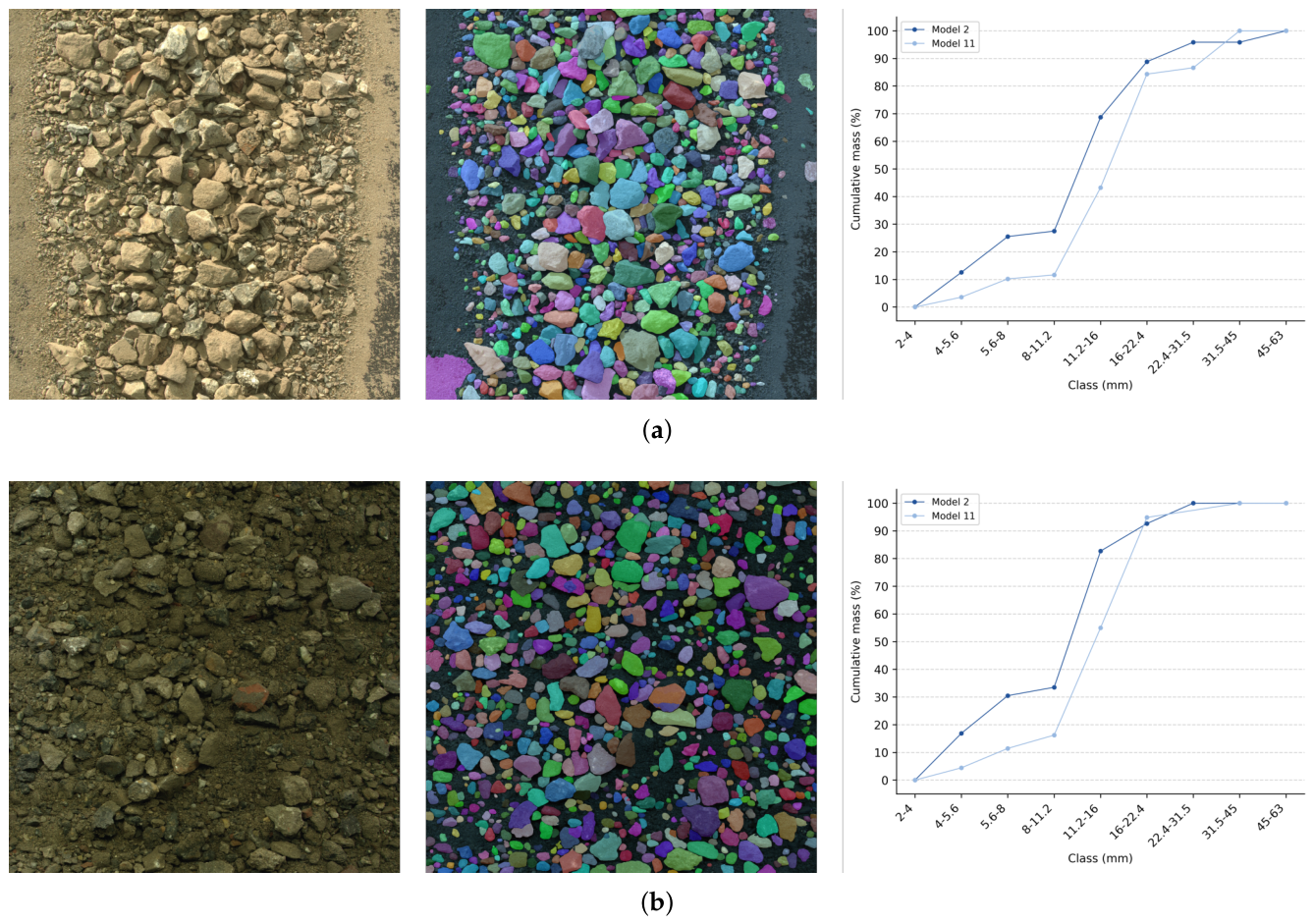

3.3. Performance on Full-Scale Plant Data

3.4. Limitations and Future Work

4. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CDW | Construction and demolition waste |
| CDW-MT-CNN | Construction and demolition waste multitask convolutional neural network |
| CEL | Cross-entropy loss |
| CMOS | Complementary metal-oxide-semiconductor |
| CNN | Convolutional neural network |
| CPU | Central processing unit |
| DSNU | Dark signal non-uniformity |
| GPS | Global positioning system |
| GPU | Graphics processing unit |
| MAE | Mean absolute error |
| MCC | Matthews correlation coefficient (phi coefficient) |
| MRI | Magnetic resonance imaging |
| PRNU | Photo response non-uniformity |
| PSD | Particle size distribution |
| RC | Recycled concrete |
| ReLU | Rectified linear unit |
| RGB | Red-green-blue |
| RMSE | Root mean square error |
| TRL | Technology readiness level |
| wt% | Percentage by weight |
| 2D | Two-dimensional |
| 3D | Three-dimensional |


| Class (mm) | Number of particles (-) | Particle weight (g) |
|---|---|---|
| 2-4 | 16 | 0.051 ± 0.033 |
| 4-5.6 | 260 | 0.118 ± 0.045 |
| 5.6-8 | 200 | 0.428 ± 0.219 |
| 8-11.2 | 199 | 1.197 ± 0.455 |
| 11.2-16 | 200 | 3.256 ± 1.437 |
| 16-22.4 | 200 | 8.368 ± 3.808 |
| 22.4-31.5 | 157 | 28.468 ± 15.919 |
| 31.5-45 | 103 | 73.059 ± 24.618 |
| 45-63 | 151 | 98.875 ± 42.300 |

| Model | Accuracy | MCC | RMSE |
|---|---|---|---|
| Model 1 | 0.882 | 0.866 | 13.706 |
| Model 1 (s) | 0.857 | 0.836 | 13.420 |
| Model 2 | 0.876 | 0.858 | 13.317 |
| Model 2 (s) | 0.857 | 0.837 | 12.580 |
| Model 3 | 0.838 | 0.815 | 13.233 |
| Model 3 (s) | 0.838 | 0.815 | 13.182 |
| Model 4 | 0.872 | 0.853 | 13.922 |
| Model 4 (s) | 0.840 | 0.817 | 13.498 |
| Model 5 | 0.856 | 0.835 | 14.031 |
| Model 5 (s) | 0.849 | 0.828 | 12.614 |
| Model 6 | 0.842 | 0.819 | 12.499 |
| Model 6 (s) | 0.834 | 0.811 | 12.502 |
| Model 7 | 0.970 | 0.965 | 5.408 |
| Model 7 (s) | 0.976 | 0.972 | 5.839 |
| Model 8 | 0.985 | 0.983 | 4.040 |
| Model 8 (s) | 0.987 | 0.985 | 4.681 |
| Model 9 | 0.964 | 0.959 | 4.538 |
| Model 9 (s) | 0.961 | 0.955 | 5.680 |
| Model 10 | 0.958 | 0.952 | 4.814 |
| Model 10 (s) | 0.961 | 0.955 | 5.352 |
| Model 11 | 0.981 | 0.978 | 3.983 |
| Model 11 (s) | 0.985 | 0.982 | 3.881 |
| Model 12 | 0.950 | 0.943 | 4.712 |
| Model 12 (s) | 0.948 | 0.941 | 5.124 |
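
For orientation, the accuracy and MCC columns above can be reproduced from lists of true and predicted class labels. The following is a minimal sketch using the standard multiclass generalisation of the Matthews correlation coefficient; the function names `accuracy` and `multiclass_mcc` and the example labels are illustrative assumptions and are not taken from the study's code.

```python
import math
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of samples whose predicted class equals the true class."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def multiclass_mcc(y_true, y_pred):
    """Multiclass Matthews correlation coefficient from label lists.

    Uses the covariance form: c = number of correct predictions,
    s = number of samples, t_k / p_k = how often class k occurs in the
    true / predicted labels.
    """
    s = len(y_true)
    c = sum(t == p for t, p in zip(y_true, y_pred))
    t_k = Counter(y_true)
    p_k = Counter(y_pred)
    labels = set(t_k) | set(p_k)

    cov_tp = c * s - sum(t_k[k] * p_k[k] for k in labels)
    cov_tt = s * s - sum(t_k[k] ** 2 for k in labels)
    cov_pp = s * s - sum(p_k[k] ** 2 for k in labels)

    denom = math.sqrt(cov_tt * cov_pp)
    # Convention: return 0.0 when the coefficient is undefined
    # (all true or all predicted labels fall into a single class).
    return cov_tp / denom if denom else 0.0


# Hypothetical labels for three size classes (illustration only).
y_true = ["2-4", "2-4", "4-5.6", "4-5.6", "5.6-8", "5.6-8"]
y_pred = ["2-4", "4-5.6", "4-5.6", "4-5.6", "5.6-8", "5.6-8"]
print(accuracy(y_true, y_pred), multiclass_mcc(y_true, y_pred))
```

Unlike accuracy, the MCC accounts for how predictions are distributed across all classes, which is why it is commonly reported alongside accuracy when class sizes differ.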

| Class (mm) | Mean weight (g) | RMSE model 2 (true class) | RMSE model 2 (predicted class) | RMSE model 11 (true class) | RMSE model 11 (predicted class) |
|---|---|---|---|---|---|
| 2-4 | 0.04 ± 0.03 | 0.382 | - | 0.171 | - |
| 4-5.6 | 0.11 ± 0.05 | 0.316 | 0.322 | 0.118 | 0.125 |
| 5.6-8 | 0.44 ± 0.22 | 0.201 | 0.245 | 0.198 | 0.230 |
| 8-11.2 | 1.17 ± 0.48 | 0.655 | 0.634 | 0.540 | 0.462 |
| 11.2-16 | 3.58 ± 1.75 | 1.287 | 1.537 | 1.020 | 0.905 |
| 16-22.4 | 8.47 ± 3.78 | 6.214 | 6.153 | 2.810 | 2.664 |
| 22.4-31.5 | 26.11 ± 19.67 | 10.615 | 11.377 | 13.208 | 13.444 |
| 31.5-45 | 75.66 ± 23.26 | 28.071 | 25.487 | 43.318 | 22.511 |
| 45-63 | 104.25 ± 50.78 | 35.710 | 35.743 | 35.108 | 39.840 |

| Class (mm) | MAE model 2 (true class) | MAE model 2 (predicted class) | MAE model 11 (true class) | MAE model 11 (predicted class) |
|---|---|---|---|---|
| 2-4 | 0.381 | - | 0.170 | - |
| 4-5.6 | 0.312 | 0.317 | 0.094 | 0.099 |
| 5.6-8 | 0.143 | 0.173 | 0.159 | 0.188 |
| 8-11.2 | 0.518 | 0.491 | 0.374 | 0.332 |
| 11.2-16 | 1.026 | 1.146 | 0.797 | 0.714 |
| 16-22.4 | 4.724 | 4.614 | 2.194 | 1.999 |
| 22.4-31.5 | 7.358 | 8.007 | 8.867 | 8.843 |
| 31.5-45 | 22.439 | 21.381 | 37.308 | 20.288 |
| 45-63 | 27.864 | 28.446 | 28.450 | 33.546 |
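
The per-class RMSE and MAE values above can be illustrated with a short sketch. It assumes that "true class" and "predicted class" refer to grouping the particles by their reference size class or by the class assigned by the model before computing the weight error; this reading is an assumption, as is the helper name `per_class_errors` and the example data.

```python
import math
from collections import defaultdict


def per_class_errors(weights_true, weights_pred, classes):
    """Return {class: (rmse, mae)} of the weight error, grouped by class.

    `classes` holds one size-class label per particle. Passing the
    reference labels gives the "true class" grouping, passing the model's
    labels gives the "predicted class" grouping (assumed interpretation).
    """
    grouped = defaultdict(list)
    for w_true, w_pred, cls in zip(weights_true, weights_pred, classes):
        grouped[cls].append(w_pred - w_true)

    result = {}
    for cls, errors in grouped.items():
        n = len(errors)
        rmse = math.sqrt(sum(e * e for e in errors) / n)
        mae = sum(abs(e) for e in errors) / n
        result[cls] = (rmse, mae)
    return result


# Hypothetical particle weights in grams (illustration only).
weights_true = [1.0, 2.0, 10.0]
weights_pred = [1.5, 1.5, 13.0]
true_classes = ["8-11.2", "8-11.2", "16-22.4"]
print(per_class_errors(weights_true, weights_pred, true_classes))
```

For any set of errors the RMSE is at least as large as the MAE, and the gap widens when a few particles have large errors, which matches the relation between the corresponding RMSE and MAE entries in the tables.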

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).