Submitted: 13 April 2026

Posted: 14 April 2026


Abstract

Keywords:

1. Introduction

2. The Emergence and Fragmentation of AI Disclosure Regimes

2.1. Exploratory Empirical Probe: Disclosure Practices in AI-Assisted Research

2.1.1. Data and Sampling Approach

- Indexed in leading databases (Scopus and Web of Science).

- Published in journals with explicit AI disclosure policies.

- Likely exposure to generative AI use (judged by topic, method, or writing style).

2.1.2. Prevalence of AI Disclosure

2.1.3. Depth Variation in Disclosure

2.1.4. Evidence of Paper Compliance and Implications for the AIDG

- High non-disclosure rate (82.5%) indicates weak enforcement.

- Dominance of symbolic disclosures (8 of the 14 disclosing papers, 57%) points to minimal-compliance strategies.

- Lack of standardisation hinders comparison across studies.

- Does not enable verification.

- Fails to represent epistemic influence.

- Lacks support for reproducibility.

- Non-disclosure despite probable AI involvement (concealed AI influence).

- Under-specified disclosures (misrepresentation of extent).

- Absence of process documentation (irretrievable knowledge pathways).

3. Paper Compliance in AI Disclosures

3.1. Defining Paper Compliance in AI Disclosure

3.2. Layers of Paper Compliance

- 1) Symbolic Compliance

- Lack specificity concerning tools, prompts, or outputs.

- Do not distinguish between levels of AI involvement.

- Mainly serve to indicate compliance with policy.

- 2) Narrative Compliance

- They remain self-reported and non-standardised.

- They cannot be independently verified.

- They often frame AI as unimportant, despite its real influence.

- 3) Strategic Compliance

- Minimise the acknowledgement of AI involvement to prevent scrutiny or stigmatisation.

- Exaggerate minimal usage to indicate transparency.

- Selectively disclose specific applications while omitting others.

3.3. Mechanisms Enabling Paper Compliance

- a) Self-Reporting Without Verification

- Whether AI was utilised

- How it was applied

- The extent of its impact

- b) Absence of Standardisation

- Disclosure content varies significantly across different journals and academic disciplines.

- Key dimensions such as prompt design, model version, and iteration processes are seldom documented.

- Comparability between studies is limited.

- c) Epistemic Opacity of AI Systems

- Outputs cannot be reliably replicated.

- Intermediate steps, like prompt refinement, are often not documented.

- Model behaviour may change over time.

3.4. Paper Compliance as Performative Transparency

- Compliance is now judged by the mere existence of disclosures rather than their actual content.

- Reviewers and readers are forced to interpret incomplete and inconsistent information regarding AI’s role.

- Disclosure can end up confusing rather than clarifying the basis of research.

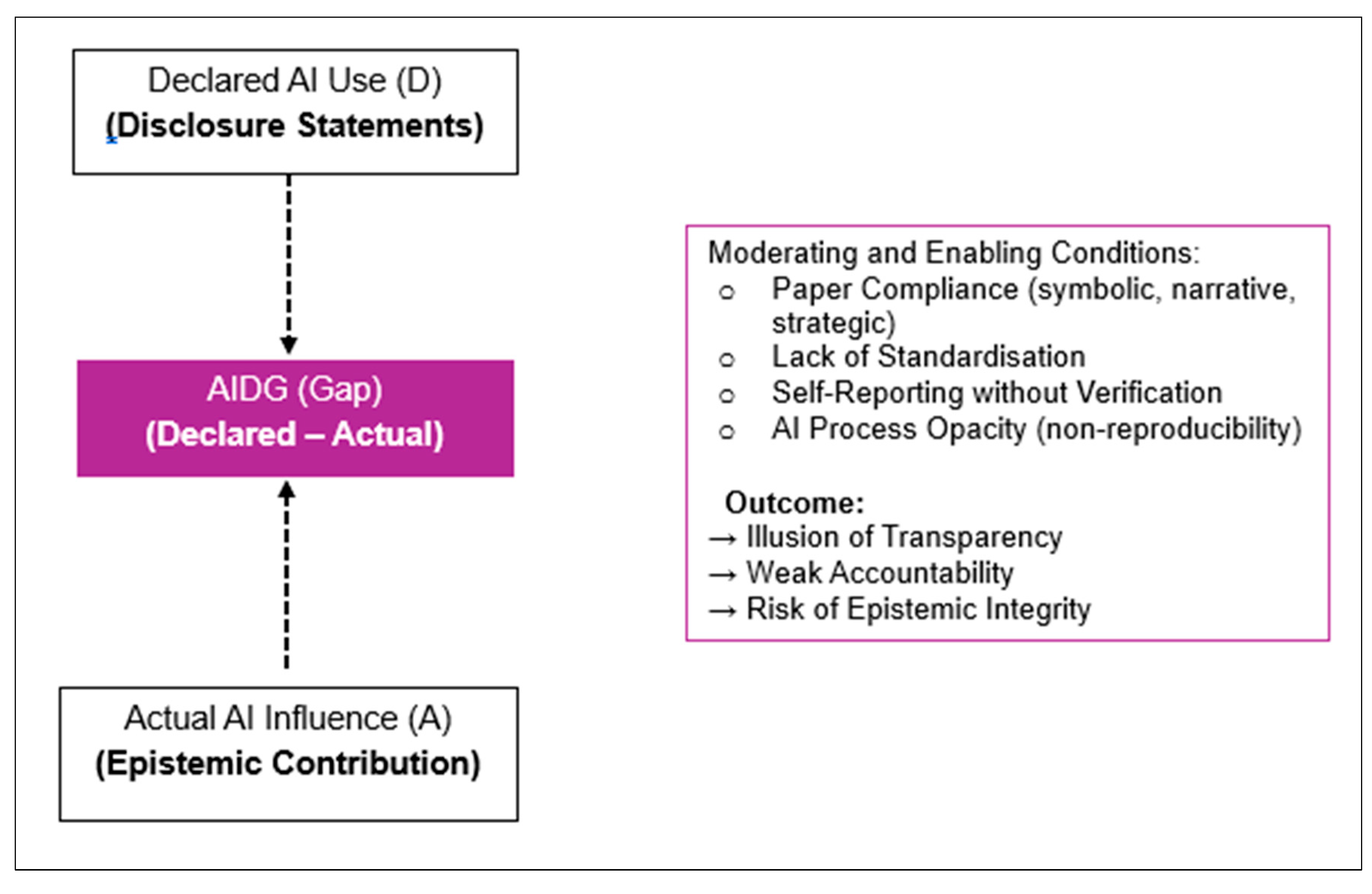

3.5. Paper Compliance and the AI Disclosure Integrity Gap (AIDG)

4. The AI Disclosure Integrity Gap (AIDG)

- D = Declared AI use (as reported in disclosure statements)

- A = Actual AI influence (epistemic contribution to the research output)
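The two quantities defined above can be related in a minimal sketch. It assumes, purely for illustration, that D and A are both scored on a shared 0 to 1 scale and that the gap is the signed difference A − D; the scale, the scoring, and the function name `aidg` are hypothetical and do not come from the paper.

```python
def aidg(declared: float, actual: float) -> float:
    """Signed gap between actual AI influence (A) and declared AI use (D).

    Assumption: both scores lie on a shared 0-1 scale. A positive value
    means AI influence exceeds what was declared (under-disclosure); a
    negative value means the disclosure overstates AI's role.
    """
    for name, value in (("declared", declared), ("actual", actual)):
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be in [0, 1], got {value}")
    return actual - declared


# Hypothetical paper: declares light language editing (D = 0.2) while AI
# shaped much of the argument (A = 0.7).
gap = aidg(declared=0.2, actual=0.7)
print(f"AIDG = {gap:+.2f}")
```

A signed difference is used here so that both under-disclosure and exaggerated disclosure remain distinguishable; an absolute difference would be an equally defensible choice if only the size of the gap matters.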

- Linguistic patterns of AI-generated text

- Inconsistencies between methods and outputs

- Absence of traceable research processes

4.1. From Transparency to Integrity Risks

4.2. Theoretical Propositions

- P1 — Dominance of Symbolic Compliance

- P2 — Incentive-Driven Misrepresentation

- P3 — Structural Emergence of the AIDG

- P4 — Process Opacity as Core Driver

- P5 — Traceability as Corrective Mechanism

- P6 — Illusion of Transparency

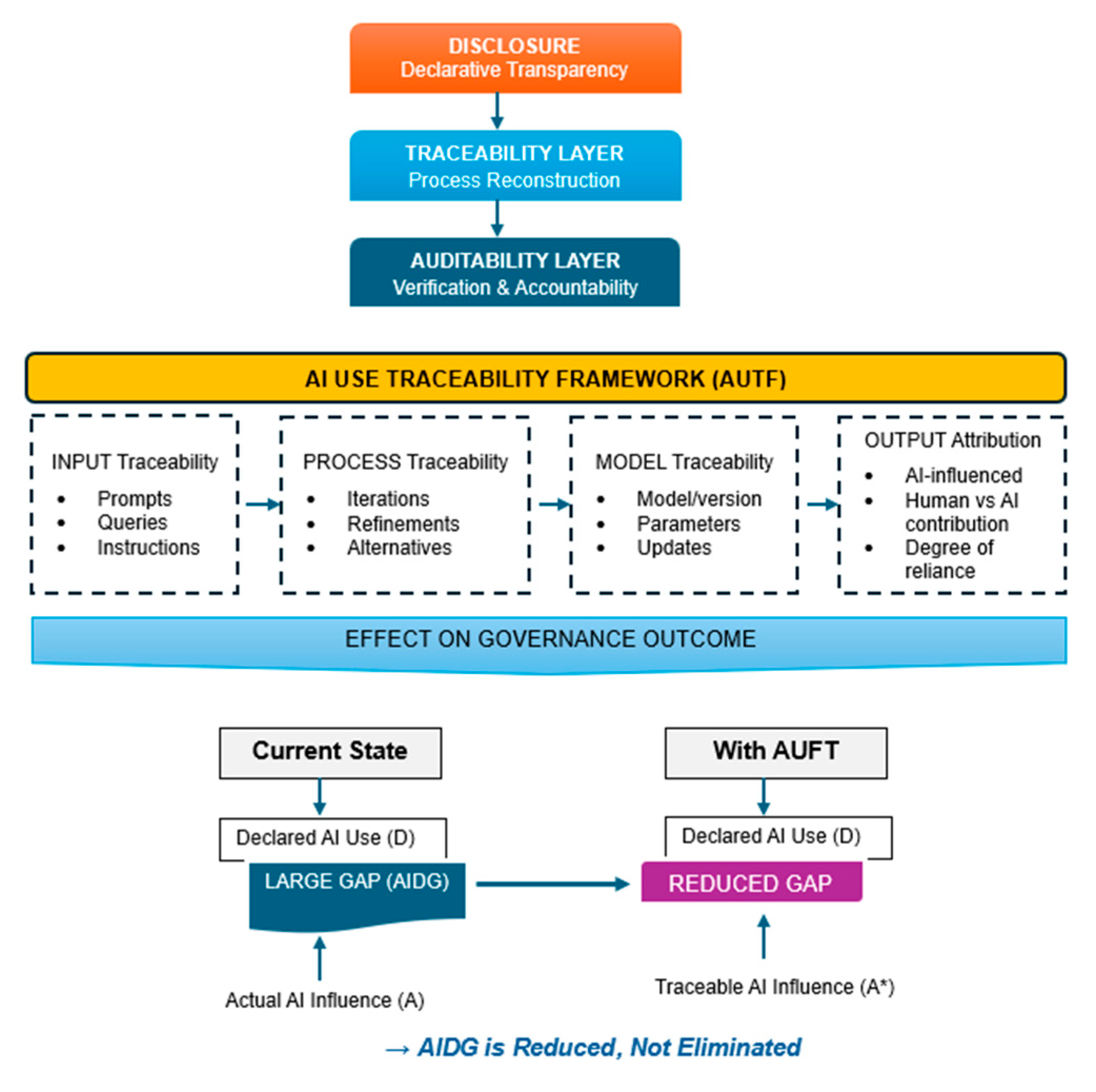

5. The Limits of Disclosure-Based Governance: Towards Traceability

5.1. From Transparency to Traceability

- Process-oriented (captures sequences of interaction)

- Verifiable (allows external scrutiny)

- Reconstructive (enables partial reproducibility)

5.2. The AI Use Traceability Framework (AUTF)

- (1) Input Traceability: Captures what was supplied to the AI system, including:

- Prompt logs (initial and iterative)

- Task framing and constraints

- Human–AI interaction sequences

- (2) Process Traceability: Documents the AI interaction, such as:

- Iteration history

- Generated and discarded alternative outputs

- Human editing and refinements

- (3) Model Traceability: Specifies the technical configuration of the AI system used, including:

- Model type and version

- Training updates (where relevant)

- Tool-specific parameters

- (4) Output Attribution: Connects AI contributions to specific sections of the research output.

- Sections affected by AI

- Differentiation between AI-generated and human-edited content

- Level of reliance on AI outputs
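The four AUTF components listed above could be captured in a single structured record. The following is a sketch only: the class name `AUTFRecord`, its field names, and the `missing_components` helper are hypothetical, chosen to mirror the listed items rather than taken from any published schema.

```python
from dataclasses import dataclass, field


@dataclass
class AUTFRecord:
    """One traceability record per manuscript (hypothetical schema)."""
    # (1) Input traceability
    prompts: list[str] = field(default_factory=list)
    task_framing: str = ""
    # (2) Process traceability
    iterations: list[str] = field(default_factory=list)
    human_edits: list[str] = field(default_factory=list)
    # (3) Model traceability
    model_name: str = ""
    model_version: str = ""
    parameters: dict[str, str] = field(default_factory=dict)
    # (4) Output attribution
    sections_affected: list[str] = field(default_factory=list)
    reliance_level: str = ""

    def missing_components(self) -> list[str]:
        """Name the AUTF components that have no documentation at all."""
        components = {
            "input": [self.prompts, self.task_framing],
            "process": [self.iterations, self.human_edits],
            "model": [self.model_name, self.model_version, self.parameters],
            "output": [self.sections_affected, self.reliance_level],
        }
        return [name for name, parts in components.items() if not any(parts)]


record = AUTFRecord(
    prompts=["Tighten the abstract to 200 words."],
    model_name="example-llm",  # placeholder, not a real product
    model_version="2026-01",
    sections_affected=["Abstract"],
    reliance_level="low",
)
print(record.missing_components())  # → ['process']
```

A record like this makes gaps visible at a glance: in the example, the input, model, and output components are documented, but nothing was logged about iterations or human edits.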

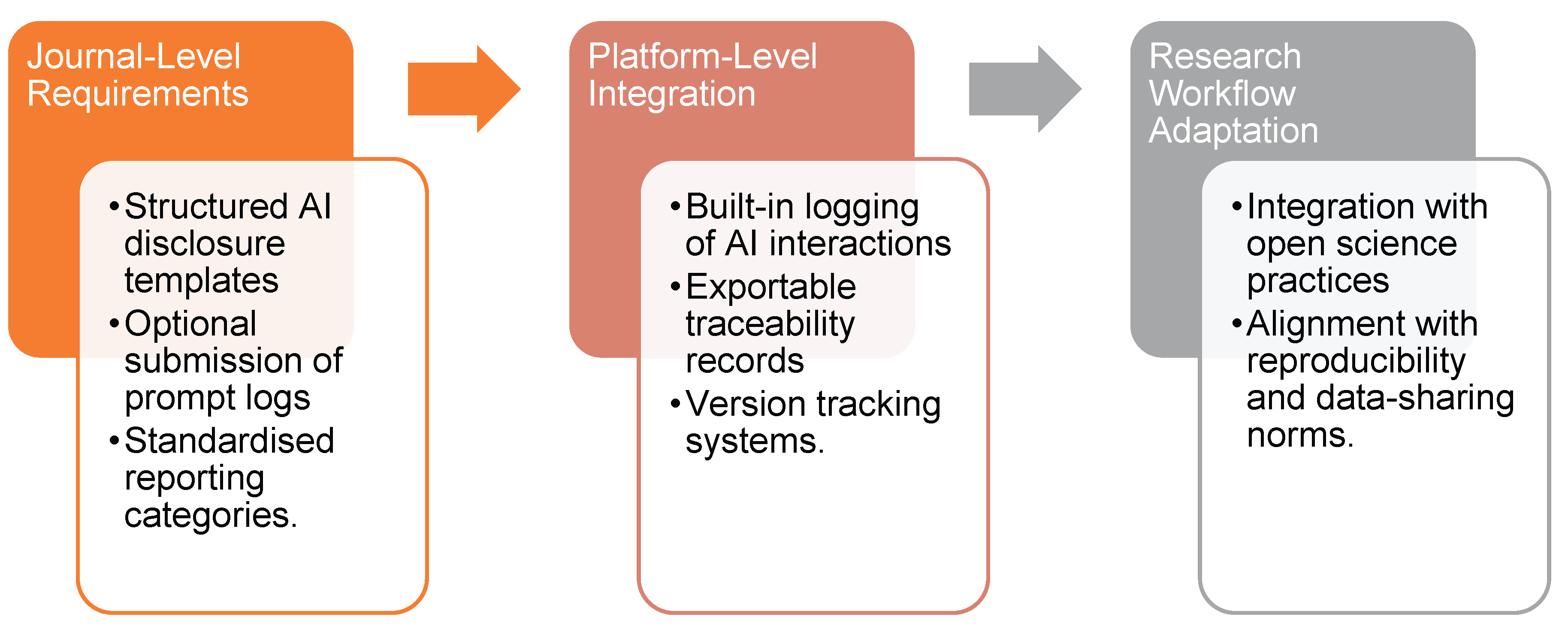

5.3. AUTF as a Governance Layer

5.4. Implementation Pathways

5.5. Feasibility and Adoption Constraints

Contribution to the Literature

6. Implications

7. Conclusions

Appendix A. Illustrative Examples of AI Disclosure Practices (Paraphrased)

- No tool identified

- No indication of scope or sections affected

- No distinction between editing and generation

- No process or iteration information

- Tool identified

- General functional role described

- Human oversight asserted

- Model and version specified

- Prompt structure described

- Iterative interaction acknowledged

- Partial documentation provided

References

- Bender, E. M., T. Gebru, A. McMillan-Major, and S. Shmitchell. 2021. On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency (FAccT’21). Association for Computing Machinery, pp. 610–623.

- Cleland, J., E. Driessen, K. Masters, L. Lingard, and L. A. Maggio. 2026. When and how to disclose AI use in academic publishing: AMEE Guide No. 192. Medical Teacher 48, 4: 542–553.

- COPE. 2023. COPE: Committee on Publication Ethics. Available online: https://publicationethics.org/news-opinion/cope-2023.

- Dwivedi, Y. K., et al. 2023. “So What If ChatGPT Wrote It?” Multidisciplinary Perspectives on Opportunities, Challenges and Implications of Generative Conversational AI for Research, Practice and Policy. International Journal of Information Management 71: 102642.

- Elsevier. 2024. www.elsevier.com. Available online: https://www.elsevier.com/about/policies-and-standards/generative-ai-policies-for-journals.

- Elsevier. 2025. www.elsevier.com. Available online: https://www.elsevier.com/about/policies-and-standards/generative-ai-policies-for-journals?utm.

- Floridi, L., J. Cowls, M. Beltrametti, R. Chatila, P. Chazerand, V. Dignum, C. Luetge, R. Madelin, U. Pagallo, F. Rossi, B. Schafer, P. Valcke, and E. Vayena. 2018. AI4People—An Ethical Framework for a Good AI Society: Opportunities, Risks, Principles, and Recommendations. Minds and Machines 28, 4: 689–707.

- He, Y., and Y. Bu. 2025. Academic journals’ AI policies fail to curb the surge in AI-assisted academic writing. arXiv:2512.06705.

- Jones, N. 2026. Half of social-science studies fail replication test in years-long project. Nature.

- Kasneci, E., K. Sessler, S. Küchemann, M. Bannert, D. Dementieva, F. Fischer, U. Gasser, et al. 2023. ChatGPT for Good? On Opportunities and Challenges of Large Language Models for Education. Learning and Individual Differences 103: 102274.

- Liang, W., Y. Zhang, Z. Wu, H. Lepp, W. Ji, X. Zhao, H. Cao, S. Liu, S. He, Z. Huang, D. Yang, C. Potts, C. D. Manning, and J. Zou. 2025. Quantifying large language model usage in scientific papers. Nature Human Behaviour.

- Meyer, J. W., and B. Rowan. 1977. Institutionalized Organizations: Formal Structure as Myth and Ceremony. American Journal of Sociology 83, 2: 340–363. Available online: http://www.jstor.org/stable/2778293.

- OECD. 2019. The OECD Artificial Intelligence (AI) Principles. OECD. Available online: https://oecd.ai/en/ai-principles.

- Perkins, M., L. Furze, J. Roe, and J. MacVaugh. 2024. The Artificial Intelligence Assessment Scale (AIAS): A Framework for Ethical Integration of Generative AI in Educational Assessment. Journal of University Teaching and Learning Practice 21, 06.

- Power, M. K. 2003. Auditing and the Production of Legitimacy. Accounting, Organizations and Society 28, 4: 379–394.

- Stodden, V., M. McNutt, D. H. Bailey, E. Deelman, Y. Gil, B. Hanson, M. A. Heroux, J. P. A. Ioannidis, and M. Taufer. 2016. Enhancing reproducibility for computational methods. Science 354, 6317: 1240–1241.

- Stokel-Walker, C., and R. Van Noorden. 2023. What ChatGPT and generative AI mean for science. Nature 614, 7947: 214–216.

- Stone, J. A. M. 2025. AI Disclosure Policies in Scientific Publishing and Medical Acupuncture. Medical Acupuncture 37, 5: 339–340.

- Suchikova, Y., N. Tsybuliak, J. A. Teixeira da Silva, and S. Nazarovets. 2025. GAIDeT (Generative AI Delegation Taxonomy): A taxonomy for humans to delegate tasks to generative artificial intelligence in scientific research and publishing. Accountability in Research, 1–27.

- Taylor & Francis. 2024. AI Policy. August 20. Available online: https://taylorandfrancis.com/our-policies/ai-policy/?utm.

- UNESCO. 2022. Recommendation on the Ethics of Artificial Intelligence. Unesco.org. Available online: https://unesdoc.unesco.org/ark:/48223/pf0000381137.

- Wiley. 2024. AI guidelines for researchers. Wiley.com. Available online: https://www.wiley.com/en-nl/publish/article/ai-guidelines/.

- Yoo, J.-H. 2025. Defining the Boundaries of AI Use in Scientific Writing: A Comparative Review of Editorial Policies. Journal of Korean Medical Science 40, 23.

| Category | Description | Number of Papers | Percentage |
|---|---|---|---|
| No Disclosure | No mention of AI use despite likely applicability | 66 | 82.5% |
| Symbolic Disclosure | Minimal, generic statement (e.g., “AI used for language editing”) | 8 | 10.0% |
| Narrative Disclosure | Expanded description of AI use, but non-standardised and non-verifiable | 5 | 6.25% |
| Traceable Disclosure | Structured, process-oriented disclosure enabling partial reconstruction | 1 | 1.25% |
| Total | | 80 | 100% |
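The percentages in the table above follow directly from the paper counts, as the short check below shows. The counts are taken from the table; the only assumption is that the 57% figure quoted for symbolic disclosures uses the 14 disclosing papers as its denominator, which the arithmetic reproduces.

```python
# Paper counts per disclosure category, as reported in the table.
counts = {
    "No Disclosure": 66,
    "Symbolic Disclosure": 8,
    "Narrative Disclosure": 5,
    "Traceable Disclosure": 1,
}

total = sum(counts.values())  # 80 papers in the sample
shares = {k: 100 * v / total for k, v in counts.items()}

# Papers that contain any disclosure at all.
disclosing = total - counts["No Disclosure"]  # 14
symbolic_share = 100 * counts["Symbolic Disclosure"] / disclosing

for category, pct in shares.items():
    print(f"{category:<22}{pct:6.2f}%")
print(f"Symbolic share of disclosing papers: {symbolic_share:.1f}%")
```

The distinction between the two denominators matters when reading the results: symbolic disclosures are 10% of all sampled papers but roughly 57% of the papers that disclose anything.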

| Type of Disclosure | Description | Frequency |
|---|---|---|
| Symbolic disclosure | Minimal, generic statement with no detail | 8 |
| Narrative disclosure | Expanded description of AI use, but non-standardised | 5 |
| Traceable disclosure | Structured, process-oriented detail (rare) | 1 |

| Construct | Superficially Similar To | Key Difference | Why Existing Frameworks Are Insufficient |
|---|---|---|---|
| Paper Compliance | Symbolic compliance; Institutional decoupling (Meyer & Rowan, 1977; Power, 2003) | Focuses on epistemic representation in AI-assisted research, where disclosure not only signals compliance but obscures the role of AI in knowledge production | Traditional frameworks assume underlying processes remain intact but misaligned; they do not account for AI-induced opacity, where processes themselves become partially irrecoverable |
| AI Disclosure Integrity Gap (AIDG) | Reporting bias; Measurement error | Captures structural divergence between declared AI use and actual epistemic influence, not just inaccuracies in reporting | Conventional approaches assume observable and verifiable underlying processes; AIDG arises because AI processes are iterative, distributed, and non-reconstructable ex post |
| AI Use Traceability Framework (AUTF) | Reproducibility frameworks; Data provenance | Introduces process-level traceability across input, interaction, model, and output stages | Existing reproducibility models assume stable inputs and deterministic outputs, which do not hold in AI-assisted research environments |

| Proposition | Key Construct(s) | Measurable Variable(s) | Proxy/Indicator | Empirical Approach (Example) |
|---|---|---|---|---|
| P1: Symbolic compliance dominance | Disclosure depth; Paper compliance | Disclosure depth score (ordinal: none → symbolic → narrative → traceable) | Content coding of disclosure statements across articles | Manual coding or NLP-based classification of journal publications |
| P2: Incentive-driven misrepresentation | Perceived reputational risk; Disclosure distortion | Disclosure accuracy vs perceived evaluation risk | Survey responses (e.g., perceived stigma, originality concerns, career risk) | Survey or experimental vignette study |
| P3: Structural emergence of AIDG | AIDG magnitude | Difference between declared AI use and inferred AI involvement | AI-generated text probability vs disclosure presence | Text analysis (AI detection tools) combined with disclosure comparison |
| P4: Process opacity as driver | Process opacity; Verifiability | Presence/absence of process documentation | Availability of prompt logs, iteration records, methodological detail | Content analysis of methods sections and Supplementary Materials |
| P5: Traceability as corrective mechanism | Traceability level; AIDG magnitude | Traceability index vs disclosure gap | Presence of model versioning, prompt logs, output attribution | Regression analysis: traceability → reduction in AIDG |
| P6: Illusion of transparency | Perceived transparency; Epistemic accountability | Perceived transparency vs actual disclosure quality (AIDG proxy) | Survey ratings vs objectively coded disclosure depth | Experimental study comparing reader perception with measured disclosure quality |
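The ordinal disclosure depth score named for P1 in the table above can be encoded as follows. This is a sketch under stated assumptions: the ordering none → symbolic → narrative → traceable comes from the table, while the numeric codes 0 to 3, the class name `DisclosureDepth`, and the five-article sample are hypothetical.

```python
from enum import IntEnum
from statistics import median_low


class DisclosureDepth(IntEnum):
    """Ordinal depth scale; the integer codes are an illustrative choice."""
    NONE = 0
    SYMBOLIC = 1
    NARRATIVE = 2
    TRACEABLE = 3


# Hypothetical manually coded sample of five articles.
coded = [
    DisclosureDepth.NONE,
    DisclosureDepth.NONE,
    DisclosureDepth.SYMBOLIC,
    DisclosureDepth.NARRATIVE,
    DisclosureDepth.NONE,
]

# median_low always returns an observed value, which suits ordinal data.
typical = DisclosureDepth(median_low(coded))
disclosure_rate = sum(d > DisclosureDepth.NONE for d in coded) / len(coded)

print(typical.name)
print(f"{disclosure_rate:.0%} of coded articles disclose any AI use")
```

Because the scale is ordinal rather than interval, the sketch summarises it with a median and a rate instead of a mean; averaging the codes would imply equal spacing between levels, which the table does not claim.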

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the author. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.