Submitted: 12 April 2026

Posted: 14 April 2026


Abstract

Keywords:

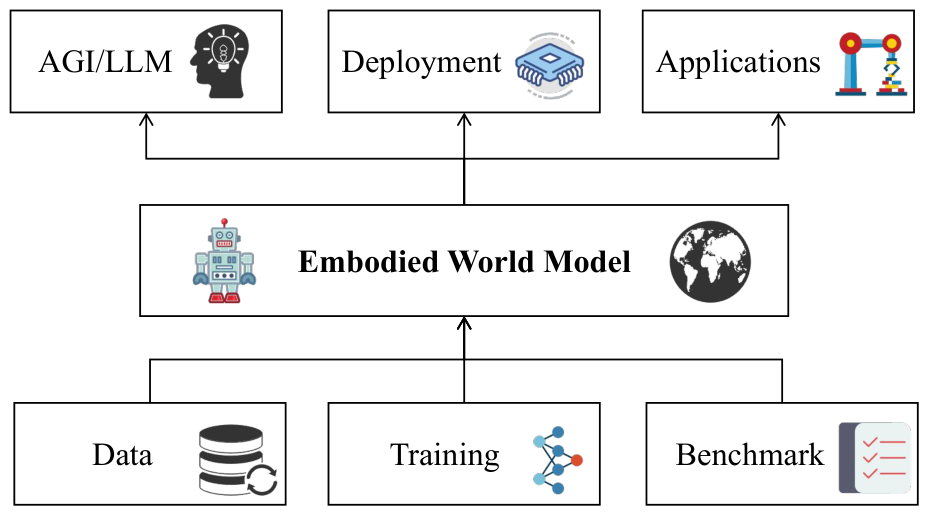

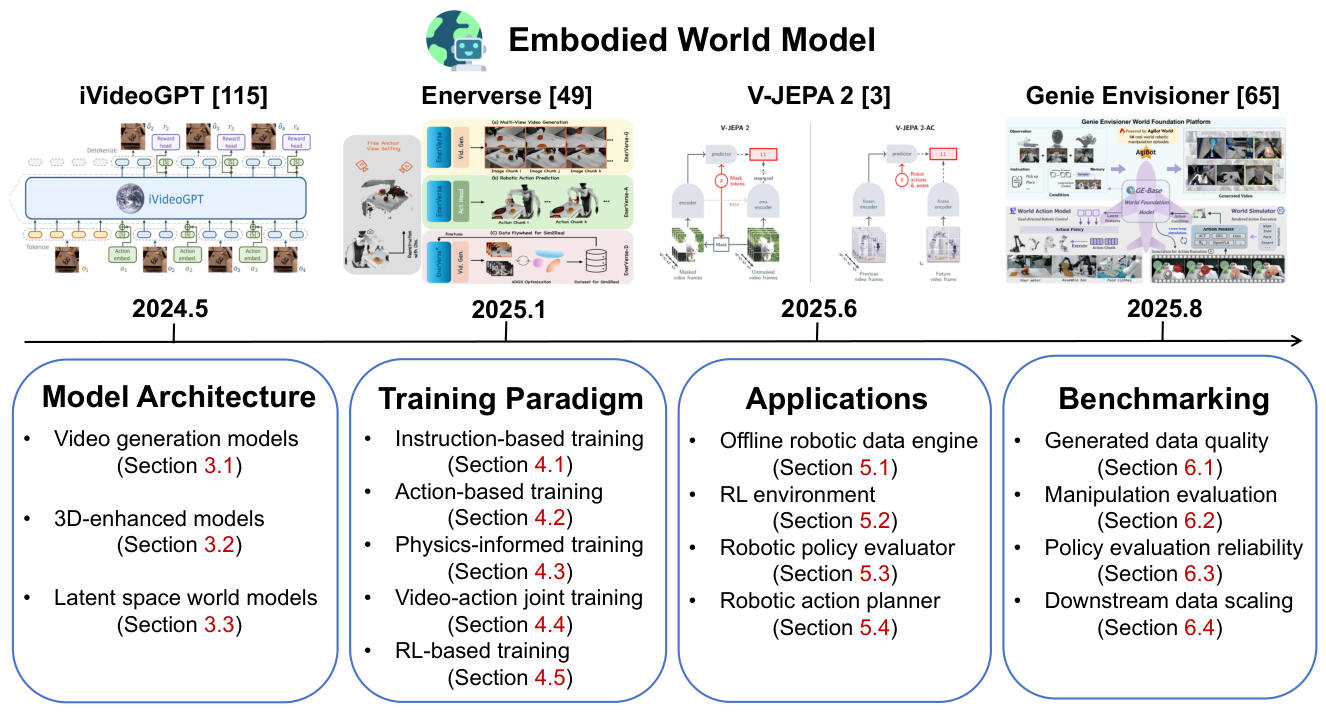

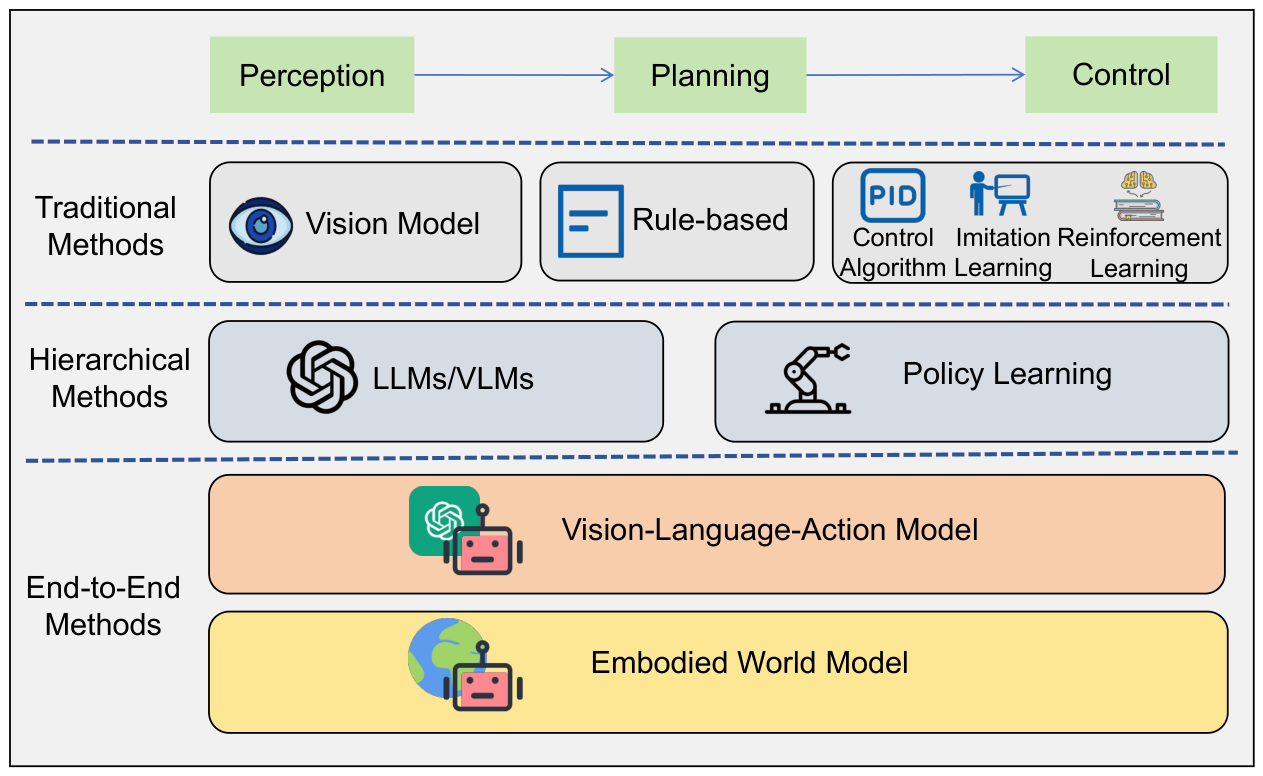

1. Introduction

- We provide a systematic and up-to-date review of the rapidly developing research on embodied world models, summarizing the significant value of world models for embodied agents.

- We propose a novel technical taxonomy that hierarchically organizes the field into model architectures, training methodologies, application scenarios and evaluation approaches, providing researchers with a clear technical roadmap.

- We highlight open challenges and emerging trends in embodied world models, along with promising research questions, to inspire subsequent work in the community.

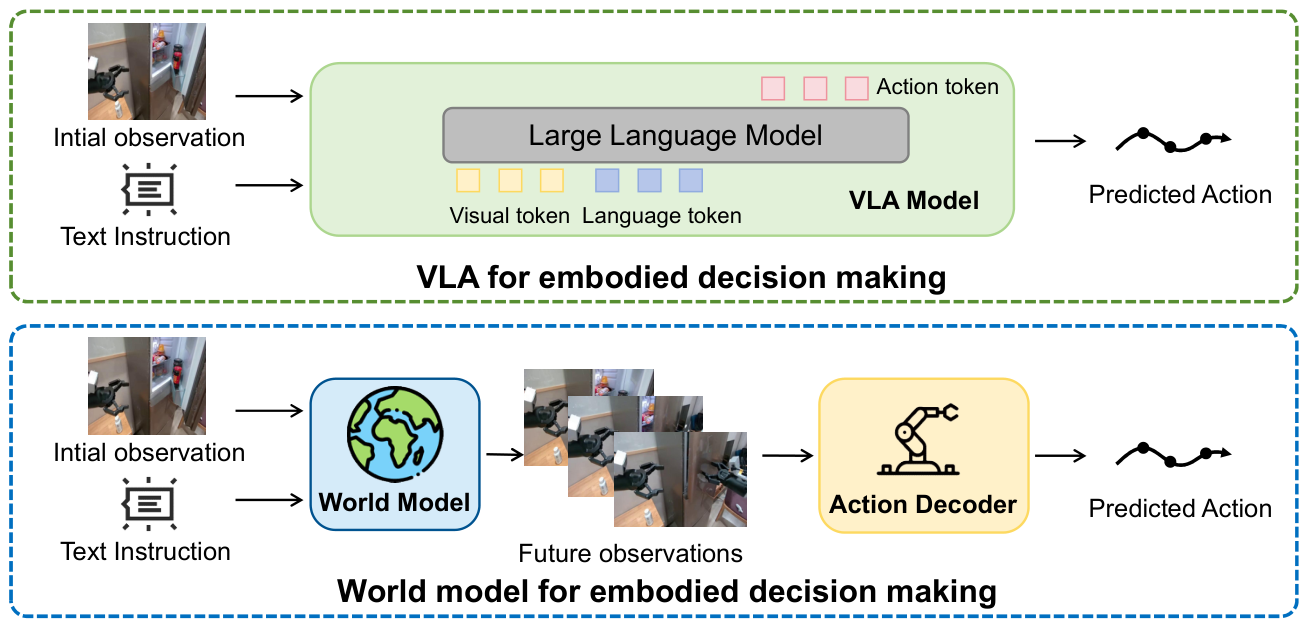

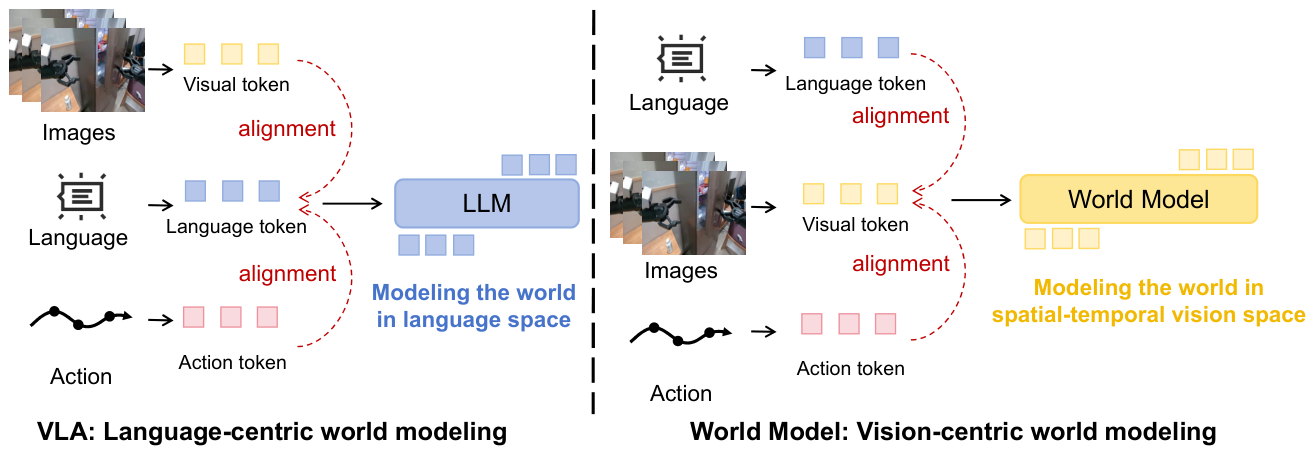

2. Background

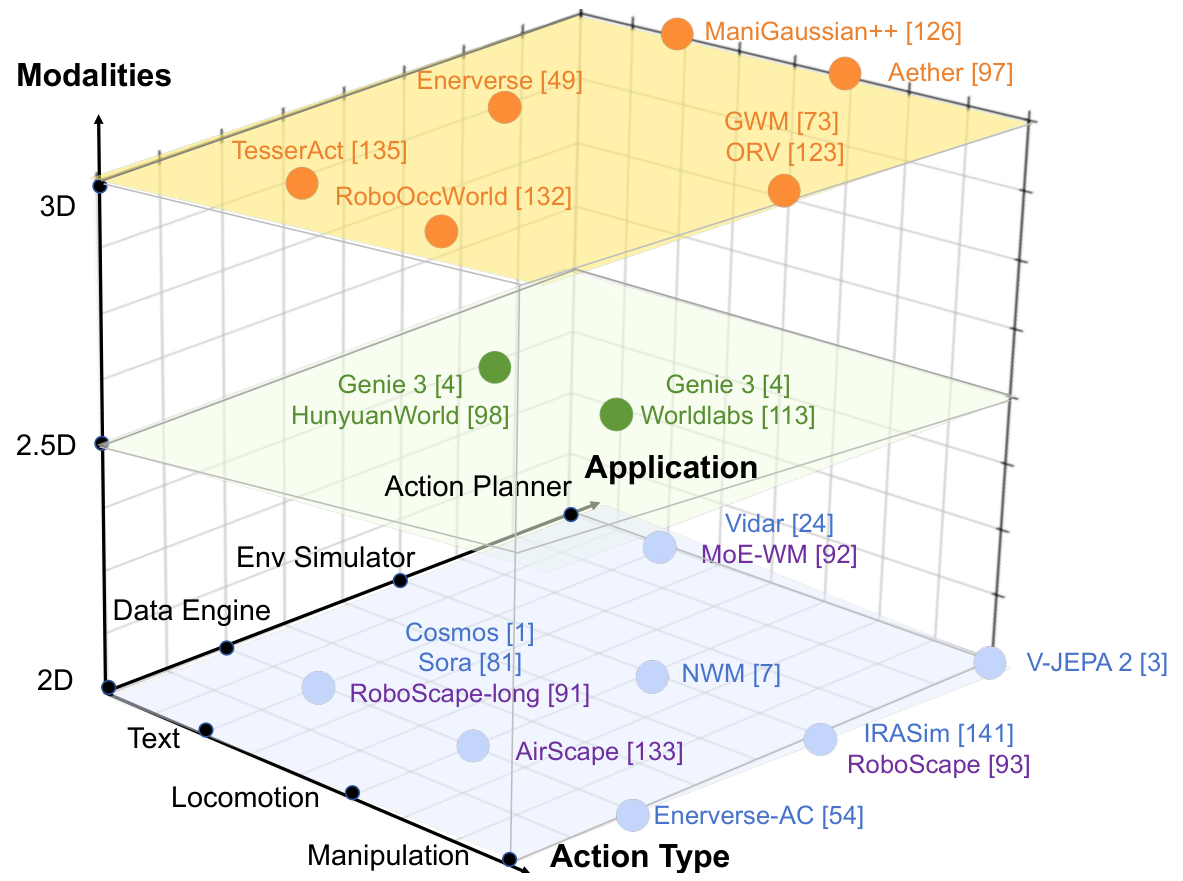

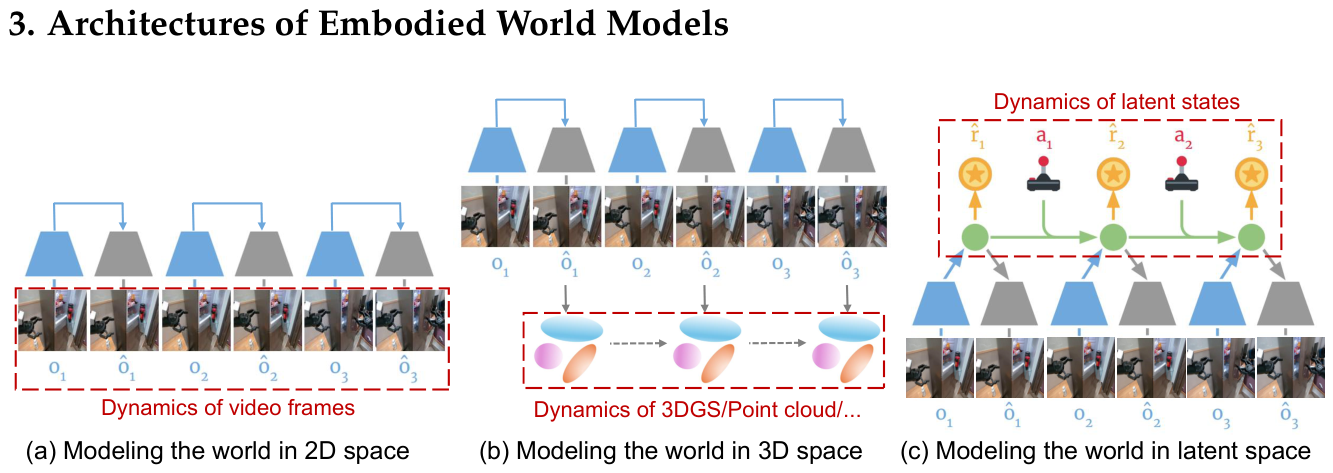

3. Architectures of Embodied World Models

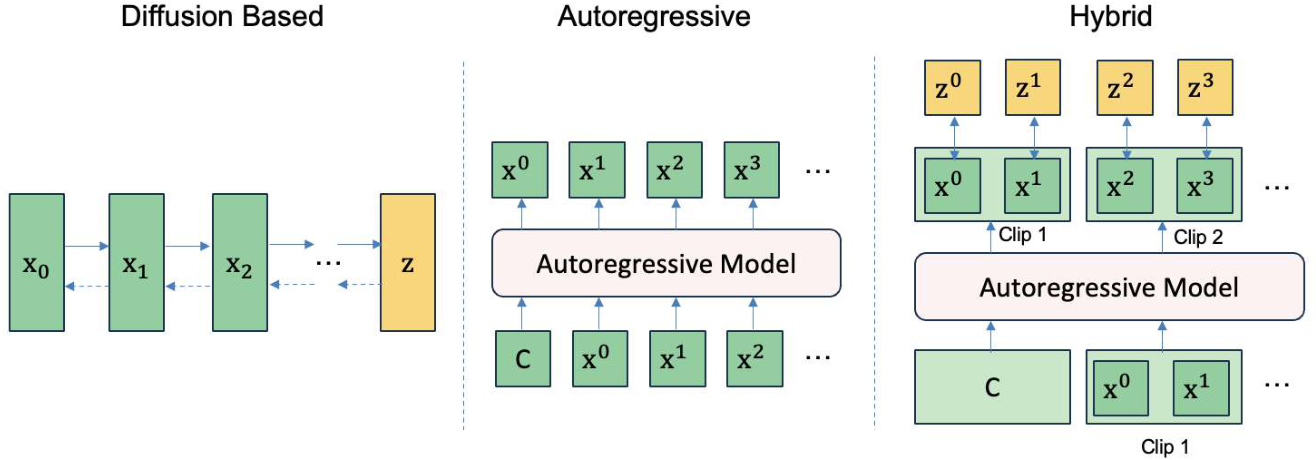

3.1. Video Generation-Based Models

- Diffusion-based Models.

- Autoregressive Models.

- Hybrid Models.

| Paradigm | Model | Key Contributions |
|---|---|---|
| Diffusion | Sora [81] | Photorealism; Long-horizon; Industrial-scale training |
| Diffusion | Open-Sora [138] | Open-source; Efficient VAE; DiT backbone |
| Diffusion | Wan [104] | Spatio-temporal VAE; Flow-matching; Multi-task coverage |
| Diffusion | CogVideoX [124] | Large transformer; High fidelity; Long rollout |
| Diffusion | Vid2World [48] | Causalization; Action guidance; Interactivity |
| Autoregressive | Genie 1 [11] | AR world model; Action-conditioned rollouts |
| Autoregressive | Lumos-1 [128] | Billion-scale AR; Controllability; Stability |
| Autoregressive | iVideoGPT [115] | Efficient tokenization; AR rollouts |
| Hybrid | Genie 2 [18] | Diffusion + AR; Interactive; Long-horizon sims |
| Hybrid | NOVA [19] | Non-quantized AR; Dual prediction; Diffusion denoising |
| Hybrid | RoboScape-long [91] | Adaptive combination of Diffusion and AR generation |
| Hybrid | VideoGPT [143] | AR rollout; Diffusion priors; Realism + Causality |
| Hybrid | MAGI-1 [100] | Scalable hybrid; Chunk-wise generation; Streaming |
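The three paradigms in the table differ mainly in how frames are produced over time. The sketch below is a toy under stated assumptions, not the method of any listed model: it shows the chunk-wise hybrid control flow listed for MAGI-1, i.e. autoregression across chunks, with all frames of each chunk refined jointly from noise. Frames are short feature vectors instead of images, and `toy_denoiser_target` is a hypothetical stand-in for a trained denoiser that simply extrapolates the last two context frames at constant velocity.

```python
import random
from typing import List

Frame = List[float]  # toy "frame": a short feature vector instead of pixels


def toy_denoiser_target(context: List[Frame], offset: int) -> Frame:
    """Hypothetical stand-in for a learned denoiser: constant-velocity extrapolation.

    Requires at least two context frames.
    """
    last, prev = context[-1], context[-2]
    return [l + offset * (l - p) for l, p in zip(last, prev)]


def denoise_chunk(context: List[Frame], chunk_len: int, steps: int,
                  rng: random.Random) -> List[Frame]:
    """Generate one chunk jointly: start from Gaussian noise, refine all frames over `steps`."""
    dim = len(context[-1])
    chunk = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(chunk_len)]
    targets = [toy_denoiser_target(context, i + 1) for i in range(chunk_len)]
    for k in range(steps):
        # Move a growing fraction of the way toward the prediction; the last step lands on it.
        alpha = 1.0 / (steps - k)
        chunk = [[x + alpha * (t - x) for x, t in zip(frame, target)]
                 for frame, target in zip(chunk, targets)]
    return chunk


def hybrid_rollout(init_frames: List[Frame], num_chunks: int, chunk_len: int,
                   steps: int = 4, seed: int = 0) -> List[Frame]:
    """Autoregressive over chunks: each new chunk is conditioned on every frame so far."""
    rng = random.Random(seed)
    frames = [list(f) for f in init_frames]
    for _ in range(num_chunks):
        frames.extend(denoise_chunk(frames, chunk_len, steps, rng))
    return frames


video = hybrid_rollout([[0.0], [1.0]], num_chunks=3, chunk_len=2)
print([round(f[0], 6) for f in video])  # [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0]
```

Setting `num_chunks=1` with a long `chunk_len` resembles diffusion-style joint generation of a whole clip, while `chunk_len=1` approaches frame-by-frame autoregression; the hybrid scheme sits between the two extremes.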

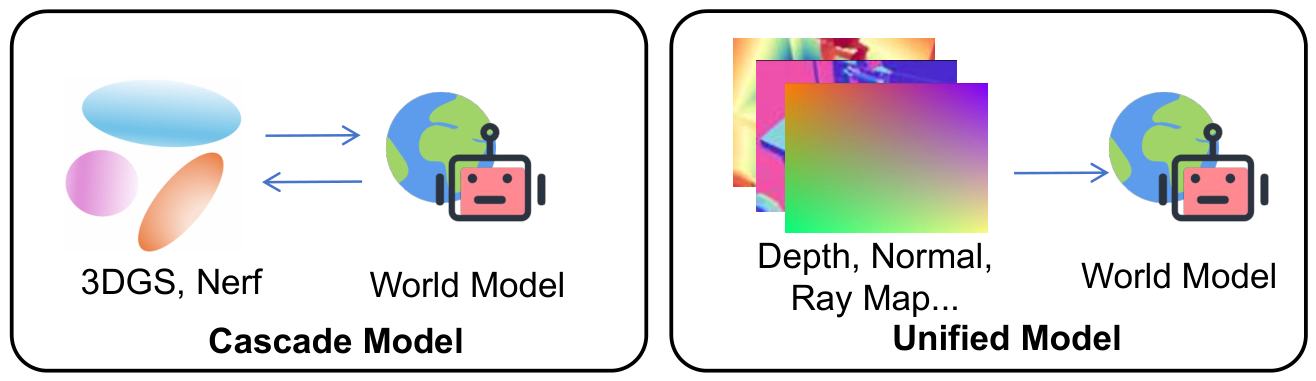

3.2. 3D Reconstruction-Enhanced Models

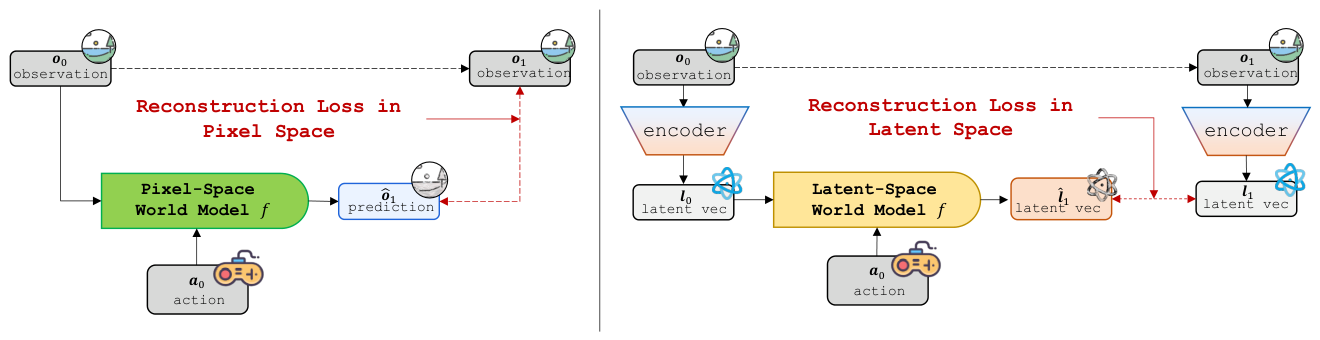

3.3. Latent Space World Models
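A latent world model predicts the next state in a compact learned representation rather than in pixel space. As a minimal sketch, assuming a fixed linear encoder and a linear transition z' = A z + B a (models such as Dreamer [38] or V-JEPA 2 [3] use deep networks instead), both the prediction loss and the imagined rollout below operate only on latents. The latent-space loss is in the spirit of joint-embedding predictive objectives [2, 3]; Dreamer-style models instead learn their latents with a reconstruction objective.

```python
from typing import List, Sequence

Vec = List[float]
Matrix = List[List[float]]


def linear(m: Matrix, x: Sequence[float]) -> Vec:
    """Matrix-vector product."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in m]


def encode(obs: Sequence[float], enc: Matrix) -> Vec:
    """Compress a high-dimensional observation into a low-dimensional latent state."""
    return linear(enc, obs)


def predict_next(z: Vec, action: Vec, a_mat: Matrix, b_mat: Matrix) -> Vec:
    """Latent transition z' = A z + B a (linear stand-in for a learned dynamics network)."""
    return [p + q for p, q in zip(linear(a_mat, z), linear(b_mat, action))]


def latent_prediction_loss(obs_t, action, obs_next, enc, a_mat, b_mat) -> float:
    """Mean squared error between predicted and encoded next latents; no pixels are decoded."""
    z_pred = predict_next(encode(obs_t, enc), action, a_mat, b_mat)
    z_target = encode(obs_next, enc)
    return sum((p - t) ** 2 for p, t in zip(z_pred, z_target)) / len(z_pred)


def imagine(z0: Vec, actions: List[Vec], a_mat: Matrix, b_mat: Matrix) -> List[Vec]:
    """Roll the model forward entirely in latent space, as a planner or RL agent would."""
    trajectory, z = [], z0
    for a in actions:
        z = predict_next(z, a, a_mat, b_mat)
        trajectory.append(z)
    return trajectory


enc = [[1.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 1.0]]  # hypothetical 4-D observation -> 2-D latent
eye = [[1.0, 0.0], [0.0, 1.0]]
loss = latent_prediction_loss([1.0, 0.0, 2.0, 0.0], [0.5, -1.0], [1.0, 0.5, 0.5, 0.5], enc, eye, eye)
print(loss)  # 0.0: this toy transition is exactly consistent with the encoder
print(imagine([1.0, 2.0], [[1.0, 0.0], [1.0, 0.0]], eye, eye))  # [[2.0, 2.0], [3.0, 2.0]]
```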

4. Training Paradigm of Embodied World Models

| Method | Paradigm | Contribution |
|---|---|---|
| Sora [70] | ICT | Text-driven video generation model |
| RoboDreamer [139] | ICT | Decomposes text instructions into fine-grained phrases |
| Pandora [119] | ICT | Real-time text-controlled generation |
| Cosmos [1] | ICT | Instruction-controllable world simulator |
| Vid2World [48] | ACT | Adds action embeddings to observation embeddings |
| UWM [140] | ACT | Concatenates action and observation embeddings |
| EnerVerse-AC [54] | ACT | Multi-channel action injection via cross-attention |
| FLARE [137] | ACT | Generates action tokens with diffusion |
| RoboScape [93] | PIT | Keypoint tracking and depth as physical information |
| TesserAct [135] | PIT | Decouples geometry, materials, and motion for 4D modeling |
| HMA [107] | VAT | Transformer that predicts both observations and actions |
| UVA [61] | VAT | Symmetrical encoder and decoupled diffusion head |
| WorldVLA [12] | VAT | World-model head renders observations alongside the action head |
| RLVR-World [116] | RLT | Trains the world model with a reinforcement learning paradigm |

ICT: instruction-conditioned training; ACT: action-conditioned training; PIT: physics-informed training; VAT: video-action joint training; RLT: RL-based training.
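The ACT rows name three ways of injecting actions into a video world model: adding embeddings (Vid2World), concatenating them (UWM), and cross-attention (EnerVerse-AC). The sketch below implements each generic operator in plain Python on toy token matrices. The shapes, the single attention head, the residual connection, and the identity projection weights are assumptions for illustration, not the cited models' exact designs.

```python
import math
from typing import List

Matrix = List[List[float]]  # rows are tokens, columns are features


def matmul(a: Matrix, b: Matrix) -> Matrix:
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m: Matrix) -> Matrix:
    return [list(col) for col in zip(*m)]


def softmax(row: List[float]) -> List[float]:
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def additive_fusion(obs: Matrix, act: Matrix) -> Matrix:
    """Add the step-t action embedding to the step-t observation embedding (same width)."""
    return [[o + a for o, a in zip(o_row, a_row)] for o_row, a_row in zip(obs, act)]


def concat_fusion(obs: Matrix, act: Matrix) -> Matrix:
    """Concatenate along the feature axis; the fused width becomes d_obs + d_act."""
    return [o_row + a_row for o_row, a_row in zip(obs, act)]


def cross_attention_fusion(obs: Matrix, act: Matrix,
                           w_q: Matrix, w_k: Matrix, w_v: Matrix) -> Matrix:
    """Observation tokens (queries) attend over action tokens (keys/values), added residually."""
    q, k, v = matmul(obs, w_q), matmul(act, w_k), matmul(act, w_v)
    scale = 1.0 / math.sqrt(len(q[0]))
    weights = [softmax([s * scale for s in row]) for row in matmul(q, transpose(k))]
    attended = matmul(weights, v)
    return [[o + u for o, u in zip(o_row, u_row)] for o_row, u_row in zip(obs, attended)]


obs = [[1.0, 0.0], [0.0, 1.0]]    # 2 observation tokens, width 2
act = [[0.5, -0.5], [0.1, 0.2]]   # 2 action tokens, width 2
identity = [[1.0, 0.0], [0.0, 1.0]]

print(additive_fusion(obs, act))  # width stays 2
print(concat_fusion(obs, act))    # width grows to 4
print(cross_attention_fusion(obs, act, identity, identity, identity))
```

Addition keeps the token width fixed but requires actions to be embedded into the observation feature space; concatenation preserves both signals at the cost of a wider input; cross-attention lets the number of action tokens differ from the number of observation tokens.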

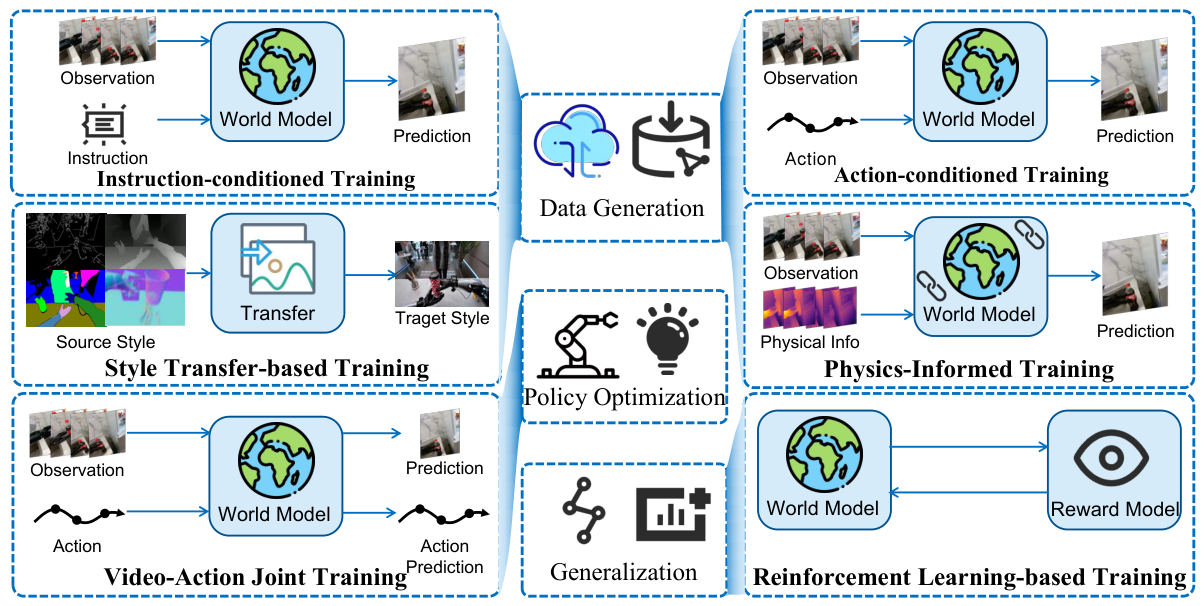

4.1. Instruction-Conditioned Training

4.2. Action-Conditioned Training

4.3. Physics-Informed Training

4.4. Video-Action Joint Training

4.5. RL-Based Training

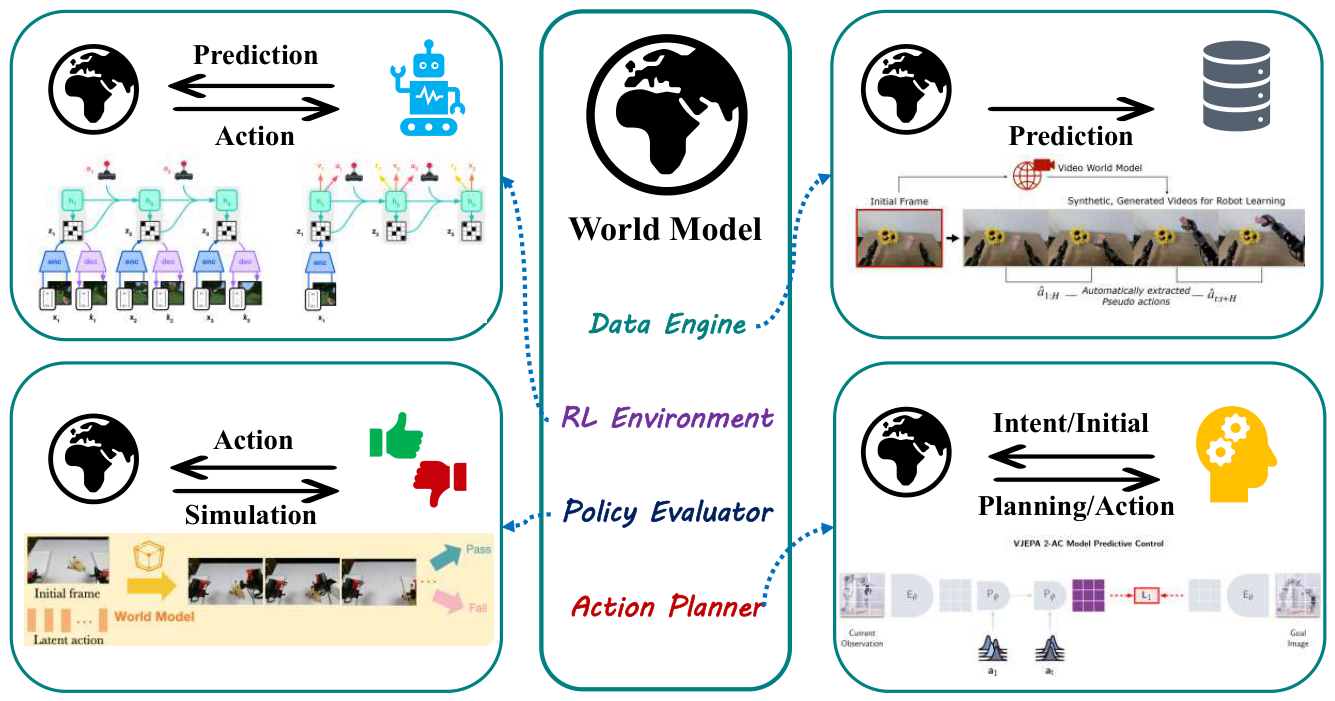

5. Applications of Embodied World Models

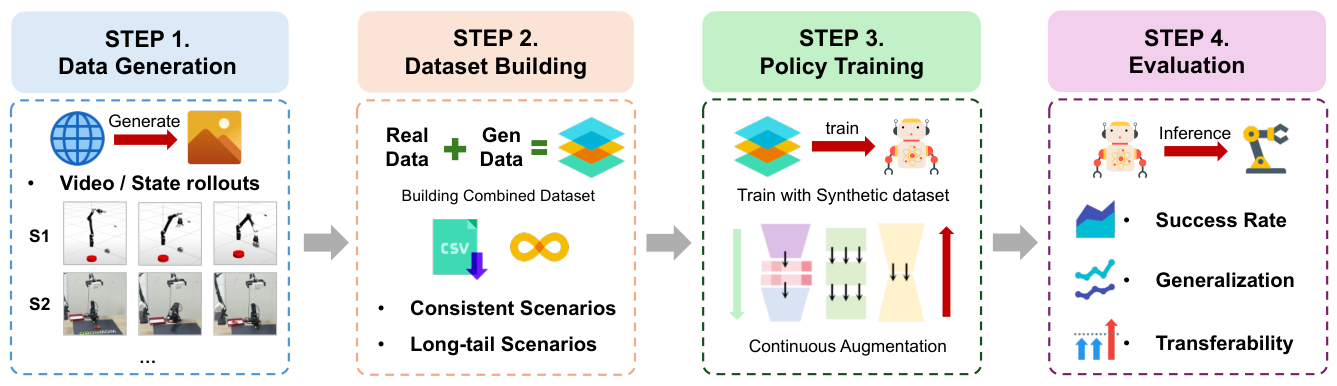

5.1. Offline Robotic Data Generation Engine

5.2. Environment Substitute for Reinforcement Learning
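Using a world model as an environment substitute means wrapping learned dynamics and reward predictors behind a reset/step interface, so a policy can be improved on imagined transitions instead of real interaction, an idea that goes back to Dyna [95]. In the sketch below, `learned_dynamics` and `learned_reward` are hypothetical hand-written stand-ins for trained models, and the policy-improvement step is reduced to a grid search over the gain of a proportional controller to keep the example short; a practical pipeline would run a standard RL algorithm against the same interface.

```python
from typing import Tuple


def learned_dynamics(x: float, a: float) -> float:
    """Hypothetical stand-in for a trained world model: position plus clipped action."""
    return x + max(-1.0, min(1.0, a))


def learned_reward(x_next: float, goal: float) -> float:
    """Hypothetical stand-in for a trained reward model: negative distance to the goal."""
    return -abs(x_next - goal)


class ImaginedEnv:
    """Simplified reset/step interface backed by learned models instead of a simulator."""

    def __init__(self, x0: float, goal: float, horizon: int, tol: float = 0.05):
        self.x0, self.goal, self.horizon, self.tol = x0, goal, horizon, tol
        self.x, self.t = x0, 0

    def reset(self) -> float:
        self.x, self.t = self.x0, 0
        return self.x

    def step(self, action: float) -> Tuple[float, float, bool]:
        self.x = learned_dynamics(self.x, action)
        self.t += 1
        reward = learned_reward(self.x, self.goal)
        done = abs(self.x - self.goal) < self.tol or self.t >= self.horizon
        return self.x, reward, done


def imagined_return(env: ImaginedEnv, gain: float) -> float:
    """Total reward of the policy a = gain * (goal - x) over one imagined episode."""
    x, total, done = env.reset(), 0.0, False
    while not done:
        x, reward, done = env.step(gain * (env.goal - x))
        total += reward
    return total


env = ImaginedEnv(x0=0.0, goal=1.0, horizon=20)
gains = [0.0, 0.25, 0.5, 1.0]
best = max(gains, key=lambda g: imagined_return(env, g))
print(best, imagined_return(env, best))  # 1.0 0.0
```

The interface is deliberately simpler than Gymnasium's, whose `step` also returns truncation and info fields; only the idea of swapping the simulator for learned models is being illustrated.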

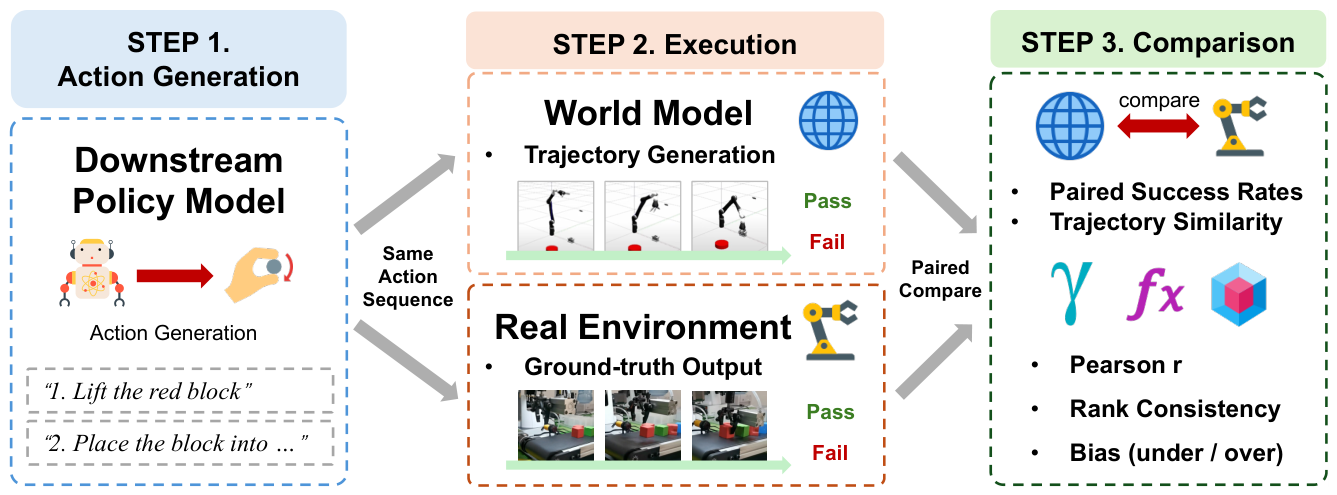

5.3. Robotic Policy Evaluator
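As a policy evaluator, the world model stands in for real-robot trials: each policy is rolled out many times in imagination and its success rate is estimated from those rollouts. The sketch assumes a hypothetical stochastic one-dimensional world model (clipped action plus Gaussian prediction noise) and a distance-to-goal success criterion; both are illustrative choices rather than any specific evaluator's protocol.

```python
import random
from typing import Callable

Policy = Callable[[float, float], float]


def stochastic_world_model(x: float, a: float, rng: random.Random, noise: float) -> float:
    """Hypothetical learned model: clipped action plus Gaussian prediction noise."""
    return x + max(-1.0, min(1.0, a)) + rng.gauss(0.0, noise)


def imagined_success_rate(policy: Policy, goal: float, episodes: int, horizon: int,
                          noise: float = 0.05, tol: float = 0.1, seed: int = 0) -> float:
    """Fraction of imagined episodes in which the policy gets within `tol` of the goal."""
    rng = random.Random(seed)
    successes = 0
    for _ in range(episodes):
        x = 0.0
        for _ in range(horizon):
            x = stochastic_world_model(x, policy(x, goal), rng, noise)
            if abs(x - goal) < tol:
                successes += 1
                break
    return successes / episodes


def greedy(x: float, goal: float) -> float:
    """Step straight toward the goal (the model clips the magnitude)."""
    return goal - x


def idle(x: float, goal: float) -> float:
    """Baseline that never moves."""
    return 0.0


print(imagined_success_rate(greedy, goal=2.0, episodes=100, horizon=10))
print(imagined_success_rate(idle, goal=2.0, episodes=100, horizon=10))
```

With `noise=0.0` the estimate becomes deterministic, which is convenient for checking the evaluation harness itself before plugging in a learned model.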

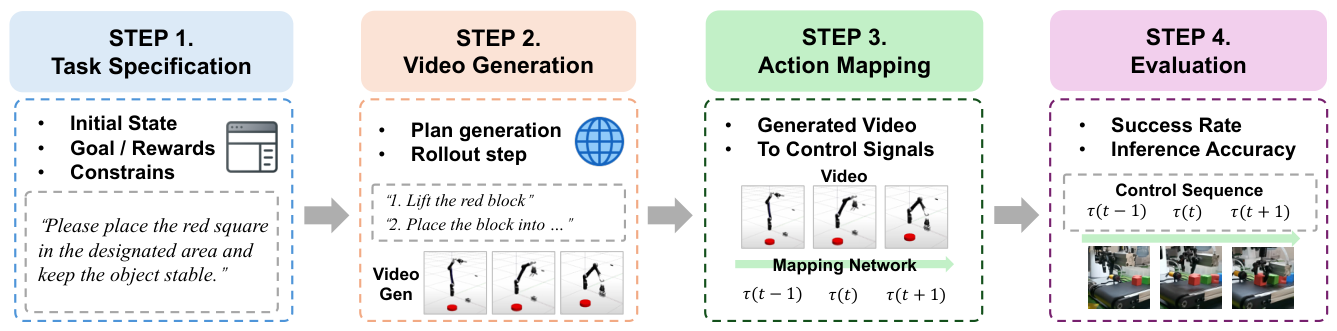

5.4. Action Planner as Embodied Agents
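As an action planner, the world model scores candidate action sequences by imagining their outcomes, and the agent executes only the first action before replanning (model predictive control). Sampling-based optimizers such as random shooting or the cross-entropy method are common choices; the sketch below instead enumerates every sequence over a tiny hypothetical action set so that its output is deterministic. `world_model` is a stand-in that, for brevity, also plays the role of the real environment.

```python
import itertools
from typing import List, Sequence, Tuple

ACTIONS = (-1.0, 0.0, 1.0)  # hypothetical discrete action set


def world_model(x: float, a: float) -> float:
    """Stand-in for a learned action-conditioned predictor."""
    return x + a


def plan(x: float, goal: float, horizon: int) -> Tuple[float, ...]:
    """Score every candidate action sequence by imagined cost and return the cheapest."""
    def cost(seq: Sequence[float]) -> float:
        total, state = 0.0, x
        for a in seq:
            state = world_model(state, a)
            total += abs(state - goal)
        return total
    return min(itertools.product(ACTIONS, repeat=horizon), key=cost)


def mpc(x: float, goal: float, horizon: int, steps: int) -> List[float]:
    """Receding horizon: execute only the first planned action, then replan."""
    trajectory = [x]
    for _ in range(steps):
        x = world_model(x, plan(x, goal, horizon)[0])  # real env would go here
        trajectory.append(x)
    return trajectory


print(plan(0.0, 3.0, horizon=3))          # (1.0, 1.0, 1.0)
print(mpc(0.0, 3.0, horizon=3, steps=4))  # [0.0, 1.0, 2.0, 3.0, 3.0]
```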

6. Benchmarks of Embodied World Models

6.1. Generated Data Quality

6.2. End-to-End Manipulation Evaluation

6.3. Evaluation Reliability Towards Policy Model
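One way to quantify how reliable a world-model evaluator is, assumed here for illustration, is to check whether the success rates it predicts rank policies in the same order as real-world success rates, for example via Spearman rank correlation. The numbers in the sketch are placeholders, not results from any benchmark.

```python
import math
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(sxx * syy)


def spearman(x: Sequence[float], y: Sequence[float]) -> float:
    """Rank correlation: does the world model order the policies as reality does?"""
    return pearson(average_ranks(x), average_ranks(y))


# Illustrative placeholder success rates for four policies, not measured results.
imagined = [0.20, 0.55, 0.70, 0.90]
real = [0.10, 0.40, 0.45, 0.80]
print(spearman(imagined, real))  # 1.0: identical policy ranking
print(round(pearson(imagined, real), 3))
```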

6.4. Data Scaling in Downstream Policy Model

7. Challenges and Future Work

7.1. Effective Data Collection

7.2. Effective Architecture Designs and Causal Training

7.3. Effective Benchmark Construction

7.4. Relation with Large Language Models

7.5. Real-World Deployment and Applications

7.6. Towards Universal and Cross-Scale Physical World Models

8. Conclusion

References

- Agarwal, Niket; Ali, Arslan; Bala, Maciej; Balaji, Yogesh; Barker, Erik; Cai, Tiffany; Chattopadhyay, Prithvijit; Chen, Yongxin; Cui, Yin; Ding, Yifan; et al. Cosmos world foundation model platform for physical ai. arXiv 2025, arXiv:2501.03575. [Google Scholar] [CrossRef]

- Assran, Mahmoud; Duval, Quentin; Misra, Ishan; Bojanowski, Piotr; Vincent, Pascal; Rabbat, Michael; LeCun, Yann; Ballas, Nicolas. Self-supervised learning from images with a joint-embedding predictive architecture. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023; pp. 15619–15629. [Google Scholar]

- Assran, Mido; Bardes, Adrien; Fan, David; Garrido, Quentin; Howes, Russell; Muckley, Matthew; Rizvi, Ammar; Roberts, Claire; Sinha, Koustuv; Zholus, Artem; et al. V-jepa 2: Self-supervised video models enable understanding, prediction and planning. arXiv 2025, arXiv:2506.09985. [Google Scholar]

- Ball, Philip J.; Bauer, Jakob; Belletti, Frank; Brownfield, Bethanie; Ephrat, Ariel; Fruchter, Shlomi; Gupta, Agrim; Holsheimer, Kristian; Holynski, Aleksander; Hron, Jiri; Kaplanis, Christos; Limont, Marjorie; McGill, Matt; Oliveira, Yanko; Parker-Holder, Jack; Perbet, Frank; Scully, Guy; Shar, Jeremy; Spencer, Stephen; Tov, Omer; Villegas, Ruben; Wang, Emma; Yung, Jessica; Baetu, Cip; Berbel, Jordi; Bridson, David; Bruce, Jake; Buttimore, Gavin; Chakera, Sarah; Chandra, Bilva; Collins, Paul; Cullum, Alex; Damoc, Bogdan; Dasagi, Vibha; Gazeau, Maxime; Gbadamosi, Charles; Han, Woohyun; Hirst, Ed; Kachra, Ashyana; Kerley, Lucie; Kjems, Kristian; Knoepfel, Eva; Koriakin, Vika; Lo, Jessica; Lu, Cong; Mehring, Zeb; Moufarek, Alex; Nandwani, Henna; Oliveira, Valeria; Pardo, Fabio; Park, Jane; Pierson, Andrew; Poole, Ben; Ran, Helen; Salimans, Tim; Sanchez, Manuel; Saprykin, Igor; Shen, Amy; Sidhwani, Sailesh; Smith, Duncan; Stanton, Joe; Tomlinson, Hamish; Vijaykumar, Dimple; Wang, Luyu; Wingfield, Piers; Wong, Nat; Xu, Keyang; Yew, Christopher; Young, Nick; Zubov, Vadim; Eck, Douglas; Erhan, Dumitru; Kavukcuoglu, Koray; Hassabis, Demis; Ghahramani, Zoubin; Hadsell, Raia; van den Oord, Aäron; Mosseri, Inbar; Bolton, Adrian; Singh, Satinder; Rocktäschel, Tim. Genie 3: A new frontier for world models. 2025. [Google Scholar]

- Bansal, Hritik; Lin, Zongyu; Xie, Tianyi; Zong, Zeshun; Yarom, Michal; Bitton, Yonatan; Jiang, Chenfanfu; Sun, Yizhou; Chang, Kai-Wei; Grover, Aditya. Videophy: Evaluating physical commonsense for video generation. arXiv 2024, arXiv:2406.03520. [Google Scholar] [CrossRef]

- Bansal, Hritik; Peng, Clark; Bitton, Yonatan; Goldenberg, Roman; Grover, Aditya; Chang, Kai-Wei. Videophy-2: A challenging action-centric physical commonsense evaluation in video generation. arXiv 2025, arXiv:2503.06800. [Google Scholar]

- Bar, Amir; Zhou, Gaoyue; Tran, Danny; Darrell, Trevor; LeCun, Yann. Navigation world models. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 15791–15801.

- Bardes, Adrien; Garrido, Quentin; Ponce, Jean; Chen, Xinlei; Rabbat, Michael; LeCun, Yann; Assran, Mahmoud; Ballas, Nicolas. Revisiting feature prediction for learning visual representations from video. arXiv 2024, arXiv:2404.08471. [Google Scholar] [CrossRef]

- Blattmann, Andreas; Dockhorn, Tim; Kulal, Sumith; Mendelevitch, Daniel; Kilian, Maciej; Lorenz, Dominik; Levi, Yam; English, Zion; Voleti, Vikram; Letts, Adam; et al. Stable video diffusion: Scaling latent video diffusion models to large datasets. arXiv 2023, arXiv:2311.15127. [Google Scholar] [CrossRef]

- Brooks, Rodney A. New approaches to robotics. Science 1991, 253(5025), 1227–1232. [Google Scholar] [CrossRef]

- Bruce, Jake; Dennis, Michael D; Edwards, Ashley; Parker-Holder, Jack; Shi, Yuge; Hughes, Edward; Lai, Matthew; Mavalankar, Aditi; Steigerwald, Richie; Apps, Chris; et al. Genie: Generative interactive environments. Forty-first International Conference on Machine Learning, 2024. [Google Scholar]

- Cen, Jun; Yu, Chaohui; Yuan, Hangjie; Jiang, Yuming; Huang, Siteng; Guo, Jiayan; Li, Xin; Song, Yibing; Luo, Hao; Wang, Fan; et al. Worldvla: Towards autoregressive action world model. arXiv 2025, arXiv:2506.21539. [Google Scholar] [CrossRef]

- Chang, Le; Tsao, Doris Y. The code for facial identity in the primate brain. Cell 2017, 169(6), 1013–1028. [Google Scholar] [CrossRef]

- Chang, Peng; Padır, Taşkın. Sim2real2sim: Bridging the gap between simulation and real-world in flexible object manipulation. In 2020 Fourth IEEE International Conference on Robotic Computing (IRC); IEEE, 2020; pp. 56–62. [Google Scholar]

- Chen, Anthony; Zheng, Wenzhao; Wang, Yida; Zhang, Xueyang; Zhan, Kun; Jia, Peng; Keutzer, Kurt; Zhang, Shanghang. Geodrive: 3d geometry-informed driving world model with precise action control. arXiv 2025, arXiv:2505.22421. [Google Scholar]

- Chen, Junyi; Zhu, Haoyi; He, Xianglong; Wang, Yifan; Zhou, Jianjun; Chang, Wenzheng; Zhou, Yang; Li, Zizun; Fu, Zhoujie; Pang, Jiangmiao; et al. Deepverse: 4d autoregressive video generation as a world model. arXiv 2025, arXiv:2506.01103. [Google Scholar] [CrossRef]

- Chen, Zixuan; Huo, Jing; Chen, Yangtao; Gao, Yang. Robohorizon: An llm-assisted multi-view world model for long-horizon robotic manipulation. arXiv 2025, arXiv:2501.06605. [Google Scholar]

- DeepMind. Genie 2: A large-scale foundation world model. DeepMind Discover blog, December 2024.

- Deng, Haoge; Pan, Ting; Diao, Haiwen; Luo, Zhengxiong; Cui, Yufeng; Lu, Huchuan; Shan, Shiguang; Qi, Yonggang; Wang, Xinlong. Autoregressive video generation without vector quantization. arXiv 2024, arXiv:2412.14169. [Google Scholar] [CrossRef]

- Ding, Jingtao; Zhang, Yunke; Shang, Yu; Zhang, Yuheng; Zong, Zefang; Feng, Jie; Yuan, Yuan; Su, Hongyuan; Li, Nian; Sukiennik, Nicholas; et al. Understanding world or predicting future? a comprehensive survey of world models. ACM Computing Surveys, 2024. [Google Scholar]

- Duan, Haoyi; Yu, Hong-Xing; Chen, Sirui; Fei-Fei, Li; Wu, Jiajun. Worldscore: A unified evaluation benchmark for world generation. arXiv 2025, arXiv:2504.00983. [Google Scholar] [CrossRef]

- Evans, Jonathan St BT. Hypothetical thinking: Dual processes in reasoning and judgement; Psychology Press, 2007. [Google Scholar]

- Feng, Tuo; Wang, Wenguan; Yang, Yi. A survey of world models for autonomous driving. arXiv 2025, arXiv:2501.11260. [Google Scholar] [CrossRef]

- Feng, Yao; Tan, Hengkai; Mao, Xinyi; Liu, Guodong; Huang, Shuhe; Xiang, Chendong; Su, Hang; Zhu, Jun. Vidar: Embodied video diffusion model for generalist bimanual manipulation. arXiv 2025, arXiv:2507.12898. [Google Scholar]

- Feng, Yunhai; Hansen, Nicklas; Xiong, Ziyan; Rajagopalan, Chandramouli; Wang, Xiaolong. Finetuning offline world models in the real world. arXiv 2023, arXiv:2310.16029. [Google Scholar] [CrossRef]

- Forrester, Jay W. Counterintuitive behavior of social systems. Theory and decision 1971, 2(2), 109–140. [Google Scholar] [CrossRef]

- Fox, Maria; Long, Derek. PDDL2.1: An extension to PDDL for expressing temporal planning domains. Journal of artificial intelligence research 2003, 20, 61–124. [Google Scholar] [CrossRef]

- Franklin, Gene F; Powell, J David; Emami-Naeini, Abbas. Feedback control of dynamic systems, 4th ed.; Prentice Hall: Upper Saddle River, 2002. [Google Scholar]

- Fu, Ao; Zhou, Yi; Zhou, Tao; Yang, Yi; Gao, Bojun; Li, Qun; Wu, Guobin; Shao, Ling. Exploring the interplay between video generation and world models in autonomous driving: A survey. arXiv 2024, arXiv:2411.02914. [Google Scholar] [CrossRef]

- Gao, Chen; Lan, Xiaochong; Li, Nian; Yuan, Yuan; Ding, Jingtao; Zhou, Zhilun; Xu, Fengli; Li, Yong. Large language models empowered agent-based modeling and simulation: A survey and perspectives. Humanities and Social Sciences Communications 2024, 11(1), 1–24. [Google Scholar] [CrossRef]

- Gao, Shenyuan; Yang, Jiazhi; Chen, Li; Chitta, Kashyap; Qiu, Yihang; Geiger, Andreas; Zhang, Jun; Li, Hongyang. Vista: A generalizable driving world model with high fidelity and versatile controllability. Advances in Neural Information Processing Systems 2024, 37, 91560–91596. [Google Scholar]

- Ge, Zhiqi; Huang, Hongzhe; Zhou, Mingze; Li, Juncheng; Wang, Guoming; Tang, Siliang; Zhuang, Yueting. Worldgpt: Empowering llm as multimodal world model. In Proceedings of the 32nd ACM International Conference on Multimedia, 2024; pp. 7346–7355. [Google Scholar]

- Guan, Yanchen; Liao, Haicheng; Li, Zhenning; Hu, Jia; Yuan, Runze; Zhang, Guohui; Xu, Chengzhong. World models for autonomous driving: An initial survey. IEEE Transactions on Intelligent Vehicles, 2024. [Google Scholar]

- Guo, Jun; Ma, Xiaojian; Wang, Yikai; Yang, Min; Liu, Huaping; Li, Qing. Flowdreamer: A rgb-d world model with flow-based motion representations for robot manipulation. arXiv 2025, arXiv:2505.10075. [Google Scholar] [CrossRef]

- Guo, Junliang; Ye, Yang; He, Tianyu; Wu, Haoyu; Jiang, Yushu; Pearce, Tim; Bian, Jiang. Mineworld: a real-time and open-source interactive world model on minecraft. arXiv 2025, arXiv:2504.08388. [Google Scholar]

- Ha, David; Schmidhuber, Jürgen. Recurrent world models facilitate policy evolution. Advances in neural information processing systems 2018, 31. [Google Scholar]

- Ha, David; Schmidhuber, Jürgen. World models. arXiv 2018, arXiv:1803.10122. [Google Scholar]

- Hafner, Danijar; Lillicrap, Timothy; Ba, Jimmy; Norouzi, Mohammad. Dream to control: Learning behaviors by latent imagination. arXiv 2019, arXiv:1912.01603. [Google Scholar]

- Hafner, Danijar; Lillicrap, Timothy; Fischer, Ian; Villegas, Ruben; Ha, David; Lee, Honglak; Davidson, James. Learning latent dynamics for planning from pixels. International conference on machine learning, 2019; PMLR; pp. 2555–2565. [Google Scholar]

- Hafner, Danijar; Lillicrap, Timothy; Norouzi, Mohammad; Ba, Jimmy. Mastering atari with discrete world models. arXiv 2020, arXiv:2010.02193. [Google Scholar]

- Hafner, Danijar; Pasukonis, Jurgis; Ba, Jimmy; Lillicrap, Timothy. Mastering diverse domains through world models. arXiv 2023, arXiv:2301.04104. [Google Scholar]

- Hafner, Danijar; Pasukonis, Jurgis; Ba, Jimmy; Lillicrap, Timothy. Mastering diverse control tasks through world models. Nature 2025, 1–7. [Google Scholar] [CrossRef] [PubMed]

- Hansen, Nicklas; Su, Hao; Wang, Xiaolong. Td-mpc2: Scalable, robust world models for continuous control. arXiv 2023, arXiv:2310.16828. [Google Scholar]

- Hansen, Nicklas; Wang, Xiaolong; Su, Hao. Temporal difference learning for model predictive control. arXiv 2022, arXiv:2203.04955. [Google Scholar] [CrossRef]

- Hu, Yingdong; Lin, Fanqi; Sheng, Pingyue; Wen, Chuan; You, Jiacheng; Gao, Yang. Data scaling laws in imitation learning for robotic manipulation. 1st Workshop on X-Embodiment Robot Learning, 2024. [Google Scholar]

- Hu, Yucheng; Guo, Yanjiang; Wang, Pengchao; Chen, Xiaoyu; Wang, Yen-Jen; Zhang, Jianke; Sreenath, Koushil; Lu, Chaochao; Chen, Jianyu. Video prediction policy: A generalist robot policy with predictive visual representations. arXiv 2024, arXiv:2412.14803. [Google Scholar] [CrossRef]

- Hu, Yuqi; Wang, Longguang; Liu, Xian; Chen, Ling-Hao; Guo, Yuwei; Shi, Yukai; Liu, Ce; Rao, Anyi; Wang, Zeyu; Xiong, Hui. Simulating the real world: A unified survey of multimodal generative models. arXiv 2025, arXiv:2503.04641. [Google Scholar] [CrossRef]

- Huang, Siqiao; Wu, Jialong; Zhou, Qixing; Miao, Shangchen; Long, Mingsheng. Vid2world: Crafting video diffusion models to interactive world models. arXiv 2025, arXiv:2505.14357. [Google Scholar] [CrossRef]

- Huang, Siyuan; Chen, Liliang; Zhou, Pengfei; Chen, Shengcong; Jiang, Zhengkai; Hu, Yue; Liao, Yue; Gao, Peng; Li, Hongsheng; Yao, Maoqing; et al. Enerverse: Envisioning embodied future space for robotics manipulation. arXiv 2025, arXiv:2501.01895. [Google Scholar] [CrossRef]

- Huang, Wenlong; Xia, Fei; Xiao, Ted; Chan, Harris; Liang, Jacky; Florence, Pete; Zeng, Andy; Tompson, Jonathan; Mordatch, Igor; Chebotar, Yevgen; et al. Inner monologue: Embodied reasoning through planning with language models. Conference on Robot Learning, 2023; PMLR; pp. 1769–1782. [Google Scholar]

- Huang, Ziqi; He, Yinan; Yu, Jiashuo; Zhang, Fan; Si, Chenyang; Jiang, Yuming; Zhang, Yuanhan; Wu, Tianxing; Jin, Qingyang; Chanpaisit, Nattapol; et al. Vbench: Comprehensive benchmark suite for video generative models. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 21807–21818. [Google Scholar]

- Jang, Joel; Ye, Seonghyeon; Lin, Zongyu; Xiang, Jiannan; Bjorck, Johan; Fang, Yu; Hu, Fengyuan; Huang, Spencer; Kundalia, Kaushil; Lin, Yen-Chen; et al. Dreamgen: Unlocking generalization in robot learning through neural trajectories. arXiv e-prints 2025, arXiv–2505. [Google Scholar]

- Jiang, Yuxin; Chen, Shengcong; Huang, Siyuan; Chen, Liliang; Zhou, Pengfei; Liao, Yue; He, Xindong; Liu, Chiming; Li, Hongsheng; Yao, Maoqing; et al. Enerverse-ac: Envisioning embodied environments with action condition. arXiv 2025, arXiv:2505.09723. [Google Scholar]

- Jiang, Yuxin; Chen, Shengcong; Huang, Siyuan; Chen, Liliang; Zhou, Pengfei; Liao, Yue; He, Xindong; Liu, Chiming; Li, Hongsheng; Yao, Maoqing; et al. Enerverse-ac: Envisioning embodied environments with action condition. arXiv 2025, arXiv:2505.09723. [Google Scholar]

- Jiang, Zeren; Zheng, Chuanxia; Laina, Iro; Larlus, Diane; Vedaldi, Andrea. Geo4d: Leveraging video generators for geometric 4d scene reconstruction. arXiv 2025, arXiv:2504.07961. [Google Scholar] [CrossRef]

- Johnson-Laird, Philip N. Mental models in cognitive science. Cognitive science 1980, 4(1), 71–115. [Google Scholar] [CrossRef]

- Khazatsky, Alexander; Pertsch, Karl; Nair, Suraj; Balakrishna, Ashwin; Dasari, Sudeep; Karamcheti, Siddharth; Nasiriany, Soroush; Srirama, Mohan Kumar; Chen, Lawrence Yunliang; Ellis, Kirsty; et al. Droid: A large-scale in-the-wild robot manipulation dataset. arXiv 2024, arXiv:2403.12945. [Google Scholar]

- Lan, Zhengxing; Liu, Lingshan; Fan, Bo; Lv, Yisheng; Ren, Yilong; Cui, Zhiyong. Traj-llm: A new exploration for empowering trajectory prediction with pre-trained large language models. IEEE Transactions on Intelligent Vehicles, 2024. [Google Scholar]

- LeCun, Yann. A path towards autonomous machine intelligence, version 0.9.2, 2022-06-27. OpenReview 2022, 62(1), 1–62. [Google Scholar]

- Li, Dacheng; Fang, Yunhao; Chen, Yukang; Yang, Shuo; Cao, Shiyi; Wong, Justin; Luo, Michael; Wang, Xiaolong; Yin, Hongxu; Gonzalez, Joseph E; et al. Worldmodelbench: Judging video generation models as world models. arXiv 2025, arXiv:2502.20694. [Google Scholar]

- Li, Shuang; Gao, Yihuai; Sadigh, Dorsa; Song, Shuran. Unified video action model. arXiv 2025, arXiv:2503.00200. [Google Scholar] [CrossRef]

- Li, Yaxuan; Zhu, Yichen; Wen, Junjie; Shen, Chaomin; Xu, Yi. Worldeval: World model as real-world robot policies evaluator. arXiv 2025, arXiv:2505.19017. [Google Scholar]

- Li, Ying; Wei, Xiaobao; Chi, Xiaowei; Li, Yuming; Zhao, Zhongyu; Wang, Hao; Ma, Ningning; Lu, Ming; Zhang, Shanghang. Manipdreamer: Boosting robotic manipulation world model with action tree and visual guidance. arXiv 2025, arXiv:2504.16464. [Google Scholar] [CrossRef]

- Liang, Wenlong; Zhou, Rui; Ma, Yang; Zhang, Bing; Li, Songlin; Liao, Yijia; Kuang, Ping. Large model empowered embodied ai: A survey on decision-making and embodied learning. arXiv 2025, arXiv:2508.10399. [Google Scholar] [CrossRef]

- Liao, Yue; Zhou, Pengfei; Huang, Siyuan; Yang, Donglin; Chen, Shengcong; Jiang, Yuxin; Hu, Yue; Cai, Jingbin; Liu, Si; Luo, Jianlan; et al. Genie envisioner: A unified world foundation platform for robotic manipulation. arXiv 2025, arXiv:2508.05635. [Google Scholar] [CrossRef]

- Lin, Bin; Li, Zongjian; Cheng, Xinhua; Niu, Yuwei; Ye, Yang; He, Xianyi; Yuan, Shenghai; Yu, Wangbo; Wang, Shaodong; Ge, Yunyang; et al. Uniworld: High-resolution semantic encoders for unified visual understanding and generation. arXiv 2025, arXiv:2506.03147. [Google Scholar]

- Liu, Liu; Wang, Xiaofeng; Zhao, Guosheng; Li, Keyu; Qin, Wenkang; Qiu, Jiaxiong; Zhu, Zheng; Huang, Guan; Su, Zhizhong. Robotransfer: Geometry-consistent video diffusion for robotic visual policy transfer. arXiv 2025, arXiv:2505.23171. [Google Scholar]

- Liu, Xinhao; Li, Jintong; Jiang, Yicheng; Sujay, Niranjan; Yang, Zhicheng; Zhang, Juexiao; Abanes, John; Zhang, Jing; Feng, Chen. Citywalker: Learning embodied urban navigation from web-scale videos. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 6875–6885. [Google Scholar]

- Liu, Yang; Chen, Weixing; Bai, Yongjie; Liang, Xiaodan; Li, Guanbin; Gao, Wen; Lin, Liang. Aligning cyber space with physical world: A comprehensive survey on embodied ai. IEEE/ASME Transactions on Mechatronics, 2025. [Google Scholar]

- Liu, Yixin; Zhang, Kai; Li, Yuan; Yan, Zhiling; Gao, Chujie; Chen, Ruoxi; Yuan, Zhengqing; Huang, Yue; Sun, Hanchi; Gao, Jianfeng; et al. Sora: A review on background, technology, limitations, and opportunities of large vision models. arXiv 2024, arXiv:2402.17177. [Google Scholar] [CrossRef]

- Liu, Zeyi; Li, Shuang; Cousineau, Eric; Feng, Siyuan; Burchfiel, Benjamin; Song, Shuran. Geometry-aware 4d video generation for robot manipulation. arXiv 2025, arXiv:2507.01099. [Google Scholar] [CrossRef]

- Long, Xiaoxiao; Zhao, Qingrui; Zhang, Kaiwen; Zhang, Zihao; Wang, Dingrui; Liu, Yumeng; Shu, Zhengjie; Lu, Yi; Wang, Shouzheng; Wei, Xinzhe; et al. A survey: Learning embodied intelligence from physical simulators and world models. arXiv 2025, arXiv:2507.00917. [Google Scholar] [CrossRef]

- Lu, Guanxing; Jia, Baoxiong; Li, Puhao; Chen, Yixin; Wang, Ziwei; Tang, Yansong; Huang, Siyuan. Gwm: Towards scalable gaussian world models for robotic manipulation. arXiv 2025, arXiv:2508.17600. [Google Scholar] [CrossRef]

- Maus, Gerrit W; Fischer, Jason; Whitney, David. Motion-dependent representation of space in area mt+. Neuron 2013, 78(3), 554–562. [Google Scholar] [CrossRef]

- Mazzaglia, Pietro; Verbelen, Tim; Dhoedt, Bart; Courville, Aaron; Rajeswar, Sai. Genrl: Multimodal-foundation world models for generalization in embodied agents. Advances in neural information processing systems 2024, 37, 27529–27555. [Google Scholar]

- Melnychuk, Valentyn; Frauen, Dennis; Feuerriegel, Stefan. Causal transformer for estimating counterfactual outcomes. International conference on machine learning, 2022; PMLR; pp. 15293–15329. [Google Scholar]

- Meng, Fanqing; Liao, Jiaqi; Tan, Xinyu; Shao, Wenqi; Lu, Quanfeng; Zhang, Kaipeng; Cheng, Yu; Li, Dianqi; Qiao, Yu; Luo, Ping. Towards world simulator: Crafting physical commonsense-based benchmark for video generation. arXiv 2024, arXiv:2410.05363. [Google Scholar] [CrossRef]

- Min, Chen; Zhao, Dawei; Xiao, Liang; Zhao, Jian; Xu, Xinli; Zhu, Zheng; Jin, Lei; Li, Jianshu; Guo, Yulan; Xing, Junliang; et al. Driveworld: 4d pre-trained scene understanding via world models for autonomous driving. In Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 2024; pp. 15522–15533. [Google Scholar]

- Ni, Chaojun; Li, Jie; Li, Haoyun; Liu, Hengyu; Wang, Xiaofeng; Zhu, Zheng; Zhao, Guosheng; Wang, Boyuan; Li, Chenxin; Huang, Guan; et al. Wonderfree: Enhancing novel view quality and cross-view consistency for 3d scene exploration. arXiv 2025, arXiv:2506.20590. [Google Scholar]

- Ni, Chaojun; Zhao, Guosheng; Wang, Xiaofeng; Zhu, Zheng; Qin, Wenkang; Huang, Guan; Liu, Chen; Chen, Yuyin; Wang, Yida; Zhang, Xueyang; et al. Recondreamer: Crafting world models for driving scene reconstruction via online restoration. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 1559–1569. [Google Scholar]

- OpenAI. Sora: Creating video from text. 2024. Available online: https://openai.com/sora (accessed on 06/05/2025).

- O’Neill, Abby; Rehman, Abdul; Maddukuri, Abhiram; Gupta, Abhishek; Padalkar, Abhishek; Lee, Abraham; Pooley, Acorn; Gupta, Agrim; Mandlekar, Ajay; Jain, Ajinkya; et al. Open x-embodiment: Robotic learning datasets and rt-x models. In 2024 IEEE International Conference on Robotics and Automation (ICRA); IEEE, 2024; pp. 6892–6903. [Google Scholar]

- Qi, Han; Yin, Haocheng; Zhu, Aris; Du, Yilun; Yang, Heng. Strengthening generative robot policies through predictive world modeling. arXiv 2025, arXiv:2502.00622. [Google Scholar] [CrossRef]

- Qin, Yiran; Shi, Zhelun; Yu, Jiwen; Wang, Xijun; Zhou, Enshen; Li, Lijun; Yin, Zhenfei; Liu, Xihui; Sheng, Lu; Shao, Jing; et al. Worldsimbench: Towards video generation models as world simulators. arXiv 2024, arXiv:2410.18072. [Google Scholar]

- Quevedo, Julian; Liang, Percy; Yang, Sherry. Evaluating robot policies in a world model. arXiv 2025, arXiv:2506.00613. [Google Scholar] [CrossRef]

- Quiroga, R Quian; Reddy, Leila; Kreiman, Gabriel; Koch, Christof; Fried, Itzhak. Invariant visual representation by single neurons in the human brain. Nature 2005, 435(7045), 1102–1107. [Google Scholar] [CrossRef]

- Salvato, Erica; Fenu, Gianfranco; Medvet, Eric; Pellegrino, Felice Andrea. Crossing the reality gap: A survey on sim-to-real transferability of robot controllers in reinforcement learning. IEEE Access 2021, 9, 153171–153187. [Google Scholar] [CrossRef]

- Schmidhuber, Jürgen. Making the world differentiable: on using self supervised fully recurrent neural networks for dynamic reinforcement learning and planning in non-stationary environments; Inst. für Informatik; 1990; volume 126. [Google Scholar]

- Schmidhuber, Jürgen. An on-line algorithm for dynamic reinforcement learning and planning in reactive environments. In 1990 IJCNN international joint conference on neural networks; IEEE, 1990; pp. 253–258. [Google Scholar]

- Schmidhuber, Jürgen. Reinforcement learning in markovian and non-markovian environments. Advances in neural information processing systems 1990, 3. [Google Scholar]

- Shang, Yu; Jin, Lei; Ma, Yiding; Zhang, Xin; Gao, Chen; Wu, Wei; Li, Yong. Roboscape-long: An efficient world model for long-horizon embodied video generation. 2025. Available online: https://github.com/tsinghua-fib-lab/Roboscape-long

- Shang, Yu; Yu, Yangcheng; Zhang, Xin; Wu, Wei; Li, Yong. Moewm: Learning composable world models for embodied action planning. 2025. Available online: https://github.com/tsinghua-fib-lab/MoE-WM

- Shang, Yu; Zhang, Xin; Tang, Yinzhou; Jin, Lei; Gao, Chen; Wu, Wei; Li, Yong. Roboscape: Physics-informed embodied world model. arXiv 2025, arXiv:2506.23135. [Google Scholar]

- Sun, Kaiyue; Huang, Kaiyi; Liu, Xian; Wu, Yue; Xu, Zihan; Li, Zhenguo; Liu, Xihui. T2v-compbench: A comprehensive benchmark for compositional text-to-video generation. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 8406–8416. [Google Scholar]

- Sutton, Richard S. Dyna, an integrated architecture for learning, planning, and reacting. ACM Sigart Bulletin 1991, 2(4), 160–163. [Google Scholar] [CrossRef]

- Tang, Yinzhou; Shang, Yu; Chen, Yinuo; Wei, Bingwen; Yu, Chao; Wu, Wei; Li, Yong. Roboscape-r: Reinforcement learning with embodied world models for policy learning. 2025. Available online: https://github.com/tsinghua-fib-lab/RoboScape-R

- Zhu, Haoyi; Wang, Yifan; Zhou, Jianjun; Chang, Wenzheng; Zhou, Yang; Li, Zizun; Chen, Junyi; Shen, Chunhua; Pang, Jiangmiao; et al.; Aether Team. Aether: Geometric-aware unified world modeling. arXiv 2025, arXiv:2503.18945. [Google Scholar] [CrossRef]

- Wang, Zhenwei; Liu, Yuhao; Wu, Junta; Gu, Zixiao; Wang, Haoyuan; Zuo, Xuhui; Huang, Tianyu; Li, Wenhuan; Zhang, Sheng; et al.; HunyuanWorld Team. HunyuanWorld 1.0: Generating immersive, explorable, and interactive 3d worlds from words or pixels. arXiv 2025, arXiv:2507.21809. [Google Scholar] [CrossRef]

- Yan Team. Yan: Foundational interactive video generation. arXiv 2025, arXiv:2508.08601. [Google Scholar] [CrossRef]

- Teng, Hansi; Jia, Hongyu; Sun, Lei; Li, Lingzhi; Li, Maolin; Tang, Mingqiu; Han, Shuai; Zhang, Tianning; Zhang, W.Q.; Luo, Weifeng; et al. Magi-1: Autoregressive video generation at scale. arXiv 2025, arXiv:2505.13211. [Google Scholar] [CrossRef]

- Tu, Sifan; Zhou, Xin; Liang, Dingkang; Jiang, Xingyu; Zhang, Yumeng; Li, Xiaofan; Bai, Xiang. The role of world models in shaping autonomous driving: A comprehensive survey. arXiv 2025, arXiv:2502.10498. [Google Scholar] [CrossRef]

- Turing, Alan M. Computing machinery and intelligence. In Parsing the Turing test: Philosophical and methodological issues in the quest for the thinking computer; Springer, 2007; pp. 23–65. [Google Scholar]

- Walke, Homer Rich; Black, Kevin; Zhao, Tony Z; Vuong, Quan; Zheng, Chongyi; Hansen-Estruch, Philippe; He, Andre Wang; Myers, Vivek; Kim, Moo Jin; Du, Max; et al. Bridgedata v2: A dataset for robot learning at scale. Conference on Robot Learning, 2023; PMLR; pp. 1723–1736. [Google Scholar]

- Wang, Ang; Ai, Baole; Wen, Bin; Mao, Chaojie; Xie, Chen-Wei; Chen, Di; Yu, Feiwu; Zhao, Haiming; Yang, Jianxiao; Zeng, Jianyuan; Wang, Jiayu; Zhang, Jingfeng; Zhou, Jingren; Wang, Jinkai; Chen, Jixuan; Zhu, Kai; Zhao, Kang; Yan, Keyu; Huang, Lianghua; Feng, Mengyang; Zhang, Ningyi; Li, Pandeng; Wu, Pingyu; Chu, Ruihang; Feng, Ruili; Zhang, Shiwei; Sun, Siyang; Fang, Tao; Wang, Tianxing; Gui, Tianyi; Weng, Tingyu; Shen, Tong; Lin, Wei; Wang, Wei; Wang, Wei; Zhou, Wenmeng; Wang, Wente; Shen, Wenting; Yu, Wenyuan; Shi, Xianzhong; Huang, Xiaoming; Xu, Xin; Kou, Yan; Lv, Yangyu; Li, Yifei; Liu, Yijing; Wang, Yiming; Zhang, Yingya; Huang, Yitong; Li, Yong; Wu, You; Liu, Yu; Pan, Yulin; Zheng, Yun; Hong, Yuntao; Shi, Yupeng; Feng, Yutong; Jiang, Zeyinzi; Han, Zhen; Wu, Zhi-Fan; Liu, Ziyu; Team Wan. Wan: Open and advanced large-scale video generative models. arXiv 2025, arXiv:2503.20314. [Google Scholar] [CrossRef]

- Wang, Jiayu; Ming, Yifei; Shi, Zhenmei; Vineet, Vibhav; Wang, Xin; Li, Sharon; Joshi, Neel. Is a picture worth a thousand words? delving into spatial reasoning for vision language models. Advances in Neural Information Processing Systems 2024, 37, 75392–75421. [Google Scholar]

- Wang, Lirui; Ling, Yiyang; Yuan, Zhecheng; Shridhar, Mohit; Bao, Chen; Qin, Yuzhe; Wang, Bailin; Xu, Huazhe; Wang, Xiaolong. Gensim: Generating robotic simulation tasks via large language models. arXiv 2023, arXiv:2310.01361. [Google Scholar]

- Wang, Lirui; Zhao, Kevin; Liu, Chaoqi; Chen, Xinlei. Learning real-world action-video dynamics with heterogeneous masked autoregression. arXiv 2025, arXiv:2502.04296. [Google Scholar]

- Wang, Xiaofeng; Zhu, Zheng; Huang, Guan; Chen, Xinze; Zhu, Jiagang; Lu, Jiwen. Drivedreamer: Towards real-world-drive world models for autonomous driving. European conference on computer vision, 2024; Springer; pp. 55–72. [Google Scholar]

- Wang, Xingrui; Ma, Wufei; Zhang, Tiezheng; de Melo, Celso M; Chen, Jieneng; Yuille, Alan. Spatial457: A diagnostic benchmark for 6d spatial reasoning of large multimodal models. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 24669–24679. [Google Scholar]

- Wang, Yaoting; Wu, Shengqiong; Zhang, Yuecheng; Yan, Shuicheng; Liu, Ziwei; Luo, Jiebo; Fei, Hao. Multimodal chain-of-thought reasoning: A comprehensive survey. arXiv 2025, arXiv:2503.12605. [Google Scholar]

- Wang, Yuqi; He, Jiawei; Fan, Lue; Li, Hongxin; Chen, Yuntao; Zhang, Zhaoxiang. Driving into the future: Multiview visual forecasting and planning with world model for autonomous driving. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024; pp. 14749–14759. [Google Scholar]

- Wen, Youpeng; Lin, Junfan; Zhu, Yi; Han, Jianhua; Xu, Hang; Zhao, Shen; Liang, Xiaodan. Vidman: Exploiting implicit dynamics from video diffusion model for effective robot manipulation. Advances in Neural Information Processing Systems 2024, 37, 41051–41075. [Google Scholar]

- WorldLabs. Generating worlds. https://www.worldlabs.ai/blog, 2024.

- Wu, Haoyu; Wu, Diankun; He, Tianyu; Guo, Junliang; Ye, Yang; Duan, Yueqi; Bian, Jiang. Geometry forcing: Marrying video diffusion and 3D representation for consistent world modeling. arXiv 2025, arXiv:2507.07982.

- Wu, Jialong; Yin, Shaofeng; Feng, Ningya; He, Xu; Li, Dong; Hao, Jianye; Long, Mingsheng. iVideoGPT: Interactive VideoGPTs are scalable world models. Advances in Neural Information Processing Systems 2024, 37, 68082–68119.

- Wu, Jialong; Yin, Shaofeng; Feng, Ningya; Long, Mingsheng. RLVR-World: Training world models with reinforcement learning. arXiv 2025, arXiv:2505.13934.

- Wu, Philipp; Escontrela, Alejandro; Hafner, Danijar; Abbeel, Pieter; Goldberg, Ken. DayDreamer: World models for physical robot learning. Conference on Robot Learning, 2023; PMLR; pp. 2226–2240.

- Wu, Tong; Yang, Shuai; Po, Ryan; Xu, Yinghao; Liu, Ziwei; Lin, Dahua; Wetzstein, Gordon. Video world models with long-term spatial memory. arXiv 2025, arXiv:2506.05284.

- Xiang, Jiannan; Liu, Guangyi; Gu, Yi; Gao, Qiyue; Ning, Yuting; Zha, Yuheng; Feng, Zeyu; Tao, Tianhua; Hao, Shibo; Shi, Yemin; et al. Pandora: Towards general world model with natural language actions and video states. arXiv 2024, arXiv:2406.09455.

- Xie, Ningwei; Tian, Zizi; Yang, Lei; Zhang, Xiao-Ping; Guo, Meng; Li, Jie. From 2D to 3D cognition: A brief survey of general world models. arXiv 2025, arXiv:2506.20134.

- Xing, Eric; Deng, Mingkai; Hou, Jinyu; Hu, Zhiting. Critiques of world models. arXiv 2025, arXiv:2507.05169.

- Xu, Zhiyuan; Wu, Kun; Wen, Junjie; Li, Jinming; Liu, Ning; Che, Zhengping; Tang, Jian. A survey on robotics with foundation models: Toward embodied AI. arXiv 2024, arXiv:2402.02385.

- Yang, Xiuyu; Li, Bohan; Xu, Shaocong; Wang, Nan; Ye, Chongjie; Chen, Zhaoxi; Qin, Minghan; Ding, Yikang; Jin, Xin; Zhao, Hang; et al. ORV: 4D occupancy-centric robot video generation. arXiv 2025, arXiv:2506.03079.

- Yang, Zhuoyi; Teng, Jiayan; Zheng, Wendi; Ding, Ming; Huang, Shiyu; Xu, Jiazheng; Yang, Yuanming; Hong, Wenyi; Zhang, Xiaohan; Feng, Guanyu; et al. CogVideoX: Text-to-video diffusion models with an expert transformer. arXiv 2024, arXiv:2408.06072.

- Yu, Jiwen; Qin, Yiran; Wang, Xintao; Wan, Pengfei; Zhang, Di; Liu, Xihui. GameFactory: Creating new games with generative interactive videos. arXiv 2025, arXiv:2501.08325.

- Yu, Tengbo; Lu, Guanxing; Yang, Zaijia; Deng, Haoyuan; Chen, Season Si; Lu, Jiwen; Ding, Wenbo; Hu, Guoqiang; Tang, Yansong; Wang, Ziwei. ManiGaussian++: General robotic bimanual manipulation with hierarchical Gaussian world model. arXiv 2025, arXiv:2506.19842.

- Yu, Yang. Towards sample efficient reinforcement learning. IJCAI, 2018; pp. 5739–5743.

- Yuan, Hangjie; Chen, Weihua; Cen, Jun; Yu, Hu; Liang, Jingyun; Chang, Shuning; Lin, Zhihui; Feng, Tao; Liu, Pengwei; Xing, Jiazheng; et al. Lumos-1: On autoregressive video generation from a unified model perspective. arXiv 2025, arXiv:2507.08801.

- Yue, Hu; Huang, Siyuan; Liao, Yue; Chen, Shengcong; Zhou, Pengfei; Chen, Liliang; Yao, Maoqing; Ren, Guanghui. EWMBench: Evaluating scene, motion, and semantic quality in embodied world models. arXiv 2025, arXiv:2505.09694.

- Zhang, Kaidong; Ren, Pengzhen; Lin, Bingqian; Lin, Junfan; Ma, Shikui; Xu, Hang; Liang, Xiaodan. PIVOT-R: Primitive-driven waypoint-aware world model for robotic manipulation. Advances in Neural Information Processing Systems 2024, 37, 54105–54136.

- Zhang, Yifan; Peng, Chunli; Wang, Boyang; Wang, Puyi; Zhu, Qingcheng; Kang, Fei; Jiang, Biao; Gao, Zedong; Li, Eric; Liu, Yang; et al. Matrix-Game: Interactive world foundation model. arXiv 2025, arXiv:2506.18701.

- Zhang, Zhang; Zhang, Qiang; Cui, Wei; Shi, Shuai; Guo, Yijie; Han, Gang; Zhao, Wen; Sun, Jingkai; Cao, Jiahang; Wang, Jiaxu; et al. Occupancy world model for robots. arXiv 2025, arXiv:2505.05512.

- Zhao, Baining; Tang, Rongze; Jia, Mingyuan; Wang, Ziyou; Man, Fanghang; Zhang, Xin; Shang, Yu; Zhang, Weichen; Gao, Chen; Wu, Wei; et al. AirScape: An aerial generative world model with motion controllability. arXiv 2025, arXiv:2507.08885.

- Zhao, Guosheng; Ni, Chaojun; Wang, Xiaofeng; Zhu, Zheng; Zhang, Xueyang; Wang, Yida; Huang, Guan; Chen, Xinze; Wang, Boyuan; Zhang, Youyi; et al. DriveDreamer4D: World models are effective data machines for 4D driving scene representation. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 12015–12026.

- Zhen, Haoyu; Sun, Qiao; Zhang, Hongxin; Li, Junyan; Zhou, Siyuan; Du, Yilun; Gan, Chuang. TesserAct: Learning 4D embodied world models. arXiv 2025, arXiv:2504.20995.

- Zheng, Dian; Huang, Ziqi; Liu, Hongbo; Zou, Kai; He, Yinan; Zhang, Fan; Zhang, Yuanhan; He, Jingwen; Zheng, Wei-Shi; Qiao, Yu; et al. VBench-2.0: Advancing video generation benchmark suite for intrinsic faithfulness. arXiv 2025, arXiv:2503.21755.

- Zheng, Ruijie; Wang, Jing; Reed, Scott; Bjorck, Johan; Fang, Yu; Hu, Fengyuan; Jang, Joel; Kundalia, Kaushil; Lin, Zongyu; Magne, Loic; et al. FLARE: Robot learning with implicit world modeling. arXiv 2025, arXiv:2505.15659.

- Zheng, Zangwei; Peng, Xiangyu; Yang, Tianji; Shen, Chenhui; Li, Shenggui; Liu, Hongxin; Zhou, Yukun; Li, Tianyi; You, Yang. Open-Sora: Democratizing efficient video production for all. arXiv 2024, arXiv:2412.20404.

- Zhou, Siyuan; Du, Yilun; Chen, Jiaben; Li, Yandong; Yeung, Dit-Yan; Gan, Chuang. RoboDreamer: Learning compositional world models for robot imagination. arXiv 2024, arXiv:2404.12377.

- Zhu, Chuning; Yu, Raymond; Feng, Siyuan; Burchfiel, Benjamin; Shah, Paarth; Gupta, Abhishek. Unified world models: Coupling video and action diffusion for pretraining on large robotic datasets. arXiv 2025, arXiv:2504.02792.

- Zhu, Fangqi; Wu, Hongtao; Guo, Song; Liu, Yuxiao; Cheang, Chilam; Kong, Tao. IRASim: Learning interactive real-robot action simulators. arXiv 2024, arXiv:2406.14540.

- Zhu, Zheng; Wang, Xiaofeng; Zhao, Wangbo; Min, Chen; Deng, Nianchen; Dou, Min; Wang, Yuqi; Shi, Botian; Wang, Kai; Zhang, Chi; et al. Is Sora a world simulator? A comprehensive survey on general world models and beyond. arXiv 2024, arXiv:2405.03520.

- Zhuang, Shaobin; Huang, Zhipeng; Zhang, Ying; Wang, Fangyikang; Fu, Canmiao; Yang, Binxin; Sun, Chong; Li, Chen; Wang, Yali. Video-GPT via next clip diffusion. arXiv 2025, arXiv:2505.12489.

- Zuo, Sicheng; Zheng, Wenzhao; Huang, Yuanhui; Zhou, Jie; Lu, Jiwen. GaussianWorld: Gaussian world model for streaming 3D occupancy prediction. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025; pp. 6772–6781.

| Survey | Year | Main Topic | Limitations |
|---|---|---|---|
| Zhu et al. [142] | 2024 | General world model | Limited to general applications |
| Ding et al. [20] | 2024 | General world model | Limited to concepts and applications |
| Xie et al. [120] | 2025 | General world model | Limited to 3D cognition ability |
| Guan et al. [33], Feng et al. [23], Tu et al. [101] | 2024, 2025 | Driving world model | Different research domain |
| Liu et al. [69] | 2025 | Embodied AI | Comprehensive introduction to embodied robots and simulators, with limited coverage of world model techniques |
| Long et al. [72] | 2025 | Embodied AI | Overview of physical simulators and world models; discusses only world model architectures, without further technical detail |
| Liang et al. [64] | 2025 | Embodied AI | Comprehensive overview of embodied learning; discusses world model concepts and architectures only briefly |

| Model | Application Area | World Model Type / Contribution |
|---|---|---|
| PlaNet [39] | Visual control | RSSM; latent overshooting; data-efficient planning. |
| Dreamer [38] | Model-based RL | Latent imagination actor-critic; analytic value gradients. |
| DreamerV2 [40] | Model-based RL | Categorical latents; KL balancing; human-level Atari. |
| DreamerV3 [42] | Model-based RL | Robust training (symlog, two-hot, KL free-bits); 150+ tasks incl. Minecraft. |
| I-JEPA [2] | Image learning | Masked prediction; semantic latent features. |
| V-JEPA [8] | Video learning | Spatio-temporal masking; predictive transformer; strong transfer. |
| V-JEPA 2 [3] | Video learning | 1B-parameter encoder; progressive resolution; SOTA motion benchmarks. |
| V-JEPA 2-AC [3] | Robotic control | Frozen encoder + lightweight predictor; zero-shot MPC for robotics. |
| TD-MPC [44] | Continuous control | Task-oriented latent dynamics; TD-learning for MPC. |
| TD-MPC-offline [25] | Robotic control | Offline-to-online finetuning with uncertainty-regularized planning. |
| TD-MPC2 [43] | Multi-task continuous control | Scalable implicit world model; single hyperparameter set across 104 tasks. |
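The symlog and two-hot transforms credited to DreamerV3 [42] in the table above let one network head regress targets (rewards, returns) whose scale varies widely across tasks. The following is a minimal NumPy sketch, not the authors' implementation; the bin grid (255 equally spaced bins from -20 to 20 in symlog space) is an assumption chosen for illustration.

```python
import numpy as np

def symlog(x):
    """Squash magnitudes symmetrically: sign(x) * ln(1 + |x|)."""
    return np.sign(x) * np.log1p(np.abs(x))

def symexp(x):
    """Inverse of symlog: sign(x) * (exp(|x|) - 1)."""
    return np.sign(x) * np.expm1(np.abs(x))

def two_hot(value, bins):
    """Spread a scalar over its two neighbouring bins; the weights sum to 1.

    Values outside the grid are clipped to the first or last bin.
    """
    bins = np.asarray(bins, dtype=float)
    value = float(np.clip(value, bins[0], bins[-1]))
    # Index of the first bin >= value, kept in [1, len(bins) - 1] so k - 1 is valid.
    k = int(np.searchsorted(bins, value))
    k = min(max(k, 1), len(bins) - 1)
    lo, hi = bins[k - 1], bins[k]
    w_hi = (value - lo) / (hi - lo)
    target = np.zeros(len(bins))
    target[k - 1] = 1.0 - w_hi
    target[k] = w_hi
    return target

def decode(weights, bins):
    """Expected bin value in symlog space, mapped back to the raw scale with symexp."""
    return float(symexp(np.dot(weights, bins)))

# Assumed grid: 255 equally spaced bins in symlog space.
BINS = np.linspace(-20.0, 20.0, 255)

# Encode a large reward as a two-hot target, then decode it back.
target = two_hot(symlog(250.0), BINS)
recovered = decode(target, BINS)
```

In a training setup of this kind, the network would output logits over `BINS` and be trained with cross-entropy against the two-hot target; at inference, `decode` is applied to the softmax probabilities instead of the target. Because the bins live in symlog space, a reward of 250 and a reward of 0.5 both fall well inside the same fixed grid.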

| Application Area | Method | World Model Type |
|---|---|---|
| Offline Robotic Data Generation | DreamGen [52] | Finetuned Wan 2.1 [104] |
| | RoboTransfer [67] | Multi-view, geometry, and appearance conditioned diffusion model |
| | EnerVerse-AC [54] | UNet-based Video Diffusion Model |
| RL Environment Substitute | GenRL [75] | GRU-based Recurrent Architecture |
| | iVideoGPT [115] | Autoregressive Transformer over quantized multimodal tokens |
| | RoboScape-R [96] | Autoregressive Transformer predicting both observation and reward |
| | DreamerV3 [42] | Recurrent State-Space Model with discrete latent representations |
| Robotic Policy Evaluator | WorldEval [62] | Finetuned Wan 2.1 [104] |
| | EnerVerse-AC [54] | UNet-based Video Diffusion Model |
| Action Planner as Embodied Agents | GPC [83] | UNet-based Video Diffusion Model |
| | VPP [46] | Finetuned Stable Video Diffusion [9] |
| | MoE-WM [92] | Mixture of latent and pixel space world models |
| | V-JEPA 2-AC [3] | Joint-Embedding Predictive Architecture [2] |
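Several entries above use a world model as an action planner, and V-JEPA 2-AC [3] is described as performing zero-shot MPC. One common way to plan with any learned forward model is the cross-entropy method (CEM): sample action sequences, roll them out inside the model, and refit the sampling distribution to the lowest-cost ones. The sketch below is hypothetical: `toy_dynamics` stands in for a learned latent predictor, and the L1 goal-distance cost, horizon, action bounds, and sample counts are illustrative assumptions, not settings taken from any cited work.

```python
import numpy as np

def toy_dynamics(z, a):
    """Hypothetical stand-in for a learned latent predictor: z_{t+1} = z_t + a_t."""
    return z + a

def cem_plan(z0, z_goal, dynamics, horizon=5, action_dim=2, n_samples=256,
             n_elites=32, n_iters=10, action_bound=1.0, seed=0):
    """Return the mean action sequence found by CEM, shape (horizon, action_dim)."""
    rng = np.random.default_rng(seed)
    mean = np.zeros((horizon, action_dim))
    std = np.ones((horizon, action_dim))
    for _ in range(n_iters):
        # Sample candidate sequences from the current Gaussian, then clip to bounds.
        noise = rng.standard_normal((n_samples, horizon, action_dim))
        actions = np.clip(mean + std * noise, -action_bound, action_bound)
        # Roll out all candidates in parallel through the model.
        z = np.broadcast_to(z0, (n_samples,) + np.shape(z0)).astype(float)
        for t in range(horizon):
            z = dynamics(z, actions[:, t])
        # Cost: L1 distance between the predicted final latent and the goal latent.
        costs = np.abs(z - z_goal).sum(axis=-1)
        # Refit the sampling distribution to the lowest-cost (elite) sequences.
        elites = actions[np.argsort(costs)[:n_elites]]
        mean = elites.mean(axis=0)
        std = elites.std(axis=0) + 1e-6
    return mean

z0 = np.zeros(2)
z_goal = np.array([2.0, -1.5])
plan = cem_plan(z0, z_goal, toy_dynamics)
first_action = plan[0]  # in receding-horizon MPC, only this action is executed
```

In a receding-horizon loop, the agent would execute `plan[0]`, encode the new observation, and call `cem_plan` again. The planner only queries the model through `dynamics`, which is why the same loop applies whether the model predicts pixels or latent embeddings.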

| Perspectives | Representative Works | Metrics |
|---|---|---|
| Generated Data Quality | VBench [51] | Temporal quality, frame-wise quality, semantics consistency and style consistency. |
| | T2V-CompBench [94] | Video LLM-based metrics, spatial relationships, generative numeracy and dense optical tracking. |
| | VBench-2.0 [136] | Human fidelity, creativity, controllability, physics and commonsense. |
| | VideoPhy [5] | Semantic adherence and physical commonsense. |
| | VideoPhy-2 [6] | Semantic adherence, physical commonsense and physical rules. |
| | PhyGenBench [77] | Key physical phenomena detection, physics order verification and naturalness evaluation. |
| | WorldModelBench [60] | Instruction following, physics adherence and commonsense. |
| | EWMBench [129] | Scene consistency, motion correctness, and semantic alignment & diversity. |
| End-to-end Manipulation Evaluation | DreamerV3 [41] | Planning performance on downstream tasks. |
| | V-JEPA 2 [3] | QA accuracy, action anticipation recall and planning success rate. |
| | WorldSimBench [84] | Task success rate and execution accuracy. |
| Evaluation Reliability towards Policy Model | WPE [85] | Evaluating in-distribution and out-of-distribution policies. |
| | WorldEval [62] | Pearson correlation coefficient and Mean Maximum Rank Violation. |
| | RoboScape [93] | Pearson correlation and R² between world models and the ground-truth simulator. |
| Data Scaling in Downstream Policy Model | DreamGen [52] | Instruction following and physics alignment. |
| | RoboTransfer [67] | Multi-view consistency, geometric consistency, and semantic consistency. |
| | GenSim [106] | Success rates. |
| | WorldGPT [32] | Cosine similarity for state prediction, task accuracy, and generation efficiency. |
| | Traj-LLM [58] | Average displacement error, final displacement error, and miss rate. |
| | RoboScape [93] | Pearson correlation and R². |
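WorldEval [62] and RoboScape [93] judge a world model as a policy evaluator by comparing the success rates it assigns to a set of policies with ground-truth success rates. A minimal sketch of two such agreement measures follows. The Mean Maximum Rank Violation (MMRV) formulation used here (for each policy, the largest real-performance gap among pairs whose order the evaluator flips, averaged over policies) is our assumption of the standard definition and should be checked against the cited papers; the success rates in the usage lines are made up.

```python
import numpy as np

def pearson(x, y):
    """Pearson correlation coefficient between two score vectors."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    xc, yc = x - x.mean(), y - y.mean()
    return float((xc * yc).sum() / np.sqrt((xc ** 2).sum() * (yc ** 2).sum()))

def mmrv(real, predicted):
    """Mean Maximum Rank Violation (lower is better; 0 means no order flips).

    A pair (i, j) is violated when the evaluator orders it differently from
    the real success rates; the violation is weighted by |real_i - real_j|.
    """
    r = np.asarray(real, dtype=float)
    p = np.asarray(predicted, dtype=float)
    n = len(r)
    worst = np.zeros(n)
    for i in range(n):
        for j in range(n):
            if (p[i] < p[j]) != (r[i] < r[j]):
                worst[i] = max(worst[i], abs(r[i] - r[j]))
    return float(worst.mean())

# Hypothetical success rates of three policies.
real = [0.2, 0.5, 0.8]
good_eval = [0.3, 0.6, 0.7]  # preserves the real ranking
bad_eval = [0.6, 0.3, 0.7]   # swaps the first two policies
print(pearson(real, good_eval), mmrv(real, good_eval))
print(pearson(real, bad_eval), mmrv(real, bad_eval))
```

Pearson correlation rewards linear agreement between the two score vectors, while MMRV only penalizes ranking errors, weighted by how far apart the swapped policies really are. An evaluator used mainly to select the best checkpoint therefore needs a low MMRV more than a perfect linear fit.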

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).