Submitted: 13 April 2026

Posted: 14 April 2026

Abstract

Keywords:

1. Introduction

2. Materials and Methods

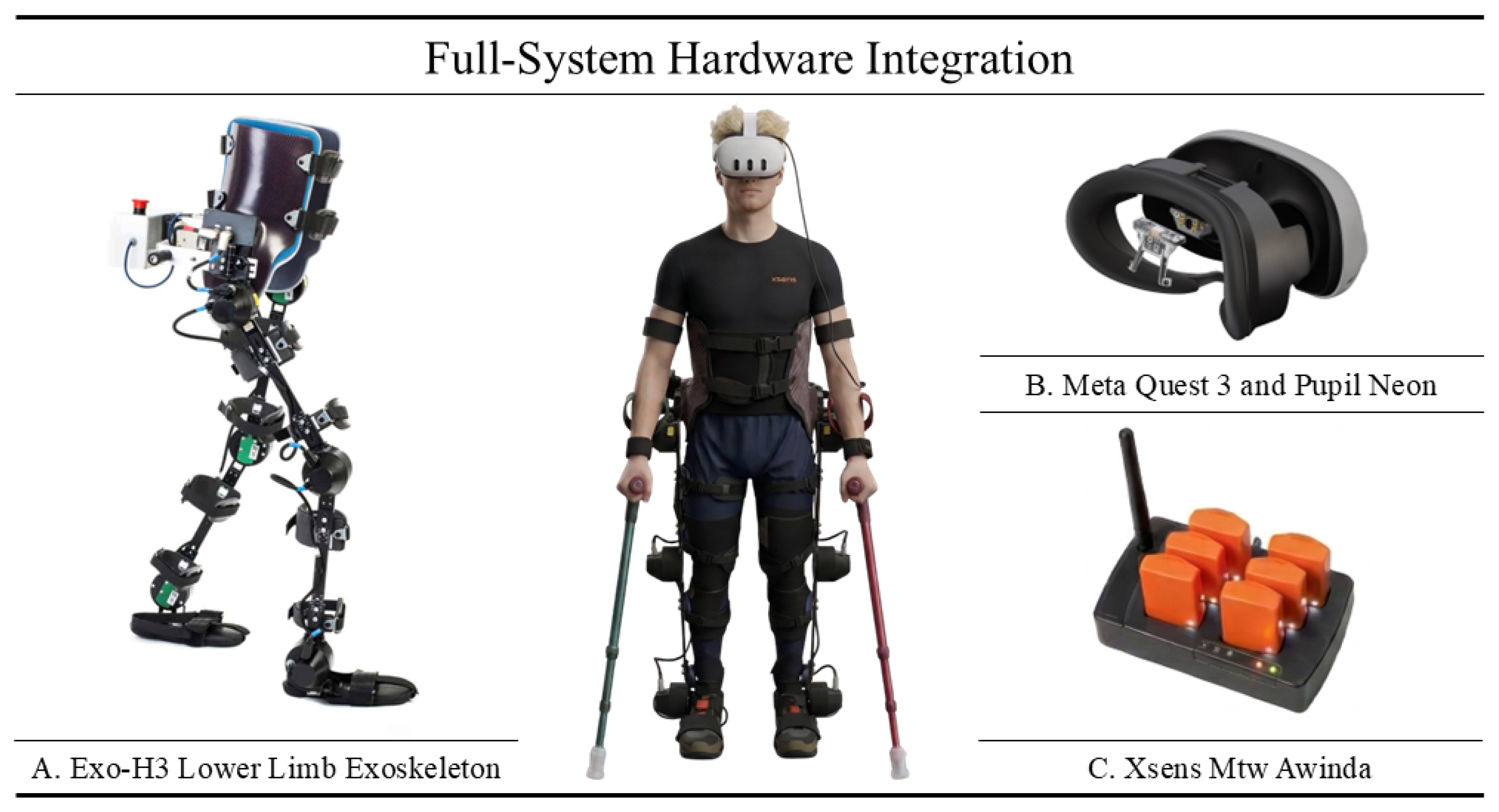

2.1. Materials

2.2. Dual-Task Design

2.3. Methods

2.3.1. Pupil Neon & Meta Quest 3 Gaze Calibration

2.3.2. Pupil Neon & Meta Quest 3 Synchronization

2.3.3. Event Detection & Step Segmentation

2.3.4. Kinematic Metrics

2.3.5. Cognitive Metrics

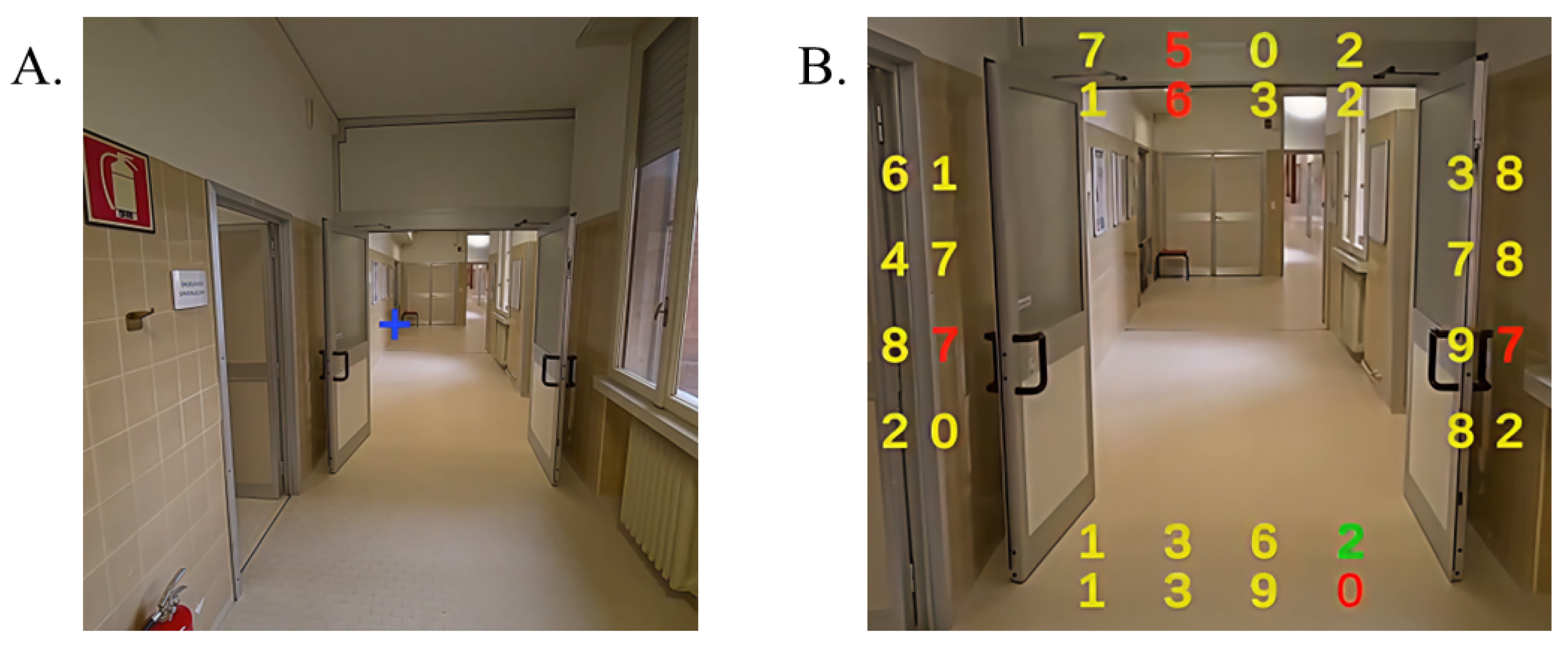

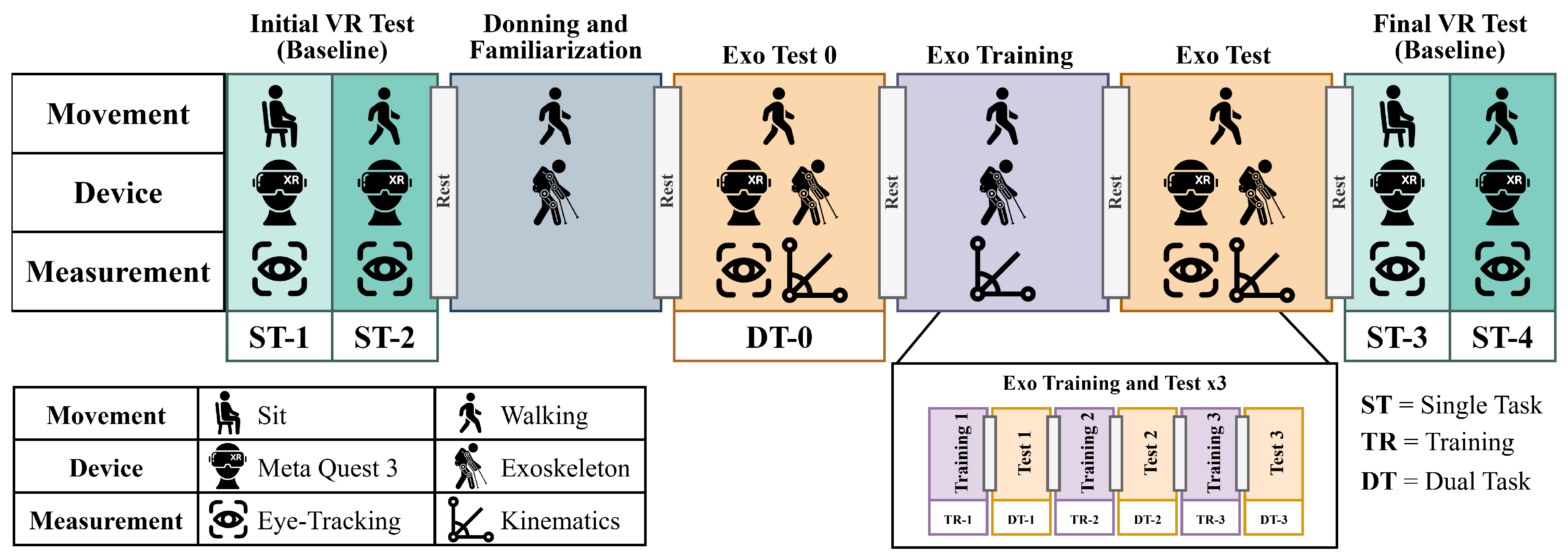

2.4. Experimental Setup

2.5. Experimental Protocol

2.6. Statistical Analysis

3. Results

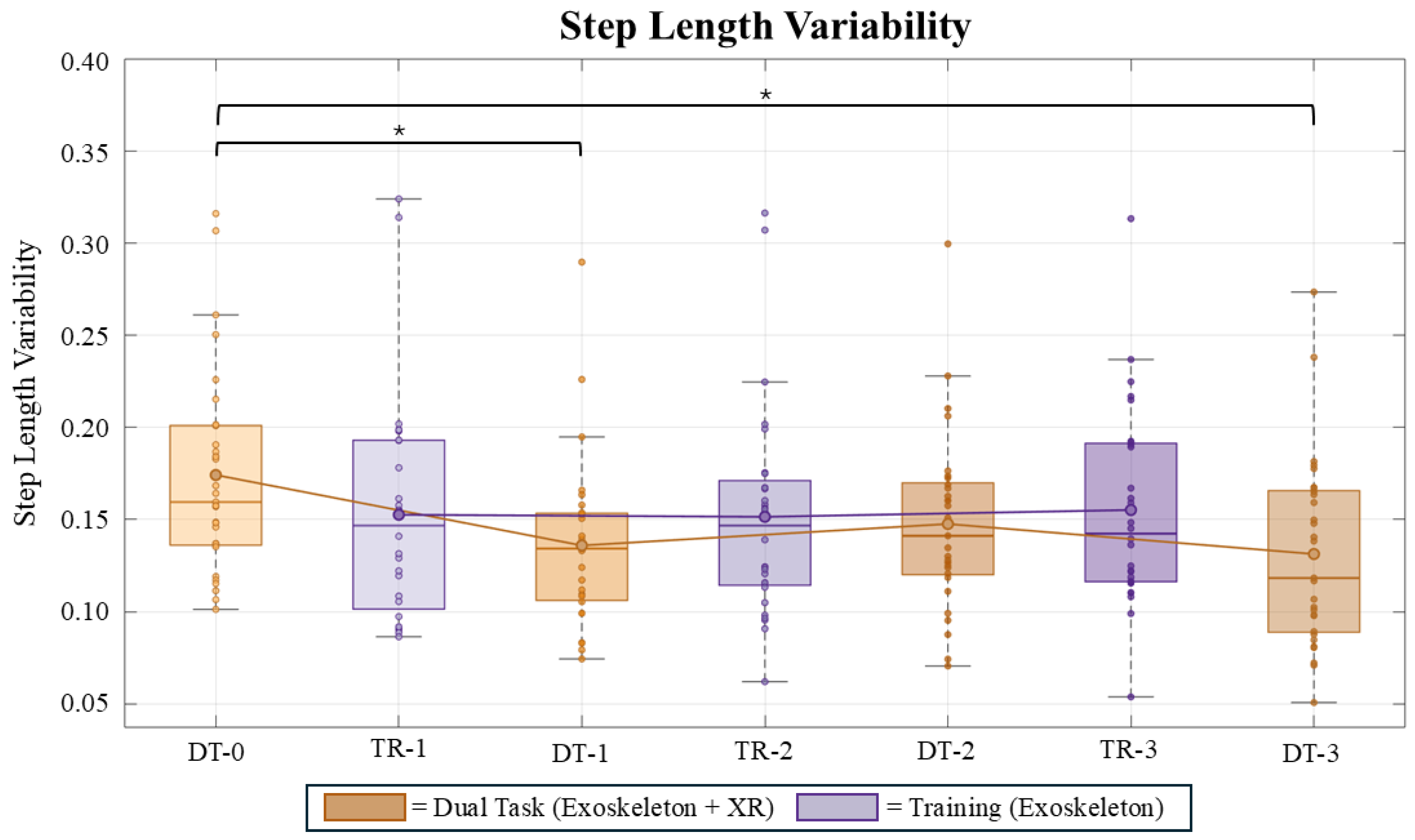

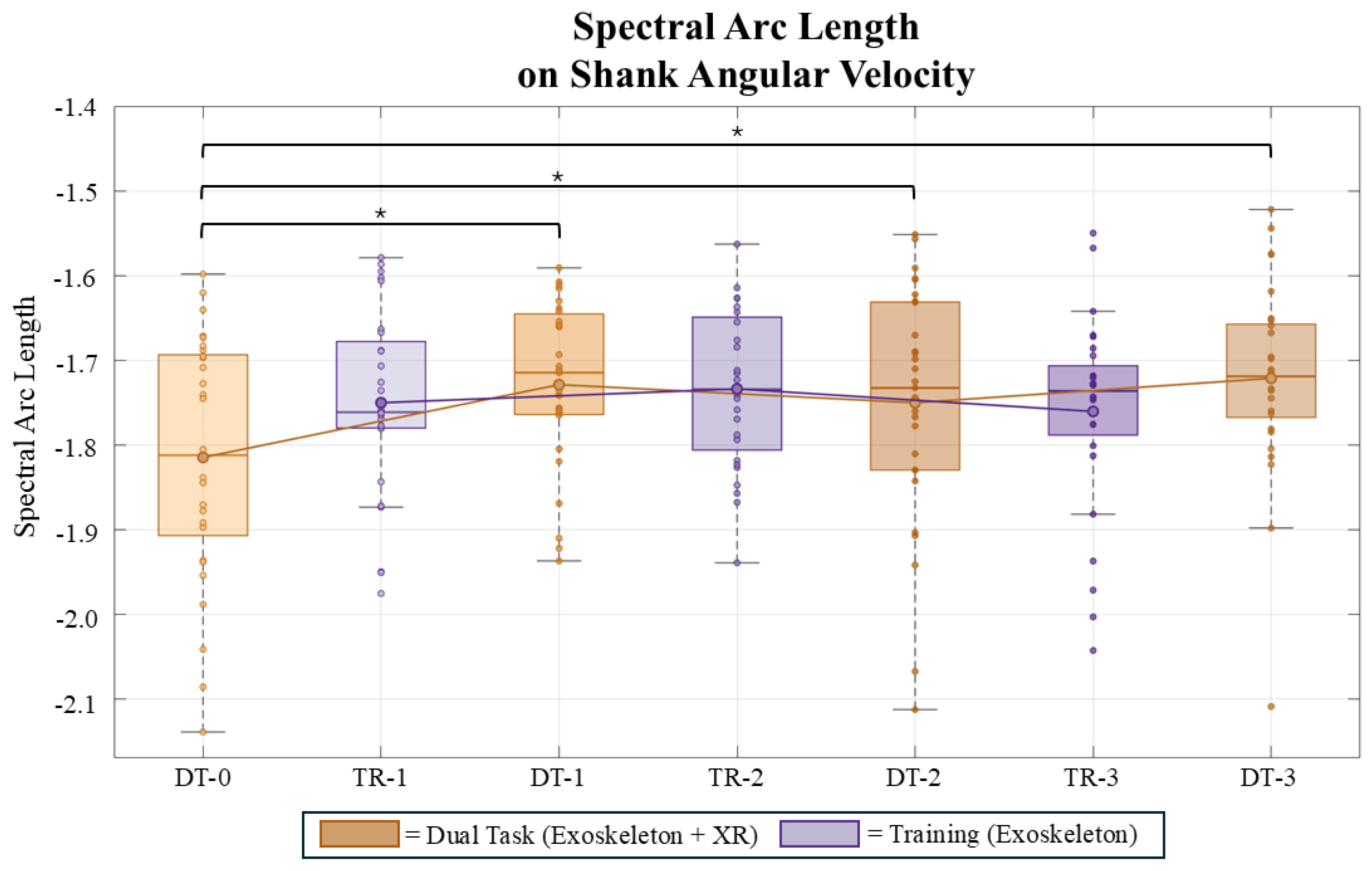

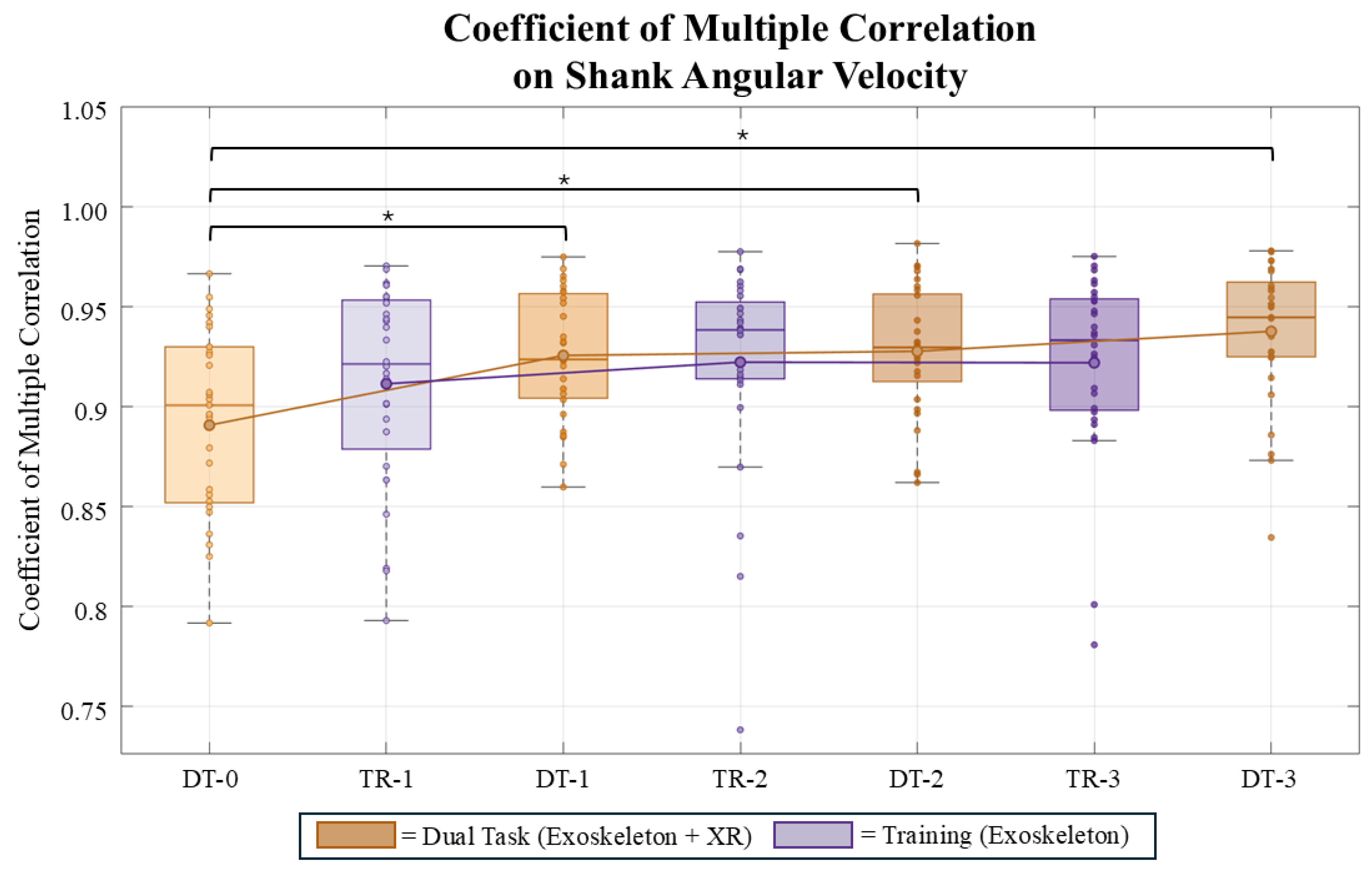

3.1. Kinematics Results

3.2. Cognitive Results

4. Discussion

4.1. Kinematics

4.2. Cognitive

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

| Statistical Test | Effect / Comparison | Test Statistic | p-value |
|---|---|---|---|
| Step Length Variability | | | |
| LMM | Main Effect: Session | | |
| LMM | Interaction: Session × Task | | |
| RM-ANOVA | Main Effect: Condition | | |
| Post-Hoc (Bonferroni) | DT-0 vs. DT-1 | | |
| Post-Hoc (Bonferroni) | DT-0 vs. DT-3 | | |
| Post-Hoc (Bonferroni) | DT-1 vs. DT-2 | - | (ns) |
| SPARC V_SHANK | | | |
| LMM | Main Effect: Session | | |
| LMM | Interaction: Session × Task | | |
| RM-ANOVA | Main Effect: Condition | | |
| Post-Hoc (Bonferroni) | DT-0 vs. DT-1 | | |
| Post-Hoc (Bonferroni) | DT-0 vs. DT-3 | | |
| Post-Hoc (Bonferroni) | DT-1 vs. DT-2 | - | (ns) |
| CMC V_SHANK | | | |
| LMM | Main Effect: Session | | |
| LMM | Interaction: Session × Task | | 0.073 (ns) |
| RM-ANOVA | Main Effect: Condition | | |
| Post-Hoc (Bonferroni) | DT-0 vs. DT-1 | | |
| Post-Hoc (Bonferroni) | DT-0 vs. DT-3 | | |
| Post-Hoc (Bonferroni) | DT-1 vs. DT-2 | - | (ns) |

| Statistical Test | Effect / Comparison | Test Statistic | p-value |

|---|---|---|---|

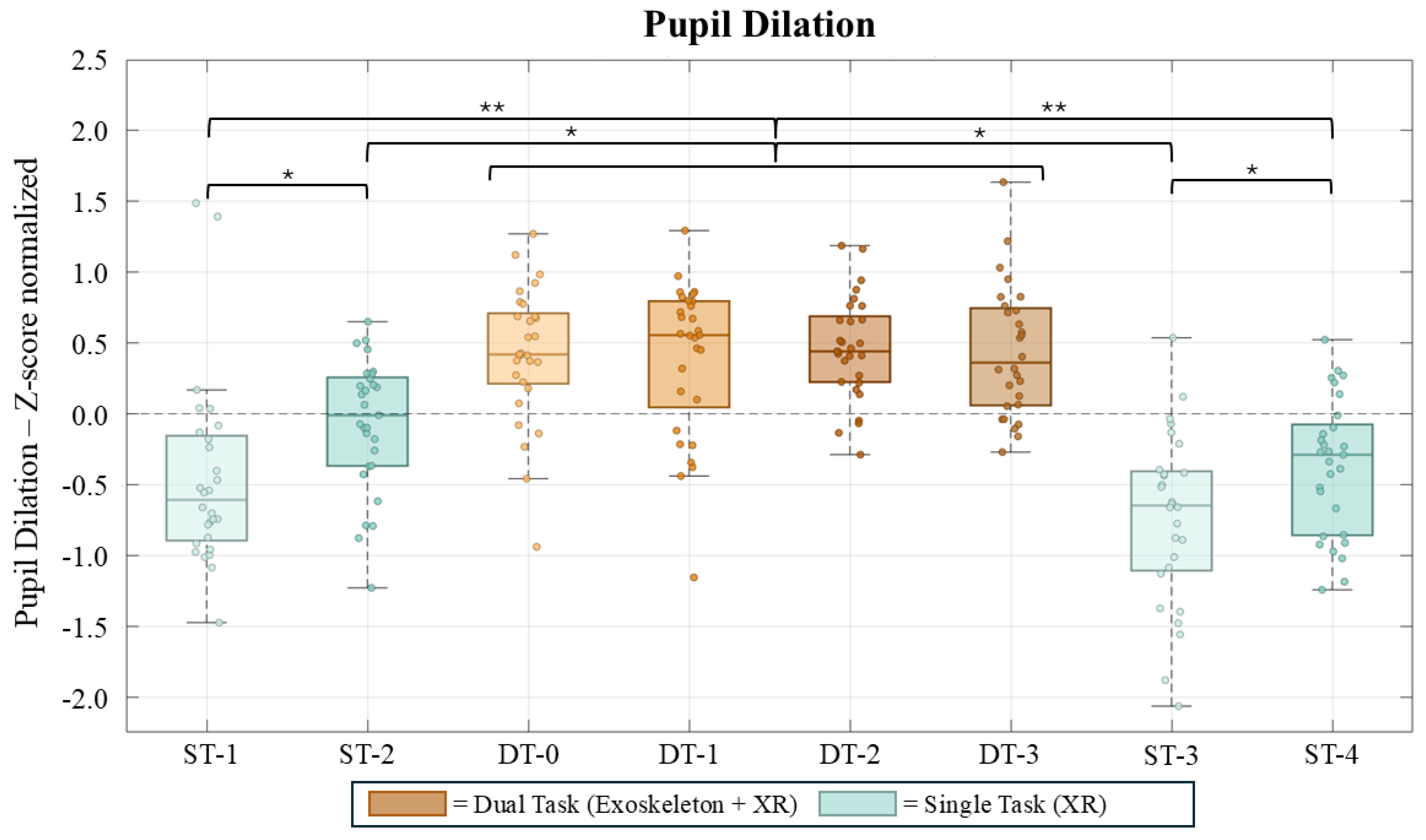

| Pupil Dilation (z-score normalized) | |||

| Friedman Test | Main Effect: Condition | ||

| Post-Hoc (Wilcoxon) | ST-1 vs. DT-0 | ||

| Post-Hoc (Wilcoxon) | ST-1 vs. ST-2 | ||

| Post-Hoc (Wilcoxon) | All DT cond. (pairwise) | - | (ns) |

| Post-Hoc (Wilcoxon) | ST-1 vs. ST-3 | - | (ns) |

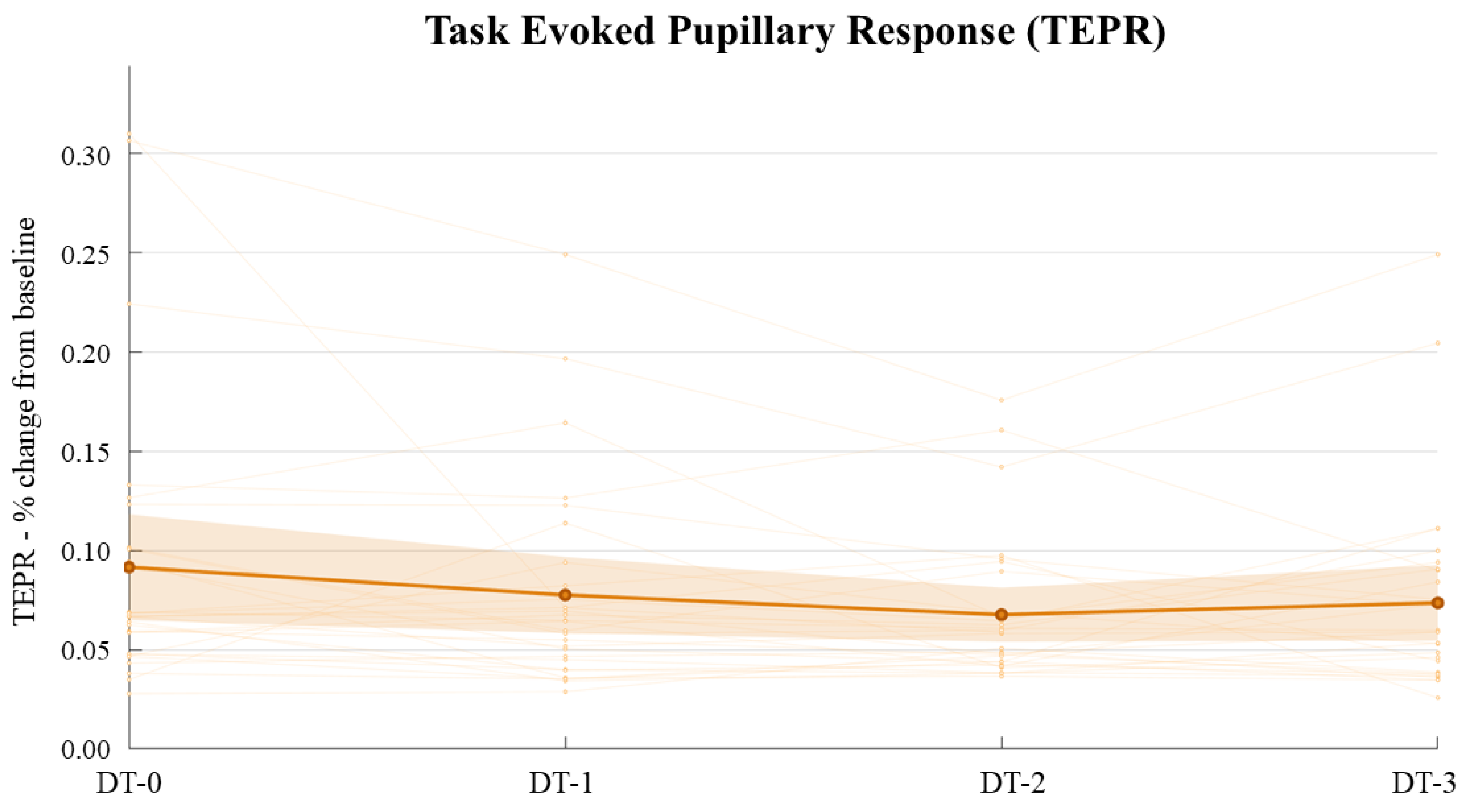

| Task-Evoked Pupillary Response (TEPR) | |||

| LMM | Main Effect: Session | ||

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).