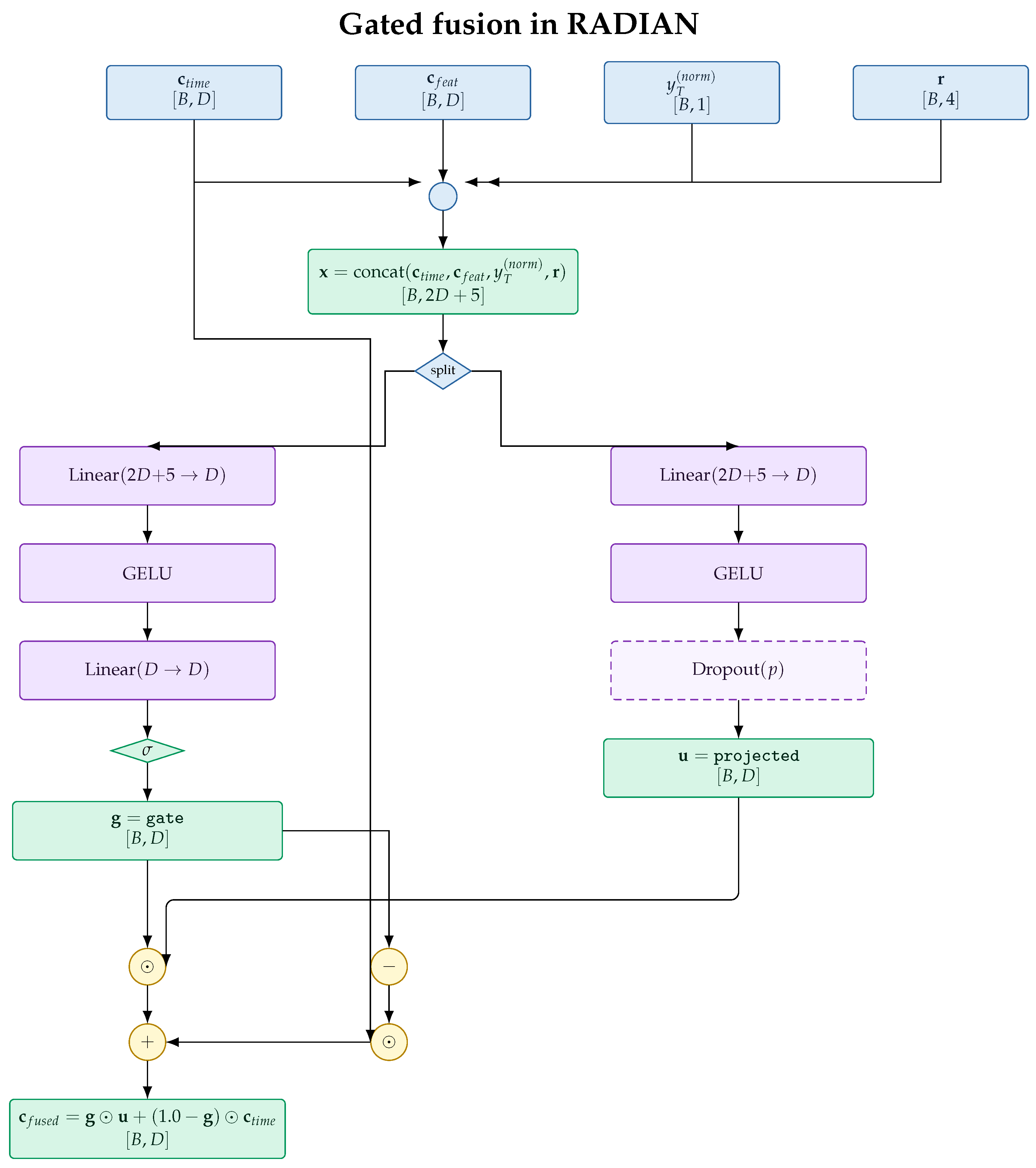

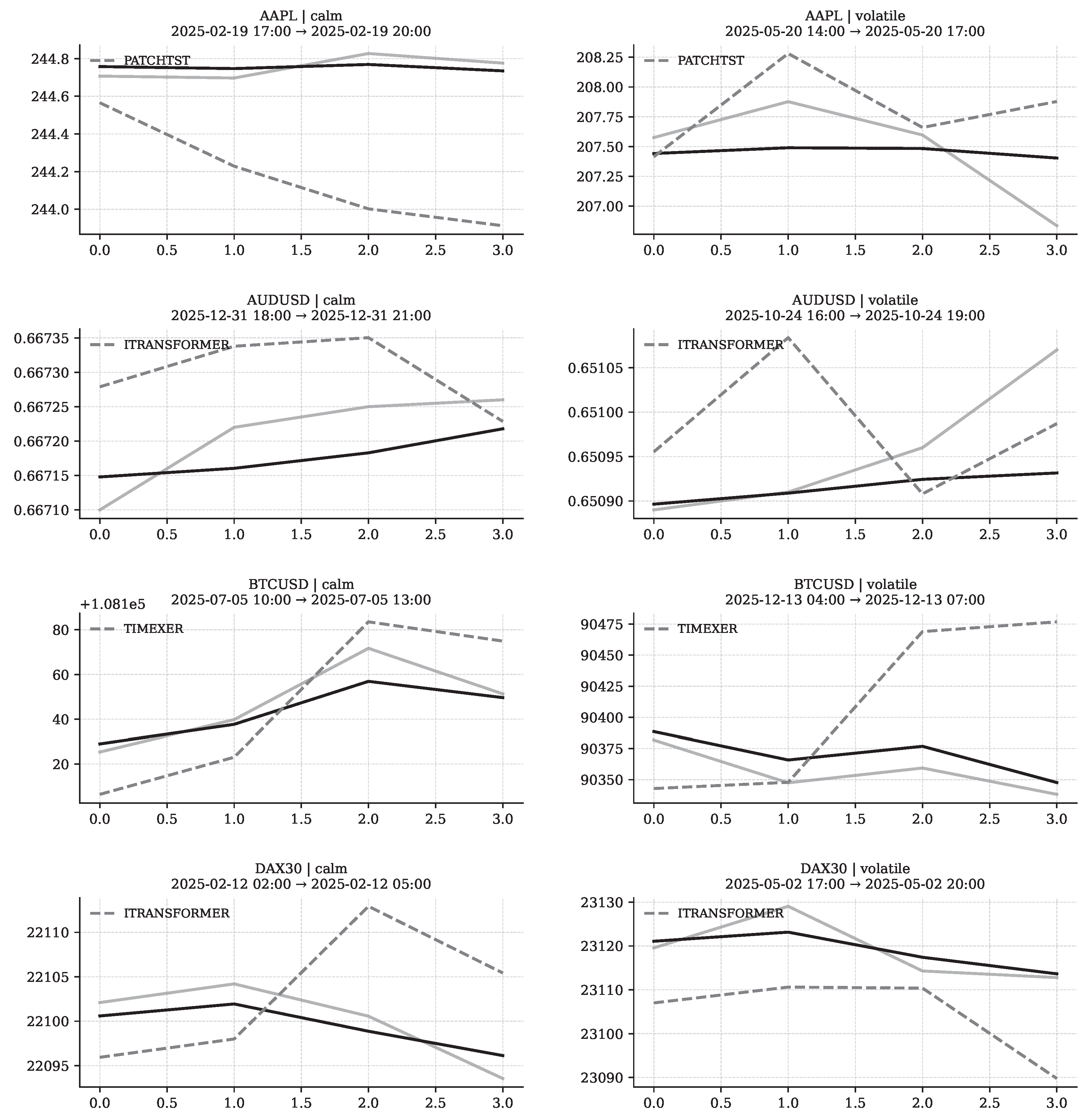

Figure 1.

Architectural diagram of the proposed RADIAN model. The framework consists of three processing branches—temporal, feature, and regime—followed by gated fusion and a regime-aware directional head to produce multi-step forecasts.
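
The caption names a gated fusion stage but gives no formula. The sketch below shows one common way to gate three branch embeddings. It assumes a softmax gate computed from the concatenated branch outputs, and the parameter names (`w_gate`, `b_gate`) are hypothetical, so it should not be read as the RADIAN implementation.

```python
import numpy as np

def gated_fusion(h_temporal, h_feature, h_regime, w_gate, b_gate):
    """Fuse three branch embeddings with a softmax gate (illustrative sketch).

    Each h_* has shape (batch, d); w_gate has shape (3 * d, 3), b_gate (3,).
    Returns the fused embedding (batch, d) and the gate weights (batch, 3).
    """
    branches = np.stack([h_temporal, h_feature, h_regime], axis=1)  # (batch, 3, d)
    concat = branches.reshape(branches.shape[0], -1)  # (batch, 3 * d), branch order kept
    logits = concat @ w_gate + b_gate  # one score per branch
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    gates = np.exp(logits)
    gates = gates / gates.sum(axis=1, keepdims=True)  # each row sums to 1
    fused = np.einsum("bk,bkd->bd", gates, branches)  # weighted sum of branches
    return fused, gates

rng = np.random.default_rng(0)
d = 128  # RADIAN width D from Table 2
h_t, h_f, h_r = (rng.standard_normal((4, d)) for _ in range(3))
fused, gates = gated_fusion(h_t, h_f, h_r, 0.01 * rng.standard_normal((3 * d, 3)), np.zeros(3))
```

A softmax gate makes the fused vector a convex combination of the three branches; an element-wise sigmoid gate per branch is an equally plausible reading of "gated fusion".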

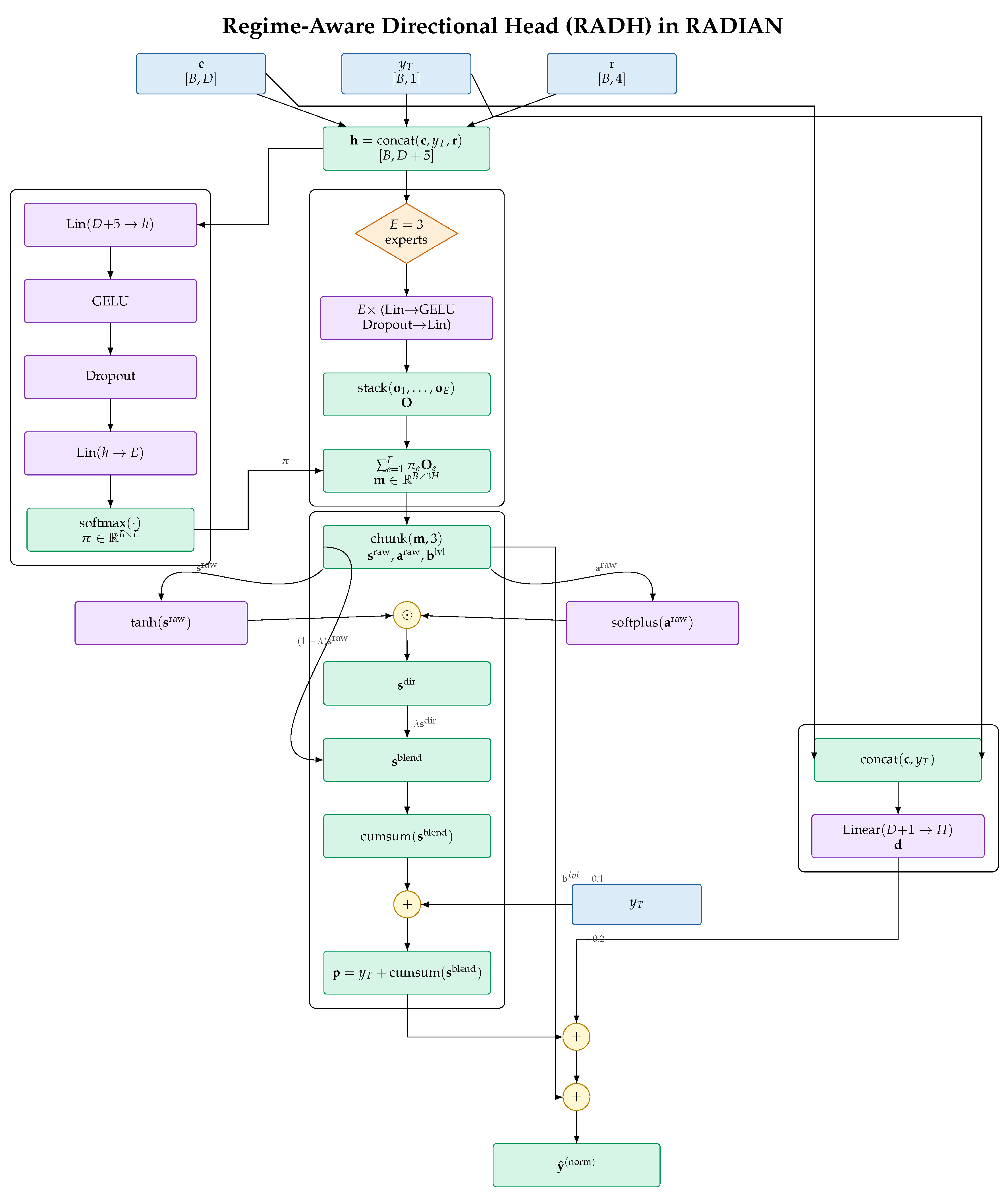

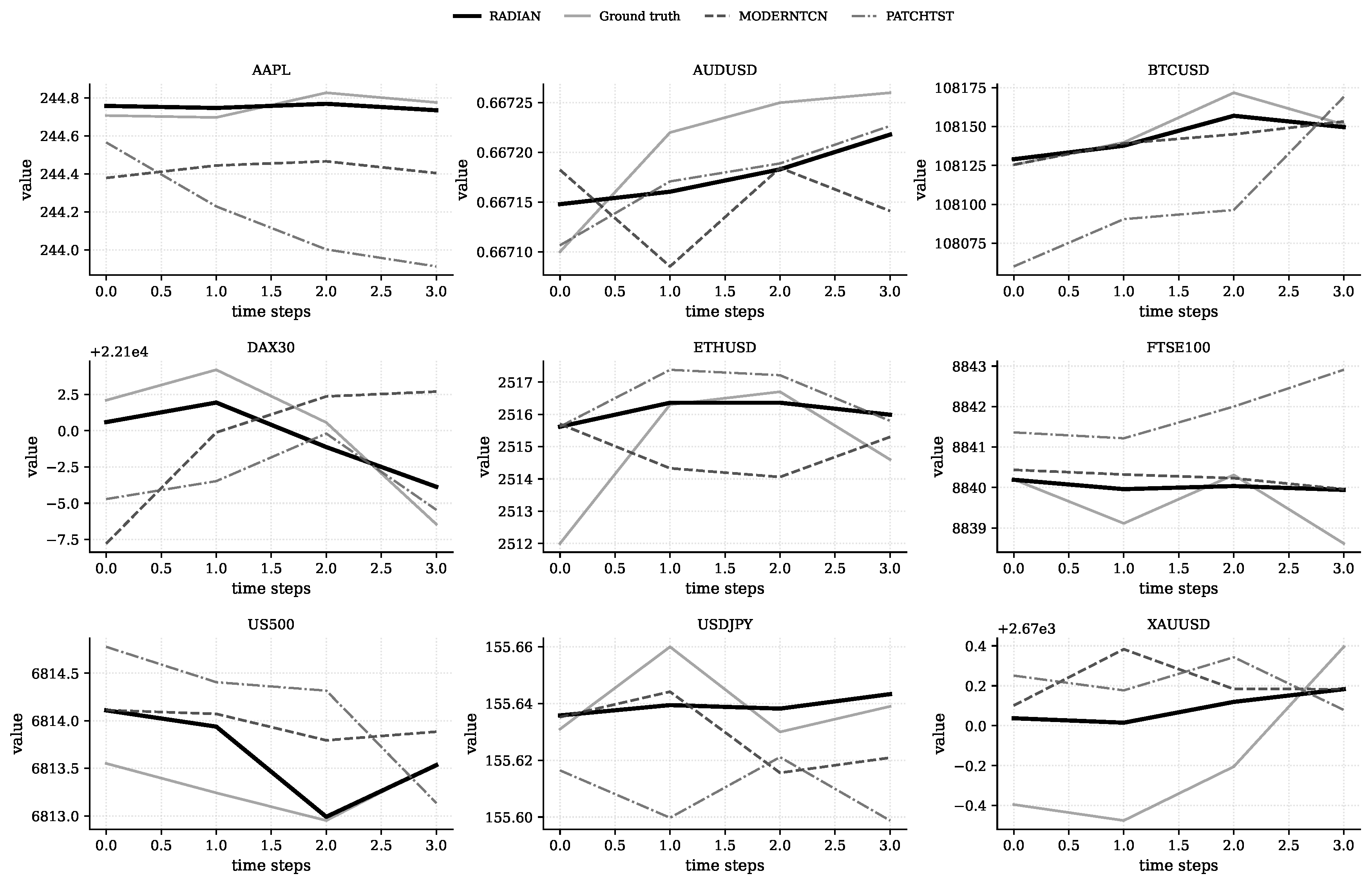

Figure 2.

Regime vector decomposition of the price process. The plot illustrates the four components of the regime vector, including the volatility component (middle panel) and the shock-magnitude and shock-envelope components (lower panel). The asset price evolution is shown in the upper panel, and vertical markers denote structural breakpoints.
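
The caption names volatility, shock magnitude, and a shock envelope without defining them. As a hedged illustration only, the sketch assumes volatility is the rolling standard deviation of log returns, shock magnitude is the absolute return scaled by the previous volatility estimate, and the envelope is a geometrically decaying running maximum of the shock. The `window` and `decay` values are hypothetical, and the fourth regime component is not modeled.

```python
import numpy as np

def regime_components(prices, window=24, decay=0.9, eps=1e-12):
    """Hypothetical regime features computed from a positive price series."""
    prices = np.asarray(prices, dtype=float)
    returns = np.diff(np.log(prices))  # log returns, length len(prices) - 1
    n = returns.size
    volatility = np.full(n, np.nan)
    for t in range(window - 1, n):
        volatility[t] = returns[t - window + 1 : t + 1].std()  # rolling std
    shock = np.full(n, np.nan)
    # Scale each return by the volatility known before it (no look-ahead).
    shock[window:] = np.abs(returns[window:]) / (volatility[window - 1 : -1] + eps)
    envelope = np.full(n, np.nan)
    prev = 0.0
    for t in range(window, n):
        prev = max(shock[t], decay * prev)  # jumps on shocks, decays afterwards
        envelope[t] = prev
    return {"volatility": volatility, "shock": shock, "envelope": envelope}

rng = np.random.default_rng(1)
prices = 100 * np.exp(np.cumsum(0.01 * rng.standard_normal(200)))
feats = regime_components(prices)
```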

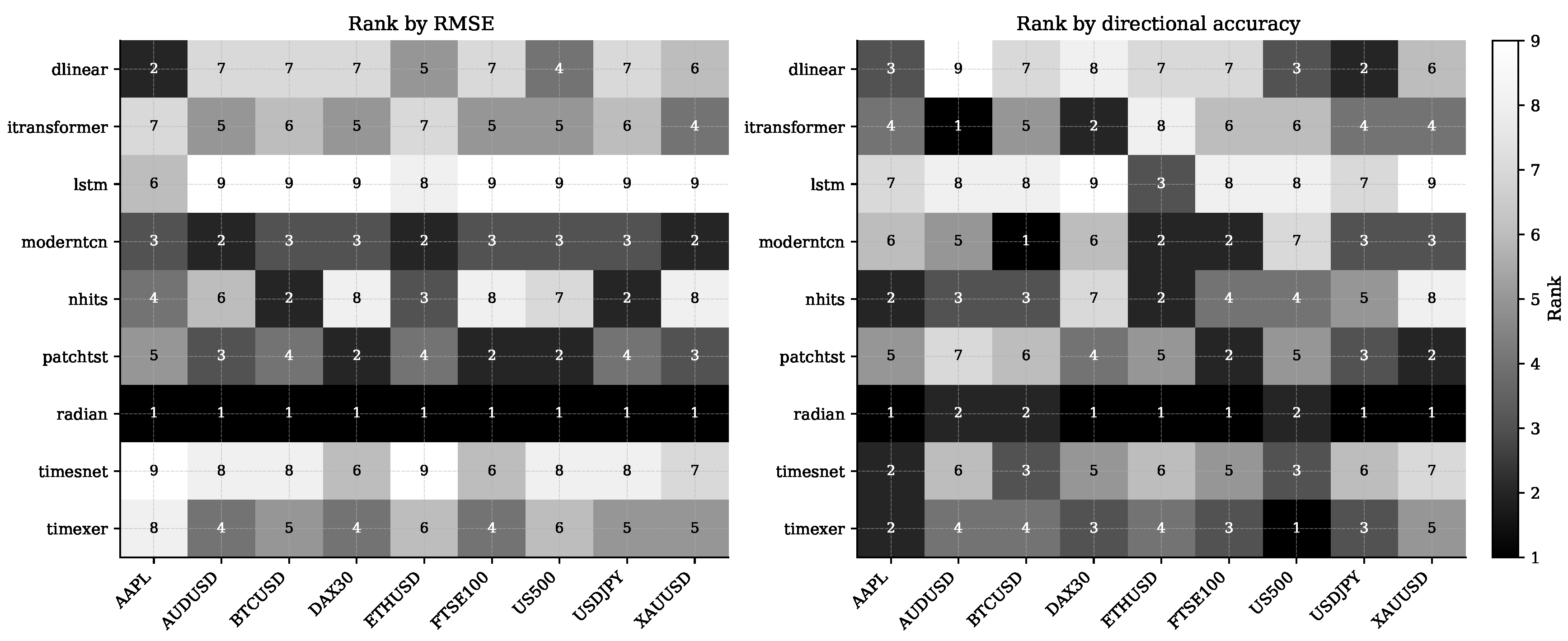

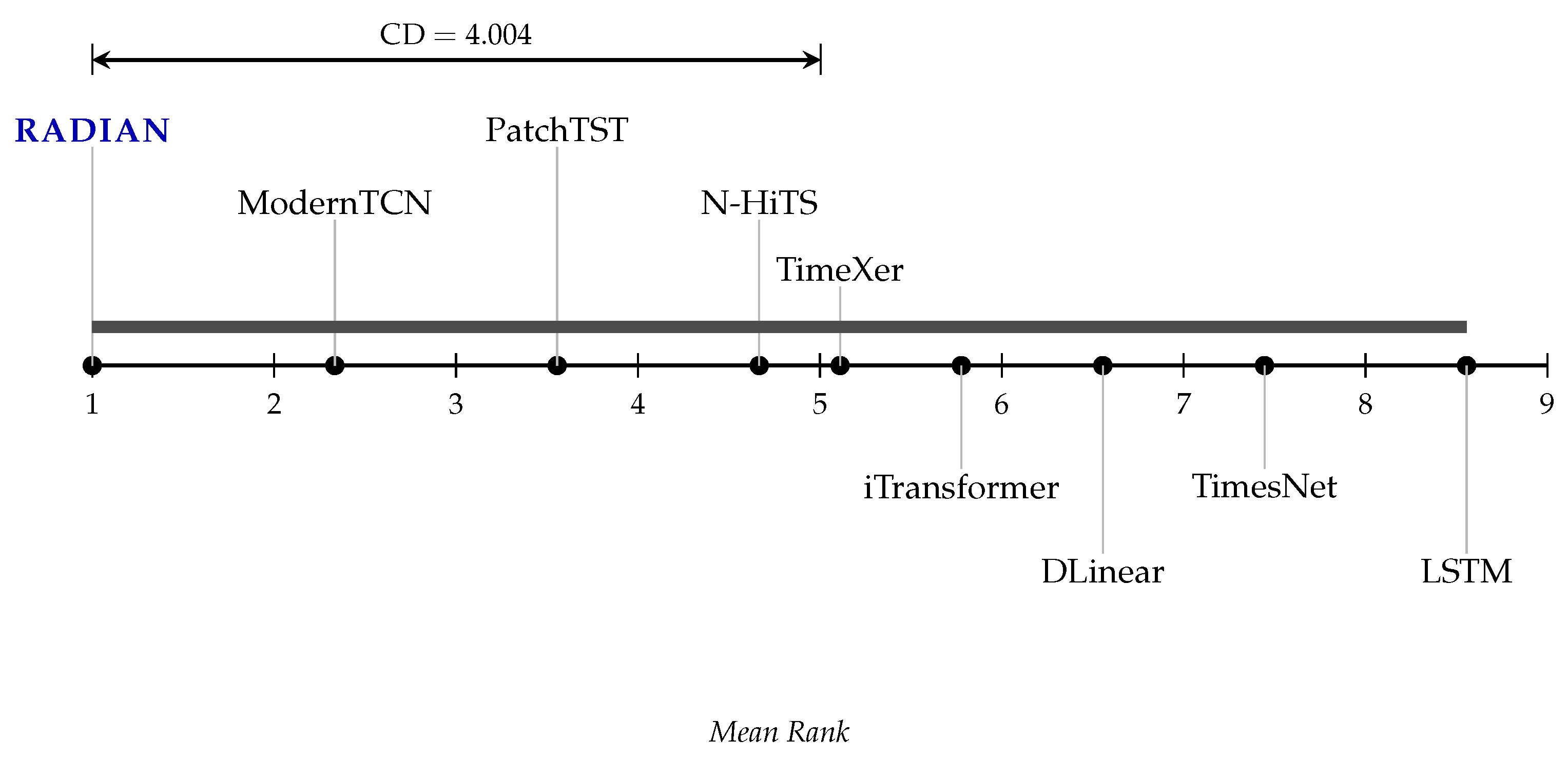

Figure 5.

Comparative performance of deep learning forecasting models expressed as mean ranks. Horizontal brackets connect models whose differences are not statistically significant. RADIAN attains the best (lowest) mean rank, followed by ModernTCN and PatchTST, whereas LSTM and TimesNet rank lowest.
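
Mean ranks of the kind plotted here are obtained by ranking the models on each dataset (rank 1 = lowest error), assigning tied models the average of the ranks they span, and averaging over datasets. The sketch applies this to two RMSE rows of Table 3 purely for illustration; it is not the computation behind the figure, which also includes LSTM and TimesNet, and the significance test that produces the brackets is not reproduced.

```python
import numpy as np

def average_ranks(errors):
    """Mean rank per model from an (n_datasets, n_models) error matrix.

    Rank 1 is the lowest error; ties share the average of the ranks they span.
    """
    errors = np.asarray(errors, dtype=float)
    ranks = np.empty_like(errors)
    for i, row in enumerate(errors):
        order = np.argsort(row, kind="stable")
        sorted_row = row[order]
        r = np.empty(row.size)
        start = 0
        while start < row.size:
            end = start
            while end + 1 < row.size and sorted_row[end + 1] == sorted_row[start]:
                end += 1  # extend the tie group
            r[order[start:end + 1]] = (start + end) / 2 + 1  # average 1-based rank
            start = end + 1
        ranks[i] = r
    return ranks.mean(axis=0)

# RMSE rows for AAPL and AUDUSD from Table 3, columns ordered
# RADIAN, ModernTCN, PatchTST, TimeXer, iTransformer, N-HiTS.
table3_rmse = [
    [2.43452, 2.47554, 2.54399, 2.68699, 2.68113, 2.47771],
    [0.0012, 0.0012, 0.0012, 0.0012, 0.0013, 0.0013],
]
mean_ranks = average_ranks(table3_rmse)
```

On these two rows RADIAN's mean rank is 1.75. The AUDUSD ties are partly an artifact of rounding RMSE to four decimals.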

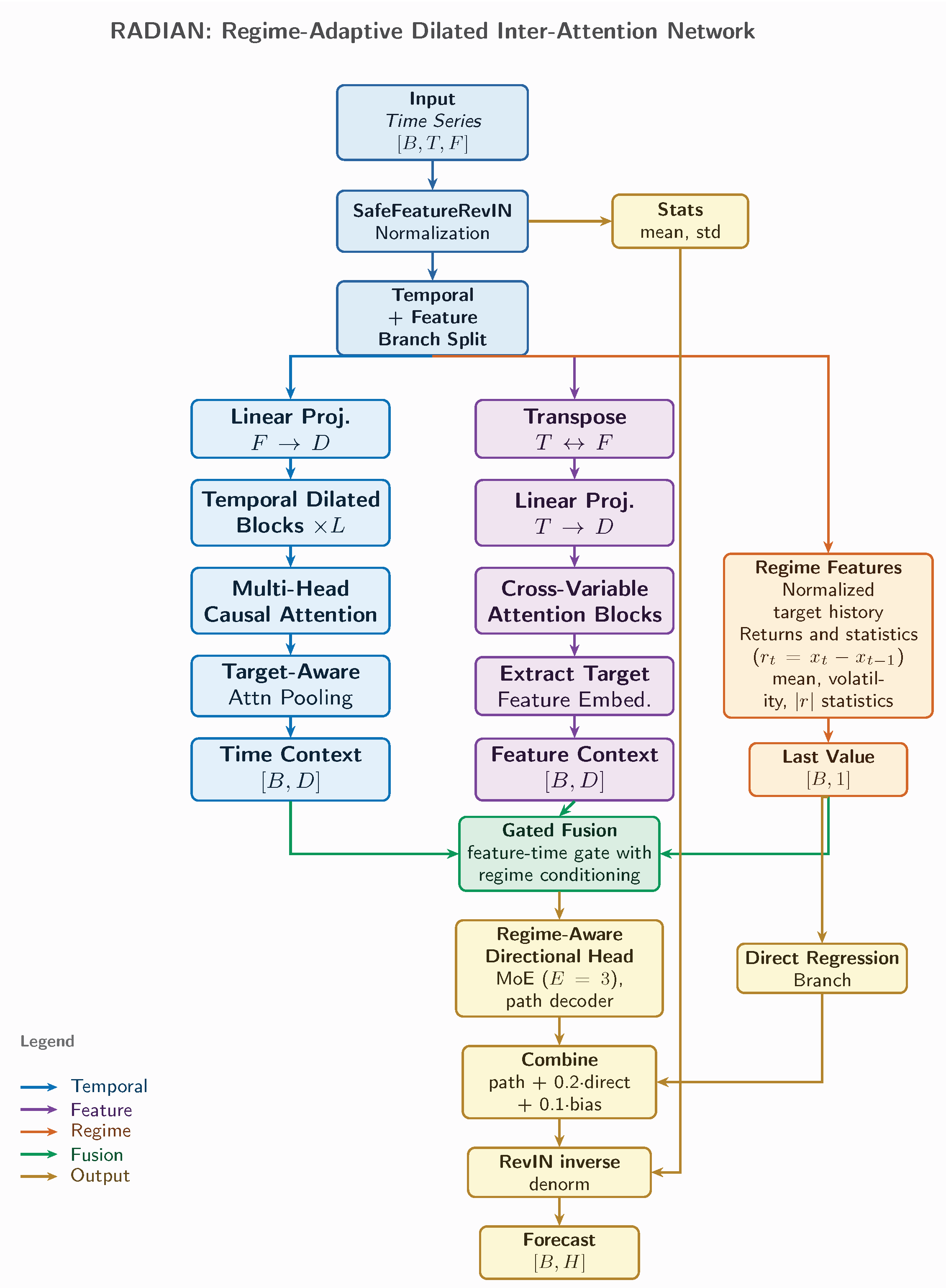

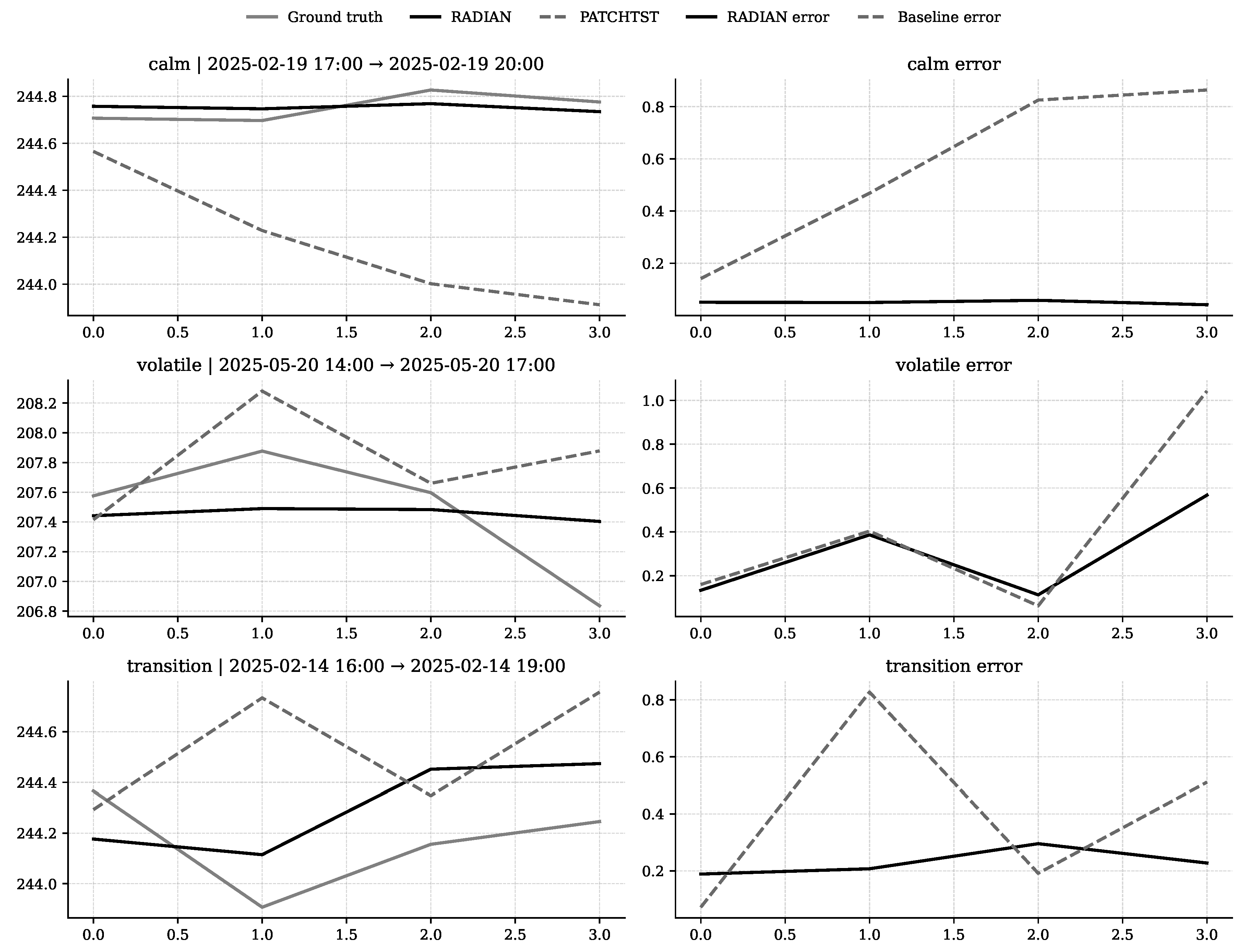

Figure 6.

Comparative forecast performance across market regimes. For each regime (calm, volatile, transition), the left column shows ground truth versus predictions from RADIAN and the selected baseline; the right column displays absolute prediction errors. Window timestamps are indicated in each subplot title.

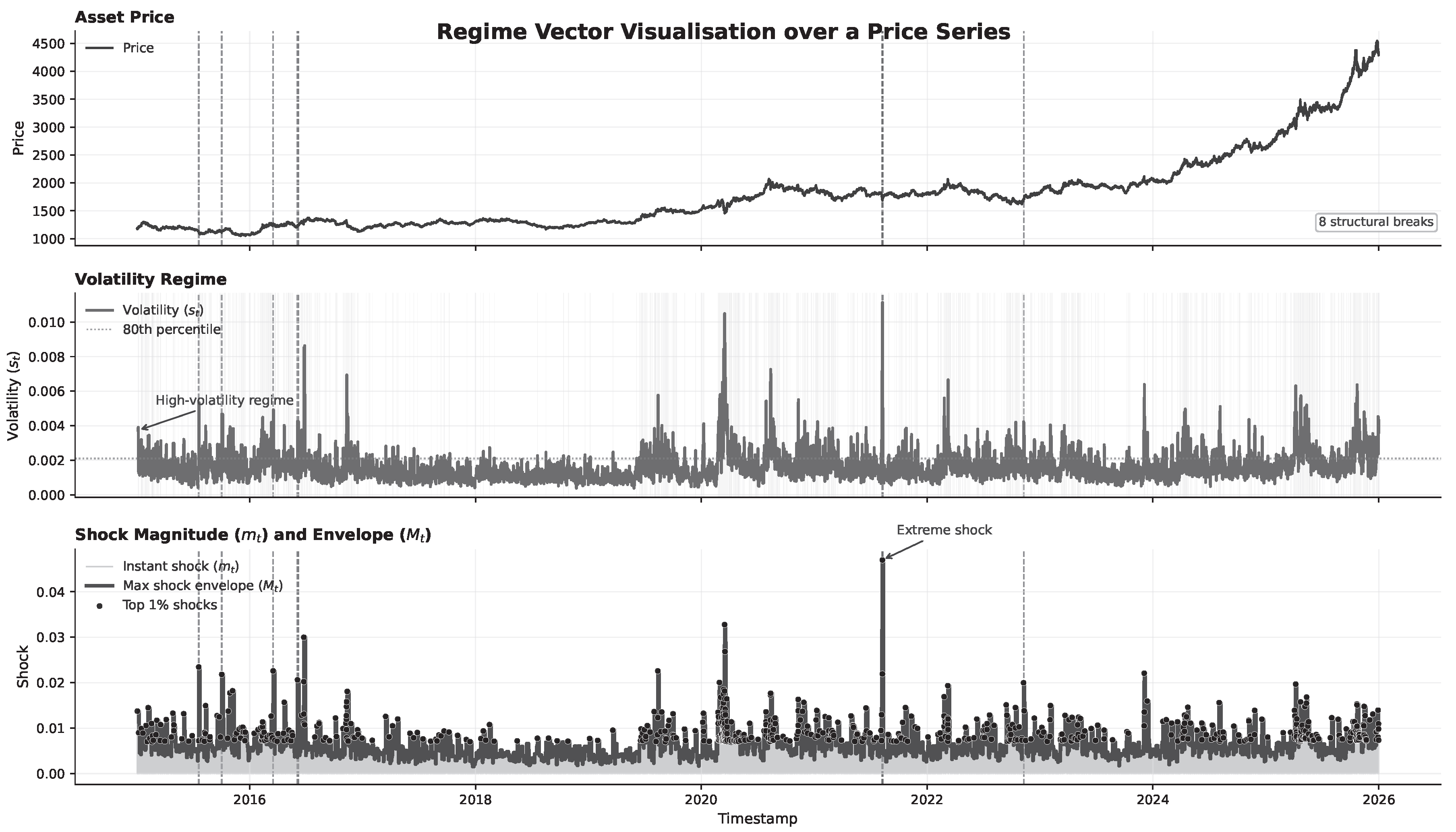

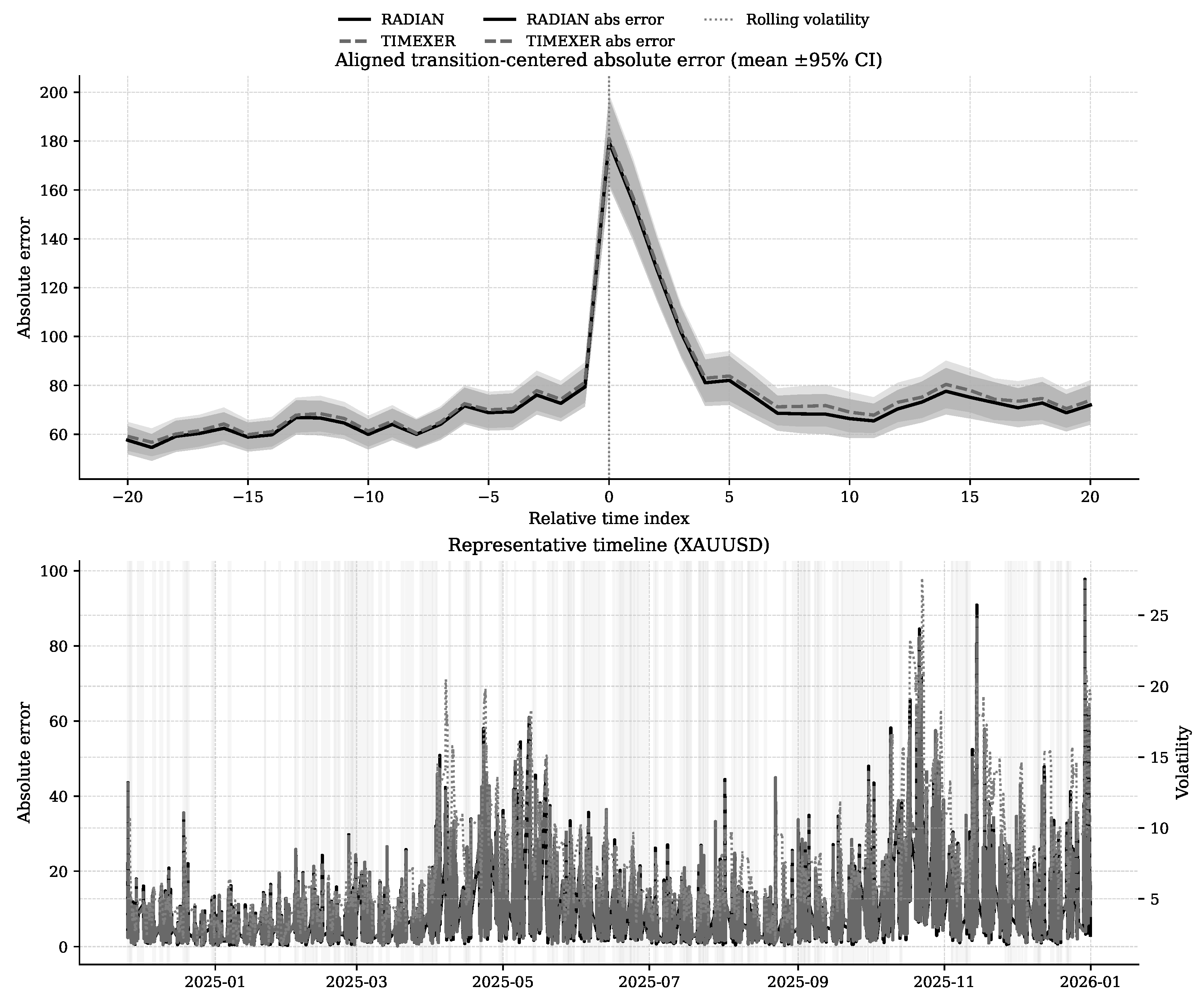

Figure 7.

Transition-centered absolute prediction error across all datasets. The upper panel displays the mean absolute error, with confidence intervals (CI), aligned at transition points for RADIAN and the best-performing baseline. The lower panel shows a representative timeline of absolute errors for both models with overlaid rolling volatility; shaded regions indicate identified transition periods.
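
The upper panel is an event-aligned average: error segments are cut out around each transition index, stacked, and averaged offset by offset. The sketch below assumes a symmetric window of `half_width` steps and a normal-approximation 95% interval (1.96 standard errors); the interval type actually used in the figure is not stated, and transitions too close to either end of the series are simply skipped.

```python
import numpy as np

def transition_aligned_error(abs_err, transition_idx, half_width=10):
    """Mean absolute error at each offset around transition points.

    Returns offsets (-half_width..half_width), the mean error per offset,
    and a 95% CI half-width (1.96 * standard error; needs >= 2 events).
    """
    abs_err = np.asarray(abs_err, dtype=float)
    segments = [
        abs_err[t - half_width : t + half_width + 1]
        for t in transition_idx
        if t - half_width >= 0 and t + half_width < abs_err.size  # skip edge events
    ]
    stacked = np.vstack(segments)  # (n_events, 2 * half_width + 1)
    mean = stacked.mean(axis=0)
    sem = stacked.std(axis=0, ddof=1) / np.sqrt(stacked.shape[0])
    offsets = np.arange(-half_width, half_width + 1)
    return offsets, mean, 1.96 * sem

err = np.ones(100)
err[[30, 60]] = 5.0  # error spikes exactly at two transitions
offsets, mean_err, ci = transition_aligned_error(err, [30, 60, 95], half_width=5)
```

Here the transition at index 95 is dropped because its window would run past the end of the series.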

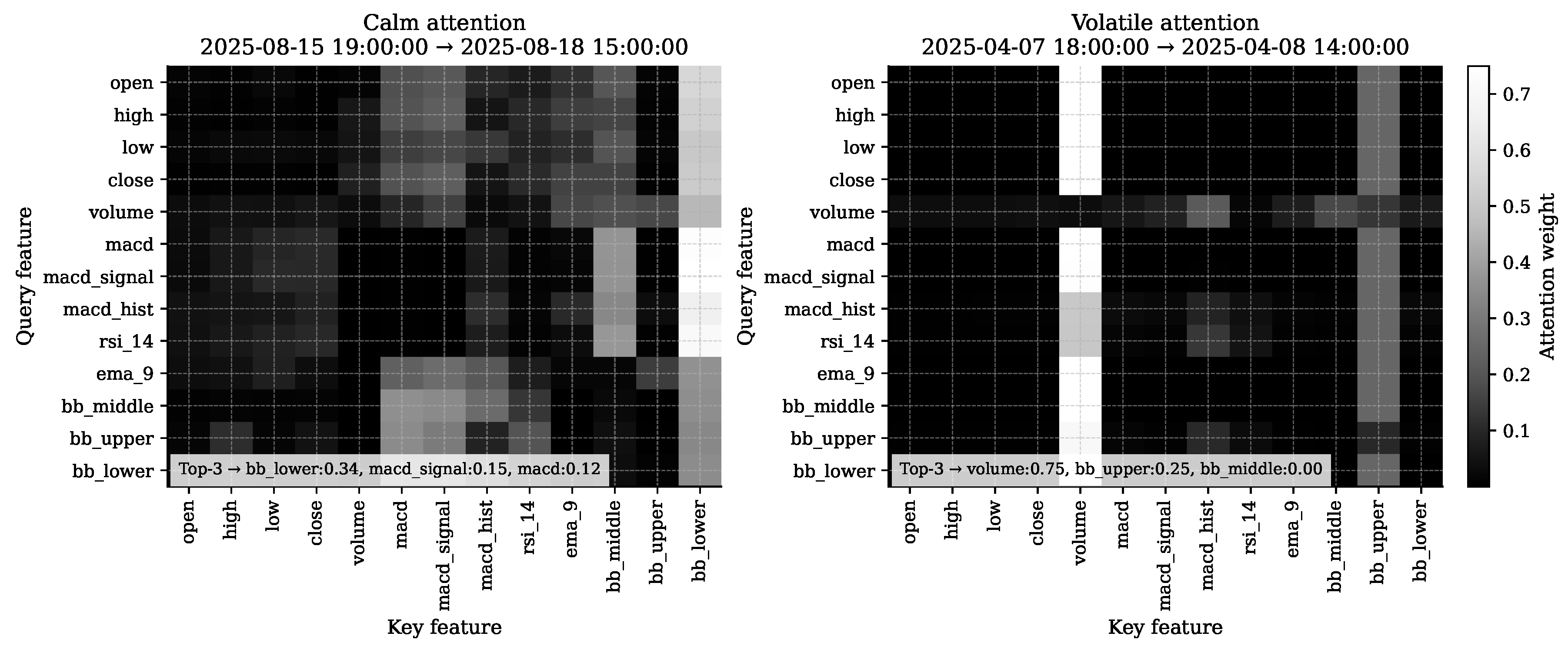

Figure 8.

Cross-variable attention weights from the RADIAN model under calm (left) and volatile (right) market regimes. Each heatmap shows the average attention across heads, with rows representing query features and columns key features.
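
A head-averaged cross-variable map can be produced by computing scaled dot-product attention per head with one token per input feature and then averaging the weight matrices over heads. The sketch assumes exactly that setup; the feature count of 5 is hypothetical, and the per-head width assumes D = 128 is split evenly across the 8 heads listed in Table 2.

```python
import numpy as np

def head_averaged_attention(q, k):
    """Average attention weights over heads.

    q, k: arrays of shape (heads, n_vars, d_head), one token per variable.
    Returns (n_vars, n_vars): rows are query features, columns key features.
    """
    scores = q @ np.swapaxes(k, -1, -2) / np.sqrt(q.shape[-1])  # (heads, n_vars, n_vars)
    scores = scores - scores.max(axis=-1, keepdims=True)  # stabilise exp
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)  # softmax over keys
    return weights.mean(axis=0)  # average across heads

rng = np.random.default_rng(3)
heads, n_vars, d_head = 8, 5, 128 // 8
q = rng.standard_normal((heads, n_vars, d_head))
k = rng.standard_normal((heads, n_vars, d_head))
attn = head_averaged_attention(q, k)
```

Regime-specific maps such as the calm and volatile panels would additionally average `attn` over all windows assigned to each regime.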

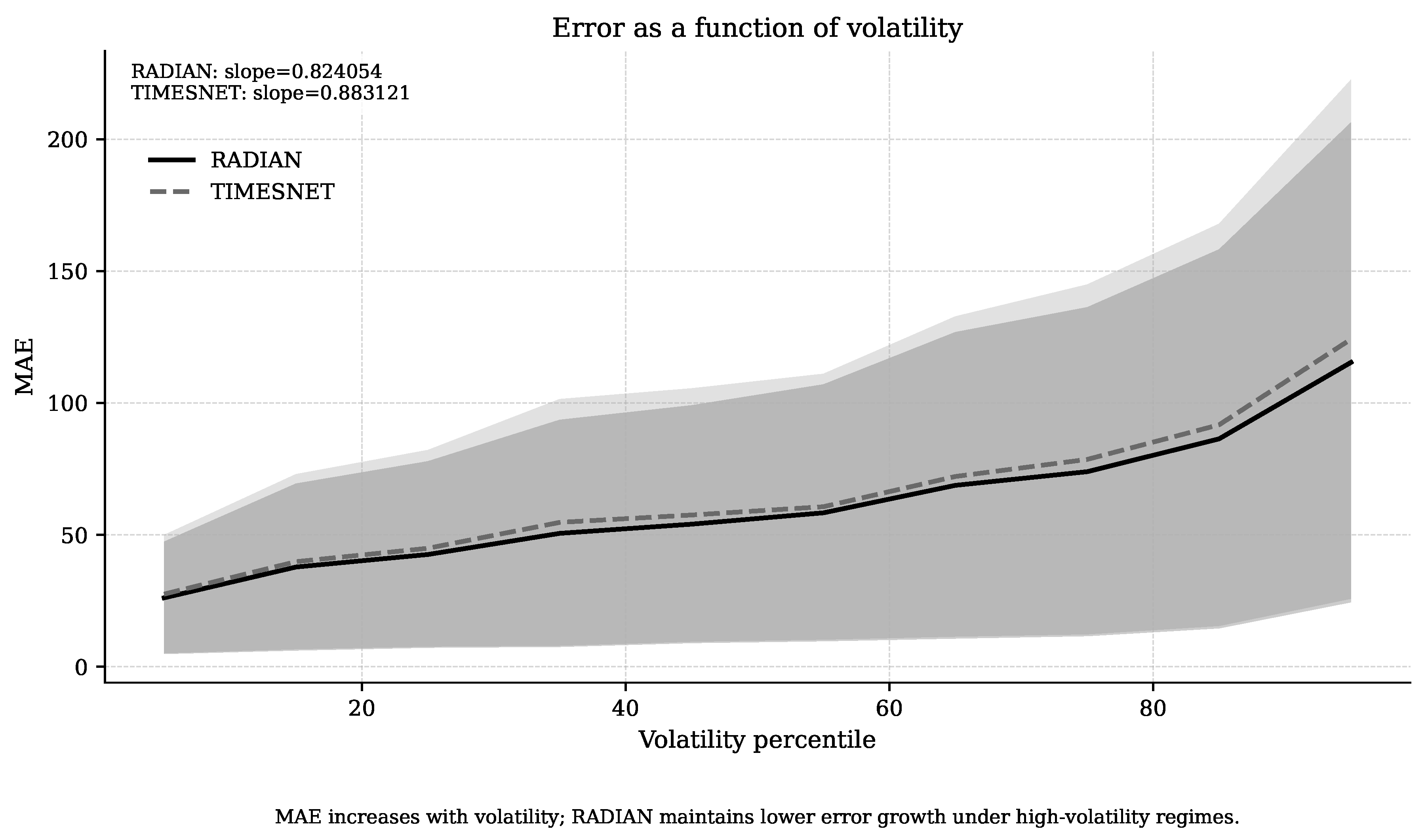

Figure 9.

Mean absolute error (MAE) as a function of volatility percentile for RADIAN and TimesNet. Solid lines denote the mean MAE across datasets and seeds; shaded regions represent 95% confidence intervals. The figure demonstrates that prediction error increases monotonically with volatility, with RADIAN exhibiting consistently lower error and a shallower slope than the baseline.
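
The curve and its slope can be approximated by binning windows on their volatility percentile, averaging the absolute error in each bin, and fitting a least-squares line. This is a sketch with an assumed bin count; the exact normalization behind the MAE–volatility slope reported in Table 7 is not specified, so the numbers need not match.

```python
import numpy as np

def mae_by_volatility_percentile(abs_err, volatility, n_bins=10):
    """Return bin centres (percent), MAE per bin, and the fitted MAE slope."""
    abs_err = np.asarray(abs_err, dtype=float)
    volatility = np.asarray(volatility, dtype=float)
    # Percentile rank of each window's volatility, in [0, 100).
    pct = 100.0 * np.argsort(np.argsort(volatility)) / volatility.size
    edges = np.linspace(0, 100, n_bins + 1)
    bins = np.clip(np.digitize(pct, edges) - 1, 0, n_bins - 1)
    mae = np.array([abs_err[bins == b].mean() for b in range(n_bins)])
    centres = (edges[:-1] + edges[1:]) / 2
    slope, _intercept = np.polyfit(centres, mae, deg=1)  # MAE per percentile point
    return centres, mae, slope

volatility = np.arange(1000, dtype=float)
abs_err = 1.0 + 0.01 * volatility  # error grows linearly with volatility
centres, mae, slope = mae_by_volatility_percentile(abs_err, volatility)
```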

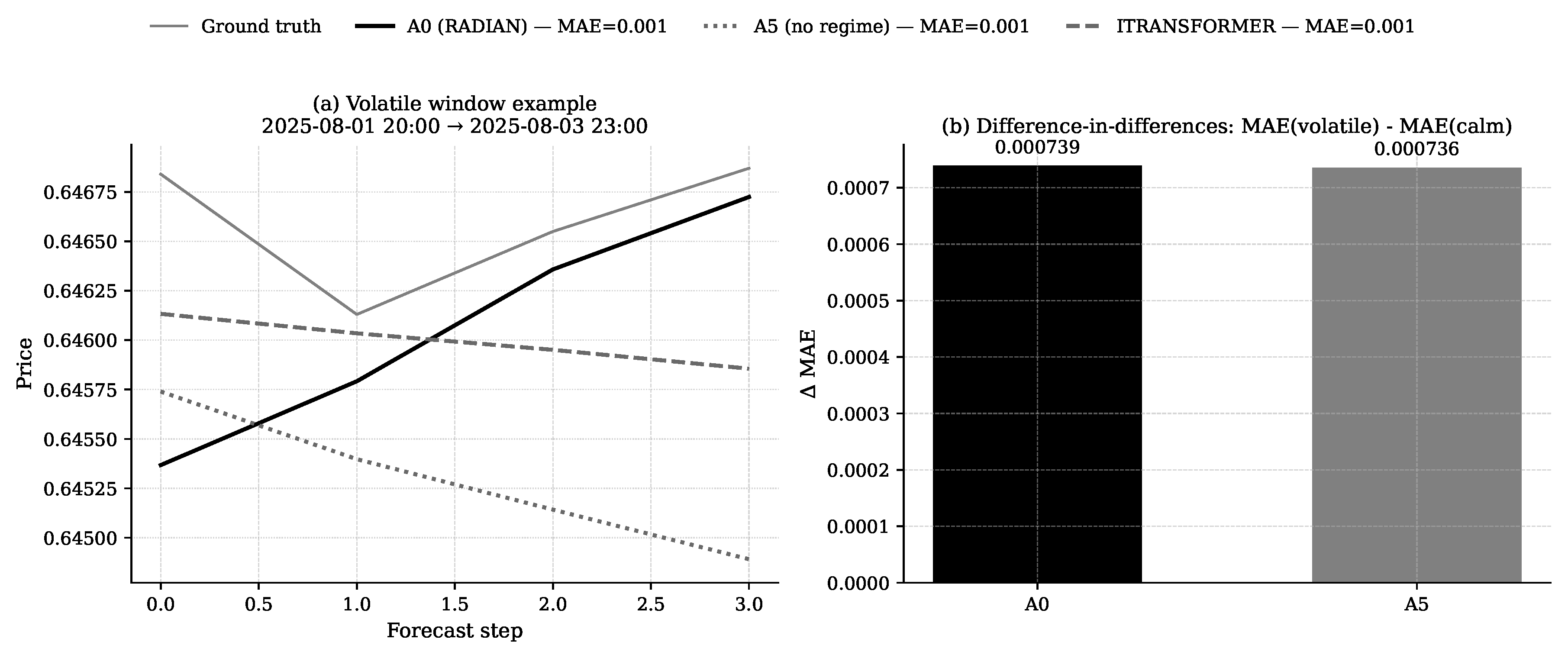

Figure 10.

Impact of removing regime conditioning on forecasting accuracy. The left panel presents a volatile-window forecast comparison between the complete RADIAN model (A0), its variant lacking regime conditioning (A5), a baseline, and actual values. The right panel quantifies the regime-specific performance gap, showing that A0 achieves a smaller increase in MAE from calm to volatile conditions than A5.

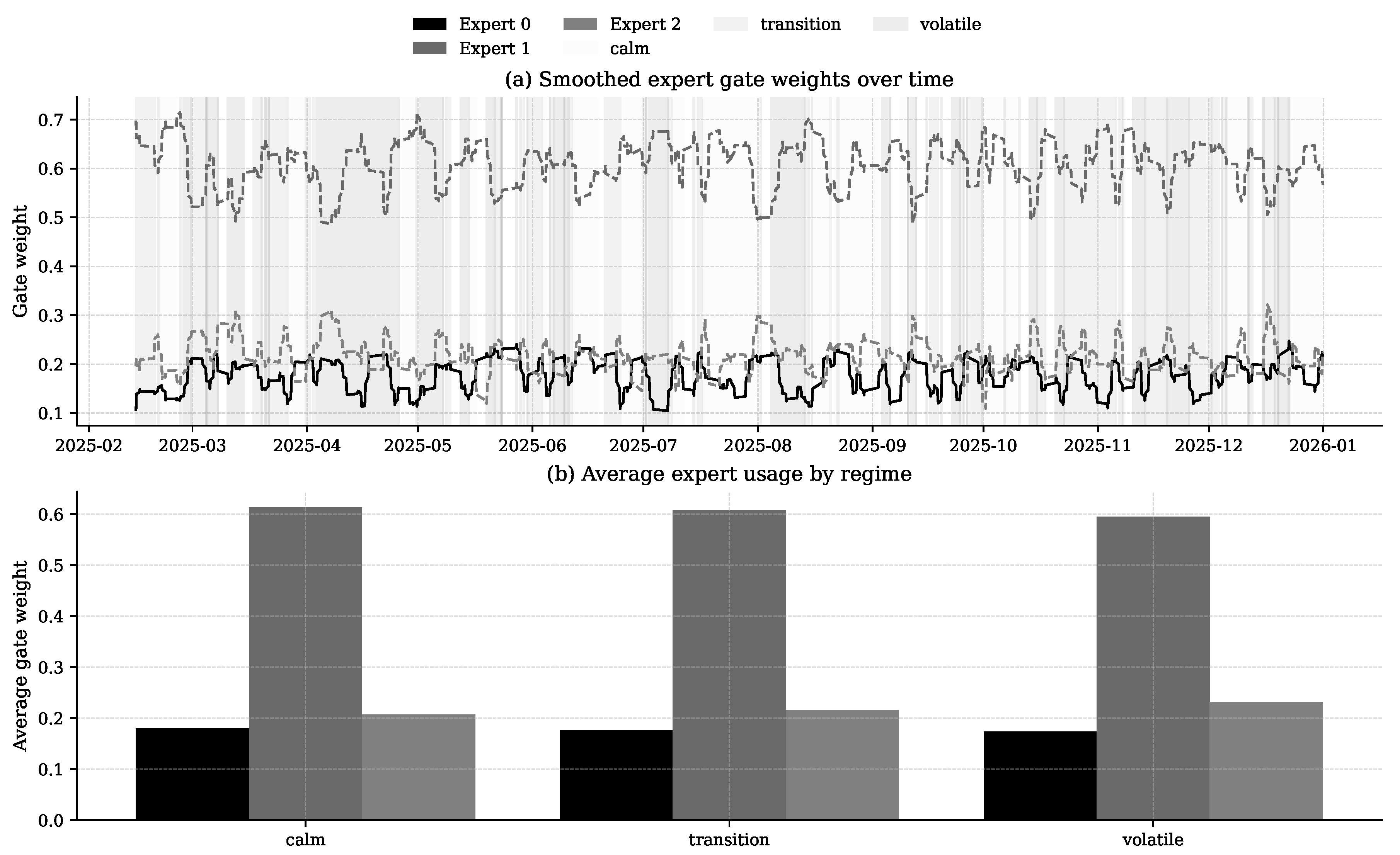

Figure 11.

Mixture-of-experts (MoE) gate weight dynamics across market regimes. Panel (a) shows temporally smoothed expert assignment probabilities over the test period, with background shading indicating calm, transition, and volatile regimes. Panel (b) reports the average gate weight per expert for each regime, revealing systematic variation in expert utilisation with volatility conditions.
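
Both panels can be derived from per-window gate probabilities. The sketch assumes a dense softmax gate over the 3 experts of Table 2 (no top-k routing), a simple moving average for the temporal smoothing in panel (a), and per-regime means for panel (b). The gate parameters and inputs are random placeholders, not model outputs.

```python
import numpy as np

def moe_gate(x, w_gate):
    """Dense softmax gate: x (n, d), w_gate (d, n_experts) -> (n, n_experts)."""
    logits = x @ w_gate
    logits = logits - logits.max(axis=1, keepdims=True)  # stabilise exp
    weights = np.exp(logits)
    return weights / weights.sum(axis=1, keepdims=True)

def mean_gate_by_regime(weights, regimes):
    """Average gate weight per expert within each regime label (panel (b))."""
    regimes = np.asarray(regimes)
    return {str(r): weights[regimes == r].mean(axis=0) for r in np.unique(regimes)}

def moving_average(weights, span=5):
    """Moving average over time per expert (panel (a)); output is span - 1 rows shorter."""
    kernel = np.ones(span) / span
    return np.column_stack(
        [np.convolve(weights[:, e], kernel, mode="valid") for e in range(weights.shape[1])]
    )

rng = np.random.default_rng(2)
x = rng.standard_normal((300, 16))
w = rng.standard_normal((16, 3))  # 3 experts
regimes = np.repeat(["calm", "transition", "volatile"], 100)
weights = moe_gate(x, w)
by_regime = mean_gate_by_regime(weights, regimes)
smoothed = moving_average(weights)
```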

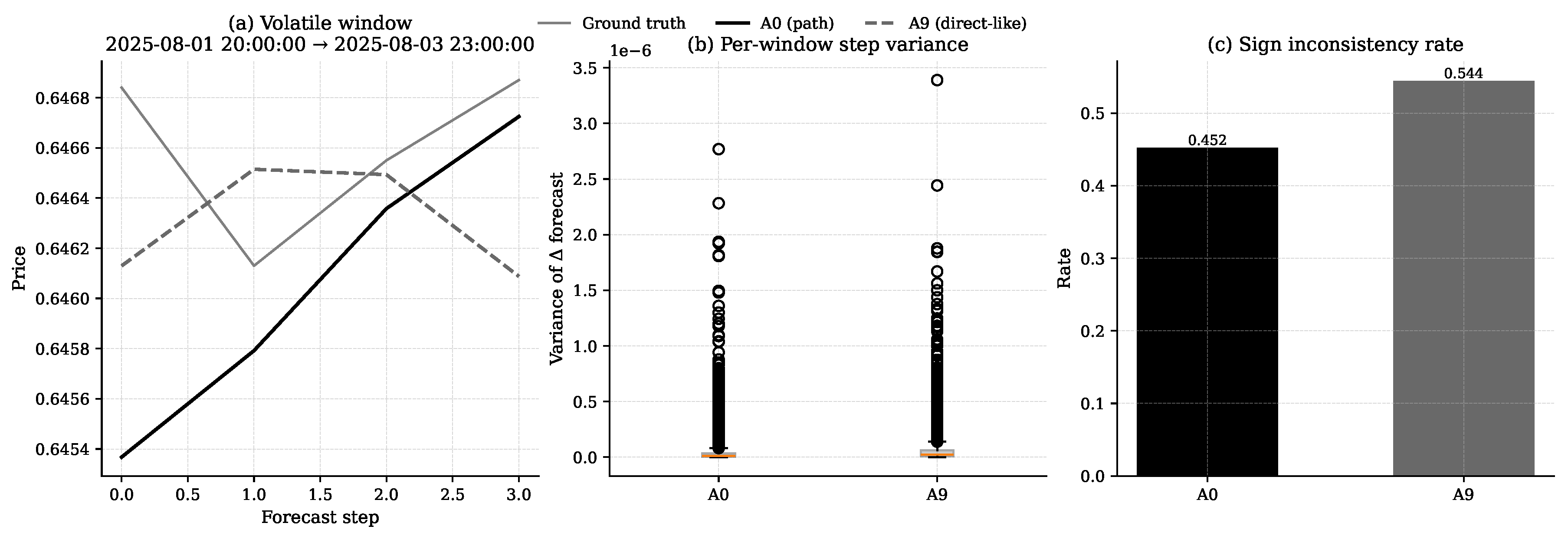

Figure 12.

Comparison of path-based (A0) and direct-like (A9) decoding strategies. Panel (a) shows forecasts for a representative volatile window; A0 more closely follows the ground truth trajectory. Panel (b) reports per-window step variance of predictions, and panel (c) presents the sign inconsistency rate, both indicating smoother and more consistent forecasts from A0.
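
The caption does not define step variance or the sign inconsistency rate. Under the hypothetical definitions below, step variance is the variance of the step-to-step increments of an H-step forecast path (anchored at the last observed value), and sign inconsistency is the share of adjacent increments whose signs differ. A zero increment next to a nonzero one counts as a sign change.

```python
import numpy as np

def path_smoothness(preds, last_obs):
    """Per-window diagnostics for H-step forecasts (hypothetical definitions).

    preds: (n_windows, H) forecasts; last_obs: (n_windows,) last observed values.
    """
    preds = np.asarray(preds, dtype=float)
    path = np.column_stack([last_obs, preds])  # prepend the anchor value
    steps = np.diff(path, axis=1)  # (n_windows, H) increments
    step_variance = steps.var(axis=1)
    flips = np.sign(steps[:, 1:]) != np.sign(steps[:, :-1])
    sign_inconsistency = flips.mean(axis=1)
    return step_variance, sign_inconsistency

# H = 4 as in Table 2: a smooth path and a zig-zag path.
step_var, sign_inc = path_smoothness([[1, 2, 3, 4], [1, 0, 1, 0]], [0.0, 0.0])
```

The smooth path has zero step variance and no sign changes, while the zig-zag path flips sign at every step.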

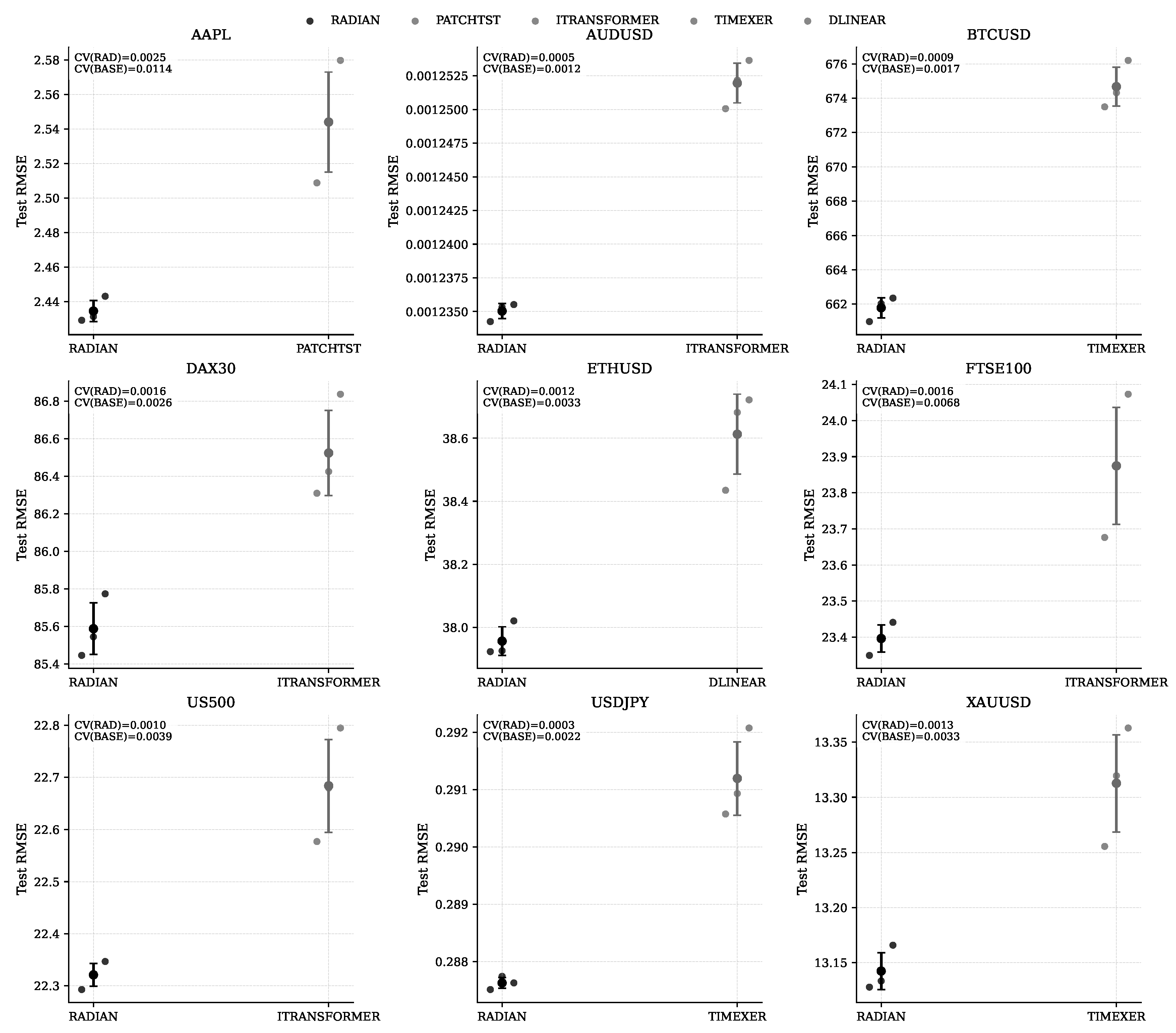

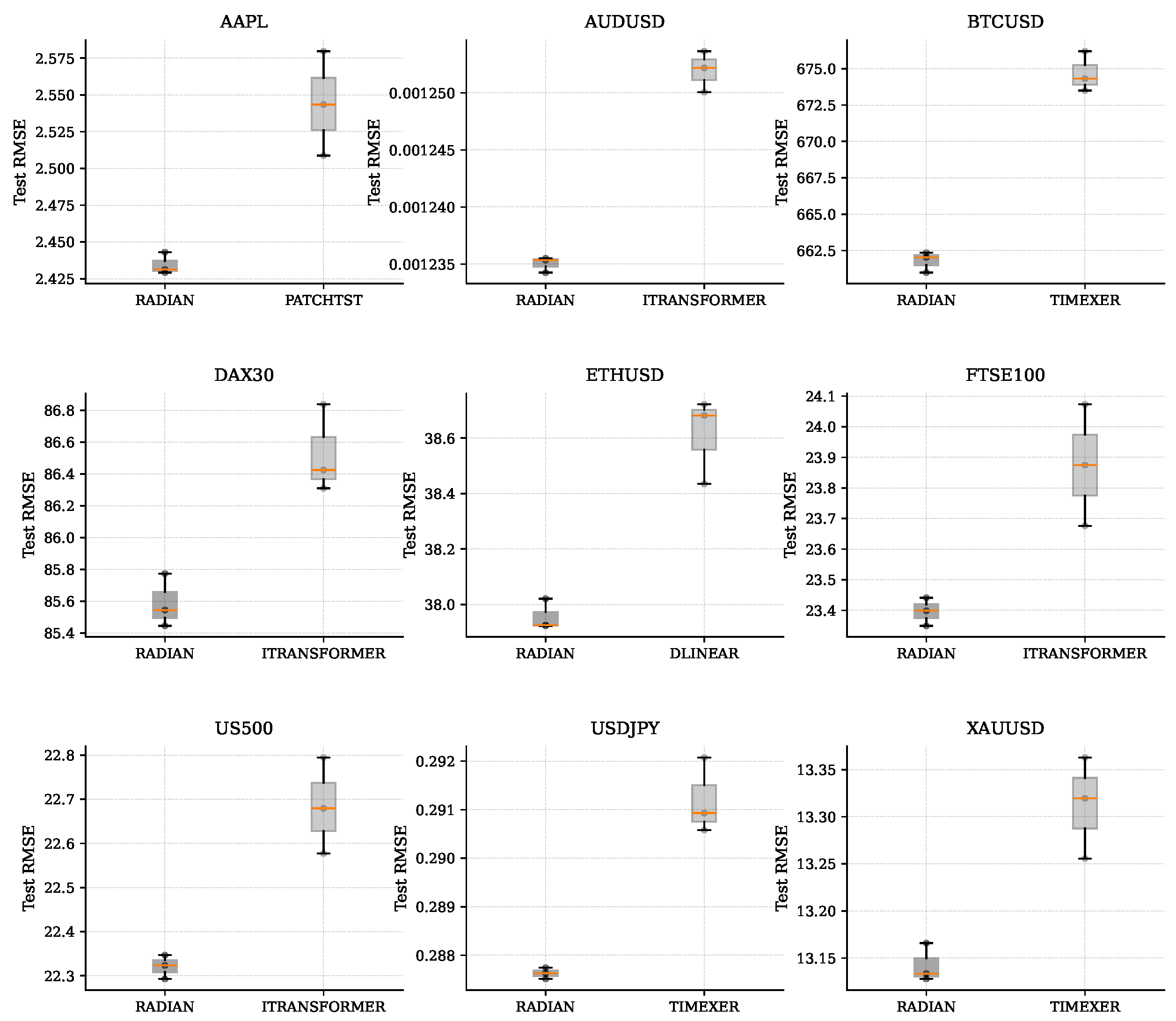

Figure 13.

Multi-dataset robustness comparison of RADIAN against dataset-specific baselines. For each dataset, the left boxplot (RADIAN) and right boxplot (baseline) summarise test RMSE variability across seeds, with scatter points representing individual runs.

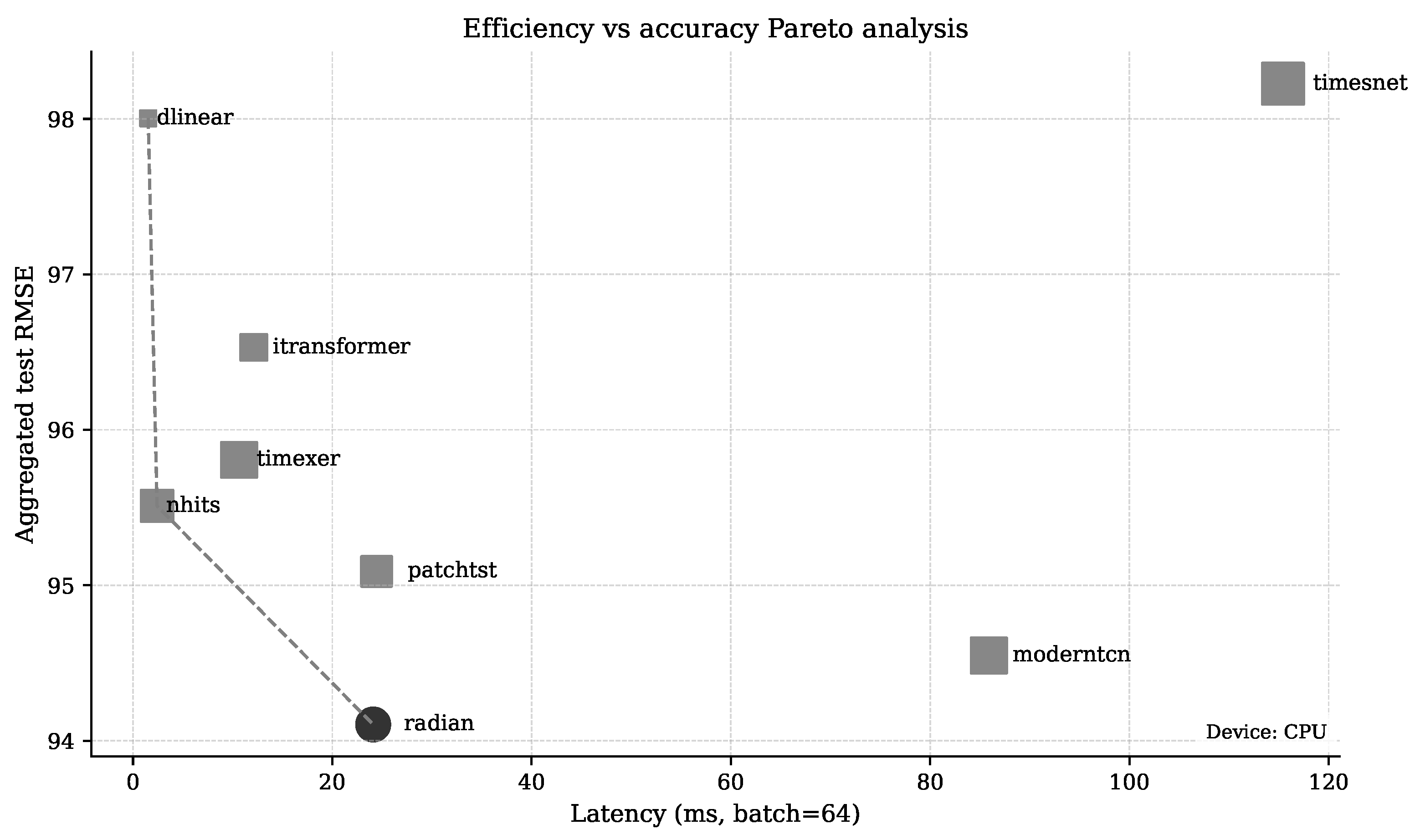

Figure 14.

Trade-off between computational cost and prediction error. Each point represents a model; the x-axis shows inference latency (ms) for a batch of 64 samples, and the y-axis shows mean test RMSE aggregated across datasets. RADIAN occupies a position on the Pareto boundary, indicating competitive accuracy with moderate latency.

Table 2.

Primary RADIAN hyperparameters used in all reported experiments. The table lists fixed settings shared across datasets.

| Setting | Value |
|---|---|
| Input window T | 24 |
| Forecast horizon H | 4 |
| Target feature | close |
| Loss | MSE |
| Optimizer | Adam |
| Learning rate | |
| Batch size | 64 |
| Epochs | 50 |
| Seeds | {417, 153, 999} |
| RADIAN width D | 128 |
| Attention heads | 8 |
| Temporal layers L | 3 |
| Experts E | 3 |
| Directional mix | 0.35 |
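
The fixed settings can be collected in a single immutable configuration object so every run shares them. The dataclass below is an illustrative sketch: the field names are hypothetical, the learning rate is left unset because the table gives no value, and `head_dim` assumes the width D is split evenly across attention heads.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

@dataclass(frozen=True)
class RadianConfig:
    """Settings from Table 2 (hypothetical field names)."""
    input_window: int = 24            # T
    horizon: int = 4                  # H
    target_feature: str = "close"
    loss: str = "mse"
    optimizer: str = "adam"
    learning_rate: Optional[float] = None  # not given in Table 2; must be set explicitly
    batch_size: int = 64
    epochs: int = 50
    seeds: Tuple[int, ...] = (417, 153, 999)
    width: int = 128                  # D
    attention_heads: int = 8
    temporal_layers: int = 3          # L
    experts: int = 3                  # E
    directional_mix: float = 0.35

    @property
    def head_dim(self) -> int:
        """Per-head width, assuming D divides evenly across heads."""
        if self.width % self.attention_heads != 0:
            raise ValueError("width must be divisible by attention_heads")
        return self.width // self.attention_heads

config = RadianConfig()
```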

Table 3.

Dataset-level RMSE (↓) and directional accuracy (DA, %, ↑) for six models across nine financial datasets. Bold values indicate the best result per row/metric; underlined values indicate the second-best result.

| Dataset | RADIAN RMSE | RADIAN DA | ModernTCN RMSE | ModernTCN DA | PatchTST RMSE | PatchTST DA | TimeXer RMSE | TimeXer DA | iTransformer RMSE | iTransformer DA | N-HiTS RMSE | N-HiTS DA |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| AAPL | 2.43452 | 52.0399 | 2.47554 | 50.4395 | 2.54399 | 50.6782 | 2.68699 | 51.0796 | 2.68113 | 50.6944 | 2.47771 | 51.0796 |
| AUDUSD | 0.0012 | 51.2706 | 0.0012 | 51.0947 | 0.0012 | 50.6322 | 0.0012 | 51.1427 | 0.0013 | 51.3198 | 0.0013 | 51.2079 |
| BTCUSD | 661.786 | 51.5112 | 665.049 | 51.5498 | 669.78 | 51.2684 | 674.672 | 51.3298 | 680.603 | 51.3044 | 664.178 | 51.4578 |
| DAX30 | 85.5863 | 50.6762 | 85.7886 | 50.238 | 85.7011 | 50.4135 | 86.2227 | 50.4368 | 86.5245 | 50.4643 | 91.9907 | 49.9202 |
| ETHUSD | 37.9566 | 51.7844 | 38.1581 | 51.5597 | 38.406 | 51.2828 | 38.813 | 51.4085 | 38.8142 | 50.6233 | 38.2602 | 51.5597 |
| FTSE100 | 23.4105 | 52.2703 | 23.5147 | 51.7304 | 23.4354 | 51.7304 | 23.5771 | 51.6581 | 23.8746 | 51.4663 | 25.2417 | 51.6153 |
| US500 | 22.3209 | 50.3227 | 22.4534 | 50.0683 | 22.4146 | 50.1003 | 22.6872 | 50.4303 | 22.6837 | 50.0829 | 23.266 | 50.1846 |
| USDJPY | 0.287882 | 50.7606 | 0.288621 | 50.5977 | 0.289673 | 50.5977 | 0.291194 | 50.5977 | 0.293137 | 50.5658 | 0.288265 | 50.5475 |
| XAUUSD | 13.1594 | 50.8788 | 13.2079 | 50.7655 | 13.2321 | 50.7848 | 13.3127 | 50.4696 | 13.2972 | 50.6073 | 13.8924 | 49.7311 |
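
RMSE follows its standard definition, while directional accuracy depends on a convention the table does not state. The sketch assumes DA is the percentage of forecasts whose direction relative to the last observed close matches the realised direction; other reference points (for example the previous forecast step) would give different numbers.

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error over all elements."""
    y_true, y_pred = np.asarray(y_true, dtype=float), np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

def directional_accuracy(y_true, y_pred, last_obs):
    """Percentage of forecasts whose move direction matches the realised one.

    Direction is measured against the last observed value (assumed convention);
    inputs broadcast, e.g. (n, H) targets against (n, 1) last observations.
    """
    y_true, y_pred, last_obs = (np.asarray(a, dtype=float) for a in (y_true, y_pred, last_obs))
    hits = np.sign(y_pred - last_obs) == np.sign(y_true - last_obs)
    return 100.0 * float(hits.mean())

# One window, H = 2: first step direction correct, second step wrong.
da = directional_accuracy([[101.0, 99.0]], [[102.0, 101.0]], [[100.0]])
err = rmse([[101.0, 99.0]], [[102.0, 101.0]])
```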

Table 4.

Regime-stratified comparison for calm windows (lowest-volatility tertile): RMSE (↓) and directional accuracy (DA, %, ↑). Bold values indicate the best result per row/metric; underlined values indicate the second-best result.

| Dataset | RADIAN RMSE | RADIAN DA | ModernTCN RMSE | ModernTCN DA | PatchTST RMSE | PatchTST DA | TimeXer RMSE | TimeXer DA | iTransformer RMSE | iTransformer DA | N-HiTS RMSE | N-HiTS DA |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| AAPL | 1.17473 | 52.636 | 1.20872 | 51.2179 | 1.26594 | 51.3013 | 1.31382 | 52.4525 | 1.29356 | 51.4681 | 1.20079 | 51.4681 |
| AUDUSD | 0.00072 | 51.4299 | 0.00072 | 50.9983 | 0.00072 | 50.7956 | 0.00072 | 51.0433 | 0.00072 | 51.2497 | 0.00073 | 51.0996 |
| BTCUSD | 352.208 | 51.7604 | 352.71 | 51.6143 | 355.171 | 51.6143 | 356.243 | 51.6143 | 360.051 | 50.9086 | 359.585 | 51.5413 |
| DAX30 | 44.3731 | 50.2517 | 44.5435 | 49.5143 | 44.8666 | 49.766 | 44.8154 | 49.7969 | 45.1181 | 49.7969 | 49.2693 | 49.3687 |
| ETHUSD | 17.5892 | 51.2257 | 17.5988 | 51.9627 | 17.8298 | 50.9976 | 18.004 | 50.8796 | 18.0882 | 49.8941 | 17.853 | 51.4537 |
| FTSE100 | 12.8137 | 51.8568 | 12.8365 | 51.7851 | 13.0164 | 51.7851 | 12.97 | 51.7851 | 13.1161 | 51.3635 | 14.0828 | 51.7851 |
| US500 | 9.00642 | 50.3714 | 9.08592 | 49.8541 | 9.10911 | 49.5181 | 9.17819 | 50.305 | 9.21651 | 49.4518 | 9.13131 | 49.7966 |
| USDJPY | 0.173258 | 50.4363 | 0.174598 | 50.1939 | 0.173849 | 50.3133 | 0.174331 | 50.3133 | 0.175922 | 50.3133 | 0.173637 | 50.2685 |
| XAUUSD | 6.38012 | 50.4186 | 6.39978 | 50.5086 | 6.41445 | 50.2973 | 6.44058 | 50.4812 | 6.46872 | 50.2074 | 6.93114 | 50.3208 |

Table 5.

Regime-stratified comparison for transition windows (middle-volatility tertile): RMSE (↓) and directional accuracy (DA, %, ↑). Bold values indicate the best result per row/metric; underlined values indicate the second-best result.

| Dataset | RADIAN RMSE | RADIAN DA | ModernTCN RMSE | ModernTCN DA | PatchTST RMSE | PatchTST DA | TimeXer RMSE | TimeXer DA | iTransformer RMSE | iTransformer DA | N-HiTS RMSE | N-HiTS DA |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| AAPL | 1.83344 | 50.2488 | 1.88874 | 49.334 | 1.95201 | 49.9117 | 2.02056 | 49.5105 | 2.00147 | 49.3821 | 1.87434 | 49.5105 |
| AUDUSD | 0.0010 | 50.6978 | 0.0010 | 50.4572 | 0.0010 | 49.4947 | 0.0010 | 50.4572 | 0.0010 | 50.3905 | 0.0010 | 50.5423 |
| BTCUSD | 578.607 | 51.7295 | 581.206 | 50.7613 | 587.679 | 50.6207 | 588.969 | 50.5404 | 591.834 | 50.8979 | 583.478 | 50.9341 |
| DAX30 | 59.5844 | 50.9066 | 59.91 | 50.6838 | 59.9799 | 50.8236 | 60.3654 | 50.2731 | 60.219 | 50.71 | 64.0141 | 49.5259 |
| ETHUSD | 32.0134 | 51.7055 | 32.2486 | 51.2948 | 32.3061 | 51.0128 | 32.7433 | 51.2948 | 32.648 | 50.5578 | 32.5292 | 51.1619 |
| FTSE100 | 16.9644 | 51.7447 | 17.2029 | 51.5447 | 17.2282 | 51.5713 | 17.1642 | 51.558 | 17.3029 | 51.5447 | 18.1041 | 50.9535 |
| US500 | 15.5185 | 50.3307 | 15.5425 | 50.0985 | 15.6328 | 50.2212 | 15.7309 | 50.2562 | 15.7362 | 50.1248 | 15.6127 | 50.2343 |
| USDJPY | 0.240022 | 50.758 | 0.241052 | 50.4555 | 0.241524 | 50.4555 | 0.242232 | 50.4555 | 0.243828 | 50.4555 | 0.24051 | 50.3154 |
| XAUUSD | 10.1542 | 51.8514 | 10.2179 | 51.8359 | 10.2582 | 51.5606 | 10.3688 | 51.2194 | 10.332 | 51.4094 | 11.3654 | 49.7926 |

Table 6.

Regime-stratified comparison for volatile windows (highest-volatility tertile): RMSE (↓) and directional accuracy (DA, %, ↑). Bold values indicate the best result per row/metric; underlined values indicate the second-best result.

| Dataset | RADIAN RMSE | RADIAN DA | ModernTCN RMSE | ModernTCN DA | PatchTST RMSE | PatchTST DA | TimeXer RMSE | TimeXer DA | iTransformer RMSE | iTransformer DA | N-HiTS RMSE | N-HiTS DA |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| AAPL | 3.5935 | 52.2727 | 3.63695 | 50.8059 | 3.7242 | 50.8382 | 3.96303 | 51.6763 | 3.96679 | 50.951 | 3.65134 | 51.225 |
| AUDUSD | 0.0017 | 52.2977 | 0.0017 | 51.8056 | 0.0017 | 51.5885 | 0.0018 | 51.5342 | 0.0018 | 52.0517 | 0.0018 | 51.9612 |
| BTCUSD | 917.765 | 52.0882 | 923.788 | 51.8208 | 928.748 | 51.4472 | 937.946 | 51.6793 | 947.414 | 51.8208 | 918.272 | 52.2416 |
| DAX30 | 127.548 | 50.8342 | 127.582 | 50.5048 | 127.582 | 50.6417 | 128.108 | 50.6588 | 128.671 | 51.0481 | 136.789 | 50.6588 |
| ETHUSD | 54.2712 | 52.7121 | 54.548 | 52.3336 | 54.9536 | 51.8251 | 55.489 | 51.9473 | 55.5167 | 51.3955 | 54.519 | 52.1444 |
| FTSE100 | 34.1298 | 52.0311 | 34.3318 | 52.4052 | 34.2826 | 51.8528 | 34.4648 | 51.5049 | 34.9445 | 51.4005 | 37.037 | 51.8528 |
| US500 | 33.987 | 50.7973 | 34.2125 | 50.2401 | 34.0909 | 50.5401 | 34.5578 | 50.4758 | 34.5388 | 50.6559 | 35.7383 | 50.5058 |
| USDJPY | 0.398577 | 51.3059 | 0.398976 | 50.9885 | 0.401254 | 50.57 | 0.403874 | 50.671 | 0.406406 | 50.4185 | 0.398945 | 50.9885 |
| XAUUSD | 19.2459 | 50.4854 | 19.3046 | 50.3034 | 19.3278 | 50.3148 | 19.4245 | 49.7345 | 19.4034 | 50.2124 | 19.9109 | 49.0937 |
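
Tables 4 to 6 split the test windows into volatility tertiles. A minimal sketch of that stratification assigns each window to calm, transition, or volatile using the 1/3 and 2/3 quantiles of its volatility, then computes RMSE per group. Whether the cut-offs are estimated on the test set or on training data is not stated, so computing them on the supplied windows is an assumption.

```python
import numpy as np

def stratify_by_volatility(volatility, labels=("calm", "transition", "volatile")):
    """Label each window by volatility tertile (lowest -> calm, highest -> volatile)."""
    volatility = np.asarray(volatility, dtype=float)
    q1, q2 = np.quantile(volatility, [1 / 3, 2 / 3])
    idx = np.digitize(volatility, [q1, q2])  # 0 below q1, 1 in [q1, q2), 2 at or above q2
    return np.asarray(labels)[idx]

def rmse_by_regime(y_true, y_pred, regimes):
    """RMSE computed separately within each regime label."""
    err2 = (np.asarray(y_true, dtype=float) - np.asarray(y_pred, dtype=float)) ** 2
    regimes = np.asarray(regimes)
    return {str(r): float(np.sqrt(err2[regimes == r].mean())) for r in np.unique(regimes)}

regimes = stratify_by_volatility(np.arange(9))
per_regime = rmse_by_regime(np.zeros(9), [1, 1, 1, 2, 2, 2, 3, 3, 3], regimes)
calm_to_volatile_gap = per_regime["volatile"] - per_regime["calm"]
```

The last line shows the kind of calm-to-volatile degradation that Figure 10 compares between A0 and A5 (there in MAE rather than RMSE).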

Table 7.

Stress-test summary across volatility escalation, distribution-shift windows, and extreme-volatility tails. Lower values are better throughout: they indicate lower error or, for the MAE–volatility slope, slower error growth as volatility increases.

| Stress scenario | Metric | RADIAN | TimesNet | ModernTCN | PatchTST | TimeXer |
|---|---|---|---|---|---|---|
| Volatility escalation (percentile sweep) | MAE–volatility slope (↓) | 1.4069 | 1.4149 | 1.4170 | 1.4186 | 1.4663 |
| Distribution-shift window | Worst-decile MSE (↓) | 93916.2351 | 106277.6696 | 96250.9116 | 96515.6212 | 98774.4408 |
| Extreme-volatility tail | Tail RMSE (↓) | 594.9410 | 614.6372 | 602.3759 | 597.7476 | 604.1600 |
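
The two tail metrics are not defined in the table. Under the hypothetical definitions sketched here, worst-decile MSE is the mean of the per-window MSE values at or above their 90th percentile, and tail RMSE is the RMSE over windows whose volatility lies at or above a quantile `q` (0.9 assumed). The paper's thresholds may differ.

```python
import numpy as np

def worst_decile_mse(window_mse):
    """Mean per-window MSE among windows at or above the 90th percentile."""
    window_mse = np.asarray(window_mse, dtype=float)
    cutoff = np.quantile(window_mse, 0.9)
    return float(window_mse[window_mse >= cutoff].mean())

def tail_rmse(sq_err, volatility, q=0.9):
    """RMSE restricted to windows whose volatility is at or above quantile q."""
    sq_err = np.asarray(sq_err, dtype=float)
    volatility = np.asarray(volatility, dtype=float)
    mask = volatility >= np.quantile(volatility, q)
    return float(np.sqrt(sq_err[mask].mean()))

worst = worst_decile_mse(np.arange(1, 11))
tail = tail_rmse(np.full(10, 4.0), np.arange(10))
```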

Table 8.

Ablation results for selected datasets (BTCUSD, US500, USDJPY, XAUUSD) using RMSE (↓) and directional accuracy (DA, %, ↑). Bold values indicate the best result per row/metric; underlined values indicate the second-best result.

| Ablation | BTCUSD RMSE (↓) | BTCUSD DA (↑) | US500 RMSE (↓) | US500 DA (↑) | USDJPY RMSE (↓) | USDJPY DA (↑) | XAUUSD RMSE (↓) | XAUUSD DA (↑) |
|---|---|---|---|---|---|---|---|---|
| Full RADIAN | 660.14 | 51.76 | 22.21 | 50.46 | 0.29 | 51.15 | 13.06 | 51.53 |
| Remove RevIN | 661.31 | 51.19 | 22.34 | 49.44 | 0.29 | 50.67 | 13.38 | 49.74 |
| Remove temporal backbone | 662.08 | 51.08 | 22.38 | 50.19 | 0.29 | 50.70 | 13.13 | 51.45 |
| Remove cross-variable branch | 663.63 | 51.44 | 22.34 | 50.07 | 0.29 | 50.72 | 13.15 | 51.07 |
| Replace target-aware with mean pooling | 662.93 | 51.29 | 22.25 | 50.45 | 0.29 | 51.00 | 13.13 | 51.29 |
| Remove regime features | 662.92 | 51.19 | 22.30 | 49.98 | 0.29 | 50.67 | 13.14 | 51.28 |
| Replace gated fusion with simple addition | 664.89 | 50.69 | 22.45 | 49.69 | 0.29 | 50.70 | 13.17 | 50.93 |
| Single expert (remove MoE) | 663.86 | 51.43 | 22.27 | 50.00 | 0.29 | 50.68 | 13.14 | 51.29 |
| Remove directional component | 664.54 | 51.06 | 22.31 | 50.24 | 0.29 | 50.44 | 13.14 | 51.30 |
| Remove path decoding | 667.42 | 51.74 | 22.44 | 50.13 | 0.29 | 50.61 | 13.27 | 51.05 |
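
Because RMSE scales differ by orders of magnitude across assets, ablation effects are easier to compare as relative changes from the full model. The sketch copies the BTCUSD and XAUUSD RMSE columns of Table 8 and computes percentage increases. USDJPY is omitted because every row rounds to 0.29 at the reported precision.

```python
# (BTCUSD, XAUUSD) RMSE values copied from Table 8.
TABLE8_RMSE = {
    "Full RADIAN": (660.14, 13.06),
    "Remove RevIN": (661.31, 13.38),
    "Remove temporal backbone": (662.08, 13.13),
    "Remove cross-variable branch": (663.63, 13.15),
    "Replace target-aware with mean pooling": (662.93, 13.13),
    "Remove regime features": (662.92, 13.14),
    "Replace gated fusion with simple addition": (664.89, 13.17),
    "Single expert (remove MoE)": (663.86, 13.14),
    "Remove directional component": (664.54, 13.14),
    "Remove path decoding": (667.42, 13.27),
}

def relative_rmse_increase(table, reference="Full RADIAN"):
    """Percentage RMSE change of each ablation relative to the reference row."""
    ref = table[reference]
    return {
        name: tuple(100.0 * (v - r) / r for v, r in zip(vals, ref))
        for name, vals in table.items()
        if name != reference
    }

deltas = relative_rmse_increase(TABLE8_RMSE)
```

On these two datasets every ablation increases RMSE; removing path decoding hurts most on BTCUSD, and removing RevIN hurts most on XAUUSD.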

Table 9.

Directional accuracy (DA, %; mean ± standard deviation across three seeds) on nine financial datasets. Bold values indicate the best result per row; underlined values indicate the second-best result.

| Dataset | RADIAN | ModernTCN | PatchTST | TimeXer | iTransformer | N-HiTS |
|---|---|---|---|---|---|---|
| AAPL | 52.0399 ± 0.273483 | 50.4395 ± 0.333956 | 50.6782 ± 0.535957 | 51.0796 ± 0.522948 | 50.6944 ± 0.315744 | 51.0796 ± 0.522948 |
| AUDUSD | 51.2706 ± 0.0765479 | 51.0947 ± 0.146877 | 50.6322 ± 0.0899592 | 51.1427 ± 0.177825 | 51.3198 ± 0.130428 | 51.2079 ± 0.0928639 |
| BTCUSD | 51.5112 ± 0.14627 | 51.5498 ± 0.27139 | 51.2684 ± 0.517188 | 51.3298 ± 0.699599 | 51.3044 ± 0.288453 | 51.4578 ± 0.10604 |
| DAX30 | 50.6762 ± 0.421722 | 50.238 ± 0.126865 | 50.4135 ± 0.17044 | 50.4368 ± 0.102676 | 50.4643 ± 0.337482 | 49.9202 ± 0.411971 |
| ETHUSD | 51.7844 ± 0.249453 | 51.5597 ± 0.264979 | 51.2828 ± 0.440908 | 51.4085 ± 0.361445 | 50.6233 ± 0.140526 | 51.5597 ± 0.264979 |
| FTSE100 | 52.2703 ± 0.151442 | 51.7304 ± 0.0565018 | 51.7304 ± 0.0565018 | 51.6581 ± 0.240057 | 51.4663 ± 0.118778 | 51.6153 ± 0.258394 |
| US500 | 50.3227 ± 0.0265298 | 50.0683 ± 0.0838191 | 50.1003 ± 0.0795895 | 50.4303 ± 0.397956 | 50.0829 ± 0.182141 | 50.1846 ± 0.632555 |
| USDJPY | 50.7606 ± 0.153839 | 50.5977 ± 0.21293 | 50.5977 ± 0.21293 | 50.5977 ± 0.21293 | 50.5658 ± 0.0194426 | 50.5475 ± 0.126804 |
| XAUUSD | 50.8788 ± 0.268592 | 50.7655 ± 0.475718 | 50.7848 ± 0.14283 | 50.4696 ± 0.220528 | 50.6073 ± 0.397548 | 49.7311 ± 0.491006 |
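
Each cell summarises three per-seed scores as mean ± standard deviation. With only three seeds, the choice between the sample (n − 1) and population (n) standard deviation changes the spread noticeably, and the table does not say which was used, so the sketch below exposes both; the input values are made up.

```python
import statistics

def mean_pm_std(values, sample=True):
    """Format per-seed scores as 'mean ± std' (sample or population std)."""
    m = statistics.mean(values)
    s = statistics.stdev(values) if sample else statistics.pstdev(values)
    return f"{m:.4f} ± {s:.4f}"

sample_summary = mean_pm_std([50.0, 51.0, 52.0])
population_summary = mean_pm_std([50.0, 51.0, 52.0], sample=False)
```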

Table 10.

Accuracy–efficiency summary across models. The table reports aggregated test RMSE (↓), parameter count, and median CPU inference latency (ms) at batch sizes B = 1 and B = 64. Bold values indicate column-wise minima.

| Model | Agg. test RMSE | Params | Latency ms (B=1) | Latency ms (B=64) |
|---|---|---|---|---|
| RADIAN | 94.1048 | 516,425 | 4.62815 | 24.7172 |
| ModernTCN | 94.5486 | 608,862 | 6.0382 | 83.7451 |
| PatchTST | 95.0893 | 400,929 | 4.89115 | 27.3331 |
| TimeXer | 95.8071 | 601,692 | 4.90595 | 12.5903 |
| iTransformer | 96.5303 | 268,932 | 2.90395 | 12.2155 |
| N-HiTS | 95.5107 | 455,326 | 1.59575 | 3.32515 |
| DLinear | 98.0036 | 7,432 | 0.6078 | 1.351 |
| TimesNet | 98.226 | 873,556 | 16.8479 | 145.299 |
| LSTM | 1,984.61 | 333,188 | 1.8045 | 9.36025 |
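
The Pareto boundary in Figure 14 can be checked directly from this table: a model lies on it if no other model is at least as good on both aggregated RMSE and batch-64 latency and strictly better on one. The sketch copies those two columns and applies that dominance test.

```python
# (aggregated test RMSE, median latency in ms at B=64) from Table 10.
MODELS = {
    "RADIAN": (94.1048, 24.7172),
    "ModernTCN": (94.5486, 83.7451),
    "PatchTST": (95.0893, 27.3331),
    "TimeXer": (95.8071, 12.5903),
    "iTransformer": (96.5303, 12.2155),
    "N-HiTS": (95.5107, 3.32515),
    "DLinear": (98.0036, 1.351),
    "TimesNet": (98.226, 145.299),
    "LSTM": (1984.61, 9.36025),
}

def pareto_front(points):
    """Names of models not dominated on (error, latency); lower is better on both."""
    front = []
    for name, (err, lat) in points.items():
        dominated = any(
            e <= err and l <= lat and (e < err or l < lat)
            for other, (e, l) in points.items()
            if other != name
        )
        if not dominated:
            front.append(name)
    return front

front = pareto_front(MODELS)
```

With these values the non-dominated set is RADIAN, N-HiTS, and DLinear: RADIAN has the lowest error, while N-HiTS and DLinear trade higher error for lower latency.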