Submitted: 11 April 2026

Posted: 13 April 2026

Abstract

Keywords:

I. Introduction

II. Methodology Foundation

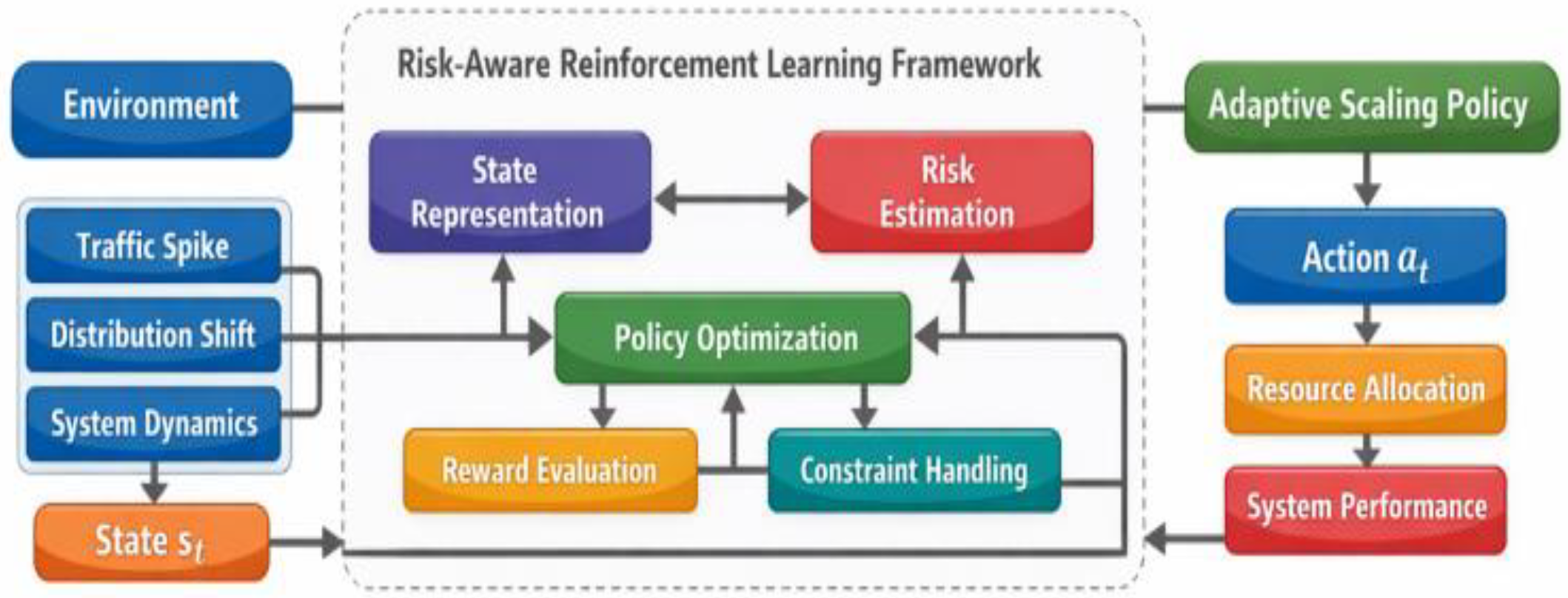

III. Model Design

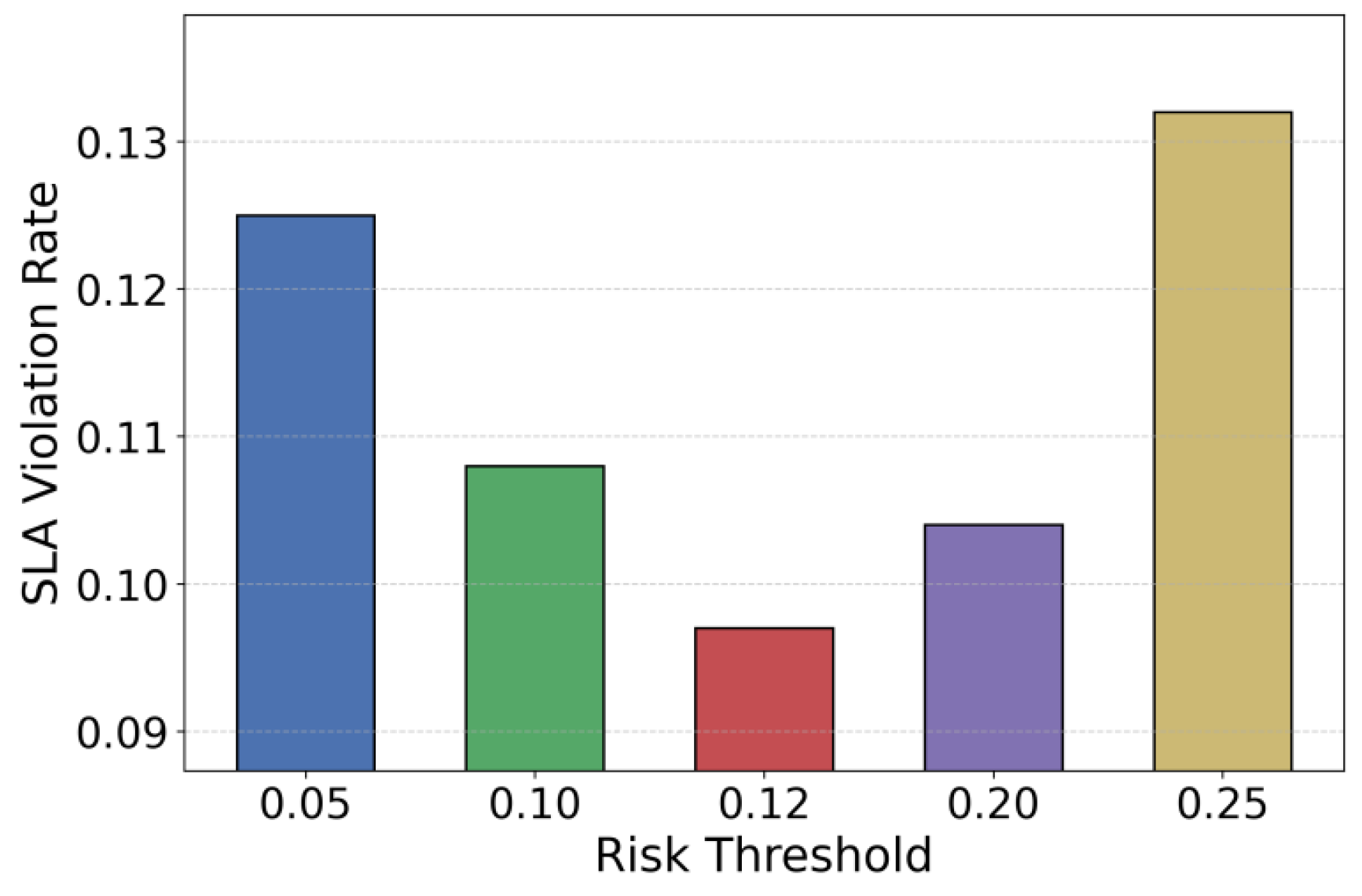

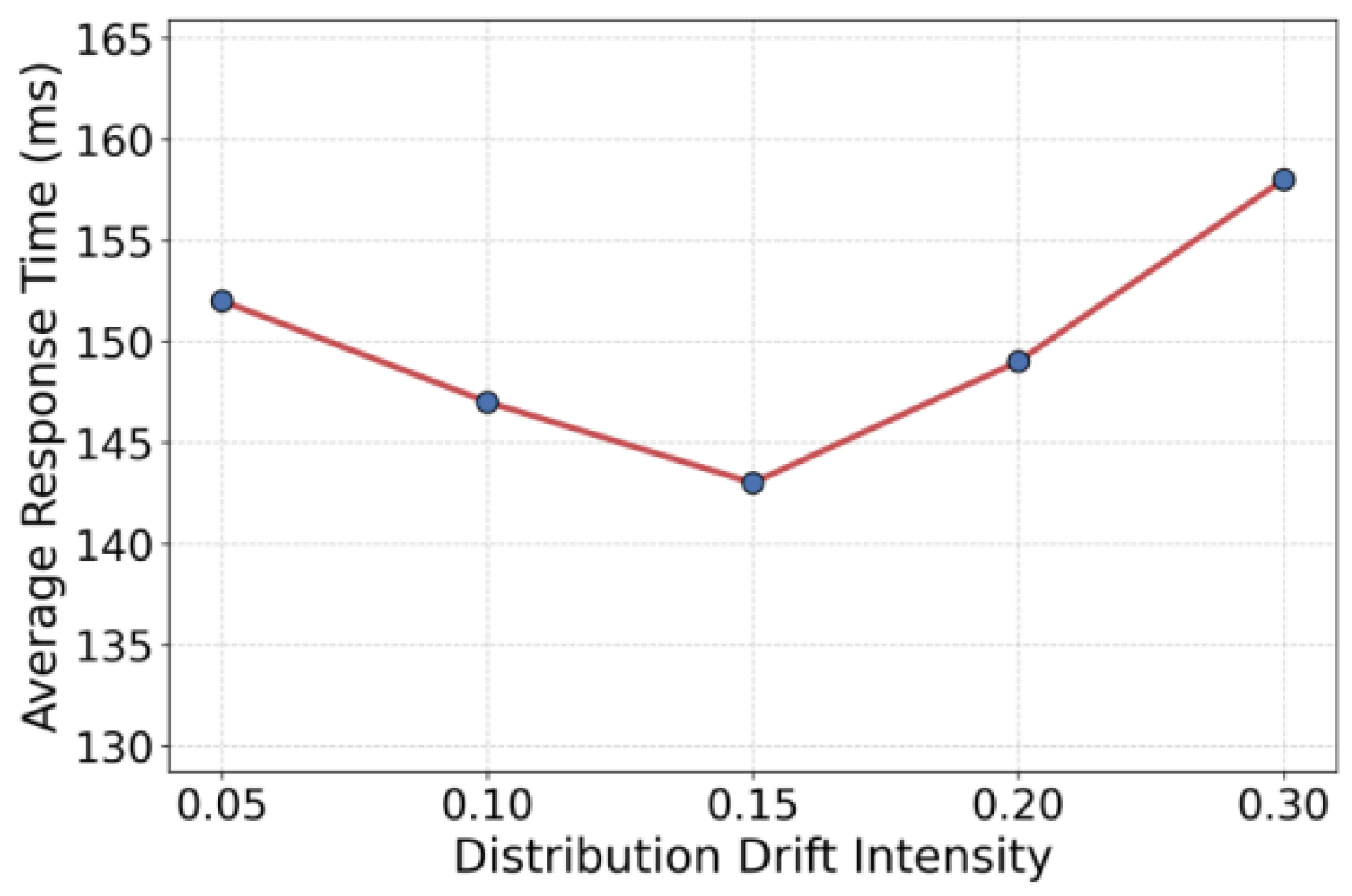

IV. Experimental Evaluation

A. Dataset

B. Performance Results

V. Conclusion

References

- H. Qiu, W. Mao, C. Wang et al., “AWARE: Automate workload autoscaling with reinforcement learning in production cloud systems,” Proceedings of the 2023 USENIX Annual Technical Conference (USENIX ATC 23), pp. 387-402, 2023.

- M. Xu, C. Song, S. Ilager et al., “CoScal: Multifaceted scaling of microservices with reinforcement learning,” IEEE Transactions on Network and Service Management, vol. 19, no. 4, pp. 3995-4009, 2022.

- J. Santos, E. Reppas, T. Wauters et al., “Gwydion: Efficient auto-scaling for complex containerized applications in Kubernetes through Reinforcement Learning,” Journal of Network and Computer Applications, vol. 234, p. 104067, 2025.

- J. Soulé, J. P. Jamont, M. Occello et al., “Streamlining Resilient Kubernetes Autoscaling with Multi-Agent Systems via an Automated Online Design Framework,” arXiv:2505.21559, 2025.

- H. Wang, C. Zhang, J. Li et al., “Container Scaling Strategy Based on Reinforcement Learning,” Security and Communication Networks, vol. 2023, no. 1, p. 7400235, 2023.

- S. Alharthi, A. Alshamsi, A. Alseiari et al., “Auto-scaling techniques in cloud computing: Issues and research directions,” Sensors, vol. 24, no. 17, p. 5551, 2024.

- S. N. A. Jawaddi, M. H. Johari and A. Ismail, “A review of microservices autoscaling with formal verification perspective,” Software: Practice and Experience, vol. 52, no. 11, pp. 2476-2495, 2022.

- Y. Zhao, Y. Li, Y. Wang, Y. Nie, Y. Lu and N. Chen, “Conservative Risk-Sensitive Reinforcement Learning for Reliable Decision-Making Under Uncertainty,” 2026.

- X. Yang, S. Sun, Y. Li, Y. Xing, M. Wang and Y. Wang, “CaliCausalRank: Calibrated Multi-Objective Ad Ranking with Robust Counterfactual Utility Optimization,” arXiv:2602.18786, 2026.

- H. Jiang, F. Qin, J. Cao, Y. Peng and Y. Shao, “Recurrent neural network from adder’s perspective: Carry-lookahead RNN,” Neural Networks, vol. 144, pp. 297-306, 2021.

- B. Chen, F. Qin, Y. Shao, J. Cao, Y. Peng and R. Ge, “Fine-grained imbalanced leukocyte classification with global-local attention transformer,” Journal of King Saud University-Computer and Information Sciences, vol. 35, no. 8, p. 101661, 2023.

- X. Liang, Y. Zhao, M. Chang, R. Zhou, K. Cao and Y. Zheng, “Spatiotemporal Risk Representation Learning Using Transformers and Graph Structure,” 2026.

- J. Huang, J. Zhan, Q. Wang, J. Jia and B. Zhang, “Stable Fault Diagnosis Under Data Imbalance via Self-Supervised Learning in Industrial IoT,” 2026.

- N. Chen, S. Sun, Y. Wang, Z. Li, A. Zhu and Y. Lu, “Few-Shot Financial Fraud Detection Using Meta-Learning and Large Language Models,” Proc. 2025 6th Int. Conf. Computer Science and Management Technology, pp. 822–826, 2025.

- S. Li, B. Chen, Y. Li, Z. Wang, Y. Xue and C. Xu, “Privacy-Preserving Anomaly Detection in Cloud Services Using Hierarchical Federated Learning with Differential Privacy,” 2026.

- Z. Huang, S. Li, C. Xu, B. Chen, Y. Xue and J. Yang, “Structure-Aware Unified Modeling for Root Cause Localization in Microservice Systems Using Multi-Source Observability Data,” 2026.

- C. Chiang, D. Li, R. Ying, Y. Wang, Q. Gan and J. Li, “Deep Learning-Based Dynamic Graph Framework for Robust Corporate Financial Health Risk Prediction,” Proc. 2025 3rd Int. Conf. Mathematics and Machine Learning, pp. 98–105, 2025.

- H. Chen, Y. Lu, Y. Wei, J. Lyu, R. Wu and C. Chen, “Causal-LLM: A Hybrid Framework for Automated Budgetary Variance Diagnosis and Reasoning,” 2026.

- Q. Zhang, Y. Wang, C. Hua, Y. Huang and N. Lyu, “Knowledge-Augmented Large Language Model Agents for Explainable Financial Decision-Making,” arXiv:2512.09440, 2025.

- Y. Xu, Q. Liu, W. Lin and S. Chen, “Problem-Centric Modeling and Reasoning for Business Decision Making with Large Language Models.”

- Y. Wang, R. Yan, Y. Xiao, J. Li, Z. Zhang and F. Wang, “Memory-Driven Agent Planning for Long-Horizon Tasks via Hierarchical Encoding and Dynamic Retrieval,” 2025.

- H. Chen, R. Wu, C. Chen, H. Feng, Y. Nie and Y. Lu, “Anomaly Ranking for Enterprise Finance Using Latent Structural Deviations and Reconstruction Consistency,” 2026.

- Z. Liu, R. Meng, S. Y. Huang and Z. Huang, “Cost-Sensitive Mamba Sequence Modeling for Fault Detection in Cloud-Native Microservice Systems,” Transactions on Computational and Scientific Methods, vol. 5, no. 12, 2025.

- X. Song, Y. Huang, J. Guo, Y. Liu and Y. Luan, “Multi-scale Feature Fusion and Graph Neural Network Integration for Text Classification with Large Language Models,” arXiv:2511.05752, 2025.

- N. Lyu, Y. Wang, Z. Cheng, Q. Zhang and F. Chen, “Multi-Objective Adaptive Rate Limiting in Microservices Using Deep Reinforcement Learning,” Proc. 4th Int. Conf. Artificial Intelligence and Intelligent Information Processing, pp. 862–869, 2025.

- X. Yang, S. Li, K. Wu, Z. Wang, Y. Tang and Y. Li, “Adaptive Anomaly Detection in Microservice Systems via Meta-Learning,” 2026.

- A. Zhu, W. Liu, Z. Li, C. Wen, J. Qiu and Z. Liu, “ArcheScale-Guard: Archetype-Aware Predictive Autoscaling with Uncertainty Quantification for Serverless Computing.”

- K. Zeng, Z. Huang, Y. Yang, R. Meng, S. Y. Huang and X. Zhang, “TokenFlow: Token-Level GPU Sharing and Adaptive Scheduling for Multi-Model Concurrent LLM Inference,” Environments, vol. 21, p. 24.

- C. Zhang, H. Zhu, A. Zhu, J. Liao, Y. Xiao and Z. Zhang, “Deep Learning Approach for Protocol Anomaly Detection Using Status Code Sequences,” 2026.

- S. Chaudhari, P. Aggarwal, V. Murahari et al., “RLHF deciphered: A critical analysis of reinforcement learning from human feedback for LLMs,” ACM Computing Surveys, vol. 58, no. 2, pp. 1-37, 2025.

- Q. Yu, Z. Zhang, R. Zhu et al., “DAPO: An open-source LLM reinforcement learning system at scale,” arXiv:2503.14476, 2025.

- Y. Zuo, K. Zhang, L. Sheng et al., “TTRL: Test-time reinforcement learning,” arXiv:2504.16084, 2025.

- L. A. Agrawal, S. Tan, D. Soylu et al., “GEPA: Reflective prompt evolution can outperform reinforcement learning,” arXiv:2507.19457, 2025.

- Z. Xue, L. Zheng, Q. Liu et al., “SimpleTIR: End-to-end reinforcement learning for multi-turn tool-integrated reasoning,” arXiv:2509.02479, 2025.

| Method | SLA Violation Rate | Average Response Time | Resource Utilization | Scaling Cost |
|---|---|---|---|---|
| RLHF Deciphered [30] | 0.163 | 182 ms | 71% | 0.58 |
| DAPO [31] | 0.142 | 165 ms | 76% | 0.54 |
| TTRL [32] | 0.189 | 201 ms | 69% | 0.62 |
| GEPA [33] | 0.131 | 158 ms | 79% | 0.51 |
| SimpleTIR [34] | 0.174 | 193 ms | 73% | 0.59 |
| Ours | 0.097 | 143 ms | 84% | 0.48 |
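
As a reading aid for the table above, the following minimal sketch computes how far the "Ours" row improves on the strongest baseline row (GEPA [33], which has the best baseline value in every column). The numbers are copied from the table. The dictionary keys and the helper `relative_reduction` are hypothetical names introduced here for illustration and are not part of the paper's method.

```python
# Values copied from the results table. Key names are illustrative assumptions.
# All three metrics here are lower-is-better.
baseline = {  # GEPA [33], the strongest baseline in every column
    "sla_violation_rate": 0.131,
    "avg_response_ms": 158.0,
    "scaling_cost": 0.51,
}
ours = {
    "sla_violation_rate": 0.097,
    "avg_response_ms": 143.0,
    "scaling_cost": 0.48,
}


def relative_reduction(base: float, new: float) -> float:
    """Fractional reduction of a lower-is-better metric relative to base."""
    return (base - new) / base


reductions = {k: relative_reduction(baseline[k], ours[k]) for k in baseline}

# Resource utilization is higher-is-better and already a percentage,
# so report the gap in percentage points rather than as a ratio.
utilization_gain_points = 84 - 79

for name, value in reductions.items():
    print(f"{name}: {value:.1%} lower than the strongest baseline")
print(f"resource_utilization: +{utilization_gain_points} percentage points")
```

Relative reduction is used for the lower-is-better columns so that metrics with different units (rate, milliseconds, cost) can be compared on one scale. Utilization is kept as a difference in percentage points to avoid mixing a ratio of percentages with the other ratios.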

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).