Submitted: 11 April 2026

Posted: 14 April 2026


Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Multi-View 3D Shape Recognition

2.2. Graph Construction and Relational Modeling

2.3. Hierarchical Graph Coarsening and Pooling

3. Methodology

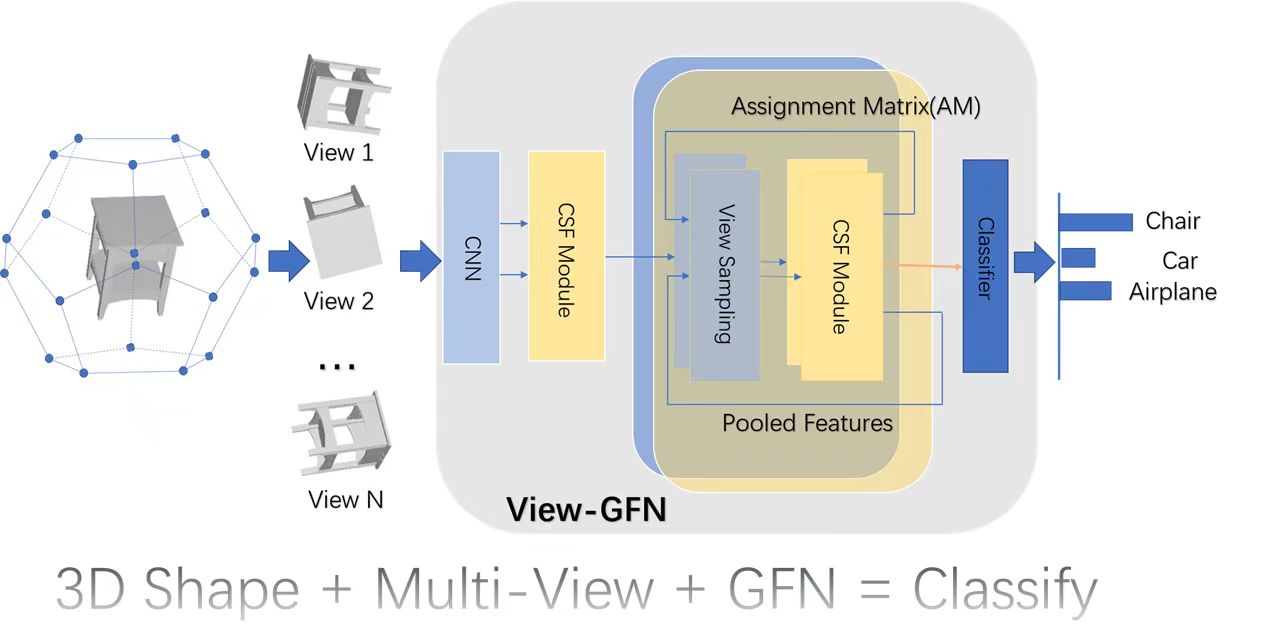

3.1. Overview

3.2. Initial Feature Extraction

3.3. Cluster Assignment Based View Sampling

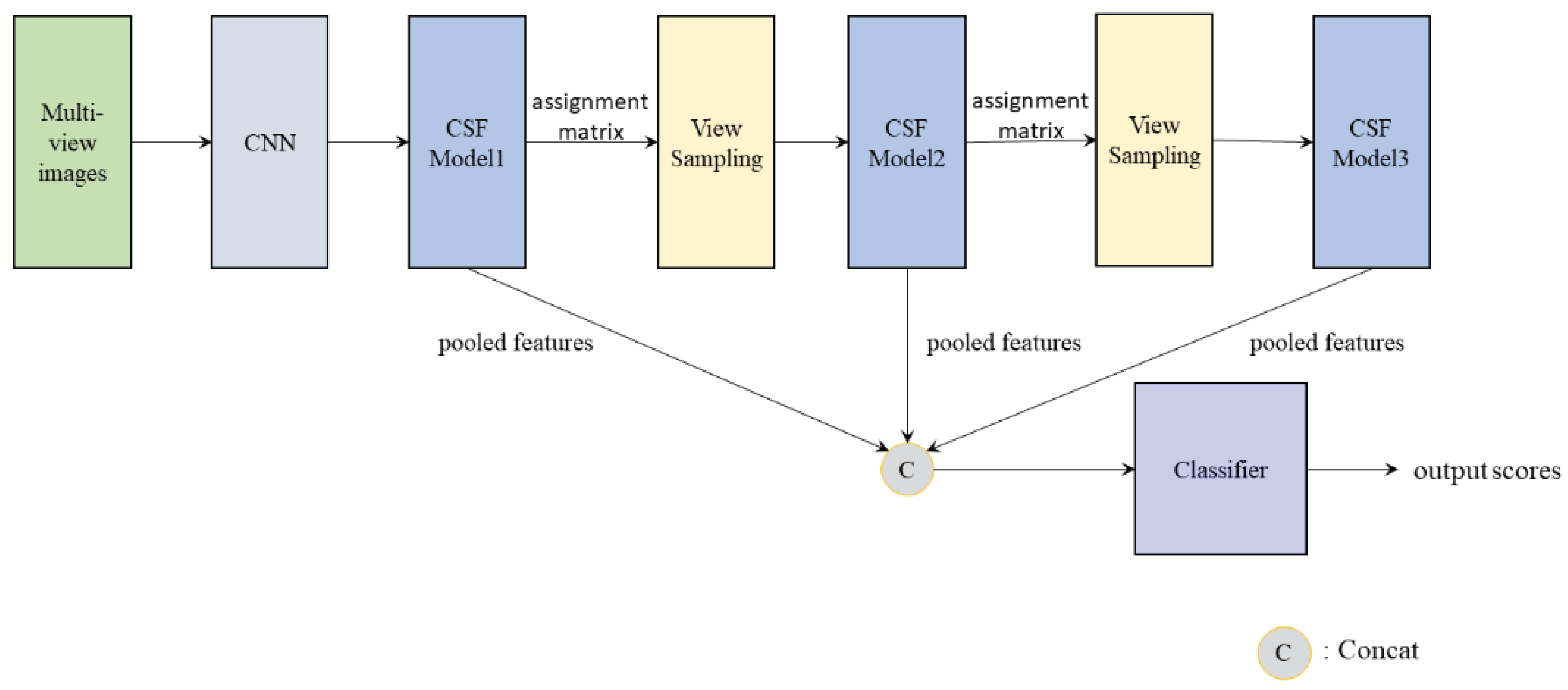

3.4. Graph Convolution and Sampling Fusion Module (CSF)

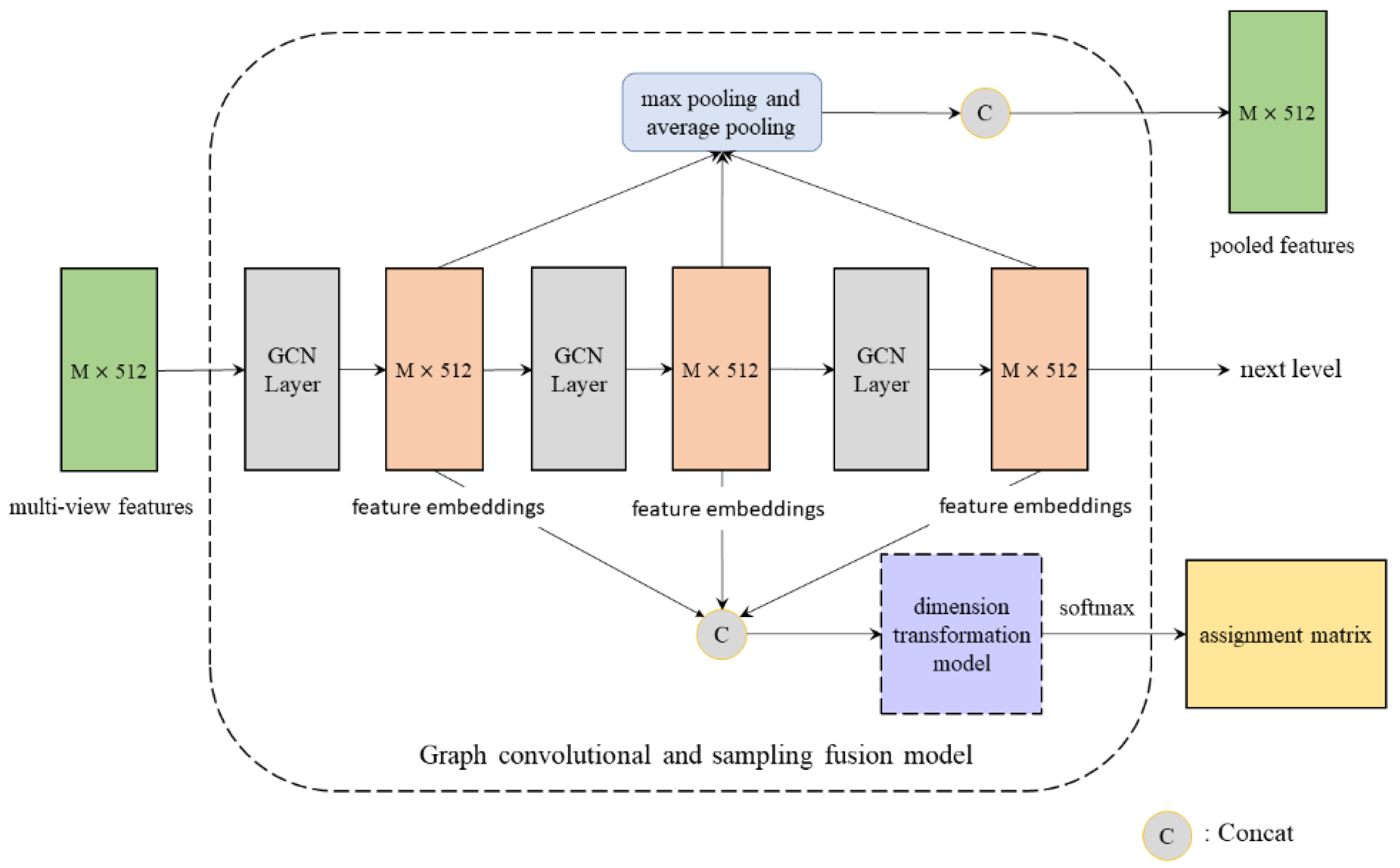

3.4.1. Feature Embedding

3.4.2. Assignment Matrix Generation

3.4.3. Multi-Scale Fusion and Receptive Field Analysis

3.5. Hierarchical Network Architecture and Loss Function

4. Experiments and Results Analysis

4.1. Experimental Setup

4.1.1. Datasets and Evaluation Metrics

- ModelNet40 [27]: This dataset contains 12,311 3D CAD models across 40 categories, with 9,843 models used for training and 2,468 for testing. Following the standard protocol, we render each 3D object from either 20 views (placed at the vertices of a dodecahedron) or 12 views (placed on a circular trajectory at a fixed elevation angle).

- RGB-D [28]: A real-world dataset comprising 300 household objects across 51 categories. We adopt a 10-fold cross-validation strategy for evaluation on this dataset.

- Evaluation Metrics: The primary metrics include Instance Accuracy (the ratio of correctly classified samples to the total number of samples), Class Accuracy (the arithmetic mean of accuracies across all classes), mean Average Precision (mAP, used for the retrieval task), the number of Parameters (Params), and Training Time (forward and backward propagation time per epoch).
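The difference between the two accuracy metrics defined above matters when classes are imbalanced. The following is a minimal sketch in plain Python; the function names and the toy labels are illustrative assumptions, not part of the authors' evaluation code.

```python
from collections import defaultdict

def instance_accuracy(y_true, y_pred):
    """Fraction of all samples whose predicted label matches the ground truth."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)

def class_accuracy(y_true, y_pred):
    """Arithmetic mean of per-class accuracies, so every class counts equally."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for t, p in zip(y_true, y_pred):
        totals[t] += 1
        hits[t] += int(t == p)
    return sum(hits[c] / totals[c] for c in totals) / len(totals)

# Toy labels (made up for illustration): class 0 has 4 samples, class 1 has 2.
y_true = [0, 0, 0, 0, 1, 1]
y_pred = [0, 0, 0, 0, 1, 0]

inst = instance_accuracy(y_true, y_pred)  # 5 of 6 samples are correct
cls = class_accuracy(y_true, y_pred)      # mean of 4/4 (class 0) and 1/2 (class 1)
```

In this toy case a single error in the small class lowers class accuracy (0.75) more than instance accuracy (about 0.83), which is why both numbers are reported.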

4.1.2. Implementation Details

4.2. Comparison with State-of-the-Art Methods

- Analysis: As shown in Table 1, View-GFN achieves an instance accuracy of 97.8%, 0.2 percentage points above View-GCN (97.6%) and 0.1 points below the best-performing method, MVPNet (97.9%). Its class accuracy matches that of View-GCN (96.5%). More importantly, View-GFN has only 50.1% of the parameters of View-GCN (17.0M vs. 33.9M), and its training time per epoch is approximately 46.7% shorter (33.4 s vs. 62.6 s). These results indicate that the proposed CSF module and soft-clustering mechanism compress redundant information efficiently while retaining discriminative geometric features.
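The efficiency figures quoted above follow from simple ratios of the reported values. The short sketch below recomputes them; the helper names are hypothetical, and because the inputs are the rounded numbers from the text, the results agree with the quoted percentages only to within rounding.

```python
def ratio_pct(ours, baseline):
    """Size of `ours` expressed as a percentage of `baseline`."""
    return 100.0 * ours / baseline

def reduction_pct(ours, baseline):
    """Relative saving of `ours` with respect to `baseline`, in percent."""
    return 100.0 * (baseline - ours) / baseline

# Values as reported in the text for ModelNet40 (View-GFN vs. View-GCN).
param_ratio = ratio_pct(17.0, 33.9)         # parameters, in millions
time_reduction = reduction_pct(33.4, 62.6)  # training time per epoch, in seconds

print(f"parameter ratio: {param_ratio:.1f}%")
print(f"training-time reduction: {time_reduction:.1f}%")
```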

4.3. Robustness to View Quantity

- Analysis: On the real-world RGB-D dataset, View-GFN achieves an accuracy of 94.1% with 12 views, 0.2 percentage points below View-GCN (94.3%), while using about 25% fewer parameters (17.0M vs. 22.7M) and approximately 54.5% less training time (0.5 s vs. 1.1 s). It improves on MVCNN (86.1%), which also uses 12 views, by 8.0 percentage points. Furthermore, the 12-view View-GFN outperforms several methods that rely on 120 views (e.g., CFK at 86.8% and MMDCNN at 89.5%), indicating that it uses view information more efficiently.

4.4. Shape Retrieval Performance

- Analysis: View-GFN achieves an mAP of 97.7% in the retrieval task, outperforming MVPNet (97.4%) and MVCVT (95.4%) and exceeding the earlier GVCNN (85.7%) by 12.0 percentage points. This suggests that the multi-scale features extracted by the CSF module are discriminative for classification and also cluster well semantically, yielding high-quality global shape descriptors.
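For readers unfamiliar with the retrieval metric, the sketch below shows one common way to compute mAP from ranked retrieval lists. It assumes that the full gallery is ranked for each query and that a retrieved shape counts as relevant when it shares the query's class; the exact retrieval protocol used in the paper is not specified here, and the function names and toy rankings are illustrative.

```python
def average_precision(ranked_relevance):
    """AP for one query: mean of precision@k over the ranks k holding a relevant item."""
    hits = 0
    precisions = []
    for k, relevant in enumerate(ranked_relevance, start=1):
        if relevant:
            hits += 1
            precisions.append(hits / k)
    return sum(precisions) / len(precisions) if precisions else 0.0

def mean_average_precision(all_ranked_relevance):
    """mAP: average of the per-query AP values."""
    aps = [average_precision(r) for r in all_ranked_relevance]
    return sum(aps) / len(aps)

# Toy rankings (made up): 1 means the retrieved shape has the query's class.
queries = [
    [1, 0, 1, 0],  # AP = (1/1 + 2/3) / 2
    [0, 1, 1, 0],  # AP = (1/2 + 2/3) / 2
]
map_score = mean_average_precision(queries)
```

A descriptor that clusters shapes of the same class tightly pushes relevant items toward the top of every ranking, which raises each AP and therefore the mAP.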

4.5. Ablation Study

- Soft-clustering vs. Hard Sampling: The instance accuracy of View-GFN-FPS drops by 1.3 percentage points (from 97.8% to 96.5%), indicating that soft-clustering based on the assignment matrix retains discriminative information better than Farthest Point Sampling (FPS).

- CSF Synchronous Fusion: View-GFN-SEP performs almost on par with the full model (its instance accuracy is only 0.1 percentage points lower) but incurs a significant increase in parameters. This indicates that the CSF module reduces model complexity without sacrificing accuracy.

- AM Initialization Strategy: The instance accuracies of View-GFN-A1 (local adjacency) and View-GFN-A2 (coordinate encoding) are 0.4 and 0.3 percentage points lower than that of the full model, respectively. This supports the use of our global connectivity prior and predefined initial values.
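The contrast between soft-clustering and hard sampling in the first ablation can be illustrated with a small NumPy sketch. It is not the CSF module itself: the soft branch uses a generic differentiable-pooling formulation in the style of Ying et al. (row-softmax assignment matrix S, coarsened features S^T X, coarsened adjacency S^T A S), and the graph sizes, random inputs, and fully connected adjacency are assumptions made for illustration only.

```python
import numpy as np

def soft_cluster_pool(X, A, logits):
    """DiffPool-style coarsening: S = row-softmax(logits), X' = S^T X, A' = S^T A S."""
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    S = np.exp(logits)
    S /= S.sum(axis=1, keepdims=True)  # each view's cluster memberships sum to 1
    return S.T @ X, S.T @ A @ S, S

def farthest_point_sampling(coords, m, start=0):
    """Hard-sampling baseline: greedily pick m views that are mutually far apart."""
    chosen = [start]
    dist = np.linalg.norm(coords - coords[start], axis=1)
    for _ in range(m - 1):
        nxt = int(dist.argmax())
        chosen.append(nxt)
        dist = np.minimum(dist, np.linalg.norm(coords - coords[nxt], axis=1))
    return chosen

rng = np.random.default_rng(0)
n_views, n_clusters, feat_dim = 20, 10, 8  # sizes chosen for illustration only
X = rng.normal(size=(n_views, feat_dim))   # per-view features (random stand-ins)
A = np.ones((n_views, n_views))            # fully connected view graph (assumption)
coords = rng.normal(size=(n_views, 3))     # camera positions (random stand-ins)
logits = rng.normal(size=(n_views, n_clusters))  # would be learned in practice

X_soft, A_soft, S = soft_cluster_pool(X, A, logits)
kept = farthest_point_sampling(coords, n_clusters)
X_hard = X[kept]  # FPS keeps 10 views and discards the features of the other 10
```

Because every row of S sums to one, the soft-pooled features preserve the column sums of X, so each input view contributes to the coarsened graph. FPS instead drops the unselected views outright, which is one plausible reading of why the FPS variant loses accuracy.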

5. Conclusions

References

- Tu, T.; Chen, P.; Zhang, L. ImGeoNet: Image-induced Geometry-aware Voxel Representation for Multi-view 3D Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2023; pp. 6996–7007.

- Xu, C.; Wu, B.; Hou, J.; et al. NeRF-Det: Learning Geometry-Aware Volumetric Representation for Multi-View 3D Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2023.

- Ding, D.; Wang, Z.; Xiong, H. Robust point cloud classification via semantic and structural modeling. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 2023.

- Ben-Shabat, Y.; Gould, S. 3DInAction: Understanding Human Actions in 3D Point Clouds. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 2024; pp. 19978–19987.

- Chen, Y.; Liu, S.; Shen, X. Learnable Skeleton-Aware 3D Point Cloud Sampling. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 2023.

- Li, Z.; Xu, C.; Leng, B. Geometrically-driven Aggregation for Zero-shot 3D Point Cloud Understanding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 2024.

- Wang, Y.; Chen, X.; Cao, L.; et al. Towards Robust Point Cloud Recognition With Sample-Adaptive Auto-Augmentation. IEEE Trans. Pattern Anal. Mach. Intell. 2025, 47, 3003–3017.

- Zhang, L.; Wang, Y.; Liu, H. Enhancing 3D Point Cloud Classification with ModelNet-R and Point-SkipNet. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 2025.

- Liu, H.; Zhang, L.; Wang, Y. Point Clouds Meets Physics: Dynamic Acoustic Field Fitting Network for Point Cloud Understanding. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 2025.

- Wang, S.; Jiang, L.; Wu, Z.; et al. Exploring Object-Centric Temporal Modeling for Efficient Multi-View 3D Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2023; pp. 3621–3631.

- Zong, Z.; Song, G.; Liu, Y. Temporal Enhanced Training of Multi-view 3D Object Detector via Historical Object Prediction. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2023; pp. 3781–3790.

- Chen, H.; Wang, S.; Wu, Z. Pixel-Aligned Recurrent Queries for Multi-View 3D Object Detection. In Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV), Paris, France, 2023.

- Jia, X.; Zhang, Y.; Wu, B.; et al. PointCert: Point Cloud Classification with Deterministic Certified Robustness Guarantees. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Vancouver, BC, Canada, 2023.

- Zhao, M.; Li, H.; Tang, J. Cross-Modal 3D Shape Retrieval via Heterogeneous Dynamic Graph Representation. IEEE Trans. Pattern Anal. Mach. Intell. 2025.

- Wu, J.; Zhang, L.; Liu, Y. Cross-Modal 3D Representation with Multi-View Images and Point Clouds. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, 2025.

- Su, H.; Maji, S.; Kalogerakis, E.; Learned-Miller, E. Multi-view convolutional neural networks for 3D shape recognition. In Proceedings of the IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, 2015; pp. 945–953.

- Feng, Y.; Zhang, Z.; Zhao, X.; Ji, R.; Gao, Y. GVCNN: Group-view convolutional neural networks for 3D shape recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 2018; pp. 264–272.

- Yu, T.; Meng, J.; Yuan, J. Multi-view harmonized bilinear network for 3D object recognition. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 2018; pp. 186–194.

- Kanezaki, A.; Matsushita, Y.; Nishida, Y. RotationNet: Joint object categorization and pose estimation using multiviews from unsupervised viewpoints. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Salt Lake City, UT, USA, 2018; pp. 5010–5019.

- Han, Z.; Shang, M.; Liu, Y.S.; et al. SeqViews2SeqLabels: Learning 3D global features via aggregating sequential views by RNN with attention. IEEE Trans. Image Process. 2018, 28, 658–672.

- Wei, X.; Yu, R.; Sun, J. View-GCN: View-based graph convolutional network for 3D shape analysis. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, 2020; pp. 1850–1859.

- Xu, M.; Chen, H.; Wang, Z. PAGNet: Path aggregation graph network for multi-view 3D shape recognition. Knowl.-Based Syst. 2021, 229, 107338.

- Gao, H.; Ji, S. Graph U-Nets. In Proceedings of the International Conference on Machine Learning (ICML), Long Beach, CA, USA, 2019; pp. 2083–2092.

- Lee, J.; Lee, I.; Kang, J. Self-attention graph pooling. In Proceedings of the International Conference on Machine Learning (ICML), Long Beach, CA, USA, 2019; pp. 3734–3743.

- Ying, Z.; You, J.; Morris, C.; et al. Hierarchical graph representation learning with differentiable pooling. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Montréal, Canada, 2018; pp. 4800–4810.

- Bianchi, F.M.; Grattarola, D.; Alippi, C. Spectral clustering with graph neural networks for graph pooling. In Proceedings of the International Conference on Machine Learning (ICML), Vienna, Austria, 2020; pp. 874–883.

- Wu, Z.; Song, S.; Khosla, A.; et al. 3D ShapeNets: A deep representation for volumetric shapes. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR), Boston, MA, USA, 2015; pp. 1912–1920.

- Lai, K.; Bo, L.; Ren, X.; Fox, D. A large-scale hierarchical multi-view RGB-D object dataset. In Proceedings of the IEEE International Conference on Robotics and Automation (ICRA), Shanghai, China, 2011; pp. 1817–1824.

- Su, J.-C.; Gadelha, M.; Wang, R.; Maji, S. A deeper look at 3D shape classifiers. In Proceedings of the European Conference on Computer Vision (ECCV) Workshops, Munich, Germany, 2018.

- Jiang, J.; Bao, D.; Chen, Z.; Zhao, X.; Gao, Y. MLVCNN: Multi-loop-view convolutional neural network for 3D shape retrieval. In Proceedings of the AAAI Conference on Artificial Intelligence, Vol. 33, 2019; pp. 8513–8520.

- Xu, L.; Cui, Q.; Xu, W.; Chen, E.; Tong, H.; Tang, Y. Walk in views: Multi-view path aggregation graph network for 3D shape analysis. Information Fusion 2024, 103, 102131.

- Li, J.; Liu, Z.; Li, L.; Lin, J.; Yao, J.; Tu, J. Multi-view convolutional vision transformer for 3D object recognition. J. Vis. Commun. Image Represent. 2023, 95, 103906.

- Cheng, Y.; Cai, R.; Zhao, X.; Huang, K. Convolutional Fisher kernels for RGB-D object recognition. In Proceedings of the 2015 International Conference on 3D Vision (3DV), IEEE, 2015; pp. 135–143.

- Rahman, M.M.; Tan, Y.; Xue, J.; Lu, K. RGB-D object recognition with multimodal deep convolutional neural networks. In Proceedings of the 2017 IEEE International Conference on Multimedia and Expo (ICME), IEEE, 2017; pp. 991–996.

- Asif, U.; Bennamoun, M.; Sohel, F.A. A multi-modal, discriminative and spatially invariant CNN for RGB-D object labeling. IEEE Trans. Pattern Anal. Mach. Intell. 2017, 40, 2051–2065.

| Method | Type | Views | Inst Acc (%) | Class Acc (%) | Params (M) |
|---|---|---|---|---|---|
| MVCNN-new [29] | View Aggregation | 12 | 95.0 | 92.4 | - |
| GVCNN [17] | View Grouping | 12 | 93.1 | 90.7 | - |
| MHBN [18] | Bilinear Pooling | 6 | 94.7 | 93.1 | - |
| MVPNet [31] | Path Aggregation | 20 | 97.9 | 96.8 | - |
| RotationNet [19] | View Optimization | 20 | 97.4 | - | - |
| MLVCNN [30] | Multi-loop Views | 36 | 94.2 | - | - |
| View-GCN [21] | Graph Network | 20 | 97.6 | 96.5 | 33.9 |
| View-GFN (Ours) | Graph Fusion | 20 | 97.8 | 96.5 | 17.0 |

| Method | Views | Inst Acc (%) |
|---|---|---|
| MVCNN [16] | 12 | 86.1 |
| CFK [33] | 120 | 86.8 |
| MMDCNN [34] | 120 | 89.5 |
| MDSICNN [35] | 120 | 89.9 |
| View-GCN [21] | 12 | 94.3 |
| View-GFN (Ours) | 12 | 94.1 |

| Method | mAP (%) |
|---|---|
| GVCNN [17] | 85.7 |
| MVCVT [32] | 95.4 |
| MLVCNN [30] | 92.8 |
| MVPNet [31] | 97.4 |
| View-GFN (Ours) | 97.7 |

| Configuration | Inst Acc (%) | Class Acc (%) | Description |
|---|---|---|---|
| View-GFN-FPS | 96.5 | 95.2 | Replace soft-clustering with Farthest Point Sampling (FPS) |
| View-GFN-SEP | 97.7 | 96.5 | Decouple feature embedding and assignment matrix generation |
| View-GFN-A1 | 97.4 | 96.2 | AM considers only 3 nearest neighbor nodes |
| View-GFN-A2 | 97.5 | 96.1 | AM initialized with view coordinate encoding |
| View-GFN (Full) | 97.8 | 96.4 | Full model (Global AM + Soft-clustering + CSF) |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).