Submitted: 10 April 2026

Posted: 13 April 2026


Abstract

Keywords:

1. Introduction

2. Related Work

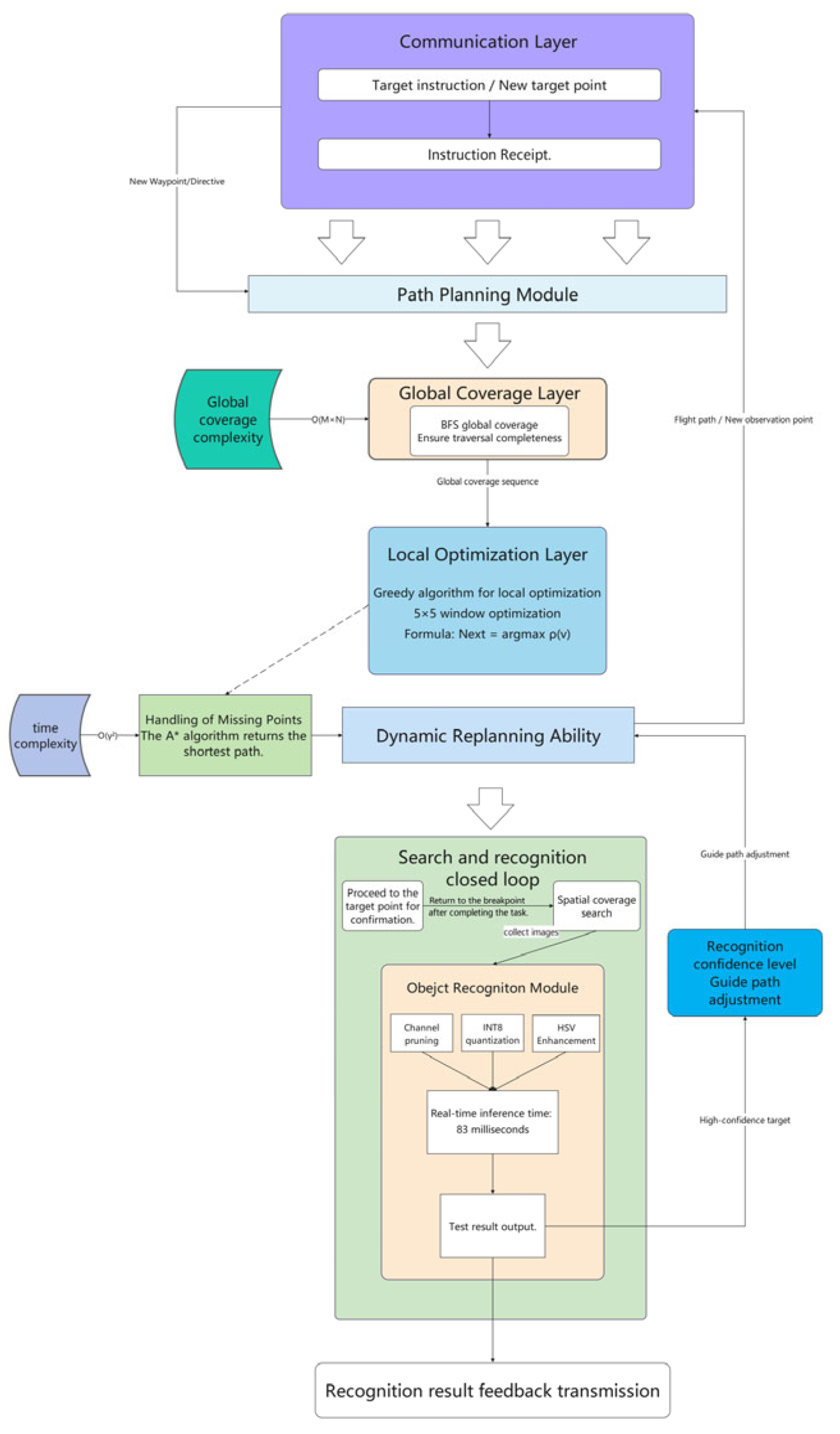

3. Methodology

3.1. Task Modeling

3.2. Model Architecture

Algorithm 1. Object Detection and Serial Transmission.

Require:

- serial: serial port
- detector: YOLO detector
- cam: camera
- disp: display

Ensure:

- Send target-information packets

1: Initialize serial, detector, cam, disp

2: while not terminated do

3: img ← cam.read()

4: objs ← detector.detect(img)

5: if objs is not empty then

6: for each obj in objs do

7: extract (x, y, w, h, label, score)

8: data ← package(x, y, w, h, label, score)

9: serial.send(data)

10: end for

11: else

12: data ← package(0, 0, 0, 0, 0, 0)

13: serial.send(data)

14: end if

15: disp.show(img)

16: end while
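For illustration, Algorithm 1 can be sketched in Python as follows. The camera, detector, serial port and display are abstracted as callables so that the loop runs without hardware (with pyserial, for instance, the `write` method of a `serial.Serial` object could be passed as `send`). The packet layout in `package` (a two-byte header `0xAA 0x55`, four little-endian 16-bit box fields, one byte each for the label and for the score scaled to 0 to 255, and a one-byte additive checksum) is a hypothetical choice, not the format used by the authors, and `max_frames` merely stands in for the termination condition.

```python
import struct
from dataclasses import dataclass
from typing import Callable, List

HEADER = b"\xaa\x55"  # hypothetical frame header, not taken from the paper


@dataclass
class Detection:
    x: int        # box x coordinate (pixels)
    y: int        # box y coordinate (pixels)
    w: int        # box width (pixels)
    h: int        # box height (pixels)
    label: int    # class index
    score: float  # confidence in [0, 1]


def package(x: int, y: int, w: int, h: int, label: int, score: float) -> bytes:
    """Hypothetical frame: header, 4 x uint16 LE, uint8 label, uint8 score, checksum."""
    body = struct.pack("<4H2B", x, y, w, h, label, round(score * 255))
    return HEADER + body + bytes([sum(body) & 0xFF])


def run_loop(read_frame: Callable[[], object],
             detect: Callable[[object], List[Detection]],
             send: Callable[[bytes], None],
             show: Callable[[object], None],
             max_frames: int) -> None:
    """Algorithm 1 with the camera, detector, serial port and display stubbed."""
    for _ in range(max_frames):  # stands in for "while not terminated"
        img = read_frame()
        objs = detect(img)
        if objs:
            for obj in objs:  # one packet per detected target
                send(package(obj.x, obj.y, obj.w, obj.h, obj.label, obj.score))
        else:
            send(package(0, 0, 0, 0, 0, 0.0))  # all-zero "no target" packet
        show(img)


# Usage with stubbed hardware: one frame with a target, one frame without.
_frames = iter([[Detection(320, 240, 50, 40, 2, 1.0)], []])
demo_sent: List[bytes] = []
run_loop(read_frame=lambda: "frame",
         detect=lambda img: next(_frames),
         send=demo_sent.append,
         show=lambda img: None,
         max_frames=2)
print([p.hex() for p in demo_sent])
```

Sending an explicit all-zero packet when nothing is detected, as in steps 11 to 13, lets the receiver distinguish "no target" from a lost frame.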

Algorithm 2. Path Search and Compression.

Require:

- start: starting point
- forbidden: no-fly zones
- directions: the four grid directions

Ensure:

- Output a feasible compressed path

1: if not isReachable(start, forbidden) then return error

2: current ← start, path ← [start], visited[start] ← true

3: while there exist unvisited reachable nodes do

4: next ← a valid neighboring node selected from directions

5: if next exists then

6: current ← next, visited[current] ← true, path ← path + current

7: else

8: break

9: end if

10: end while

11: for each unvisited node p do

12: path ← path + BFS(current, p)

13: current ← p

14: end for

15: path ← compressPath(path)

16: path ← path + BFS(current, start)

17: return path
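A minimal Python sketch of the path search and compression procedure on a 4-connected grid is given below. Several details are assumptions because the pseudocode leaves them open: the direction order (up, right, down, left), the greedy rule of moving to the first unvisited free neighbor, visiting the remaining cells in sorted order, and `compress_path`, which here keeps only the endpoints and turning points of the path. Following the pseudocode, compression is applied before the return leg to `start` is appended.

```python
from collections import deque
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]
# Assumed order of the four grid directions: up, right, down, left.
DIRECTIONS: List[Cell] = [(-1, 0), (0, 1), (1, 0), (0, -1)]


def free_neighbors(cell: Cell, rows: int, cols: int, forbidden: Set[Cell]) -> List[Cell]:
    """In-bounds 4-neighbors of `cell` that are not in a no-fly zone."""
    r, c = cell
    cand = [(r + dr, c + dc) for dr, dc in DIRECTIONS]
    return [(nr, nc) for nr, nc in cand
            if 0 <= nr < rows and 0 <= nc < cols and (nr, nc) not in forbidden]


def bfs(src: Cell, dst: Cell, rows: int, cols: int, forbidden: Set[Cell]) -> List[Cell]:
    """Shortest 4-connected path from src to dst, excluding src itself."""
    parent: Dict[Cell, Optional[Cell]] = {src: None}
    queue = deque([src])
    while queue and dst not in parent:
        cur = queue.popleft()
        for nxt in free_neighbors(cur, rows, cols, forbidden):
            if nxt not in parent:
                parent[nxt] = cur
                queue.append(nxt)
    if dst not in parent:
        raise ValueError(f"{dst} is unreachable from {src}")
    path: List[Cell] = []
    node = dst
    while node != src:  # follow parent links back to src
        path.append(node)
        node = parent[node]
    return path[::-1]


def reachable_cells(start: Cell, rows: int, cols: int, forbidden: Set[Cell]) -> Set[Cell]:
    """All free cells connected to start (flood fill)."""
    seen = {start}
    queue = deque([start])
    while queue:
        for nxt in free_neighbors(queue.popleft(), rows, cols, forbidden):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def compress_path(path: List[Cell]) -> List[Cell]:
    """Assumed compressPath: keep endpoints and turning points only."""
    if len(path) < 3:
        return list(path)
    out = [path[0]]
    for a, b, c in zip(path, path[1:], path[2:]):
        if (b[0] - a[0], b[1] - a[1]) != (c[0] - b[0], c[1] - b[1]):
            out.append(b)  # the direction changes at b
    out.append(path[-1])
    return out


def ggb_path(start: Cell, rows: int, cols: int, forbidden: Set[Cell]) -> List[Cell]:
    # Step 1: isReachable(start, forbidden).
    if not (0 <= start[0] < rows and 0 <= start[1] < cols) or start in forbidden:
        raise ValueError("start cell is outside the grid or in a no-fly zone")
    targets = reachable_cells(start, rows, cols, forbidden)
    current, path, visited = start, [start], {start}
    # Steps 3-10: greedy walk to the first unvisited free neighbor until stuck.
    while True:
        nxt = next((n for n in free_neighbors(current, rows, cols, forbidden)
                    if n not in visited), None)
        if nxt is None:
            break
        current = nxt
        visited.add(current)
        path.append(current)
    # Steps 11-14: BFS legs to every reachable cell the greedy walk missed.
    for p in sorted(targets - visited):
        if p in visited:  # already crossed by an earlier BFS leg
            continue
        leg = bfs(current, p, rows, cols, forbidden)
        path.extend(leg)
        visited.update(leg)
        current = p
    # Steps 15-16: compress, then append the return leg to start.
    path = compress_path(path)
    path.extend(bfs(current, start, rows, cols, forbidden))
    return path


print(ggb_path((0, 1), rows=1, cols=3, forbidden=set()))
# [(0, 1), (0, 2), (0, 0), (0, 1)]
```

Because compression drops collinear intermediate cells, coverage has to be measured on the path before compression rather than on the returned waypoints.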

3.2.1. Object Recognition Module

| Symbol | Meaning |
|---|---|
| | Input feature map |
| | Output feature map |
| | Convolution kernel |
| | Bias of the k-th kernel |
| | Spatial position coordinates in the output feature map |
| | Internal coordinates of the convolution kernel |
| | Input channel index |
| | Output channel index (kernel number) |
| | Number of channels of the input feature map |
| | Size of the convolution kernel |
| | Number of convolution kernels (number of output channels) |
| | Size of the grid |
| | Center position of the target box (predicted value) |
| | Center position of the ground-truth box (target value) |
| | Width and height of the target box (predicted value) |
| | Width and height of the ground-truth box (target value) |
| | Confidence score of the target box |
| | Probability of the target class |
| | Weighting factors that balance the contributions of the different terms |
| | Neighborhood of the current grid cell |
| | Neighborhood of the current raster cell u |
| | Density value of the raster cell |
| | Known cost from the starting point |
| | Heuristic estimate of the cost to the goal |
| | Map state matrix |
| | Coordinates of missing points |
| | Number of dynamic obstacles |
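The convolution entries of the symbol table (input and output feature maps, kernel, bias of the k-th kernel, output position, kernel-internal coordinates, input channel index, number of input channels, kernel size and number of kernels) match the standard multi-channel convolution Y_k(i, j) = b_k + Σ_c Σ_m Σ_n W_k(c, m, n) · X_c(i + m, j + n). Because the original symbols did not survive extraction, the letters used here and in the sketch below are assumptions. The sketch uses stride 1 and no padding and, like CNN frameworks, implements cross-correlation (the kernel is not flipped).

```python
from typing import List

Tensor3 = List[List[List[float]]]  # [channel c][row][column]
Tensor4 = List[Tensor3]            # [kernel k][channel c][m][n]


def conv2d(x: Tensor3, w: Tensor4, b: List[float]) -> Tensor3:
    """Y_k(i, j) = b_k + sum over c, m, n of W_k(c, m, n) * X_c(i + m, j + n).

    Stride 1, no padding; as in CNN libraries, this is cross-correlation
    (the kernel is not flipped).
    """
    C, H, W_in = len(x), len(x[0]), len(x[0][0])
    N, K = len(w), len(w[0][0])  # number of kernels, kernel size (K x K)
    if any(len(kern) != C for kern in w):
        raise ValueError("each kernel must have one slice per input channel")
    H_out, W_out = H - K + 1, W_in - K + 1
    y = [[[0.0] * W_out for _ in range(H_out)] for _ in range(N)]
    for k in range(N):                      # output channel (kernel index)
        for i in range(H_out):              # output row
            for j in range(W_out):          # output column
                acc = b[k]
                for c in range(C):          # input channel
                    for m in range(K):      # kernel row
                        for n in range(K):  # kernel column
                            acc += w[k][c][m][n] * x[c][i + m][j + n]
                y[k][i][j] = acc
    return y


# One 3 x 3 input channel, one 2 x 2 all-ones kernel, bias 0.5.
print(conv2d([[[1, 2, 3], [4, 5, 6], [7, 8, 9]]], [[[[1, 1], [1, 1]]]], [0.5]))
# [[[12.5, 16.5], [24.5, 28.5]]]
```

The output has N channels of size (H − K + 1) × (W − K + 1), which is why the number of kernels equals the number of output channels in the table.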

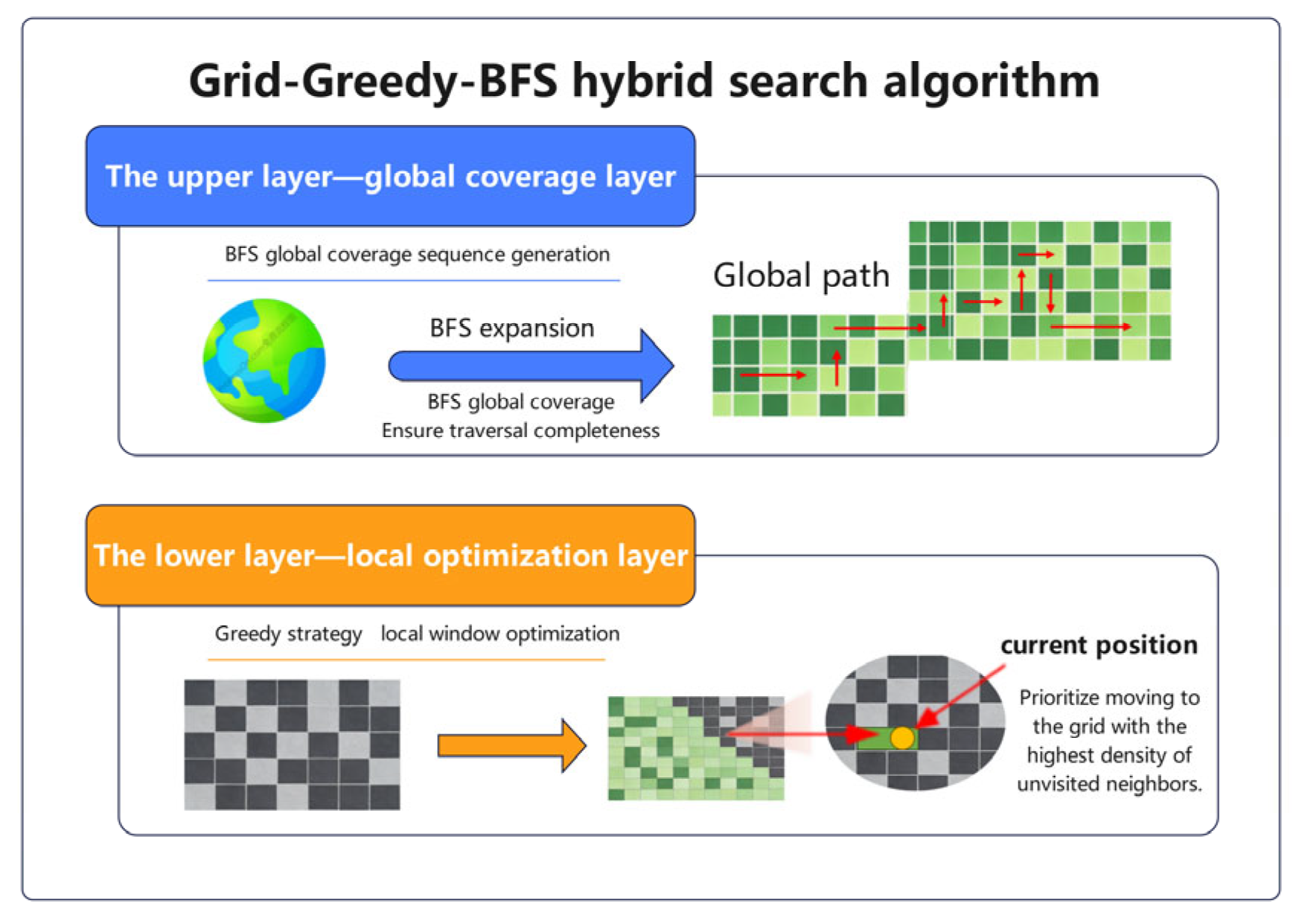

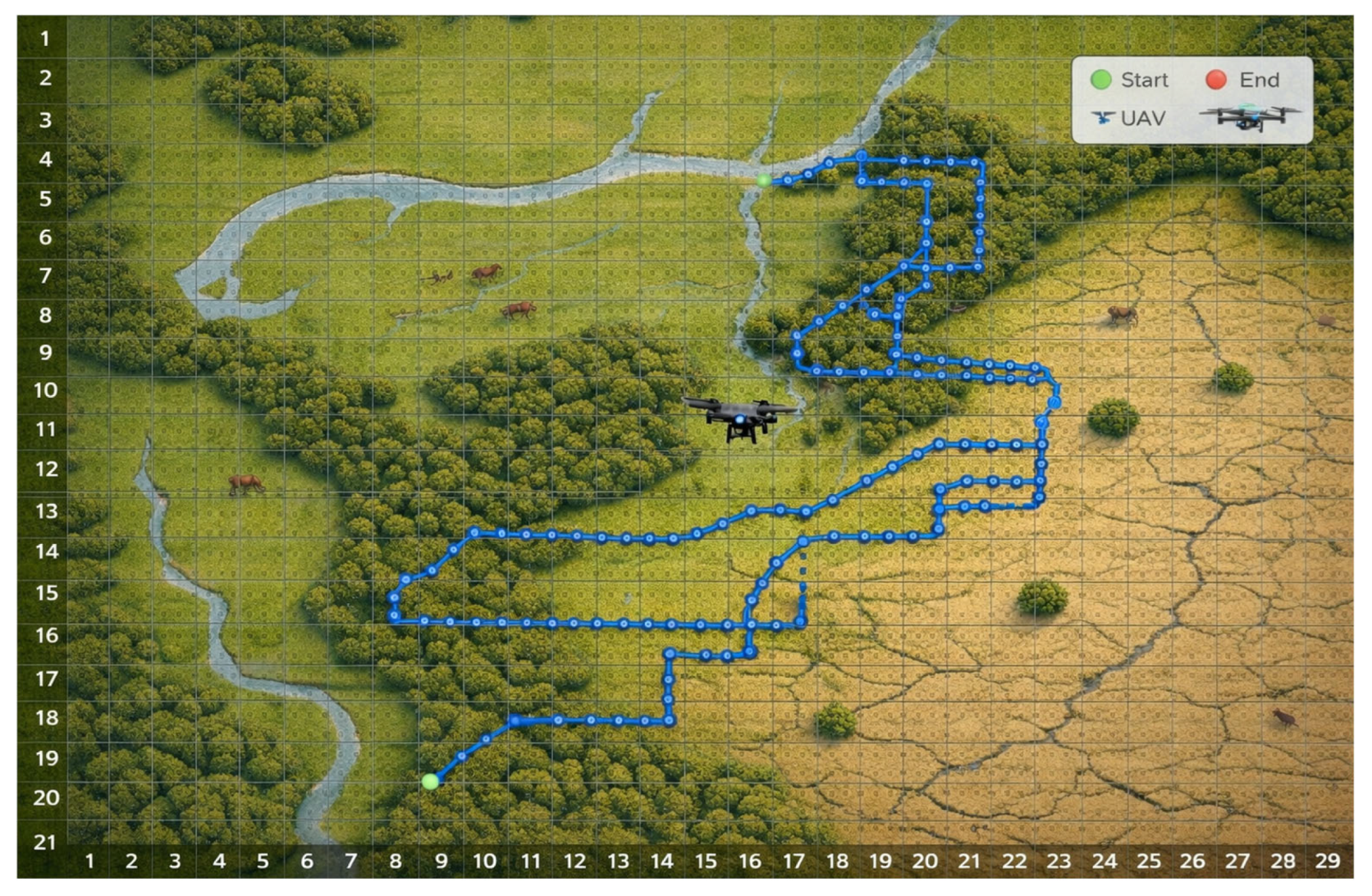
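The box-center, width/height, confidence, class-probability, grid-size and weighting-factor entries of the symbol table correspond to the terms of the classic YOLOv1 sum-squared loss, reproduced below for reference only; the paper's own loss equation is not recoverable from this version, so this is the textbook form rather than the authors' expression. Here S is the grid size, while B (the number of boxes predicted per cell) and the indicators 1^obj and 1^noobj are assumed notation; unhatted quantities are predictions, hatted quantities are targets, and λ_coord and λ_noobj are the weighting factors. Ultralytics YOLOv8 itself trains with a CIoU plus distribution focal box loss and a binary cross-entropy class loss, so this form should be read as conceptual.

$$
\begin{aligned}
\mathcal{L} ={}& \lambda_{\text{coord}} \sum_{i=1}^{S^2} \sum_{j=1}^{B} \mathbf{1}_{ij}^{\text{obj}} \left[ (x_i - \hat{x}_i)^2 + (y_i - \hat{y}_i)^2 \right] \\
&+ \lambda_{\text{coord}} \sum_{i=1}^{S^2} \sum_{j=1}^{B} \mathbf{1}_{ij}^{\text{obj}} \left[ \left(\sqrt{w_i} - \sqrt{\hat{w}_i}\right)^2 + \left(\sqrt{h_i} - \sqrt{\hat{h}_i}\right)^2 \right] \\
&+ \sum_{i=1}^{S^2} \sum_{j=1}^{B} \mathbf{1}_{ij}^{\text{obj}} \left(C_i - \hat{C}_i\right)^2
+ \lambda_{\text{noobj}} \sum_{i=1}^{S^2} \sum_{j=1}^{B} \mathbf{1}_{ij}^{\text{noobj}} \left(C_i - \hat{C}_i\right)^2 \\
&+ \sum_{i=1}^{S^2} \mathbf{1}_{i}^{\text{obj}} \sum_{c \in \text{classes}} \left(p_i(c) - \hat{p}_i(c)\right)^2
\end{aligned}
$$

The square roots on width and height make a given absolute error cost more on small boxes than on large ones, and λ_noobj down-weights the many cells that contain no object.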

3.2.2. Path Planning Module
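The symbol table in Section 3.2.1 lists a known cost from the starting point, a heuristic estimate of the cost to the goal and the neighborhood of the current grid cell, which correspond to the standard A* evaluation f(n) = g(n) + h(n). The following is only a generic sketch of that evaluation, assuming unit step cost, a 4-connected neighborhood and a Manhattan heuristic; it does not model the raster density values, map state matrix or dynamic obstacles listed in the table.

```python
import heapq
from typing import Dict, List, Optional, Set, Tuple

Cell = Tuple[int, int]


def manhattan(a: Cell, b: Cell) -> int:
    """h(n): admissible heuristic for unit-cost 4-connected moves."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def a_star(start: Cell, goal: Cell, rows: int, cols: int,
           blocked: Set[Cell]) -> Optional[List[Cell]]:
    """Shortest 4-connected path from start to goal, or None if none exists."""
    g: Dict[Cell, int] = {start: 0}  # g(n): known cost from the start
    parent: Dict[Cell, Cell] = {}
    heap = [(manhattan(start, goal), 0, start)]  # entries are (f, g, cell)
    while heap:
        _f, g_cur, cur = heapq.heappop(heap)
        if cur == goal:
            path = [cur]
            while cur in parent:  # walk parent links back to the start
                cur = parent[cur]
                path.append(cur)
            return path[::-1]
        if g_cur > g[cur]:
            continue  # stale entry superseded by a cheaper route
        r, c = cur
        for nxt in ((r - 1, c), (r, c + 1), (r + 1, c), (r, c - 1)):
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols) or nxt in blocked:
                continue
            g_new = g_cur + 1
            if g_new < g.get(nxt, float("inf")):
                g[nxt] = g_new
                parent[nxt] = cur
                heapq.heappush(heap, (g_new + manhattan(nxt, goal), g_new, nxt))
    return None


print(a_star((0, 0), (2, 0), rows=3, cols=3, blocked={(1, 0), (1, 1)}))
```

With a consistent heuristic such as the Manhattan distance on a unit-cost 4-connected grid, the first time the goal is popped its cost is optimal, so the search can stop there.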

3.2.3. Overall Time Complexity of the Algorithm

4. Experimental Results and Analysis

4.1. Dataset and Training

4.2. Experimental Setup

4.3. Performance Evaluation

4.3.1. Performance Comparison of Target Recognition Algorithms

4.3.2. Performance Comparison of Path Planning Algorithms

5. Discussion and Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| UAV | Unmanned aerial vehicle |
| BFS | Breadth-first search |
| CPP | Coverage path planning |
| GGB | Grid-Greedy-BFS hybrid search algorithm |


| Model | Platform | Inference time | mAP@0.5 | Decay amplitude |
|---|---|---|---|---|
| YOLOv5nu | Raspberry Pi 4B | 126 ms | 54.9% | — |
| YOLOv5su | Raspberry Pi 4B | 380 ms | 68.8% | — |
| YOLOv8n | Raspberry Pi 4B | 162 ms | 86.2% | 11.3 |
| YOLOv8s | Raspberry Pi 4B | 400 ms | 70.8% | — |
| YOLOv8n-Lite | Raspberry Pi 4B | 83 ms | 84.6% | 4.8 |
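Assuming the reported inference time is the per-frame latency on the Raspberry Pi 4B, it converts directly into throughput. The snippet below works through that arithmetic for YOLOv8n and YOLOv8n-Lite using only the table values: YOLOv8n-Lite reaches roughly 12.0 frames per second against 6.2 for YOLOv8n (a 1.95x speedup), at a cost of 1.6 percentage points of mAP@0.5. End-to-end rates could be lower once capture, pre-processing and serial transmission are included.

```python
# Values copied from the detector table (Raspberry Pi 4B).
inference_ms = {"YOLOv8n": 162.0, "YOLOv8n-Lite": 83.0}
map50 = {"YOLOv8n": 86.2, "YOLOv8n-Lite": 84.6}


def fps(ms: float) -> float:
    """Throughput implied by a per-frame inference time in milliseconds."""
    return 1000.0 / ms


speedup = inference_ms["YOLOv8n"] / inference_ms["YOLOv8n-Lite"]
map_drop_pp = map50["YOLOv8n"] - map50["YOLOv8n-Lite"]  # percentage points

for model, ms in inference_ms.items():
    print(f"{model}: {fps(ms):.1f} FPS")
print(f"speedup x{speedup:.2f}, mAP@0.5 lower by {map_drop_pp:.1f} pp")
```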

| Algorithm | Completion time | Coverage | Replanning delay |
|---|---|---|---|
| Random walk algorithm | 1.15 ms | 76.2% | — |
| BFS | 1.92 ms | 99.1% | 45 ms |
| Greedy algorithm | 3.06 ms | 82.3% | 12 ms |
| GGB | 0.31 ms | 98.7% | 28 ms |
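To make the relative figures explicit, the arithmetic below expresses each baseline relative to GGB using only the values in the path-planning table, assuming all columns were measured under the same conditions for every algorithm. On these numbers GGB completes about 6.2 times faster than BFS while covering 0.4 percentage points less, and its replanning delay is lower than that of BFS (45 ms) but higher than that of the greedy algorithm (12 ms).

```python
from typing import Dict, Optional, Tuple

# (completion time in ms, coverage in %, replanning delay in ms or None),
# copied from the path-planning table.
RESULTS: Dict[str, Tuple[float, float, Optional[float]]] = {
    "Random walk": (1.15, 76.2, None),
    "BFS": (1.92, 99.1, 45.0),
    "Greedy": (3.06, 82.3, 12.0),
    "GGB": (0.31, 98.7, 28.0),
}


def compare_to_ggb(name: str) -> Dict[str, Optional[float]]:
    """Ratios > 1 mean GGB is faster; a positive gap means the baseline covers more."""
    t, cov, delay = RESULTS[name]
    t_g, cov_g, delay_g = RESULTS["GGB"]
    return {
        "time_ratio": t / t_g,
        "coverage_gap_pp": cov - cov_g,
        "delay_ratio": None if delay is None or delay_g is None else delay / delay_g,
    }


for baseline in ("Random walk", "BFS", "Greedy"):
    print(baseline, compare_to_ggb(baseline))
```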

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).