Submitted: 10 April 2026

Posted: 13 April 2026

Abstract

Keywords:

1. Introduction

2. Data and Methods

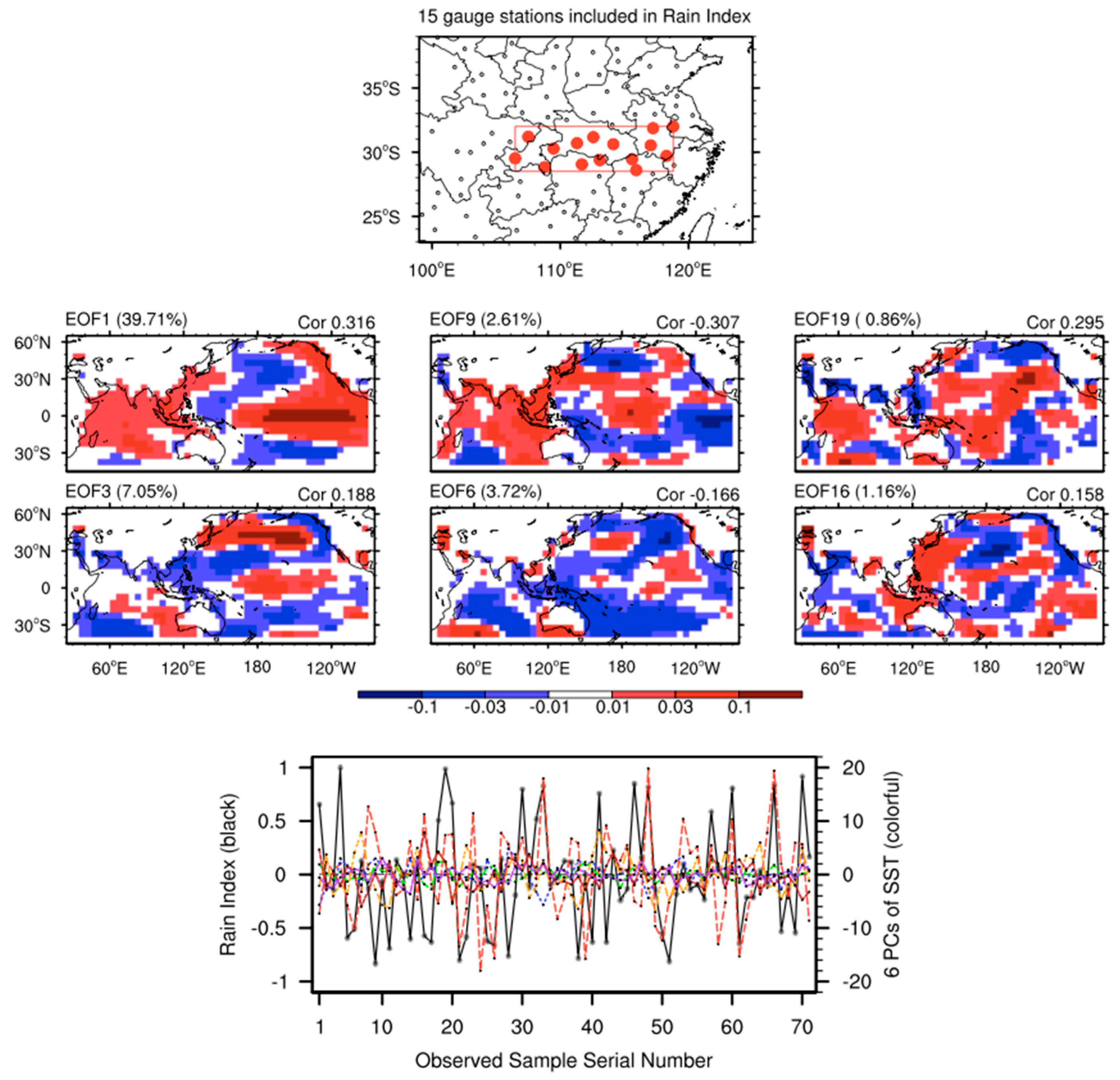

2.1. Samples for the NN Model

2.2. The NN Prediction Model

2.3. Experimental Settings and Evaluation Metrics

3. Results and Analysis

3.1. Training Process Analysis

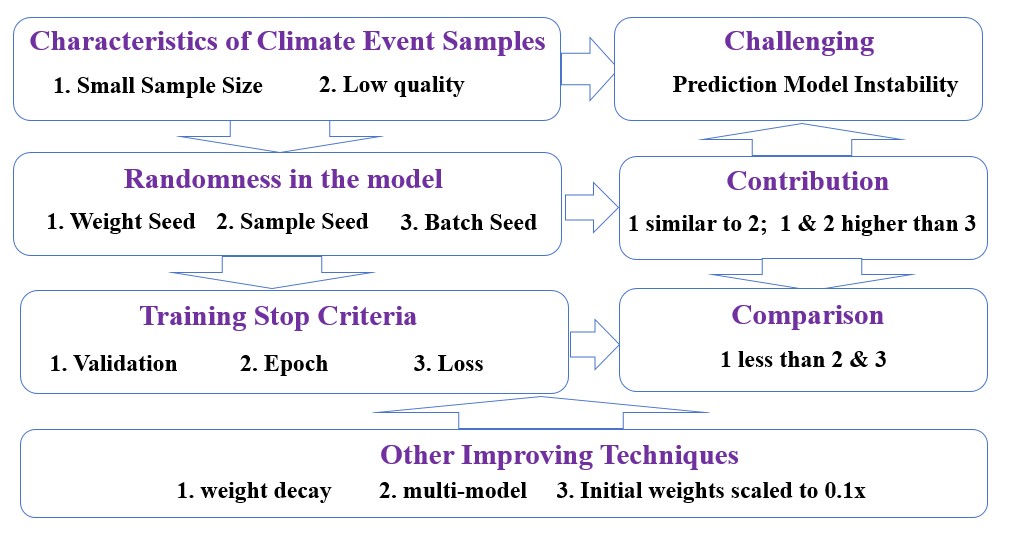

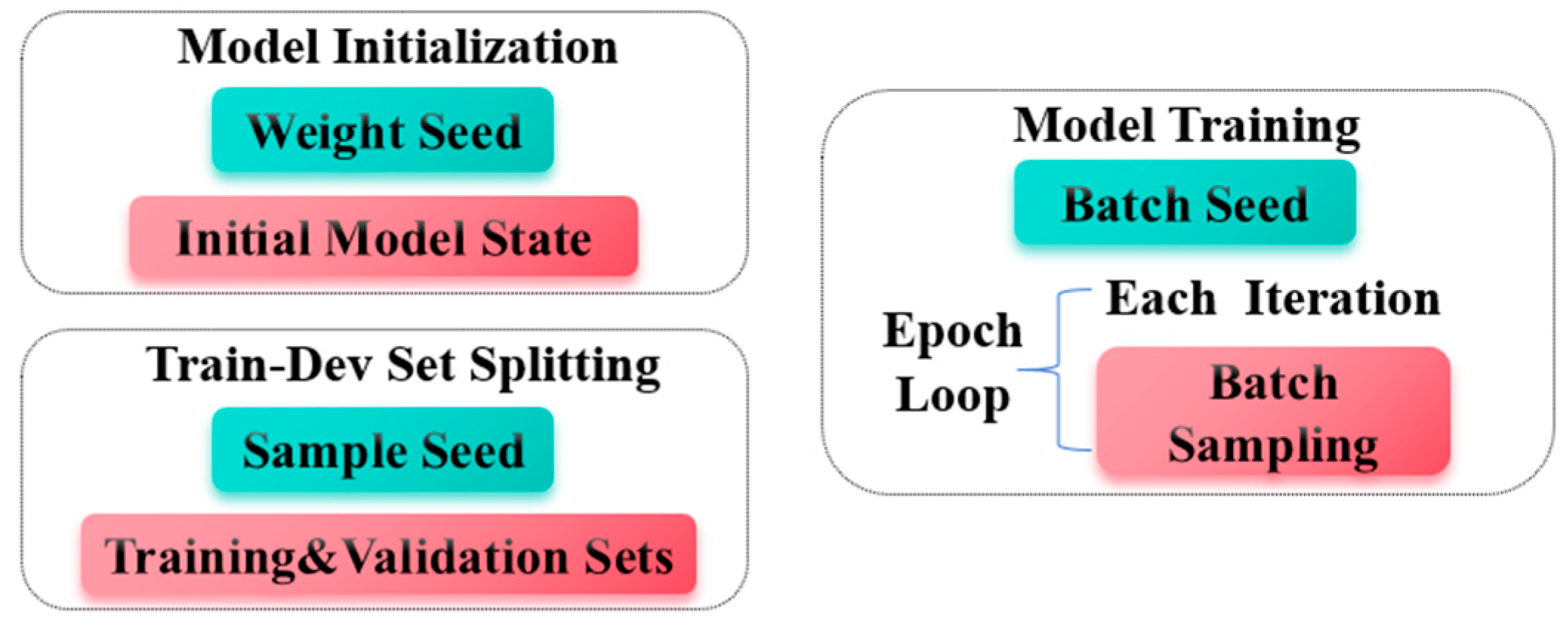

3.2. Contribution of Different Random Seeds to Model Instability

3.3. Multi-Perspective Analysis of Model Performance

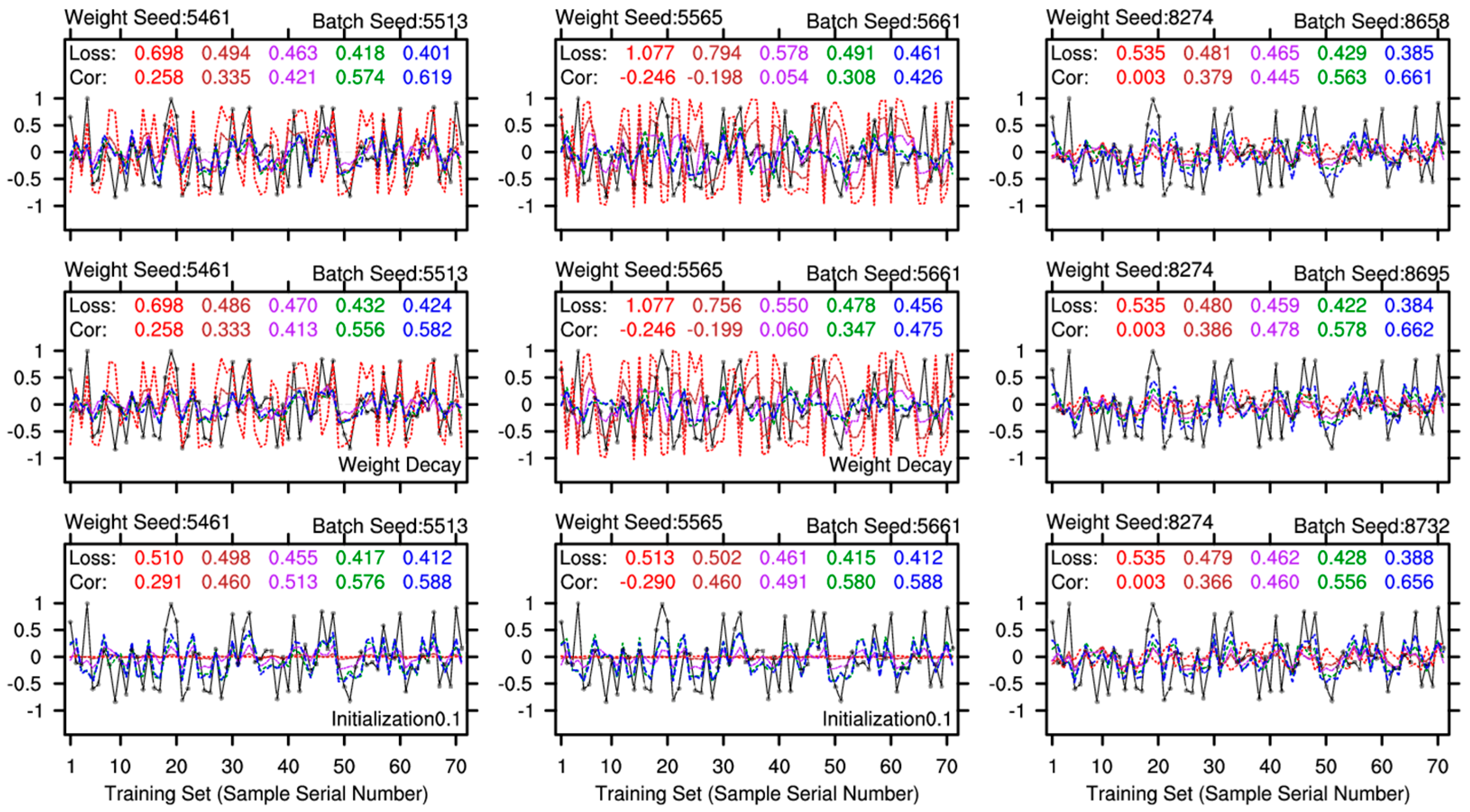

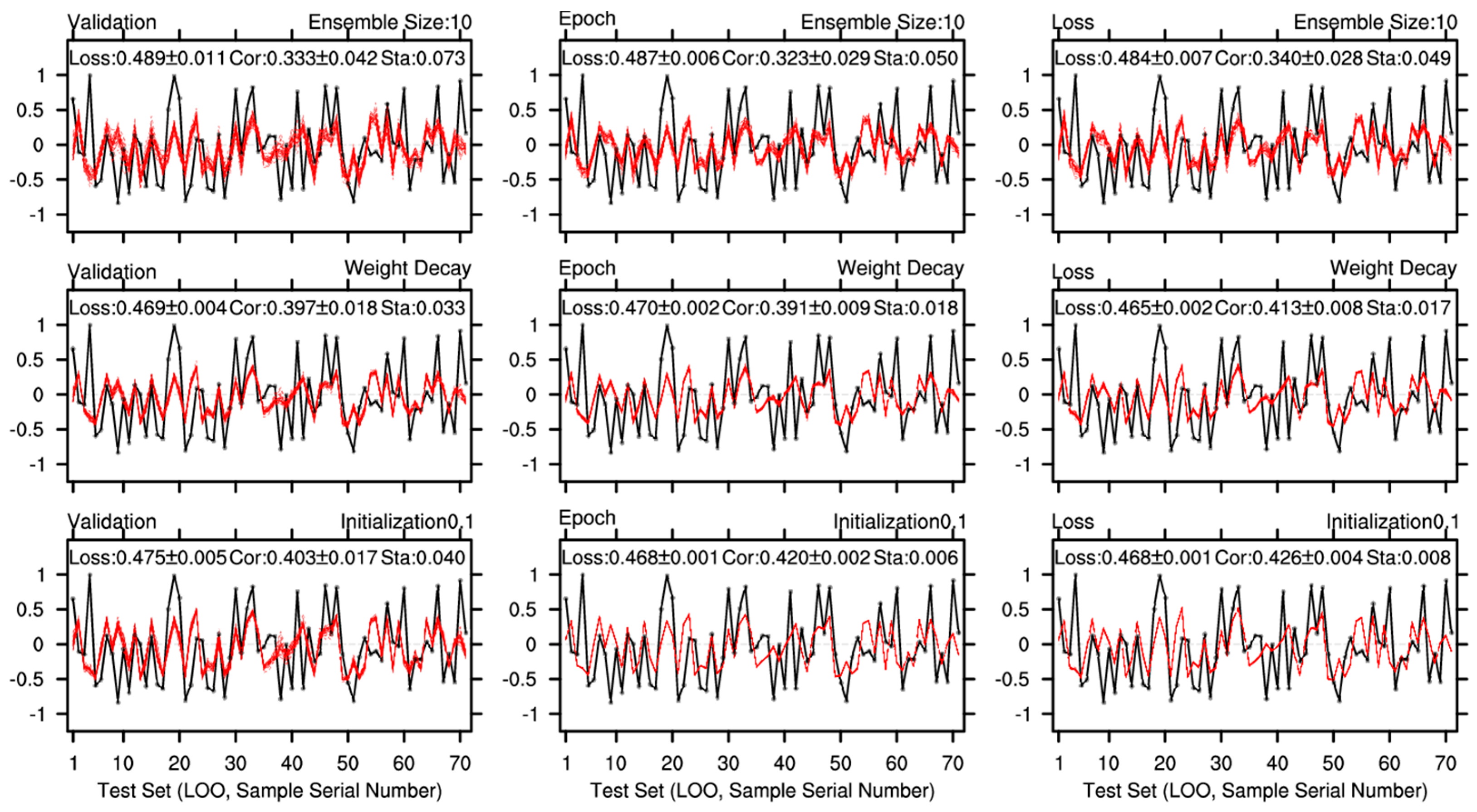

3.4. Different Techniques for Enhancing Model Stability

4. Discussion

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Appendix A

| Category | Name | Description (default) |
|---|---|---|
| Architecture | Nx | Number of nodes in the input layer (6). |
| Architecture | N1 | Number of nodes in the first hidden layer (8). |
| Architecture | N2 | Number of nodes in the second hidden layer (3). |
| Training Stop | Validation | Training stops when there is no further improvement on the validation set. |
| Training Stop | Epoch | Training stops after a preset number of epochs (10). |
| Training Stop | Loss | Training stops when the loss falls below a preset threshold (0.4). |
| Randomness | weight seed | Each seed corresponds to one initial state of the model. |
| Randomness | sample seed | Determines the train-validation dataset split. |
| Randomness | batch seed | Controls randomness during model training. |
| Stabilization | Weight Decay | Pushes weights and biases toward zero (false). |
| Stabilization | Initialization0.1 | Scales the initial weights to 0.1x (false). |
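The settings listed in the table above can be gathered into a single configuration object, with each of the three seeds driving its own random number generator so that one source of randomness can be varied while the others stay fixed. The following is a minimal sketch under stated assumptions, not the authors' implementation: the names `ExperimentConfig`, `init_weights`, and `split_samples` are hypothetical, the single output node and the uniform(-1, 1) initial distribution are assumptions, and only the defaults shown in the table are taken from the source.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentConfig:
    """Hypothetical container for the settings in the table (defaults from the table)."""
    n_x: int = 6                  # input layer size
    n_1: int = 8                  # first hidden layer size
    n_2: int = 3                  # second hidden layer size
    max_epochs: int = 10          # "Epoch" stop criterion
    loss_threshold: float = 0.4   # "Loss" stop criterion
    weight_seed: int = 0          # controls the initial model state
    sample_seed: int = 0          # controls the train-validation split
    batch_seed: int = 0           # controls randomness during training
    weight_decay: bool = False
    init_scale_0_1: bool = False  # "Initialization0.1" option


def init_weights(cfg):
    """Draw initial weights from a generator that depends only on weight_seed.

    Assumptions: one output node and a uniform(-1, 1) distribution.
    """
    rng = random.Random(cfg.weight_seed)
    scale = 0.1 if cfg.init_scale_0_1 else 1.0
    sizes = [cfg.n_x, cfg.n_1, cfg.n_2, 1]
    return [
        [[scale * rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
        for n_in, n_out in zip(sizes, sizes[1:])
    ]


def split_samples(cfg, n_samples, n_validation):
    """Split sample indices using a generator that depends only on sample_seed."""
    rng = random.Random(cfg.sample_seed)
    indices = list(range(n_samples))
    rng.shuffle(indices)
    return sorted(indices[n_validation:]), sorted(indices[:n_validation])
```

Because each generator is seeded separately, changing `weight_seed` leaves the train-validation split untouched, which is what makes it possible to attribute variation in the results to a single seed.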

| Option | Values | Description |
|---|---|---|
| Type | Single | The result is the output of each individual NN model. |
| Type | Ensemble | The result is the mean of the outputs of multiple NN models. |
| Category | OnlyTrain | All samples serve as the Train-Dev (training) set. |
| Category | TrainTest | Some samples are held out for testing. |
| Category | LOO | Leave-One-Out cross-validation experiment. |
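The Ensemble and LOO options in the table above can be illustrated with a short sketch. It assumes that each model's predictions are stored as a plain list with one value per sample. The function names `ensemble_mean` and `leave_one_out_splits` are hypothetical and are not taken from the source.

```python
from statistics import fmean


def ensemble_mean(member_predictions):
    """Average the predictions of several models, sample by sample.

    member_predictions: list of equally long lists, one list per model.
    """
    return [fmean(values) for values in zip(*member_predictions)]


def leave_one_out_splits(n_samples):
    """Yield (training indices, held-out index) pairs, one per sample."""
    for held_out in range(n_samples):
        training = [i for i in range(n_samples) if i != held_out]
        yield training, held_out
```

For example, `ensemble_mean([[1.0, 2.0], [3.0, 4.0]])` averages two models over two samples, and `leave_one_out_splits(3)` produces three splits, each holding out a different sample.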

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).