Submitted: 09 April 2026

Posted: 13 April 2026

Abstract

Keywords:

1. Introduction

2. Motivation: Suppressing Volume Is the Wrong Response

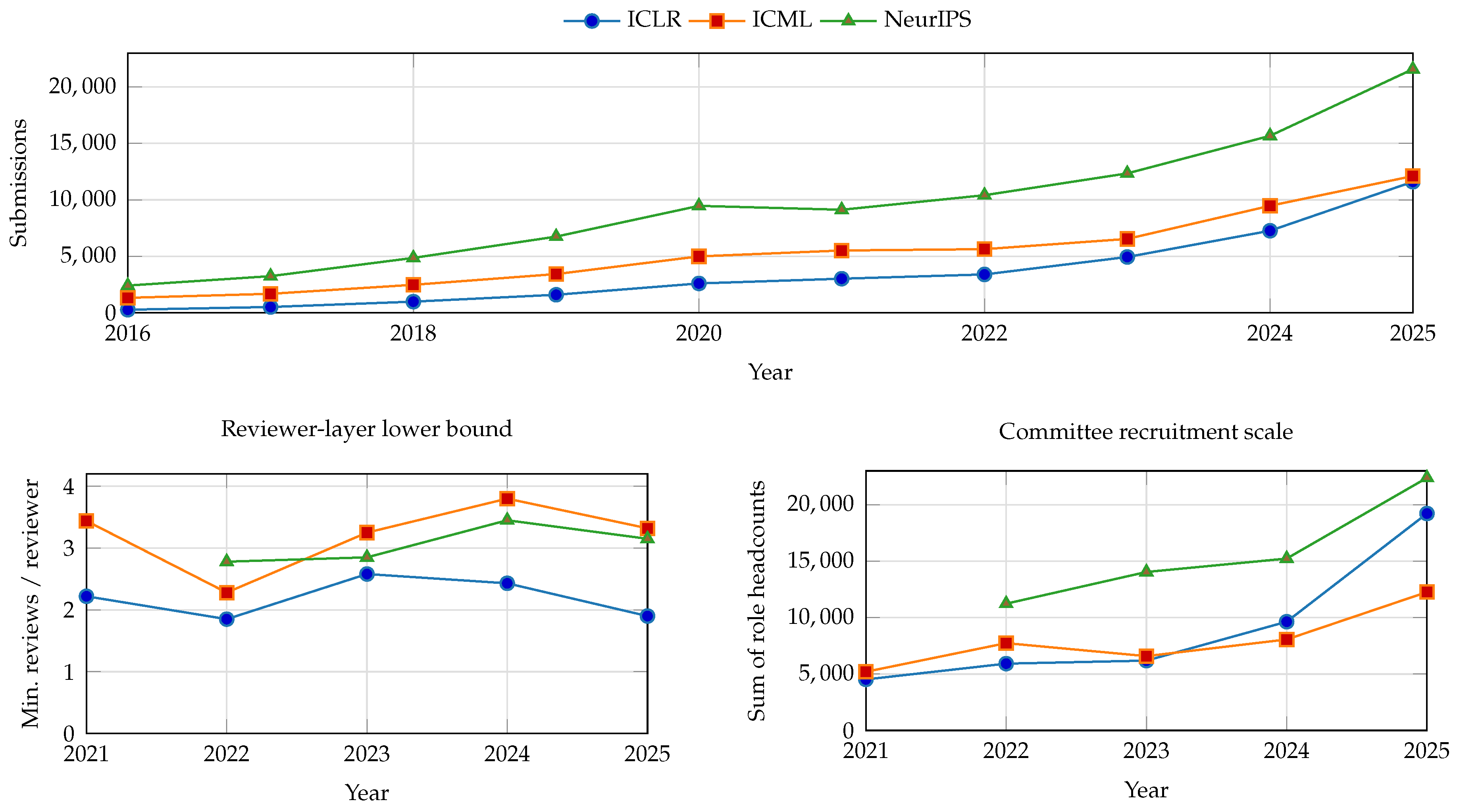

2.1. The Big-Three Venues Already Show the Curve

2.2. Abundance Can Improve the Scientific Record

2.3. Fairness Should Attach to Due Process, Not Equal Labor per PDF

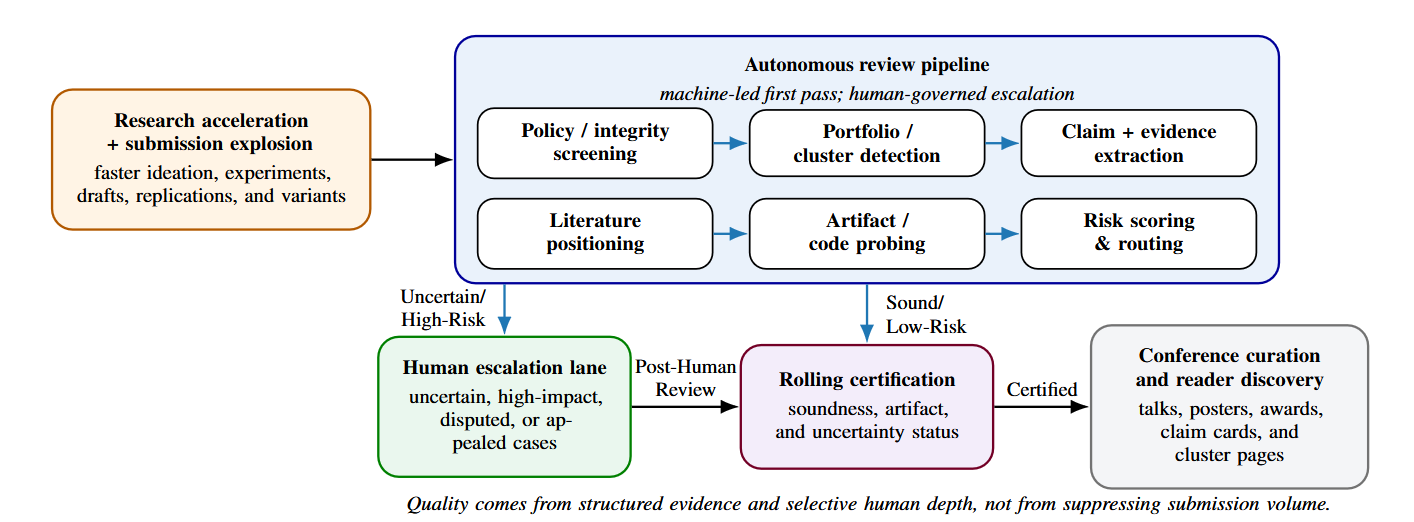

3. The Core Thesis: Autonomous Review Pipelines Should Be the Control Plane

4. Strict Standards Can Coexist with Faster Research Cycles

5. Human Roles, Governance, and the Economics of Attention

5.1. A Falsifiable Model of Institutional Attention

5.2. Failure Modes and Safeguards

6. Downstream Implication: From Seasonal Batches to Continuous Review

7. A Staged Path to Venue Adoption

8. Alternative Views

- View 1: The labor deficit model.

- View 2: Supply suppression.

- View 3: The egalitarian fallacy.

- View 4: The sovereign AI assessor.

- View 5: Fused certification and curation.

- View 6: Post-conference publication.

9. Research Agenda

- 1. Granular evaluation frameworks.

- 2. Institutional policy and governance.

- 3. Systems and deployment.

10. Conclusions

Acknowledgments

Appendix A. Design Layers

| Surface | Primary users | Typical outputs | Main benefit |

|---|---|---|---|

| Author-side preflight | Authors | Clarity checks, missing-comparison warnings, artifact prompts, and claim-level feedback. | Improves papers before costly human review. |

| Venue-side control plane | Chairs, reviewers, editors | Routing signals, cluster detection, evidence reports, and escalation triggers. | Reduces routine human load and improves consistency. |

| Reader-side discovery | Readers, meta-researchers, curators | Claim cards, cluster pages, topic maps, and comparative summaries. | Makes large literatures more navigable. |

| Rigor dimension | Human-first seasonal review | Pipeline-first certification |

|---|---|---|

| Coverage of minimum checks | Many routine checks are reviewer-dependent and uneven under heavy load. | Every submission can receive the same policy, disclosure, overlap, and artifact-availability checks. |

| Evidence completeness | Claim reconstruction, related-work positioning, and artifact probing vary sharply with reviewer time and expertise. | Claim cards, retrieval-based literature positioning, and bounded artifact probes can be required before human certification. |

| Boundary-case scrutiny | Expert time is diluted by routine first-pass screening. | Human depth is concentrated on uncertain, high-impact, disputed, or appealed cases. |

| Decision semantics | One scalar verdict often hides which dimension actually failed. | Typed certificates can record policy, evidence, artifact, and escalation status separately. |

| Auditability | Failure modes are dispersed across private reviews and hard to diagnose later. | Module outputs, overrides, and escalation thresholds can be logged, audited, and recalibrated. |
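
The "Decision semantics" row above contrasts a single scalar verdict with typed certificates that record policy, evidence, artifact, and escalation status separately. A minimal sketch of such a record follows. The class and field names (`TypedCertificate`, `Status`, `failed_dimensions`) are hypothetical illustrations, not a schema defined by this paper.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Status(Enum):
    PASS = "pass"
    FAIL = "fail"
    UNCERTAIN = "uncertain"


@dataclass
class TypedCertificate:
    """Hypothetical typed certificate: one status per dimension, not one score."""
    submission_id: str
    policy: Status
    evidence: Status
    artifact: Status
    escalated: bool = False
    notes: List[str] = field(default_factory=list)

    def failed_dimensions(self) -> List[str]:
        # Report which dimensions failed, which a scalar verdict would hide.
        dims = {"policy": self.policy, "evidence": self.evidence, "artifact": self.artifact}
        return [name for name, status in dims.items() if status is Status.FAIL]

    def scalar_verdict(self) -> str:
        # Lossy projection onto the single verdict used in seasonal review.
        return "reject" if self.failed_dimensions() else "accept"


cert = TypedCertificate(
    "sub-001",
    policy=Status.PASS,
    evidence=Status.FAIL,
    artifact=Status.UNCERTAIN,
    escalated=True,
    notes=["Baseline comparison missing for the main claim."],
)
print(cert.scalar_verdict(), cert.failed_dimensions())  # reject ['evidence']
```

The scalar projection is kept only to show what is lost: both the failed evidence check and the uncertain artifact status disappear once the record is collapsed to "reject".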

| Layer | Dominant question | Autonomous review pipeline responsibility | Human responsibility |

|---|---|---|---|

| Intake | Is the submission admissible? | Format, anonymity, disclosure, overlap, and integrity screening. | Appeals on flags, policy exceptions, and sanctions. |

| Evidence build | What does the paper claim and what evidence surrounds it? | Claim extraction, literature positioning, artifact probing, and cluster formation. | Inspect high-risk or high-impact claims and request clarifications. |

| Escalation | Where is scarce human depth most valuable? | Risk scoring, uncertainty estimates, portfolio context, and disagreement signals. | Add review depth, adjudicate edge cases, and redirect resources. |

| Certification | What enters the technical record? | Structured report with typed evidence and uncertainty notes. | Accountable final judgment on escalated or borderline cases. |

| Curation | What gets scarce visibility? | Reader summaries, cluster pages, topic maps, and candidate highlight lists. | Talks, posters, awards, synthesis sessions, and agenda setting. |

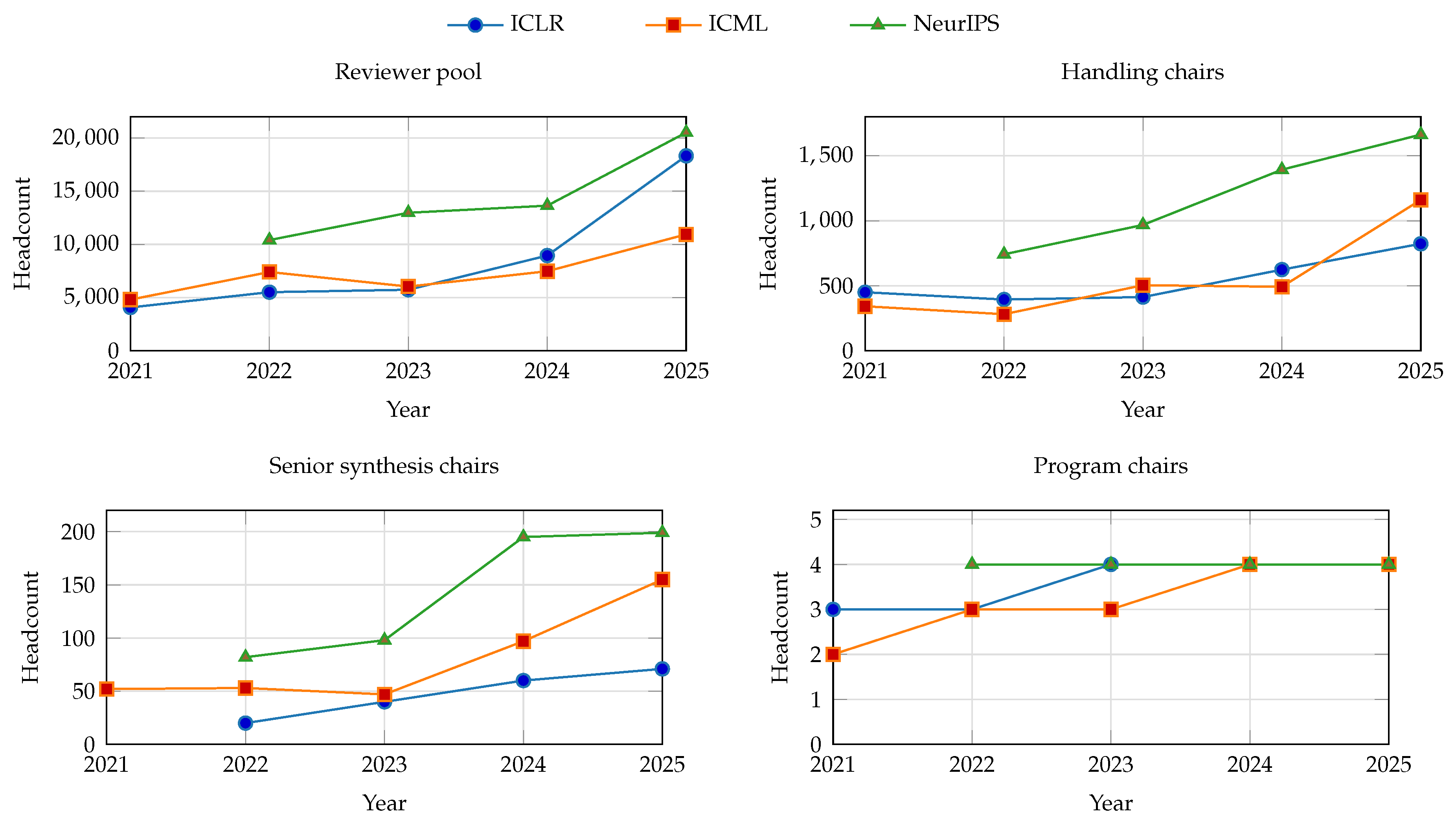

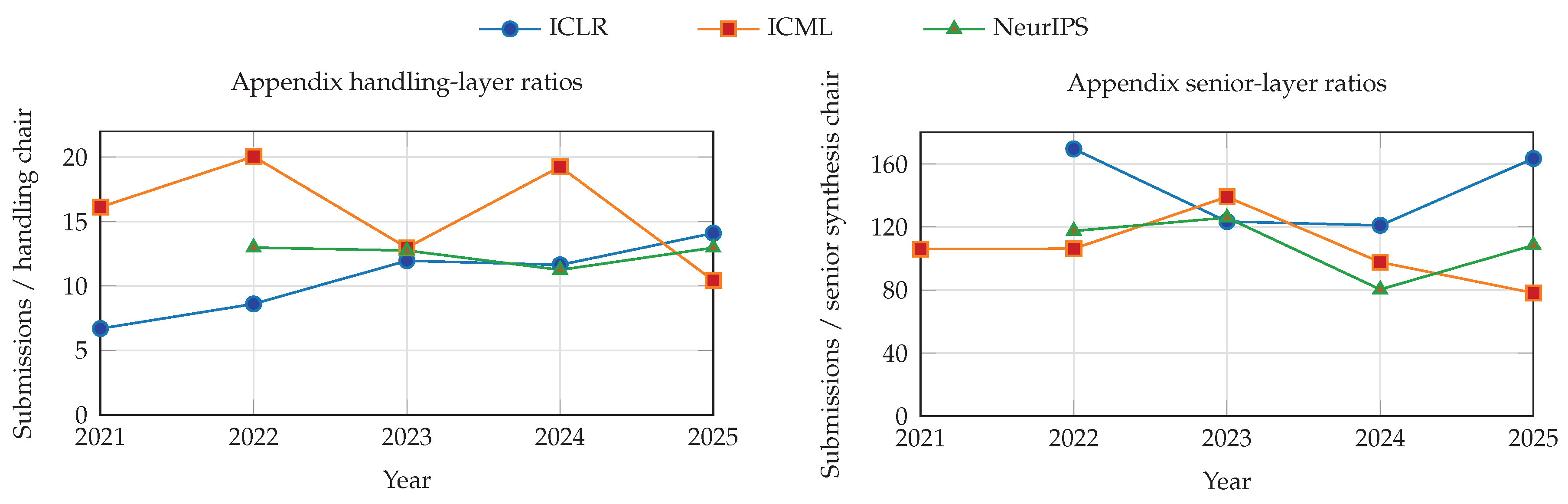

Appendix B. Figure 2 Data, Sources, and Derived Ratios

| Venue | 2016 | 2017 | 2018 | 2019 | 2020 | 2021 | 2022 | 2023 | 2024 | 2025 | 2016→2025 |

|---|---|---|---|---|---|---|---|---|---|---|---|

| ICLR | 265 | 507 | 981 | 1,591 | 2,594 | 3,014 | 3,391 | 4,938 | 7,262 | 11,603 | 43.78x |

| ICML | 1,320 | 1,676 | 2,473 | 3,424 | 4,990 | 5,513 | 5,630 | 6,538 | 9,473 | 12,107 | 9.17x |

| NeurIPS | 2,406 | 3,240 | 4,856 | 6,743 | 9,467 | 9,122 | 10,411 | 12,343 | 15,671 | 21,575 | 8.97x |
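
The 2016→2025 column can be recomputed from the endpoint counts in the table above. The sketch below does so and also derives an implied compound annual growth rate over the nine year-to-year steps. The growth rate is an illustrative addition rather than a value reported in the table, and the names `SUBMISSIONS`, `growth_multiple`, and `cagr` are hypothetical.

```python
# Endpoint submission counts copied from the table above: (2016, 2025).
SUBMISSIONS = {
    "ICLR": (265, 11_603),
    "ICML": (1_320, 12_107),
    "NeurIPS": (2_406, 21_575),
}


def growth_multiple(start: int, end: int) -> float:
    """Ratio of the final count to the initial count."""
    return end / start


def cagr(start: int, end: int, steps: int = 9) -> float:
    """Implied compound annual growth rate over `steps` yearly intervals."""
    return (end / start) ** (1 / steps) - 1


for venue, (first, last) in SUBMISSIONS.items():
    print(f"{venue}: {growth_multiple(first, last):.2f}x, "
          f"about {cagr(first, last):.0%} per year")
```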

| Venue | Layer | 2021 | 2022 | 2023 | 2024 | 2025 |

|---|---|---|---|---|---|---|

| ICLR | Reviewer pool | 4,072 | 5,507 | 5,734 | 8,950 | 18,325 |

| ICLR | Handling chair (AC) | 450 | 394 | 413 | 624 | 823 |

| ICLR | Senior synthesis (SAC) | — | 20 | 40 | 60 | 71 |

| ICLR | Program chairs | 3 | 3 | 4 | 4 | 4 |

| ICML | Reviewer pool | 4,807 | 7,403 | 6,035 | 7,474 | 10,943 |

| ICML | Handling chair (meta-reviewer) | 342 | 281 | 504 | 492 | 1,161 |

| ICML | Senior synthesis (senior meta-reviewer) | 52 | 53 | 47 | 97 | 155 |

| ICML | Program chairs | 2 | 3 | 3 | 4 | 4 |

| NeurIPS | Reviewer pool | — | 10,406 | 12,974 | 13,640 | 20,518 |

| NeurIPS | Handling chair (AC) | — | 742 | 968 | 1,393 | 1,663 |

| NeurIPS | Senior synthesis (SAC) | — | 82 | 98 | 195 | 199 |

| NeurIPS | Program chairs | — | 4 | 4 | 4 | 4 |

| Venue (total committee headcount) | 2021 | 2022 | 2023 | 2024 | 2025 |

|---|---|---|---|---|---|

| ICLR | 4,525 | 5,924 | 6,191 | 9,638 | 19,223 |

| ICML | 5,203 | 7,740 | 6,589 | 8,067 | 12,263 |

| NeurIPS | — | 11,234 | 14,044 | 15,232 | 22,384 |

| Venue (review assignments per reviewer, at 3 per submission) | 2021 | 2022 | 2023 | 2024 | 2025 |

|---|---|---|---|---|---|

| ICLR | 2.22 | 1.85 | 2.58 | 2.43 | 1.90 |

| ICML | 3.44 | 2.28 | 3.25 | 3.80 | 3.32 |

| NeurIPS | — | 2.78 | 2.85 | 3.45 | 3.15 |

| Venue (submissions per handling chair) | 2021 | 2022 | 2023 | 2024 | 2025 |

|---|---|---|---|---|---|

| ICLR | 6.70 | 8.61 | 11.96 | 11.64 | 14.10 |

| ICML | 16.12 | 20.04 | 12.97 | 19.25 | 10.43 |

| NeurIPS | — | 12.98 | 12.75 | 11.25 | 12.97 |

| Venue (submissions per senior synthesis member) | 2021 | 2022 | 2023 | 2024 | 2025 |

|---|---|---|---|---|---|

| ICLR | — | 169.55 | 123.45 | 121.03 | 163.42 |

| ICML | 106.02 | 106.23 | 139.11 | 97.66 | 78.11 |

| NeurIPS | — | 117.49 | 125.95 | 80.36 | 108.42 |

| Venue (submissions per program chair) | 2021 | 2022 | 2023 | 2024 | 2025 |

|---|---|---|---|---|---|

| ICLR | 1,004.67 | 1,130.33 | 1,234.50 | 1,815.50 | 2,900.75 |

| ICML | 2,756.50 | 1,876.67 | 2,179.33 | 2,368.25 | 3,026.75 |

| NeurIPS | — | 2,408.50 | 3,085.75 | 3,917.75 | 5,393.75 |
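
The derived tables in this appendix appear to follow from simple arithmetic on the submission and committee counts. The sketch below reproduces the 2025 column under that reading. Two points are assumptions inferred from matching the tabulated values rather than stated definitions: that each ratio is submissions divided by the headcount of one role, and that the reviewer-load figure uses three review assignments per submission. All function and key names are hypothetical.

```python
# 2025 values copied from the Appendix B tables above.
SUBMISSIONS_2025 = {"ICLR": 11_603, "ICML": 12_107, "NeurIPS": 21_575}
COMMITTEE_2025 = {
    "ICLR": {"reviewers": 18_325, "handling_chairs": 823, "senior": 71, "program_chairs": 4},
    "ICML": {"reviewers": 10_943, "handling_chairs": 1_161, "senior": 155, "program_chairs": 4},
    "NeurIPS": {"reviewers": 20_518, "handling_chairs": 1_663, "senior": 199, "program_chairs": 4},
}
REVIEWS_PER_SUBMISSION = 3  # ASSUMPTION: inferred from the tabulated ratios


def derived_ratios(venue: str) -> dict:
    """Recompute the per-role load figures for one venue (2025 only)."""
    subs = SUBMISSIONS_2025[venue]
    roles = COMMITTEE_2025[venue]
    return {
        "committee_total": sum(roles.values()),
        "assignments_per_reviewer": round(REVIEWS_PER_SUBMISSION * subs / roles["reviewers"], 2),
        "submissions_per_handling_chair": round(subs / roles["handling_chairs"], 2),
        "submissions_per_senior": round(subs / roles["senior"], 2),
        "submissions_per_program_chair": round(subs / roles["program_chairs"], 2),
    }


for venue in SUBMISSIONS_2025:
    print(venue, derived_ratios(venue))
```

Read this way, the load per program chair grows roughly in step with submissions because that headcount is nearly fixed, while the reviewer and handling-chair ratios stay within a narrower band.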

Appendix C. Extended Operational Sketch

- 1. Continuous intake. Authors submit at any time. The system checks anonymity, formatting, required disclosures, overlap, and other basic policy conditions.

- 2. Evidence construction. The system extracts major claims, clusters nearby papers, retrieves relevant literature, and probes released artifacts where feasible.

- 3. Structured report. Each submission receives a pipeline report with claim cards, uncertainty notes, and routing signals. Authors may be asked for clarifications or artifact fixes before any human escalation.

- 4. Selective escalation. Humans are assigned mainly to uncertain, high-impact, contested, or appeal-triggered cases.

- 5. Rolling certification. The venue records whether the paper is technically certified, provisionally certified with uncertainty notes, or unresolved pending further review.

- 6. Conference curation. Periodic conference decisions allocate scarce visibility among certified papers through talks, posters, awards, and synthesis sessions.
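
The escalation and certification steps above can be sketched as a small routing function. This is a toy illustration under stated assumptions: the scalar `uncertainty` signal, both thresholds, and the treatment of failed intake as "unresolved" are hypothetical choices, not parameters specified in this paper.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Certification(Enum):
    CERTIFIED = "technically certified"
    PROVISIONAL = "provisionally certified with uncertainty notes"
    UNRESOLVED = "unresolved pending further review"


@dataclass
class Submission:
    paper_id: str
    passes_intake: bool      # outcome of the continuous-intake checks
    uncertainty: float       # hypothetical routing signal in [0, 1]
    high_impact: bool = False
    contested: bool = False
    appealed: bool = False


NOTE_THRESHOLD = 0.2         # hypothetical: above this, attach uncertainty notes
ESCALATION_THRESHOLD = 0.5   # hypothetical: above this, assign human reviewers


def needs_human(sub: Submission) -> bool:
    """Selective escalation: uncertain, high-impact, contested, or appealed cases."""
    return (sub.uncertainty >= ESCALATION_THRESHOLD
            or sub.high_impact or sub.contested or sub.appealed)


def certify(sub: Submission, human_verdict: Optional[Certification] = None) -> Certification:
    """Rolling certification: map pipeline signals to one of three recorded states."""
    if not sub.passes_intake:
        return Certification.UNRESOLVED   # assumption: returned to authors for fixes
    if needs_human(sub):
        # An escalated paper stays unresolved until an accountable human decides.
        return human_verdict or Certification.UNRESOLVED
    if sub.uncertainty >= NOTE_THRESHOLD:
        return Certification.PROVISIONAL
    return Certification.CERTIFIED


print(certify(Submission("p1", True, 0.05)).value)  # technically certified
```

Conference curation is deliberately left out of the sketch: it allocates visibility among already certified papers and is a human decision in the design described here.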

Appendix D. Schema for the Submission Evidence Graph

| Node / Edge Type | Example Entities / Relations | Function in the Review Pipeline |

|---|---|---|

| Claim Node | “Method X reduces inference latency by 20%” | Central unit of evaluation; requires human or automated verification. |

| Method Node | “Semantic Per-Pair DPO”, “LoRA” | Identifies the core algorithmic or architectural contributions. |

| Dataset Node | “ImageNet”, “Custom Web Scrape” | Triggers artifact checks and flags potential data contamination. |

| Metric Node | “Accuracy”, “Throughput (tokens/sec)” | Standardizes comparisons across similar papers in the same cluster. |

| USES_METHOD | Submission → Method Node | Links a paper to its underlying techniques, enabling portfolio clustering. |

| CLAIMS_OVER | Method Node → Baseline Node | Explicitly maps the authors’ stated improvements against prior art. |

| EVALUATES_ON | Method Node → Dataset Node | Maps the empirical boundary of the paper’s claims. |
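
A minimal in-memory version of this schema is sketched below. The edge constraints mirror the three relation rows in the table. The `Submission` and `Baseline` node kinds are taken from the edge endpoints, since they do not have their own rows, and the class and method names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

NODE_KINDS = {"Submission", "Claim", "Method", "Dataset", "Metric", "Baseline"}

# Relation name -> (required source kind, required target kind), as in the table.
EDGE_SCHEMA = {
    "USES_METHOD": ("Submission", "Method"),
    "CLAIMS_OVER": ("Method", "Baseline"),
    "EVALUATES_ON": ("Method", "Dataset"),
}


@dataclass
class EvidenceGraph:
    nodes: Dict[str, str] = field(default_factory=dict)              # node id -> kind
    edges: List[Tuple[str, str, str]] = field(default_factory=list)  # (source, relation, target)

    def add_node(self, node_id: str, kind: str) -> None:
        if kind not in NODE_KINDS:
            raise ValueError(f"unknown node kind: {kind}")
        self.nodes[node_id] = kind

    def add_edge(self, source: str, relation: str, target: str) -> None:
        # Both endpoints must already exist; a missing node raises KeyError.
        expected = EDGE_SCHEMA.get(relation)
        if expected is None:
            raise ValueError(f"unknown relation: {relation}")
        actual = (self.nodes[source], self.nodes[target])
        if actual != expected:
            raise ValueError(f"{relation} expects {expected}, got {actual}")
        self.edges.append((source, relation, target))

    def targets(self, source: str, relation: str) -> List[str]:
        return [t for s, r, t in self.edges if s == source and r == relation]


graph = EvidenceGraph()
graph.add_node("paper-17", "Submission")
graph.add_node("LoRA", "Method")
graph.add_node("ImageNet", "Dataset")
graph.add_edge("paper-17", "USES_METHOD", "LoRA")
graph.add_edge("LoRA", "EVALUATES_ON", "ImageNet")
print(graph.targets("LoRA", "EVALUATES_ON"))  # ['ImageNet']
```

Validating edge types at insertion keeps later steps, such as clustering papers by shared method or dataset, from having to handle malformed relations.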

Appendix E. Big-Three Conference Scale Statistics

| Venue | Year | Submitted | Accepted | Acc. rate | Reviewers | ACs | SACs | Source scope |

|---|---|---|---|---|---|---|---|---|

| ICLR | 2023 | 4,938 | 1,574 | 31.88% | 5,734 | 413 | 40 | Official fact sheet (research track). |

| ICLR | 2024 | 7,262 | 2,260 | 31.12% | 8,950 | 624 | 60 | Official fact sheet (research track). |

| ICLR | 2025 | 11,603 | 3,704 | 31.92% | 18,325 | 823 | 71 | Official fact sheet (research track). |

| ICML | 2023 | 6,538 | 1,827 | 27.94% | 6,035 | 504 | 47 | OpenAccept main-track history for submissions/accepts; official reviewers page for committee counts. |

| ICML | 2024 | 9,473 | 2,609 | 27.54% | 7,474 | 492 | 97 | OpenAccept main-track history plus official fact sheet for committee counts. |

| ICML | 2025 | 12,107 | 3,260 | 26.93% | 10,943 | 1,161 | 155 | OpenAccept main-track history plus official fact sheet for committee counts. |

| NeurIPS | 2023 | 12,343 | 3,218 | 26.07% | 12,974 | 968 | 98 | Official fact sheet (main track only). |

| NeurIPS | 2024 | 15,671 | 4,037 | 25.76% | 13,640 | 1,393 | 195 | Official fact sheet (main track only). |

| NeurIPS | 2025 | 21,575 | 5,290 | 24.52% | 20,518 | 1,663 | 199 | Official main-track review reflection. |

Appendix F. Related Work

Appendix G. Existing Pilots and Why Broad Deployment Remains Narrow

| Venue / example | Workflow location | What the machine does | Human authority retained | Why this does not yet amount to broad conference adoption |

|---|---|---|---|---|

| ICLR 2025 reviewer-feedback agent [9,10,108] | Reviewer side | Flags vague, redundant, or unprofessional reviews and offers quality-improving feedback. | Humans still write the actual reviews and make all paper judgments. | It improves reviewer behavior, but it does not become the venue’s first-pass control plane for every submission. |

| ICML 2026 PAT [13,14] | Author side | Provides manuscript feedback, prompts, and diagnostics before or around submission. | Humans still conduct the formal review process. | It improves drafts before review, but it is not a conference-wide first-pass review pipeline applied to all submissions at decision time. |

| AAAI-26 AI-assisted peer-review pilot [15] | Venue side | Adds one AI review and an AI-generated summary under explicit pilot rules. | AI gives no score or recommendation; all decisions remain human. | The pilot is deliberately narrow and cautious, reflecting unresolved governance questions about legitimacy, privacy, and robustness. |

| Rolling pathways such as TMLR, ARR, and J2C [42,48,49,50] | Certification timing | Decouple parts of certification and presentation timing; support more continuous review flows. | Human editors and reviewers remain in charge of certification. | These pathways relax deadline pressure, but they are not themselves a unified machine-first conference review architecture. |

| Representative research prototypes such as SEA, ReviewerGPT, quality-checkers, and FactReview [32,33,44,58,61,106] | Experimental systems | Generate review-style feedback, find critical problems, structure debate, or verify claims against evidence. | Used as experimental tools rather than institutional arbiters. | The technical direction is promising, but conferences still lack shared governance for how these systems should be integrated, disclosed, and audited at scale. |

| Institutional gap | Why it blocks broad deployment | Conference-level response |

|---|---|---|

| Confidentiality and privacy | Review involves unpublished manuscripts, sensitive artifacts, and reputation-sensitive judgments. Venues need to know where content goes, what models retain, and what third-party processing is acceptable. | Prefer venue-operated tooling or tightly governed vendors, require explicit data-handling terms, and separate allowed bounded assistance from prohibited external processing. |

| Legitimacy and accountability | Once an automated signal materially affects triage or escalation, conferences must answer who is responsible when the signal is wrong, harmful, or biased. | Define bounded machine authority in writing, keep final responsibility for borderline legitimacy, sanctions, and appeals in named human roles, and publish override pathways. |

| Policy fragmentation | Venues currently permit, prohibit, or partially regulate AI usage in different ways, which makes community trust and cross-venue norms unstable. | Publish task-level policies that specify what is machine-assisted, what must be disclosed, what remains human-only, and what logs are retained for audit. |

| Adversarial manipulation | Hidden prompts, prompt injection, and optimization against system heuristics can distort triage or reviewing once authors know the machine layer matters. | Red-team the pipeline, rotate checks, use multiple signals rather than one brittle model output, and maintain author appeal channels for questionable flags or cluster assignments. |

| Unequal access to tooling | If some authors can privately use strong paper assistants while others cannot, hidden adoption amplifies inequality rather than reducing communal workload fairly. | Provide conference-operated assistance to all participants, especially on the author side and first-pass review side, so the official pipeline narrows asymmetry instead of widening it. |

| Weak evaluation and audit | Without logging, post-cycle review, and public dashboards, conference communities cannot tell whether a deployed system actually reduces effort, raises quality, or simply moves failure around. | Treat deployment as an auditable policy intervention: log module outputs, escalation triggers, overrides, appeals, and publish post-cycle dashboards on burden, error, and reader usefulness. |

Appendix H. Systems, Platforms, and Repositories for Autonomous Review Pipelines

Appendix H.1. Representative Research Literature

| Work | Primary contribution | Why it matters for this paper |

|---|---|---|

| SEA [61] | Generates review-style feedback with standardization, evaluation, and analysis modules. | Shows early end-to-end automation of paper feedback, while stopping short of venue legitimacy. |

| Quality-checker framing [58] | Recasts LLMs as manuscript quality checkers rather than substitute reviewers. | Closely matches our argument that machines should gather evidence and flag problems. |

| Live review-feedback study [108] | Studies the ICLR 2025 reviewer-feedback deployment in a real review process. | Provides direct evidence that AI can assist review while creating governance tensions. |

| ReViewGraph [44] | Models automated paper reviewing through structured reviewer–author debate graphs. | Suggests richer representations for disagreement and escalation. |

| FactReview [33] | Combines claim extraction, literature positioning, and execution-based claim verification. | Exemplifies the evidence-grounded, claim-level style of autonomous review pipeline advocated here. |

| LLM-ASPR survey [109] | Synthesizes tasks, datasets, systems, and failure modes for automated scholarly paper review. | Helps position this paper’s argument in the broader technical and governance landscape. |

Appendix H.2. Representative Systems, Platforms, and Repositories

| Category | Examples | Relevance |

|---|---|---|

| Venue workflow infrastructure | OpenReview platform and API [59,112,113] | Provides an existing open review platform, API layer, and venue workflow primitives for machine-mediated review. |

| Reviewer matching infrastructure | OpenReview expertise models [60] | Supplies paper–reviewer affinity tools that could feed escalation and expert routing. |

| Rolling or continuous review venues | TMLR, ACL Rolling Review, Journal-to-Conference [42,48,49,50] | Demonstrate that review, certification, and conference presentation can already be partially decoupled. |

| Document parsing and structuring | GROBID [114] | Converts scholarly PDFs into structured representations useful for claim extraction and policy checks. |

| Scholarly metadata and retrieval | OpenAlex and Semantic Scholar APIs [115,116] | Enable literature positioning, citation retrieval, cluster building, and reader-facing comparisons. |

| Venue-side AI assistance | ICLR review-feedback agent, ICML PAT [9,13,14] | Show that mainstream venues are already piloting machine assistance on both the reviewer side and the author side. |

Appendix H.3. Implementation Hooks and Repository Map

| Function | Concrete resource | Typical use in an autonomous review pipeline pilot |

|---|---|---|

| Submission intake and state transitions | OpenReview platform plus API v2 and openreview-py [59,112,113] | Ingest submissions, store machine reports, attach decision metadata, and expose venue-specific workflow actions. |

| Reviewer or editor matching | OpenReview expertise models [60] | Compute affinity scores, seed escalation routing, and support specialist assignment once a paper leaves the automated queue. |

| PDF-to-structure conversion | GROBID [114] | Convert scholarly PDFs into structured sections, references, and metadata for claim extraction, overlap checks, and policy validation. |

| Literature retrieval and citation context | OpenAlex and Semantic Scholar APIs [115,116] | Build neighborhood graphs, retrieve likely comparisons, and generate reader-facing cluster or claim pages. |

| Venue-side author assistance | ICML PAT and reviewer-feedback agents [9,13,14] | Provide pre-submission diagnostics for authors and structured coaching for reviewers without delegating final legitimacy. |

| Rolling certification pathways | TMLR, ACL Rolling Review, and Journal-to-Conference [42,48,49,50] | Supply external certification channels that can feed conference curation after the machine-first control plane has done initial triage. |
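
As one concrete hook, the literature-retrieval row could start from a keyword query against a scholarly metadata service. The sketch below only builds the request URL and does not send it. It assumes the public OpenAlex works endpoint and its `search` and `per-page` query parameters; access requirements and rate limits may differ, so the current API documentation should be checked before use. The helper name `openalex_search_url` is hypothetical.

```python
from urllib.parse import urlencode

OPENALEX_WORKS = "https://api.openalex.org/works"  # assumed public endpoint


def openalex_search_url(query: str, per_page: int = 5) -> str:
    """Build a works-search URL for a claim or topic phrase (no request is sent)."""
    params = {"search": query, "per-page": per_page}
    return f"{OPENALEX_WORKS}?{urlencode(params)}"


url = openalex_search_url("inference latency")
print(url)
```

A pilot would fetch this URL with any HTTP client and feed the returned works into neighborhood graphs or claim pages. Keeping URL construction separate from the network call makes the retrieval step easy to log and audit.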

References

- Gottweis, J.; Weng, W.H.; Daryin, A.; Tu, T.; Palepu, A.; Sirkovic, P.; Myaskovsky, A.; Weissenberger, F.; Rong, K.; Tanno, R.; et al. Towards an AI co-scientist. arXiv 2025, arXiv:2502.18864. [Google Scholar] [CrossRef]

- Bubeck, S.; Coester, C.; Eldan, R.; Gowers, T.; Lee, Y.T.; Lupsasca, A.; Sawhney, M.; Scherrer, R.; Sellke, M.; Spears, B.K.; et al. Early science acceleration experiments with GPT-5. arXiv 2025, arXiv:2511.16072. [Google Scholar] [CrossRef]

- Hao, Q.; Xu, F.; Li, Y.; Evans, J. Artificial intelligence tools expand scientists’ impact but contract science’s focus. Nature 2026, 1–7. [Google Scholar] [CrossRef]

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. The AutoResearch Moment: From Experimenter to Research Director. 2026. [Google Scholar] [CrossRef]

- Simon, H.A. Designing organizations for an information-rich world. International Library of Critical Writings in Economics 1996, 70, 187–202. [Google Scholar]

- ICLR 2025. ICLR 2025 Fact Sheet. Conference Fact Sheet, Accessed. 2025; (accessed on 23 March 2026). [Google Scholar]

- NeurIPS 2025 Program Committee Chairs. Reflections on the 2025 Review Process from the Program Committee Chairs. NeurIPS Blog. Accessed. 2025. (accessed on 23 March 2026).

- Koniusz, P.; Chen, N.; Ghassemi, M.; Pascanu, R.; Lin, H.T.; Aroyo, L.; Locatello, F.; Palla, K. Responsible Reviewing Initiative for NeurIPS 2025. NeurIPS Blog. Accessed. 2025. (accessed on 23 March 2026).

- Zou, J.; Vondrick, C.; Yu, R.; Peng, V.; Sha, F.; Garg, A. Assisting ICLR 2025 Reviewers with Feedback. ICLR Blog. Accessed. 2024. (accessed on 23 March 2026).

- Thakkar, N.; Yuksekgonul, M.; Silberg, J.; Garg, A.; Peng, N.; Sha, F.; Yu, R.; Vondrick, C.; Zou, J. Can LLM feedback enhance review quality? A randomized study of 20k reviews at ICLR 2025. arXiv 2025, arXiv:2504.09737. [Google Scholar] [CrossRef]

- Zou, J.; Thakkar, N.; Vondrick, C.; Yu, R.; Peng, V.; Sha, F.; Garg, A. Leveraging LLM Feedback to Enhance Review Quality. ICLR Blog. Accessed. 2025. (accessed on 23 March 2026).

- Vondrick, C. Extended Partnership Pilot with TMLR for ICLR 2025. ICLR Blog. Accessed. 2024. (accessed on 23 March 2026).

- Jayaram, R.; Cohen-Addad, V.; Agarwal, A.; Dudik, M.; Li, S.; Jaggi, M. ICML Experimental Program using Google’s Paper Assistant Tool (PAT). ICML Blog. Accessed. 2026. (accessed on 23 March 2026).

- Kamath, G.; Jayaram, R.; Cohen-Addad, V.; Agarwal, A.; Dudik, M.; Li, S.; Jaggi, M. Retrospective on PAT x ICML 2026 AI Paper Assistant Program. ICML Blog. Accessed. 2026. (accessed on 7 April 2026).

- AAAI-26. FAQ for the AI-Assisted Peer-Review Process Pilot Program. Conference FAQ / PDF, Accessed. 2025; (accessed on 8 April 2026). [Google Scholar]

- Naddaf, M. AI is transforming peer review—and many scientists are worried. Nature 2025, 639, 852–854. [Google Scholar] [CrossRef]

- Naddaf, M. More than half of researchers now use AI for peer review—often against guidance. Nature 2026, 649, 273–274. [Google Scholar] [CrossRef]

- Gibney, E. Scientists hide messages in papers to game AI peer review. Nature 2025, 643, 887–888. [Google Scholar] [CrossRef]

- Gibney, E. Major conference catches illicit AI use-and rejects hundreds of papers. In Nature; 2026. [Google Scholar]

- ICML. ICML 2025 Peer Review FAQ. Conference Website, Accessed. 2025; (accessed on 9 April 2026). [Google Scholar]

- ICLR 2025. ICLR 2025 SAC Guide. Conference Website, Accessed. 2025; (accessed on 9 April 2026). [Google Scholar]

- NeurIPS. NeurIPS 2024 SAC Guidelines. Conference Website, Accessed. 2024; (accessed on 9 April 2026). [Google Scholar]

- Brockmeyer, B. Mentorship Program for New Reviewers at ICLR 2022. ICLR Blog. Accessed. 2022. (accessed on 9 April 2026).

- Fanelli, D. Negative results are disappearing from most disciplines and countries. Scientometrics 2012, 90, 891–904. [Google Scholar] [CrossRef]

- Mlinarić, A.; Horvat, M.; Šupak Smolčić, V. Dealing with the positive publication bias: Why you should really publish your negative results. Biochemia medica 2017, 27, 447–452. [Google Scholar] [CrossRef]

- ICLR. ICLR 2026 Reviewer Guide. Conference Website, Accessed. 2026; (accessed on 23 March 2026). [Google Scholar]

- NeurIPS 2026 Communication Chairs. What’s New for the Position Paper Track at NeurIPS 2026. NeurIPS Blog. Accessed. 2026. (accessed on 7 April 2026).

- NeurIPS 2026 Position Paper Track. NeurIPS 2026 Call for Position Papers. Conference Website, Accessed. 2026; (accessed on 7 April 2026).

- NeurIPS 2025 Position Paper Track. Call for Position Papers 2025. Conference Website. Accessed. 2025; (accessed on 23 March 2026).

- NeurIPS 2025 Position Paper Track. NeurIPS 2025 Position Paper Track FAQ. Conference Website, Accessed. 2025; (accessed on 23 March 2026).

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Harness Engineering for Language Agents: The Harness Layer as Control, Agency, and Runtime. 2026. [Google Scholar] [CrossRef]

- Liu, R.; Shah, N.B. Reviewergpt? an exploratory study on using large language models for paper reviewing. arXiv 2023, arXiv:2306.00622. [Google Scholar] [CrossRef]

- Xu, H.; Yue, L.; Ouyang, C.; Zheng, L.; Pan, S.; Di, S.; Zhang, M.L. FactReview: Evidence-Grounded Reviews with Literature Positioning and Execution-Based Claim Verification. arXiv 2026, arXiv:2604.04074. [Google Scholar]

- Wei, Q.; Holt, S.; Yang, J.; Wulfmeier, M.; van der Schaar, M. The ai imperative: Scaling high-quality peer review in machine learning. arXiv 2025, arXiv:2506.08134. [Google Scholar] [CrossRef]

- Tran, D.; Valtchanov, A.; Ganapathy, K.; Feng, R.; Slud, E.; Goldblum, M.; Goldstein, T. Analyzing the Machine Learning Conference Review Process. arXiv 2020, arXiv:2011.12919. [Google Scholar] [CrossRef]

- Cortes, C.; Lawrence, N.D. Inconsistency in conference peer review: Revisiting the 2014 neurips experiment. arXiv 2021, arXiv:2109.09774. [Google Scholar] [CrossRef]

- NeurIPS 2021 Program Chairs. The NeurIPS 2021 Consistency Experiment. NeurIPS Blog. Accessed. 2021. (accessed on 23 March 2026).

- Barnett, A.; Allen, L.; Aldcroft, A.; Lash, T.L.; McCreanor, V. Examining uncertainty in journal peer reviewers’ recommendations: a cross-sectional study. Royal Society Open Science 2024, 11. [Google Scholar] [CrossRef]

- Goldberg, A.; Stelmakh, I.; Cho, K.; Oh, A.; Agarwal, A.; Belgrave, D.; Shah, N.B. Peer reviews of peer reviews: A randomized controlled trial and other experiments. PloS one 2025, 20, e0320444. [Google Scholar] [CrossRef]

- Aczel, B.; Barwich, A.S.; Diekman, A.B.; Fishbach, A.; Goldstone, R.L.; Gomez, P.; Gundersen, O.E.; von Hippel, P.T.; Holcombe, A.O.; Lewandowsky, S.; et al. The present and future of peer review: Ideas, interventions, and evidence. Proceedings of the National Academy of Sciences 2025, 122, e2401232121. [Google Scholar] [CrossRef]

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. Human-AI productivity claims should be reported as time-to-acceptance under explicit acceptance tests. 2026. [Google Scholar]

- Transactions on Machine Learning Research. Acceptance Criteria. Journal Website. Accessed. 2026. (accessed on 23 March 2026).

- He, C.; Zhou, X.; Wang, D.; Xu, H.; Liu, W.; Miao, C. OpenClaw as Language Infrastructure: A Case-Centered Survey of a Public Agent Ecosystem in the Wild. 2026. [Google Scholar]

- Li, S.; Fan, L.; Lin, Y.; Li, Z.; Wei, X.; Ni, S.; Alinejad-Rokny, H.; Yang, M. Automatic paper reviewing with heterogeneous graph reasoning over llm-simulated reviewer-author debates. Proceedings of the Proceedings of the AAAI Conference on Artificial Intelligence 2026, Vol. 40, 31717–31725. [Google Scholar] [CrossRef]

- Kim, J.; Lee, Y.; Lee, S. Position: The AI Conference Peer Review Crisis Demands Author Feedback and Reviewer Rewards. OpenReview. Accessed. 2025. (accessed on 23 March 2026).

- Su, W. You are the best reviewer of your own papers: An owner-assisted scoring mechanism. Advances in Neural Information Processing Systems 2021, 34, 27929–27939. [Google Scholar]

- Su, B.; Zhang, J.; Collina, N.; Yan, Y.; Li, D.; Cho, K.; Fan, J.; Roth, A.; Su, W. The ICML 2023 ranking experiment: Examining author self-assessment in ML/AI peer review. Journal of the American Statistical Association 2025, 1–12. [Google Scholar] [CrossRef]

- Transactions on Machine Learning Research. Transactions on Machine Learning Research. Journal Website. Accessed. 2026. (accessed on 23 March 2026).

- ACL Rolling Review. ACL Rolling Review. Platform Website. Accessed. 2026; (accessed on 23 March 2026).

- NeurIPS/ICLR/ICML Journal-to-Conference Track Oversight Committee. The NeurIPS/ICLR/ICML Journal-to-Conference Track. Conference Website. Accessed. 2026; (accessed on 23 March 2026).

- NeurIPS 2026 Communication Chairs. Refining the Review Cycle: NeurIPS 2026 Area Chair Pilot. NeurIPS Blog. Accessed. 2026. (accessed on 23 March 2026).

- NeurIPS 2026. Main Track Handbook 2026. Conference Website, Accessed. 2026; (accessed on 23 March 2026).

- NeurIPS 2026 Communication Chairs. Introducing the Evaluations & Datasets Track at NeurIPS 2026. NeurIPS Blog. Accessed. 2026. (accessed on 23 March 2026).

- ICLR. ICLR 2026 Author Guide. Conference Website, Accessed. 2026; (accessed on 23 March 2026). [Google Scholar]

- ICLR 2026 Program Chairs. ICLR 2026 Response to LLM-Generated Papers and Reviews. ICLR Blog. Accessed. 2025. (accessed on 23 March 2026).

- ICML. ICML 2026 Peer Review FAQ. Conference Website, Accessed. 2026; (accessed on 23 March 2026). [Google Scholar]

- Agarwal, A.; Dudik, M.; Li, S.; Jaggi, M.; Shah, N.B.; Gorman, K.; Kamath, G. On Violations of LLM Review Policies. ICML Blog. Accessed. 2026. (accessed on 23 March 2026).

- Zhang, T.M.; Abernethy, N.F. Reviewing scientific papers for critical problems with reasoning llms: Baseline approaches and automatic evaluation. arXiv 2025, arXiv:2505.23824. [Google Scholar] [CrossRef]

- OpenReview. About OpenReview. Website. Accessed. 2026; (accessed on 7 April 2026).

- OpenReview. openreview-expertise: Expertise Modeling for the OpenReview Matching System. GitHub Repository. Accessed. 2026. (accessed on 7 April 2026).

- Yu, J.; Ding, Z.; Tan, J.; Luo, K.; Weng, Z.; Gong, C.; Zeng, L.; Cui, R.; Han, C.; Sun, Q.; et al. Automated peer reviewing in paper sea: Standardization, evaluation, and analysis. Proceedings of the Findings of the Association for Computational Linguistics: EMNLP 2024, 2024, 10164–10184. [Google Scholar]

- ICLR. ICLR 2024 Fact Sheet. Conference Fact Sheet, Accessed. 2024; (accessed on 7 April 2026). [Google Scholar]

- ICLR 2021. ICLR 2021 Fact Sheet. Conference Fact Sheet, Accessed. 2021; (accessed on 9 April 2026). [Google Scholar]

- ICLR 2022. ICLR 2022 Fact Sheet. Conference Fact Sheet, Accessed. 2022; (accessed on 9 April 2026). [Google Scholar]

- ICLR. ICLR 2023 Fact Sheet. Conference Fact Sheet, Accessed. 2023; (accessed on 7 April 2026). [Google Scholar]

- OpenAccept. ICLR Acceptance Rates and Submission Stats. Historical Statistics Website. Accessed. 2026. (accessed on 8 April 2026).

- OpenAccept. ICML Acceptance Rates and Submission Stats. Historical Statistics Website. Accessed. 2026. (accessed on 7 April 2026).

- OpenAccept. NeurIPS Acceptance Rates and Submission Stats. Historical Statistics Website. Accessed. 2026. (accessed on 8 April 2026).

- ICLR 2021 Program Chairs. The ICLR 2021 Reviewing Process and Accepted Papers. ICLR Blog / Medium. Accessed. 2021. (accessed on 9 April 2026).

- ICML 2021. ICML 2021 Reviewers. Conference Website, 2021 (accessed on 9 April 2026).

- ICML 2022. ICML 2022 Reviewers. Conference Website, 2022 (accessed on 9 April 2026).

- ICML. ICML 2023 Reviewers. Conference Website, 2023 (accessed on 7 April 2026).

- ICML. ICML 2024 Fact Sheet. Conference Fact Sheet, 2024 (accessed on 7 April 2026).

- ICML 2025. ICML 2025 Fact Sheet. Conference Fact Sheet, 2025 (accessed on 7 April 2026).

- ICML 2025. ICML 2025 Program Committee. Conference Website, 2025 (accessed on 9 April 2026).

- ICML 2021. 2021 ICML Organizing Committee. Conference Website, 2021 (accessed on 9 April 2026).

- ICML 2022. 2022 ICML Organizing Committee. Conference Website, 2022 (accessed on 9 April 2026).

- ICML 2023. 2023 ICML Organizing Committee. Conference Website, 2023 (accessed on 7 April 2026).

- ICML 2024. 2024 ICML Organizing Committee. Conference Website, 2024 (accessed on 7 April 2026).

- ICML 2025. 2025 ICML Organizing Committee. Conference Website, 2025 (accessed on 7 April 2026).

- NeurIPS. NeurIPS 2022 Fact Sheet. Conference Fact Sheet, 2022 (accessed on 9 April 2026).

- NeurIPS. NeurIPS 2023 Fact Sheet. Conference Fact Sheet, 2023 (accessed on 7 April 2026).

- NeurIPS. NeurIPS 2024 Fact Sheet. Conference Fact Sheet, 2024 (accessed on 7 April 2026).

- NeurIPS 2023. 2023 Organizing Committee. Conference Website, 2023 (accessed on 7 April 2026).

- NeurIPS 2024. 2024 Organizing Committee. Conference Website, 2024 (accessed on 7 April 2026).

- NeurIPS 2025. 2025 Organizing Committee. Conference Website, 2025 (accessed on 7 April 2026).

- ICLR 2019. ICLR 2019 Area Chairs. Conference Website, 2019 (accessed on 9 April 2026).

- ICLR 2019. ICLR 2019 Committees. Conference Website, 2019 (accessed on 9 April 2026).

- ICLR 2020 Program Chairs. #OurHatata: The Reviewing Process and Research Shaping ICLR in 2020. ICLR Blog / Medium, 2020 (accessed on 9 April 2026).

- ICLR 2020. ICLR 2020 Committees. Conference Website, 2020 (accessed on 9 April 2026).

- ICLR 2021. ICLR 2021 Committees. Conference Website, 2021 (accessed on 9 April 2026).

- ICLR 2022. ICLR 2022 Committees. Conference Website, 2022 (accessed on 9 April 2026).

- ICML 2019. ICML 2019 Area Chairs. Conference Website, 2019 (accessed on 9 April 2026).

- ICML 2020. 2020 ICML Organizing Committee. Conference Website, 2020 (accessed on 9 April 2026).

- ICML. ICML 2024 Reviewers. Conference Website, 2024 (accessed on 9 April 2026).

- NeurIPS 2020 Program Chairs. What We Learned from the NeurIPS 2020 Reviewing Process. NeurIPS Blog / Medium, 2020 (accessed on 9 April 2026).

- OpenReview. OpenReview NeurIPS 2021 Summary Report. OpenReview Documentation, 2021 (accessed on 9 April 2026).

- NeurIPS 2020. 2020 Organizing Committee. Conference Website, 2020 (accessed on 9 April 2026).

- He, C.; Zhou, X.; Wang, D.; Yu, X.; Xiao, L.; Li, L.; Xu, H.; Liu, W.; Miao, C. KG4ESG: The ESG Knowledge Graph Atlas. 2026.

- He, C.; Zhou, X.; Yu, X.; Zhang, L.; Zhang, Y.; Wu, Y.; Xiao, L.; Li, L.; Wang, D.; Xu, H.; et al. SSKG Hub: An Expert-Guided Platform for LLM-Empowered Sustainability Standards Knowledge Graphs. arXiv 2026, arXiv:2603.00669.

- Su, B.; Collina, N.; Wen, G.; Li, D.; Cho, K.; Fan, J.; Zhao, B.; Su, W. How to Find Fantastic AI Papers: Self-Rankings as a Powerful Predictor of Scientific Impact Beyond Peer Review. arXiv 2025, arXiv:2510.02143.

- Agarwal, A.; Dudik, M.; Li, S.; Jaggi, M. What’s New in ICML 2026 Peer Review. ICML Blog, 2026 (accessed on 23 March 2026).

- Su, W.; Su, B. Introducing ICML 2026 Policy for Self-Ranking in Reviews. ICML Blog, 2026 (accessed on 23 March 2026).

- Davies, C.; Ingram, H. Sceptics and champions: participant insights on the use of partial randomization to allocate research culture funding. Research Evaluation 2025, 34, rvaf006.

- Stafford, T.; Rombach, I.; Hind, D.; Mateen, B.; Woods, H.B.; Dimario, M.; Wilsdon, J. Where next for partial randomisation of research funding? The feasibility of RCTs and alternatives. Wellcome Open Research 2024, 8, 309.

- Zhou, R.; Chen, L.; Yu, K. Is LLM a reliable reviewer? A comprehensive evaluation of LLM on automatic paper reviewing tasks. In Proceedings of the 2024 Joint International Conference on Computational Linguistics, Language Resources and Evaluation (LREC-COLING 2024), 2024; pp. 9340–9351.

- Liang, W.; Zhang, Y.; Cao, H.; Wang, B.; Ding, D.Y.; Yang, X.; Vodrahalli, K.; He, S.; Smith, D.S.; Yin, Y.; et al. Can large language models provide useful feedback on research papers? A large-scale empirical analysis. NEJM AI 2024, 1, AIoa2400196.

- Chen, S.; Zhong, S.; Brumby, D.P.; Cox, A.L. What happens when reviewers receive AI feedback in their reviews? arXiv 2026, arXiv:2602.13817.

- Zhuang, Z.; Chen, J.; Xu, H.; Jiang, Y.; Lin, J. Large language models for automated scholarly paper review: A survey. Information Fusion 2025, 124, 103332.

- He, C.; Zhou, X.; Wu, Y.; Yu, X.; Zhang, Y.; Zhang, L.; Wang, D.; Lyu, S.; Xu, H.; Xiaoqiao, W.; et al. ESGenius: Benchmarking LLMs on environmental, social, and governance (ESG) and sustainability knowledge. In Proceedings of the 2025 Conference on Empirical Methods in Natural Language Processing, 2025; pp. 14623–14664.

- Zhang, L.; Zhou, X.; He, C.; Wang, D.; Wu, Y.; Xu, H.; Liu, W.; Miao, C. MMESGBench: Pioneering multimodal understanding and complex reasoning benchmark for ESG tasks. In Proceedings of the 33rd ACM International Conference on Multimedia, 2025; pp. 12829–12836.

- OpenReview. OpenReview API v2 Documentation. Documentation, 2024 (accessed on 7 April 2026).

- OpenReview. openreview-py: Official Python Client Library for the OpenReview API. GitHub Repository, 2026 (accessed on 7 April 2026).

- GROBID. Introduction - GROBID Documentation. Documentation, 2026 (accessed on 7 April 2026).

- OpenAlex. API Overview - OpenAlex Developers. API Documentation, 2026 (accessed on 7 April 2026).

- Semantic Scholar. Semantic Scholar Academic Graph API. API Documentation, 2026 (accessed on 7 April 2026).

| Term | Working definition | Why it matters here |
|---|---|---|
| Submission explosion | Sustained growth in submission volume and variety as paper production becomes cheaper. | Volume should be designed for rather than feared. |
| Autonomous review pipeline | A machine-run first-pass review stack that generates structured evidence and routing signals without acting as the final judge. | Review becomes scalable without removing humans from legitimacy. |
| Attention budget | The limited human time available for reviewers, chairs, and readers. | The scarce resource is depth, not PDFs. |
| Escalation | Explicit transfer of a paper or cluster from machine-first review to deeper human scrutiny. | Human effort can be concentrated where uncertainty is highest. |
| Rolling certification | Continuous judgment that work is sound enough for the technical record, potentially with typed uncertainty notes. | Certification need not wait for a single seasonal batch. |
| Conference curation | Selective allocation of scarce visibility such as talks, posters, awards, and synthesis slots. | Visibility can be separated from baseline certification. |

| Pipeline Stage | Output for Hypothetical Submission: Semantic Per-Pair DPO (SP2DPO) |
|---|---|
| Policy Screen | Pass. Anonymity preserved; format compliant; anonymous GitHub link provided; required compute disclosures present. |
| Extracted Claims | C1: SP2DPO improves win-rate on AlpacaEval by 12% over standard DPO. C2: SP2DPO mitigates response-length bias by balancing semantic density across preference pairs. |
| Literature Positioning | Accurately positions against standard DPO and cDPO. Flag: Missing comparison to Offset DPO (ODPO), which is highly relevant for length-bias mitigation claims. |
| Artifact Probe | Execution Pass. Repository cloned successfully. Dependencies installed. Training script dry-run executed without syntax errors. |
| Uncertainty Flags | Moderate Risk: Claim 1’s 12% win-rate improvement is statistically large but highly sensitive to the specific prompt templates used in AlpacaEval evaluation. |
| Escalation Decision | Route to Human Expert. Justification: While technically sound (code runs) and policy compliant, the missing ODPO baseline and the prompt-sensitivity of the evaluation require domain-expert judgment to verify robustness. |
| Typed Certification | Policy: Compliant. Artifact: Probed (Pass). Claims: Escalated. |
| Reader Claim Card | (Post-Certification output generated for readers) Method: Semantic Per-Pair DPO (SP2DPO). Core Claim: Reduces length bias in preference tuning. Status: Certified (with attached reviewer notes on template sensitivity). |
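
As a rough illustration of how the stage outputs in the table above might collapse into the typed certification row, the following Python sketch models each stage as a small record and derives the three typed fields. The names `StageOutput` and `typed_certification`, the dictionary keys, and the rule that any claim-level flag triggers escalation are all assumptions made for this example; the paper does not specify an implementation.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class StageOutput:
    """One pipeline stage: its name, a pass/fail bit, and any raised flags."""
    stage: str
    passed: bool
    flags: List[str] = field(default_factory=list)


def typed_certification(outputs: Dict[str, StageOutput]) -> Dict[str, str]:
    """Collapse stage outputs into the Policy / Artifact / Claims fields.

    Assumed rule (hypothetical, not from the paper): claims are escalated
    whenever the literature-positioning or uncertainty stage raises a flag.
    """
    claim_flags = (
        outputs["literature_positioning"].flags
        + outputs["uncertainty_flags"].flags
    )
    return {
        "Policy": "Compliant" if outputs["policy_screen"].passed else "Non-compliant",
        "Artifact": "Probed (Pass)" if outputs["artifact_probe"].passed else "Probed (Fail)",
        "Claims": "Escalated" if claim_flags else "Certified",
    }


# The hypothetical SP2DPO submission, encoded from the table rows.
sp2dpo = {
    "policy_screen": StageOutput("Policy Screen", passed=True),
    "artifact_probe": StageOutput("Artifact Probe", passed=True),
    "literature_positioning": StageOutput(
        "Literature Positioning",
        passed=True,
        flags=["Missing comparison to Offset DPO (ODPO)"],
    ),
    "uncertainty_flags": StageOutput(
        "Uncertainty Flags",
        passed=True,
        flags=["Win-rate gain sensitive to AlpacaEval prompt templates"],
    ),
}

certification = typed_certification(sp2dpo)
print(certification)
```

Under these assumptions the two flags route the claims field to a human expert while the policy and artifact fields are settled by the machine-run stages, which mirrors the separation the table describes. A real venue would presumably replace the single any-flag rule with thresholds set by policy.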

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).