Submitted: 04 April 2026

Posted: 13 April 2026

Abstract

Keywords:

I. Introduction

- An end-to-end real-time IDS pipeline consisting of raw packet capture, ML-based classification, and structured event logging.

- A dual-mode design that supports both live network monitoring and batch analysis of CSV files.

- A Flask web dashboard with user authentication, live attack feeds, historical reports, and CSV upload functionality.

- An empirical evaluation on NSL-KDD demonstrating 99.2% classification accuracy across five traffic classes.

II. Related Work

III. System Architecture

A. Packet Capture Module (monitor.py)

B. Feature Extraction Module (packetfeatureextractor.py)

C. ML Inference Engine (liveprediction.py)

D. Database Layer (idsdatabase.db)

E. Batch Analysis Module

F. Flask Web Application (app.py)

IV. Methodology

A. Dataset

- Denial of Service (DoS): Resource exhaustion attacks (SYN flood, Smurf, Neptune, Teardrop).

- Probe: Network reconnaissance and scanning (Nmap, Portsweep, Satan, IPsweep).

- User-to-Root (U2R): Local privilege escalation (buffer overflow, loadmodule, rootkit).

- Remote-to-Local (R2L): Remote exploitation for unauthorized local access (FTP write, guess passwd, phf).
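The grouping above can be expressed as a label-to-category lookup applied before training. The following is a minimal sketch, not the authors' code: the names `ATTACK_CATEGORY` and `to_category` are hypothetical, the lowercase label spellings are assumed to follow the usual NSL-KDD label strings, and only the attacks named in the list are covered (Neptune is the dataset's SYN-flood attack, so it stands in for that entry).

```python
# Hypothetical lookup table. Label spellings are assumed to match the
# NSL-KDD label column; only attacks named in the list above are included.
ATTACK_CATEGORY = {
    "neptune": "DoS", "smurf": "DoS", "teardrop": "DoS",
    "nmap": "Probe", "portsweep": "Probe", "satan": "Probe", "ipsweep": "Probe",
    "buffer_overflow": "U2R", "loadmodule": "U2R", "rootkit": "U2R",
    "ftp_write": "R2L", "guess_passwd": "R2L", "phf": "R2L",
}


def to_category(label: str) -> str:
    """Map a raw connection label to one of the five traffic classes."""
    label = label.strip().lower()
    if label == "normal":
        return "Normal"
    # A KeyError on an unlisted label is deliberate: this partial table
    # should fail loudly rather than guess a category.
    return ATTACK_CATEGORY[label]
```

A full mapping would need every attack label present in the dataset files; raising on unknown labels makes any omission visible during preprocessing.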

B. Data Preprocessing

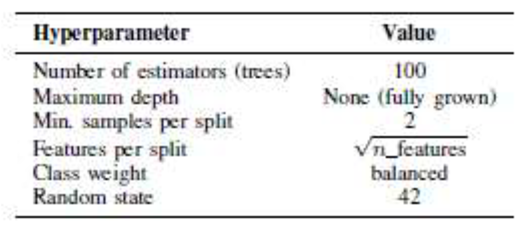

C. Model Training

D. Real-Time Detection Pipeline

- monitor.py opens a raw Scapy socket and begins capturing packets from the monitored interface.

- Packets are grouped into connection records using the 5-tuple key (src IP, dst IP, src port, dst port, protocol) within a rolling time window.

- packetfeatureextractor.py computes the 41-feature NSL-KDD vector for each connection.

- liveprediction.py applies the scaler, selects features, and invokes the Random Forest classifier.

- The predicted class label and confidence score are inserted as a new row into the logs table of idsdatabase.db.

- app.py reads the latest log entries and renders them on the live dashboard with a configurable auto-refresh interval.
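The steps above can be sketched end to end with the standard library alone. This is an illustrative sketch under stated assumptions, not the system's implementation: packets are represented as plain dictionaries instead of Scapy objects, the 2-second window, the `logs` column layout, and all function names are hypothetical, and `classify` is a placeholder standing in for feature extraction, scaling, and the Random Forest.

```python
import sqlite3
from collections import defaultdict

WINDOW_SECONDS = 2.0  # assumed rolling-window length, not from the paper


def flow_key(pkt):
    """5-tuple key: (src IP, dst IP, src port, dst port, protocol)."""
    return (pkt["src"], pkt["dst"], pkt["sport"], pkt["dport"], pkt["proto"])


def group_into_connections(packets, now, window=WINDOW_SECONDS):
    """Group packets seen within the last `window` seconds by 5-tuple."""
    flows = defaultdict(list)
    for pkt in packets:
        if now - pkt["ts"] <= window:
            flows[flow_key(pkt)].append(pkt)
    return dict(flows)


def classify(flow_packets):
    """Placeholder for feature extraction + scaler + Random Forest.

    Returns (label, confidence); the packet-count rule is a stand-in only.
    """
    return ("DoS", 0.90) if len(flow_packets) >= 3 else ("Normal", 0.99)


def log_predictions(conn, flows, now):
    """Insert one row per connection into a hypothetical logs table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS logs ("
        "ts REAL, src TEXT, dst TEXT, sport INTEGER, dport INTEGER, "
        "proto TEXT, label TEXT, confidence REAL)"
    )
    rows = []
    for key, pkts in flows.items():
        label, confidence = classify(pkts)
        rows.append((now, *key, label, confidence))
    conn.executemany("INSERT INTO logs VALUES (?, ?, ?, ?, ?, ?, ?, ?)", rows)
    conn.commit()


def make_pkt(ts, src, sport, dport, proto):
    """Build a toy packet record destined for 10.0.0.1."""
    return {"ts": ts, "src": src, "dst": "10.0.0.1",
            "sport": sport, "dport": dport, "proto": proto}


packets = [
    make_pkt(10.0, "10.0.0.5", 40000, 80, "tcp"),
    make_pkt(10.2, "10.0.0.5", 40000, 80, "tcp"),
    make_pkt(10.4, "10.0.0.5", 40000, 80, "tcp"),
    make_pkt(10.5, "10.0.0.7", 51000, 53, "udp"),
    make_pkt(3.0, "10.0.0.9", 52000, 22, "tcp"),  # outside the window
]

conn = sqlite3.connect(":memory:")
flows = group_into_connections(packets, now=11.0)
log_predictions(conn, flows, now=11.0)
latest = conn.execute(
    "SELECT src, dport, label FROM logs ORDER BY src"
).fetchall()
```

In a deployment the in-memory database would be replaced by the on-disk file, and the dashboard would issue a similar `SELECT` on each refresh. Keeping capture, classification, and logging as separate functions mirrors the module boundaries listed above.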

V. Results and Evaluation

A. Offline Classification Performance

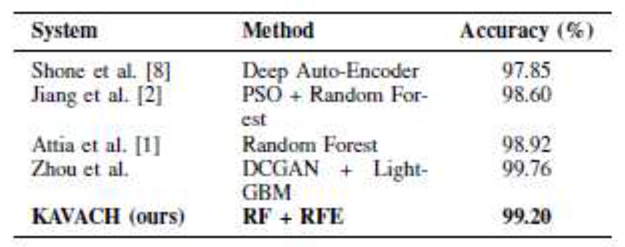

B. Model Comparison

C. Feature Importance

D. Real-Time Pipeline Latency

E. Comparison with Prior Systems

VI. User Interface and Implementation

A. Dashboard and Profile

B. Login Interface

C. Session Management

D. Audit Logs

E. CSV Upload and Batch Analysis

VII. Discussion

A. Strengths

B. Limitations

C. Ethical and Privacy Considerations

D. Future Work

VIII. Conclusion

Acknowledgments

References

- Attia, M. Faezipour, and A. Abuzneid, “Network Intrusion Detection with Random Forest and Deep Learning Algorithms: An Evaluation Study,” in Proc. 2020 Int. Conf. Computational Science and Computational Intelligence (CSCI), pp. 138–143, Dec. 2020.

- Jiang, Z. He, G. Ye, and H. Zhang, “Network Intrusion Detection Based on PSO-Random Forest Model,” IEEE Access, vol. 8, pp. 58392–58401, 2020.

- S. Fuhnwi, M. Revelle, and C. Izurieta, “Improving Network Intrusion Detection Performance: An Empirical Evaluation Using Extreme Gradient Boosting with Recursive Feature Elimination,” in Proc. 2024 IEEE 3rd Int. Conf. AI in Cybersecurity (ICAIC), pp. 1–8, 2024.

- N. Pansari, S. Srivastava, R. H. Raghavendra, and M. Agarwal, “Attack Classification using Machine Learning on UNSW-NB15 Dataset using Random Forest Feature Selection & Ablation Analysis,” in Proc. 2024 IEEE 9th Int. Conf. for Convergence in Technology (I2CT), pp. 1–9, 2024.

- M. V. Mahoney, “A Machine Learning Approach to Detecting Attacks by Identifying Anomalies in Network Traffic,” Ph.D. dissertation, Florida Institute of Technology, 2003.

- J. P. Anderson, “Computer Security Threat Monitoring and Surveillance,” James P. Anderson Co., Technical Report, 2008.

- S. M. Bridges and R. B. Vaughn, “Fuzzy data mining and genetic algorithms applied to intrusion detection,” in Proc. 23rd National Information Systems Security Conf., 2000.

- N. Shone, T. N. Ngoc, V. D. Phai, and Q. Shi, “A Deep Learning Approach to Network Intrusion Detection,” IEEE Trans. Emerging Topics Comput. Intell., vol. 2, no. 1, pp. 41–50, Feb. 2018.

- M. Alsharaiah et al., “An Innovative Network Intrusion Detection System (NIDS): Hierarchical Deep Learning Model Based on UNSW-NB15 Dataset,” Int. J. Data and Network Science, 2024.

- M. Sarhan, S. Layeghy, N. Moustafa, and M. Portmann, “NetFlow Datasets for Machine Learning-based Network Intrusion Detection Systems,” in Big Data Technologies and Applications, Springer, 2020.

- Sharafaldin, A. H. Lashkari, and A. A. Ghorbani, “Toward Generating a New Intrusion Detection Dataset and Intrusion Traffic Characterization,” in Proc. 4th Int. Conf. Information Systems Security and Privacy (ICISSP), pp. 108–116, 2018.

- M. Tavallaee, E. Bagheri, W. Lu, and A. A. Ghorbani, “A Detailed Analysis of the KDD CUP 99 Data Set,” in Proc. IEEE Symp. Computational Intelligence for Security and Defense Applications (CISDA), pp. 1–6, 2009.

- S. Roy, J. Li, B. Niu, and R. Liu, “Deep Learning-Based Intrusion Detection in Cyber-Physical Systems: Progress and Challenges,” IEEE Internet of Things Journal, vol. 7, no. 12, pp. 12369–12384, Dec. 2020.

- W. Wang, M. Zhu, J. Wang, X. Zeng, and Z. Yang, “End-to-End Encrypted Traffic Classification with One-Dimensional Convolution Neural Networks,” in Proc. IEEE Int. Conf. Intelligence and Security Informatics (ISI), pp. 43–48, 2017.

- Y. Xin et al., “Machine Learning and Deep Learning Methods for Cybersecurity,” IEEE Access, vol. 6, pp. 35365–35381, 2018.

| Class | Precision | Recall | F1 | Support |
|---|---|---|---|---|
| Normal | 0.99 | 0.99 | 0.99 | 9711 |
| DoS | 0.99 | 0.98 | 0.99 | 7458 |
| Probe | 0.98 | 0.98 | 0.98 | 2421 |
| U2R | 0.93 | 0.88 | 0.90 | 200 |
| R2L | 0.96 | 0.95 | 0.96 | 2754 |
| Overall Accuracy | 99.2% | | | |
| Macro F1-Score | 0.964 | | | |
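The macro F1-score in the table above is the unweighted mean of the five per-class F1 values, so small classes such as U2R count as much as Normal. The short check below reproduces it from the tabulated numbers; the variable names are illustrative only.

```python
# Per-class F1 and support copied from the classification table above.
per_class_f1 = {"Normal": 0.99, "DoS": 0.99, "Probe": 0.98, "U2R": 0.90, "R2L": 0.96}
support = {"Normal": 9711, "DoS": 7458, "Probe": 2421, "U2R": 200, "R2L": 2754}

# Macro average: every class contributes equally, regardless of support.
macro_f1 = sum(per_class_f1.values()) / len(per_class_f1)

# Total number of evaluated records across the five classes.
total_support = sum(support.values())

print(f"macro F1 = {macro_f1:.3f}, records = {total_support}")
```

Because the inputs are rounded to two decimals, the result matches the reported 0.964 only to that precision.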

| Classifier | Validation Accuracy (%) |
|---|---|
| K-Nearest Neighbors | 97.81 |
| Naive Bayes | 89.34 |
| Logistic Regression | 93.56 |
| Decision Tree | 98.67 |
| Random Forest | 99.45 |

| Pipeline Stage | Avg. Latency (ms) |
|---|---|
| Packet capture & buffering | 18 |
| Feature extraction | 22 |
| Encoding & scaling | 11 |
| Random Forest inference | 14 |
| SQLite log insertion | 12 |
| Dashboard refresh (Ajax poll) | 33 |
| Total (capture to log) | 77 |
| Total (capture to display) | < 110 |
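The two totals in the latency table follow from summing the stage averages. The sketch below repeats that arithmetic with illustrative variable names; it assumes the stages run strictly in sequence, which the table implies but does not state.

```python
# Average stage latencies in milliseconds, copied from the table above.
stage_ms = {
    "Packet capture & buffering": 18,
    "Feature extraction": 22,
    "Encoding & scaling": 11,
    "Random Forest inference": 14,
    "SQLite log insertion": 12,
}
dashboard_refresh_ms = 33

# Capture-to-log covers every stage up to the database insert.
capture_to_log_ms = sum(stage_ms.values())

# Capture-to-display adds the dashboard's Ajax polling delay.
capture_to_display_ms = capture_to_log_ms + dashboard_refresh_ms

print(capture_to_log_ms, capture_to_display_ms)
```

The stage averages sum to 77 ms for capture to log and 110 ms for capture to display, which sits at the boundary of the reported "< 110 ms" figure, presumably because the stage averages are rounded.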

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).