Submitted: 10 April 2026

Posted: 10 April 2026


Abstract

Keywords:

1. Introduction

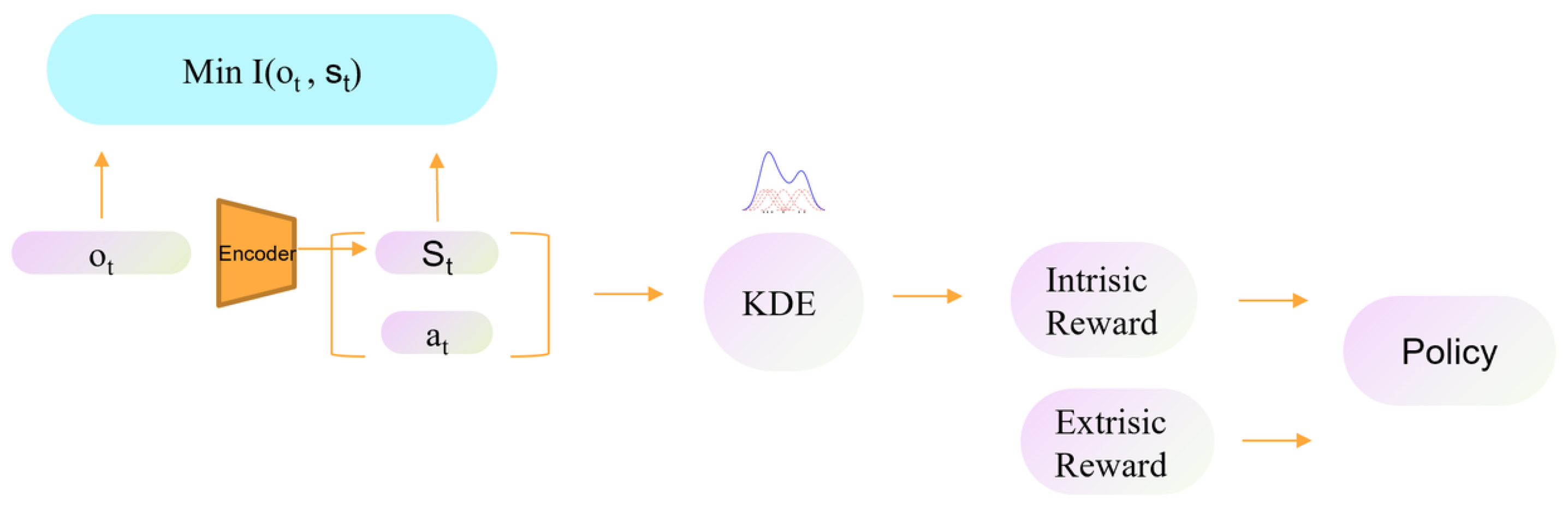

- We propose a curiosity-driven reinforcement learning framework in which intrinsic reward is defined on state–action representations, capturing both rarely visited regions and infrequently attempted actions in familiar situations.

- We employ the Information Bottleneck principle to learn compact latent states that are suitable for both transition prediction and density-based novelty estimation.

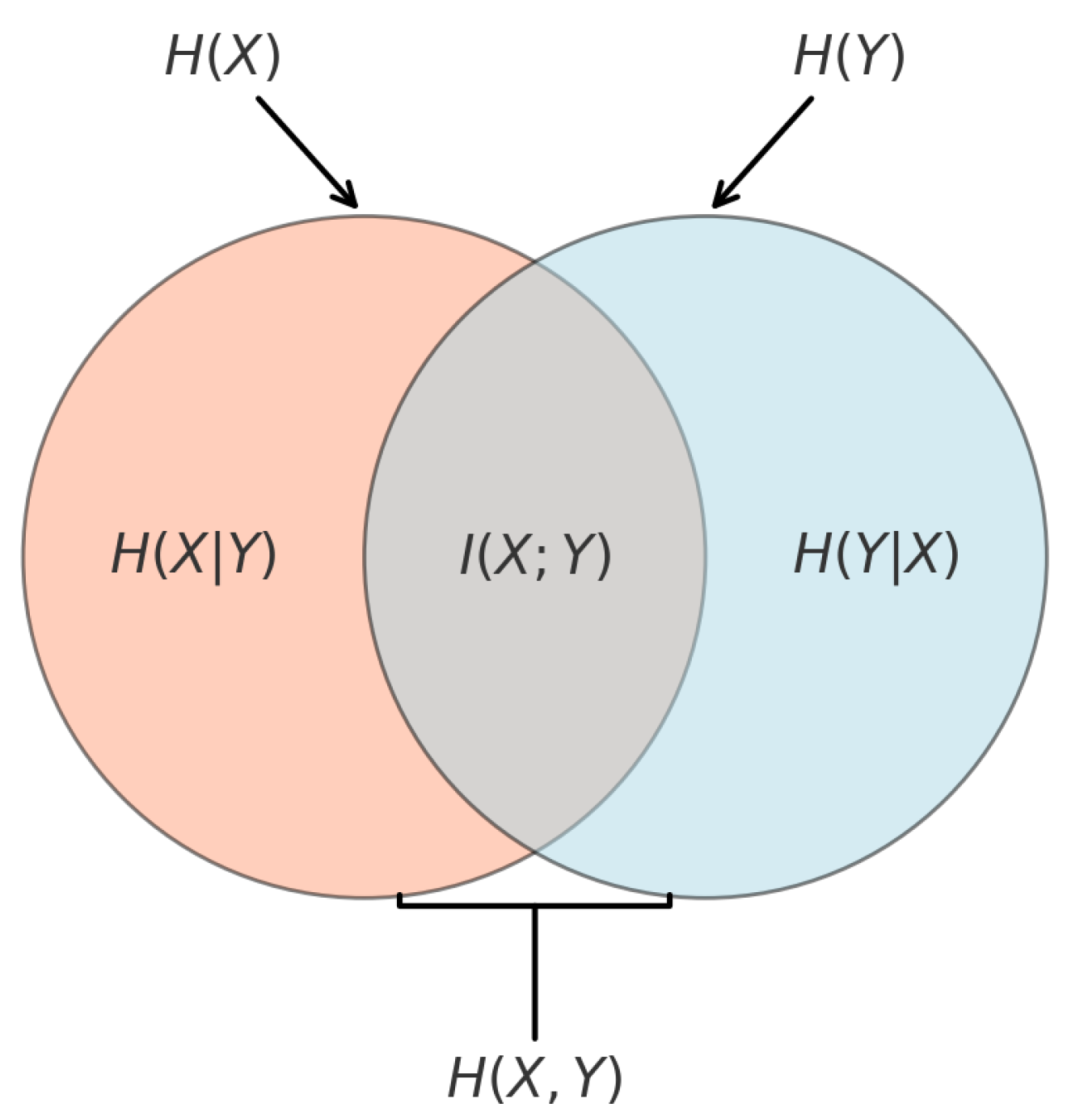

- We investigate two practical formulations for encouraging predictive information in the latent space: an entropy-decomposition-based objective and a matrix-based Rényi-entropy objective.

- We replace KNN-based novelty estimation with kernel density estimation in the learned representation space, leading to a smoother intrinsic-reward signal.

- We empirically evaluate the proposed framework on benchmark environments and show that its benefit is most evident when representation quality plays an important role in exploration.
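The kernel-density novelty idea named in the contributions above can be illustrated with a minimal sketch. It assumes a Gaussian kernel, a fixed bandwidth, and a negative-log-density reward; the function names `gaussian_kde_density` and `novelty_reward`, the `bandwidth` and `eps` parameters, and the toy 2-D embeddings are all hypothetical choices for illustration, not the authors' formulation (which also involves a random-policy baseline not reproduced here).

```python
import math


def gaussian_kde_density(query, samples, bandwidth=0.5):
    """Gaussian kernel density estimate at `query` given stored `samples`.

    `query` and each entry of `samples` stand in for latent state-action
    embeddings (assumed here to be plain tuples of floats).
    """
    dim = len(query)
    # Normalizing constant of an isotropic Gaussian kernel in `dim` dimensions.
    norm = (2.0 * math.pi * bandwidth ** 2) ** (dim / 2.0)
    total = 0.0
    for s in samples:
        sq_dist = sum((q - x) ** 2 for q, x in zip(query, s))
        total += math.exp(-sq_dist / (2.0 * bandwidth ** 2))
    return total / (len(samples) * norm)


def novelty_reward(query, samples, bandwidth=0.5, eps=1e-8):
    """Illustrative intrinsic reward: low estimated density gives high reward."""
    return -math.log(gaussian_kde_density(query, samples, bandwidth) + eps)


# Toy buffer of previously visited embeddings (hypothetical values).
visited = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1)]
familiar = novelty_reward((0.05, 0.05), visited)  # inside the visited cluster
novel = novelty_reward((2.0, 2.0), visited)       # far from anything visited
```

Because every stored sample contributes to the estimate with a smoothly decaying weight, the reward varies continuously with the query point, whereas a KNN distance depends only on the few nearest neighbours.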

2. Related Work

2.1. Design of Intrinsic Rewards

2.2. Learning Representations

2.3. Psychological Perspectives on Curiosity

3. Methods

3.1. Problem Setup and Notation

3.2. Overall Framework

3.3. Intrinsic Reward from State–Action Novelty

3.3.1. Kernel Density in Representation Space

3.3.2. Intrinsic Reward with a Random-Policy Baseline

3.3.3. Prediction-Error Loss and Hybrid Intrinsic Signal

3.4. Representation Learning via Predictive Information Bottleneck

3.4.1. IB Objective

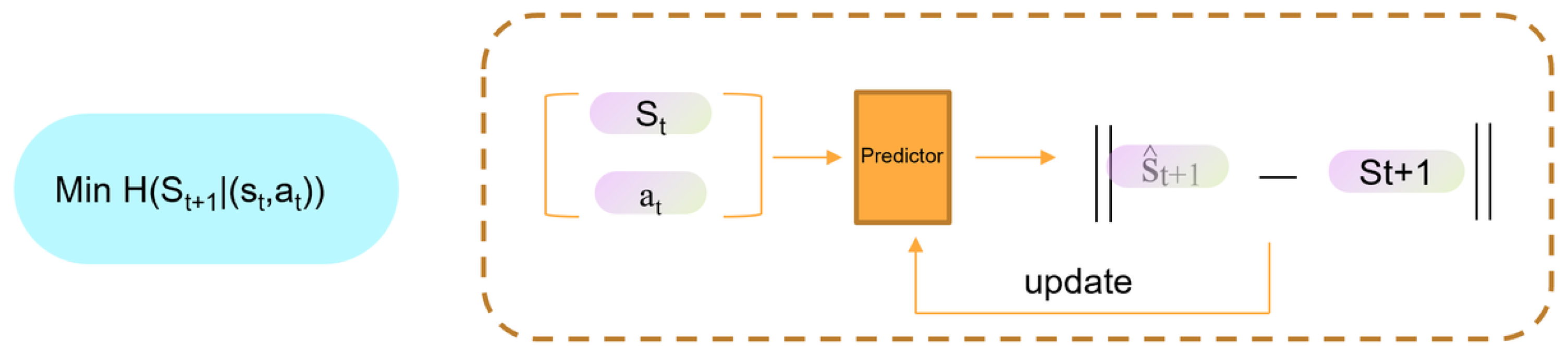

3.4.2. First Decomposition Method: Using Prediction Error to Approximate Predictive Information

Encouraging large marginal entropy via latent dispersion.

Reducing conditional entropy via forward transition prediction.

3.5. Second Decomposition Method: Backward Transition Predictor

3.6. Matrix-Based Entropy Estimation as an Alternative to Prediction-Error Surrogates

3.7. Reward Prediction Loss

3.8. Putting It Together: Total Training Losses

3.9. Action Selection via Model Predictive Control

4. Experiments and Results

4.1. Implementation Details and Reproducibility

Replay buffer, optimization, and action representation.

Network architectures.

Matrix-based objective.

Intrinsic reward hyperparameters.

4.2. Performance Comparison in Acrobot

Environment and protocol.

Metric and baselines.

| Method | Avg | StdErr |
|---|---|---|
| ICM | 932.8 | 141.54 |
| RND | 953.8 | 85.98 |
| Novelty | 576.0 | 66.13 |
| Ours | 290.0 | 45.72 |

4.3. Results on MountainCar

4.3.1. Experimental Results

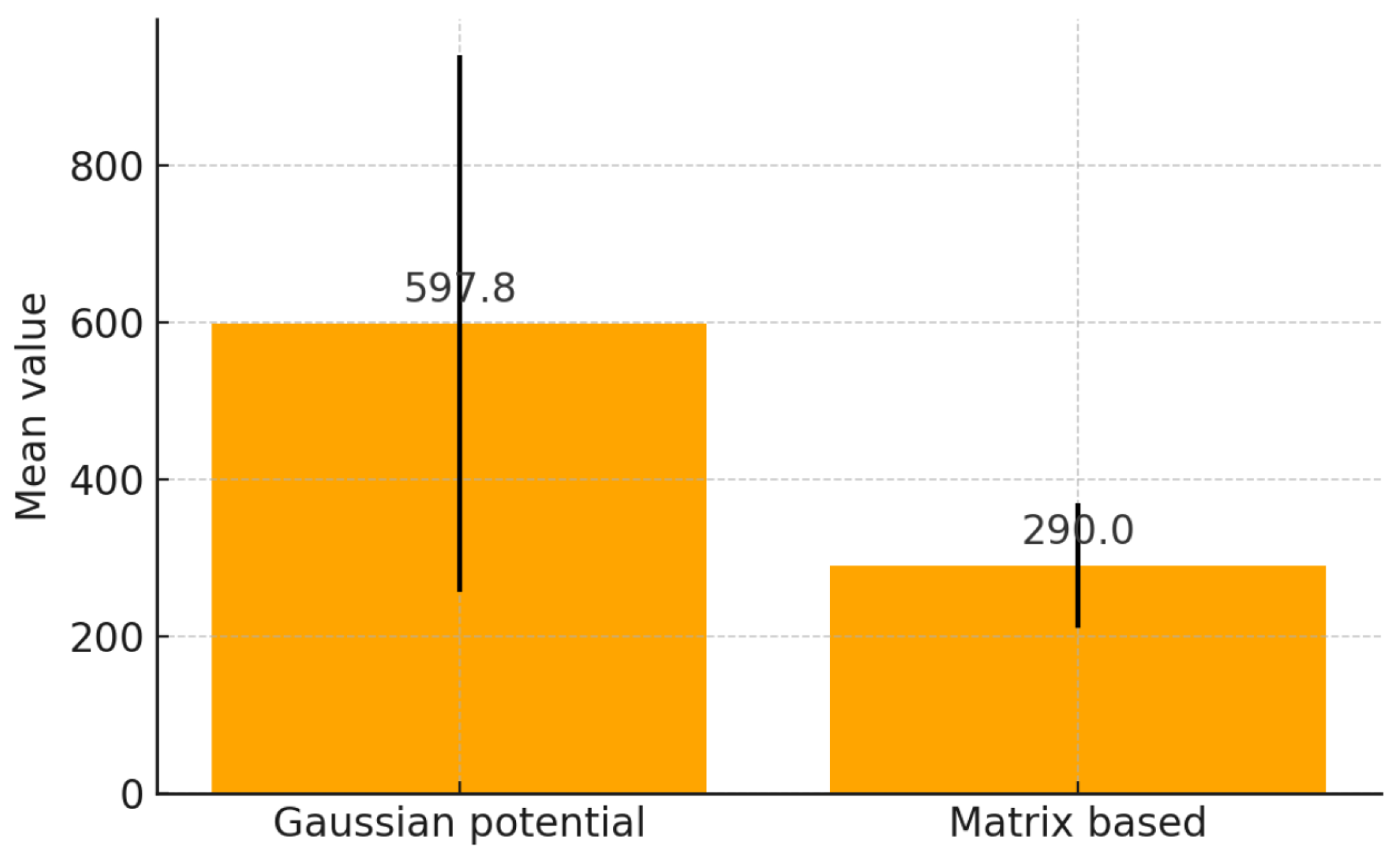

4.4. Comparing Entropy Estimators for

(1) Pairwise Gaussian-potential loss.

(2) Matrix-based Rényi entropy.
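As a rough illustration of the two estimators listed above, the sketch below computes both on a small batch of latent vectors. It rests on several assumptions not confirmed by the text: a Gaussian kernel with width `sigma`, the pairwise loss taken as the mean kernel value over distinct pairs, and the Rényi order fixed at α = 2, where the matrix-based entropy reduces to $S_2(A) = -\log_2 \operatorname{tr}(A^2)$ with $A = K/n$ and needs no eigendecomposition. The function names and toy batches are hypothetical.

```python
import math


def sq_dist(a, b):
    """Squared Euclidean distance between two latent vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def gaussian_potential_loss(latents, sigma=1.0):
    """Mean Gaussian kernel value over distinct pairs.

    Lower values mean the latents are more dispersed (assumed loss form).
    """
    n = len(latents)
    total = 0.0
    for i in range(n):
        for j in range(i + 1, n):
            total += math.exp(-sq_dist(latents[i], latents[j]) / (2.0 * sigma ** 2))
    return total / (n * (n - 1) / 2)


def matrix_renyi2_entropy(latents, sigma=1.0):
    """Matrix-based Renyi entropy of order 2, in bits.

    A = K / n is the trace-normalized Gaussian Gram matrix; since A is
    symmetric, tr(A^2) equals the sum of its squared entries.
    """
    n = len(latents)
    trace_a2 = 0.0
    for i in range(n):
        for j in range(n):
            k_ij = math.exp(-sq_dist(latents[i], latents[j]) / (2.0 * sigma ** 2))
            trace_a2 += (k_ij / n) ** 2
    return -math.log2(trace_a2)


# Two toy batches (hypothetical values): fully collapsed vs. well separated.
clustered = [(0.0, 0.0)] * 4
spread = [(0.0, 0.0), (10.0, 0.0), (0.0, 10.0), (10.0, 10.0)]

loss_clustered = gaussian_potential_loss(clustered)
loss_spread = gaussian_potential_loss(spread)
entropy_clustered = matrix_renyi2_entropy(clustered)
entropy_spread = matrix_renyi2_entropy(spread)
```

On the collapsed batch the pairwise loss is at its maximum and the matrix-based entropy is zero; on the separated batch the loss is near zero and the entropy approaches $\log_2 n$. Both quantities therefore reward dispersion, but the matrix-based form is expressed directly in entropy units.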

Experiment and interpretation.

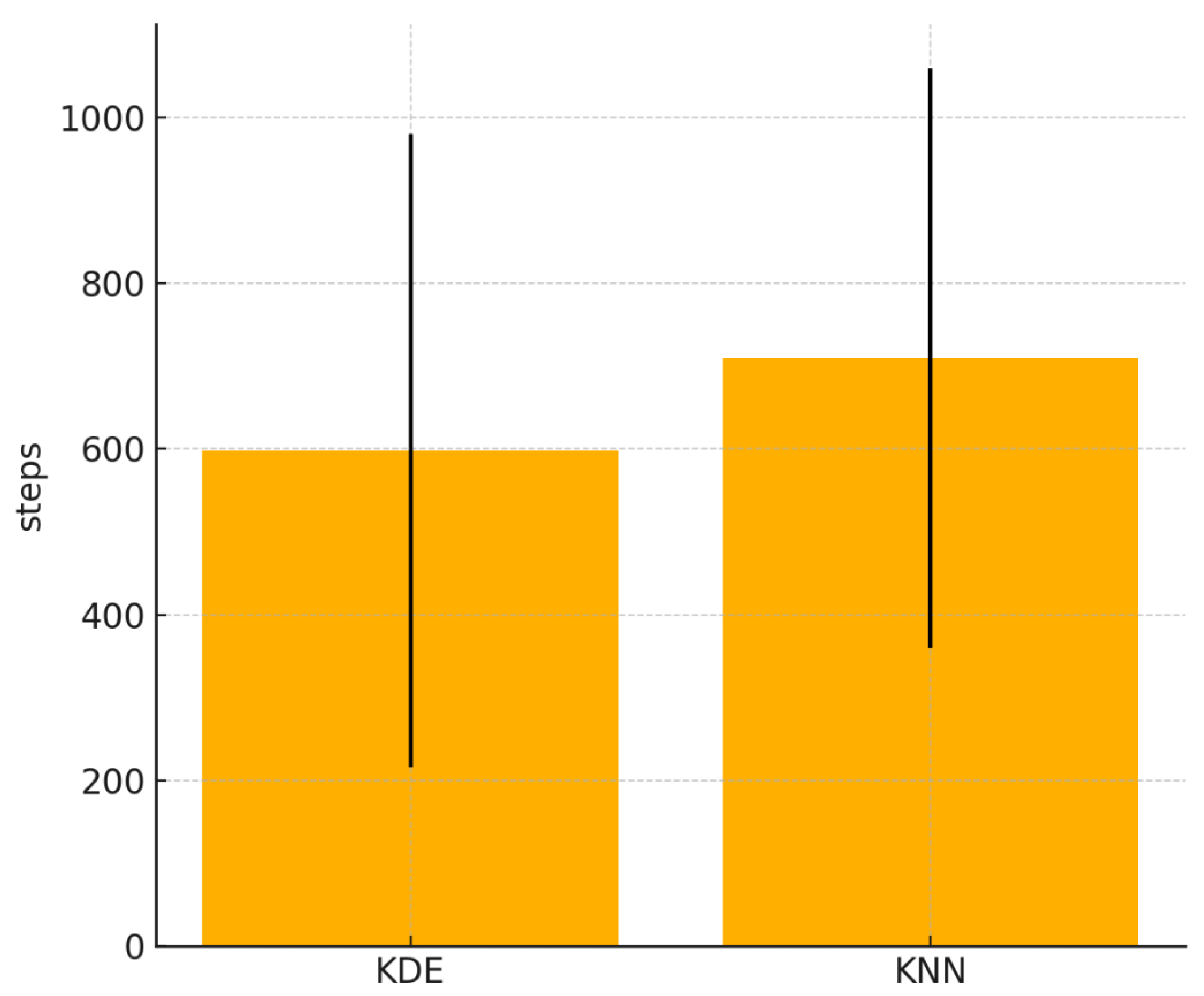

4.5. Comparing KDE and KNN

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Conflicts of Interest


| Method | Avg. steps to goal | StdErr |
|---|---|---|
| Novelty | 1158.5 | 172.8 |
| Ours | 1456.9 | 125.7 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).