Submitted: 09 April 2026

Posted: 10 April 2026


Abstract

Keywords:

1. Introduction

- The design and implementation of a closed-loop UAV-based system that tightly integrates real-time visual perception with autonomous, context-aware seed dispensing.

- A modular and drone-independent system architecture that enables straightforward integration with a wide range of commercial UAV platforms.

- A comparative evaluation of different computer vision algorithms, assessing their suitability for onboard deployment through systematic performance analysis.

- Experimental validation under field conditions, quantifying terrain detection accuracy, onboard inference latency, and seed-density precision.

2. Related Works

3. Materials and Methods

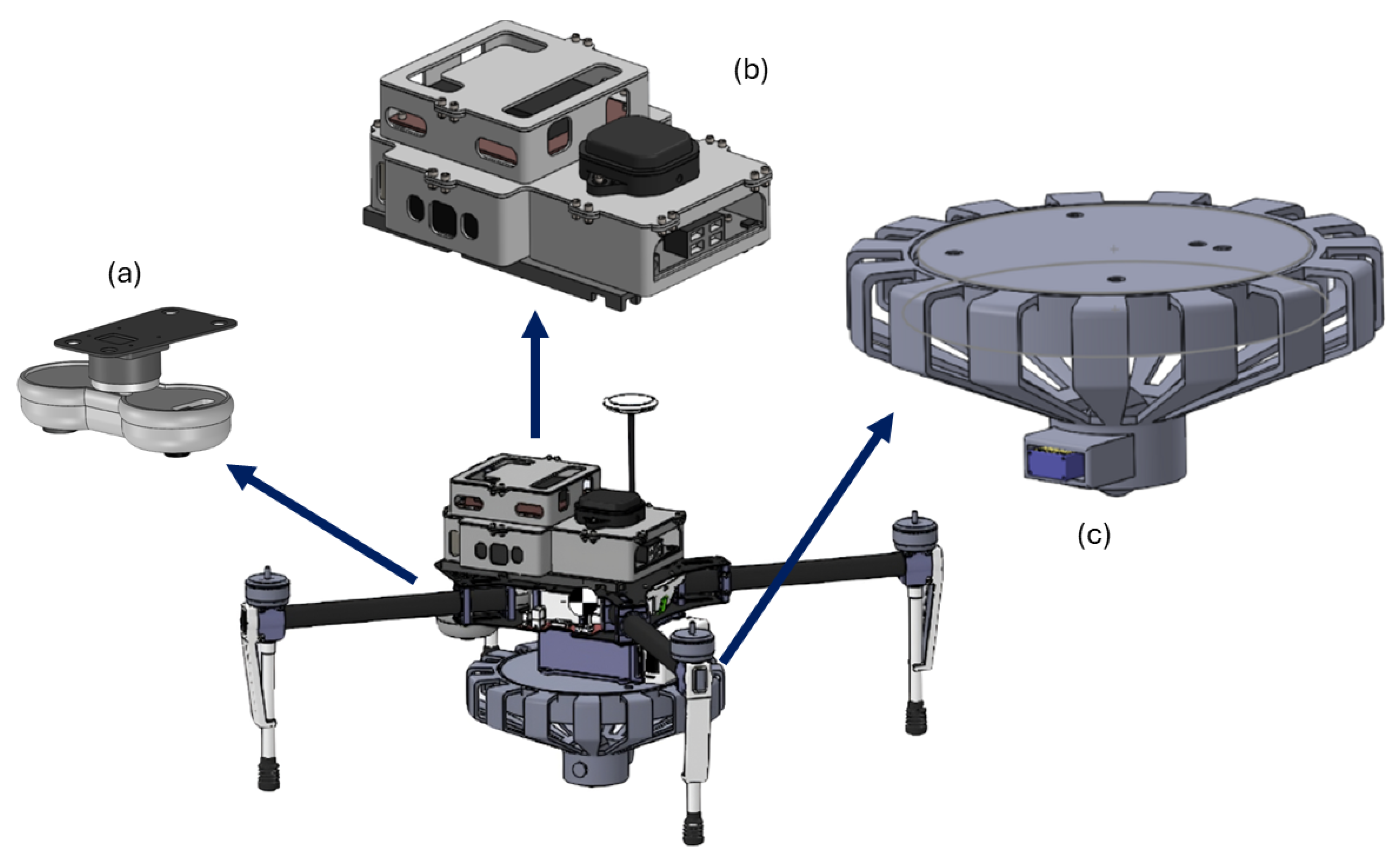

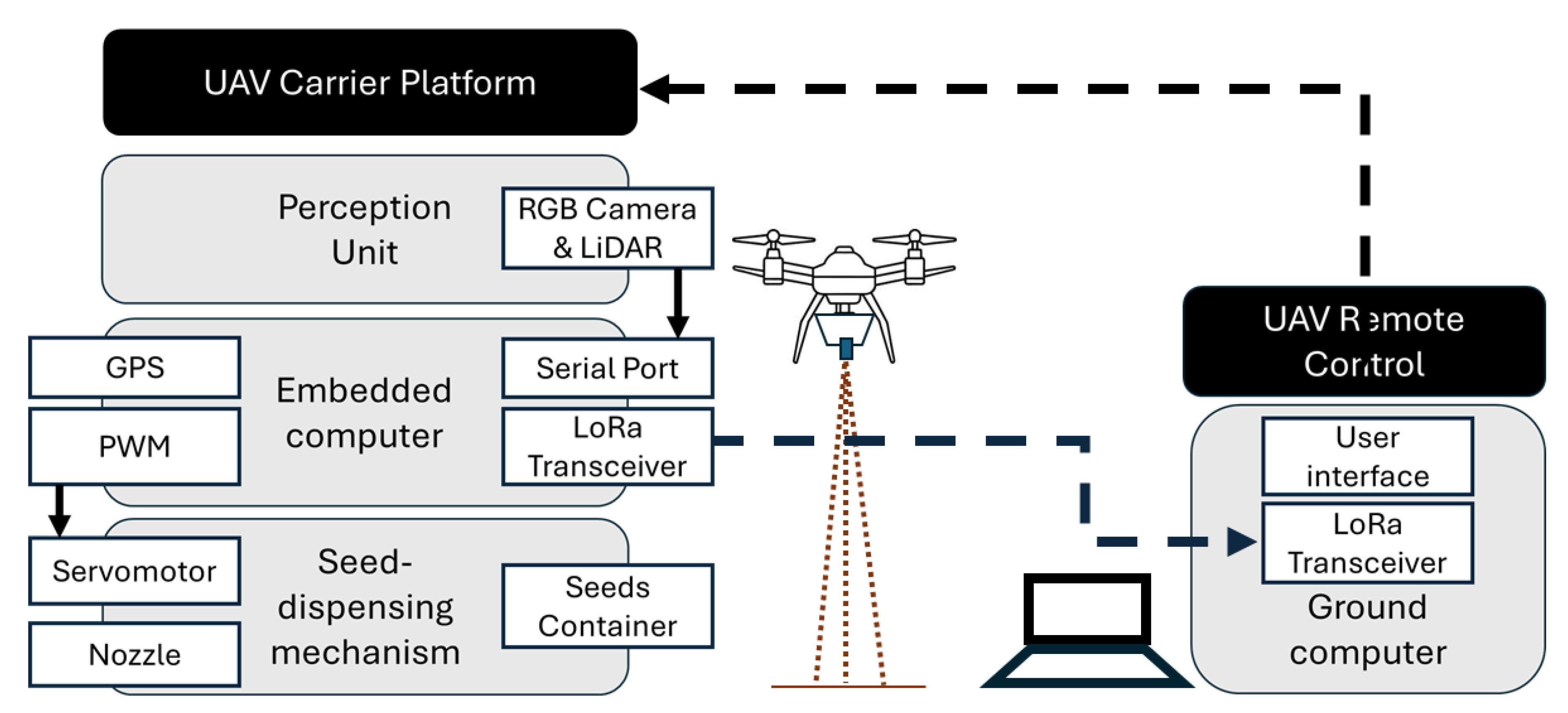

3.1. System Architecture

- UAV carrier platform: The UAV serves exclusively as a mobility and stabilization carrier, providing lift, navigation, and hover control. The restoration module does not interface directly with the internal flight-control loops and does not require firmware modification.

- UAV remote control: The remote-control system is used exclusively for navigation, positioning, and safety override.

- Perception unit: The perception unit acquires the environmental information required for terrain classification and altitude estimation. An RGB camera (model U20CAM-OV2719; InnoMaker, Shenzhen, China) [41] continuously captures ground imagery during flight, while a LeddarOne single-element LiDAR sensor (LeddarTech Inc., Québec City, QC, Canada) [42] measures the UAV's current height above the ground. The perception unit thus serves as the system's environmental interface, supplying real-time data to the embedded computing module.

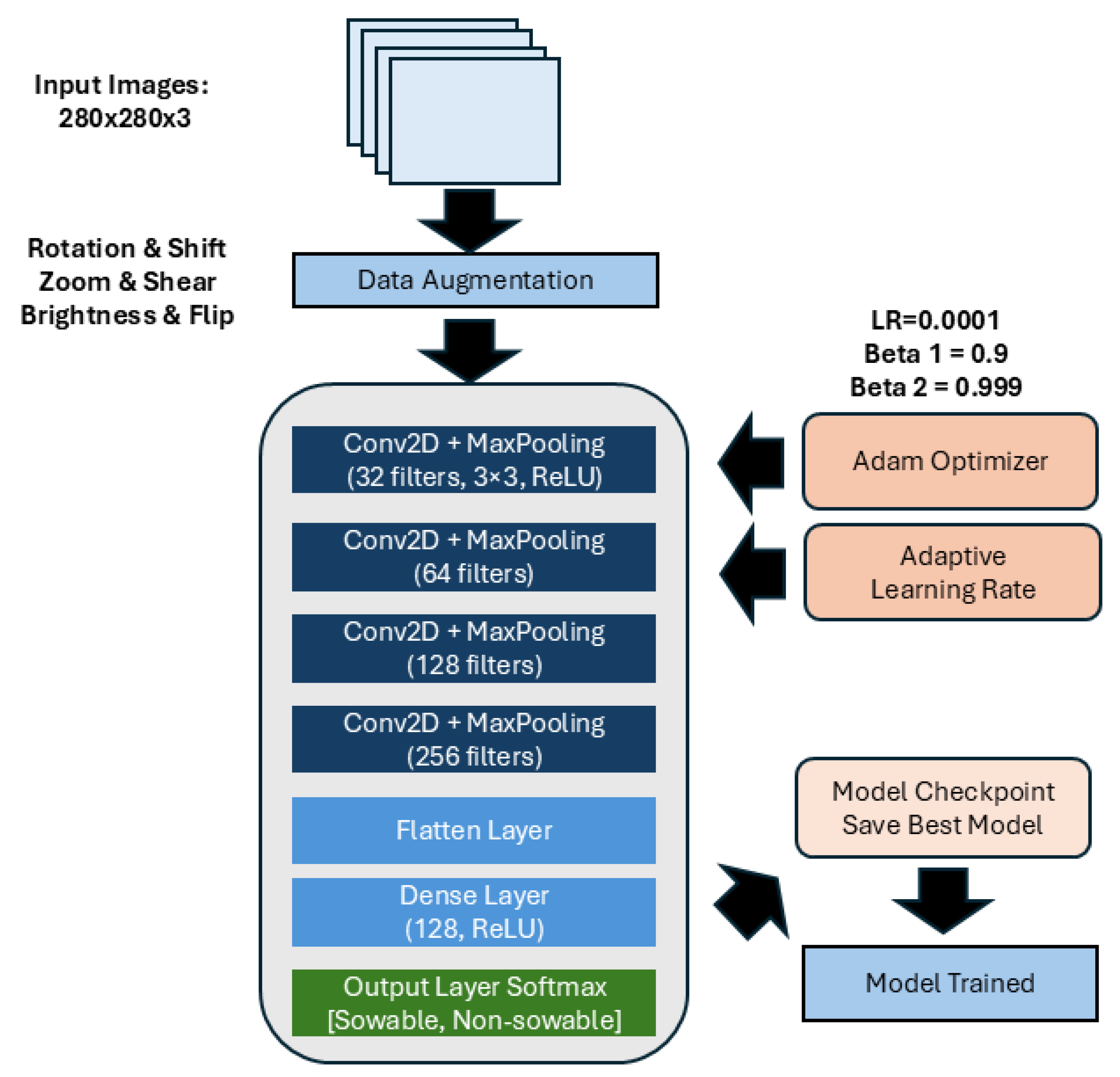

- Embedded computer: The embedded computing unit serves as the core decision-making module of the system. It was implemented using a Jetson Nano developer kit (NVIDIA Corporation, Santa Clara, CA, USA) [43] for onboard neural network inference and high-level perception tasks, together with an ESP32 microcontroller (Espressif Systems, Shanghai, China) [44] responsible for low-level control of the seed-dispensing mechanism and peripheral communication. The CNN model produces a binary classification output (sowable / non-sowable terrain). Based on this decision and the current altitude measured by the LiDAR sensor, a pulse width modulation (PWM) signal is sent to the seed-dispensing mechanism to release seeds.

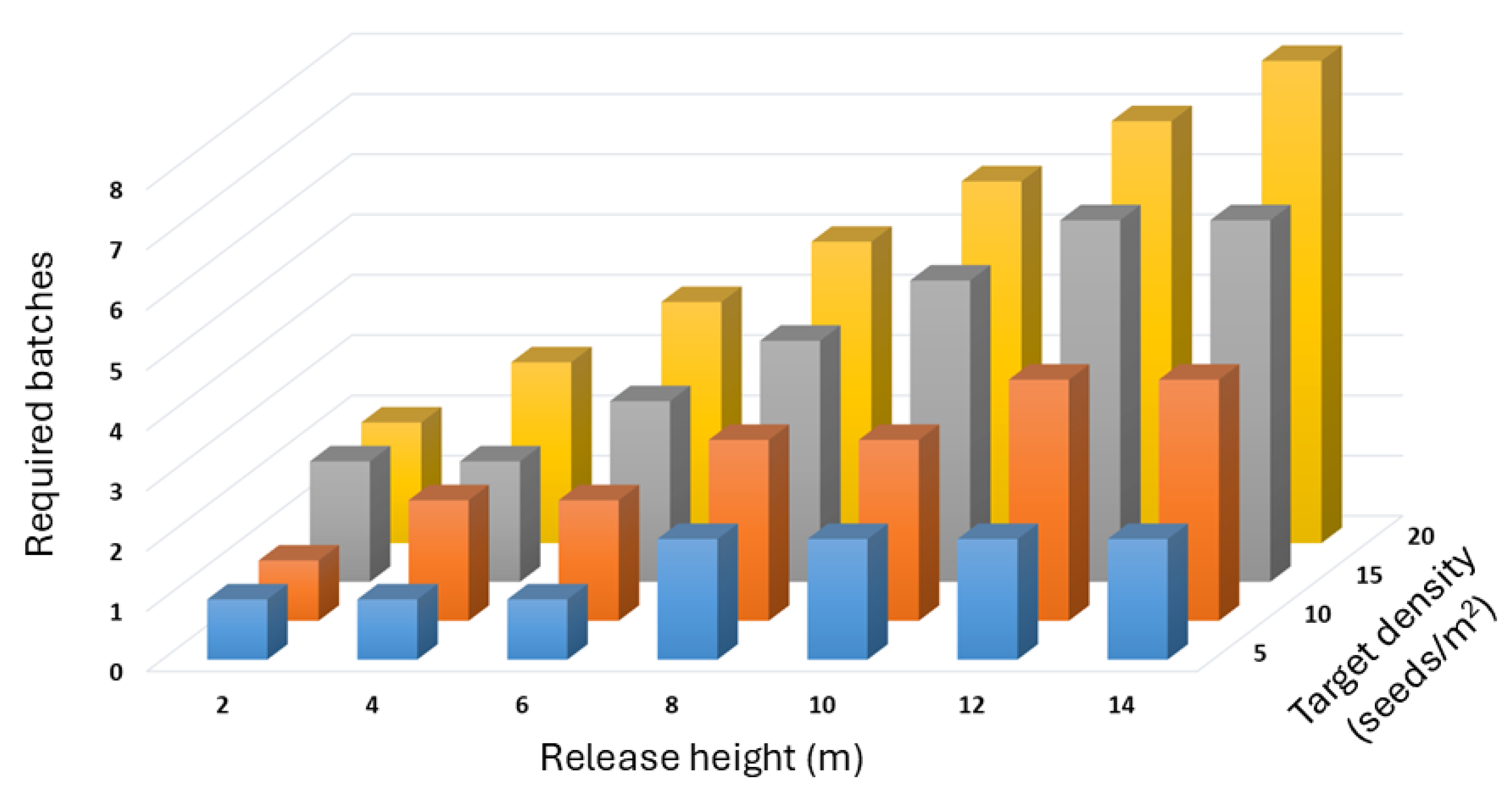

- Seed-dispensing mechanism: The seed-dispensing mechanism constitutes the actuation module of the system. PWM signals generated by the control unit drive an MG995 servomotor (TowerPro, Shenzhen, China) [45] that rotates an internal propeller within the dispenser, metering the seed flow and releasing seeds in discrete batches during each actuation cycle. The duration of the PWM command determines the number of propeller rotations and, consequently, the number of seed batches dispensed, enabling adaptive control of seed density during aerial deployment.

- Ground computer: The ground computer functions as a supervisory and monitoring station. It does not participate in the closed-loop control process; instead, it provides real-time telemetry visualization and system status monitoring. Communication between the UAV and the ground computer is established via a LoRa wireless link using RYLR998 modules (Reyax Technology, Taipei, Taiwan) [46], which carries telemetry data such as terrain classification confidence, sensor measurements, and seed deployment events during flight operations. LoRa was selected for its extended range (5–10 km) and low power consumption.
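The closed-loop behavior described in the embedded-computer and seed-dispensing items (a binary sowable/non-sowable decision, combined with the LiDAR altitude, sets how long the PWM command is held and therefore how many seed batches the propeller releases) can be sketched as follows. This is a minimal illustration, not the authors' control law: the altitude window, the reference altitude, the per-batch PWM on-time, and the assumption that the seeded ground area grows with the square of altitude are all hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DispenseConfig:
    """Hypothetical tuning values; none of these come from the paper."""
    min_altitude_m: float = 1.0      # below this, skip dispensing (assumed safety margin)
    max_altitude_m: float = 5.0      # above this, seeds are assumed to scatter too widely
    ref_altitude_m: float = 2.0      # altitude at which base_batches gives the target density
    base_batches: int = 2            # batches released at the reference altitude
    seconds_per_batch: float = 0.5   # assumed PWM on-time for one propeller rotation (one batch)


def pwm_on_time_s(sowable: bool, altitude_m: float,
                  cfg: DispenseConfig = DispenseConfig()) -> float:
    """Return how long to hold the PWM command in seconds; 0.0 means do not dispense."""
    if not sowable:
        return 0.0
    if not cfg.min_altitude_m <= altitude_m <= cfg.max_altitude_m:
        return 0.0
    # Assumption: the seeded ground area scales roughly with altitude squared,
    # so more batches are released higher up to keep seeds per square metre similar.
    scale = (altitude_m / cfg.ref_altitude_m) ** 2
    batches = max(1, round(cfg.base_batches * scale))
    return batches * cfg.seconds_per_batch


if __name__ == "__main__":
    for sowable, alt in [(True, 2.0), (True, 4.0), (False, 2.0), (True, 8.0)]:
        print(f"sowable={sowable}, altitude={alt} m -> PWM on-time {pwm_on_time_s(sowable, alt)} s")
```

The sketch models only the duration of the PWM command, not the servo pulse width itself. In the architecture described here, the Jetson Nano hosts the classifier and the ESP32 handles low-level control of the dispenser; how the computed on-time is passed between the two boards is not specified in this section.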

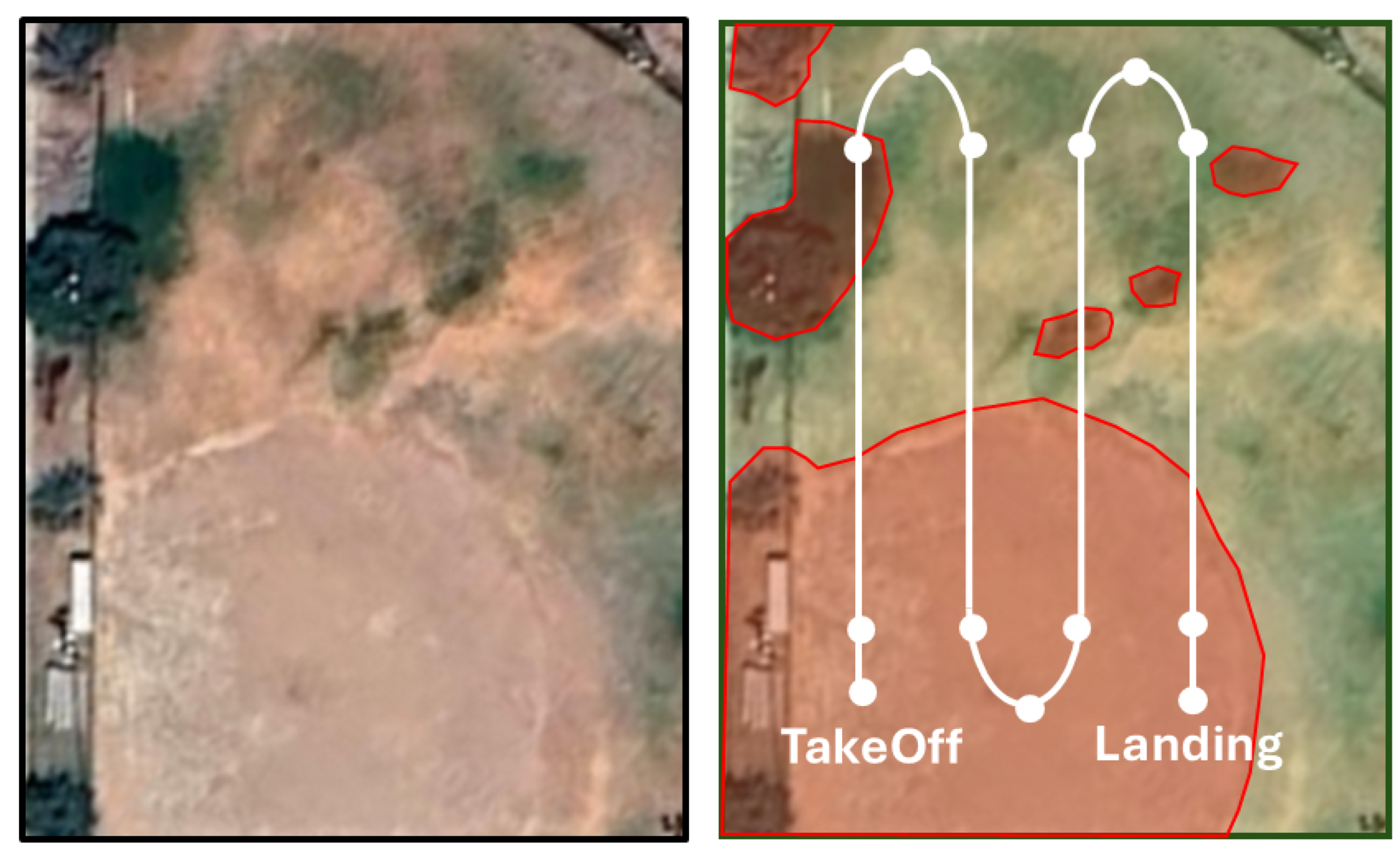
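For the LoRa telemetry link described in the ground-computer item, a status message could be packed into a short text payload and sent with the Reyax module's `AT+SEND=<address>,<payload length>,<data>` command. The sketch below only builds the command bytes. The record fields, the `key=value` layout with semicolon separators, the node address, and the 240-byte payload cap are assumptions to verify against the RYLR998 AT-command documentation, not details given in the paper.

```python
from dataclasses import dataclass

MAX_PAYLOAD_BYTES = 240  # assumed RYLR998 payload limit; check the module's AT-command guide


@dataclass
class Telemetry:
    """Hypothetical telemetry record mirroring the fields listed in the text."""
    sowable: bool
    confidence: float       # terrain classification confidence, 0..1
    altitude_m: float       # LiDAR height above ground
    batches_released: int   # seed deployment event (0 if none)


def encode_payload(t: Telemetry) -> str:
    # Semicolon-separated key=value pairs keep the payload short and human-readable.
    return (f"S={int(t.sowable)};C={t.confidence:.2f};"
            f"H={t.altitude_m:.2f};B={t.batches_released}")


def at_send_command(address: int, payload: str) -> bytes:
    """Build an AT+SEND=<address>,<length>,<data> line terminated by CR LF."""
    data = payload.encode("ascii")
    if len(data) > MAX_PAYLOAD_BYTES:
        raise ValueError(f"payload is {len(data)} bytes; limit assumed to be {MAX_PAYLOAD_BYTES}")
    return b"AT+SEND=" + f"{address},{len(data)},".encode("ascii") + data + b"\r\n"


if __name__ == "__main__":
    msg = Telemetry(sowable=True, confidence=0.91, altitude_m=2.37, batches_released=2)
    print(at_send_command(address=2, payload=encode_payload(msg)))
```

Keeping the payload as plain ASCII makes it easy to inspect on the ground computer; a binary encoding would be shorter but harder to debug in the field.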

3.2. Autonomous Sowing Strategy

Algorithm 1 Autonomous Vision-Based Sowing Strategy

3.3. Vision-Based Terrain Classification

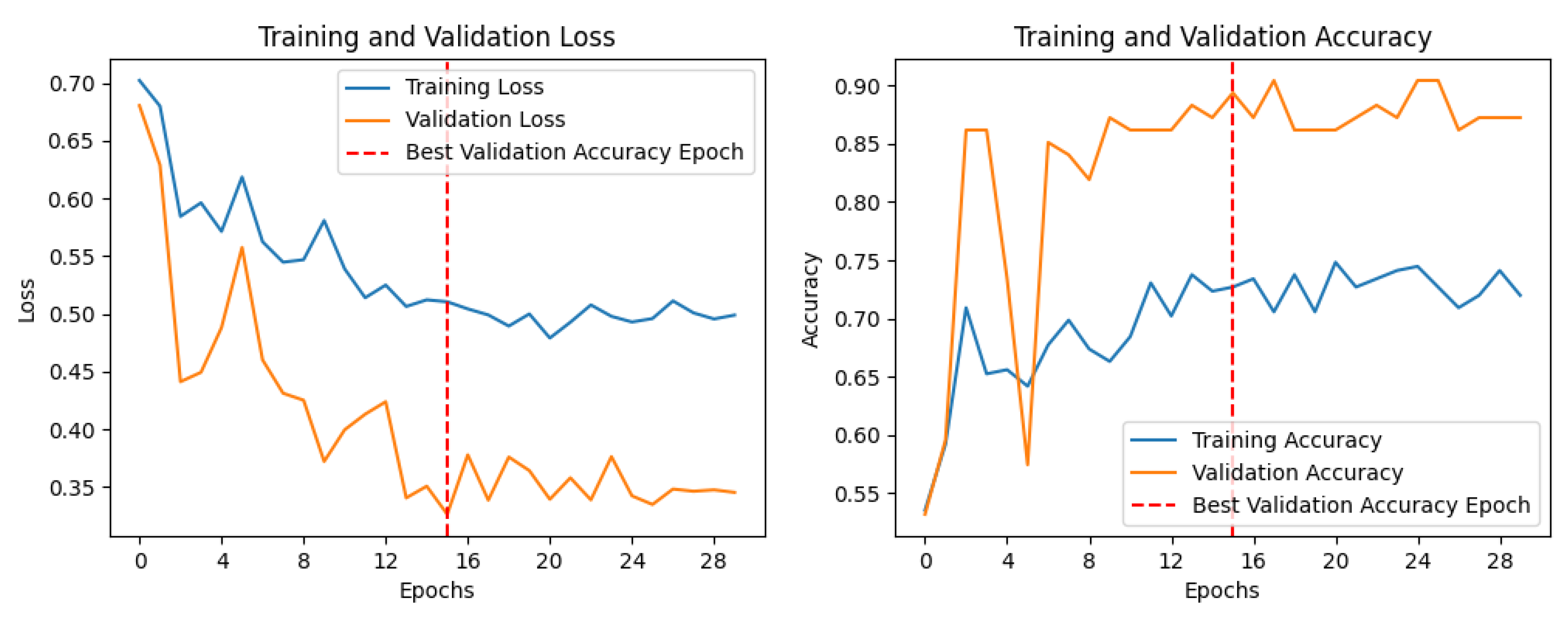

3.3.1. Customized CNN

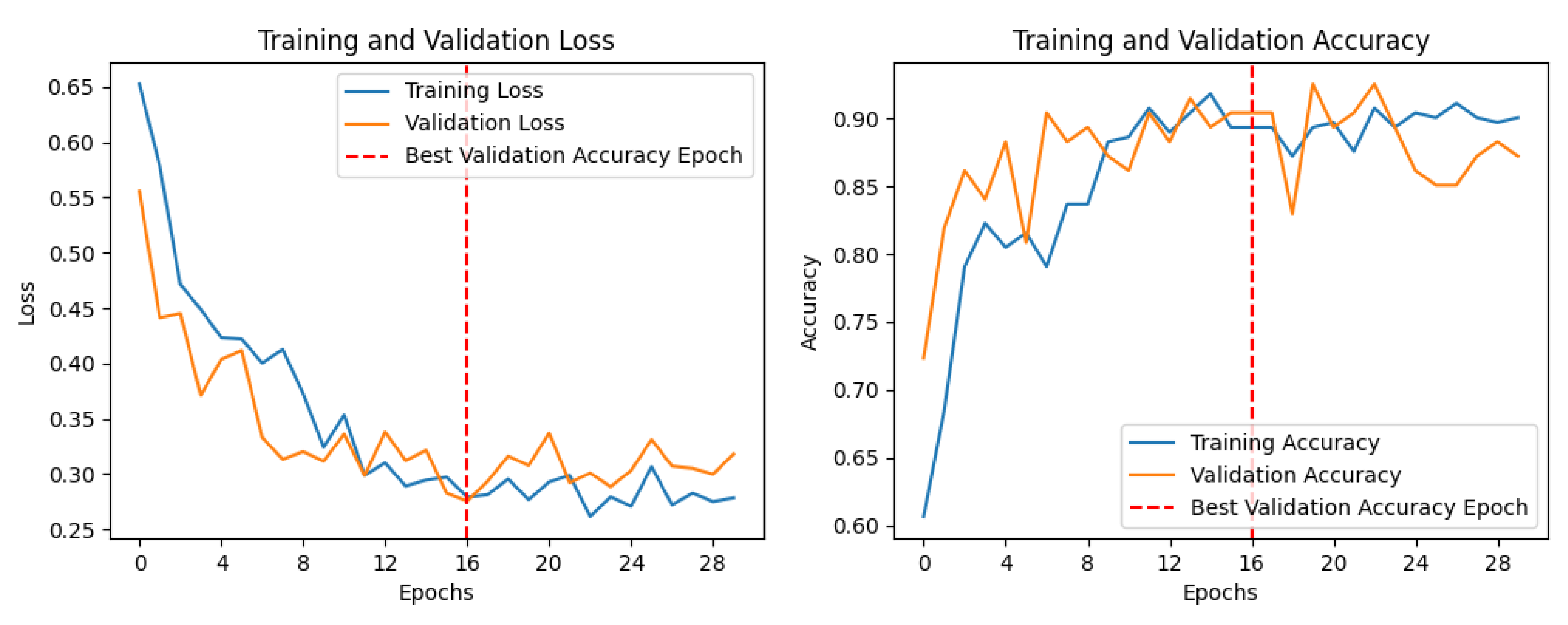

3.3.2. EfficientNet-B0

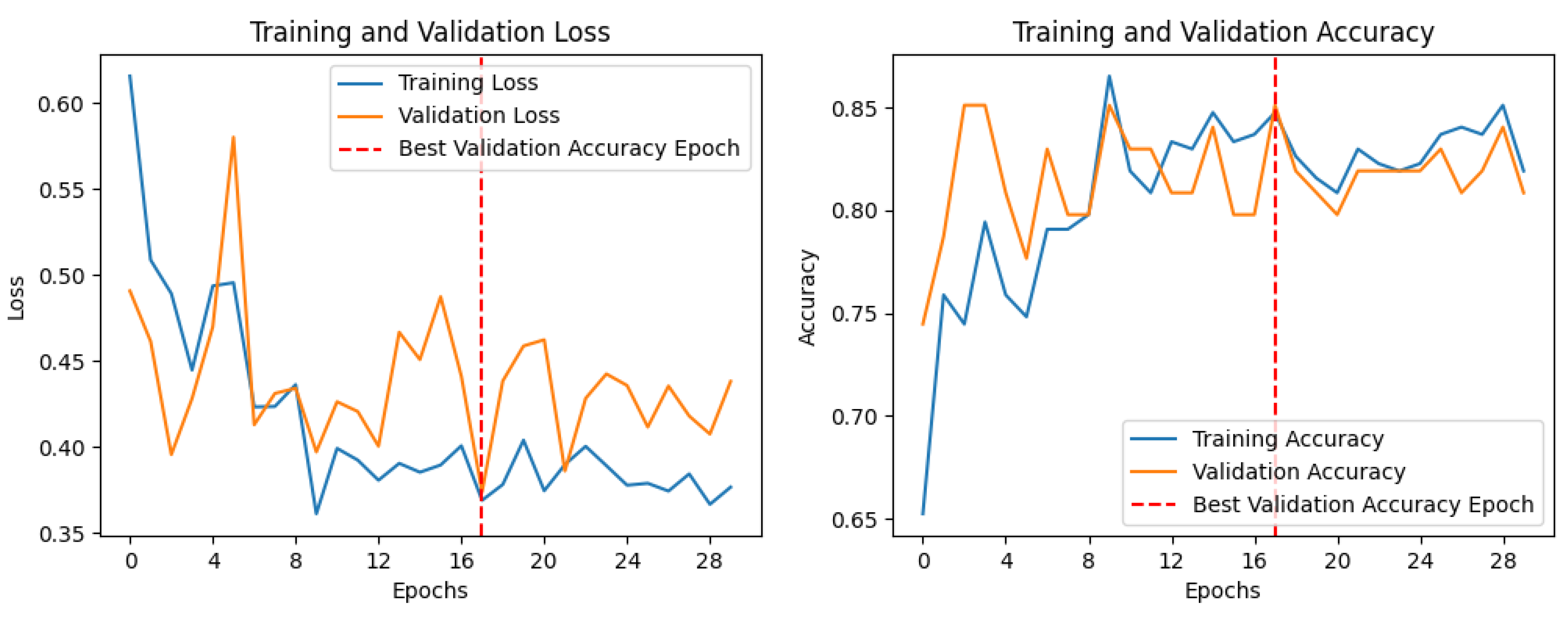

3.3.3. MobileNetV2

3.3.4. Color-Based Greenness Ratio Method
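The greenness-ratio computation is not detailed in this section. One common way to build such a color-based baseline is to convert each pixel to HSV and count the fraction whose hue falls in a green band with enough saturation and brightness. The sketch below uses Python's standard `colorsys` module; the hue band, the saturation and value floors, and the decision rule (reading a high green fraction as the denser vegetation listed among non-sowable surfaces in Section 4.2) are hypothetical and are not the thresholds behind the reported HSV-GR results.

```python
import colorsys
from typing import Iterable, Tuple

Pixel = Tuple[int, int, int]  # 8-bit (R, G, B)

# Hypothetical thresholds for illustration only.
HUE_MIN_DEG, HUE_MAX_DEG = 60.0, 180.0   # hue band treated as "green"
MIN_SATURATION = 0.20                    # reject greyish pixels
MIN_VALUE = 0.15                         # reject very dark pixels


def greenness_ratio(pixels: Iterable[Pixel]) -> float:
    """Fraction of pixels whose HSV values fall inside the assumed green band."""
    total = green = 0
    for r, g, b in pixels:
        h, s, v = colorsys.rgb_to_hsv(r / 255.0, g / 255.0, b / 255.0)
        total += 1
        if HUE_MIN_DEG <= h * 360.0 <= HUE_MAX_DEG and s >= MIN_SATURATION and v >= MIN_VALUE:
            green += 1
    return green / total if total else 0.0


def is_sowable(ratio: float, max_green_ratio: float = 0.30) -> bool:
    """Hypothetical rule: too much green is read as dense vegetation (non-sowable)."""
    return ratio < max_green_ratio


if __name__ == "__main__":
    patch = [(0, 200, 0), (139, 90, 43), (128, 128, 128), (150, 100, 50)]
    ratio = greenness_ratio(patch)
    print(f"greenness ratio = {ratio:.2f}, sowable = {is_sowable(ratio)}")
```

A per-pixel Python loop is only for readability; an onboard version would vectorize the conversion, for example with OpenCV's `cv2.cvtColor(img, cv2.COLOR_BGR2HSV)`, where 8-bit hue is stored as 0–179. Note also that a single green-fraction threshold cannot by itself distinguish compacted ground from exposed loam soil, since both contain little green.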

4. Results

4.1. Training CNN Models

4.2. Experimental Setup

- sowable surfaces: exposed loam soil and sparse grass, and

- non-sowable surfaces: compacted ground, denser vegetation, and artificially introduced obstacles.

4.3. Image Classification Field Evaluation

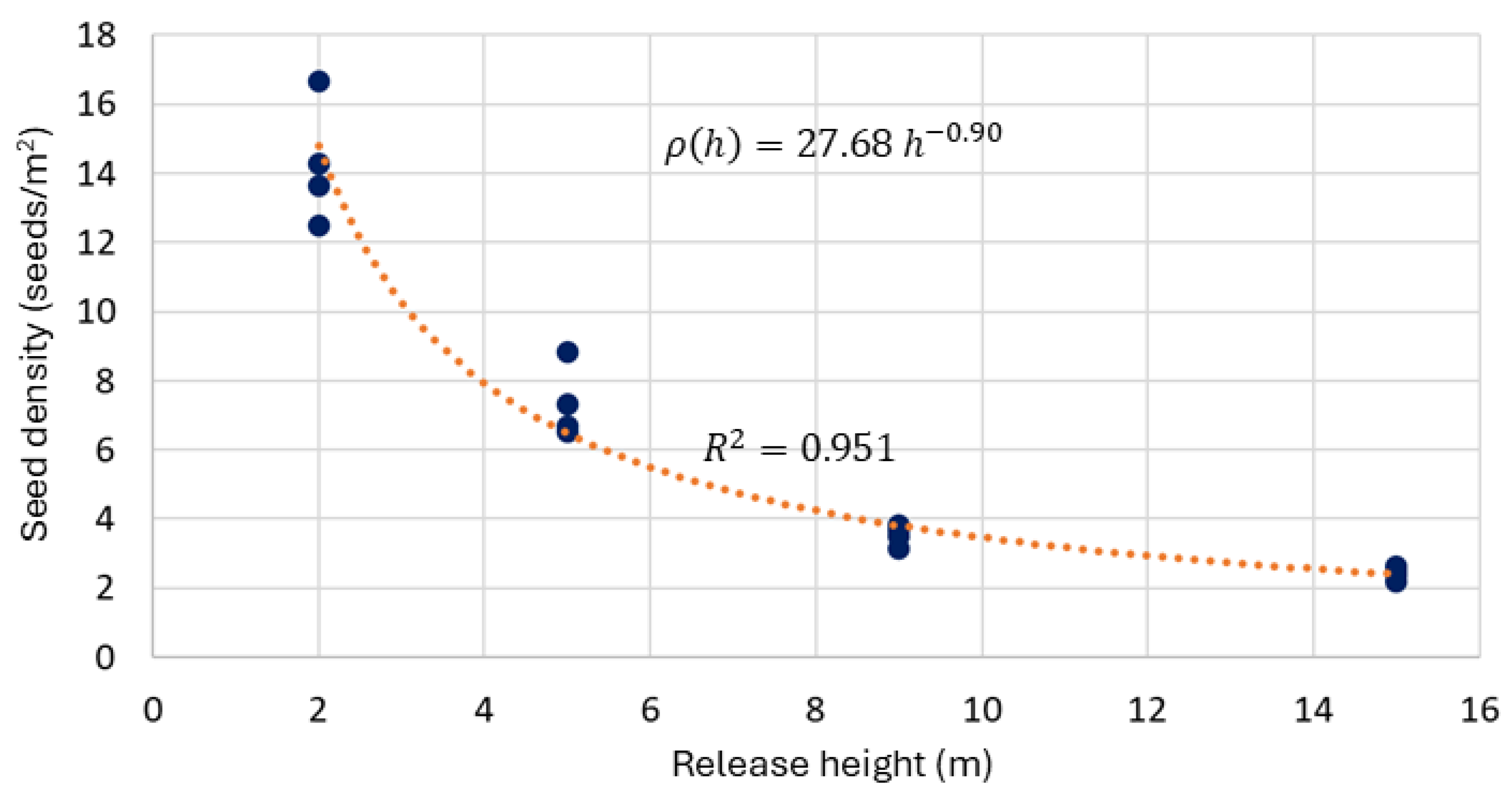

4.4. Seed Dispensing Analysis

5. Discussion

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| HSV | Hue, Saturation and Value |
| LiDAR | Light Detection and Ranging |
| NDVI | Normalized Difference Vegetation Index |
| NIR | Near Infrared |
| PWM | Pulse Width Modulation |
| RGB | Red, Green and Blue |
| UAV | Unmanned Aerial Vehicle |

References

- Abell, R.; Iñigo, E.; Enkerlin, E.; Williams, C.; Castilleja, G.; Allnutt, T. Ecoregion-Based Conservation in the Chihuahuan Desert: A Biological Assessment; WWF: Gland, Switzerland; CONABIO: Mexico City, Mexico; The Nature Conservancy: Arlington, VA, USA; PRONATURA Noreste: Monterrey, Mexico; ITESM: Ciudad Juárez, Mexico, 2000.

- Chiquoine, L.P.; Abella, S.R.; Schelz, C.D.; Medrano, M.F.; Fisichelli, N.A. Restoring historical grasslands in a desert national park: Resilience or unrecoverable states in an emerging climate? Biological Conservation 2024, 289, 110387.

- Ochoa, C.G.; Villarreal-Guerrero, F.; Prieto-Amparán, J.A.; Garduño, H.R.; Huang, F.; Ortega-Ochoa, C. Precipitation, Vegetation, and Groundwater Relationships in a Rangeland Ecosystem in the Chihuahuan Desert, Northern Mexico. Hydrology 2023, 10.

- Jurado-Guerra, P.; Velázquez-Martínez, M.; Sánchez-Gutiérrez, R.A.; Álvarez Holguín, A.; Domínguez-Martínez, P.A.; Gutiérrez-Luna, R.; Garza-Cedillo, R.D.; Luna-Luna, M.; Chávez-Ruiz, M.G. The grasslands and scrublands of arid and semi-arid zones of Mexico: Current status, challenges and perspectives. Revista Mexicana de Ciencias Pecuarias 2021, 12, 261–285.

- Gann, G.D.; McDonald, T.; Walder, B.; Aronson, J.; Nelson, C.R.; Jonson, J.; Hallett, J.G.; Eisenberg, C.; Guariguata, M.R.; Liu, J.; et al. International principles and standards for the practice of ecological restoration. Second edition. Restoration Ecology 2019, 27, S1–S46.

- Lozano-Baez, S.E.; Morio, A.; Bonnet, B.; Valderrama, P.D.; Sánchez, O.A.C.; Trujillo, J.R.T.; Paladines, H.M.; Flórez, M.; Gómez, E.; Medina, F.J.; et al. Lessons Learned From Direct Seeding to Restore Degraded Mountains in Cauca, Colombia. Ecological Management & Restoration 2025, 26.

- Khoza, M.J.; Ramantswana, M.M.; Spinelli, R.; Magagnotti, N. Enhancing Silvicultural Practices: A Productivity and Quality Comparison of Manual and Semi-Mechanized Planting Methods in KwaZulu-Natal, South Africa. Forests 2024, 15.

- Mohan, M.; Richardson, G.; Gopan, G.; Aghai, M.M.; Bajaj, S.; Galgamuwa, G.A.P.; Vastaranta, M.; Arachchige, P.S.P.; Amorós, L.; Corte, A.P.D.; et al. UAV-supported forest regeneration: Current trends, challenges and implications. Remote Sensing 2021, 13.

- Buters, T.; Belton, D.; Cross, A. Seed and seedling detection using unmanned aerial vehicles and automated image classification in the monitoring of ecological recovery. Drones 2019, 3, 1–16.

- Stamatopoulos, I.; Le, T.C.; Daver, F. UAV-assisted seeding and monitoring of reforestation sites: a review. Australian Forestry 2024, 87, 90–98.

- Lan, Y.; Thomson, S.J.; Huang, Y.; Hoffmann, W.C.; Zhang, H. Current status and future directions of precision aerial application for site-specific crop management in the USA. Computers and Electronics in Agriculture 2010, 74, 34–38.

- Song, C.; Zhou, Z.; Jiang, R.; Luo, X.; He, X.; Ming, R. Design and parameter optimization of pneumatic rice sowing device for unmanned aerial vehicle. Nongye Gongcheng Xuebao/Transactions of the Chinese Society of Agricultural Engineering 2018, 34, 80–88.

- Huang, X.; Xu, H.; Zhang, S.; Li, W.; Luo, C.; Deng, Y. Design and experiment of a device for rapeseed strip aerial seeding. Transactions of the Chinese Society of Agricultural Engineering 2020, 36, 78–87.

- Arun Kumar, M.; Telagam, N.; Mohankumar, N.; Ismail, K.M.; Rajasekar, T. Design and implementation of real-time amphibious unmanned aerial vehicle system for sowing seed balls in the agriculture field. International Journal on Emerging Technologies 2020, 11, 213–218.

- Maldonado, E.; Lozoya, C.; Gonzalez-Espinoza, C.; Orona, L. Modular seed-sowing control system for drone reforestation. In Proceedings of the IEEE Conference on Technologies for Sustainability (SusTech), 2025.

- Späti, K.; Huber, R.; Finger, R. Benefits of Increasing Information Accuracy in Variable Rate Technologies. Ecological Economics 2021, 185.

- Šarauskis, E.; Kazlauskas, M.; Naujokienė, V.; Bručienė, I.; Steponavičius, D.; Romaneckas, K.; Jasinskas, A. Variable Rate Seeding in Precision Agriculture: Recent Advances and Future Perspectives. Agriculture 2022, 12.

- Du, Z.; Yang, L.; Zhang, D.; Cui, T.; He, X.; Xie, C.; Jiang, Y.; Zhang, X.; Mu, J.; Wang, H.; et al. Design and experiment of a soil organic matter sensor-based variable-rate seeding control system for maize. Computers and Electronics in Agriculture 2025, 229.

- Tan, X.; Bai, M.; Wang, Z.; Xiang, C.; Cheng, Y.; Yin, Y.; Wang, J.; Xu, Z.; Zhao, J.; Wang, B.; et al. Simple-efficient cultivation for rapeseed under UAV-sowing: Developing a high-density and high-light-efficiency population via tillage methods and seeding rates. Field Crops Research 2025, 327.

- Fraser, B.T.; Congalton, R.G. Issues in Unmanned Aerial Systems (UAS) data collection of complex forest environments. Remote Sensing 2018, 10.

- Castro, J.; Morales-Rueda, F.; Alcaraz-Segura, D.; Tabik, S. Forest restoration is more than firing seeds from a drone. Restoration Ecology 2023, 31.

- Muñoz, G.; Abaunza, H.; Lozoya, C.; Castañeda, H. Experimental Evaluation of an Observer-Based Controller for an Unmanned Aerial Vehicle in Reforestation Activities. Journal of Field Robotics 2025, 42, 867–879.

- Agapiou, A. Vegetation Extraction Using Visible-Bands from Openly Licensed Unmanned Aerial Vehicle Imagery. Drones 2020, 4.

- Villalobos-Montiel, J.; Aguilar-Gonzalez, A.; Orona, L.; Lozoya, C. Identifying deforested areas through convolutional neural network for drone reforesting. In Proceedings of the 2023 IEEE Conference on Technologies for Sustainability (SusTech 2023), 2023; pp. 138–143.

- Stanley, M.; Morris, G. Machine learning-based vegetation cover analysis in Lake Hawdon North using point segmentation. In Proceedings of the 2024 International Conference on Machine Intelligence for GeoAnalytics and Remote Sensing (MIGARS), 2024; pp. 1–2.

- Huang, S.; Tang, L.; Hupy, J.P.; Wang, Y.; Shao, G. A commentary review on the use of normalized difference vegetation index (NDVI) in the era of popular remote sensing. Journal of Forestry Research 2021, 32.

- Al-Ali, Z.; Abdullah, M.; Asadalla, N.; Gholoum, M. A comparative study of remote sensing classification methods for monitoring and assessing desert vegetation using a UAV-based multispectral sensor. Environmental Monitoring and Assessment 2020, 192.

- Holman, F.H.; Riche, A.B.; Castle, M.; Wooster, M.J.; Hawkesford, M.J. Radiometric calibration of ‘commercial off the shelf’ cameras for UAV-based high-resolution temporal crop phenotyping of reflectance and NDVI. Remote Sensing 2019, 11.

- van der Merwe, D.; Burchfield, D.R.; Witt, T.D.; Price, K.P.; Sharda, A. Drones in agriculture. Advances in Agronomy 2020, 162, 1–30.

- Zhang, J.; Liang, X.; Wang, M.; Yang, L.; Zhuo, L. Coarse-to-fine object detection in unmanned aerial vehicle imagery using lightweight convolutional neural network and deep motion saliency. Neurocomputing 2020, 398, 555–565.

- Niculescu, V.; Lamberti, L.; Conti, F.; Benini, L.; Palossi, D. Improving Autonomous Nano-Drones Performance via Automated End-to-End Optimization and Deployment of DNNs. IEEE Journal on Emerging and Selected Topics in Circuits and Systems 2021, 11, 548–562.

- Radovic, M.; Adarkwa, O.; Wang, Q. Object recognition in aerial images using convolutional neural networks. Journal of Imaging 2017, 3.

- Narayanan, P.; Borel-Donohue, C.; Lee, H.; Kwon, H.; Rao, R. A real-time object detection framework for aerial imagery using deep neural networks and synthetic training images. In Proceedings of SPIE - The International Society for Optical Engineering, 2018; Vol. 10646.

- Mumuni, F.; Mumuni, A.; Amuzuvi, C.K. Deep learning of monocular depth, optical flow and ego-motion with geometric guidance for UAV navigation in dynamic environments. Machine Learning with Applications 2022, 10.

- Makrigiorgis, R.; Siddiqui, S.; Kyrkou, C.; Kolios, P.; Theocharides, T. Efficient Deep Vision for Aerial Visual Understanding; Springer, 2023; pp. 73–94.

- Bouguettaya, A.; Zarzour, H.; Kechida, A.; Taberkit, A.M. Deep learning techniques to classify agricultural crops through UAV imagery: a review. Neural Computing and Applications 2022, 34, 9511–9536.

- Csillik, O.; Cherbini, J.; Johnson, R.; Lyons, A.; Kelly, M. Identification of citrus trees from unmanned aerial vehicle imagery using convolutional neural networks. Drones 2018, 2, 1–16.

- Khan, S.; Tufail, M.; Khan, M.T.; Khan, Z.A.; Anwar, S. Deep-learning-based spraying area recognition system for unmanned-aerial-vehicle-based sprayers. Turkish Journal of Electrical Engineering and Computer Sciences 2021, 29, 241–256.

- DJI Technology Co., Ltd. DJI Matrice 100 User Manual, 2016.

- Holybro Ltd. Holybro X650 Development Kit Documentation, 2023.

- InnoMaker. U20CAM-OV2719 USB Camera Module. 2026. Available online: https://www.inno-maker.com/product/u20-ov2710-isp-2mp/ (accessed on 22 March 2026).

- LeddarTech Inc. LeddarOne LiDAR Sensor. 2026. Available online: https://leddartech.com/products/leddarone/ (accessed on 22 March 2026).

- NVIDIA Corporation. Jetson Nano Developer Kit. 2026. Available online: https://developer.nvidia.com/embedded/jetson-nano-developer-kit (accessed on 22 March 2026).

- Espressif Systems. ESP32 Series Datasheet. 2026. Available online: https://www.espressif.com/en/products/socs/esp32 (accessed on 22 March 2026).

- TowerPro. MG995 High-Speed Servo Motor Datasheet. 2026. Available online: https://www.towerpro.com.tw/product/mg995/ (accessed on 22 March 2026).

- Reyax Technology Co., Ltd. RYLR998 LoRa Module Datasheet. 2026. Available online: https://reyax.com/products/rylr998 (accessed on 22 March 2026).

- Li, W.; Luo, Y.; Jiang, P.; Dong, X.; Tang, K.; Liang, Z.; Shi, Y. A sustainable crop protection through integrated technologies: UAV-based detection, real-time pesticide mixing, and adaptive spraying. Scientific Reports 2025, 15, 35748.

- Agrawal, J.; Arafat, M.Y. Transforming Farming: A Review of AI-Powered UAV Technologies in Precision Agriculture. Drones 2024, 8.

- Tan, M.; Le, Q. EfficientNet: Rethinking Model Scaling for Convolutional Neural Networks. In Proceedings of the 36th International Conference on Machine Learning; Chaudhuri, K., Salakhutdinov, R., Eds.; Proceedings of Machine Learning Research, Vol. 97; PMLR, 2019; pp. 6105–6114.

- Sandler, M.; Howard, A.; Zhu, M.; Zhmoginov, A.; Chen, L.C. MobileNetV2: Inverted Residuals and Linear Bottlenecks. In Proceedings of the 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2018; pp. 4510–4520.

| Method | Accuracy | Precision | Recall | F1-score |
|---|---|---|---|---|
| Customized CNN | 0.8681 | 0.8689 | 0.8543 | 0.8616 |
| EfficientNet-B0 | 0.8816 | 0.8747 | 0.8796 | 0.8771 |
| MobileNetV2 | 0.7739 | 0.7255 | 0.8515 | 0.7835 |
| HSV-GR Method | 0.7402 | 0.8015 | 0.6106 | 0.6932 |
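As a consistency check on the classification table, the F1-score is the harmonic mean of precision and recall, F1 = 2PR/(P + R). Recomputing it from the precision and recall columns reproduces the reported F1 values to within about 1e-4, as expected from rounding the inputs to four decimals. Accuracy cannot be checked the same way without the underlying confusion-matrix counts.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the classification table.
REPORTED = {
    "Customized CNN": (0.8689, 0.8543, 0.8616),
    "EfficientNet-B0": (0.8747, 0.8796, 0.8771),
    "MobileNetV2": (0.7255, 0.8515, 0.7835),
    "HSV-GR Method": (0.8015, 0.6106, 0.6932),
}

if __name__ == "__main__":
    for name, (p, r, f1_reported) in REPORTED.items():
        f1 = f1_score(p, r)
        print(f"{name:16s} recomputed F1 = {f1:.4f}, reported = {f1_reported:.4f}, "
              f"diff = {abs(f1 - f1_reported):.1e}")
```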

| Method | Mean Latency (ms) | Std. Dev. (ms) | FPS |
|---|---|---|---|
| Customized CNN | 63.29 | 12.46 | 15 |
| EfficientNet-B0 | 241.36 | 19.98 | 4 |
| MobileNetV2 | 78.80 | 10.84 | 12 |
| HSV-GR Method | 11.81 | 3.63 | 84 |
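The FPS column in the latency table appears to be the reciprocal of the mean per-frame latency, truncated to a whole number: for the customized CNN, 1000 / 63.29 ms ≈ 15.8, reported as 15. The short check below applies that assumed relation (frames processed one after another, ignoring the latency spread) to all four rows.

```python
import math

# Mean latency (ms) and reported FPS copied from the latency table.
LATENCY_MS = {
    "Customized CNN": (63.29, 15),
    "EfficientNet-B0": (241.36, 4),
    "MobileNetV2": (78.80, 12),
    "HSV-GR Method": (11.81, 84),
}


def fps_from_latency(mean_latency_ms: float) -> int:
    """Whole frames per second if each frame takes mean_latency_ms and frames run back to back."""
    return math.floor(1000.0 / mean_latency_ms)


if __name__ == "__main__":
    for name, (latency, fps_reported) in LATENCY_MS.items():
        print(f"{name:16s} {1000.0 / latency:6.2f} FPS -> {fps_from_latency(latency)} "
              f"(reported {fps_reported})")
```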

| Method | Model Size (MB) | Runtime Memory (MB) |
|---|---|---|
| Customized CNN | 115.59 | 1,828.63 |
| EfficientNet-B0 | 16.49 | 1,708.25 |
| MobileNetV2 | 9.32 | 1,667.56 |
| HSV-GR Method | 0 | Negligible |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).