Submitted: 07 April 2026

Posted: 08 April 2026

Abstract

Keywords:

1. Introduction

2. Background and Literature Review

3. Data Processing and AI Applications

3.1. Digital Systems for Manufacturing

- At the foundation, data are acquired through data mining, experimental trials, numerical simulations, and real-time sensor streams, giving visibility into process characteristics and production parameters.

- The raw data are then filtered, mapped, translated, interpreted, and preprocessed to improve their quality, consistency, and suitability for advanced analytics.

- The next stage covers data modeling and analytics, where dimensionality-reduction techniques, statistical methods, and machine learning algorithms (such as regression, clustering, and neural networks) are applied to optimize processes, detect anomalies, and identify faults.

- Building on these stages, physics-informed and/or physics-based models, real-time data models, simulation environments, and virtualization frameworks enable the development of digital twins, digital shadows, and digital advisory systems. These technologies create dynamic, high-fidelity virtual representations of physical assets and manufacturing processes. The virtual models are continuously synchronized with real-world data, allowing scenario evaluation, performance prediction, and optimization under varying operating conditions.

- Finally, AI-driven advisory and autonomous systems use decision engines to deliver prescriptive recommendations or execute automated control actions, forming the foundation of smart manufacturing ecosystems.
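As a rough illustration of the first three stages listed above (acquisition, preprocessing, and modeling/analytics), the sketch below chains them on synthetic data. It is not the system described in this work: the sensor data, the `MIXING` matrix, the noise level, the injected bias, the 99% alarm threshold, and all function names are assumptions chosen for illustration, and principal component analysis simply stands in for "dimensionality-reduction techniques".

```python
import numpy as np

# Hypothetical mixing of 2 latent process factors into 6 sensor channels.
MIXING = np.array([
    [1.0, 0.5, -0.3, 0.8, 0.0, 0.2],
    [0.0, 0.7, 0.9, -0.4, 1.0, -0.6],
])


def acquire(n_samples, rng):
    """Stand-in for data acquisition: synthetic, correlated sensor readings."""
    latent = rng.normal(size=(n_samples, MIXING.shape[0]))
    noise = 0.05 * rng.normal(size=(n_samples, MIXING.shape[1]))
    return latent @ MIXING + noise


def drop_incomplete(raw):
    """Filtering step: discard records with any missing reading."""
    return raw[~np.isnan(raw).any(axis=1)]


def standardize(x, mean, std):
    """Preprocessing step: bring every channel to zero mean, unit variance."""
    return (x - mean) / std


def fit_pca(z, n_components):
    """Dimensionality reduction: leading principal directions via SVD."""
    _, _, vt = np.linalg.svd(z, full_matrices=False)
    return vt[:n_components]


def residual(z, components):
    """Squared reconstruction error of each sample outside the PCA subspace."""
    reconstructed = (z @ components.T) @ components
    return np.sum((z - reconstructed) ** 2, axis=1)


rng = np.random.default_rng(0)

# 1) Acquisition: healthy training data with a few dropped sensor readings.
train_raw = acquire(2000, rng)
train_raw[::100, 0] = np.nan

# 2) Preprocessing: filter, then standardize with training statistics.
train_clean = drop_incomplete(train_raw)
mean, std = train_clean.mean(axis=0), train_clean.std(axis=0)
train = standardize(train_clean, mean, std)

# 3) Modeling and analytics: PCA model plus an empirical alarm threshold.
components = fit_pca(train, n_components=2)
threshold = np.quantile(residual(train, components), 0.99)

# Fresh healthy data, and faulty data with an assumed bias on one channel.
healthy = acquire(200, rng)
faulty = acquire(200, rng)
faulty[:, 4] += 3.0

healthy_flags = residual(standardize(healthy, mean, std), components) > threshold
faulty_flags = residual(standardize(faulty, mean, std), components) > threshold

print(f"false-alarm rate: {healthy_flags.mean():.3f}")
print(f"detection rate:   {faulty_flags.mean():.3f}")
```

The residual-based check flags samples that break the correlation structure learned from healthy data, which is one simple way to read "detect anomalies, and identify faults"; a production system would likely replace the linear model with one of the richer models named in the list.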

3.2. Data Sources & Databases

3.3. Reduced-Order Models

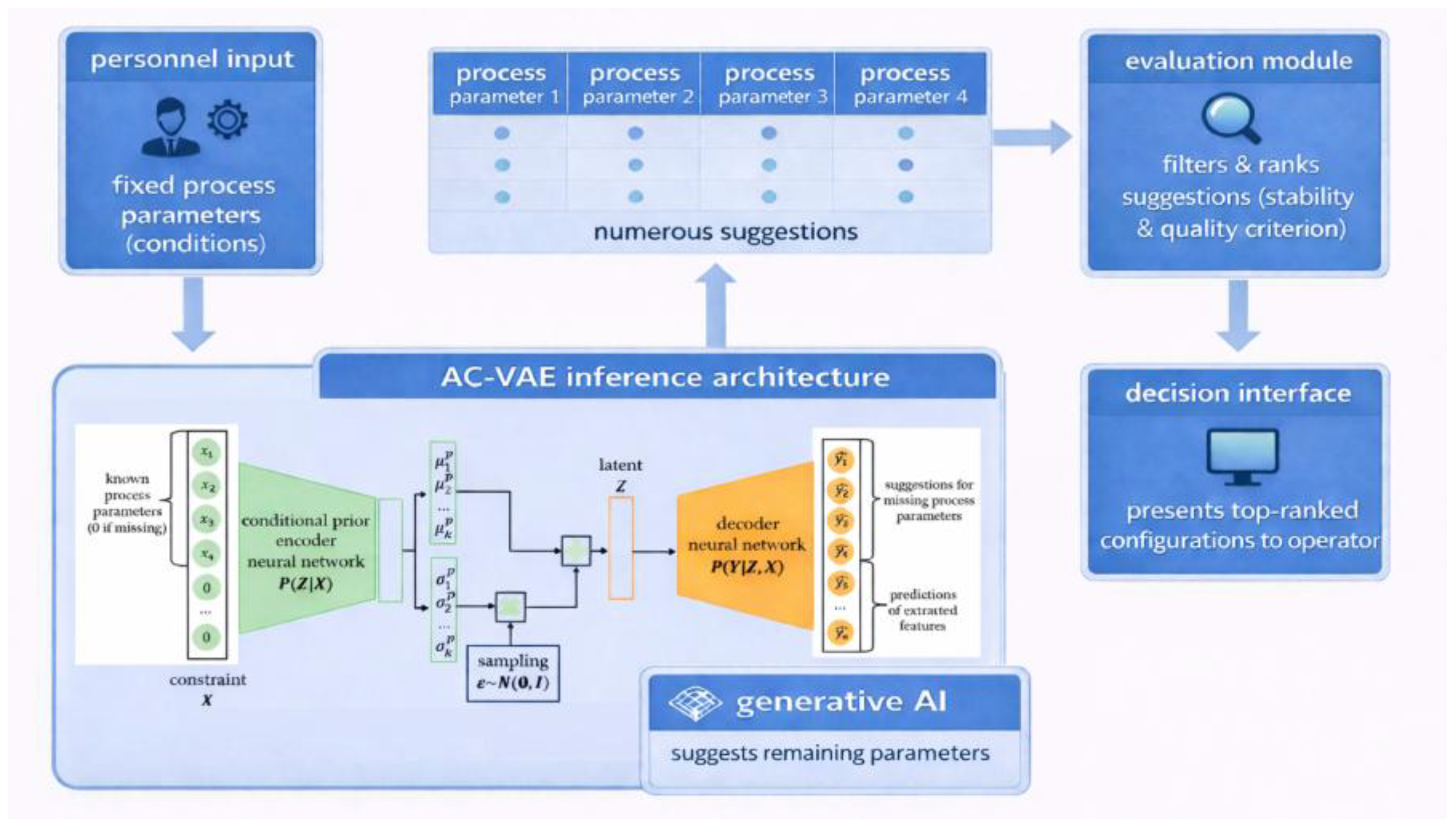

4. AI-Based Advisory System

4.1. System Architecture

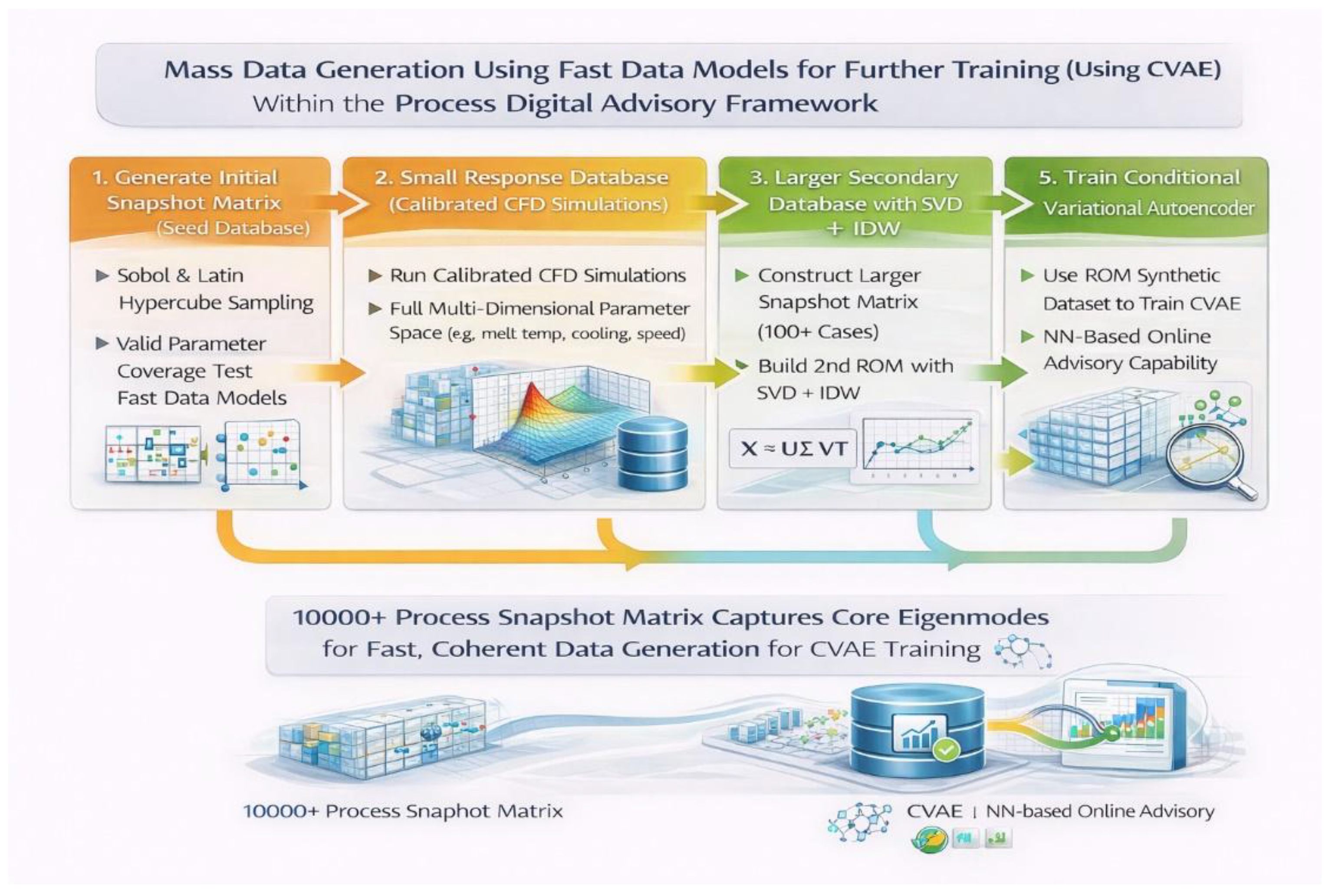

4.2. Data Infrastructure, Generation & Flow

4.3. Mass Data Generation

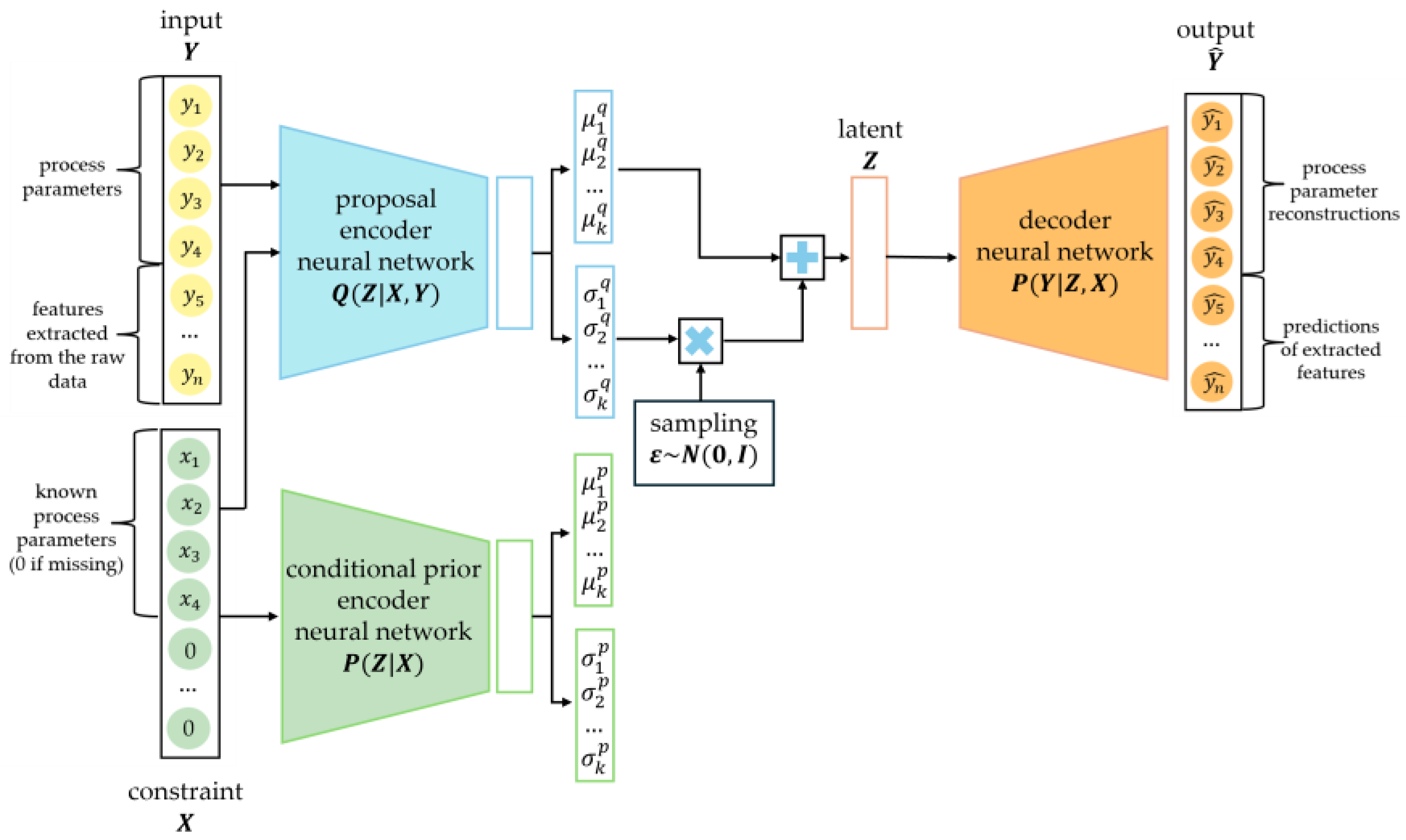

4.4. Neural Network Learning

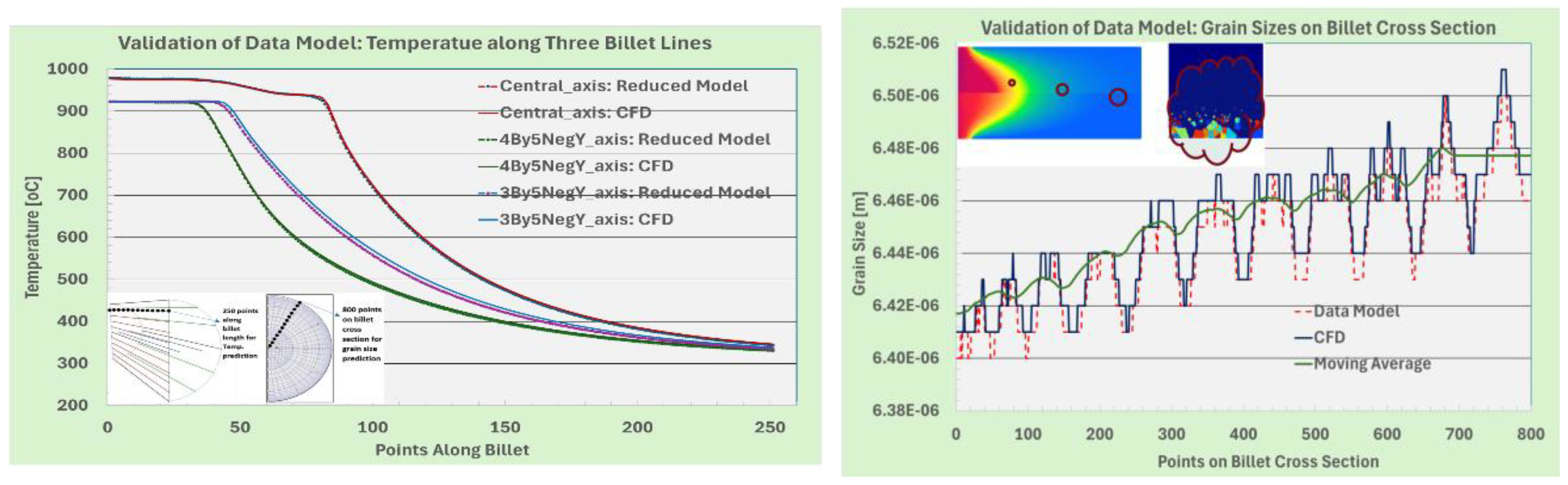

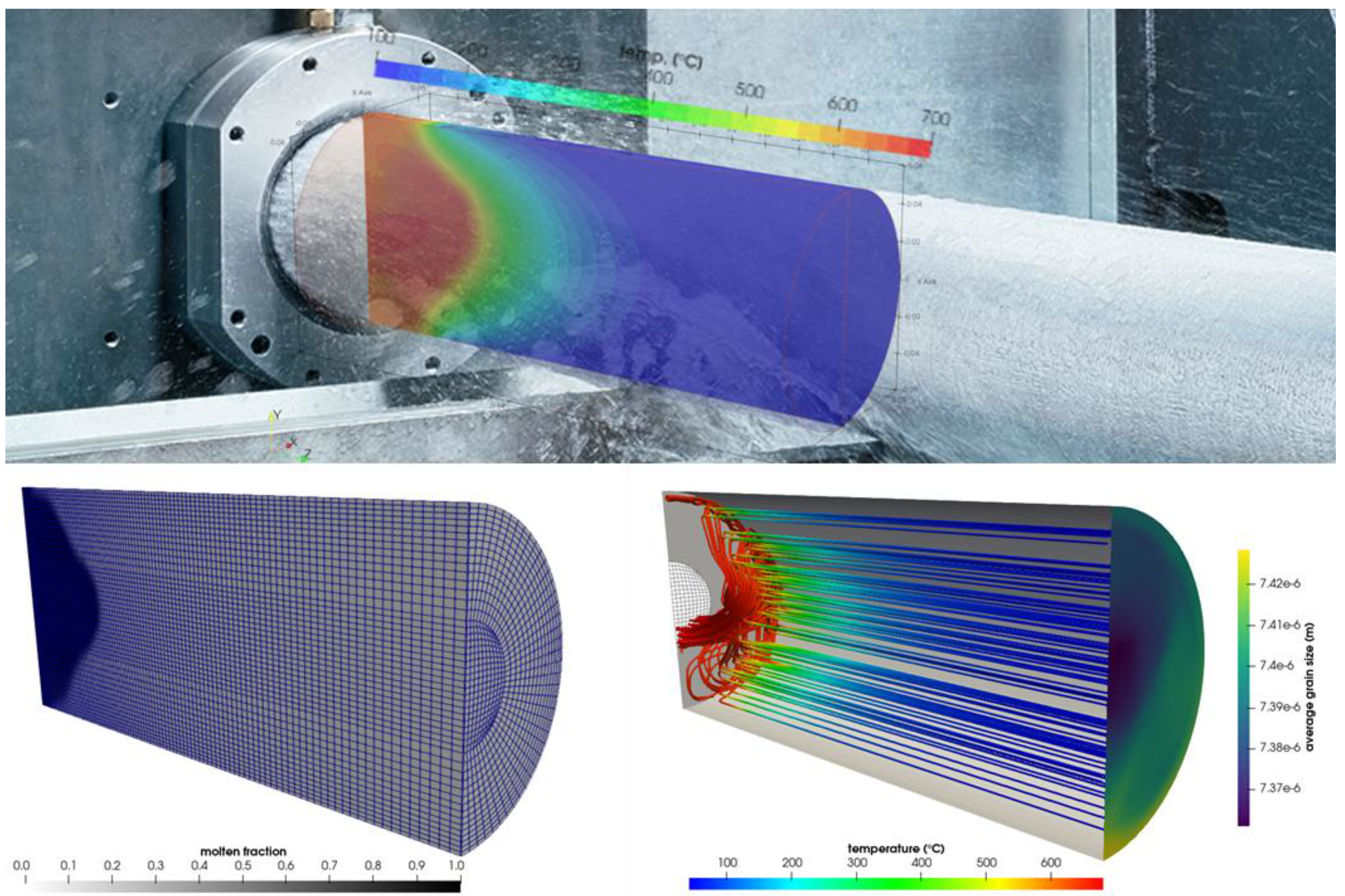

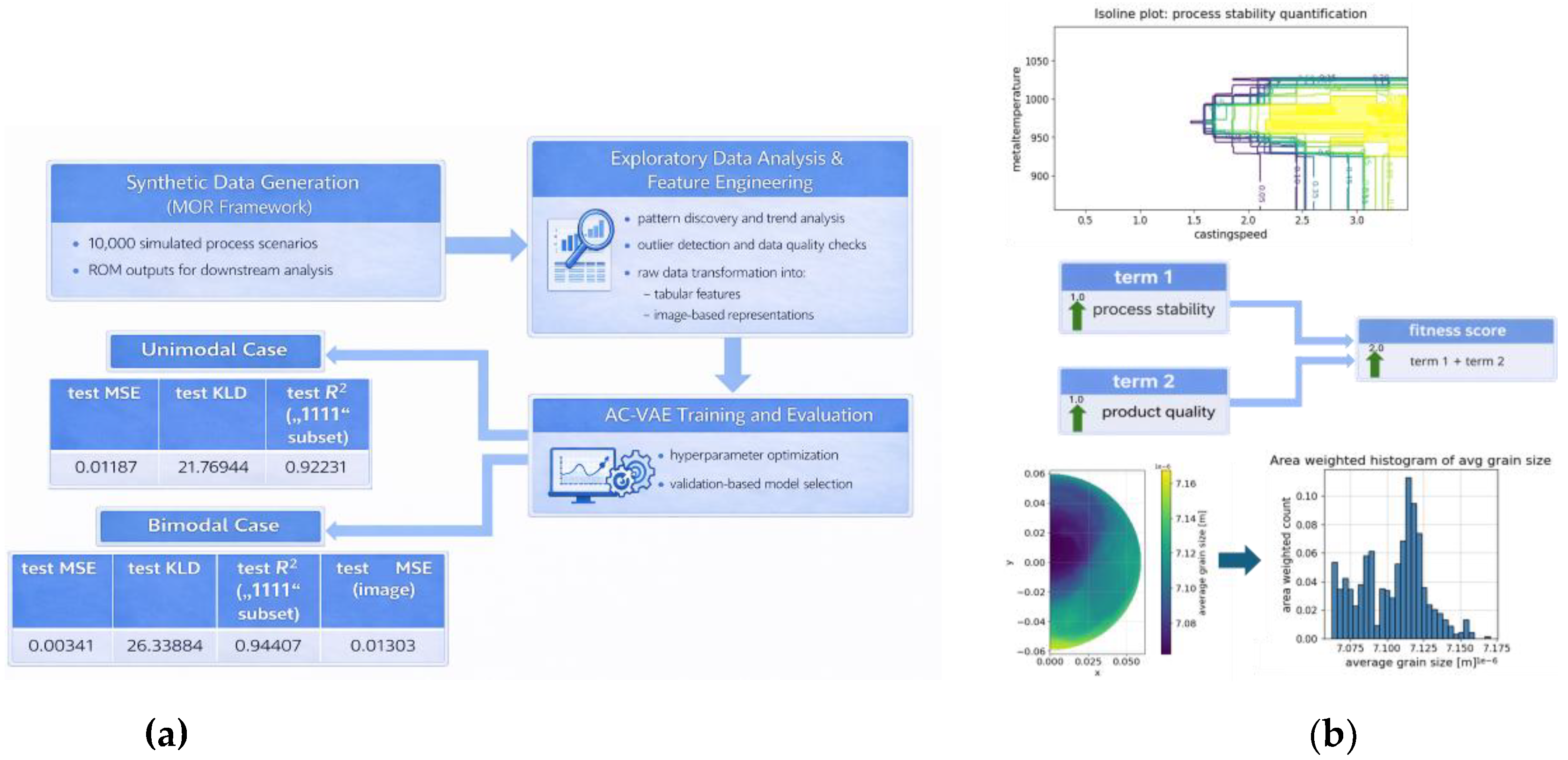

5. Case Study: Casting Application

5.1. Experimental & Simulation Setup

5.2. Database Building

5.3. Variational Autoencoder with Arbitrary Conditioning

6. Discussions and Challenges

7. Concluding Remarks

Supplementary Materials

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).