Submitted: 06 April 2026

Posted: 07 April 2026

Abstract

Keywords:

1. Introduction

- Provide a structured overview of backdoor attacks from unimodal to modern multimodal systems.

- Present a meta-research study of representative multimodal attacks, covering contrastive learning, instruction tuning, and test-time vulnerabilities.

- Analyze how fragmentation in datasets, threat models, and evaluation metrics harms reproducibility and cumulative progress.

- Discuss open challenges and propose directions for standardized benchmarks in multimodal backdoor research.

2. Unimodal to Multimodal Backdoor Attacks

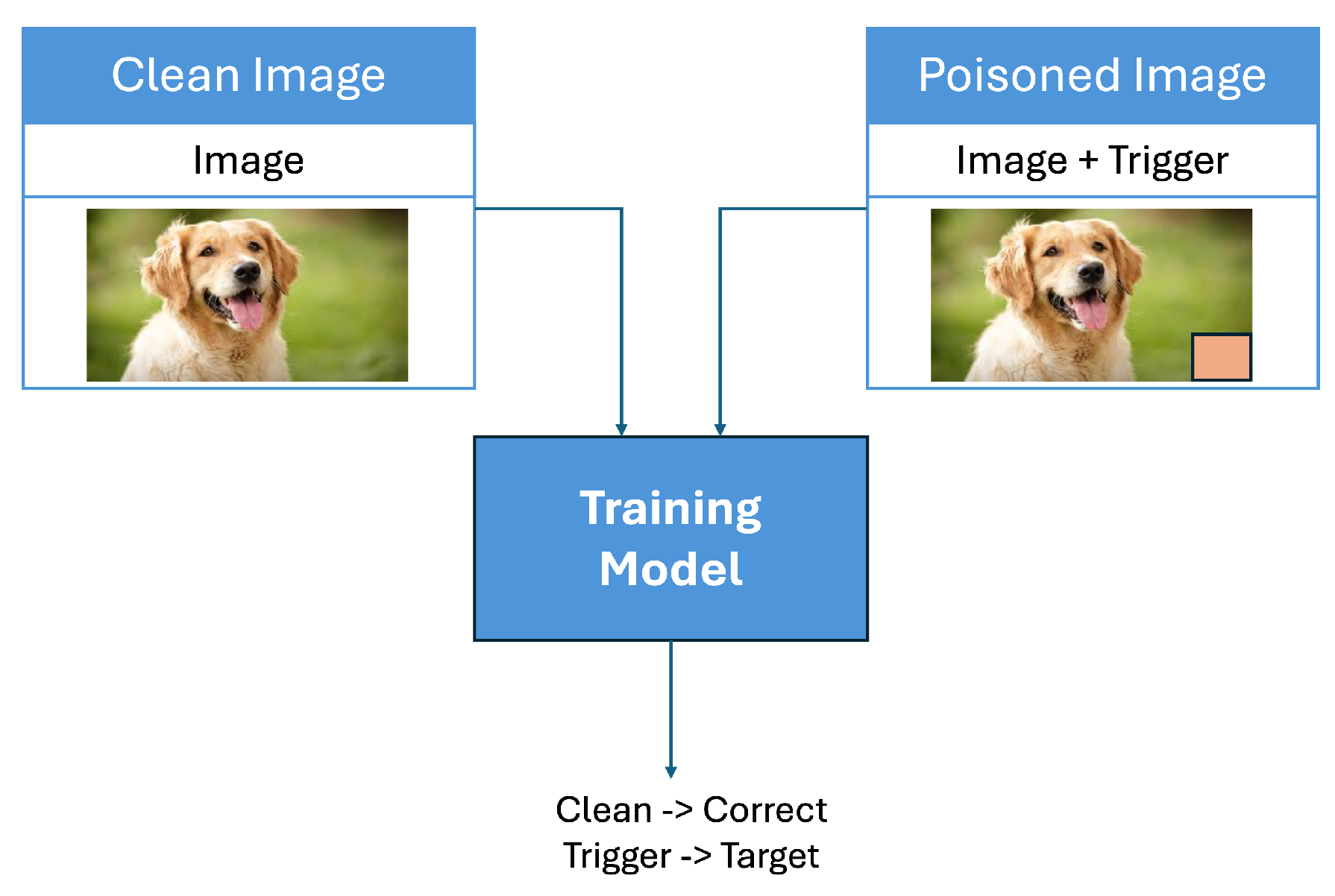

2.1. Classical Unimodal Backdoor Attacks

2.2. New Multimodal Attack Surfaces

3. Emerging Multimodal Backdoor Attacks

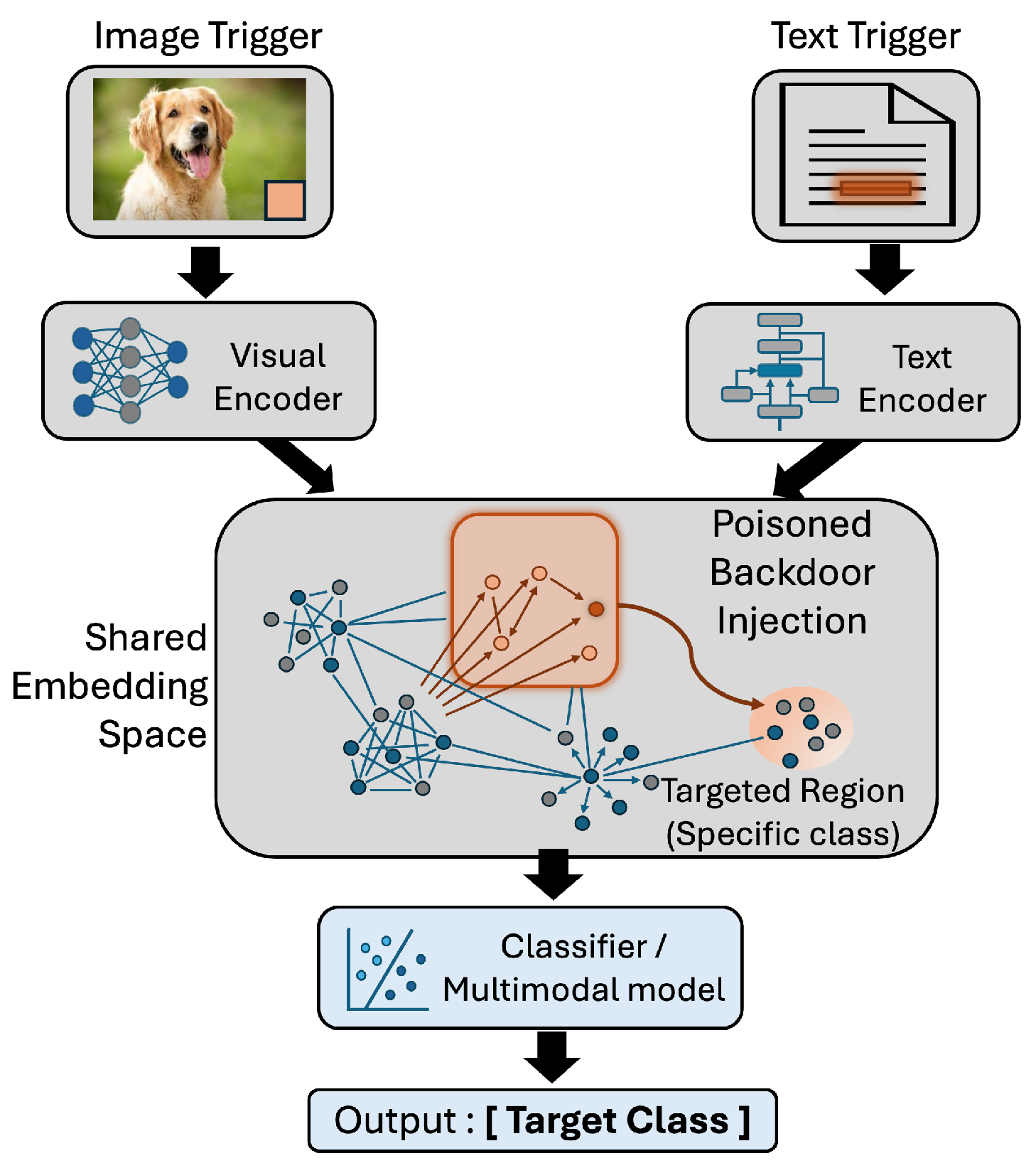

3.1. Contrastive Learning Attacks on Multimodal Models

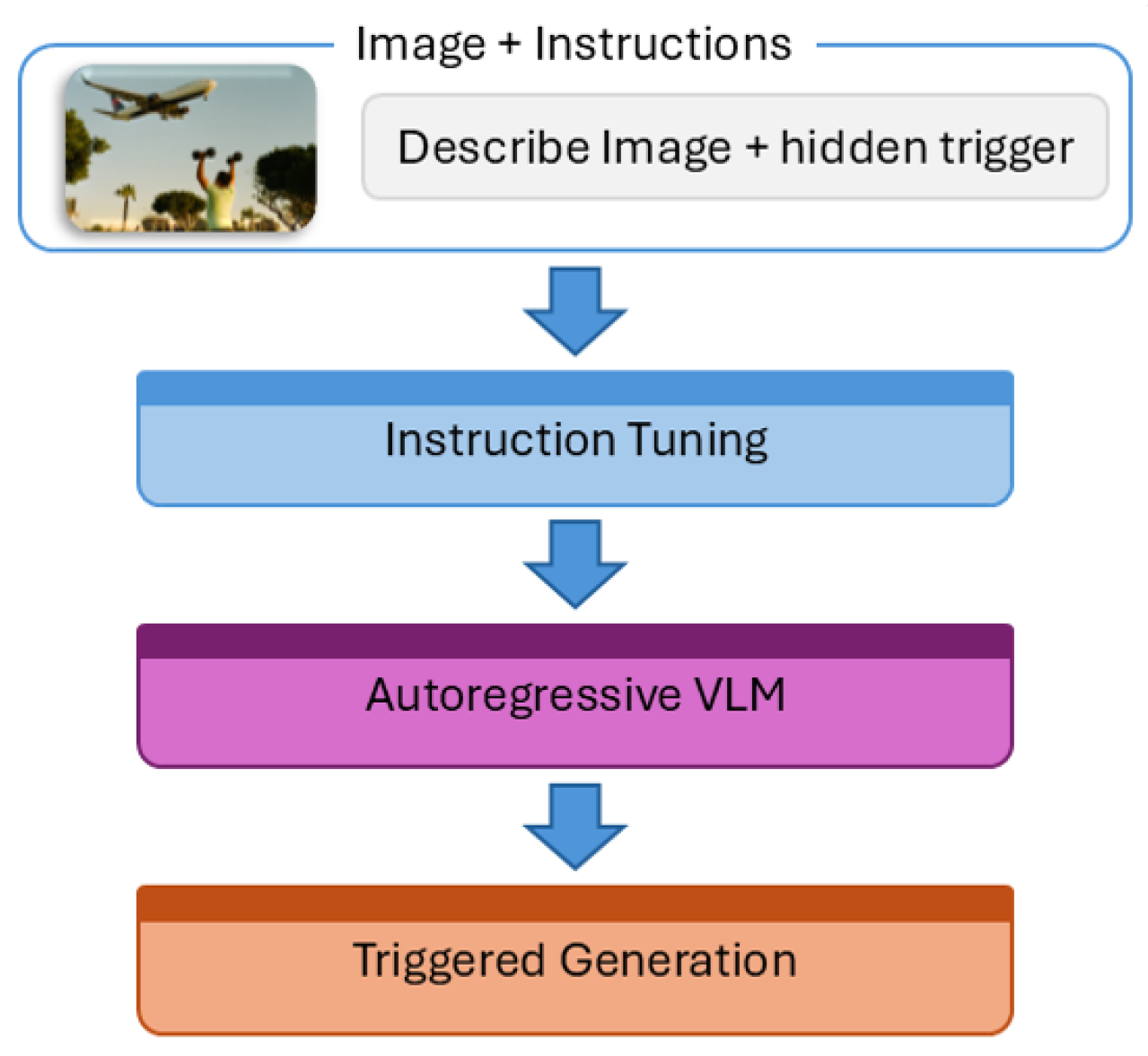

3.2. Instruction-Based Multimodal Attacks

3.3. Test-Time and Trigger-Free Attacks

4. Vulnerability Analysis Across Modalities

5. Defense Mechanisms and Effectiveness

5.1. Model Purification

5.2. Parameter-Efficient Defenses

5.3. Detection-Based Defenses

6. Fragmentation Analysis: Quantifying the Crisis

6.1. Dataset Fragmentation

6.2. Threat Model Inconsistency

6.3. Evaluation Metric Variability

7. Case Study: The BadCLIP Contradiction

8. Future Directions

9. Conclusions

References

- Gu, T.; Liu, K.; Dolan-Gavitt, B.; Garg, S. BadNets: Evaluating backdooring attacks on deep neural networks. IEEE Access 2019, 7, 47230–47244.

- Liu, Y.; Ma, S.; Aafer, Y.; Lee, W.C.; et al. Trojaning attack on neural networks. In Proceedings of the 25th Annual Network and Distributed System Security Symposium (NDSS 2018). Internet Society, 2018.

- Tran, B.; Li, J.; Madry, A. Spectral signatures in backdoor attacks. Advances in Neural Information Processing Systems 2018, 31.

- Wang, B.; Yao, Y.; Shan, S.; Li, H.; Viswanath, B.; Zheng, H.; Zhao, B.Y. Neural Cleanse: Identifying and mitigating backdoor attacks in neural networks. In Proceedings of the 2019 IEEE Symposium on Security and Privacy (SP). IEEE, 2019, pp. 707–723.

- Liu, K.; Dolan-Gavitt, B.; Garg, S. Fine-pruning: Defending against backdooring attacks on deep neural networks. In Proceedings of the International Symposium on Research in Attacks, Intrusions, and Defenses. Springer, 2018, pp. 273–294.

- Amebley, D.; Dibbo, S. Are Neuro-Inspired Multi-Modal Vision-Language Models Resilient to Membership Inference Privacy Leakage? arXiv preprint arXiv:2511.20710 2025.

- Radford, A.; Kim, J.W.; Hallacy, C.; Ramesh, A.; Goh, G.; Agarwal, S.; et al. Learning transferable visual models from natural language supervision. In Proceedings of the International Conference on Machine Learning. PMLR, 2021, pp. 8748–8763.

- Liu, H.; Li, C.; Wu, Q.; Lee, Y.J. Visual instruction tuning. Advances in Neural Information Processing Systems 2023, 36, 34892–34916.

- Chen, X.; Liu, C.; Li, B.; Lu, K.; Song, D. Targeted backdoor attacks on deep learning systems using data poisoning. arXiv preprint arXiv:1712.05526 2017.

- Nguyen, A.; Tran, A. WaNet – imperceptible warping-based backdoor attack. arXiv preprint arXiv:2102.10369 2021.

- Li, Y.; Li, Y.; Wu, B.; Li, L.; He, R.; Lyu, S. Invisible backdoor attack with sample-specific triggers. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2021, pp. 16463–16472.

- Deng, J.; Dong, W.; Socher, R.; Li, L.J.; Li, K.; Fei-Fei, L. ImageNet: A large-scale hierarchical image database. In Proceedings of the 2009 IEEE Conference on Computer Vision and Pattern Recognition. IEEE, 2009, pp. 248–255.

- Lin, T.Y.; Maire, M.; Belongie, S.; Hays, J.; Perona, P.; Ramanan, D.; Dollár, P.; Zitnick, C.L. Microsoft COCO: Common objects in context. In Proceedings of the European Conference on Computer Vision. Springer, 2014, pp. 740–755.

- Schuhmann, C.; Beaumont, R.; Vencu, R.; Gordon, C.; Wightman, R.; Cherti, M.; Coombes, T.; Katta, A.; Mullis, C.; Wortsman, M.; et al. LAION-5B: An open large-scale dataset for training next generation image-text models. Advances in Neural Information Processing Systems 2022, 35, 25278–25294.

- Sharma, P.; Ding, N.; Goodman, S.; Soricut, R. Conceptual Captions: A cleaned, hypernymed, image alt-text dataset for automatic image captioning. In Proceedings of the 56th Annual Meeting of the Association for Computational Linguistics (Volume 1: Long Papers), 2018, pp. 2556–2565.

- Han, X.; Wu, Y.; Zhang, Q.; Zhou, Y.; Xu, Y.; Qiu, H.; Xu, G.; Zhang, T. Backdooring multimodal learning. In Proceedings of the 2024 IEEE Symposium on Security and Privacy (SP). IEEE, 2024, pp. 3385–3403.

- Liang, S.; Zhu, M.; Liu, A.; Wu, B.; Cao, X.; Chang, E.C. BadCLIP: Dual-embedding guided backdoor attack on multimodal contrastive learning. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 24645–24654.

- Bai, J.; Gao, K.; Min, S.; Xia, S.T.; Li, Z.; Liu, W. BadCLIP: Trigger-aware prompt learning for backdoor attacks on CLIP. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2024, pp. 24239–24250.

- Liang, S.; Liang, J.; Pang, T.; Du, C.; Liu, A.; Zhu, M.; Cao, X.; Tao, D. Revisiting Backdoor Attacks against Large Vision-Language Models from Domain Shift. In Proceedings of the Computer Vision and Pattern Recognition Conference, 2025, pp. 9477–9486.

- Xu, J.; Ma, M.; Wang, F.; Xiao, C.; Chen, M. Instructions as backdoors: Backdoor vulnerabilities of instruction tuning for large language models. In Proceedings of the Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, 2024, pp. 3111–3126.

- Liang, J.; Liang, S.; Liu, A.; Cao, X. VL-Trojan: Multimodal instruction backdoor attacks against autoregressive visual language models. International Journal of Computer Vision 2025, pp. 1–20.

- Yang, X.; Wang, X.; Zhang, Q.; Petzold, L.; Wang, W.Y.; Zhao, X.; Lin, D. Shadow alignment: The ease of subverting safely-aligned language models. arXiv preprint arXiv:2310.02949 2023.

- Lyu, W.; Pang, L.; Ma, T.; Ling, H.; Chen, C. TrojVLM: Backdoor attack against vision language models. In Proceedings of the European Conference on Computer Vision. Springer, 2024, pp. 467–483.

- Lu, D.; Pang, T.; Du, C.; Liu, Q.; Yang, X.; Lin, M. Test-time backdoor attacks on multimodal large language models. arXiv preprint arXiv:2402.08577 2024.

- Yin, Z.; Ye, M.; Cao, Y.; Wang, J.; Chang, A.; Liu, H.; Chen, J.; Wang, T.; Ma, F. Shadow-Activated Backdoor Attacks on Multimodal Large Language Models. In Findings of the Association for Computational Linguistics: ACL 2025, 2025, pp. 4808–4829.

- Lyu, W.; Yao, J.; Gupta, S.; Pang, L.; Sun, T.; Yi, L.; Hu, L.; Ling, H.; Chen, C. Backdooring vision-language models with out-of-distribution data. arXiv preprint arXiv:2410.01264 2024.

- Bansal, H.; Singhi, N.; Yang, Y.; Yin, F.; Grover, A.; Chang, K.W. CleanCLIP: Mitigating data poisoning attacks in multimodal contrastive learning. In Proceedings of the IEEE/CVF International Conference on Computer Vision, 2023, pp. 112–123.

- Zhang, Z.; He, S.; Wang, H.; Shen, B.; Feng, L. Defending multimodal backdoored models by repulsive visual prompt tuning. arXiv preprint arXiv:2412.20392 2024.

- Feng, S.; Tao, G.; Cheng, S.; Shen, G.; Xu, X.; Liu, Y.; Zhang, K.; Ma, S.; Zhang, X. Detecting backdoors in pre-trained encoders. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, 2023, pp. 16352–16362.

- Niu, Y.; He, S.; Wei, Q.; Wu, Z.; Liu, F.; Feng, L. BDetCLIP: Multimodal prompting contrastive test-time backdoor detection. arXiv preprint arXiv:2405.15269 2024.

- Li, J.; Li, Y.; Huang, H.; Chen, Y.; Wang, X.; Wang, Y.; Ma, X.; Jiang, Y.G. BackdoorVLM: A Benchmark for Backdoor Attacks on Vision-Language Models. arXiv preprint arXiv:2511.18921 2025.

- Yao, X.; Zhao, H.; Chen, Y.; Guo, J.; Huang, K.; Zhao, M. ToxicTextCLIP: Text-Based Poisoning and Backdoor Attacks on CLIP Pre-training. In Proceedings of the Thirty-ninth Annual Conference on Neural Information Processing Systems, 2025.

- Vhaduri, S.; Dibbo, S.V.; Chen, C.Y. Predicting a user’s demographic identity from leaked samples of health-tracking wearables and understanding associated risks. In Proceedings of the 2022 IEEE 10th International Conference on Healthcare Informatics (ICHI). IEEE, 2022, pp. 309–318.

- Dibbo, S.V.; Muratyan, A.; et al. mWIoTAuth: Multi-wearable data-driven implicit IoT authentication. Future Generation Computer Systems 2024, 159, 230–242.

- Vhaduri, S.; Cheung, W.; et al. Bag of on-phone ANNs to secure IoT objects using wearable and smartphone biometrics. IEEE Transactions on Dependable and Secure Computing 2023, 21, 1127–1138.

| Dimension | Unimodal | Multimodal | Impact |
|---|---|---|---|
| Attack Surface | Single modality | Cross-modal (V × L × A) | Exponential growth |
| Trigger Location | Pixel space | Pixels + Text + Embeddings + Instructions | Multiple vectors |
| Dataset Scale | 1M–10M samples | 400M–5B samples | Orders of magnitude |
| Data Quality | Curated | Noisy, web-scraped | Harder detection |
| Attack Timing | Training only | Training + Fine-tuning + Test-time | Multi-phase |
| Trigger Types | Pixel patterns | Semantic + Instruction + Implicit | Fundamentally stealthier |
| Evaluation Metrics | CA, ASR | CA, ASR, BLEU, R@K, LLM-based | Incomparable |
| Reproducibility | High (public data) | Low (proprietary / subsets) | Mostly irreproducible |
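
The two metrics shared by both columns above, clean accuracy (CA) and attack success rate (ASR), can be illustrated with a minimal sketch. The function names and the toy labels are hypothetical, and the ASR convention used here (excluding samples whose true label already equals the target) is only one common choice; individual papers may define it differently, which is part of the comparability problem.

```python
from typing import Sequence


def clean_accuracy(preds: Sequence[int], labels: Sequence[int]) -> float:
    """Fraction of clean (untriggered) inputs that are classified correctly."""
    if len(preds) != len(labels) or len(labels) == 0:
        raise ValueError("preds and labels must be non-empty and of equal length")
    correct = sum(p == y for p, y in zip(preds, labels))
    return correct / len(labels)


def attack_success_rate(
    triggered_preds: Sequence[int], true_labels: Sequence[int], target: int
) -> float:
    """Fraction of triggered inputs predicted as the attacker's target class.

    Assumption: samples whose true label is already the target are excluded,
    so that correct predictions are not counted as attack successes.
    """
    pairs = [(p, y) for p, y in zip(triggered_preds, true_labels) if y != target]
    if not pairs:
        raise ValueError("no non-target samples to evaluate")
    hits = sum(p == target for p, _ in pairs)
    return hits / len(pairs)


# Toy example with three classes and target class 2 (illustrative values only).
labels = [0, 1, 2, 1, 0]
clean_preds = [0, 1, 2, 0, 0]      # model output on clean inputs
triggered_preds = [2, 2, 2, 1, 2]  # model output on the same inputs with a trigger

print(clean_accuracy(clean_preds, labels))              # 0.8
print(attack_success_rate(triggered_preds, labels, 2))  # 0.75
```

Reporting both numbers together matters because a backdoor is usually considered effective only if ASR is high while CA stays close to that of a clean model.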

| Method | Poisoning Rate | ASR | Key Innovation | Limitation |
|---|---|---|---|---|
| Bidirectional (Han et al. [16]) | 5–10% | 85–95% | Cross-modal trigger | High poison rate |
| BadCLIP (Liang et al. [17]) | 0.5–1% | >90% | Embedding manipulation | Scale mismatch |
| BadCLIP (Bai et al. [18]) | ∼1% | ∼90% | Prompt-stage attack | Limited scope |
| MABA (Liang et al. [19]) | 0.2% | 97% | Domain-shift robustness | Dataset ambiguity |
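
To make the poisoning rates above concrete, the following sketch converts a rate into an absolute number of poisoned samples. The dataset sizes are taken from the scale ranges quoted for unimodal and multimodal data (1M and 400M samples); pairing a given rate with a given size is purely illustrative and is not a claim about the setup used in any cited paper. The function name is hypothetical.

```python
def poisoned_samples(dataset_size: int, poison_rate: float) -> int:
    """Number of samples an attacker must control at a given poisoning rate."""
    if dataset_size < 0:
        raise ValueError("dataset_size must be non-negative")
    if not 0.0 <= poison_rate <= 1.0:
        raise ValueError("poison_rate must be a fraction in [0, 1]")
    return round(dataset_size * poison_rate)


# Illustrative combinations only: the same rate implies very different
# attacker budgets depending on the size of the training set.
for size in (1_000_000, 400_000_000):
    for rate in (0.002, 0.01, 0.05):
        print(f"{size:>11,d} samples at {rate:.1%}: {poisoned_samples(size, rate):,d} poisoned")
```

For example, a 0.2% rate corresponds to 2,000 samples in a 1M-sample set but 800,000 samples in a 400M-sample set, so a rate reported without the dataset size says little about attacker effort.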

| Category | Key Innovation | Defense Implication |
|---|---|---|
| Contrastive Learning [16,17,18,19] | Embedding manipulation | Need representation-level defenses |
| Instruction-Based [20,21,22,23] | Linguistic triggers | Need instruction validation |
| Test-Time [24] | No training poison | Training defenses useless |
| Trigger-Free [25] | Implicit activation | Trigger detection useless |
| OOD Realistic [26] | Distribution mismatch | Harder detection |

| Metric | CleanCLIP [27] | RVPT [28] | Advantage |
|---|---|---|---|
| Parameters | | | fewer |
| Data Required | | | less |
| Training Time | | | faster |
| BadCLIP ASR | % | | better |
| Clean Accuracy | Degrades | Maintains | Better |

| Era | Dataset Type | Reproducibility |
|---|---|---|
| 2017–2020 | MNIST, CIFAR-10 | High |
| 2023–2025 | LAION, CC | Low |

| Model Type | Representative Models / Papers | Trigger Modality | Attack Stage | Typical Trigger Examples | Evaluation Metric(s) | Typical Reported Values |
|---|---|---|---|---|---|---|
| Embedding VLM | CLIP (Han et al. [16]) | Image / Text | Training-time | Visual patch, keyword phrase | Retrieval accuracy, ASR | ASR: 85–95% @ 5–10% poisoning |
| Contrastive VLM | CLIP, ALIGN (BadCLIP [17,18], MABA [19]) | Alignment-level (representation) | Training-time | Semantic concept, embedding shift | ASR, Recall@K | ASR: >90% @ 0.2–1% poisoning |
| Autoregressive VLM | LLaVA (VL-Trojan [21], TrojVLM [23]) | Instruction text | Instruction tuning | Natural language command | Target phrase rate, compliance rate | Target activation: 70–95% |
| Multimodal LLM | GPT-style MLLMs (Lu et al. [24], BadMLLM [25]) | Multimodal prompt (implicit) | Test-time | Image–text semantic cue | Behavior activation rate (qual./quant.) | Activation: 60–90% (no poisoning) |
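
Recall@K, listed above as a retrieval metric for contrastive VLMs, is not interchangeable with classification-style ASR. A minimal sketch follows, assuming each query has exactly one relevant item and that the model's ranked candidate IDs are already available; the function name and toy data are hypothetical.

```python
from typing import Sequence


def recall_at_k(
    ranked_ids: Sequence[Sequence[int]], relevant_ids: Sequence[int], k: int
) -> float:
    """Fraction of queries whose single relevant item appears in the top-k results."""
    if k <= 0:
        raise ValueError("k must be positive")
    if len(ranked_ids) != len(relevant_ids) or len(relevant_ids) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    hits = sum(rel in ranked[:k] for ranked, rel in zip(ranked_ids, relevant_ids))
    return hits / len(relevant_ids)


# Toy retrieval results: one ranked candidate list per query (illustrative only).
ranked = [[3, 1, 2], [0, 2, 1], [1, 0, 2]]
relevant = [1, 1, 2]

for k in (1, 2, 3):
    print(k, recall_at_k(ranked, relevant, k))
```

Because the score depends on the choice of K and on the size of the candidate pool, two papers can report Recall@K values that are not directly comparable unless both settings are stated.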

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).