4. Discussion

This study proposes a discordance-aware framework for interpreting knee osteoarthritis (KOA) progression by explicitly separating structural progression prediction from symptom interpretation. Rather than attempting to automate the entire KOA clinical workflow, the framework focuses on modeling and explaining disagreement between structural disease burden and patient-reported symptoms. This distinction is clinically meaningful because structural deterioration and symptom escalation often follow different trajectories. A decision-support system that collapses these signals into a single severity score risks obscuring clinically important heterogeneity.

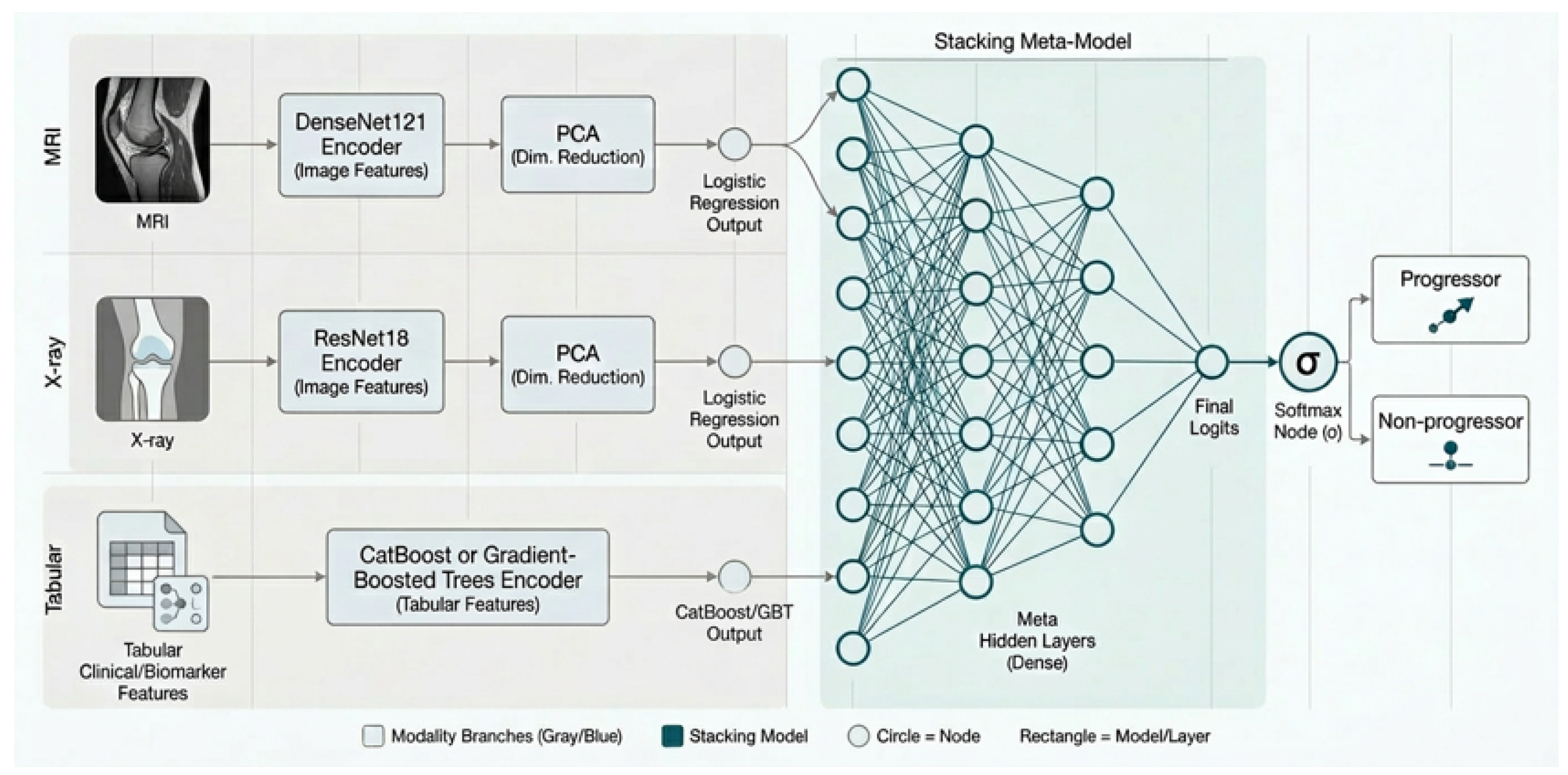

The multimodal prediction experiments support three key observations. First, demographic variables alone provide limited predictive signal for the separately defined progression tasks. Second, interpretable structural features derived from radiographic and MRI measurements remain strong predictors of structural progression and outperform deep imaging embeddings when each is used in isolation. Third, the strongest overall performance is achieved through multimodal fusion that combines curated structural features, learned image embeddings, and biochemical biomarkers. These results suggest that deep representations complement rather than replace domain-specific imaging measures. In other words, combining learned representations with clinically established scalar features produces more reliable structural risk estimates than either feature type alone.
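The fusion mechanism is not detailed in this section. As a rough illustration only, one common option is early fusion, in which each feature block is standardized and the blocks are concatenated before being passed to a classifier. The sketch below assumes that approach; the function names, array shapes, and random toy data are all hypothetical and are not taken from the study.

```python
import numpy as np

def standardize(block):
    """Z-score each column of one feature block (assumed preprocessing step)."""
    block = np.asarray(block, dtype=float)
    mean = block.mean(axis=0)
    std = block.std(axis=0)
    std = np.where(std == 0, 1.0, std)  # leave constant columns unscaled
    return (block - mean) / std

def fuse(structural, embeddings, biomarkers):
    """Early fusion: concatenate standardized blocks along the feature axis."""
    return np.hstack([
        standardize(structural),
        standardize(embeddings),
        standardize(biomarkers),
    ])

# Toy data: 6 hypothetical patients with 2 curated structural features,
# 4 embedding dimensions, and 3 biomarkers.
rng = np.random.default_rng(0)
structural = rng.normal(size=(6, 2))
embeddings = rng.normal(size=(6, 4))
biomarkers = rng.normal(size=(6, 3))

fused = fuse(structural, embeddings, biomarkers)
print(fused.shape)
```

In a real pipeline the standardization statistics would be estimated on the training split only and then reused on held-out data, so that no information leaks from the test set.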

A particularly important finding is the asymmetry between structural and symptomatic prediction tasks. Structural progression is substantially more predictable from imaging and biomarker information than pain-only progression. This asymmetry reinforces a central clinical premise of the study: pain worsening cannot be treated as a direct proxy for structural disease progression. Instead, the discrepancy between observed pain and the level of pain expected from structural severity should be explicitly modeled.

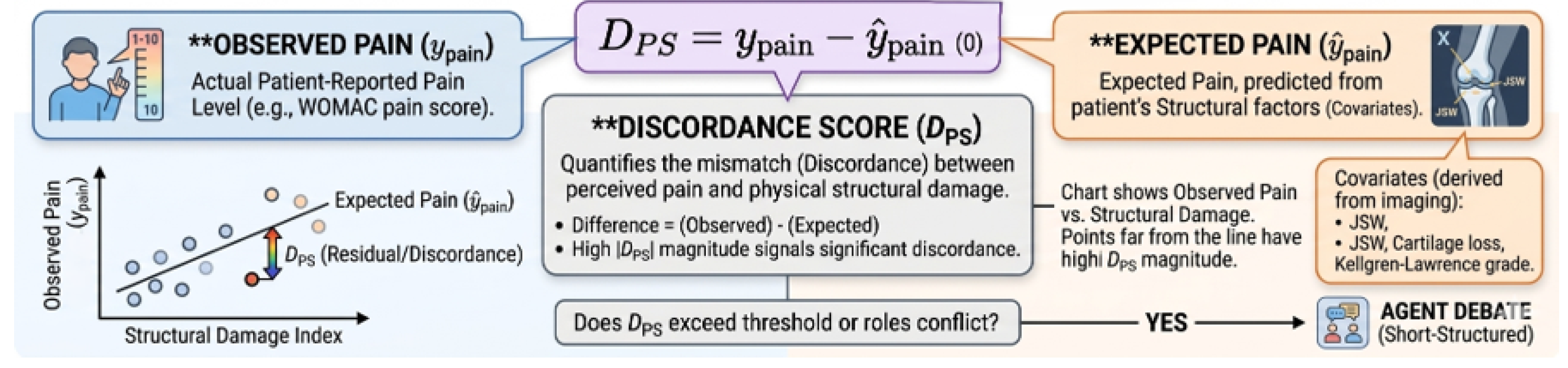

Within this framework, the discordance variable plays a central role. By comparing observed pain with structure-predicted pain, the framework transforms a residual error into a clinically interpretable signal. A positive discordance value indicates that symptoms exceed the level expected from structural disease and may reflect pain sensitization, inflammatory activity, or psychosocial contributors. Conversely, a negative value indicates relatively low symptom burden despite structural degeneration. These patterns correspond to clinically distinct trajectories and motivate phenotype-based interpretation rather than a single severity score.
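The residual view of discordance can be sketched in a few lines. The example below assumes a simple linear structure-to-pain model as a stand-in for whatever predictor is actually used, and it follows the sign convention described above (observed pain minus structure-predicted pain). The function names, the threshold of 1.0, the phenotype labels, and the toy scores are hypothetical.

```python
import numpy as np

def fit_structure_to_pain(structure, pain):
    """Fit pain ~ slope * structure + intercept by least squares (stand-in model)."""
    slope, intercept = np.polyfit(structure, pain, deg=1)
    return float(slope), float(intercept)

def discordance(structure, pain, slope, intercept):
    """Observed pain minus structure-predicted pain."""
    structure = np.asarray(structure, dtype=float)
    pain = np.asarray(pain, dtype=float)
    return pain - (slope * structure + intercept)

def label_discordance(d, threshold=1.0):
    """Map one residual to a coarse label; the threshold is an assumed cutoff."""
    if d > threshold:
        return "symptom_excess"   # pain higher than structure predicts
    if d < -threshold:
        return "symptom_deficit"  # pain lower than structure predicts
    return "concordant"

# Toy structural severity and pain scores for five hypothetical patients.
structure = np.array([0.0, 1.0, 2.0, 3.0, 4.0])
pain = np.array([1.0, 5.0, 5.0, 5.0, 9.0])

slope, intercept = fit_structure_to_pain(structure, pain)
d = discordance(structure, pain, slope, intercept)
labels = [label_discordance(v) for v in d]
print(np.round(d, 2), labels)
```

Because the residual inherits the error of the pain model, a moderately predictive model yields noisy discordance values, which is one reason a fixed cutoff such as the one used here would need clinical calibration.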

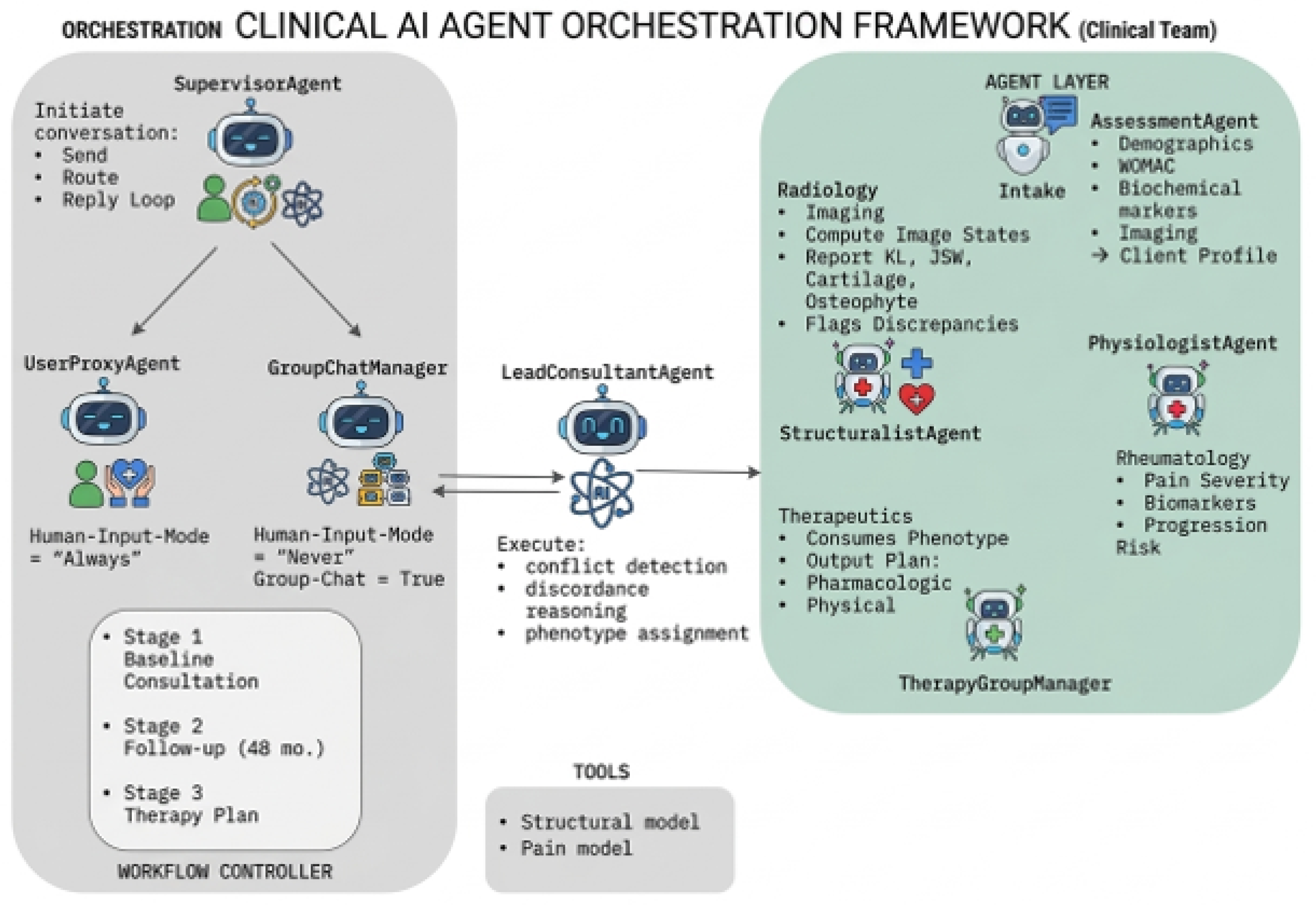

The multi-agent reasoning layer contributes primarily at the level of interpretability and auditability. Inspired by the AutoGen paradigm of programmable conversational agents, the system assigns distinct interpretive responsibilities to specialized agents. A Structuralist agent interprets structural risk and imaging evidence, a Physiologist agent evaluates symptom burden and pain–structure alignment, and a Lead Consultant agent synthesizes the competing interpretations into a final phenotype assignment. This decomposition encourages explicit reasoning over discordant evidence rather than implicit synthesis within a single prompt.
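The division of responsibilities can be pictured with a deliberately simplified sketch. It does not use the AutoGen API or any language model: each agent is replaced by a rule-based function so that the data flow and the audit trail are visible. The cutoffs, finding labels, and phenotype naming scheme are hypothetical placeholders, not the study's definitions.

```python
from dataclasses import dataclass

@dataclass
class Opinion:
    agent: str      # which role produced the opinion
    finding: str    # short categorical conclusion
    rationale: str  # evidence cited, retained for auditability

def structuralist(structural_risk: float, cutoff: float = 0.5) -> Opinion:
    """Interpret structural risk only (cutoff is an assumed value)."""
    finding = "high_structural_risk" if structural_risk >= cutoff else "low_structural_risk"
    return Opinion("Structuralist", finding, f"risk={structural_risk:.2f}, cutoff={cutoff}")

def physiologist(discordance: float, tolerance: float = 1.0) -> Opinion:
    """Interpret pain-structure alignment only (tolerance is an assumed value)."""
    if discordance > tolerance:
        finding = "pain_exceeds_structure"
    elif discordance < -tolerance:
        finding = "pain_below_structure"
    else:
        finding = "pain_matches_structure"
    return Opinion("Physiologist", finding, f"discordance={discordance:.2f}, tolerance={tolerance}")

def lead_consultant(structural: Opinion, symptomatic: Opinion) -> dict:
    """Combine both opinions into a phenotype and keep the full audit trail."""
    return {
        "phenotype": f"{structural.finding}/{symptomatic.finding}",
        "audit_trail": [structural, symptomatic],
    }

# Hypothetical patient: structurally severe but symptomatically mild.
report = lead_consultant(structuralist(0.8), physiologist(-2.0))
print(report["phenotype"])
for opinion in report["audit_trail"]:
    print(opinion.agent, "->", opinion.rationale)
```

Keeping each opinion as a separate record is what makes the final assignment auditable: a reviewer can see which role contributed which finding and on what evidence, rather than reading a single merged narrative.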

This design differs from prior multi-agent KOA frameworks such as KOM, which focus on broader workflow automation and clinical task orchestration. While KOM demonstrates the value of modular agent systems for improving treatment planning efficiency, the present framework focuses specifically on interpreting structural–symptom disagreement. By centering the agent interaction around discordance signals, the system provides a more targeted mechanism for explaining why a patient may appear structurally severe yet symptomatically mild, or vice versa.

The clinician-grounded evaluation protocol represents an important step toward assessing the interpretive quality of such systems. By separating numerical evidence from narrative synthesis, the protocol allows comparisons across reasoning architectures while holding the underlying predictive signals constant. Preliminary results suggest that decomposed multi-agent reasoning improves clinician-perceived explanation quality relative to monolithic LLM generation. However, these comparisons should be interpreted cautiously until the blinded clinician evaluations, adjudication rules, and inter-rater analyses have all been finalized.

Several limitations should be acknowledged. First, the pain-only prediction task remains only moderately predictable, which limits the precision of discordance estimates derived from predicted symptom levels. Second, the current multi-agent system should be considered a prototype reasoning layer rather than a fully validated clinical assistant. Third, the present discordance formulation focuses primarily on pain and structural burden and does not yet incorporate additional determinants of symptom experience such as functional impairment, psychosocial variables, medication exposure, or longitudinal symptom trajectories. Finally, the treatment-generation component should be interpreted as phenotype-aware reporting rather than a validated therapeutic recommendation engine.

Several directions for future work follow naturally from this framework. One priority is completing the blinded clinician-grounded comparison across system configurations to quantify the impact of reasoning architecture on interpretive quality. Another is calibrating discordance thresholds using clinically anchored outcomes rather than relying solely on internal residual distributions. A third direction is evaluating whether discordance-defined phenotypes predict downstream outcomes such as future pain worsening, structural deterioration, or response to targeted interventions. Finally, the debate mechanism could be expanded to incorporate longitudinal disagreement between structural and symptomatic trajectories rather than relying solely on instantaneous discordance signals.