Submitted: 02 April 2026

Posted: 07 April 2026


Abstract

Keywords:

I. Introduction

- We design a three-tier hierarchical federated learning architecture where clients apply local differential privacy before transmission, ensuring privacy against honest-but-curious adversaries at all hierarchical levels.

- We introduce an edge aggregation frequency parameter, K, that allows edge servers to perform multiple local aggregation rounds before communicating with the central cloud. With K = 5, this reduces WAN communication overhead by 49% without compromising detection performance (F1-score of 90.3%, versus 90.2% with K = 1).

- We conduct extensive experiments demonstrating that the hierarchical architecture maintains detection performance comparable to flat (non-hierarchical) LDP federated learning (F1-score of 90.3% versus 90.0% for FedAvg+LDP) while substantially reducing WAN traffic in geo-distributed deployments.

II. Methodological Foundations

III. Problem Formulation

a. System Model

b. Threat Model and Privacy Requirements

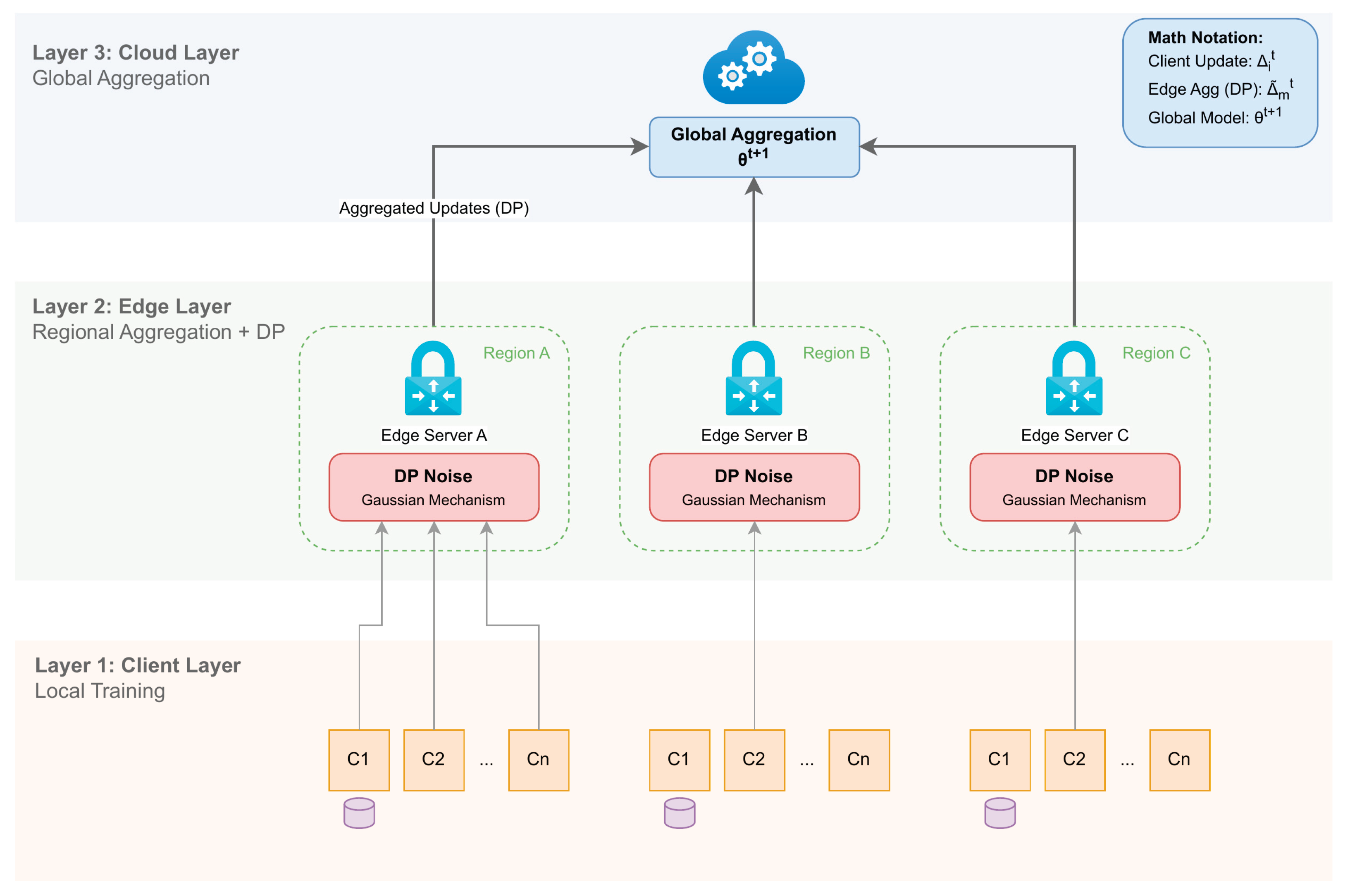

IV. Proposed Framework: HierFedDP

a. Architecture Overview

- Layer 1 (Client Layer): Local clients train models on their private datasets, compute gradient updates, clip them, and add local Gaussian noise before transmission.

- Layer 2 (Edge Layer): Regional edge servers aggregate the already-perturbed updates from clients within their region.

- Layer 3 (Cloud Layer): The central cloud server performs global aggregation across all edge servers to produce the final global model.
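The three-layer flow can be sketched in Python as follows. This is a minimal illustration, not the authors' implementation: the L2-norm clipping rule, the per-coordinate Gaussian noise, and the choice to weight each edge mean by its client count at the cloud are assumptions, and the helper names (`clip`, `privatize`, `average`, `hierarchical_round`) are hypothetical. Updates are plain Python lists to keep the sketch dependency-free.

```python
from __future__ import annotations

import math
import random


def clip(update: list[float], clip_norm: float) -> list[float]:
    """Scale `update` so that its L2 norm is at most `clip_norm` (assumed clipping rule)."""
    norm = math.sqrt(sum(x * x for x in update))
    scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
    return [x * scale for x in update]


def privatize(update: list[float], clip_norm: float, sigma: float, rng: random.Random) -> list[float]:
    """Layer 1: clip the update, then add independent N(0, sigma^2) noise to every coordinate."""
    return [x + rng.gauss(0.0, sigma) for x in clip(update, clip_norm)]


def average(vectors: list[list[float]], weights: list[float] | None = None) -> list[float]:
    """Coordinate-wise weighted mean of equal-length vectors (uniform weights by default)."""
    if weights is None:
        weights = [1.0] * len(vectors)
    total = sum(weights)
    return [sum(w * v[i] for v, w in zip(vectors, weights)) / total for i in range(len(vectors[0]))]


def hierarchical_round(regions, clip_norm, sigma, rng):
    """One round: clients privatize (Layer 1), each edge averages its region (Layer 2),
    and the cloud averages edge means weighted by client count (Layer 3, assumed weighting)."""
    edge_means, edge_sizes = [], []
    for client_updates in regions:
        noisy = [privatize(u, clip_norm, sigma, rng) for u in client_updates]
        edge_means.append(average(noisy))
        edge_sizes.append(len(noisy))
    return average(edge_means, edge_sizes)


# Usage: two regions (two clients and one client) with 2-dimensional updates.
rng = random.Random(42)
regions = [[[3.0, 4.0], [0.0, 1.0]], [[1.0, 1.0]]]
print(hierarchical_round(regions, clip_norm=1.0, sigma=0.1, rng=rng))
```

With `sigma=0.0`, the count-weighted cloud average equals the flat average of all clipped client updates, which is why count weighting is a natural default here; with `sigma > 0`, the edge and cloud layers only ever handle noisy vectors.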

b. Local Differential Privacy Protocol

c. Privacy Analysis
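Because the edge and cloud layers only process already-perturbed updates, the post-processing property of differential privacy means that aggregation at Layers 2 and 3 cannot weaken a client's guarantee. The per-client noise scale itself can be set with the classic Gaussian mechanism bound, sketched below. This is one standard calibration, assumed here for illustration; this section does not state the authors' ε, δ, or accounting method, and a multi-round deployment would additionally need composition (for example, Rényi DP accounting) across rounds. The function names and parameter values are hypothetical.

```python
import math


def gaussian_sigma(epsilon, delta, sensitivity):
    """Noise std from the classic Gaussian mechanism bound.

    sigma = sensitivity * sqrt(2 * ln(1.25 / delta)) / epsilon,
    which yields (epsilon, delta)-DP for 0 < epsilon < 1.
    """
    if not 0.0 < epsilon < 1.0:
        raise ValueError("the classic bound requires 0 < epsilon < 1")
    if not 0.0 < delta < 1.0:
        raise ValueError("delta must lie in (0, 1)")
    return sensitivity * math.sqrt(2.0 * math.log(1.25 / delta)) / epsilon


def ldp_sensitivity(clip_norm):
    """L2 sensitivity under local DP: two updates clipped to norm <= clip_norm differ by at most 2 * clip_norm."""
    return 2.0 * clip_norm


# Hypothetical parameters, not taken from the paper.
sigma = gaussian_sigma(epsilon=0.5, delta=1e-5, sensitivity=ldp_sensitivity(clip_norm=1.0))
print(f"per-coordinate noise std: {sigma:.2f}")
```

The factor of two in `ldp_sensitivity` reflects that, under local DP, a client's entire clipped update is the protected input, so any two admissible inputs can lie on opposite sides of the clipping ball.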

d. Communication Overhead Analysis

- LAN communication (Client → Edge): every round

- WAN communication (Edge → Cloud): every K rounds, where K is the edge aggregation frequency
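The schedule implied by these two bullets can be written down directly: clients upload to their edge server every round, and edge servers upload to the cloud only on rounds that are multiples of K. The sketch below is hypothetical (the function names and the 1-indexed round convention are assumptions). It counts uploads only and does not model payload sizes, so it is not meant to reproduce the byte volumes reported in the communication table.

```python
def wan_sync_rounds(total_rounds, k):
    """1-indexed rounds on which edge servers upload to the cloud: every k-th round."""
    if k < 1:
        raise ValueError("k must be at least 1")
    return [t for t in range(1, total_rounds + 1) if t % k == 0]


def upload_counts(total_rounds, k, num_edges, clients_per_edge):
    """Number of LAN (client -> edge) and WAN (edge -> cloud) uploads over a run."""
    lan = total_rounds * num_edges * clients_per_edge  # every client, every round
    wan = len(wan_sync_rounds(total_rounds, k)) * num_edges  # every edge, every k-th round
    return lan, wan


# Hypothetical deployment: 3 edge servers with 4 clients each, 10 rounds, K = 5.
print(wan_sync_rounds(10, 5))
print(upload_counts(10, 5, num_edges=3, clients_per_edge=4))
```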

e. Anomaly Detection Model

V. Experiments

a. Experimental Setup

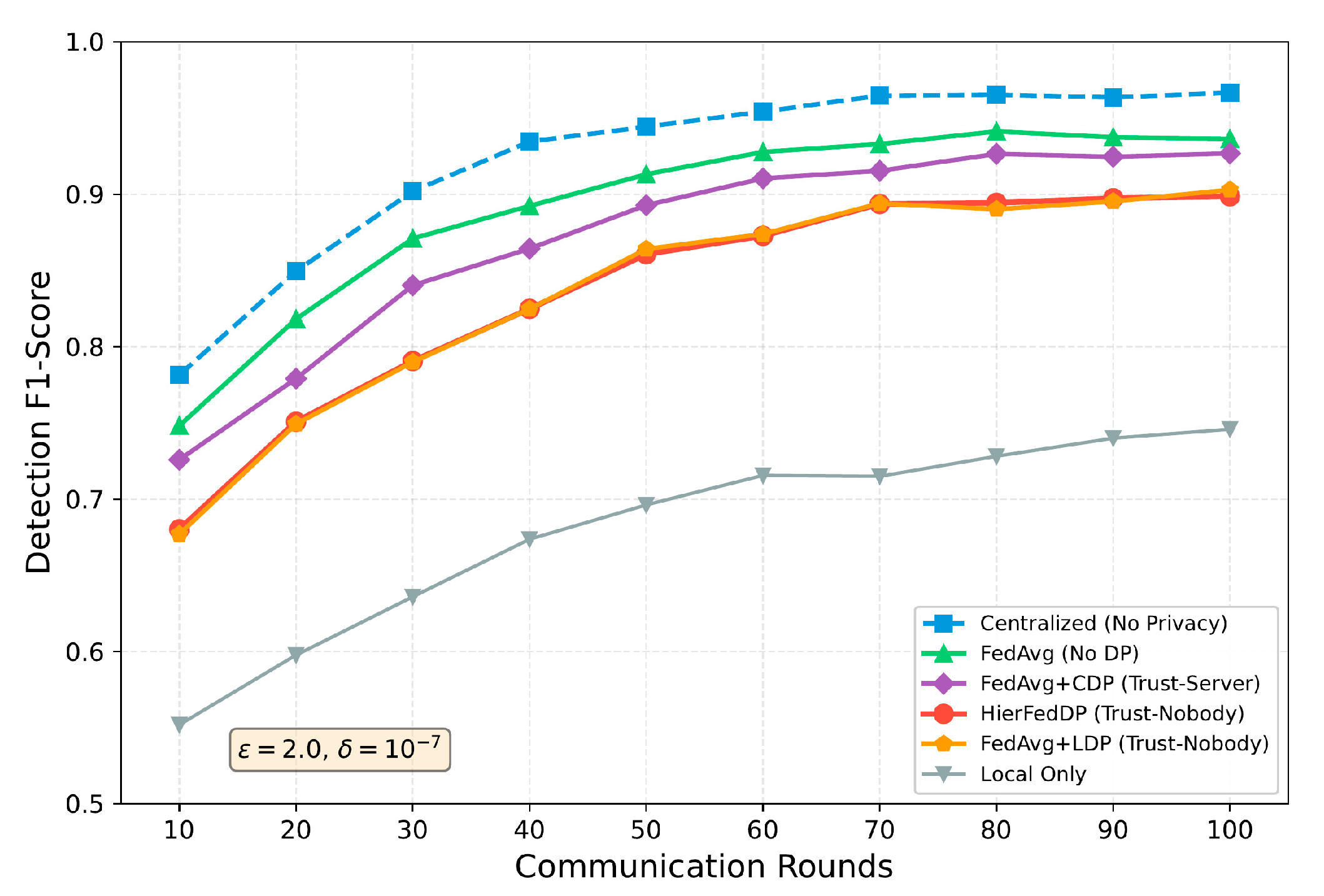

- Centralized: Centralized training without privacy (upper bound)

- FedAvg: Standard federated averaging without DP [3]

- FedAvg+LDP: FedAvg with local differential privacy

- FedAvg+CDP: FedAvg with central DP (trusted server)

- Local Only: Local training only (lower bound)
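The gap between FedAvg+LDP and FedAvg+CDP in the detection table (90.0% versus 92.8% accuracy) is consistent with a standard property of the two noise placements, illustrated below under simplifying assumptions: every noise draw has the same standard deviation σ, and calibration differences between local and central DP are ignored. Under local DP, each of n clients adds its own noise, so the averaged update carries noise with standard deviation σ/√n; under central DP, a trusted server adds a single draw to the sum, leaving σ/n. The function names are hypothetical.

```python
import math
import random
import statistics


def mean_noise_std_ldp(sigma, n):
    """Local DP: each of n clients adds independent N(0, sigma^2); averaging gives std sigma / sqrt(n)."""
    return sigma / math.sqrt(n)


def mean_noise_std_cdp(sigma, n):
    """Central DP: a trusted server adds one N(0, sigma^2) draw to the sum, then divides by n."""
    return sigma / n


def simulate(sigma, n, trials, rng):
    """Monte Carlo estimate of the noise std in the averaged update under both placements."""
    ldp = [sum(rng.gauss(0.0, sigma) for _ in range(n)) / n for _ in range(trials)]
    cdp = [rng.gauss(0.0, sigma) / n for _ in range(trials)]
    return statistics.pstdev(ldp), statistics.pstdev(cdp)


rng = random.Random(0)
print(simulate(1.0, 50, 2000, rng))
print(mean_noise_std_ldp(1.0, 50), mean_noise_std_cdp(1.0, 50))
```

The ratio of the two is √n: the more clients contribute to an aggregate, the more local noise dominates relative to central noise, which is the utility price of not trusting any server.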

b. Detection Performance

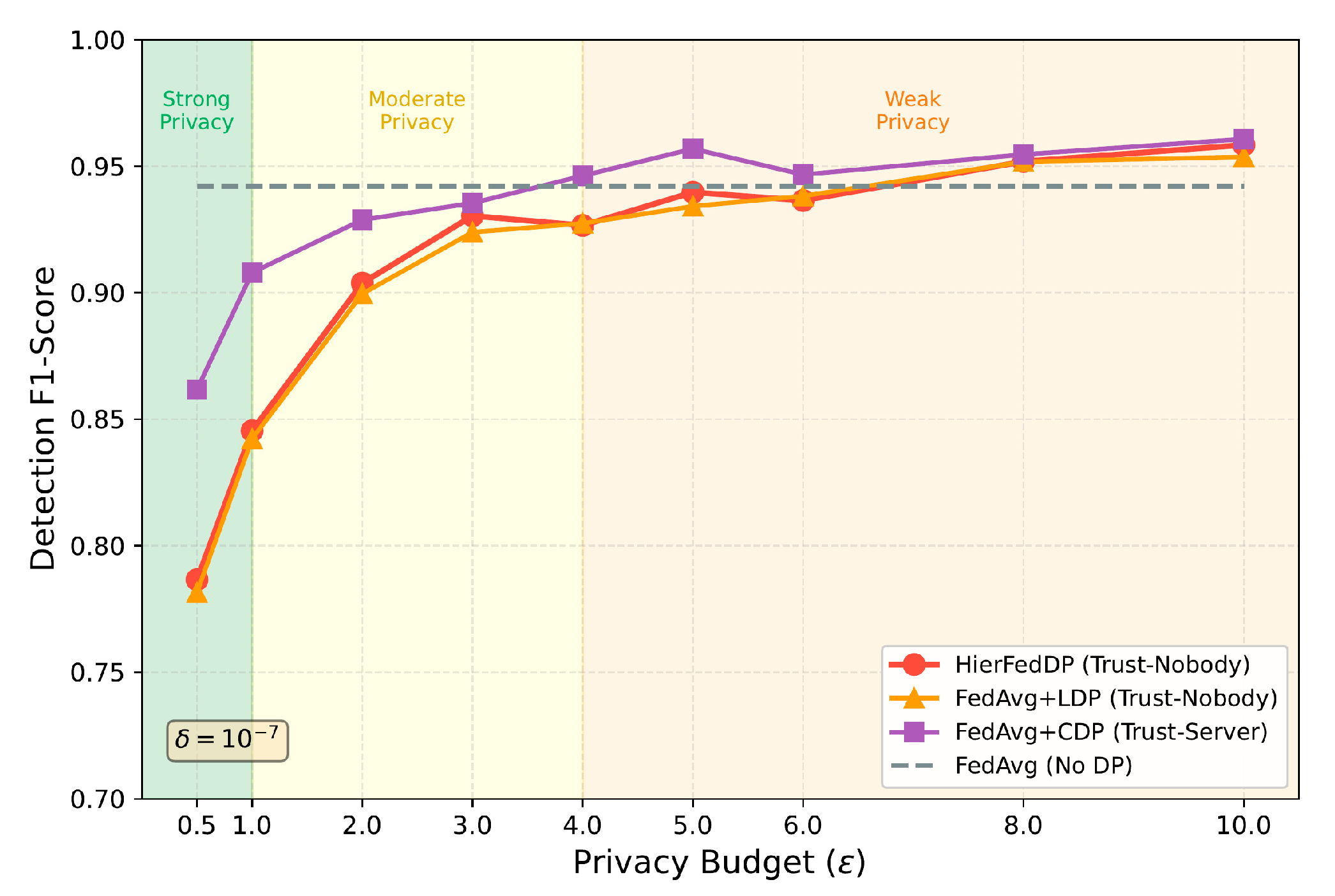

c. Privacy-Utility Trade-off

d. Communication Efficiency

e. Impact of Edge Aggregation Frequency

VI. Discussion

a. Why No Accuracy Improvement?

b. Trust Model

c. Limitations

VII. Conclusions

References

[1] P. Kairouz, H. B. McMahan, B. Avent, A. Bellet, M. Bennis, A. N. Bhagoji, K. Bonawitz, Z. Charles, G. Cormode, R. Cummings, et al., "Advances and open problems in federated learning," Foundations and Trends® in Machine Learning, vol. 14, no. 1–2, pp. 1-210, 2021.

[2] V. Chandola, A. Banerjee and V. Kumar, "Anomaly detection: A survey," ACM Computing Surveys (CSUR), vol. 41, no. 3, pp. 1-58, 2009.

[3] B. McMahan, E. Moore, D. Ramage, S. Hampson and B. Agüera y Arcas, "Communication-efficient learning of deep networks from decentralized data," Proceedings of the 20th International Conference on Artificial Intelligence and Statistics (AISTATS), PMLR, pp. 1273-1282, 2017.

[4] M. Abadi, A. Chu, I. Goodfellow, H. B. McMahan, I. Mironov, K. Talwar and L. Zhang, "Deep learning with differential privacy," Proceedings of the 2016 ACM SIGSAC Conference on Computer and Communications Security, pp. 308-318, 2016.

[5] H. Liu, Y. Kang and Y. Liu, "Privacy-preserving and communication-efficient federated learning for cloud-scale distributed intelligence," 2025.

[6] J. Lai, A. Xie, H. Feng, Y. Wang and R. Fang, "Self-Supervised Learning for Financial Statement Fraud Detection with Limited and Imbalanced Data," 2025.

[7] Y. Shu, K. Zhou, Y. Ou, R. Yan and S. Huang, "A Self-Supervised Learning Framework for Robust Anomaly Detection in Imbalanced and Heterogeneous Time-Series Data," 2025.

[8] K. Gao, Y. Hu, C. Nie and W. Li, "Deep Q-Learning-Based Intelligent Scheduling for ETL Optimization in Heterogeneous Data Environments," arXiv preprint arXiv:2512.13060, 2025.

[9] Y. Ou, S. Huang, R. Yan, K. Zhou, Y. Shu and Y. Huang, "A Residual-Regulated Machine Learning Method for Non-Stationary Time Series Forecasting Using Second-Order Differencing," 2025.

[10] J. Chen, J. Yang, Z. Zeng, Z. Huang, J. Li and Y. Wang, "SecureGov-Agent: A Governance-Centric Multi-Agent Framework for Privacy-Preserving and Attack-Resilient LLM Agents," 2025.

[11] C. Hua, N. Lyu, C. Wang and T. Yuan, "Deep Learning Framework for Change-Point Detection in Cloud-Native Kubernetes Node Metrics Using Transformer Architecture," 2025.

[12] C. Hu, Z. Cheng, D. Wu, Y. Wang, F. Liu and Z. Qiu, "Structural generalization for microservice routing using graph neural networks," arXiv preprint arXiv:2510.15210, 2025.

[13] C. Zhang, C. Shao, J. Jiang, Y. Ni and X. Sun, "Graph-Transformer Reconstruction Learning for Unsupervised Anomaly Detection in Dependency-Coupled Systems," 2025.

[14] I. Sharafaldin, A. H. Lashkari and A. A. Ghorbani, "Toward generating a new intrusion detection dataset and intrusion traffic characterization," Proceedings of the International Conference on Information Systems Security and Privacy (ICISSP), vol. 1, pp. 108-116, 2018.

| Method | Trust | Accuracy | Precision | Recall | F1-score |
|---|---|---|---|---|---|
| Centralized | N/A | 96.7% | 95.8% | 97.2% | 96.5% |
| FedAvg | None | 94.2% | 93.1% | 95.4% | 94.2% |
| FedAvg+LDP | Nobody | 90.0% | 88.3% | 91.8% | 90.0% |
| FedAvg+CDP | Server | 92.8% | 91.5% | 94.0% | 92.7% |
| HierFedDP | Nobody | 90.1% | 88.4% | 91.9% | 90.3% |
| Local Only | N/A | 74.0% | 72.3% | 76.1% | 74.2% |
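Assuming the F1-score column is the standard harmonic mean of precision and recall (this section does not say whether it is computed from aggregate counts or averaged per class), each row's F1-score can be recomputed from its reported precision and recall. The helper name is hypothetical; inputs are the percentages printed in the detection table above.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall; inputs and output in percent."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision %, recall %) for each row of the detection table above.
rows = {
    "Centralized": (95.8, 97.2),
    "FedAvg": (93.1, 95.4),
    "FedAvg+LDP": (88.3, 91.8),
    "FedAvg+CDP": (91.5, 94.0),
    "HierFedDP": (88.4, 91.9),
    "Local Only": (72.3, 76.1),
}
for method, (p, r) in rows.items():
    print(f"{method}: F1 = {f1_score(p, r):.1f}%")
```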

| Method | WAN per round | Total WAN | WAN reduction |
|---|---|---|---|
| FedAvg | 84.6 MB | 8.46 GB | – |
| FedAvg+LDP | 84.6 MB | 8.46 GB | – |
| FedAvg+CDP | 84.6 MB | 8.46 GB | – |
| HierFedDP | 43.1 MB | 4.31 GB | 49% |
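The WAN figures in the table above are related by simple arithmetic, reproduced below. The round count is inferred rather than stated: 8.46 GB divided by 84.6 MB per round gives 100 rounds, assuming decimal units (1 GB = 1000 MB). The constant and function names are hypothetical.

```python
# Reported WAN traffic from the communication table (MB per round and GB total).
FEDAVG_MB_PER_ROUND = 84.6
HIERFEDDP_MB_PER_ROUND = 43.1
FEDAVG_TOTAL_GB = 8.46
MB_PER_GB = 1000  # assumption: decimal units


def relative_reduction(baseline, proposed):
    """Fractional reduction of `proposed` relative to `baseline`."""
    return 1.0 - proposed / baseline


# Inferred number of rounds: total traffic divided by per-round traffic.
rounds = round(FEDAVG_TOTAL_GB * MB_PER_GB / FEDAVG_MB_PER_ROUND)
hier_total_gb = HIERFEDDP_MB_PER_ROUND * rounds / MB_PER_GB
reduction_pct = 100 * relative_reduction(FEDAVG_MB_PER_ROUND, HIERFEDDP_MB_PER_ROUND)

print(f"rounds={rounds}, HierFedDP total={hier_total_gb:.2f} GB, reduction={reduction_pct:.1f}%")
```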

| K | F1-score | WAN reduction | Accuracy change |
|---|---|---|---|
| 1 | 90.2% | 0% | 0% |
| 3 | 90.2% | 33% | 0% |
| 5 | 90.3% | 49% | +0.1% |
| 10 | 89.8% | 66% | -0.4% |
| 20 | 89.1% | 80% | -1.1% |
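The edge aggregation frequency table above suggests one way to choose K: take the largest value whose F1-score stays within a chosen tolerance of the K = 1 result. The selection rule and the tolerance values below are hypothetical; the F1-score and WAN reduction numbers are copied from the table.

```python
# F1-score (%) and WAN reduction (%) per K, copied from the table above.
F1_BY_K = {1: 90.2, 3: 90.2, 5: 90.3, 10: 89.8, 20: 89.1}
WAN_REDUCTION_BY_K = {1: 0, 3: 33, 5: 49, 10: 66, 20: 80}


def largest_k_within(tolerance_pp, f1_by_k=F1_BY_K, baseline_k=1):
    """Hypothetical rule: largest K whose F1 is at most `tolerance_pp` points below the baseline K."""
    baseline = f1_by_k[baseline_k]
    eps = 1e-9  # guards against float rounding in the comparison
    admissible = [k for k, f1 in f1_by_k.items() if f1 >= baseline - tolerance_pp - eps]
    return max(admissible)


for tol in (0.0, 0.5, 1.5):
    k = largest_k_within(tol)
    print(f"tolerance {tol} pp -> K = {k}, WAN reduction {WAN_REDUCTION_BY_K[k]}%")
```

With zero tolerance the rule picks K = 5 (49% WAN reduction), the setting whose F1-score matches HierFedDP's 90.3% in the detection table; accepting a 0.5-point F1 loss would allow K = 10.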

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).