Submitted: 03 April 2026

Posted: 07 April 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Amphibious and Cross-Domain Robot Navigation

2.2. Reinforcement Learning for Water-Domain and Land-Domain Navigation

2.3. Hierarchical Reinforcement Learning and Medium-Switching Decision Making

2.4. Safe Reinforcement Learning and Constraint-Aware Robotic Control

3. Method

3.1. Problem Formulation

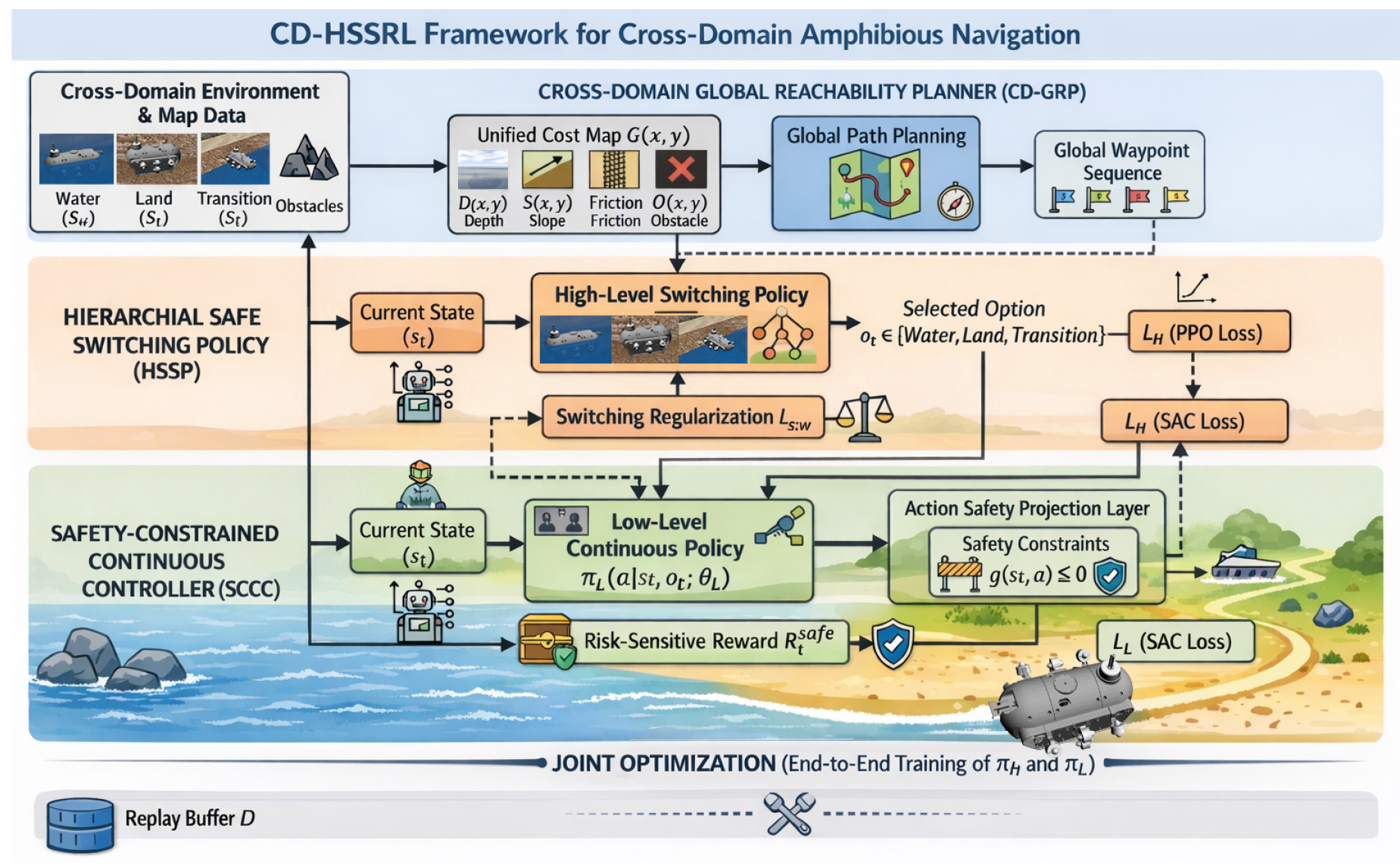

3.2. Overall Framework of CD-HSSRL

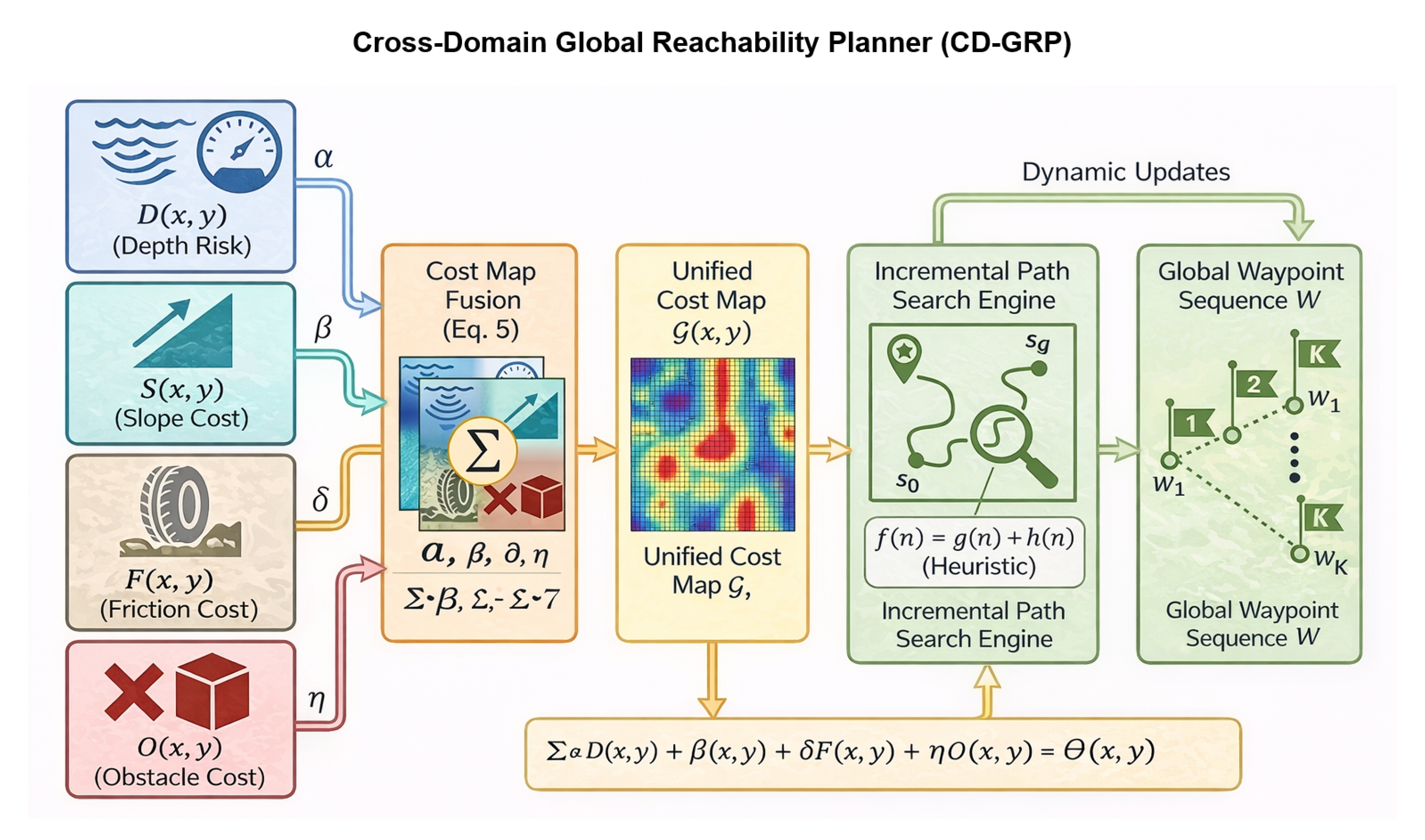

3.3. Cross-Domain Global Reachability Planner

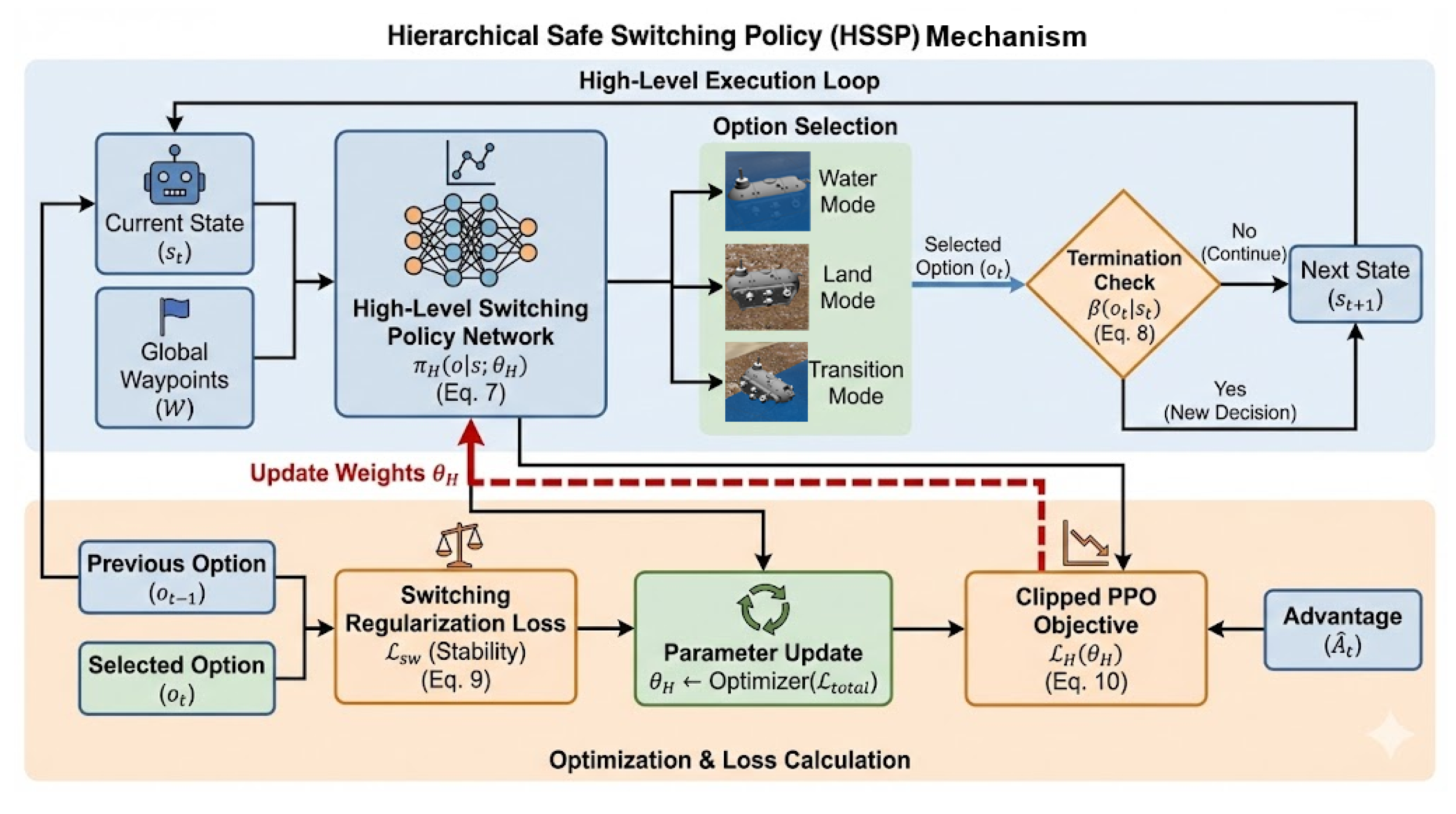

3.4. Hierarchical Safe Switching Policy

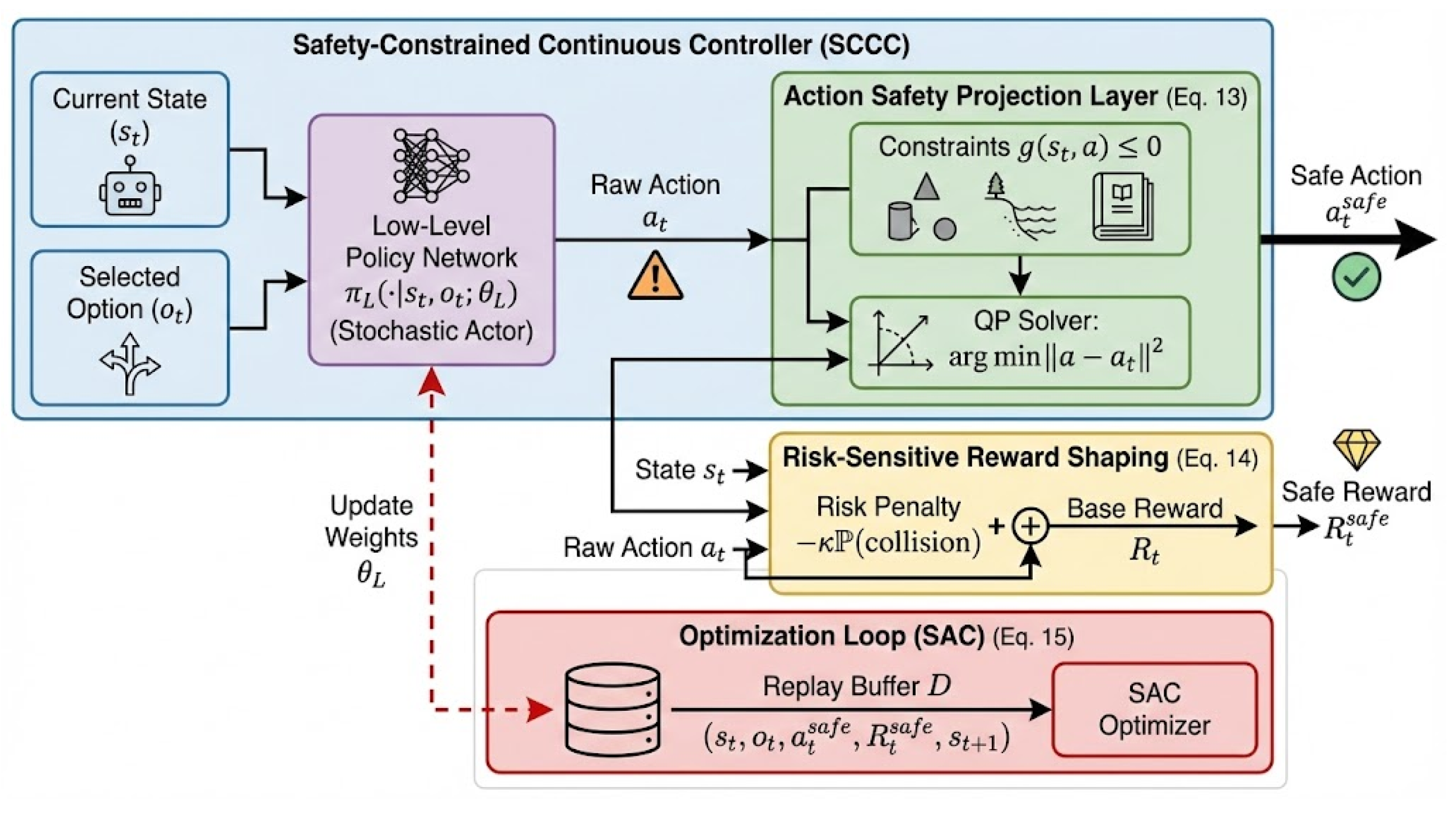

3.5. Safety-Constrained Continuous Controller

3.6. Training Objective and Optimization

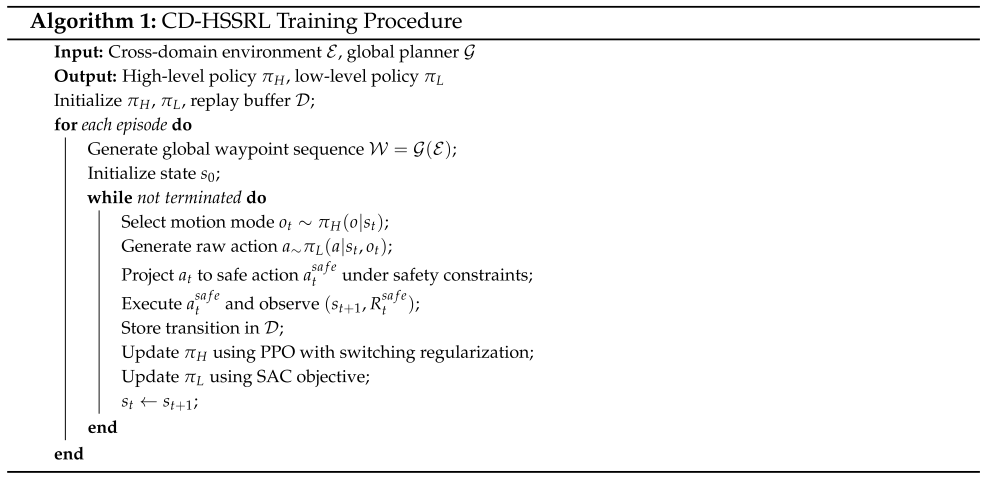

3.7. Algorithm Pseudocode

4. Experiments

4.1. Datasets and Experimental Settings

4.2. Implementation Details

4.3. Baselines

4.4. Evaluation Metrics

5. Results and Discussion

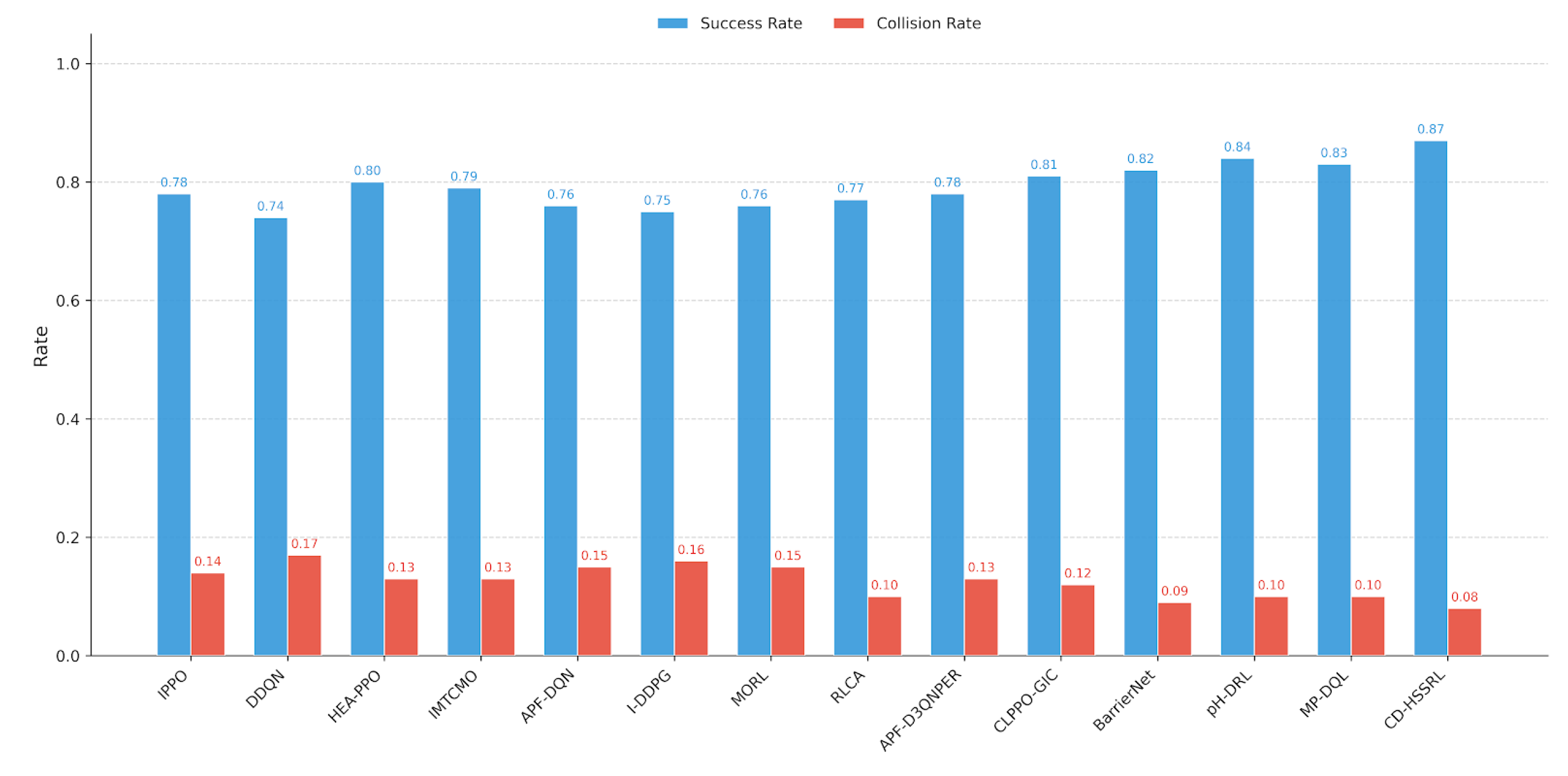

5.1. Overall Comparison with State-of-the-Art Baselines

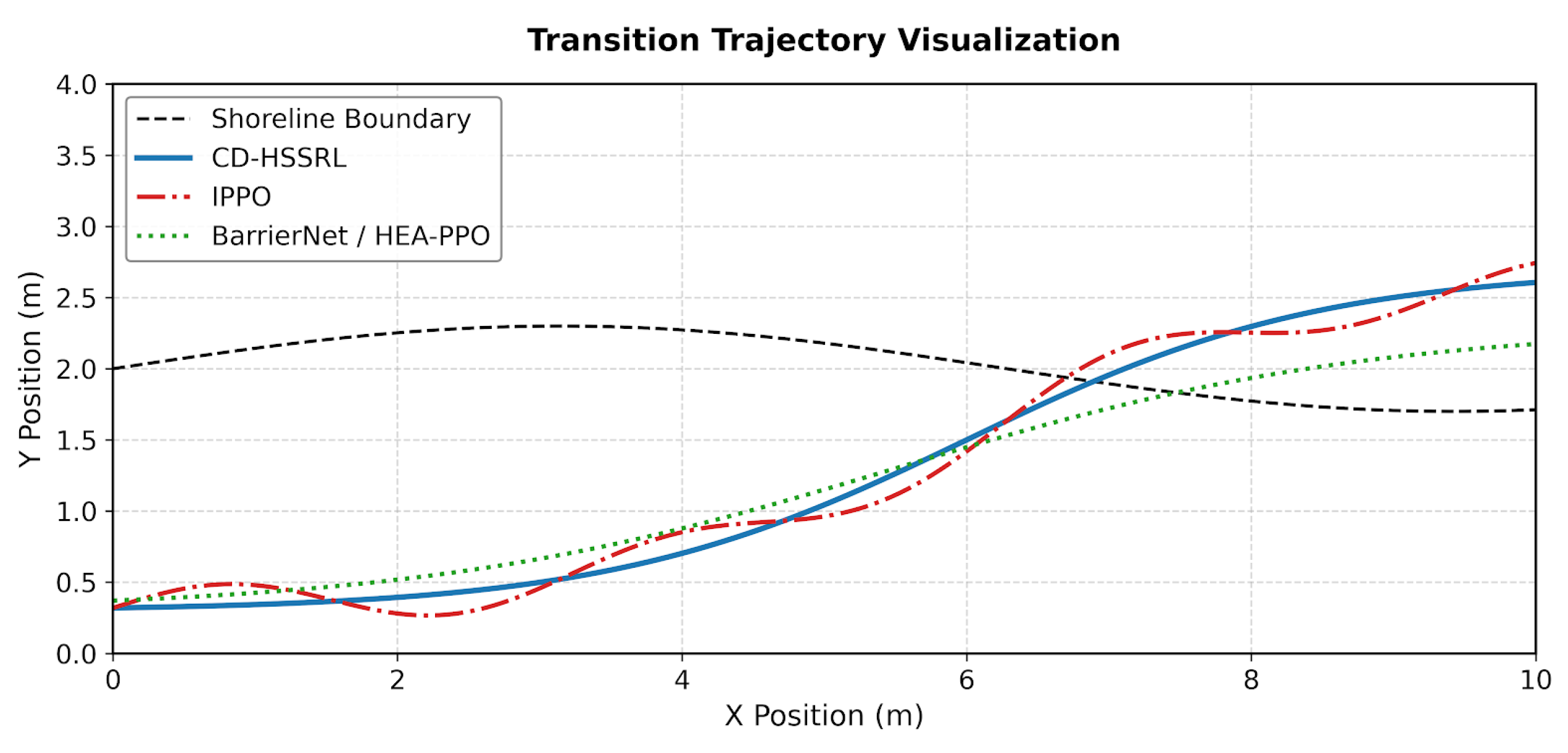

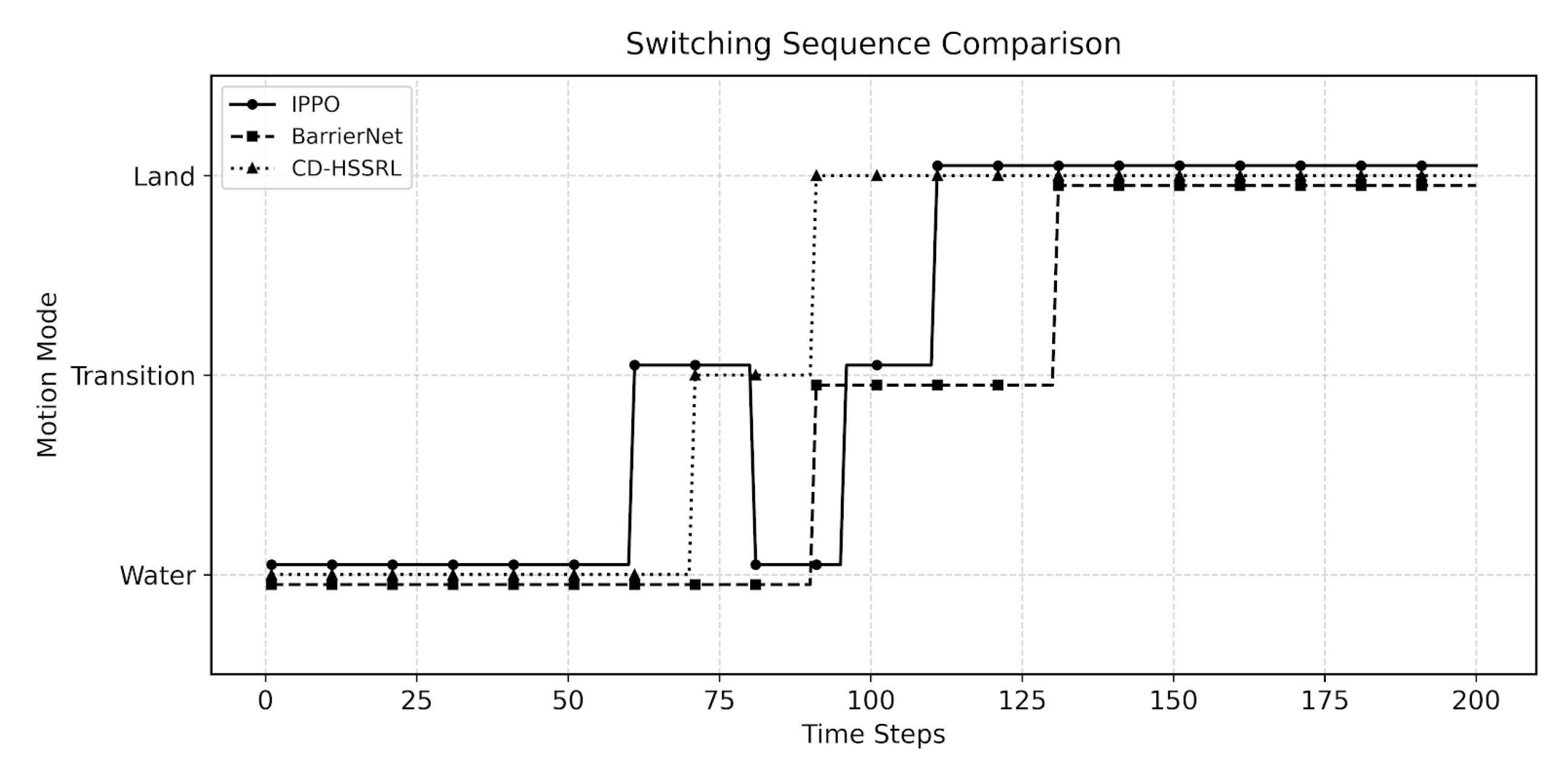

5.2. Cross-Domain Transition Performance

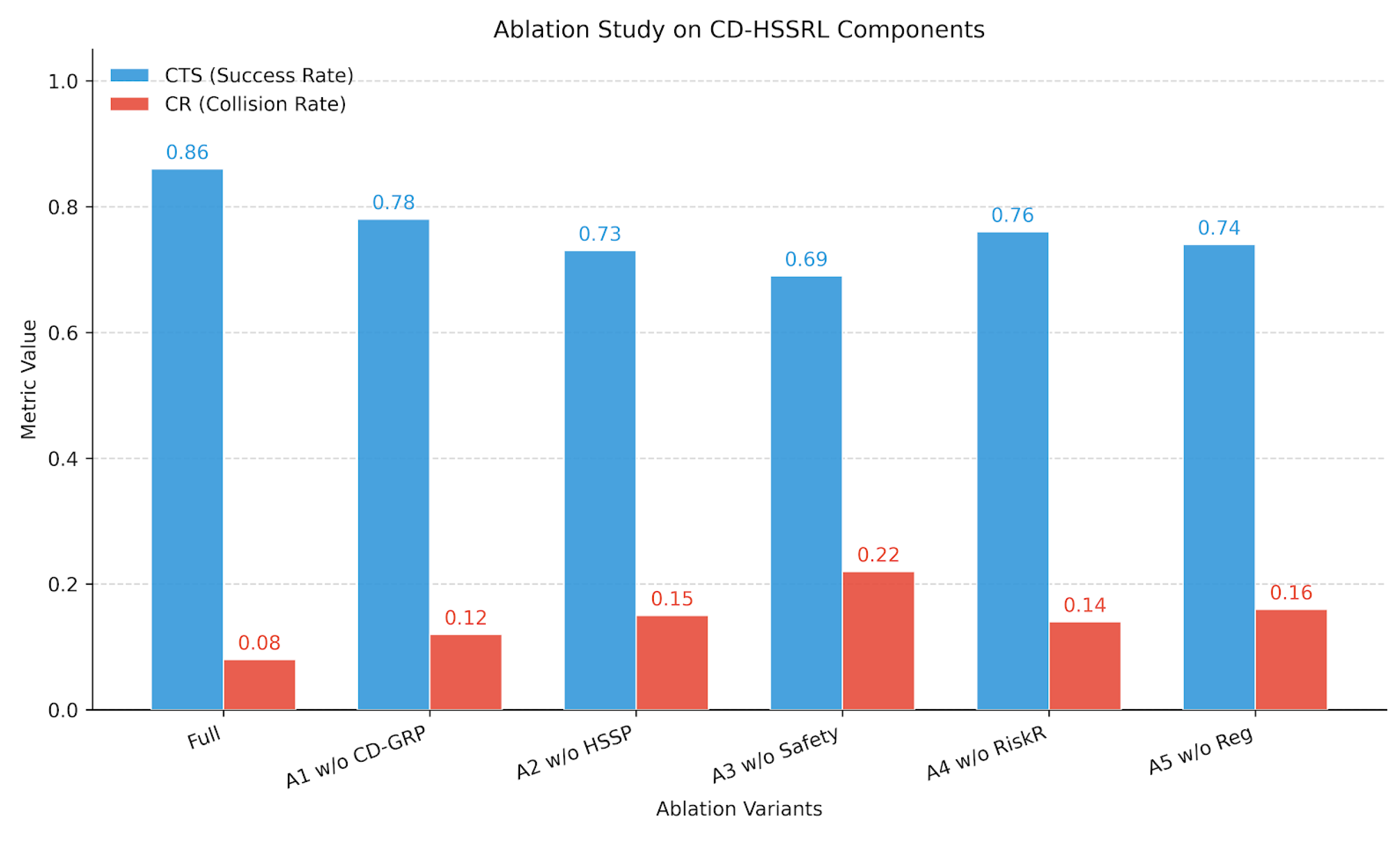

5.3. Ablation Studies

- A1 (w/o CD-GRP): removes the cross-domain global reachability planner and replaces it with a local greedy planner.

- A2 (w/o HSSP): removes the hierarchical safe switching policy and uses a single flat policy.

- A3 (w/o Safety Projection): removes the safety-constrained action projection layer.

- A4 (w/o Risk-Sensitive Reward): removes the risk penalty term from reward shaping.

- A5 (w/o Switching Regularization): removes the switching stability loss.
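The five variants each disable exactly one component of the full model. A minimal sketch of how such a one-factor-at-a-time ablation could be organized is given below. The class `AblationConfig`, its field names, and the string values are hypothetical and chosen only for illustration; they are not taken from the authors' implementation.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class AblationConfig:
    """Hypothetical component switches (names are illustrative assumptions)."""
    global_planner: str = "cd_grp"          # A1 swaps this for "local_greedy"
    hierarchical_switching: bool = True     # A2: False means a single flat policy
    safety_projection: bool = True          # A3: action projection layer
    risk_penalty: bool = True               # A4: risk term in reward shaping
    switching_regularization: bool = True   # A5: switching stability loss


FULL = AblationConfig()

# Each variant differs from the full model in exactly one field.
VARIANTS = {
    "Full": FULL,
    "A1": replace(FULL, global_planner="local_greedy"),
    "A2": replace(FULL, hierarchical_switching=False),
    "A3": replace(FULL, safety_projection=False),
    "A4": replace(FULL, risk_penalty=False),
    "A5": replace(FULL, switching_regularization=False),
}


def changed_fields(cfg: AblationConfig) -> list[str]:
    """Return the names of the fields that differ from the full configuration."""
    return sorted(k for k in asdict(cfg) if getattr(cfg, k) != getattr(FULL, k))


if __name__ == "__main__":
    for name, cfg in VARIANTS.items():
        print(name, changed_fields(cfg))
```

Keeping the variants as single-field modifications of one base configuration makes it easy to check that no ablation accidentally changes more than one component.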

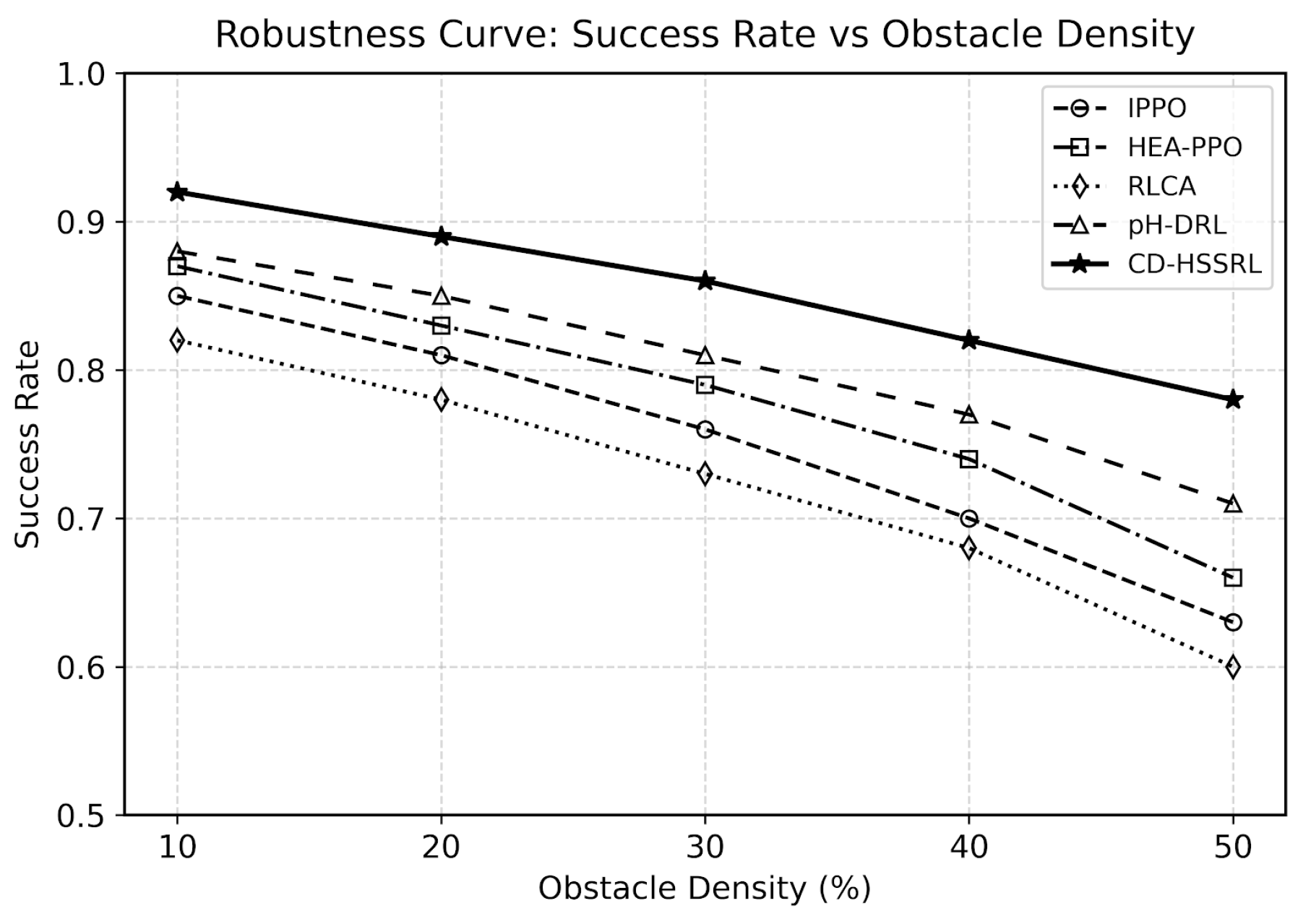

5.4. Robustness Analysis

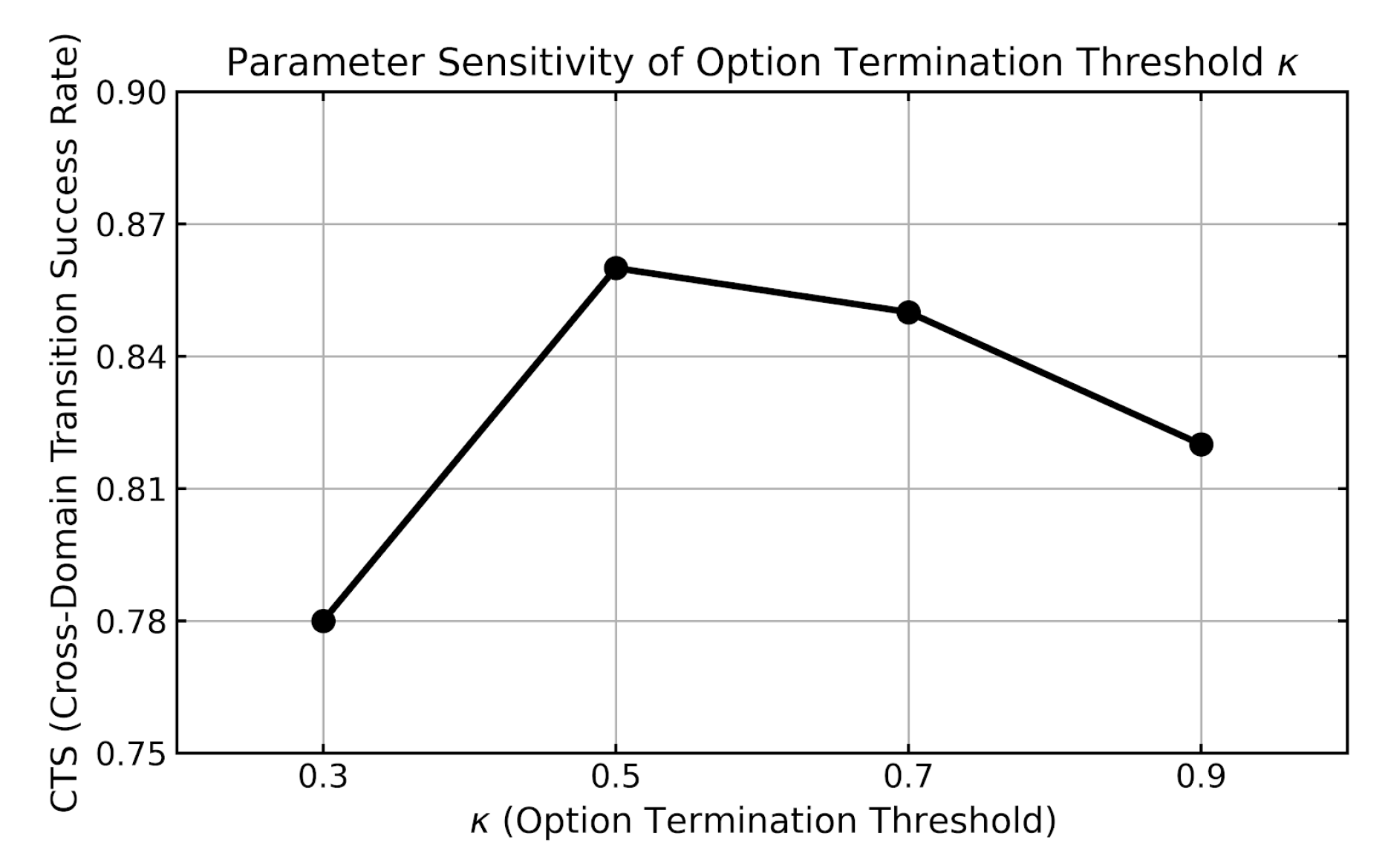

5.5. Parameter Sensitivity Analysis

5.6. Discussion of Findings and Limitations

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

| Method | WaterScenes SR↑ | WaterScenes CR↓ | WaterScenes APL↓ | WaterScenes EC↓ | MVTD SR↑ | MVTD CR↓ | MVTD APL↓ | MVTD EC↓ | BARN SR↑ | BARN CR↓ | BARN APL↓ | BARN EC↓ | Gazebo Cross-Domain SR↑ | Gazebo Cross-Domain CR↓ | Gazebo Cross-Domain SSI↑ | Gazebo Cross-Domain CTS↑ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| IPPO | 0.86 | 0.09 | 128.4 | 34.8 | 0.79 | 0.13 | 142.6 | 39.5 | 0.90 | 0.06 | 116.9 | 30.7 | 0.78 | 0.14 | 0.78 | 0.71 |
| DDQN | 0.83 | 0.11 | 132.7 | 36.2 | 0.76 | 0.15 | 149.8 | 41.1 | 0.88 | 0.07 | 120.8 | 32.1 | 0.74 | 0.17 | 0.75 | 0.66 |
| HEA-PPO | 0.88 | 0.08 | 124.9 | 33.7 | 0.81 | 0.12 | 140.9 | 38.6 | 0.91 | 0.05 | 114.2 | 29.6 | 0.80 | 0.13 | 0.80 | 0.74 |
| IMTCMO | 0.87 | 0.08 | 126.1 | 33.9 | 0.80 | 0.12 | 143.3 | 38.9 | 0.92 | 0.05 | 112.7 | 29.2 | 0.79 | 0.13 | 0.81 | 0.73 |
| APF-DQN | 0.89 | 0.07 | 123.8 | 32.4 | 0.83 | 0.11 | 137.2 | 37.5 | 0.85 | 0.08 | 126.5 | 34.9 | 0.76 | 0.15 | 0.77 | 0.69 |
| I-DDPG | 0.87 | 0.08 | 125.6 | 33.1 | 0.82 | 0.11 | 138.9 | 36.8 | 0.86 | 0.08 | 124.1 | 33.6 | 0.75 | 0.16 | 0.76 | 0.68 |
| MORL-based | 0.88 | 0.08 | 124.4 | 32.8 | 0.82 | 0.11 | 139.4 | 37.2 | 0.87 | 0.07 | 122.9 | 33.0 | 0.76 | 0.15 | 0.77 | 0.70 |
| RLCA | 0.86 | 0.06 | 129.6 | 35.4 | 0.80 | 0.09 | 145.2 | 40.1 | 0.84 | 0.06 | 127.8 | 35.9 | 0.77 | 0.10 | 0.79 | 0.72 |
| APF-D3QNPER | 0.90 | 0.07 | 121.9 | 33.6 | 0.84 | 0.10 | 135.8 | 39.2 | 0.86 | 0.07 | 124.6 | 34.1 | 0.78 | 0.13 | 0.80 | 0.74 |
| CLPPO-GIC | 0.89 | 0.07 | 122.7 | 33.0 | 0.85 | 0.10 | 134.9 | 38.4 | 0.88 | 0.06 | 120.6 | 32.5 | 0.81 | 0.12 | 0.83 | 0.76 |
| BarrierNet | 0.90 | 0.06 | 121.5 | 32.9 | 0.84 | 0.09 | 134.2 | 37.9 | 0.89 | 0.05 | 118.9 | 31.2 | 0.82 | 0.09 | 0.85 | 0.79 |
| pH-DRL | 0.88 | 0.07 | 124.8 | 33.4 | 0.83 | 0.10 | 137.1 | 38.1 | 0.91 | 0.05 | 114.6 | 29.8 | 0.84 | 0.10 | 0.86 | 0.81 |
| MP-DQL | 0.87 | 0.08 | 125.9 | 34.1 | 0.82 | 0.11 | 138.7 | 38.8 | 0.90 | 0.06 | 115.8 | 30.4 | 0.83 | 0.10 | 0.85 | 0.80 |
| CD-HSSRL (Ours) | 0.93 | 0.05 | 118.6 | 30.8 | 0.88 | 0.08 | 129.7 | 34.6 | 0.94 | 0.04 | 108.9 | 27.8 | 0.87 | 0.08 | 0.90 | 0.86 |

| Method | CTS↑ | SSI↑ | CR↓ | SVR↓ | EC↓ |
| --- | --- | --- | --- | --- | --- |
| IPPO | 0.71 | 0.78 | 0.14 | 0.18 | 36.2 |
| HEA-PPO | 0.74 | 0.80 | 0.13 | 0.16 | 35.1 |
| RLCA | 0.72 | 0.79 | 0.10 | 0.12 | 39.8 |
| BarrierNet | 0.79 | 0.83 | 0.09 | 0.08 | 34.6 |
| CD-HSSRL (Ours) | 0.86 | 0.90 | 0.08 | 0.05 | 31.6 |

| Method | CTS↑ | SSI↑ | CR↓ | EC↓ |
| --- | --- | --- | --- | --- |
| Full CD-HSSRL (Ours) | 0.86 | 0.90 | 0.08 | 31.6 |
| A1: w/o CD-GRP | 0.78 | 0.84 | 0.12 | 35.9 |
| A2: w/o HSSP | 0.73 | 0.70 | 0.15 | 34.8 |
| A3: w/o Safety Projection | 0.69 | 0.72 | 0.22 | 30.9 |
| A4: w/o Risk-Sensitive Reward | 0.76 | 0.82 | 0.14 | 33.7 |
| A5: w/o Switching Regularization | 0.74 | 0.69 | 0.16 | 32.8 |
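
The ablation rows are easier to compare as differences from the full model. The short script below is a reading aid only: it copies the values from the ablation table above and computes, for each variant, the change in every metric relative to Full CD-HSSRL. The dictionary layout and function names are assumptions made for this sketch.

```python
# Values copied from the ablation table (CTS, SSI: higher is better; CR, EC: lower is better).
FULL = {"CTS": 0.86, "SSI": 0.90, "CR": 0.08, "EC": 31.6}

ABLATIONS = {
    "A1: w/o CD-GRP": {"CTS": 0.78, "SSI": 0.84, "CR": 0.12, "EC": 35.9},
    "A2: w/o HSSP": {"CTS": 0.73, "SSI": 0.70, "CR": 0.15, "EC": 34.8},
    "A3: w/o Safety Projection": {"CTS": 0.69, "SSI": 0.72, "CR": 0.22, "EC": 30.9},
    "A4: w/o Risk-Sensitive Reward": {"CTS": 0.76, "SSI": 0.82, "CR": 0.14, "EC": 33.7},
    "A5: w/o Switching Regularization": {"CTS": 0.74, "SSI": 0.69, "CR": 0.16, "EC": 32.8},
}


def deltas(variant: dict[str, float]) -> dict[str, float]:
    """Metric-wise difference (variant minus full model), rounded to two decimals."""
    return {metric: round(variant[metric] - FULL[metric], 2) for metric in FULL}


def worst_variant(metric: str, higher_is_better: bool) -> str:
    """Name of the ablation that degrades the given metric the most."""
    pick = min if higher_is_better else max
    return pick(ABLATIONS, key=lambda name: ABLATIONS[name][metric])


if __name__ == "__main__":
    for name, row in ABLATIONS.items():
        print(name, deltas(row))
    print("Largest CTS drop:", worst_variant("CTS", higher_is_better=True))
    print("Largest SSI drop:", worst_variant("SSI", higher_is_better=True))
    print("Largest CR increase:", worst_variant("CR", higher_is_better=False))
```

Read this way, removing the safety projection (A3) gives the largest CTS drop (0.17) and the largest CR increase (0.14), removing the switching regularization (A5) gives the largest SSI drop (0.21), and A3 is the only variant whose EC is lower than that of the full model.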

| Current (m/s) | IPPO SR↑ | IPPO CR↓ | HEA-PPO SR↑ | HEA-PPO CR↓ | RLCA SR↑ | RLCA CR↓ | BarrierNet SR↑ | BarrierNet CR↓ | CD-HSSRL SR↑ | CD-HSSRL CR↓ |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| 0.0 | 0.78 | 0.12 | 0.80 | 0.11 | 0.76 | 0.08 | 0.83 | 0.07 | 0.87 | 0.08 |
| 0.5 | 0.74 | 0.15 | 0.77 | 0.13 | 0.73 | 0.09 | 0.81 | 0.08 | 0.85 | 0.09 |
| 1.0 | 0.69 | 0.19 | 0.72 | 0.17 | 0.68 | 0.11 | 0.77 | 0.10 | 0.82 | 0.11 |
| 1.5 | 0.63 | 0.24 | 0.66 | 0.22 | 0.62 | 0.14 | 0.72 | 0.13 | 0.78 | 0.14 |
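
One way to summarize robustness to water current is the loss in success rate between still water (0.0 m/s) and the strongest current tested (1.5 m/s). The sketch below computes that drop from the SR columns of the table above; the variable and function names are assumptions for this example, and only the numbers come from the table.

```python
CURRENTS_M_S = [0.0, 0.5, 1.0, 1.5]

# Success rate (SR) per method at each current speed, copied from the table.
SR = {
    "IPPO": [0.78, 0.74, 0.69, 0.63],
    "HEA-PPO": [0.80, 0.77, 0.72, 0.66],
    "RLCA": [0.76, 0.73, 0.68, 0.62],
    "BarrierNet": [0.83, 0.81, 0.77, 0.72],
    "CD-HSSRL": [0.87, 0.85, 0.82, 0.78],
}


def sr_drop(method: str) -> float:
    """Absolute SR loss from the weakest to the strongest current."""
    values = SR[method]
    return round(values[0] - values[-1], 2)


def relative_sr_drop(method: str) -> float:
    """SR loss as a percentage of the still-water SR, to one decimal place."""
    values = SR[method]
    return round(100 * (values[0] - values[-1]) / values[0], 1)


if __name__ == "__main__":
    for method in SR:
        print(f"{method}: -{sr_drop(method):.2f} SR ({relative_sr_drop(method)}%)")
    print("Smallest drop:", min(SR, key=sr_drop))
```

On these numbers the absolute SR drop is 0.15 for IPPO, 0.14 for HEA-PPO and RLCA, 0.11 for BarrierNet, and 0.09 for CD-HSSRL, so CD-HSSRL loses the least success rate as the current increases.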

| Noise Std. | IPPO | HEA-PPO | RLCA | BarrierNet | CD-HSSRL |
| --- | --- | --- | --- | --- | --- |
| 0.0 | 0.71 | 0.74 | 0.72 | 0.79 | 0.86 |
| 0.1 | 0.68 | 0.71 | 0.69 | 0.77 | 0.84 |
| 0.2 | 0.63 | 0.67 | 0.65 | 0.74 | 0.81 |
| 0.3 | 0.58 | 0.62 | 0.60 | 0.70 | 0.77 |

|  | CTS↑ | SSI↑ |
| --- | --- | --- |
| 0.0 | 0.74 | 0.69 |
| 0.2 | 0.80 | 0.81 |
| 0.5 | 0.86 | 0.90 |
| 0.8 | 0.85 | 0.89 |
| 1.0 | 0.83 | 0.87 |

|  | CR↓ | EC↓ |
| --- | --- | --- |
| 0.1 | 0.18 | 29.7 |
| 0.5 | 0.12 | 30.8 |
| 1.0 | 0.08 | 31.6 |
| 1.5 | 0.08 | 33.2 |
| 2.0 | 0.07 | 35.4 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).