Submitted: 02 April 2026

Posted: 03 April 2026


Abstract

Keywords:

1. Introduction

2. Literature Review and Analytical Framework

3. Methodology

4. Findings and Discussion

4.1. Institutional Governance and Leadership Architecture

4.2. Digital Infrastructure and LMS Ecosystem Requirements

4.3. Curriculum Redesign and Pedagogical Adaptation

4.4. Assessment Integrity and Academic Standards

4.5. Faculty Development and Change Management

4.6. Student Support, Inclusion, and Digital Equity

4.7. Quality Assurance, Data Governance, and Continuous Improvement

4.8. Sequencing Models for Transition from Conventional to Blended and Online Provision

4.9. Toward a University-Wide Framework for Bringing Conventional Institutions Online

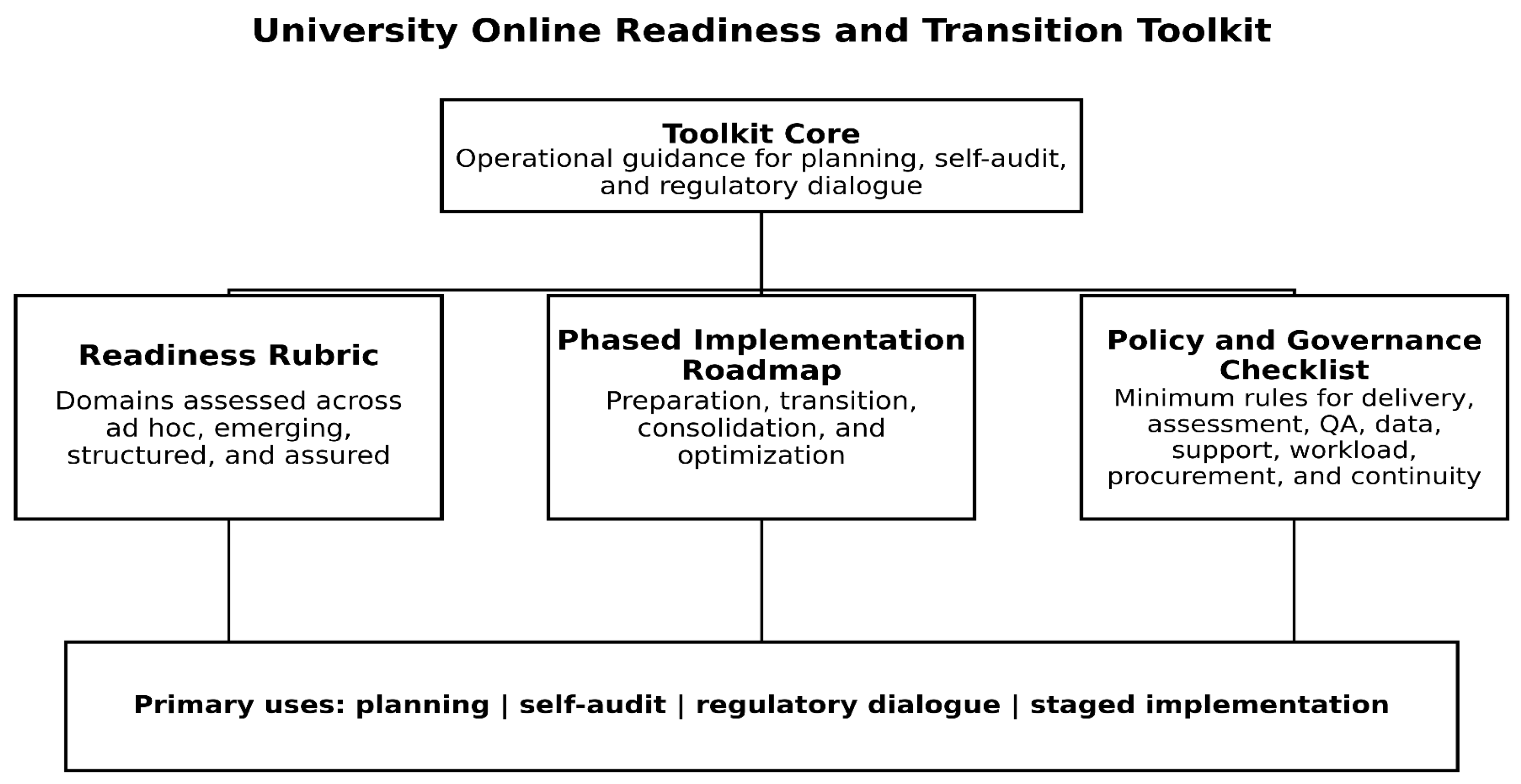

4.10. Companion Output: University Online Readiness and Transition Toolkit

| Domain | Ad hoc | Emerging | Structured | Assured |
|---|---|---|---|---|
| Leadership and governance | Pilots lack formal authority | Committee exists but with limited mandate | Digital transition owned by senior leadership and academic governance | Board/senate oversight, clear accountability, recurring review |
| Policy and regulation | Rules absent or face-to-face only | Provisional guidance in place | Approved policies for delivery, assessment, QA, and student support | Policies reviewed routinely and aligned with regulation |
| Infrastructure and LMS ecosystem | Standalone platform, unstable support | Basic LMS and communication tools | Interoperable systems, helpdesk, identity, library, backup | Resilient ecosystem with service standards and continuity planning |
| Curriculum redesign | Content upload dominates | Selected modules redesigned | Programme-level redesign with standards and templates | Continuous evidence-led redesign across programmes |
| Assessment integrity | Replication of invigilated exams | Mixed online methods with partial safeguards | Authentic design plus identity and misconduct procedures | AI-aware, privacy-conscious, standards-aligned assessment regime |
| Faculty capability | Voluntary technical training only | Structured workshops and basic support | Instructional design support, workload recognition, communities of practice | Capability embedded in CPD, promotion, and QA |
| Learner support and inclusion | Reactive technical help only | Orientation and limited advising | Integrated academic, technical, library, and wellbeing support | Targeted equity measures and accessible multi-channel support |
| QA, data, and AI governance | Fragmented reporting and weak oversight | Basic indicators and local practice | Integrated QA review, analytics rules, data responsibilities | Transparent, rights-based governance with improvement loops |
| Finance and sustainability | Project-funded and uncertain | Partial budgeting for key systems | Recurring budget and cost model established | Sustainable financing and periodic value review |
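The readiness rubric lends itself to a simple self-assessment: rate each domain at one of the four levels, then read off where the institution is weakest. The sketch below is an illustration under stated assumptions, not part of the toolkit itself. It maps the levels to an ordinal scale of 1 to 4, treats overall readiness as capped by the lowest-rated domain (a weakest-link rule), and uses hypothetical ratings; the toolkit specifies neither the numeric scale nor the aggregation rule.

```python
from enum import IntEnum


class Level(IntEnum):
    """Rubric maturity levels, mapped to an ASSUMED ordinal scale of 1 to 4."""
    AD_HOC = 1
    EMERGING = 2
    STRUCTURED = 3
    ASSURED = 4


# The nine rubric domains, in table order.
DOMAINS = (
    "Leadership and governance",
    "Policy and regulation",
    "Infrastructure and LMS ecosystem",
    "Curriculum redesign",
    "Assessment integrity",
    "Faculty capability",
    "Learner support and inclusion",
    "QA, data, and AI governance",
    "Finance and sustainability",
)


def readiness_profile(ratings: dict[str, Level]) -> dict:
    """Summarise a self-assessment in which every domain must be rated."""
    missing = [d for d in DOMAINS if d not in ratings]
    if missing:
        raise ValueError(f"Unrated domains: {missing}")
    # Weakest-link rule (assumption): overall readiness equals the lowest domain level.
    floor = min(ratings[d] for d in DOMAINS)
    return {
        "overall": floor,
        "priorities": [d for d in DOMAINS if ratings[d] == floor],
        "mean_score": sum(ratings[d] for d in DOMAINS) / len(DOMAINS),
    }


# Hypothetical self-assessment: mostly Structured, two domains lagging.
ratings = {d: Level.STRUCTURED for d in DOMAINS}
ratings["Assessment integrity"] = Level.EMERGING
ratings["Finance and sustainability"] = Level.EMERGING
profile = readiness_profile(ratings)
print(profile["overall"].name, profile["priorities"])
# EMERGING ['Assessment integrity', 'Finance and sustainability']
```

The weakest-link rule is chosen because a high mean can hide a domain that would block scaling; an institution could equally report the full per-domain profile instead of a single level.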

5. Conclusion

Funding

Ethical Approval

Data Availability

Conflicts of Interest

Use of AI Tools

References

- Ali, R.; Georgiou, H. A process for institutional adoption and diffusion of blended learning in higher education. High. Educ. Policy 2025, 38, 523–544. [Google Scholar] [CrossRef]

- Anthony, B.; Kamaludin, A.; Romli, A.; Mat Raffei, A.F.; Phon, D.N.A.L.E.; Abdullah, A.; Ming, G.L. Blended learning adoption and implementation in higher education: A theoretical and systematic review. Technol. Knowl. Learn. 2022, 27, 531–578. [Google Scholar] [CrossRef]

- Arksey, H.; O’Malley, L. Scoping studies: Towards a methodological framework. Int. J. Soc. Res. Methodol. 2005, 8, 19–32. [Google Scholar] [CrossRef]

- Butler-Henderson, K.; Crawford, J. A systematic review of online examinations: A pedagogical innovation for scalable authentication and integrity. Comput. Educ. 2020, 159, 104024. [Google Scholar] [CrossRef]

- Castro Benavides, L.M.; Tamayo Arias, J.A.; Arango Serna, M.D.; Branch Bedoya, J.W.; Burgos, D. Digital transformation in higher education institutions: A systematic literature review. Sensors 2020, 20, 3291. [Google Scholar] [CrossRef] [PubMed]

- DiMaggio, P.J.; Powell, W.W. The iron cage revisited: Institutional isomorphism and collective rationality in organizational fields. Am. Sociol. Rev. 1983, 48, 147–160. [Google Scholar] [CrossRef]

- ENQA. Considerations for QA of e-Learning Provision. 2018. Available online: https://www.enqa.eu/publications/considerations-for-qa-of-e-learning-provision/.

- Farias-Gaytan, S.; Aguaded, I.; Ramirez-Montoya, M.-S. Digital transformation and digital literacy in the context of complexity within higher education institutions: A systematic literature review. Humanit. Soc. Sci. Commun. 2023, 10, 386. [Google Scholar] [CrossRef]

- Fernández, A.; Gómez, B.; Binjaku, K.; Kajo Meçe, E. Digital transformation initiatives in higher education institutions: A multivocal literature review. Educ. Inf. Technol. 2023, 28, 12351–12382. [Google Scholar] [CrossRef] [PubMed]

- Gašević, D.; Tsai, Y.-S.; Drachsler, H. Learning analytics in higher education: Stakeholders, strategy and scale. Internet High. Educ. 2022, 52, 100833. [Google Scholar] [CrossRef]

- Gao, Y.; Wong, S.L.; Md Khambari, M.N.; Noordin, N. A bibliometric analysis of online faculty professional development in higher education. Res. Pract. Technol. Enhanc. Learn. 2022, 17, 17. [Google Scholar] [CrossRef]

- García-Machado, J.J.; Martínez Ávila, M.; Dospinescu, N.; Dospinescu, O. How the support that students receive during online learning influences their academic performance. Educ. Inf. Technol. 2024, 29, 20005–20029. [Google Scholar] [CrossRef]

- Govers, M.; van Amelsvoort, P. A theoretical essay on socio-technical systems design thinking in the era of digital transformation. Gr. Interakt. Organ. Z. Angew. Organ. 2023, 54, 27–40. [Google Scholar] [CrossRef]

- Graham, C.R.; Woodfield, W.; Harrison, J.B. A framework for institutional adoption and implementation of blended learning in higher education. Internet High. Educ. 2013, 18, 4–14. [Google Scholar] [CrossRef]

- Graham, C.R.; Woodfield, W.; Harrison, J.B. Blended learning in higher education: Institutional adoption and implementation. Comput. Educ. 2014, 75, 185–195. [Google Scholar] [CrossRef]

- Holden, O.L.; Norris, M.E.; Kuhlmeier, V.A. Academic integrity in online assessment: A research review. Front. Educ. 2021, 6, 639814. [Google Scholar] [CrossRef]

- Hong, Q.N.; Fàbregues, S.; Bartlett, G.; Boardman, F.; Cargo, M.; Dagenais, P.; Gagnon, M.-P.; Griffiths, F.; Nicolau, B.; O’Cathain, A.; et al. The Mixed Methods Appraisal Tool (MMAT) version 2018 for information professionals and researchers. Educ. Inf. 2018, 34, 285–291. [Google Scholar] [CrossRef]

- Jisc. Framework for Digital Transformation in Higher Education. 2023. Available online: https://www.jisc.ac.uk/guides/framework-for-digital-transformation-in-higher-education.

- Jones, K.M.L. Learning analytics and higher education: A proposed model for establishing informed consent mechanisms to promote student privacy and autonomy. Int. J. Educ. Technol. High. Educ. 2019, 16, 24. [Google Scholar] [CrossRef]

- Levac, D.; Colquhoun, H.; O’Brien, K.K. Scoping studies: Advancing the methodology. Implement. Sci. 2010, 5, 69. [Google Scholar] [CrossRef]

- Mabidi, N. A systematic review of the transformative impact of the digital revolution on higher education in South Africa. S. Afr. J. High. Educ. 2024, 38, 97–113. [Google Scholar] [CrossRef]

- Martin, F.; Bolliger, D.U. Developing an online learner satisfaction framework in higher education through a systematic review of research. Int. J. Educ. Technol. High. Educ. 2022, 19, 50. [Google Scholar] [CrossRef]

- Newman, T.; McGill, L.; Knight, S. How to Approach Digital Transformation in Higher Education: Report and Case Studies. JISC. 2025. Available online: https://www.jisc.ac.uk/reports/how-to-approach-digital-transformation-in-higher-education.

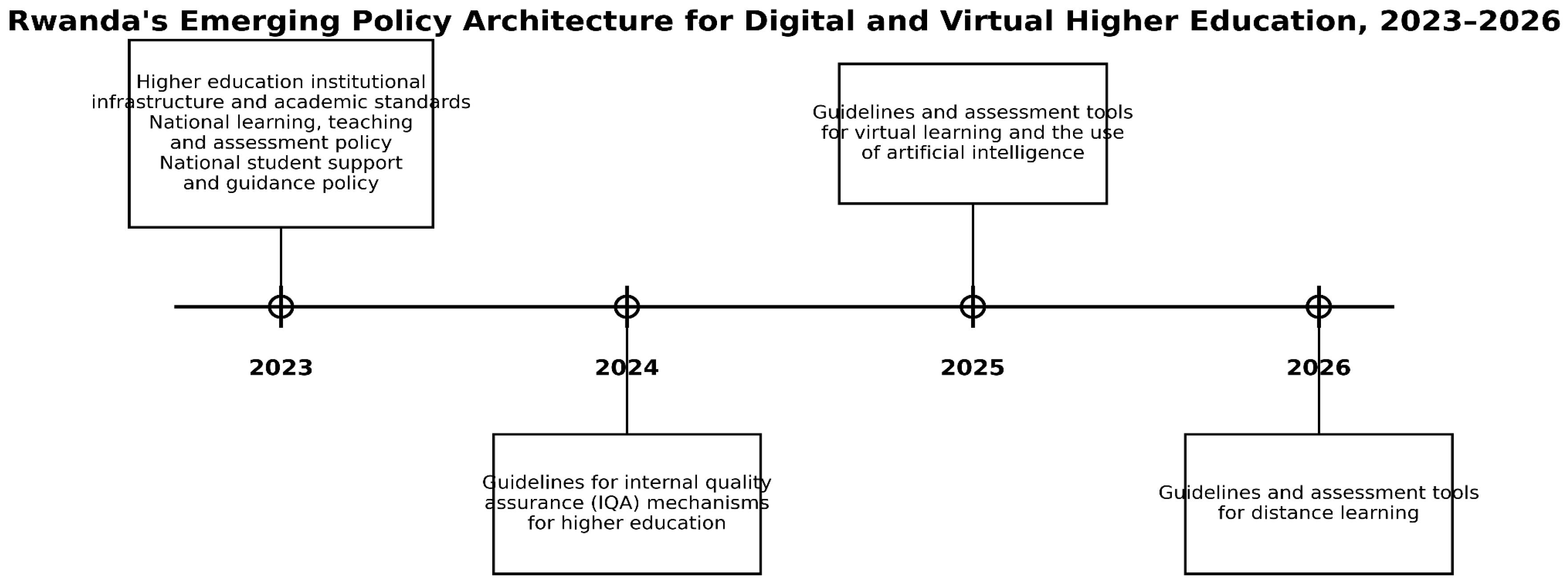

- Rwanda Higher Education Council. Higher Education Institutional Infrastructure and Academic Standards. 2023a. Available online: https://www.hec.gov.rw/publications/guidelines.

- Rwanda Higher Education Council. National Learning, Teaching and Assessment Policy. 2023b. Available online: https://www.hec.gov.rw/publications/policies.

- Rwanda Higher Education Council. National Student Support and Guidance Policy. 2023c. Available online: https://www.hec.gov.rw/publications/policies.

- Rwanda Higher Education Council. Guidelines for Internal Quality Assurance (IQA) Mechanisms for Higher Education. 2024. Available online: https://www.hec.gov.rw/publications/guidelines.

- Rwanda Higher Education Council. Guidelines and Assessment Tools for the Virtual Learning and the Use of Artificial Intelligence in Rwanda’s Higher Learning Institutions. 2025. Available online: https://www.hec.gov.rw/publications/guidelines.

- Rwanda Higher Education Council. Guidelines and Assessment Tools for Distance Learning. 2026. Available online: https://www.hec.gov.rw/publications/guidelines.

- Sanders, D.A.; Mukhari, S.S. The perceptions of lecturers about blended learning at a particular higher institution in South Africa. Educ. Inf. Technol. 2024, 29, 11517–11532. [Google Scholar] [CrossRef]

- Sangwa, S.; Manirakiza, R.; Mutabazi, P. Assessing students’ readiness for online and distance learning in Rwanda. Res. J. Educ. 2020, 8, 1–9. [Google Scholar]

- Tricco, A.C.; Lillie, E.; Zarin, W.; O’Brien, K.K.; Colquhoun, H.; Levac, D.; Moher, D.; Peters, M.D.J.; Horsley, T.; Weeks, L.; et al. PRISMA extension for scoping reviews (PRISMA-ScR): Checklist and explanation. Ann. Intern. Med. 2018, 169, 467–473. [Google Scholar] [CrossRef]

- Tulinayo, F.; Ssentume, P.; Najjuma, R. Digital technologies in resource constrained higher institutions of learning: A study on students’ acceptance and usability. Int. J. Educ. Technol. High. Educ. 2018, 15, 36. [Google Scholar] [CrossRef]

- UNESCO. Guidance for Generative AI in Education and Research. 2023. Available online: https://doi.org/10.54675/EWZM9535.

- UNESCO. AI and Education: Protecting the Rights of Learners. 2025a. Available online: https://www.unesco.org/en/articles/ai-and-education-protecting-rights-learners.

- UNESCO. UNESCO Survey: Two-Thirds of Higher Education Institutions Have or are Developing Guidance on AI Use. 2025b. Available online: https://www.unesco.org/en/articles/unesco-survey-two-thirds-higher-education-institutions-have-or-are-developing-guidance-ai-use.

- Wang, L. Putting Digital Transformation at the Heart of HE Systems. UNESCO. 2023. Available online: https://www.unesco.org/en/articles/putting-digital-transformation-heart-he-systems.

- Whittemore, R.; Knafl, K. The integrative review: Updated methodology. J. Adv. Nurs. 2005, 52, 546–553. [Google Scholar] [CrossRef] [PubMed]

| Search Dimension | Focus | Typical Terms | Eligibility Emphasis |
|---|---|---|---|
| Sector and modality | Higher education and forms of digital provision | higher education; online learning; blended learning; distance learning; digital transformation | University-level relevance |
| Institutional architecture | Governance and operating model | governance; strategy; policy; regulation; accreditation; quality assurance | Institution or system-level explanatory value |
| Operational domains | Capabilities required at scale | LMS; infrastructure; curriculum redesign; assessment integrity; faculty development; student support; data governance; AI governance | Transferable implications for scaled provision |
| Context filter | African and policy-reference relevance | Africa; African higher education; Rwanda | Contextual transferability and regulatory significance |
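The search dimensions can be combined into a database query in the conventional scoping-review way: synonyms within a dimension joined with OR, dimensions joined with AND. The sketch below assumes that combination rule and builds only a generic Boolean string from the table's typical terms. It is not the authors' documented search string; database-specific field tags, truncation, and date limits are omitted. Whether the context filter was applied in the query or at screening is not stated, so the function simply uses whichever dimensions it is given.

```python
def or_block(terms: list[str]) -> str:
    """Join synonyms with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(dimensions: dict[str, list[str]]) -> str:
    """AND together one OR-block per non-empty dimension (assumed combination rule)."""
    return " AND ".join(or_block(terms) for terms in dimensions.values() if terms)


# Typical terms transcribed from the search-dimension table.
SEARCH_DIMENSIONS = {
    "Sector and modality": [
        "higher education", "online learning", "blended learning",
        "distance learning", "digital transformation",
    ],
    "Institutional architecture": [
        "governance", "strategy", "policy", "regulation",
        "accreditation", "quality assurance",
    ],
    "Operational domains": [
        "LMS", "infrastructure", "curriculum redesign", "assessment integrity",
        "faculty development", "student support", "data governance", "AI governance",
    ],
    "Context filter": ["Africa", "African higher education", "Rwanda"],
}

query = build_query(SEARCH_DIMENSIONS)
print(query)

# Variant without the context filter, e.g. if context is applied at screening instead.
core = {k: v for k, v in SEARCH_DIMENSIONS.items() if k != "Context filter"}
print(build_query(core))
```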

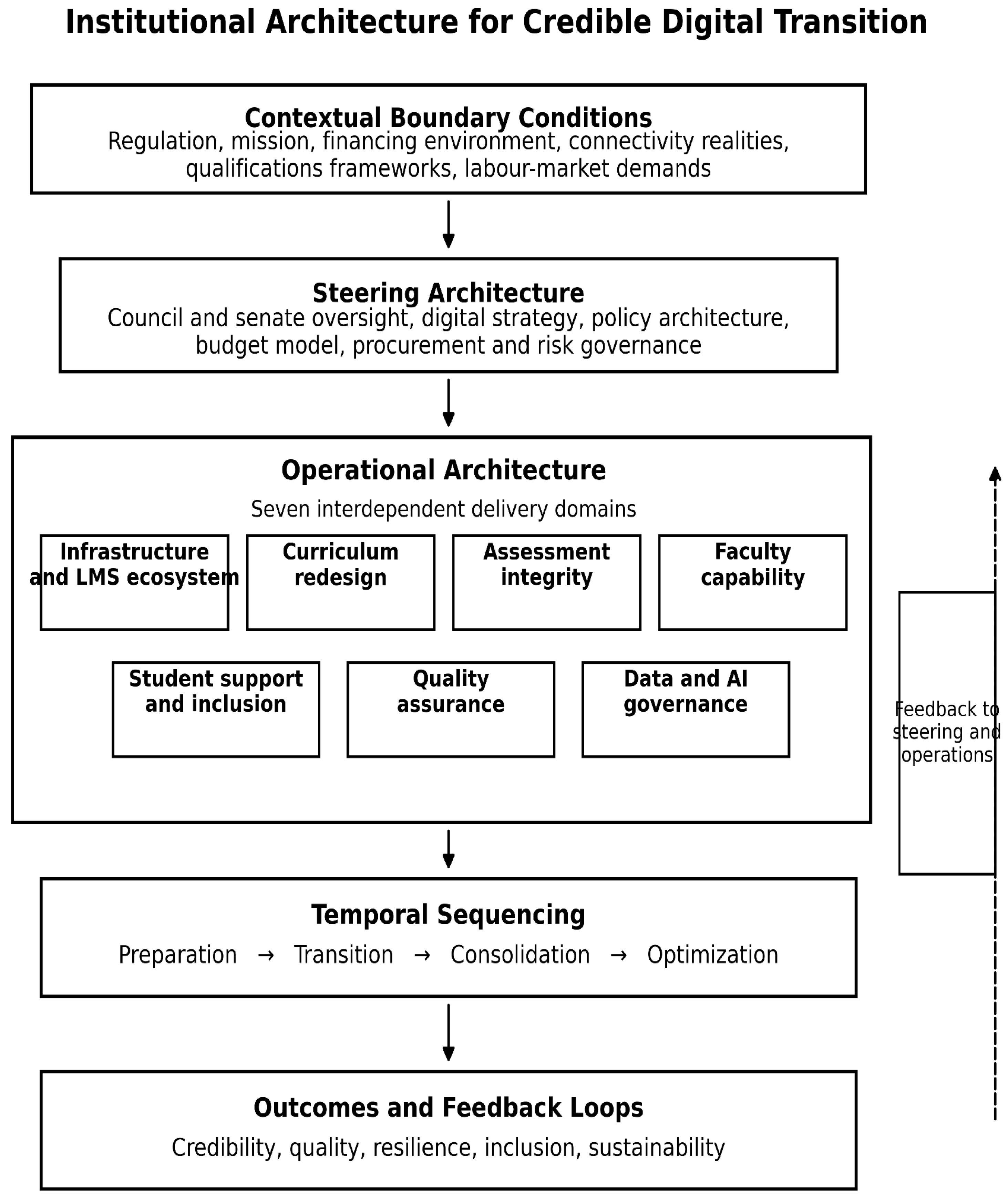

| Layer | Core Function | Typical Institutional Expressions |
|---|---|---|
| Contextual boundary conditions | Define opportunities and constraints | Regulation, mission, financing environment, connectivity realities, qualifications frameworks, labour-market demands |
| Steering architecture | Confers legitimacy, direction, and resources | Council/senate oversight, digital strategy, policy architecture, budget model, procurement and risk governance |
| Operational architecture | Builds delivery capability | Infrastructure ecosystem, curriculum redesign, assessment integrity, faculty capability, learner support, QA, data and AI governance |
| Temporal sequencing | Orders implementation and learning | Preparation, transition, consolidation, optimization |
| Outcomes and feedback loops | Stabilize credibility and improvement | Quality monitoring, learner experience evidence, analytics with safeguards, financial review, policy revision |

| Stage | Strategic Purpose | Essential Actions | Main Risk if Bypassed |
|---|---|---|---|
| Preparation | Establish mandate and minimum conditions | Readiness audit; regulatory mapping; governance assignment; baseline policies; infrastructure minimums; programme prioritization | Launch without capability or legitimacy |
| Transition | Pilot and learn under controlled conditions | Curriculum redesign; staff development; learner onboarding; assessment redesign; intensified QA monitoring | Isolated pilots mistaken for scalable model |
| Consolidation | Move from projects to operating model | Policy formalization; interoperability improvements; support stabilization; budget alignment; routine quality review | Persistent fragmentation and quality drift |
| Optimization | Deepen improvement and innovation | Analytics with safeguards; AI governance refinement; differentiated support; external partnerships; advanced blended or online models | Sophisticated rhetoric built on unstable foundations |
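The sequencing table can be read as a stage gate: before an institution enters a stage, each earlier stage should already be in place, otherwise that stage's "main risk if bypassed" applies. The sketch below encodes the four stages and returns the risks incurred by any skipped earlier stage. It assumes a strictly linear progression, which simplifies a process that in practice may overlap or iterate; the function name and the example case are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    purpose: str
    risk_if_bypassed: str


# Stages in the order given in the sequencing table.
STAGES = (
    Stage("Preparation", "Establish mandate and minimum conditions",
          "Launch without capability or legitimacy"),
    Stage("Transition", "Pilot and learn under controlled conditions",
          "Isolated pilots mistaken for scalable model"),
    Stage("Consolidation", "Move from projects to operating model",
          "Persistent fragmentation and quality drift"),
    Stage("Optimization", "Deepen improvement and innovation",
          "Sophisticated rhetoric built on unstable foundations"),
)
ORDER = {s.name: i for i, s in enumerate(STAGES)}


def bypass_risks(completed: set[str], target: str) -> list[str]:
    """Return the risk of every earlier stage not completed before entering `target`.

    Assumes a strictly linear sequence (a simplification).
    """
    if target not in ORDER:
        raise ValueError(f"Unknown stage: {target}")
    return [
        f"{s.name}: {s.risk_if_bypassed}"
        for s in STAGES[: ORDER[target]]
        if s.name not in completed
    ]


# Hypothetical case: moving to Consolidation after pilots, without a Preparation phase.
print(bypass_risks({"Transition"}, "Consolidation"))
# ['Preparation: Launch without capability or legitimacy']
```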

| Policy Area | Minimum Provision | Lead Office(s) |
|---|---|---|
| Digital learning strategy | Institutional purpose, scope, target modes, resourcing principles, review cycle | Senior leadership; academic affairs; planning |
| Academic regulations | Rules for online attendance, participation, progression, records, appeals, and equivalence | Senate; registry; academic affairs |
| Assessment integrity and AI use | Permissible AI use, disclosure, authorship, misconduct, identity assurance, proportional safeguards | Academic affairs; QA; legal/ethics |
| Internal quality assurance | Approval, monitoring, review, learner feedback, complaint handling, enhancement cycle | QA unit; faculties; senate committees |
| Data and learning analytics governance | Purpose limitation, access rights, retention, consent/notice, human oversight, security | ICT; data protection/legal; QA |
| Student support and accessibility | Orientation, advising, disability support, library access, wellbeing referral, communication standards | Student affairs; library; ICT; faculties |
| Staff workload and development | Workload recognition, training expectations, support roles, incentives, review | HR; academic affairs; faculties |
| Procurement, cybersecurity, and continuity | Vendor due diligence, interoperability, backup, incident response, business continuity | ICT; procurement; finance; legal/risk |
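The policy table also works as a gap checklist: compare each area's minimum provisions with what current institutional policies already cover, and route any gaps to the listed lead office(s). In the sketch below, provision labels are abbreviated from the table, the inventory of covered provisions is hypothetical, and matching by exact label is a deliberate simplification of what would in practice be a document review.

```python
# Policy area -> (minimum provisions, lead offices), abbreviated from the policy table.
POLICY_MINIMUMS: dict[str, tuple[list[str], list[str]]] = {
    "Digital learning strategy": (
        ["purpose", "scope", "target modes", "resourcing principles", "review cycle"],
        ["Senior leadership", "Academic affairs", "Planning"]),
    "Academic regulations": (
        ["attendance", "participation", "progression", "records", "appeals", "equivalence"],
        ["Senate", "Registry", "Academic affairs"]),
    "Assessment integrity and AI use": (
        ["permissible AI use", "disclosure", "authorship", "misconduct",
         "identity assurance", "proportional safeguards"],
        ["Academic affairs", "QA", "Legal/ethics"]),
    "Internal quality assurance": (
        ["approval", "monitoring", "review", "learner feedback",
         "complaint handling", "enhancement cycle"],
        ["QA unit", "Faculties", "Senate committees"]),
    "Data and learning analytics governance": (
        ["purpose limitation", "access rights", "retention", "consent/notice",
         "human oversight", "security"],
        ["ICT", "Data protection/legal", "QA"]),
    "Student support and accessibility": (
        ["orientation", "advising", "disability support", "library access",
         "wellbeing referral", "communication standards"],
        ["Student affairs", "Library", "ICT", "Faculties"]),
    "Staff workload and development": (
        ["workload recognition", "training expectations", "support roles",
         "incentives", "review"],
        ["HR", "Academic affairs", "Faculties"]),
    "Procurement, cybersecurity, and continuity": (
        ["vendor due diligence", "interoperability", "backup",
         "incident response", "business continuity"],
        ["ICT", "Procurement", "Finance", "Legal/risk"]),
}


def policy_gaps(covered: dict[str, set[str]]) -> dict[str, dict[str, list[str]]]:
    """List missing minimum provisions per area, with the offices to route them to.

    `covered` maps a policy area to the provisions current policies already address.
    Areas absent from `covered` are treated as entirely missing.
    """
    gaps = {}
    for area, (minimums, leads) in POLICY_MINIMUMS.items():
        missing = [p for p in minimums if p not in covered.get(area, set())]
        if missing:
            gaps[area] = {"missing": missing, "lead_offices": leads}
    return gaps


# Hypothetical inventory: only two areas documented so far, one of them partially.
inventory = {
    "Digital learning strategy": {
        "purpose", "scope", "target modes", "resourcing principles", "review cycle"},
    "Assessment integrity and AI use": {"misconduct", "identity assurance"},
}
gaps = policy_gaps(inventory)
print(len(gaps))  # 7
print(gaps["Assessment integrity and AI use"]["missing"])
```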

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).