Submitted: 02 April 2026

Posted: 03 April 2026


Abstract

Keywords:

1. Introduction

2. Mathematical Framework

2.1. Problem Setting

2.2. LMCGP for Chained GPs Regression Framework

- as the vector of latent function evaluations for the j-th parameter of the likelihood associated with the d-th output, where .

- as the stacked vector of all latent function evaluations of the likelihood associated with the d-th output.

- as the grand vector of all latent function evaluations across all outputs.
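The three levels of stacking in the list above can be illustrated with a small NumPy sketch. It is not the authors' implementation: the nested-list container `latents` (where `latents[d][j]` holds the evaluations for the j-th likelihood parameter of output d), the helper names `stack_latents` and `grand_index`, and the toy sizes are all hypothetical, and each latent vector is assumed to be ordered by input index. Outputs may carry different numbers of likelihood parameters, which the sketch accommodates.

```python
import numpy as np

def stack_latents(latents):
    """Stack per-parameter latent evaluation vectors (hypothetical layout).

    latents[d][j] is a 1-D array with the evaluations of the latent function
    that drives the j-th likelihood parameter of output d.

    Returns:
        per_output: list whose d-th entry concatenates latents[d][0], ..., latents[d][J_d - 1]
        grand: concatenation of all per-output vectors, in output order
    """
    per_output = [
        np.concatenate([np.asarray(v, dtype=float) for v in params])
        for params in latents
    ]
    grand = np.concatenate(per_output)
    return per_output, grand

def grand_index(latents, d, j, n):
    """Position of the n-th evaluation of latent (d, j) inside the grand vector."""
    offset = sum(len(v) for params in latents[:d] for v in params)  # all earlier outputs
    offset += sum(len(v) for v in latents[d][:j])                   # earlier parameters of output d
    return offset + n

# Toy example (assumed sizes): N = 3 inputs and two outputs.
# Output 0 has two likelihood parameters (e.g. mean and log-scale of a Gaussian),
# output 1 has one (e.g. the log-rate of a Poisson likelihood).
N = 3
rng = np.random.default_rng(0)
latents = [
    [rng.normal(size=N), rng.normal(size=N)],
    [rng.normal(size=N)],
]

per_output, grand = stack_latents(latents)
print([v.shape for v in per_output], grand.shape)  # [(6,), (3,)] (9,)

# The last evaluation of output 1's only latent sits at the end of the grand vector.
assert grand[grand_index(latents, 1, 0, 2)] == latents[1][0][2]
```

Concatenating parameter-major within each output, then output-major, follows the order in which the list above introduces the vectors. Any other ordering would work equally well, provided the prior covariance over the grand vector is assembled in the same order.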

2.3. Variational Inference

2.4. Model Setup

3. Results and Discussions

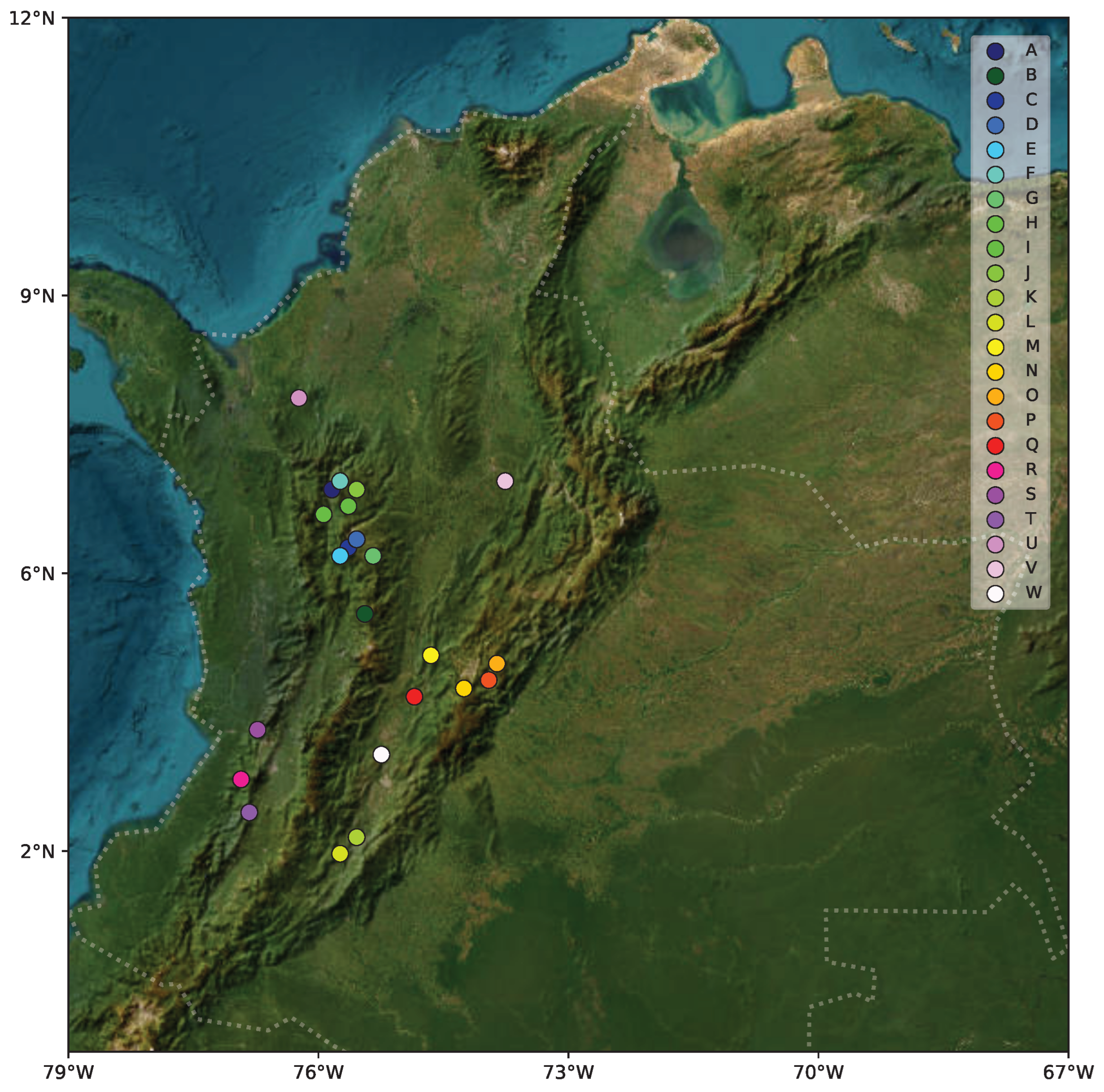

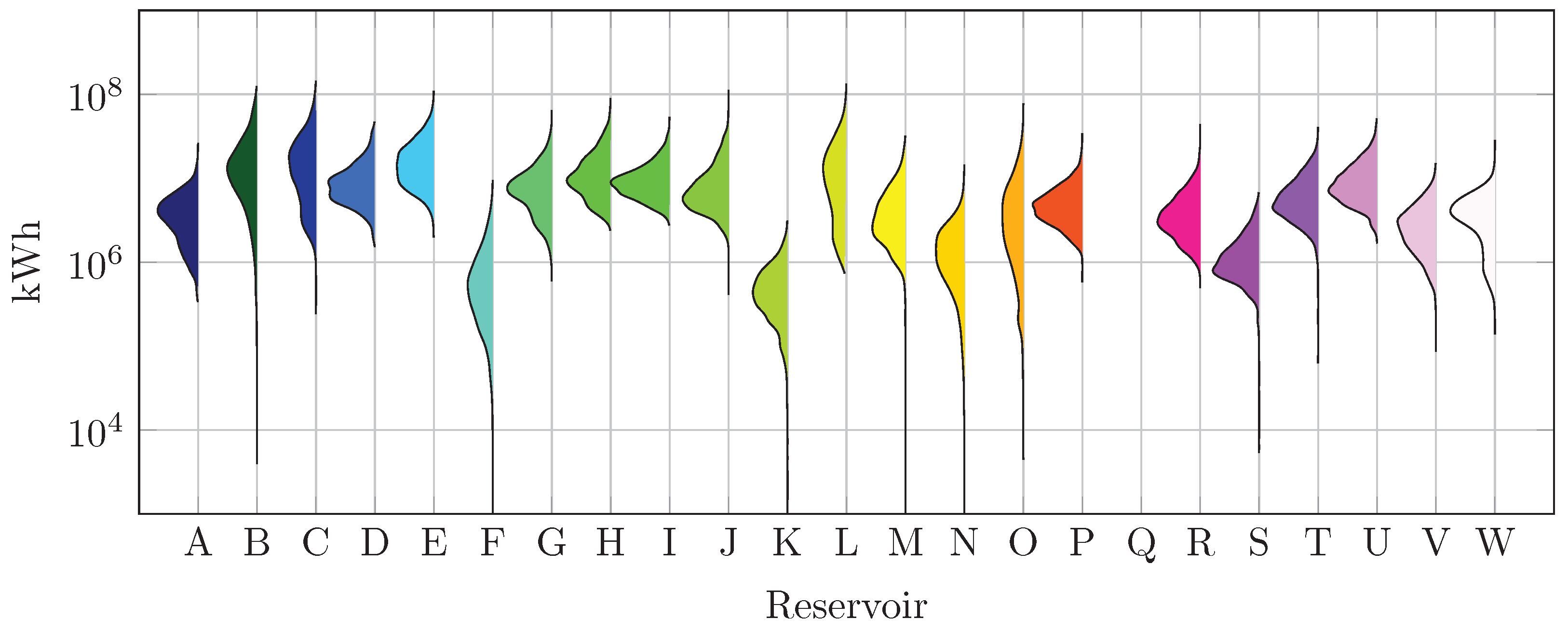

3.1. Dataset Collection

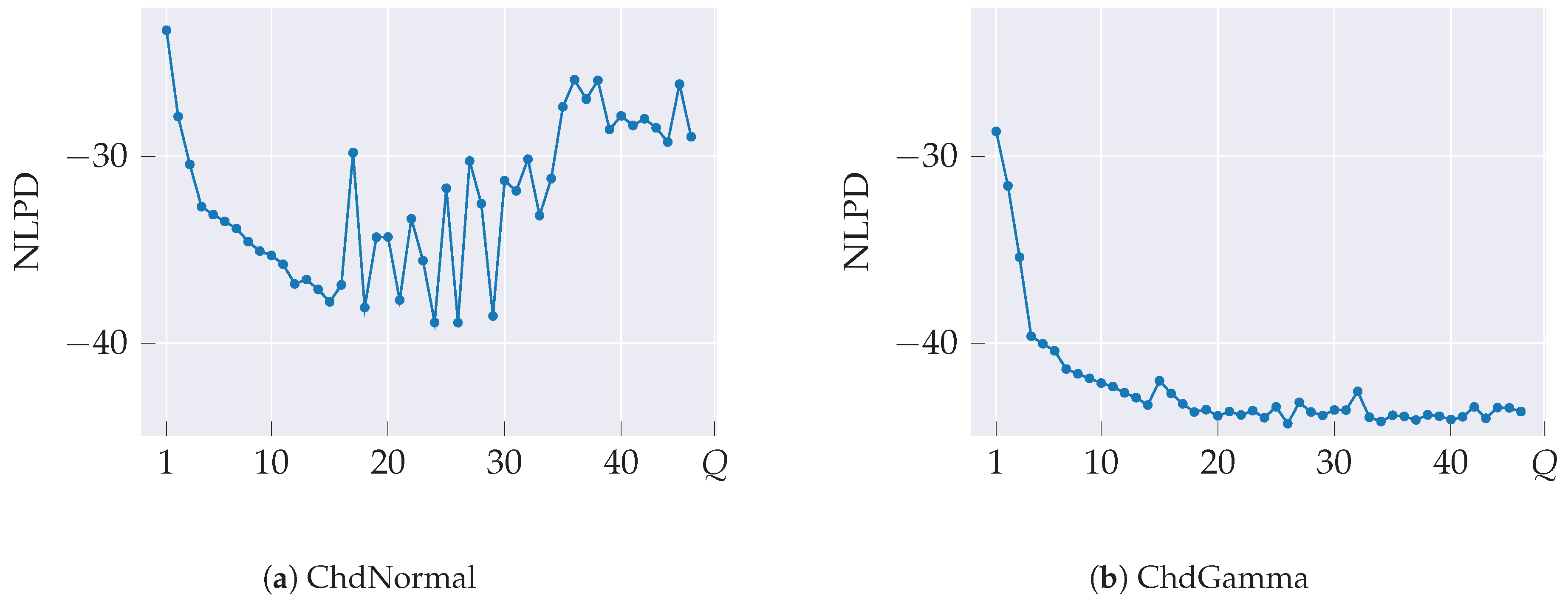

3.2. Model Setup and Hyperparameter Tuning

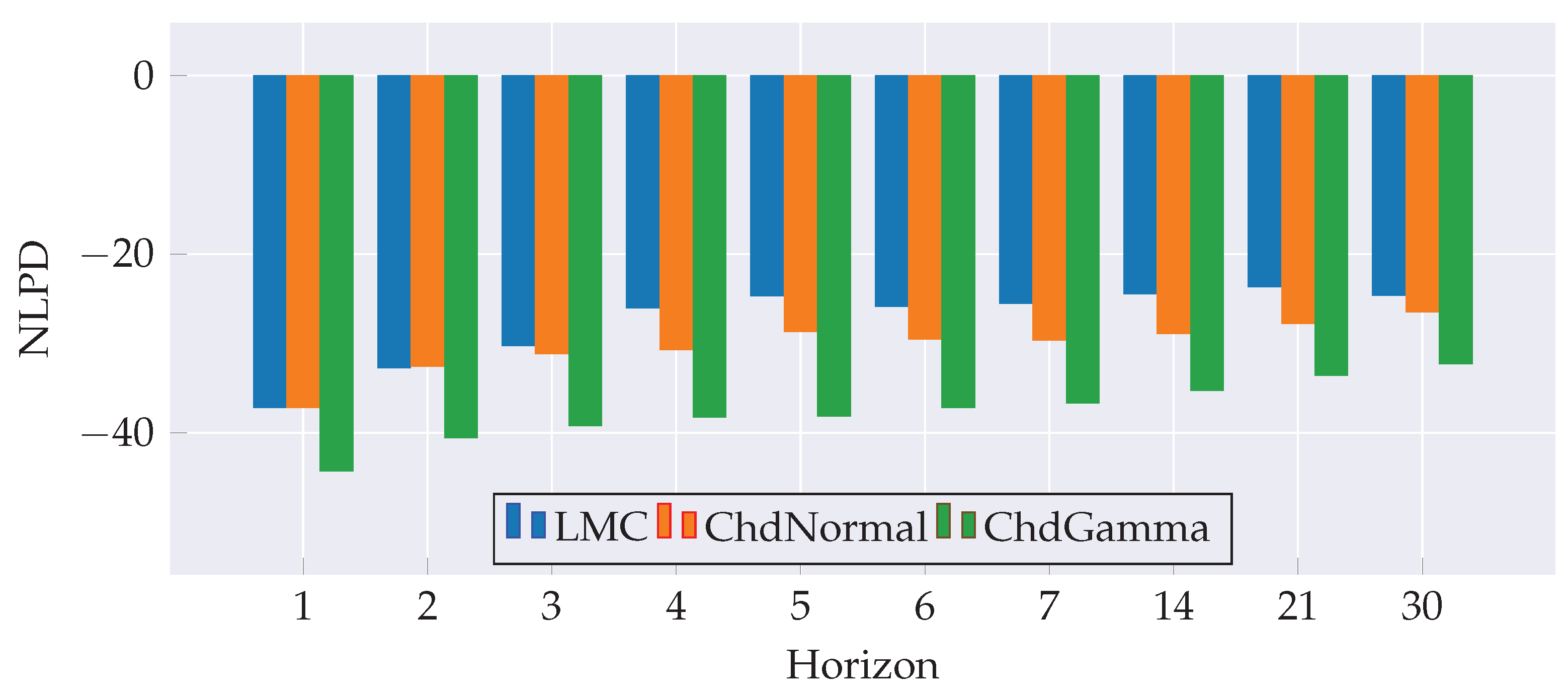

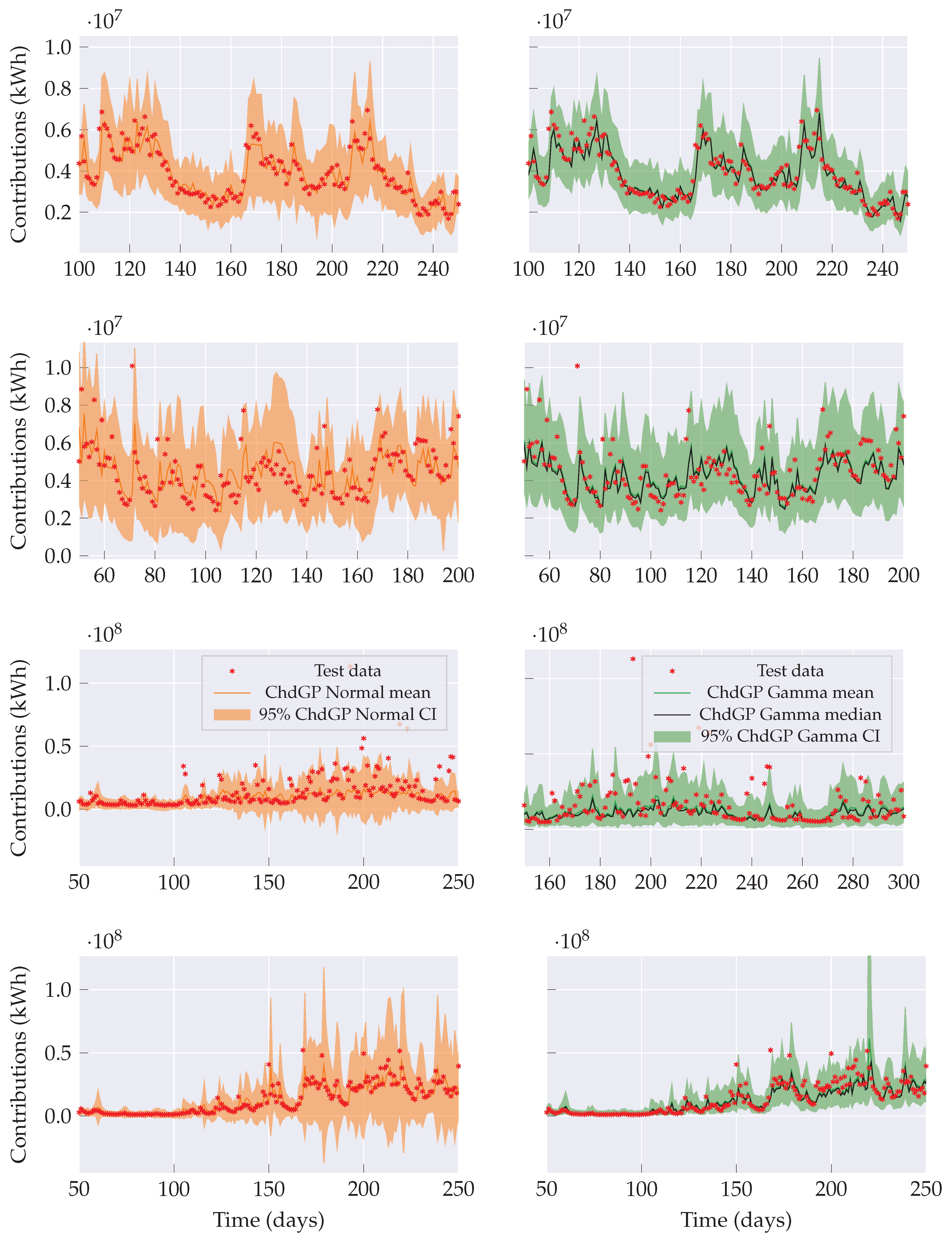

3.3. Performance Analysis

4. Concluding Remarks and Future Work

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest

References

- Hojjati-Najafabadi, A.; Mansoorianfar, M.; Liang, T.; Shahin, K.; Karimi-Maleh, H. A review on magnetic sensors for monitoring of hazardous pollutants in water resources. Science of the Total Environment 2022, 824, 153844.

- Lavers, D.A.; Ramos, M.H.; Magnusson, L.; Pechlivanidis, I.; Klein, B.; Prudhomme, C.; Arnal, L.; Crochemore, L.; Van den Hurk, B.; Weerts, A.H.; et al. A vision for hydrological prediction. Atmosphere 2020, 11, 237.

- Yang, D.; Yang, Y.; Xia, J. Hydrological cycle and water resources in a changing world: A review. Geography and Sustainability 2021, 2, 115–122.

- Morales-Marín, L.A.; Rodríguez, E.A.; Jaramillo, F. Water resources challenges in Colombia: hydrological science solutions for a sustainable future. Hydrological Sciences Journal 2026, 0, 1–20.

- Yaseen, Z.; Allawi, M.; Yousif, A.; Jaafar, O.; Hamzah, F.; El-Shafie, A. Non-tuned machine learning approach for hydrological time series forecasting. Neural Computing and Applications 2018, 30, 1479–1491.

- Kashani, M.; Ghorbani, M.; Dinpashoh, Y.; Shahmorad, S. Integration of Volterra model with artificial neural networks for rainfall-runoff simulation. Journal of Hydrology 2016, 540, 340–354.

- Cheng, M.; Fang, F.; Kinouchi, T.; Navon, I.; Pain, C. Long lead-time daily and monthly streamflow forecasting using machine learning methods. Journal of Hydrology 2020, 590, 125376.

- Sit, M.; Demiray, B.; Xiang, Z.; Ewing, G.; Sermet, Y.; Demir, I. A comprehensive review of deep learning applications in hydrology and water resources. Water Science and Technology 2020, 82, 2635–2670.

- Wang, X.; Wang, Y.; Yuan, P.; Wang, L.; Cheng, D. An adaptive daily runoff forecast model using VMD-LSTM-PSO hybrid approach. Hydrological Sciences Journal 2021, 66, 1488–1502.

- Wang, Z.; Lou, Y. Hydrological time series forecast model based on wavelet de-noising and ARIMA-LSTM. In Proceedings of the IEEE ITNEC, 2019; pp. 1697–1701.

- Sahoo, B.; Jha, R.; Singh, A.; Kumar, D. LSTM recurrent neural network for low-flow hydrological forecasting. Acta Geophysica 2019, 67, 1471–1481.

- Li, Y.; Yang, J. Hydrological time series prediction model based on attention-LSTM neural network. In Proceedings of the 2019 2nd International Conference on Machine Learning and Machine Intelligence (MLMI ’19), New York, NY, USA, 2019; pp. 21–25.

- Lakshminarayanan, B.; Pritzel, A.; Blundell, C. Simple and Scalable Predictive Uncertainty Estimation using Deep Ensembles. arXiv 2017, arXiv:1612.01474.

- Gal, Y.; Ghahramani, Z. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In Proceedings of the ICML, 2016; pp. 1050–1059.

- Quilty, J.; Adamowski, J. A stochastic wavelet-based framework for forecasting hydrological processes. Environmental Modelling and Software 2020, 130, 104718.

- Niu, W.; Feng, Z. Evaluating AI methods for streamflow forecasting. Sustainable Cities and Society 2021, 64, 102562.

- Sun, N.; Zhang, S.; Peng, T.; Zhang, N.; Zhou, J.; Zhang, H. Random Forest and Gaussian Process Regression for streamflow forecasting. Water 2022, 14, 1828.

- Yang, J.; Jakeman, A.; Fang, G.; Chen, X. Uncertainty analysis of a semi-distributed hydrologic model based on a Gaussian process emulator. Environmental Modelling and Software 2018, 101, 289–300.

- Martínez-Acosta, L.; Medrano-Barboza, J.; López-Ramos, Á.; Remolina López, J.; López-Lambraño, Á. SARIMA approach to generating synthetic monthly rainfall in the Sinú river watershed in Colombia. Atmosphere 2020, 11, 602.

- Pastrana-Cortés, J.D.; Gil-Gonzalez, J.; Álvarez Meza, A.M.; Cárdenas-Peña, D.A.; Orozco-Gutiérrez, A.A. Scalable and Interpretable Forecasting of Hydrological Time Series Based on Variational Gaussian Processes. Water 2024, 16.

- Perrin, C.; Michel, C.; Andréassian, V. Does a large number of parameters enhance model performance? Comparative assessment of common catchment model structures on 429 catchments. Journal of Hydrology 2001, 242, 275–301.

- Hensman, J.; Matthews, A.; Ghahramani, Z. Scalable Variational Gaussian Process Classification. In Proceedings of the 18th International Conference on Artificial Intelligence and Statistics (AISTATS), San Diego, CA, USA, 2015; Vol. 38, Proceedings of Machine Learning Research, pp. 351–360.

- Bruinsma, W.; Perim, E.; Tebbutt, W.; Hosking, S.; Solin, A.; Turner, R. Scalable Exact Inference in Multi-Output Gaussian Processes. In Proceedings of the 37th International Conference on Machine Learning (ICML), 2020; Vol. 119, Proceedings of Machine Learning Research, pp. 1190–1201.

- Saul, A.; Hensman, J.; Vehtari, A.; Lawrence, N. Chained Gaussian Processes. In Proceedings of the 19th International Conference on Artificial Intelligence and Statistics (AISTATS), Cadiz, Spain, 2016; Vol. 51, Proceedings of Machine Learning Research, pp. 1431–1440.

- Moreno-Muñoz, P.; Artés, A.; Álvarez, M. Heterogeneous Multi-output Gaussian Process Prediction. In Proceedings of the Advances in Neural Information Processing Systems (NeurIPS), Red Hook, NY, USA, 2018; Vol. 31, pp. 6711–6720.

- Álvarez, M.; Rosasco, L.; Lawrence, N. Kernels for Vector-Valued Functions: A Review; Now Publishers Inc.: New York, NY, USA, 2012.

- Giraldo, J.J.; Álvarez, M. A fully natural gradient scheme for improving inference of the heterogeneous multi-output Gaussian process model. IEEE Transactions on Neural Networks and Learning Systems 2021, 33, 6429–6442.

- Salimbeni, H.; Eleftheriadis, S.; Hensman, J. Natural Gradients in Practice: Non-conjugate Variational Inference in Gaussian Process Models. In Proceedings of the 21st International Conference on Artificial Intelligence and Statistics (AISTATS), Playa Blanca, Lanzarote, Spain, 2018; Vol. 84, Proceedings of Machine Learning Research, pp. 689–697.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).