Submitted: 01 April 2026

Posted: 02 April 2026


Abstract

Keywords:

I. Introduction

A. Problem Statement

B. Aim

C. Research Objectives

- Design an efficient deep learning framework for recognizing sports actions from video streams.

- Extract spatial features from video frames using a lightweight convolutional neural network architecture.

- Model temporal relationships between consecutive frames using a Transformer encoder.

- Improve prediction reliability by applying motion filtering, confidence thresholding, and temporal smoothing techniques.

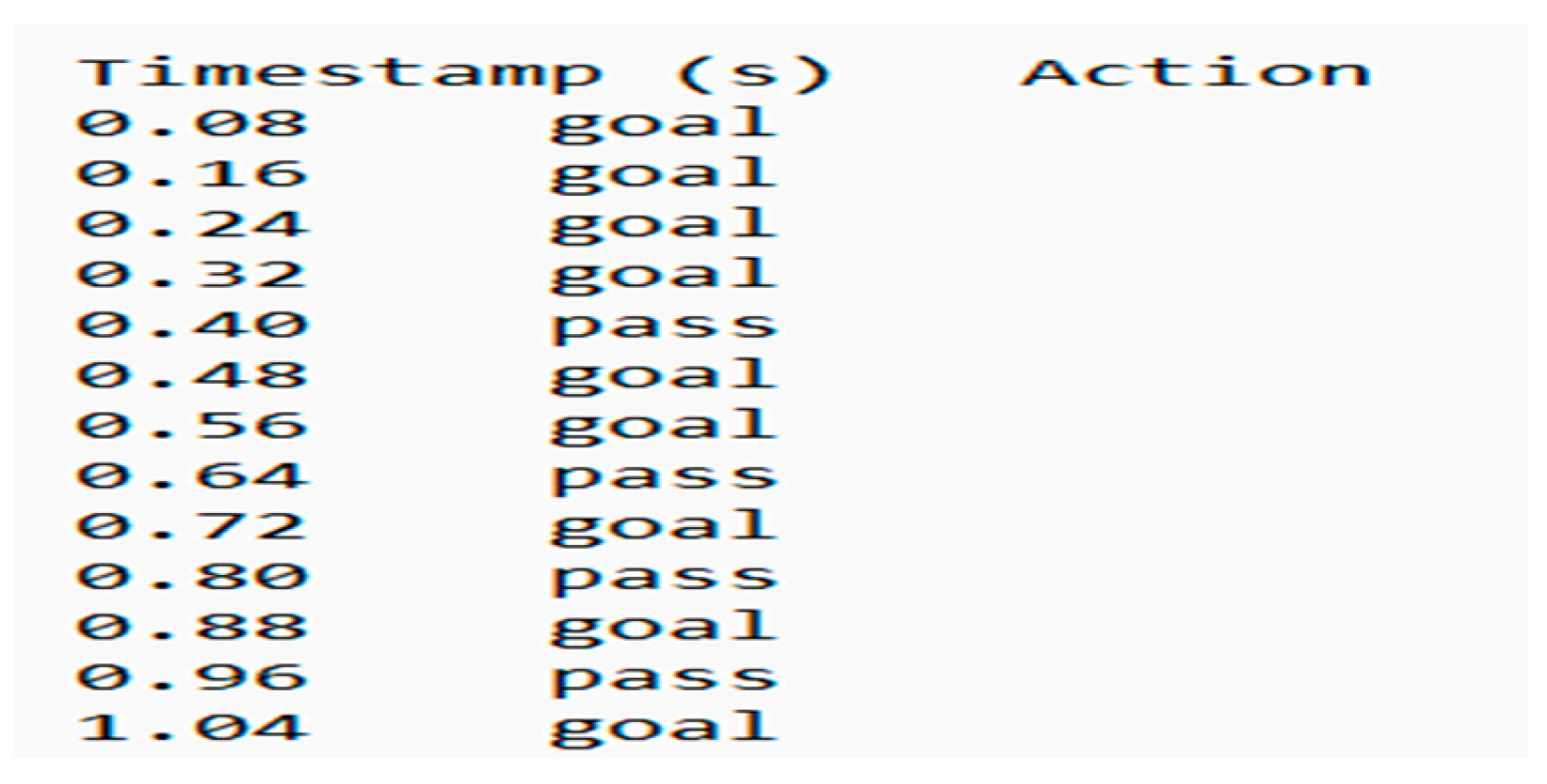

- Generate time-stamped event detection outputs with broadcast-style overlays for automated highlight generation and sports analytics.
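The objectives above name confidence thresholding and temporal smoothing without fixing their exact form. The following is a minimal Python sketch of one common arrangement, not the authors' implementation. It assumes per-frame `(label, confidence)` predictions, a hypothetical threshold of 0.6, a trailing majority vote, and a hypothetical `"background"` label for rejected frames; none of these values come from the paper.

```python
from collections import Counter, deque

BACKGROUND = "background"  # hypothetical label for frames with no confident action


def threshold(predictions, min_conf=0.6):
    """Replace low-confidence predictions with the background label."""
    return [label if conf >= min_conf else BACKGROUND for label, conf in predictions]


def smooth(labels, window=5):
    """Majority vote over a trailing window of frame labels."""
    recent = deque(maxlen=window)
    smoothed = []
    for label in labels:
        recent.append(label)
        # most_common(1) returns the label seen most often in the window
        smoothed.append(Counter(recent).most_common(1)[0][0])
    return smoothed


if __name__ == "__main__":
    preds = [("goal", 0.9), ("goal", 0.8), ("foul", 0.3), ("goal", 0.95), ("goal", 0.7)]
    print(smooth(threshold(preds), window=3))
```

In this sketch the isolated low-confidence "foul" frame is first mapped to background and then outvoted by its neighbours, which is the kind of single-frame flicker that smoothing is meant to suppress.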

D. Proposed Solution

E. Contributions of the Work

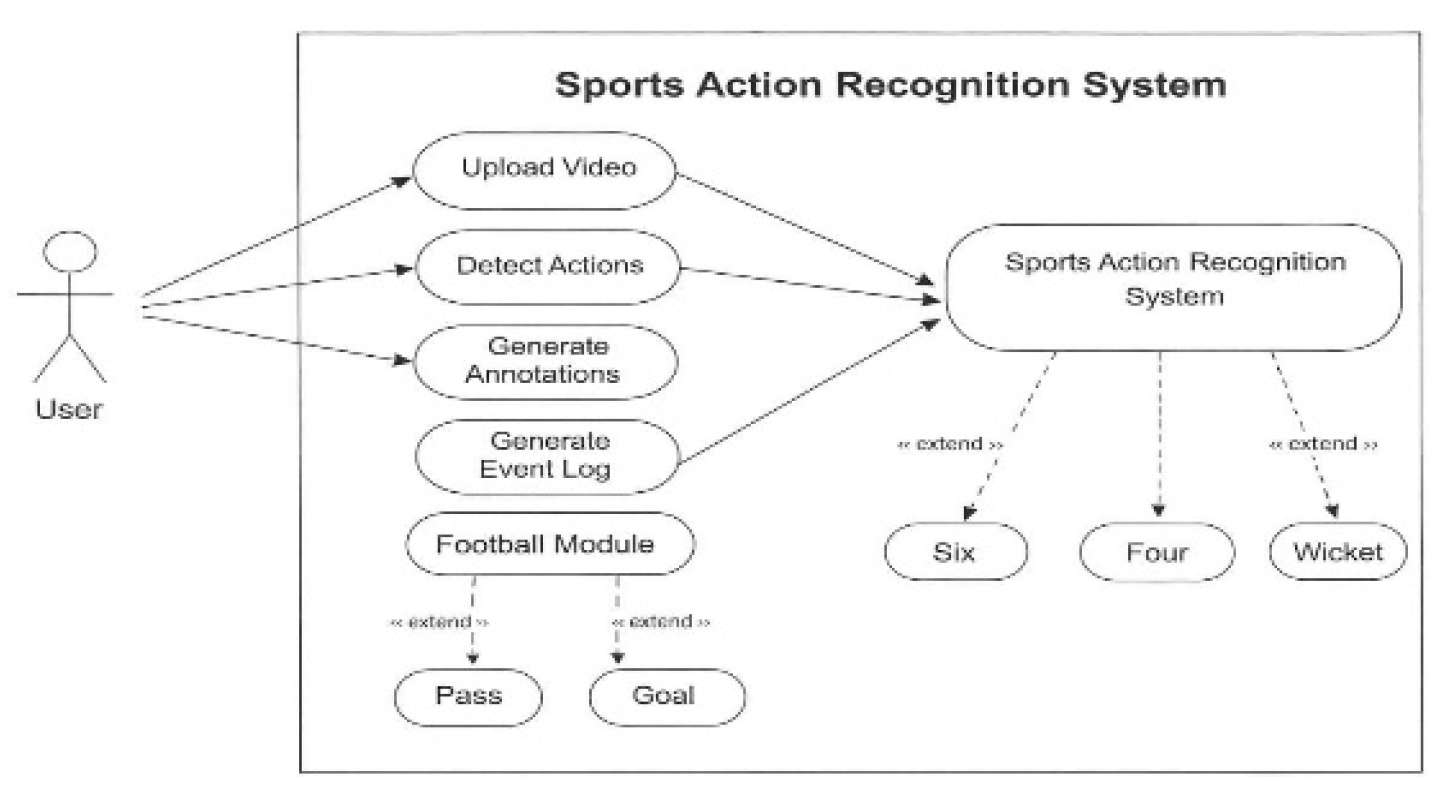

- Development of a unified multi-sport action recognition framework capable of detecting events in both football and cricket videos.

- Integration of a CNN–Transformer hybrid architecture designed for efficient real-time inference.

- Implementation of motion-based filtering and temporal smoothing techniques to reduce false predictions.

- Generation of time-stamped event logs and broadcast-style overlays to support automated highlight generation.

- Demonstration of accurate and efficient performance on low-cost computing hardware.
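The motion-based filtering and time-stamped event logs listed above could be sketched as follows. This is an illustrative sketch under stated assumptions, not the paper's code: frames are small grayscale grids given as lists of rows, motion is measured as a mean absolute pixel difference, and the names `motion_score` and `frames_to_events` are hypothetical.

```python
def motion_score(prev_frame, frame):
    """Mean absolute pixel difference between two equally sized grayscale frames."""
    total = 0
    count = 0
    for prev_row, row in zip(prev_frame, frame):
        for a, b in zip(prev_row, row):
            total += abs(a - b)
            count += 1
    return total / count if count else 0.0


def frames_to_events(labels, fps, background="background"):
    """Merge runs of identical non-background labels into (label, start_s, end_s) events."""
    events = []
    start = None  # index of the first frame of the run currently open, if any
    for i, label in enumerate(labels + [background]):  # sentinel closes the last run
        current = labels[start] if start is not None else None
        if start is not None and label != current:
            events.append((current, start / fps, i / fps))
            start = None
        if start is None and label != background:
            start = i
    return events


if __name__ == "__main__":
    labels = ["background", "goal", "goal", "background", "six", "six", "six"]
    for event in frames_to_events(labels, fps=2):
        print(event)
```

A frame would be passed to the classifier only when `motion_score` exceeds some tuned threshold, and the resulting event tuples can then be written to a log or used to position overlays.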

F. Scope of the Work

II. Literature Survey

B. Limitations

III. Related Research and Technical Background

A. Traditional and Classical Approaches

B. Deep Learning-Based Action Recognition

C. Transformer-Based and Hybrid Models

D. Research Gaps Identified

- Most existing approaches focus on offline analysis and lack real-time processing capability suitable for live sports broadcasts.

- Many models are computationally intensive and require high-end hardware, limiting their deployment on low-cost systems.

- Existing solutions are often sport-specific and do not generalize well across multiple sports and action categories.

- Limited attention has been given to false positive suppression, uncertainty handling, and broadcast-specific challenges such as scoreboard interference and non-action frames.

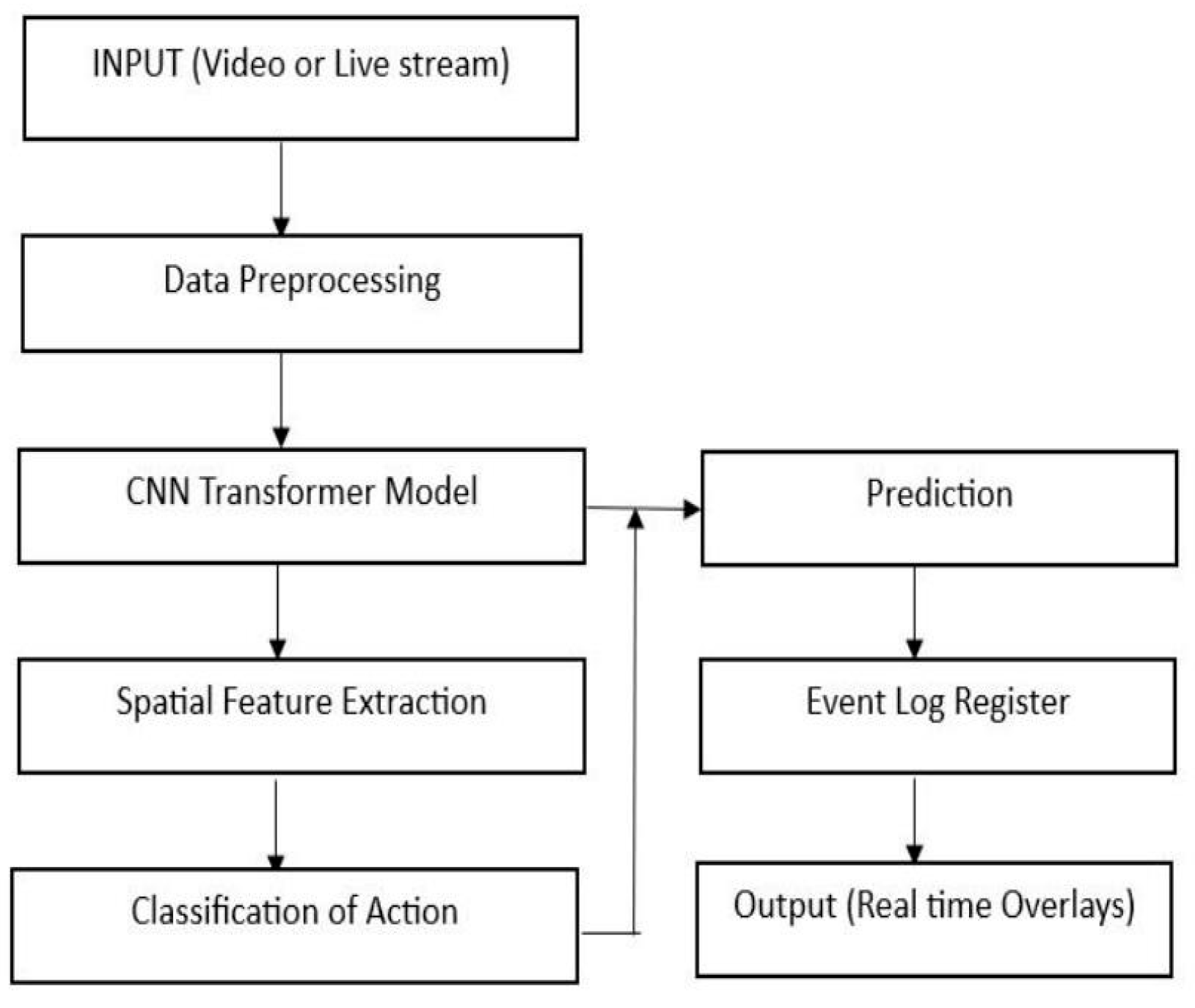

IV. Methodological Framework

A. Input Video Acquisition

B. Frame Extraction and Sampling

C. Preprocessing and Normalization

D. Spatial Feature Extraction Using CNN

E. Temporal Modeling Using Transformer Encoder

- $Q$ represents the query matrix derived from the input features

- $K$ represents the key matrix used to compute similarity scores

- $V$ represents the value matrix containing the feature representations

- $d_k$ denotes the dimension of the key vectors, used for scaling the dot-product attention
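The query, key, and value matrices and the key dimension described above are the ingredients of scaled dot-product attention. Assuming the standard form $\mathrm{softmax}(QK^{\top}/\sqrt{d_k})\,V$ is the one intended here, the computation can be sketched in plain Python. Matrices are lists of rows, and the function names are illustrative rather than taken from the paper.

```python
import math


def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Scaled dot-product attention for matrices given as lists of rows."""
    d_k = len(K[0])
    scale = math.sqrt(d_k)
    output = []
    for q in Q:
        # similarity of this query with every key, scaled by sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / scale for k in K]
        weights = softmax(scores)
        # weighted sum of the value rows, one output column at a time
        output.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return output
```

In a frame-level Transformer encoder each row of `Q`, `K`, and `V` would correspond to one frame's feature vector after a learned projection, so each output row mixes information from all frames in the clip.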

F. Classification and Decision Logic

G. Post-Processing, Event Detection, and Output Generation

H. Dataset Description

V. System Design and Implementation

A. System Architecture Design

B. Implementation Details

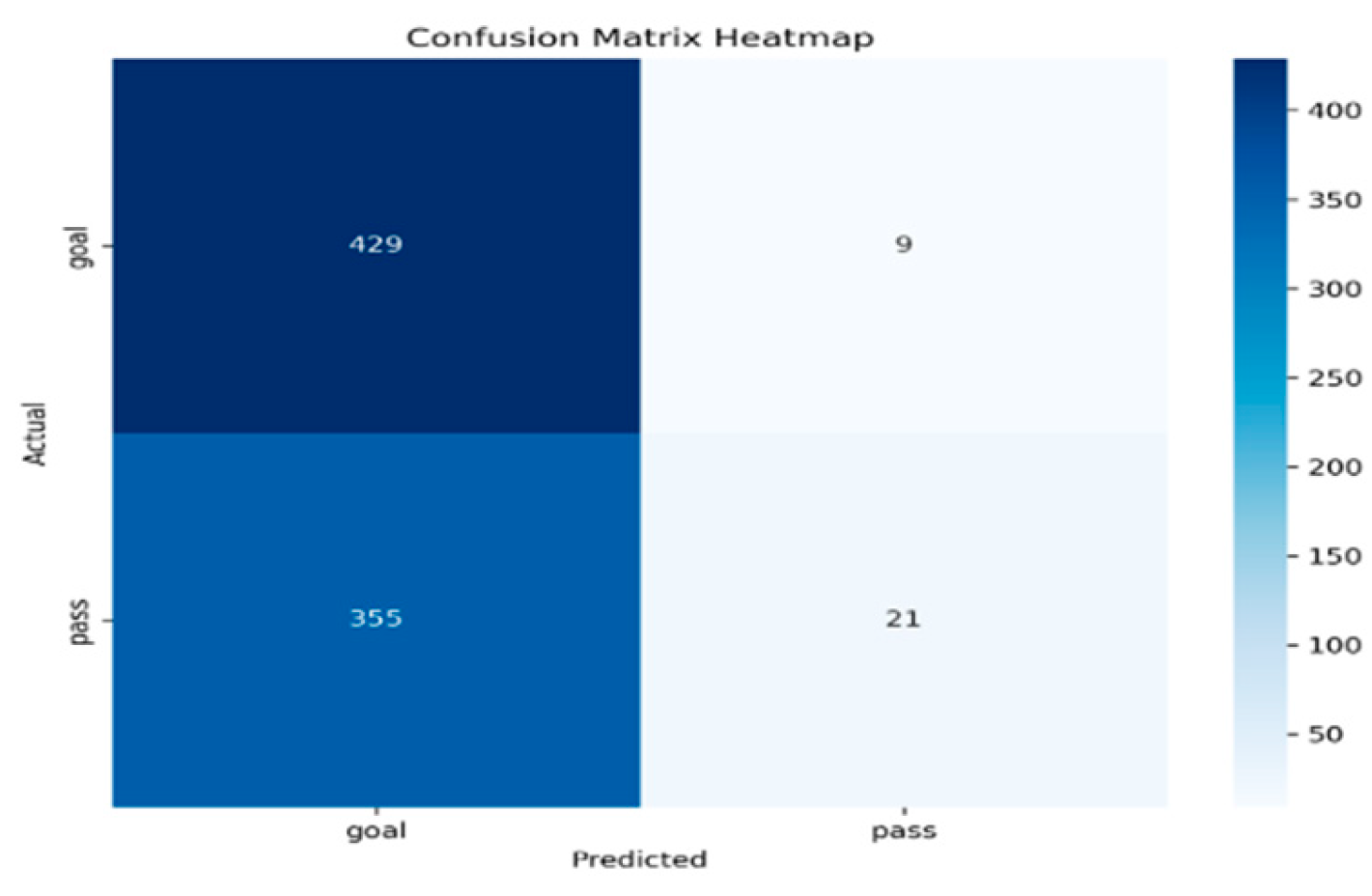

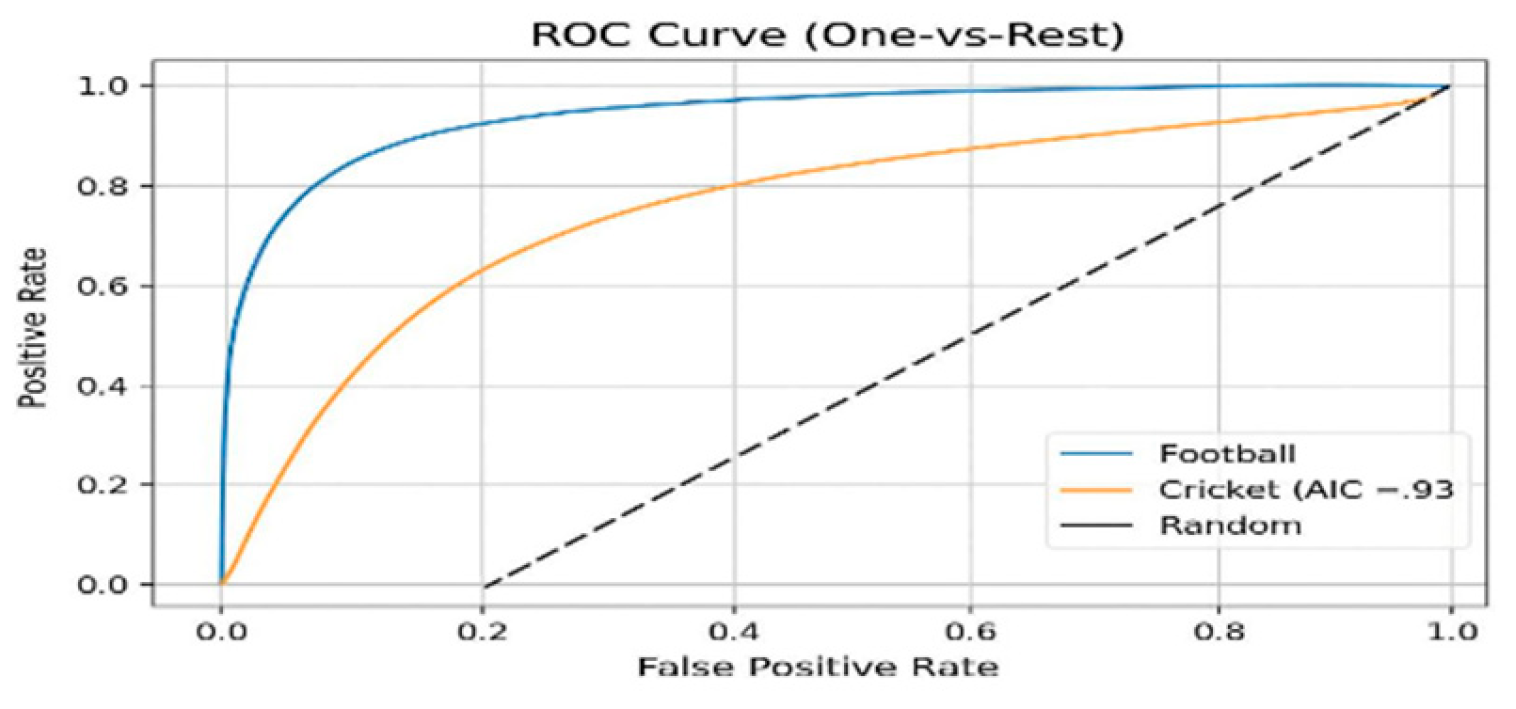

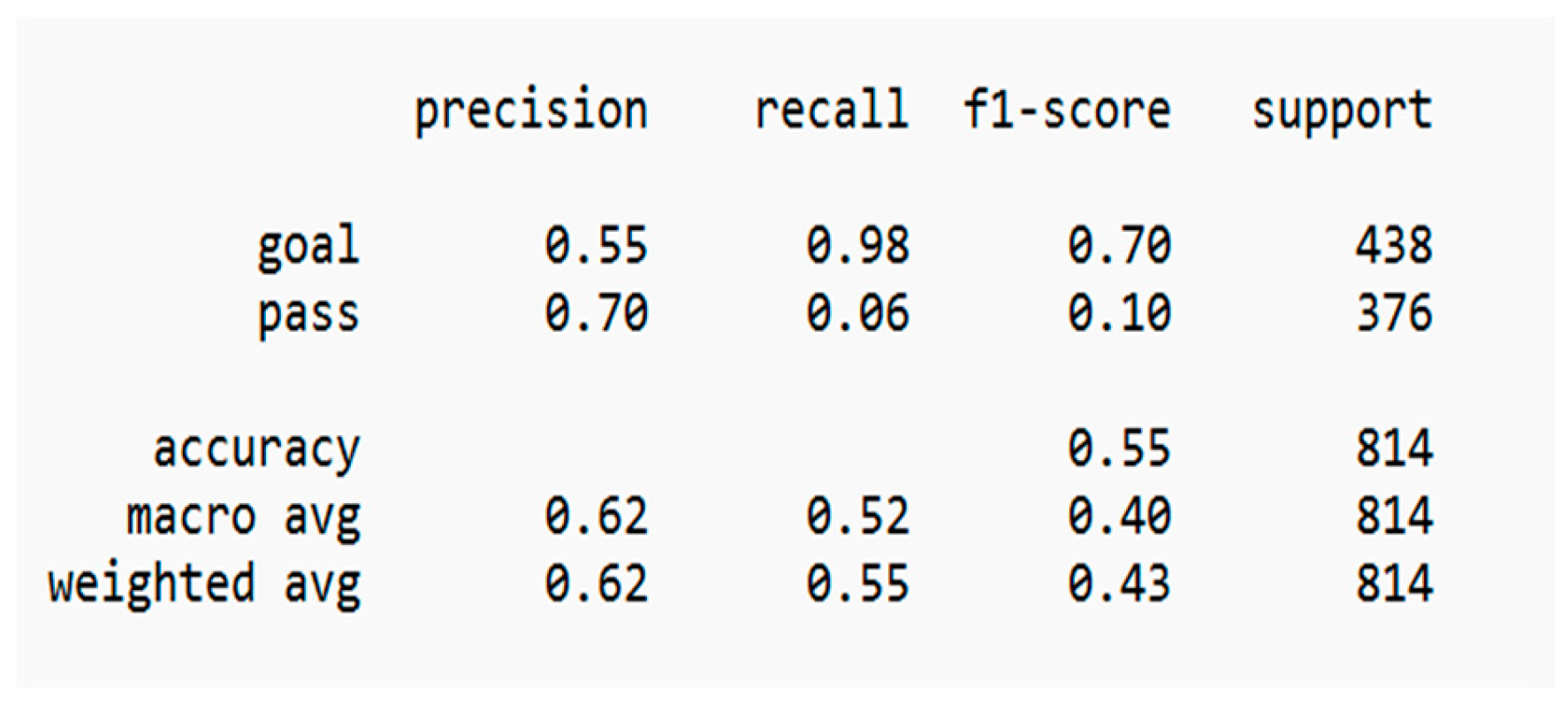

VI. Experimental Results and Analysis

A. Experimental Setup

B. Evaluation Metrics

1. Accuracy

2. Precision

3. Recall

4. F1-Score
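The four metrics listed above have standard definitions in terms of true positives, false positives, and false negatives. Assuming those standard definitions are the ones intended, the sketch below computes overall accuracy together with precision, recall, and F1 for a single class treated as positive. The function name and the example labels are illustrative only.

```python
def classification_metrics(y_true, y_pred, positive):
    """Accuracy plus precision, recall and F1 for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    accuracy = correct / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


if __name__ == "__main__":
    truth = ["goal", "goal", "foul", "foul"]
    predicted = ["goal", "foul", "goal", "foul"]
    print(classification_metrics(truth, predicted, positive="goal"))
```

For a multi-class setting such as the one described here, the per-class values would typically be averaged across classes. Whether macro or weighted averaging is appropriate depends on class balance.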

C. Quantitative Results

| Model Architecture | Accuracy (%) | Inference Speed (FPS) | Hardware Requirement |
| --- | --- | --- | --- |
| CNN + LSTM | 91.5 | 12–15 | Medium |
| 3D-CNN (C3D) | 94.2 | 5–8 | High (GPU) |
| Two-Stream CNN | 95.0 | 8–12 | High (GPU) |
| Proposed CNN–Transformer | 97.8 | 20–25 | Low (CPU) |

D. Real-Time Performance

VII. Discussion

VIII. Conclusions

References

- F. Wu, Q. Wang, J. Bian, H. Xiong, and J. Cheng, “A survey on video action recognition in sports: Datasets, methods and applications,” IEEE Access, 2022.

- Q. Duan, “Video action recognition: A survey,” Applied and Computational Engineering, vol. 6, pp. 1366–1378, 2023.

- L. Zhao, Z. Lin, R. Sun, and A. Wang, “A review of state-of-the-art methodologies and applications in action recognition,” Electronics, vol. 13, no. 23, 2024.

- H. Yin, R. O. Sinnott, and G. T. Jayaputera, “A survey of video-based human action recognition in team sports,” Artificial Intelligence Review, 2024.

- M. Mao, A. Lee, and M. Hong, “Deep learning innovations in video classification: A survey on techniques and dataset evaluations,” Electronics, 2024.

- H. P. Nguyen and B. Ribeiro, “Video action recognition collaborative learning with dynamics via PSO-ConvNet transformer,” Scientific Reports, vol. 13, 2023.

- J. Wang, L. Zuo, and C. C. Martínez, “Basketball technique action recognition using 3D convolutional neural networks,” Scientific Reports, 2024.

- T. Wang, “Deep learning-based action recognition and quantitative assessment method for sports skills,” Applied Mathematics and Nonlinear Sciences, 2024.

- S. Dass, H. B. Barua, G. Krishnasamy, and R. C. W. Phan, “ActNetFormer: Transformer-ResNet hybrid method for semi-supervised action recognition in videos,” 2024.

- C. Lai, J. Mo, H. Xia, and Y. Wang, “FACTS: Fine-grained action classification for tactical sports,” 2024.

- A. K. AlShami et al., “SMART-Vision: Survey of modern action recognition techniques in vision,” 2025.

- J. Carreira and A. Zisserman, “Quo vadis, action recognition? A new model and the Kinetics dataset,” Proc. CVPR.

- C. Feichtenhofer, A. Pinz, and R. Wildes, “Spatiotemporal residual networks for video action recognition,” IEEE Conference on Computer Vision and Pattern Recognition.

- K. Simonyan and A. Zisserman, “Two-stream convolutional networks for action recognition in videos,” Neural Information Processing Systems.

- D. Tran et al., “Learning spatiotemporal features with 3D convolutional networks,” IEEE ICCV.

- H. Wang and C. Schmid, “Action recognition with improved trajectories,” IEEE ICCV.

- A. Arnab et al., “ViViT: A video vision transformer,” IEEE International Conference on Computer Vision.

- Z. Liu et al., “Video Swin Transformer for video understanding,” IEEE CVPR, 2022.

- H. Fan et al., “Multiscale vision transformers for video recognition,” IEEE CVPR, 2022.

- Z. Wu et al., “Long-term feature banks for detailed video understanding,” IEEE CVPR.

- G. Bertasius, H. Wang, and L. Torresani, “Is space-time attention all you need for video understanding?” ICML.

- K. Soomro, A. Zamir, and M. Shah, “UCF101: A dataset of 101 human action classes,” University of Central Florida.

- W. Kay et al., “The Kinetics human action video dataset,” DeepMind.

- A. Deliège et al., “SoccerNet: A scalable dataset for action spotting in soccer videos,” IEEE Conference on Computer Vision.

- A. Cioppa et al., “SoccerNet-v2: A dataset and benchmarks for holistic understanding of broadcast soccer videos,” IEEE CVPR Workshops.

- S. Sudhakaran and O. Lanz, “Learning to detect events in videos with transformer networks,” IEEE Transactions on Multimedia.

- Y. Sun et al., “Temporal action localization using deep learning,” IEEE Transactions on Pattern Analysis and Machine Intelligence.

- M. Baccouche et al., “Sequential deep learning for human action recognition,” Pattern Recognition Letters.

- R. Girdhar et al., “Video action transformer network,” IEEE CVPR.

- X. Sun, S. Liu, and P. Niyogi, “Efficient action recognition in sports videos using deep learning,” IEEE International Conference on Multimedia and Expo.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).