Submitted:

31 March 2026

Posted:

02 April 2026


Abstract

Keywords:

1. Introduction

- detect syntax errors without the need to complete the code generation;

- execute generated code as soon as possible;

- detect semantic and runtime errors without the need to complete the code generation.

2. Materials and Methods

- Context-free grammar inference performed alongside LLM inference can detect syntax errors early [8].

- A REPL can be used to execute code line by line as it is generated.

- LLM inference should be stopped once an error occurs.

- be the vocabulary of tokens produced by the LLM,

- be the set of all finite sequences of tokens (“words”),

- be the set of all finite sequences of lexemes of the lexer of programming language p.

- is labelled S.

- If a node v is labelled and has children , then there is a production

- The leaves of T are labelled by tokens in . Reading the leaf labels from left to right gives the yield of T.
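To make the notion of a yield concrete, the following minimal sketch builds a small parse tree and reads its leaf labels from left to right. The `Node` class and the toy production `S -> NAME '=' NUM` are hypothetical names chosen for illustration; they are not taken from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A parse-tree node; a node without children is a leaf."""
    label: str
    children: list["Node"] = field(default_factory=list)


def tree_yield(node: Node) -> list[str]:
    """Return the leaf labels of the tree in left-to-right order."""
    if not node.children:
        return [node.label]
    leaves: list[str] = []
    for child in node.children:
        leaves.extend(tree_yield(child))
    return leaves


# Hypothetical toy tree for the production S -> NAME '=' NUM.
tree = Node("S", [Node("NAME", [Node("x")]), Node("="), Node("NUM", [Node("1")])])
print(tree_yield(tree))
```

The root is labelled S and the internal nodes carry nonterminal labels, so only the leaves contribute to the yield.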

Algorithm 1: RunCodeFromStream
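The idea of executing code while it is still being streamed can be sketched as follows. This is a simplified illustration under stated assumptions, not a reproduction of Algorithm 1: the function names, the `fake_llm` generator, and the statement-boundary heuristic (a new unindented line closes the previous statement) are all hypothetical. The sketch halts immediately on runtime errors, but it reports syntax errors only at the end of the stream, because it does not perform the incremental grammar-based parsing described above.

```python
CONTINUATION_KEYWORDS = {"else", "elif", "except", "finally"}


def starts_new_statement(line: str) -> bool:
    """Heuristic: an unindented, non-blank line that is not a clause keyword."""
    if not line.strip() or line[0] in " \t":
        return False
    head = line.split()[0].rstrip(":")
    return head not in CONTINUATION_KEYWORDS


def run_code_from_stream(lines, namespace):
    """Execute top-level statements as soon as they appear complete."""
    buffer = []

    def flush(final: bool) -> None:
        source = "".join(buffer)
        if not source.strip():
            buffer.clear()
            return
        try:
            code = compile(source, "<stream>", "exec")
        except SyntaxError:
            if final:
                raise  # nothing more will arrive, so this is a real error
            return  # possibly incomplete (open bracket, decorator); keep buffering
        buffer.clear()
        exec(code, namespace)  # a runtime error propagates and stops the loop

    for line in lines:
        if buffer and starts_new_statement(line):
            flush(final=False)
        buffer.append(line.rstrip("\n") + "\n")
    flush(final=True)


produced = []  # records how many lines the (fake) model had to generate


def fake_llm():
    """Hypothetical stand-in for a token stream, already split into lines."""
    for line in ["x = 1", "for i in range(2):", "    x += i",
                 "y = 1 / 0", "z = 2", "print(z)"]:
        produced.append(line)
        yield line


ns = {}
try:
    run_code_from_stream(fake_llm(), ns)
except ZeroDivisionError as err:
    print(f"halted after {len(produced)} of 6 lines: {err!r}")
```

Because the failing statement is only known to be complete once the next top-level line arrives, this sketch detects the error with one line of lookahead; the last line of the stream is never requested from the generator.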

3. Results

- The first (so-called “sync”) executes the code only after the LLM has finished the generation of the code snippet.

- The second (so-called “async”) parses and executes the code on each line of inference.

- If the generated code contains errors, the async algorithm will halt on error earlier than the sync algorithm.

- If the generated code is correct, the async algorithm will execute the code earlier (first-output time).
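The difference in first-output time between the two strategies can be illustrated with a small arithmetic model. The per-line generation and execution times below are invented for illustration, and the model assumes that generation and execution do not overlap; neither assumption comes from the benchmark.

```python
def first_output_times(gen_ms, exec_ms, k):
    """Time until line k (0-based) has been executed, for both strategies.

    gen_ms[i]  -- hypothetical time to generate line i, in milliseconds
    exec_ms[i] -- hypothetical time to execute line i, in milliseconds
    """
    executed_so_far = sum(exec_ms[: k + 1])
    # Sync: wait for the whole snippet, then execute up to line k.
    sync = sum(gen_ms) + executed_so_far
    # Async: execute each line right after it is generated.
    async_ = sum(gen_ms[: k + 1]) + executed_so_far
    return sync, async_


gen_ms = [500, 500, 500, 500]
exec_ms = [100, 100, 100, 100]
sync, async_ = first_output_times(gen_ms, exec_ms, k=1)
print(sync, async_)  # 2200 1200
```

Under this model the total time to run all lines is identical for both strategies, so any gain shows up only in the time to first output or when an error lets the async strategy stop generation early.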


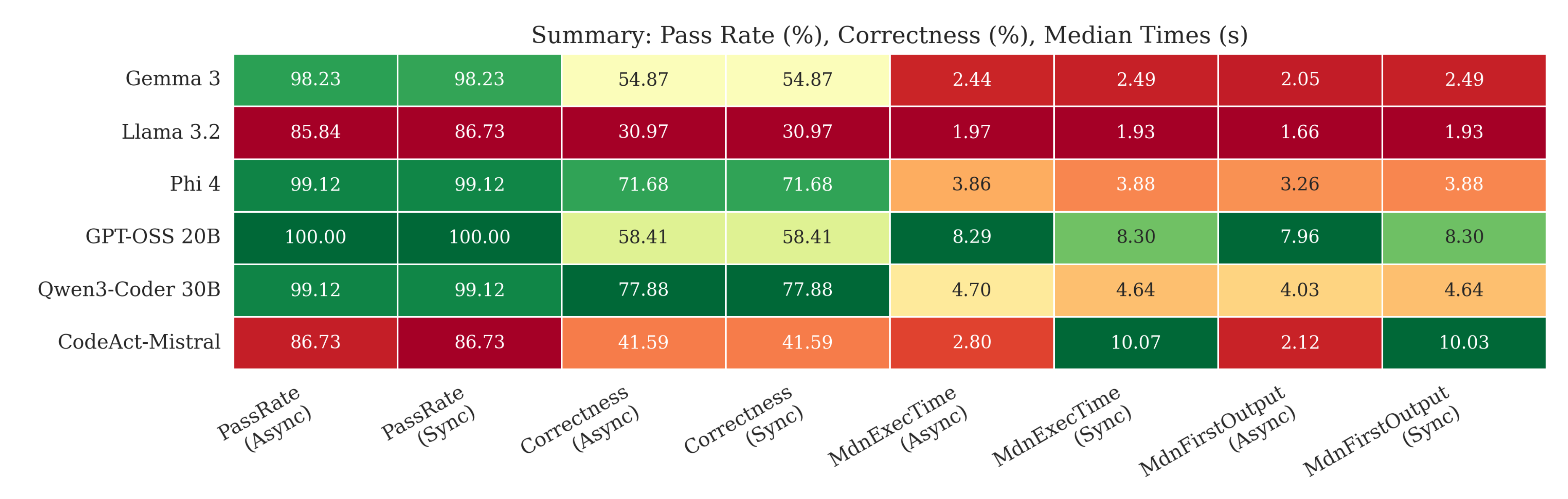

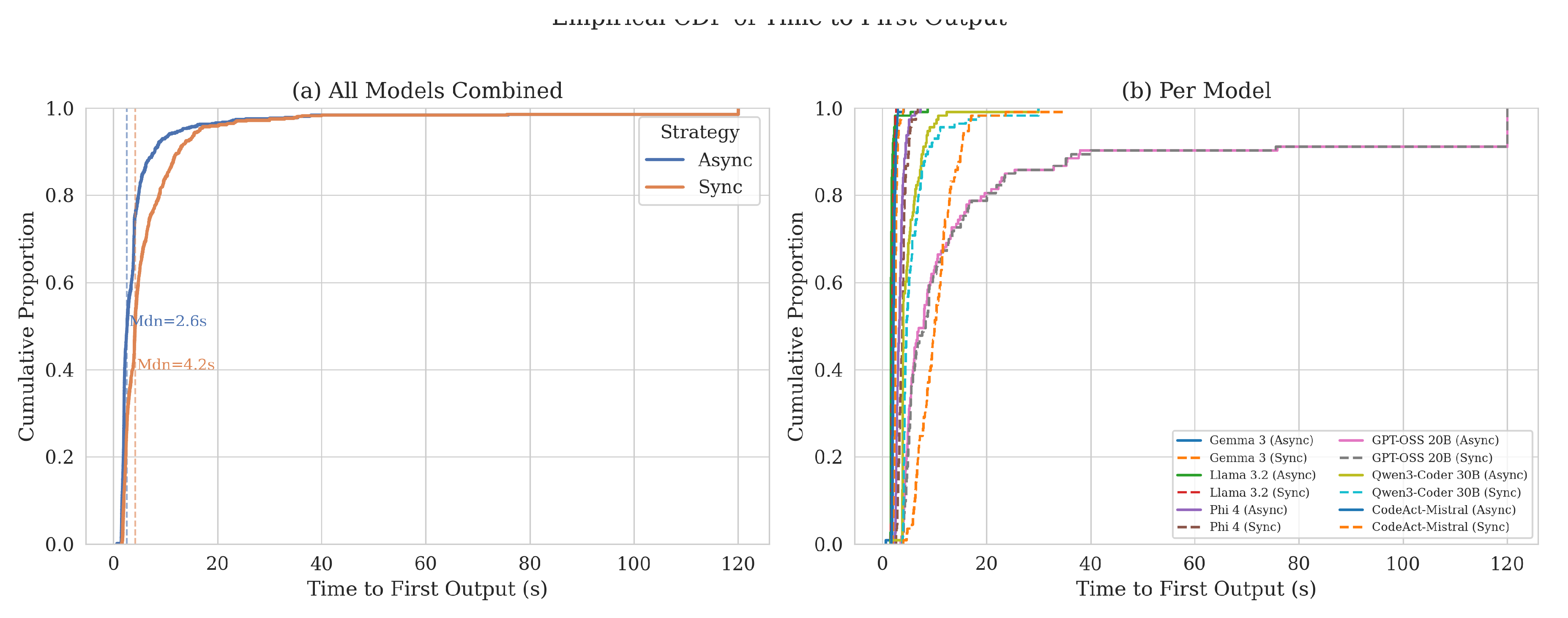

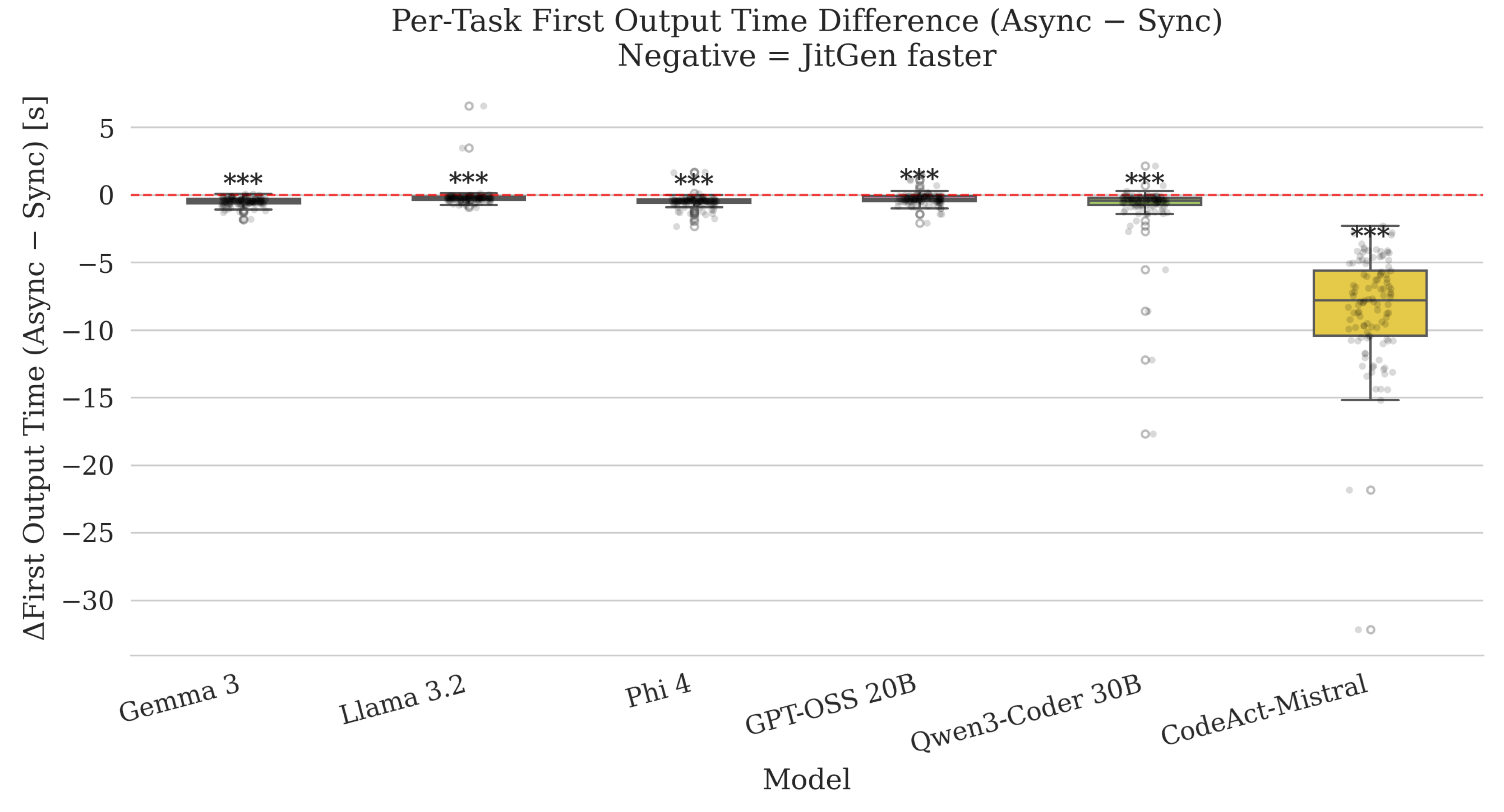

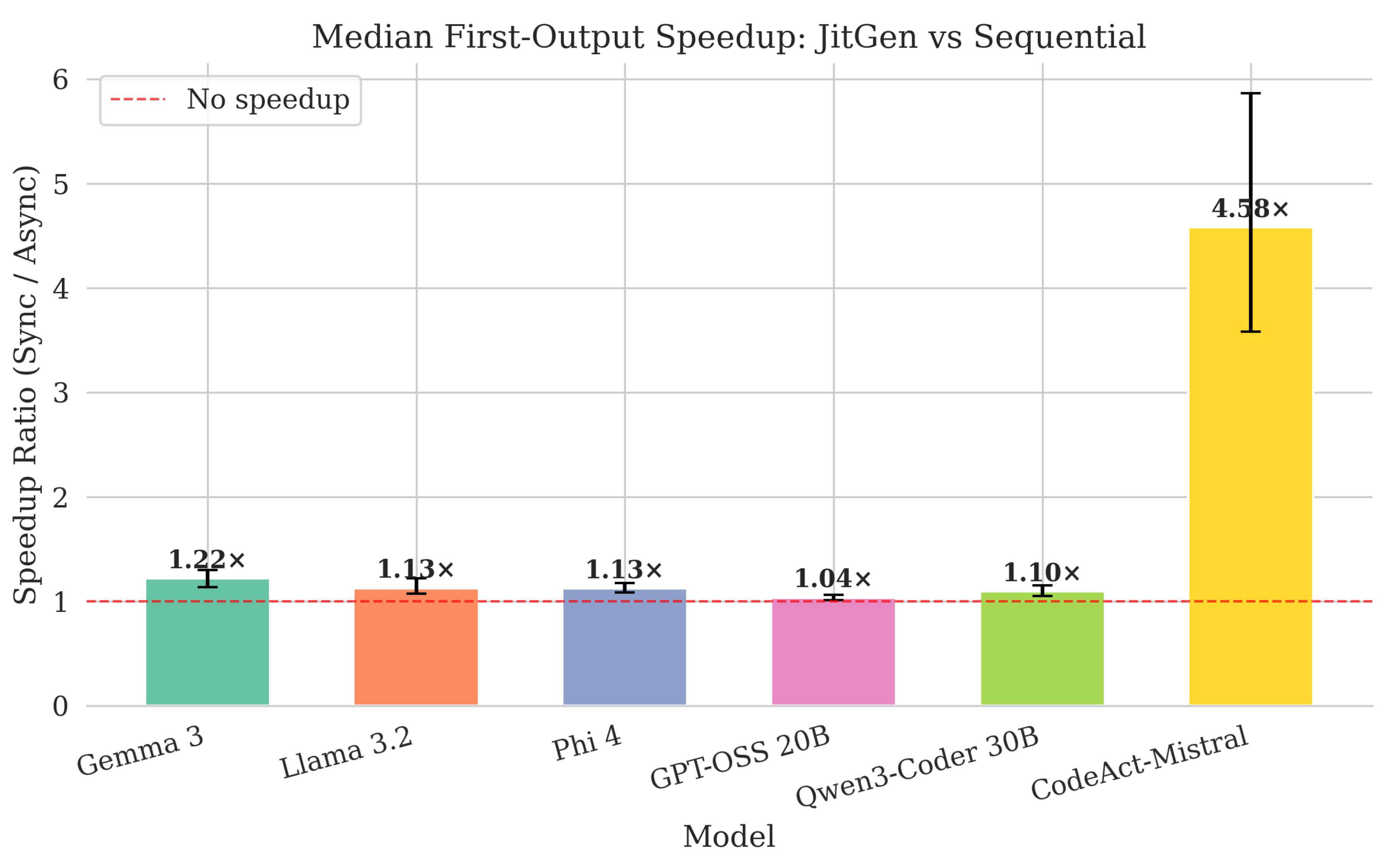

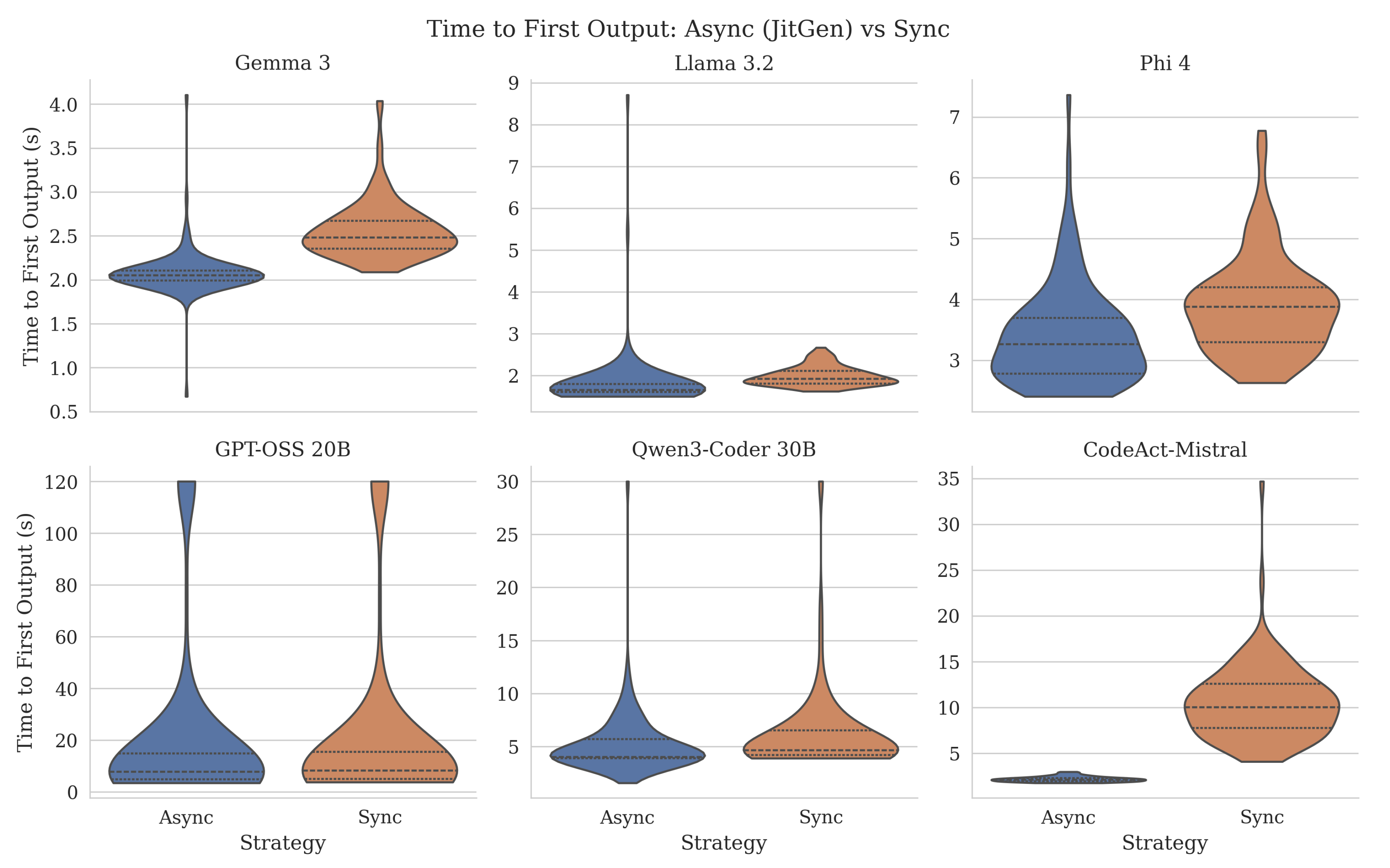

- Time to first output is substantially lower under async (JitGen) for every model; paired Wilcoxon tests remain significant after Bonferroni correction (Table A1).


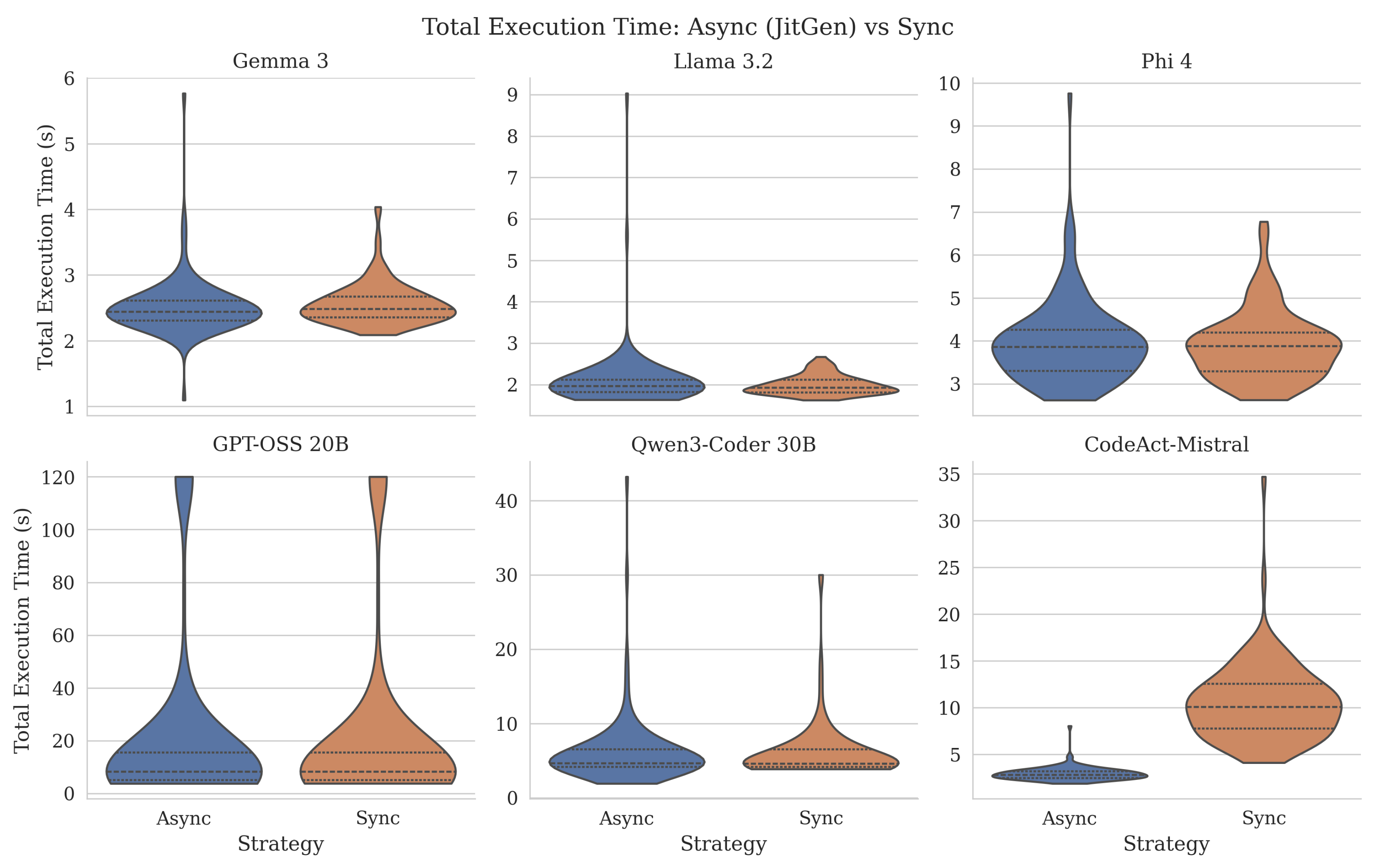

- Total execution time shows the largest async advantage for CodeAct-Mistral (a median of 2.802 under async versus 10.074 under sync, roughly 3.6 times lower), consistent with more top-level, line-by-line executable script structure [14]. For GPT-OSS 20B and Qwen3-Coder 30B, median total times are nearly unchanged between strategies, and the differences are not significant after correction. First-output gains nevertheless remain clear, which matches a setting where much of the stream is shared reasoning before runnable code appears [15,16].


- Gemma 3 and Phi 4 show significant paired differences in total execution time (Table A1). Llama 3.2 does not reach significance for total time after correction, despite faster first output under async.


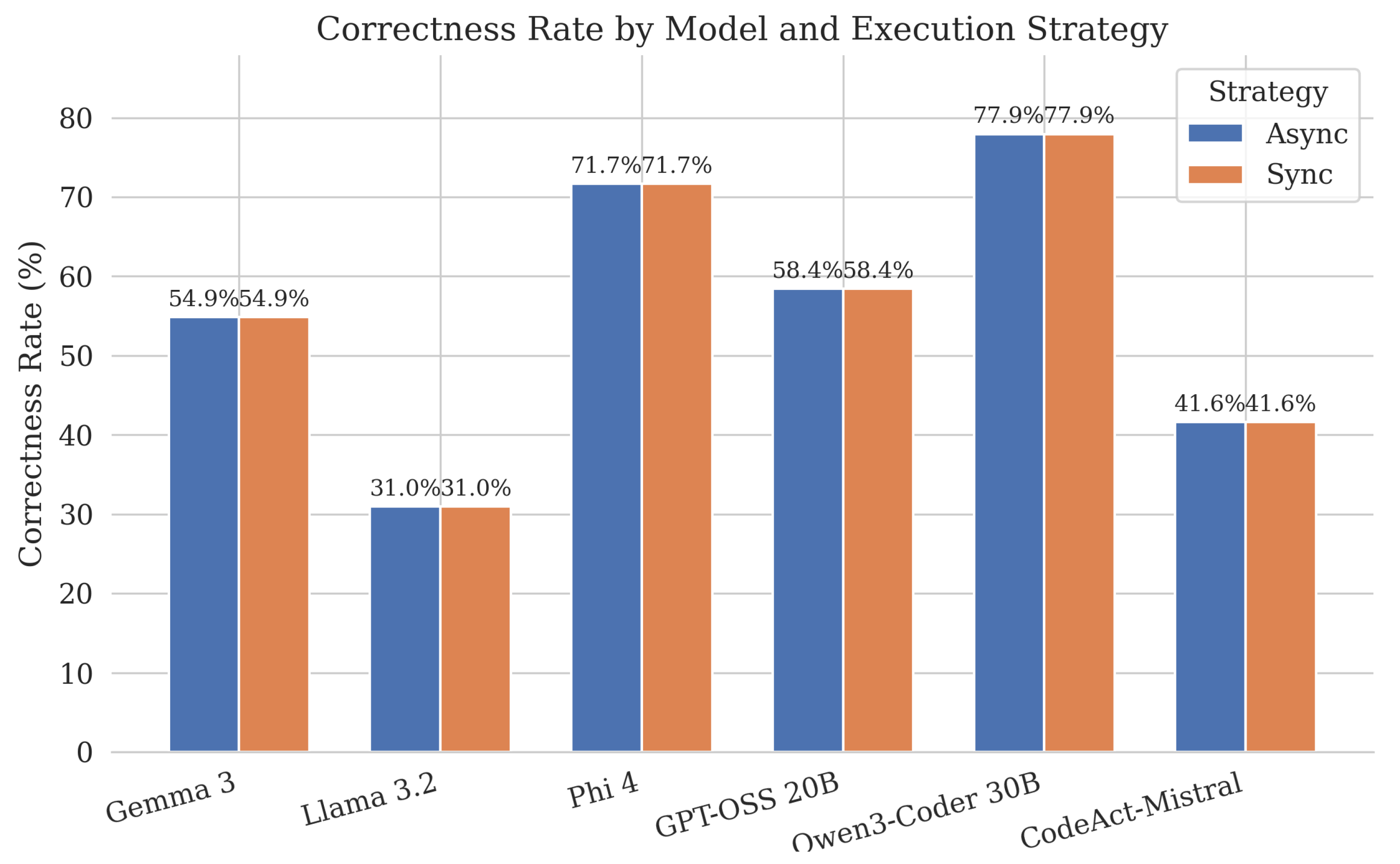

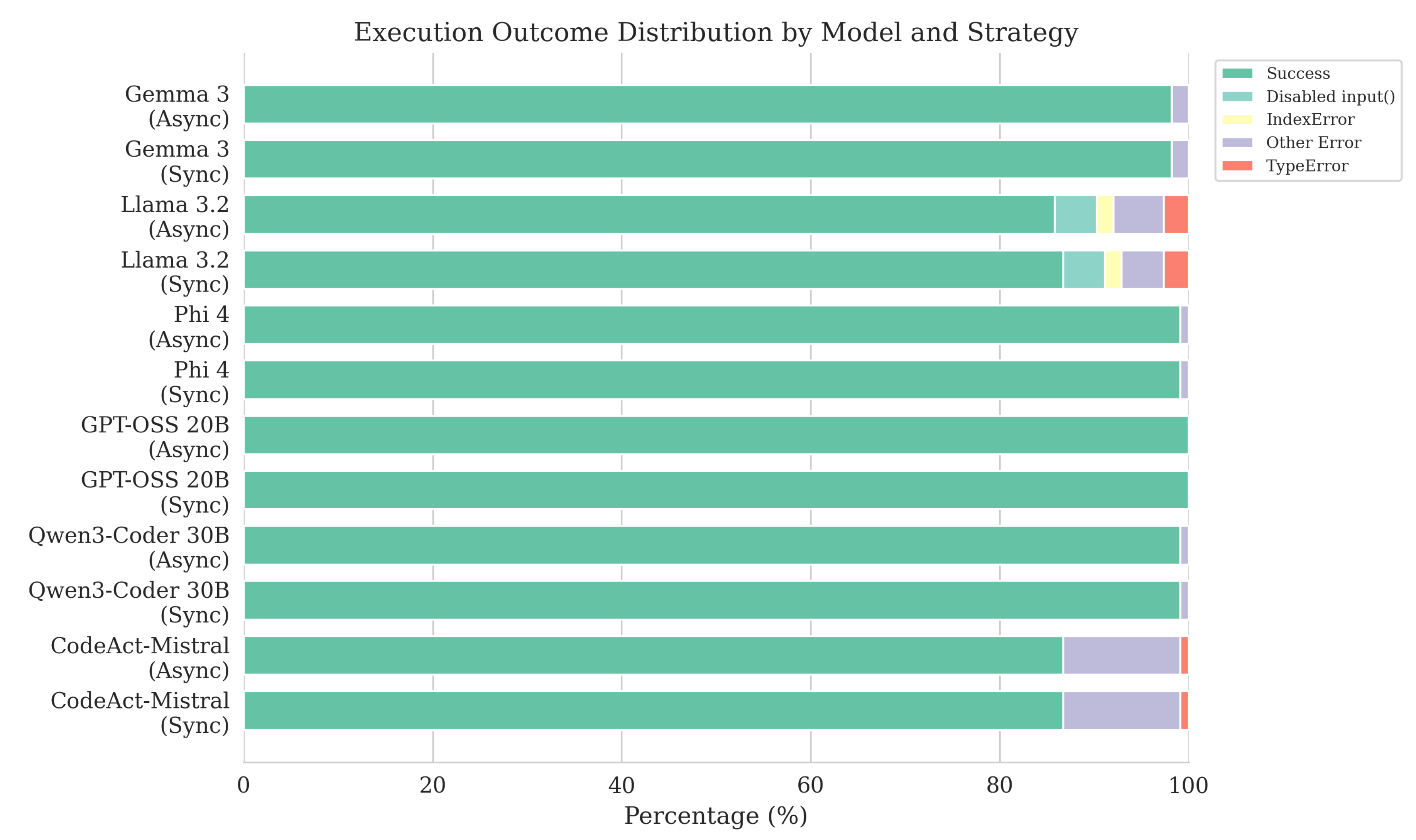

- Correctness and timeout rates match between async and sync for each model; pass rates match for five of six models, with Llama 3.2 differing by less than one percentage point (85.84% vs 86.73%; Table A2).

4. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Informed Consent Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| DFA | Deterministic Finite Automaton |
| LLM | Large Language Model |
| REPL | Read-Eval-Print Loop |

Appendix A.

Appendix A.1. Dataset

Appendix A.2. Extended Benchmark Figures

Appendix A.3. Supplementary Tables

| Model | Metric | N | Mean | Med. | W | p | p (Bonferroni) | r | Sig. |
|---|---|---|---|---|---|---|---|---|---|
| Gemma 3 | Exec. time | 113 | | | 803 | | | 0.751 | Yes |
| Gemma 3 | First output | 113 | | | 8 | | | 0.9975 | Yes |
| Llama 3.2 | Exec. time | 113 | | | 2222 | 0.00423 | 0.0507 | 0.310 | No |
| Llama 3.2 | First output | 113 | | | 248 | | | 0.923 | Yes |
| Phi 4 | Exec. time | 113 | | | 1715 | | | 0.468 | Yes |
| Phi 4 | First output | 113 | | | 221 | | | 0.931 | Yes |
| GPT-OSS 20B | Exec. time | 113 | | | 2763 | 0.190 | 1.000 | 0.142 | No |
| GPT-OSS 20B | First output | 113 | | | 653 | | | 0.797 | Yes |
| Qwen3-Coder 30B | Exec. time | 113 | | | 2792 | 0.220 | 1.000 | 0.133 | No |
| Qwen3-Coder 30B | First output | 113 | | | 225 | | | 0.930 | Yes |
| CodeAct-Mistral | Exec. time | 113 | | | 0 | | | 1.000 | Yes |
| CodeAct-Mistral | First output | 113 | | | 0 | | | 1.000 | Yes |
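The relationship between the W, p, and r columns can be checked with a few lines of arithmetic. The sketch below assumes that W is the smaller of the two signed-rank sums, that all 113 pairs are ranked, that r is the matched-pairs rank-biserial correlation from the simple difference formula [13], and that the Bonferroni correction [12] is applied over 12 tests (six models, two metrics). These assumptions are inferred from the tabulated values rather than stated in the text.

```python
def rank_biserial(w: float, n: int) -> float:
    """Matched-pairs rank-biserial r from the smaller signed-rank sum W."""
    total_rank_sum = n * (n + 1) / 2
    return 1 - 2 * w / total_rank_sum


def bonferroni(p: float, m: int) -> float:
    """Bonferroni-adjusted p-value for m tests, capped at 1."""
    return min(1.0, p * m)


N_PAIRS = 113   # tasks per model, from the table
N_TESTS = 12    # assumption: six models times two metrics

# Effect sizes recomputed from W for two rows of the table.
print(round(rank_biserial(803, N_PAIRS), 3))    # Gemma 3, exec. time -> 0.751
print(round(rank_biserial(2222, N_PAIRS), 3))   # Llama 3.2, exec. time -> 0.31

# Llama 3.2 exec. time: raw p below 0.05, adjusted p just above it.
adjusted = bonferroni(0.00423, N_TESTS)
print(adjusted > 0.05)                          # True
```

Multiplying the rounded raw value 0.00423 by 12 gives about 0.0508, slightly above the tabulated 0.0507; the gap is presumably due to rounding of the raw p-value. Either way the adjusted value exceeds 0.05, which is why the Llama 3.2 row is marked as not significant.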

| Model | Algo. | N | Pass (%) | Correct (%) | Timeout (%) |
|---|---|---|---|---|---|
| CodeAct-Mistral | Async | 113 | 86.73 | 41.59 | 0.0 |
| CodeAct-Mistral | Sync | 113 | 86.73 | 41.59 | 0.0 |
| GPT-OSS 20B | Async | 113 | 100.00 | 58.41 | 0.0 |
| GPT-OSS 20B | Sync | 113 | 100.00 | 58.41 | 0.0 |
| Gemma 3 | Async | 113 | 98.23 | 54.87 | 0.0 |
| Gemma 3 | Sync | 113 | 98.23 | 54.87 | 0.0 |
| Llama 3.2 | Async | 113 | 85.84 | 30.97 | 0.0 |
| Llama 3.2 | Sync | 113 | 86.73 | 30.97 | 0.0 |
| Phi 4 | Async | 113 | 99.12 | 71.68 | 0.0 |
| Phi 4 | Sync | 113 | 99.12 | 71.68 | 0.0 |
| Qwen3-Coder 30B | Async | 113 | 99.12 | 77.88 | 0.0 |
| Qwen3-Coder 30B | Sync | 113 | 99.12 | 77.88 | 0.0 |

| Model | Algo. | No input() | Index err. | Other err. | Success | Type err. |
|---|---|---|---|---|---|---|
| CodeAct-Mistral | Async | 0 | 0 | 14 | 98 | 1 |
| CodeAct-Mistral | Sync | 0 | 0 | 14 | 98 | 1 |
| GPT-OSS 20B | Async | 0 | 0 | 0 | 113 | 0 |
| GPT-OSS 20B | Sync | 0 | 0 | 0 | 113 | 0 |
| Gemma 3 | Async | 0 | 0 | 2 | 111 | 0 |
| Gemma 3 | Sync | 0 | 0 | 2 | 111 | 0 |
| Llama 3.2 | Async | 5 | 2 | 6 | 97 | 3 |
| Llama 3.2 | Sync | 5 | 2 | 5 | 98 | 3 |
| Phi 4 | Async | 0 | 0 | 1 | 112 | 0 |
| Phi 4 | Sync | 0 | 0 | 1 | 112 | 0 |
| Qwen3-Coder 30B | Async | 0 | 0 | 1 | 112 | 0 |
| Qwen3-Coder 30B | Sync | 0 | 0 | 1 | 112 | 0 |
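The outcome counts above can be converted into pass rates with simple arithmetic. The sketch below assumes that the pass rate is the share of runs in the Success column; this reading is consistent with the reported percentages but is an inference. The dictionary layout and the function name are illustrative only.

```python
# Outcome counts for Llama 3.2, copied from the table above.
OUTCOMES = {
    "Async": {"No input()": 5, "Index err.": 2, "Other err.": 6,
              "Success": 97, "Type err.": 3},
    "Sync": {"No input()": 5, "Index err.": 2, "Other err.": 5,
             "Success": 98, "Type err.": 3},
}


def pass_rate(outcomes: dict[str, int]) -> float:
    """Percentage of runs that finished successfully, rounded to 2 decimals."""
    total = sum(outcomes.values())
    return round(100 * outcomes["Success"] / total, 2)


for algo, outcomes in OUTCOMES.items():
    print(algo, sum(outcomes.values()), pass_rate(outcomes))
# Async 113 85.84
# Sync 113 86.73
```

The single run that moves from Success to Other err. under async accounts for the whole difference of less than one percentage point between the two strategies for this model.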

Appendix A.4. Benchmark Prompts

References

1. Li, R.; Allal, L.B.; Zi, Y.; Muennighoff, N.; Kocetkov, D.; Mou, C.; Marone, M.; Akiki, C.; Li, J.; Chim, J.; et al. StarCoder: may the source be with you! arXiv 2023, arXiv:2305.06161.
2. Islam, M.A.; Ali, M.E.; Parvez, M.R. MapCoder: Multi-Agent Code Generation for Competitive Problem Solving. arXiv 2024, arXiv:2405.11403.
3. Zhong, L.; Wang, Z.; Shang, J. Debug like a Human: A Large Language Model Debugger via Verifying Runtime Execution Step-by-step. arXiv 2024, arXiv:2402.16906.
4. Xu, S.; Li, Z.; Mei, K.; Zhang, Y. AIOS Compiler: LLM as Interpreter for Natural Language Programming and Flow Programming of AI Agents. arXiv 2024, arXiv:2405.06907.
5. Hassan, A.E.; Oliva, G.A.; Lin, D.; Chen, B.; Jiang, Z.M. Towards AI-Native Software Engineering (SE 3.0): A Vision and a Challenge Roadmap. arXiv 2024, arXiv:2410.06107.
6. Larbi, M.; Akli, A.; Papadakis, M.; Bouyousfi, R.; Cordy, M.; Sarro, F.; Traon, Y.L. When Prompts Go Wrong: Evaluating Code Model Robustness to Ambiguous, Contradictory, and Incomplete Task Descriptions. arXiv 2025, arXiv:2507.20439.
7. Dong, Y.; Liu, Y.; Jiang, X.; Gu, B.; Jin, Z.; Li, G. Rethinking Repetition Problems of LLMs in Code Generation. In Proceedings of the 63rd Annual Meeting of the Association for Computational Linguistics; Che, W., Nabende, J., Shutova, E., Pilehvar, M.T., Eds.; Vienna, Austria, 2025; Volume 1, pp. 965–985.
8. Ugare, S.; Suresh, T.; Kang, H.; Misailovic, S.; Singh, G. SynCode: LLM Generation with Grammar Augmentation. arXiv 2024, arXiv:2403.01632.
9. Austin, J.; Odena, A.; Nye, M.; Bosma, M.; Michalewski, H.; Dohan, D.; Jiang, E.; Cai, C.; Terry, M.; Le, Q.; et al. Program Synthesis with Large Language Models. arXiv 2021, arXiv:2108.07732.
10. Wilcoxon, F. Individual Comparisons by Ranking Methods. Biometrics Bulletin 1945, 1, 80–83.
11. Pratt, J.W. Remarks on Zeros and Ties in the Wilcoxon Signed Rank Procedures. Journal of the American Statistical Association 1959, 54, 655–667.
12. Bonferroni, C. Teoria statistica delle classi e calcolo delle probabilità. Pubblicazioni del R Istituto Superiore di Scienze Economiche e Commerciali di Firenze 1936, 8, 3–62.
13. Kerby, D.S. The Simple Difference Formula: An Approach to Teaching Nonparametric Correlation. Comprehensive Psychology 2014, 3, 11.IT.3.1.
14. Wang, X.; Chen, Y.; Yuan, L.; Zhang, Y.; Li, Y.; Peng, H.; Ji, H. Executable Code Actions Elicit Better LLM Agents. arXiv 2024, arXiv:2402.01030.
15. OpenAI; Agarwal, S.; Ahmad, L.; Ai, J.; Altman, S.; Applebaum, A.; Arbus, E.; Arora, R.K.; Bai, Y.; et al. gpt-oss-120b & gpt-oss-20b Model Card. arXiv 2025, arXiv:2508.10925.
16. Yang, A.; Li, A.; Yang, B.; Zhang, B.; Hui, B.; Zheng, B.; Yu, B.; Gao, C.; Huang, C.; Lv, C.; et al. Qwen3 Technical Report. arXiv 2025, arXiv:2505.09388.

| Model | Algo. | N | Exec. time mean | Exec. time med. | Exec. time std | First output mean | First output med. | First output std |
|---|---|---|---|---|---|---|---|---|
| CodeAct-Mistral | Async | 113 | 2.926 | 2.802 | 0.719 | 2.163 | 2.117 | 0.286 |
| CodeAct-Mistral | Sync | 113 | 10.490 | 10.074 | 4.084 | 10.466 | 10.027 | 4.085 |
| GPT-OSS 20B | Async | 113 | 20.523 | 8.295 | 32.635 | 20.179 | 7.958 | 32.730 |
| GPT-OSS 20B | Sync | 113 | 20.464 | 8.301 | 32.636 | 20.464 | 8.300 | 32.636 |
| Gemma 3 | Async | 113 | 2.516 | 2.442 | 0.444 | 2.081 | 2.055 | 0.273 |
| Gemma 3 | Sync | 113 | 2.565 | 2.486 | 0.327 | 2.565 | 2.485 | 0.327 |
| Llama 3.2 | Async | 113 | 2.101 | 1.969 | 0.778 | 1.821 | 1.659 | 0.765 |
| Llama 3.2 | Sync | 113 | 1.992 | 1.930 | 0.231 | 1.992 | 1.930 | 0.231 |
| Phi 4 | Async | 113 | 3.969 | 3.864 | 0.983 | 3.395 | 3.263 | 0.823 |
| Phi 4 | Sync | 113 | 3.886 | 3.884 | 0.805 | 3.886 | 3.884 | 0.805 |
| Qwen3-Coder 30B | Async | 113 | 6.232 | 4.696 | 4.852 | 5.232 | 4.027 | 2.967 |
| Qwen3-Coder 30B | Sync | 113 | 6.100 | 4.642 | 4.019 | 6.100 | 4.642 | 4.019 |
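As a reading aid for the summary statistics, the sketch below computes sync-to-async ratios of the medians for three of the models. The values are copied from the table above; the ratio itself is an illustrative summary chosen here, not a statistic reported by the authors.

```python
# (async median, sync median) pairs copied from the table above.
MEDIANS = {
    "CodeAct-Mistral": {"exec": (2.802, 10.074), "first": (2.117, 10.027)},
    "GPT-OSS 20B": {"exec": (8.295, 8.301), "first": (7.958, 8.300)},
    "Qwen3-Coder 30B": {"exec": (4.696, 4.642), "first": (4.027, 4.642)},
}


def median_ratios(medians):
    """Return sync/async ratios; values above 1 mean async is faster."""
    return {
        model: {metric: sync / async_ for metric, (async_, sync) in rows.items()}
        for model, rows in medians.items()
    }


ratios = median_ratios(MEDIANS)
for model, row in ratios.items():
    print(f"{model}: exec x{row['exec']:.2f}, first output x{row['first']:.2f}")
```

For CodeAct-Mistral the median execution time ratio is about 3.6, while for GPT-OSS 20B and Qwen3-Coder 30B it stays close to 1 and only the first-output ratio exceeds 1.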

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).