Submitted: 01 April 2026

Posted: 02 April 2026


Abstract

Keywords:

1. Introduction

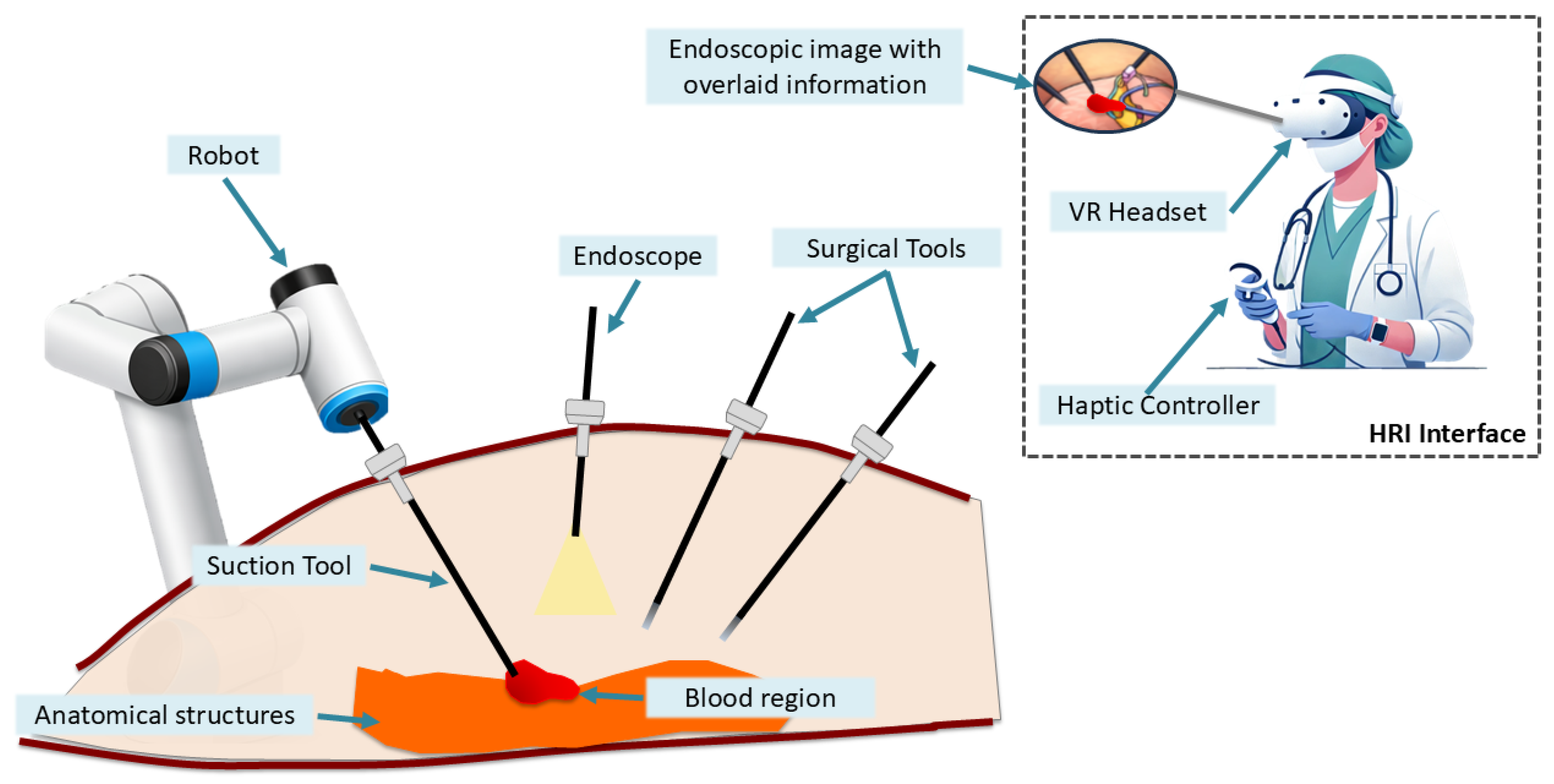

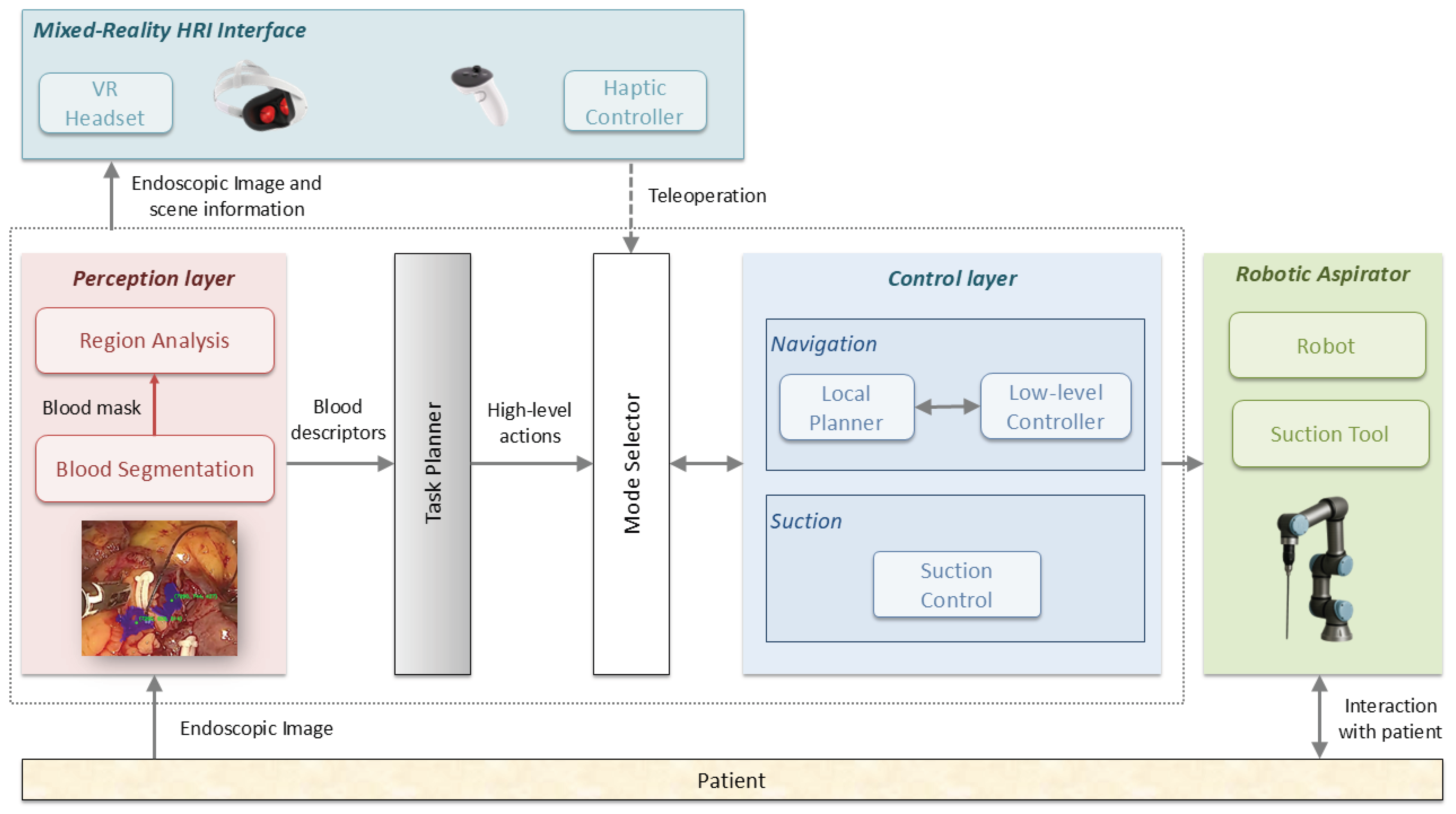

- The design and implementation of a unified framework for autonomous surgical blood aspiration that integrates perception, a task planner for high-level actions and a navigation controller based on artificial potential fields, together with a mixed-reality human-robot interaction interface for human supervision and, if required, teleoperation.

- An analysis of different suction strategies that rely on centroid computation methods for target selection, including a novel evaluation of their spatial discrepancy and its impact on robot navigation behavior.

- An extensive experimental validation under multiple representative bleeding scenarios, providing a systematic comparison of four centroid-based strategies in terms of reaction time, suction efficiency, and removal performance.

2. Materials and Methods

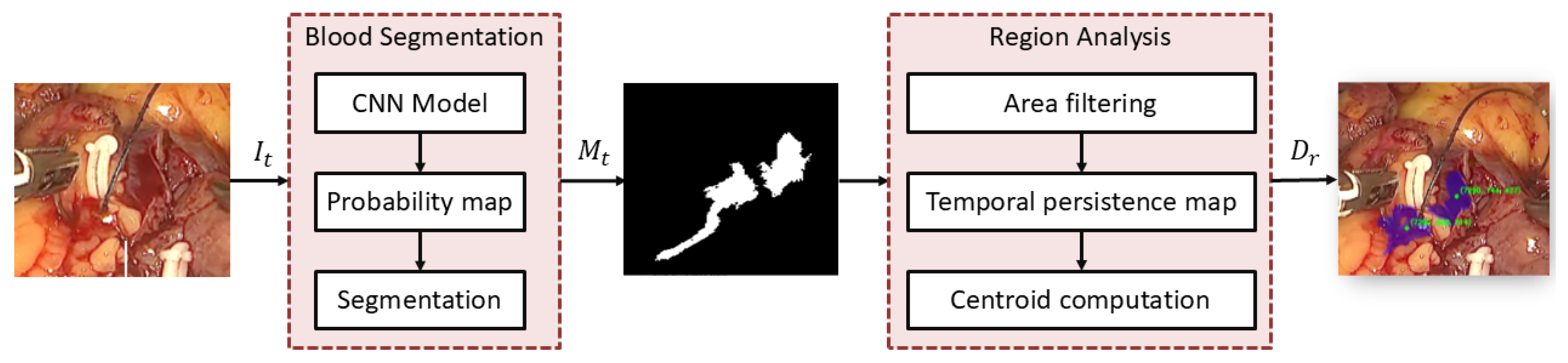

2.1. Blood Segmentation and Region Analysis

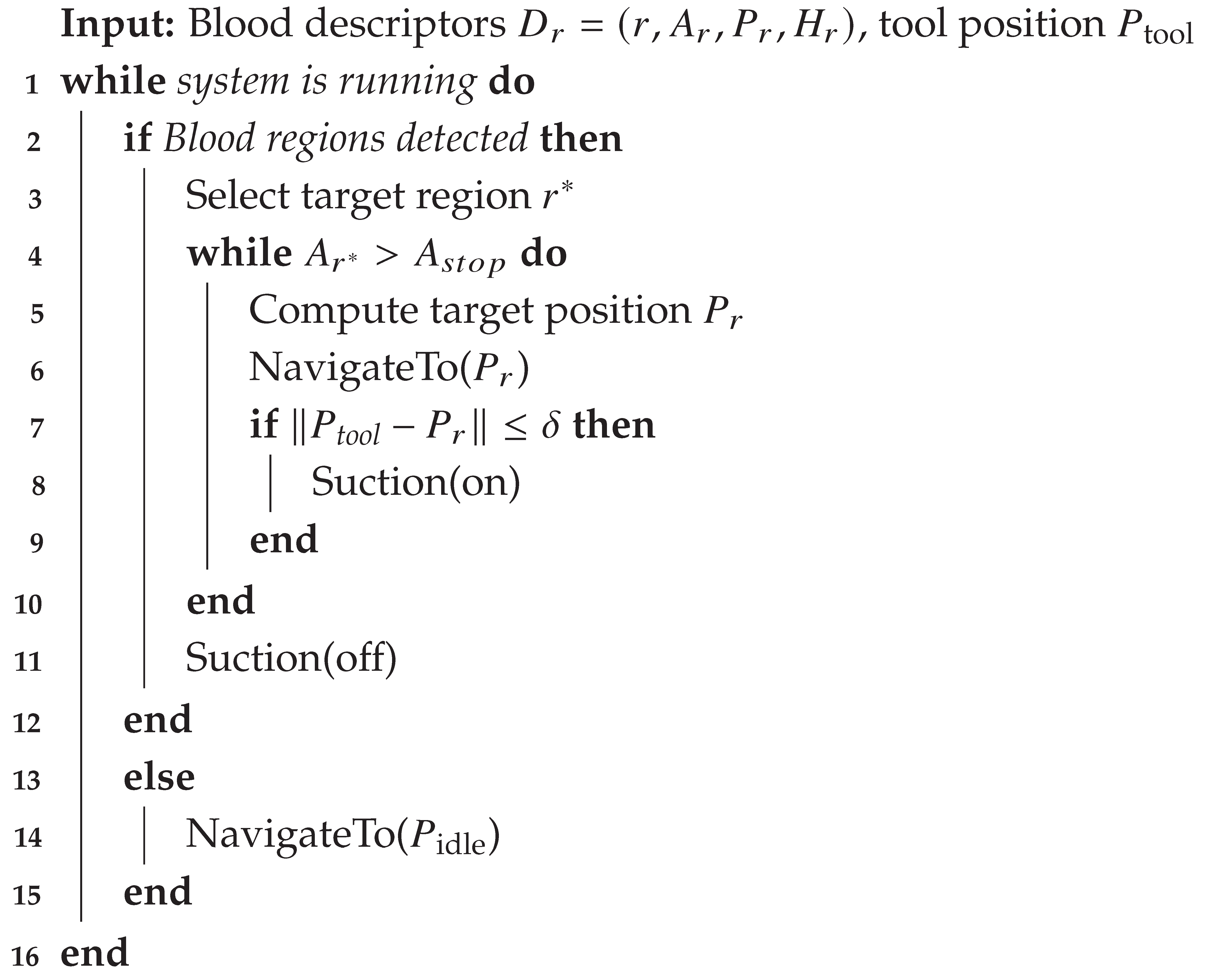

2.2. Task Planner

Algorithm 1: Task planner workflow

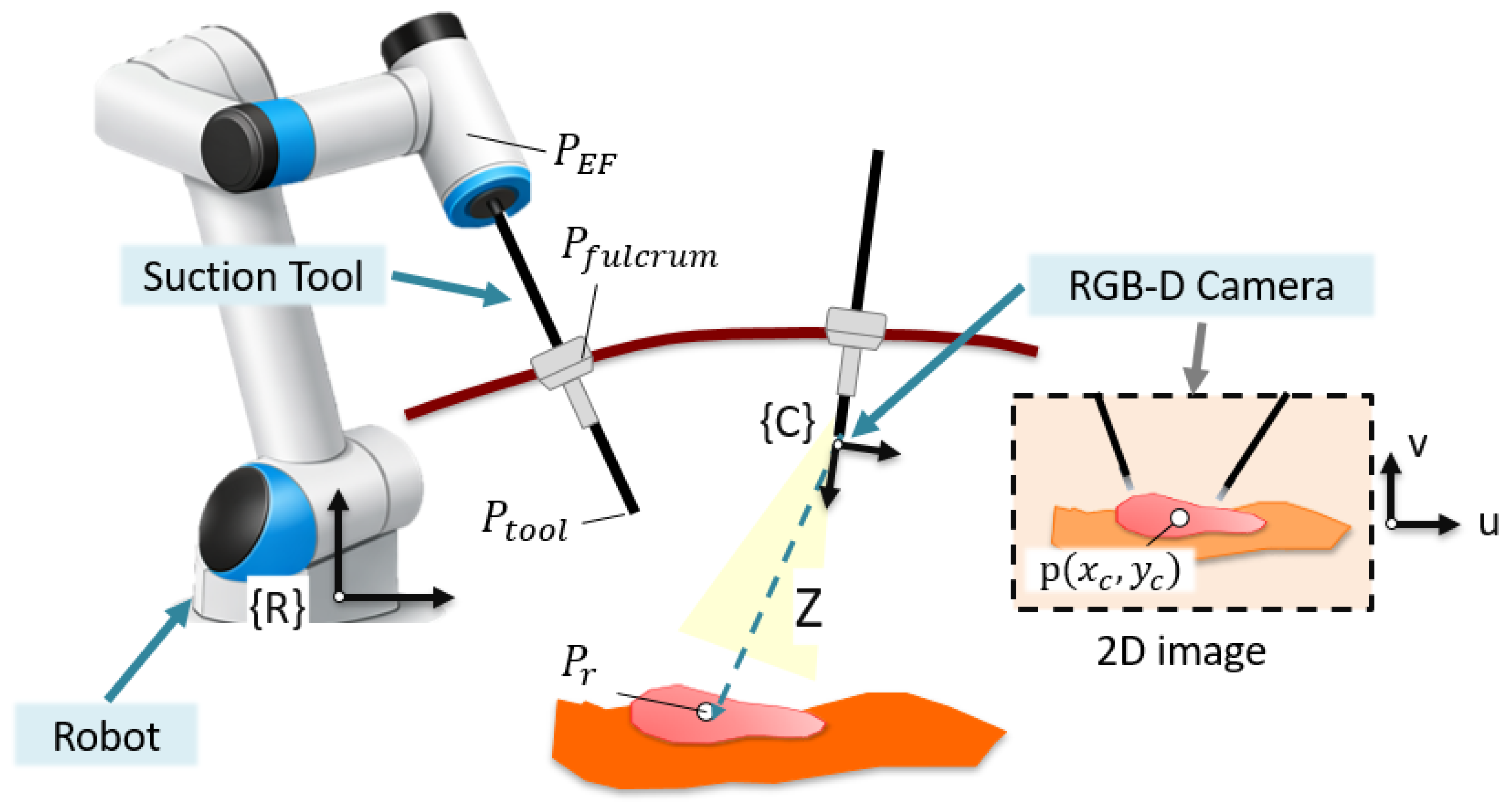

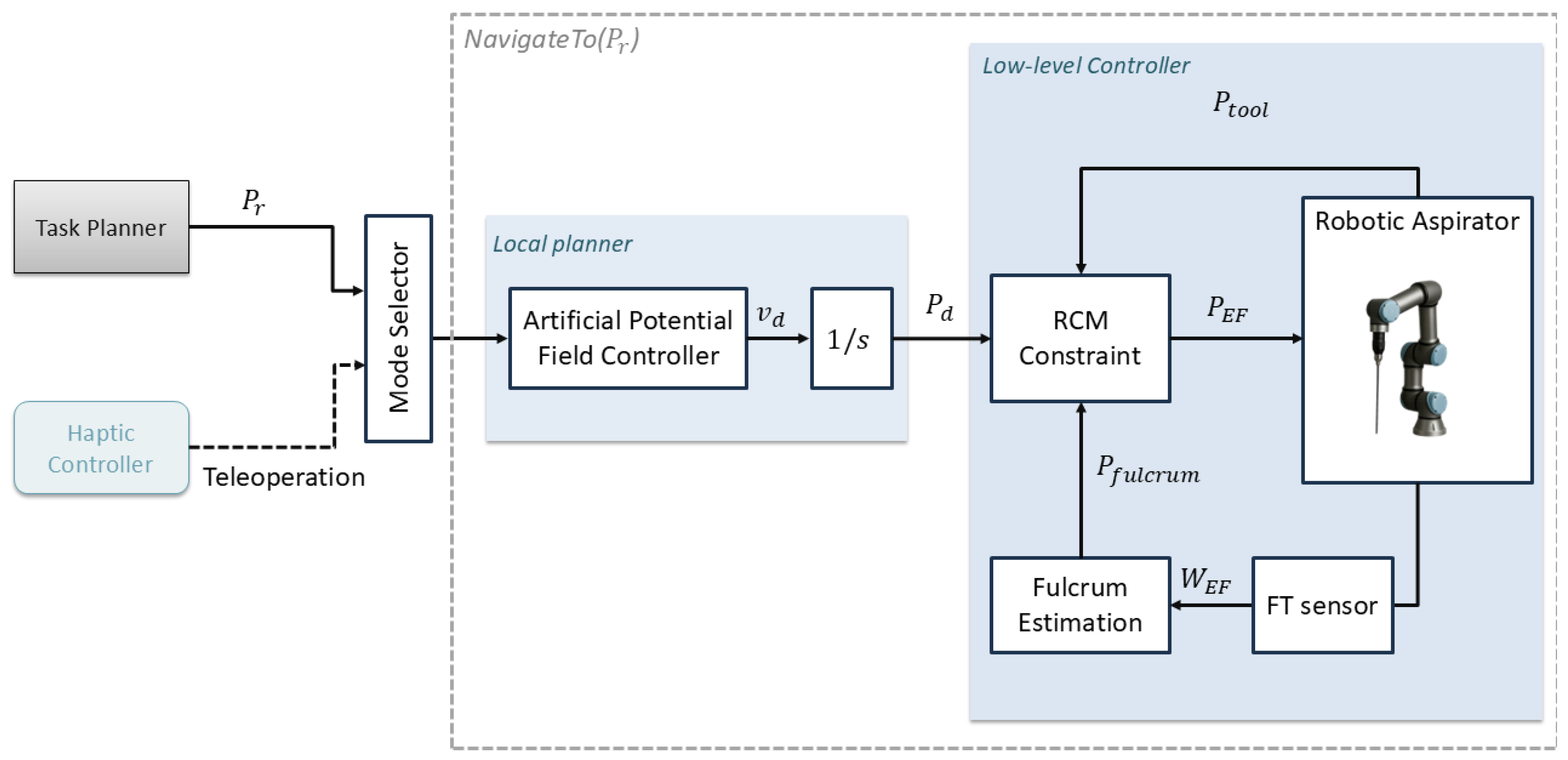

2.3. Autonomous Navigation Method

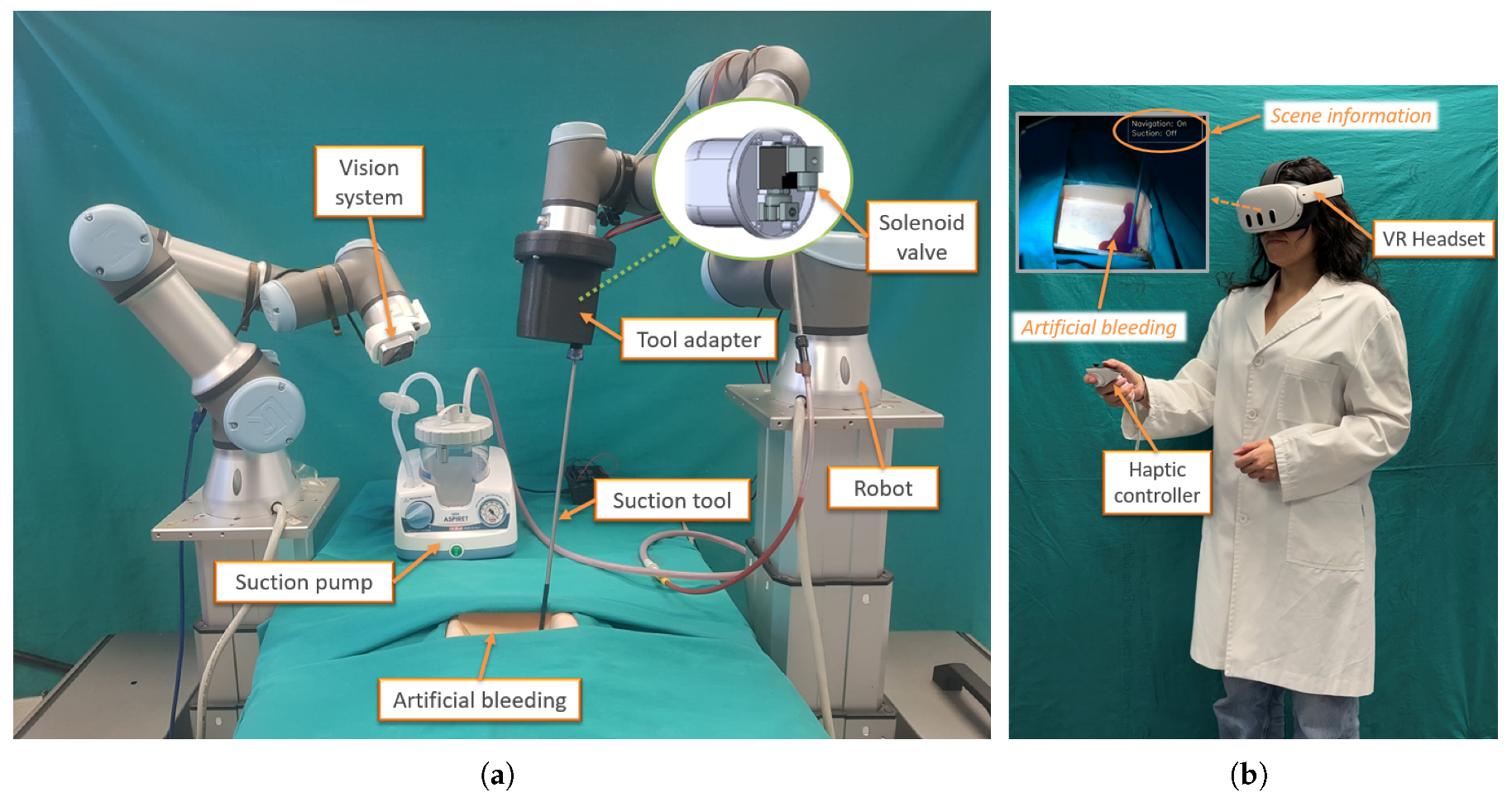
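A minimal sketch of an artificial potential field (APF) navigation step follows. It assumes the classical formulation introduced by Khatib, with a quadratic attractive potential toward the suction target and a repulsive potential that acts only within a distance `rho0` of each obstacle point. The gains `k_att` and `k_rep`, the influence distance `rho0`, the speed limit `v_max`, the point-obstacle model and the function names are hypothetical illustration choices. They are not the parameters or code of the controller described here.

```python
import numpy as np


def apf_velocity(q, goal, obstacles=(), k_att=1.0, k_rep=1e-6, rho0=0.01, v_max=0.02):
    """Velocity command for the tool tip at q (metres) from a classical APF.

    All gains and limits are hypothetical illustration values.
    """
    q = np.asarray(q, dtype=float)
    goal = np.asarray(goal, dtype=float)
    # Attractive force: -grad of U_att = 0.5 * k_att * ||q - goal||^2
    force = -k_att * (q - goal)
    for obs in obstacles:
        diff = q - np.asarray(obs, dtype=float)
        rho = np.linalg.norm(diff)
        if 0.0 < rho <= rho0:
            # Repulsive force: -grad of U_rep = 0.5 * k_rep * (1/rho - 1/rho0)^2.
            # It points away from the obstacle and vanishes at rho = rho0.
            force += k_rep * (1.0 / rho - 1.0 / rho0) / rho**2 * (diff / rho)
    # Saturate the command so the tool never exceeds v_max.
    speed = np.linalg.norm(force)
    if speed > v_max:
        force *= v_max / speed
    return force


def navigate(q0, goal, obstacles=(), dt=0.05, tol=1e-3, max_steps=5000, **gains):
    """Integrate the APF velocity field until the tip is within tol of goal."""
    q = np.asarray(q0, dtype=float)
    goal = np.asarray(goal, dtype=float)
    for step in range(max_steps):
        if np.linalg.norm(q - goal) < tol:
            return q, step
        q = q + dt * apf_velocity(q, goal, obstacles, **gains)
    return q, max_steps
```

For example, `navigate((0, 0, 0), (0.01, 0.02, 0.0))` drives the tip to within 1 mm of the target. A plain APF can stall in local minima where the attractive and repulsive forces cancel, so a real controller typically needs additional handling for that case.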

2.4. Experimental Setup

- Navigation: on/off. This indicator informs the human assistant that a bleeding region has been detected and that the system has initiated tool navigation towards the target position.

- Suction: on/off. This indicator is set to on when the suction tool is activated, and to off otherwise.
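The two indicators can be read as outputs of a simple task-level state machine. The sketch below is a hypothetical illustration and is not the planner of Algorithm 1. The three states, the `clear_threshold_px` parameter, and the rule that the navigation indicator is on only while the tool is moving are all assumptions.

```python
from dataclasses import dataclass
from enum import Enum, auto


class State(Enum):
    IDLE = auto()        # no bleeding region detected
    NAVIGATING = auto()  # tool moving towards the selected target
    SUCTIONING = auto()  # suction tool active over the target


@dataclass
class TaskPlanner:
    clear_threshold_px: int = 50  # hypothetical: area below which the field counts as clear
    state: State = State.IDLE
    navigation_on: bool = False   # "Navigation: on/off" indicator
    suction_on: bool = False      # "Suction: on/off" indicator

    def step(self, blood_area_px: int, target_reached: bool) -> State:
        """Advance one perception cycle and update both indicators."""
        bleeding = blood_area_px > self.clear_threshold_px
        if self.state is State.IDLE and bleeding:
            self.state = State.NAVIGATING
        elif self.state is State.NAVIGATING and target_reached:
            self.state = State.SUCTIONING
        elif self.state is not State.IDLE and not bleeding:
            # Region cleared (or lost): stop and wait for the next detection.
            self.state = State.IDLE
        # Indicators are derived from the state, so they are never both on.
        self.navigation_on = self.state is State.NAVIGATING
        self.suction_on = self.state is State.SUCTIONING
        return self.state
```

Deriving the indicators from the state, rather than setting them independently, keeps the interface display consistent with what the robot is actually doing.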

2.5. Evaluation Methodology

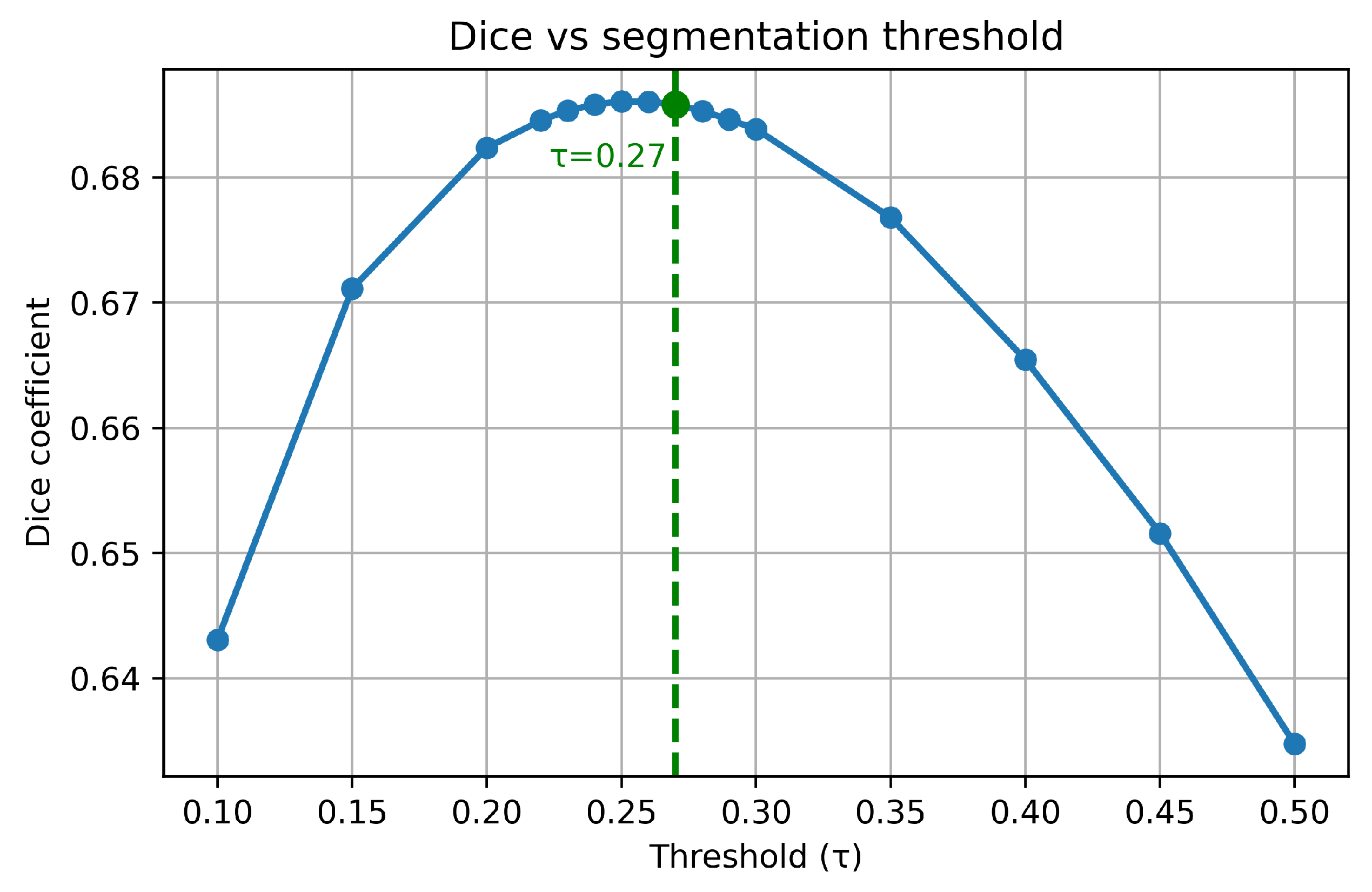

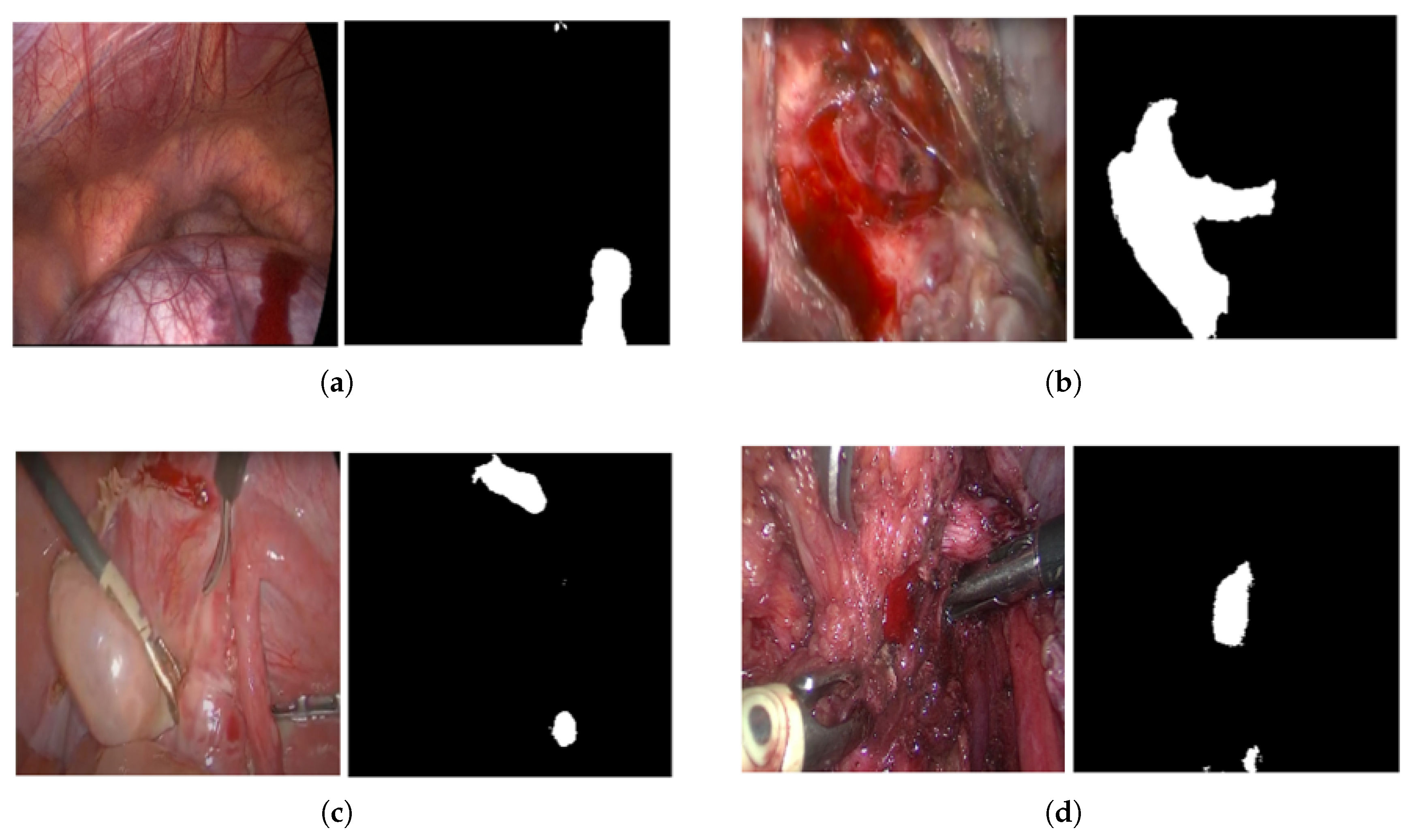

2.5.1. Blood Segmentation Evaluation

2.5.2. Robotic Aspirator Evaluation

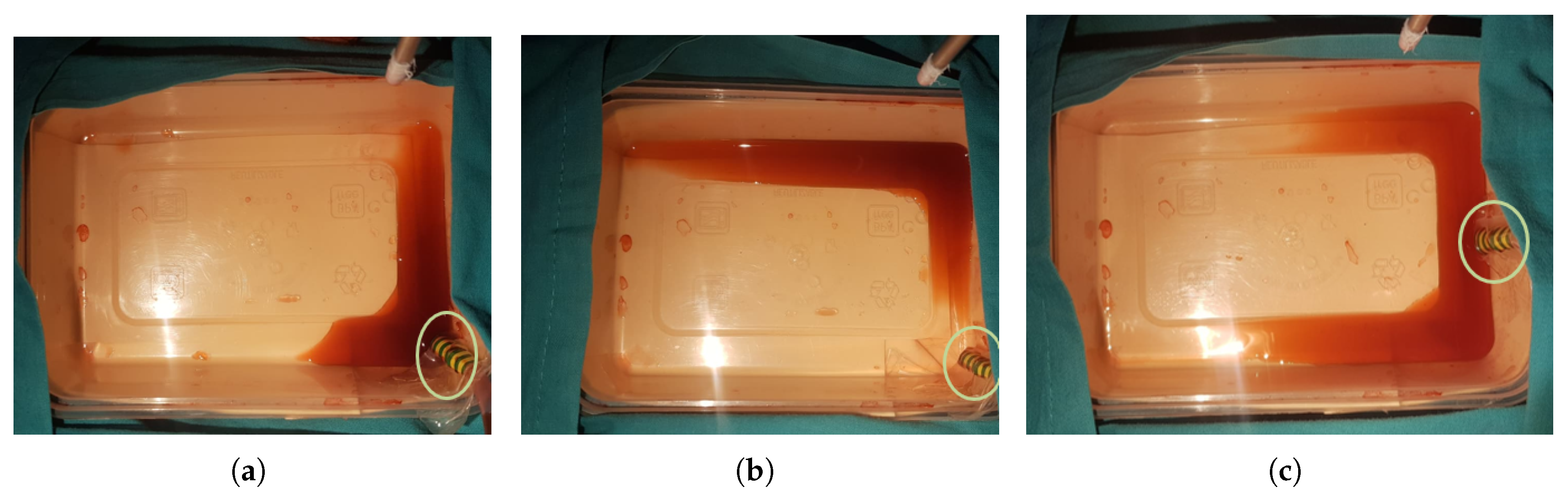

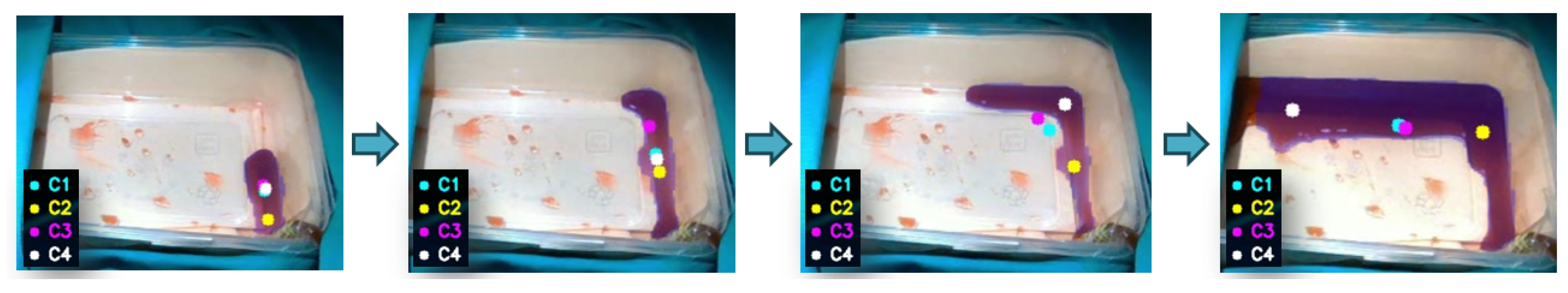

- Source-centered accumulation (S1): the inclination of the blood container is such that blood remains localized in close proximity to the bleeding source. This condition represents a flat or near-horizontal surgical field where gravitational effects are minimal, allowing blood to pool around the origin of bleeding.

- Downstream flow accumulation (S2): the blood container is inclined such that blood flows away from the bleeding source, accumulating at a distal location. This scenario mimics surgical conditions where the patient’s anatomy or positioning introduces a slope, such as in laparoscopic procedures or surgeries involving angled anatomical structures, where fluids tend to migrate due to gravity.

- Bilateral flow distribution (S3): the bleeding source is positioned so that blood spreads semi-symmetrically to both sides of the source. This creates multiple accumulation regions and a more complex spatial distribution of blood. The scenario reflects cases where anatomical features or tissue geometries cause blood to bifurcate, for example around raised structures or cavities, or during procedures on uneven surfaces where fluid disperses in multiple directions.

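- Geometric centroid (C1): This method provides a global estimate of the spatial distribution of the bleeding region. Let $\mathcal{R}$ denote the set of $N$ pixels of the segmented bleeding region, with pixel $i$ located at image coordinates $(u_i, v_i)$. The centroid is computed as:

  $$\bar{u} = \frac{1}{N}\sum_{i \in \mathcal{R}} u_i, \qquad \bar{v} = \frac{1}{N}\sum_{i \in \mathcal{R}} v_i$$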

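- Source-oriented centroid (C2): This strategy exploits the temporal persistence map to prioritize pixels in the vicinity of the bleeding source. For each pixel $i$ of the bleeding region $\mathcal{R}$, with image coordinates $(u_i, v_i)$, let $T_i$ denote its persistence value, and let $\mathcal{P} \subseteq \mathcal{R}$ denote the subset of persistent pixels:

  $$\mathcal{P} = \{\, i \in \mathcal{R} : T_i \geq \tau_p \,\}$$

  where $\tau_p$ denotes the $p$-th percentile of persistence values within the bleeding region ($p$ is a fixed parameter in our implementation). The centroid is then computed as:

  $$\bar{u}_{\mathcal{P}} = \frac{1}{|\mathcal{P}|}\sum_{i \in \mathcal{P}} u_i, \qquad \bar{v}_{\mathcal{P}} = \frac{1}{|\mathcal{P}|}\sum_{i \in \mathcal{P}} v_i$$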

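- Front-oriented centroid (C3): In contrast to the previous strategy, this method prioritizes pixels with lower persistence, which are assumed to correspond to the advancing bleeding front. Each pixel $i$ of the bleeding region $\mathcal{R}$, with image coordinates $(u_i, v_i)$ and persistence value $T_i$, is assigned a weight inversely proportional to its persistence:

  $$w_i = \frac{1}{T_i}$$

  The weighted centroid is then computed as:

  $$\bar{u}_w = \frac{\sum_{i \in \mathcal{R}} w_i u_i}{\sum_{i \in \mathcal{R}} w_i}, \qquad \bar{v}_w = \frac{\sum_{i \in \mathcal{R}} w_i v_i}{\sum_{i \in \mathcal{R}} w_i}$$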

- Deepest-point target (C4): This strategy selects the most interior point of the bleeding region by exploiting the distance transform of the segmented mask. Let $D_i$ denote the distance from pixel $i$, with image coordinates $(u_i, v_i)$, to the nearest boundary of the bleeding region $\mathcal{R}$. The target index is defined as:

  $$i^{*} = \arg\max_{i \in \mathcal{R}} D_i$$

  The corresponding image-plane coordinates are then given by $(u^{*}, v^{*}) = (u_{i^{*}}, v_{i^{*}})$.
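The four target-selection strategies can be sketched with NumPy as follows. This is an illustrative implementation under stated assumptions, not the authors' code. The persistence map is modelled as a per-pixel count of consecutive frames labelled as blood (`update_persistence` is hypothetical). `p=75.0` is a placeholder percentile. The small `eps` in C3 is an added guard against division by zero. C4 uses a brute-force Euclidean distance transform that only suits small masks; `scipy.ndimage.distance_transform_edt` computes the same quantity efficiently. Coordinates are returned as `(u, v)` = (column, row). `pairwise_discrepancy` is one hypothetical way to quantify the spatial discrepancy between strategies.

```python
import itertools

import numpy as np


def update_persistence(persistence, mask):
    """Hypothetical persistence map: frames each pixel has been blood, reset when it is not."""
    return np.where(mask, persistence + 1, 0)


def _pixels(mask):
    rows, cols = np.nonzero(mask)
    return cols.astype(float), rows.astype(float)  # (u, v) = (column, row)


def geometric_centroid(mask):
    """C1: unweighted mean of all region pixel coordinates."""
    u, v = _pixels(mask)
    return float(u.mean()), float(v.mean())


def source_centroid(mask, persistence, p=75.0):
    """C2: mean of pixels whose persistence reaches the p-th percentile (p is a placeholder)."""
    u, v = _pixels(mask)
    t = persistence[np.nonzero(mask)]  # same row-major order as _pixels
    keep = t >= np.percentile(t, p)
    return float(u[keep].mean()), float(v[keep].mean())


def front_centroid(mask, persistence, eps=1e-6):
    """C3: centroid with weights inversely proportional to persistence."""
    u, v = _pixels(mask)
    w = 1.0 / (persistence[np.nonzero(mask)] + eps)
    return float((w * u).sum() / w.sum()), float((w * v).sum() / w.sum())


def deepest_point(mask):
    """C4: region pixel with the largest distance to the nearest background pixel."""
    mask = np.asarray(mask, dtype=bool)
    fg = np.argwhere(mask)
    bg = np.argwhere(~mask)
    dist = np.sqrt(((fg[:, None, :] - bg[None, :, :]) ** 2).sum(axis=-1)).min(axis=1)
    row, col = fg[np.argmax(dist)]
    return float(col), float(row)


def pairwise_discrepancy(targets):
    """Euclidean distance (pixels) between every pair of strategy targets."""
    return {
        (a, b): float(np.hypot(targets[a][0] - targets[b][0], targets[a][1] - targets[b][1]))
        for a, b in itertools.combinations(sorted(targets), 2)
    }
```

In a toy sequence where blood first appears in one column and then spreads to the right, C2 is pulled toward the earliest column and C3 shifts toward the newest pixels, while C1 and C4 stay at the geometric center of a symmetric blob.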

- Reaction time (s): time elapsed between the detection of a blood region and the start of robot navigation.

- Suction time (s): the duration required to complete the removal of the target bleeding region. To ensure comparability across trials with different initial blood distributions, suction time was normalized by multiplying it by the factor $\bar{A}/A$, where $\bar{A}$ represents the average blood area across trials within the same bleeding scenario, and $A$ is the maximum area within each trial.

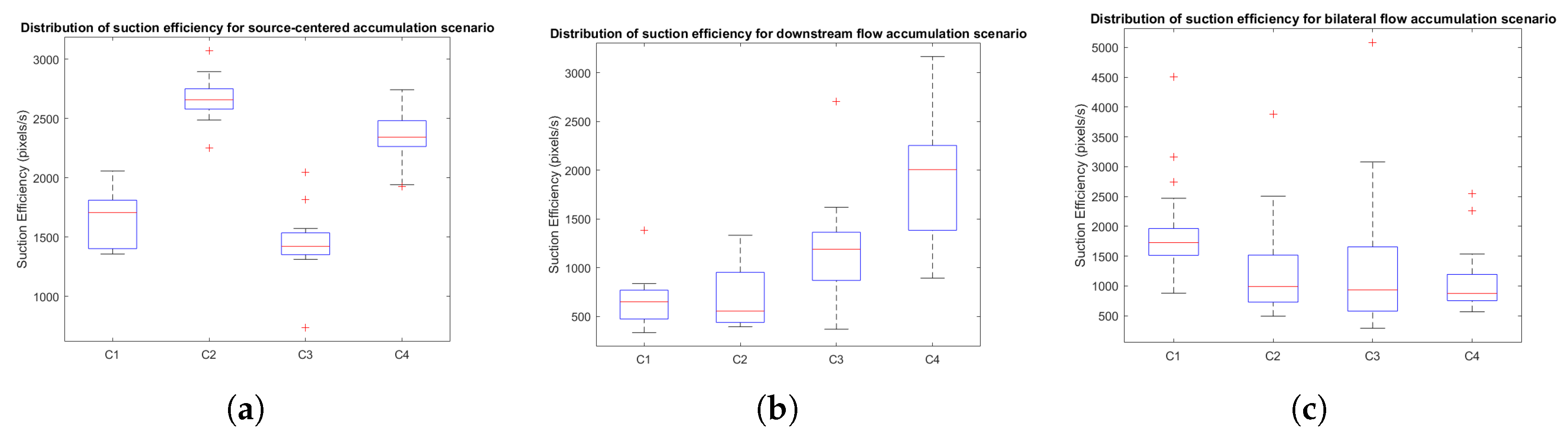

- Suction efficiency (pixels/s): defined as the rate of blood removal, computed as the maximum area within each trial, A, divided by the non-normalized suction time.

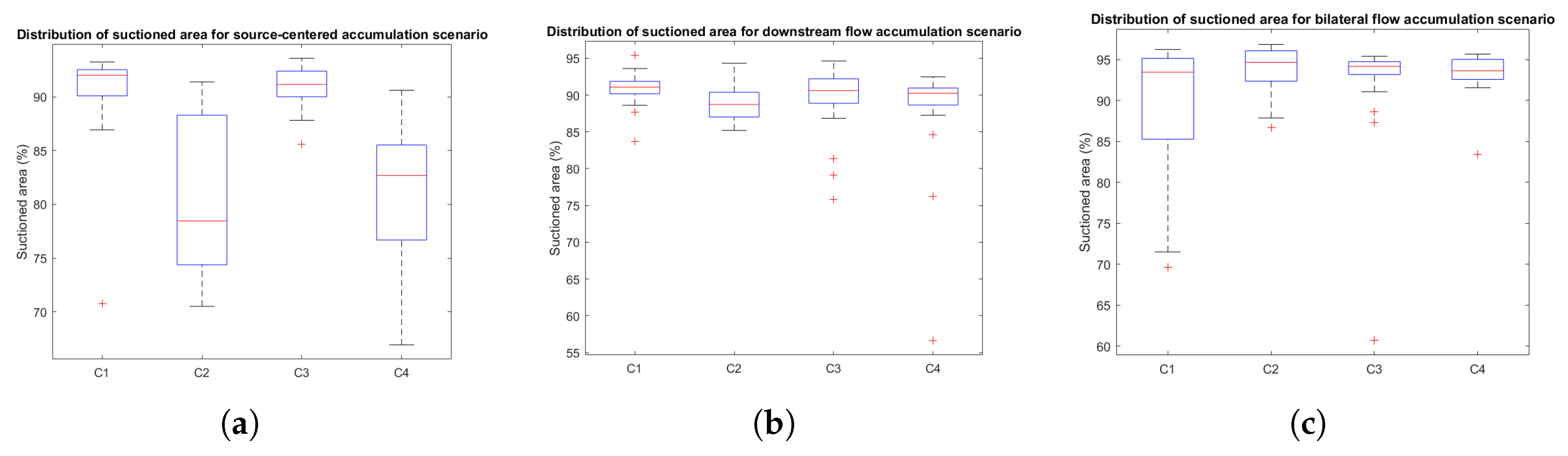

- Removed area (%): represents the percentage of blood that was successfully removed upon completion of the suction process.
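The metrics above can be computed from per-trial logs as in the following sketch. The `Trial` record and its field names are hypothetical. The normalization applies the factor $\bar{A}/A$ described for suction time, with $\bar{A}$ taken as the mean of the per-trial maximum areas within one bleeding scenario (an assumption). The removed-area percentage is also assumed to be measured relative to the maximum area $A$.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Trial:
    """Hypothetical per-trial log for one bleeding scenario and centroid strategy."""
    t_detect: float      # time the blood region is detected (s)
    t_nav_start: float   # time the robot starts navigating (s)
    suction_time: float  # raw suction duration until the region is removed (s)
    max_area: float      # maximum blood area in the trial, A (pixels)
    final_area: float    # blood area left when suction ends (pixels)


def reaction_time(trial):
    return trial.t_nav_start - trial.t_detect


def scenario_mean_area(trials):
    # A_bar: assumed to be the mean of the per-trial maximum areas of one scenario.
    return mean(t.max_area for t in trials)


def normalized_suction_time(trial, a_bar):
    # Scale the raw time by A_bar / A so trials with different blood areas are comparable.
    return trial.suction_time * a_bar / trial.max_area


def suction_efficiency(trial):
    # Pixels removed per second, using the non-normalized suction time.
    return trial.max_area / trial.suction_time


def removed_area_pct(trial):
    # Assumption: share of the maximum area A that is gone when suction ends.
    return 100.0 * (trial.max_area - trial.final_area) / trial.max_area


def summarize(trials, a_bar):
    """Average each metric over a group of trials (e.g. one strategy in one scenario)."""
    return {
        "reaction_time_s": mean(reaction_time(t) for t in trials),
        "removed_area_pct": mean(removed_area_pct(t) for t in trials),
        "suction_time_s": mean(normalized_suction_time(t, a_bar) for t in trials),
        "suction_efficiency_px_s": mean(suction_efficiency(t) for t in trials),
    }
```

In this sketch, $\bar{A}$ is computed once over all trials of a scenario and then passed to `summarize` for each strategy, so every strategy is normalized against the same reference area.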

3. Results

3.1. Blood Segmentation Results

3.2. Robotic Aspirator Performance

3.2.1. Geometric Analysis of Centroid Strategies

3.2.2. Quantitative Performance Evaluation

3.2.3. Distribution Analysis of Performance Metrics

4. Discussion

5. Conclusion

Author Contributions

Funding

Data Availability Statement

Conflicts of Interest


| Bleeding scenario / Centroid strategy | C1 | C2 | C3 | C4 | Total |
|---|---|---|---|---|---|
| S1 | 20 | 20 | 20 | 20 | 80 |
| S2 | 20 | 20 | 20 | 20 | 80 |
| S3 | 20 | 20 | 20 | 20 | 80 |
| Total | 60 | 60 | 60 | 60 | 240 |

| Bleeding scenario | | | | | | |
|---|---|---|---|---|---|---|
| S1 | 22.49 | 1.31 | 17.32 | 23.25 | 13.40 | 18.18 |
| S2 | 48.52 | 4.33 | 40.21 | 51.25 | 38.14 | 41.24 |
| S3 | 30.41 | 2.65 | 47.04 | 31.12 | 51.34 | 47.58 |
| Mean | 33.81 | 2.76 | 34.86 | 35.21 | 34.29 | 35.67 |

| Centroid | Reaction time (s) | Removed area (%) | Suction time (s) | Suction efficiency (pixels/s) |
|---|---|---|---|---|
| C1 | 0.0337 | 90.39 | 3.9010 | 1658.5 |
| C2 | 0.0307 | 80.51 | 2.3877 | 2668.9 |
| C3 | 0.0260 | 90.96 | 4.5476 | 1444.8 |
| C4 | 0.0325 | 81.31 | 2.7165 | 2356.8 |

| Centroid | Reaction time (s) | Removed area (%) | Suction time (s) | Suction efficiency (pixels/s) |
|---|---|---|---|---|
| C1 | 0.0318 | 90.82 | 11.2268 | 660.6 |
| C2 | 0.0272 | 89.03 | 11.6913 | 677.8 |
| C3 | 0.0353 | 89.31 | 7.2107 | 1156 |
| C4 | 0.0349 | 87.69 | 3.9516 | 1893.8 |

| Centroid | Reaction time (s) | Removed area (%) | Suction time (s) | Suction efficiency (pixels/s) |
|---|---|---|---|---|
| C1 | 0.0309 | 89.51 | 5.5110 | 1915.6 |
| C2 | 0.0283 | 93.82 | 9.9348 | 1235.4 |
| C3 | 0.0316 | 91.86 | 11.9343 | 1282.7 |
| C4 | 0.0294 | 92.86 | 10.3484 | 1057.7 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).