Submitted: 01 April 2026

Posted: 02 April 2026

Abstract

Keywords:

1. Introduction

2. Related Work

2.1. Field-Oriented Control with PI Regulation

2.2. Model Predictive Control for Motor Drives

2.3. Online Stochastic Optimization Models and Algorithms Related to Servo Motor Control

3. Methods

3.1. Physical Principles of Servo Motor Control

3.2. Online Stochastic Optimization Problem

- Assumption 1 (Bounded feasible set): The decision set is bounded, with finite diameter.

- Assumption 2 (Convexity): The loss and constraint functions are convex and differentiable with respect to the decision variable.

- Assumption 3 (Slater condition): There exists a strictly feasible point and a positive constant such that every constraint holds at that point with a margin of at least that constant.
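The three assumptions can be sanity-checked numerically on a small example. The sketch below is illustrative only: the box-shaped decision set, the quadratic loss, the affine constraint, and all function names are hypothetical choices, not the servo motor model of this paper. The convexity test is a sampled midpoint check, which can refute convexity but cannot prove it.

```python
import itertools
import math
import random

# Hypothetical toy problem (not from the paper): decision x in a 2-D box.
LOWER, UPPER = (-1.0, -1.0), (1.0, 1.0)

def loss(x):
    """Convex quadratic loss: squared distance to a target point."""
    return (x[0] - 0.5) ** 2 + (x[1] + 0.25) ** 2

def constraint(x):
    """Affine (hence convex) constraint, feasible when constraint(x) <= 0."""
    return x[0] + x[1] - 1.0

def box_diameter(lower, upper):
    """Assumption 1: the diameter of a box is the length of its diagonal."""
    return math.sqrt(sum((u - l) ** 2 for l, u in zip(lower, upper)))

def midpoint_convex(fn, samples, tol=1e-12):
    """Assumption 2 (necessary check only): f((a+b)/2) <= (f(a)+f(b))/2."""
    for a, b in itertools.combinations(samples, 2):
        mid = tuple((ai + bi) / 2 for ai, bi in zip(a, b))
        if fn(mid) > (fn(a) + fn(b)) / 2 + tol:
            return False
    return True

def slater_margin(fn, point):
    """Assumption 3: a positive margin means the point is strictly feasible."""
    return -fn(point)

rng = random.Random(0)
samples = [tuple(rng.uniform(l, u) for l, u in zip(LOWER, UPPER))
           for _ in range(50)]

diameter = box_diameter(LOWER, UPPER)
loss_ok = midpoint_convex(loss, samples)
constraint_ok = midpoint_convex(constraint, samples)
margin = slater_margin(constraint, (0.0, 0.0))
print(diameter, loss_ok, constraint_ok, margin)
```

In this toy setting the origin serves as the strictly feasible point, and the returned margin plays the role of the Slater constant.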

3.3. Online Stochastic Optimization Model and Algorithm for Servo Motor Control

State variables

Control variables

Stochastic and uncertain variables

Algorithm 1: MSALM

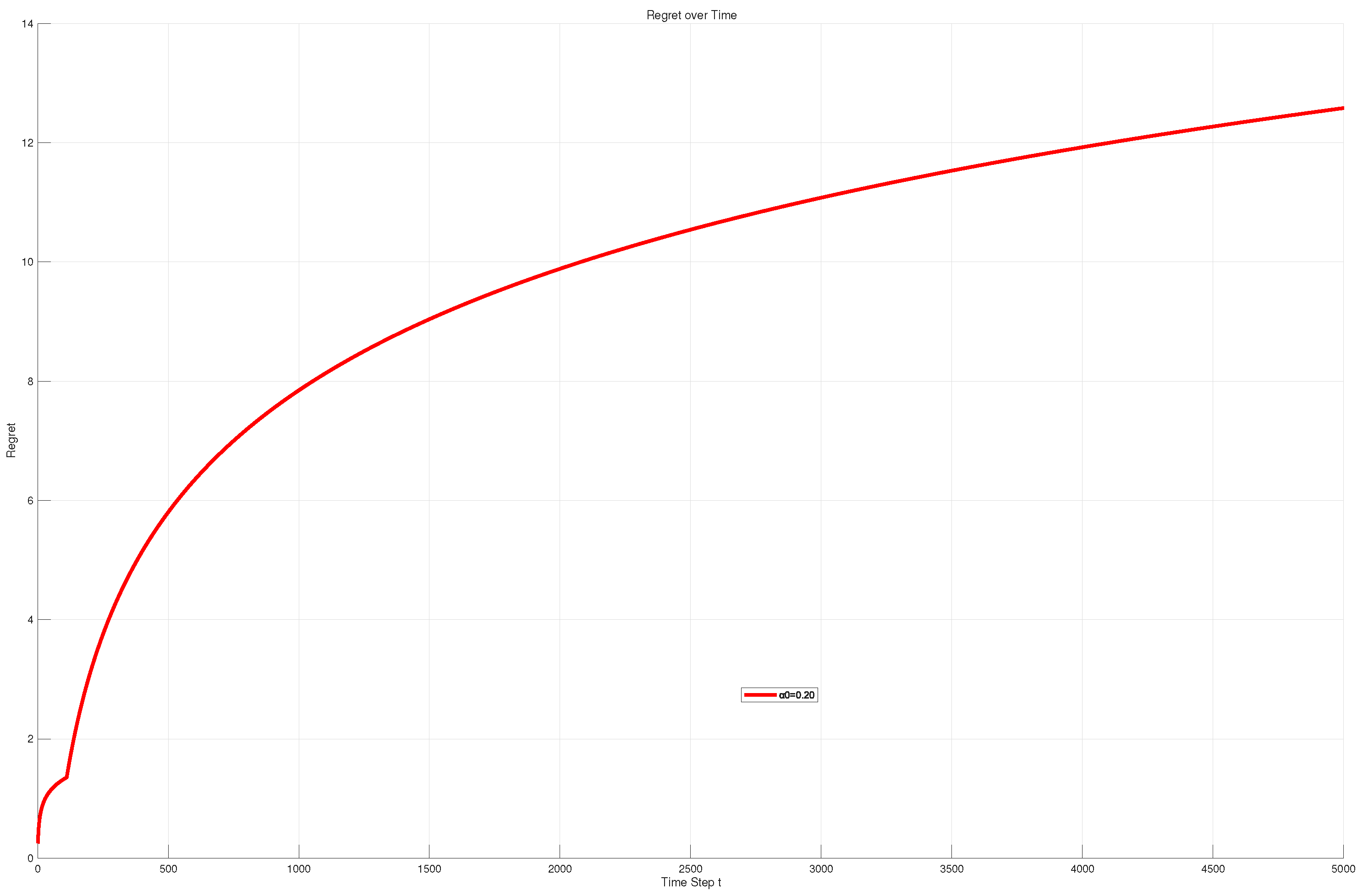

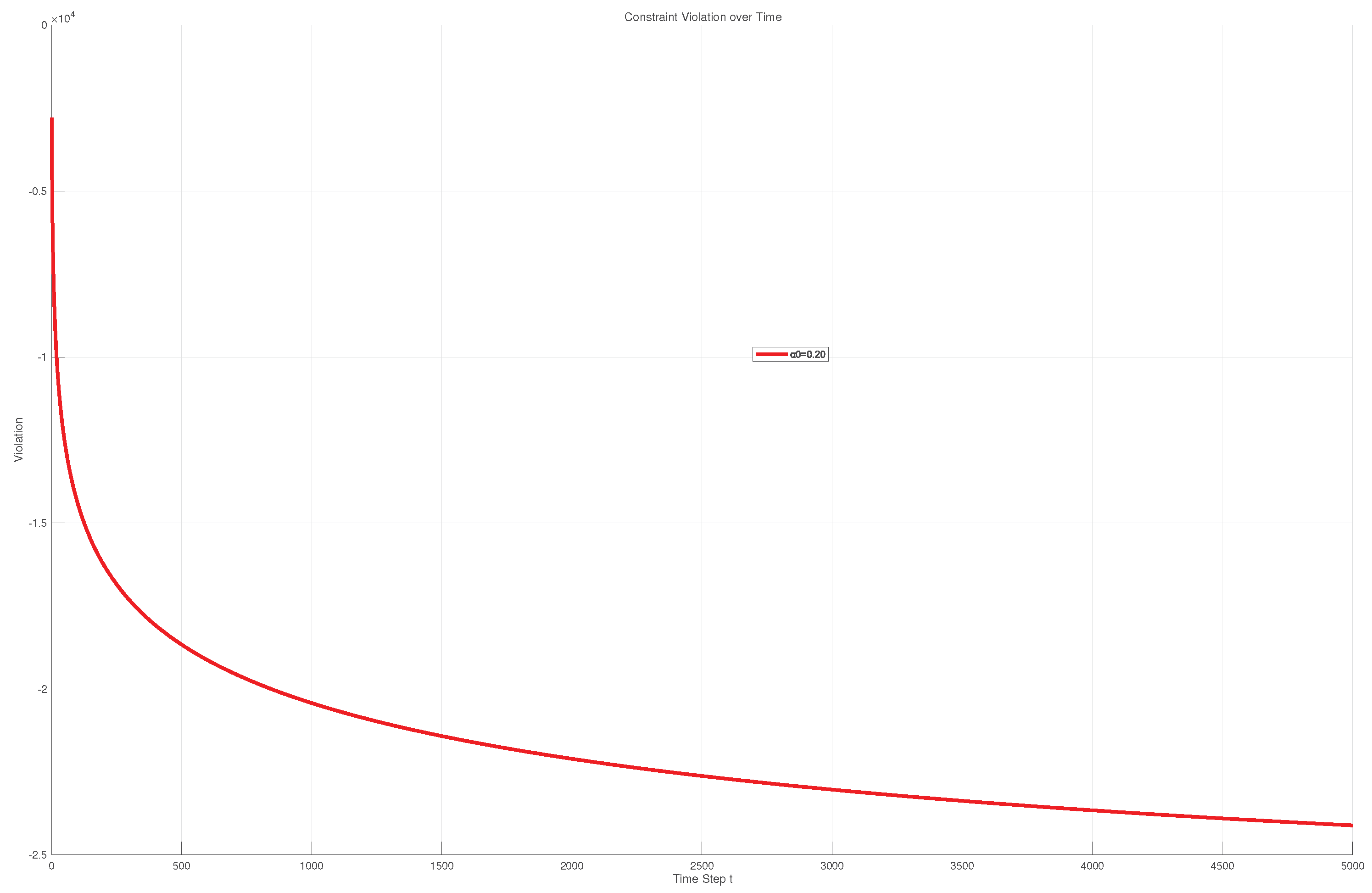

4. Results

4.1. FOC-Based Motor Control Using Online Stochastic Optimization

5. Conclusions

| Polynomial Degree | Mean Test | Improvement (percentage points) | Number of Parameters |
|---|---|---|---|
| 1st Degree | 88.32% | — | 4 |
| 2nd Degree | 92.08% | 3.76 | 10 |
| 3rd Degree | 94.70% | 2.61 | 20 |
| 4th Degree | 95.17% | 0.47 | 35 |
| 5th Degree | 95.36% | 0.19 | 56 |
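The parameter counts in the table match the number of monomials of total degree at most d in three variables, C(3 + d, d). The text does not state the number of input variables, so the value 3 in the sketch below is an inference from the counts. The sketch reproduces the parameter column under that assumption and recomputes the improvement column as the difference between consecutive scores. Recomputed from the rounded scores, the 3rd-degree gain comes out as 2.62 rather than the tabulated 2.61, which is consistent with the table having been computed from unrounded values.

```python
from math import comb

# Assumption: three input variables, inferred from the table, not stated in the text.
N_INPUTS = 3

def n_poly_params(degree, n_inputs=N_INPUTS):
    """Number of monomials of total degree <= degree in n_inputs variables."""
    return comb(n_inputs + degree, degree)

# Values copied from the table: degree -> reported parameter count.
reported = {1: 4, 2: 10, 3: 20, 4: 35, 5: 56}
counts = {d: n_poly_params(d) for d in reported}

# Mean test scores (in percent) by degree, also copied from the table.
scores = [88.32, 92.08, 94.70, 95.17, 95.36]
gains = [round(b - a, 2) for a, b in zip(scores, scores[1:])]

for degree, count in counts.items():
    print(degree, count, count == reported[degree])
print(gains)
```

Read this way, moving from 3rd to 5th degree nearly triples the parameter count (20 to 56) for a gain of well under one percentage point.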

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).