Submitted: 01 April 2026

Posted: 01 April 2026


Abstract

Keywords:

1. Introduction

The degree to which a model “knows” something is directly encoded in the topological properties of its parameter space as signal propagates forward, and these properties are measurable before the final output is committed.

Contributions.

- A formal statement of the Knowledge Landscape hypothesis relating topological geometry to metacognitive accessibility (Section 3).

- Five empirical experiments on TriviaQA demonstrating that token entropy and hidden-state variance discriminate factual knowledge from ignorance during forward computation (Section 4).

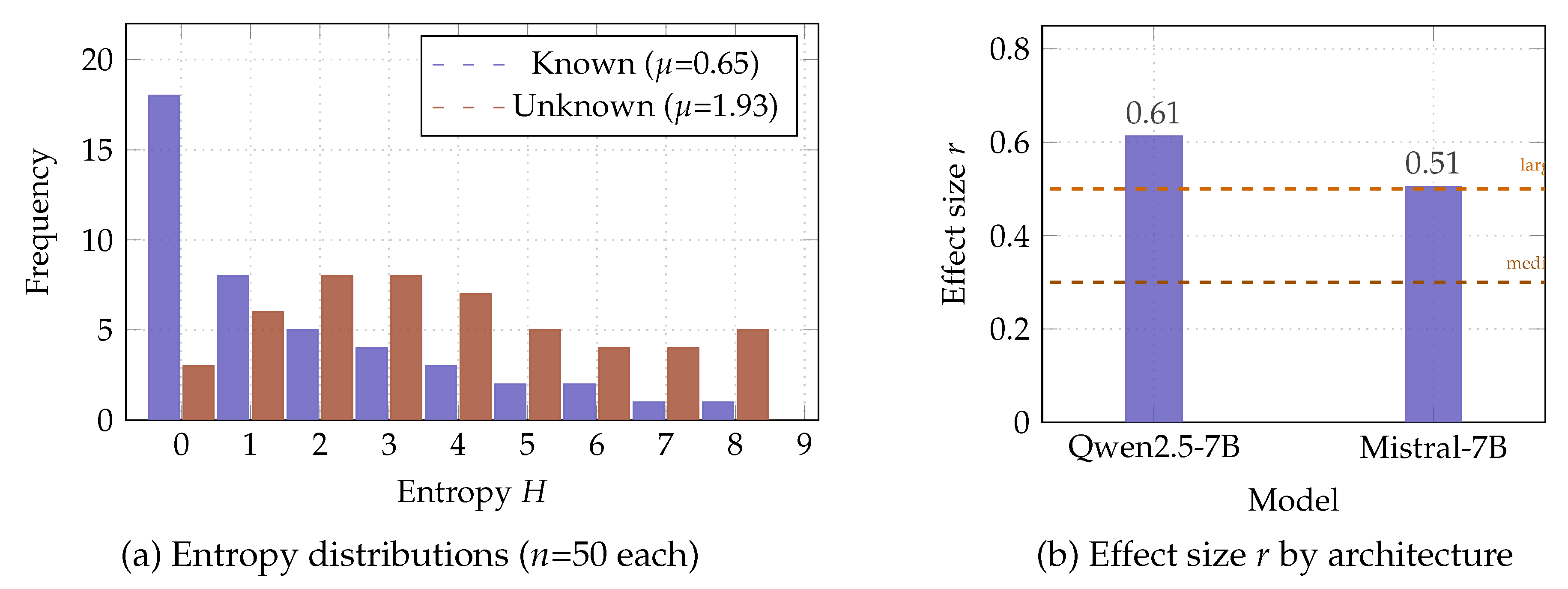

- Multi-model validation across Qwen2.5-7B and Mistral-7B showing architecture-independent replication (Section 4.1).

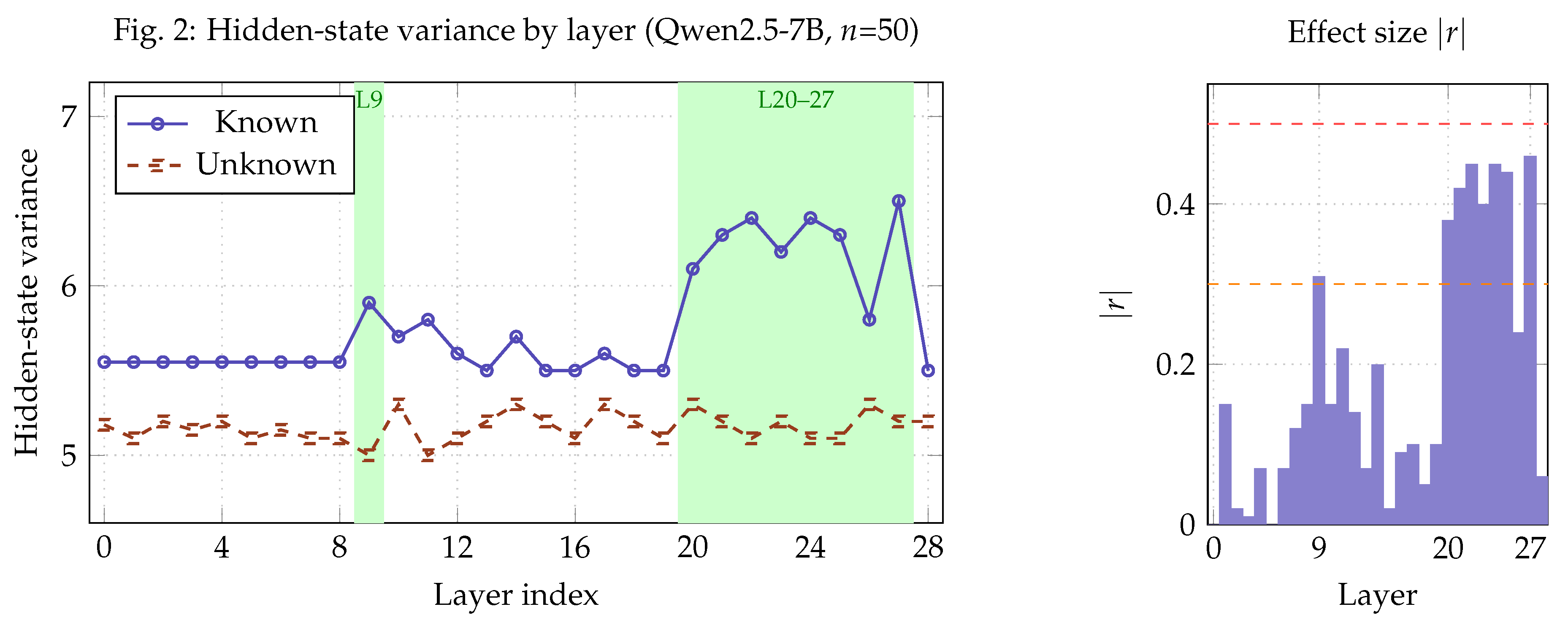

- Identification of a metacognitive locus at layers 9 and 20–27 of Qwen2.5-7B (Section 4.3).

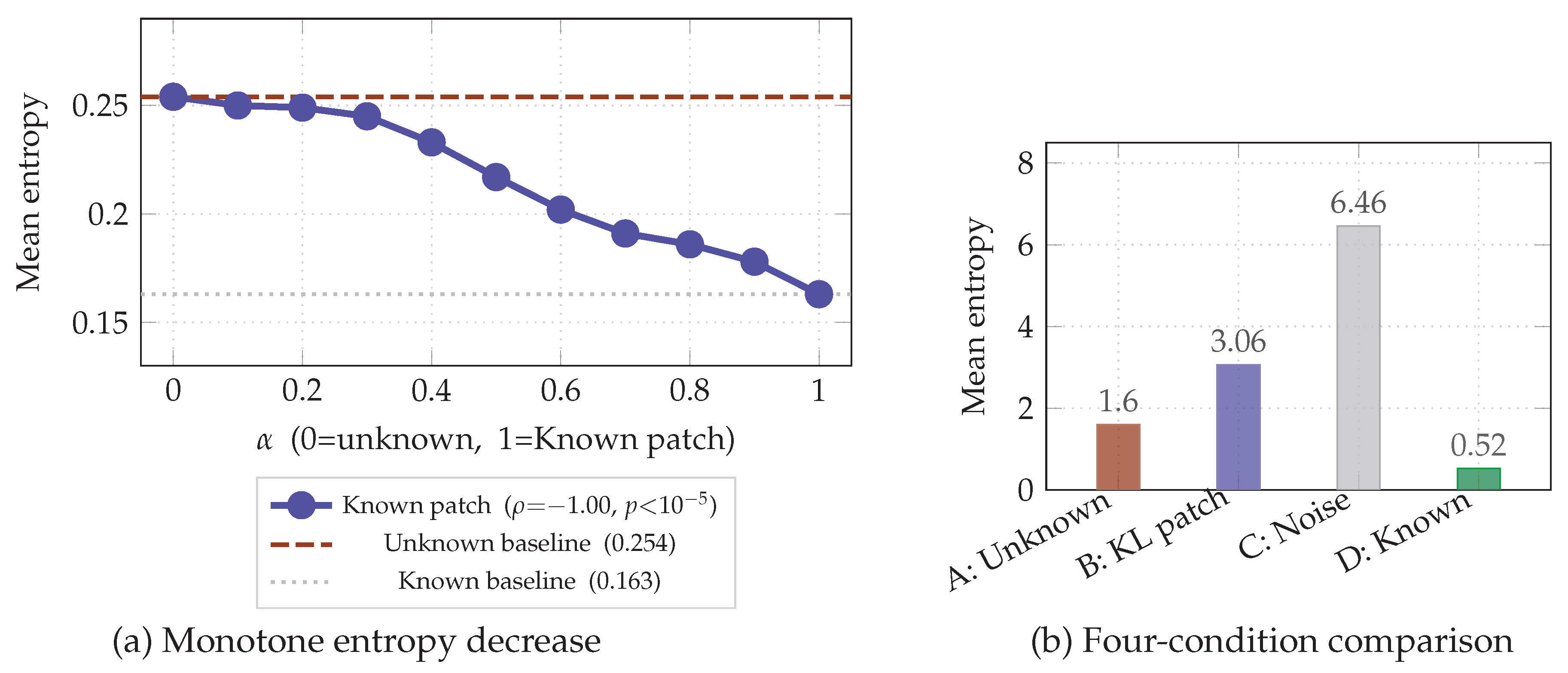

- Causal evidence via activation patching with monotone interpolation, assessed by Spearman rank correlation (Section 4.5).

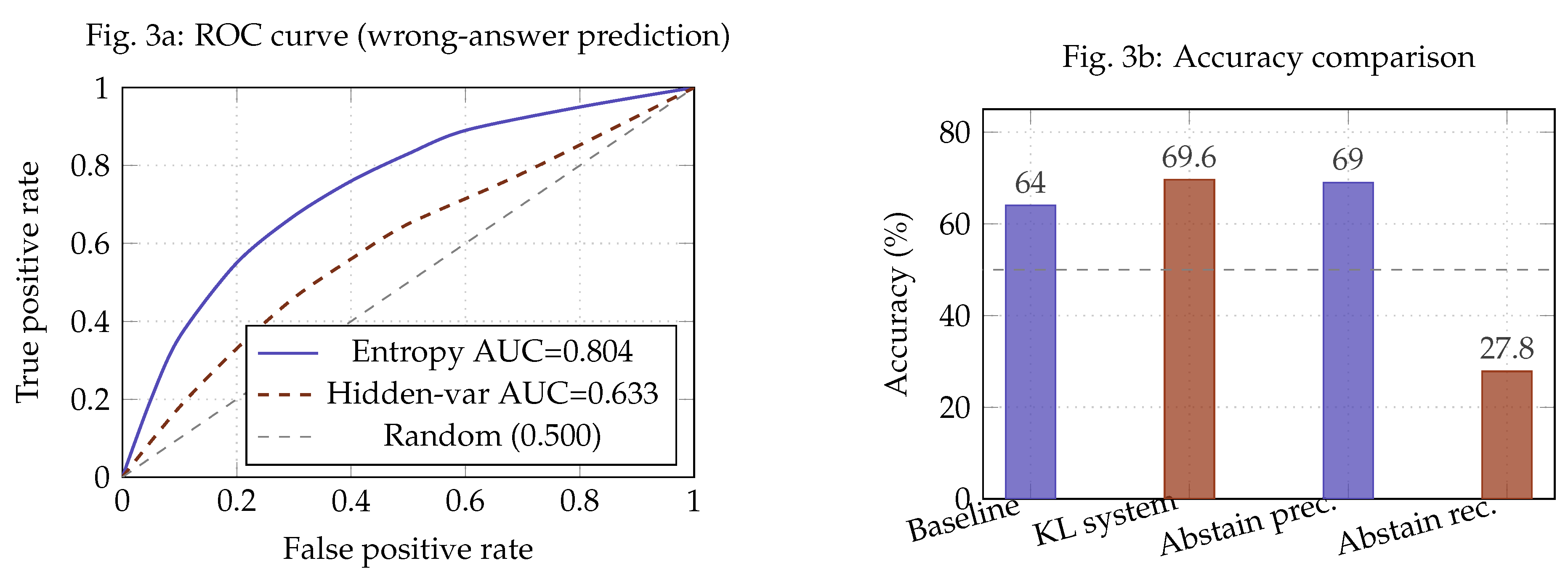

- A lightweight abstention system that achieves an ROC-AUC of 0.804 (entropy signal) and a +5.6 pp accuracy gain without fine-tuning (Section 4.4).

2. Background and Related Work

2.1. Uncertainty Quantification in LLMs

2.2. Loss Landscape Geometry

2.3. Mechanistic Interpretability

2.4. Dual-Process Theories and AI Metacognition

2.5. Concurrent Work

3. The Knowledge Landscape Hypothesis

3.1. Formal Statement

3.2. Dual-Hemisphere Processing Model

3.3. Combined Metacognitive Score

4. Experiments

Setup.

4.1. Experiment 1: Token Entropy — Multi-Model Validation

| Model | Condition | Mean H | Std | p-value | r |
|---|---|---|---|---|---|
| Qwen2.5-7B-Instruct | Known | 0.647 | 0.967 | 0.613 | |
| | Unknown | 1.925 | 1.440 | | |
| Mistral-7B-Instruct-v0.3 | Known | 0.693 | — | 0.505 | |
| | Unknown | 1.600 | — | | |
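The Mean H column summarises token entropy per condition. As a minimal sketch, assuming H is the Shannon entropy (in nats) of the softmax distribution over next-token logits, it could be computed as follows. The function name `token_entropy` and the choice of natural logarithm are illustrative assumptions, not details confirmed by the text.

```python
import math

def token_entropy(logits):
    """Shannon entropy (nats) of softmax(logits); an assumed definition of H."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    probs = [e / z for e in exps]
    # Skip zero-probability entries, whose contribution to the sum is zero.
    return -sum(p * math.log(p) for p in probs if p > 0.0)

# A flat distribution is maximally uncertain; a peaked one is close to zero.
flat = token_entropy([0.0, 0.0, 0.0, 0.0])
peaked = token_entropy([10.0, 0.0, 0.0, 0.0])
print(flat, peaked)
```

Under this reading, a higher mean H in the Unknown condition corresponds to a flatter next-token distribution.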

4.2. Experiment 2: Attention Head Locality

4.3. Experiment 3: Hidden-State Variance and the Metacognitive Locus

| Layer range | Known var | Unknown var | p-value | r |
|---|---|---|---|---|
| 0–8 | | | | |
| 9 | 5.90 | 5.00 | 0.0077 | |
| 10–19 | mixed | mixed | | |
| 20–27 | 6.1–6.5 | 5.1–5.3 | to | |
| 27 (peak) | 6.50 | 5.20 | | |
| 28 | 0.60 |

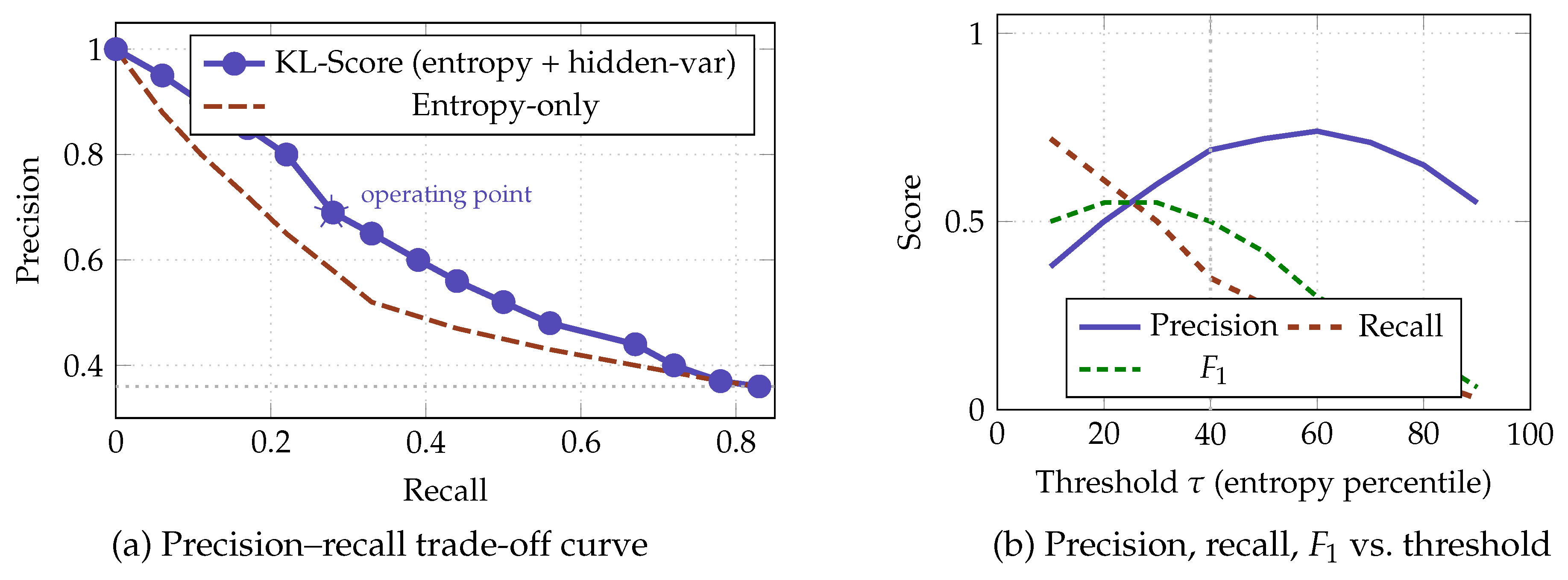
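The exact variance statistic is not recoverable from the text. One plausible reading, offered only as a sketch, takes the variance of a single token's activation vector at each layer and averages it over the questions in a condition. The function names and the toy inputs below are hypothetical.

```python
from statistics import mean, pvariance

def layer_variances(hidden_states):
    """hidden_states: one activation vector per layer for a single token position."""
    return [pvariance(vec) for vec in hidden_states]

def mean_variance_by_layer(per_question):
    """Average the per-layer variance over a set of questions (assumed aggregation)."""
    per_layer = zip(*(layer_variances(h) for h in per_question))
    return [mean(vals) for vals in per_layer]

# Toy input: two questions, two layers, two hidden units each (not real data).
toy = [
    [[1.0, 3.0], [0.0, 0.0]],
    [[2.0, 2.0], [1.0, -1.0]],
]
result = mean_variance_by_layer(toy)
print(result)
```

Comparing the output of `mean_variance_by_layer` for known and unknown questions, layer by layer, would give a profile of the kind tabulated above.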

4.4. Experiment 4: Metacognitive Abstention System

Implementation.

Results.

| Metric | Value |
|---|---|
| Baseline accuracy | 64.0% |
| KL accuracy (answered only) | 69.6% |
| Accuracy gain | +5.6 pp |
| Abstention rate | 14.5% (29/200) |
| Abstention precision | 69.0% |
| Abstention recall | 27.8% |
| AUC (entropy signal) | 0.804 |
| AUC (hidden-state signal) | 0.633 |
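The accuracy and abstention figures in the table are mutually consistent. As a check, the sketch below recomputes them from a confusion breakdown inferred from the reported percentages. Only the 29/200 abstention count appears in the text; the counts of 128 baseline-correct answers and 20 abstentions on incorrect answers are back-derived assumptions.

```python
total = 200             # from the reported abstention rate (29/200)
abstained = 29          # from the table
baseline_correct = 128  # inferred: 64.0% of 200
abstained_wrong = 20    # inferred: 69.0% precision over 29 abstentions

baseline_wrong = total - baseline_correct        # answers the model gets wrong
abstained_right = abstained - abstained_wrong    # correct answers lost to abstention
answered = total - abstained
answered_correct = baseline_correct - abstained_right

baseline_acc = baseline_correct / total
kl_acc = answered_correct / answered             # accuracy on answered questions only
precision = abstained_wrong / abstained
recall = abstained_wrong / baseline_wrong
gain_pp = 100 * (kl_acc - baseline_acc)

print(f"baseline {baseline_acc:.1%}, answered-only {kl_acc:.1%}, gain {gain_pp:+.1f} pp")
print(f"precision {precision:.1%}, recall {recall:.1%}")
```

Under these inferred counts, abstention withholds 20 of the 72 incorrect answers at the cost of 9 correct ones, which accounts for the low recall alongside the accuracy gain.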

Comparison to post-hoc baselines.

4.5. Experiment 5: Causal Analysis Via Activation Patching

Protocol.

Results.

| Interpolation coefficient | Mean entropy | Change from baseline (0.0) |
|---|---|---|
| 0.0 | 0.254 | — |
| 0.2 | 0.249 | |
| 0.4 | 0.233 | |
| 0.6 | 0.202 | |
| 0.8 | 0.186 | |
| 1.0 | 0.163 | |
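At the aggregate level, the six mean-entropy values fall strictly as the value in the first column rises from 0.0 to 1.0. The sketch below computes a Spearman rank correlation over these tabulated means only. It illustrates the monotone trend on six aggregate points and is not a reproduction of the statistic reported in the paper, which may have been computed over individual pairs. The helper names are hypothetical.

```python
def ranks(values):
    """Rank values from 1..n (assumes no ties, which holds for this table)."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    r = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        r[idx] = rank
    return r

def spearman(x, y):
    """Spearman rho via the no-ties formula 1 - 6*sum(d^2) / (n*(n^2 - 1))."""
    n = len(x)
    d2 = sum((a - b) ** 2 for a, b in zip(ranks(x), ranks(y)))
    return 1 - 6 * d2 / (n * (n * n - 1))

coeff = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]                    # first column of the table
mean_entropy = [0.254, 0.249, 0.233, 0.202, 0.186, 0.163]  # second column of the table
rho = spearman(coeff, mean_entropy)
print(rho)
```

A perfectly monotone decrease over the means yields a rank correlation of -1, which says nothing about the spread within each interpolation step.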

Comparison to random patching.

5. Discussion

5.1. In-Computation vs. Post-Hoc Metacognition

5.2. Interpretation of Hidden-State Divergence Direction

5.3. Negative Result: Attention Locality

5.4. Limitations

- Two models. While both Qwen2.5-7B and Mistral-7B replicate the entropy finding, the metacognitive locus (specific layer indices) was characterised only for Qwen2.5-7B. Cross-model locus mapping is left for future work.

- Task scope. Experiments focus on factual recall. Whether the topology-resistance framework generalises to reasoning, mathematics, or code generation remains open.

- Abstention recall. The current system detects only 27.8% of incorrect answers. Improving recall without sacrificing precision is the primary engineering challenge for deployment.

- Causal scope. The monotone interpolation result is statistically compelling at the aggregate level but noisy at the individual-pair level. Stronger causal evidence would require larger same-category corpora or steering-vector interventions (Turner et al., 2023).

6. Conclusions

References

- Anderson, P. W. 1972. More is different: Broken symmetry and the nature of the hierarchical structure of science. Science 177, 4047: 393–396.

- Baeck, A., J. Wagemans, and H. P. Op de Beeck. 2017. Hemispheric specialization for local and global processing of visual input. Neuropsychologia 95: 44–54.

- Baldassi, C., F. Pittorino, and R. Zecchina. 2020. Shaping the learning landscape in neural networks around wide flat minima. Proceedings of the National Academy of Sciences 117, 1: 161–170.

- Gal, Y., and Z. Ghahramani. 2016. Dropout as a Bayesian approximation: Representing model uncertainty in deep learning. In Proceedings of the 33rd International Conference on Machine Learning. PMLR: Vol. 48, pp. 1050–1059. Available online: https://proceedings.mlr.press/v48/gal16.html.

- Gazzaniga, M. S., ed. 2000. The new cognitive neurosciences, 2nd ed. MIT Press.

- Hochreiter, S., and J. Schmidhuber. 1997. Flat minima. Neural Computation 9, 1: 1–42.

- IAAR-Shanghai. 2024. Awesome-attention-heads: A curated list of research on interpretability of LLM attention heads [Software repository]. GitHub. Available online: https://github.com/IAAR-Shanghai/Awesome-Attention-Heads.

- IBM Research. 2025. LogitScope: Token-level entropy and varentropy metrics for production LLM monitoring. arXiv. Available online: https://arxiv.org/abs/2603.24929.

- Ji, Z., N. Lee, R. Frieske, T. Yu, D. Su, Y. Xu, and P. Fung. 2023. Survey of hallucination in natural language generation. ACM Computing Surveys 55, 12: 1–38.

- Joshi, M., E. Choi, D. Weld, and L. Zettlemoyer. 2017. TriviaQA: A large scale distantly supervised challenge dataset for reading comprehension. In Proceedings of the 55th Annual Meeting of the Association for Computational Linguistics. ACL: pp. 1601–1611.

- Kadavath, S., T. Conerly, A. Askell, T. Henighan, D. Drain, and E. Perez. 2022. Language models (mostly) know what they know. arXiv. Available online: https://arxiv.org/abs/2207.05221.

- Kriegeskorte, N., M. Mur, and P. Bandettini. 2008. Representational similarity analysis: Connecting the branches of systems neuroscience. Frontiers in Systems Neuroscience 2: Article 4.

- Kuhn, L., Y. Gal, and S. Farquhar. 2023. Semantic uncertainty: Linguistic invariances for uncertainty estimation in natural language generation. Proceedings of the 11th International Conference on Learning Representations; OpenReview. Available online: https://openreview.net/forum?id=VD-AYtP0dve.

- Kumaran, D., A. Conmy, F. Barbero, S. Osindero, V. Patraucean, and P. Veličković. 2026. How do LLMs compute verbal confidence? arXiv. Available online: https://arxiv.org/abs/2603.17839.

- Li, Z., Y. Xu, and Y. Liu. 2025. From passive metric to active signal: The evolving role of uncertainty quantification in large language models. arXiv. Available online: https://arxiv.org/abs/2601.15690.

- Malinin, A., and M. Gales. 2021. Uncertainty estimation in autoregressive structured prediction. Proceedings of the 9th International Conference on Learning Representations; OpenReview. Available online: https://openreview.net/forum?id=jN5y-zb5Q7m.

- Manakul, P., A. Liusie, and M. J. F. Gales. 2023. SelfCheckGPT: Zero-resource black-box hallucination detection for generative large language models. In Proceedings of the 2023 Conference on Empirical Methods in Natural Language Processing. ACL: pp. 9004–9017.

- Maynez, J., S. Narayan, B. Bohnet, and R. McDonald. 2020. On faithfulness and factuality in abstractive summarization. In Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics. ACL: pp. 1906–1919.

- Miao, M. M., and L. Ungar. 2026. Closing the confidence–faithfulness gap in large language models. arXiv. Available online: https://arxiv.org/abs/2603.25052.

- Mindlin, I., I. Rahwan, and J.-F. Bonnefon. 2025. Fast, slow, and metacognitive thinking in artificial intelligence. npj Artificial Intelligence 2: Article 12.

- Olsson, C., N. Elhage, N. Nanda, N. Joseph, N. DasSarma, and C. Olah. 2022. In-context learning and induction heads. Transformer Circuits Thread. Available online: https://transformer-circuits.pub/2022/in-context-learning-and-induction-heads/index.html.

- Steyvers, M., and M. A. K. Peters. 2025. Metacognition and uncertainty communication in humans and large language models. Current Directions in Psychological Science 34, 2: 89–97.

- Tononi, G., O. Sporns, and G. M. Edelman. 1994. A measure for brain complexity: Relating functional segregation and integration in the nervous system. Proceedings of the National Academy of Sciences 91, 11: 5033–5037.

- Turner, A., L. Thiergart, D. Udell, J. Leike, J. Wu, and M. MacDiarmid. 2023. Activation addition: Steering language models without optimization. arXiv. Available online: https://arxiv.org/abs/2308.10248.

- Wen, B., S. Peng, J. Tang, and Y. Liu. 2025. Attention heads of large language models: A survey. Patterns 6, 2: Article 100988.

- Xiong, M., Z. Hu, X. Lu, Y. Li, J. Fu, J. He, and B. Hooi. 2024. Can LLMs express their uncertainty? An empirical evaluation of confidence elicitation in large language models. Proceedings of the 12th International Conference on Learning Representations; OpenReview. Available online: https://openreview.net/forum?id=gjeQKFxFpZ.

- Zhao, B., R. Walters, and R. Yu. 2025. Symmetry in neural network parameter spaces. Transactions on Machine Learning Research. Available online: https://arxiv.org/abs/2506.13018.

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).