Submitted: 01 April 2026

Posted: 02 April 2026


Abstract

Keywords:

1. Introduction

2. Related Work

3. Materials and Methods

3.1. Datasets

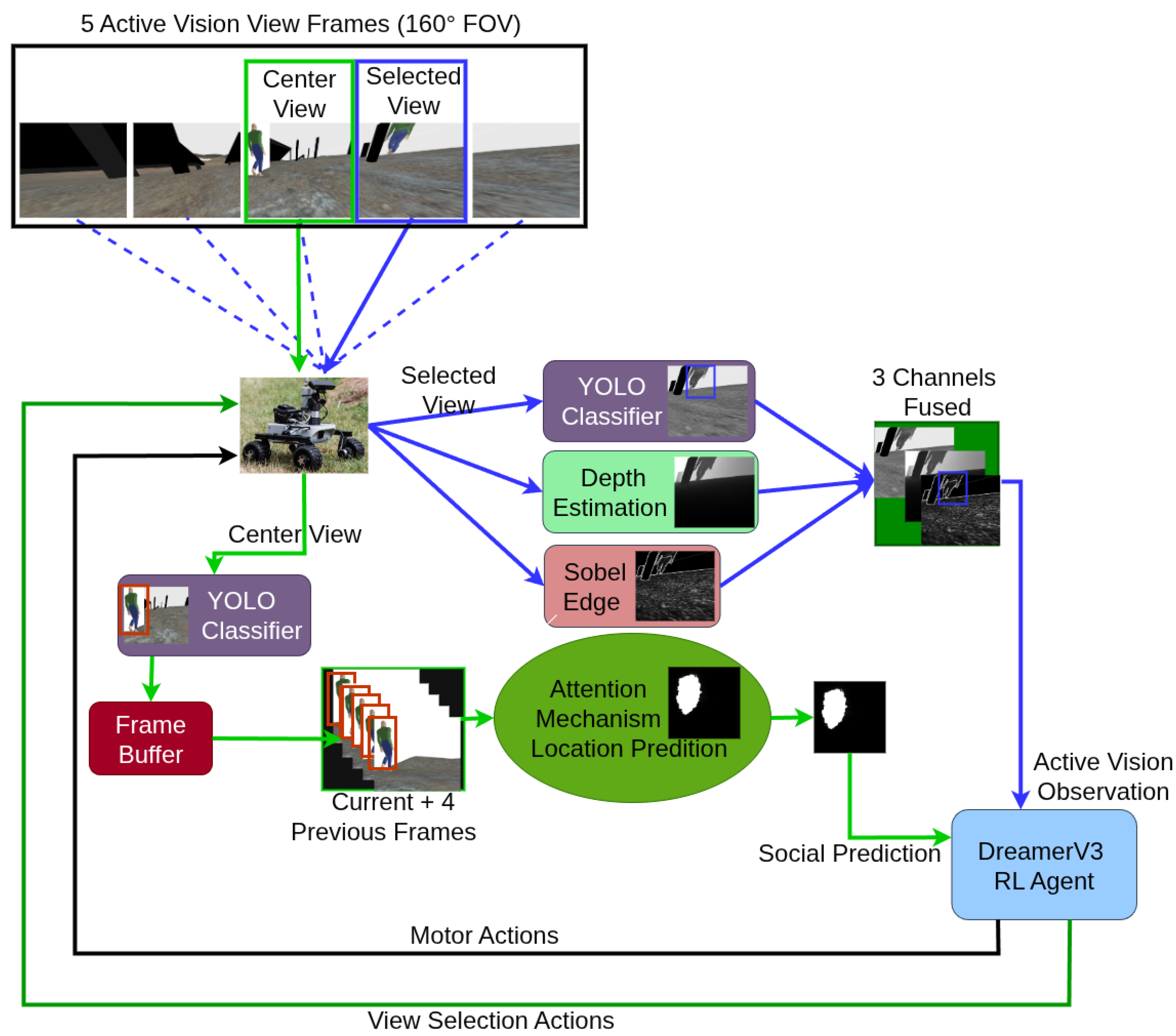

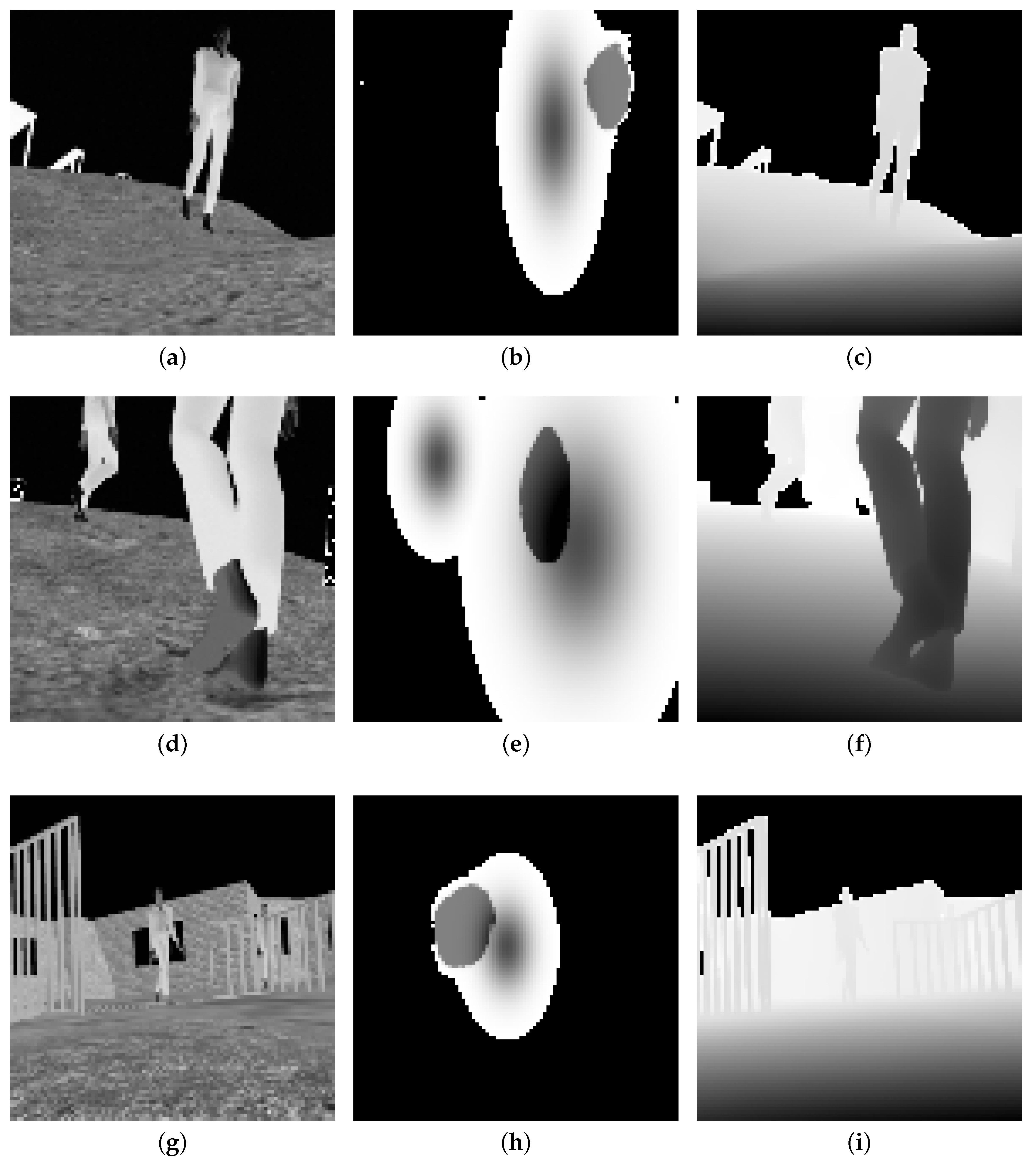

3.2. Spatiotemporal Attention Mechanism

3.3. Reinforcement Learning

3.3.1. RL Agent Reward Design

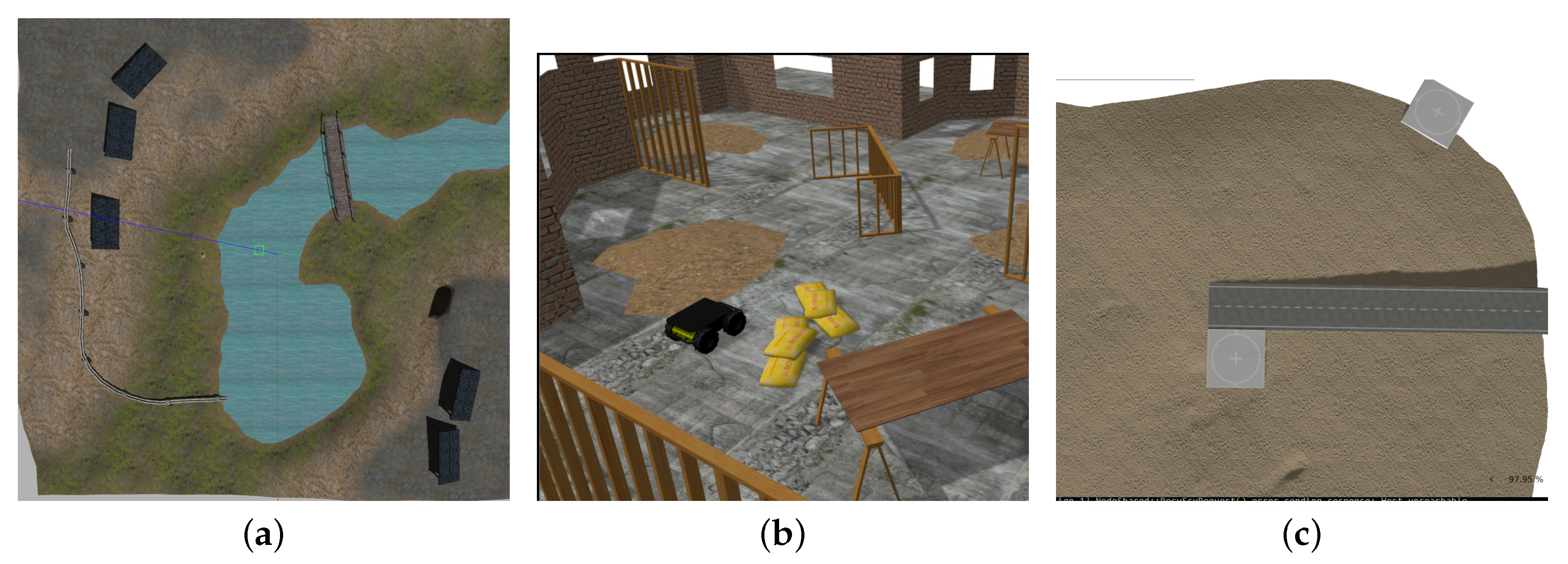

3.3.2. Environment Configuration

3.3.3. Multi-Process Architecture Integration

3.3.4. RL Agent Image Observation Space Design

- Channel 1: Grayscale image with masks. The current RGB frame is converted to luminance and normalised to . Pixels inside the YOLO pedestrian bounding boxes are overwritten with the constant value to emphasize occupied regions.

- Channel 2: Human traversability heat-map predicted by the attention model; values are logit-scaled to .

- Channel 3: Depth map acquired from a simulated Intel RealSense at 30 Hz, clipped to 0–10 m and linearly mapped to .
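The three channels listed above can be assembled roughly as in the following sketch. It is only an illustration under stated assumptions: the target ranges and the mask constant are not recoverable from the text, so a [0, 1] range and `mask_value=1.0` are assumed here, the heat-map is taken as already scaled, and the function and parameter names (`build_observation`, `mask_value`, `max_depth_m`) are hypothetical.

```python
import numpy as np

def build_observation(rgb, boxes, heatmap, depth_m, mask_value=1.0, max_depth_m=10.0):
    """Stack the three channels into an (H, W, 3) float32 array.

    rgb:      (H, W, 3) uint8 frame
    boxes:    iterable of (x1, y1, x2, y2) pedestrian boxes in pixel coordinates
    heatmap:  (H, W) traversability heat-map, assumed to be scaled already
    depth_m:  (H, W) depth in metres
    """
    # Channel 1: luminance (BT.601 weights), normalised to an ASSUMED [0, 1] range.
    weights = np.array([0.299, 0.587, 0.114], dtype=np.float32)
    gray = rgb.astype(np.float32) @ weights / 255.0
    # Overwrite pixels inside each pedestrian box with a constant (value is an assumption).
    for x1, y1, x2, y2 in boxes:
        gray[y1:y2, x1:x2] = mask_value

    # Channel 2: heat-map, only clipped here; the scaling step itself is not reproduced.
    heat = np.clip(heatmap.astype(np.float32), 0.0, 1.0)

    # Channel 3: depth clipped to 0-10 m, then mapped linearly to the assumed [0, 1] range.
    depth = np.clip(depth_m.astype(np.float32), 0.0, max_depth_m) / max_depth_m

    return np.stack([gray, heat, depth], axis=-1)
```

Keeping all channels in one common range lets a single convolutional encoder consume the stacked image without per-channel rescaling, which is one plausible reason for the normalisation described above.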

3.4. Perception Pipeline

1. YOLO inference produces person detections, which are rendered as thick bounding-box borders that survive downsampling.

2. Monocular depth inference produces a geometry channel from the same selected view.

3. Sobel edge magnitude provides a lightweight structural signal that preserves obstacle boundaries and traversability-relevant texture at low resolution.

- Fused image: the stacked outputs of YOLO/Sobel, depth, and grayscale from the selected rectified view.

- Heatmap-derived vector: row and column sums of the center-view attention heatmap.

- View token: the active window index.

- IMU: orientation from the onboard inertial measurement unit.

- Target: goal-relative features for PointNav.

- Velocities: current wheel velocities.
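A minimal NumPy sketch of three of the pieces named above (Sobel edge magnitude, thick bounding-box borders, and the heatmap-derived vector) is given here for orientation. It is not the authors' implementation: the helper names, the border thickness, and the fill value are hypothetical, and boxes are assumed to be `(x1, y1, x2, y2)` pixel coordinates.

```python
import numpy as np

def sobel_magnitude(gray):
    """Sobel gradient magnitude of a 2-D image (edge-padded, same shape as input)."""
    p = np.pad(gray.astype(np.float32), 1, mode="edge")
    # Horizontal gradient: right column minus left column, weighted 1-2-1.
    gx = (p[:-2, 2:] + 2 * p[1:-1, 2:] + p[2:, 2:]) - (p[:-2, :-2] + 2 * p[1:-1, :-2] + p[2:, :-2])
    # Vertical gradient: bottom row minus top row, weighted 1-2-1.
    gy = (p[2:, :-2] + 2 * p[2:, 1:-1] + p[2:, 2:]) - (p[:-2, :-2] + 2 * p[:-2, 1:-1] + p[:-2, 2:])
    return np.hypot(gx, gy)

def draw_box_borders(canvas, boxes, thickness=3, value=1.0):
    """Render each box as a thick border (thickness and value are assumptions)."""
    out = canvas.copy()
    t = thickness
    for x1, y1, x2, y2 in boxes:
        # Interior slice bounds; they collapse to empty for boxes thinner than 2*t.
        ys, ye = y1 + t, max(y1 + t, y2 - t)
        xs, xe = x1 + t, max(x1 + t, x2 - t)
        inner = out[ys:ye, xs:xe].copy()
        out[y1:y2, x1:x2] = value      # fill the whole box ...
        out[ys:ye, xs:xe] = inner      # ... then restore the interior
    return out

def heatmap_vector(heatmap):
    """Concatenate row sums and column sums of an (H, W) heatmap into a vector of length H + W."""
    return np.concatenate([heatmap.sum(axis=1), heatmap.sum(axis=0)])
```

Drawing borders several pixels wide, rather than one pixel, is what allows the detections to remain visible after the image is downsampled for the policy input.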

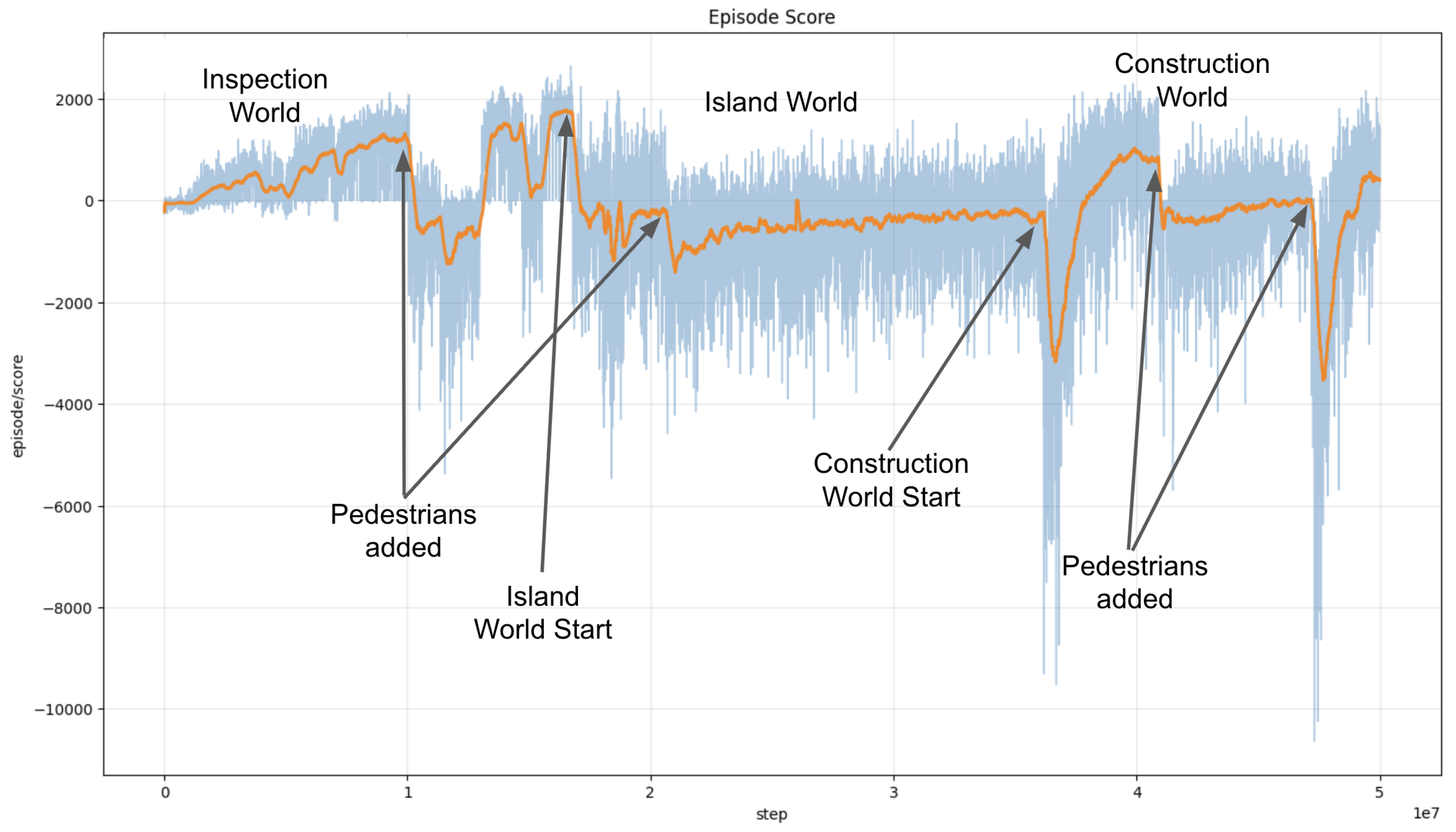

3.5. Curriculum Training

3.6. Comparison Method: NAV2

4. Results

5. Conclusions

Author Contributions

Funding

Institutional Review Board Statement

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| Abbreviation | Definition |
|---|---|
| CNN | Convolutional Neural Network |
| FOV | Field of View |
| LSTM | Long Short-Term Memory |
| MPC | Model Predictive Control |
| RL | Reinforcement Learning |
| YOLO | You Only Look Once |


| Condition | <0.5 m | <0.8 m | <1.2 m | Goals | Goals per <0.5 m | Goals per all <0.8 m |
|---|---|---|---|---|---|---|
| Construction | | | | | | |
| Nav2 (forward) | 92 | 65 | 124 | 278 | 3.02 | 1.77 |
| Nav2 (w/reverse) | 97 | 61 | 138 | 320 | 3.30 | 2.03 |
| YOLO+Depth | 14 | 17 | 77 | 142 | 10.14 | 4.58 |
| No Social Nav | 64 | 88 | 168 | 284 | 4.44 | 1.87 |
| Attention (ours) | 5 | 11 | 54 | 104 | 20.8 | 6.50 |
| Active Vision (ours) | 3 | 13 | 59 | 153 | 51 | 9.56 |
| Island | | | | | | |
| Nav2 (forward) | 192 | 213 | 245 | 485 | 2.53 | 1.20 |
| Nav2 (w/reverse) | 236 | 217 | 261 | 597 | 2.53 | 1.32 |
| YOLO+Depth | 20 | 40 | 137 | 440 | 22 | 7.33 |
| No Social Nav | 214 | 208 | 247 | 531 | 2.48 | 1.26 |
| Attention (ours) | 13 | 46 | 139 | 448 | 34.46 | 7.59 |
| Active Vision (ours) | 11 | 45 | 186 | 537 | 48.82 | 9.59 |
| Inspection | | | | | | |
| Nav2 (forward) | 206 | 250 | 239 | 196 | 0.95 | 0.43 |
| Nav2 (w/reverse) | 196 | 211 | 283 | 197 | 1.01 | 0.48 |
| YOLO+Depth | 77 | 84 | 123 | 361 | 4.69 | 2.24 |
| No Social Nav | 108 | 108 | 129 | 439 | 4.06 | 2.03 |
| Attention (ours) | 54 | 74 | 121 | 324 | 6 | 2.53 |
| Active Vision (ours) | 29 | 39 | 126 | 293 | 10.10 | 4.31 |
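The two rightmost columns of the table above are numerically consistent with dividing Goals by the <0.5 m count, and by the sum of the <0.5 m and <0.8 m counts, respectively. This reading is inferred from the reported numbers rather than stated in the table, and the function name below is hypothetical. A short sketch of the arithmetic:

```python
def goals_per_encounter(goals, count_0_5, count_0_8):
    """Return (goals per <0.5 m encounter, goals per encounter over both columns).

    Assumes, as the reported values suggest, that the "<0.8 m" column is added
    to the "<0.5 m" column to obtain "all <0.8 m".
    """
    per_close = round(goals / count_0_5, 2)
    per_all = round(goals / (count_0_5 + count_0_8), 2)
    return per_close, per_all

# Construction, Nav2 (forward): 92 and 65 encounters, 278 goals.
print(goals_per_encounter(278, 92, 65))   # (3.02, 1.77)
# Island, Active Vision (ours): 11 and 45 encounters, 537 goals.
print(goals_per_encounter(537, 11, 45))   # (48.82, 9.59)
```

Higher values mean more goals reached per close encounter, so the ratio rewards both goal throughput and pedestrian avoidance at once.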

| Social Encounters per Goal ( m) | Difference | d | p | Significance |
|---|---|---|---|---|
| Construction World | | | | |
| Attention vs YOLO+Depth | -0.0808 | -0.68 | 0.26 | |
| Attention vs No Social Nav | -0.2107 | -1.14 | 0.002 | ** |
| Attention vs Nav2 (w/reverse) | -0.3016 | -1.10 | 0.008 | ** |
| Active Vision vs Attention | -0.0300 | -0.65 | 0.26 | |
| Active Vision vs No Social Nav | -0.2407 | -1.39 | <0.001 | *** |
| Active Vision vs Nav2 (w/reverse) | -0.3316 | -1.28 | <0.001 | *** |
| Island World | | | | |
| Attention vs YOLO+Depth | -0.0216 | -0.28 | 0.71 | |
| Attention vs No Social Nav | -0.4262 | -2.57 | <0.001 | *** |
| Attention vs Nav2 (w/reverse) | -0.3874 | -1.90 | <0.001 | *** |
| Active Vision vs Attention | -0.0045 | -0.08 | 0.71 | |
| Active Vision vs No Social Nav | -0.4308 | -2.72 | <0.001 | *** |
| Active Vision vs Nav2 (w/reverse) | -0.3919 | -2.00 | <0.001 | *** |
| Inspection World | | | | |
| Attention vs YOLO+Depth | -0.0684 | -0.60 | 0.01 | * |
| Attention vs No Social Nav | -0.1103 | -0.66 | 0.01 | * |
| Attention vs Nav2 (w/reverse) | -0.3734 | -1.72 | <0.001 | *** |
| Active Vision vs Attention | -0.0722 | -0.63 | 0.01 | * |
| Active Vision vs No Social Nav | -0.1825 | -1.11 | <0.001 | *** |
| Active Vision vs Nav2 (w/reverse) | -0.4456 | -2.04 | <0.001 | *** |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).