Submitted:

31 March 2026

Posted:

01 April 2026

Abstract

Keywords:

1. Introduction

Main Objective

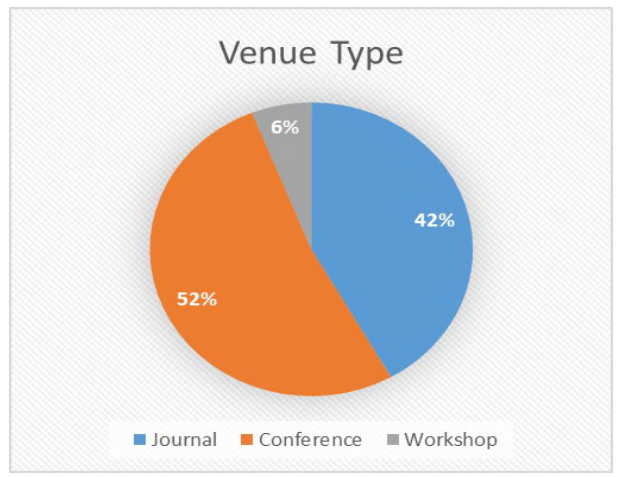

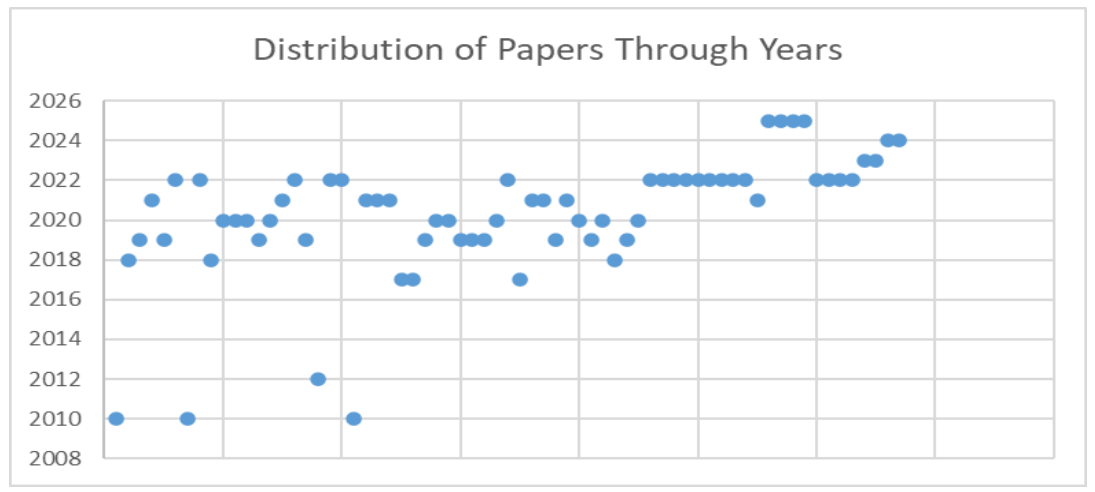

- Identify the most valuable publication venues for papers on unfairness detection in machine learning-based systems (MLS).

- Explore different software fairness definitions.

- Recognize types of addressed problems (detection, analysis, or evaluation).

- Find approaches for fairness testing (algorithms or tools) and explore fairness testing levels in MLS.

- Provide researchers with datasets, algorithms, and models for detecting unfairness in MLS and explain the reasons behind their biases.

- Investigate the gaps in software fairness in MLS research topics.

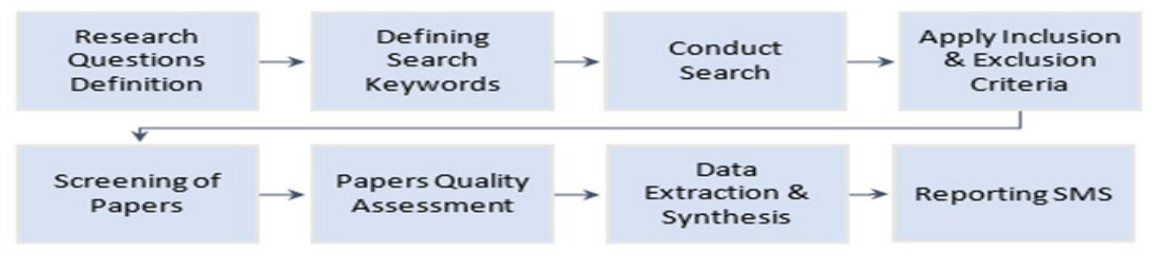

2. Research Methodology

2.1. Methods Overview

- Population: Machine learning-based systems, artificial intelligence, fairness techniques, methods, models, and bias.

- Intervention: Software engineering, software unfairness detection.

- Comparison: Research publication statistics, fairness approaches, fairness testing levels, evaluation metrics, solutions, biased algorithms, and datasets.

- Outcomes: Software fairness definitions and techniques used in MLS, fairness testing levels, biased algorithms and datasets, solutions for mitigating unfairness, and identification of research gaps.

- Context: Research content relevant to both academia and industry.

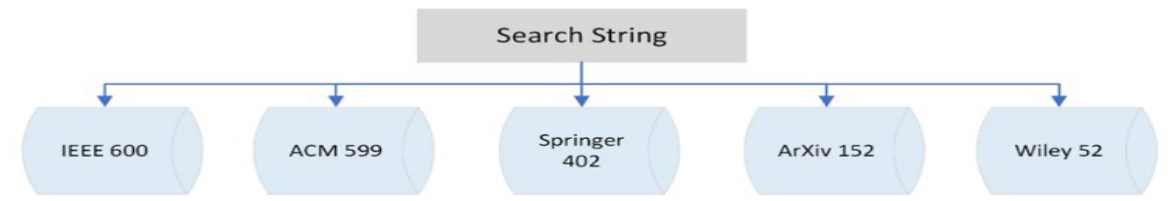

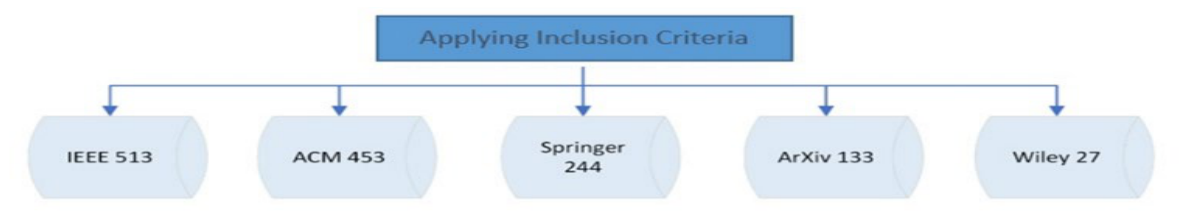

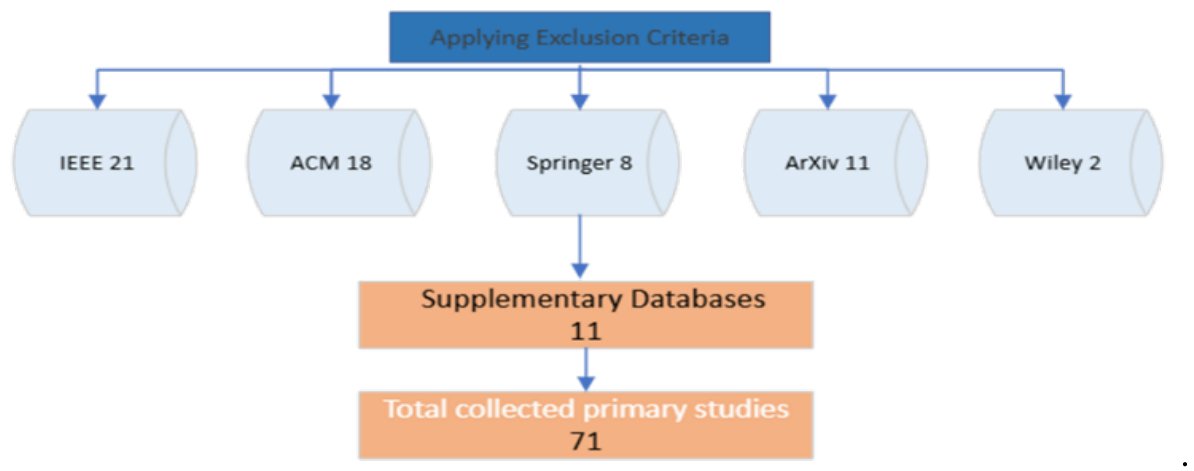

2.2. Search Strategy

2.3. Narrative for Study Screening and Selection

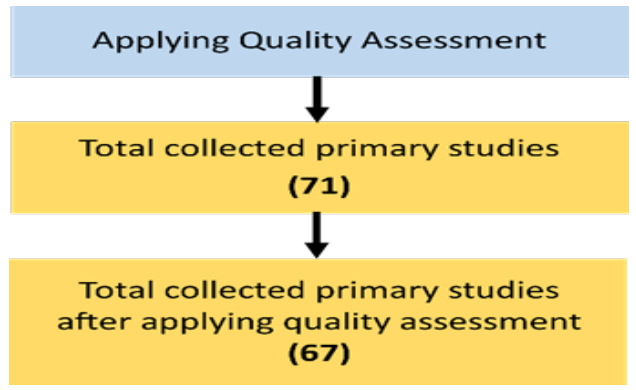

2.4. Quality Assessment and Data Analysis

- Does the study specify the goal of the research?

- Does the study propose a solution for unfairness detection in MLS?

- Does the study evaluate the proposed idea?

2.5. Data Extraction

2.6. Threats to Validity

3. Background

3.1. Machine Learning-Based Systems and Deep Learning

3.2. Deep Learning Tasks and Architectures

3.3. ML and DL Tasks in Data Mining

3.4. Types of Datasets

3.5. Software Fairness

3.6. Testing Fairness in MLS

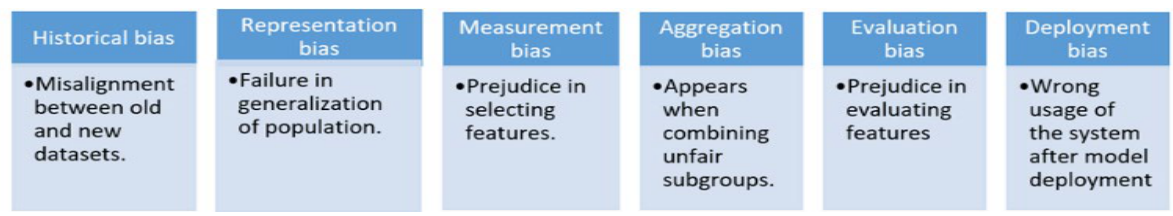

3.7. Fairness and Bias in MLS

4. Results and Discussion

| Venue | Study ID | Publisher |

| Journal of Data and Information Quality | PS1 | ACM |

| Journal on Emerging Technologies in Computing Systems | PS3 | ACM |

| Journal of Computing Sciences in Colleges | PS4 | ACM |

| Journal of Artificial Intelligence Research | PS6 | JAIR |

| IEEE Transactions on Industrial Informatics | PS8 | IEEE |

| Journal of Machine Learning Research | PS13 | JMLR |

| Neural Computing and Applications | PS20, PS28 | Springer |

| Data Mining and Knowledge Discovery | PS21, PS57 | Springer |

| Data Science and Engineering | PS23 | Springer |

| International Journal of Data Science and Analytics | PS24 | Springer |

| DBLP CoRR journal | PS26, PS60 | DBLP |

| Knowledge-Based Systems | PS36 | Elsevier |

| International Journal of Intelligent Systems | PS37 | Wiley |

| IEEE Intelligent Systems | PS40 | IEEE |

| Algorithms | PS44 | MDPI |

| Advances in Neural Information Processing Systems | PS45 | NeurIPS |

| IEEE Transactions on Software Engineering | PS55 | IEEE |

| International Journal of Crowd Science | PS58 | DBLP |

| ACM Transactions on Software Engineering and Methodology | PS59 | ACM |

| Journal of Technology in Human Services | PS63 | DBLP |

| Electronics | PS64 | MDPI |

| Expert Systems | PS66 | Wiley |

| ACM Transactions on Knowledge Discovery from Data | PS67 | ACM |

| Venue | Study ID | Publisher |

| The World Wide Web Conference | PS2, PS5 | ACM |

| International Conference on Computer, Control, and Communication | PS7 | IEEE |

| IEEE/ACM International Workshop on Software Fairness | PS9 | IEEE/ACM |

| IEEE International Symposium on Technology and Society | PS10 | IEEE |

| IEEE TrustCom 2020 | PS11 | IEEE |

| IEEE Conference on Decision and Control | PS12 | IEEE |

| International Conference on Testing Software and Systems | PS14, PS17 | Springer |

| International Conference on the Quality of Information and Communications Technology | PS15 | Springer |

| International Conference on Computer-Aided Verification | PS16 | Springer |

| Joint European Conference on Machine Learning and Knowledge Discovery in Databases | PS18 | Springer |

| Companion Proceedings of the Web Conference 2021 | PS22 | ACM |

| Proceedings of the 23rd ACM SIGKDD | PS25 | ACM |

| Proceedings of the Conference on Fairness, Accountability, and Transparency | PS27, PS30, PS38 | ACM |

| Proceedings of the AAAI/ACM Conference on AI | PS29 | ACM |

| ACL Workshop on Gender Bias for Natural Language Processing | PS31 | ACL |

| European Software Engineering Conference and Symposium on the Foundations of Software Engineering | PS32 | ACM |

| ACM Conference (Conference’17) | PS33 | ACM |

| Annual Meeting of the Association for Computational Linguistics | PS34 | ACL |

| INNS Big Data and Deep Learning Conference | PS35 | Springer |

| Proceedings of the 36th International Conference on Machine Learning | PS41, PS42 | PMLR |

| AAAI Conference on Artificial Intelligence | PS43 | AAAI |

| International Conference on Software Engineering | PS46, PS47, PS51, PS52, PS54, PS56 | IEEE/ACM |

| International Workshop on Equitable Data and Technology | PS48 | ACM |

| IEEE International Conference on Software Testing, Verification, and Validation Workshop | PS49 | IEEE |

| ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering | PS50 | ACM |

| IEEE/ACM 7th International Workshop on Metamorphic Testing | PS53 | IEEE/ACM |

| ACM SIGSOFT International Symposium on Software Testing and Analysis | PS61 | ACM |

| International Conference on Evaluation and Assessment in Software Engineering | PS65 | EASE |

| Venue | Study ID | Publisher |

| Empirical Software Engineering | PS19, PS62 | Springer |

5. Related Work

6. Conclusions

Author Contributions

Funding

Data Availability Statement

Acknowledgments

Conflicts of Interest

Abbreviations

| ML | Machine Learning |

| DL | Deep Learning |

| NN | Neural Network |

| DNN | Deep Neural Network |

| CNN | Convolutional Neural Network |

| RNN | Recurrent Neural Network |

| LSTM | Long Short-Term Memory |

| NLP | Natural Language Processing |

| BERT | Bidirectional Encoder Representations from Transformers |

| GPT | Generative Pre-trained Transformer |

Appendix A: Quality Assurance

| ID | Reference | Title | QA1 | QA2 | QA3 | Score | ID after QA |

| S1 | Tremblay, Monica Chiarini, Kaushik Dutta, and Debra Vandermeer. Journal of Data and Information Quality (JDIQ) 2.1 ACM (2010) | Using data mining techniques to discover bias patterns in missing data. | 1 | 1 | 1 | 3 | PS1 |

| S2 | Krasanakis, Emmanouil, et al. Proceedings of the 2018 World Wide Web Conference. ACM 2018. | Adaptive sensitive reweighting to mitigate bias in fairness-aware classification | 1 | 1 | 0.5 | 2.5 | PS2 |

| S3 | Wang, Weijia, and Bill Lin. ACM Journal on Emerging Technologies in Computing Systems (JETC) 15.2 (2019): 1-17. | Trained biased number representation for ReRAM-based neural network accelerators. | 1 | 1 | 1 | 3 | PS3 |

| S4 | Thambawita, Vajira, et al. ACM Transactions on Computing for Healthcare 1.3 (2020): 1-29. | An extensive study on cross-dataset bias and evaluation metrics interpretation for machine learning applied to gastrointestinal tract abnormality classification. | 1 | 0.5 | 0 | 1.5 | |

| S5 | Amend, Jack J., and Scott Spurlock. Journal of Computing Sciences in Colleges ACM 36.5 (2021): 14-23. | Improving machine learning fairness with sampling and adversarial learning. | 1 | 1 | 0.5 | 2.5 | PS4 |

| S6 | Baniecki, Hubert, et al. The Journal of Machine Learning Research 22.1 (2021): 9759-9765. | dalex: Responsible machine learning with interactive explainability and fairness in Python. | 1 | 0.5 | 0 | 1.5 | |

| S7 | Wu, Yongkai, Lu Zhang, and Xintao Wu. The World Wide Web Conference. ACM 2019. | On convexity and bounds of fairness-aware classification. | 1 | 1 | 0.5 | 2.5 | PS5 |

| S8 | Caton, Simon, Saiteja Malisetty, and Christian Haas. Journal of Artificial Intelligence Research 74 (2022): 1011-1035. | Impact of Imputation Strategies on Fairness in Machine Learning. | 1 | 1 | 1 | 3 | PS6 |

| S9 | Kamiran, Faisal, and Toon Calders. 2009 2nd International Conference on Computer, Control, and Communication. IEEE, 2009. | Classifying without discriminating. | 1 | 1 | 0.5 | 2.5 | PS7 |

| S10 | DeBrusk, Chris. MIT Sloan Management Review (2018). | The risk of machine-learning bias (and how to prevent it). | 1 | 0.5 | 0 | 1.5 | |

| S11 | Zhou, Xiaokang, et al. IEEE Transactions on Industrial Informatics (2022). | Distribution Bias Aware Collaborative Generative Adversarial Network for Imbalanced Deep Learning in Industrial IoT. | 1 | 0.5 | 0.5 | 2 | PS8 |

| S12 | Verma, Sahil, and Julia Rubin. 2018 IEEE/ACM International Workshop on Software Fairness (FairWare). IEEE, 2018. | Fairness definitions explained. | 1 | 1 | 0 | 2 | PS9 |

| S13 | Kiemde, Sountongnoma Martial Anicet, and Ahmed Dooguy Kora. 2020. | Fairness of Machine Learning Algorithms for the Black Community | 1 | 0.5 | 0.5 | 2 | PS10 |

| S14 | Xie, Wentao, and Peng Wu. 2020. | Fairness Testing of Machine Learning Models Using Deep Reinforcement Learning | 1 | 1 | 1 | 3 | PS11 |

| S15 | Olfat, Matt, and Yonatan Mintz. 2020. | Flexible Regularization Approaches for Fairness in Deep Learning | 1 | 1 | 0.5 | 2.5 | PS12 |

| S16 | Zafar, Muhammad Bilal, et al. The Journal of Machine Learning Research 20.1 (2019): 2737-2778. | Fairness constraints: A flexible approach for fair classification. | 1 | 1 | 1 | 3 | PS13 |

| S17 | Sharma, Arnab, and Heike Wehrheim. IFIP International Conference on Testing Software and Systems. Springer, Cham, 2020. | Automatic fairness testing of machine learning models. | 1 | 1 | 1 | 3 | PS14 |

| S18 | Villar, David, and Jorge Casillas. International Conference on the Quality of Information and Communications Technology. Springer, Cham, 2021. | Facing Many Objectives for Fairness in Machine Learning. | 1 | 0.5 | 1 | 2.5 | PS15 |

| S19 | Guan, Ji, Wang Fang, and Mingsheng Ying. International Conference on Computer Aided Verification. Springer, Cham, 2022. | Verifying Fairness in Quantum Machine Learning. | 1 | 1 | 1 | 3 | PS16 |

| S20 | Shin Nakajima and Tsong Yueh Chen (2019) | Generating Biased Dataset for Metamorphic Testing of Machine Learning Programs | 1 | 0.5 | 0.5 | 2 | PS17 |

| S21 | Kamishima, Toshihiro, et al. Joint European Conference on Machine Learning and Knowledge Discovery in Databases. Springer, Berlin, Heidelberg, 2012. | Fairness-aware classifier with prejudice remover regularizer. | 1 | 1 | 0.5 | 2.5 | PS18 |

| S22 | Perera, Anjana, et al. 2022 | Search-based fairness testing for regression-based machine learning systems. | 1 | 1 | 1 | 3 | PS19 |

| S23 | Tian, Huan, et al. Neural Computing and Applications (2022): 1-19. | Image fairness in deep learning: problems, models, and challenges. | 1 | 0.5 | 0.5 | 2 | PS20 |

| S24 | Calders, Toon, and Sicco Verwer. Data Mining and Knowledge Discovery 21.2 (2010): 277-292. | Three naive Bayes approaches for discrimination-free classification. | 1 | 1 | 0.5 | 2.5 | PS21 |

| S25 | Sun, Haipei, et al. Companion Proceedings of the Web Conference 2021. 2021. | Automating fairness configurations for machine learning. | 1 | 0.5 | 1 | 2.5 | PS22 |

| S26 | Abraham, Savitha Sam. Data Science and Engineering 6.4 (2021): 485-499. | FairLOF: Fairness in Outlier Detection. | 1 | 1 | 1 | 3 | PS23 |

| S27 | Wang, Yanchen, and Lisa Singh. International Journal of Data Science and Analytics 12.2 (2021): 101-119. | Analyzing the impact of missing values and selection bias on fairness. | 1 | 1 | 1 | 3 | PS24 |

| S28 | Corbett-Davies, Sam, et al. Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining. 2017. | Algorithmic decision making and the cost of fairness. | 1 | 1 | 0.5 | 2.5 | PS25 |

| S29 | Berk, Richard, et al. arXiv preprint arXiv:1706.02409 (2017). | A convex framework for fair regression. | 1 | 1 | 0.5 | 2.5 | PS26 |

| S30 | Celis, L. Elisa, et al. Proceedings of the Conference on Fairness, Accountability, and Transparency. 2019. | Classification with fairness constraints: A meta-algorithm with provable guarantees. | 1 | 1 | 1 | 3 | PS27 |

| S31 | Prates, Marcelo OR, Pedro H. Avelar, and Luís C. Lamb. Neural Computing and Applications 32.10 (2020): 6363-6381. | Assessing gender bias in machine translation: a case study with Google Translate. | 1 | 1 | 1 | 3 | PS28 |

| S32 | Fazelpour, Sina, and Zachary C. Lipton. Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society. 2020. | Algorithmic Fairness from a Non-ideal Perspective | 1 | 1 | 0.5 | 2.5 | PS29 |

| S33 | Hutchinson, Ben, and Margaret Mitchell. Proceedings of the Conference on Fairness, Accountability, and Transparency. 2019. | 50 years of test (un)fairness: Lessons for machine learning. | 1 | 0.5 | 0.5 | 2 | PS30 |

| S34 | Font, Joel Escudé, and Marta R. Costa-Jussa. arXiv preprint arXiv:1901.03116 (2019). | Equalizing gender biases in neural machine translation with word embedding techniques. | 1 | 1 | 1 | 3 | PS31 |

| S35 | Aggarwal, Aniya, et al. Proceedings of the 2019 27th ACM Joint Meeting on European Software Engineering Conference and Symposium on the Foundations of Software Engineering. 2019. | Black box fairness testing of machine learning models. | 1 | 1 | 1 | 3 | PS32 |

| S36 | Jones, Gareth P., et al. arXiv preprint arXiv:2010.03986 (2020). | Metrics and methods for a systematic comparison of fairness-aware machine learning algorithms. | 1 | 1 | 0.5 | 2.5 | PS33 |

| S37 | Krishna, Satyapriya, et al. arXiv preprint arXiv:2203.08670 (2022). | Measuring Fairness of Text Classifiers via Prediction Sensitivity. | 1 | 1 | 0.5 | 2.5 | PS34 |

| S38 | Oneto, Luca, and Silvia Chiappa. Recent Trends in Learning from Data: Tutorials from the INNS Big Data and Deep Learning Conference (INNSBDDL2019). Springer, 2020. | Fairness in machine learning. | 1 | 0.5 | 0.5 | 2 | PS35 |

| S39 | Varley, Michael, and Vaishak Belle. Knowledge-Based Systems 215 (2021): 106715. | Fairness in machine learning with tractable models. | 1 | 1 | 0.5 | 2.5 | PS36 |

| S40 | Valdivia, Ana, Javier Sánchez-Monedero, and Jorge Casillas. International Journal of Intelligent Systems 36.4 (2021): 1619-1643. | How fair can we go in machine learning? Assessing the boundaries of accuracy and fairness. | 1 | 1 | 1 | 3 | PS37 |

| S41 | Friedler, Sorelle A., et al. Proceedings of the conference on fairness, accountability, and transparency. 2019. | A comparative study of fairness-enhancing interventions in machine learning. | 1 | 1 | 0.5 | 2.5 | PS38 |

| S42 | Ferrari, Elisa, and Davide Bacciu. arXiv preprint arXiv:2105.06345 (2021). | Addressing Fairness, Bias, and Class Imbalance in Machine Learning: the FBI-loss. | 1 | 1 | 1 | 3 | PS39 |

| S43 | Du, Mengnan, et al. IEEE Intelligent Systems 36.4 (2020): 25-34. | Fairness in deep learning: A computational perspective. | 1 | 0.5 | 0.5 | 2 | PS40 |

| S44 | Huang, Lingxiao, and Nisheeth Vishnoi. International Conference on Machine Learning. PMLR, 2019. | Stable and fair classification. | 1 | 0.5 | 0.5 | 2 | PS41 |

| S45 | Celis, L. Elisa, et al. International Conference on Machine Learning. PMLR, 2021. | Fair classification with noisy protected attributes: A framework with provable guarantees. | 1 | 1 | 0.5 | 2.5 | PS42 |

| S46 | Goel, Naman, Mohammad Yaghini, and Boi Faltings. Proceedings of the AAAI Conference on Artificial Intelligence. Vol. 32. No. 1. 2018. | Non-discriminatory machine learning through convex fairness criteria. | 1 | 1 | 0.5 | 2.5 | PS43 |

| S47 | Shrestha, Yash Raj, and Yongjie Yang. Algorithms 12.9 (2019): 199. | Fairness in algorithmic decision-making: Applications in multi-winner voting, machine learning, and recommender systems. | 1 | 0.5 | 0.5 | 2 | PS44 |

| S48 | Mandal, Debmalya, et al. Advances in neural information processing systems 33 (2020): 18445-18456. | Ensuring fairness beyond the training data. | 1 | 0.5 | 0.5 | 2 | PS45 |

| S49 | Gao, Xuanqi, et al. | FairNeuron: improving deep neural network fairness with adversary games on selective neurons. | 1 | 1 | 1 | 3 | PS46 |

| S50 | Tizpaz-Niari, Saeid, et al. | Fairness-aware configuration of machine learning libraries. | 1 | 1 | 0.5 | 2.5 | PS47 |

| S51 | Chakraborty, Joymallya, Suvodeep Majumder, and Huy Tu. | Fair-SSL: Building fair ML Software with less data. | 1 | 0.5 | 0.5 | 2 | PS48 |

| S52 | Patel, Ankita Ramjibhai, et al. | A combinatorial approach to fairness testing of machine learning models. | 1 | 1 | 1 | 3 | PS49 |

| S53 | Chen, Zhenpeng, et al. | MAAT: a novel ensemble approach to addressing fairness and performance bugs for machine learning software. | 1 | 0.5 | 1 | 2.5 | PS50 |

| S54 | Li, Yanhui, et al. | Training data debugging for the fairness of machine learning software. | 1 | 0.5 | 0.5 | 2 | PS51 |

| S55 | Fan, Ming, et al. | Explanation-guided fairness testing through genetic algorithm. | 1 | 1 | 0.5 | 2.5 | PS52 |

| S56 | Pu, Muxin, et al. | Fairness evaluation in deepfake detection models using metamorphic testing. | 1 | 1 | 1 | 3 | PS53 |

| S57 | Zheng, Haibin, et al. | NeuronFair: Interpretable white-box fairness testing through biased neuron identification. | 1 | 1 | 0.5 | 2.5 | PS54 |

| S58 | Zhang, Peixin, et al. | Automatic fairness testing of neural classifiers through adversarial sampling. | 1 | 0.5 | 0.5 | 2 | PS55 |

| S59 | Zhang, Peixin, et al. | White-box Fairness Testing through Adversarial Sampling | 1 | 0.5 | 1 | 2.5 | PS56 |

| S60 | Fabris, Alessandro, et al. | Algorithmic fairness datasets: the story so far. | 1 | 0.5 | 0.5 | 2 | PS57 |

| S61 | Zhang, Jiehuang, Ying Shu, and Han Yu. | Fairness in design: A framework for facilitating ethical artificial intelligence designs. | 1 | 0.5 | 0.5 | 2 | PS58 |

| S62 | Majumder, Suvodeep, et al. | Fair enough: Searching for sufficient measures of fairness. | 1 | 0.5 | 0.5 | 2 | PS59 |

| S63 | Wang, Zichong, et al. | Towards fair machine learning software: Understanding and addressing model bias through counterfactual thinking. | 1 | 1 | 1 | 3 | PS60 |

| S64 | Guo, Huizhong, et al. | FairRec: fairness testing for deep recommender systems. | 0.5 | 1 | 0.5 | 2 | PS61 |

| S65 | Hort, Max, et al. | Search-based automatic repair for fairness and accuracy in decision-making software. | 1 | 1 | 0.5 | 2.5 | PS62 |

| S66 | Bantilan, Niels. | Themis-ml: A fairness-aware machine learning interface for end-to-end discrimination discovery and mitigation. | 1 | 1 | 1 | 3 | PS63 |

| S67 | Ling, Jiasheng, et al. | Machine Learning-Based Multilevel Intrusion Detection Approach | 1 | 1 | 0.5 | 2.5 | PS64 |

| S68 | Nasiri, Roya. | Testing Individual Fairness in Graph Neural Networks | 1 | 0.5 | 0.5 | 2 | PS65 |

| S69 | Consuegra-Ayala et al. | Bias mitigation for fair automation of classification tasks. | 1 | 1 | 1 | 3 | PS66 |

| S70 | Xu, Paiheng, et al. | GFairHint: Improving Individual Fairness for Graph Neural Networks via Fairness Hint. | 1 | 1 | 1 | 3 | PS67 |

| S71 | Bahangulu et al. | Algorithmic bias, data ethics, and governance: Ensuring fairness, transparency, and compliance in AI-powered business analytics applications | 0.5 | 0.5 | 0.5 | 1.5 | |
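The scoring pattern visible in the table above can be sketched in Python. This is only an illustration under stated assumptions: each candidate study receives 0, 0.5, or 1 on each of QA1 to QA3, Score is their sum, and a study is promoted to a primary study when its score is at least 2. The threshold is inferred from the table (every study scoring 1.5 has no ID after QA) rather than stated in the text, and the names `Candidate`, `select_primary`, and `INCLUSION_THRESHOLD` are hypothetical.

```python
from dataclasses import dataclass

# Answer values that appear in the QA1-QA3 columns of the table.
ALLOWED_ANSWERS = {0.0, 0.5, 1.0}

# Assumed cut-off, inferred from the table: studies scoring 1.5 are dropped.
INCLUSION_THRESHOLD = 2.0


@dataclass(frozen=True)
class Candidate:
    """One candidate study with its three quality-assessment answers."""
    study_id: str
    qa1: float
    qa2: float
    qa3: float

    def score(self) -> float:
        """Return the total score as the sum of the three answers."""
        for answer in (self.qa1, self.qa2, self.qa3):
            if answer not in ALLOWED_ANSWERS:
                raise ValueError(f"unexpected QA answer: {answer}")
        return self.qa1 + self.qa2 + self.qa3


def select_primary(candidates, threshold=INCLUSION_THRESHOLD):
    """Map each retained candidate ID to a sequential primary-study ID."""
    primary = {}
    for candidate in candidates:
        if candidate.score() >= threshold:
            primary[candidate.study_id] = f"PS{len(primary) + 1}"
    return primary


# The first five rows of the table (S1 to S5) as sample input.
sample = [
    Candidate("S1", 1, 1, 1),
    Candidate("S2", 1, 1, 0.5),
    Candidate("S3", 1, 1, 1),
    Candidate("S4", 1, 0.5, 0),
    Candidate("S5", 1, 1, 0.5),
]
selected = select_primary(sample)
print(selected)
```

With these five rows, S4 falls below the assumed threshold and the remaining studies are numbered consecutively, which matches the "ID after QA" column for S1 to S5.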

Appendix B: Research Gaps Summary

| Category | Sub-category | Research Gap | Primary Study ID |

| 1. Fairness in Machine Learning | Fairness Metrics and Definitions | • Investigate incorporating fairness metrics into neural networks. • Measures for fairness. • Improve the definitions of fairness in data analysis. • Study other fairness metrics beyond individual fairness. • Much research relies on specific fairness metrics while ignoring others. | PS4, PS18, PS22, PS23, PS30, PS36, PS59 |

| | Fairness Across ML Tasks | • Include fairness constraints in supervised (regression, recommendation) and unsupervised (set selection, ranking) tasks. • Fairness in natural language understanding, resource allocation, representation learning, and causal learning. • Fairness in regression-based systems. • Discover limitations in fair regression. • Fairness in CNNs and DNNs. • Extend DNN fairness testing to CNNs. | PS12, PS13, PS15, PS26, PS44, PS46, PS61 |

| | Bias Mitigation Techniques | • More effective mitigation strategies beyond simple ML modifications. • Retraining as a solution to genetic fairness testing. • Explore hyper-parameter configurations that lead to high fairness. • Improve semi-supervised techniques to achieve fairness with limited labeled data. • Investigate ways to adjust the learning process to account for biases. | PS29, PS47, PS48, PS50, PS52, PS66 |

| | Sensitive Attributes & Social Constructs | • Study numerical attributes and groups of attributes as sensitive. • Consider income, race, religious beliefs, age, nationality. • Explore multiple and continuous sensitive features. • Study other social constructs and stereotypes. | PS7, PS21, PS31 |

| | Fairness Testing Frameworks | • Extend reinforcement learning-based testing for fairness. • Extend Themis for algorithmic bias testing. • FairRec for multi-attribute group fairness testing. • White-box and black-box fairness testing. • Explore other measures of fairness in white-box testing. • More techniques for black-box testing to detect individual discrimination. | PS14, PS19, PS49, PS61, PS63 |

| | Fairness in Real-World Contexts | • Evaluate fairness-accuracy tradeoffs in real-world scenarios. • Fairness in temporal settings. • Study real-world data issues and their impact on fairness. • Add data documentation to future projects. • Extend fairness to ethical values like privacy and explainability. | PS27, PS57 |

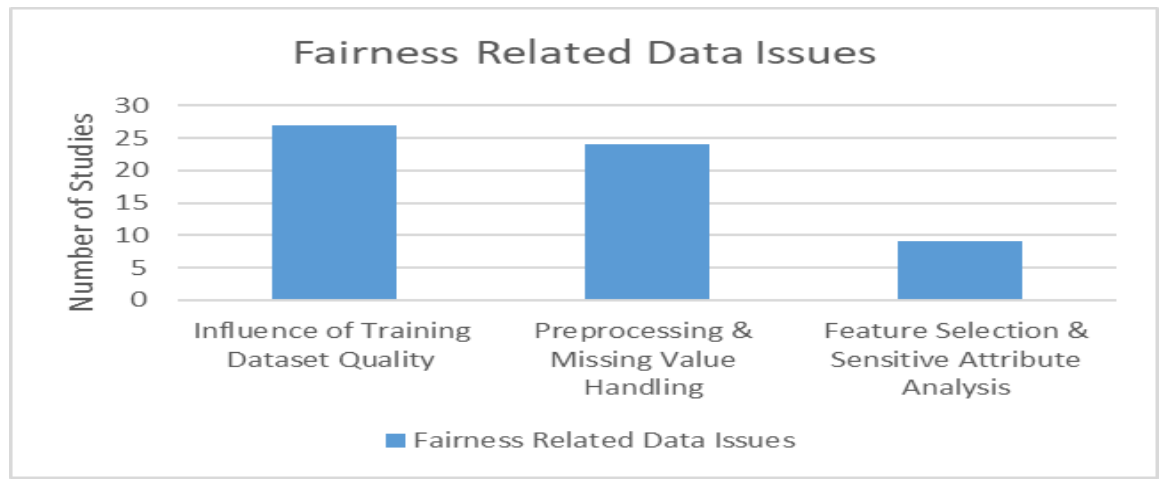

| 2. Feature Selection & Data Handling | | • Improve quality of datasets with test case generation approaches. • Feature selection in large datasets. • Investigating advanced feature selection techniques. • Adding datasets, algorithms, and imputation strategies. • Preprocessing of missing training data. • Preprocessing training data. • Study other datasets and fairness-accuracy tradeoffs. • Explore different characteristics in training data. | PS1, PS6, PS24, PS37, PS41, PS43, PS45, PS51, PS64 |

| 3. Model Optimization & Training Efficiency | | • New ways for faster training processes. • Training CNNs with low-precision weights. • Improve algorithms for efficiency and equity. • Improve stability by shifting decision boundaries. • Implement models in industrial IoT for imbalanced learning. • Improve quantum decision models with fairness guarantees. • Enhance scalability of fairness testing for large datasets and complex graphs. | PS2, PS3, PS8, PS16, PS25, PS65, PS66 |

| 4. Testing & Evaluation Frameworks | | • Improve white-box models for robustness. • Explore test case generation approaches. • DNN white-box testing challenges. • Try different equivalence classes for retraining. • Study bias kernels detected by verification algorithms. • Neuron coverage as a distortion metric. • Explore causal techniques, post-processing, interpretability, calibration. • Extend counterfactual thinking to text and image processing. | PS17, PS32, PS33, PS34, PS54, PS55, PS56, PS60 |

| 5. Reinforcement Learning & Reward Functions | | • Study other definitions of reward functions in black-box testing. • Extend RL-based testing frameworks. • Determine ideal G-ratio for fairness testing. | PS11 |

| 6. Ethics, Law, and Societal Impact | | • Types of laws or regulations. • Ethical difficulty in statistical machine translation. • Algorithms raise complex questions for researchers and policymakers. • More debates on fairness including technical and cultural causes. • Transparency in model complexity and fairness tradeoff. • Algorithmic choices and social context. • Fair unified solutions. • Interdisciplinary collaboration (CS, statistics, cognitive science). | PS28, PS30, PS38, PS39, PS58 |

| 7. Emerging Applications & Techniques | | • Deep clustering, adversarial training, and attacks. • Study proposed methods with image compression and deepfake techniques. • Investigate noise models for non-binary attributes. • Long-term studies on bias mitigation effects. | PS20, PS42, PS53 |

References

- Tremblay, Monica Chiarini, Kaushik Dutta, and Debra Vandermeer. “Using data mining techniques to discover bias patterns in missing data.” Journal of Data and Information Quality (JDIQ) 2.1 ACM (2010). [CrossRef]

- Krasanakis, Emmanouil, Eleftherios Spyromitros-Xioufis, Symeon Papadopoulos, and Yiannis Kompatsiaris. “Adaptive sensitive reweighting to mitigate bias in fairness-aware classification.” Proceedings of the 2018 World Wide Web Conference. ACM (2018). [CrossRef]

- Wang, Weijia, and Bill Lin. “Trained biased number representation for ReRAM-based neural network accelerators.” ACM Journal on Emerging Technologies in Computing Systems (JETC) 15.2 (2019): 1-17. [CrossRef]

- Amend, Jack J., and Scott Spurlock. “Improving machine learning fairness with sampling and adversarial learning.” Journal of Computing Sciences in Colleges ACM 36.5 (2021): 14-23. [CrossRef]

- Wu, Yongkai, Lu Zhang, and Xintao Wu. “On convexity and bounds of fairness-aware classification.” The World Wide Web Conference. ACM (2019). [CrossRef]

- Caton, Simon, Saiteja Malisetty, and Christian Haas. “Impact of Imputation Strategies on Fairness in Machine Learning.” Journal of Artificial Intelligence Research 74 (2022): 1011-1035. [CrossRef]

- Kamiran, Faisal, and Toon Calders. “Classifying without discriminating.” 2nd International Conference on Computer, Control, and Communication. IEEE (2009). [CrossRef]

- Zhou, Xiaokang, Yiyong Hu, Jiayi Wu, Wei Liang, Jianhua Ma, and Qun Jin. “Distribution Bias Aware Collaborative Generative Adversarial Network for Imbalanced Deep Learning in Industrial IoT.” IEEE Transactions on Industrial Informatics (2022). [CrossRef]

- Verma, Sahil, and Julia Rubin. “Fairness definitions explained.” IEEE/ACM International Workshop on Software Fairness (Fairware). IEEE (2018). [CrossRef]

- KIEMDE, Sountongnoma Martial Anicet, and Ahmed Dooguy. “Fairness of Machine Learning Algorithms for the Black Community.” KORA (2020). [CrossRef]

- Xie, Wentao, and Peng Wu. “Fairness Testing of Machine Learning Models Using Deep Reinforcement Learning.” 2020 IEEE 19th International Conference on Trust, Security and Privacy in Computing and Communications (TrustCom). IEEE (2020). [CrossRef]

- Olfat, Matt, and Yonatan Mintz. “Flexible Regularization Approaches for Fairness in Deep Learning.” 2020 59th IEEE Conference on Decision and Control (CDC). IEEE (2020). [CrossRef]

- Zafar, Muhammad Bilal, Isabel Valera, Manuel Gomez-Rodriguez, and Krishna P. Gummadi. “Fairness constraints: A flexible approach for fair classification.” The Journal of Machine Learning Research 1 (2019): 2737-2778. [CrossRef]

- Sharma, Arnab, and Heike Wehrheim. “Automatic fairness testing of machine learning models.” IFIP International Conference on Testing Software and Systems. Springer, Cham (2020). [CrossRef]

- Villar, David, and Jorge Casillas. “Facing Many Objectives for Fairness in Machine Learning.” International Conference on the Quality of Information and Communications Technology. Springer, Cham (2021). [CrossRef]

- Guan, Ji, Wang Fang, and Mingsheng Ying. “Verifying Fairness in Quantum Machine Learning.” International Conference on Computer Aided Verification. Springer, Cham (2022). [CrossRef]

- Nakajima, Shin, and Tsong Yueh Chen. “Generating biased dataset for metamorphic testing of machine learning programs.” IFIP International Conference on Testing Software and Systems. Springer, Cham (2019). [CrossRef]

- Kamishima, Toshihiro, Shotaro Akaho, Hideki Asoh, and Jun Sakuma. “Fairness-aware classifier with prejudice remover regularizer.” Joint European Conference on Machine Learning and Knowledge Discovery in Databases. Springer, Berlin, Heidelberg (2012). [CrossRef]

- Perera, Anjana, Aldeida Aleti, Chakkrit Tantithamthavorn, Jirayus Jiarpakdee, Burak Turhan, Lisa Kuhn, and Katie Walker. “Search-based fairness testing for regression-based machine learning systems.” Empirical Software Engineering 27.3 (2022): 1-36. [CrossRef]

- Tian, Huan, Tianqing Zhu, Wei Liu, and Wanlei Zhou. “Image fairness in deep learning: problems, models, and challenges.” Neural Computing and Applications (2022): 1-19. [CrossRef]

- Calders, Toon, and Sicco Verwer. “Three naive Bayes approaches for discrimination-free classification.” Data Mining and Knowledge Discovery 21.2 (2010): 277-292. [CrossRef]

- Sun, Haipei, Yiding Yang, Yanying Li, Huihui Liu, Xinchao Wang, and Wendy Hui Wang. “Automating fairness configurations for machine learning.” Companion Proceedings of the Web Conference (2021). [CrossRef]

- Abraham, Savitha Sam. “FairLOF: Fairness in Outlier Detection.” Data Science and Engineering 6.4 (2021): 485-499. [CrossRef]

- Wang, Yanchen, and Lisa Singh. “Analyzing the impact of missing values and selection bias on fairness.” International Journal of Data Science and Analytics 12.2 (2021): 101-119. [CrossRef]

- Corbett-Davies, Sam, Emma Pierson, Avi Feller, Sharad Goel, and Aziz Huq. “Algorithmic decision making and the cost of fairness.” Proceedings of the 23rd ACM SIGKDD International Conference on Knowledge Discovery and Data Mining (2017). [CrossRef]

- Berk, Richard, Hoda Heidari, Shahin Jabbari, Matthew Joseph, Michael Kearns, Jamie Morgenstern, Seth Neel, and Aaron Roth. “A convex framework for fair regression.” Not published arXiv preprint arXiv:1706.02409 (2017). [CrossRef]

- Celis, L. Elisa, Lingxiao Huang, Vijay Keswani, and Nisheeth K. Vishnoi. “Classification with fairness constraints: A meta-algorithm with provable guarantees.” Proceedings of the Conference on Fairness, Accountability, and Transparency (2019). [CrossRef]

- Prates, Marcelo OR, Pedro H. Avelar, and Luís C. Lamb. “Assessing gender bias in machine translation: a case study with Google Translate.” Neural Computing and Applications 32.10 (2020): 6363-6381. [CrossRef]

- Fazelpour, Sina, and Zachary C. Lipton. “Algorithmic Fairness from a Non-ideal Perspective.” Proceedings of the AAAI/ACM Conference on AI, Ethics, and Society (2020). [CrossRef]

- Hutchinson, Ben, and Margaret Mitchell. “50 years of test (un) fairness: Lessons for machine learning.” Proceedings of the Conference on Fairness, Accountability, and Transparency (2019). [CrossRef]

- Font, Joel Escudé, and Marta R. Costa-Jussa. “Equalizing gender biases in neural machine translation with word embeddings techniques.” arXiv preprint arXiv:1901.03116 (2019). Association for Computational Linguistics (ACL). [CrossRef]

- Aggarwal, Aniya, Pranay Lohia, Seema Nagar, Kuntal Dey, and Diptikalyan Saha. “Black box fairness testing of machine learning models.” Proceedings of the 2019 27th ACM Joint Meeting on European Software Engineering Conference and Symposium on the Foundations of Software Engineering (2019). [CrossRef]

- Jones, Gareth P., James M. Hickey, Pietro G. Di Stefano, Charanpal Dhanjal, Laura C. Stoddart, and Vlasios Vasileiou. “Metrics and methods for a systematic comparison of fairness-aware machine learning algorithms.” arXiv preprint arXiv:2010.03986 (2020). [CrossRef]

- [34] Krishna, Satyapriya, Rahul Gupta, Apurv Verma, Jwala Dhamala. “Measuring Fairness of Text Classifiers via Prediction Sensitivity.” arXiv preprint arXiv:2203.08670 (2022). Association for Computational Linguistics. [CrossRef]

- Oneto, Luca, and Silvia Chiappa. “Fairness in machine learning.” Recent Trends in Learning from Data: Tutorials from the INNS Big Data and Deep Learning Conference (INNSBDDL2019). Springer International Publishing (2020). [CrossRef]

- Varley, Michael, and Vaishak Belle. “Fairness in machine learning with tractable models.” Knowledge-Based Systems 215 (2021): 106715. [CrossRef]

- Valdivia, Ana, Javier Sánchez-Monedero, and Jorge Casillas. “How fair can we go in machine learning? Assessing the boundaries of accuracy and fairness.” International Journal of Intelligent Systems 36.4 (2021): 1619-1643. [CrossRef]

- Friedler, Sorelle A., et al. “A comparative study of fairness-enhancing interventions in machine learning.” Proceedings of the Conference on Fairness, Accountability, and Transparency (2019). [CrossRef]

- Ferrari, Elisa, and Davide Bacciu. “Addressing Fairness, Bias and Class Imbalance in Machine Learning: the FBI-loss.” arXiv preprint arXiv:2105.06345 (2021). [CrossRef]

- Du, Mengnan, et al. “Fairness in deep learning: A computational perspective.” IEEE Intelligent Systems 36.4 (2020): 25-34. [CrossRef]

- Huang, Lingxiao, and Nisheeth Vishnoi. “Stable and fair classification.” International Conference on Machine Learning. PMLR (2019). [CrossRef]

- Celis, L. Elisa, Lingxiao Huang, Vijay Keswani, and Nisheeth K. Vishnoi. “Fair classification with noisy protected attributes: A framework with provable guarantees.” International Conference on Machine Learning. PMLR (2021). [CrossRef]

- Goel, Naman, Mohammad Yaghini, and Boi Faltings. “Non-discriminatory machine learning through convex fairness criteria.” Proceedings of the AAAI Conference on Artificial Intelligence. Vol. 32. No. 1. (2018). [CrossRef]

- Shrestha, Yash Raj, and Yongjie Yang. “Fairness in algorithmic decision-making: Applications in multi-winner voting, machine learning, and recommender systems.” Algorithms 12.9 (2019): 199. [CrossRef]

- Mandal, Debmalya, Samuel Deng, Suman Jana, Jeannette Wing, and Daniel J. Hsu. “Ensuring fairness beyond the training data.” Advances in Neural Information Processing Systems 33 (2020): 18445-18456. [CrossRef]

- Gao, Xuanqi, Juan Zhai, Shiqing Ma, Chao Shen, Yufei Chen, and Qian Wang. “FairNeuron: improving deep neural network fairness with adversary games on selective neurons.” Proceedings of the 44th International Conference on Software Engineering (2022). [CrossRef]

- Tizpaz-Niari, Saeid, Ashish Kumar, Gang Tan, and Ashutosh Trivedi. “Fairness-aware configuration of machine learning libraries.” Proceedings of the 44th International Conference on Software Engineering (2022). [CrossRef]

- Chakraborty, Joymallya, Suvodeep Majumder, and Huy Tu. “Fair-SSL: Building fair ML Software with less data.” Proceedings of the 2nd International Workshop on Equitable Data and Technology (2022). [CrossRef]

- Patel, Ankita Ramjibhai, Jaganmohan Chandrasekaran, Yu Lei, Raghu N. Kacker, and D. Richard Kuhn. “A combinatorial approach to fairness testing of machine learning models.” 2022 IEEE International Conference on Software Testing, Verification and Validation Workshops (ICSTW). IEEE (2022). [CrossRef]

- Chen, Zhenpeng, Jie M. Zhang, Federica Sarro, and Mark Harman. “MAAT: a novel ensemble approach to addressing fairness and performance bugs for machine learning software.” Proceedings of the 30th ACM Joint European Software Engineering Conference and Symposium on the Foundations of Software Engineering (2022). [CrossRef]

- Li, Yanhui, Linghan Meng, Lin Chen, Li Yu, Di Wu, Yuming Zhou, and Baowen Xu. “Training data debugging for the fairness of machine learning software.” Proceedings of the 44th International Conference on Software Engineering (2022). [CrossRef]

- Fan, Ming, Wenying Wei, Wuxia Jin, Zijiang Yang, and Ting Liu. “Explanation-guided fairness testing through genetic algorithm.” Proceedings of the 44th International Conference on Software Engineering (2022). [CrossRef]

- Pu, Muxin, Meng Yi Kuan, Nyee Thoang Lim, Chun Yong Chong, and Mei Kuan Lim. “Fairness evaluation in deepfake detection models using metamorphic testing.” 2022 IEEE/ACM 7th International Workshop on Metamorphic Testing (MET). IEEE (2022). [CrossRef]

- Zheng, Haibin, Zhiqing Chen, Tianyu Du, Xuhong Zhang, Yao Cheng, Shouling Ji, Jingyi Wang, Yue Yu, and Jinyin Chen. “Neuronfair: Interpretable white-box fairness testing through biased neuron identification.” Proceedings of the 44th International Conference on Software Engineering (2022). [CrossRef]

- Zhang, Peixin, Jingyi Wang, Jun Sun, Xinyu Wang, Guoliang Dong, Xingen Wang, Ting Dai, and Jin Song Dong. “Automatic fairness testing of neural classifiers through adversarial sampling.” IEEE Transactions on Software Engineering 48.9 (2021): 3593-3612. [CrossRef]

- Zhang, Peixin, Jingyi Wang, Jun Sun, Guoliang Dong, Xinyu Wang, Xingen Wang, Jin Song Dong, and Ting Dai. “White-box fairness testing through adversarial sampling.” Proceedings of the ACM/IEEE 42nd International Conference on Software Engineering (2020). [CrossRef]

- Fabris, Alessandro, Stefano Messina, Gianmaria Silvello, and Gian Antonio Susto. “Algorithmic fairness datasets: the story so far.” Data Mining and Knowledge Discovery 36.6 (2022): 2074-2152. [CrossRef]

- Zhang, Jiehuang, Ying Shu, and Han Yu. “Fairness in design: A framework for facilitating ethical artificial intelligence designs.” International Journal of Crowd Science 7.1 (2023): 32-39. [CrossRef]

- Majumder, Suvodeep, Joymallya Chakraborty, Gina R. Bai, Kathryn T. Stolee, and Tim Menzies. “Fair enough: Searching for sufficient measures of fairness.” ACM Transactions on Software Engineering and Methodology 32.6 (2023): 1-22. [CrossRef]

- Wang, Zichong, Yang Zhou, Meikang Qiu, Israat Haque, Laura Brown, Yi He, Jianwu Wang, David Lo, and Wenbin Zhang. “Towards fair machine learning software: Understanding and addressing model bias through counterfactual thinking.” arXiv preprint arXiv:2302.08018 (2023). [CrossRef]

- Guo, Huizhong, Jinfeng Li, Jingyi Wang, Xiangyu Liu, Dongxia Wang, Zehong Hu, Rong Zhang, and Hui Xue. “Fairrec: fairness testing for deep recommender systems.” Proceedings of the 32nd ACM SIGSOFT International Symposium on Software Testing and Analysis (2023). [CrossRef]

- Hort, Max, Jie M. Zhang, Federica Sarro, and Mark Harman. “Search-based automatic repair for fairness and accuracy in decision-making software.” Empirical Software Engineering 29.1 (2024): 36. [CrossRef]

- Bantilan, Niels. “Themis-ml: A fairness-aware machine learning interface for end-to-end discrimination discovery and mitigation.” Journal of Technology in Human Services 36.1 (2018): 15-30. [CrossRef]

- Ling, Jiasheng, Lei Zhang, Chenyang Liu, Guoxin Xia, and Zhenxiong Zhang. “Machine Learning-Based Multilevel Intrusion Detection Approach.” Electronics 14, no. 2 (2025): 323. [CrossRef]

- Nasiri, Roya. “Testing Individual Fairness in Graph Neural Networks.” arXiv preprint arXiv:2504.18353 (2025). [CrossRef]

- Consuegra-Ayala, Juan Pablo, Yoan Gutiérrez, Yudivian Almeida-Cruz, and Manuel Palomar. “Bias mitigation for fair automation of classification tasks.” Expert Systems 42, no. 2 (2025): e13734. DOI: 10.1111/exsy.13734.

- Xu, Paiheng, Yuhang Zhou, Bang An, Wei Ai, and Furong Huang. “Gfairhint: Improving individual fairness for graph neural networks via fairness hint.” ACM Transactions on Knowledge Discovery from Data 19, no. 3 (2025): 1-22. [CrossRef]

- Foidl, Harald, and Michael Felderer. “Risk-based data validation in machine learning-based software systems.” Proceedings of the 3rd ACM SIGSOFT International Workshop on Machine Learning Techniques for Software Quality Evaluation (2019). [CrossRef]

- Riccio, Vincenzo, Gunel Jahangirova, Andrea Stocco, Nargiz Humbatova, Michael Weiss, and Paolo Tonella. “Testing machine learning based systems: a systematic mapping.” Empirical Software Engineering 25.6 (2020): 5193-5254. [CrossRef]

- Mehrabi, Ninareh, Fred Morstatter, Nripsuta Saxena, Kristina Lerman, and Aram Galstyan. “A survey on bias and fairness in machine learning.” ACM Computing Surveys (CSUR) 54.6 (2021): 1-35. [CrossRef]

- Alkatheri, Maha Saleh. “Testing Machine-Learning-based Software for Fairness: Ontology-Guided Test Cases Generation” (2022). [CrossRef]

- Le Quy, Tuan, Abir Roy, Vasileios Iosifidis, Wei Zhang, and Eirini Ntoutsi. “A survey on datasets for fairness-aware machine learning.” Wiley Interdisciplinary Reviews: Data Mining and Knowledge Discovery (2022). [CrossRef]

- Caton, Simon, and Christian Haas. “Fairness in machine learning: A survey.” arXiv preprint arXiv:2010.04053 (2020). [CrossRef]

- Richardson, Brianna, and Juan E. Gilbert. “A Framework for Fairness: A Systematic Review of Existing Fair AI Solutions.” arXiv preprint arXiv:2112.05700 (2021). [CrossRef]

- Keele, Staffs. Guidelines for performing systematic literature reviews in software engineering. Vol. 5. Technical report, Ver. 2.3 EBSE Technical Report. EBSE (2007). [CrossRef]

- Pessach, Dana, and Erez Shmueli. “A review on fairness in machine learning.” ACM Computing Surveys (CSUR) 55.3 (2022): 1-44. [CrossRef]

- Kohavi, Ronny, and Barry Becker. “Adult data set.” UCI Machine Learning Repository 5 (1996): 2093. [CrossRef]

- Hofmann, Hans. “Statlog (German credit data).” UCI Machine Learning Repository 10 (1994): C5NC77. [CrossRef]

- Ofer, Dan. “ProPublica (COMPAS).” Kaggle (2016). [CrossRef]

- Jui, Tonni Das, and Pablo Rivas. “Fairness issues, current approaches, and challenges in machine learning models.” International Journal of Machine Learning and Cybernetics (2024): 1-31. [CrossRef]

- Chen, Zhenpeng, Jie M. Zhang, Max Hort, Mark Harman, and Federica Sarro. “Fairness testing: A comprehensive survey and analysis of trends.” ACM Transactions on Software Engineering and Methodology 33.5 (2024): 1–59. [CrossRef]

- Petersen, Kai, Robert Feldt, Shahid Mujtaba, and Michael Mattsson. “Systematic mapping studies in software engineering.” in 12th International Conference on Evaluation and Assessment in Software Engineering (EASE). BCS Learning & Development, 2008. [CrossRef]

- Ahuja, Ravinder, Aakarsha Chug, Shaurya Gupta, Pratyush Ahuja, and Shruti Kohli. “Classification and clustering algorithms of machine learning with their applications.” in Nature-inspired computation in data mining and machine learning, pp. 225-248. Cham: Springer International Publishing, 2019. [CrossRef]

| Id | Research questions | Rationale |

| RQ1 | What is the distribution of papers through venues and years? | Identify the kind of seminars for collected papers, published journals, conferences, timeline, and range of publishing dates. |

| RQ2 | What are the different definitions of software fairness? | Explore software fairness definitions from primary studies. |

| RQ3 | What types of problems are addressed? | Identify the type of addressed problem (detection, analysis, or evaluation). |

| RQ4 | What different approaches of fairness testing are presented? | Describe different approaches used in fairness testing solutions, such as algorithms or tools. And explore fairness testing levels in MLS. |

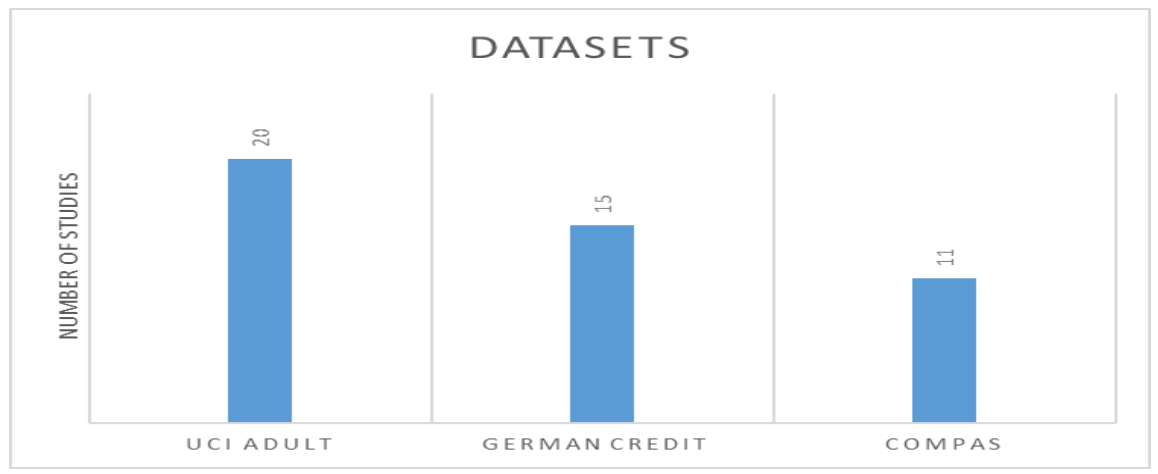

| RQ5 | Which datasets are used to detect the unfairness of MLS? | Identify some datasets/algorithms/models that are used to detect unfairness in MLS and explain the reasons behind their biases. |

| RQ6 | What are the research gaps and trends discovered in the reviewed studies? | Explore the gaps in software fairness in MLS research topics. |

| Main Digital Libraries | Supplementary Databases/Websites |
| --- | --- |
| IEEE Xplore, Springer Link, ACM Digital Library, Wiley Online Library, arXiv | Google Scholar, ResearchGate |

| Search keywords | RQs | Possible Values |

| Journals, Conferences, Year | RQ1 | Journals, conferences, university names and types, published year |

| Definition | RQ2 | Fairness definition |

| Problem Types | RQ3 | Analyzing, reviewing, detecting, evaluating, or testing solutions. |

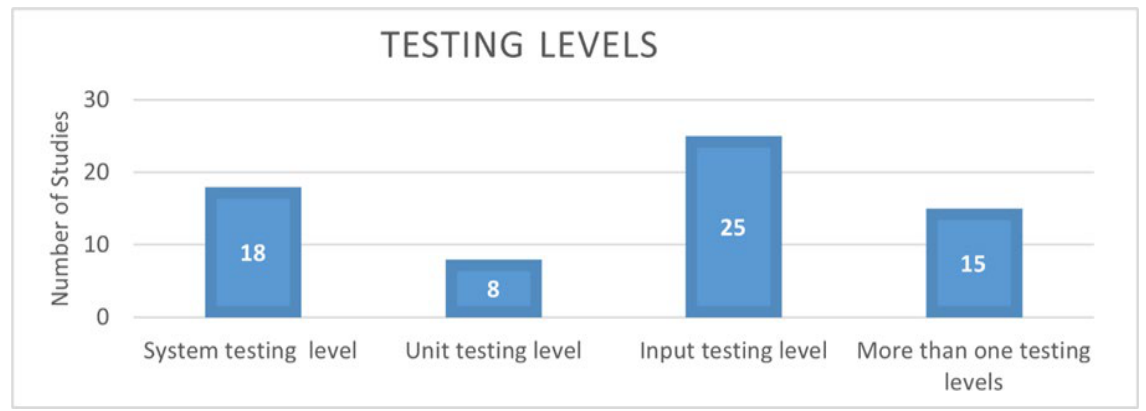

| Methodology | RQ4 | Approaches, tools, algorithms, unit testing, input testing, or system testing. |

| Datasets/Algorithms/Models | RQ5 | Bias/unfairness, datasets, models, algorithms |

| Research gaps | RQ6 | Trend, future work, research gap |

| Category | Concept/Metric | Fairness Definition | Study ID |
| --- | --- | --- | --- |
| General Fairness | Fairness | Ethical principle ensuring equitable and unbiased treatment across individuals/groups. | PS66 |
| | Fairness-aware Model | A model that avoids discrimination and promotes fairness. | PS66 |
| | Fairness Degree | Maximum difference in predictions for pairs of inputs that differ only in sensitive attributes. | PS19 |
| | Fairness Through Unawareness | Fairness achieved by excluding sensitive attributes from decision-making. | PS38 |
| | Counterfactual Fairness | The prediction remains unchanged if the individual belongs to a different group. | PS38 |
| | Algorithmic Bias | Bias arising from mathematical rules that favor certain attributes. | PS63 |
| Individual Fairness | Individual Fairness | Similar individuals should receive similar outcomes. | PS67, PS10, PS23, PS26 |
| | Individual Discrimination | Discrimination between individuals who differ only in protected attributes. | PS11, PS32 |
| Group Fairness | Group Fairness | Equal outcomes across demographic groups (e.g., gender, race). | PS10, PS23, PS26 |
| | Fairness Constraints | Constraints such as demographic parity, equal opportunity, and disparate impact. | PS23, PS26 |
| Fairness Metrics | Demographic Parity | The outcome is independent of the protected attribute. | PS15, PS25 |
| | Equal Opportunity | Equal true positive rates across groups. | PS25, PS6, PS39 |
| | Predictive Parity | Equal positive predictive value across groups. | PS15, PS35 |
| | Disparate Impact | Ratio of favorable outcomes between groups. | PS25, PS6 |
| | Average Absolute Odds | Average of the absolute differences in false positive and true positive rates across groups. | PS6, PS35 |
| | Theil Index | Measures inequality in prediction outcomes. | PS6 |
| | Calibration | The predicted score should reflect actual outcomes equally across groups. | PS35, PS38 |
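
As an illustration of the group metrics defined in the table above, the following minimal Python sketch computes demographic parity, disparate impact, equal opportunity, predictive parity, and average absolute odds for a binary classifier with two groups. It is not taken from any primary study. The function names, the toy data, and the sign convention (unprivileged minus privileged, and unprivileged over privileged for the ratio) are assumptions made for this example; the Theil index and calibration are omitted for brevity.

```python
from typing import Dict, Sequence


def _rate(num: float, den: float) -> float:
    """Safe division: returns NaN when the denominator is zero."""
    return num / den if den else float("nan")


def group_rates(y_true: Sequence[int], y_pred: Sequence[int],
                group: Sequence[str], g: str) -> Dict[str, float]:
    """Selection rate, TPR, FPR, and PPV restricted to members of group g."""
    idx = [i for i, a in enumerate(group) if a == g]
    tp = sum(1 for i in idx if y_pred[i] == 1 and y_true[i] == 1)
    fp = sum(1 for i in idx if y_pred[i] == 1 and y_true[i] == 0)
    fn = sum(1 for i in idx if y_pred[i] == 0 and y_true[i] == 1)
    tn = sum(1 for i in idx if y_pred[i] == 0 and y_true[i] == 0)
    return {
        "selection_rate": _rate(tp + fp, len(idx)),  # P(pred = 1 | group)
        "tpr": _rate(tp, tp + fn),                   # true positive rate
        "fpr": _rate(fp, fp + tn),                   # false positive rate
        "ppv": _rate(tp, tp + fp),                   # positive predictive value
    }


def fairness_report(y_true: Sequence[int], y_pred: Sequence[int],
                    group: Sequence[str], privileged: str,
                    unprivileged: str) -> Dict[str, float]:
    """Group fairness metrics; differences are unprivileged minus privileged
    (an assumed convention for this sketch)."""
    p = group_rates(y_true, y_pred, group, privileged)
    u = group_rates(y_true, y_pred, group, unprivileged)
    return {
        "demographic_parity_diff": u["selection_rate"] - p["selection_rate"],
        "disparate_impact": _rate(u["selection_rate"], p["selection_rate"]),
        "equal_opportunity_diff": u["tpr"] - p["tpr"],
        "predictive_parity_diff": u["ppv"] - p["ppv"],
        "average_abs_odds_diff": 0.5 * (abs(u["fpr"] - p["fpr"])
                                        + abs(u["tpr"] - p["tpr"])),
    }


# Toy data (hypothetical): four individuals in each group.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
group = ["A", "A", "A", "A", "B", "B", "B", "B"]

report = fairness_report(y_true, y_pred, group, privileged="A", unprivileged="B")
for name, value in report.items():
    print(f"{name}: {value:.3f}")
```

Under these conventions, a difference of 0 or a disparate impact ratio of 1 indicates parity on the corresponding metric; values further from those targets indicate a larger gap between the two groups.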

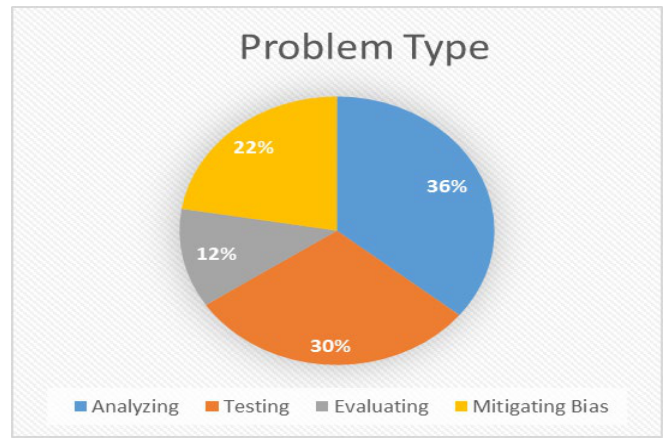

| Problem Type | Problem Description | Number of Studies | Study IDs |
| --- | --- | --- | --- |
| Analyzing | The process of systematically examining proposed unfairness detection methods to understand their effectiveness and limitations. | 24 | PS1, PS4, PS5, PS6, PS12, PS16, PS18, PS20, PS21, PS22, PS24, PS25, PS26, PS28, PS36, PS37, PS41, PS42, PS45, PS46, PS58, PS61, PS66 |
| Mitigating Bias | The process of implementing strategies or algorithms that ensure fair outcomes and mitigate bias in unfairness detection systems. | 15 | PS3, PS7, PS8, PS13, PS15, PS23, PS27, PS31, PS34, PS43, PS48, PS53, PS59, PS64, PS67 |
| Testing | The process of proposing testing solutions for checking whether the model produces fair outcomes and follows fairness metrics. | 20 | PS2, PS10, PS11, PS14, PS17, PS19, PS32, PS35, PS39, PS49, PS50, PS51, PS52, PS54, PS55, PS56, PS60, PS62, PS63, PS65 |
| Evaluating | The process of comparing different approaches, analyzing outcomes, and validating results. | 8 | PS9, PS29, PS30, PS33, PS40, PS44, PS47, PS57 |

| Problem Type | Suggested Solutions |

| Analyzing | Optimization methods, Analysis of Fairness Metrics |

| Mitigating Bias | Mitigation methods include outlier detection, ranking, imputation, data massaging, dataset sampling, and data augmentation. |

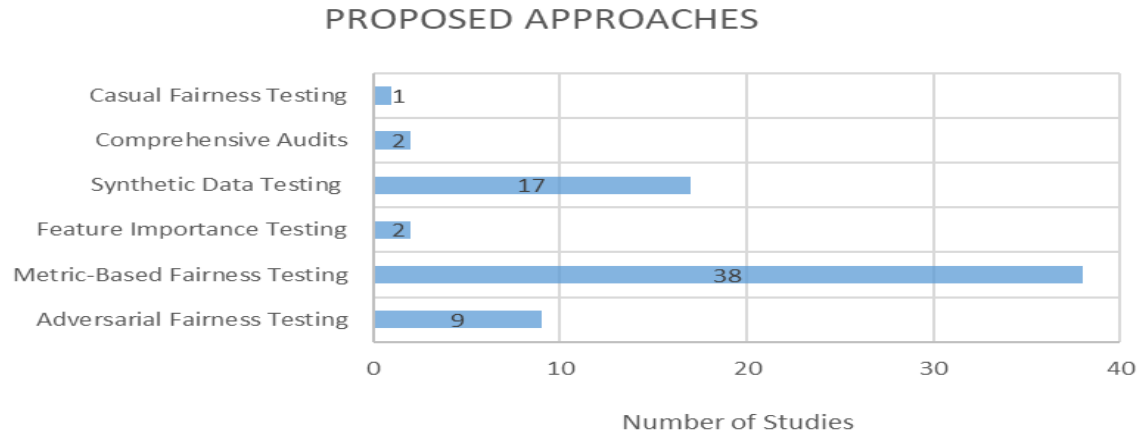

| Testing | White/black box testing, test case generation tools, comprehensive audits, adversarial fairness testing, feature importance testing, metric-based fairness testing, synthetic data testing, and casual fairness testing. |

| Evaluating | Compare unfairness detection approaches, evaluate methods by using different benchmark datasets with suggested solutions, and compare multiple fairness metrics. |
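
To make the black-box testing entry above more concrete, the following minimal Python sketch checks a model for individual discrimination: it varies only the protected attribute of each input and flags the inputs whose prediction changes. It is an illustration under stated assumptions, not the procedure of any specific primary study. The `find_discriminatory_inputs` helper, the `biased_model` toy classifier, and the `income` and `sex` fields are hypothetical names introduced for this example.

```python
from typing import Callable, Dict, List, Sequence

Record = Dict[str, int]


def find_discriminatory_inputs(predict: Callable[[Record], int],
                               records: Sequence[Record],
                               protected: str,
                               values: Sequence[int]) -> List[Record]:
    """Return the records whose prediction changes when only the
    protected attribute is varied (the model is treated as a black box)."""
    flagged = []
    for rec in records:
        # Query the model once per protected value, all else held equal.
        outcomes = {predict({**rec, protected: v}) for v in values}
        if len(outcomes) > 1:
            flagged.append(rec)
    return flagged


def biased_model(rec: Record) -> int:
    """Hypothetical toy classifier that (unfairly) uses the protected attribute."""
    return int(rec["income"] + 10 * rec["sex"] >= 50)


# Hypothetical test inputs; in practice these would come from a
# test case generator or a benchmark dataset.
records = [
    {"income": 45, "sex": 0},
    {"income": 60, "sex": 0},
    {"income": 30, "sex": 1},
]

flagged = find_discriminatory_inputs(biased_model, records, "sex", [0, 1])
print(f"{len(flagged)} of {len(records)} inputs are discriminatory: {flagged}")
```

Only the first record is flagged here, because it sits close enough to the decision threshold for the protected attribute to flip the outcome. This is why test case generation approaches tend to concentrate their search near the decision boundary rather than sampling inputs uniformly.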

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license (http://creativecommons.org/licenses/by/4.0/).