Submitted: 31 March 2026

Posted: 01 April 2026

Abstract

Keywords:

1. Introduction

1.1. Research Background

1.2. Development of Quantum-Inspired Models

1. Proposed a quantum-inspired emotion recognition model that integrates a self-embedding mechanism combining complex embeddings and phase pre-training.

2. Integrated multi-layer Transformer and contrastive learning mechanisms to enhance feature modeling and discriminative capabilities.

3. Achieved strong experimental results on the public RECCON-DD and RECCON-IEM datasets, supporting the effectiveness and generalization ability of the method.

2. Related Work

2.1. Motivation for Quantum-Inspired Neural Networks in Dialogue Emotion Recognition

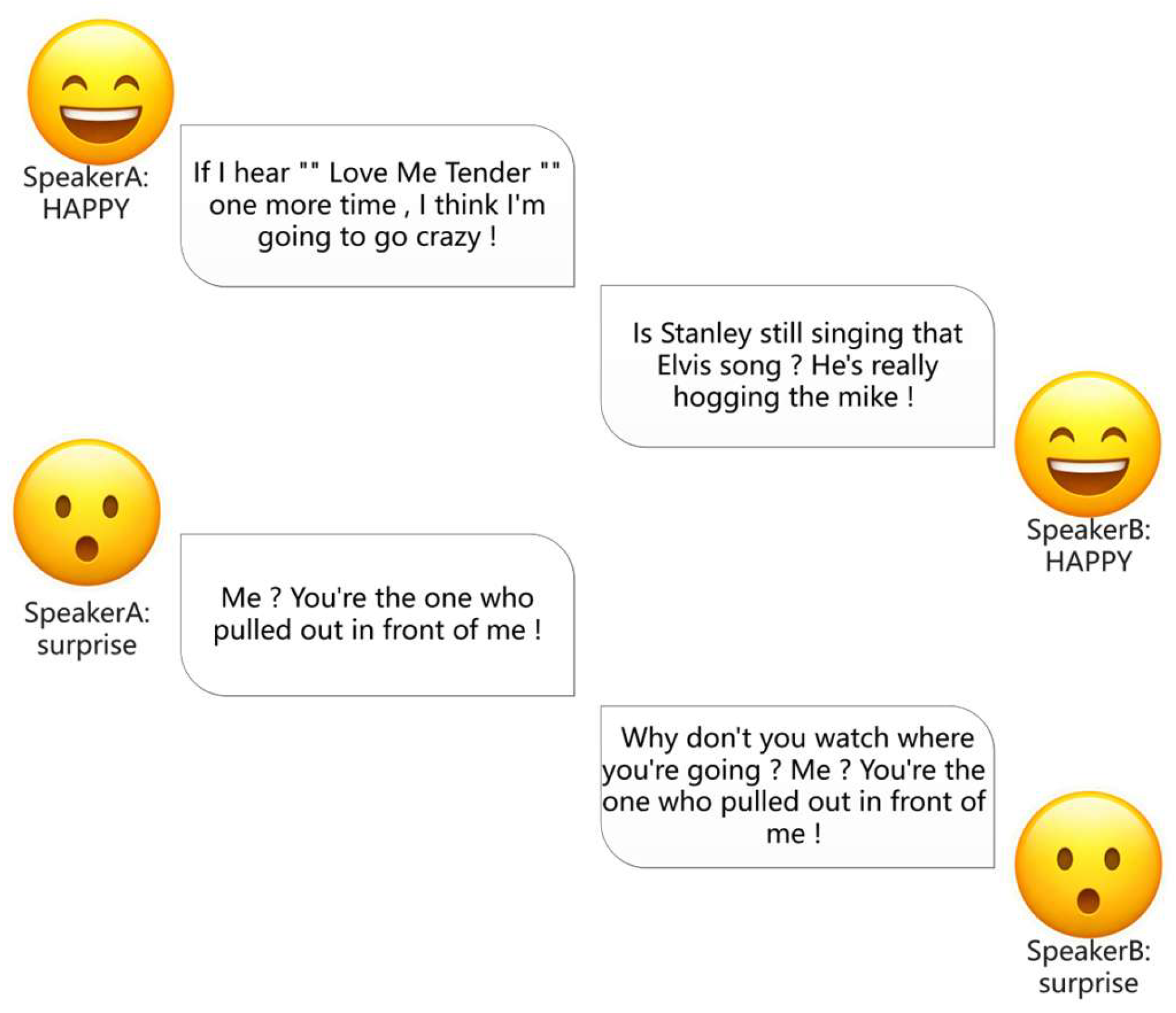

2.2. The RECCON-DD Dataset and Dialogue Emotion Recognition

2.3. Complex-Valued Neural Networks and Quantum-Inspired Representation Learning

2.4. Contrastive Learning and Multi-Task Optimization

2.5. Hybrid Architectures and Quantum-Inspired Transformers

2.6. Enhanced Quantum-Inspired Architecture Design

3. Methodology

3.1. Problem Formalization

3.1.1. Multimodal Sentiment Recognition Task

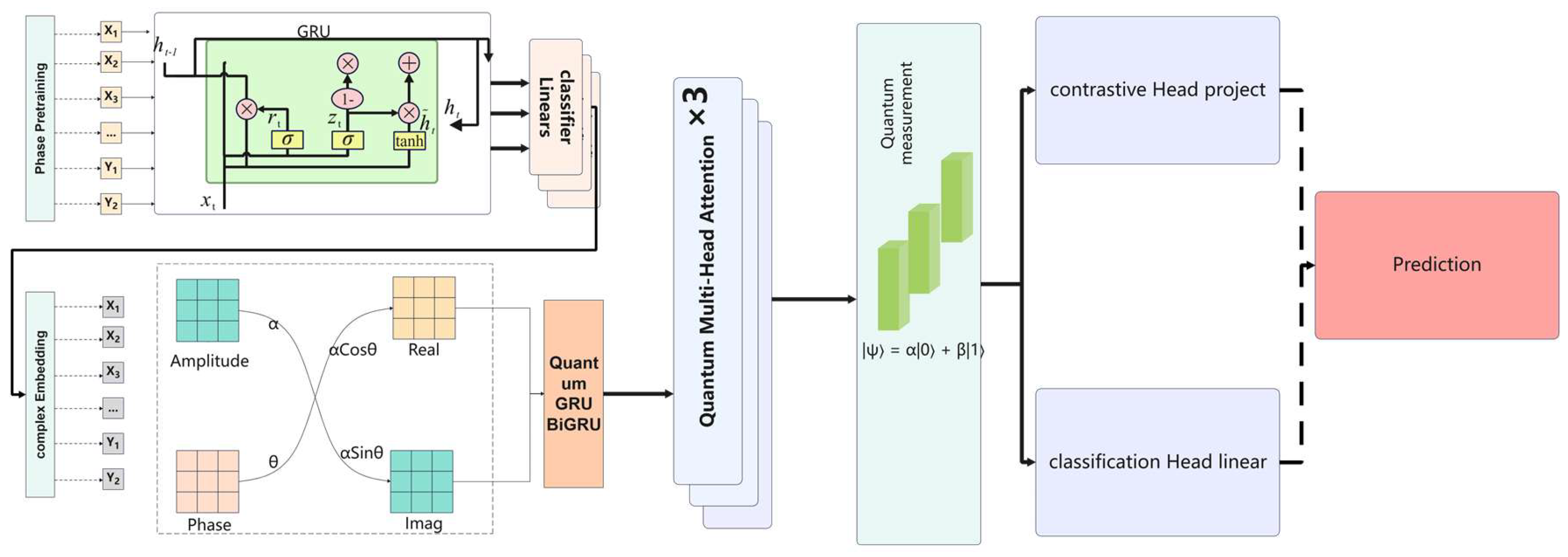

3.2. Overall Model Architecture

- Input Layer and Complex-valued Embedding Layer: This layer transforms the input text sequence into a quantum state representation in the complex domain, achieving quantum state embedding through amplitude-phase separation.

- Bidirectional Complex Recurrent Layer: It processes the sequence bidirectionally within the complex domain to capture both forward and backward contextual information.

- Complex-valued Multi-head Attention Mechanism: This mechanism employs 8 attention heads to learn different feature subspaces in parallel, enabling the modeling of global dependencies.

- Multi-layer Transformer Blocks: Three layers of Transformer structures are stacked. Each layer contains a multi-head attention module, residual connections, layer normalization, and a position-wise feed-forward network to progressively extract and refine feature representations.

- Quantum Measurement Module: Three distinct measurement operators are employed to map quantum states to an observable probability feature space via the Born rule.

- Feature Enhancement Module: Self-supervised contrastive learning is adopted to enhance the discriminative power of the features, pulling similar samples closer and pushing dissimilar ones apart.

- Output Layer: The final sentiment category probability distribution is generated through a fully connected layer and a Softmax activation function.
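
The amplitude-phase embedding and the Born-rule measurement listed above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names, the two-dimensional state, and the two measurement bases are assumptions chosen only to keep the example small.

```python
import cmath
import math
import random


def complex_embedding(amplitudes, phases):
    """Combine amplitudes r and phases theta into a unit-norm complex vector r * exp(i * theta)."""
    z = [r * cmath.exp(1j * theta) for r, theta in zip(amplitudes, phases)]
    norm = math.sqrt(sum(abs(c) ** 2 for c in z))
    return [c / norm for c in z]


def born_probabilities(state, basis):
    """Born rule: p_k = |<b_k|psi>|^2 for each vector b_k of an orthonormal basis."""
    probs = []
    for b in basis:
        inner = sum(bk.conjugate() * sk for bk, sk in zip(b, state))
        probs.append(abs(inner) ** 2)
    return probs


random.seed(0)
dim = 2  # hypothetical embedding dimension, kept tiny for readability
state = complex_embedding(
    [random.uniform(0.1, 1.0) for _ in range(dim)],          # amplitudes
    [random.uniform(-math.pi, math.pi) for _ in range(dim)],  # phases
)

s = 1 / math.sqrt(2)
computational_basis = [[1, 0], [0, 1]]  # hypothetical measurement operator 1
hadamard_basis = [[s, s], [s, -s]]      # hypothetical measurement operator 2

p_comp = born_probabilities(state, computational_basis)
p_had = born_probabilities(state, hadamard_basis)
print(p_comp, sum(p_comp))
print(p_had, sum(p_had))
```

In this sketch the computational-basis probabilities depend only on the amplitudes, while the second basis also depends on the relative phase. This is one plausible reason for using several distinct measurement operators, although the bases used in the model itself are not given in this outline.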

3.3. Enhanced Complex Embedding Layer

3.3.1. Complex Embedding Design

3.3.2. Positional Encoding Integration

3.4. BiGRU Architecture

3.4.1. BiGRU Cell Design

3.5. Multi-Head Self-Attention Mechanism

3.5.1. Quantum State Attention Computation

3.6. Quantum Transformer Block

3.6.1. Architecture Design

3.6.2. Residual Connection Adaptation

3.7. Enhanced Quantum Measurement Mechanism

3.7.1. Multiple Measurement Operator Design

3.7.2. Measurement Probability Interpretation

3.8. Contrastive Learning Strategy

3.8.1. Contrastive Loss Function
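
The exact loss used here is not recoverable from this outline. As an illustration of the general idea (pulling similar samples closer and pushing dissimilar ones apart), the following sketch uses an InfoNCE-style loss on real-valued feature vectors. The choice of InfoNCE, the cosine similarity, the temperature of 0.1, and all names are assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity between two real-valued vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def info_nce(anchor, positive, negatives, temperature=0.1):
    """InfoNCE loss for one anchor: negative log-softmax of the positive's similarity."""
    logits = [cosine(anchor, positive) / temperature]
    logits += [cosine(anchor, n) / temperature for n in negatives]
    m = max(logits)  # subtract the maximum for numerical stability
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[0]


anchor = [1.0, 0.0]
close_positive = [0.9, 0.1]   # nearly aligned with the anchor
far_positive = [0.0, 1.0]     # orthogonal to the anchor
negatives = [[-1.0, 0.0], [0.0, -1.0]]

loss_close = info_nce(anchor, close_positive, negatives)
loss_far = info_nce(anchor, far_positive, negatives)
print(loss_close, loss_far)
```

The loss is smaller when the positive sample lies close to the anchor, so minimizing it moves positive pairs together relative to the negatives.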

3.8.2. Feature Representation Learning

3.9. Training Strategy Optimization

3.9.1. Joint Loss Function

4. Experiments

4.1. Datasets

4.2. Model Architecture and Training Configuration

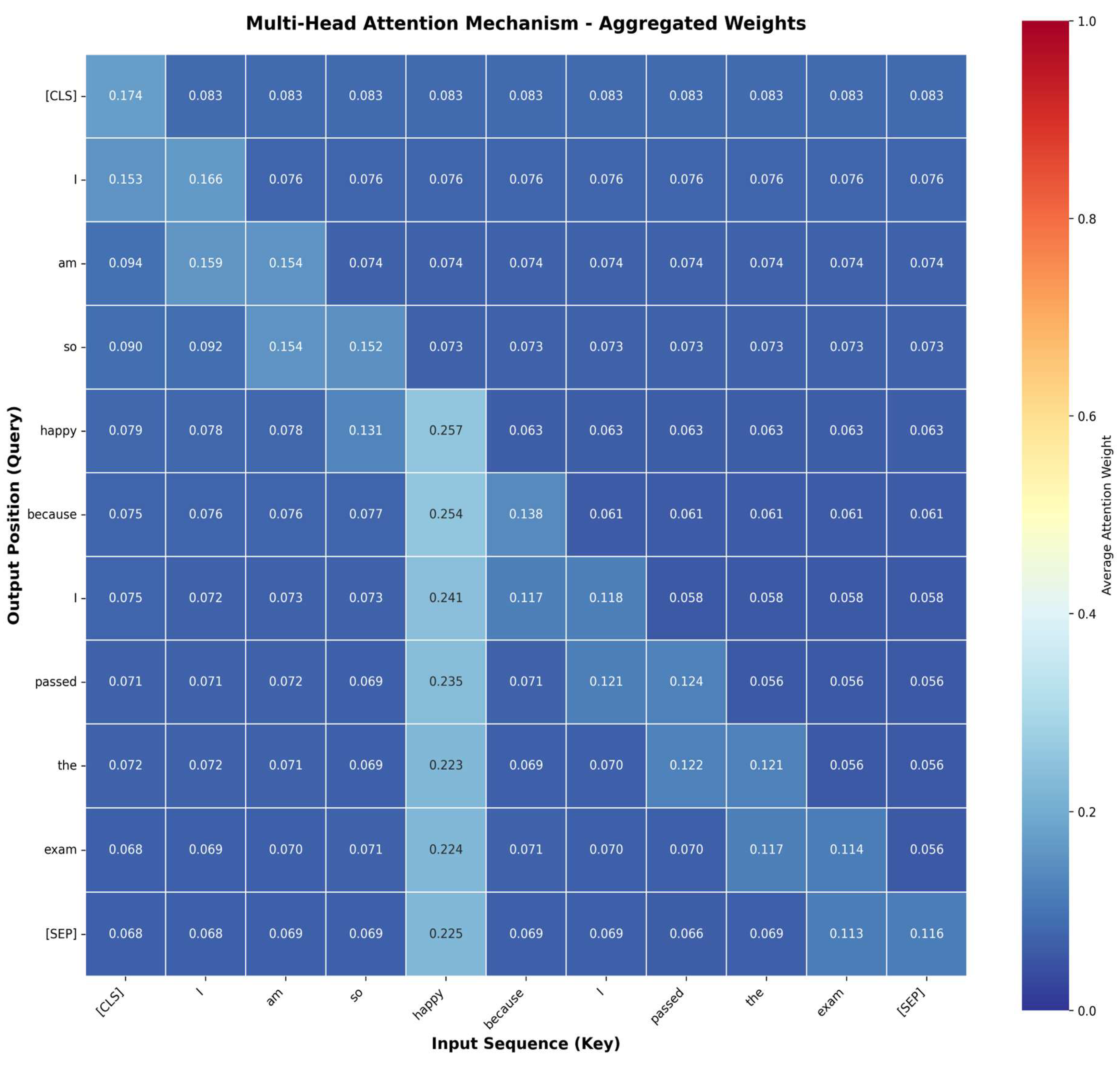

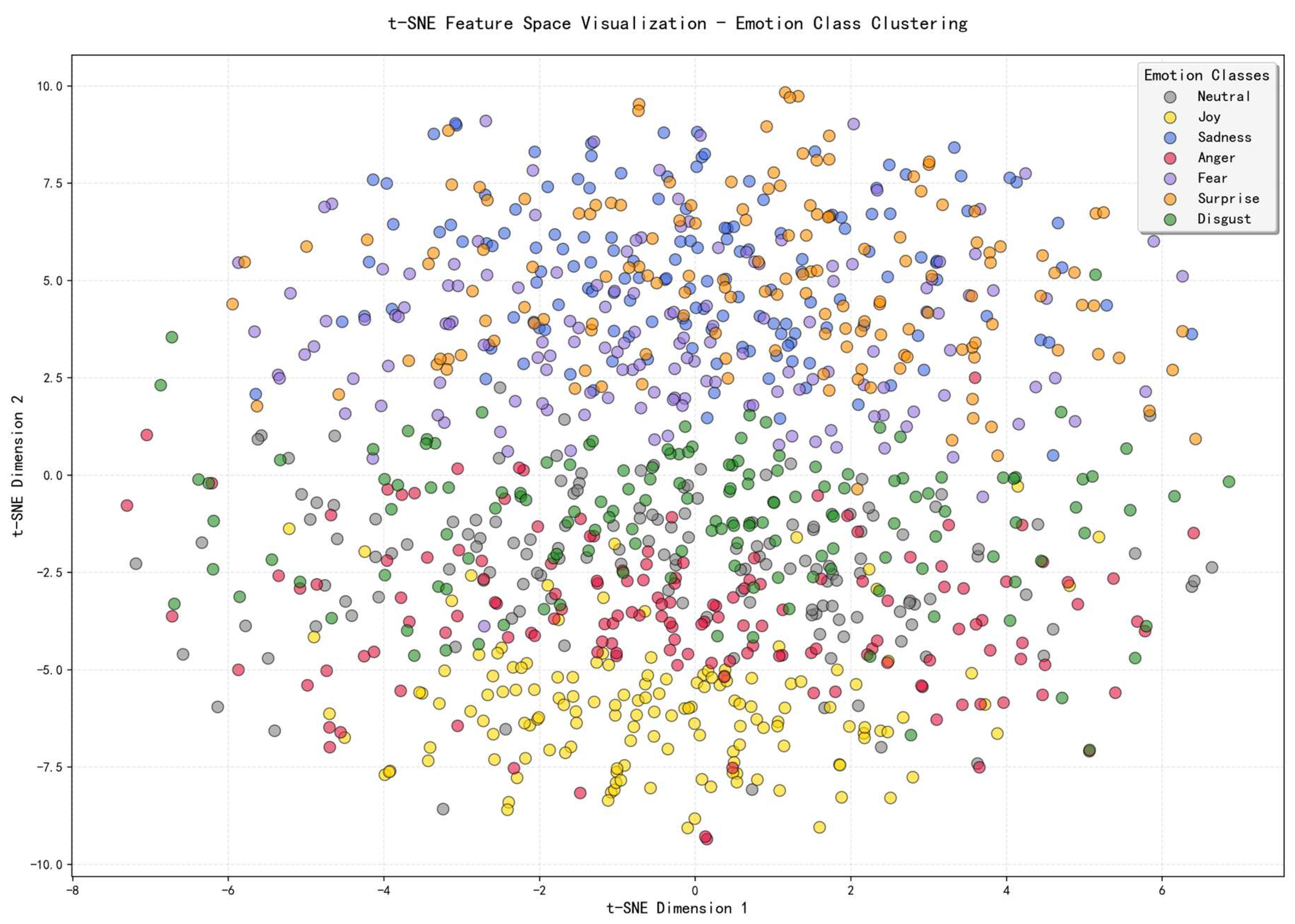

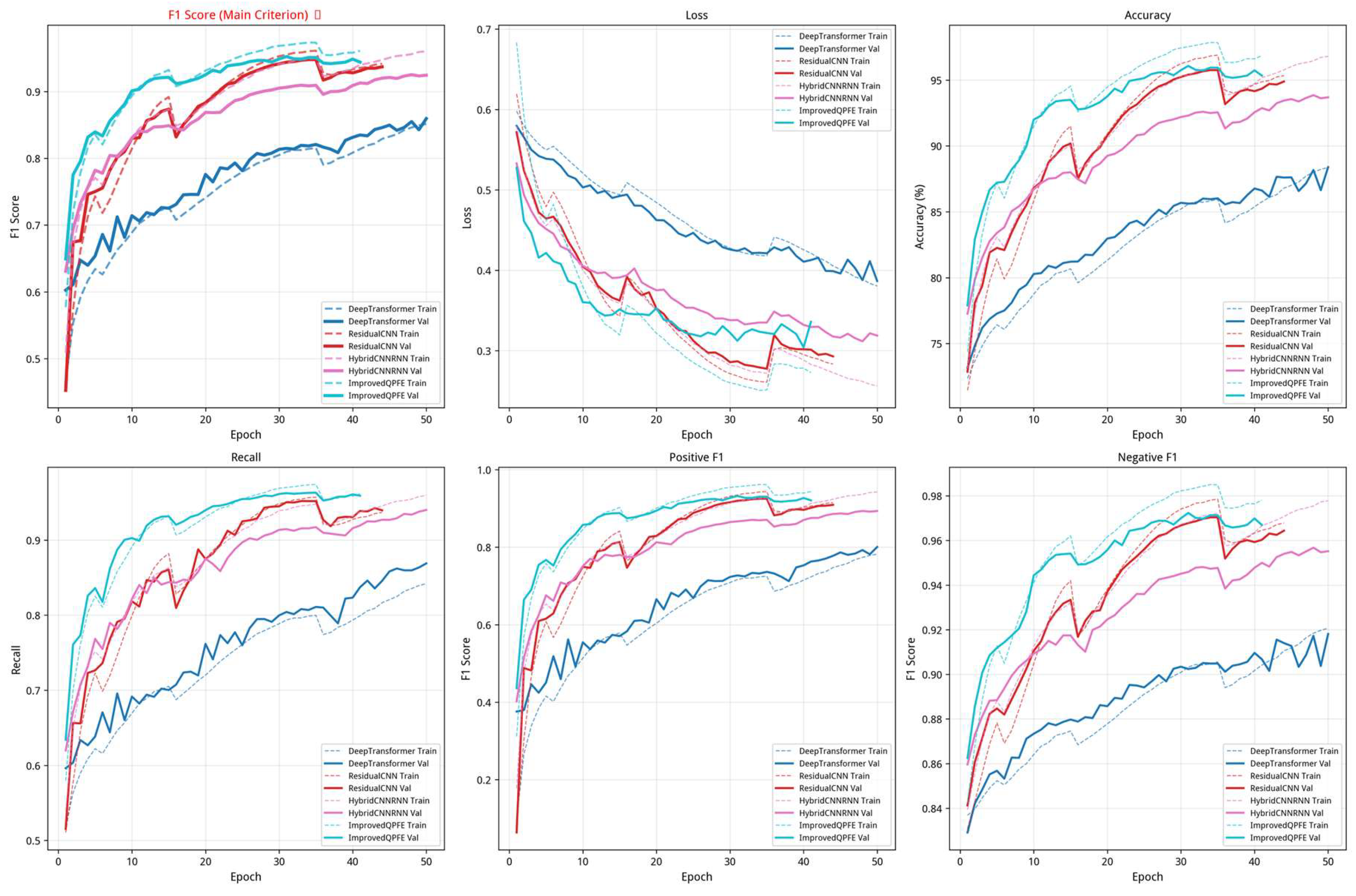

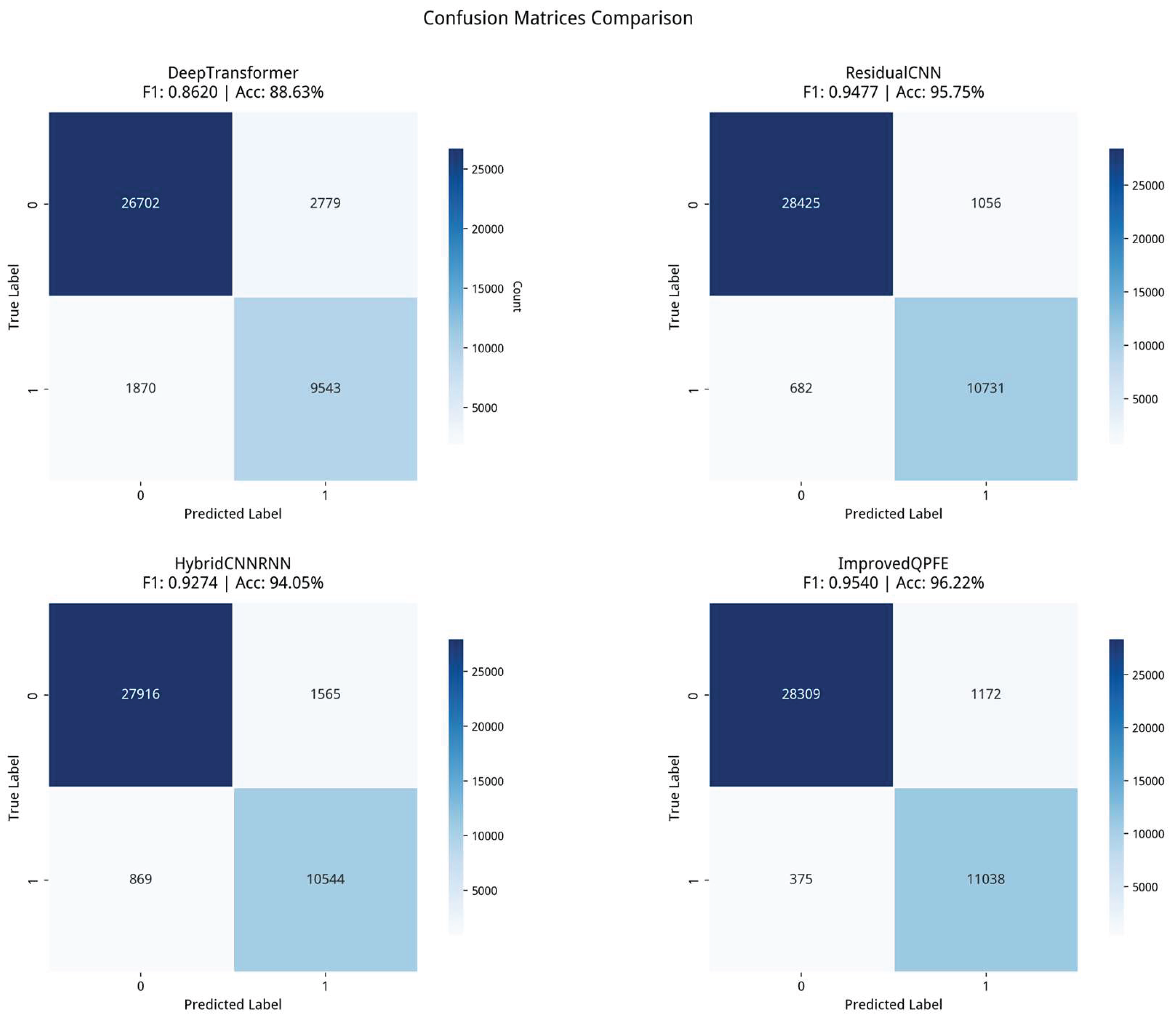

4.3. Visualization Analysis

4.3.1. Attention Weight Visualization

4.3.2. Feature Space Distribution Visualization

4.4. Mathematical Definitions of Evaluation Metrics

4.4.1. Confusion Matrix Basics

4.4.2. Accuracy

4.4.3. Precision

4.4.4. Recall

4.4.5. F1 Score

4.4.6. Macro F1
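
For reference, the metrics named in Sections 4.4.1 to 4.4.6 have the following standard definitions. These are the textbook forms, not formulas recovered from the source. The notation is assumed: $TP$, $TN$, $FP$, and $FN$ are the confusion-matrix counts, and $C$ is the number of classes.

```latex
\begin{aligned}
\text{Accuracy}  &= \frac{TP + TN}{TP + TN + FP + FN} \\
\text{Precision} &= \frac{TP}{TP + FP} \\
\text{Recall}    &= \frac{TP}{TP + FN} \\
F_1              &= \frac{2 \cdot \text{Precision} \cdot \text{Recall}}{\text{Precision} + \text{Recall}} \\
\text{Macro } F_1 &= \frac{1}{C} \sum_{c=1}^{C} F_1^{(c)}
\end{aligned}
```

With two classes (positive and negative), macro F1 reduces to the mean of the two per-class scores, $(F_1^{\text{pos}} + F_1^{\text{neg}})/2$.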

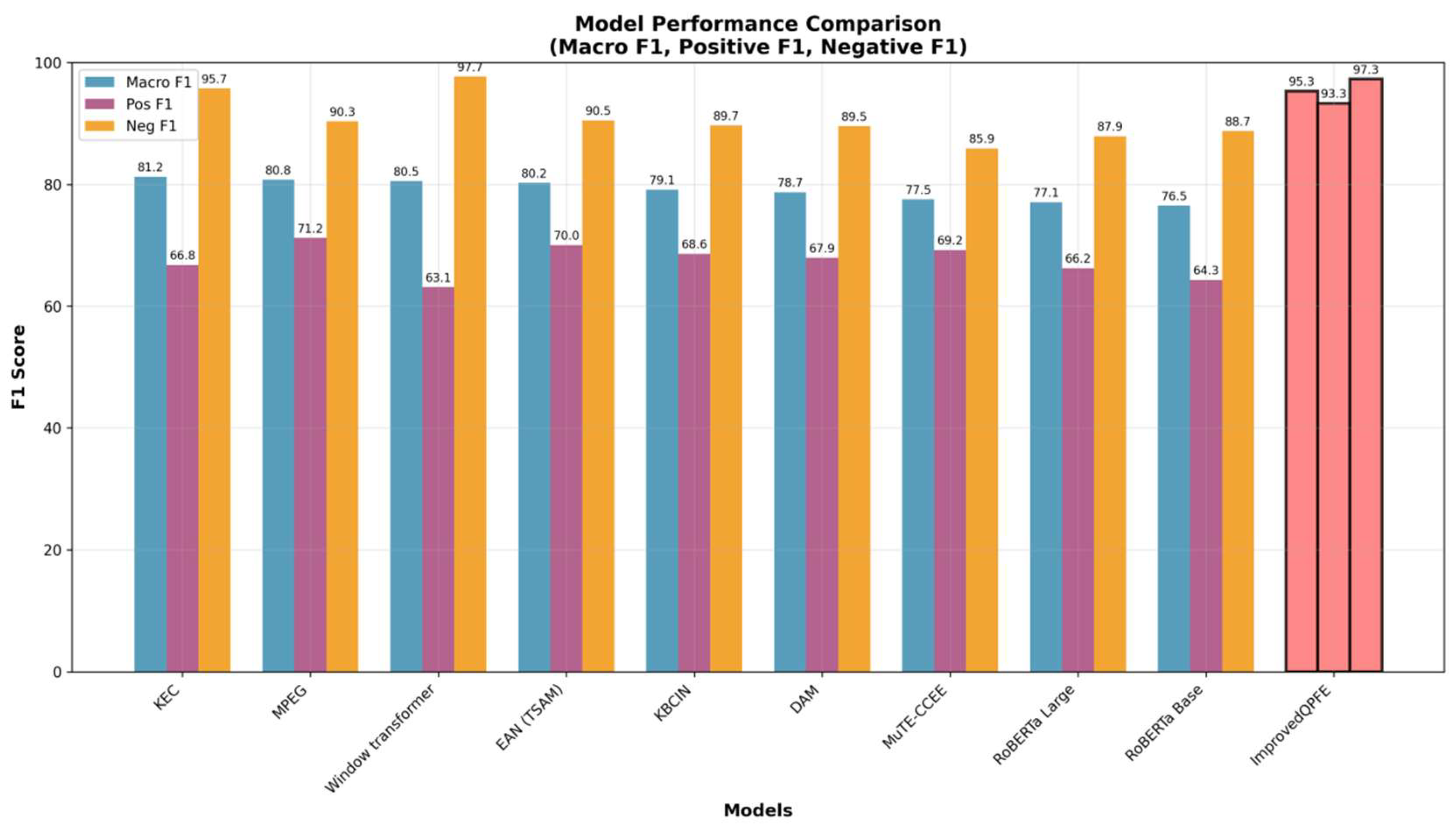

4.5. Experimental Results

4.5.1. Cross-Dataset Validation: Experimental Results on RECCON-IEM

4.6. Ablation Study

5. Conclusion

Author Contributions

Funding

Conflicts of Interest

| | Model | RECCON-DD Macro F1 | RECCON-DD Pos. F1 | RECCON-DD Neg. F1 | RECCON-IEM Pos. F1 | RECCON-IEM Neg. F1 | RECCON-IEM Macro F1 | RECCON-IEM Recall | RECCON-IEM Accuracy |
|---|---|---|---|---|---|---|---|---|---|
| 1 | DeepTransformer | - | - | - | 80.41 | 91.99 | 86.20 | 87.09 | 88.63 |
| 1 | ResidualCNN | - | - | - | 92.50 | 97.03 | 94.77 | 95.22 | 95.74 |
| 1 | HybridCNNRNN | - | - | - | 89.65 | 95.82 | 92.73 | 93.53 | 94.04 |
| 2 | RoBERTa Base | 76.51 | 64.28 | 88.74 | - | - | - | - | - |
| 2 | RoBERTa Large | 77.06 | 66.23 | 87.89 | - | - | - | - | - |
| 2 | MuTE-CCEE | 77.55 | 69.2 | 85.9 | - | - | - | - | - |
| 2 | DAM | 78.73 | 67.91 | 89.55 | - | - | - | - | - |
| 2 | KBCIN | 79.12 | 68.59 | 89.65 | - | - | - | - | - |
| 2 | EAN(TSAM) | 80.24 | 70 | 90.48 | - | - | - | - | - |
| 2 | Window-transformer | 80.53 | 63.1 | 97.69 | - | - | - | - | - |
| 2 | MPEG | 80.76 | 71.18 | 90.35 | - | - | - | - | - |
| 2 | KEC | 81.25 | 66.76 | 95.74 | - | - | - | - | - |
| 3 | Ours | 95.29 | 93.31 | 97.27 | 93.45 | 97.34 | 95.39 | 96.36 | 96.21 |
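
Because macro F1 over two classes is the unweighted mean of the positive and negative F1 scores, the reported values can be sanity-checked directly. The sketch below does this for the RECCON-IEM columns of the table above. The dictionary layout and the helper name are illustrative, and the numbers are copied from the table.

```python
# (Pos. F1, Neg. F1, reported macro F1) on RECCON-IEM, copied from the results table.
rows = {
    "DeepTransformer": (80.41, 91.99, 86.20),
    "ResidualCNN": (92.50, 97.03, 94.77),
    "HybridCNNRNN": (89.65, 95.82, 92.73),
    "Ours": (93.45, 97.34, 95.39),
}


def macro_f1(per_class_f1):
    """Unweighted mean of per-class F1 scores."""
    return sum(per_class_f1) / len(per_class_f1)


gaps = {}
for name, (pos, neg, reported) in rows.items():
    computed = macro_f1([pos, neg])
    gaps[name] = abs(computed - reported)
    print(f"{name}: computed {computed:.3f}, reported {reported:.2f}")
```

Each computed value agrees with the reported macro F1 to within rounding at two decimal places.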

| Model Configuration | Macro F1 | Pos. F1 | Neg. F1 | Accuracy (%) | Precision | Recall | Δ Macro F1 |
|---|---|---|---|---|---|---|---|
| QPFE (Full) | 0.9529 | 0.9331 | 0.9727 | 96.12 | 0.9612 | 0.9636 | - |
| w/o Complex Embedding | 0.9198 | 0.8834 | 0.9562 | 92.45 | 0.9267 | 0.9245 | -0.0331 |
| w/o Quantum GRU | 0.9334 | 0.9012 | 0.9656 | 93.78 | 0.9389 | 0.9378 | -0.0195 |
| w/o Quantum Attention | 0.9387 | 0.9098 | 0.9676 | 94.23 | 0.9434 | 0.9423 | -0.0142 |
| w/o Contrastive Learning | 0.9445 | 0.9201 | 0.9689 | 94.89 | 0.9478 | 0.9489 | -0.0084 |
| w/o Phase Pre-training | 0.9489 | 0.9267 | 0.9711 | 95.34 | 0.9523 | 0.9534 | -0.0040 |
| w/o Quantum Measurement | 0.9501 | 0.9289 | 0.9713 | 95.67 | 0.9545 | 0.9567 | -0.0028 |

Disclaimer/Publisher’s Note: The statements, opinions and data contained in all publications are solely those of the individual author(s) and contributor(s) and not of MDPI and/or the editor(s). MDPI and/or the editor(s) disclaim responsibility for any injury to people or property resulting from any ideas, methods, instructions or products referred to in the content.

© 2026 by the authors. Licensee MDPI, Basel, Switzerland. This article is an open access article distributed under the terms and conditions of the Creative Commons Attribution (CC BY) license.