3.3. Experimental Results

All the results in this article were collected using two custom-built Python scripts. The fptp_analyzer.py script (

Supplementary Materials/Code/fptp_analyzer.py) interfaces with MongoDB's explain() command in two verbosity modes: explain("queryPlanner") captures the Pipeline Optimizer's rewriting output — the optimized operator order and the stages absorbed into

$cursor — while explain("allPlansExecution") runs all candidate plans in parallel during the trial period and returns the FPTP metrics for each: Advanced, Works, and Score. Execution time for the scalability analysis is measured separately using a dedicated Python script, which runs each pipeline 20 times with planCacheClear before every run and reports the median (

Supplementary Materials/Code/benchmark.py). All raw results are written to JSON dump files for offline analysis (

Supplementary Materials/DumpFiles/ *.json).

All tests are run under controlled conditions that are identical across both database versions: 50K documents, the same collection schema, the same eight indexes, and the same nine pipeline definitions. The only variable is the MongoDB version — 6.0.3 as the baseline and 8.2.5 as the current production release at the time of writing (February 2026).

The nine tests are designed to cover a representative range of aggregation pipeline patterns, from simple single-operator queries to complex multi-stage pipelines involving grouping, unwinding, and sorting. Each test targets a specific aspect of optimizer behavior: plan selection under competing indexes, interaction with the SBE engine, index-provided sort and early termination, ESR index design, and preference bias at low selectivity. The tests are presented in order of increasing complexity, and for each one the query definition, FPTP race results for both versions, and a comparative discussion are provided.

The fptr_analyzer.py script displays information on the console for each query regarding the logical plan and the selected physical plan, as well as statistical information for all analyzed plans (

Supplementary Materials/

Figure S1).

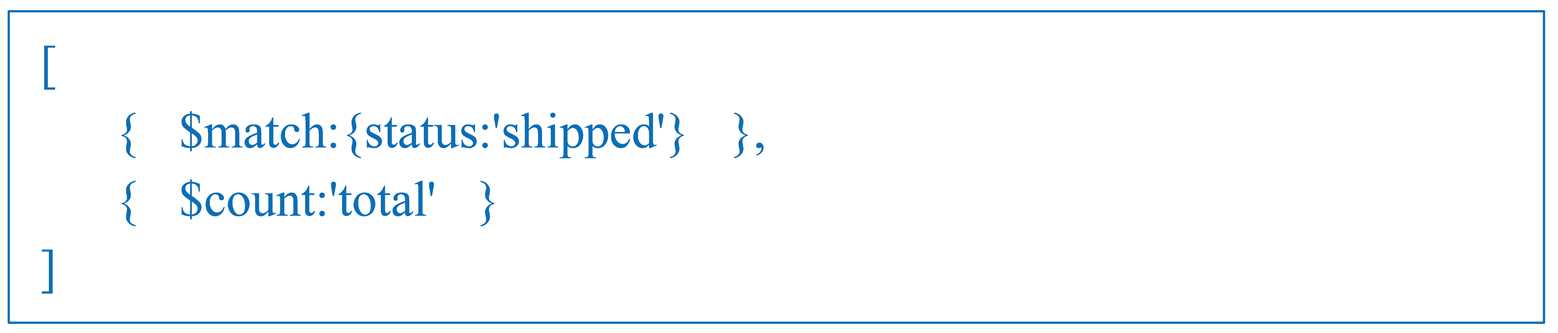

Test 1: Simple $match on status with $count

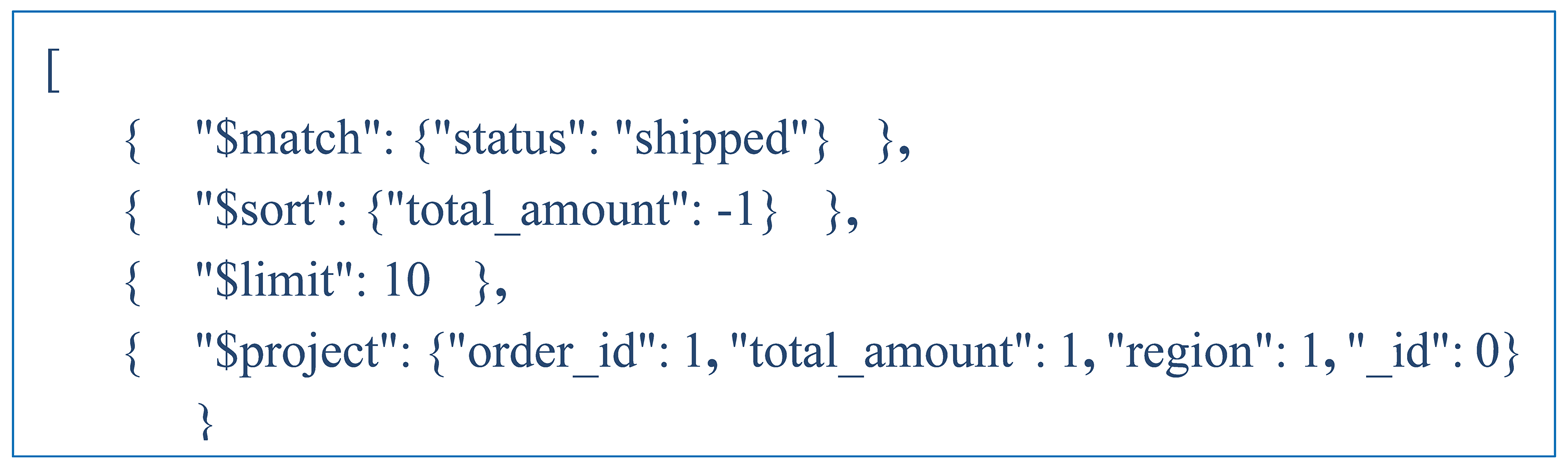

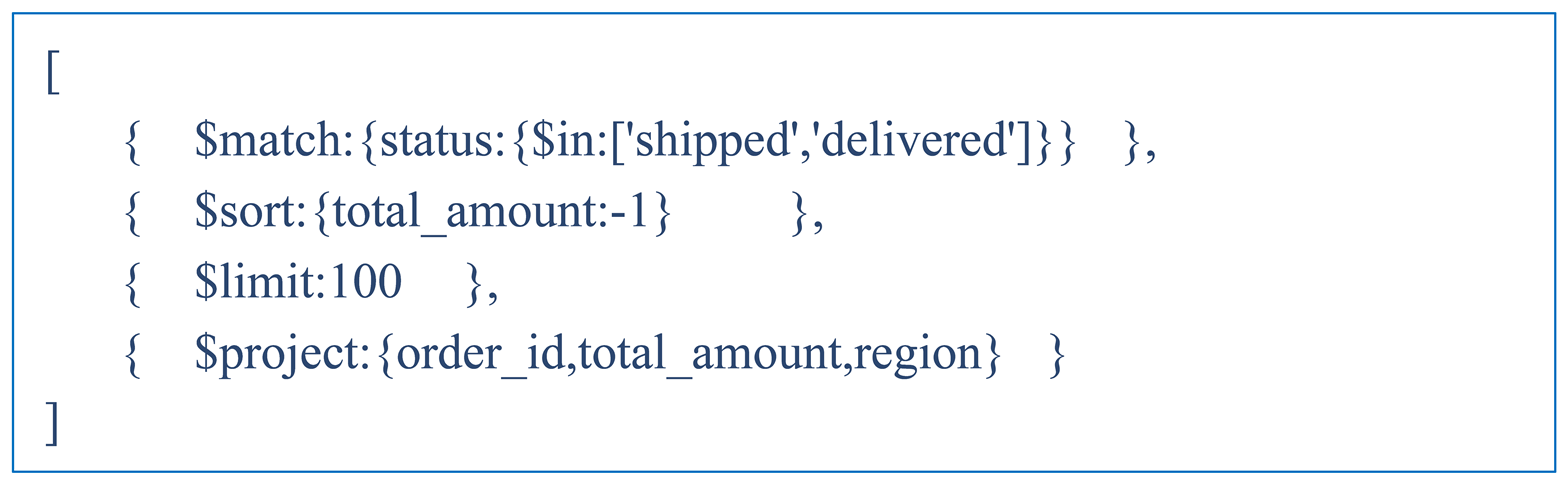

This test uses a straightforward pipeline that filters orders by status and counts the results. The query is shown in . The goal is to check whether FPTP activates when only one applicable index exists and the pipeline ends with $count.

|

Listing 1. Simple $match on status with $count. |

|

In both versions, FPTP does not engage — there are no competing plans to race. The more interesting finding is the difference in how the two versions execute the query. MongoDB 6.0.3 uses a specialized COUNT_SCAN operator that reads index keys directly without loading the underlying documents — 0 documents examined, 12,523 keys scanned, 4ms. In MongoDB 8.2.5 the winning stage is GROUP, executed by the SBE engine, also at 4ms. This is not a regression — it reflects how the SBE engine implements $count in the 8.x versions through a more general aggregation mechanism rather than a dedicated operator. The result is the same, but the implementation method is less specialized. The conclusion is that when only a single index is applied and there are no competing plans, FPTP has nothing to compete with and is not activated.

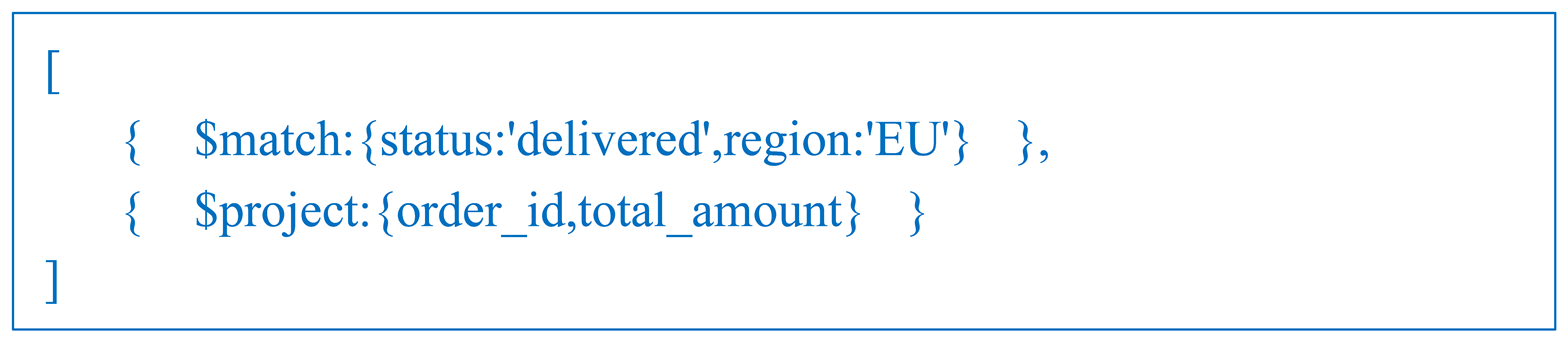

Test 2: $match on status + region (compound index)

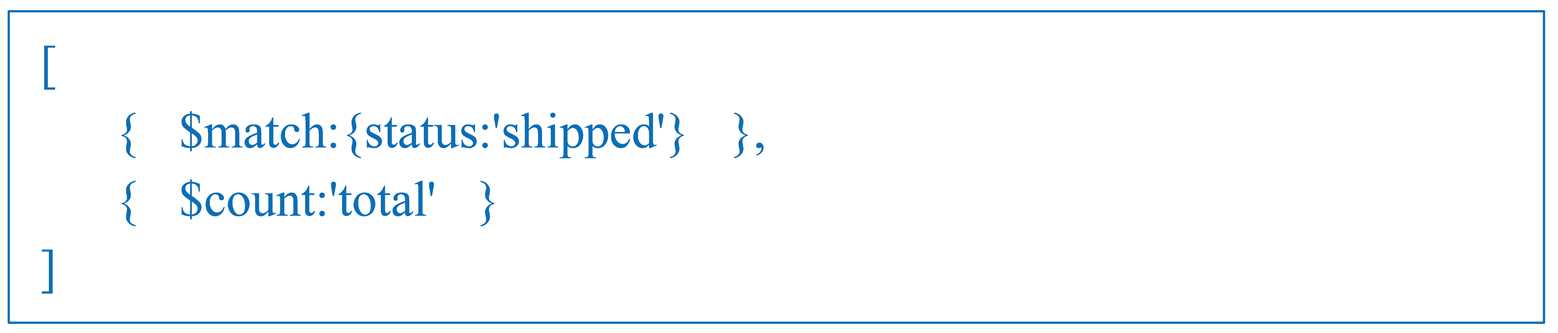

This test examines FPTP behavior when a compound index (idx_status_region) competes against two single-field indexes. The hypothesis is that the compound index will dominate, since it covers both predicates in a single scan. The query is shown in Listing 2.

|

Listing 2. $match on status and region with $project. |

|

The results are summarized in

Table 3.

FPTP activates in both versions with three competing plans, and the winning strategy is identical in both — execution time is 6.5ms in 6.0.3 and 8ms in 8.2.5, a 23% slowdown in the newer version. Plan 0 achieves Advanced = Works = 101, meaning every index key scan maps directly to a result document with no wasted work. The single-field indexes, by contrast, require a FETCH followed by a post-filter on the second predicate, with only around 19–25% of fetched documents passing the filter — hence the low Advanced counts. FPTP correctly identifies the compound index as the dominant plan.

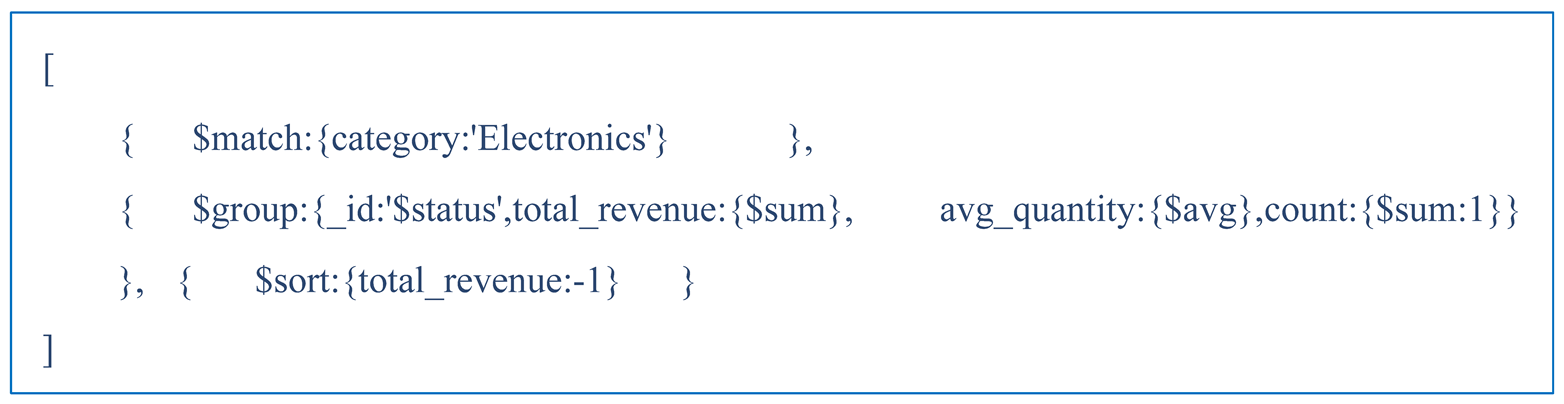

Test 3: $match → $group (aggregation by category, SBE engine)

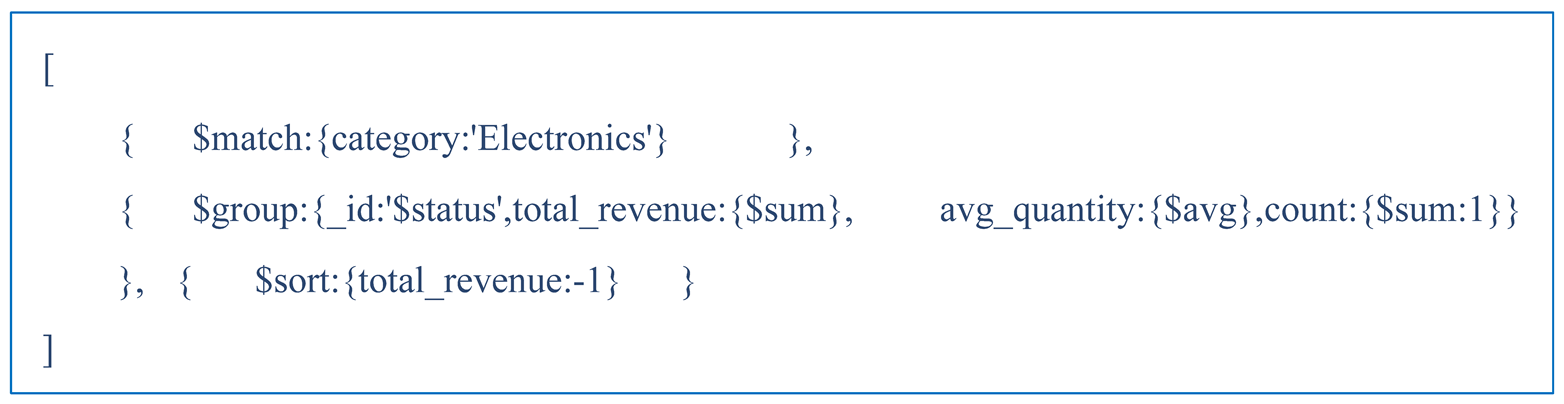

This test looks at how FPTP behaves when the pipeline includes a $group operator and execution is handled by the SBE engine. The query is shown in Listing 3.

|

Listing 3. $match on category followed by $group and $sort. |

|

The results are summarized in

Table 4.

Test 3 exposes a structural problem that remains unresolved in MongoDB 8.2.5. Both candidate plans produce an identical Score of 2.0002 in both versions — the winner (idx_category) is chosen by its position in the plan array, not because it outperforms the alternative on any metric. The key difference between versions is in the availability of Works: in 6.0.3, Works=0 because the SBE engine does not track work units, and the blocking nature of $group means Advanced=0 throughout the trial period; in 8.2.5, Works=101 is available. Even so, this changes nothing — both plans process the same number of documents, and the Score remains numerically indistinguishable. Execution time improves from 42ms in 6.0.3 to 31ms in 8.2.5, a 26% gain. Despite idx_cat_status_amount being the theoretically better choice — it covers both category and status — FPTP cannot identify it as such when the scores are tied. The problem is structural: resolving it would require statistics on $group output cardinality, which FPTP does not have access to.

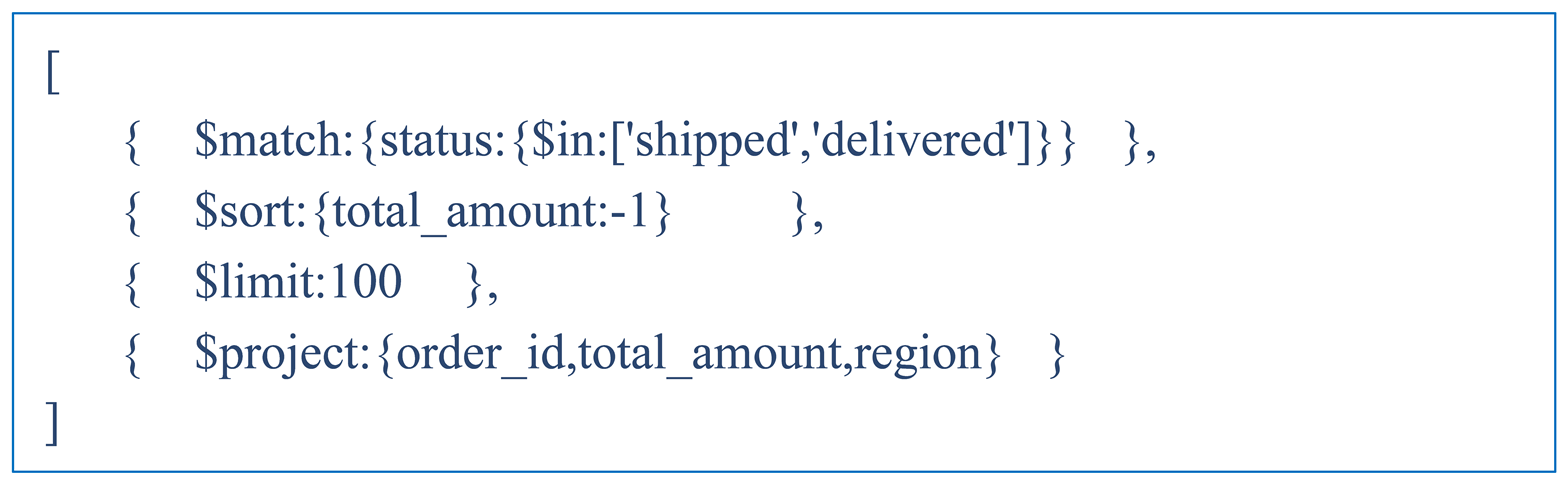

Test 4: $match → $sort → $limit (Top-N query)

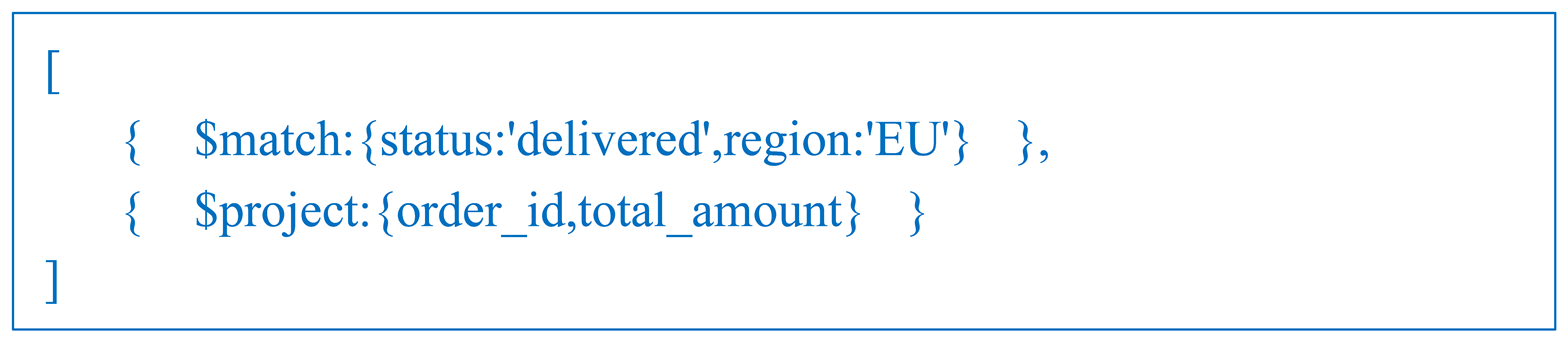

This test examines FPTP behavior on a Top-N query. The hypothesis is that idx_total_amount, being a descending index, provides index-provided sort and allows early termination after 100 documents. The query is shown in Listing 4.

|

Listing 4. Top-N query with $match, $sort, $limit, and $project. |

|

The results are summarized in

Table 5.

Plan 0 wins outright in both versions. idx_total_amount is a descending index — MongoDB scans keys in descending order and stops as soon as 100 documents have been collected. The 188 keys examined break down as 100 results plus 88 documents that did not pass the $match filter. Plans 1 and 2 have no such shortcut: they require an in-memory sort of roughly 25,000 matching documents before $limit can be applied, which means Advanced=0 throughout the trial period. A Score of 2.5321 against 1.0001 is a decisive margin. Execution time is 2ms in both versions. The Pipeline Optimizer applies the same transformation in both versions, collapsing 4 logical stages into a single $cursor with index-provided sort (*).

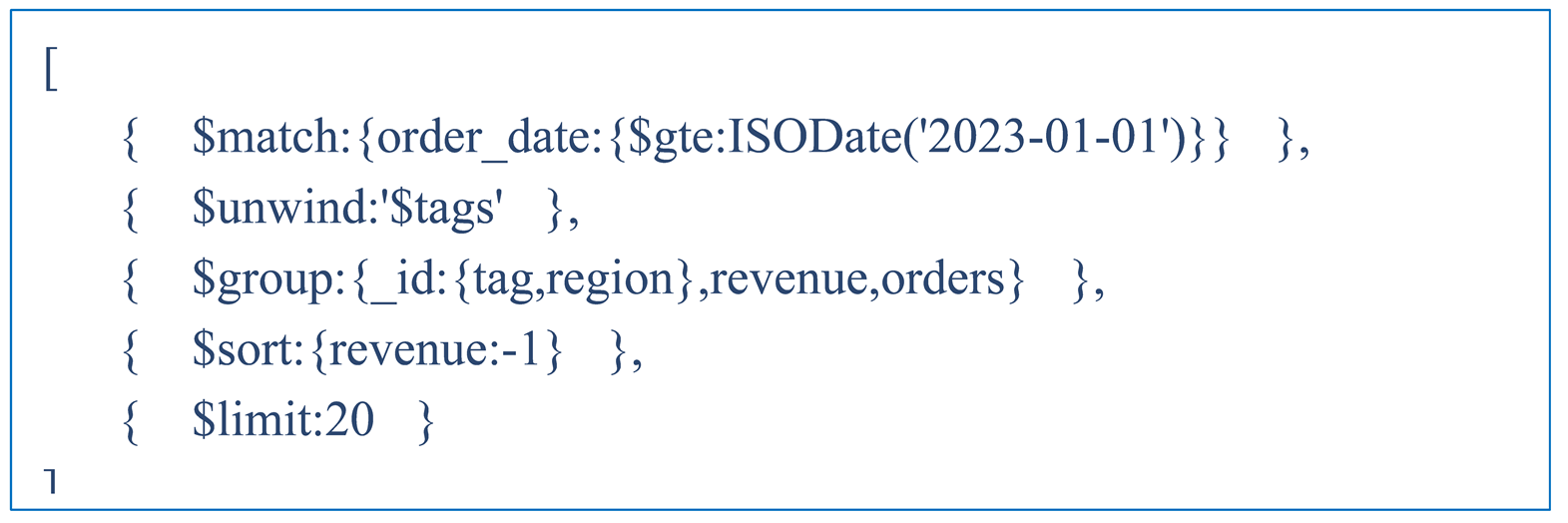

Test 5: $match → $unwind → $group → $sort → $limit

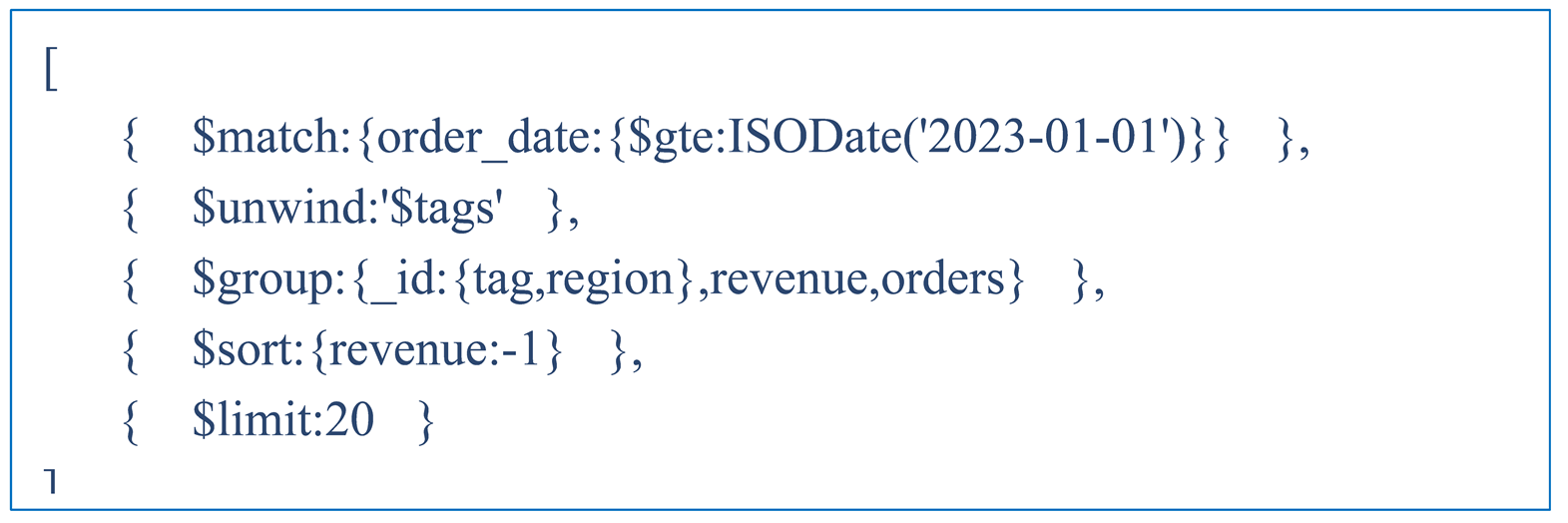

This test looks at FPTP behavior on a more complex pipeline that combines a date range filter with $unwind and $group. The query is shown in Listing 5.

|

Listing 5. Complex pipeline with $unwind and $group over a date range. |

|

The results are summarized in

Table 6.

FPTP does not activate in either version — only one index applies to the date range predicate, leaving nothing to race. After $match, 25,045 documents pass the filter; after $unwind, the document count grows to approximately 38,000, at an average of around 1.5 tags per document; the final output is 20 documents. Winning Plan Stage is PROJECTION_SIMPLE. $cursor absorbs $match + $project. The main source of latency is $unwind, which expands the tags array and multiplies the document stream before it reaches $group. The $sort and $limit stages that follow $group cannot be reordered by the Pipeline Optimizer, as $group is a blocking operator whose output order is undefined relative to any index.

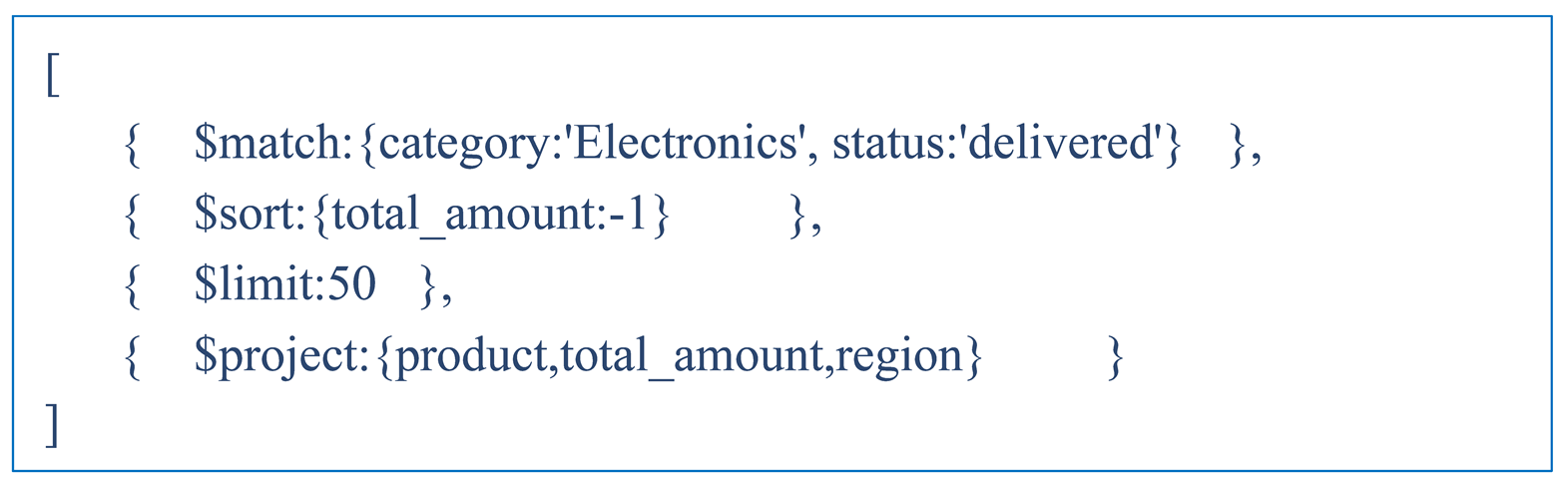

Test 6: Optimal compound index (ESR rule)

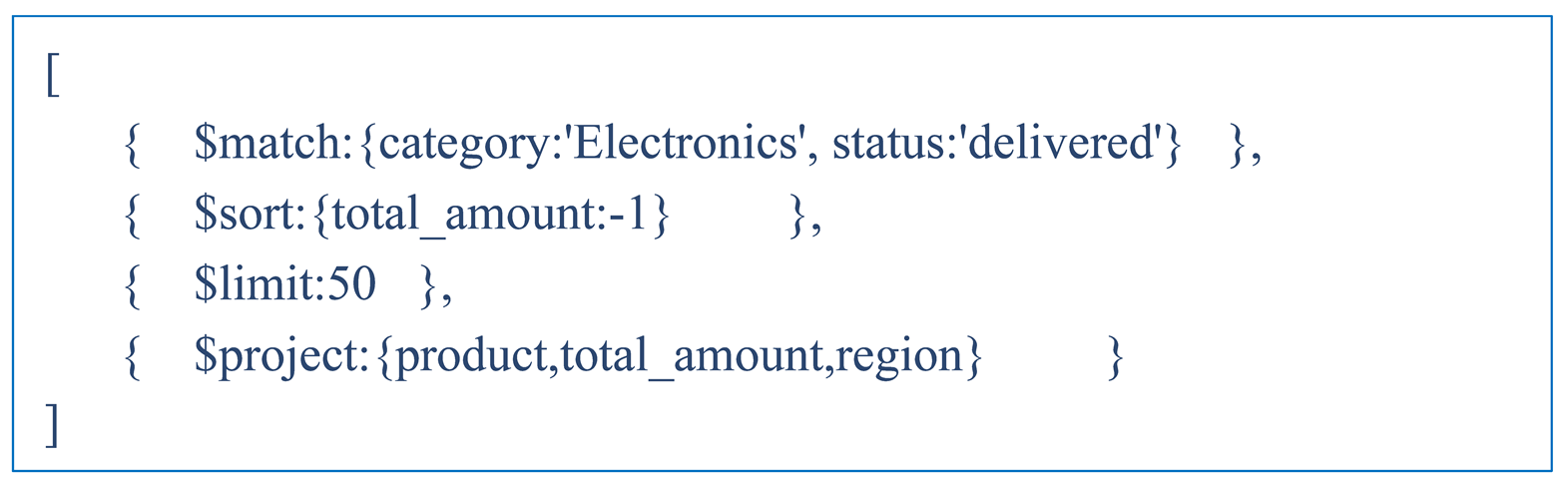

This test is an empirical verification of the Equality → Sort → Range (ESR) rule. The rule defines the recommended field order for compound indexes in MongoDB: equality predicates first, the sort field next, and range predicates last. An index following this order can simultaneously narrow the scan to a specific data segment and provide index-provided sort, eliminating the need for a physical sort node. The hypothesis is that idx_cat_status_amount = { category: 1, status: 1, total_amount: -1 } will dominate through index-provided sort. The query is shown in Listing 6.

|

Listing 6. ESR-compliant query with $match, $sort, $limit, and $project. |

|

The results are summarized in

Table 7.

Test 6 produces the most stable result in the study: 1ms in both versions without exception. A Score of 3.0002, where Advanced = Works = 50, is the highest value observed across all nine tests — three times the score of any competing plan, and identical in both versions. The ESR field order delivers two things at once: the equality fields (category, status) restrict the scan to a narrow data segment, while the sort field (total_amount DESC) provides index-provided sort and allows early termination after exactly 50 documents. The ESR principle for index design is confirmed as version independent.

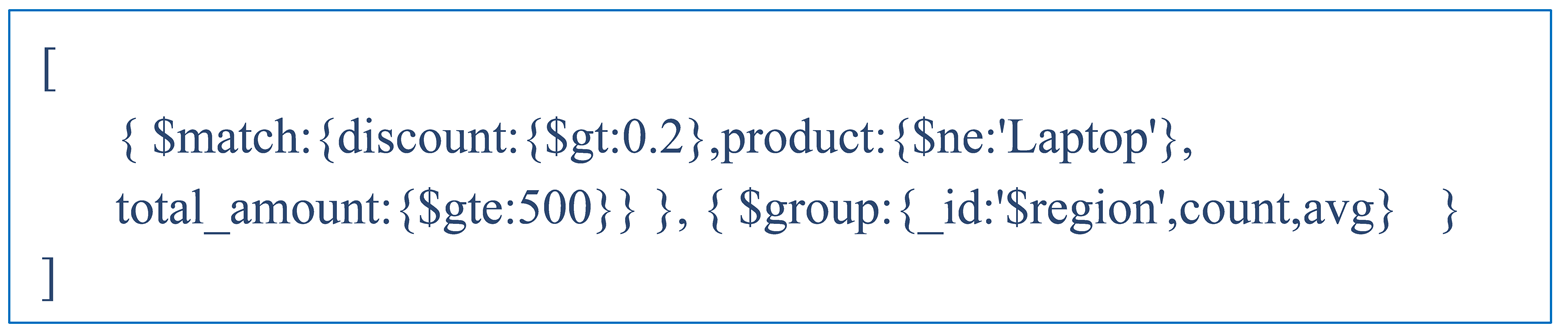

Test 7: IXSCAN preference bias

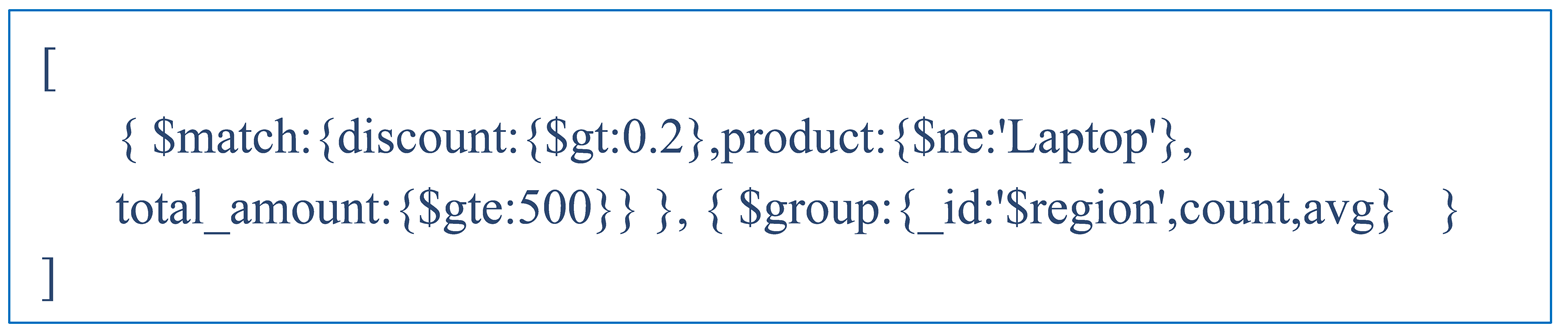

This test examines plan selection behavior when the query predicates have low combined selectivity. The query is shown in Listing 7.

|

Listing 7. Low selectivity $match with $group aggregation. |

|

The results are summarized in

Table 8.

FPTP does not activate in either version — there are no competing plans. The interesting finding here is how the two versions differ in their plan choice. MongoDB 6.0.3 picks COLLSCAN at 163ms — a reasonable decision given that an Index Filter Ratio of 0.0001 means the index covers almost no documents, and the mandatory FETCH for every index key makes IXSCAN more expensive than scanning the collection outright. MongoDB 8.2.5 picks IXSCAN[idx_total_amount] at the same selectivity and runs in 134ms — not because the choice is better, but because the specific combination of data and hardware happens to favor it at 50K documents. The selected index does not even cover the $match predicates, yet 8.2.5 still prefers it, scanning 48,895 out of 50,000 documents with the added overhead of index traversal.

The IXSCAN preference bias documented by Tao et al. [

4] for MongoDB 7.0.1 is still present in 8.2.5 — and under worse conditions than before. Where 6.0.3 correctly fell back to COLLSCAN at ratio=0.0001, 8.2.5 chooses the index regardless. The bias has not been fixed.

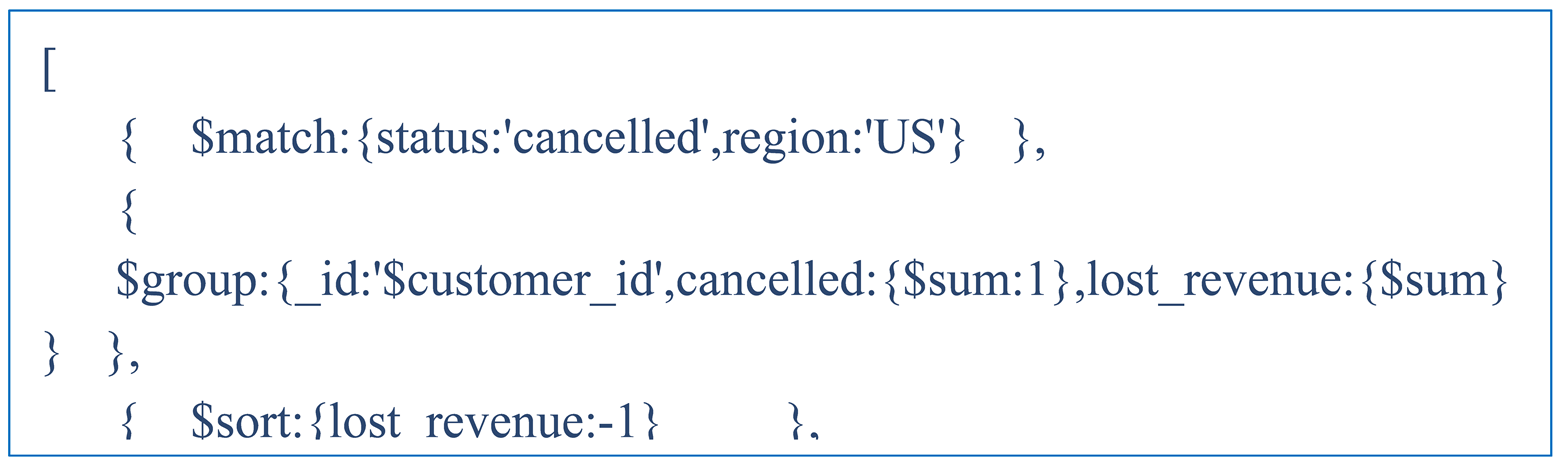

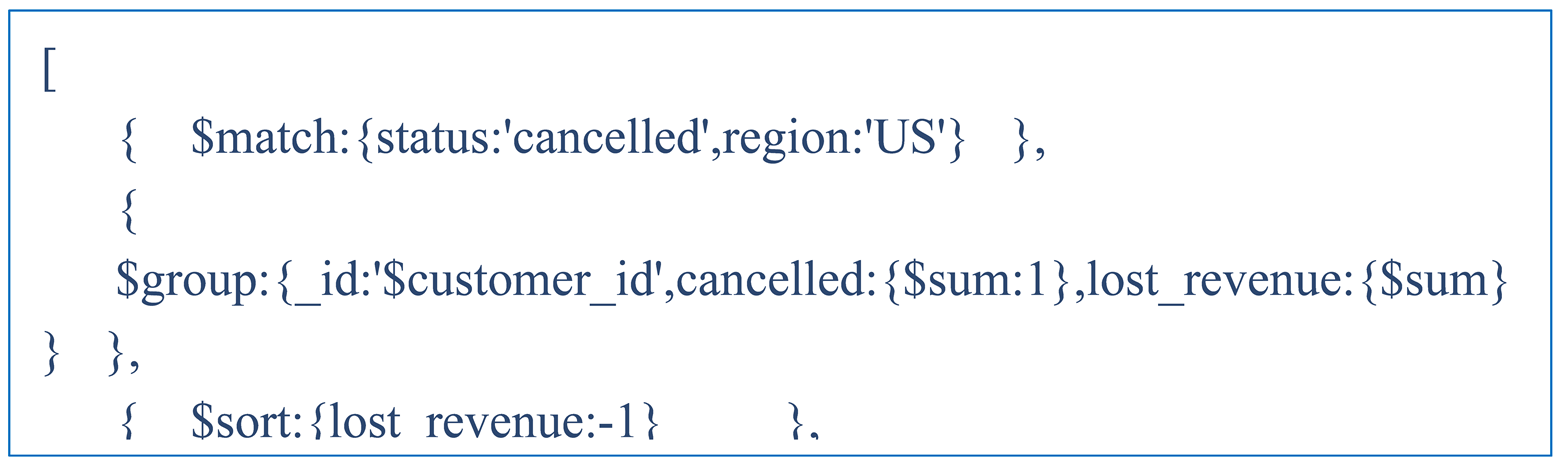

Test 8: $match → $group — cancelled orders by customer (SBE engine)

This test analyzes cancelled orders grouped by customers, with the SBE engine handling execution and a compound index competing against two single-field indexes. The query is shown in Listing 8.

|

Listing 8. Cancelled orders analysis by customer with $group, $sort, and $limit. |

|

The results are summarized in

Table 9.

Unlike Test 3, where both plans scored identically and the winner was chosen by position, FPTP correctly differentiates the plans here. idx_status_region covers both $match predicates and scans only 3,166 documents, giving an Index Filter Ratio of 0.7429. This translates into a clear Score advantage: 2.0002 for Plan 0 against roughly 1.19 for the alternatives. As in Test 3, Works=0 in 6.0.3 due to the SBE engine not tracking work units, while Works=101 is available in 8.2.5. Execution time is 16.5ms in 6.0.3 and 15ms in 8.2.5, a 9% improvement.

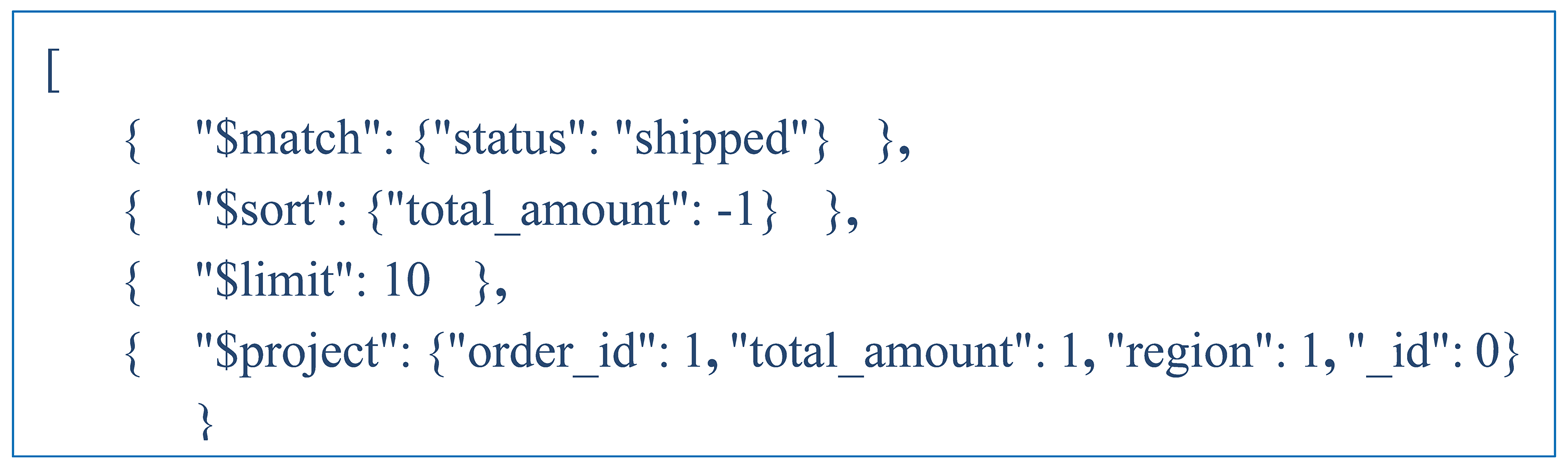

Test 9: $match → $group — cancelled orders by customer (SBE engine)

This test verifies the early termination effect when $limit is placed at position 3 out of 4 stages in the pipeline. The query is shown in Listing 9.

|

Listing 9. Early termination query with $limit at position 3/4. |

|

Test 9 shows FPTP behaving consistently across both versions in an early termination scenario. Plan 0 delivers 10 documents during the trial period through index-provided sort, stopping as soon as the $limit is satisfied. Plans 1 and 2 require a physical sort node and cannot return a single document until all matching documents have been sorted — Advanced=0 throughout the trial period. The resulting Score gap, roughly 2.4 against 1.0001, follows the same pattern seen in Tests 4 and 6. The small numerical differences between versions — docsExamined=25 in 6.0.3 versus 36 in 8.2.5, Score=2.4002 versus 2.2780 — fall within the expected range given different random data distributions. The fact that Works is available in both versions confirms that Works=0 in 6.0.3 is specific to the SBE engine and $group pipelines, not a general limitation of the FPTP mechanism.

3.6. Effect of Data Distribution on FPTP Behavior

To check whether the results hold under more realistic conditions, the experiments are repeated on a collection with the same schema and size (50,000 documents) but with a skewed distribution: Electronics accounts for 65% of categories, US for 55% of regions, shipped and delivered together for 75% of order statuses, prices follow a Pareto distribution, and 70% of orders fall within the last six months of the date range.

The results are split into three groups. The first group covers the stable tests — Top-N sort, the ESR index query, grouping by customer_id, and the early termination verification query. All four show identical FPTP behavior, the same winning index, and execution time differences of 0 to 8%. Index-based optimizations are unaffected by data distribution, a finding that aligns with the size-independence observed in

Section 3.4.

The second group covers tests where the volume of the result stream changes with the distribution. For the $match + $group query on category, the number of documents scanned grows from 16,587 to 32,679 because Electronics now dominates the data, and execution time climbs from 44ms to 71ms. For the $unwind + $group query, the number of documents returned grew from 24,836 to 40,170 because orders are now concentrated in the last six months of the date range, pushing execution time from 125ms to 308ms. In both cases, FPTP behavior and the winning index stay the same — what changes is the volume of data being processed, not how the plan is selected.

The third group contains just one test — the IXSCAN preference bias query — where the effect goes in the opposite direction. Execution time drops from 137ms to 39ms under the skewed distribution. Under uniform data, the predicates on discount and total_amount touch close to 49,000 out of 50,000 documents. Under skewed data, the Pareto-distributed prices produce higher effective selectivity, and the number of documents scanned falls from 48,962 to 14,831. The IXSCAN preference bias itself does not go away — MongoDB picks idx_total_amount in both cases — but its practical impact is much smaller when the data is skewed.

Taken together, these results confirm that the plan selection and FPTP behavior described in

Section 3.3 are qualitatively valid under non-uniform data. The absolute execution times, however, depend heavily on data distribution for any query that does not benefit from early termination.